
Lecture 14. Iterative Refinement, Physically- and Biologically-Inspired Algorithms
CpSc 212: Algorithms and Data Structures
Brian C. Dean
School of Computing, Clemson University
Fall 2012

Physical Computation
• Many different physical processes in nature can be made to perform efficient computation…
  – Ball and string models for shortest paths (gravity)
  – Linkages; Kempe’s universality theorem
  – Diffraction gratings and Fourier transforms
  – Chemical computing (e.g., finding Hamiltonian paths using DNA binding)
References:
  – Hecht. Optics, 3rd edition.
  – L. M. Adleman. Molecular computation of solutions to combinatorial problems. Science 266 (1994).

Biological Computation
• Many different processes in biology are quite good at performing computation or optimization…
  – Evolution (Spencer/Darwin: “survival of the fittest”)
  – Finding shortest paths with slime molds
  – Bees solving the traveling salesman problem
  – And of course, your brain…

Physically and Biologically Inspired Computing Paradigms
• Simulated Annealing
• Genetic/Evolutionary Algorithms
• Artificial Immune Systems
• Swarm Intelligence
• Ant Colony Optimization
• Neural Networks

Iterative Refinement
• Simple yet powerful idea:
  – Start with some arbitrary feasible solution (or, better yet, a solution obtained via some other heuristic).
  – As long as we can improve it, keep making it better.
  – Simple example: bubble sort, where each swap of an adjacent out-of-order pair removes one inversion.
• Getting stuck in local minima can be a problem.
• Many approaches refine a population of solutions, rather than a single solution.

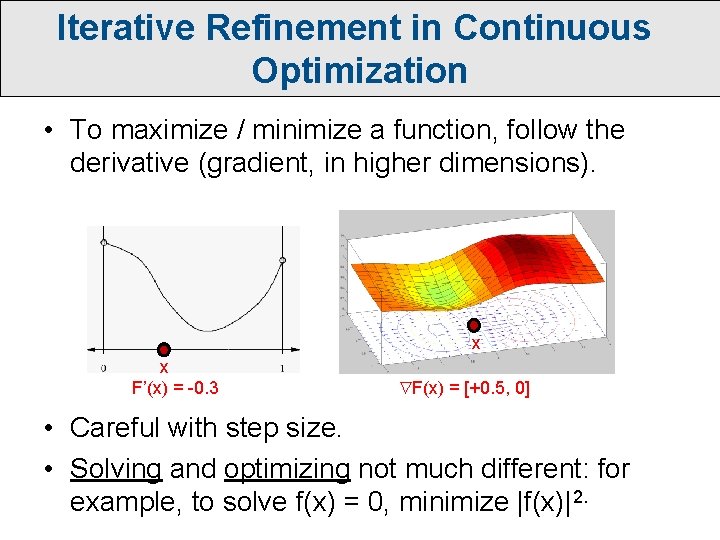
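A minimal Python sketch of bubble sort viewed as iterative refinement: the current "solution" is the ordering, and an improving move swaps an adjacent out-of-order pair. The function name `bubble_sort` is illustrative. Because any array with no adjacent out-of-order pair is already sorted, this particular refinement can never stall at a non-optimal local minimum.

```python
def bubble_sort(items):
    """Refine an ordering until no adjacent swap improves it."""
    a = list(items)  # work on a copy
    improved = True
    while improved:  # keep going as long as some local improvement exists
        improved = False
        for i in range(len(a) - 1):
            if a[i] > a[i + 1]:
                # Swapping an adjacent out-of-order pair removes exactly one inversion.
                a[i], a[i + 1] = a[i + 1], a[i]
                improved = True
    return a


print(bubble_sort([5, 1, 4, 2, 8]))  # [1, 2, 4, 5, 8]
```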

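Iterative Refinement in Continuous Optimization
• To maximize / minimize a function, follow the derivative (the gradient, in higher dimensions): step in the direction of the gradient to maximize, and in the opposite direction to minimize.
(Figure: a 1D example with F′(x) = −0.3 and a 2D example with ∇F(x) = [+0.5, 0].)
• Careful with step size: too small is slow, too large can overshoot or diverge.
• Solving and optimizing are not much different: for example, to solve f(x) = 0, minimize |f(x)|².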

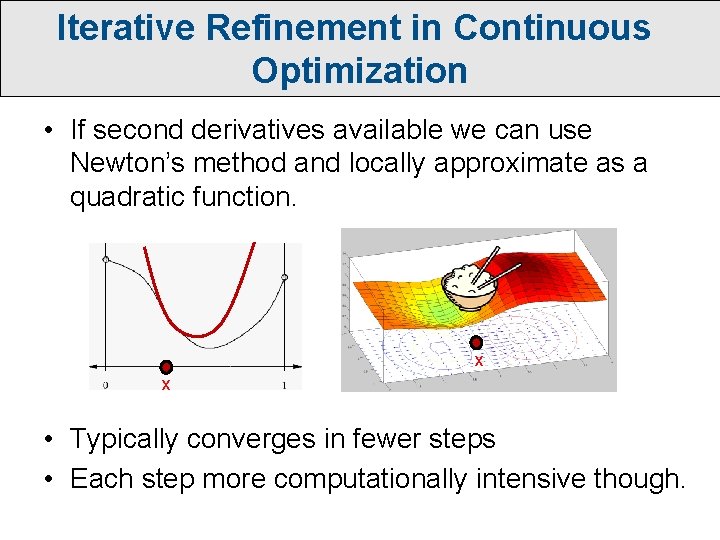
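A minimal 1D gradient-descent sketch that also illustrates the "solve by minimizing" idea: to solve f(x) = x² − 2 = 0, it minimizes g(x) = f(x)², whose derivative is 2 f(x) f′(x). The function name, the step size 0.05, and the stopping tolerance are illustrative assumptions, not values from the lecture.

```python
import math


def gradient_descent(grad, x, step=0.05, tol=1e-12, max_iter=10_000):
    """Minimize a 1D function by repeatedly stepping against its derivative."""
    for _ in range(max_iter):
        x_new = x - step * grad(x)  # move downhill, proportionally to the slope
        if abs(x_new - x) < tol:    # stop once the moves become negligible
            return x_new
        x = x_new
    return x


# Solve f(x) = x^2 - 2 = 0 by minimizing g(x) = f(x)^2,
# whose derivative is g'(x) = 2 f(x) f'(x).
def f(x):
    return x * x - 2


def df(x):
    return 2 * x


root = gradient_descent(lambda x: 2 * f(x) * df(x), x=1.0)
print(root, math.sqrt(2))
```

The step size matters: near √2 we have g″(√2) = 16, so each step multiplies the error by roughly 1 − 0.05·16 = 0.2. With a step of 0.2 that factor becomes 1 − 0.2·16 = −2.2, and the iteration no longer converges to the root.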

Iterative Refinement in Continuous Optimization
• If second derivatives are available, we can use Newton’s method: locally approximate the function as a quadratic and jump to that quadratic’s optimum.
• Typically converges in fewer steps.
• Each step is more computationally intensive, though.

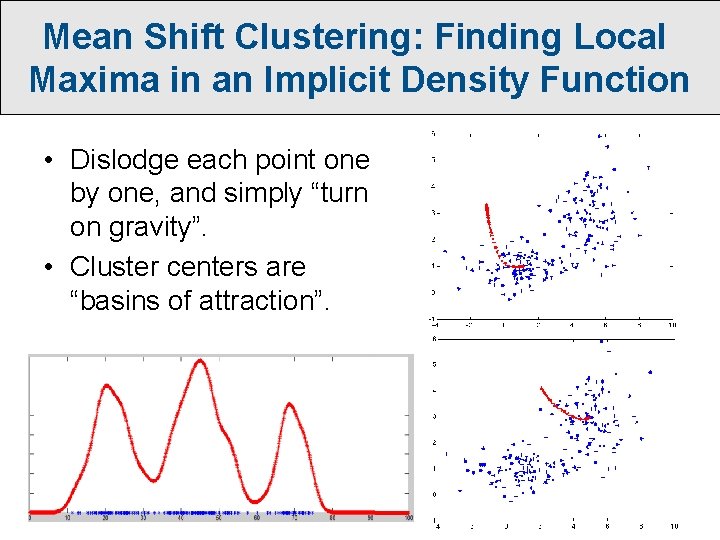
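A minimal 1D sketch of Newton's method for optimization: fitting a quadratic at x using F′(x) and F″(x) and jumping to its optimum gives the update x ← x − F′(x)/F″(x). The test function F(x) = eˣ − 2x (minimum at x = ln 2) and the name `newton_minimize` are illustrative choices.

```python
import math


def newton_minimize(d1, d2, x, tol=1e-12, max_iter=100):
    """Newton's method: jump to the optimum of the local quadratic model."""
    for k in range(1, max_iter + 1):
        step = d1(x) / d2(x)  # optimum of the quadratic fit through x
        x -= step
        if abs(step) < tol:
            return x, k  # converged after k steps
    return x, max_iter


# Minimize F(x) = e^x - 2x: F'(x) = e^x - 2 and F''(x) = e^x, so the minimum is x = ln 2.
x_min, steps = newton_minimize(lambda x: math.exp(x) - 2, math.exp, x=0.0)
print(x_min, math.log(2), steps)
```

Near the optimum the error is roughly squared at each step, which is why Newton's method typically needs far fewer iterations than gradient descent, at the price of computing second derivatives (a Hessian, in higher dimensions).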

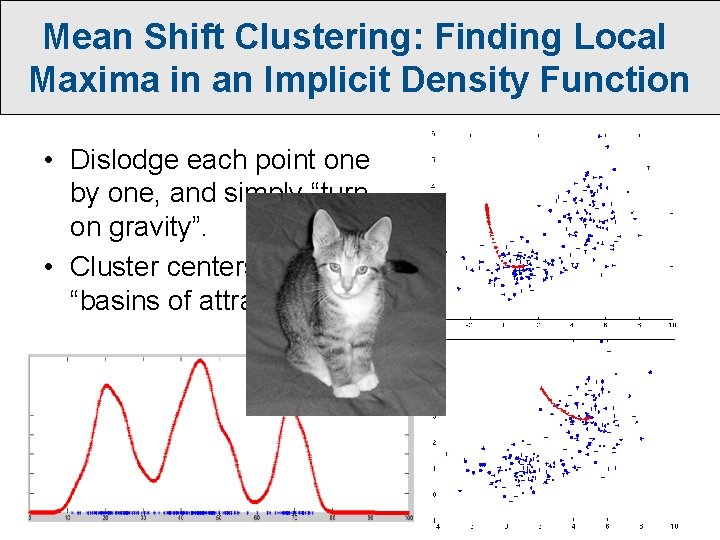

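Mean Shift Clustering: Finding Local Maxima in an Implicit Density Function
• Dislodge each point one by one and simply “turn on gravity”: the point repeatedly moves to the (kernel-weighted) mean of the data points around it, i.e., uphill in the implicit density.
• Cluster centers are the local maxima of the density; all points that climb to the same maximum (its “basin of attraction”) form one cluster.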


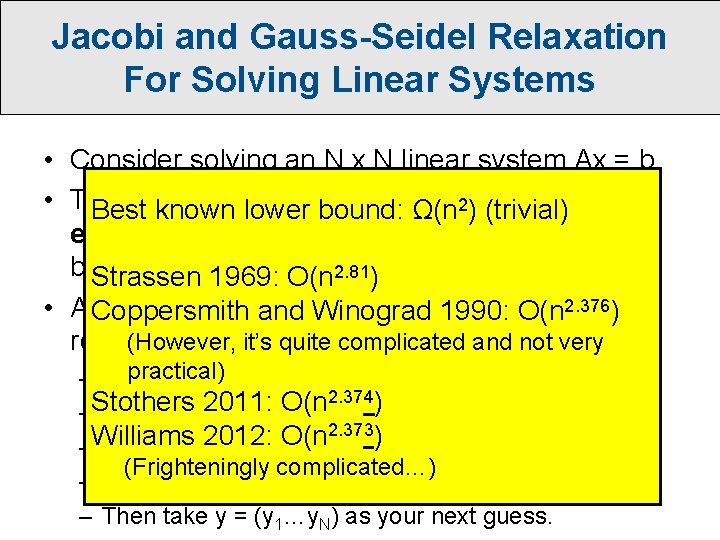
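A minimal 1D mean-shift sketch, assuming a Gaussian kernel with a hand-picked bandwidth (both are assumptions; the lecture does not fix a kernel). Each point repeatedly moves to the kernel-weighted mean of the original data until it stops moving; points whose final positions coincide are put in the same cluster. The names `mean_shift_1d` and `group_modes` are hypothetical.

```python
import math


def mean_shift_1d(points, bandwidth=1.0, tol=1e-8, max_iter=1000):
    """Move each point uphill in a Gaussian kernel density estimate."""
    modes = []
    for x in points:
        for _ in range(max_iter):
            # Weight every data point by a Gaussian kernel centered at x.
            w = [math.exp(-((x - p) ** 2) / (2 * bandwidth ** 2)) for p in points]
            new_x = sum(wi * p for wi, p in zip(w, points)) / sum(w)
            moved = abs(new_x - x)
            x = new_x
            if moved < tol:
                break
        modes.append(x)  # the local maximum this point climbed to
    return modes


def group_modes(modes, merge_tol=1e-3):
    """Give the same label to points whose modes (nearly) coincide."""
    centers, labels = [], []
    for m in modes:
        for i, c in enumerate(centers):
            if abs(m - c) < merge_tol:
                labels.append(i)
                break
        else:
            centers.append(m)
            labels.append(len(centers) - 1)
    return centers, labels


data = [1.0, 1.2, 0.8, 5.0, 5.3, 4.9]
centers, labels = group_modes(mean_shift_1d(data))
print(centers, labels)
```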

Jacobi and Gauss-Seidel Relaxation for Solving Linear Systems
• Consider solving an N × N linear system Ax = b.
• This normally takes O(N³) time with Gaussian elimination, although in theory it can be done a bit faster…
  – Strassen 1969: O(N^2.81)
  – Coppersmith and Winograd 1990: O(N^2.376) (however, it’s quite complicated and not very practical)
  – Stothers 2011: O(N^2.374)
  – Williams 2012: O(N^2.373) (frighteningly complicated…)
  – Best known lower bound: Ω(N²) (trivial)
• A much faster solution in practice uses iterative refinement:
  – Guess an initial solution x = (x1…xN).
  – Solve equation 1 for x1 (holding the other variables at their guessed values) to get y1.
  – Solve equation 2 for x2 to get y2.
  – Etc…
  – Then take y = (y1…yN) as your next guess.
• This is Jacobi relaxation; Gauss-Seidel relaxation instead uses each new yi immediately when solving the equations that follow. Convergence is guaranteed when, for example, A is strictly diagonally dominant.

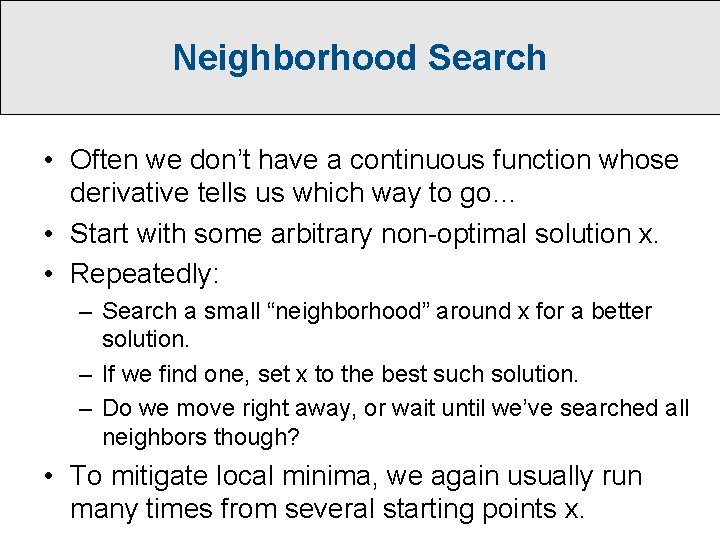
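A minimal sketch of both relaxation schemes in plain Python (lists of lists, no NumPy). The 3 × 3 system is a hypothetical, strictly diagonally dominant example whose exact solution is (1, 2, 3); a fixed iteration count stands in for a real convergence test.

```python
def jacobi(A, b, x, iters=50):
    """Jacobi relaxation: every y_i is computed from the previous guess x."""
    n = len(b)
    for _ in range(iters):
        y = [0.0] * n
        for i in range(n):
            # Solve equation i for x_i, holding the other variables at their old values.
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            y[i] = (b[i] - s) / A[i][i]
        x = y  # y becomes the next guess
    return x


def gauss_seidel(A, b, x, iters=50):
    """Gauss-Seidel relaxation: each new value is used immediately."""
    x = list(x)
    n = len(b)
    for _ in range(iters):
        for i in range(n):
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x[i] = (b[i] - s) / A[i][i]  # overwrite in place
    return x


# Strictly diagonally dominant system with exact solution (1, 2, 3).
A = [[4, -1, 0], [-1, 4, -1], [0, -1, 4]]
b = [2, 4, 10]
print(jacobi(A, b, [0, 0, 0]))
print(gauss_seidel(A, b, [0, 0, 0]))
```

Each sweep costs O(N²) for a dense matrix (and much less for a sparse one), so when only a modest number of sweeps is needed this beats the O(N³) of Gaussian elimination.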

Neighborhood Search
• Often we don’t have a continuous function whose derivative tells us which way to go…
• Start with some arbitrary non-optimal solution x.
• Repeatedly:
  – Search a small “neighborhood” around x for a better solution.
  – If we find one, set x to the best such solution.
  – Do we move as soon as we find an improving neighbor, or only after searching all neighbors?
• To mitigate local minima, we again usually run the search many times from several different starting points x.

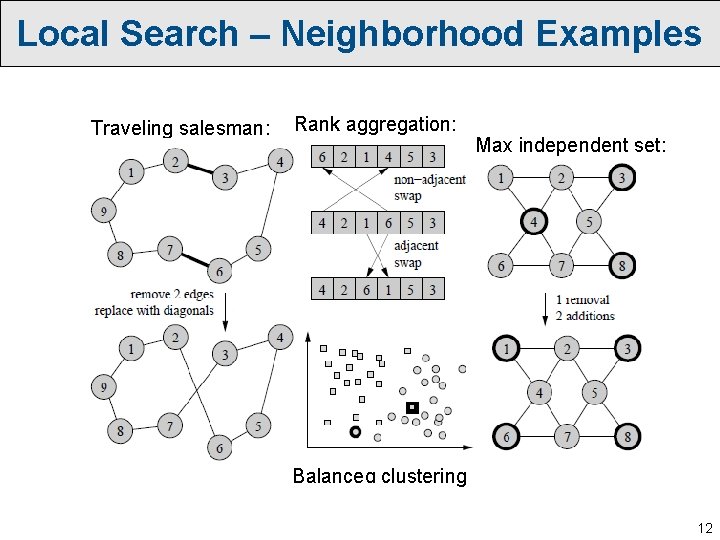
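A generic neighborhood-search sketch with multiple restarts. The `first_improvement` flag covers both answers to the "move right away or scan all neighbors?" question. The toy landscape (solutions are indices 0..7, neighbors differ by one) and all function names are hypothetical, chosen so that a single run gets stuck.

```python
def neighborhood_search(x, neighbors, cost, first_improvement=False):
    """Move to a better neighbor until none exists (a local minimum)."""
    current = cost(x)
    while True:
        best_y, best_c = None, current
        for y in neighbors(x):
            c = cost(y)
            if c < best_c:
                best_y, best_c = y, c
                if first_improvement:
                    break  # move right away instead of scanning every neighbor
        if best_y is None:
            return x, current  # no neighbor is better: local minimum
        x, current = best_y, best_c


def multistart(starts, neighbors, cost, **kwargs):
    """Run the search from several starting points and keep the best result."""
    results = [neighborhood_search(s, neighbors, cost, **kwargs) for s in starts]
    return min(results, key=lambda r: r[1])


landscape = [5, 3, 4, 6, 2, 7, 1, 8]  # hypothetical costs of solutions 0..7


def cost(i):
    return landscape[i]


def neighbors(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(landscape)]


print(neighborhood_search(0, neighbors, cost))  # (1, 3): stuck in a local minimum
print(multistart([0, 3, 7], neighbors, cost))   # (6, 1): the global minimum
```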

Local Search – Neighborhood Examples
• Traveling salesman
• Rank aggregation
• Max independent set
• Balanced clustering

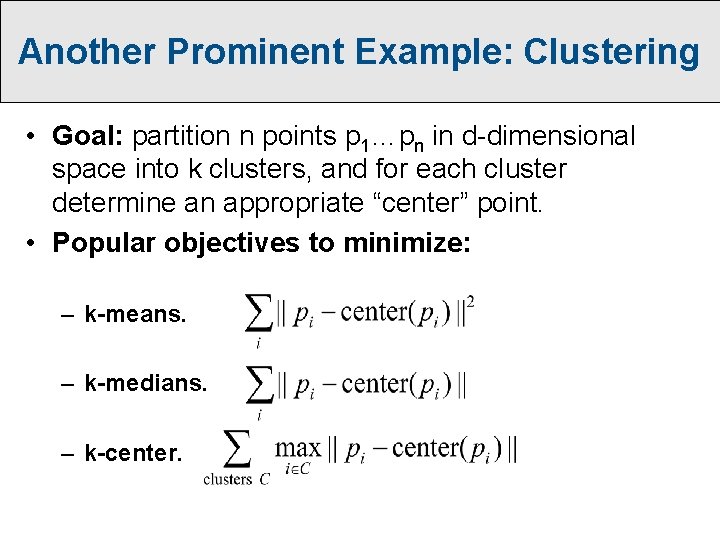
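For the traveling salesman problem, one common neighborhood (an assumption here; the slide's figure is not recoverable) is the 2-opt move: remove two edges of the tour and reconnect it by reversing the segment between them. A minimal sketch that applies improving 2-opt moves until none remains; `two_opt` and `tour_length` are hypothetical names, and `math.dist` needs Python 3.8+.

```python
import math


def tour_length(tour, pts):
    """Total length of the closed tour visiting pts in the given order."""
    n = len(tour)
    return sum(math.dist(pts[tour[i]], pts[tour[(i + 1) % n]]) for i in range(n))


def two_opt(tour, pts):
    """Apply improving 2-opt moves until the tour is 2-opt locally optimal."""
    tour = list(tour)
    n = len(tour)
    improved = True
    while improved:
        improved = False
        for i in range(n - 1):
            for j in range(i + 2, n):
                if i == 0 and j == n - 1:
                    continue  # these two edges share a city
                a, b = pts[tour[i]], pts[tour[i + 1]]
                c, d = pts[tour[j]], pts[tour[(j + 1) % n]]
                # Change in length if edges (a,b),(c,d) become (a,c),(b,d).
                delta = math.dist(a, c) + math.dist(b, d) - math.dist(a, b) - math.dist(c, d)
                if delta < -1e-12:
                    tour[i + 1:j + 1] = reversed(tour[i + 1:j + 1])
                    improved = True
    return tour


square = [(0, 0), (1, 0), (1, 1), (0, 1)]
best = two_opt([0, 2, 1, 3], square)  # start from a self-crossing tour
print(best, tour_length(best, square))  # [0, 1, 2, 3] 4.0
```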

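Another Prominent Example: Clustering
• Goal: partition n points p1…pn in d-dimensional space into k clusters, and for each cluster determine an appropriate “center” point.
• Popular objectives to minimize:
  – k-means: the sum of squared distances from each point to its cluster center.
  – k-medians: the sum of (unsquared) distances from each point to its cluster center.
  – k-center: the maximum distance from any point to its cluster center.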

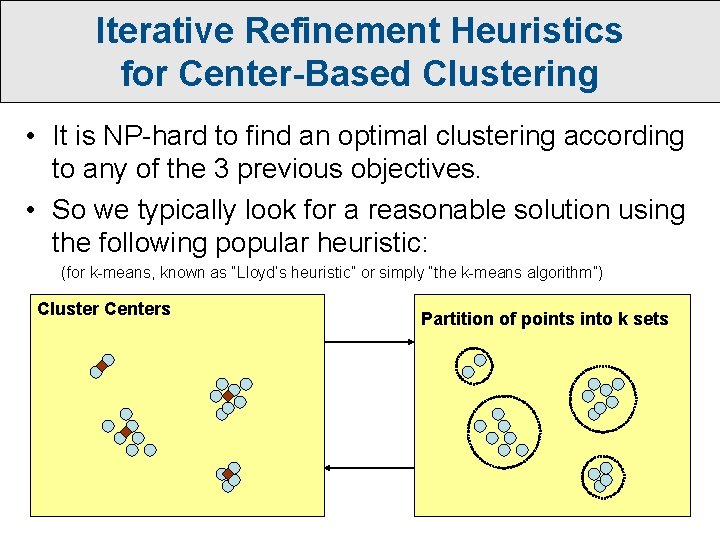
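A small sketch that evaluates the three objectives for a given set of centers, assuming Euclidean distance and that each point belongs to its nearest center. The name `clustering_costs` and the sample data are hypothetical.

```python
import math


def clustering_costs(points, centers):
    """Evaluate k-means, k-medians, and k-center costs (nearest-center assignment)."""
    # Distance from each point to its nearest center.
    d = [min(math.dist(p, c) for c in centers) for p in points]
    return {
        "k-means": sum(x * x for x in d),  # sum of squared distances
        "k-medians": sum(d),               # sum of distances
        "k-center": max(d),                # worst-case distance
    }


print(clustering_costs([(0, 0), (0, 3), (10, 0)], [(0, 0), (10, 0)]))
# {'k-means': 9.0, 'k-medians': 3.0, 'k-center': 3.0}
```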

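Iterative Refinement Heuristics for Center-Based Clustering
• It is NP-hard to find an optimal clustering according to any of the 3 previous objectives.
• So we typically look for a reasonable solution using the following popular heuristic (for k-means, known as “Lloyd’s heuristic” or simply “the k-means algorithm”), which alternates between two steps:
  – Cluster centers → partition: assign each point to its nearest center, splitting the points into k sets.
  – Partition → cluster centers: recompute the best center for each set (for k-means, the mean of its points).
  – Repeat until the partition stops changing.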
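A minimal sketch of Lloyd's heuristic for k-means on 2D points, with initial centers supplied by the caller (how to choose them is left open here; the sample data and starting centers are hypothetical). An empty cluster simply keeps its old center, which is one of several reasonable conventions.

```python
import math


def lloyd_kmeans(points, centers, max_iter=100):
    """Alternate nearest-center assignment and mean updates until stable."""
    centers = [tuple(c) for c in centers]
    clusters = []
    for _ in range(max_iter):
        # Step 1 (centers -> partition): assign each point to its nearest center.
        clusters = [[] for _ in centers]
        for p in points:
            k = min(range(len(centers)), key=lambda i: math.dist(p, centers[i]))
            clusters[k].append(p)
        # Step 2 (partition -> centers): move each center to its cluster's mean.
        new_centers = []
        for c, members in zip(centers, clusters):
            if members:
                new_centers.append(tuple(sum(v) / len(members) for v in zip(*members)))
            else:
                new_centers.append(c)  # keep an empty cluster's center in place
        if new_centers == centers:
            break  # nothing changed: a local optimum of the k-means objective
        centers = new_centers
    return centers, clusters


pts = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
centers, clusters = lloyd_kmeans(pts, [(0, 0), (0, 1)])  # deliberately poor start
print(centers)
```

Each step can only lower (or keep) the k-means cost, so the loop terminates, but the result is only a local optimum and depends on the starting centers.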

Tabu Search
• Like neighborhood search, except that you are allowed to move to worse solutions.
• However, we keep a “tabu list” of recently visited solutions, to prevent us from immediately turning around and falling back into the same locally optimal solution.
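A minimal tabu-search sketch: at every step it moves to the best neighbor that is not on the tabu list, even if that neighbor is worse, and it remembers the best solution seen. The fixed-length tabu list (a `collections.deque` with `maxlen`), its size of 5, and the toy landscape are illustrative assumptions. On this landscape, plain neighborhood search started at index 0 stops at the local minimum at index 1.

```python
from collections import deque


def tabu_search(x, neighbors, cost, tabu_size=5, max_iter=100):
    """Always move to the best non-tabu neighbor; remember the best solution seen."""
    best = x
    tabu = deque([x], maxlen=tabu_size)  # recently visited solutions
    for _ in range(max_iter):
        candidates = [y for y in neighbors(x) if y not in tabu]
        if not candidates:
            break  # every neighbor is tabu
        x = min(candidates, key=cost)  # may be worse than the current solution
        tabu.append(x)  # the oldest entry drops off automatically
        if cost(x) < cost(best):
            best = x
    return best


landscape = [5, 3, 4, 6, 2, 7, 1, 8]  # hypothetical costs of solutions 0..7


def cost(i):
    return landscape[i]


def neighbors(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(landscape)]


print(tabu_search(0, neighbors, cost))  # 6, the global minimum
```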

Simulated Annealing
• Like neighborhood search, except that you are allowed to move to worse solutions (with the goal of escaping local minima).
• The probability of moving to a worse solution shrinks the worse that solution is (a common choice is exp(−Δ/T) for a cost increase Δ at “temperature” T), and this probability also decreases over time as T is lowered according to a “cooling” schedule.
• Think of this as a “random walk” through different solutions, in which moving in an improving direction is much more likely.

Genetic Algorithms
• Start with a population of initial solutions.
• Repeatedly:
  – Kill off some number of the least fit solutions (probability of survival proportional to “fitness”).
  – Replace them with new solutions obtained by “mating” pairs of surviving solutions (crossover), or by mutating single solutions.
• Getting all the parameters tuned just right can take some work, but these are quite effective for a wide range of problems…


Crossover Example
(Figure labels: “Sardine Cookies”; “Liver and Chocolate Chips”.)
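A minimal genetic-algorithm sketch on the toy "OneMax" problem (fitness = number of 1 bits in a bit string), which is an illustrative choice. It simplifies the survival step: the less fit half is killed off deterministically (truncation), and parents are then drawn from the survivors with probability proportional to fitness. Children come from one-point crossover followed by bit-flip mutation; all names and parameter values are assumptions.

```python
import random


def onemax(bits):
    return sum(bits)  # toy fitness: number of 1 bits


def genetic_algorithm(fitness, n_bits=20, pop_size=20, generations=100,
                      mut_rate=0.02, seed=0):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    history = []  # best fitness in each generation
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        history.append(fitness(pop[0]))
        survivors = pop[: pop_size // 2]  # kill off the less fit half
        weights = [fitness(s) + 1 for s in survivors]  # +1 avoids all-zero weights
        children = []
        while len(survivors) + len(children) < pop_size:
            mom, dad = rng.choices(survivors, weights=weights, k=2)
            cut = rng.randrange(1, n_bits)  # one-point crossover
            child = mom[:cut] + dad[cut:]
            # Mutation: flip each bit with a small probability.
            child = [1 - bit if rng.random() < mut_rate else bit for bit in child]
            children.append(child)
        pop = survivors + children
    pop.sort(key=fitness, reverse=True)
    history.append(fitness(pop[0]))
    return pop[0], history


best, history = genetic_algorithm(onemax)
print(onemax(best), history[0], history[-1])
```

Because the best solution always survives, the best fitness never decreases from one generation to the next.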

Particle Swarm Optimization
• Particle swarm optimization (PSO) methods also maintain a population of solutions (“particles”), but:
  – No crossover.
  – Each particle moves according to a velocity that is constantly (and randomly) adjusted by a gravity-like pull toward good solutions found so far: the best position that particle has seen and the best position seen by the whole swarm.
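A minimal PSO sketch minimizing the toy function f(x) = Σ xᵢ² in 2D. The velocity update (inertia w plus random pulls toward the particle's own best and the swarm's best) is the standard form; the specific constants w = 0.729 and c1 = c2 = 1.49445, the search box, and the seed are commonly used illustrative settings, not values from the lecture.

```python
import random


def pso(f, dim=2, n_particles=30, iters=200, w=0.729, c1=1.49445, c2=1.49445,
        lo=-5.0, hi=5.0, seed=0):
    rng = random.Random(seed)
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]  # best position each particle has seen
    pbest_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]  # best position the swarm has seen
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia plus random pulls toward the personal and swarm bests.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = f(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


def sphere(x):
    return sum(v * v for v in x)  # toy objective, minimum 0 at the origin


best_pos, best_val = pso(sphere)
print(best_pos, best_val)
```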

“Amoeba Search”
• How can you use a GA-like approach to minimize a function f(x) over x in [0, 1]?
• In higher dimensions, this leads to an idea known as downhill simplex search, the Nelder-Mead algorithm, or “amoeba” search.
• Advantage: no derivative computation necessary!
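A minimal Nelder-Mead ("amoeba") sketch. It keeps a simplex of n + 1 points and repeatedly replaces the worst point by reflecting it through the centroid of the others, expanding, contracting, or shrinking the whole simplex toward the best point, using only function values and no derivatives. This is a simplified variant (inside contraction only, standard coefficients 1, 2, 0.5, 0.5); the initial-simplex size, stopping rule, and test function are assumptions.

```python
def nelder_mead(f, x0, step=0.5, iters=500, tol=1e-10):
    n = len(x0)
    # Initial simplex: x0 plus one point offset along each coordinate axis.
    simplex = [list(x0)]
    for i in range(n):
        p = list(x0)
        p[i] += step
        simplex.append(p)
    for _ in range(iters):
        simplex.sort(key=f)
        best, worst = simplex[0], simplex[-1]
        if abs(f(worst) - f(best)) < tol:
            break  # the simplex has (nearly) collapsed onto a minimum
        c = [sum(p[d] for p in simplex[:-1]) / n for d in range(n)]  # centroid without worst
        xr = [c[d] + (c[d] - worst[d]) for d in range(n)]  # reflection
        if f(xr) < f(best):
            xe = [c[d] + 2 * (c[d] - worst[d]) for d in range(n)]  # expansion
            simplex[-1] = xe if f(xe) < f(xr) else xr
        elif f(xr) < f(simplex[-2]):
            simplex[-1] = xr
        else:
            xc = [c[d] + 0.5 * (worst[d] - c[d]) for d in range(n)]  # contraction
            if f(xc) < f(worst):
                simplex[-1] = xc
            else:
                # Shrink every vertex halfway toward the best one.
                simplex = [best] + [[best[d] + 0.5 * (p[d] - best[d]) for d in range(n)]
                                    for p in simplex[1:]]
    return min(simplex, key=f)


def bowl(p):
    return (p[0] - 1) ** 2 + (p[1] + 2) ** 2  # toy objective, minimum at (1, -2)


print(nelder_mead(bowl, [0.0, 0.0]))
```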
