Lecture 13 Reactor Design Pattern 1

Overview • Blocking sockets - impact on server scalability. • Non-blocking IO in Java - the java.nio package • Involves complications due to its asynchronous nature • Impact on message parsing algorithms • Reactor design pattern • Generic server • More scalable than our earlier solutions

Sockets: internal implementation • To understand how non-blocking operations work, we first need to understand how the RTE internally manages IO. • There’s a buffer associated with each socket. • Example: write to socket: The RTE copies bytes to the internal buffer. The RTE then sends bytes from the buffer to the network. When we write, there is no guarantee that the buffer has room – if not, we wait (block).

Blocking vs Non-Blocking IO • Blocking Read: • If a thread invokes read() and the internal buffer is empty • The thread is blocked until data arrives and the read completes • Non-Blocking Read: • Check if the socket has some data available to read. • Read the available data from the socket without blocking. Any amount of data may be returned. • RTE copies the bytes available in the socket's input buffer to a process buffer, and returns the number of copied bytes • If zero bytes are read, the call still returns immediately and the process buffer is left empty!

Java Non-Blocking Sockets • Blocking Write: • If the output buffer is full – the process invoking write() will be blocked until the output buffer has enough free space for the data • Non-Blocking Write: • Check if the socket can send some data. • Write data to the socket without blocking. Return immediately – even if not all of the data was written! • RTE copies as many bytes as possible, and returns the number of bytes successfully written. • Writing zero bytes is a successful invocation – it means the buffer is full!
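The return-value behaviour described above can be tried without a network. The following is a minimal sketch, assuming a `java.nio.channels.Pipe` as a stand-in for a socket (the class name `NonBlockingPipeDemo` is hypothetical): a non-blocking read on an empty pipe returns 0 at once, and the loop afterwards shows the "keep retrying" style that readiness notification is meant to replace.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.charset.StandardCharsets;

// Hypothetical demo class; a Pipe stands in for a socket connection.
final class NonBlockingPipeDemo {
    /** Returns {bytes read from the empty pipe, bytes written, bytes read afterwards}. */
    static int[] run() {
        try {
            Pipe pipe = Pipe.open();
            try (Pipe.SourceChannel source = pipe.source();
                 Pipe.SinkChannel sink = pipe.sink()) {
                source.configureBlocking(false); // reads on this end never block
                ByteBuffer in = ByteBuffer.allocate(64);

                // Nothing has been written yet: read() returns 0 instead of waiting.
                int emptyRead = source.read(in);

                ByteBuffer out = ByteBuffer.wrap("hello".getBytes(StandardCharsets.US_ASCII));
                int written = sink.write(out); // the sink is still in blocking mode

                // Busy-wait until everything arrived; read() may return 0 several times.
                int total = 0;
                while (total < written) {
                    total += source.read(in);
                }
                return new int[] {emptyRead, written, total};
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        int[] r = run();
        System.out.println("empty read=" + r[0] + ", written=" + r[1] + ", read=" + r[2]);
    }
}
```

The retry loop wastes CPU while it spins, which is the motivation for asking the RTE to tell us when a channel is ready.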

Java Non-Blocking Sockets • Blocking Accept: • Process waits (blocks) until there is a connection. • Non-Blocking Accept: • Check if a new connection is requested. If so, accept it, otherwise return immediately.

RTE perspective • When do we try? How many times? • We need someone to notify us! • We partition the solution into two logical parts: 1. Readiness notification 2. Non-blocking input/output. • Modern RTEs supply both mechanisms: • Is data available for read() in the socket? • Is the socket ready to send some data? • Is there a new connection pending for the socket?

Disadvantages of Thread Per Client • It's wasteful • Creating a new Thread is relatively expensive. • Each thread requires a fair amount of memory. • Threads are blocked most of the time waiting for network IO. • It's not scalable • A Multi-Threaded server can't grow to accommodate hundreds of concurrent requests • It's also vulnerable to Denial Of Service attacks • Poor availability • It takes a long time to create a new thread for each new client. • The response time degrades as the number of clients rises. • Solution • The Reactor Pattern is another (better) design for handling several concurrent clients.

Reactor Pattern – the idea • It's based on the observation: if threads do not wait for Network IO, a single thread could easily handle thousands of client requests alone. • Uses Non-Blocking IO, so threads don't waste time waiting. • Have one thread in charge of the communication: • Accepting new connections and handling network related IO. • As the Network is non-blocking, read, write and accept operations "take no time", and a single thread is enough for all the clients. • Have a fixed number of threads, in charge of the protocol. • These threads perform the message framing, decoding and encoding • And perform message processing to create responses

Java Non-blocking IO • In the thread-per-client solution, the server gets stuck on • msg = in.readLine() • clientSocket = serverSocket.accept() • write() • Java provides an efficient Input/Output package, which supports Non-blocking IO. • Provides readiness notification. • Fundamental NIO Concepts: • Channels • Buffers • Selectors

Channels [the new Sockets] • SocketChannel: • Same as a regular Socket object. • Difference: read(), write() can be non-blocking. • ServerSocketChannel: • accept() returns a SocketChannel • Same as a regular ServerSocket object. • Difference: accept() can be non-blocking. Checks if a client is trying to connect; if so returns a new SocketChannel, otherwise returns null! • By default, new channels are in blocking mode. They must be set manually to non-blocking mode.
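As a small illustration of the last two points, here is a sketch (the class name `NonBlockingAcceptDemo` is hypothetical, and binding to an ephemeral loopback port is an assumption made to keep it self-contained) that switches a `ServerSocketChannel` to non-blocking mode and calls `accept()` while no client is connecting.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.nio.channels.ServerSocketChannel;
import java.nio.channels.SocketChannel;

// Hypothetical demo class.
final class NonBlockingAcceptDemo {
    /** Returns true if accept() came back with null because nobody was connecting. */
    static boolean acceptReturnsNullWhenIdle() {
        try (ServerSocketChannel server = ServerSocketChannel.open()) {
            // Port 0 asks the OS for any free port (assumption for the demo).
            server.bind(new InetSocketAddress(InetAddress.getLoopbackAddress(), 0));

            // Channels start in blocking mode; this must be set explicitly.
            server.configureBlocking(false);

            // No client is pending, so this returns immediately.
            SocketChannel client = server.accept();
            return client == null;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("accept() returned null: " + acceptReturnsNullWhenIdle());
    }
}
```

Without the `configureBlocking(false)` call the same `accept()` would wait until a client arrives.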

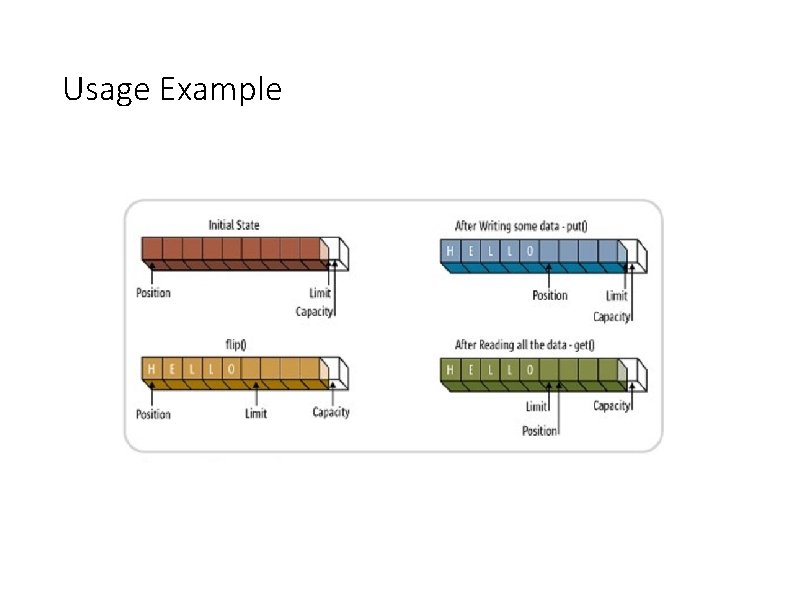

Buffers [containers] • Wrapper classes used by NIO to send and receive data through a Channel. • ByteBuffer for sending and receiving bytes. • A buffer is in “write mode” during sock.read(buff): the socket reads from the stream and writes to buff. • A buffer is in “read mode” during sock.write(buff): the socket reads from buff and writes to the stream. • In between read and write: flip().

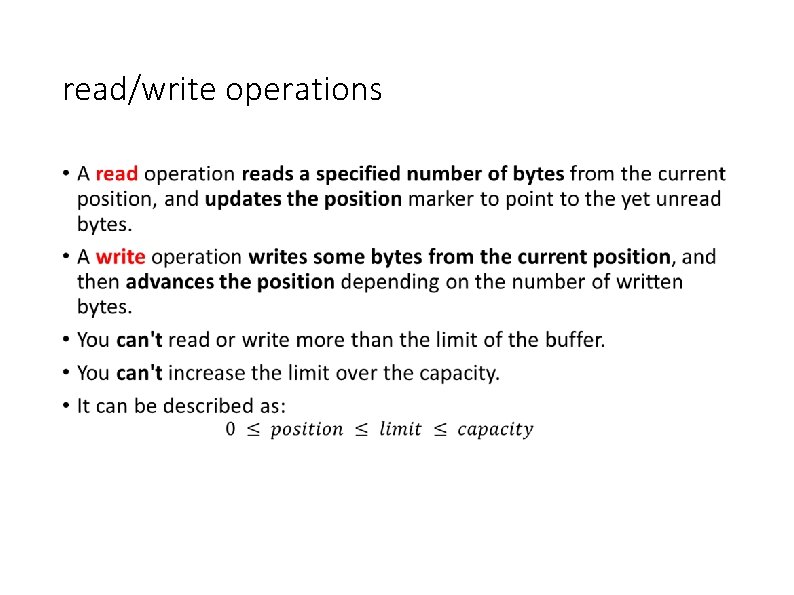

Buffer IO Operations • Reading from a channel – writing to a buffer: numBytesRead = socketChannel.read(buf); • Contents found in socketChannel are read from its internal container object to our buffer. • Writing to a channel – reading from a buffer: numBytesWritten = socketChannel.write(buf); • Contents from our buf object are written to the socketChannel’s internal container to be sent. • If read returns -1, it means that the channel is closed (end of stream); writing to a closed channel throws an exception. • Read and write operations on Buffers update the position marker accordingly.
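The return values of `read` can be observed with a short sketch. It assumes a `Pipe` in place of a socket and uses a hypothetical class name `EndOfStreamDemo`: the writer sends three bytes and closes its end, and the reader keeps reading until it sees -1.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;

// Hypothetical demo class; a Pipe stands in for a socket connection.
final class EndOfStreamDemo {
    /** Returns {total bytes read, last value returned by read()}. */
    static int[] run() {
        try {
            Pipe pipe = Pipe.open();
            try (Pipe.SourceChannel source = pipe.source()) {
                try (Pipe.SinkChannel sink = pipe.sink()) {
                    sink.write(ByteBuffer.wrap(new byte[] {1, 2, 3}));
                } // the writing end is closed here

                ByteBuffer buf = ByteBuffer.allocate(16);
                int total = 0;
                int n;
                // Each read() advances buf's position by the number of bytes copied.
                while ((n = source.read(buf)) != -1) {
                    total += n;
                }
                return new int[] {total, n};
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        int[] r = run();
        System.out.println("bytes read=" + r[0] + ", final read() result=" + r[1]);
    }
}
```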

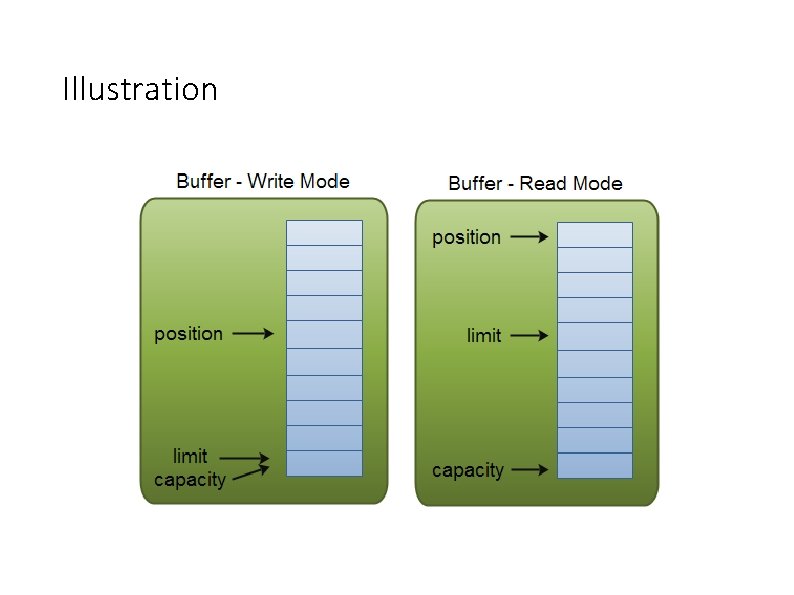

Buffer Markers • Buffers can be in “write mode” or in “read mode”. • Between writing and reading from a buffer – we should invoke flip(). • Each buffer has capacity, limit, and position markers. • Capacity: • A Buffer has a certain fixed size, also called its "capacity". • You can only write capacity bytes into the Buffer. • Once the Buffer is full, you need to empty it (read the data, or clear it) before you can write more data into it. • Position: • Writing data to buffer: • Initially the position is 0. • When a byte is written to the Buffer the position is advanced. • Reading data from buffer: • When you flip a Buffer from writing mode to reading mode, the position is reset to 0. As you read data from the Buffer you do so from position, and position is advanced.

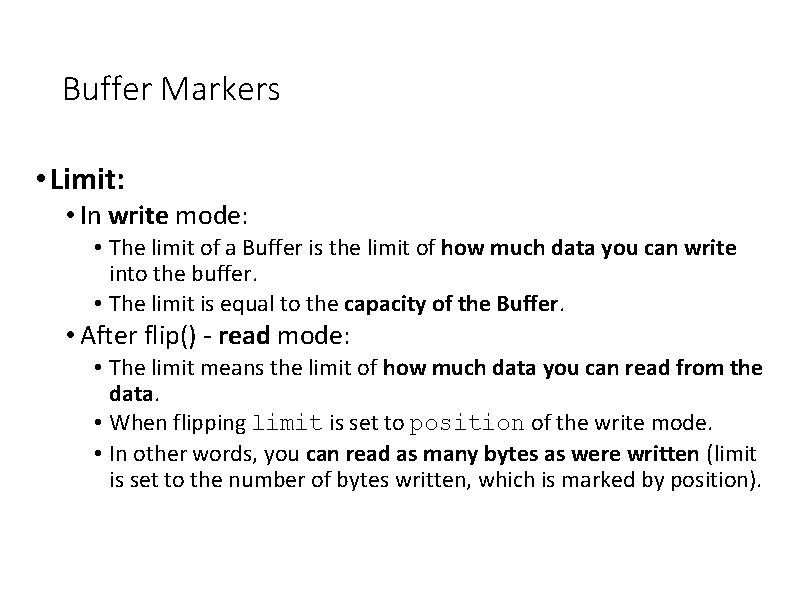

Buffer Markers • Limit: • In write mode: • The limit of a Buffer is the limit of how much data you can write into the buffer. • The limit is equal to the capacity of the Buffer. • After flip() - read mode: • The limit means the limit of how much data you can read from the buffer. • When flipping, the limit is set to the position reached in write mode. • In other words, you can read as many bytes as were written (limit is set to the number of bytes written, which is marked by position).
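To make the three markers concrete, the sketch below prints them at each stage of a small buffer's life. The class name `BufferMarkersDemo` and the 8-byte capacity are arbitrary choices for illustration.

```java
import java.nio.ByteBuffer;

// Hypothetical demo class.
final class BufferMarkersDemo {
    static String describe(ByteBuffer b) {
        return "position=" + b.position() + " limit=" + b.limit() + " capacity=" + b.capacity();
    }

    public static void main(String[] args) {
        ByteBuffer buf = ByteBuffer.allocate(8);
        System.out.println(describe(buf)); // position=0 limit=8 capacity=8

        // Write mode: every put() advances the position.
        buf.put((byte) 'a').put((byte) 'b').put((byte) 'c');
        System.out.println(describe(buf)); // position=3 limit=8 capacity=8

        // flip(): limit takes the old position, position goes back to 0.
        buf.flip();
        System.out.println(describe(buf)); // position=0 limit=3 capacity=8

        // Read mode: every get() advances the position towards the limit.
        buf.get();
        System.out.println(describe(buf)); // position=1 limit=3 capacity=8

        // clear() prepares the buffer for writing again.
        buf.clear();
        System.out.println(describe(buf)); // position=0 limit=8 capacity=8
    }
}
```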

Illustration

Usage Example

read/write operations

Buffer Flipping • The flip() method switches a Buffer from writing mode to reading mode. • Calling flip() sets the position back to 0, and sets the limit to where position just was. • The position marker now marks the reading position, and limit marks how many bytes were written into the buffer - the limit of how many bytes, chars etc. can be read. • Example: 1. Create a ByteBuffer. 2. Write data into the buffer. 3. Call flip(). 4. Send the buffer to the channel.
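The four steps of the example can be sketched as follows. This is only an illustration: the channel here wraps an in-memory `ByteArrayOutputStream` (an assumption, so the demo needs no socket), and `FlipAndSendDemo` is a hypothetical name.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Channels;
import java.nio.channels.WritableByteChannel;
import java.nio.charset.StandardCharsets;

// Hypothetical demo class; the channel writes into memory instead of a socket.
final class FlipAndSendDemo {
    /** Sends the message through a channel and returns what the channel received. */
    static String send(String message) {
        ByteArrayOutputStream received = new ByteArrayOutputStream();
        try (WritableByteChannel channel = Channels.newChannel(received)) {
            // 1. Create a ByteBuffer (64 bytes is an arbitrary demo size;
            //    a longer message would throw BufferOverflowException).
            ByteBuffer buf = ByteBuffer.allocate(64);

            // 2. Write data into the buffer (write mode).
            buf.put(message.getBytes(StandardCharsets.UTF_8));

            // 3. Flip: position=0, limit=number of bytes just written.
            buf.flip();

            // 4. Send. write() may take only part of the buffer, so loop until drained.
            while (buf.hasRemaining()) {
                channel.write(buf);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return new String(received.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        System.out.println(send("hello"));
    }
}
```

If step 3 is skipped, the channel would be handed the unused region between the position and the capacity rather than the bytes that were just written.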

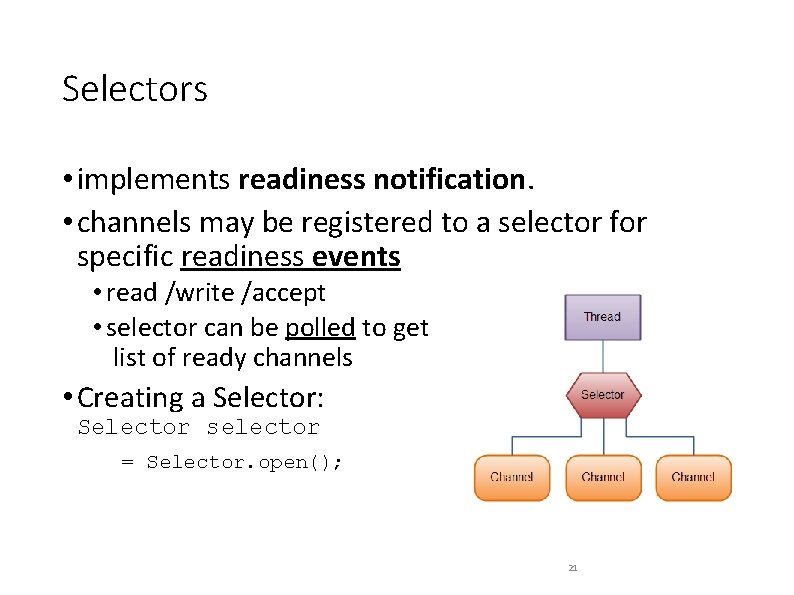

Selectors • Implement readiness notification. • Channels may be registered to a selector for specific readiness events • read / write / accept • A selector can be polled to get the list of ready channels • Creating a Selector: Selector selector = Selector.open();

Selectors • A channel that is ready for read indicates that a read operation will not block and will normally return some bytes. • A channel that is ready for write indicates that a write operation will be able to write some bytes. • A channel that is ready for accept indicates that accept() will result in a new connection. • Example: Selector selector = Selector.open(); channel.configureBlocking(false); SelectionKey key = channel.register(selector, SelectionKey.OP_READ);

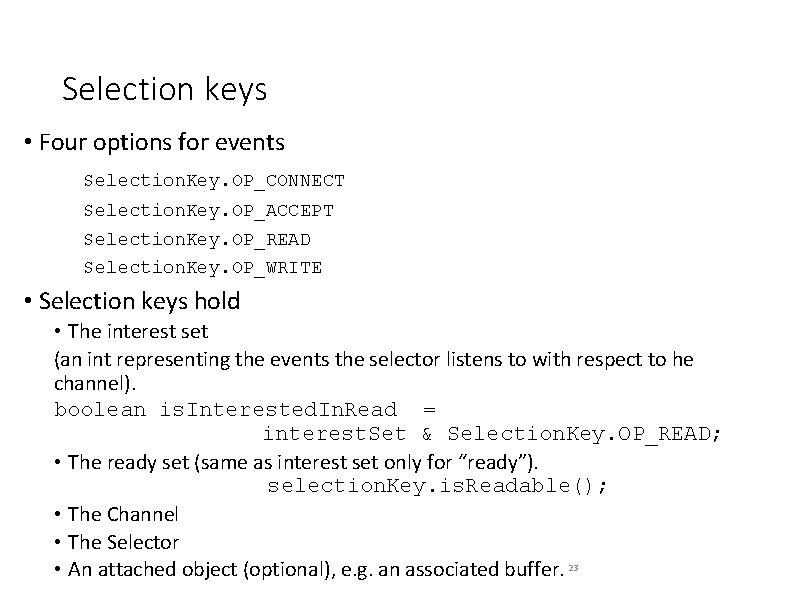

Selection keys • Four options for events: SelectionKey.OP_CONNECT, SelectionKey.OP_ACCEPT, SelectionKey.OP_READ, SelectionKey.OP_WRITE • Selection keys hold • The interest set (an int representing the events the selector listens to with respect to the channel). boolean isInterestedInRead = (interestSet & SelectionKey.OP_READ) != 0; • The ready set (same as the interest set, only for “ready”). selectionKey.isReadable(); • The Channel • The Selector • An attached object (optional), e.g. an associated buffer.
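Since the interest set is a plain int bit mask, testing and changing it is ordinary bit arithmetic. A minimal sketch (the class and method names are hypothetical; only the `SelectionKey.OP_*` constants come from the JDK):

```java
import java.nio.channels.SelectionKey;

// Hypothetical demo class.
final class InterestSetDemo {
    /** True if the given operation bit is switched on in the interest set. */
    static boolean isInterestedIn(int interestSet, int op) {
        return (interestSet & op) != 0;
    }

    public static void main(String[] args) {
        // Combine events with bitwise OR.
        int interestSet = SelectionKey.OP_READ | SelectionKey.OP_WRITE;
        System.out.println(isInterestedIn(interestSet, SelectionKey.OP_READ));   // true
        System.out.println(isInterestedIn(interestSet, SelectionKey.OP_ACCEPT)); // false

        // Remove an event by clearing its bit.
        interestSet &= ~SelectionKey.OP_WRITE;
        System.out.println(isInterestedIn(interestSet, SelectionKey.OP_WRITE));  // false
    }
}
```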

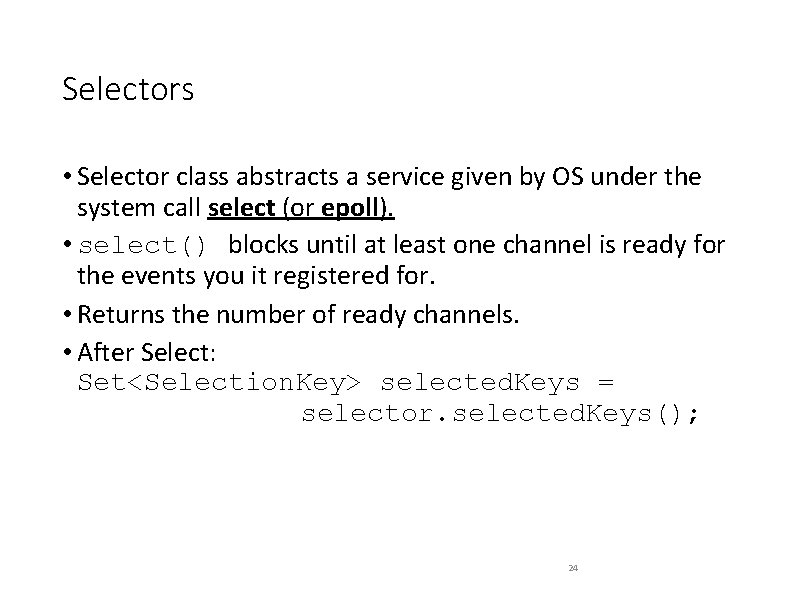

Selectors • The Selector class abstracts a service given by the OS under the system call select (or epoll). • select() blocks until at least one channel is ready for the events it was registered for. • Returns the number of ready channels. • After select: Set<SelectionKey> selectedKeys = selector.selectedKeys();
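A small sketch of readiness notification in action, again assuming a `Pipe` instead of a socket (class name `SelectDemo` is hypothetical). It uses `selectNow()`, the non-blocking variant of `select()`, before any data exists, and `select(timeout)` after one byte has been written.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;

// Hypothetical demo class; a Pipe stands in for a socket connection.
final class SelectDemo {
    /** Returns {ready channels before any write, ready channels after a write}. */
    static int[] run() {
        try (Selector selector = Selector.open()) {
            Pipe pipe = Pipe.open();
            try (Pipe.SourceChannel source = pipe.source();
                 Pipe.SinkChannel sink = pipe.sink()) {
                // Only non-blocking channels may be registered with a selector.
                source.configureBlocking(false);
                source.register(selector, SelectionKey.OP_READ);

                // Nothing to read yet, so no channel is reported as ready.
                int before = selector.selectNow();

                sink.write(ByteBuffer.wrap(new byte[] {42}));

                // Blocks (for at most 5 seconds) until the source becomes readable.
                int after = selector.select(5000);
                return new int[] {before, after};
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        int[] r = run();
        System.out.println("ready before write=" + r[0] + ", ready after write=" + r[1]);
    }
}
```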

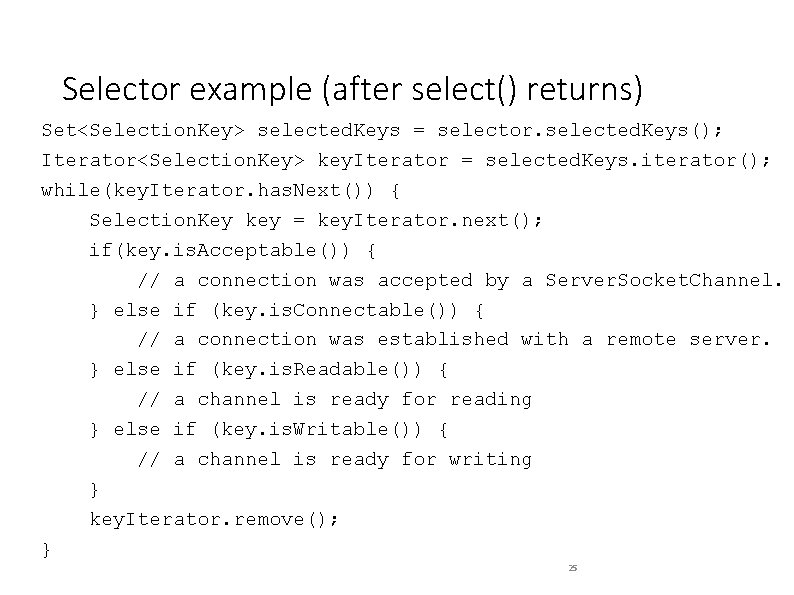

Selector example (after select() returns)

    Set<SelectionKey> selectedKeys = selector.selectedKeys();
    Iterator<SelectionKey> keyIterator = selectedKeys.iterator();
    while (keyIterator.hasNext()) {
        SelectionKey key = keyIterator.next();
        if (key.isAcceptable()) {
            // a connection was accepted by a ServerSocketChannel.
        } else if (key.isConnectable()) {
            // a connection was established with a remote server.
        } else if (key.isReadable()) {
            // a channel is ready for reading
        } else if (key.isWritable()) {
            // a channel is ready for writing
        }
        keyIterator.remove();
    }

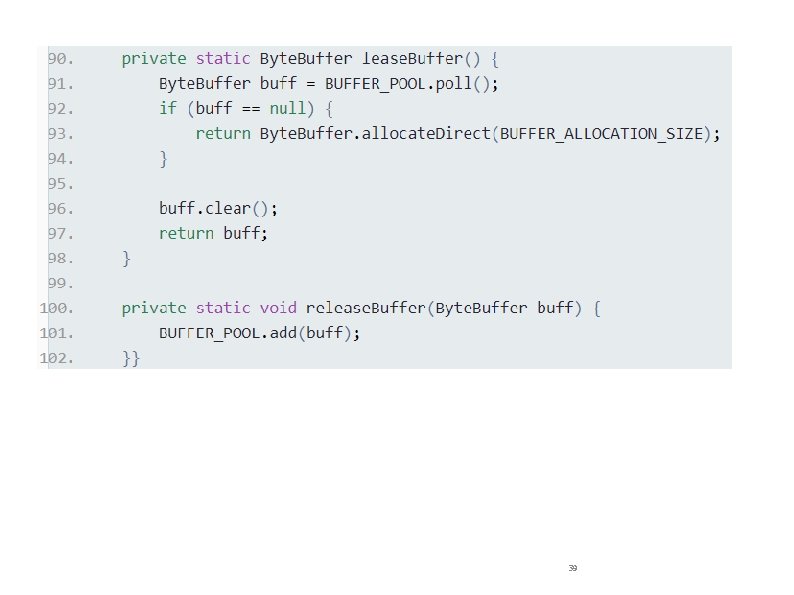

Reactor IO • The reactor accepts new connections. • If bytes are ready to be read from a socket, the reactor reads them and transfers them to the protocol (previous lecture). • If a socket is ready for writing, the reactor checks if there is a write request - if so, the reactor sends the data.

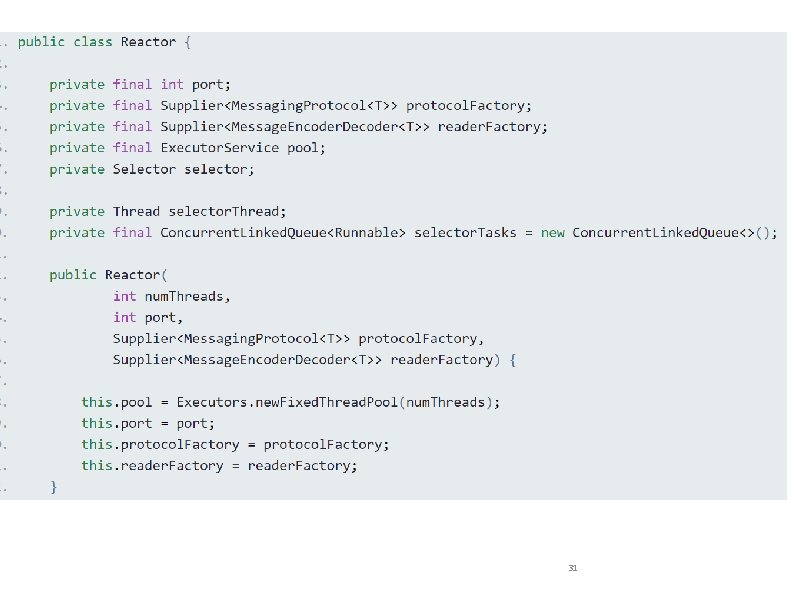

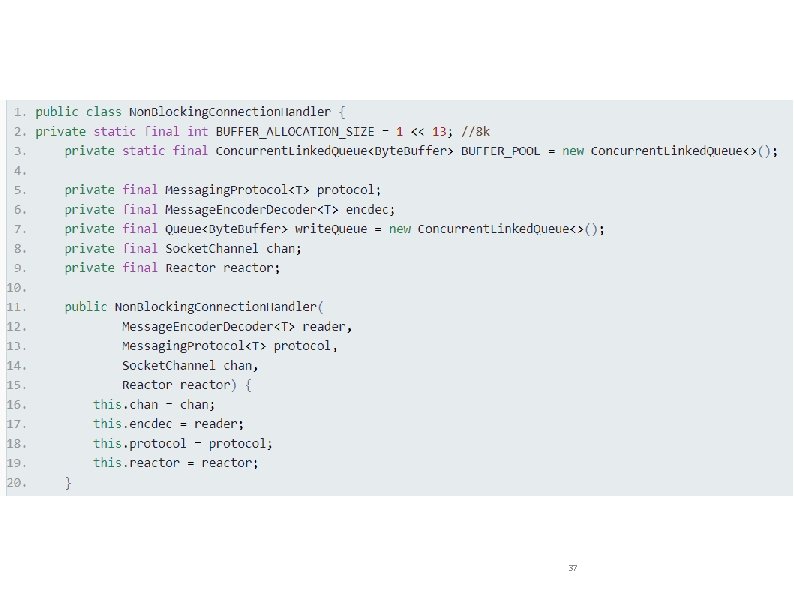

Reactor class (a server class) • Has a port. • Has an abstract protocol and message decoder. • Holds a thread pool and the main thread. • Holds a selector. • Holds a Task (“Runnable”) queue. • It defines a NonBlockingConnectionHandler. • Handles each client. • The tasks of processing data are performed on a different thread.

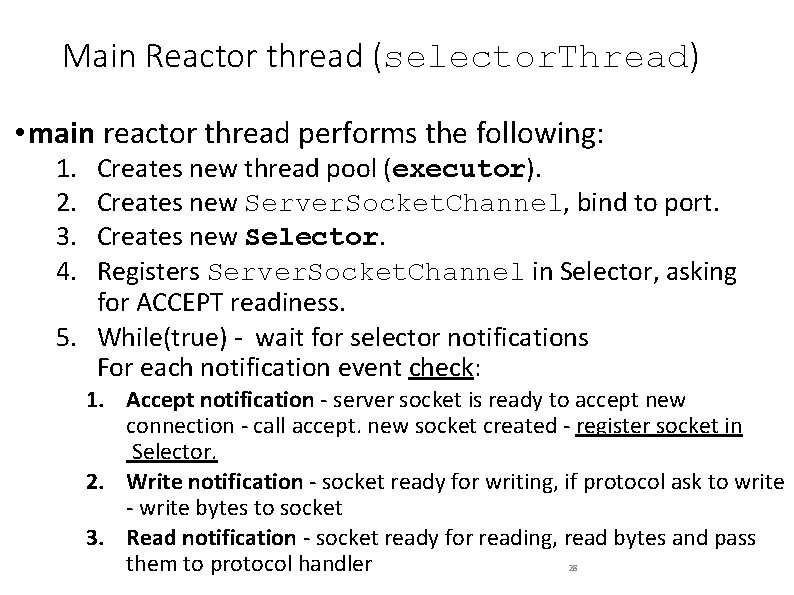

Main Reactor thread (selectorThread) • The main reactor thread performs the following: 1. Creates a new thread pool (executor). 2. Creates a new ServerSocketChannel and binds it to the port. 3. Creates a new Selector. 4. Registers the ServerSocketChannel in the Selector, asking for ACCEPT readiness. 5. while(true) - waits for selector notifications. For each notification event, check: 1. Accept notification - the server socket is ready to accept a new connection - call accept; a new socket is created - register the socket in the Selector. 2. Write notification - the socket is ready for writing; if the protocol asks to write - write bytes to the socket. 3. Read notification - the socket is ready for reading; read bytes and pass them to the protocol handler.
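The loop in steps 2 to 5 can be sketched as a tiny echo server. This is a deliberately reduced illustration, not the lecture's Reactor class: the name `MiniEchoReactor` is hypothetical, there is no thread pool and no protocol (step 1 is omitted and bytes are echoed back as-is), a single fixed-size `ByteBuffer` is attached to each key, and the `echoOnce` helper with its blocking test client is an assumption added so the sketch can be run end to end.

```java
import java.io.Closeable;
import java.io.DataInputStream;
import java.io.IOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.nio.ByteBuffer;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.channels.ServerSocketChannel;
import java.nio.channels.SocketChannel;
import java.nio.charset.StandardCharsets;
import java.util.Iterator;

// Hypothetical single-threaded echo reactor (no thread pool, no protocol).
final class MiniEchoReactor implements Runnable {
    private final Selector selector;
    private final ServerSocketChannel server;

    MiniEchoReactor() throws IOException {
        server = ServerSocketChannel.open();                           // step 2
        server.bind(new InetSocketAddress(InetAddress.getLoopbackAddress(), 0));
        server.configureBlocking(false);
        selector = Selector.open();                                    // step 3
        server.register(selector, SelectionKey.OP_ACCEPT);             // step 4
    }

    int port() {
        return server.socket().getLocalPort();
    }

    @Override
    public void run() {
        try {
            while (!Thread.currentThread().isInterrupted()) {          // step 5
                selector.select(); // returns on readiness or on interruption
                Iterator<SelectionKey> it = selector.selectedKeys().iterator();
                while (it.hasNext()) {
                    SelectionKey key = it.next();
                    it.remove();
                    if (!key.isValid()) continue;
                    if (key.isAcceptable()) {
                        accept();
                    } else {
                        if (key.isReadable()) read(key);
                        if (key.isValid() && key.isWritable()) write(key);
                    }
                }
            }
        } catch (IOException e) {
            // Sketch only: any I/O failure simply stops the reactor.
        } finally {
            close(selector); // client channels are not tracked or closed in this sketch
            close(server);
        }
    }

    private void accept() throws IOException {
        SocketChannel client = server.accept();
        if (client == null) return;
        client.configureBlocking(false);
        // The attached buffer is the per-connection state.
        client.register(selector, SelectionKey.OP_READ, ByteBuffer.allocate(1024));
    }

    private void read(SelectionKey key) throws IOException {
        SocketChannel channel = (SocketChannel) key.channel();
        ByteBuffer buf = (ByteBuffer) key.attachment();
        if (channel.read(buf) == -1) {
            channel.close(); // peer closed; closing also cancels the key
        } else if (buf.position() > 0) {
            key.interestOps(SelectionKey.OP_READ | SelectionKey.OP_WRITE);
        }
    }

    private void write(SelectionKey key) throws IOException {
        SocketChannel channel = (SocketChannel) key.channel();
        ByteBuffer buf = (ByteBuffer) key.attachment();
        buf.flip();
        channel.write(buf);
        buf.compact(); // keep unsent bytes and return to write mode
        if (buf.position() == 0) key.interestOps(SelectionKey.OP_READ);
    }

    private static void close(Closeable c) {
        try { c.close(); } catch (IOException ignored) { }
    }

    /** Starts a reactor, sends one message with a blocking client, returns the echo. */
    static String echoOnce(String message) {
        try {
            MiniEchoReactor reactor = new MiniEchoReactor();
            Thread selectorThread = new Thread(reactor, "selectorThread");
            selectorThread.start();
            byte[] out = message.getBytes(StandardCharsets.UTF_8);
            byte[] in = new byte[out.length];
            try (Socket socket = new Socket(InetAddress.getLoopbackAddress(), reactor.port())) {
                socket.setSoTimeout(5000);
                socket.getOutputStream().write(out);
                new DataInputStream(socket.getInputStream()).readFully(in);
            } finally {
                selectorThread.interrupt();
                selectorThread.join();
            }
            return new String(in, StandardCharsets.UTF_8);
        } catch (IOException | InterruptedException e) {
            throw new IllegalStateException(e);
        }
    }
}
```

Calling `MiniEchoReactor.echoOnce("ping")` should hand back the same text. Note that WRITE interest is switched on only while there is something to send; leaving it on permanently would make `select()` return continuously, because a socket is writable most of the time.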

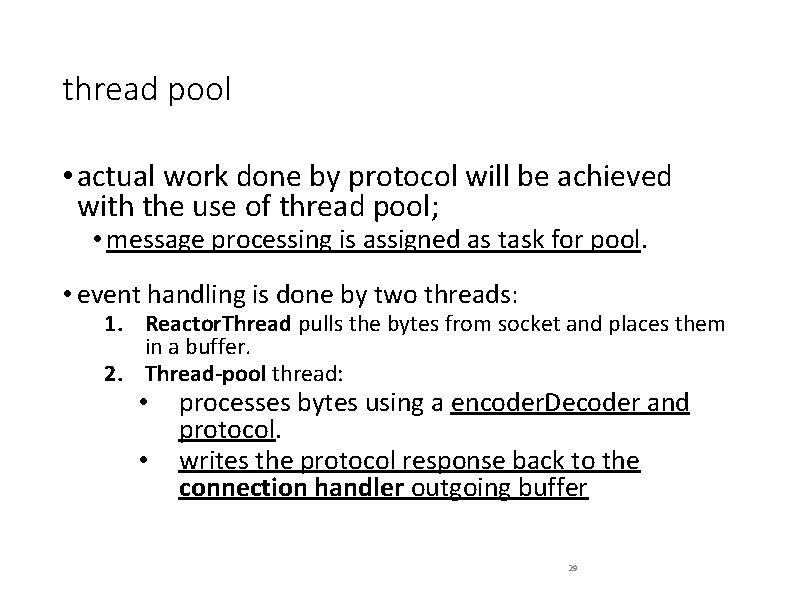

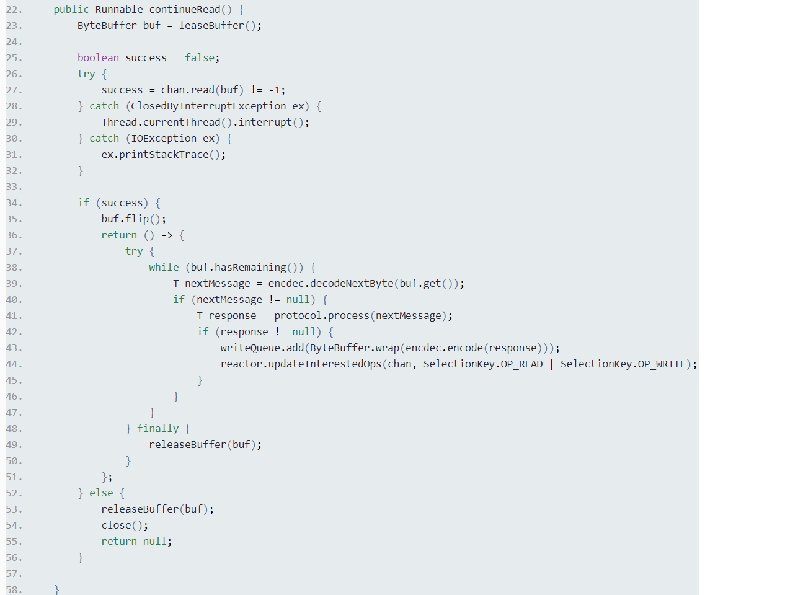

thread pool • The actual work done by the protocol is carried out by a thread pool; • message processing is assigned as a task for the pool. • Event handling is done by two threads: 1. ReactorThread pulls the bytes from the socket and places them in a buffer. 2. Thread-pool thread: • processes the bytes using an encoderDecoder and the protocol. • writes the protocol response back to the connection handler's outgoing buffer.

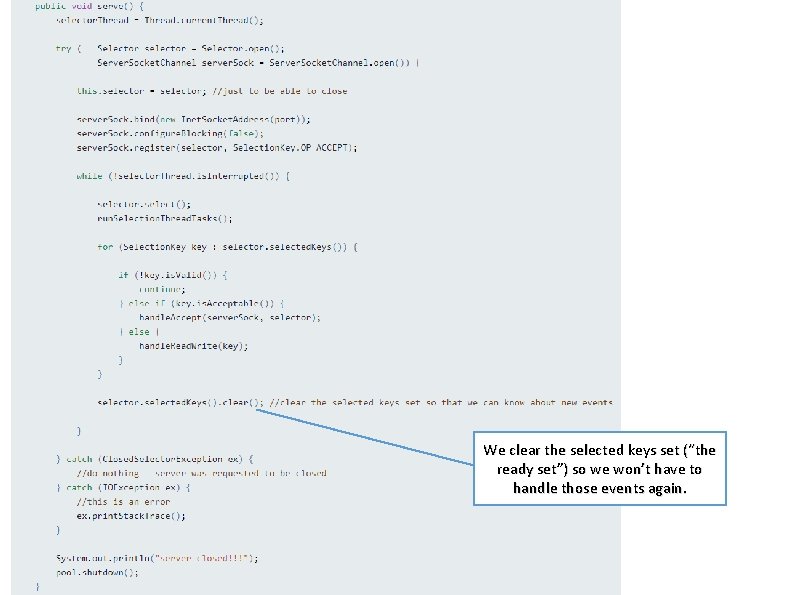

We clear the selected keys set (“the ready set”) so we won’t have to handle those events again.

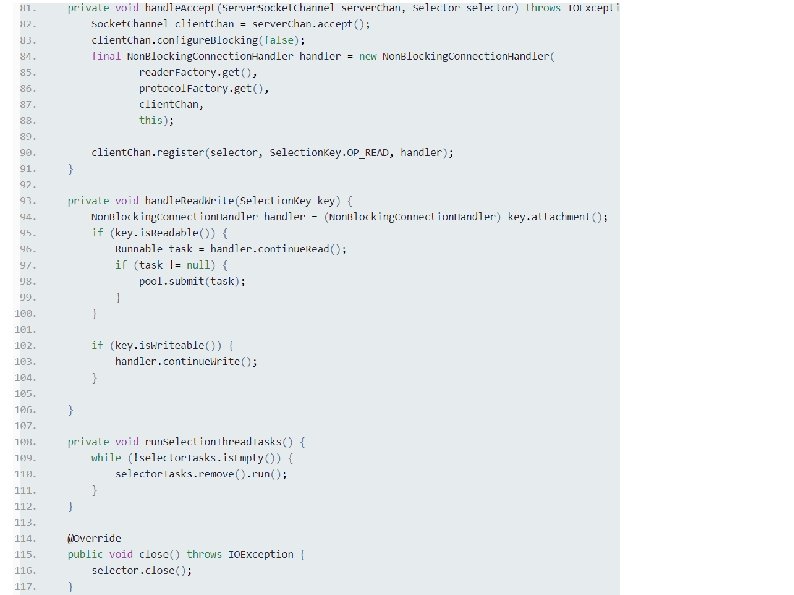

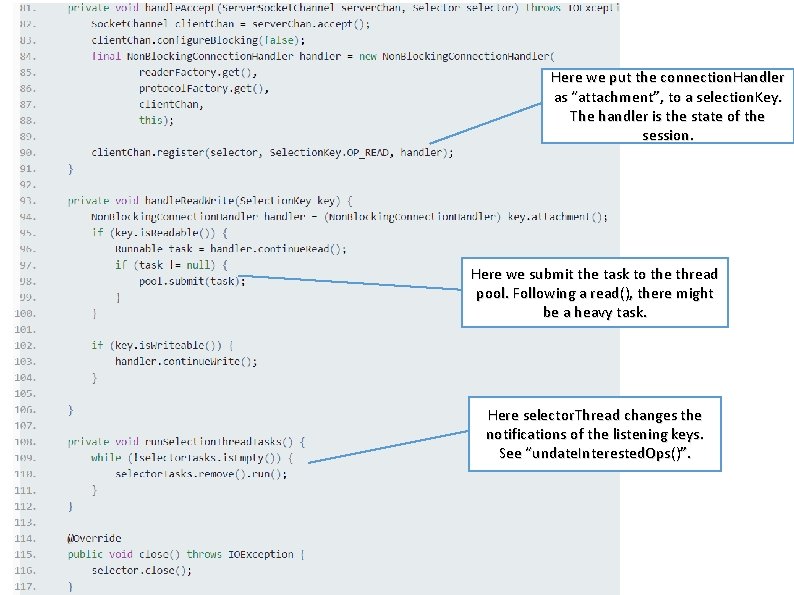

Here we put the connectionHandler as an “attachment” to a selectionKey. The handler is the state of the session. Here we submit the task to the thread pool. Following a read(), there might be a heavy task. Here the selectorThread changes the notifications of the listening keys. See “updateInterestedOps()”.

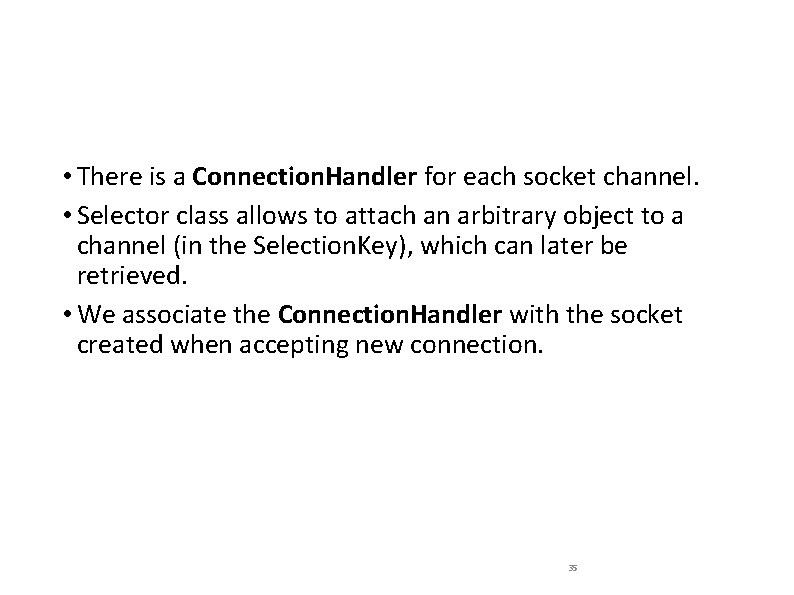

• There is a ConnectionHandler for each socket channel. • The Selector class lets us attach an arbitrary object to a channel (in the SelectionKey), which can later be retrieved. • We associate the ConnectionHandler with the socket created when accepting a new connection.

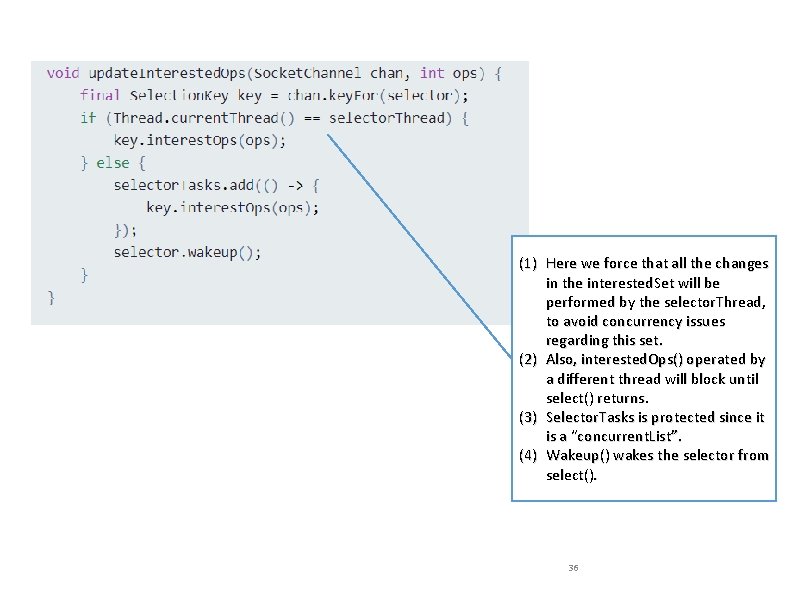

(1) Here we force all changes to the interestedSet to be performed by the selectorThread, to avoid concurrency issues regarding this set. (2) Also, interestOps() invoked by a different thread may block until select() returns. (3) selectorTasks is thread-safe since it is a “concurrentList”. (4) wakeup() wakes the selector from select().
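The four points can be sketched roughly as below. This is an illustration under assumptions, not the code from the slide: `SelectorTasksDemo` and `serve` are hypothetical names, a `ConcurrentLinkedQueue` is assumed for the concurrent collection, and the `run` helper (with a `Pipe` as the registered channel) exists only to exercise the hand-off from another thread.

```java
import java.io.IOException;
import java.nio.channels.Pipe;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;

// Hypothetical sketch of routing interest-set changes through the selector thread.
final class SelectorTasksDemo {
    private final Selector selector;
    private final Queue<Runnable> selectorTasks = new ConcurrentLinkedQueue<>();
    private volatile Thread selectorThread;

    SelectorTasksDemo(Selector selector) {
        this.selector = selector;
    }

    void updateInterestedOps(SelectionKey key, int ops) {
        if (Thread.currentThread() == selectorThread) {
            key.interestOps(ops);                          // already on the right thread
        } else {
            selectorTasks.add(() -> key.interestOps(ops)); // defer to the selector thread
            selector.wakeup();                             // make select() return
        }
    }

    /** Body of the selector thread: select, then run the deferred tasks. */
    void serve() {
        selectorThread = Thread.currentThread();
        try {
            while (!Thread.currentThread().isInterrupted()) {
                selector.select();
                Runnable task;
                while ((task = selectorTasks.poll()) != null) {
                    task.run();
                }
                selector.selectedKeys().clear(); // a real reactor handles ready keys here
            }
        } catch (IOException e) {
            // Sketch only: stop serving on I/O failure.
        }
    }

    /** Asks for OP_READ from a foreign thread and returns the resulting interest set. */
    static int run() {
        try (Selector selector = Selector.open()) {
            Pipe pipe = Pipe.open();
            try (Pipe.SourceChannel source = pipe.source();
                 Pipe.SinkChannel sink = pipe.sink()) {
                source.configureBlocking(false);
                SelectionKey key = source.register(selector, 0); // no interest yet
                SelectorTasksDemo demo = new SelectorTasksDemo(selector);
                Thread thread = new Thread(demo::serve, "selectorThread");
                thread.start();

                demo.updateInterestedOps(key, SelectionKey.OP_READ);

                // Wait (up to 5 seconds) for the selector thread to apply the change.
                long deadline = System.currentTimeMillis() + 5000;
                while (key.interestOps() != SelectionKey.OP_READ
                        && System.currentTimeMillis() < deadline) {
                    Thread.sleep(10);
                }
                int ops = key.interestOps();
                thread.interrupt();
                thread.join();
                return ops;
            }
        } catch (IOException | InterruptedException e) {
            throw new IllegalStateException(e);
        }
    }
}
```

If `wakeup()` is called before the selector thread reaches `select()`, the next `select()` returns immediately, so the queued task is not lost.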

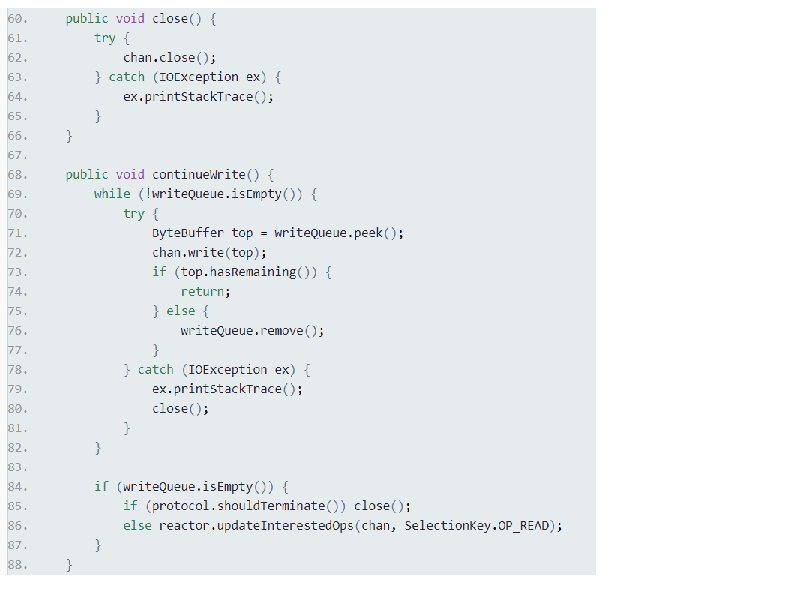

Direct vs. non-direct buffers ByteBuffer.allocateDirect() • A byte buffer is either direct or non-direct. • Given a direct byte buffer, the Java virtual machine will make a best effort to perform native I/O operations directly upon it. • That is, it will attempt to avoid copying the buffer's content to (or from) an intermediate buffer before (or after) each invocation of one of the underlying operating system's native I/O operations. • Direct buffers typically have somewhat higher allocation and deallocation costs than non-direct buffers. • It is therefore recommended that direct buffers be allocated primarily for large, long-lived buffers that are subject to the underlying system's native I/O operations.

Buffer Pool • The connectionHandler uses a buffer pool. • That is because DirectByteBuffers are expensive to allocate/deallocate. • The BufferPool in ConnectionHandler caches the already-used buffers. • This is called the “Flyweight design pattern”.
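A buffer pool along these lines might look like the following sketch. The class name `SimpleBufferPool`, the method names `lease`/`release` and the 8 KB buffer size are all assumptions for illustration; the lecture's own BufferPool may differ.

```java
import java.nio.ByteBuffer;
import java.util.concurrent.ConcurrentLinkedQueue;

// Hypothetical pool that recycles direct buffers instead of reallocating them.
final class SimpleBufferPool {
    private static final int BUFFER_SIZE = 8192; // assumed size, 8 KB

    private final ConcurrentLinkedQueue<ByteBuffer> free = new ConcurrentLinkedQueue<>();

    /** Hands out a recycled buffer if one exists, otherwise allocates a new direct one. */
    ByteBuffer lease() {
        ByteBuffer buf = free.poll();
        if (buf == null) {
            return ByteBuffer.allocateDirect(BUFFER_SIZE); // the expensive path
        }
        buf.clear(); // reset position and limit; old contents will be overwritten
        return buf;
    }

    /** Returns a buffer to the pool once the caller has finished with it. */
    void release(ByteBuffer buf) {
        free.add(buf);
    }

    public static void main(String[] args) {
        SimpleBufferPool pool = new SimpleBufferPool();
        ByteBuffer first = pool.lease();
        first.put((byte) 1);
        pool.release(first);
        ByteBuffer second = pool.lease();
        System.out.println("same buffer reused: " + (first == second));
        System.out.println("direct: " + second.isDirect() + ", position: " + second.position());
    }
}
```

A released buffer must not be used again by the code that released it, since another connection may receive it on the next `lease()`.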

Suggested Solution: Concurrency Issues • Reading tasks are performed by different threads. • What about consecutive reads from the same client? • Assume a client that sends two messages, M1 and M2, to the server • The server then creates two tasks, T1 and T2, corresponding to these messages • Since two different threads may handle the two tasks concurrently • T2 may complete before T1! • The response to M2 will be sent before the response to M1! • The protocol order may be broken!

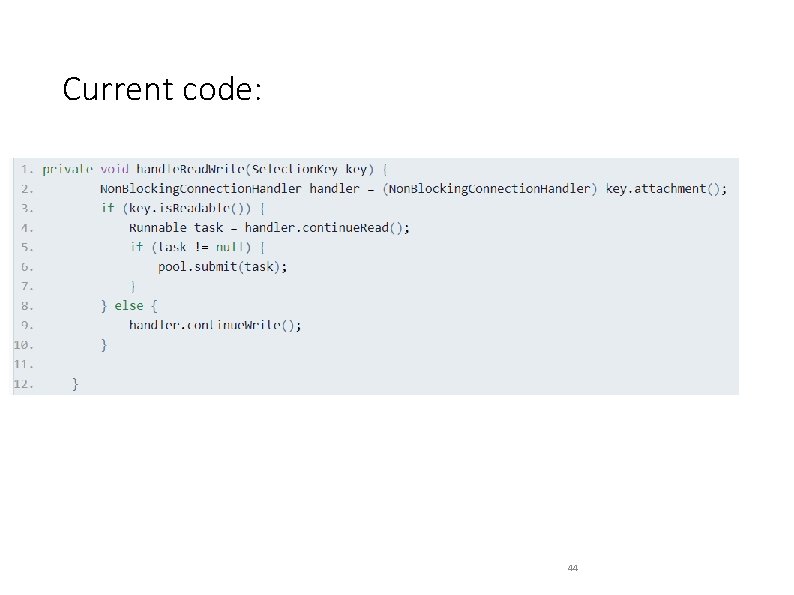

Current code:

Solution: Task Queue for Each Client • Solution: • Create a task queue for each client • Synchronize over the queue to execute the task at beginning of queue • Ensures no other task is taken from queue until current task is completed • Result: • Protocol order is ensured • Concerns: • Performance issues due to synchronization • Task queues management is required

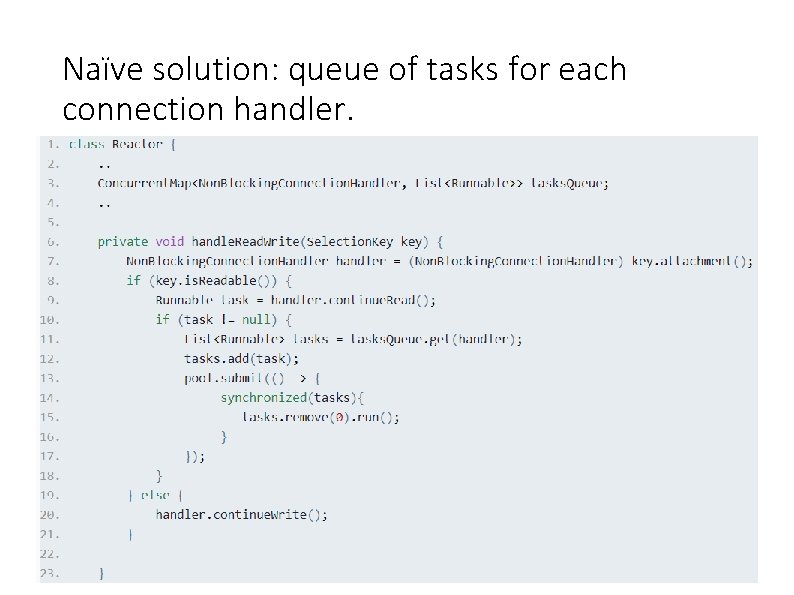

Naïve solution: queue of tasks for each connection handler.

Proposed Solution: Issues • Issue 1: • If a client sends two requests, the thread pool will have two tasks taking up two slots! The first is executed, while the other is blocked. • We may clog the thread pool! • We could have used the other slot to serve other clients in the meanwhile! • Issue 2: • tasksQueue needs to be managed (initialized and deleted). • In the proposed solution above there is no real management • What happens when a new client connects? • What happens when a current client disconnects? • We need a better solution!

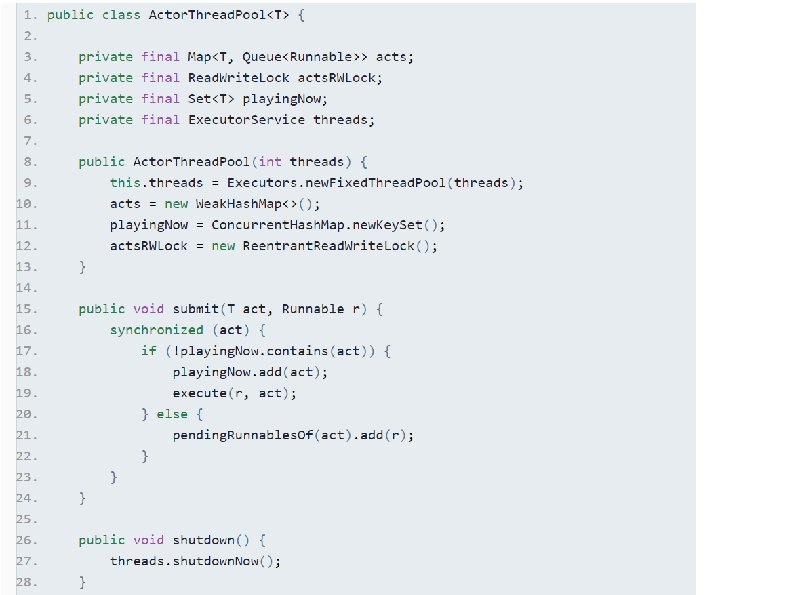

Actor Thread Pool • Terminology: • Actor – A ConnectionHandler • Action – Task of an Actor • Design: • Create a dependency list of Actions for each Actor, • Ensure that only one Action per Actor is submitted to the executor at any given time

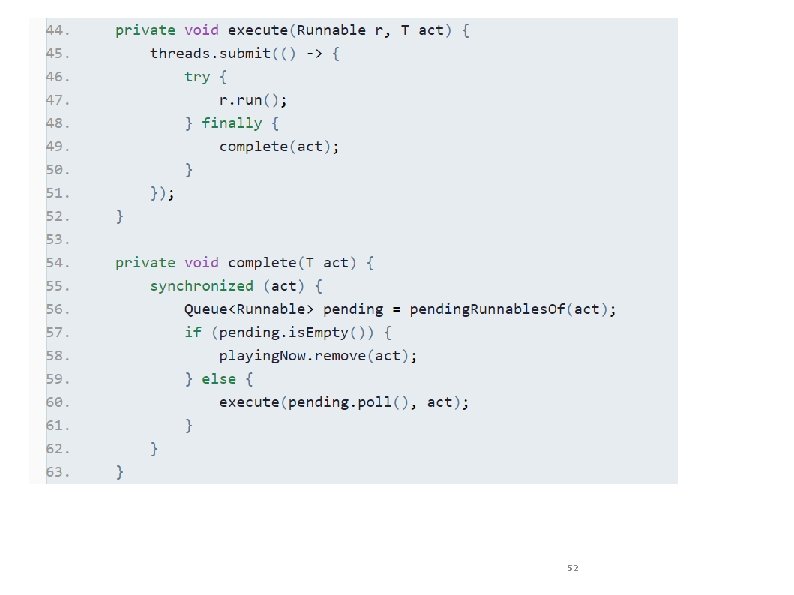

Actor Thread Pool • Implementation: • Upon new Action submission -> check if another Action for same Actor is being executed • If there are none, submit to executor for execution • If there is one, add the new Action to the Action pending list for this Actor • Once current Action is complete -> get first Action from pending list and execute it.
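The submission rule above can be sketched as follows. This is a simplified illustration with hypothetical names (`ActorThreadPoolSketch`, `runInOrder`): it swaps in a plain `HashMap` and a single intrinsic lock for bookkeeping, which is an assumption made for brevity and not necessarily how the lecture's implementation guards its state.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Queue;
import java.util.Set;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical sketch: at most one action per actor is in the executor at a time.
final class ActorThreadPoolSketch<A> {
    private final ExecutorService executor;
    private final Map<A, Queue<Runnable>> pending = new HashMap<>(); // guarded by this
    private final Set<A> running = new HashSet<>();                  // guarded by this

    ActorThreadPoolSketch(ExecutorService executor) {
        this.executor = executor;
    }

    void submit(A actor, Runnable action) {
        synchronized (this) {
            if (running.contains(actor)) {
                // Another action of this actor is executing: queue behind it.
                pending.computeIfAbsent(actor, k -> new ArrayDeque<>()).add(action);
                return;
            }
            running.add(actor);
        }
        execute(actor, action);
    }

    private void execute(A actor, Runnable action) {
        executor.execute(() -> {
            try {
                action.run();
            } finally {
                complete(actor);
            }
        });
    }

    private void complete(A actor) {
        Runnable next;
        synchronized (this) {
            Queue<Runnable> queue = pending.get(actor);
            next = (queue == null) ? null : queue.poll();
            if (next == null) {       // nothing left: the actor becomes idle
                running.remove(actor);
                pending.remove(actor);
            }
        }
        if (next != null) {
            execute(actor, next);     // run the next pending action for this actor
        }
    }

    /** Submits n numbered actions for one actor and returns the order they ran in. */
    static List<Integer> runInOrder(int n) {
        ExecutorService threads = Executors.newFixedThreadPool(4);
        ActorThreadPoolSketch<String> pool = new ActorThreadPoolSketch<>(threads);
        List<Integer> seen = Collections.synchronizedList(new ArrayList<>());
        CountDownLatch done = new CountDownLatch(n);
        for (int i = 0; i < n; i++) {
            final int id = i;
            pool.submit("client-1", () -> { seen.add(id); done.countDown(); });
        }
        try {
            done.await(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } finally {
            threads.shutdown();
        }
        return seen;
    }
}
```

Even with four worker threads, the actions of the single actor "client-1" run one after another in submission order, while actions of other actors would be free to use the remaining threads.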

execute

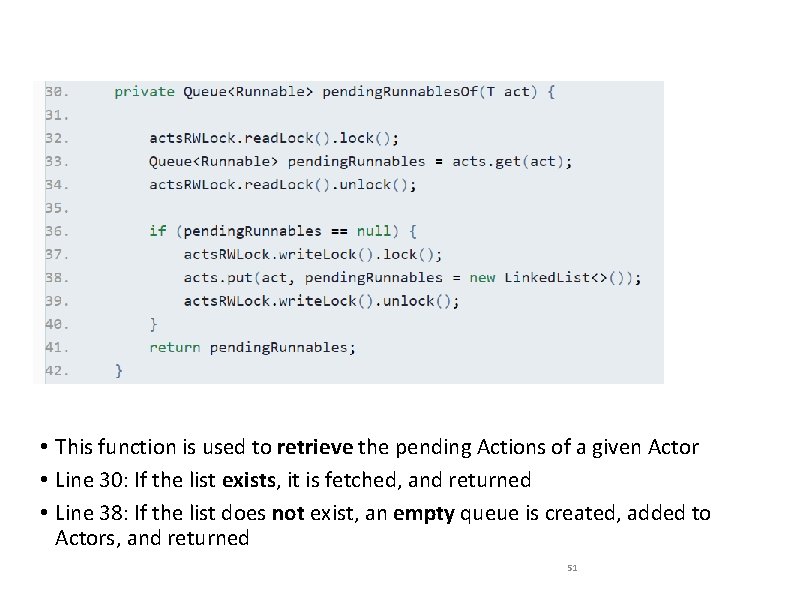

• This function is used to retrieve the pending Actions of a given Actor • Line 30: If the list exists, it is fetched, and returned • Line 38: If the list does not exist, an empty queue is created, added to Actors, and returned

Some notes: WeakHashMap acts • The ActorThreadPool uses a WeakHashMap to hold the task queues of the actors. • An entry in a WeakHashMap will automatically be removed when its key is no longer in ordinary use. • The presence of a mapping for a given key will not prevent the key from being discarded by the garbage collector. • When a key has been discarded, its entry is effectively removed from the map. • This class is not synchronized; therefore we guard access to it using the read-write lock.
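The "fetch the pending queue, or create it if missing" step on top of a WeakHashMap might be sketched like this. The names `PendingActionsSketch` and `pendingFor` are hypothetical, and the sketch takes a simple exclusive lock (`synchronized`) rather than the read-write lock mentioned above; that substitution is an assumption made to keep the example short.

```java
import java.util.Map;
import java.util.Queue;
import java.util.WeakHashMap;
import java.util.concurrent.ConcurrentLinkedQueue;

// Hypothetical sketch of a per-actor pending queue stored under weak keys.
final class PendingActionsSketch<A> {
    // WeakHashMap is not synchronized, so every access goes through this object's lock.
    private final Map<A, Queue<Runnable>> acts = new WeakHashMap<>();

    /** Returns the actor's pending queue, creating an empty one on first use. */
    synchronized Queue<Runnable> pendingFor(A actor) {
        return acts.computeIfAbsent(actor, k -> new ConcurrentLinkedQueue<>());
    }

    public static void main(String[] args) {
        PendingActionsSketch<Object> pending = new PendingActionsSketch<>();
        Object actor = new Object();
        Queue<Runnable> first = pending.pendingFor(actor);
        Queue<Runnable> second = pending.pendingFor(actor);
        System.out.println("same queue for the same actor: " + (first == second));
    }
}
```

Because keys are held weakly, an actor's entry can disappear once nothing else references the actor, which removes the need for explicit cleanup when a client disconnects. One caveat from the WeakHashMap documentation: values should not strongly refer to their own keys, otherwise the key can never be collected.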

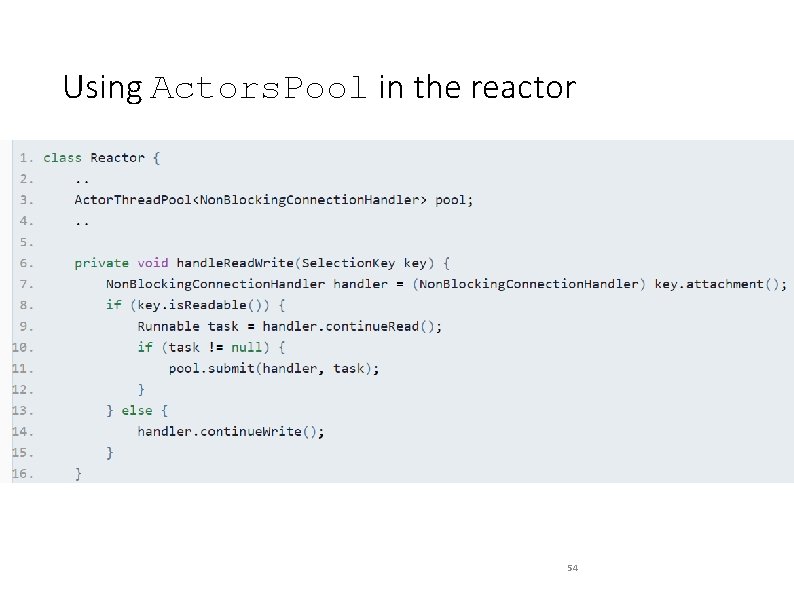

Using ActorsPool in the reactor
