Lecture 02 Part B Problem Solving by Searching

Lecture 02 – Part B: Problem Solving by Searching
Search Methods: Informed (Heuristic) Search

Using problem specific knowledge to aid searching
Without incorporating knowledge into searching, one has no bias (i.e. no preference) over the search space. Search everywhere!!
Without a bias, one is forced to look everywhere to find the answer. Hence, the worst-case cost of uninformed search grows exponentially and quickly becomes intractable.

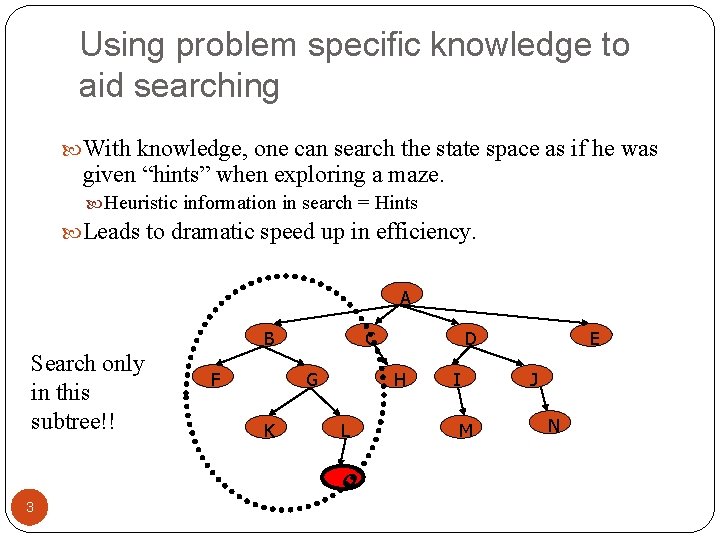

Using problem specific knowledge to aid searching
With knowledge, one can search the state space as if given “hints” when exploring a maze. Heuristic information in search = hints. This can lead to a dramatic speed-up.
[Figure: search tree with nodes A to O; a single subtree is highlighted and labelled “Search only in this subtree!!”]

More formally, why do heuristic functions work?
In any search problem where there are at most b choices at each node and the goal node is at depth d, a naive search algorithm would have to, in the worst case, search around O(b^d) nodes before finding a solution (exponential time complexity).
Heuristics improve the efficiency of search algorithms by reducing the effective branching factor from b to (ideally) a low constant b* such that 1 ≤ b* ≪ b.
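To make the effect of a smaller branching factor concrete, here is a small sketch that counts the nodes in a full search tree. The values b = 10, b* = 2 and d = 10 are illustrative assumptions, not figures from the lecture.

```python
def worst_case_nodes(branching_factor: int, depth: int) -> int:
    """Nodes in a complete search tree: 1 + b + b^2 + ... + b^d."""
    return sum(branching_factor ** level for level in range(depth + 1))


# Illustrative values only (assumed, not taken from the slides).
b, b_star, d = 10, 2, 10

uninformed = worst_case_nodes(b, d)     # naive search, branching factor b
informed = worst_case_nodes(b_star, d)  # effective branching factor b*

print(f"b  = {b}: {uninformed:,} nodes")   # b  = 10: 11,111,111,111 nodes
print(f"b* = {b_star}: {informed:,} nodes")  # b* = 2: 2,047 nodes
```

Under these assumptions the same depth costs about eleven billion nodes without a heuristic and about two thousand with one.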

Heuristic Functions
A heuristic function is a function f(n) that estimates the “cost” of getting from node n to the goal state, so that the node with the least cost among all possible choices can be selected for expansion first.
Three approaches to defining f:
• f measures the value of the current state (its “goodness”).
• f measures the estimated cost of getting to the goal from the current state: f(n) = h(n), where h(n) = an estimate of the cost to get from n to a goal.
• f measures the estimated cost of getting to the goal state from the current state plus the cost of the existing path to it. Often, in this case, we decompose f: f(n) = g(n) + h(n), where g(n) = the cost to get to n (from the initial state).

Approach 1: f Measures the Value of the Current State
Usually the case when solving optimization problems: finding a state such that the value of the metric f is optimized.
Often, in these cases, f could be a weighted sum of a set of component values:
• N-Queens example: the number of queens under attack …
• Data mining example: the “predictive-ness” (a.k.a. accuracy) of a rule discovered

Approach 2: f Measures the Cost to the Goal
A state X would be better than a state Y if the estimated cost of getting from X to the goal is lower than that of Y, because X is then estimated to be closer to the goal than Y.
• 8-Puzzle
h1: The number of misplaced tiles (squares with number).
h2: The sum of the distances of the tiles from their goal positions.

Approach 3: f measures the total cost of the solution path (Admissible Heuristic Functions)
A heuristic function f(n) = g(n) + h(n) is admissible if h(n) never overestimates the cost to reach the goal. Admissible heuristics are “optimistic”: “the cost is not that much …”
g(n), however, is the exact cost to reach node n from the initial state. Therefore, f(n) never overestimates the true cost of reaching the goal state through node n.
Theorem: A* (tree) search is optimal if h(n) is admissible, i.e. the search using f(n) = g(n) + h(n) returns an optimal solution.
Given two admissible heuristics with h2(n) ≥ h1(n) for all n, it is generally more efficient to use h2: apart from ties in f, A* with h2 does not expand more nodes than A* with h1. h2 is more realistic than h1 (more informed), though both are optimistic.

Traditional informed search strategies
Greedy Best First Search
• “Always chooses the successor node with the best f value”, where f(n) = h(n).
• We choose the one that is nearest to the final state among all possible choices.
A* Search
• Best first search using an “admissible” heuristic function f that takes into account the current cost g.
• Always returns the optimal solution path.

Informed (Heuristic) Search Strategies Greedy Best-First Search eval-fn: f(n) = h(n)

An implementation of Best First Search
function BEST-FIRST-SEARCH (problem, eval-fn) returns a solution sequence, or failure
  queuing-fn = a function that sorts nodes by eval-fn
  return GENERIC-SEARCH (problem, queuing-fn)
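The pseudocode above can be sketched in Python with a priority queue standing in for the sorting queuing-fn. This is a minimal sketch, not the lecture's implementation: the function name, its parameters and the `GRAPH` and `H` tables are assumptions, with edge costs and h values read off the nine-node example graph used on the slides that follow. It is a tree search, so repeated states are not checked.

```python
import heapq
from itertools import count


def best_first_search(start, is_goal, successors, eval_fn):
    """Best-first tree search: always expand the frontier node with the lowest eval_fn.

    successors(state) returns (next_state, step_cost) pairs.
    eval_fn(state, path_cost) returns the priority f(n).
    Returns (path, path_cost), or None if the frontier runs empty.
    """
    tie = count()  # tie-breaker so the heap never has to compare paths
    frontier = [(eval_fn(start, 0), next(tie), start, [start], 0)]
    while frontier:
        _, _, state, path, cost = heapq.heappop(frontier)
        if is_goal(state):
            return path, cost
        for nxt, step in successors(state):
            new_cost = cost + step
            heapq.heappush(
                frontier,
                (eval_fn(nxt, new_cost), next(tie), nxt, path + [nxt], new_cost),
            )
    return None


# Example graph from the slides (undirected edges with costs) and its h(n) table.
EDGES = [("A", "B", 75), ("A", "C", 118), ("A", "E", 140), ("C", "D", 111),
         ("E", "G", 80), ("E", "F", 99), ("G", "H", 97), ("F", "I", 211),
         ("H", "I", 101)]
H = {"A": 366, "B": 374, "C": 329, "D": 244, "E": 253,
     "F": 178, "G": 193, "H": 98, "I": 0}
GRAPH = {}
for u, v, w in EDGES:
    GRAPH.setdefault(u, []).append((v, w))
    GRAPH.setdefault(v, []).append((u, w))

# Greedy best-first search: eval-fn is f(n) = h(n), the path cost is ignored.
greedy = best_first_search("A", lambda s: s == "I", lambda s: GRAPH[s],
                           lambda s, g: H[s])
print(greedy)  # (['A', 'E', 'F', 'I'], 450)
```

Swapping the last argument for `lambda s, g: g + H[s]` would turn the same routine into A*, which is the design reason for passing eval-fn as a parameter.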

Greedy Best First Search
f(n) = h(n) = straight-line distance heuristic
Start: A. Goal: I.
Edges (cost): A-B 75, A-C 118, A-E 140, C-D 111, E-G 80, E-F 99, G-H 97, F-I 211, H-I 101
State Heuristic h(n): A 366, B 374, C 329, D 244, E 253, F 178, G 193, H 98, I 0


Greedy Search: Tree Search (step 1). Start at A, with h(A) = 366.

![Greedy Search: Tree Search, slide 23](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-23.jpg)

Greedy Search: Tree Search (step 2). Expanding A generates B [374] (edge cost 75), C [329] (edge cost 118) and E [253] (edge cost 140). E has the lowest h value, so it is expanded next.

![Greedy Search: Tree Search, slide 24](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-24.jpg)

Greedy Search: Tree Search (step 3). Expanding E generates G [193] (edge cost 80), F [178] (edge cost 99) and A [366] again. F has the lowest h value, so it is expanded next.

![Greedy Search: Tree Search, slide 25](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-25.jpg)

Greedy Search: Tree Search (step 4). Expanding F generates E [253] again and the goal I [0] (edge cost 211). I has the lowest h value and is the goal.

![Greedy Search: Tree Search, slide 26](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-26.jpg)

Greedy Search: Tree Search (result). The path found is A-E-F-I: dist(A-E-F-I) = 140 + 99 + 211 = 450

Greedy Best First Search: Optimal?
f(n) = h(n) = straight-line distance heuristic
Greedy search returns A-E-F-I with cost 450, but a cheaper path exists: dist(A-E-G-H-I) = 140 + 80 + 97 + 101 = 418. The solution found by greedy search is therefore not optimal.

Greedy Best First Search: Optimal?
f(n) = h(n) = straight-line distance heuristic
Same graph, with one heuristic value changed (marked ** on the slide): h(C) = 250.
State Heuristic h(n): A 366, B 374, C 250 **, D 244, E 253, F 178, G 193, H 98, I 0

Greedy Search: Tree Search (step 1). Start at A, with h(A) = 366 and the modified value h(C) = 250.

![Greedy Search: Tree Search, slide 30](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-30.jpg)

Greedy Search: Tree Search (step 2). Expanding A generates B [374] (edge cost 75), C [250] (edge cost 118) and E [253] (edge cost 140). C now has the lowest h value, so it is expanded next.

![Greedy Search: Tree Search, slide 31](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-31.jpg)

Greedy Search: Tree Search (step 3). Expanding C generates D [244] (edge cost 111). D has the lowest h value, so it is expanded next.

![Greedy Search: Tree Search, slide 32](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-32.jpg)

Greedy Search: Tree Search (step 4). Expanding D generates C [250] again, which is still lower than E [253]. Infinite branch!

![Greedy Search: Tree Search, slide 33](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-33.jpg)

Greedy Search: Tree Search (step 5). Expanding C generates D [244] again, and the search keeps alternating between C and D without ever reaching the goal. Infinite branch!

![Greedy Search: Tree Search, slide 34](http://slidetodoc.com/presentation_image_h2/089b05a7675499f4c3c04e310f78b884/image-34.jpg)

Greedy Search: Time and Space Complexity?
• Greedy search is not optimal.
• Greedy search is incomplete without systematic checking of repeated states.
• In the worst case, the time and space complexity of greedy search are both O(b^m), where b is the branching factor and m the maximum path length.
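The incompleteness claim can be reproduced on the example graph with h(C) lowered to 250. The sketch below is an illustration under assumptions: the `greedy` function, its `check_repeats` flag and the `max_expansions` budget (used only so the looping run terminates) are hypothetical names, not part of the lecture.

```python
import heapq
from itertools import count

# Neighbours in the example graph; edge costs are irrelevant to greedy search.
NEIGHBORS = {"A": ["B", "C", "E"], "B": ["A"], "C": ["A", "D"], "D": ["C"],
             "E": ["A", "G", "F"], "F": ["E", "I"], "G": ["E", "H"],
             "H": ["G", "I"], "I": ["F", "H"]}
# Heuristic table with the modified value h(C) = 250.
H = {"A": 366, "B": 374, "C": 250, "D": 244, "E": 253,
     "F": 178, "G": 193, "H": 98, "I": 0}


def greedy(start, goal, check_repeats, max_expansions=1000):
    """Greedy best-first search; returns a path, or None if the budget runs out."""
    tie = count()
    frontier = [(H[start], next(tie), start, [start])]
    explored = set()
    expansions = 0
    while frontier and expansions < max_expansions:
        _, _, state, path = heapq.heappop(frontier)
        if state == goal:
            return path
        if check_repeats:
            if state in explored:
                continue  # already expanded: skip the repeated state
            explored.add(state)
        expansions += 1
        for nxt in NEIGHBORS[state]:
            heapq.heappush(frontier, (H[nxt], next(tie), nxt, path + [nxt]))
    return None  # frontier exhausted or expansion budget used up


print(greedy("A", "I", check_repeats=False))  # None: stuck alternating C, D, C, D, ...
print(greedy("A", "I", check_repeats=True))   # ['A', 'E', 'F', 'I']
```

With repeated-state checking the search escapes the C-D loop and reaches the goal, although the path it returns (cost 450) is still not the optimal one.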

Informed Search Strategies A* Search eval-fn: f(n)=g(n)+h(n)

A* (A Star)
Greedy Search minimizes a heuristic h(n), which is an estimated cost from a node n to the goal state. Although greedy search can considerably cut the search time (efficient), it is neither optimal nor complete.
Uniform Cost Search (UCS) minimizes the cost g(n) from the initial state to n. UCS is optimal and complete but not efficient.
New strategy: combine Greedy Search and UCS to get an efficient algorithm which is complete and optimal.

A* (A Star)
A* uses an evaluation function which combines g(n) and h(n): f(n) = g(n) + h(n)
• g(n) is the exact cost to reach node n from the initial state (the cost so far up to node n).
• h(n) is an estimate of the remaining cost to reach the goal.

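A* (A Star)
[Figure: the path from the start to node n is labelled g(n), the remaining path from n to the goal is labelled h(n); f(n) = g(n) + h(n).]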

A* Search
f(n) = g(n) + h(n), where g(n) is the exact cost to reach node n from the initial state.
Start: A. Goal: I.
Edges (cost): A-B 75, A-C 118, A-E 140, C-D 111, E-G 80, E-F 99, G-H 97, F-I 211, H-I 101
State Heuristic h(n): A 366, B 374, C 329, D 244, E 253, F 178, G 193, H 98, I 0

A* Search: Tree Search (step 1). Start at A: f(A) = 0 + 366 = 366.

A* Search: Tree Search (step 2). Expanding A generates:
C [447] = 118 + 329
E [393] = 140 + 253
B [449] = 75 + 374
E has the lowest f value, so it is expanded next.

A* Search: Tree Search (step 3). Expanding E generates G [413] = 220 + 193 and F [417] = 239 + 178. G has the lowest f value, so it is expanded next.

A* Search: Tree Search (step 4). Expanding G generates H [415] = 317 + 98. H has the lowest f value, so it is expanded next.

A* Search: Tree Search (step 5). Expanding H generates the goal I [418] = 418 + 0. F [417] is still lower, so F is expanded before I is selected.

A* Search: Tree Search (step 6). Expanding F generates a second goal node I [450] = 450 + 0. I [418] now has the lowest f value on the frontier, so it is selected and the search stops.

A* Search: Tree Search (result). The path found is A-E-G-H-I with cost 140 + 80 + 97 + 101 = 418, which is the optimal path.
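The trace on these slides can be replayed with a short A* sketch over the same graph. The function name `a_star`, the `best_g` bookkeeping for repeated states and the returned expansion order are assumptions made for illustration, not the lecture's code.

```python
import heapq
from itertools import count

EDGES = [("A", "B", 75), ("A", "C", 118), ("A", "E", 140), ("C", "D", 111),
         ("E", "G", 80), ("E", "F", 99), ("G", "H", 97), ("F", "I", 211),
         ("H", "I", 101)]
H = {"A": 366, "B": 374, "C": 329, "D": 244, "E": 253,
     "F": 178, "G": 193, "H": 98, "I": 0}
GRAPH = {}
for u, v, w in EDGES:
    GRAPH.setdefault(u, []).append((v, w))
    GRAPH.setdefault(v, []).append((u, w))


def a_star(start, goal):
    """A* with f(n) = g(n) + h(n); returns (path, cost, expansion order)."""
    tie = count()
    frontier = [(H[start], next(tie), 0, start, [start])]
    best_g = {}    # cheapest g at which each state has been expanded
    expanded = []  # order in which states are expanded
    while frontier:
        _, _, g, state, path = heapq.heappop(frontier)
        if state == goal:
            return path, g, expanded
        if state in best_g and best_g[state] <= g:
            continue  # already expanded via a path at least as cheap
        best_g[state] = g
        expanded.append(state)
        for nxt, step in GRAPH[state]:
            new_g = g + step
            heapq.heappush(frontier,
                           (new_g + H[nxt], next(tie), new_g, nxt, path + [nxt]))
    return None


path, cost, expanded = a_star("A", "I")
print(path, cost)  # ['A', 'E', 'G', 'H', 'I'] 418
print(expanded)    # ['A', 'E', 'G', 'H', 'F']
```

The goal test is applied when a node is removed from the frontier, not when it is generated, which is why F [417] is expanded before I [418] is accepted.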

Conditions for optimality
Admissibility
• An admissible heuristic never overestimates the cost to reach the goal.
• The straight-line distance hSLD is an admissible heuristic.
Monotonicity (consistency)
• An admissible heuristic h is consistent (or satisfies the monotone restriction) if, for every node N and every successor N’ of N: h(N) ≤ c(N, N’) + h(N’) (triangle inequality).
• [Figure: node N with successor N’, edge cost c(N, N’), and heuristic values h(N) and h(N’).]
A* tree search is optimal if h is admissible; A* with checking for repeated states is optimal if h is consistent.
A consistent heuristic is
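Both conditions can be checked mechanically on a small graph. The sketch below assumes the nine-node example graph from the earlier slides; the helper names are hypothetical, and the true costs to the goal are computed with Dijkstra's algorithm, which the lecture does not cover here.

```python
import heapq

EDGES = [("A", "B", 75), ("A", "C", 118), ("A", "E", 140), ("C", "D", 111),
         ("E", "G", 80), ("E", "F", 99), ("G", "H", 97), ("F", "I", 211),
         ("H", "I", 101)]
H = {"A": 366, "B": 374, "C": 329, "D": 244, "E": 253,
     "F": 178, "G": 193, "H": 98, "I": 0}
GRAPH = {}
for u, v, w in EDGES:
    GRAPH.setdefault(u, []).append((v, w))
    GRAPH.setdefault(v, []).append((u, w))


def true_costs_to(goal):
    """Exact cheapest cost from every node to the goal (Dijkstra from the goal)."""
    dist = {goal: 0}
    heap = [(0, goal)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale queue entry
        for v, w in GRAPH[u]:
            if d + w < dist.get(v, float("inf")):
                dist[v] = d + w
                heapq.heappush(heap, (d + w, v))
    return dist


def is_admissible(h, goal):
    """h never overestimates the true cost to the goal."""
    exact = true_costs_to(goal)
    return all(h[n] <= exact[n] for n in exact)


def is_consistent(h):
    """h(N) <= c(N, N') + h(N') for every node N and successor N'."""
    return all(h[u] <= w + h[v] for u in GRAPH for v, w in GRAPH[u])


print(is_admissible(H, "I"), is_consistent(H))  # True True

# Hypothetical variant: inflating h(H) above the real cost H-I = 101 breaks both.
inflated = {**H, "H": 150}
print(is_admissible(inflated, "I"), is_consistent(inflated))  # False False
```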

A* Algorithm A* with systematic checking for repeated states An Example: Map Searching

SLD Heuristic: h()
Straight Line Distances to Bucharest

| Town | SLD | Town | SLD |
|---|---|---|---|
| Arad | 366 | Mehadia | 241 |
| Bucharest | 0 | Neamt | 234 |
| Craiova | 160 | Oradea | 380 |
| Dobreta | 242 | Pitesti | 98 |
| Eforie | 161 | Rimnicu | 193 |
| Fagaras | 178 | Sibiu | 253 |
| Giurgiu | 77 | Timisoara | 329 |
| Hirsova | 151 | Urziceni | 80 |
| Iasi | 226 | Vaslui | 199 |
| Lugoj | 244 | Zerind | 374 |

We can use straight line distances as an admissible heuristic as they will never overestimate the cost to the goal. This is because there is no shorter distance between two cities than the straight line distance.

Distances Between Cities
[Figure: road map of Romania showing the road distance between each pair of neighbouring cities, e.g. Arad-Zerind 75, Arad-Sibiu 140, Arad-Timisoara 118.]

Greedy Search in Action … Map Searching

Greedy search of the Romanian map featured in the previous slide.
Note: Throughout the animation all nodes are labelled with f(n) = h(n). However, we will be using the abbreviations f and h to make the notation simpler.
• We begin with the initial state of Arad. The straight line distance from Arad to Bucharest (or h value) is 366 miles. This gives us a total value of (f = h) 366 miles. We expand the initial state of Arad.
• Once Arad is expanded we look for the node with the lowest cost. Sibiu has the lowest value for f. (The straight line distance from Sibiu to the goal state is 253 miles. This gives a total of 253 miles.) We move to this node and expand it.
• We now expand Sibiu (that is, we expand the node with the lowest value of f).
• We now expand Fagaras (that is, we expand the node with the lowest value of f), which reveals Bucharest.
Node labels shown in the animation:
• Start: Arad F=366
• After expanding Arad: Zerind F=374, Sibiu F=253, Timisoara F=329
• After expanding Sibiu: Arad F=366, Oradea F=380, Fagaras F=178, Rimnicu F=193
• After expanding Fagaras: Sibiu F=253, Bucharest F=0

A* in Action … Map Searching

A* search of the Romanian map featured in the previous slide.
Note: Throughout the animation all nodes are labelled with f(n) = g(n) + h(n). However, we will be using the abbreviations f, g and h to make the notation simpler.
• We begin with the initial state of Arad. The cost of reaching Arad from Arad (or g value) is 0 miles. The straight line distance from Arad to Bucharest (or h value) is 366 miles. This gives us a total value of (f = g + h) 366 miles. We expand the initial state of Arad.
• Once Arad is expanded we look for the node with the lowest cost. Sibiu has the lowest value for f. (The cost to reach Sibiu from Arad is 140 miles, and the straight line distance from Sibiu to the goal state is 253 miles. This gives a total of 393 miles.) We move to this node and expand it.
• We now expand Sibiu (that is, we expand the node with the lowest value of f), then Rimnicu, which has the lowest value of f (413) once Sibiu is expanded.
• Once Rimnicu is expanded we look for the node with the lowest cost. As you can see, Pitesti has the lowest value for f. (The cost to reach Pitesti from Arad is 317 miles, and the straight line distance from Pitesti to the goal state is 98 miles. This gives a total of 415 miles.) We move to this node and expand it.
• We have just expanded a node (Pitesti) that revealed Bucharest, but it has a cost of 418. If there is any other lower cost node (and in this case there is one cheaper node, Fagaras, with a cost of 417) then we need to expand it in case it leads to a better solution to Bucharest than the 418 solution we have already found.
• In actual fact, the algorithm will not really recognise that we have found Bucharest. It just keeps expanding the lowest cost nodes (based on f) until it finds a goal state AND it has the lowest value of f. So, we must now move to Fagaras and expand it.
• Once Fagaras is expanded we look for the lowest cost node. As you can see, we now have two Bucharest nodes. One of these nodes (Arad – Sibiu – Rimnicu – Pitesti – Bucharest) has an f value of 418. The other node (Arad – Sibiu – Fagaras – Bucharest(2)) has an f value of 450. We therefore move to the first Bucharest node and expand it.
• We have now arrived at Bucharest. As this is the lowest cost node AND the goal state we can terminate the search. If you look back over the slides you will see that the solution returned by the A* search pattern (Arad – Sibiu – Rimnicu – Pitesti – Bucharest) is in fact the optimal solution.
Node labels shown in the animation:
• Start: Arad F= 0 + 366 = 366
• After expanding Arad: Zerind F= 75 + 374 = 449, Sibiu F= 140 + 253 = 393, Timisoara F= 118 + 329 = 447
• After expanding Sibiu: Oradea F= 291 + 380 = 671, Fagaras F= 239 + 178 = 417, Rimnicu F= 220 + 193 = 413
• After expanding Rimnicu: Pitesti F= 317 + 98 = 415, Craiova F= 366 + 160 = 526
• After expanding Pitesti: Bucharest F= 418 + 0 = 418
• After expanding Fagaras: Bucharest(2) F= 450 + 0 = 450

Informed Search Strategies Iterative Deepening A*

Iterative Deepening A*: IDA*
Use f(N) = g(N) + h(N) with admissible and consistent h.
Each iteration is depth-first with cutoff on the value of f of expanded nodes.

IDA* Algorithm
• In the first iteration, we determine an “f-cost limit” (cut-off value) f(n0) = g(n0) + h(n0) = h(n0), where n0 is the start node.
• We expand nodes using the depth-first algorithm and backtrack whenever f(n) for an expanded node n exceeds the cut-off value.
• If this search does not succeed, determine the lowest f-value among the nodes that were visited but not expanded.
• Use this f-value as the new cut-off value and do another depth-first search.
• Repeat this procedure until a goal node is found.
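The procedure above can be sketched as a recursive depth-first search wrapped in a loop that raises the cut-off. This is a minimal sketch under assumptions: it reuses the nine-node example graph from the A* slides instead of the 8-puzzle, the names `ida_star` and `dfs` are hypothetical, and states already on the current path are skipped to avoid trivial cycles.

```python
EDGES = [("A", "B", 75), ("A", "C", 118), ("A", "E", 140), ("C", "D", 111),
         ("E", "G", 80), ("E", "F", 99), ("G", "H", 97), ("F", "I", 211),
         ("H", "I", 101)]
H = {"A": 366, "B": 374, "C": 329, "D": 244, "E": 253,
     "F": 178, "G": 193, "H": 98, "I": 0}
GRAPH = {}
for u, v, w in EDGES:
    GRAPH.setdefault(u, []).append((v, w))
    GRAPH.setdefault(v, []).append((u, w))


def ida_star(start, goal):
    """Returns (path, cost, list of cut-off values tried), or None."""

    def dfs(path, g, limit):
        """Depth-first search bounded by f <= limit.

        Returns (solution path or None, smallest f-value that exceeded limit).
        """
        node = path[-1]
        f = g + H[node]
        if f > limit:
            return None, f  # visited but not expanded: candidate next cut-off
        if node == goal:
            return list(path), f
        next_limit = float("inf")
        for nxt, step in GRAPH[node]:
            if nxt in path:  # do not walk back along the current path
                continue
            path.append(nxt)
            found, t = dfs(path, g + step, limit)
            path.pop()
            if found is not None:
                return found, t
            next_limit = min(next_limit, t)
        return None, next_limit

    limit = H[start]  # first cut-off: f(n0) = h(n0)
    limits = []
    while limit != float("inf"):
        limits.append(limit)
        found, t = dfs([start], 0, limit)
        if found is not None:
            return found, t, limits
        limit = t  # lowest f-value among nodes that were cut off
    return None


print(ida_star("A", "I"))
# (['A', 'E', 'G', 'H', 'I'], 418, [366, 393, 413, 415, 417, 418])
```

Each cut-off in the printed list is the smallest f-value that was pruned in the previous iteration, so memory use stays proportional to the depth of the current path.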

8-Puzzle
f(N) = g(N) + h(N) with h(N) = number of misplaced tiles
[Figure sequence, Cutoff=4: depth-first search from a root state with f = 4. The f-values shown on the generated states are 4, 5 and 6; states with f above the cutoff are not expanded.]
[Figure sequence, Cutoff=5: the search restarts from the root (f = 4) with the new cutoff. The f-values shown on the generated states are 4, 5, 6 and 7.]

Heuristic Evaluation The Effect of Heuristic Accuracy on Performance An Example: 8 -puzzle

Admissible heuristics
E.g., for the 8-puzzle:
• h1(n) = number of misplaced tiles
• h2(n) = total Manhattan distance (i.e., no. of squares from desired location of each tile)
For the start state S shown on the slide:
• h1(S) = 8
• h2(S) = 3+1+2+2+2+3+3+2 = 18
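Both heuristics are easy to compute directly. The board picture is missing from this transcript, so the start state `S` and goal layout below are assumptions: they are the standard textbook 8-puzzle example, chosen because it reproduces the values 8 and 18 term by term. States are tuples read row by row, with 0 for the blank.

```python
# Assumed layouts (0 is the blank), read row by row on a 3x3 board.
GOAL = (0, 1, 2,
        3, 4, 5,
        6, 7, 8)
S = (7, 2, 4,
     5, 0, 6,
     8, 3, 1)


def h1(state, goal=GOAL):
    """Number of misplaced tiles (the blank is not counted)."""
    return sum(1 for tile, target in zip(state, goal)
               if tile != 0 and tile != target)


def h2(state, goal=GOAL):
    """Total Manhattan distance of every tile from its goal square."""
    total = 0
    for index, tile in enumerate(state):
        if tile == 0:
            continue  # the blank is not a tile
        goal_index = goal.index(tile)
        total += abs(index // 3 - goal_index // 3)  # row distance
        total += abs(index % 3 - goal_index % 3)    # column distance
    return total


print(h1(S))  # 8
print(h2(S))  # 18
```

Every tile contributes at least 1 to h2 whenever it contributes 1 to h1, which is why h2(n) ≥ h1(n) holds for every state.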

Dominance
If h2(n) ≥ h1(n) for all n (both admissible), then h2 dominates h1 and h2 is better for search. Why? (Self study)
Typical search costs (average number of nodes expanded):

| Depth | IDS | A*(h1) | A*(h2) |
|---|---|---|---|
| d=12 | 3,644,035 nodes | 227 nodes | 73 nodes |
| d=24 | too many nodes | 39,135 nodes | 1,641 nodes |
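One way to compare these node counts is to convert them into an effective branching factor b*. The sketch below assumes the usual textbook definition, N + 1 = 1 + b* + (b*)^2 + ... + (b*)^d for N nodes expanded and solution depth d, and solves it by bisection; the function name is hypothetical and the definition is not stated on the slide.

```python
def effective_branching_factor(nodes_expanded: int, depth: int) -> float:
    """Solve N + 1 = 1 + b + b^2 + ... + b^d for b by bisection (assumed definition)."""
    target = nodes_expanded + 1

    def tree_size(b: float) -> float:
        return sum(b ** level for level in range(depth + 1))

    low, high = 1.0, float(nodes_expanded)  # tree_size is increasing in b
    for _ in range(100):
        mid = (low + high) / 2
        if tree_size(mid) < target:
            low = mid
        else:
            high = mid
    return (low + high) / 2


# Node counts for d = 12 taken from the table above.
for name, nodes in [("IDS", 3_644_035), ("A*(h1)", 227), ("A*(h2)", 73)]:
    print(f"{name}: b* is about {effective_branching_factor(nodes, 12):.2f}")
```

A more informed heuristic shows up as a b* closer to 1, which ties the table back to the earlier claim that heuristics reduce the effective branching factor.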

When to Use Search Techniques
The search space is small, and
• there are no other available techniques, or
• it is not worth the effort to develop a more efficient technique.
The search space is large, and
• there are no other available techniques, and
• there exist “good” heuristics.

Conclusions
Frustration with uninformed search led to the idea of using domain specific knowledge in a search, so that one can intelligently explore only the relevant part of the search space that has a good chance of containing the goal state. These new techniques are called informed (heuristic) search strategies.
Even though heuristics improve the performance of informed search algorithms, they are still time consuming, especially for large instances.

References
• Chapter 3 of “Artificial Intelligence: A Modern Approach” by Stuart Russell and Peter Norvig.
• Chapter 4 of “Artificial Intelligence Illuminated” by Ben Coppin.
