Learning with Trees
Rob Nowak, University of Wisconsin-Madison
Collaborators: Rui Castro, Clay Scott, Rebecca Willett
www.ece.wisc.edu/~nowak
Artwork: Piet Mondrian

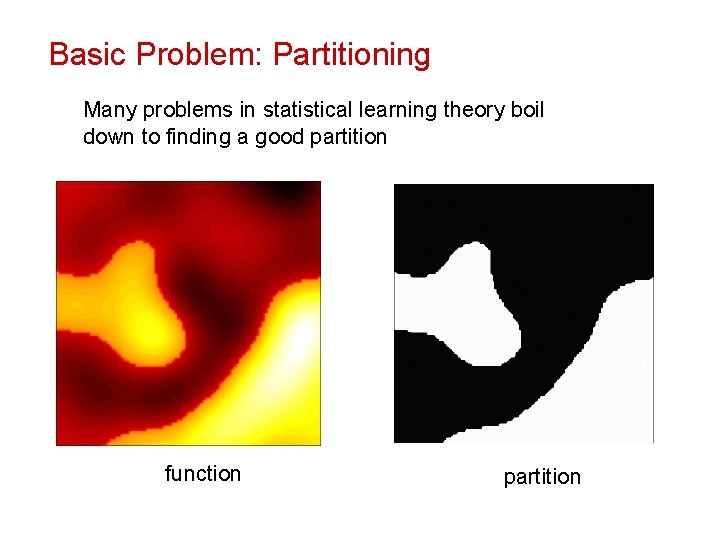

Basic Problem: Partitioning. Many problems in statistical learning theory boil down to finding a good partition. (Figure labels: function, partition.)

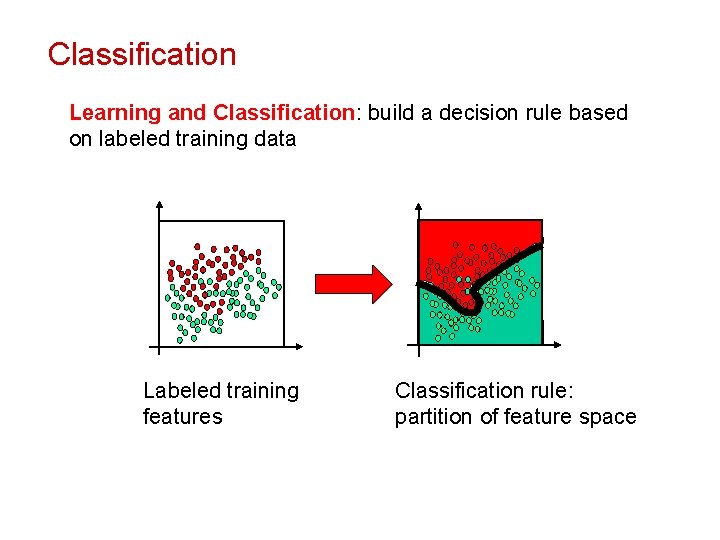

Classification. Learning and classification: build a decision rule based on labeled training data. (Figure labels: labeled training features; classification rule, a partition of feature space.)

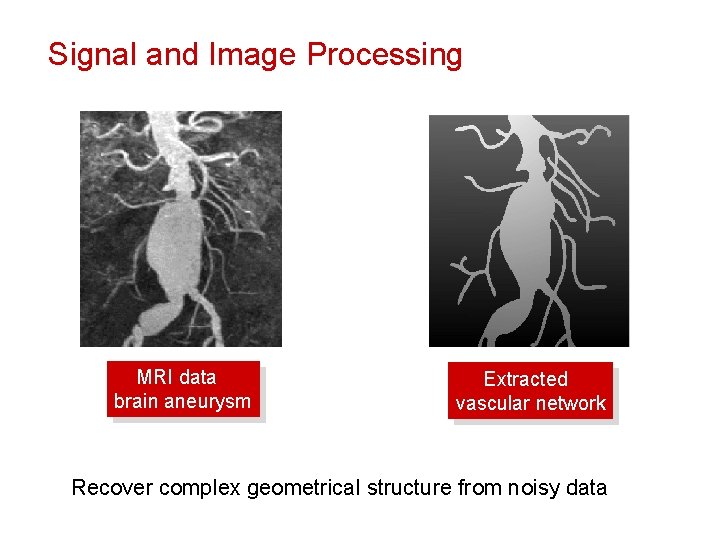

Signal and Image Processing. Recover complex geometrical structure from noisy data. (Figure labels: MRI data, brain aneurysm; extracted vascular network.)

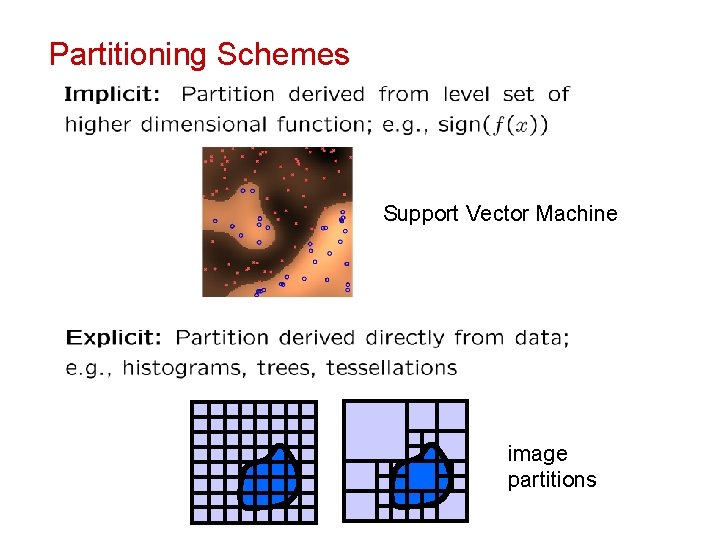

Partitioning Schemes. (Figure labels: Support Vector Machine; image partitions.)

Why Trees?
• Simplicity of design
• Interpretability
• Ease of implementation
• Good performance in practice
Trees are one of the most popular and widely used machine learning / data analysis tools.
CART: Breiman, Friedman, Olshen, and Stone, 1984, Classification and Regression Trees
C4.5: Quinlan, 1993, C4.5: Programs for Machine Learning
JPEG 2000: Image compression standard, 2000, http://www.jpeg.org/jpeg2000/

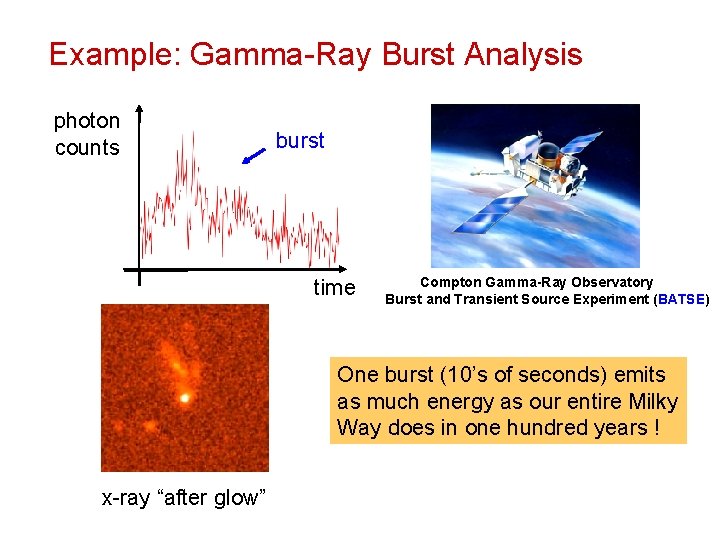

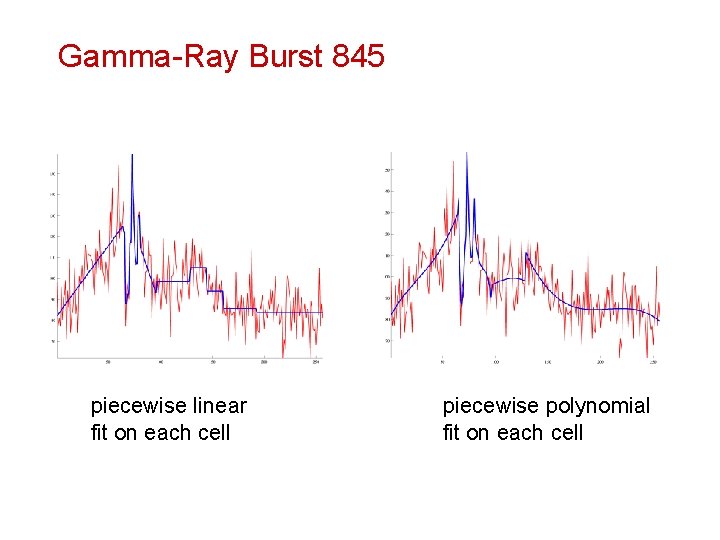

Example: Gamma-Ray Burst Analysis. Compton Gamma-Ray Observatory, Burst and Transient Source Experiment (BATSE). One burst (tens of seconds) emits as much energy as our entire Milky Way does in one hundred years! (Figure labels: photon counts vs. time; burst; X-ray “afterglow”.)

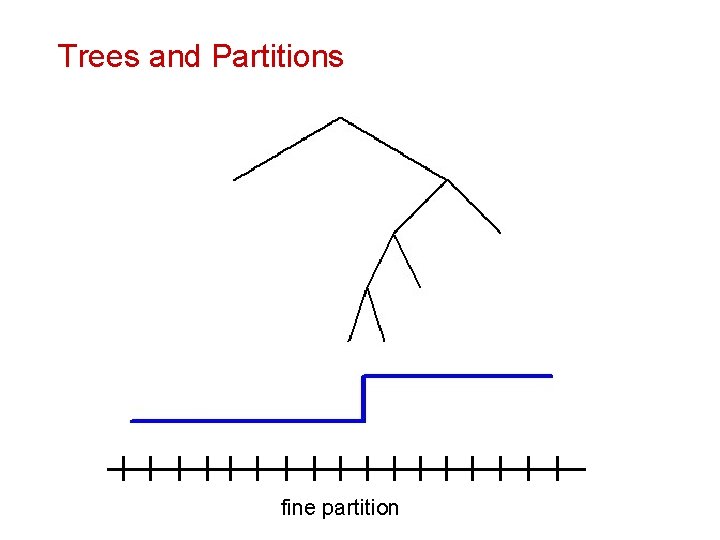

Trees and Partitions. (Figure labels: coarse partition, fine partition.)

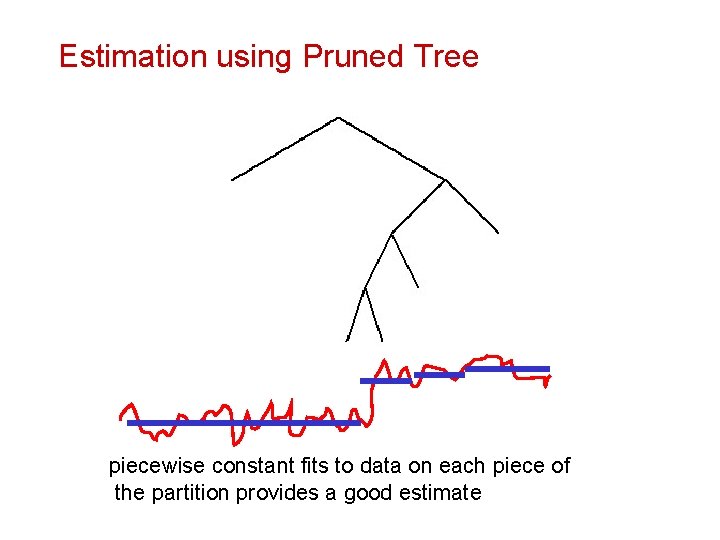

Estimation Using a Pruned Tree. Each leaf corresponds to a sample f(t_i), i = 0, …, N-1. Piecewise constant fits to the data on each piece of the partition provide a good estimate.
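A minimal sketch of the piecewise constant fit described on this slide, assuming the pruned tree has already been reduced to a list of breakpoints into the N samples. The function name `piecewise_constant_fit` and the breakpoint representation are illustrative assumptions, not taken from the slides.

```python
from statistics import mean

def piecewise_constant_fit(y, breakpoints):
    """Replace each sample by the mean of the partition cell that contains it.

    `breakpoints` are indices strictly between 0 and len(y); cell k covers
    samples [edges[k], edges[k+1]). (Hypothetical representation.)
    """
    edges = [0, *sorted(breakpoints), len(y)]
    fit = []
    for lo, hi in zip(edges[:-1], edges[1:]):
        cell_mean = mean(y[lo:hi])          # constant fit on this cell
        fit.extend([cell_mean] * (hi - lo))
    return fit

samples = [1.0, 3.0, 2.0, 2.0, 10.0, 12.0, 11.0, 11.0]
estimate = piecewise_constant_fit(samples, breakpoints=[4])
```

A finer list of breakpoints follows the data more closely; a coarser one averages over more samples per cell.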

Gamma-Ray Burst 845. (Panel labels: piecewise linear fit on each cell; piecewise polynomial fit on each cell.)

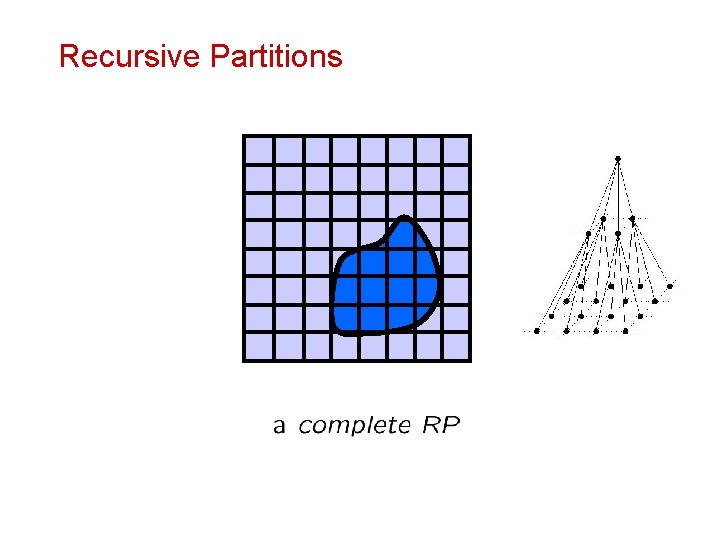

Recursive Partitions

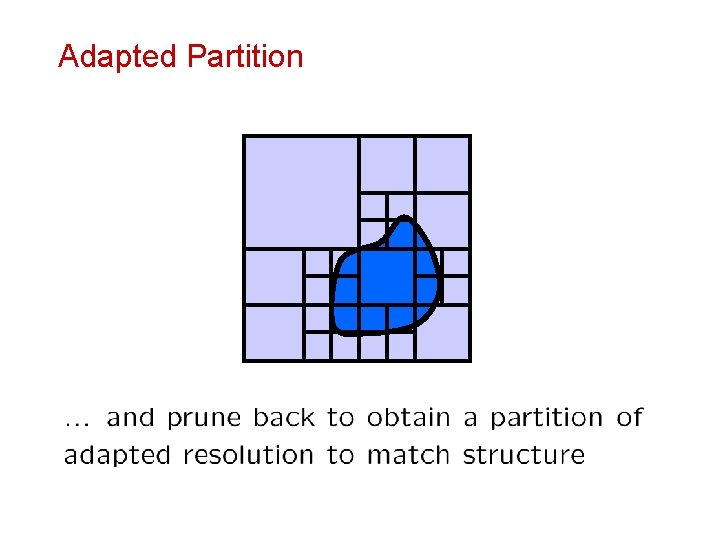

Adapted Partition

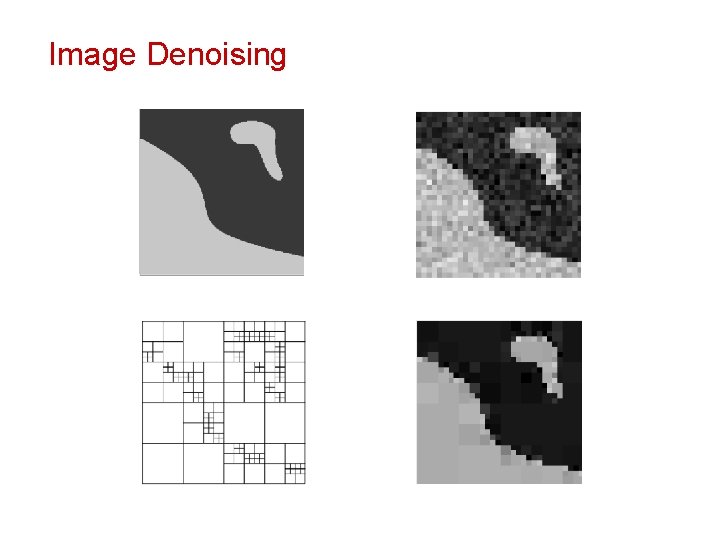

Image Denoising

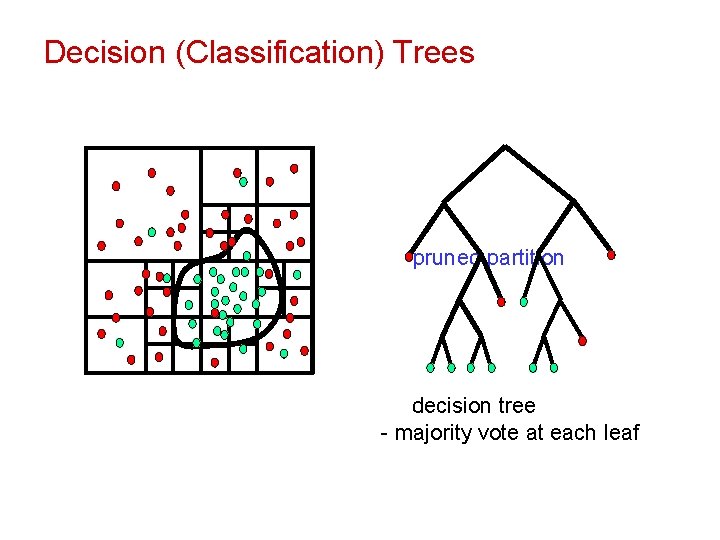

Decision (Classification) Trees. Decision tree: majority vote at each leaf. (Figure labels: Bayes decision boundary; labeled training data; complete partition; pruned partition.)

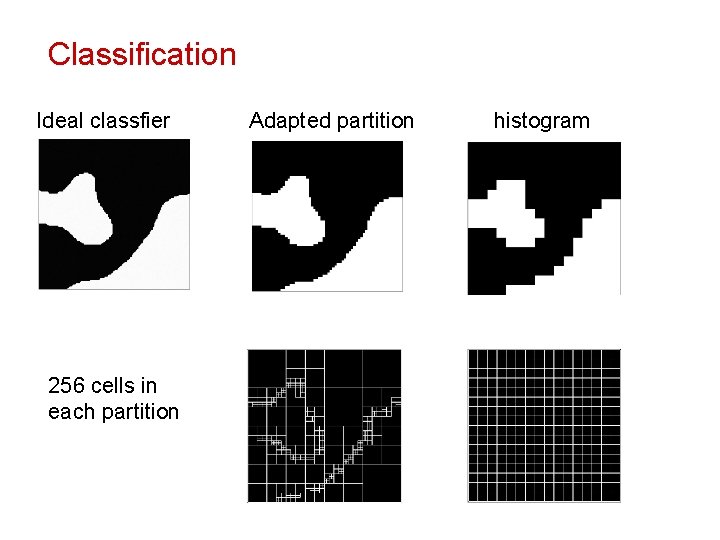
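The majority-vote rule can be sketched as follows, assuming each training point has already been assigned to a leaf (cell) of the partition. The names `majority_vote_labels` and `empirical_risk`, and the use of string cell identifiers, are assumptions for illustration.

```python
from collections import Counter, defaultdict

def majority_vote_labels(cell_ids, labels):
    """Map each cell to the most frequent training label that falls in it."""
    votes = defaultdict(Counter)
    for cell, label in zip(cell_ids, labels):
        votes[cell][label] += 1
    return {cell: counts.most_common(1)[0][0] for cell, counts in votes.items()}

def empirical_risk(cell_ids, labels, leaf_label):
    """Fraction of training points misclassified by the per-leaf labels."""
    errors = sum(leaf_label[c] != y for c, y in zip(cell_ids, labels))
    return errors / len(labels)

cells = ["A", "A", "A", "B", "B"]   # hypothetical leaf assignment
y = [1, 1, 0, 0, 0]
rule = majority_vote_labels(cells, y)
risk = empirical_risk(cells, y, rule)
```

Ties are broken by whichever label was seen first in the cell, which is an arbitrary choice made for this sketch.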

Classification. 256 cells in each partition. (Panel labels: ideal classifier; adapted partition; histogram.)

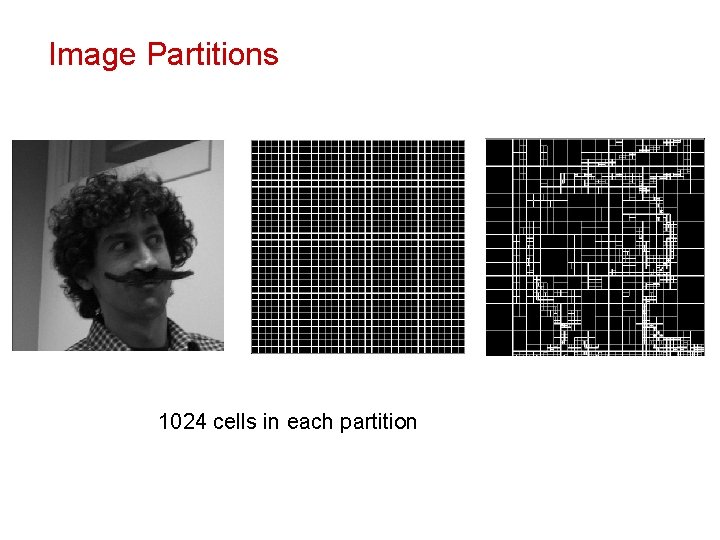

Image Partitions 1024 cells in each partition

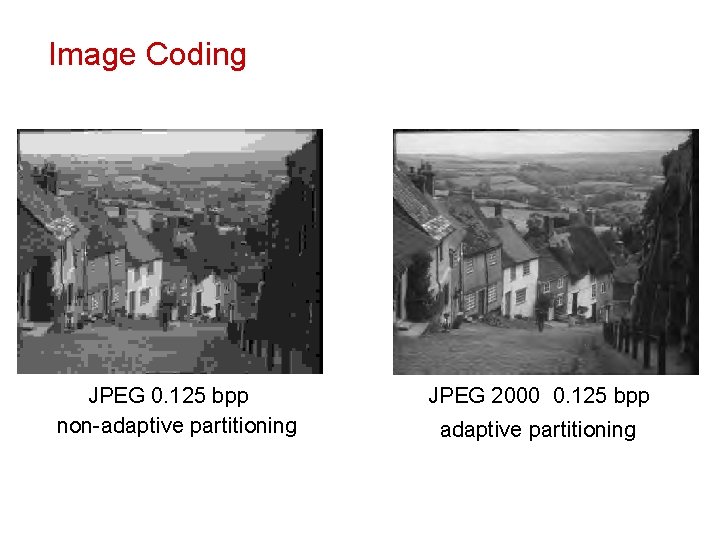

Image Coding. JPEG, 0.125 bpp: non-adaptive partitioning. JPEG 2000, 0.125 bpp: adaptive partitioning.

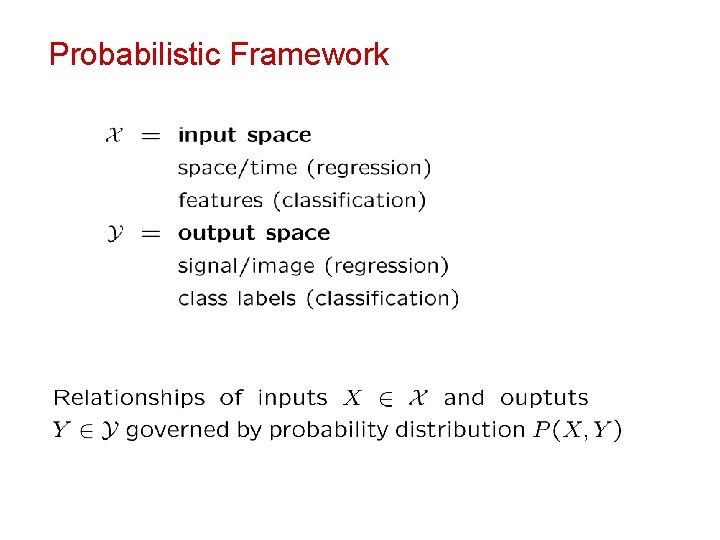

Probabilistic Framework

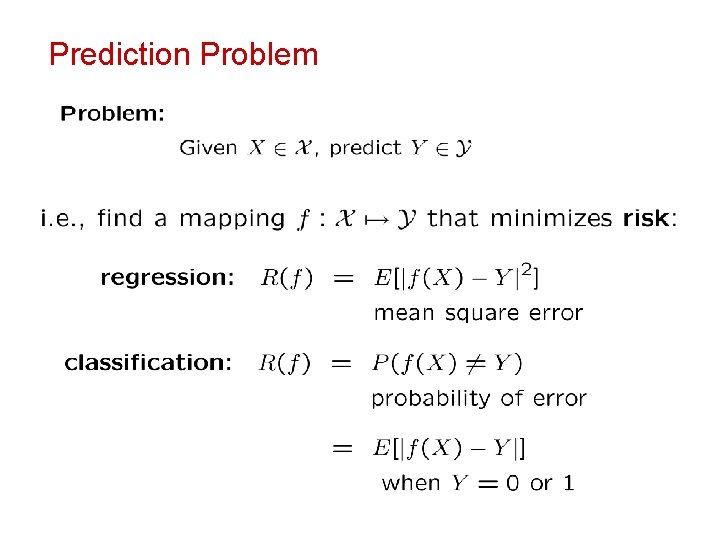

Prediction Problem

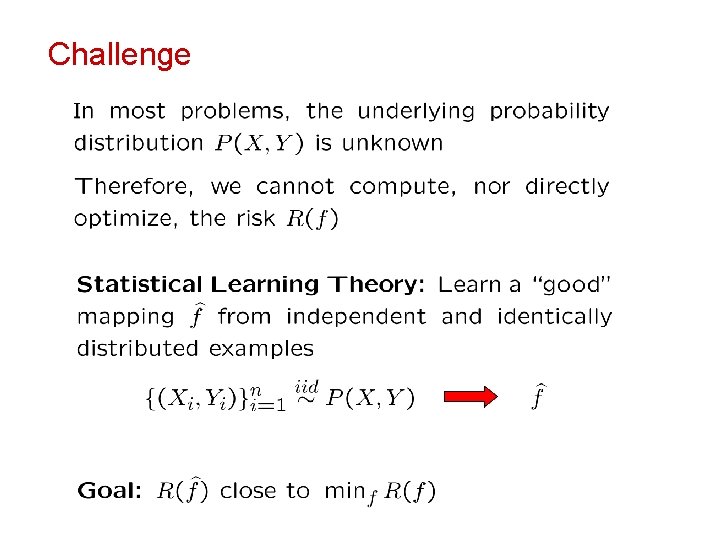

Challenge

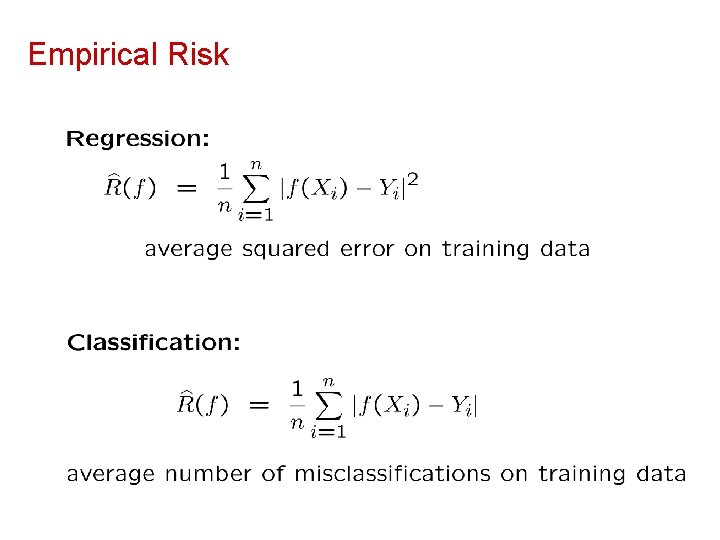

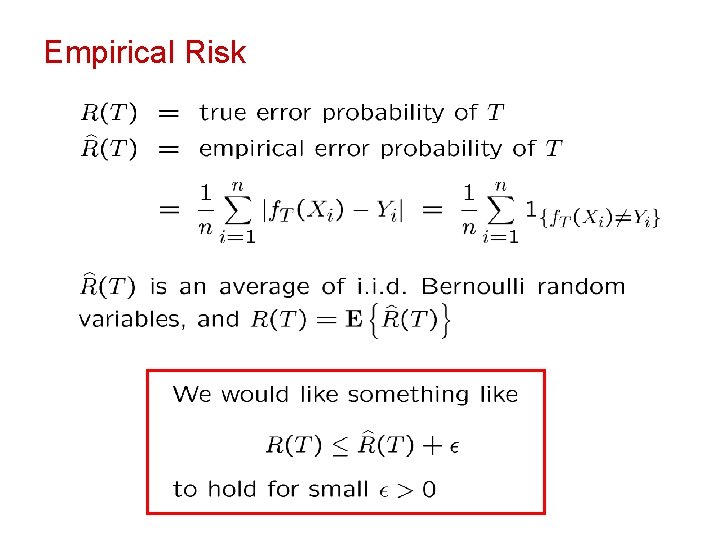

Empirical Risk

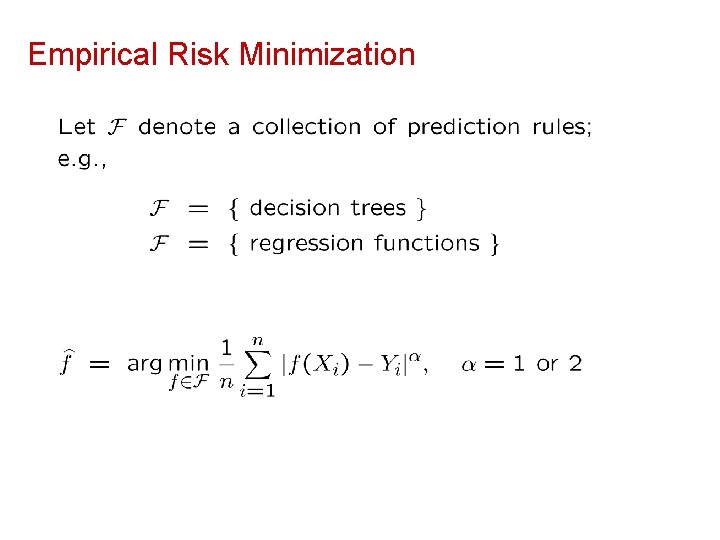

Empirical Risk Minimization

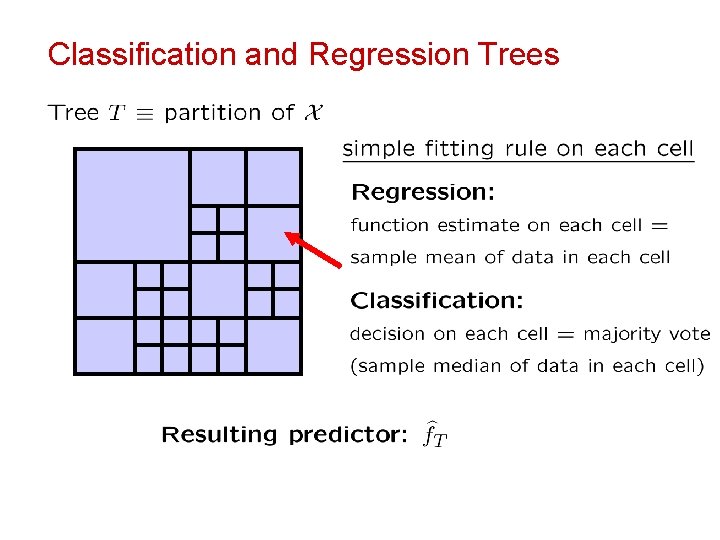

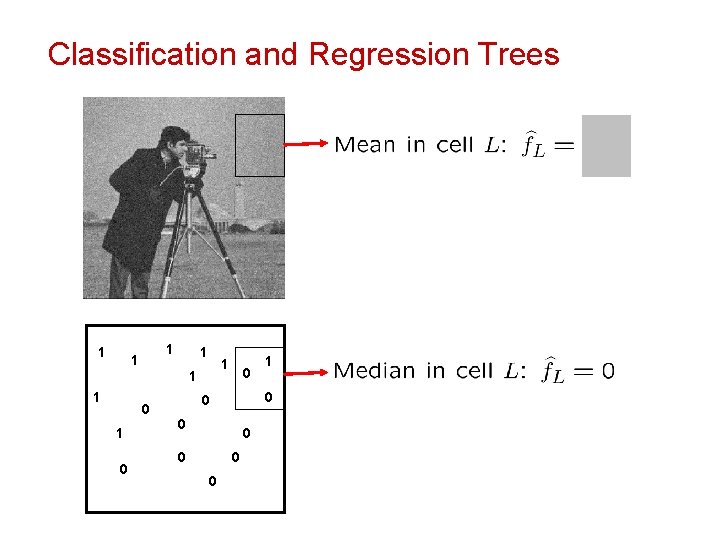

Classification and Regression Trees. (Figure labels, leaf values: 1 1 1 1 0 0 0 0 0 1.)

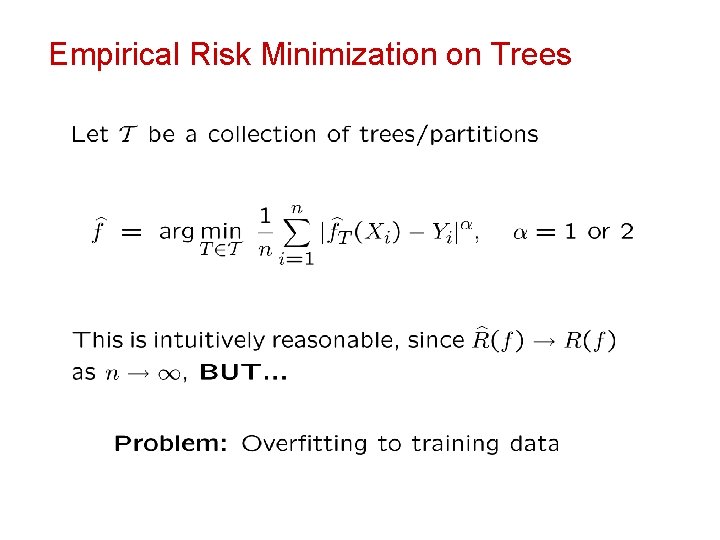

Empirical Risk Minimization on Trees

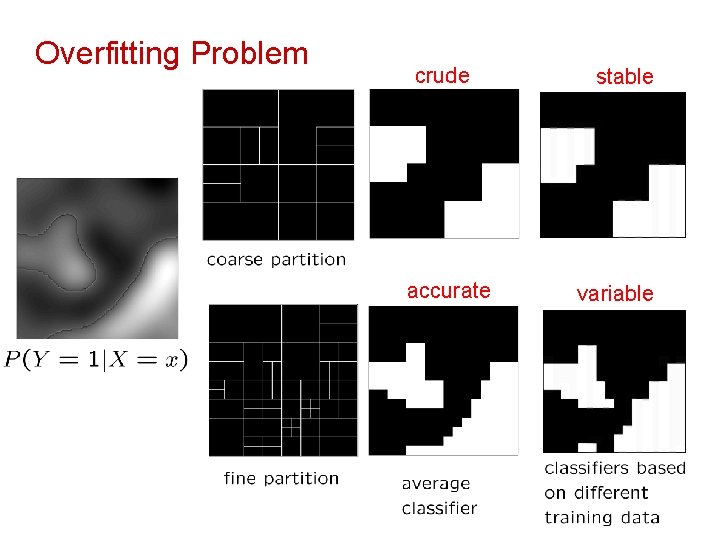

Overfitting Problem: crude but stable vs. accurate but variable.

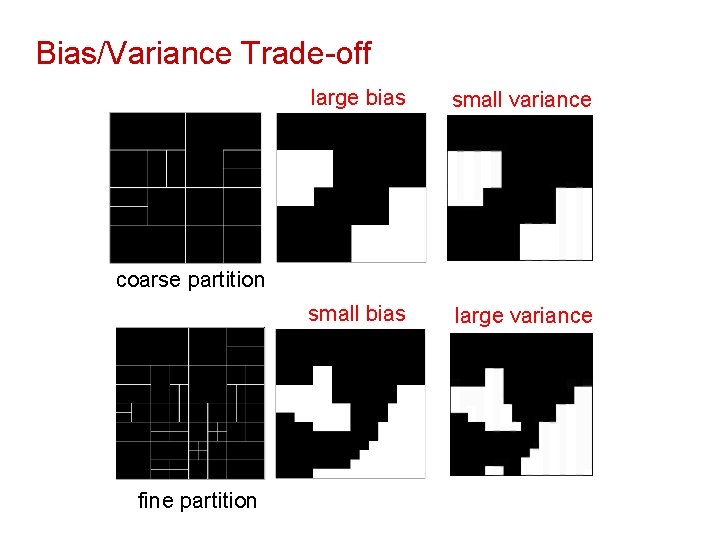

Bias/Variance Trade-off. Coarse partition: large bias, small variance. Fine partition: small bias, large variance.

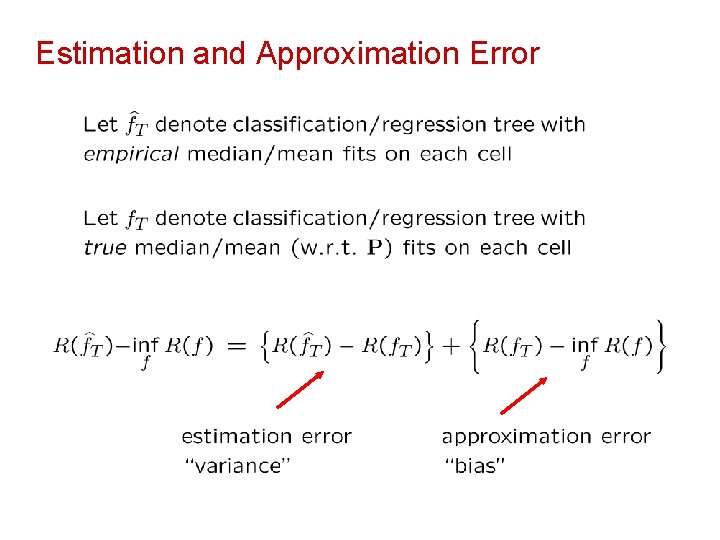

Estimation and Approximation Error

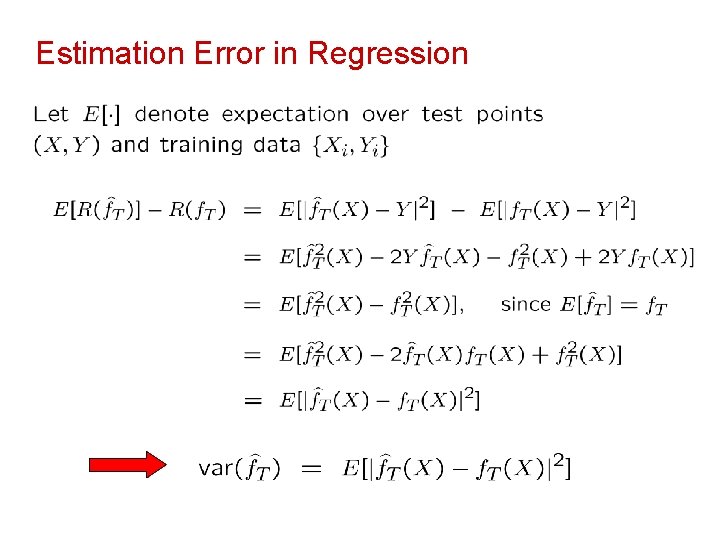

Estimation Error in Regression

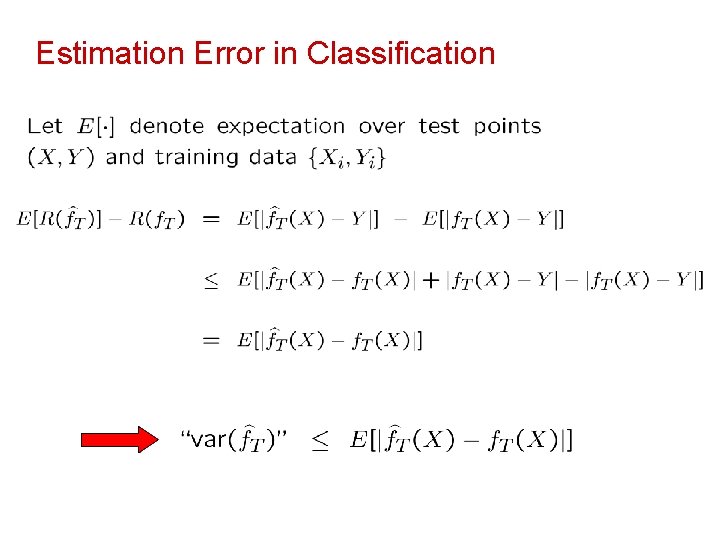

Estimation Error in Classification

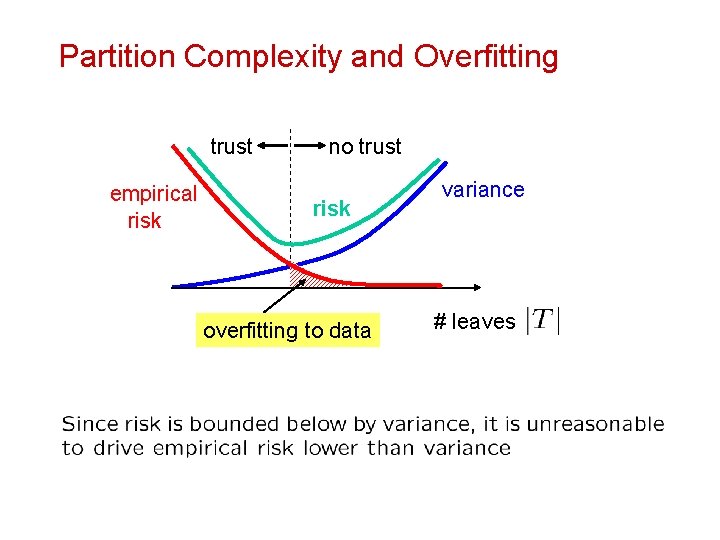

Partition Complexity and Overfitting. (Plot against # leaves: risk, empirical risk, variance; regions marked "trust" and "no trust", the latter overfitting to data.)

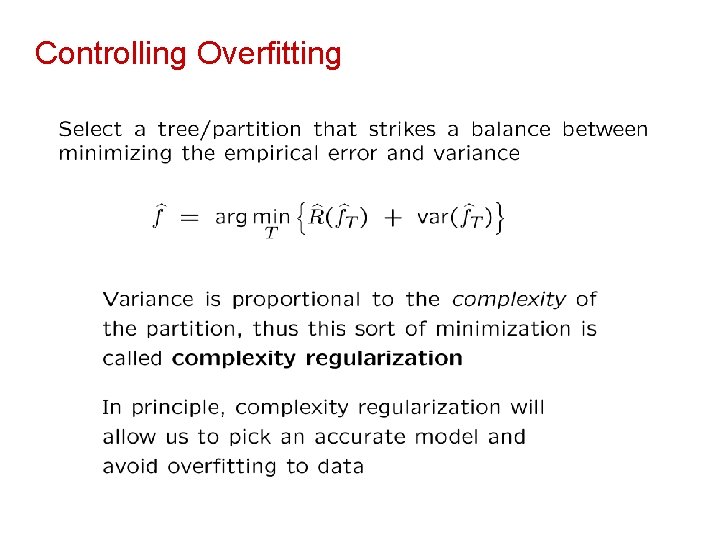

Controlling Overfitting

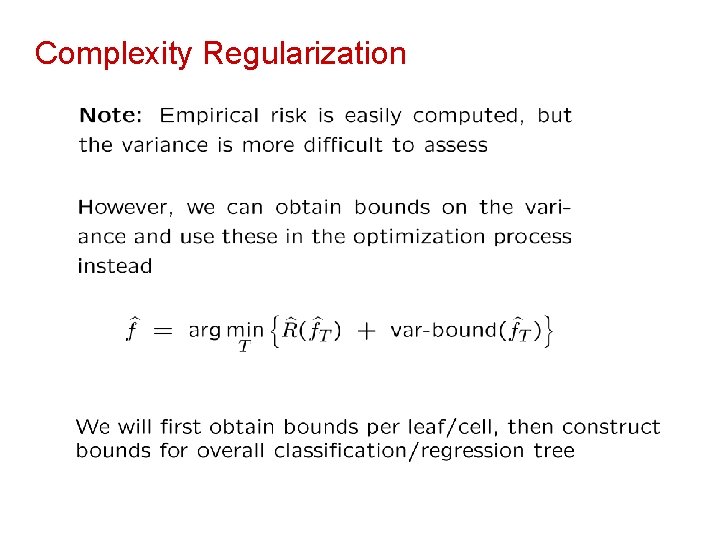

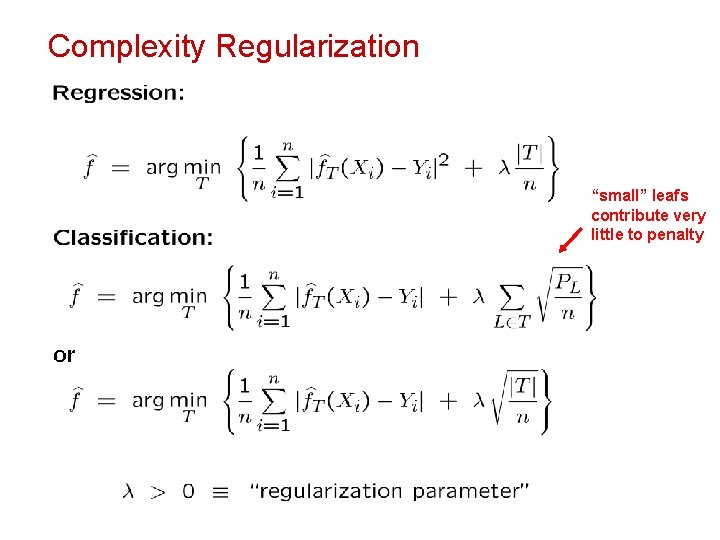

Complexity Regularization

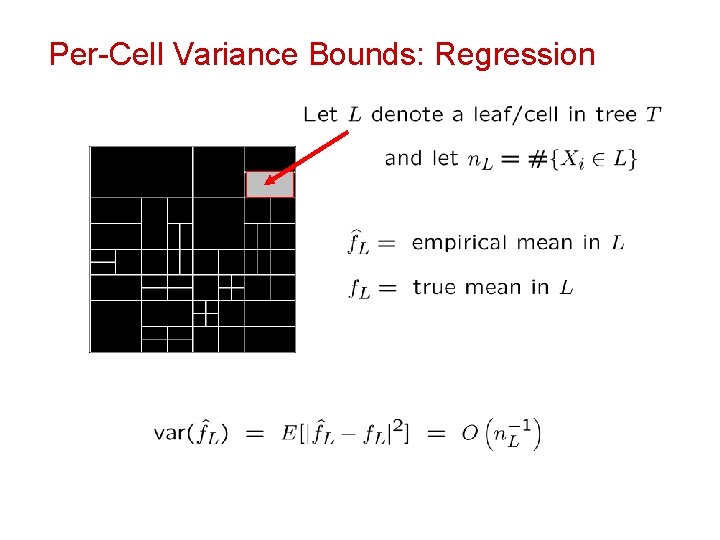

Per-Cell Variance Bounds: Regression

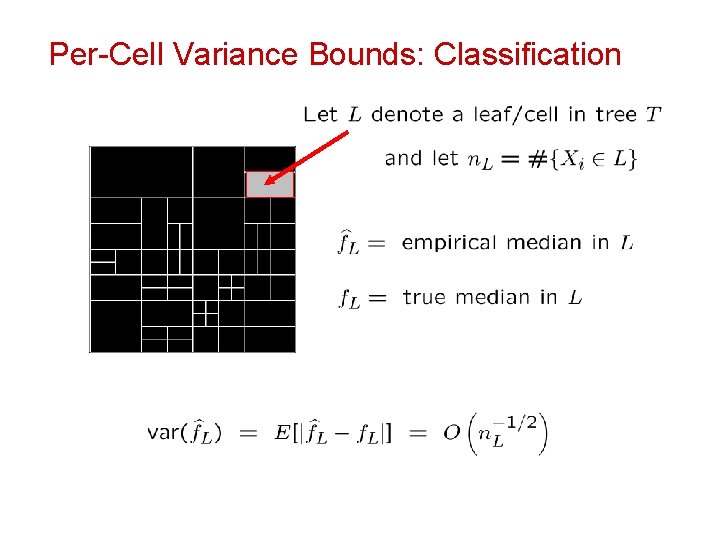

Per-Cell Variance Bounds: Classification

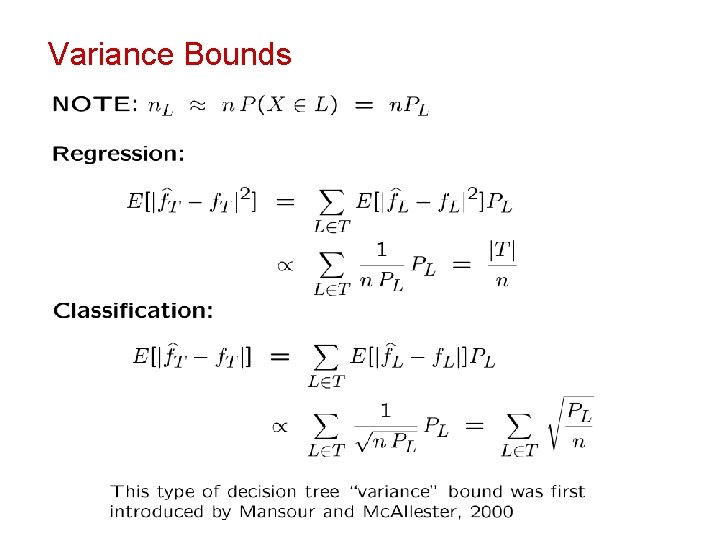

Variance Bounds

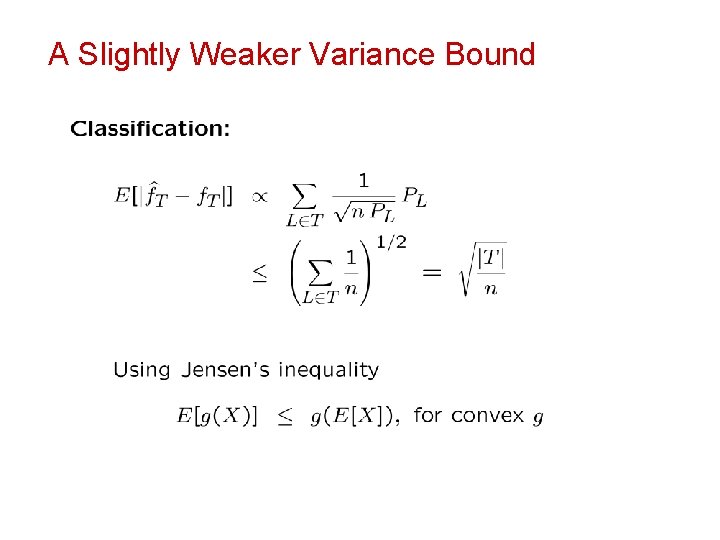

A Slightly Weaker Variance Bound

Complexity Regularization: “small” leaves contribute very little to the penalty.

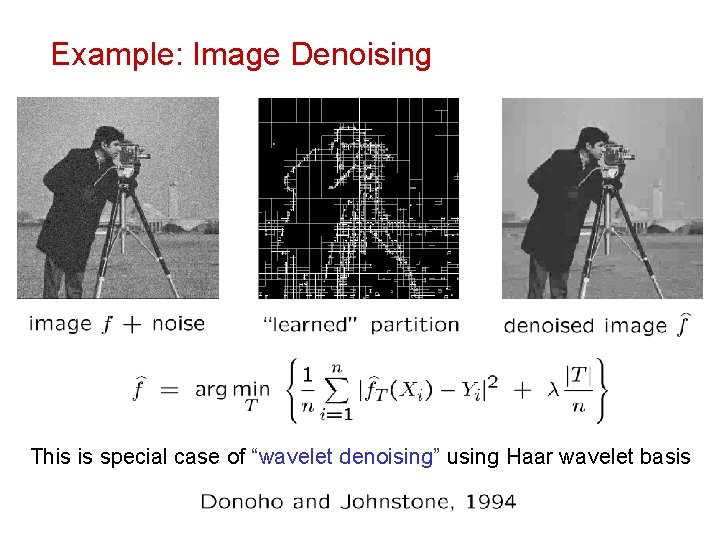
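Complexity-regularized pruning with an additive per-leaf penalty can be sketched with a bottom-up recursion over a 1-D dyadic tree. This is a sketch under stated assumptions: squared-error data fit, a sample count that is a power of two, and a caller-supplied `leaf_penalty(lo, hi)`. The actual penalty appears only in the slide image and is not reproduced here; `prune_dyadic` is a hypothetical name.

```python
def prune_dyadic(y, leaf_penalty):
    """Minimize (sum of squared errors) + (sum of leaf penalties) over dyadic partitions.

    Returns (cost, breakpoints). len(y) is assumed to be a power of two.
    """
    def sse(lo, hi):
        seg = y[lo:hi]
        m = sum(seg) / len(seg)
        return sum((v - m) ** 2 for v in seg)

    def best(lo, hi):
        leaf_cost = sse(lo, hi) + leaf_penalty(lo, hi)
        if hi - lo == 1:
            return leaf_cost, []
        mid = (lo + hi) // 2
        left_cost, left_cuts = best(lo, mid)
        right_cost, right_cuts = best(mid, hi)
        split_cost = left_cost + right_cost
        if split_cost < leaf_cost:            # keep the split only if it pays off
            return split_cost, left_cuts + [mid] + right_cuts
        return leaf_cost, []                  # otherwise prune to a single leaf

    return best(0, len(y))

data = [0.0, 0.0, 0.0, 0.0, 5.0, 5.0, 5.0, 5.0]
cost, cuts = prune_dyadic(data, leaf_penalty=lambda lo, hi: 1.0)
```

Because the penalty is a sum over leaves, each subtree can be optimized independently. Passing a `leaf_penalty` that shrinks with cell size makes small leaves cheap, in the spirit of the remark on the slide.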

Example: Image Denoising. This is a special case of “wavelet denoising” using the Haar wavelet basis.

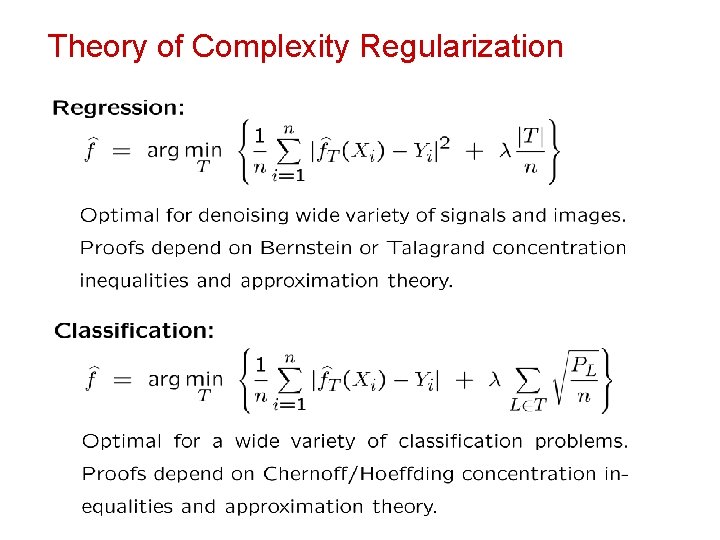
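To illustrate the Haar connection in one dimension, here is a minimal sketch of plain hard thresholding in an orthonormal Haar basis. It assumes a signal length that is a power of two and does not enforce any tree structure on the kept coefficients; all function names are illustrative.

```python
import math

SQRT2 = math.sqrt(2.0)

def haar_forward(x):
    """Orthonormal Haar transform: [approximation, details from coarse to fine]."""
    approx, details = list(x), []
    while len(approx) > 1:
        pairs = list(zip(approx[0::2], approx[1::2]))
        details = [(a - b) / SQRT2 for a, b in pairs] + details
        approx = [(a + b) / SQRT2 for a, b in pairs]
    return approx + details

def haar_inverse(c):
    """Invert haar_forward."""
    approx, pos = c[:1], 1
    while pos < len(c):
        detail = c[pos:pos + len(approx)]
        pos += len(approx)
        approx = [v for a, d in zip(approx, detail)
                  for v in ((a + d) / SQRT2, (a - d) / SQRT2)]
    return approx

def haar_denoise(y, threshold):
    """Zero every detail coefficient whose magnitude is at most `threshold`."""
    c = haar_forward(y)
    kept = c[:1] + [d if abs(d) > threshold else 0.0 for d in c[1:]]
    return haar_inverse(kept)

denoised = haar_denoise([4.0, 6.0, 10.0, 12.0], threshold=2.0)
```

In this example the two fine-scale details are removed and the coarse one is kept, so the output is constant on each half of the signal, which is the same as a piecewise constant fit on a two-cell dyadic partition.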

Theory of Complexity Regularization

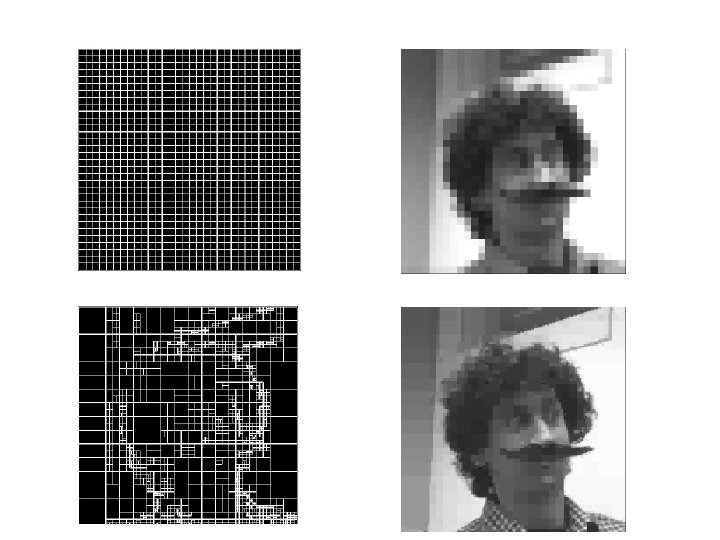

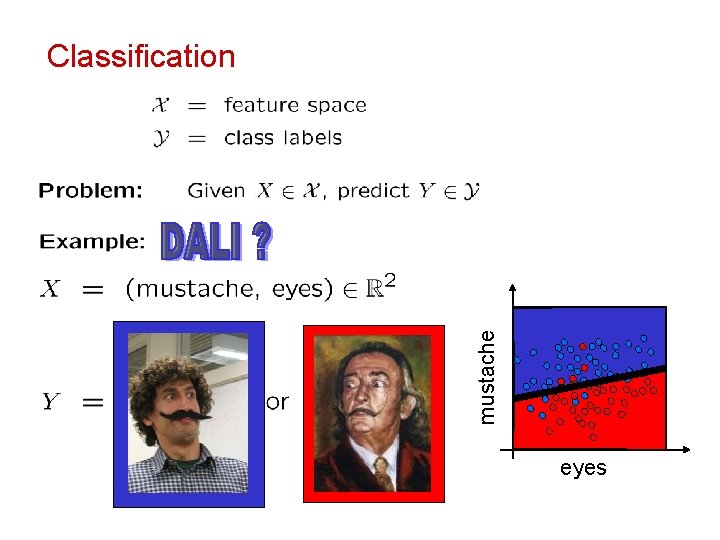

Classification. (Figure labels: mustache, eyes.)

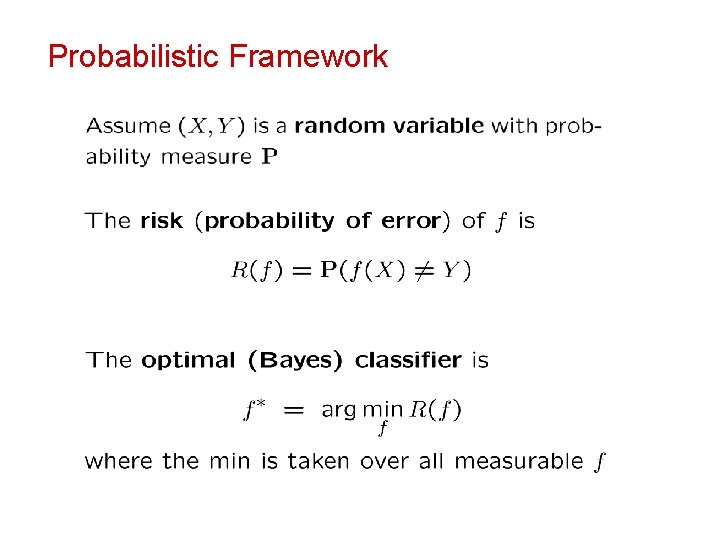

Probabilistic Framework

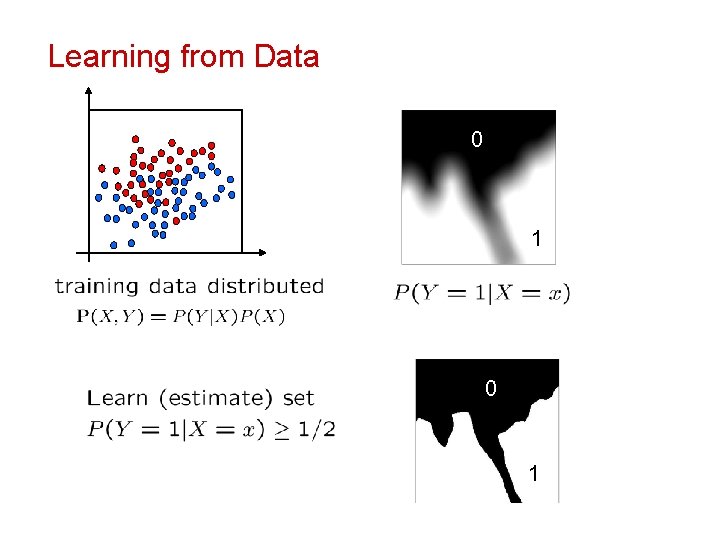

Learning from Data. (Figure labels: 0, 1.)

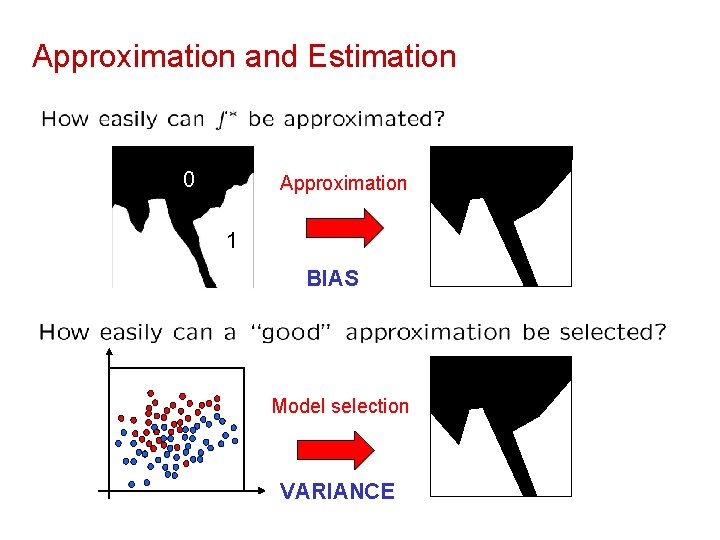

Approximation and Estimation. (Figure labels: 0, 1; approximation, BIAS; model selection, VARIANCE.)

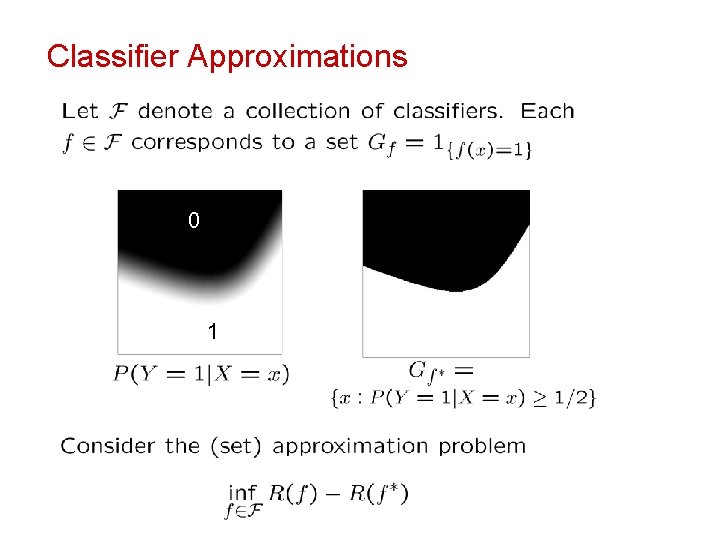

Classifier Approximations. (Figure labels: 0, 1.)

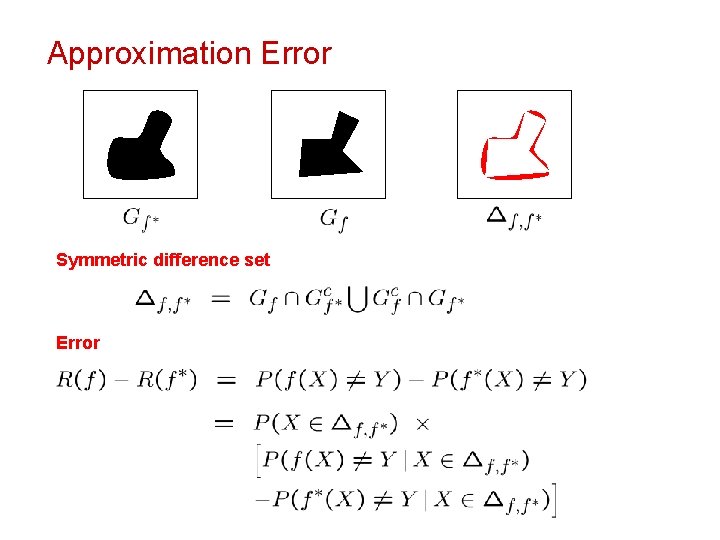

Approximation Error. (Figure labels: symmetric difference set; error.)

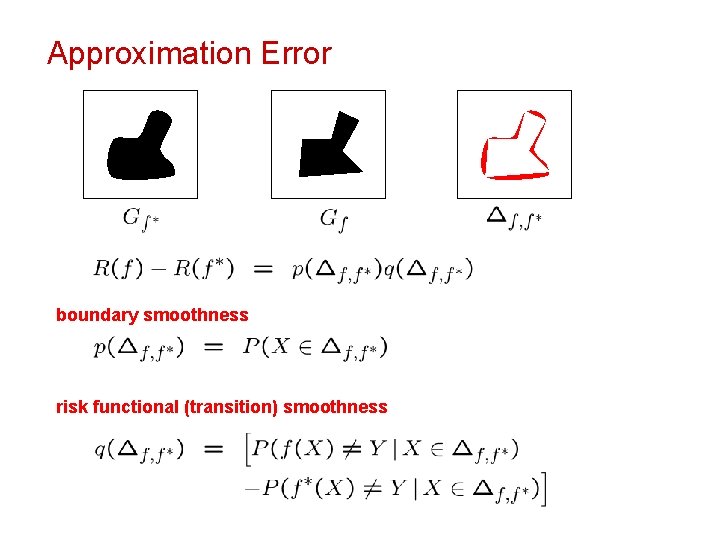

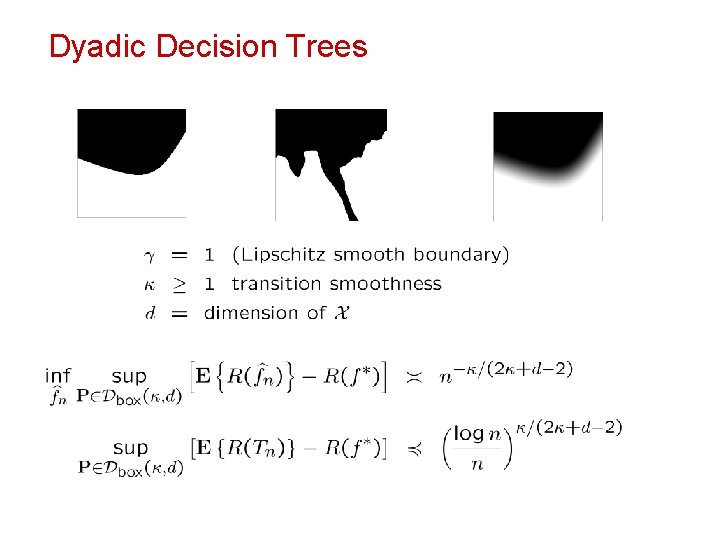

Approximation Error. (Figure labels: boundary smoothness; risk functional (transition) smoothness.)

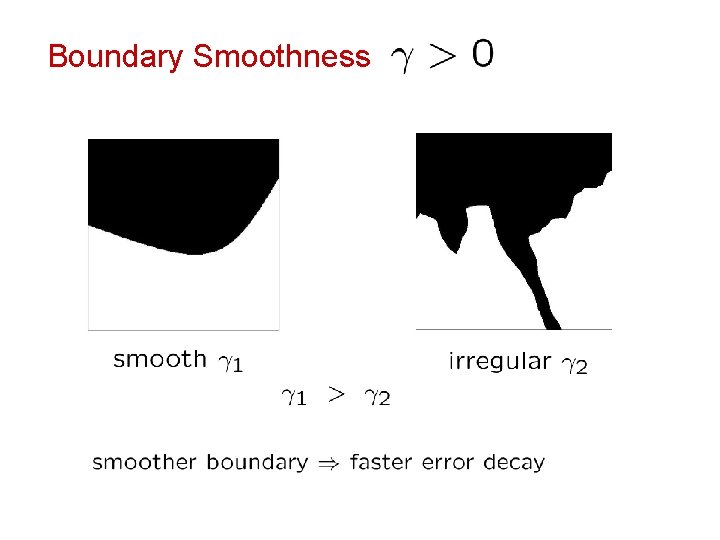

Boundary Smoothness

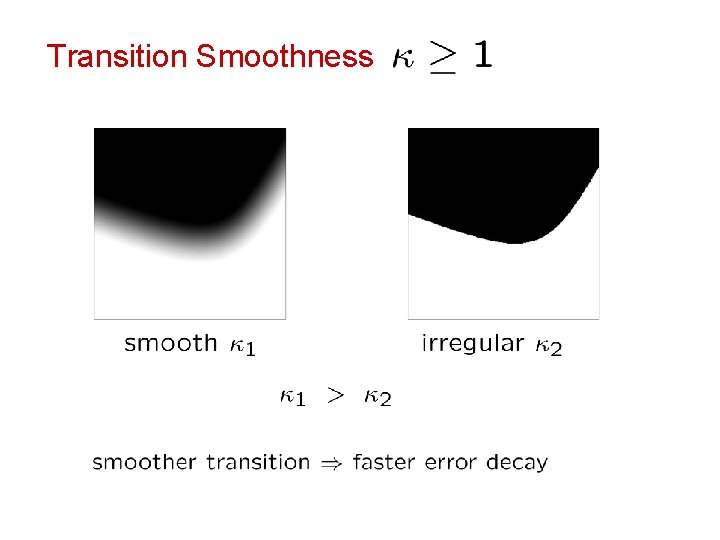

Transition Smoothness

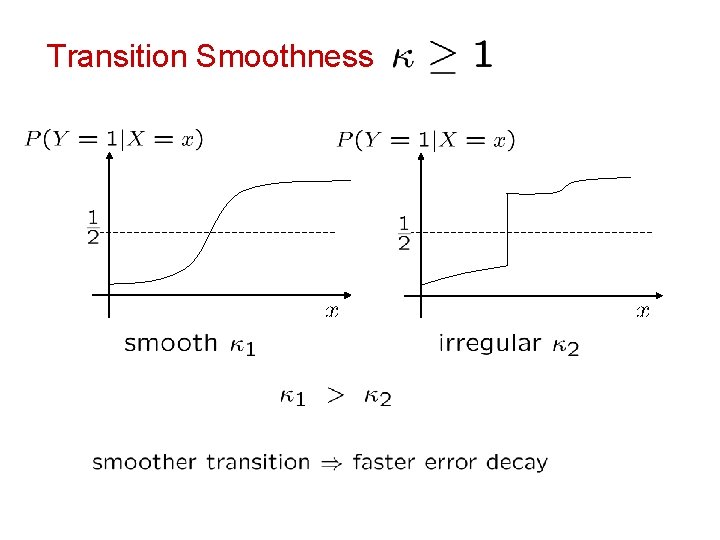

Transition Smoothness

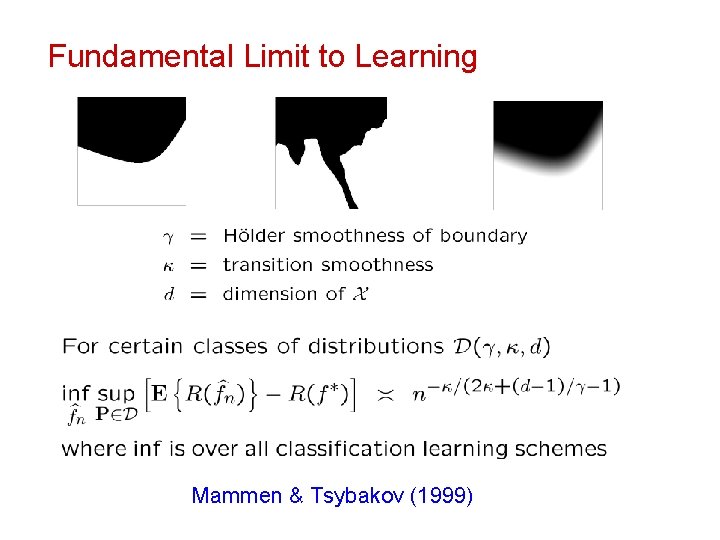

Fundamental Limit to Learning: Mammen & Tsybakov (1999)

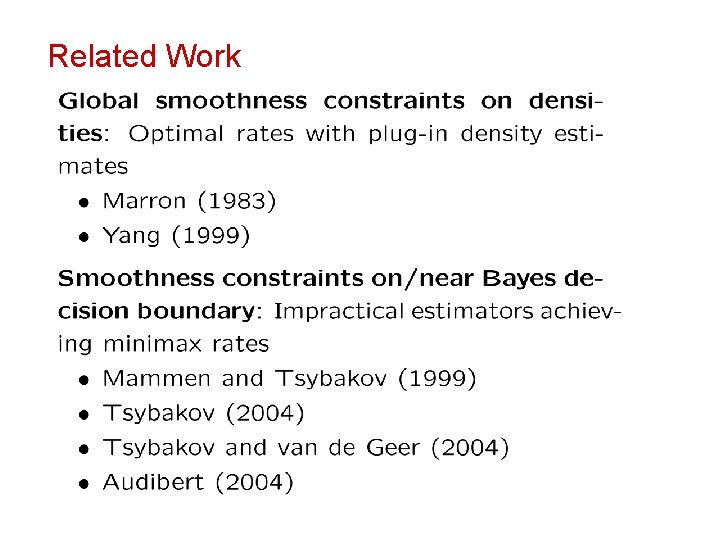

Related Work

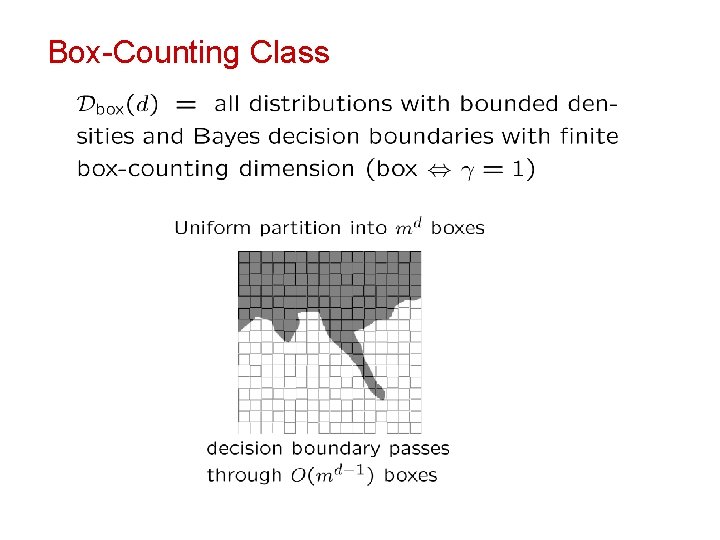

Box-Counting Class

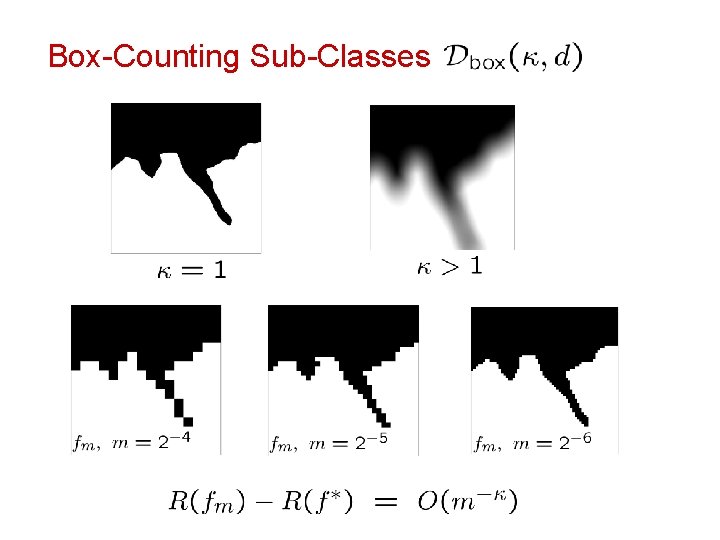

Box-Counting Sub-Classes

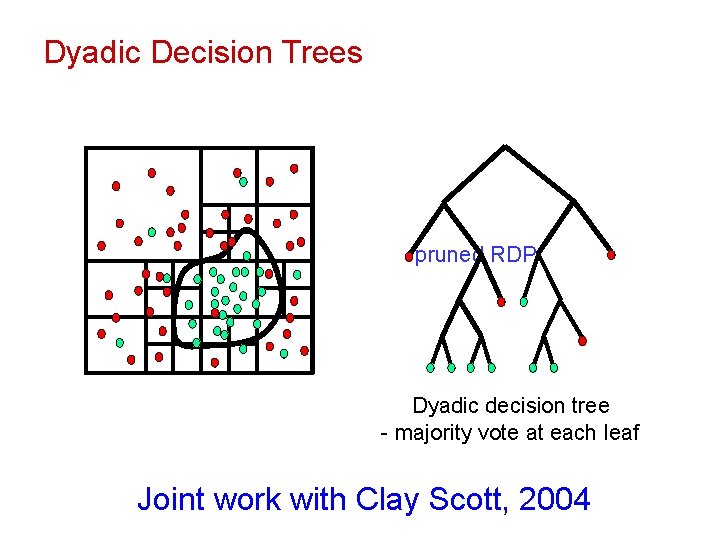

Dyadic Decision Trees. Dyadic decision tree: majority vote at each leaf. Joint work with Clay Scott, 2004. (Figure labels: Bayes decision boundary; labeled training data; complete RDP; pruned RDP.)

Dyadic Decision Trees

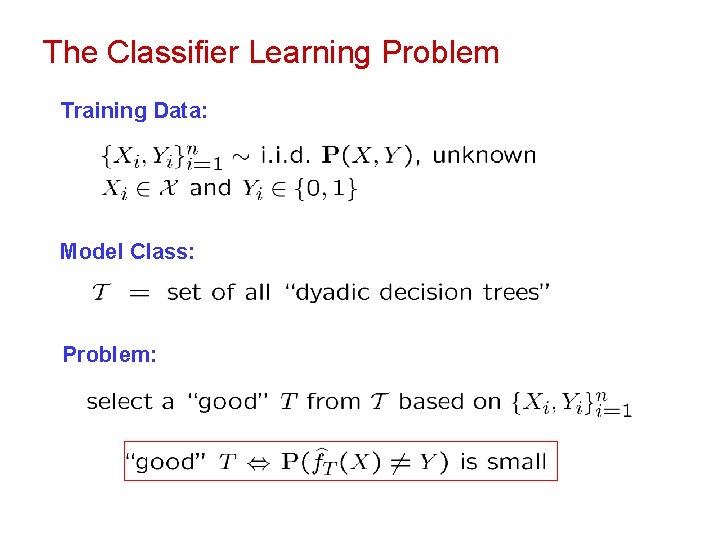

The Classifier Learning Problem Training Data: Model Class: Problem:

Empirical Risk

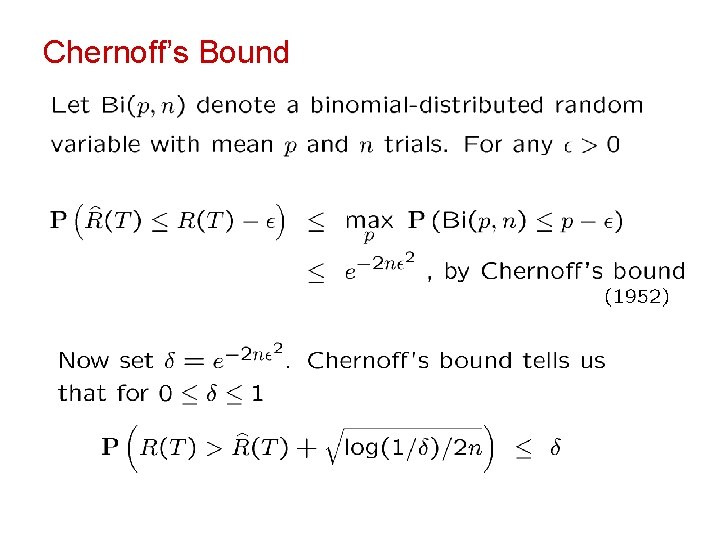

Chernoff’s Bound

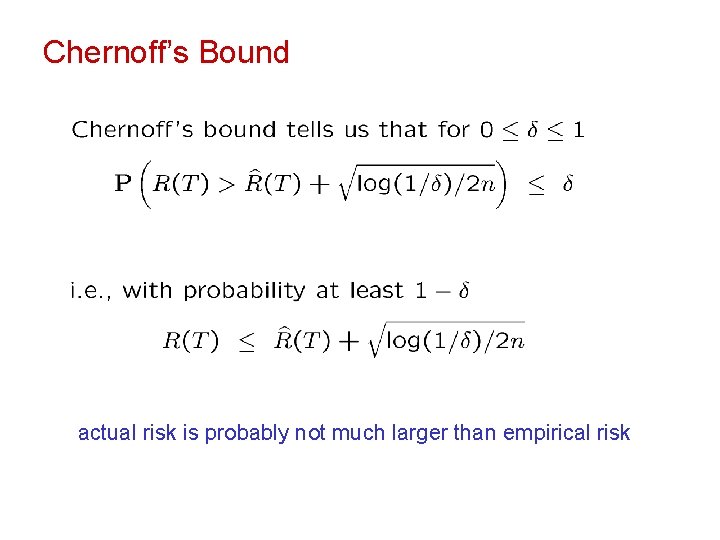

Chernoff’s Bound: the actual risk is probably not much larger than the empirical risk.

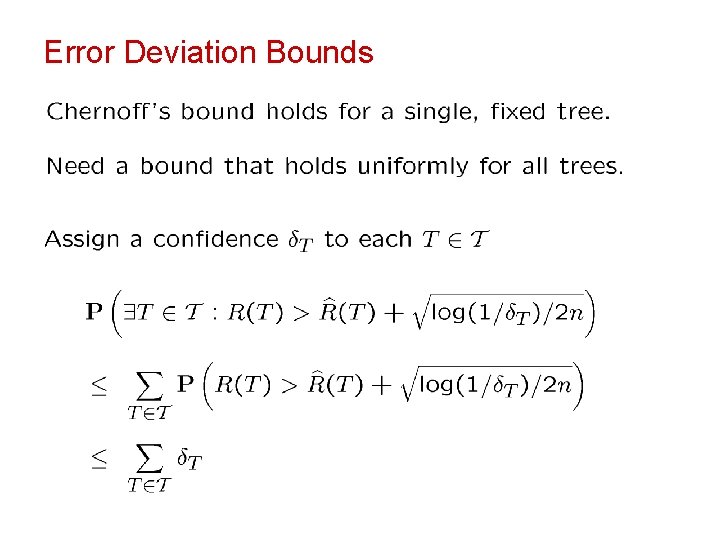
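For a single fixed classifier with 0/1 loss, the standard one-sided Chernoff/Hoeffding inequality gives P(R - R_hat >= eps) <= exp(-2 n eps^2). Equivalently, with probability at least 1 - delta, R <= R_hat + sqrt(log(1/delta) / (2n)). The sketch below evaluates that deviation term. The slide's exact statement is in the image and may use different constants; `chernoff_deviation` is an illustrative name.

```python
import math

def chernoff_deviation(n, delta):
    """Width eps such that R <= R_hat + eps holds with probability >= 1 - delta.

    Assumes one fixed classifier, i.i.d. samples, and a loss bounded in [0, 1].
    """
    return math.sqrt(math.log(1.0 / delta) / (2.0 * n))

def risk_upper_bound(empirical_risk, n, delta):
    """High-probability upper bound on the true risk of a fixed classifier."""
    return empirical_risk + chernoff_deviation(n, delta)

eps = chernoff_deviation(n=1000, delta=0.05)
```

The deviation shrinks like 1/sqrt(n): quadrupling the sample size halves it.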

Error Deviation Bounds

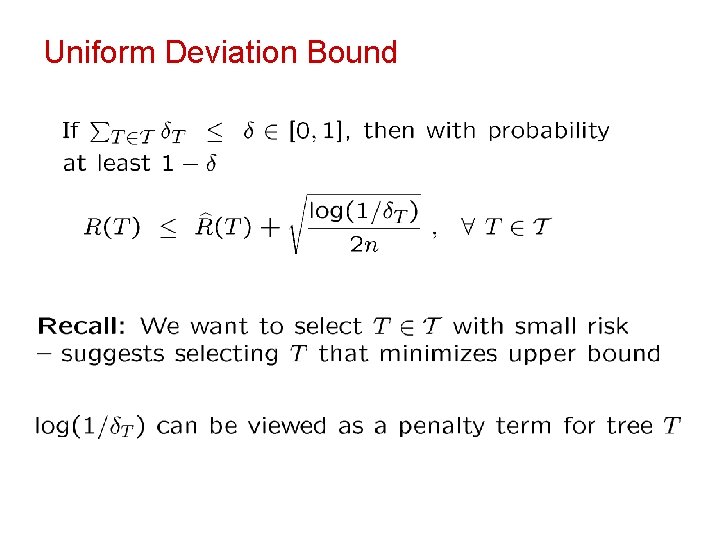

Uniform Deviation Bound

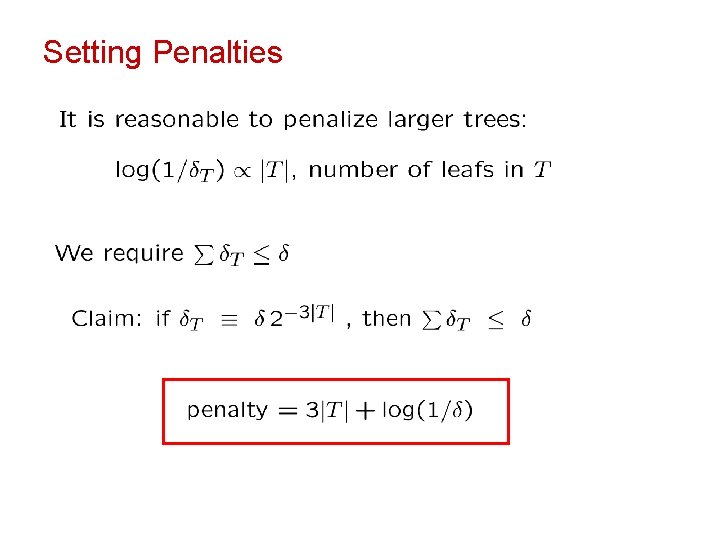

Setting Penalties

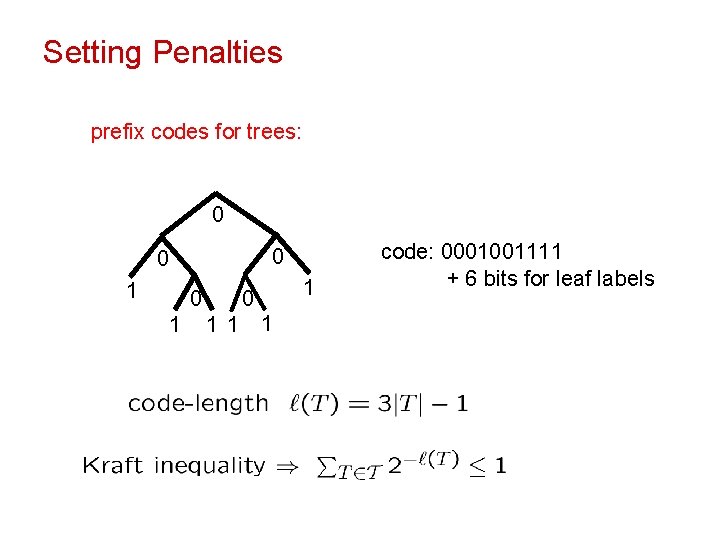

Setting Penalties. Prefix codes for trees: code 0001001111 + 6 bits for leaf labels. (Figure labels, node bits: 0 0 0 1 0 1 1.)

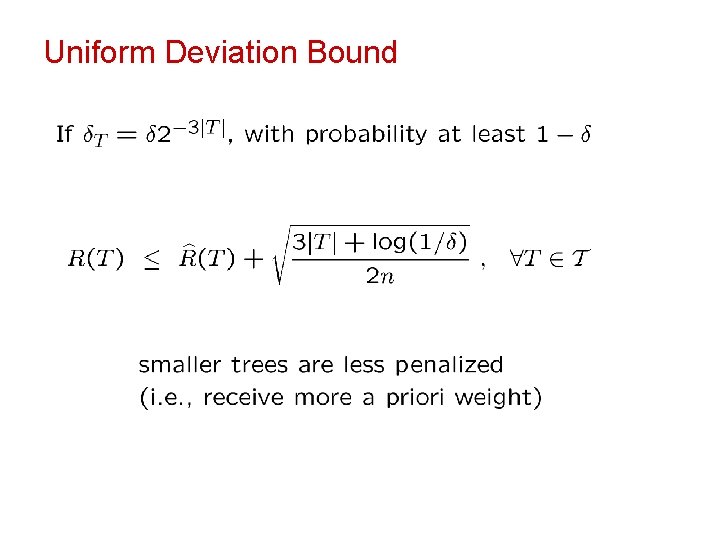
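One way to build such a prefix code is a preorder traversal that emits one structure bit per node, followed by one label bit per leaf. The bit convention below (0 = internal node, 1 = leaf) and the nested-tuple tree representation are assumptions made for this sketch. Under this convention a tree with |T| leaves costs (2|T| - 1) + |T| = 3|T| - 1 bits.

```python
def encode_tree(tree):
    """Encode a binary tree as (structure_bits, label_bits).

    `tree` is either a leaf label (0 or 1) or a tuple (left, right).
    Structure bits follow a preorder traversal: "0" = internal, "1" = leaf.
    """
    if isinstance(tree, tuple):
        left_struct, left_labels = encode_tree(tree[0])
        right_struct, right_labels = encode_tree(tree[1])
        return "0" + left_struct + right_struct, left_labels + right_labels
    return "1", str(tree)

def code_length(tree):
    """Total number of bits used to describe the tree and its leaf labels."""
    structure, labels = encode_tree(tree)
    return len(structure) + len(labels)

small_tree = ((0, 1), 1)            # three leaves labeled 0, 1, 1
structure, labels = encode_tree(small_tree)
```

Because the code is a prefix code, the code lengths satisfy the Kraft inequality, which is what allows a code length to be used as a penalty in a union bound over trees.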

Uniform Deviation Bound

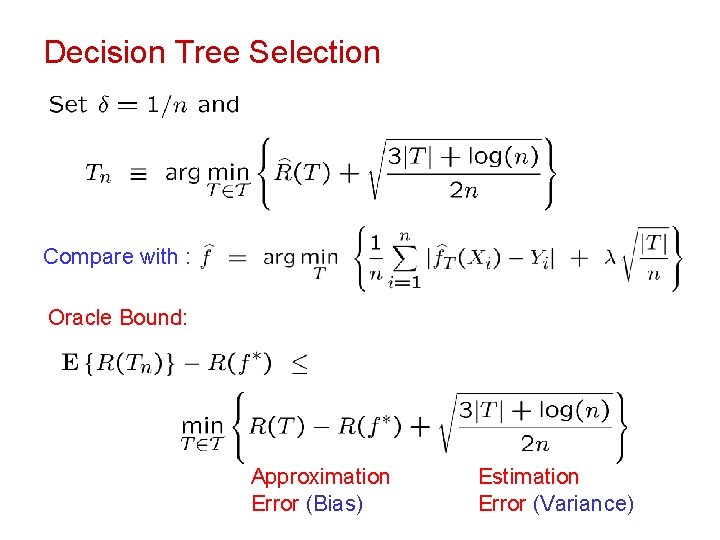

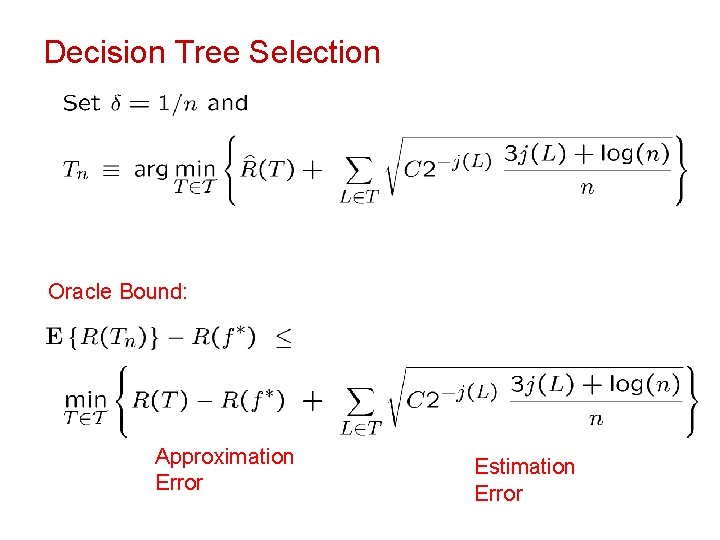

Decision Tree Selection. Compare with: Oracle bound: approximation error (bias), estimation error (variance).

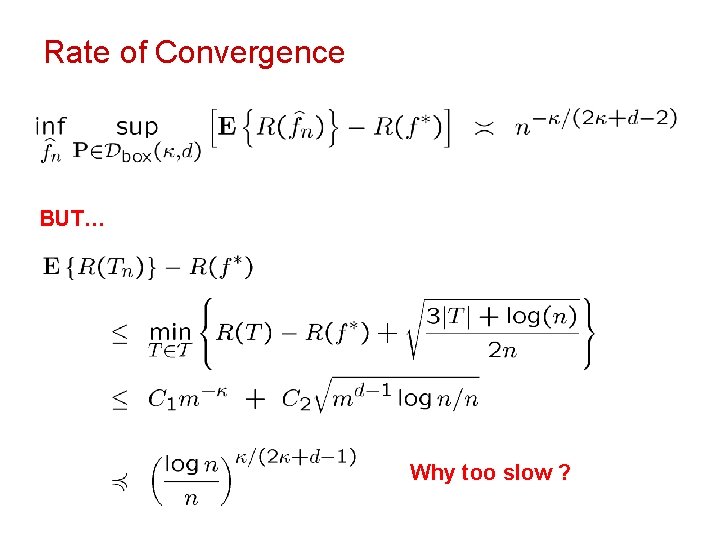

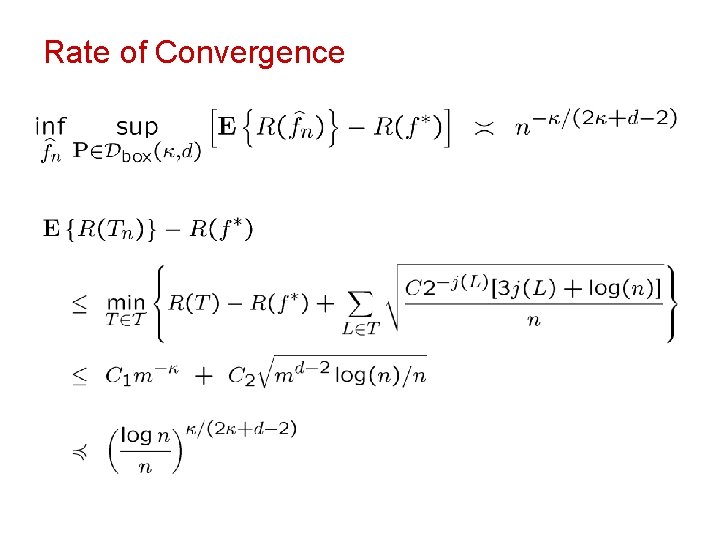

Rate of Convergence. BUT… why too slow?

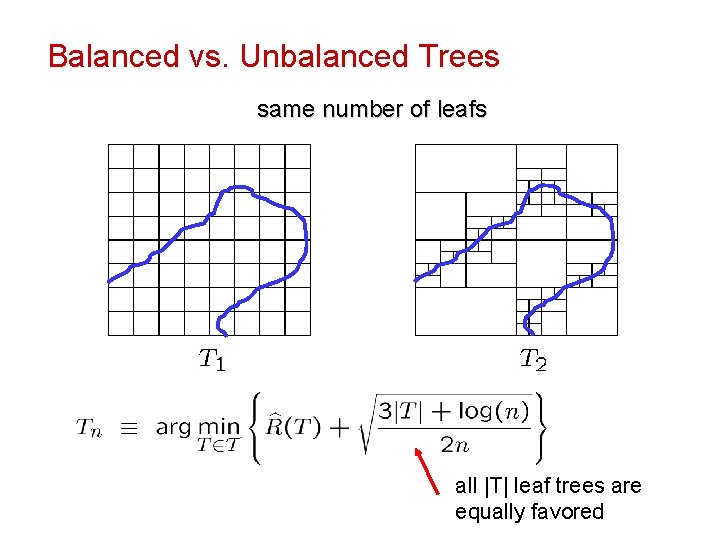

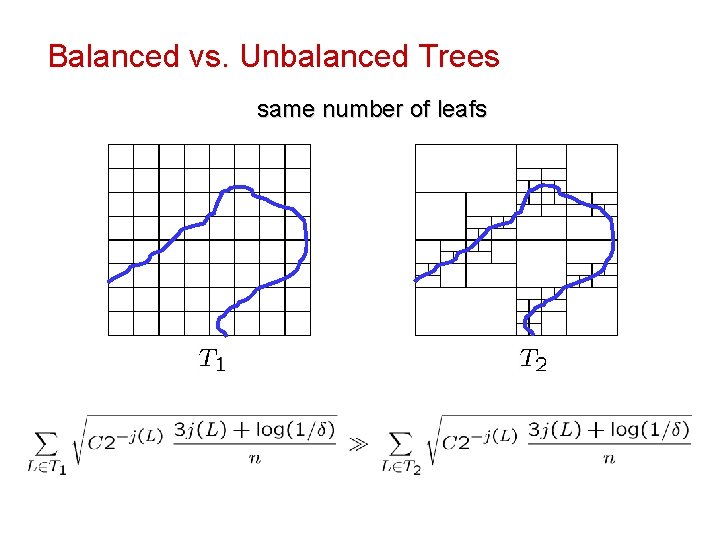

Balanced vs. Unbalanced Trees: same number of leaves. All |T|-leaf trees are equally favored.

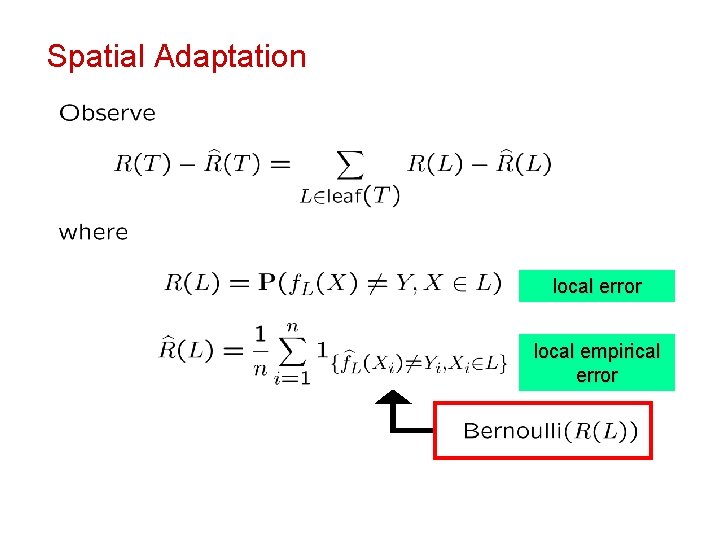

Spatial Adaptation. (Figure labels: local error; local empirical error.)

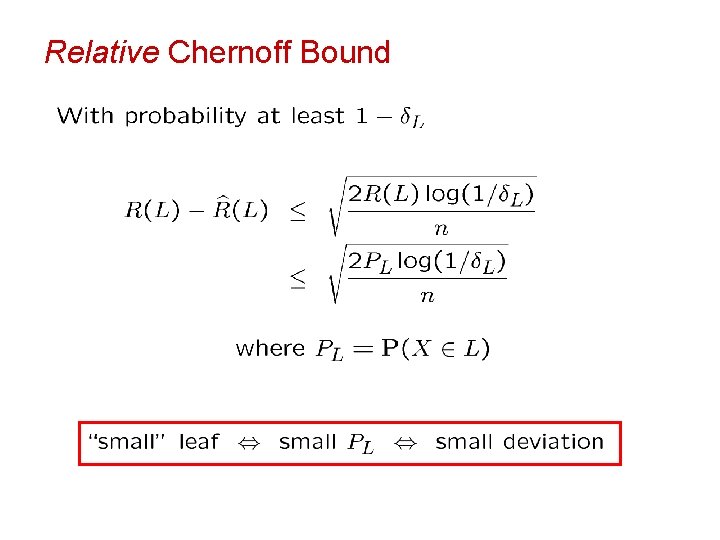

Relative Chernoff Bound

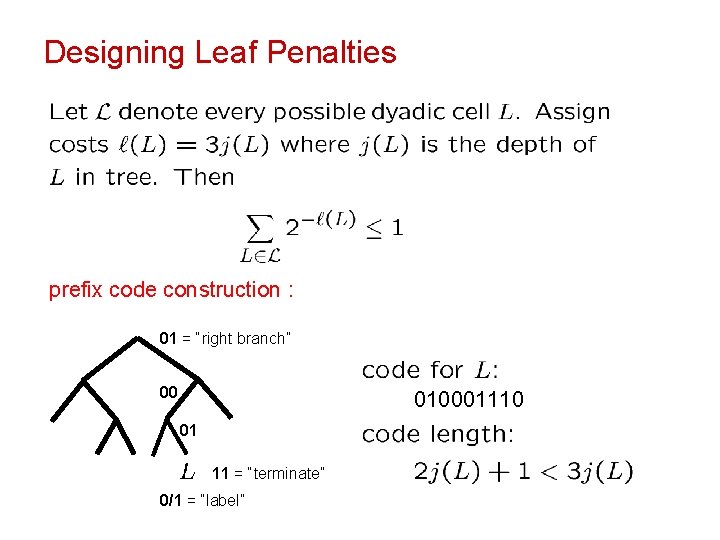

Designing Leaf Penalties. Prefix code construction: 01 = “right branch”, 11 = “terminate”, 0/1 = “label”. (Figure labels: 00, 010001110, 01.)

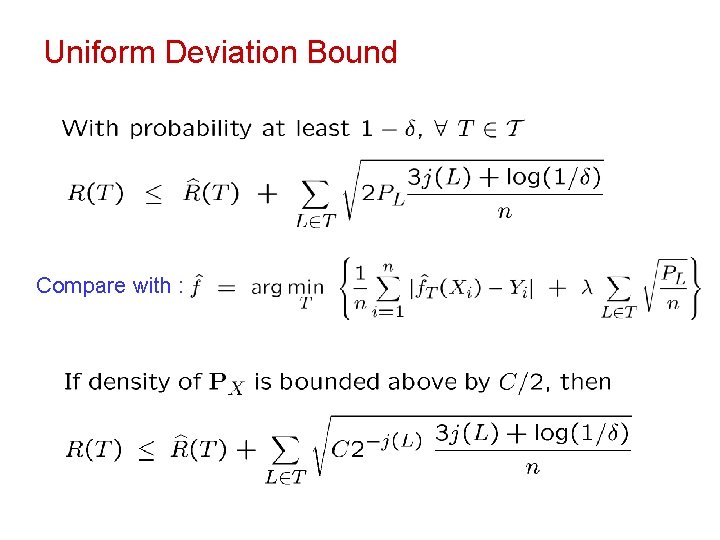

Uniform Deviation Bound. Compare with:

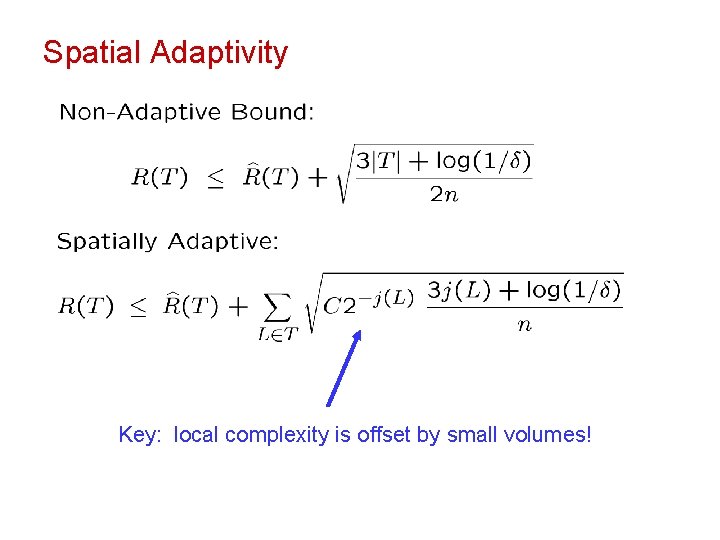

Spatial Adaptivity. Key: local complexity is offset by small volumes!

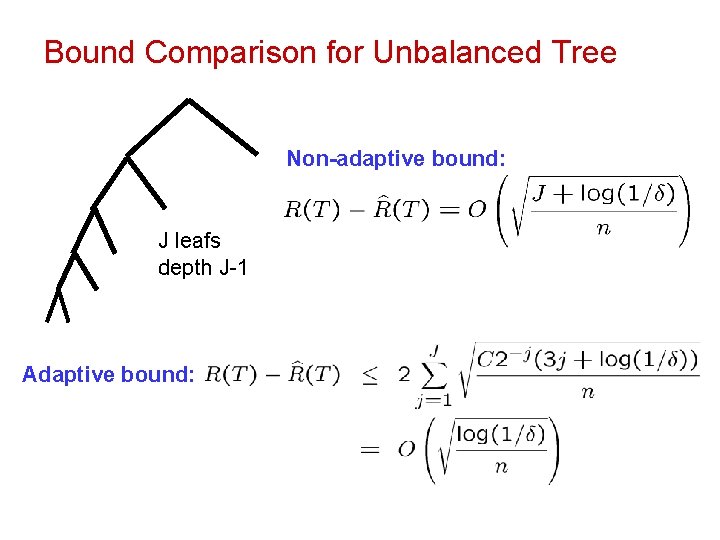

Bound Comparison for Unbalanced Tree (J leaves, depth J-1). Non-adaptive bound: Adaptive bound:
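The contrast between the two bounds can be illustrated numerically. The functional forms below are assumptions for this sketch, not the slide's formulas: the non-adaptive penalty is taken to scale like sqrt(|T| / n), and the adaptive penalty like the sum over leaves of sqrt(depth * 2^(-depth) / n). The adaptive form treats each leaf's probability as its volume 2^(-depth), and constants and log factors are dropped.

```python
import math

def nonadaptive_penalty(depths, n):
    """Assumed form: depends only on the number of leaves."""
    return math.sqrt(len(depths) / n)

def adaptive_penalty(depths, n):
    """Assumed form: each leaf pays for its depth, discounted by its volume."""
    return sum(math.sqrt(d * 2.0 ** (-d) / n) for d in depths)

def unbalanced_depths(J):
    """Leaf depths of a maximally unbalanced tree with J leaves and depth J-1."""
    return list(range(1, J)) + [J - 1]

n = 1
for J in (16, 64, 256):
    depths = unbalanced_depths(J)
    print(J, nonadaptive_penalty(depths, n), adaptive_penalty(depths, n))
```

Under these assumed forms the non-adaptive term grows like sqrt(J), while the adaptive term levels off as J grows because deep leaves have geometrically small volume.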

Balanced vs. Unbalanced Trees: same number of leaves.

Decision Tree Selection. Oracle bound: approximation error, estimation error.

Rate of Convergence

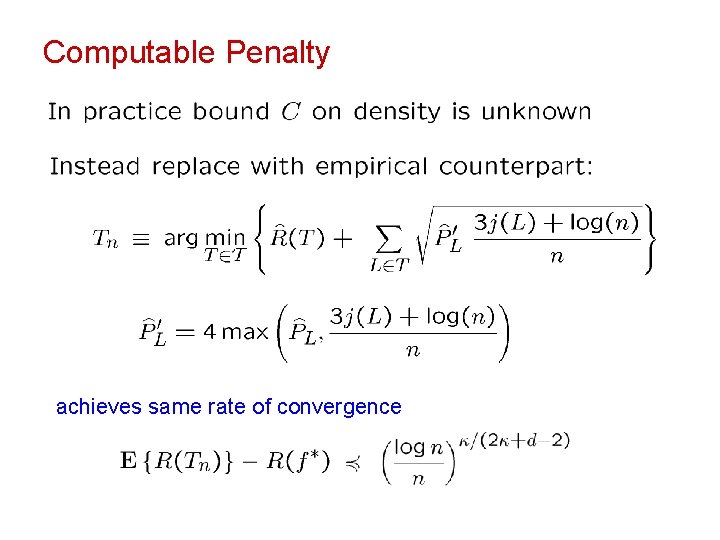

Computable Penalty: achieves the same rate of convergence.

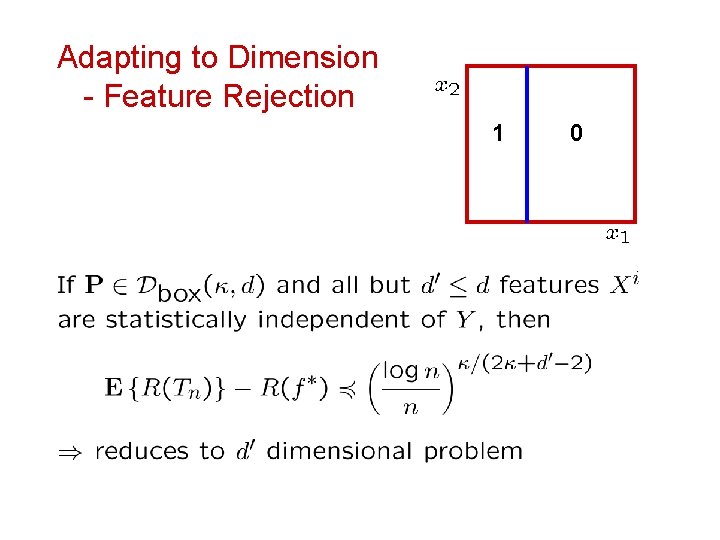

Adapting to Dimension - Feature Rejection. (Figure labels: 1, 0.)

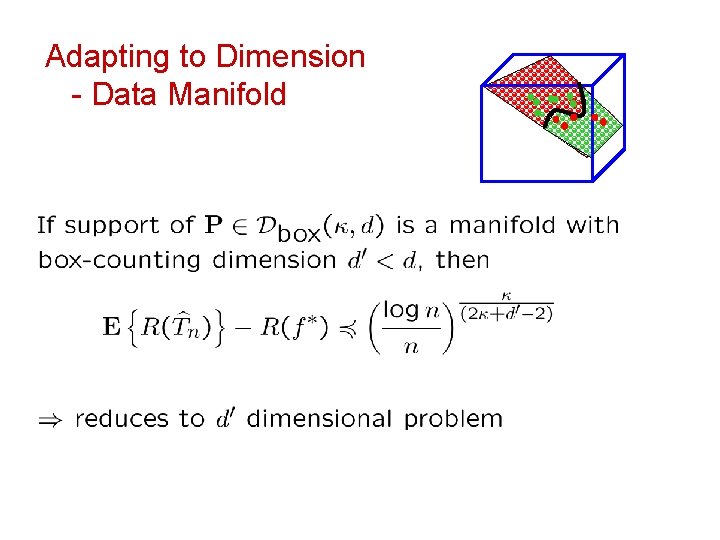

Adapting to Dimension - Data Manifold

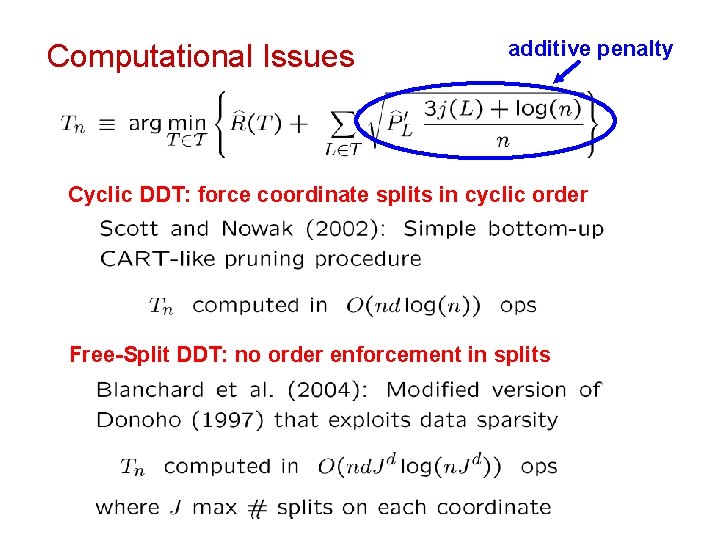

Computational Issues. Additive penalty. Cyclic DDT: force coordinate splits in cyclic order. Free-Split DDT: no order enforcement in splits.

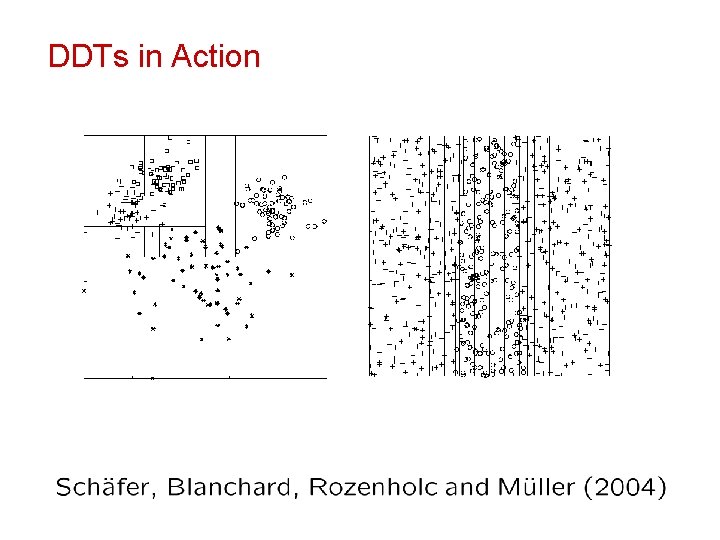
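The cyclic splitting rule can be sketched as a function that locates the dyadic cell containing a point after a given number of midpoint splits, cycling through the coordinates in order. The function name and the unit-cube domain [0, 1)^d are assumptions for illustration.

```python
def cyclic_dyadic_cell(x, depth):
    """Return per-coordinate (lo, hi) bounds of the cell containing x.

    Split j halves the cell along coordinate j mod d at its midpoint,
    so the coordinates are visited in cyclic order.
    """
    d = len(x)
    cell = [(0.0, 1.0)] * d
    for j in range(depth):
        k = j % d                      # coordinate forced by the cyclic order
        lo, hi = cell[k]
        mid = (lo + hi) / 2.0
        cell[k] = (lo, mid) if x[k] < mid else (mid, hi)
    return cell

cell = cyclic_dyadic_cell((0.3, 0.8), depth=3)
```

A free-split variant would instead choose the coordinate `k` at each node, which enlarges the set of candidate trees that the pruning step has to search.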

DDTs in Action

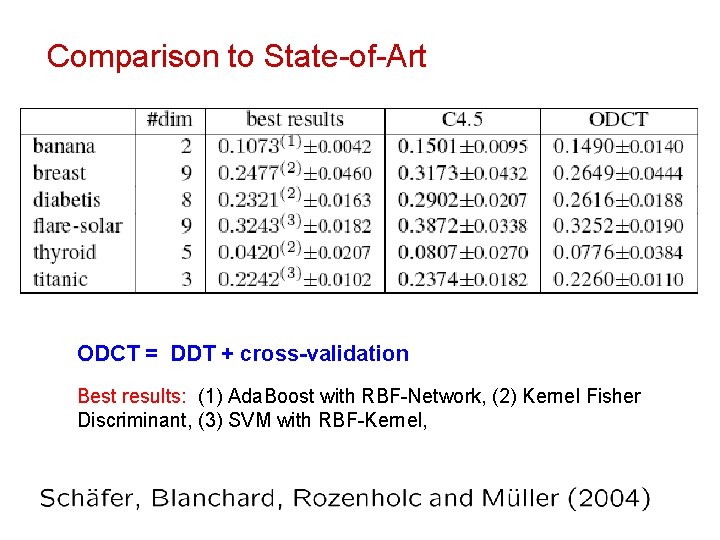

Comparison to State of the Art. ODCT = DDT + cross-validation. Best results: (1) AdaBoost with RBF-Network, (2) Kernel Fisher Discriminant, (3) SVM with RBF-Kernel.

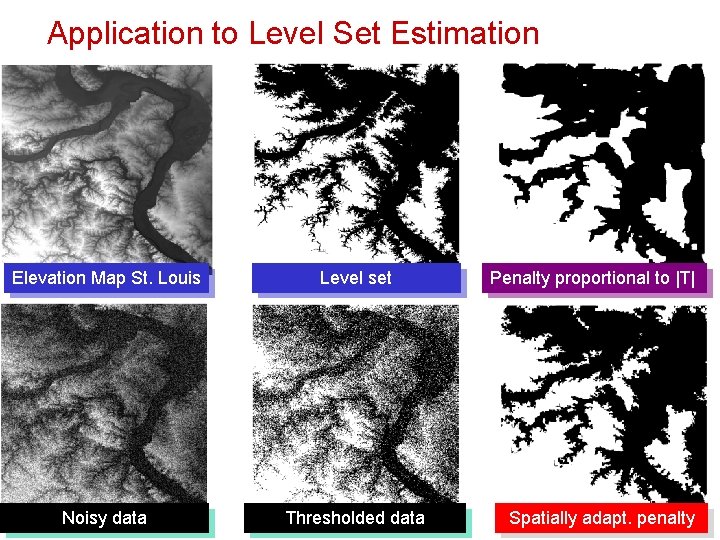

Application to Level Set Estimation. (Panel labels: elevation map, St. Louis; level set; noisy data; thresholded data; penalty proportional to |T|; spatially adaptive penalty.)

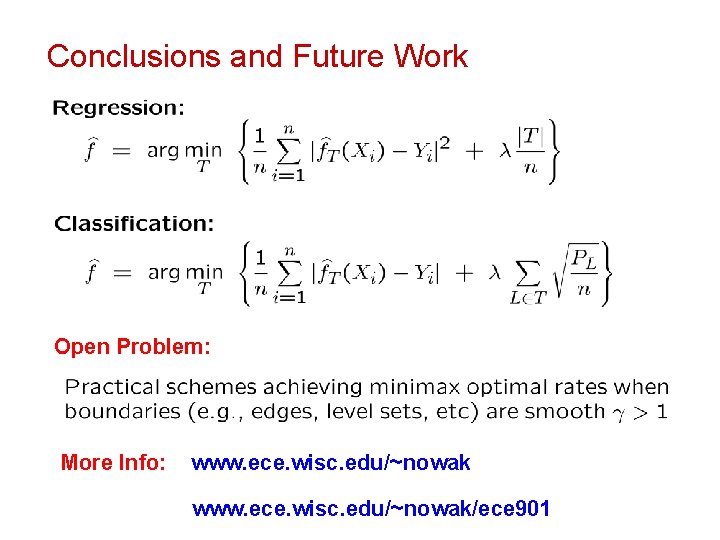

Conclusions and Future Work. Open Problem: More Info: www.ece.wisc.edu/~nowak/ece901
