
Learning to Maximize Reward: Reinforcement Learning
Brian C. Williams
16.412J/6.834J
October 28th, 2002
Slides adapted from: Manuela Veloso, Reid Simmons, & Tom Mitchell, CMU

Reading
• Today: Reinforcement Learning
• Read 2nd ed. AIMA Chapter 19, or 1st ed. AIMA Chapter 20
• Read “Reinforcement Learning: A Survey” by L. Kaelbling, M. Littman and A. Moore, Journal of Artificial Intelligence Research 4 (1996) 237-285.
• For Markov Decision Processes
• Read 1st/2nd ed. AIMA Chapter 17, sections 1-4.
• Optional Reading: “Planning and Acting in Partially Observable Stochastic Domains” by L. Kaelbling, M. Littman and A. Cassandra, Elsevier (1998) 237-285.

Markov Decision Processes and Reinforcement Learning
• Motivation
• Learning policies through reinforcement
• Q values
• Q learning
• Multi-step backups
• Nondeterministic MDPs
• Function Approximators
• Model-based Learning
• Summary

![Example: TD-Gammon [Tesauro, 1995]](http://slidetodoc.com/presentation_image_h2/425ea31d90890b8d5934715e63e73050/image-4.jpg)

Example: TD-Gammon [Tesauro, 1995]
Learns to play Backgammon
Situations:
• Board configurations ($10^{20}$)
Actions:
• Moves
Rewards:
• +100 if win
• -100 if lose
• 0 for all other states
Trained by playing 1.5 million games against self.
⇒ Currently, roughly equal to best human player.

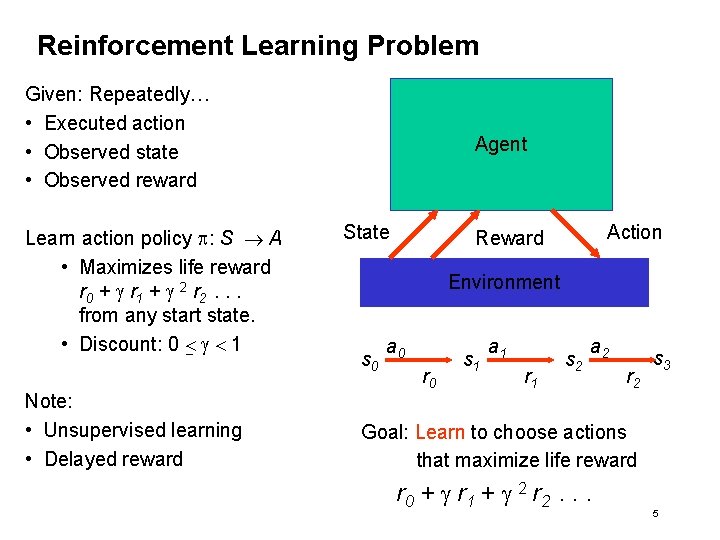

Reinforcement Learning Problem
Given: Repeatedly…
• Executed action
• Observed state
• Observed reward
Learn action policy $\pi: S \to A$
• Maximizes life reward $r_0 + \gamma r_1 + \gamma^2 r_2 + \dots$ from any start state.
• Discount: $0 < \gamma < 1$
Note:
• Unsupervised learning
• Delayed reward
[Figure: agent-environment loop. The agent sends an action to the environment and receives a state and a reward, producing the sequence $s_0, a_0, r_0, s_1, a_1, r_1, s_2, a_2, r_2, s_3$.]
Goal: Learn to choose actions that maximize life reward $r_0 + \gamma r_1 + \gamma^2 r_2 + \dots$
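As a small illustration of the life reward defined above, the following sketch sums a finite reward sequence with discount γ. The function name `discounted_return` and the sample rewards are hypothetical, chosen only for this example.

```python
def discounted_return(rewards, gamma):
    """Return r_0 + gamma*r_1 + gamma^2*r_2 + ... for a finite reward list."""
    total = 0.0
    # Work backwards through the rewards: G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        total = r + gamma * total
    return total

# Illustrative sequence: no reward for two steps, then a reward of 100.
example = discounted_return([0, 0, 100], gamma=0.9)
```

With γ = 0.9 the delayed reward of 100 is worth 0.9² × 100 = 81 at the start state, which is why a smaller γ makes the agent more short-sighted.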

How About Learning the Policy Directly?
1. $\pi^*: S \to A$
2. Fill out table entries for $\pi^*$ by collecting statistics on training pairs $\langle s, a^* \rangle$.
3. Where does $a^*$ come from?

How About Learning the Value Function?
1. Have agent learn value function $V^{\pi^*}$, denoted $V^*$.
2. Given learned $V^*$, agent selects optimal action by one-step lookahead:
$\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$
Problem:
• Works well if agent knows the environment model.
• $\delta: S \times A \to S$
• $r: S \times A \to \mathbb{R}$
• With no model, agent can’t choose action from $V^*$.
• With a model, could compute $V^*$ via value iteration, so why learn it?

How About Learning the Model as Well?
1. Have agent learn $\delta$ and $r$ by statistics on training instances $\langle s_t, a_t, r_{t+1}, s_{t+1} \rangle$.
2. Compute $V^*$ by value iteration:
$V_{t+1}(s) \leftarrow \max_a [r(s, a) + \gamma V_t(\delta(s, a))]$
3. Agent selects optimal action by one-step lookahead:
$\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$
Problem: A viable strategy for many problems, but …
• When do you stop learning the model and compute $V^*$?
• May take a long time to converge on model.
• Would like to continuously interleave learning and acting, but repeatedly computing $V^*$ is costly.
• How can we avoid learning the model and $V^*$ explicitly?

Eliminating the Model with Q Functions
$\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$
Key idea:
• Define function that encapsulates $V^*$, $\delta$ and $r$:
$Q(s, a) = r(s, a) + \gamma V^*(\delta(s, a))$
• From learned $Q$, can choose an optimal action without knowing $\delta$ or $r$:
$\pi^*(s) = \arg\max_a Q(s, a)$
$V$ = Cumulative reward of being in $s$.
$Q$ = Cumulative reward of being in $s$ and taking action $a$.
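The point that a learned Q table is enough to act can be sketched in a few lines. The table contents, state name and action names below are made up for illustration; only the argmax rule comes from the slide.

```python
def greedy_action(q_table, state, actions):
    """Pick argmax_a Q(state, a) from the table alone; delta and r are never consulted."""
    return max(actions, key=lambda a: q_table[(state, a)])

# Hypothetical Q values for a single state (illustrative numbers only).
q_table = {("s1", "left"): 72.0, ("s1", "right"): 90.0, ("s1", "down"): 81.0}
best = greedy_action(q_table, "s1", ["left", "right", "down"])
```

Contrast this with the one-step lookahead on V*, which would need both r(s, a) and δ(s, a) for every candidate action.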


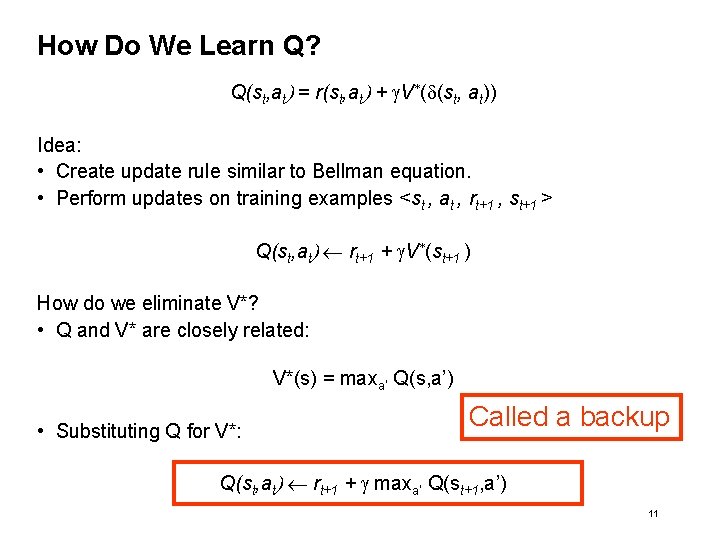

How Do We Learn Q?
$Q(s_t, a_t) = r(s_t, a_t) + \gamma V^*(\delta(s_t, a_t))$
Idea:
• Create update rule similar to Bellman equation.
• Perform updates on training examples $\langle s_t, a_t, r_{t+1}, s_{t+1} \rangle$:
$\hat{Q}(s_t, a_t) \leftarrow r_{t+1} + \gamma V^*(s_{t+1})$
How do we eliminate $V^*$?
• $Q$ and $V^*$ are closely related:
$V^*(s) = \max_{a'} Q(s, a')$
• Substituting $Q$ for $V^*$ gives an update called a backup:
$\hat{Q}(s_t, a_t) \leftarrow r_{t+1} + \gamma \max_{a'} \hat{Q}(s_{t+1}, a')$

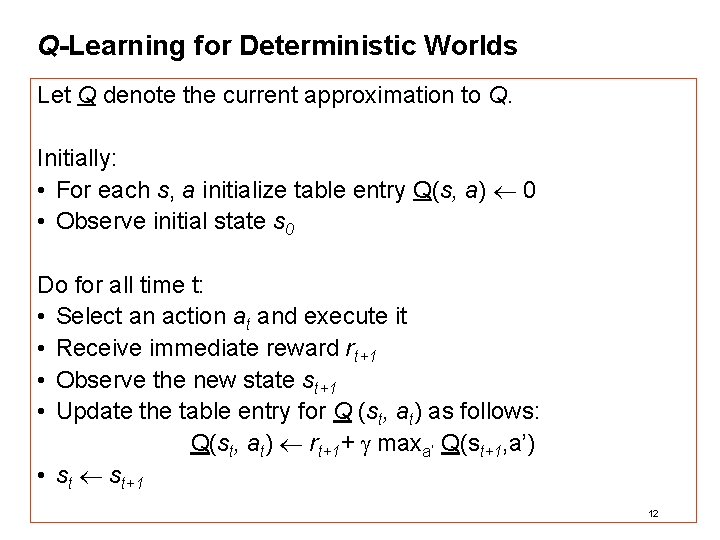

Q-Learning for Deterministic Worlds
Let $\hat{Q}$ denote the current approximation to $Q$.
Initially:
• For each $s$, $a$ initialize table entry $\hat{Q}(s, a) \leftarrow 0$
• Observe initial state $s_0$
Do for all time $t$:
• Select an action $a_t$ and execute it
• Receive immediate reward $r_{t+1}$
• Observe the new state $s_{t+1}$
• Update the table entry for $\hat{Q}(s_t, a_t)$ as follows:
$\hat{Q}(s_t, a_t) \leftarrow r_{t+1} + \gamma \max_{a'} \hat{Q}(s_{t+1}, a')$
• $s_t \leftarrow s_{t+1}$
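The loop above can be sketched as a short tabular learner. This is a minimal sketch under stated assumptions: the three-state chain `world`, uniformly random action selection, and restarting from the start state whenever the goal is reached are all choices made for this example, not part of the slide.

```python
import random

def q_learning(transitions, start, goal, gamma, episodes, seed=0):
    """Tabular Q-learning for a deterministic world.

    transitions maps (state, action) -> (reward, next_state).
    """
    rng = random.Random(seed)
    q = {sa: 0.0 for sa in transitions}            # initialise every table entry to 0
    actions = {}
    for (s, a) in transitions:
        actions.setdefault(s, []).append(a)
    for _ in range(episodes):
        s = start
        while s != goal:                           # restart after reaching the goal (assumption)
            a = rng.choice(actions[s])             # random exploration (assumption)
            r, s_next = transitions[(s, a)]
            # Backup: Q(s, a) <- r + gamma * max_a' Q(s', a')
            q[(s, a)] = r + gamma * max(q[(s_next, a2)] for a2 in actions[s_next])
            s = s_next
    return q

# Hypothetical chain A -> B -> G with reward 100 for entering G.
world = {
    ("A", "right"): (0.0, "B"), ("A", "stay"): (0.0, "A"),
    ("B", "right"): (100.0, "G"), ("B", "left"): (0.0, "A"),
    ("G", "stay"): (0.0, "G"),
}
q = q_learning(world, start="A", goal="G", gamma=0.9, episodes=200)
```

Because the world is deterministic, each backup overwrites the old entry outright; no learning rate is needed.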

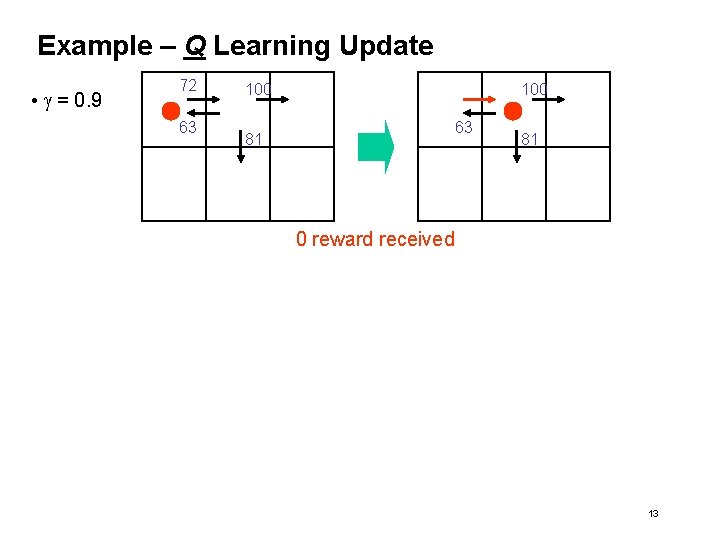

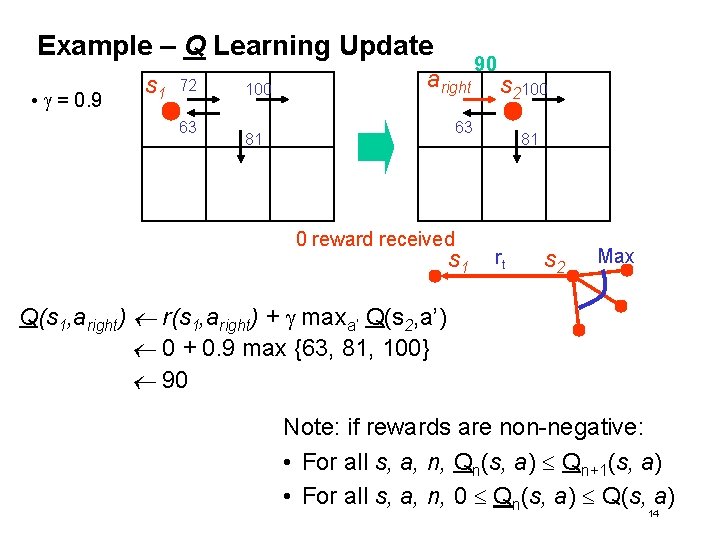

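Example: Q-Learning Update
• $\gamma = 0.9$
[Figure: grid-world fragment before the update, showing current $\hat{Q}$ values 72, 63, 100, 63, 81, 81 on its transitions; 0 reward received.]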

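Example: Q-Learning Update
• $\gamma = 0.9$
[Figure: the agent moves from $s_1$ to $s_2$ with action $a_{right}$ and receives 0 reward. The entry $\hat{Q}(s_1, a_{right})$ changes from 72 to 90; the $\hat{Q}$ values of the actions available in $s_2$ are 63, 81 and 100.]
$\hat{Q}(s_1, a_{right}) \leftarrow r(s_1, a_{right}) + \gamma \max_{a'} \hat{Q}(s_2, a') = 0 + 0.9 \max\{63, 81, 100\} = 90$
Note: if rewards are non-negative:
• For all $s, a, n$: $\hat{Q}_n(s, a) \le \hat{Q}_{n+1}(s, a)$
• For all $s, a, n$: $0 \le \hat{Q}_n(s, a) \le Q(s, a)$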

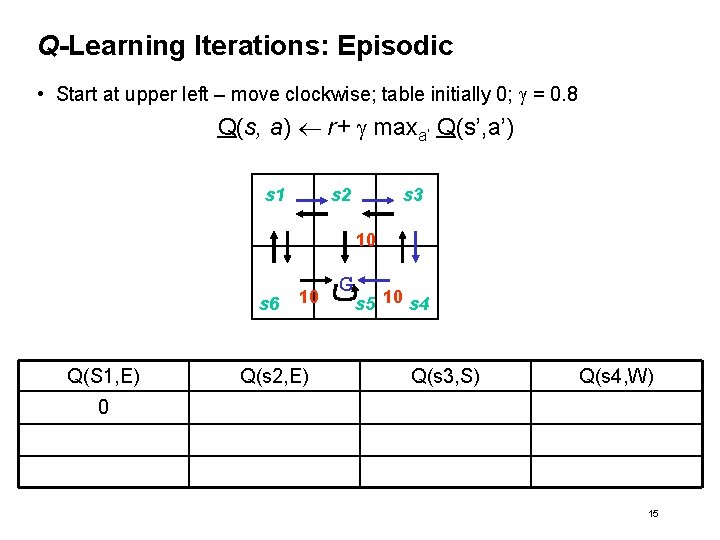

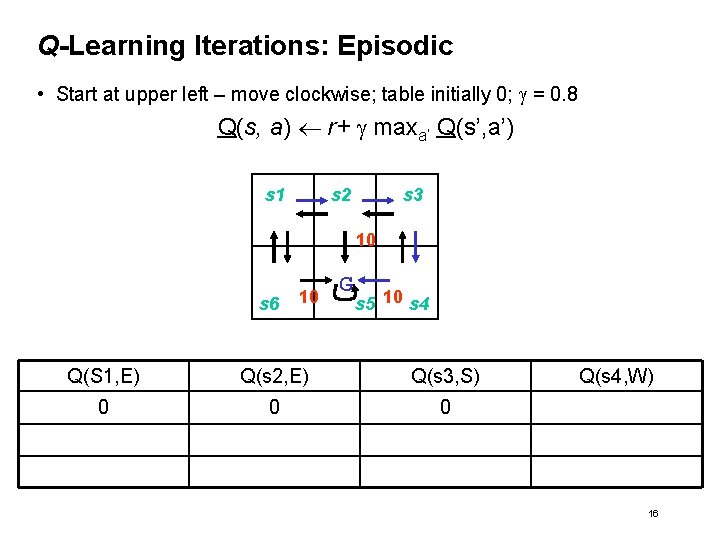

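Q-Learning Iterations: Episodic
• Start at upper left, move clockwise; table initially 0; $\gamma = 0.8$
$\hat{Q}(s, a) \leftarrow r + \gamma \max_{a'} \hat{Q}(s', a')$
[Figure: 2 × 3 grid world. Top row: $s_1$, $s_2$, $s_3$. Bottom row: $s_6$, $s_5$ (goal G), $s_4$. Transitions into G are labelled with reward 10.]
Table columns: $\hat{Q}(s_1, E)$, $\hat{Q}(s_2, E)$, $\hat{Q}(s_3, S)$, $\hat{Q}(s_4, W)$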


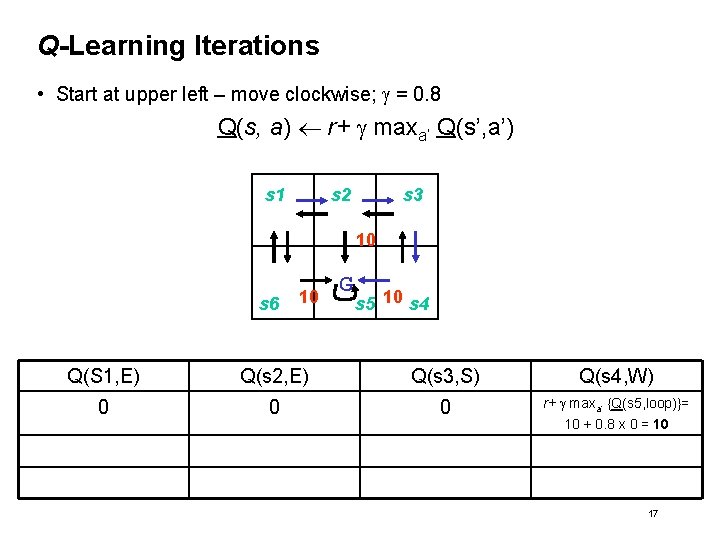

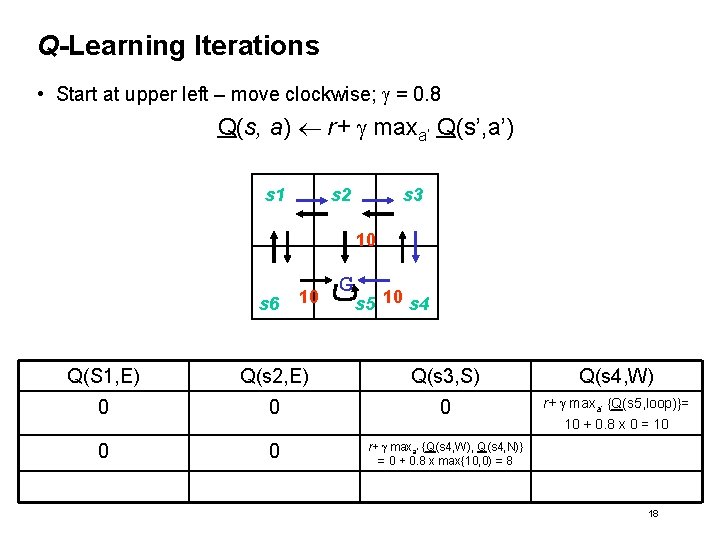


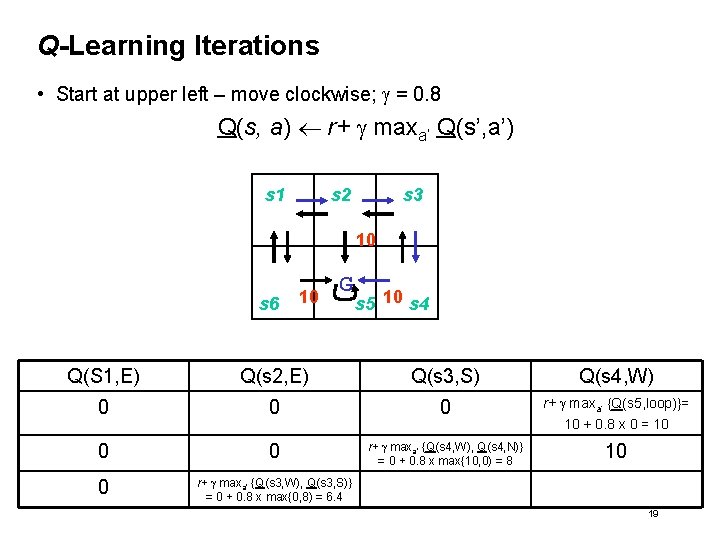


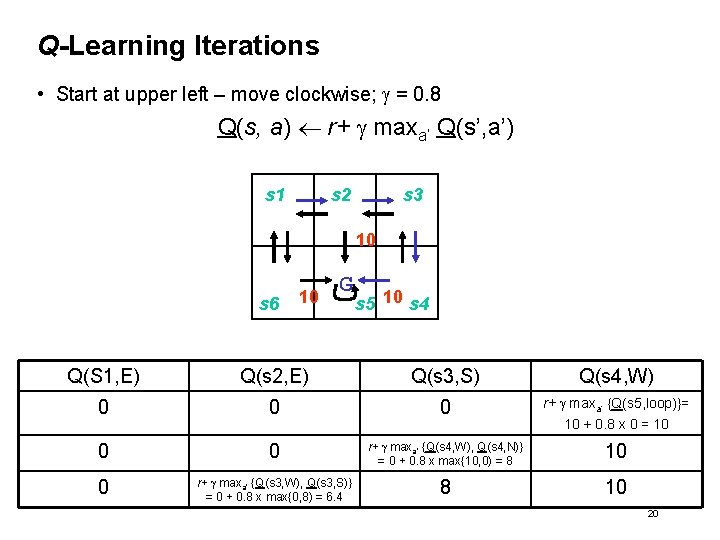


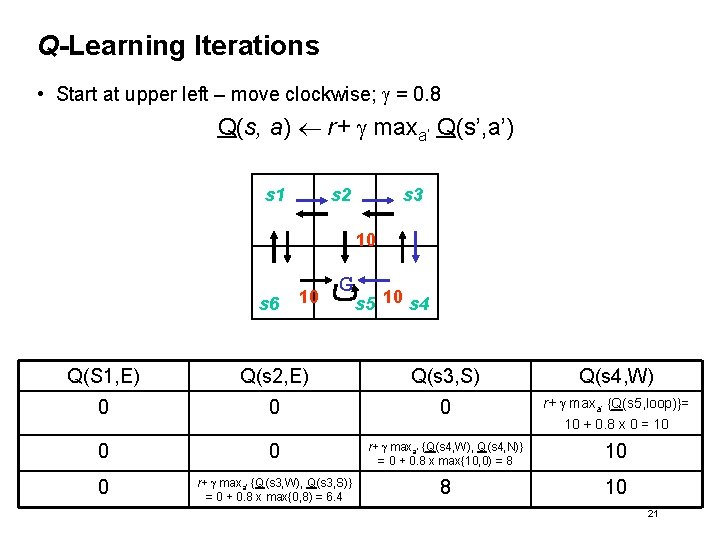

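Q-Learning Iterations
• Start at upper left, move clockwise; $\gamma = 0.8$
$\hat{Q}(s, a) \leftarrow r + \gamma \max_{a'} \hat{Q}(s', a')$
[Figure: 2 × 3 grid world. Top row: $s_1$, $s_2$, $s_3$. Bottom row: $s_6$, $s_5$ (goal G), $s_4$. Transitions into G are labelled with reward 10.]

| Episode | $\hat{Q}(s_1, E)$ | $\hat{Q}(s_2, E)$ | $\hat{Q}(s_3, S)$ | $\hat{Q}(s_4, W)$ |
|---|---|---|---|---|
| 1 | 0 | 0 | 0 | $r + \gamma \max_{a'}\{\hat{Q}(s_5, loop)\} = 10 + 0.8 \times 0 = 10$ |
| 2 | 0 | 0 | $r + \gamma \max_{a'}\{\hat{Q}(s_4, W), \hat{Q}(s_4, N)\} = 0 + 0.8 \times \max\{10, 0\} = 8$ | 10 |
| 3 | 0 | $r + \gamma \max_{a'}\{\hat{Q}(s_3, W), \hat{Q}(s_3, S)\} = 0 + 0.8 \times \max\{0, 8\} = 6.4$ | 8 | 10 |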
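The clockwise passes above can be replayed mechanically. The sketch below assumes the grid layout read off the slide text and includes only the transitions the example mentions; the dictionary `MOVES` and the list `PATH` are names introduced here for illustration.

```python
GAMMA = 0.8

# (state, action) -> (reward, next_state). Only the transitions that the
# worked example refers to are listed; others are omitted (assumption).
MOVES = {
    ("s1", "E"): (0, "s2"), ("s2", "E"): (0, "s3"),
    ("s3", "S"): (0, "s4"), ("s3", "W"): (0, "s2"),
    ("s4", "W"): (10, "s5"), ("s4", "N"): (0, "s3"),
    ("s5", "loop"): (0, "s5"),
}
# The clockwise route from the upper-left state to the goal.
PATH = [("s1", "E"), ("s2", "E"), ("s3", "S"), ("s4", "W")]

q = {sa: 0.0 for sa in MOVES}                      # table initially 0
rows = []
for episode in range(3):
    for s, a in PATH:
        r, s_next = MOVES[(s, a)]
        best_next = max(v for (s2, _), v in q.items() if s2 == s_next)
        q[(s, a)] = r + GAMMA * best_next          # one backup per step
    rows.append([q[sa] for sa in PATH])            # snapshot after each pass
```

Each pass pushes the reward information one state further back along the route, which is why the value 10 at $s_4$ takes three episodes to influence $\hat{Q}(s_2, E)$.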


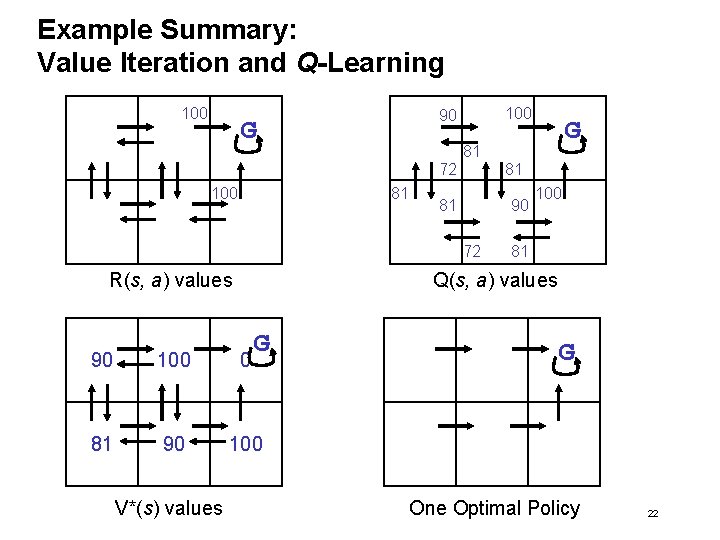

Example Summary: Value Iteration and Q-Learning
[Figure: four panels of the same grid world with goal state G, titled "R(s, a) values", "Q(s, a) values", "V*(s) values" and "One Optimal Policy".]

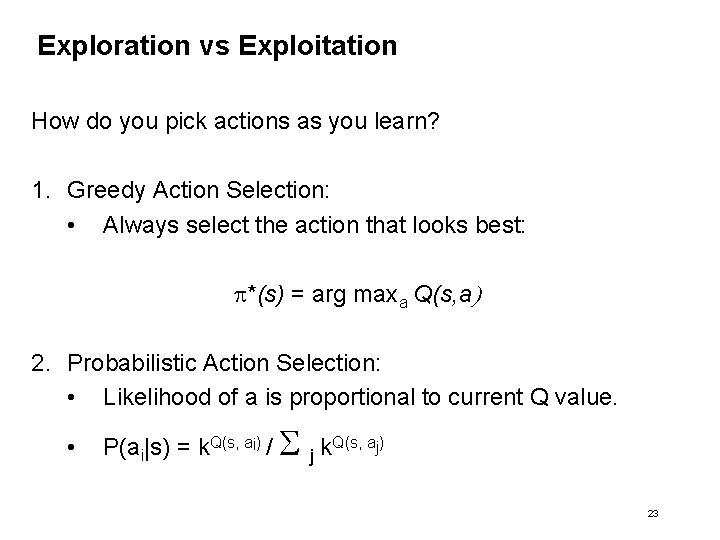

Exploration vs Exploitation
How do you pick actions as you learn?
1. Greedy Action Selection:
• Always select the action that looks best:
$\pi^*(s) = \arg\max_a Q(s, a)$
2. Probabilistic Action Selection:
• Likelihood of $a_i$ is proportional to current Q value.
• $P(a_i \mid s) = k^{\hat{Q}(s, a_i)} / \sum_j k^{\hat{Q}(s, a_j)}$
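A minimal sketch of the probabilistic selection rule, assuming the exponent form $k^{\hat{Q}}$ for the weights. The function names and the sample Q values are hypothetical.

```python
import random

def action_probabilities(q_values, k):
    """P(a_i | s) = k**Q(s, a_i) / sum_j k**Q(s, a_j), for k > 0."""
    weights = {a: k ** q for a, q in q_values.items()}
    total = sum(weights.values())
    return {a: w / total for a, w in weights.items()}

def sample_action(q_values, k, rng=random):
    """Draw one action according to the probabilities above."""
    probs = action_probabilities(q_values, k)
    actions = list(probs)
    return rng.choices(actions, weights=[probs[a] for a in actions])[0]

# Illustrative Q values for one state.
probs = action_probabilities({"left": 0.0, "right": 1.0, "up": 2.0}, k=2.0)
```

A larger k concentrates probability on the highest-valued action (more exploitation), while k close to 1 makes the choice nearly uniform (more exploration). For large Q values the powers can overflow, so a practical version would subtract the maximum Q value before exponentiating.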


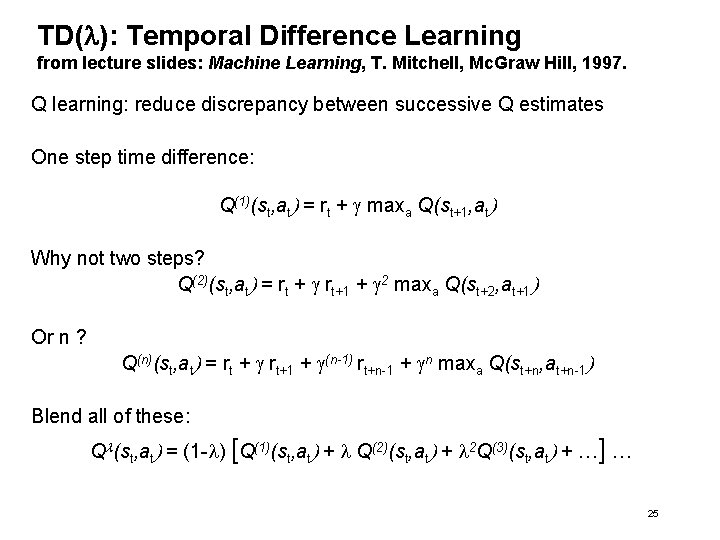

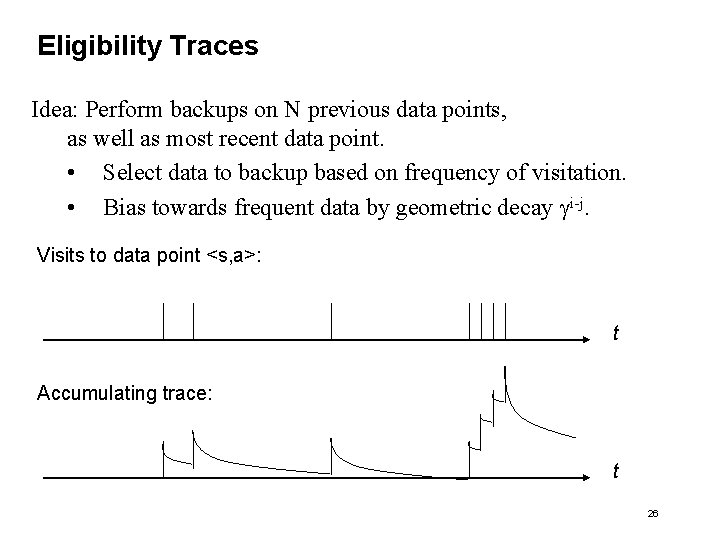

TD(λ): Temporal Difference Learning
From lecture slides: Machine Learning, T. Mitchell, McGraw Hill, 1997.
Q learning: reduce discrepancy between successive Q estimates.
One-step time difference:
$Q^{(1)}(s_t, a_t) = r_t + \gamma \max_a \hat{Q}(s_{t+1}, a)$
Why not two steps?
$Q^{(2)}(s_t, a_t) = r_t + \gamma r_{t+1} + \gamma^2 \max_a \hat{Q}(s_{t+2}, a)$
Or n?
$Q^{(n)}(s_t, a_t) = r_t + \gamma r_{t+1} + \dots + \gamma^{n-1} r_{t+n-1} + \gamma^n \max_a \hat{Q}(s_{t+n}, a)$
Blend all of these:
$Q^{\lambda}(s_t, a_t) = (1 - \lambda) [Q^{(1)}(s_t, a_t) + \lambda Q^{(2)}(s_t, a_t) + \lambda^2 Q^{(3)}(s_t, a_t) + \dots]$
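The n-step estimates and their λ-weighted blend can be computed directly from a recorded trajectory. This sketch truncates the infinite blend at the length of the recorded rewards, which is an assumption of the example (the weights then no longer sum exactly to 1). The input lists are hypothetical: `rewards[i]` stands for $r_{t+i}$ and `max_q[i]` for $\max_a \hat{Q}(s_{t+i}, a)$.

```python
def n_step_estimate(rewards, max_q, gamma, n):
    """Q^(n) = r_t + ... + gamma^(n-1) r_{t+n-1} + gamma^n max_a Q(s_{t+n}, a)."""
    discounted = sum(gamma ** i * rewards[i] for i in range(n))
    return discounted + gamma ** n * max_q[n]

def lambda_estimate(rewards, max_q, gamma, lam):
    """Truncated blend (1 - lam) * sum_n lam^(n-1) * Q^(n) over the recorded steps."""
    horizon = len(rewards)
    return (1 - lam) * sum(
        lam ** (n - 1) * n_step_estimate(rewards, max_q, gamma, n)
        for n in range(1, horizon + 1)
    )

# Illustrative three-step trajectory.
rewards = [0.0, 0.0, 10.0]          # r_t, r_{t+1}, r_{t+2}
max_q = [0.0, 5.0, 8.0, 0.0]        # max_a Q at s_t, s_{t+1}, s_{t+2}, s_{t+3}
one_step = n_step_estimate(rewards, max_q, gamma=0.5, n=1)
two_step = n_step_estimate(rewards, max_q, gamma=0.5, n=2)
three_step = n_step_estimate(rewards, max_q, gamma=0.5, n=3)
```

Setting λ = 0 recovers the one-step Q-learning target, while values of λ closer to 1 put more weight on longer lookaheads.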

Eligibility Traces
Idea: Perform backups on N previous data points, as well as most recent data point.
• Select data to backup based on frequency of visitation.
• Bias towards frequent data by geometric decay $\gamma^{i-j}$.
[Figure: two timelines over $t$. Top: visits to data point $\langle s, a \rangle$. Bottom: the corresponding accumulating trace.]
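An accumulating trace for a single $\langle s, a \rangle$ pair can be sketched as follows. The per-step `decay` factor is left as a free parameter in this example; how it relates to γ (and λ) is an assumption the slide text does not settle.

```python
def accumulating_trace(visits, decay):
    """Trace value after each time step for one <s, a> pair.

    visits[t] is True when <s, a> was visited at step t.
    """
    trace, history = 0.0, []
    for visited in visits:
        trace *= decay            # older visits fade geometrically
        if visited:
            trace += 1.0          # every new visit adds to the trace
        history.append(trace)
    return history

history = accumulating_trace([True, False, True, True, False], decay=0.5)
```

Pairs that are visited often and recently end up with large traces, so they receive a larger share of each backup.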

Markov Decision Processes and Reinforcement Learning
• Motivation
• Learning policies through reinforcement
• Nondeterministic MDPs:
  • Value Iteration
  • Q Learning
• Function Approximators
• Model-based Learning
• Summary

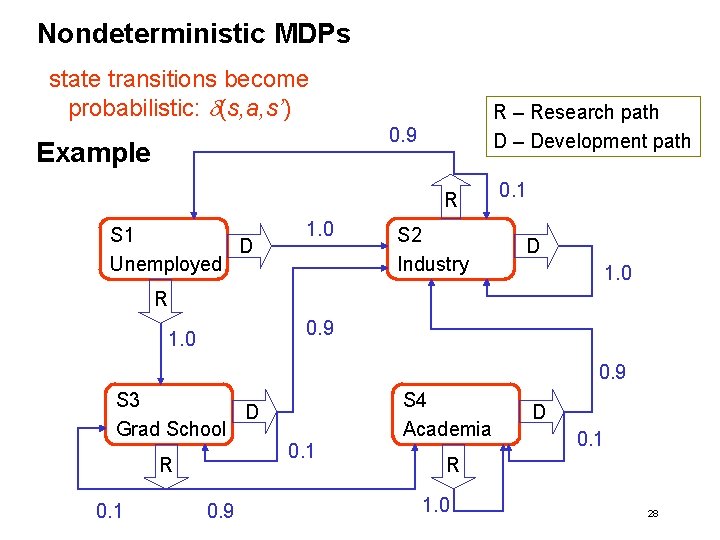

Nondeterministic MDPs
State transitions become probabilistic: $\delta(s, a, s')$
Example:
[Figure: four-state career MDP with states S1 (Unemployed), S2 (Industry), S3 (Grad School) and S4 (Academia). Actions: R (research path) and D (development path). Edges are labelled with transition probabilities 0.9, 0.1 and 1.0.]

Nondeterministic Case
• How do we redefine cumulative reward to handle nondeterminism?
• Define V and Q based on expected values:
$V^{\pi}(s_t) = E[r_t + \gamma r_{t+1} + \gamma^2 r_{t+2} + \dots]$
$V^{\pi}(s_t) = E[\sum_{i=0}^{\infty} \gamma^i r_{t+i}]$
$Q(s_t, a_t) = E[r(s_t, a_t) + \gamma V^*(\delta(s_t, a_t))]$

Value Iteration for Non-deterministic MDPs
$V_1(s) := 0$ for all $s$
$t := 1$
loop
  $t := t + 1$
  [Figure: remainder of the loop body]
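Since the loop body did not survive in the text, the sketch below fills it with the standard expected-value Bellman backup, $V(s) \leftarrow \max_a [r(s, a) + \gamma \sum_{s'} \delta(s, a, s') V(s')]$, and a "close enough" stopping test. Treat both as assumptions rather than the slide's exact wording. The two-state MDP and all names are hypothetical.

```python
def value_iteration(states, actions, reward, trans, gamma, eps=1e-6):
    """Value iteration with probabilistic transitions.

    reward[(s, a)] is a float; trans[(s, a)] is a dict {next_state: probability}.
    """
    v = {s: 0.0 for s in states}
    while True:
        new_v = {
            s: max(
                reward[(s, a)]
                + gamma * sum(p * v[s2] for s2, p in trans[(s, a)].items())
                for a in actions
            )
            for s in states
        }
        # Stop when no state's value moved by more than eps (assumed criterion).
        if max(abs(new_v[s] - v[s]) for s in states) < eps:
            return new_v
        v = new_v

# Hypothetical MDP: "go" from A reaches B with probability 0.9.
states, actions = ["A", "B"], ["go", "stay"]
reward = {("A", "go"): 0.0, ("A", "stay"): 0.0, ("B", "go"): 1.0, ("B", "stay"): 1.0}
trans = {
    ("A", "go"): {"B": 0.9, "A": 0.1}, ("A", "stay"): {"A": 1.0},
    ("B", "go"): {"B": 1.0}, ("B", "stay"): {"B": 1.0},
}
v = value_iteration(states, actions, reward, trans, gamma=0.5)
```

In this toy problem B pays 1 per step forever, so V(B) = 1 / (1 - 0.5) = 2, and V(A) solves V(A) = 0.5 × (0.9 × 2 + 0.1 × V(A)).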

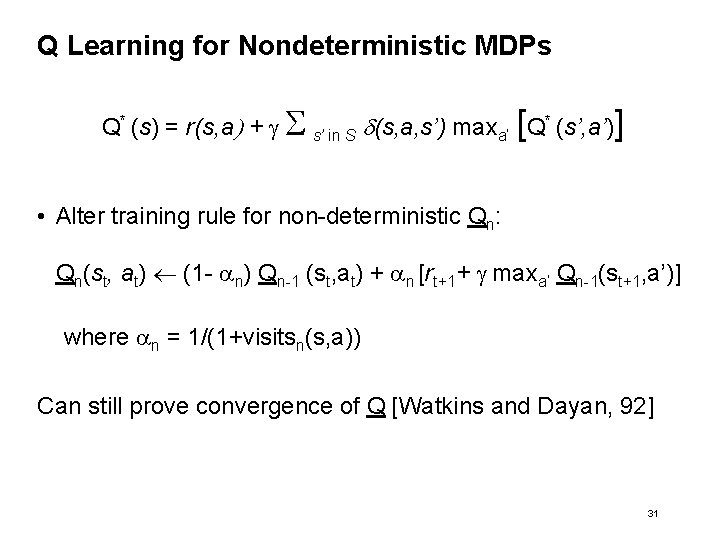

Q Learning for Nondeterministic MDPs
$Q^*(s, a) = r(s, a) + \gamma \sum_{s' \in S} \delta(s, a, s') \max_{a'} Q^*(s', a')$
• Alter training rule for non-deterministic $\hat{Q}_n$:
$\hat{Q}_n(s_t, a_t) \leftarrow (1 - \alpha_n) \hat{Q}_{n-1}(s_t, a_t) + \alpha_n [r_{t+1} + \gamma \max_{a'} \hat{Q}_{n-1}(s_{t+1}, a')]$
where $\alpha_n = 1 / (1 + visits_n(s, a))$
Can still prove convergence of $\hat{Q}$ [Watkins and Dayan, 92]
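The averaging rule above can be sketched as a small class. One detail is an assumption here: the visit count is taken to include the current visit, so the first update of a pair uses α = 1/2. The class name and sample transitions are hypothetical.

```python
from collections import defaultdict

class AveragingQLearner:
    """Q-learning with a visit-count learning rate for noisy worlds."""

    def __init__(self, gamma):
        self.gamma = gamma
        self.q = defaultdict(float)      # Q estimates, default 0
        self.visits = defaultdict(int)   # visits per (state, action)

    def update(self, s, a, r, s_next, next_actions):
        self.visits[(s, a)] += 1         # count includes this visit (assumption)
        alpha = 1.0 / (1 + self.visits[(s, a)])
        target = r + self.gamma * max(self.q[(s_next, a2)] for a2 in next_actions)
        # Blend the old estimate with the new sample instead of overwriting it.
        self.q[(s, a)] = (1 - alpha) * self.q[(s, a)] + alpha * target
        return self.q[(s, a)]

learner = AveragingQLearner(gamma=0.9)
first = learner.update("s", "a", 10.0, "t", ["a"])    # alpha = 1/2
second = learner.update("s", "a", 10.0, "t", ["a"])   # alpha = 1/3
```

Because α shrinks with every visit, later samples move the estimate less, which averages out the randomness in rewards and transitions.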

Markov Decision Processes and Reinforcement Learning
• Motivation
• Learning policies through reinforcement
• Nondeterministic MDPs
• Function Approximators
• Model-based Learning
• Summary

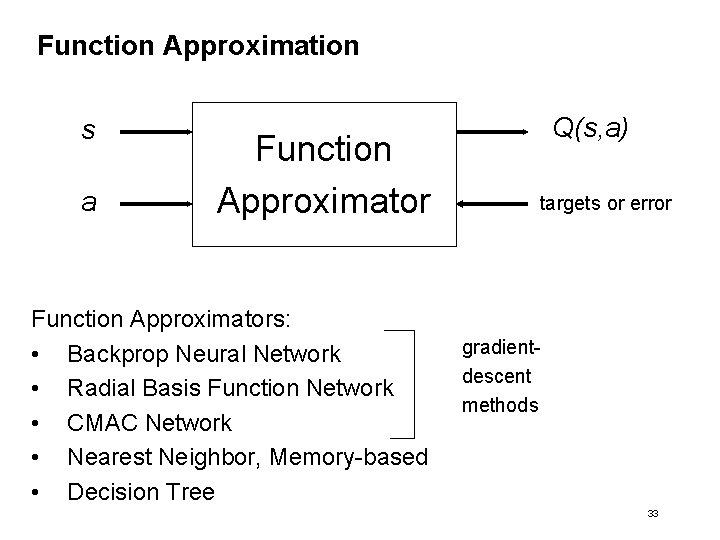

Function Approximation
[Figure: inputs $s$ and $a$ feed a function approximator that outputs $Q(s, a)$; it is trained from targets or error using gradient-descent methods.]
Function Approximators:
• Backprop Neural Network
• Radial Basis Function Network
• CMAC Network
• Nearest Neighbor, Memory-based
• Decision Tree

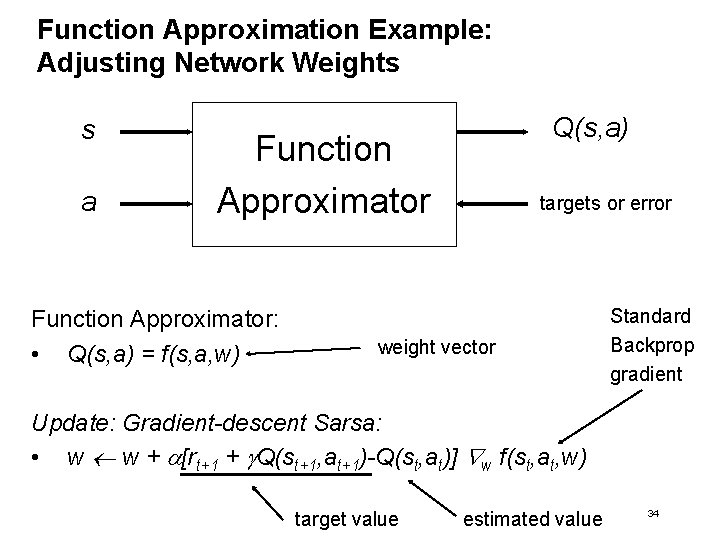

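Function Approximation Example: Adjusting Network Weights
[Figure: inputs $s$ and $a$ feed a function approximator with weight vector $w$, which outputs $Q(s, a)$ and is trained from targets or error.]
Function Approximator:
• $Q(s, a) = f(s, a, w)$
Update: Gradient-descent Sarsa:
• $w \leftarrow w + \alpha [r_{t+1} + \gamma Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t)] \nabla_w f(s_t, a_t, w)$
• Target value: $r_{t+1} + \gamma Q(s_{t+1}, a_{t+1})$; estimated value: $Q(s_t, a_t)$; $\nabla_w f(s_t, a_t, w)$ is the standard backprop gradient.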
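To keep the update rule easy to check by hand, the sketch below swaps the neural network for a linear approximator, $f(s, a, w) = w \cdot \phi(s, a)$, whose gradient with respect to $w$ is just the feature vector. The feature vectors and function names are hypothetical.

```python
def q_value(weights, features):
    """Linear approximator: f(s, a, w) = w . phi(s, a)."""
    return sum(w * x for w, x in zip(weights, features))

def sarsa_update(weights, feats, reward, next_feats, alpha, gamma):
    """w <- w + alpha * [r + gamma * Q(s', a') - Q(s, a)] * grad_w f(s, a, w)."""
    td_error = reward + gamma * q_value(weights, next_feats) - q_value(weights, feats)
    # For a linear f, grad_w f(s, a, w) is the feature vector phi(s, a).
    return [w + alpha * td_error * x for w, x in zip(weights, feats)]

# One update from zero weights with one-hot features (illustrative values).
w = sarsa_update([0.0, 0.0], feats=[1.0, 0.0], reward=1.0,
                 next_feats=[0.0, 1.0], alpha=0.5, gamma=0.9)
```

With a neural network the structure is the same; only the gradient computation changes, since it is obtained by backpropagation instead of read off the features.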

![Example: TD-Gammon [Tesauro, 1995]](http://slidetodoc.com/presentation_image_h2/425ea31d90890b8d5934715e63e73050/image-35.jpg)

Example: TD-Gammon [Tesauro, 1995]
Learns to play Backgammon
Situations:
• Board configurations ($10^{20}$)
Actions:
• Moves
Rewards:
• +100 if win
• -100 if lose
• 0 for all other states
Trained by playing 1.5 million games against self.
⇒ Currently, roughly equal to best human player.

![Example: TD-Gammon [Tesauro, 1995]](http://slidetodoc.com/presentation_image_h2/425ea31d90890b8d5934715e63e73050/image-36.jpg)

Example: TD-Gammon [Tesauro, 1995]
[Figure: neural network with random initial weights. Input: raw board position (# of pieces at each position). Hidden layer: 0-160 hidden units. Output: $V(s)$, the predicted probability of winning.]
• On win: Outcome = 1
• On loss: Outcome = 0
• TD error: $V(s_{t+1}) - V(s_t)$


Model-based Learning: Certainty-Equivalence Method
For every step:
1. Use new experience to update model parameters.
• Transitions
• Rewards
2. Solve the model for $V$ and $\pi$.
• Value iteration.
• Policy iteration.
3. Use the policy to choose the next action.

Learning the Model
For each state-action pair $\langle s, a \rangle$ visited, accumulate:
1. Mean Transition:
$T(s, a, s') = \dfrac{\text{number-times-seen}(s, a \to s')}{\text{number-times-tried}(s, a)}$
2. Mean Reward: $R(s, a)$
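The counting scheme can be sketched as a small class. The transition estimate follows the ratio given above; the reward estimate is taken to be the running average of observed rewards, which is an assumption since the slide only names it "Mean Reward". The class and method names are hypothetical.

```python
from collections import defaultdict

class TabularModel:
    """Maximum-likelihood model built from visit counts."""

    def __init__(self):
        self.tried = defaultdict(int)         # number-times-tried(s, a)
        self.seen = defaultdict(int)          # number-times-seen(s, a -> s')
        self.reward_sum = defaultdict(float)  # total reward observed for (s, a)

    def record(self, s, a, r, s_next):
        self.tried[(s, a)] += 1
        self.seen[(s, a, s_next)] += 1
        self.reward_sum[(s, a)] += r

    def transition(self, s, a, s_next):
        n = self.tried[(s, a)]
        return self.seen[(s, a, s_next)] / n if n else 0.0

    def reward(self, s, a):
        n = self.tried[(s, a)]
        return self.reward_sum[(s, a)] / n if n else 0.0

# Illustrative experience: three transitions to s1, one to s2.
model = TabularModel()
for _ in range(3):
    model.record("s", "a", 1.0, "s1")
model.record("s", "a", 5.0, "s2")
```

Untried pairs return 0 here; a real agent would need some policy for them, for example optimistic defaults to encourage exploration.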

Comparison of Model-based and Model-free methods
Temporal Differencing / Q Learning: Only does computation for the states the system is actually in.
• Good real-time performance
• Inefficient use of data
Model-based methods: Computes the best estimates for every state on every time step.
• Efficient use of data
• Terrible real-time performance
What is a middle ground?

![Dyna: A Middle Ground [Sutton, Intro to RL, 97]](http://slidetodoc.com/presentation_image_h2/425ea31d90890b8d5934715e63e73050/image-41.jpg)

Dyna: A Middle Ground [Sutton, Intro to RL, 97]
At each step, incrementally:
1. Update model based on new data
2. Update policy based on new data
3. Update policy based on updated model
Performance, until optimal, on Grid World:
1. Q-Learning:
• 531,000 steps
• 531,000 backups
2. Dyna:
• 61,908 steps
• 3,055,000 backups

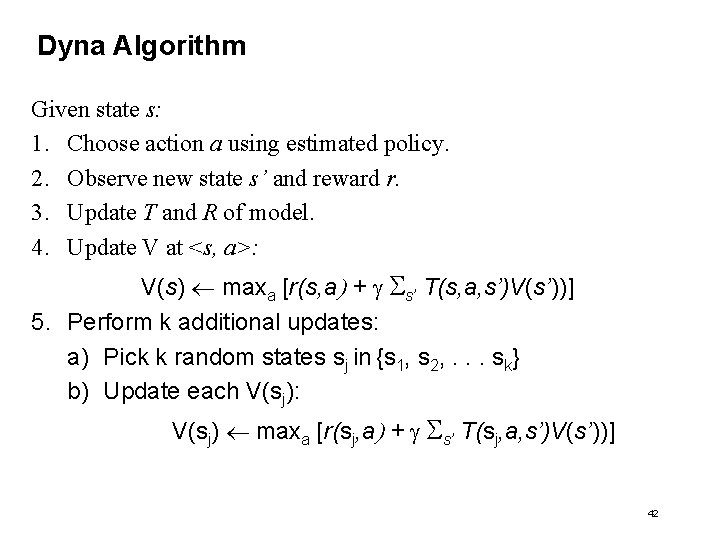

Dyna Algorithm
Given state $s$:
1. Choose action $a$ using estimated policy.
2. Observe new state $s'$ and reward $r$.
3. Update $T$ and $R$ of model.
4. Update $V$ at $\langle s, a \rangle$:
$V(s) \leftarrow \max_a [r(s, a) + \gamma \sum_{s'} T(s, a, s') V(s')]$
5. Perform $k$ additional updates:
a) Pick $k$ random states $s_j \in \{s_1, s_2, \dots, s_k\}$
b) Update each $V(s_j)$:
$V(s_j) \leftarrow \max_a [r(s_j, a) + \gamma \sum_{s'} T(s_j, a, s') V(s')]$
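The five steps above can be sketched as one class. Several choices here are assumptions made for the example: the model is the count-based estimate of T and R, untried state-action pairs are valued at 0, the "estimated policy" is ε-greedy on the one-step lookahead, and the k extra states are drawn with replacement. All names are hypothetical.

```python
import random
from collections import defaultdict

class Dyna:
    def __init__(self, states, actions, gamma, k, seed=0):
        self.states, self.actions = list(states), list(actions)
        self.gamma, self.k = gamma, k
        self.rng = random.Random(seed)
        self.v = {s: 0.0 for s in self.states}
        self.tried = defaultdict(int)          # counts for (s, a)
        self.seen = defaultdict(int)           # counts for (s, a, s')
        self.reward_sum = defaultdict(float)   # summed reward for (s, a)

    def backed_up_value(self, s, a):
        """r(s, a) + gamma * sum_s' T(s, a, s') V(s') under the learned model."""
        n = self.tried[(s, a)]
        if n == 0:
            return 0.0                         # untried pairs valued at 0 (assumption)
        r = self.reward_sum[(s, a)] / n
        expected = sum(self.seen[(s, a, s2)] / n * self.v[s2] for s2 in self.states)
        return r + self.gamma * expected

    def backup(self, s):
        self.v[s] = max(self.backed_up_value(s, a) for a in self.actions)

    def choose_action(self, s, epsilon=0.1):
        """Step 1: epsilon-greedy on the one-step lookahead (assumption)."""
        if self.rng.random() < epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.backed_up_value(s, a))

    def observe(self, s, a, r, s_next):
        """Steps 2-5: update the model, back up s, then back up k random states."""
        self.tried[(s, a)] += 1
        self.seen[(s, a, s_next)] += 1
        self.reward_sum[(s, a)] += r
        self.backup(s)
        for sj in self.rng.choices(self.states, k=self.k):
            self.backup(sj)

# Two hand-fed experiences on a hypothetical chain A -> B -> G.
agent = Dyna(states=["A", "B", "G"], actions=["right", "stay"], gamma=0.9, k=2)
agent.observe("B", "right", 10.0, "G")
agent.observe("A", "right", 0.0, "B")
```

The parameter k trades computation for data efficiency: k = 0 does a single backup per real step, while a large k approaches solving the model fully at every step.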


Ongoing Research
• Handling cases where state is only partially observable
• Design of optimal exploration strategies
• Extend to continuous action, state
• Learn and use $\delta: S \times A \to S$
• Scaling up in the size of the state space
  • Function approximators (neural net instead of table)
  • Generalization
  • Macros
  • Exploiting substructure
• Multiple learners: multi-agent reinforcement learning

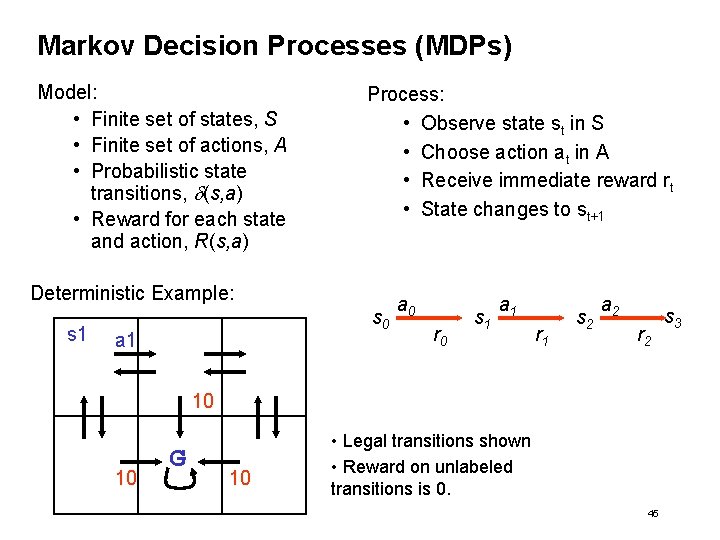

Markov Decision Processes (MDPs)
Model:
• Finite set of states, $S$
• Finite set of actions, $A$
• Probabilistic state transitions, $\delta(s, a)$
• Reward for each state and action, $R(s, a)$
Process:
• Observe state $s_t$ in $S$
• Choose action $a_t$ in $A$
• Receive immediate reward $r_t$
• State changes to $s_{t+1}$
[Figure: trajectory $s_0, a_0, r_0, s_1, a_1, r_1, s_2, a_2, r_2, s_3$.]
Deterministic Example:
[Figure: grid world with goal state G; the three transitions into G are labelled with reward 10.]
• Legal transitions shown
• Reward on unlabeled transitions is 0.

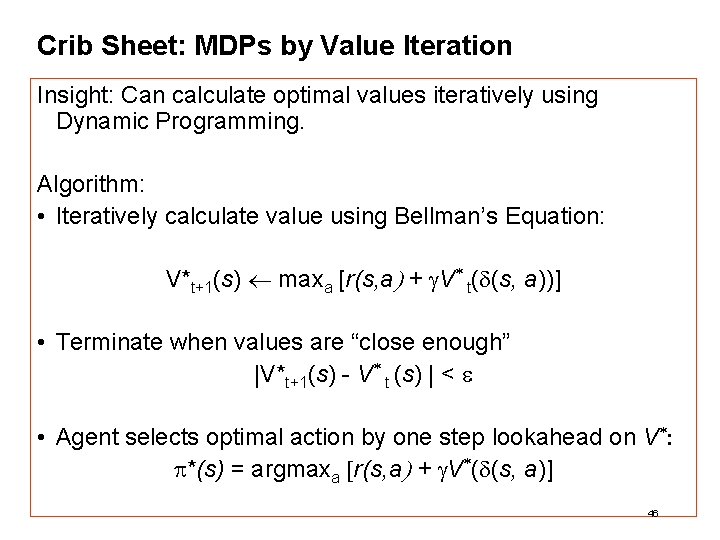

Crib Sheet: MDPs by Value Iteration
Insight: Can calculate optimal values iteratively using Dynamic Programming.
Algorithm:
• Iteratively calculate value using Bellman’s Equation:
$V^*_{t+1}(s) \leftarrow \max_a [r(s, a) + \gamma V^*_t(\delta(s, a))]$
• Terminate when values are “close enough”:
$|V^*_{t+1}(s) - V^*_t(s)| < \epsilon$
• Agent selects optimal action by one-step lookahead on $V^*$:
$\pi^*(s) = \arg\max_a [r(s, a) + \gamma V^*(\delta(s, a))]$
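The crib sheet translates almost line for line into code for a deterministic world. The three-state chain and the dictionary-based encoding of δ and r are assumptions made for this sketch.

```python
def value_iteration(states, actions, delta, reward, gamma, eps=1e-6):
    """Deterministic value iteration followed by one-step-lookahead policy extraction.

    actions[s] lists the actions legal in s; delta[(s, a)] is the next state.
    """
    def lookahead(s, a, values):
        return reward[(s, a)] + gamma * values[delta[(s, a)]]

    v = {s: 0.0 for s in states}
    while True:
        new_v = {s: max(lookahead(s, a, v) for a in actions[s]) for s in states}
        close_enough = max(abs(new_v[s] - v[s]) for s in states) < eps
        v = new_v
        if close_enough:
            break
    policy = {s: max(actions[s], key=lambda a: lookahead(s, a, v)) for s in states}
    return v, policy

# Hypothetical chain A -> B -> G with reward 100 for entering G.
states = ["A", "B", "G"]
actions = {"A": ["right", "stay"], "B": ["right", "left"], "G": ["stay"]}
delta = {("A", "right"): "B", ("A", "stay"): "A",
         ("B", "right"): "G", ("B", "left"): "A", ("G", "stay"): "G"}
reward = {("A", "right"): 0.0, ("A", "stay"): 0.0,
          ("B", "right"): 100.0, ("B", "left"): 0.0, ("G", "stay"): 0.0}
v, policy = value_iteration(states, actions, delta, reward, gamma=0.9)
```

Note that both the backup and the policy extraction call δ and r, which is exactly the model knowledge that Q-learning avoids.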

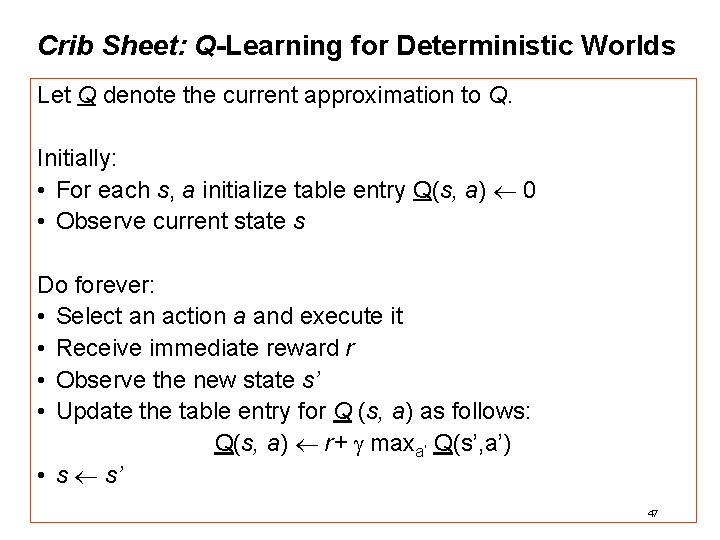

Crib Sheet: Q-Learning for Deterministic Worlds
Let $\hat{Q}$ denote the current approximation to $Q$.
Initially:
• For each $s$, $a$ initialize table entry $\hat{Q}(s, a) \leftarrow 0$
• Observe current state $s$
Do forever:
• Select an action $a$ and execute it
• Receive immediate reward $r$
• Observe the new state $s'$
• Update the table entry for $\hat{Q}(s, a)$ as follows:
$\hat{Q}(s, a) \leftarrow r + \gamma \max_{a'} \hat{Q}(s', a')$
• $s \leftarrow s'$
