Learning, testing, and approximating halfspaces. Rocco Servedio, Columbia University. DIMACS-RUTCOR, Jan 2009.

Overview: halfspaces over $\{-1,1\}^n$, from three angles: learning, testing, and approximation.

Joint work with: Ilias Diakonikolas, Kevin Matulef, Ryan O’Donnell, Ronitt Rubinfeld.

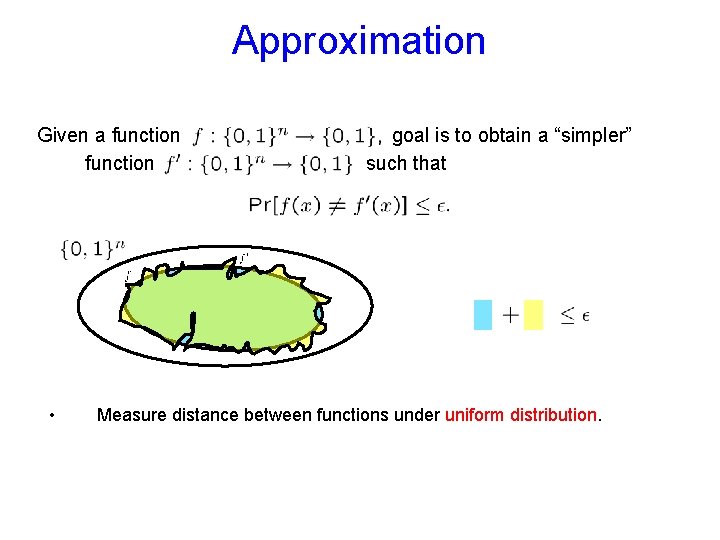

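Approximation. Given a function $f: \{-1,1\}^n \to \{-1,1\}$, the goal is to obtain a “simpler” function $g$ such that $\Pr_x[f(x) \ne g(x)] \le \epsilon$ (we say $g$ is an $\epsilon$-approximator for $f$). We measure distance between functions under the uniform distribution on $\{-1,1\}^n$.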
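
For small $n$ this distance can be computed exactly by enumerating all $2^n$ inputs. The Python sketch below is only an illustration; the helper names `cube` and `dist` are our own.

```python
from itertools import product

def cube(n):
    """All 2^n points of {-1,1}^n; under the uniform distribution each has probability 2^-n."""
    return list(product((-1, 1), repeat=n))

def dist(f, g, n):
    """Pr_x[f(x) != g(x)] for x uniform over {-1,1}^n, by brute-force enumeration."""
    points = cube(n)
    return sum(f(x) != g(x) for x in points) / len(points)

maj3 = lambda x: 1 if sum(x) > 0 else -1   # majority of 3 bits
dict1 = lambda x: x[0]                      # the "dictator" function x_1

print(dist(maj3, dict1, 3))  # 0.25: they disagree only on (1,-1,-1) and (-1,1,1)
```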

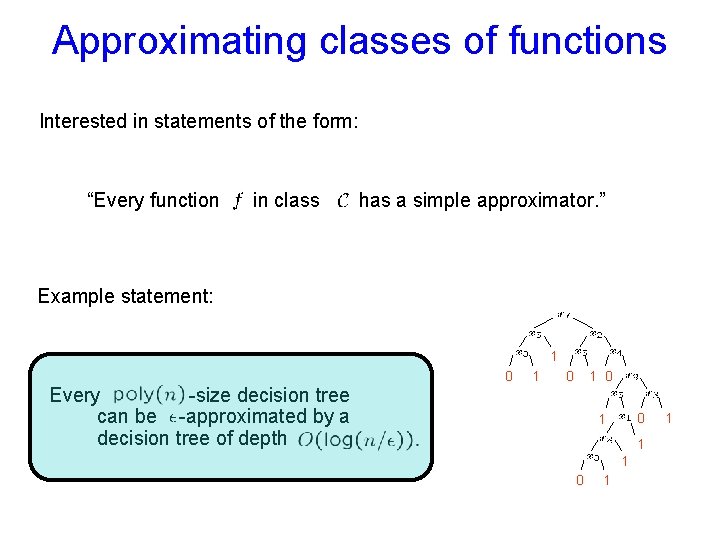

Approximating classes of functions. We are interested in statements of the form: “Every function in class $C$ has a simple approximator.” Example statement: every size-$s$ decision tree can be $\epsilon$-approximated by a decision tree of depth $\log(s/\epsilon)$.
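
Why depth $\log(s/\epsilon)$ suffices: cut the tree at depth $d$ and replace each cut subtree by an arbitrary leaf. The two trees can only disagree on inputs that reach a cut node; there are at most $s$ such nodes (each has its own leaves below it), and a uniform input reaches a given depth-$d$ node with probability $2^{-d}$ (assuming no variable repeats along a path), so the error is at most $s \cdot 2^{-d} = \epsilon$ for $d = \log(s/\epsilon)$. The Python sketch below checks this on a small, hypothetical example tree; the tree encoding and helper names are our own.

```python
import math
from itertools import product

# A tree is either a leaf label (+1 or -1) or a tuple (i, if_minus1, if_plus1) that queries x_i.
def evaluate(tree, x):
    while not isinstance(tree, int):
        i, lo, hi = tree
        tree = hi if x[i] == 1 else lo
    return tree

def size(tree):
    """Number of leaves."""
    return 1 if isinstance(tree, int) else size(tree[1]) + size(tree[2])

def truncate(tree, d):
    """Cut the tree at depth d, replacing each cut subtree by the (arbitrary) leaf +1."""
    if isinstance(tree, int):
        return tree
    if d == 0:
        return 1
    i, lo, hi = tree
    return (i, truncate(lo, d - 1), truncate(hi, d - 1))

def comb(n):
    """Hypothetical example tree of depth n and size n + 1: query x_0, x_1, ... in turn and
    stop with label (-1)**i at the first i with x_i = 1 (label -1 if there is none)."""
    tree = -1
    for i in reversed(range(n)):
        tree = (i, tree, (-1) ** i)
    return tree

def error(t1, t2, n):
    points = list(product((-1, 1), repeat=n))
    return sum(evaluate(t1, x) != evaluate(t2, x) for x in points) / len(points)

n, eps = 10, 0.1
t = comb(n)
d = math.ceil(math.log2(size(t) / eps))   # depth log(s/eps), rounded up
print(d, error(t, truncate(t, d), n))     # 7 0.005859375
```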

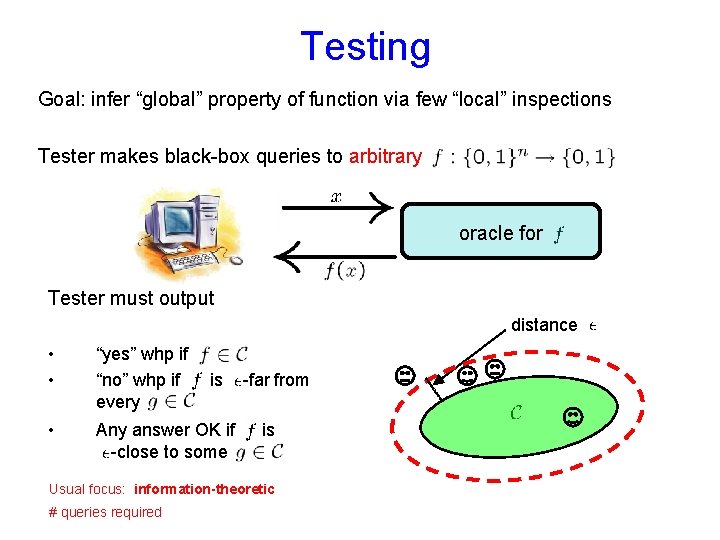

Testing. Goal: infer a “global” property of a function via a few “local” inspections. Given a class $C$, the tester makes black-box queries to an arbitrary oracle for $f$ and must output:
• “yes” with high probability (whp) if $f \in C$;
• “no” whp if $f$ is $\epsilon$-far from every $g \in C$;
• any answer is OK if $f \notin C$ but $f$ is $\epsilon$-close to some $g \in C$.
Usual focus: the information-theoretic number of queries required.

Some known property testing results:

| Class of functions over $\{-1,1\}^n$ | Reference |
|---|---|
| parity functions | [BLR 93] |
| degree-$d$ polynomials | [AKK+03] |
| literals | [PRS 02] |
| conjunctions | [PRS 02] |
| $k$-juntas | [FKRSS 04] |
| $s$-term monotone DNF | [PRS 02] |
| $s$-term DNF | [DLM+07] |
| size-$s$ decision trees | [DLM+07] |
| $s$-sparse polynomials | [DLM+07] |
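
The first row of the table is the classical linearity test of [BLR 93]; in $\pm 1$ notation it checks $f(x)f(y) = f(x \cdot y)$ for random $x, y$, where $x \cdot y$ is the coordinatewise product. Below is a minimal Python sketch; the function name, trial count, and example functions are our own choices, and the number of trials needed for a given $\epsilon$ is not worked out here.

```python
import random

def blr_test(f, n, trials, rng):
    """Sketch of the BLR linearity test over {-1,1}^n: query f at random x, y and at their
    coordinatewise product x*y, and reject if f(x) * f(y) != f(x*y). Every parity
    prod_{i in S} x_i passes every trial."""
    for _ in range(trials):
        x = [rng.choice((-1, 1)) for _ in range(n)]
        y = [rng.choice((-1, 1)) for _ in range(n)]
        xy = [a * b for a, b in zip(x, y)]
        if f(x) * f(y) != f(xy):
            return False   # reject
    return True            # accept

rng = random.Random(0)
parity = lambda x: x[0] * x[2] * x[3]          # parity of the set {1, 3, 4}
maj3 = lambda x: 1 if sum(x[:3]) > 0 else -1   # majority of the first 3 bits
print(blr_test(parity, 5, 100, rng), blr_test(maj3, 5, 100, rng))
```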

We’ll get to learning later

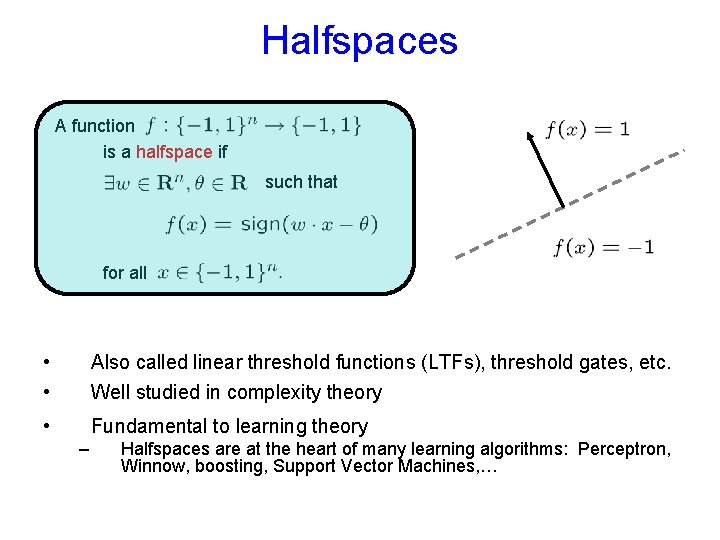

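Halfspaces. A function $f: \{-1,1\}^n \to \{-1,1\}$ is a halfspace if there exist $w_1, \dots, w_n, \theta \in \mathbb{R}$ such that $f(x) = \mathrm{sgn}(w_1x_1 + \cdots + w_nx_n - \theta)$ for all $x \in \{-1,1\}^n$.
• Also called linear threshold functions (LTFs), threshold gates, etc.
• Well studied in complexity theory.
• Fundamental to learning theory: halfspaces are at the heart of many learning algorithms (Perceptron, Winnow, boosting, Support Vector Machines, …).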

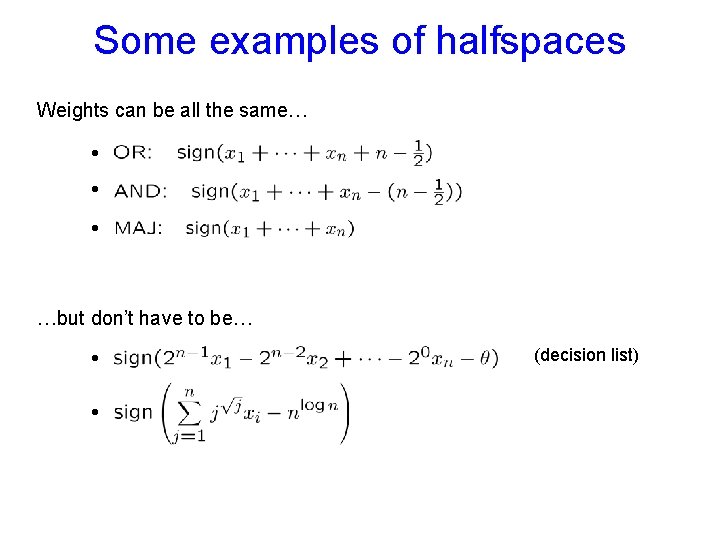

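Some examples of halfspaces. Weights can all be the same, as in majority, $\mathrm{sgn}(x_1 + \cdots + x_n)$ for odd $n$… but they don’t have to be: for instance, every decision list is a halfspace, with rapidly decreasing weights.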
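
To see why a decision list is a halfspace, give each rule a weight larger than the total weight of all later rules, so the first rule that fires decides the sign. The Python sketch below is our own version of this standard construction (the rule encoding is an assumption); it writes the halfspace as $\mathrm{sgn}(w \cdot x + t)$, i.e. $\theta = -t$ in the notation above.

```python
from itertools import product

def sgn(z):
    return 1 if z >= 0 else -1

def eval_dl(rules, default, x):
    """Decision list: output c for the first rule (i, b, c) with x[i] == b, else default."""
    for i, b, c in rules:
        if x[i] == b:
            return c
    return default

def dl_to_halfspace(rules, default, n):
    """Integer weights w and constant t with eval_dl(rules, default, x) == sgn(w.x + t).
    Rule j (0-based, k rules) gets importance 2**(k - j), more than all later rules plus the
    default combined; the indicator [x_i == b] = (1 + b*x_i) / 2 is scaled by 2 to stay integral."""
    k = len(rules)
    w, t = [0] * n, 2 * default
    for j, (i, b, c) in enumerate(rules):
        a = 2 ** (k - j)
        w[i] += c * a * b
        t += c * a
    return w, t

rules = [(2, 1, -1), (0, -1, 1), (3, 1, -1)]   # if x_3 = 1: -1; elif x_1 = -1: +1; elif x_4 = 1: -1
w, t = dl_to_halfspace(rules, 1, 4)            # default output +1
print(w, t)                                    # [-4, 0, -8, -2] -4
```

The rule weights 8, 4, 2 halve from one rule to the next, which is the “rapidly decreasing” pattern mentioned above.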

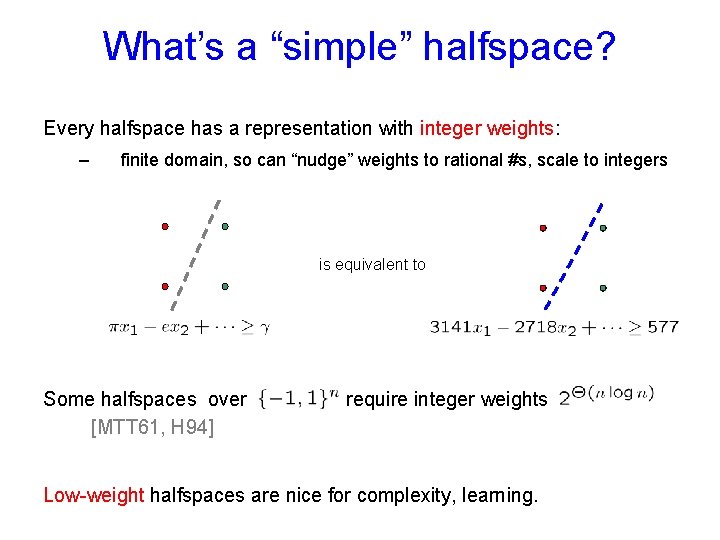

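What’s a “simple” halfspace? Every halfspace has a representation with integer weights: the domain is finite, so we can “nudge” the weights to rational numbers without changing $f$, then scale them to integers. For instance, $\mathrm{sgn}(\sqrt{2}\,x_1 + x_2)$ is equivalent to $\mathrm{sgn}(2x_1 + x_2)$. Some halfspaces over $\{-1,1\}^n$ require integer weights as large as $2^{\Omega(n \log n)}$ [MTT 61, H 94]. Low-weight halfspaces are nice for complexity and learning.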
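
The “nudge and scale” argument can be made concrete: with finitely many inputs, $w \cdot x - \theta$ stays at least some margin away from 0, so all weights can be moved onto a fine grid without changing any sign. Below is a brute-force Python sketch (small $n$ only; names and example weights are ours). It produces a valid integer representation but says nothing about how small the integer weights can be.

```python
from itertools import product

def sgn(z):
    return 1 if z >= 0 else -1

def dot(w, x):
    return sum(a * b for a, b in zip(w, x))

def integerize(w, theta):
    """Integer weights W and threshold T with sgn(W.x - T) == sgn(w.x - theta) on all of {-1,1}^n.
    Assumes w.x != theta for every x, so the margin below is positive (brute force, small n only)."""
    n = len(w)
    margin = min(abs(dot(w, x) - theta) for x in product((-1, 1), repeat=n))
    r = margin / (n + 1)   # grid size
    # Rounding each of the n + 1 numbers to the grid moves w.x - theta by at most
    # (n + 1) * r / 2 = margin / 2, so no sign can flip; dividing by r > 0 gives integers.
    return [round(a / r) for a in w], round(theta / r)

w, theta = [0.7, -2.3, 1.1, 0.05], 0.4
W, T = integerize(w, theta)
print(W, T)
```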

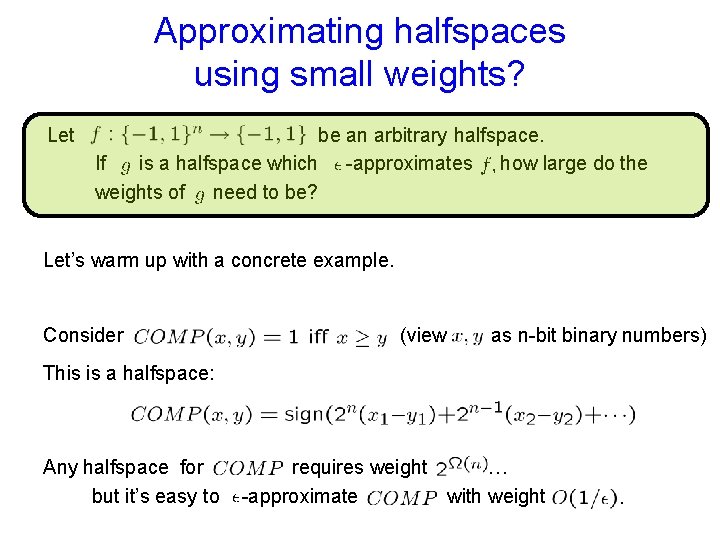

Approximating halfspaces using small weights? Let $f$ be an arbitrary halfspace. If $g$ is a halfspace which $\epsilon$-approximates $f$, how large do the weights of $g$ need to be? Let’s warm up with a concrete example: a halfspace $f$ whose inputs are viewed as $n$-bit binary numbers. Any halfspace computing $f$ exactly requires large weight, but it’s easy to $\epsilon$-approximate $f$ with small weight.
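
As an illustration, assume (our assumption, not stated in the text above) that the example is the comparison function $f(x, y) = [x \ge y]$ on two $n$-bit numbers. In $\pm 1$ notation it is the halfspace $\mathrm{sgn}\big(\sum_{i=0}^{n-1} 2^{i}(x_i - y_i) + \tfrac12\big)$, with $x_0, y_0$ the least significant bits, so this representation uses weights up to $2^{n-1}$. Comparing only the top $k$ bits gives a halfspace whose weights are at most $2^{k-1}$; it errs only when the top $k$ bits agree and $x < y$, which happens with probability below $2^{-(k+1)}$. The Python sketch below checks this exactly for small $n$.

```python
from itertools import product

def value(bits):
    """Read a tuple of 0/1 bits as a binary number, most significant bit first."""
    v = 0
    for b in bits:
        v = 2 * v + b
    return v

def compare_error(n, k):
    """Exact Pr[f != g] over uniform n-bit x and y, where f(x, y) = [x >= y] and the
    approximator g compares only the k most significant bits of x and y."""
    bad = 0
    for x in product((0, 1), repeat=n):
        for y in product((0, 1), repeat=n):
            f = value(x) >= value(y)
            g = value(x[:k]) >= value(y[:k])
            bad += f != g
    return bad / 4 ** n

print(compare_error(6, 2))  # 0.1171875, i.e. 15/128 < 2**-(2 + 1)
```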

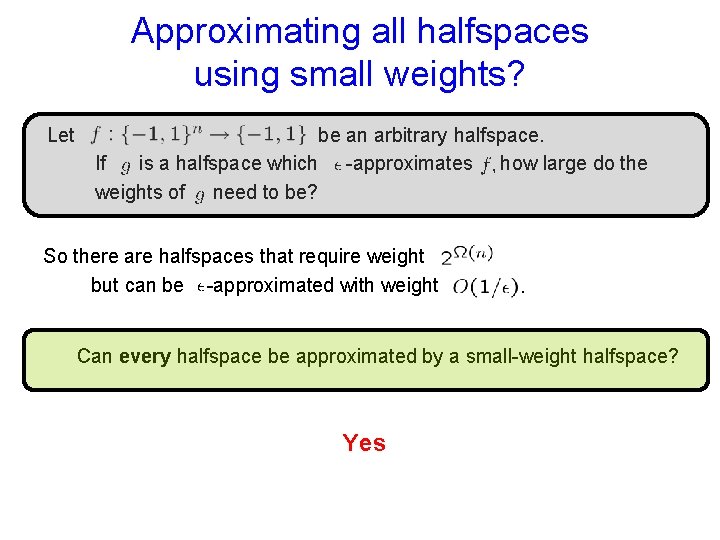

Approximating all halfspaces using small weights? So there are halfspaces that require large weight but can be $\epsilon$-approximated with small weight. Can every halfspace be approximated by a small-weight halfspace? Yes!

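Every halfspace has a low-weight approximator. Theorem [S 06]: Let $f$ be any halfspace. For any $\epsilon > 0$ there is an $\epsilon$-approximator $g$ with integer weights $w_1, \dots, w_n$ that has $\sum_i w_i^2 \le n \cdot 2^{\tilde O(1/\epsilon^2)}$. How good is this bound?
• We can’t do better in terms of $n$.
• Some dependence on $\epsilon$ is unavoidable: some halfspaces may need large weights to be approximated [H 94].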

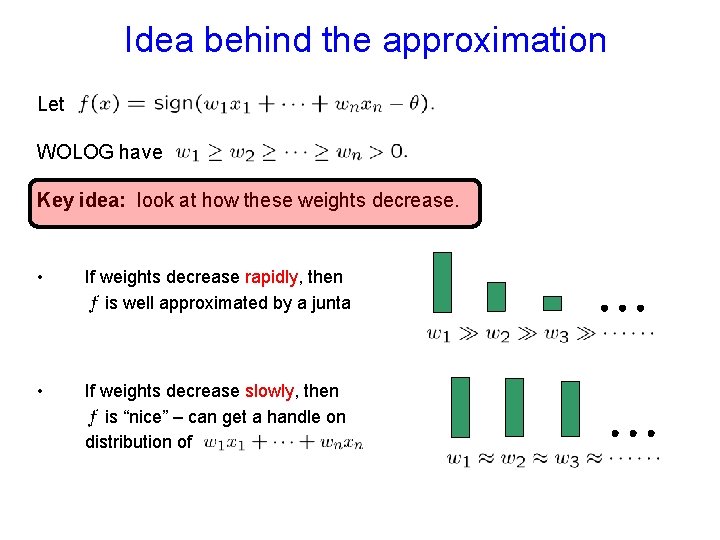

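Idea behind the approximation. Let $f(x) = \mathrm{sgn}(w_1x_1 + \cdots + w_nx_n - \theta)$, and without loss of generality suppose $|w_1| \ge |w_2| \ge \cdots \ge |w_n|$. Key idea: look at how these weights decrease.
• If the weights decrease rapidly, then $f$ is well approximated by a junta.
• If the weights decrease slowly, then $f$ is “nice”: we can get a handle on the distribution of $w \cdot x$.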

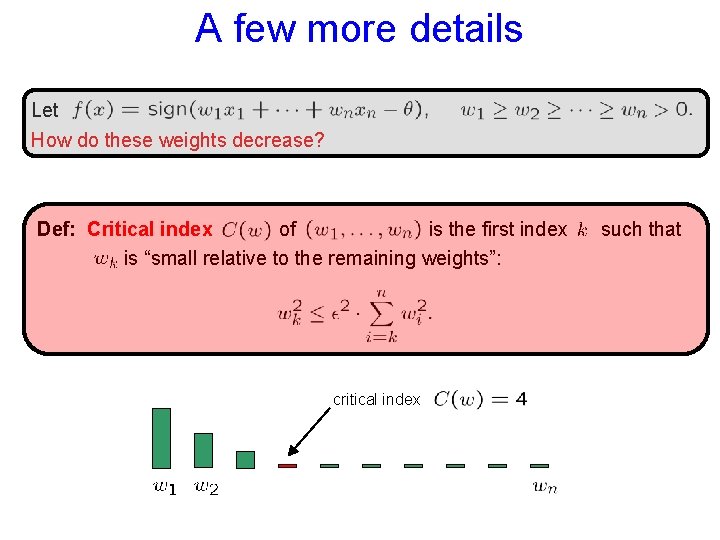

A few more details. Let $\sigma_k = \sqrt{\sum_{j \ge k} w_j^2}$. How do these weights decrease? Definition: the critical index of $w$ is the first index $k$ such that $|w_k|$ is “small relative to the remaining weights”: $|w_k| \le \delta \sigma_k$.
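
The critical index is easy to compute once the weights are sorted by magnitude. A Python sketch follows (1-based index as in the text; the parameter name `delta` and the `None` return value for “no critical index” are our own conventions).

```python
import math

def critical_index(w, delta):
    """First 1-based index k with |w_k| <= delta * sigma_k, after sorting weights by decreasing
    magnitude, where sigma_k = sqrt(w_k^2 + ... + w_n^2). Returns None if there is no such k."""
    w = sorted((abs(v) for v in w), reverse=True)
    tail = sum(v * v for v in w)     # sigma_1 ** 2
    for k, v in enumerate(w, start=1):
        if v <= delta * math.sqrt(tail):
            return k
        tail -= v * v                # now sigma_{k+1} ** 2
    return None

print(critical_index([1.0] * 100, 0.2))                     # 1: equal weights are "smooth" at once
print(critical_index([8, 4, 2, 1] + [0.1] * 100, 0.3))      # 5: four big weights, then a smooth tail
print(critical_index([2.0 ** -i for i in range(20)], 0.2))  # None: weights keep halving
```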

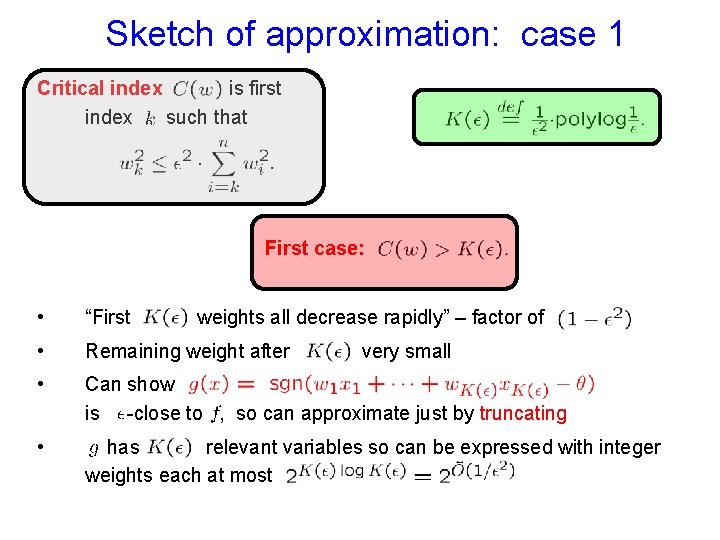

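Sketch of approximation: case 1. Recall that the critical index is the first index $k$ such that $|w_k| \le \delta\sigma_k$. First case: the critical index is larger than some $K$.
• “The first $K$ weights all decrease rapidly”: for each $k \le K$ we have $\sigma_{k+1}^2 = \sigma_k^2 - w_k^2 < (1 - \delta^2)\sigma_k^2$, so $\sigma_k$ shrinks by a constant factor at each step.
• The remaining weight after $K$, namely $\sigma_{K+1}$, is very small.
• We can show that $f$ is $\epsilon$-close to $\mathrm{sgn}(w_1x_1 + \cdots + w_Kx_K - \theta)$, so we can approximate $f$ just by truncating.
• The truncated halfspace has only $K$ relevant variables, so it can be expressed with integer weights each at most $2^{O(K \log K)}$.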

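Why does truncating work? Write $f(x) = \mathrm{sgn}(h(x) + t(x))$, where $h(x) = w_1x_1 + \cdots + w_Kx_K - \theta$ and $t(x) = w_{K+1}x_{K+1} + \cdots + w_nx_n$. We have $f(x) \ne \mathrm{sgn}(h(x))$ only if $|h(x)| \le |t(x)|$, so for any $\gamma > 0$ only if either $|t(x)| \ge \gamma$ or $|h(x)| < \gamma$.
• $|t(x)| \ge \gamma$ is unlikely by the Hoeffding bound, since each of the tail weights is small.
• $|h(x)| < \gamma$ is unlikely by a more complicated argument: split the first $K$ weights into blocks; a symmetry argument on each block bounds the probability by ½; then use independence across blocks.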
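
The key containment, “disagreement only if $|h(x)| \le |t(x)|$”, can be checked by brute force on a small example with rapidly decreasing weights. The Python sketch below (weights, threshold, and $K$ are arbitrary choices of ours) computes both probabilities exactly; it illustrates the containment only, not the Hoeffding or block arguments.

```python
from itertools import product

def sgn(z):
    return 1 if z >= 0 else -1

def truncation_check(w, theta, K):
    """Brute force over {-1,1}^n for f = sgn(h + t) and f_K = sgn(h), where
    h = w_1 x_1 + ... + w_K x_K - theta and t = w_{K+1} x_{K+1} + ... + w_n x_n.
    Returns (Pr[f != f_K], Pr[|h| <= |t|]); the first is at most the second."""
    n = len(w)
    points = list(product((-1, 1), repeat=n))
    disagree = event = 0
    for x in points:
        h = sum(a * b for a, b in zip(w[:K], x[:K])) - theta
        t = sum(a * b for a, b in zip(w[K:], x[K:]))
        disagree += sgn(h + t) != sgn(h)
        event += abs(h) <= abs(t)
    return disagree / len(points), event / len(points)

w = [2.0 ** -i for i in range(12)]       # rapidly decreasing weights 1, 1/2, 1/4, ...
print(truncation_check(w, 0.3, 5))
```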

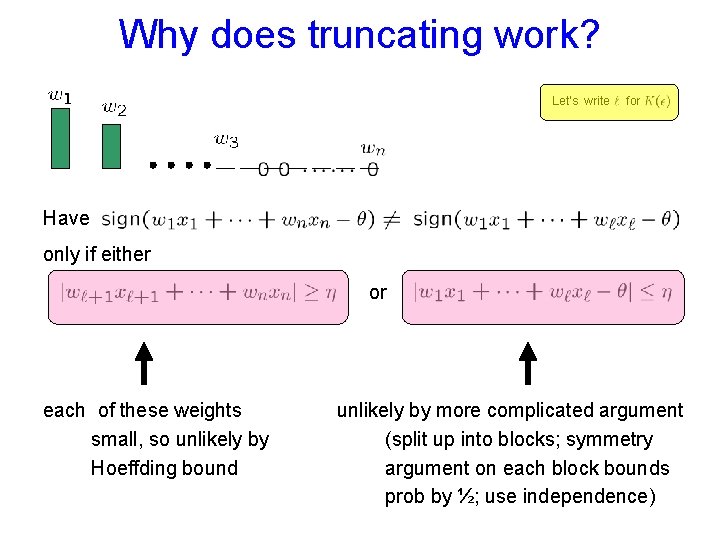

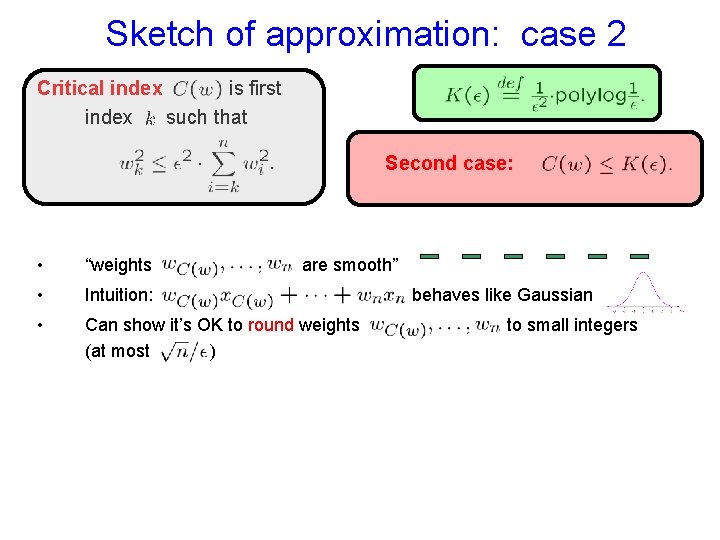

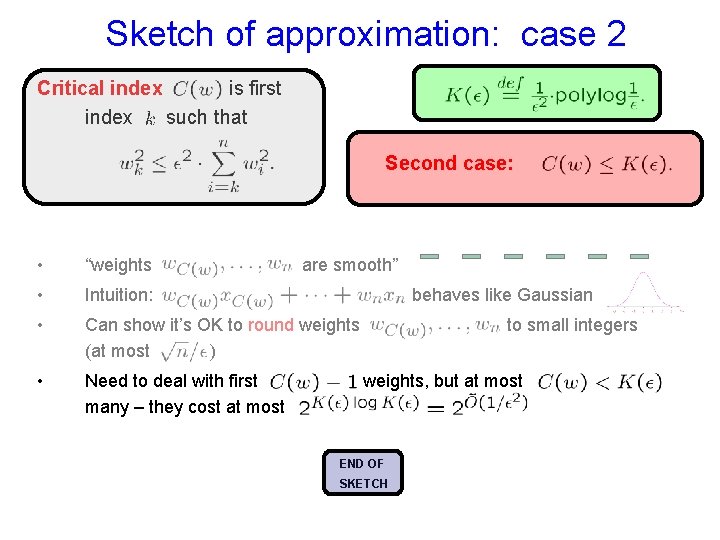

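Sketch of approximation: case 2. Recall that the critical index is the first index $k$ such that $|w_k| \le \delta\sigma_k$. Second case: the critical index is at most $K$.
• “The weights are smooth” from the critical index onward.
• Intuition: $w \cdot x$ behaves like a Gaussian.
• We can show it’s OK to round the weights to small integers.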

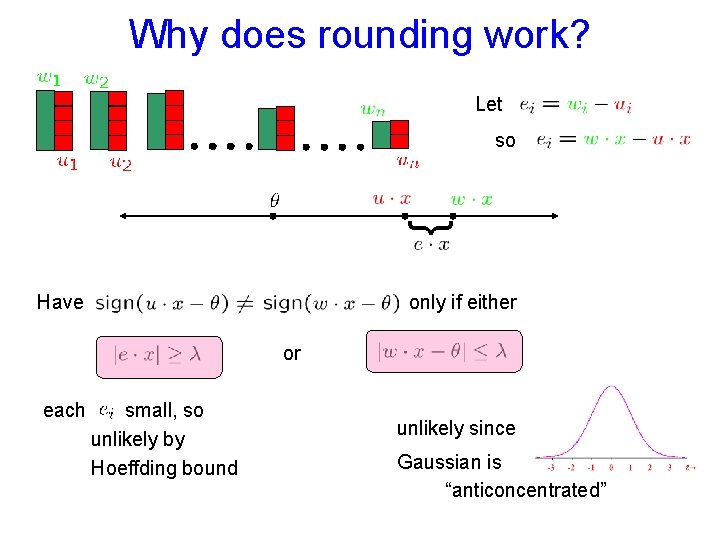

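Why does rounding work? Let $v_1, \dots, v_n$ be the rounded weights, so each $|w_i - v_i|$ is small, and write $e(x) = (w_1 - v_1)x_1 + \cdots + (w_n - v_n)x_n$, so that $v \cdot x - \theta = (w \cdot x - \theta) - e(x)$. We have $f(x) \ne \mathrm{sgn}(v \cdot x - \theta)$ only if $|w \cdot x - \theta| \le |e(x)|$, so for any $\gamma > 0$ only if either $|e(x)| \ge \gamma$ or $|w \cdot x - \theta| < \gamma$.
• $|e(x)| \ge \gamma$: each $|w_i - v_i|$ is small, so this is unlikely by the Hoeffding bound.
• $|w \cdot x - \theta| < \gamma$: unlikely since a Gaussian is “anticoncentrated”.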
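
The same containment drives the rounding step. The Python sketch below rounds weights to a grid of spacing `eta` (our own parameter; the exact rounding scheme of the proof is not specified here) and computes both probabilities exactly for small $n$. It does not illustrate the Gaussian anticoncentration bound itself.

```python
from itertools import product

def sgn(z):
    return 1 if z >= 0 else -1

def rounding_check(w, theta, eta):
    """Round each weight to the nearest multiple of eta (so |w_i - v_i| <= eta / 2) and return
    (Pr[f != g], Pr[|w.x - theta| <= |e(x)|]) over uniform x in {-1,1}^n, where
    f = sgn(w.x - theta), g = sgn(v.x - theta) and e(x) = (w - v).x."""
    v = [eta * round(a / eta) for a in w]
    points = list(product((-1, 1), repeat=len(w)))
    disagree = event = 0
    for x in points:
        s = sum(a * b for a, b in zip(w, x)) - theta
        e = sum((a - c) * b for a, c, b in zip(w, v, x))
        disagree += sgn(s) != sgn(s - e)   # s - e equals v.x - theta
        event += abs(s) <= abs(e)
    return disagree / len(points), event / len(points)

w = [1 + 0.37 * i for i in range(12)]   # slowly varying ("smooth") weights
print(rounding_check(w, 0.5, 1.0))
```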

Sketch of approximation: case 2 (continued). We also need to deal with the weights before the critical index: they can cost more, but there are at most $K$ of them. End of sketch.

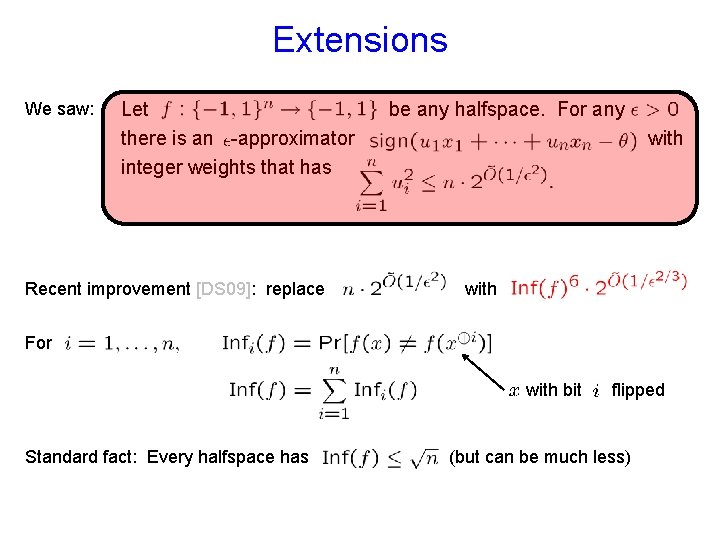

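Extensions. We saw [S 06]: for any halfspace $f$ and any $\epsilon > 0$ there is an $\epsilon$-approximator with integer weights satisfying $\sum_i w_i^2 \le n \cdot 2^{\tilde O(1/\epsilon^2)}$. Recent improvement [DS 09]: a sharper bound, stated in terms of the total influence of $f$. For $f: \{-1,1\}^n \to \{-1,1\}$, the total influence is $I(f) = \sum_{i=1}^n \Pr_x[f(x) \ne f(x^{\oplus i})]$, where $x^{\oplus i}$ is $x$ with the $i$-th bit flipped. Standard fact: every halfspace has $I(f) \le \sqrt{n}$ (but $I(f)$ can be much less).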
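
The total influence is straightforward to compute by brute force for small $n$. The Python sketch below (our own helper) checks the standard fact $I(f) \le \sqrt{n}$ on majority, which for odd $n$ has $I(f) = \Theta(\sqrt{n})$, and on a dictator, where $I(f) = 1$ is much smaller.

```python
import math
from itertools import product

def total_influence(f, n):
    """I(f) = sum_i Pr_x[f(x) != f(x with bit i flipped)], by brute force over {-1,1}^n."""
    points = list(product((-1, 1), repeat=n))
    flips = 0
    for x in points:
        fx = f(x)
        for i in range(n):
            y = x[:i] + (-x[i],) + x[i + 1:]
            flips += fx != f(y)
    return flips / len(points)

maj = lambda x: 1 if sum(x) > 0 else -1     # majority (n odd)
print(total_influence(maj, 3), total_influence(maj, 5), math.sqrt(5))
print(total_influence(lambda x: x[0], 5))   # a dictator has I(f) = 1
```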

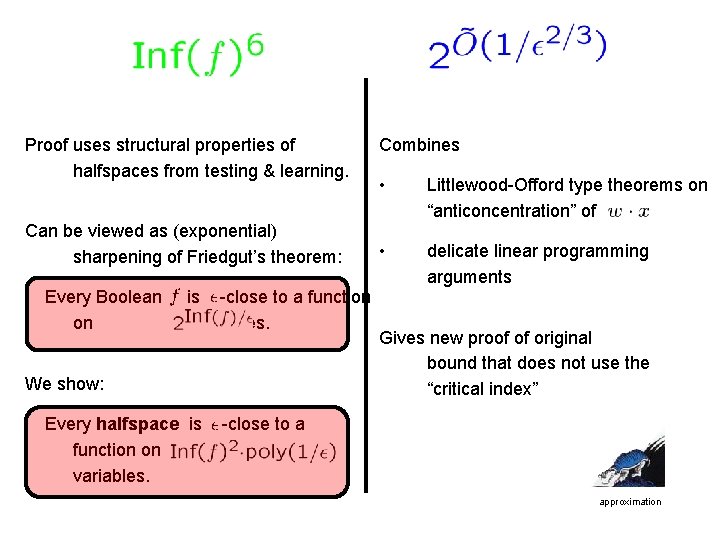

The proof uses structural properties of halfspaces from testing and learning. It can be viewed as an (exponential) sharpening of Friedgut’s theorem: every Boolean function $f$ on $\{-1,1\}^n$ is $\epsilon$-close to a function on $2^{O(I(f)/\epsilon)}$ variables. We show: every halfspace is $\epsilon$-close to a function on $I(f)^2 \cdot \mathrm{poly}(1/\epsilon)$ variables. The proof combines
• Littlewood–Offord-type theorems on the “anticoncentration” of $w \cdot x$, and
• delicate linear programming arguments.
It also gives a new proof of the original approximation bound that does not use the “critical index”.

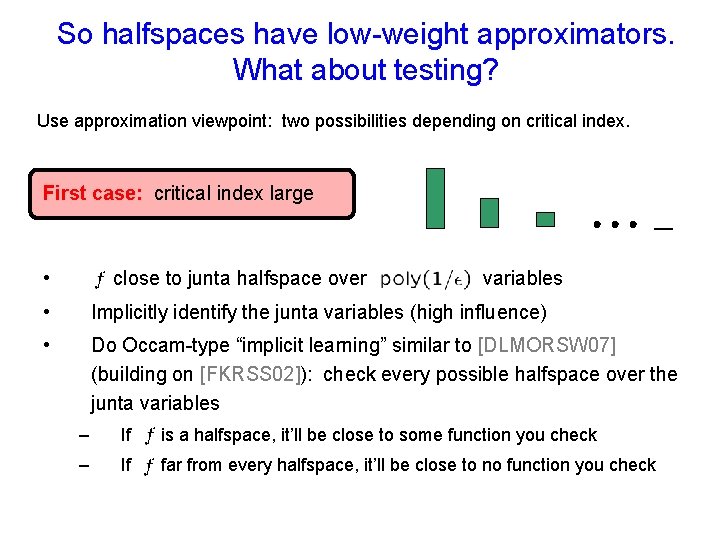

So halfspaces have low-weight approximators. What about testing? Use the approximation viewpoint: there are two possibilities, depending on the critical index.
First case: critical index large.
• $f$ is close to a junta halfspace over few variables.
• Implicitly identify the junta variables (those with high influence).
• Do Occam-type “implicit learning” similar to [DLMORSW 07] (building on [FKRSS 02]): check every possible halfspace over the junta variables.
 – If $f$ is a halfspace, it’ll be close to some function you check.
 – If $f$ is far from every halfspace, it’ll be close to no function you check.

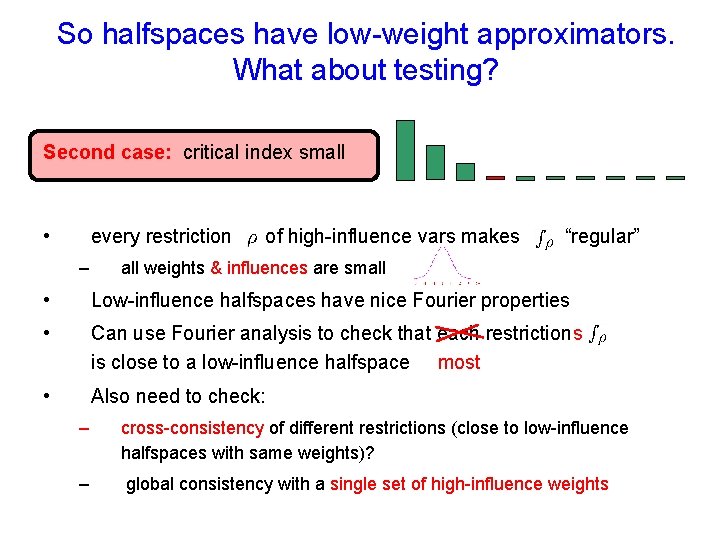

Second case: critical index small.
• Every restriction $\rho$ of the high-influence variables makes $f$ “regular”: all weights and influences are small.
• Low-influence halfspaces have nice Fourier properties.
• We can use Fourier analysis to check that, for most restrictions $\rho$, the restricted function $f_\rho$ is close to a low-influence halfspace.
• We also need to check:
 – cross-consistency of different restrictions (are they close to low-influence halfspaces with the same weights?);
 – global consistency with a single set of high-influence weights.

A taste of Fourier. A helpful Fourier result about low-influence halfspaces:
“Theorem” [MORS 07]: Let $f$ be any Boolean function such that:
• all the degree-1 Fourier coefficients of $f$ are small, and
• the degree-0 Fourier coefficient is consistent with the degree-1 coefficients.
Then $f$ is close to a halfspace; in fact, close to the halfspace $\mathrm{sgn}(\hat f(1)x_1 + \cdots + \hat f(n)x_n - \theta)$ for a suitable threshold $\theta$.
This is useful for the soundness portion of the test.

Testing halfspaces. When all the dust settles: Theorem [MORS 07]: The class of halfspaces over $\{-1,1\}^n$ is testable with $\mathrm{poly}(1/\epsilon)$ queries.

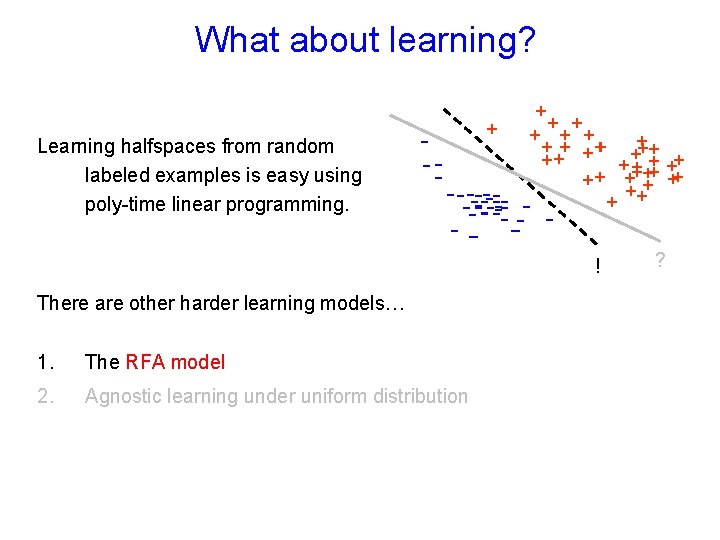

What about learning? Learning halfspaces from random labeled examples is easy using poly-time linear programming. There are other, harder learning models:
1. the RFA model;
2. agnostic learning under the uniform distribution.
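
The linear programming route finds $w, \theta$ with $y\,(w \cdot x - \theta) \ge 1$ for every labeled example $(x, y)$. To stay dependency-free, the Python sketch below swaps in the Perceptron algorithm instead (one of the halfspace learners listed earlier), which also finds a consistent halfspace whenever the examples are linearly separable, though without the LP's polynomial-time guarantee in general. Training on all $2^5$ labeled points of majority is our own toy choice.

```python
from itertools import product

def perceptron(examples, max_epochs=1000):
    """Perceptron on examples (x, y) with x in {-1,1}^n and y in {-1,1}. Returns (w, theta) with
    y * (w.x - theta) > 0 on every example, or None if max_epochs passes were not enough
    (on linearly separable data the number of updates is finite)."""
    n = len(examples[0][0])
    w, theta = [0] * n, 0
    for _ in range(max_epochs):
        updated = False
        for x, y in examples:
            if y * (sum(a * b for a, b in zip(w, x)) - theta) <= 0:   # mistake (or on boundary)
                w = [a + y * b for a, b in zip(w, x)]
                theta -= y
                updated = True
        if not updated:
            return w, theta
    return None

maj5 = lambda x: 1 if sum(x) > 0 else -1
examples = [(x, maj5(x)) for x in product((-1, 1), repeat=5)]
print(perceptron(examples))
```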

The RFA learning model.
• Introduced by [BDD 92]: “restricted focus of attention”.
• For each labeled example $(x, f(x))$, the learner gets to choose one bit $x_i$ of the example to see (plus the label $f(x)$, of course).
• Examples are drawn from the uniform distribution over $\{-1,1\}^n$.
• The goal is to construct an $\epsilon$-accurate hypothesis.
Question [BDD 92, ADJKS 98, G 01]: Are halfspaces learnable in the RFA model?

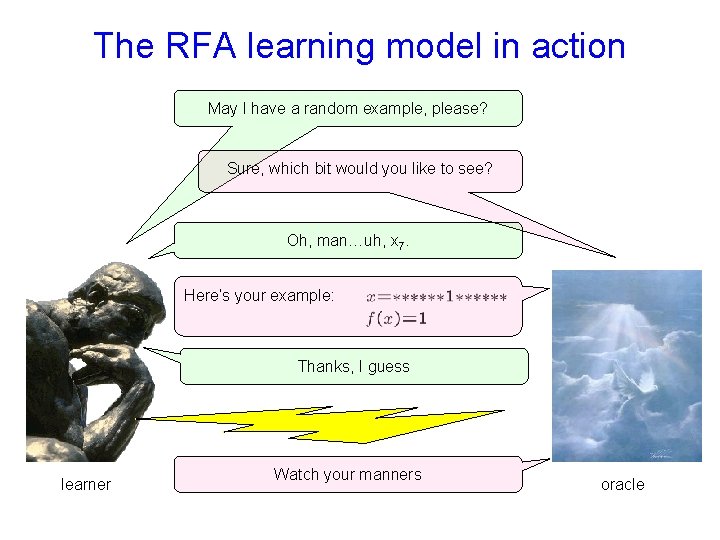

The RFA learning model in action.
Learner: “May I have a random example, please?”
Oracle: “Sure, which bit would you like to see?”
Learner: “Oh, man… uh, $x_7$.”
Oracle: “Here’s your example: $(x_7, f(x))$.”
Learner: “Thanks, I guess.”
Oracle: “Watch your manners.”

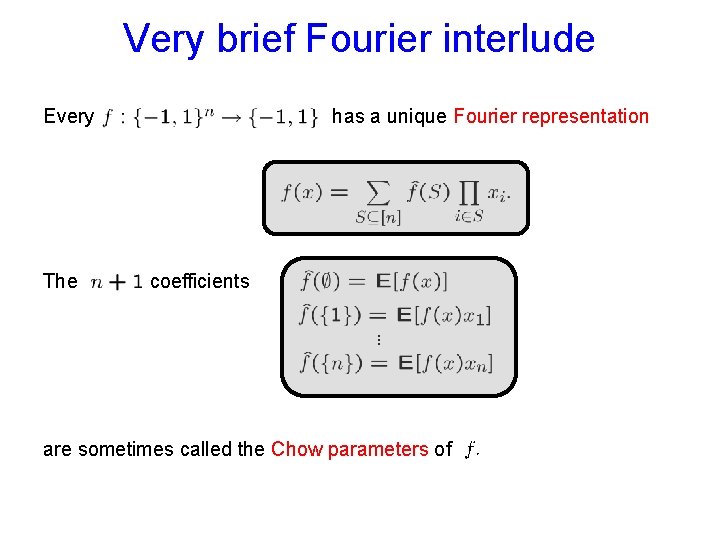

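Very brief Fourier interlude. Every $f: \{-1,1\}^n \to \mathbb{R}$ has a unique Fourier representation
$$f(x) = \sum_{S \subseteq [n]} \hat f(S) \prod_{i \in S} x_i.$$
The $n+1$ coefficients $\hat f(\emptyset), \hat f(1), \dots, \hat f(n)$ (writing $\hat f(i)$ for $\hat f(\{i\})$) are sometimes called the Chow parameters of $f$.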
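
Since the characters $\prod_{i \in S} x_i$ are orthonormal under the uniform distribution, $\hat f(S) = \mathbf{E}_x[f(x)\prod_{i \in S} x_i]$; in particular the Chow parameters are $\hat f(\emptyset) = \mathbf{E}[f(x)]$ and $\hat f(i) = \mathbf{E}[f(x)\,x_i]$. A brute-force Python sketch for small $n$ (helper names are ours):

```python
from itertools import product

def chow_parameters(f, n):
    """Degree-0 and degree-1 Fourier coefficients of f: {-1,1}^n -> {-1,1}, by brute force:
    hat f(empty) = E[f(x)] and hat f(i) = E[f(x) x_i] for x uniform."""
    points = list(product((-1, 1), repeat=n))
    f0 = sum(f(x) for x in points) / len(points)
    f1 = [sum(f(x) * x[i] for x in points) / len(points) for i in range(n)]
    return f0, f1

maj3 = lambda x: 1 if sum(x) > 0 else -1
and2 = lambda x: 1 if x[0] == x[1] == 1 else -1
print(chow_parameters(maj3, 3))   # (0.0, [0.5, 0.5, 0.5])
print(chow_parameters(and2, 2))   # (-0.5, [0.5, 0.5])
```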

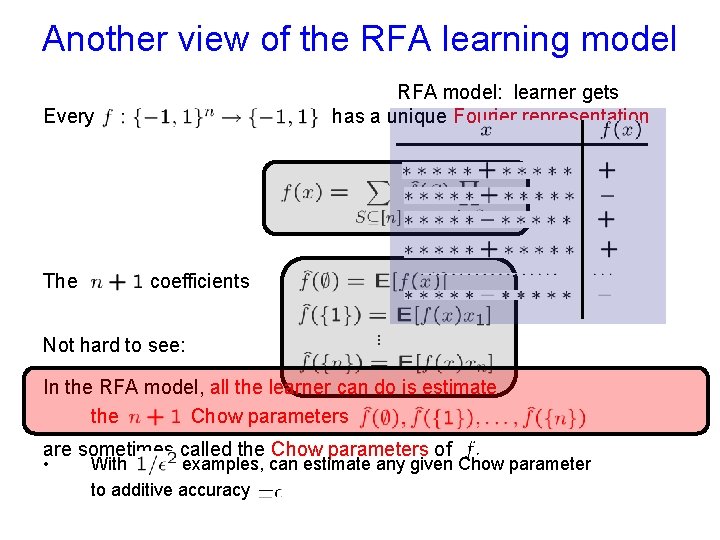

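Another view of the RFA learning model. In the RFA model, the learner gets $(x_i, f(x))$ for a coordinate $i$ of its choice. Not hard to see: in the RFA model, all the learner can do is estimate the Chow parameters. Indeed, for $a, b \in \{-1,1\}$ we have $\Pr[x_i = a, f(x) = b] = \frac{1}{4}\big(1 + b\,\hat f(\emptyset) + ab\,\hat f(i)\big)$, so the distribution of what the learner sees depends only on the Chow parameters.
• With $O(\log(1/\eta)/\tau^2)$ examples, we can estimate any given Chow parameter to additive accuracy $\tau$ with probability at least $1 - \eta$ (Hoeffding bound).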
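
A small simulation makes this concrete. In the Python sketch below (all names and sample sizes are our own choices), the learner cycles through coordinates and averages what it sees; on 5-bit majority the estimates should land near the true Chow parameters $\hat f(\emptyset) = 0$ and $\hat f(i) = \binom{4}{2}/2^4 = 0.375$.

```python
import random

def rfa_estimates(f, n, m, rng):
    """Simulated 1-RFA learner (a hypothetical helper): for each coordinate i it requests m examples;
    the oracle draws x uniformly but reveals only (x_i, f(x)). Returns empirical estimates of
    hat f(empty) = E[f(x)] and of hat f(i) = E[x_i f(x)] for each i."""
    label_sum, corr = 0, [0] * n
    for i in range(n):
        for _ in range(m):
            x = [rng.choice((-1, 1)) for _ in range(n)]   # drawn by the oracle, hidden from learner
            xi, label = x[i], f(x)                         # everything the learner gets to see
            label_sum += label
            corr[i] += xi * label
    return label_sum / (n * m), [c / m for c in corr]

maj5 = lambda x: 1 if sum(x) > 0 else -1    # true Chow parameters: 0 and 6/16 = 0.375 each
f0, f1 = rfa_estimates(maj5, 5, 20000, random.Random(0))
print(round(f0, 3), [round(c, 3) for c in f1])
```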

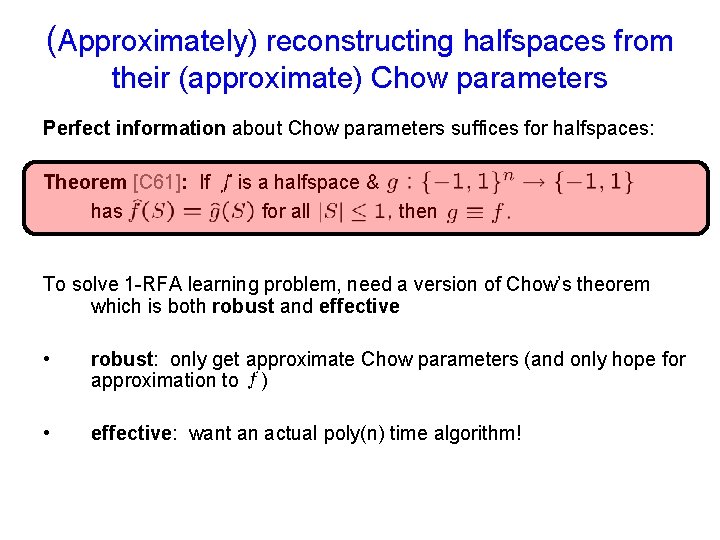

(Approximately) reconstructing halfspaces from their (approximate) Chow parameters. Perfect information about the Chow parameters suffices for halfspaces:
Theorem [C 61]: If $f$ is a halfspace and $g: \{-1,1\}^n \to \{-1,1\}$ has $\hat g(S) = \hat f(S)$ for all $|S| \le 1$, then $g = f$.
To solve the 1-RFA learning problem, we need a version of Chow’s theorem which is both robust and effective:
• robust: we only get approximate Chow parameters (and can only hope for an approximation to $f$);
• effective: we want an actual poly($n$)-time algorithm!
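
For very small $n$, Chow’s theorem can be checked exhaustively. The Python sketch below (our own; it only enumerates halfspaces with small integer weights) confirms that no other Boolean function shares a halfspace’s Chow parameters. The theorem does need $f$ to be a halfspace: for $n = 2$, XOR and its negation both have all Chow parameters equal to 0.

```python
from itertools import product

def chow_check(n, max_weight):
    """Brute-force illustration of Chow's theorem for small n. Enumerates the halfspaces
    sgn(w.x - theta) with integer weights in [-max_weight, max_weight] and half-integer theta
    (so w.x != theta), checks that each is the only Boolean function on {-1,1}^n with its
    Chow parameters, and returns how many distinct halfspaces were checked."""
    points = list(product((-1, 1), repeat=n))

    def chow(table):
        # Unnormalized Chow parameters of a truth table (sums instead of averages): exact integers.
        return (sum(table),) + tuple(sum(v * x[i] for v, x in zip(table, points)) for i in range(n))

    counts = {}   # Chow vector -> number of Boolean functions having it
    for table in product((-1, 1), repeat=len(points)):
        key = chow(table)
        counts[key] = counts.get(key, 0) + 1

    bound = n * max_weight
    halfspaces = set()
    for w in product(range(-max_weight, max_weight + 1), repeat=n):
        for t in range(-bound - 1, bound + 1):
            table = tuple(1 if sum(a * b for a, b in zip(w, x)) > t + 0.5 else -1 for x in points)
            halfspaces.add(table)
    assert all(counts[chow(table)] == 1 for table in halfspaces)
    return len(halfspaces)

print(chow_check(2, 1))  # 14: all 16 two-bit functions except XOR and its negation
print(chow_check(3, 2))
```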

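Previous results. [ADJKS 98] proved:
Theorem: Let $f$ be a weight-$W$ halfspace. Let $g$ be any Boolean function satisfying $|\hat g(S) - \hat f(S)| \le \tau$ for all $|S| \le 1$, where $\tau$ is sufficiently small as a function of $W$ and $\epsilon$. Then $g$ is an $\epsilon$-approximator for $f$.
• Good for low-weight halfspaces, but the weight $W$ (and hence the required accuracy) could be exponential in $n$.
[Goldberg 01] proved:
Theorem: Let $f$ be any halfspace. Let $g$ be any Boolean function satisfying $|\hat g(S) - \hat f(S)| \le \tau$ for all $|S| \le 1$, where $\tau$ is sufficiently small as a function of $n$ and $\epsilon$. Then $g$ is an $\epsilon$-approximator for $f$.
• Better bound for high-weight halfspaces, but superpolynomial in $n$.
Neither of these results is algorithmic.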

Robust, effective version of Chow’s theorem.
Theorem [OS 08]: For any constant $\epsilon > 0$ and any halfspace $f$, given accurate enough approximations of the Chow parameters of $f$, the algorithm runs in time $\tilde O(n^2)$ and w.h.p. outputs a halfspace that is $\epsilon$-close to $f$.
Corollary [OS 08]: Halfspaces are learnable to any constant accuracy in time $\tilde O(n^2)$ in the RFA model.
• This is the fastest runtime dependence on $n$ of any algorithm for learning halfspaces, even in the usual random-examples model, improving on the previous best runtime.
• Any algorithm needs time $\Omega(n^2)$ for learning to constant accuracy, since it needs $\Omega(n)$ examples, i.e. $\Omega(n^2)$ bits of input.

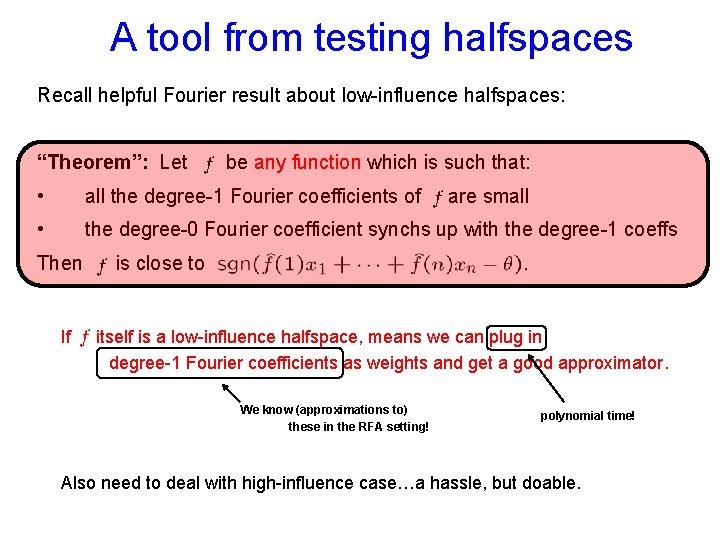

A tool from testing halfspaces. Recall the helpful Fourier result about low-influence halfspaces:
“Theorem”: Let $f$ be any function such that:
• all the degree-1 Fourier coefficients of $f$ are small, and
• the degree-0 Fourier coefficient is consistent with the degree-1 coefficients.
Then $f$ is close to $\mathrm{sgn}(\hat f(1)x_1 + \cdots + \hat f(n)x_n - \theta)$ for a suitable threshold $\theta$.
If $f$ itself is a low-influence halfspace, this means we can plug in its degree-1 Fourier coefficients as weights and get a good approximator. We know (approximations to) these in the RFA setting, which gives a polynomial-time algorithm! We also need to deal with the high-influence case… a hassle, but doable.
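
The plug-in step can be sketched by brute force for small $n$. In the Python sketch below, the rule for choosing $\theta$ (match the mean $\hat f(\emptyset)$) is our own simple stand-in, not the procedure from [OS 08] or [MORS 07], and exact Chow parameters replace the RFA estimates. For a regular halfspace such as majority, the plug-in halfspace recovers $f$ exactly.

```python
from itertools import product

def plug_in_halfspace(f, n):
    """Sketch of the plug-in idea: use f's degree-1 Chow parameters as weights, then choose theta
    (among midpoints between consecutive values of w.x, plus one point beyond each end) so that the
    halfspace's mean is as close as possible to hat f(empty). Chow parameters are kept unnormalized
    (sums over the cube), which rescales w and theta but not the halfspace.
    Returns (g, Pr[f != g])."""
    points = list(product((-1, 1), repeat=n))
    f0 = sum(f(x) for x in points)
    w = [sum(f(x) * x[i] for x in points) for i in range(n)]
    dots = sorted({sum(a * b for a, b in zip(w, x)) for x in points})
    candidates = [dots[0] - 1] + [(a + b) / 2 for a, b in zip(dots, dots[1:])] + [dots[-1] + 1]

    def halfspace(theta):
        return lambda x: 1 if sum(a * b for a, b in zip(w, x)) > theta else -1

    theta = min(candidates, key=lambda c: abs(sum(halfspace(c)(x) for x in points) - f0))
    g = halfspace(theta)
    return g, sum(f(x) != g(x) for x in points) / len(points)

maj9 = lambda x: 1 if sum(x) > 0 else -1
print(plug_in_halfspace(maj9, 9)[1])   # 0.0: majority is recovered exactly
```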

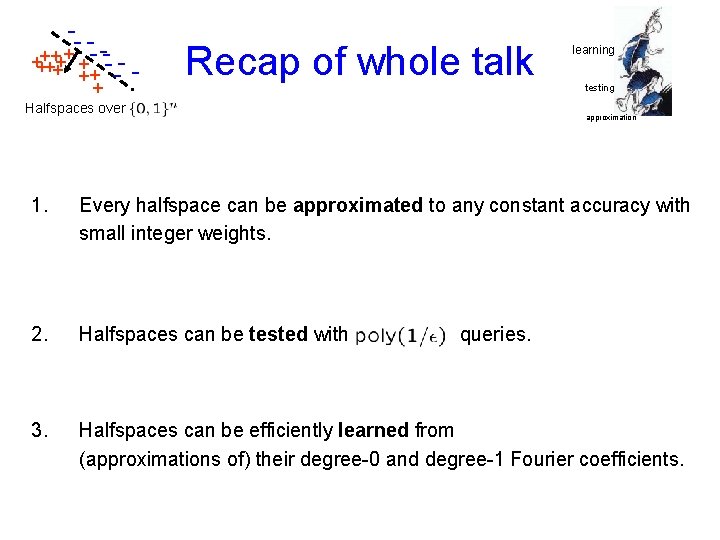

Recap of whole talk: halfspaces over $\{-1,1\}^n$, from the viewpoints of learning, testing, and approximation.
1. Every halfspace can be approximated to any constant accuracy with small integer weights.
2. Halfspaces can be tested with $\mathrm{poly}(1/\epsilon)$ queries.
3. Halfspaces can be efficiently learned from (approximations of) their degree-0 and degree-1 Fourier coefficients.

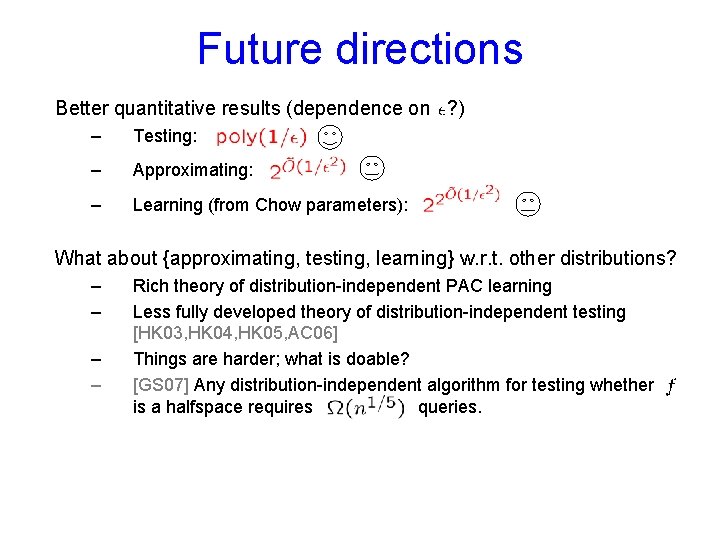

Future directions.
• Better quantitative results (e.g., the dependence on $\epsilon$) for testing, for approximating, and for learning from Chow parameters.
• What about {approximating, testing, learning} w.r.t. other distributions?
 – There is a rich theory of distribution-independent PAC learning.
 – There is a less fully developed theory of distribution-independent testing [HK 03, HK 04, HK 05, AC 06].
 – Things are harder; what is doable? [GS 07]: any distribution-independent algorithm for testing whether $f$ is a halfspace requires $\tilde\Omega(n^{1/5})$ queries.

Thank you for your attention

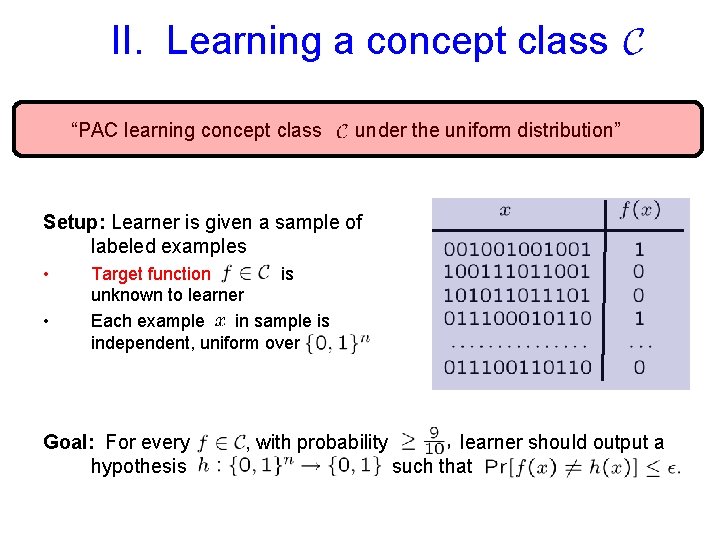

II. Learning a concept class. “PAC learning concept class $C$ under the uniform distribution.” Setup:
• The learner is given a sample of labeled examples $(x, f(x))$.
• The target function $f \in C$ is unknown to the learner.
• Each example $x$ in the sample is independent and uniform over $\{-1,1\}^n$.
Goal: for every $f \in C$, with probability at least $1 - \delta$ the learner should output a hypothesis $h$ such that $\Pr_x[h(x) \ne f(x)] \le \epsilon$.
