Learning Subjective Nouns using Extraction Pattern Bootstrapping

Ellen Riloff, Janyce Wiebe, Theresa Wilson. Presenter: Gabriel Nicolae

Subjectivity – the Annotation Scheme

- http://www.cs.pitt.edu/~wiebe/pubs/ardasummer02/
- Goal: to identify and characterize expressions of private states in a sentence.
- Private state = opinions, evaluations, emotions and speculations.
  - Example: "The time has come, gentlemen, for Sharon, the assassin, to realize that injustice cannot last long."
- Also judge the strength of each private state: low, medium, high, extreme.
- Annotation gold standard: a sentence is
  - subjective if it contains at least one private-state expression of medium or higher strength;
  - objective otherwise (all the rest).
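The gold-standard rule above can be written as a small check. This is a minimal sketch: the function name and the representation of a sentence as a list of strength labels are assumptions made for illustration, not part of the annotation scheme itself.

```python
# Strength scale from the annotation scheme, ordered from weakest to strongest.
STRENGTHS = ["low", "medium", "high", "extreme"]


def is_subjective(private_state_strengths):
    """Return True if any private-state expression is of medium or higher strength.

    private_state_strengths: list of strength labels, one per annotated
    private-state expression in the sentence (hypothetical representation).
    """
    threshold = STRENGTHS.index("medium")
    return any(STRENGTHS.index(s) >= threshold for s in private_state_strengths)


# A sentence with only low-strength expressions, or none at all, is objective.
print(is_subjective(["low", "high"]))
print(is_subjective(["low"]))
print(is_subjective([]))
```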

Using Extraction Patterns to Learn Subjective Nouns – Meta-Bootstrapping (1/2)

- (Riloff and Jones 1999)
- Mutual bootstrapping:
  - Begin with a small set of seed words that represent a targeted semantic category (e.g. 10 words that represent LOCATIONS) and an unannotated corpus.
  - Produce thousands of extraction patterns for the entire corpus (e.g. “<subject> was hired”).
  - Compute a score for each pattern based on the number of seed words among its extractions.
  - Select the best pattern; all of its extracted noun phrases are labeled as the target semantic category.
  - Re-score the extraction patterns (original seed words + newly labeled words).
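The mutual bootstrapping loop can be sketched as follows. This is an illustration under simplifying assumptions, not the authors' implementation: patterns are given as a precomputed dictionary from pattern to extracted noun phrases, the score is the raw count of known category members among a pattern's extractions, and the function name and toy data are hypothetical.

```python
def mutual_bootstrap(pattern_extractions, seeds, iterations):
    """Grow a lexicon by repeatedly selecting the best-scoring pattern.

    pattern_extractions: dict mapping a pattern to the set of noun phrases
    it extracts from the corpus (assumed to be computed beforehand).
    """
    lexicon = set(seeds)
    chosen = []
    for _ in range(iterations):
        remaining = sorted(p for p in pattern_extractions if p not in chosen)
        if not remaining:
            break
        # Simplified score: number of lexicon words among the extractions.
        best = max(remaining, key=lambda p: len(pattern_extractions[p] & lexicon))
        if not pattern_extractions[best] & lexicon:
            break  # no remaining pattern extracts a known category member
        chosen.append(best)
        # All extractions of the best pattern are labeled as category members,
        # so the next round scores against seeds + newly labeled words.
        lexicon |= pattern_extractions[best]
    return lexicon, chosen


# Toy data, invented for illustration.
patterns = {
    "headquartered in <np>": {"chicago", "tokyo", "boston"},
    "offices in <np>": {"boston", "denver"},
    "<subject> was hired": {"the manager", "smith"},
}
lexicon, chosen = mutual_bootstrap(patterns, {"chicago", "tokyo"}, iterations=5)
print(sorted(lexicon))
print(chosen)
```

Note that "offices in <np>" only becomes useful after "boston" has been added by the first pattern, which is the effect of re-scoring against the enlarged lexicon.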

Using Extraction Patterns to Learn Subjective Nouns – Meta-Bootstrapping (2/2)

- Meta-bootstrapping:
  - After the normal bootstrapping:
    - all nouns that were put into the semantic dictionary are re-evaluated;
    - each noun is assigned a score based on how many different patterns extracted it;
    - only the 5 best nouns are allowed to remain in the dictionary; the others are discarded;
    - mutual bootstrapping is restarted.
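The outer meta-bootstrapping loop can be sketched like this. It is a simplified illustration: the inner loop uses a raw seed-count score, each noun's score is the number of selected patterns that extracted it, and the function names, the `max_patterns` cap, and the toy data are all assumptions rather than details from the slides.

```python
def mutual_bootstrap(pattern_extractions, lexicon, max_patterns):
    """Inner loop: pick the best pattern repeatedly and absorb its extractions."""
    lexicon = set(lexicon)
    chosen = []
    while len(chosen) < max_patterns:
        remaining = sorted(p for p in pattern_extractions if p not in chosen)
        if not remaining:
            break
        best = max(remaining, key=lambda p: len(pattern_extractions[p] & lexicon))
        if not pattern_extractions[best] & lexicon:
            break
        chosen.append(best)
        lexicon |= pattern_extractions[best]
    return lexicon, chosen


def meta_bootstrap(pattern_extractions, seeds, rounds, keep=5):
    """Outer loop: keep only the `keep` best new nouns, then restart."""
    dictionary = set(seeds)
    for _ in range(rounds):
        temp, chosen = mutual_bootstrap(pattern_extractions, dictionary, max_patterns=10)
        new_nouns = temp - dictionary
        if not new_nouns:
            break

        def support(noun):
            # Number of different selected patterns that extracted this noun.
            return sum(noun in pattern_extractions[p] for p in chosen)

        ranked = sorted(new_nouns, key=lambda n: (-support(n), n))
        dictionary |= set(ranked[:keep])  # the remaining new nouns are discarded
    return dictionary


# Toy data, invented for illustration; keep=1 makes the filtering visible.
patterns = {
    "p1": {"chicago", "tokyo", "boston", "denver"},
    "p2": {"boston", "denver", "paris"},
    "p3": {"boston", "smith"},
}
print(sorted(meta_bootstrap(patterns, {"chicago", "tokyo"}, rounds=1, keep=1)))
```

Discarding all but the best-supported nouns limits how far a single bad pattern can pollute the dictionary before the next restart.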

Using Extraction Patterns to Learn Subjective Nouns – Basilisk

- (Thelen and Riloff 2002)
- Begin with an unannotated text corpus and a small set of seed words for a semantic category.
- Bootstrapping:
  - Basilisk automatically generates a set of extraction patterns for the corpus and scores each pattern based upon the number of seed words among its extractions; the best patterns are placed in the Pattern Pool.
  - All nouns extracted by a pattern in the Pattern Pool are placed in the Candidate Word Pool. Basilisk scores each noun based upon the set of patterns that extracted it and their collective association with the seed words.
  - The top 10 nouns are labeled as the targeted semantic class and are added to the dictionary.
  - Repeat the bootstrapping process.
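One Basilisk iteration can be sketched as below. The slides do not give the scoring formulas; this sketch assumes the RlogF pattern score and the AvgLog noun score described in Thelen and Riloff (2002), so treat the exact formulas as an assumption. The function names, the `pool_size` parameter, and the toy data are hypothetical.

```python
import math


def rlogf(extractions, lexicon):
    """Assumed pattern score: (F / N) * log2(F), F = lexicon hits, N = extractions."""
    f = len(extractions & lexicon)
    if f == 0:
        return float("-inf")
    return (f / len(extractions)) * math.log2(f)


def avglog(noun, pattern_extractions, lexicon):
    """Assumed noun score: mean of log2(F_j + 1) over patterns that extract the noun."""
    extracting = [ex for ex in pattern_extractions.values() if noun in ex]
    return sum(math.log2(len(ex & lexicon) + 1) for ex in extracting) / len(extracting)


def basilisk_iteration(pattern_extractions, lexicon, pool_size, top_n=10):
    """Fill the Pattern Pool, build the Candidate Word Pool, add the top nouns."""
    ranked = sorted(
        pattern_extractions,
        key=lambda p: (-rlogf(pattern_extractions[p], lexicon), p),
    )
    pattern_pool = ranked[:pool_size]
    candidates = set().union(*(pattern_extractions[p] for p in pattern_pool)) - lexicon
    best = sorted(
        candidates,
        key=lambda n: (-avglog(n, pattern_extractions, lexicon), n),
    )[:top_n]
    return lexicon | set(best), best


# Toy data, invented for illustration.
patterns = {
    "a": {"chicago", "tokyo", "boston"},
    "b": {"chicago", "denver"},
    "c": {"smith", "jones"},
}
new_lexicon, added = basilisk_iteration(patterns, {"chicago", "tokyo"}, pool_size=2, top_n=1)
print(added)
```

Unlike mutual bootstrapping, a noun is judged here by the collective evidence of every pattern that extracts it, not by membership in a single best pattern.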

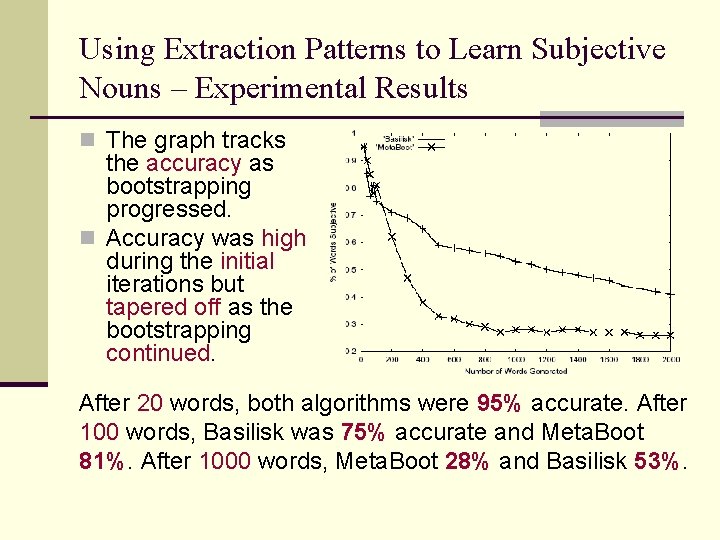

Using Extraction Patterns to Learn Subjective Nouns – Experimental Results

- The graph tracks the accuracy as bootstrapping progressed.
- Accuracy was high during the initial iterations but tapered off as the bootstrapping continued.
  - After 20 words, both algorithms were 95% accurate.
  - After 100 words, Basilisk was 75% accurate and MetaBoot 81%.
  - After 1000 words, MetaBoot was 28% accurate and Basilisk 53%.

Creating Subjectivity Classifiers – Subjective Noun Features

- Naïve Bayes classifier using the nouns as features. Sets:
  - BA-Strong: the set of StrongSubjective nouns generated by Basilisk
  - BA-Weak: the set of WeakSubjective nouns generated by Basilisk
  - MB-Strong: the set of StrongSubjective nouns generated by Meta-Bootstrapping
  - MB-Weak: the set of WeakSubjective nouns generated by Meta-Bootstrapping
- For each set, a three-valued feature:
  - presence of 0, 1, or ≥ 2 words from that set
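The three-valued feature can be computed as in the sketch below. The noun sets shown are made up for illustration and are not the learned sets from the paper; counting token occurrences (rather than distinct words) is also an assumption, since the slide does not say which is meant.

```python
def three_valued_feature(tokens, noun_set):
    """Return 0, 1, or 2, where 2 stands for 'two or more' words from the set."""
    hits = sum(1 for t in tokens if t.lower() in noun_set)
    return min(hits, 2)


def subjective_noun_features(tokens, noun_sets):
    """Build one three-valued feature per named noun set."""
    return {name: three_valued_feature(tokens, nouns) for name, nouns in noun_sets.items()}


# Hypothetical noun sets, invented for this example.
noun_sets = {
    "BA-Strong": {"assassin", "injustice"},
    "BA-Weak": {"time"},
}
sentence = "The time has come gentlemen for Sharon the assassin to realize that injustice cannot last long"
features = subjective_noun_features(sentence.split(), noun_sets)
print(features)
```

The resulting dictionary of small categorical values is the kind of input a Naïve Bayes classifier over discrete features would consume.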

Creating Subjectivity Classifiers – Previously Established Features

- (Wiebe, Bruce, O’Hara 1999)
- Sets:
  - subjStems: a set of stems positively correlated with the subjective training examples
  - objStems: a set of stems positively correlated with the objective training examples
- For each set, a three-valued feature:
  - the presence of 0, 1, or ≥ 2 members of the set.
- A binary feature for each of the following:
  - presence in the sentence of a pronoun, an adjective, a cardinal number, a modal other than "will", and an adverb other than "not".
- Other features from other researchers.

Creating Subjectivity Classifiers – Discourse Features

- subjClues = all sets defined before except objStems
- Four features:
  - ClueRate_subj for the previous and following sentences
  - ClueRate_obj for the previous and following sentences
- Feature for sentence length.
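The discourse features can be sketched as below. The slide does not define ClueRate; this sketch assumes it is the number of clue words in a sentence divided by the sentence length in tokens, and it returns the raw rates of the neighbouring sentences. The function names, feature keys, and toy data are hypothetical.

```python
def clue_rate(tokens, clues):
    """Assumed definition: fraction of tokens in the sentence that are clues."""
    if not tokens:
        return 0.0
    return sum(1 for t in tokens if t.lower() in clues) / len(tokens)


def discourse_features(sentences, i, subj_clues, obj_clues):
    """Features for sentence i from its previous and following sentences."""

    def neighbor_rate(j, clues):
        # A missing neighbour (document start or end) contributes a rate of 0.
        return clue_rate(sentences[j], clues) if 0 <= j < len(sentences) else 0.0

    return {
        "prev_subj": neighbor_rate(i - 1, subj_clues),
        "next_subj": neighbor_rate(i + 1, subj_clues),
        "prev_obj": neighbor_rate(i - 1, obj_clues),
        "next_obj": neighbor_rate(i + 1, obj_clues),
        "length": len(sentences[i]),
    }


# Toy document and clue sets, invented for illustration.
doc = [["terrible", "idea"], ["it", "rained"], ["reported", "4", "cases", "today"]]
feats = discourse_features(doc, 1, subj_clues={"terrible"}, obj_clues={"reported"})
print(feats)
```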

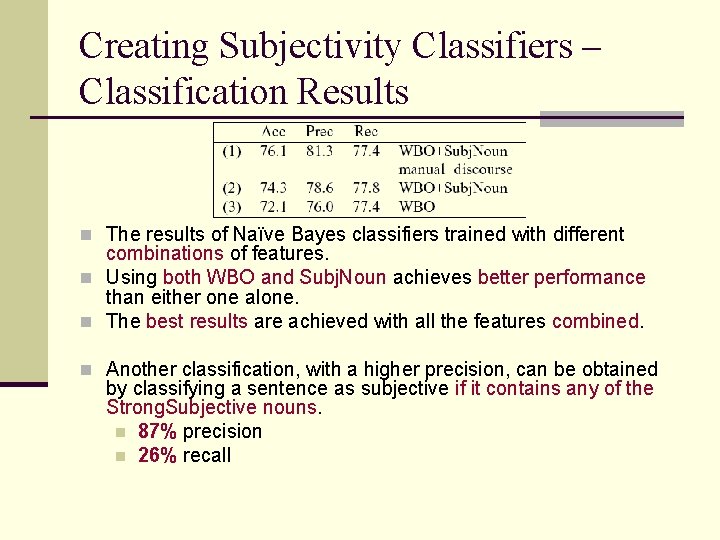

Creating Subjectivity Classifiers – Classification Results

- The table shows the results of Naïve Bayes classifiers trained with different combinations of features.
- Using both WBO (the Wiebe, Bruce, O’Hara features) and SubjNoun achieves better performance than either one alone.
- The best results are achieved with all the features combined.
- A higher-precision classifier can be obtained by labeling a sentence as subjective if it contains any of the StrongSubjective nouns:
  - 87% precision
  - 26% recall
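The high-precision rule and its evaluation can be sketched as follows. The noun set, sentences, and gold labels are invented for illustration, so the toy numbers do not reproduce the reported 87% precision and 26% recall.

```python
def contains_strong_noun(tokens, strong_nouns):
    """Rule: subjective if the sentence contains any StrongSubjective noun."""
    return any(t.lower() in strong_nouns for t in tokens)


def precision_recall(predictions, gold):
    """Precision and recall for the 'subjective' (True) class."""
    tp = sum(1 for p, g in zip(predictions, gold) if p and g)
    fp = sum(1 for p, g in zip(predictions, gold) if p and not g)
    fn = sum(1 for p, g in zip(predictions, gold) if not p and g)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical noun set and labeled sentences (True = subjective).
strong = {"injustice", "outrage"}
data = [
    ("This injustice cannot last", True),
    ("What a wonderful surprise", True),
    ("The meeting starts at noon", False),
    ("Prices rose by 3 percent", False),
]
preds = [contains_strong_noun(text.split(), strong) for text, _ in data]
gold = [label for _, label in data]
precision, recall = precision_recall(preds, gold)
print(precision, recall)
```

The toy run shows the same trade-off as the slide: the rule rarely fires on objective sentences, but it misses subjective sentences that contain none of the listed nouns.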
