Learning Processes CS 679 Lecture Note by Jin Hyung Kim Computer Science Department KAIST

- Learning is a process by which the free parameters of a neural network (NN) are adapted through stimulation from the environment
- Sequence of events
  - the network is stimulated by an environment
  - it undergoes changes in its free parameters
  - it responds in a new way to the environment
- Learning algorithm
  - prescribed steps of a process to make a system learn
  - ways to adjust the synaptic weights of a neuron
  - there is no unique learning algorithm, rather a kit of tools
- The chapter covers
  - five learning rules, learning paradigms, issues of learning tasks
  - probabilistic and statistical aspects of learning

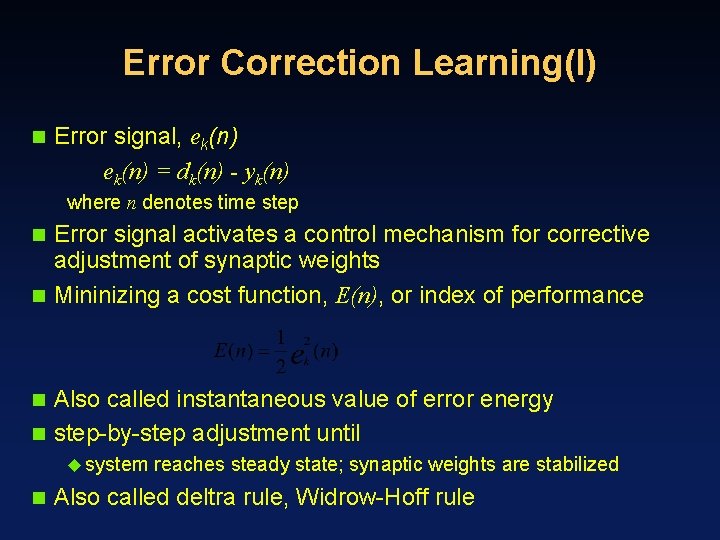

Error-Correction Learning (I)
- Error signal: $e_k(n) = d_k(n) - y_k(n)$, where $n$ denotes the time step
- The error signal activates a control mechanism for corrective adjustment of the synaptic weights
- Minimizing a cost function $E(n)$, or index of performance: $E(n) = \frac{1}{2} e_k^2(n)$
  - also called the instantaneous value of the error energy
- Step-by-step adjustment until
  - the system reaches a steady state; the synaptic weights are stabilized
- Also called the delta rule or Widrow-Hoff rule

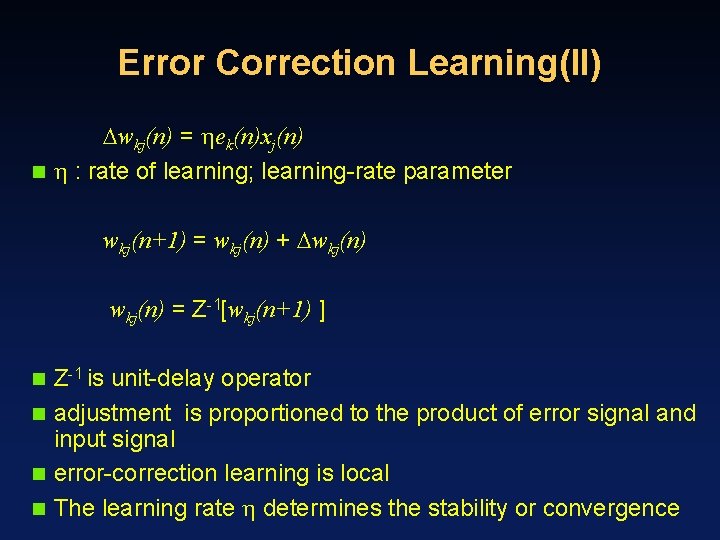

Error-Correction Learning (II)
- $\Delta w_{kj}(n) = \eta\, e_k(n)\, x_j(n)$
  - $\eta$: rate of learning (learning-rate parameter)
- $w_{kj}(n+1) = w_{kj}(n) + \Delta w_{kj}(n)$
- $w_{kj}(n) = z^{-1}[w_{kj}(n+1)]$
  - $z^{-1}$ is the unit-delay operator
- The adjustment is proportional to the product of the error signal and the input signal
- Error-correction learning is local
- The learning rate $\eta$ determines the stability and convergence of the learning process
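
The update above can be sketched in a few lines of Python for a single linear neuron. This is a minimal illustration, not the lecture's own code: the function name `train_delta_rule`, the toy data, and the choice of a linear output $y = \sum_j w_j x_j$ are assumptions made for the example.

```python
def train_delta_rule(samples, eta=0.1, epochs=200):
    """Train one linear neuron with the delta (Widrow-Hoff) rule.

    samples: list of (input_vector, desired_output) pairs (hypothetical data).
    """
    w = [0.0] * len(samples[0][0])
    for _ in range(epochs):
        for x, d in samples:
            y = sum(wj * xj for wj, xj in zip(w, x))          # neuron output y(n)
            e = d - y                                         # error signal e(n) = d(n) - y(n)
            w = [wj + eta * e * xj for wj, xj in zip(w, x)]   # w(n+1) = w(n) + eta*e(n)*x(n)
    return w


# Toy target (assumed for illustration): d = 2*x1 - 1*x2
samples = [((1.0, 0.0), 2.0), ((0.0, 1.0), -1.0), ((1.0, 1.0), 1.0)]
weights = train_delta_rule(samples)
print(weights)  # close to [2.0, -1.0]
```

With a small learning rate the weights settle near the target; a learning rate that is too large for the input magnitudes would make the same loop diverge, which is the stability point made on the slide.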

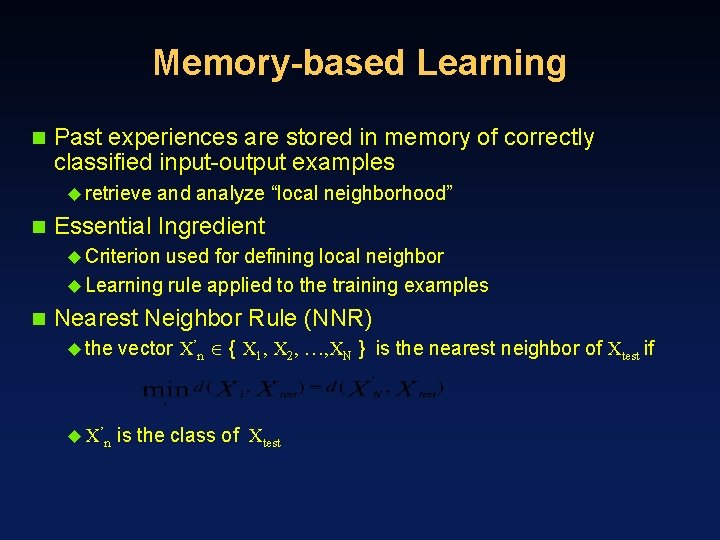

Memory-Based Learning
- Past experiences are stored in a memory of correctly classified input-output examples
  - retrieve and analyze the "local neighborhood" of the test vector
- Essential ingredients
  - criterion used for defining the local neighborhood
  - learning rule applied to the training examples in that neighborhood
- Nearest Neighbor Rule (NNR)
  - the vector $x'_N \in \{x_1, x_2, \ldots, x_N\}$ is the nearest neighbor of $x_{test}$ if $\min_i d(x_i, x_{test}) = d(x'_N, x_{test})$
  - $x_{test}$ is assigned the class of $x'_N$

Nearest Neighbor Rule
- Cover and Hart
  - if the examples are independent and identically distributed
  - and the sample size N is infinitely large
  - then error(NNR) ≤ 2 × error(Bayes rule)
  - half of the classification information is contained in the nearest neighbor
- K-nearest neighbor rule
  - variant of the NNR
  - select the k nearest neighbors of $x_{test}$ and use a majority vote
  - acts like an averaging device
  - discriminates against outliers
- A radial-basis function network is a memory-based classifier
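
The k-nearest neighbor vote can be sketched as follows. This is an illustrative sketch only: the function name `knn_classify`, the Euclidean distance, and the toy data set (including the deliberately mislabeled outlier) are assumptions, not part of the lecture.

```python
import math
from collections import Counter


def knn_classify(examples, x_test, k=1):
    """Classify x_test by majority vote among its k nearest stored examples.

    examples: list of (vector, label) pairs held in memory.
    """
    by_distance = sorted(examples, key=lambda ex: math.dist(ex[0], x_test))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


# Hypothetical memory: class A near the origin, class B far away,
# plus one B-labeled outlier sitting inside the A region.
examples = [
    ((0.0, 0.0), "A"), ((0.0, 1.0), "A"), ((1.0, 0.0), "A"),
    ((5.0, 5.0), "B"), ((5.0, 6.0), "B"),
    ((0.6, 0.6), "B"),  # outlier
]
print(knn_classify(examples, (0.5, 0.5), k=1))  # follows the outlier
print(knn_classify(examples, (0.5, 0.5), k=3))  # majority vote overrides it
```

With k = 1 the single outlier decides the class; with k = 3 the vote averages over the neighborhood, which is the sense in which the k-nearest neighbor rule discriminates against outliers.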

Hebbian Learning
- If the two neurons on either side of a connection are activated
  - simultaneously (synchronously), then the strength of the connection is increased
  - asynchronously, then the strength is weakened or eliminated
- Hebbian synapse
  - time-dependent mechanism
    - depends on the exact time of occurrence of the two signals
  - local mechanism
    - locally available information is used
  - interactive mechanism
    - learning is done by the interaction of two signals
  - conjunctional or correlational mechanism
    - co-occurrence of the two signals
- The two signals are the presynaptic and postsynaptic signals
- Hebbian learning is found in the hippocampus

Mathematical Model of Hebbian Modification
- General form: $\Delta w_{kj}(n) = F(y_k(n), x_j(n))$
- Hebb's hypothesis: $\Delta w_{kj}(n) = \eta\, y_k(n)\, x_j(n)$, where $\eta$ is the rate of learning
  - also called the activity product rule
  - repeated application of $x_j$ leads to exponential growth
- Covariance hypothesis: $\Delta w_{kj}(n) = \eta\,(x_j - \bar{x})(y_k - \bar{y})$, where $\bar{x}$ and $\bar{y}$ are time averages
  1. $w_{kj}$ is enhanced if $x_j > \bar{x}$ and $y_k > \bar{y}$
  2. $w_{kj}$ is depressed if ($x_j > \bar{x}$ and $y_k < \bar{y}$) or ($x_j < \bar{x}$ and $y_k > \bar{y}$)
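
A small numeric sketch of both hypotheses. The function names, the numbers, and in particular the assumption of a single linear synapse with $y = w x$ are chosen only for illustration.

```python
def hebb_update(w, x, y, eta):
    """Activity product rule: delta_w = eta * y * x."""
    return w + eta * y * x


def covariance_update(w, x, y, x_mean, y_mean, eta):
    """Covariance hypothesis: delta_w = eta * (x - x_mean) * (y - y_mean)."""
    return w + eta * (x - x_mean) * (y - y_mean)


# Repeated application of the same input x = 1 with y = w * x (assumed linear neuron):
# each step multiplies w by (1 + eta), so the weight grows exponentially.
w = 0.1
history = []
for _ in range(5):
    y = w * 1.0
    w = hebb_update(w, 1.0, y, eta=0.5)
    history.append(w)
print(history)

# Covariance rule: x above its average, y below its average -> weight is depressed.
print(covariance_update(1.0, x=2.0, y=0.5, x_mean=1.0, y_mean=1.0, eta=0.1))
```

The first loop shows why the plain Hebb rule needs some form of saturation or normalization; the covariance form allows the weight to decrease as well as increase.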

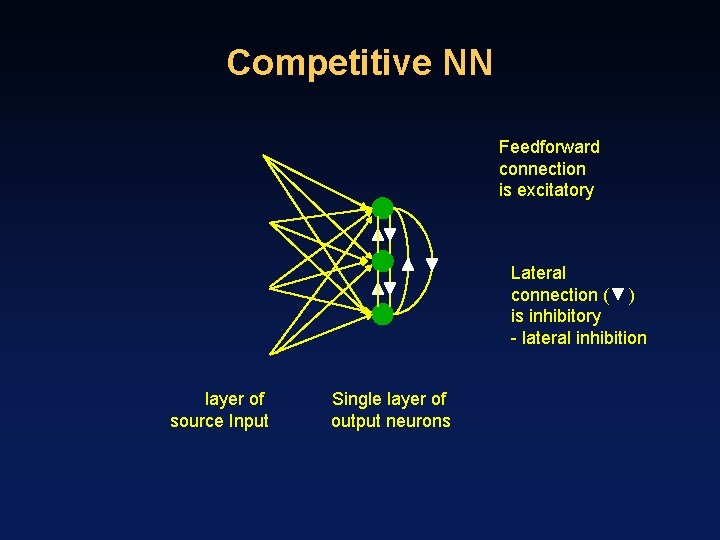

Competitive Learning
- Output neurons of the NN compete to become active
- Only a single neuron is active at any one time
  - a salient feature for pattern classification
- Basic elements
  - a set of neurons that are all the same except for their synaptic weight distribution
    - they respond differently to a given set of input patterns
  - a limit on the strength of each neuron
  - a mechanism to compete to respond to a given input
    - winner-takes-all
- Neurons learn to respond to specialized conditions
  - they become feature detectors

Competitive NN
- Feedforward connections are excitatory
- Lateral connections are inhibitory: lateral inhibition
- Figure labels: layer of source inputs; single layer of output neurons

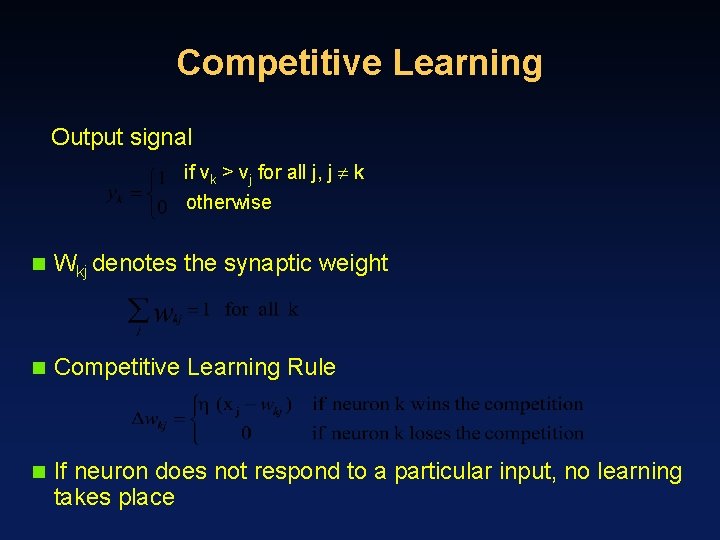

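Competitive Learning
- Output signal: $y_k = 1$ if $v_k > v_j$ for all $j$, $j \neq k$; $y_k = 0$ otherwise
- $w_{kj}$ denotes the synaptic weight connecting input $j$ to neuron $k$
- Competitive learning rule: $\Delta w_{kj} = \eta\,(x_j - w_{kj})$ if neuron $k$ wins the competition; $\Delta w_{kj} = 0$ if neuron $k$ loses
- If a neuron does not respond to a particular input, no learning takes place in that neuron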

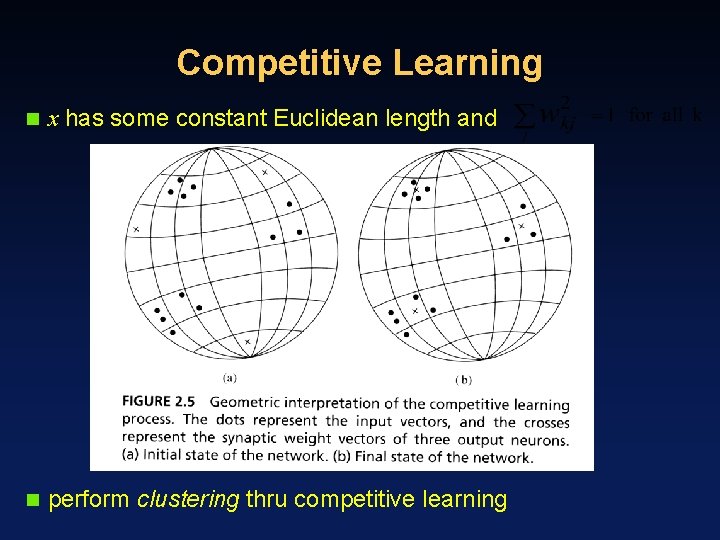

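Competitive Learning
- Each input pattern $x$ has some constant Euclidean length, and the weight vectors are constrained likewise: $\sum_j w_{kj}^2 = 1$ for all $k$
- The network performs clustering through competitive learning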
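
Clustering by winner-takes-all learning can be sketched as below. This is a minimal sketch under stated assumptions: the update $\Delta w_{kj} = \eta\,(x_j - w_{kj})$ for the winning neuron is the standard competitive rule (the slide's own equation is in the figure), the winner is the neuron with the largest induced local field $v_k = \sum_j w_{kj} x_j$, and the function names and unit-length toy inputs are invented for the example.

```python
import math


def winner(weights, x):
    """Index k of the neuron with the largest induced local field v_k = w_k . x."""
    fields = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return max(range(len(weights)), key=fields.__getitem__)


def competitive_step(weights, x, eta=0.2):
    """Move only the winning neuron's weight vector toward the input x."""
    k = winner(weights, x)
    weights[k] = [w + eta * (xi - w) for w, xi in zip(weights[k], x)]
    return k


def unit(angle_deg):
    """Unit-length input vector at the given angle (constant Euclidean length)."""
    a = math.radians(angle_deg)
    return [math.cos(a), math.sin(a)]


# Two hypothetical clusters of unit-length inputs, near 10 and 80 degrees.
inputs = [unit(5), unit(15), unit(75), unit(85)]
weights = [[1.0, 0.0], [0.0, 1.0]]
for _ in range(50):
    for x in inputs:
        competitive_step(weights, x)

labels = [winner(weights, x) for x in inputs]
print(labels)   # cluster assignment of each input
print(weights)  # each weight vector has moved into "its" cluster
```

Losing neurons are left untouched at every step, so each weight vector ends up representing only the inputs it wins.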

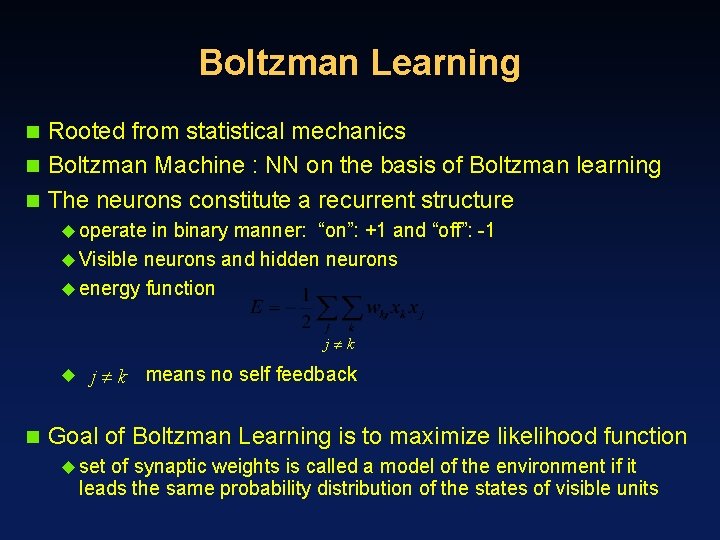

Boltzmann Learning
- Rooted in statistical mechanics
- Boltzmann machine: an NN based on Boltzmann learning
- The neurons constitute a recurrent structure
  - they operate in a binary manner: "on" = +1 and "off" = -1
  - visible neurons and hidden neurons
  - energy function: $E = -\frac{1}{2} \sum_j \sum_{k,\, k \neq j} w_{kj}\, x_k\, x_j$
  - $j \neq k$ means no self-feedback
- The goal of Boltzmann learning is to maximize the likelihood function
  - a set of synaptic weights is called a model of the environment if it leads to the same probability distribution of the states of the visible units

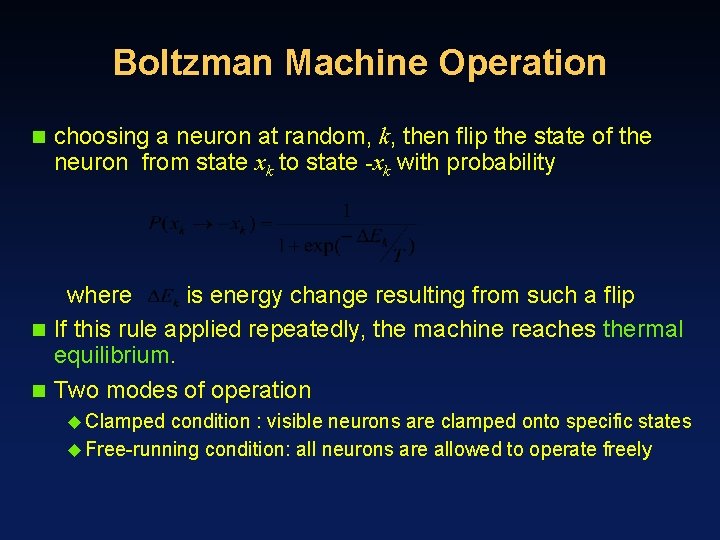

Boltzmann Machine Operation
- Choose a neuron $k$ at random, then flip its state from $x_k$ to $-x_k$ with a probability that depends on $\Delta E_k$, the energy change resulting from such a flip (formula in the figure)
- If this rule is applied repeatedly, the machine reaches thermal equilibrium
- Two modes of operation
  - clamped condition: the visible neurons are clamped onto specific states
  - free-running condition: all neurons are allowed to operate freely
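
The random-flip operation can be sketched as follows. Everything named here is an assumption made for illustration: the helper names, the pseudo-temperature parameter `temperature`, and the sign convention, where `delta_e` is defined as energy after the flip minus energy before it and the flip is accepted with probability $1 / (1 + e^{\Delta E / T})$. Texts that define $\Delta E$ with the opposite sign write the exponent with the opposite sign.

```python
import math
import random


def energy(w, x):
    """E = -1/2 * sum over j != k of w[k][j] * x[k] * x[j] (no self-feedback)."""
    n = len(x)
    return -0.5 * sum(w[k][j] * x[k] * x[j]
                      for k in range(n) for j in range(n) if j != k)


def flip_probability(delta_e, temperature):
    """Acceptance probability of a flip; delta_e = E(after flip) - E(before flip)."""
    return 1.0 / (1.0 + math.exp(delta_e / temperature))


def step(w, x, temperature, rng):
    """Pick one neuron at random and flip it stochastically."""
    k = rng.randrange(len(x))
    flipped = list(x)
    flipped[k] = -flipped[k]
    delta_e = energy(w, flipped) - energy(w, x)
    if rng.random() < flip_probability(delta_e, temperature):
        return flipped
    return list(x)


# Two neurons with a positive (symmetric) coupling, free-running condition.
rng = random.Random(0)
w = [[0.0, 1.0], [1.0, 0.0]]
x = [1, -1]
for _ in range(100):
    x = step(w, x, temperature=0.5, rng=rng)
print(x, energy(w, x))
```

Flips that lower the energy are accepted with probability above one half, so at low temperature the machine spends most of its time in low-energy states; in the clamped condition the same loop would simply skip the visible neurons.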

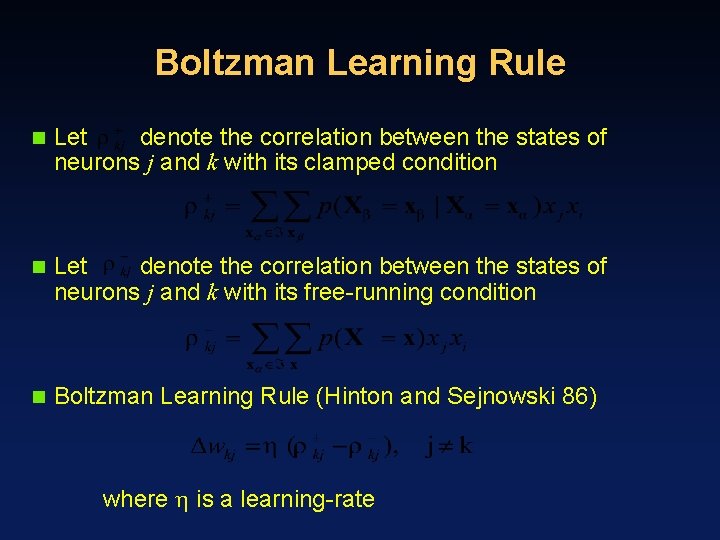

Boltzmann Learning Rule
- Let $\rho^{+}_{kj}$ denote the correlation between the states of neurons $j$ and $k$ in the clamped condition
- Let $\rho^{-}_{kj}$ denote the correlation between the states of neurons $j$ and $k$ in the free-running condition
- Boltzmann learning rule (Hinton and Sejnowski, 1986): $\Delta w_{kj} = \eta\,(\rho^{+}_{kj} - \rho^{-}_{kj})$, $j \neq k$, where $\eta$ is a learning-rate parameter
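
Given sampled network states from the two conditions, the rule $\Delta w_{kj} = \eta\,(\rho^{+}_{kj} - \rho^{-}_{kj})$ can be sketched as below. The function names and the tiny hand-written state lists are hypothetical; in practice the states would come from running the machine to thermal equilibrium in each condition.

```python
def correlations(states):
    """Estimate rho[k][j] as the average of x_k * x_j over the sampled states."""
    n = len(states[0])
    return [[sum(s[k] * s[j] for s in states) / len(states) for j in range(n)]
            for k in range(n)]


def boltzmann_update(w, clamped_states, free_states, eta=0.1):
    """Return new weights w + eta * (rho_plus - rho_minus), leaving the diagonal alone."""
    rho_plus = correlations(clamped_states)
    rho_minus = correlations(free_states)
    n = len(w)
    return [[w[k][j] + (eta * (rho_plus[k][j] - rho_minus[k][j]) if j != k else 0.0)
             for j in range(n)]
            for k in range(n)]


# Hypothetical samples: the environment (clamped) shows the two units always agreeing,
# while the free-running machine shows no correlation yet.
clamped = [[1, 1], [1, 1], [-1, -1], [1, 1]]
free = [[1, 1], [1, -1], [-1, 1], [-1, -1]]
w = [[0.0, 0.0], [0.0, 0.0]]
print(boltzmann_update(w, clamped, free))
```

The weight between the two units increases because they are more correlated when clamped to the environment than when running freely; learning stops once the two correlations match.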

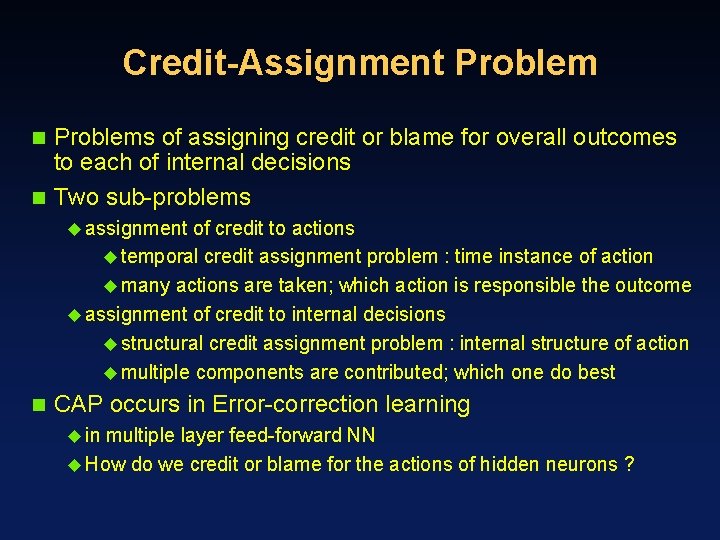

Credit-Assignment Problem
- The problem of assigning credit or blame for overall outcomes to each of the internal decisions
- Two sub-problems
  - assignment of credit to actions
    - temporal credit-assignment problem: concerns the time instants of actions
    - many actions are taken; which action is responsible for the outcome?
  - assignment of credit to internal decisions
    - structural credit-assignment problem: concerns the internal structure of actions
    - multiple components contribute; which one did best?
- The credit-assignment problem (CAP) occurs in error-correction learning
  - in multilayer feedforward NNs
  - how do we assign credit or blame for the actions of hidden neurons?

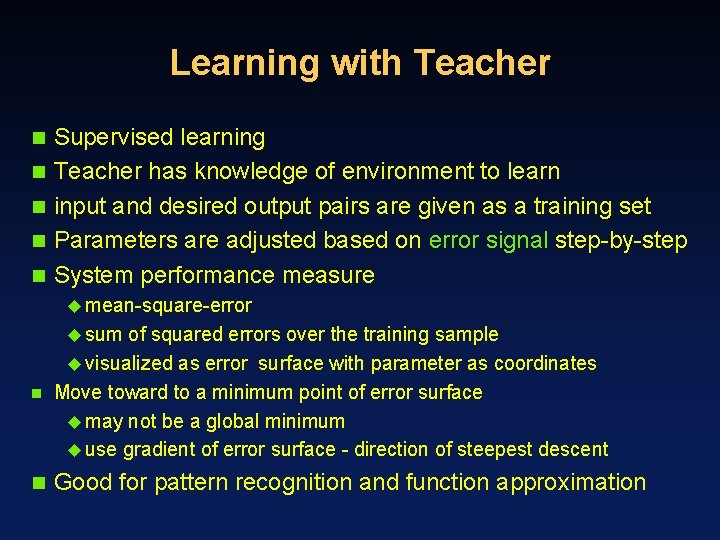

Learning with Teacher
- Supervised learning
- The teacher has knowledge of the environment to be learned
- Input and desired-output pairs are given as a training set
- Parameters are adjusted step by step based on the error signal
- System performance measure
  - mean-square error
  - sum of squared errors over the training sample
  - visualized as an error surface with the parameters as coordinates
- Move toward a minimum point of the error surface
  - it may not be a global minimum
  - use the gradient of the error surface to move in the direction of steepest descent
- Good for pattern recognition and function approximation

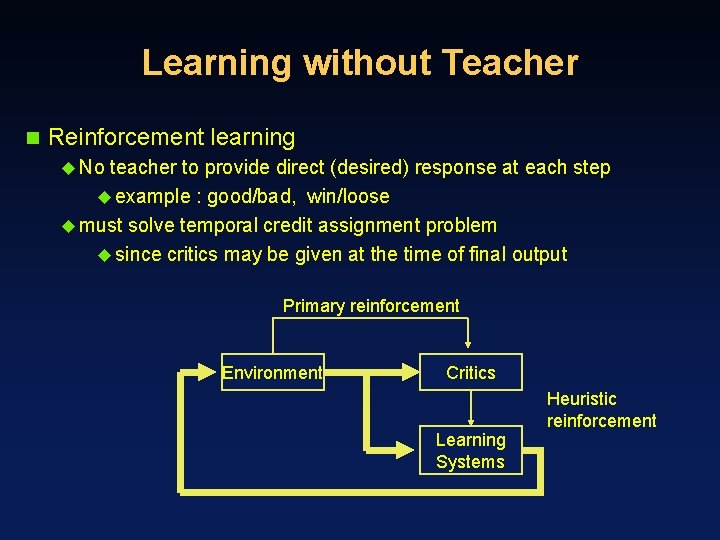

Learning without Teacher
- Reinforcement learning
  - no teacher to provide the direct (desired) response at each step
    - example: good/bad, win/lose
  - must solve the temporal credit-assignment problem
    - since the critic's evaluation may be given only at the time of the final output
- Figure labels: environment, critic, learning system; primary reinforcement (environment to critic), heuristic reinforcement (critic to learning system)

Unsupervised Learning
- Self-organized learning
  - no external teacher or critic
  - a task-independent measure of quality is required for learning
  - network parameters are optimized with respect to that measure
  - the competitive learning rule is a case of unsupervised learning

Learning Tasks
- Pattern Association
- Pattern Recognition
- Function Approximation
- Control
- Filtering
- Beamforming

Pattern Association
- Associative memory is a distributed memory that learns by association
  - a predominant feature of human memory
- $x_k \to y_k$, $k = 1, 2, \ldots, q$
- Storage capacity: $q$
- Storage phase and recall phase
  - the NN is required to store a set of patterns by presenting them repeatedly
  - a partial description or distorted pattern is used to retrieve the particular pattern
  - $x_k$ acts as a stimulus that determines the location of the memorized pattern
- Autoassociation: when $x_k = y_k$
- Heteroassociation: when $x_k \neq y_k$
- Goal: make $q$ as large as possible while still recalling correctly

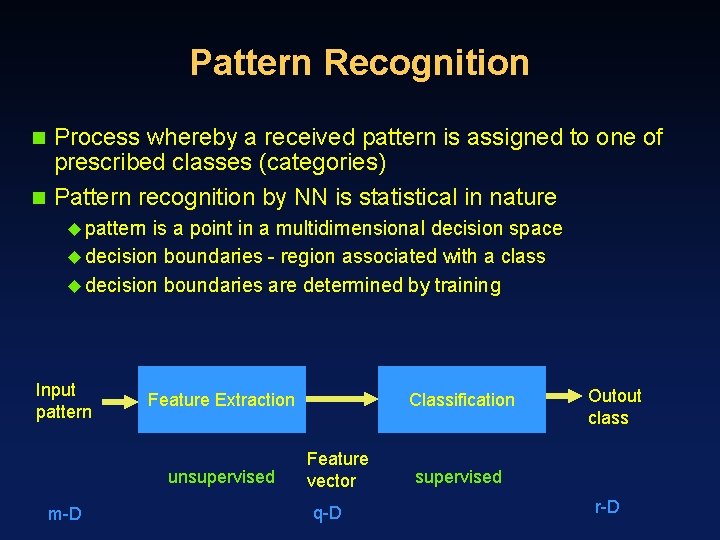

Pattern Recognition
- Process whereby a received pattern is assigned to one of a prescribed number of classes (categories)
- Pattern recognition by an NN is statistical in nature
  - a pattern is a point in a multidimensional decision space
  - the decision space is divided into regions, each associated with a class
  - the decision boundaries are determined by training
- Figure labels: input pattern (m-D) → feature extraction (unsupervised) → feature vector (q-D) → classification (supervised) → output class (r-D)

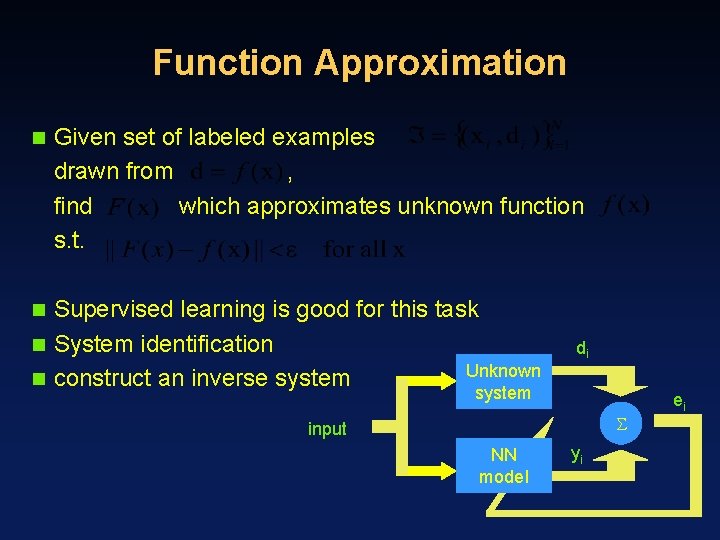

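Function Approximation
- Given a set of labeled examples $\{(x_i, d_i)\}_{i=1}^{N}$ drawn from $d = f(x)$, find $F(\cdot)$ which approximates the unknown function $f(\cdot)$ such that $\|F(x) - f(x)\| < \epsilon$ for all $x$
- Supervised learning is good for this task
- System identification
- Construction of an inverse system
- Figure labels: input $x_i$, unknown system with output $d_i$, NN model with output $y_i$, error $e_i$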

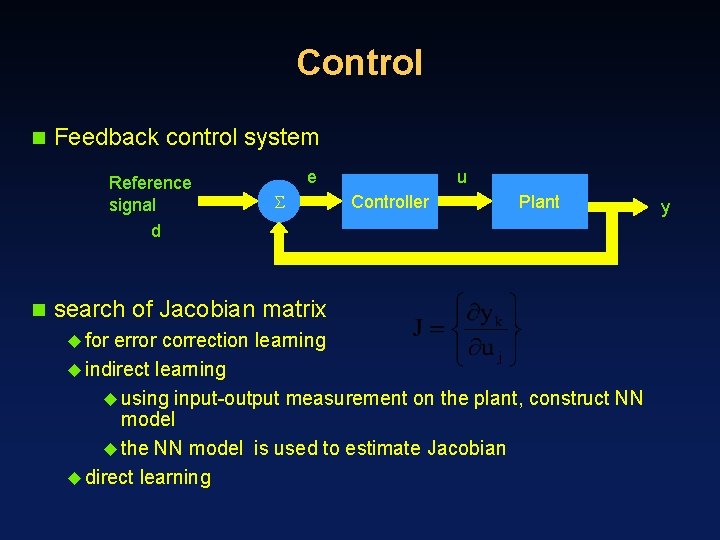

Control
- Feedback control system
  - figure labels: reference signal $d$, error signal $e$, controller, plant input $u$, plant, plant output $y$
- Search of the Jacobian matrix for error-correction learning
  - indirect learning
    - using input-output measurements on the plant, construct an NN model
    - the NN model is used to estimate the Jacobian
  - direct learning

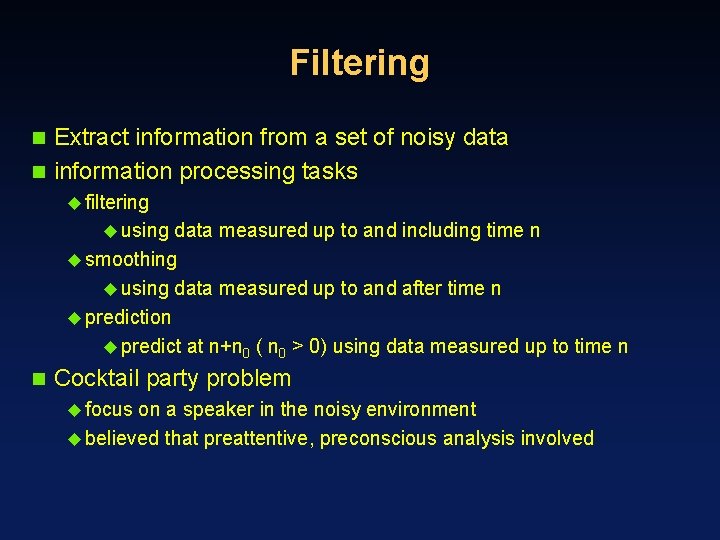

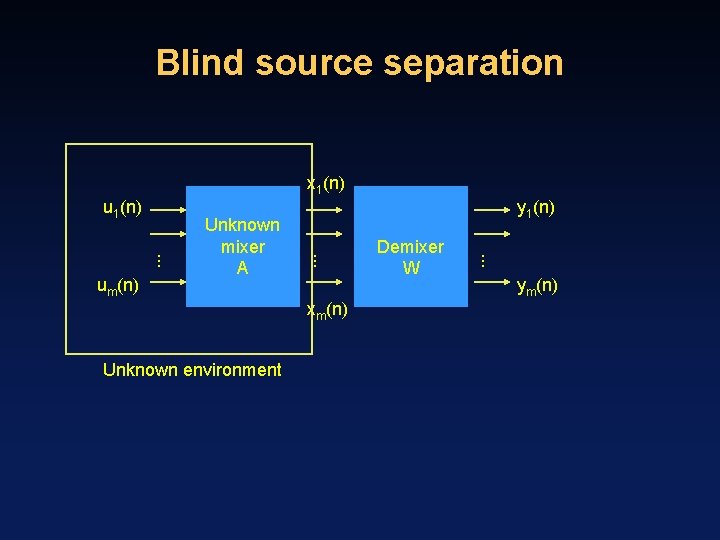

Filtering
- Extract information from a set of noisy data
- Information-processing tasks
  - filtering: using data measured up to and including time $n$
  - smoothing: using data measured up to and after time $n$
  - prediction: predict at time $n + n_0$ ($n_0 > 0$) using data measured up to time $n$
- Cocktail party problem
  - focus on one speaker in a noisy environment
  - believed to involve preattentive, preconscious analysis

Blind source separation
- Figure labels: unknown environment with sources $u_1(n), \ldots, u_m(n)$ → unknown mixer $A$ → observations $x_1(n), \ldots, x_m(n)$ → demixer $W$ → outputs $y_1(n), \ldots, y_m(n)$

Memory
- Relatively enduring neural alterations induced by interaction with the environment (neurobiological definition)
- Accessible to influence future behavior
- An activity pattern is stored by a learning process
  - memory and learning are connected
- Short-term memory
  - compilation of knowledge representing the current state of the environment
- Long-term memory
  - knowledge stored for a long time or permanently

Associative memory
- Memory is distributed
- Stimulus pattern and response pattern consist of data vectors
- Information is stored as spatial patterns of neural activities
- Information in the stimulus pattern determines not only its storage location but also the address for its retrieval
- Although individual neurons are not reliable, the memory as a whole is
- There may be interaction between the stored patterns (it is not a simple storage of patterns)
  - there is a possibility of error in the recall process

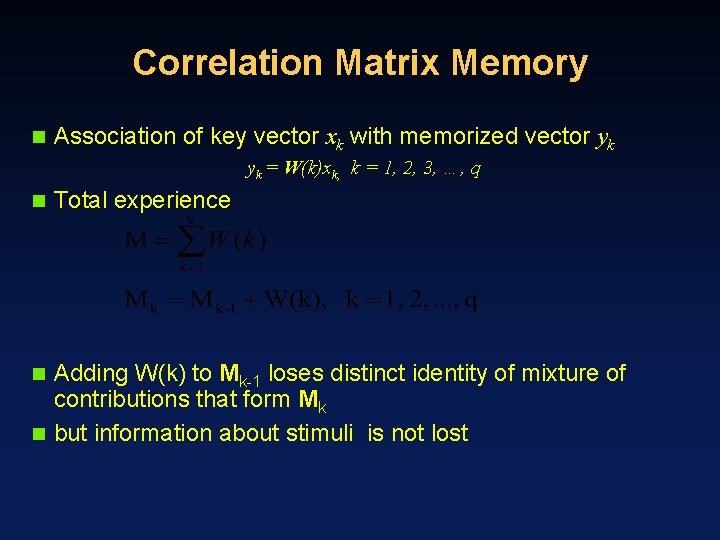

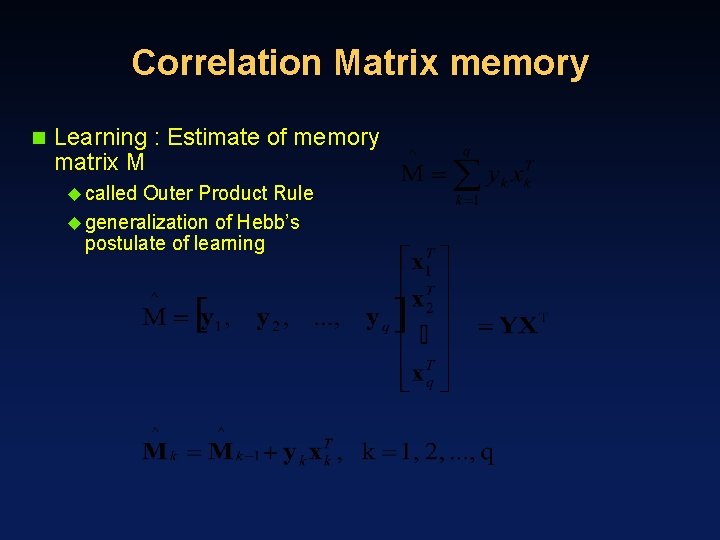

Correlation Matrix Memory
- Association of key vector $x_k$ with memorized vector $y_k$: $y_k = W(k)\, x_k$, $k = 1, 2, 3, \ldots, q$
- Total experience: $M = \sum_{k=1}^{q} W(k)$, built up as $M_k = M_{k-1} + W(k)$
- When $W(k)$ is added to $M_{k-1}$, it loses its distinct identity in the mixture of contributions that form $M_k$
  - but information about the stimuli is not lost

Correlation Matrix Memory
- Learning: estimate of the memory matrix $M$: $\hat{M} = \sum_{k=1}^{q} y_k\, x_k^T$
  - called the outer product rule
  - a generalization of Hebb's postulate of learning

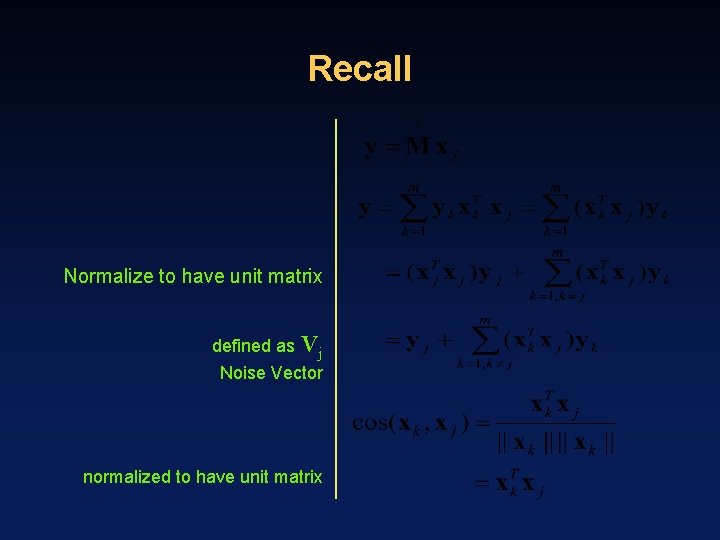

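Recall
- Response to a key $x_j$: $y = \hat{M} x_j = (x_j^T x_j)\, y_j + \sum_{k \neq j} (x_k^T x_j)\, y_k$
- Normalize the key vectors to have unit length: $x_k^T x_k = 1$
- Then $y = y_j + v_j$, where the noise vector is defined as $v_j = \sum_{k \neq j} (x_k^T x_j)\, y_k$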

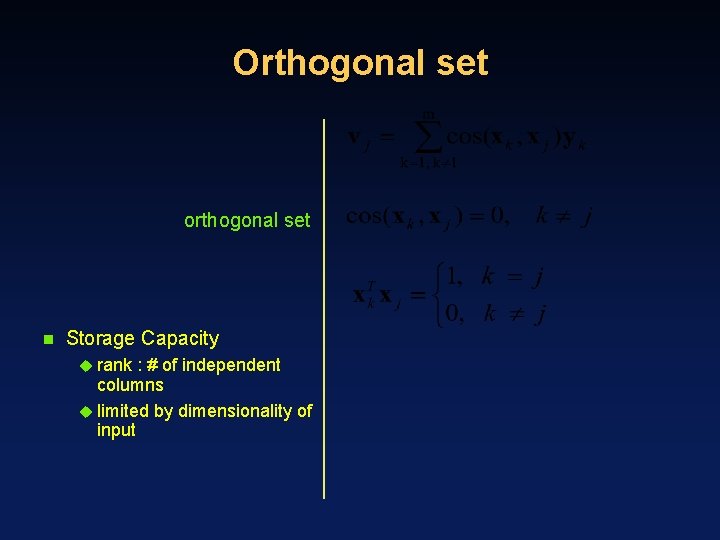

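Orthogonal set
- Orthonormal set of key vectors: $x_k^T x_j = 1$ if $k = j$, and $0$ otherwise; in that case the noise vector vanishes and recall is perfect
- Storage capacity
  - rank of the memory matrix: number of independent columns
  - limited by the dimensionality of the input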
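
The outer product rule and the recall step can be sketched in plain Python. The helper names `store` and `recall` and the small key/response vectors are assumptions made for this example only.

```python
def store(pairs):
    """Memory matrix M = sum over k of y_k x_k^T (outer product rule).

    pairs: list of (key_vector x_k, memorized_vector y_k).
    """
    rows, cols = len(pairs[0][1]), len(pairs[0][0])
    m = [[0.0] * cols for _ in range(rows)]
    for x, y in pairs:
        for i in range(rows):
            for j in range(cols):
                m[i][j] += y[i] * x[j]
    return m


def recall(m, x):
    """Response y = M x to the key x."""
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in m]


# Two orthonormal keys (hypothetical) and their associated patterns.
x1, y1 = [1.0, 0.0, 0.0], [5.0, 1.0]
x2, y2 = [0.0, 1.0, 0.0], [-2.0, 3.0]
memory = store([(x1, y1), (x2, y2)])

print(recall(memory, x1))               # equals y1: no crosstalk
print(recall(memory, x2))               # equals y2
print(recall(memory, [0.6, 0.8, 0.0]))  # unit-length key overlapping both: a mixture of y1 and y2
```

With orthonormal keys each stored pattern is recovered exactly; a key that overlaps several stored keys returns a weighted mixture, which is the crosstalk (noise vector) that limits storage capacity.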

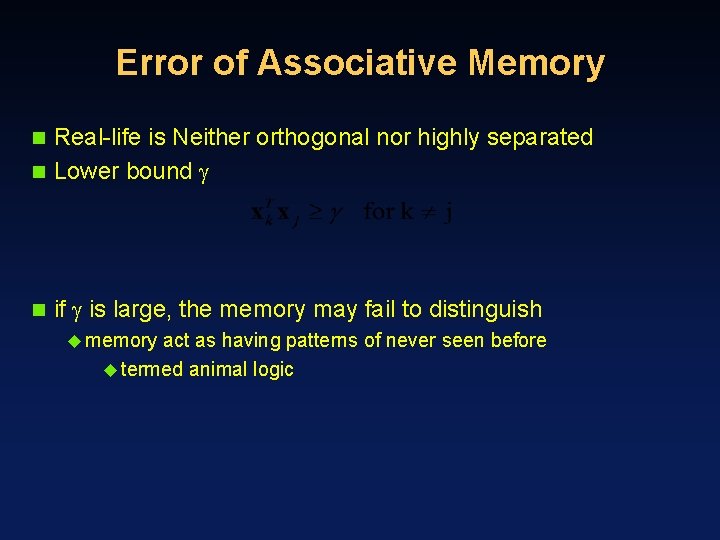

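Error of Associative Memory
- Real-life key patterns are neither orthogonal nor highly separated
- Lower bound $\gamma$ on the inner products of the keys: $x_k^T x_j \geq \gamma$ for $k \neq j$
- If $\gamma$ is large, the memory may fail to distinguish the stored patterns
  - the memory acts as if presented with patterns never seen before
  - termed animal logic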

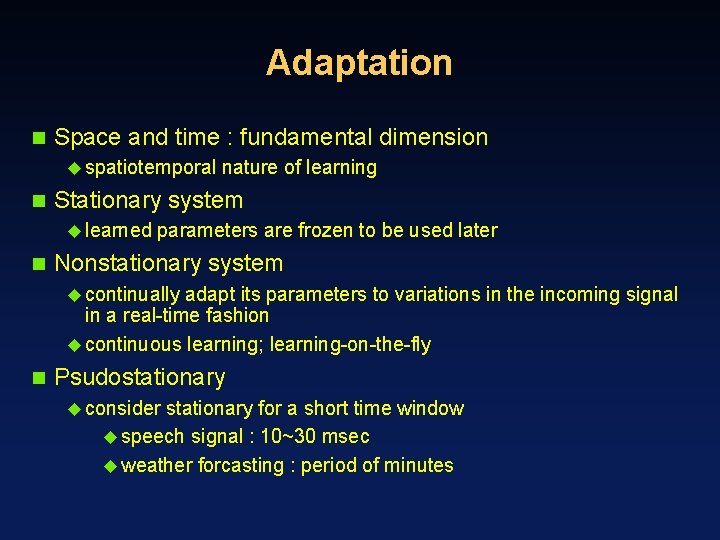

Adaptation
- Space and time: fundamental dimensions of learning
  - spatiotemporal nature of learning
- Stationary system
  - learned parameters are frozen to be used later
- Nonstationary system
  - continually adapts its parameters to variations in the incoming signal in a real-time fashion
  - continuous learning; learning on the fly
- Pseudostationary
  - considered stationary over a short time window
  - speech signal: 10 to 30 ms
  - weather forecasting: period of minutes

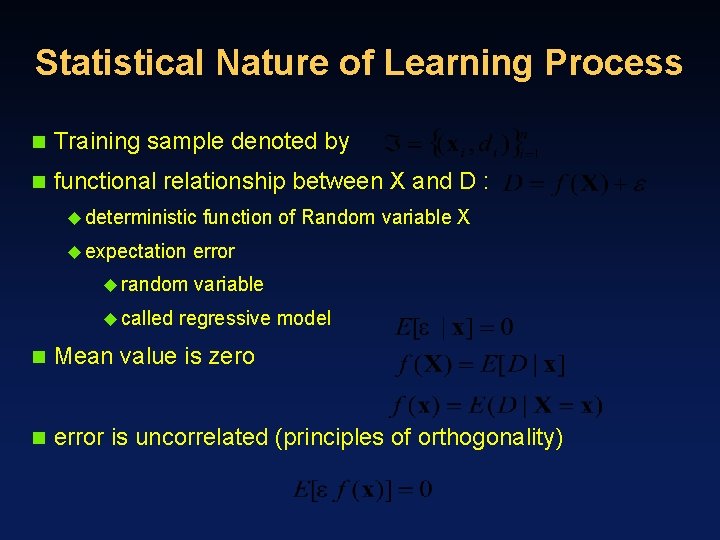

Statistical Nature of Learning Process
- Training sample denoted by $T = \{(x_i, d_i)\}_{i=1}^{N}$
- Functional relationship between $X$ and $D$: $D = f(X) + \epsilon$
  - $f(\cdot)$: deterministic function of the random variable $X$
  - $\epsilon$: expectational error, a random variable
  - called the regressive model
- The mean value of the error $\epsilon$ is zero
- The error $\epsilon$ is uncorrelated with $f(X)$ (principle of orthogonality)

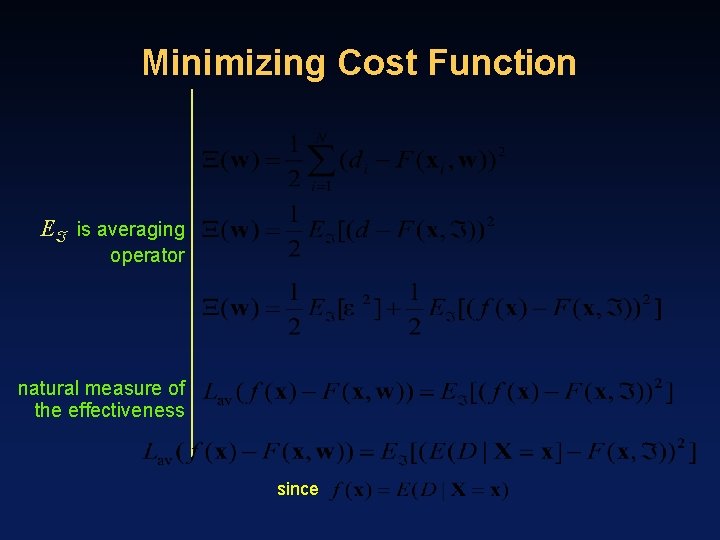

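Minimizing Cost Function
- Cost function: $\mathcal{E}(w) = \frac{1}{2} E_T[(d - F(x, T))^2]$, where $E_T$ is the averaging operator over the training sample $T$
- Using the regressive model: $\mathcal{E}(w) = \frac{1}{2} E_T[\epsilon^2] + \frac{1}{2} E_T[(f(x) - F(x, T))^2]$
- The second term is the natural measure of the effectiveness of $F(x, w)$ as a predictor of $d$, since the first term does not depend on $w$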

Bias/Variance Dilemma
- Bias: $B(w) = E_T[F(x, T)] - E[D \mid X = x]$
- Variance: $V(w) = E_T[(F(x, T) - E_T[F(x, T)])^2]$
- Bias
  - represents the inability of the NN to approximate the regression function
- Variance
  - represents the inadequacy of the information contained in the training set
- Both bias and variance can be reduced only with large training samples
- Purposely introduce "harmless" bias to reduce variance
  - designed bias: a constrained network that incorporates prior knowledge
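
The trade-off can be seen numerically with a much simpler estimator than a neural network. The sketch below is an illustration only: it estimates a mean from small random samples and compares the plain sample mean with a shrunken version; the function name `simulate`, the shrink factor, and all numbers are assumptions. It relies on the standard identity mean squared error = bias² + variance.

```python
import random
import statistics


def simulate(shrink, mu=1.0, sigma=2.0, n=5, trials=2000, seed=0):
    """Return (bias^2, variance, mse) of the estimator shrink * sample_mean."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]   # one small training sample
        estimates.append(shrink * statistics.fmean(sample))
    mean_est = statistics.fmean(estimates)
    bias_sq = (mean_est - mu) ** 2                           # squared bias
    variance = statistics.pvariance(estimates, mean_est)     # spread across training samples
    mse = statistics.fmean([(e - mu) ** 2 for e in estimates])
    return bias_sq, variance, mse


plain = simulate(shrink=1.0)   # (almost) unbiased, high variance
shrunk = simulate(shrink=0.5)  # deliberately biased, lower variance
print("plain :", plain)
print("shrunk:", shrunk)
```

Shrinking introduces bias but cuts the variance to a quarter; whether that bias is "harmless" depends on how far the constraint is from the truth, which is why designed bias should encode real prior knowledge.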

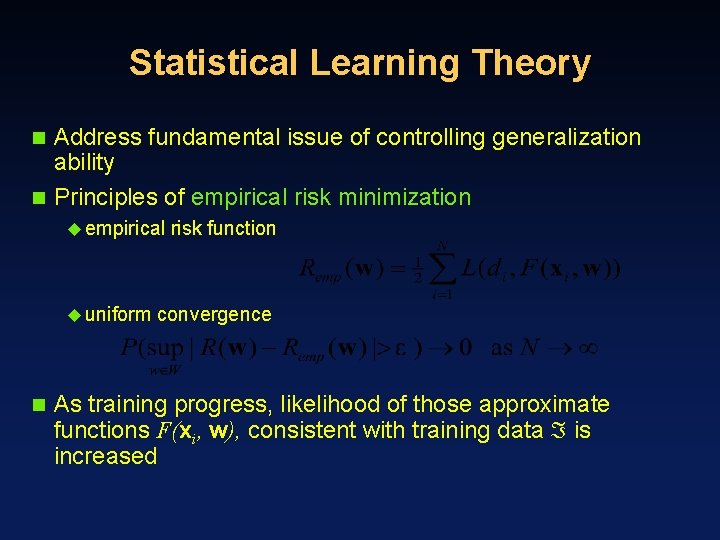

Statistical Learning Theory
- Addresses the fundamental issue of controlling generalization ability
- Principle of empirical risk minimization
  - empirical risk function
  - uniform convergence
- As training progresses, the likelihood of those approximating functions $F(x_i, w)$ that are consistent with the training data increases

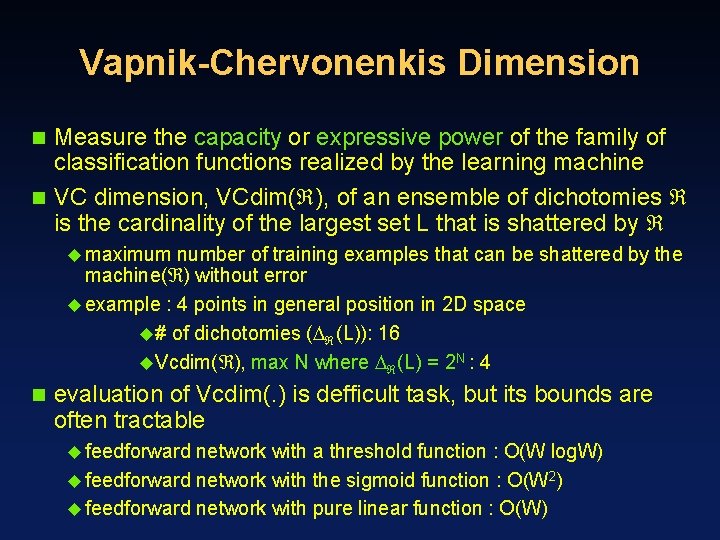

Vapnik-Chervonenkis Dimension
- Measures the capacity or expressive power of the family of classification functions realized by the learning machine
- The VC dimension, $\mathrm{VCdim}(\mathcal{F})$, of an ensemble of dichotomies $\mathcal{F}$ is the cardinality of the largest set $L$ that is shattered by $\mathcal{F}$
  - the maximum number of training examples that can be shattered by the machine ($\mathcal{F}$) without error
  - example: 4 points in general position in 2-D space
    - number of dichotomies $\Delta_{\mathcal{F}}(L)$: 16
    - $\mathrm{VCdim}(\mathcal{F})$, the maximum $N$ for which $\Delta_{\mathcal{F}}(L) = 2^N$: 4
- Evaluating $\mathrm{VCdim}(\cdot)$ is a difficult task, but its bounds are often tractable ($W$: number of free parameters)
  - feedforward network with a threshold function: $O(W \log W)$
  - feedforward network with the sigmoid function: $O(W^2)$
  - feedforward network with a pure linear function: $O(W)$

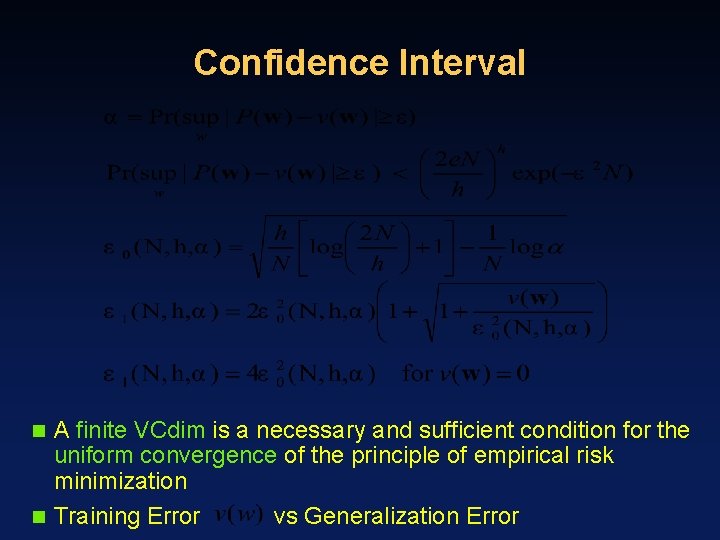

Confidence Interval
- A finite VC dimension is a necessary and sufficient condition for the uniform convergence of the principle of empirical risk minimization
- Training error vs. generalization error

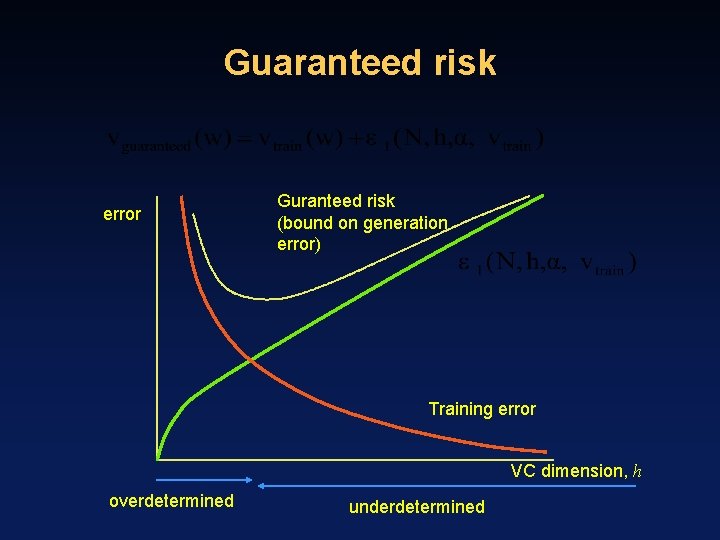

Guaranteed risk
- Figure labels: error plotted against VC dimension $h$; curves for guaranteed risk (bound on generalization error) and training error; overdetermined and underdetermined regions

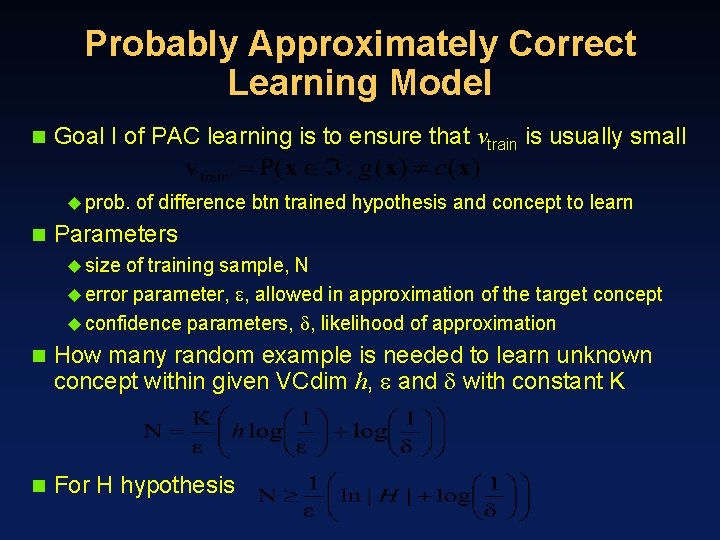

Probably Approximately Correct Learning Model
- The goal of PAC learning is to ensure that $\nu_{train}$ is usually small
  - $\nu_{train}$: probability of a difference between the trained hypothesis and the concept to be learned
- Parameters
  - size of the training sample, $N$
  - error parameter, $\epsilon$: error allowed in the approximation of the target concept
  - confidence parameter, $\delta$: likelihood of constructing a good approximation
- How many random examples are needed to learn an unknown concept?
  - for a given VC dimension $h$ and some constant $K$: $N = \frac{K}{\epsilon}\left(h \log\frac{1}{\epsilon} + \log\frac{1}{\delta}\right)$
- For hypothesis space H
