
Learning Objectives Assessment Long-Term Program Planning: Mission, Objectives, Bloom’s Taxonomy & Designing Projects Institutional Effectiveness August 11, 2020 Chad Bebee, Director of Assessment

How the Assessment Process is Improving
• The Assessment Committee will review assessment plans that detail assessments for up to a two-year period.
• Planning a year in advance is currently optional. Year-to-year planning can still be done, but planning in advance cuts down on the assessment planning workload and enables more long-term planning.
• When a plan is reviewed, the committee would like a representative of the program to join us via Zoom if possible. Attending the meeting helps the committee understand the intent of the program’s plans and helps the program contextualize our feedback.
• Assessment planning now incorporates both direct measures (e.g., tests, rubric scores, observation checklists) and indirect measures (student surveys, focus groups, and other forms of student feedback).

American Association for Higher Education’s Principles of Good Practice for Assessing Student Learning
■ The assessment of student learning begins with educational values.
■ Assessment is most effective when it reflects an understanding of learning as multidimensional, integrated, and revealed in performance over time.
■ Assessment works best when the programs it seeks to improve have clear, explicitly stated purposes.
■ Assessment requires attention to outcomes but also and equally to the experiences that lead to those outcomes.
■ Assessment works best when it is ongoing, not episodic.
■ Assessment fosters wider improvement when representatives from across the educational community are involved.
■ Assessment makes a difference when it begins with issues of use and illuminates questions that people really care about.
■ Assessment is most likely to lead to improvement when it is part of a larger set of conditions that promote change.
■ Through assessment, educators meet responsibilities to students and to the public.

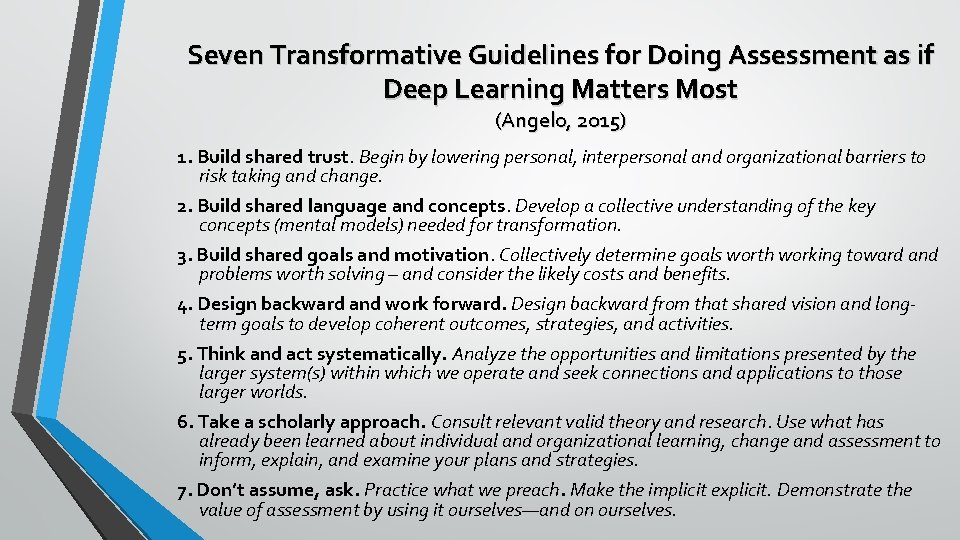

Seven Transformative Guidelines for Doing Assessment as if Deep Learning Matters Most (Angelo, 2015)
1. Build shared trust. Begin by lowering personal, interpersonal, and organizational barriers to risk taking and change.
2. Build shared language and concepts. Develop a collective understanding of the key concepts (mental models) needed for transformation.
3. Build shared goals and motivation. Collectively determine goals worth working toward and problems worth solving, and consider the likely costs and benefits.
4. Design backward and work forward. Design backward from that shared vision and long-term goals to develop coherent outcomes, strategies, and activities.
5. Think and act systematically. Analyze the opportunities and limitations presented by the larger system(s) within which we operate and seek connections and applications to those larger worlds.
6. Take a scholarly approach. Consult relevant valid theory and research. Use what has already been learned about individual and organizational learning, change, and assessment to inform, explain, and examine your plans and strategies.
7. Don’t assume, ask. Practice what we preach. Make the implicit explicit. Demonstrate the value of assessment by using it ourselves, and on ourselves.

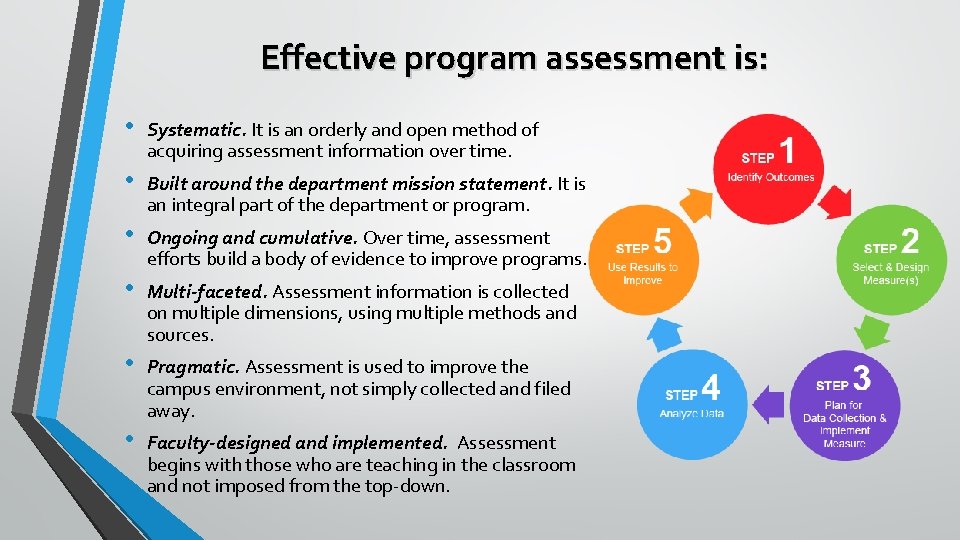

Effective program assessment is:
• Systematic. It is an orderly and open method of acquiring assessment information over time.
• Built around the department mission statement. It is an integral part of the department or program.
• Ongoing and cumulative. Over time, assessment efforts build a body of evidence to improve programs.
• Multi-faceted. Assessment information is collected on multiple dimensions, using multiple methods and sources.
• Pragmatic. Assessment is used to improve the campus environment, not simply collected and filed away.
• Faculty-designed and implemented. Assessment begins with those who are teaching in the classroom and is not imposed from the top down.

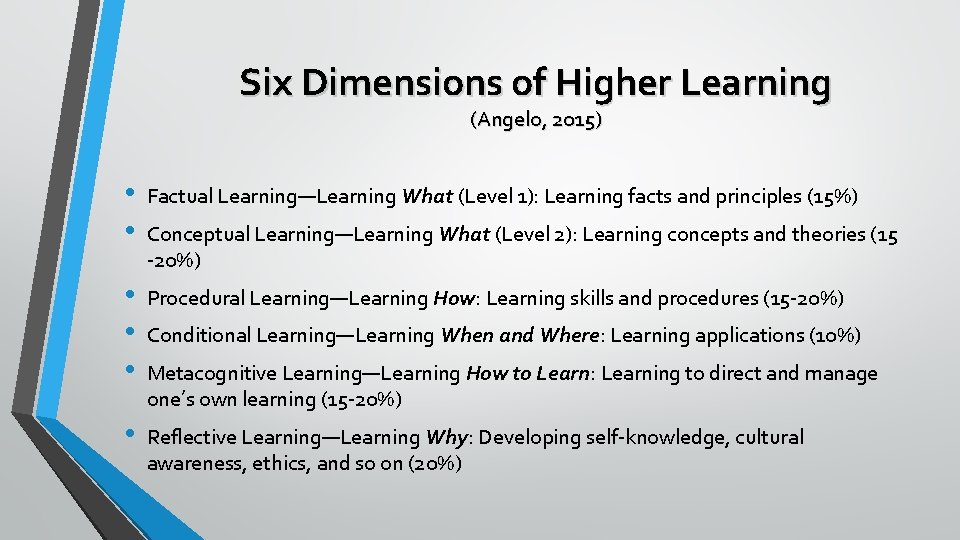

Six Dimensions of Higher Learning (Angelo, 2015)
• Factual Learning (Learning What, Level 1): Learning facts and principles (15%)
• Conceptual Learning (Learning What, Level 2): Learning concepts and theories (15-20%)
• Procedural Learning (Learning How): Learning skills and procedures (15-20%)
• Conditional Learning (Learning When and Where): Learning applications (10%)
• Reflective Learning (Learning Why): Developing self-knowledge, cultural awareness, ethics, and so on (20%)
• Metacognitive Learning (Learning How to Learn): Learning to direct and manage one’s own learning (15-20%)

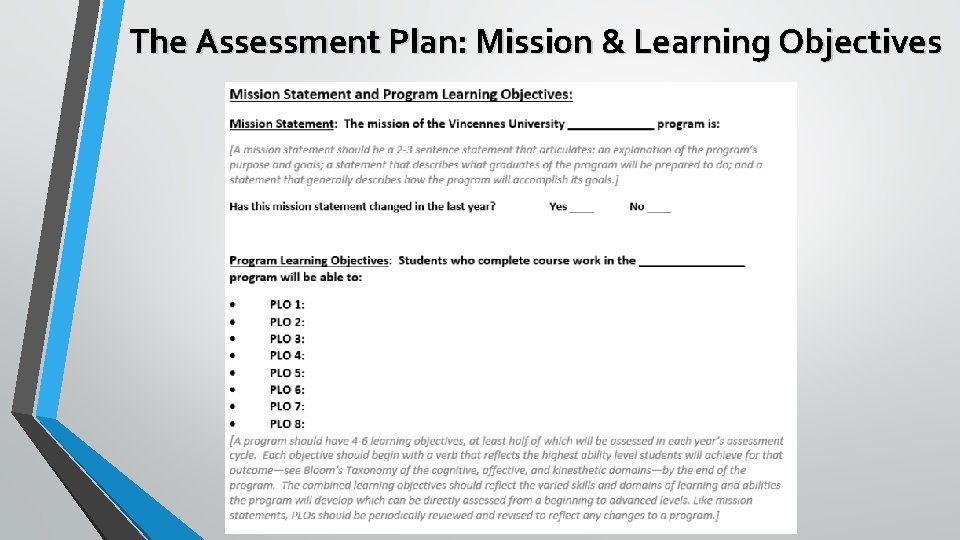

The Assessment Plan: Mission & Learning Objectives

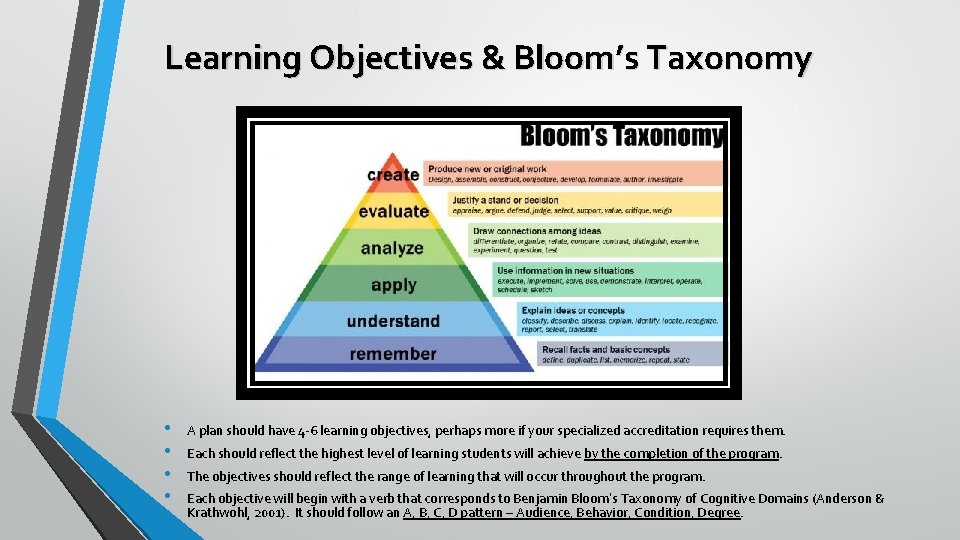

Learning Objectives & Bloom’s Taxonomy
• A plan should have 4-6 learning objectives, perhaps more if your specialized accreditation requires them. Each should reflect the highest level of learning students will achieve by the completion of the program. The objectives should reflect the range of learning that will occur throughout the program.
• Each objective will begin with a verb that corresponds to Benjamin Bloom’s Taxonomy of Cognitive Domains (Anderson & Krathwohl, 2001). It should follow an A, B, C, D pattern: Audience, Behavior, Condition, Degree.

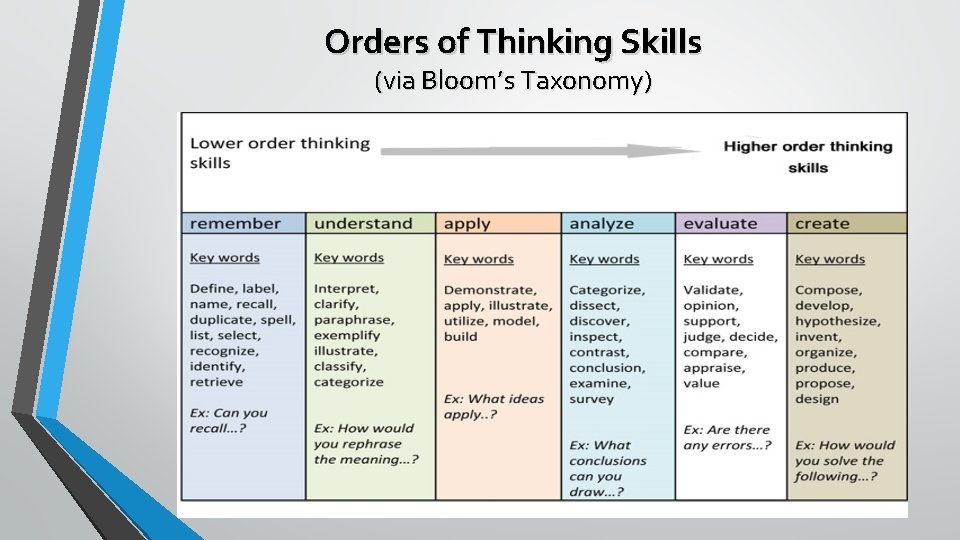

Orders of Thinking Skills (via Bloom’s Taxonomy)

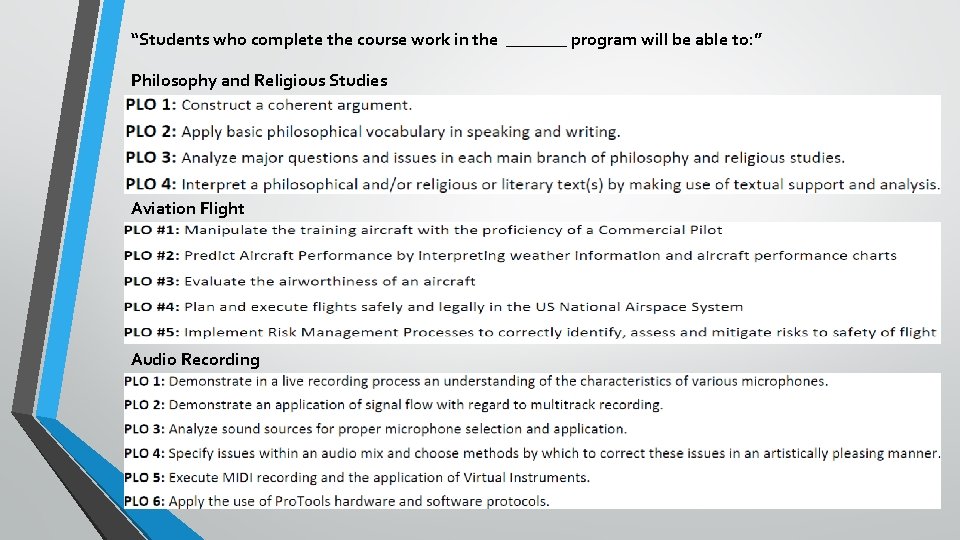

“Students who complete the course work in the _______ program will be able to:”
Philosophy and Religious Studies; Aviation Flight; Audio Recording

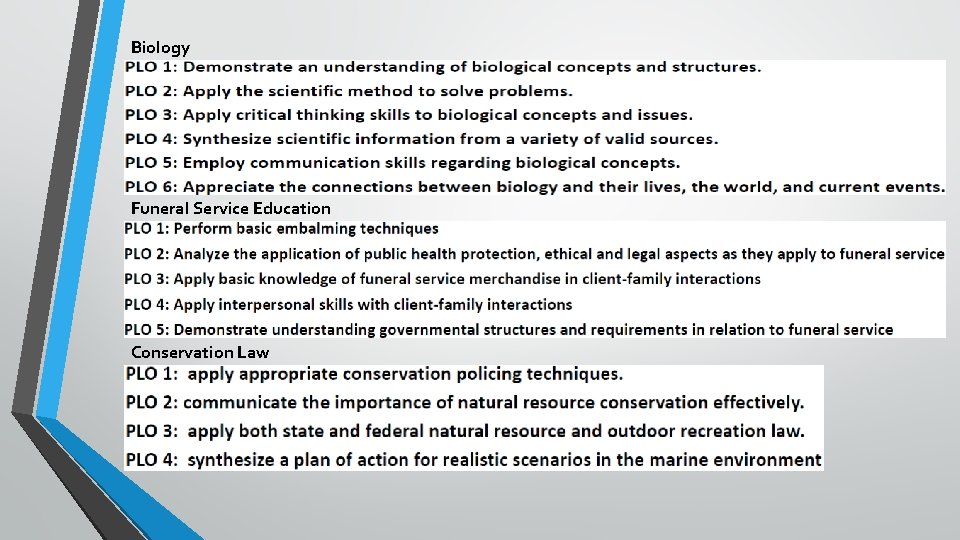

Biology; Funeral Service Education; Conservation Law

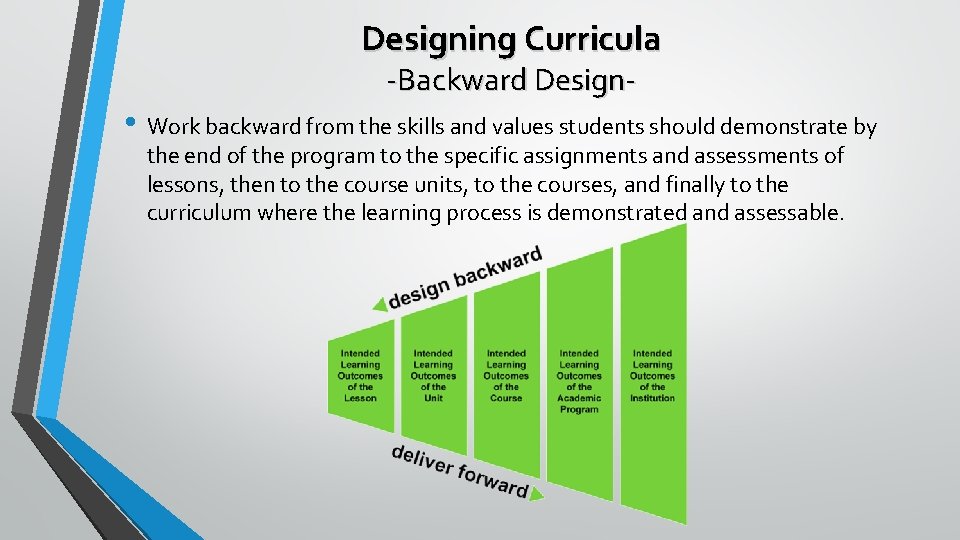

Designing Curricula -Backward Design- • Work backward from the skills and values students should demonstrate by the end of the program to the specific assignments and assessments of lessons, then to the course units, to the courses, and finally to the curriculum where the learning process is demonstrated and assessable.

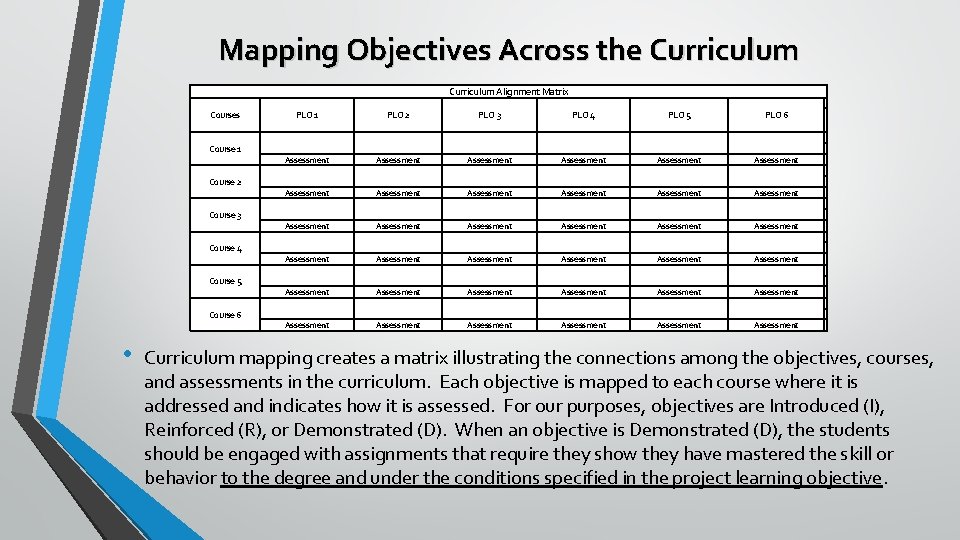

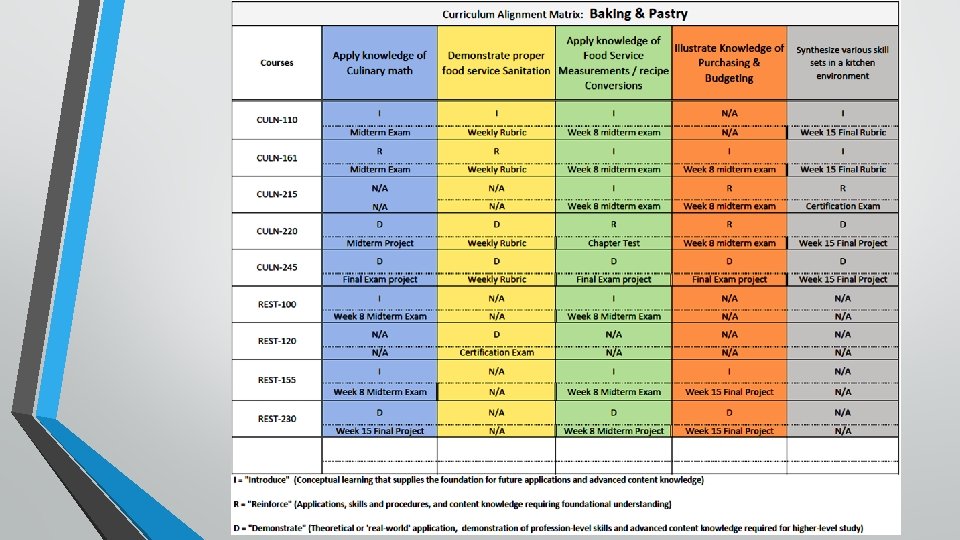

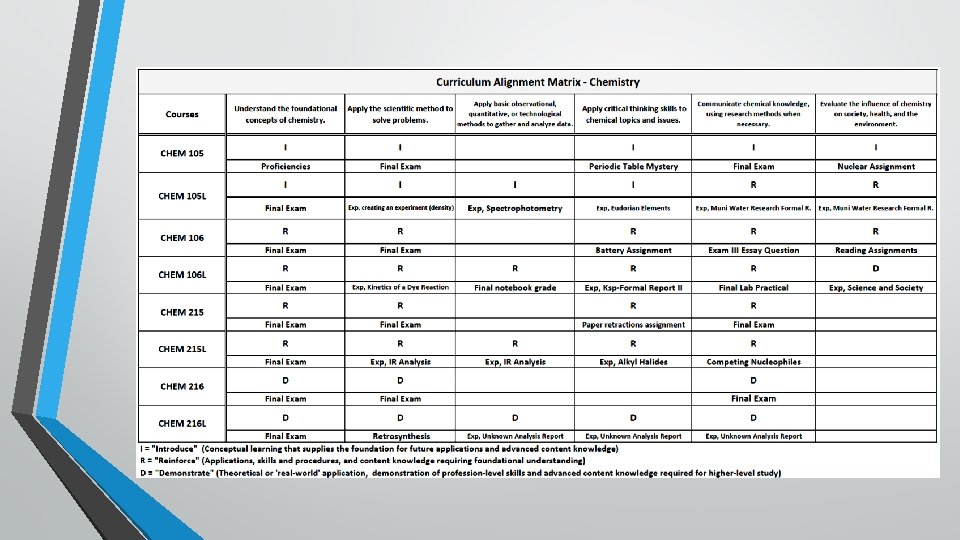

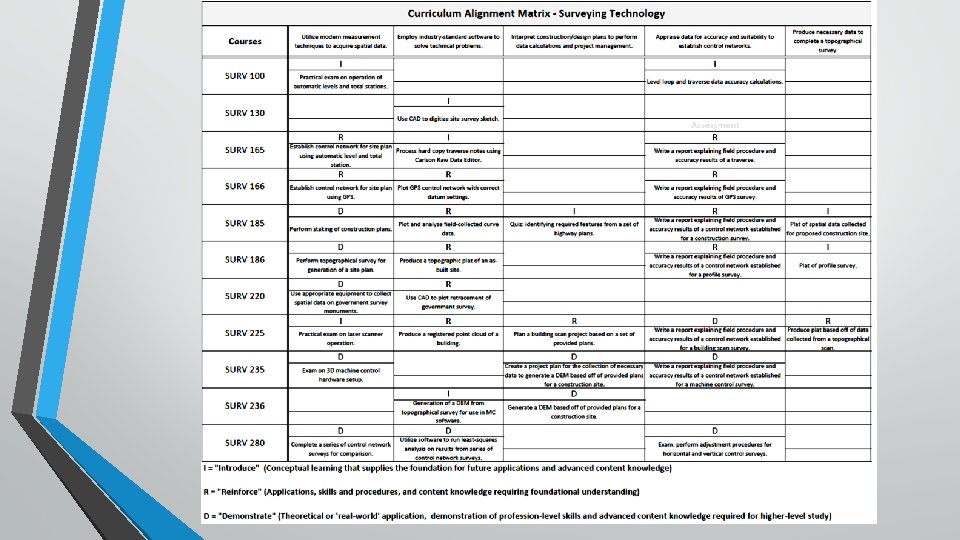

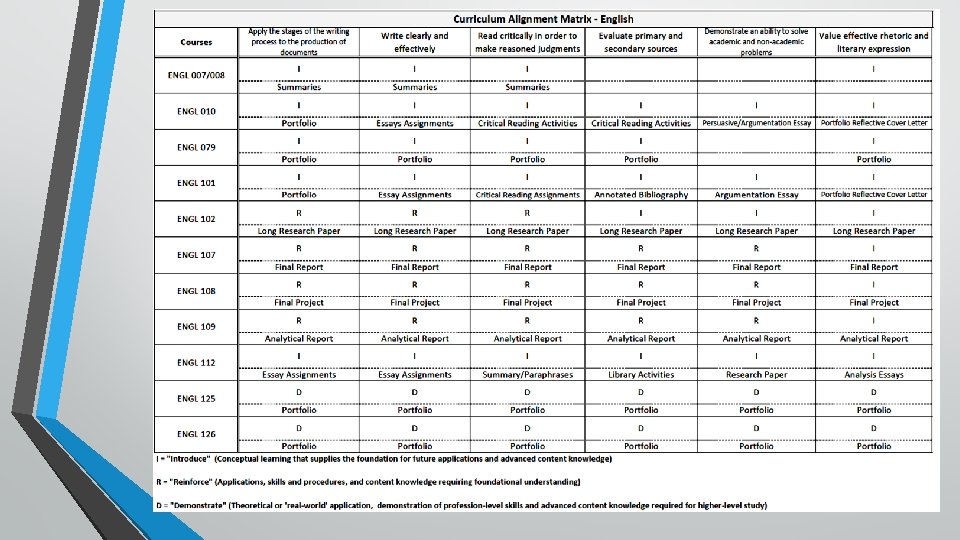

Mapping Objectives Across the Curriculum
Alignment Matrix (columns: Courses, PLO 1 through PLO 6; rows: Course 1 through Course 6; cells marked “Assessment” show where an objective is assessed)
• Curriculum mapping creates a matrix illustrating the connections among the objectives, courses, and assessments in the curriculum. Each objective is mapped to each course where it is addressed, and the map indicates how it is assessed. For our purposes, objectives are Introduced (I), Reinforced (R), or Demonstrated (D). When an objective is Demonstrated (D), students should be engaged with assignments that require them to show they have mastered the skill or behavior to the degree and under the conditions specified in the program learning objective.
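One way to keep a curriculum map checkable is to store it as data. The following is a minimal sketch, not a prescribed format: the course names, the three PLOs, and the helper function names are hypothetical, and only the I/R/D levels come from the slide. It flags any objective that is never Demonstrated (D) anywhere in the curriculum.

```python
# Hypothetical curriculum map: course -> {objective: level}, where level is
# "I" (Introduced), "R" (Reinforced), or "D" (Demonstrated).
curriculum_map = {
    "Course 1": {"PLO 1": "I", "PLO 2": "I"},
    "Course 2": {"PLO 1": "R", "PLO 3": "I"},
    "Course 3": {"PLO 2": "R", "PLO 3": "R"},
    "Course 4": {"PLO 1": "D", "PLO 2": "D"},
}
objectives = ["PLO 1", "PLO 2", "PLO 3"]


def levels_by_objective(cmap, objectives):
    """For each objective, list the (course, level) pairs where it appears."""
    result = {plo: [] for plo in objectives}
    for course, cells in cmap.items():
        for plo, level in cells.items():
            if plo in result:
                result[plo].append((course, level))
    return result


def objectives_never_demonstrated(cmap, objectives):
    """Return the objectives that have no 'D' entry in any course."""
    by_plo = levels_by_objective(cmap, objectives)
    return [
        plo for plo in objectives
        if all(level != "D" for _, level in by_plo[plo])
    ]


print(objectives_never_demonstrated(curriculum_map, objectives))
```

In this made-up map, PLO 3 is introduced and reinforced but never demonstrated, so it is the one objective the check reports.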

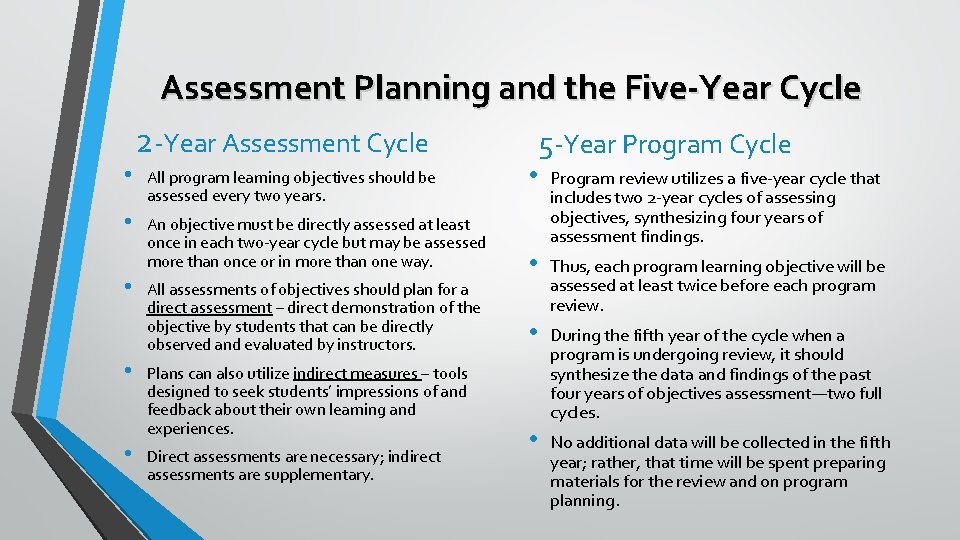

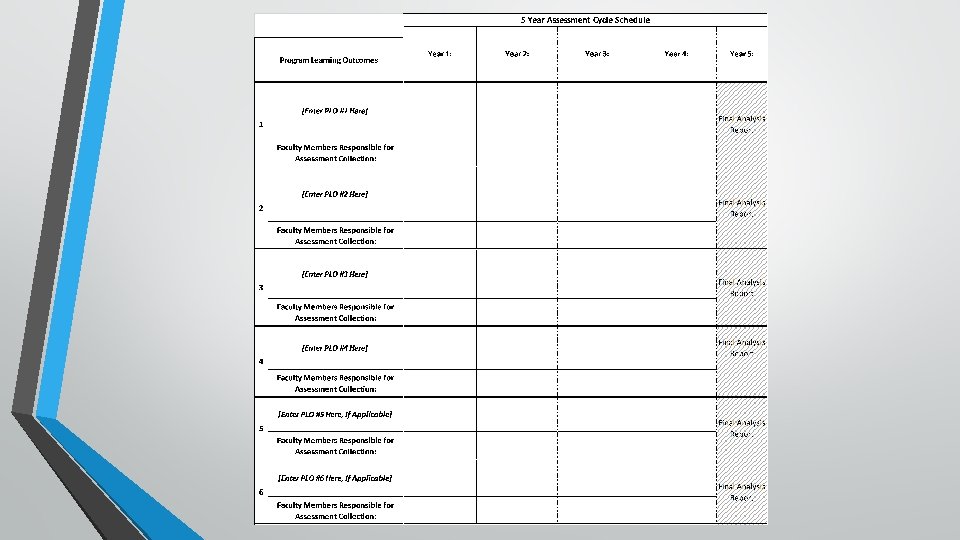

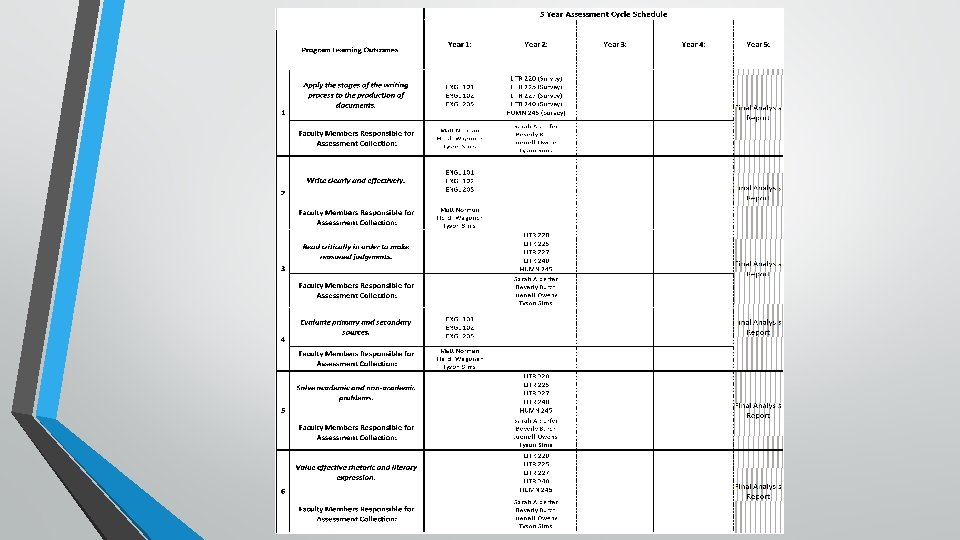

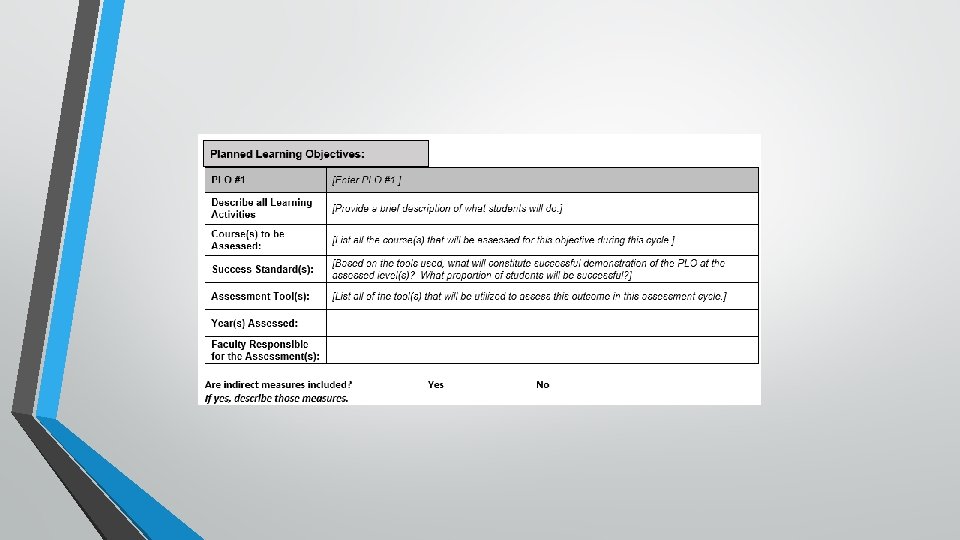

Assessment Planning and the Five-Year Cycle
2-Year Assessment Cycle
• All program learning objectives should be assessed every two years.
• An objective must be directly assessed at least once in each two-year cycle but may be assessed more than once or in more than one way.
• All assessments of objectives should plan for a direct assessment: a direct demonstration of the objective by students that can be directly observed and evaluated by instructors.
• Plans can also utilize indirect measures: tools designed to seek students’ impressions of and feedback about their own learning and experiences.
• Direct assessments are necessary; indirect assessments are supplementary.
5-Year Program Cycle
• Program review utilizes a five-year cycle that includes two 2-year cycles of assessing objectives, synthesizing four years of assessment findings.
• Thus, each program learning objective will be assessed at least twice before each program review.
• During the fifth year of the cycle, when a program is undergoing review, it should synthesize the data and findings of the past four years of objectives assessment (two full cycles).
• No additional data will be collected in the fifth year; rather, that time will be spent preparing materials for the review and on program planning.
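The coverage rule above (a direct assessment of every objective in each two-year cycle) can be checked mechanically against a draft plan. This is a rough sketch under stated assumptions: the plan layout, the objective names, and the function name are invented for illustration, and the years are numbered 1 through 5 within one program review cycle.

```python
# Years 1-2 and 3-4 are the two assessment cycles; year 5 is reserved for
# program review, so no data collection is planned there.
CYCLES = {"Cycle 1": (1, 2), "Cycle 2": (3, 4)}

# Hypothetical draft plan: (year, objective, measure type).
plan = [
    (1, "PLO 1", "direct"),
    (1, "PLO 2", "indirect"),
    (2, "PLO 2", "direct"),
    (3, "PLO 1", "direct"),
    (4, "PLO 2", "indirect"),
]


def coverage_gaps(plan, objectives):
    """Return (cycle, objective) pairs that lack a direct assessment."""
    gaps = []
    for cycle, years in CYCLES.items():
        for plo in objectives:
            has_direct = any(
                year in years and objective == plo and measure == "direct"
                for year, objective, measure in plan
            )
            if not has_direct:
                gaps.append((cycle, plo))
    return gaps


print(coverage_gaps(plan, ["PLO 1", "PLO 2"]))
```

In this draft, PLO 2 has only an indirect measure in the second cycle, so it is reported as a gap; indirect measures supplement a direct assessment but do not replace it.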

Success Standards -The Basic Process for Creating an Evaluation Tool- (Suskie, 2009)
1. Develop a rubric or a holistic scoring guide that articulates what is minimally acceptable performance, i.e., what is minimally needed for success. In a rubric, this material should be the middle level.
2. Then determine and articulate what is effective or exceptional performance and input this material into the scoring tool. In a rubric, place this material first.
3. Finally, determine and articulate what is inadequate or unacceptable performance; input this material into the scoring tool. In a rubric, place this material last.
Remember: Like all of us, students want to understand how they will be evaluated. Use the evaluation tool as a teaching tool! Share it with students and give them opportunities to think about it, use it, and possibly even help to design/revise it.

Success Standards -Setting Targets for Student Success- (Suskie, 2009)
• The next step is setting a target for students’ collective performance. Ask: how many students do we want to meet the standard? Context matters: students who are graduating from a program may have a different standard for success than those who are just beginning or are in the middle of the program. Success standards should reflect a program’s expectations for students at each level of the curriculum.
• Consider the following regarding your expectations for student success:
  • Should every student meet the standard? Would you be satisfied if 90% were able to meet the standard? What is the minimum percentage you are willing to accept as successful?
  • Would you be satisfied if every student met the minimum standard but no higher?
  • Express targets as percentages rather than averages. Percentages are more useful and meaningful than mean scores.
  • Consider multiple scores: what percentage will score minimally acceptable and what percentage will score above average, proficient, or exemplary?
  • Vary your targets depending on the circumstances. Some fields have less tolerance for error than others, but all students should graduate having mastered the fundamental skills.
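A small worked example may help show why percentages are more informative than averages. The scores, the 4-point scale, the standard of 3, and the 80% target below are all made up for illustration; the function name is hypothetical.

```python
def percent_meeting(scores, standard):
    """Percentage of students scoring at or above the standard."""
    if not scores:
        raise ValueError("scores must not be empty")
    met = sum(1 for score in scores if score >= standard)
    return 100 * met / len(scores)


# Hypothetical rubric scores on a 4-point scale for eight students.
scores = [4, 4, 4, 4, 3, 3, 1, 1]

mean_score = sum(scores) / len(scores)   # 3.0, which looks "Acceptable"
pct_meeting = percent_meeting(scores, 3)  # 75.0
target_met = pct_meeting >= 80            # False against an 80% target

print(mean_score, pct_meeting, target_met)
```

Here the class average sits exactly at the standard, yet a quarter of the students fall below it. A target phrased as a percentage exposes that; a target phrased as an average would hide it.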

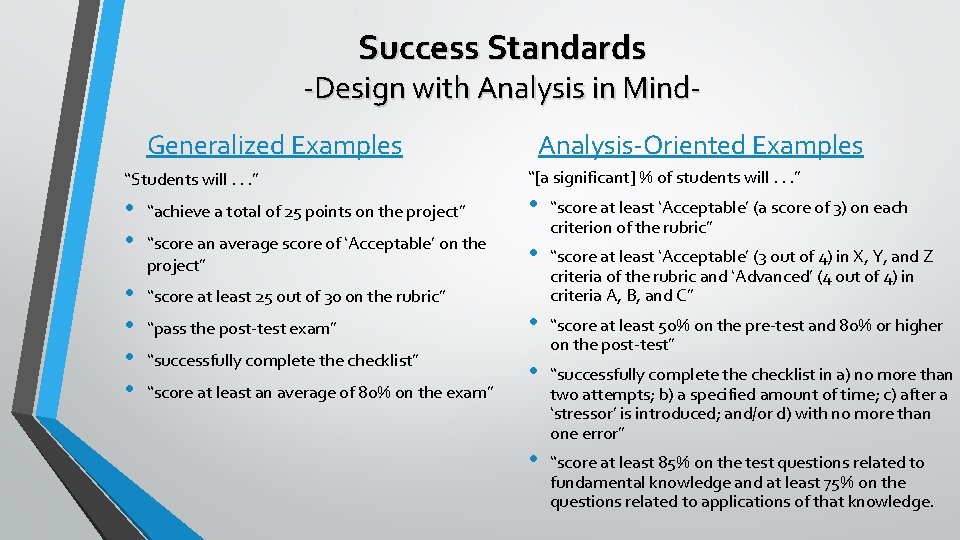

Success Standards -Design with Analysis in Mind-
Generalized Examples: “Students will...”
• “achieve a total of 25 points on the project”
• “score an average score of ‘Acceptable’ on the project”
• “pass the post-test exam”
• “successfully complete the checklist”
• “score at least an average of 80% on the exam”
Analysis-Oriented Examples: “[a significant] % of students will...”
• “score at least ‘Acceptable’ (a score of 3) on each criterion of the rubric”
• “score at least 25 out of 30 on the rubric”
• “score at least ‘Acceptable’ (3 out of 4) in X, Y, and Z criteria of the rubric and ‘Advanced’ (4 out of 4) in criteria A, B, and C”
• “score at least 50% on the pre-test and 80% or higher on the post-test”
• “successfully complete the checklist in a) no more than two attempts; b) a specified amount of time; c) after a ‘stressor’ is introduced; and/or d) with no more than one error”
• “score at least 85% on the test questions related to fundamental knowledge and at least 75% on the questions related to applications of that knowledge”
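An analysis-oriented standard such as “score at least ‘Acceptable’ (a score of 3) on each criterion of the rubric” is straightforward to evaluate once scores are kept per criterion. The sketch below assumes a hypothetical three-criterion rubric and invented student scores; the function names are illustrative only.

```python
# Hypothetical per-criterion rubric scores (4-point scale) for four students.
rubric_scores = {
    "student_a": {"thesis": 4, "evidence": 3, "mechanics": 3},
    "student_b": {"thesis": 3, "evidence": 2, "mechanics": 4},
    "student_c": {"thesis": 3, "evidence": 3, "mechanics": 3},
    "student_d": {"thesis": 4, "evidence": 4, "mechanics": 2},
}


def percent_meeting_all_criteria(scores, minimum):
    """Percentage of students at or above the minimum on every criterion."""
    met = sum(
        1 for criteria in scores.values()
        if all(value >= minimum for value in criteria.values())
    )
    return 100 * met / len(scores)


def percent_meeting_by_criterion(scores, minimum):
    """Percentage of students at or above the minimum, per criterion."""
    criteria = list(next(iter(scores.values())))
    return {
        name: 100 * sum(1 for s in scores.values() if s[name] >= minimum) / len(scores)
        for name in criteria
    }


print(percent_meeting_all_criteria(rubric_scores, 3))
print(percent_meeting_by_criterion(rubric_scores, 3))
```

The per-criterion breakdown shows where students fall short (here, evidence and mechanics), which a single total score such as “25 points on the project” cannot show.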

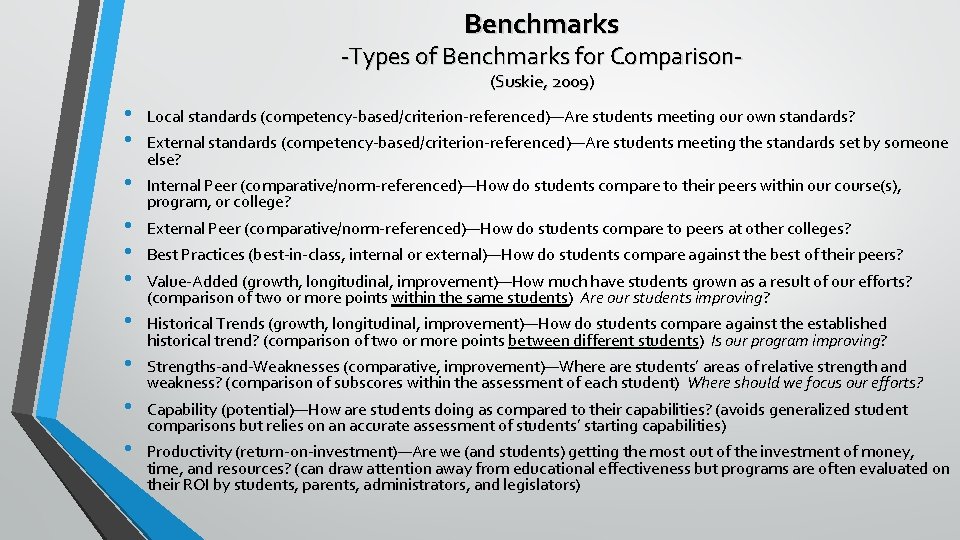

Benchmarks -Types of Benchmarks for Comparison- (Suskie, 2009)
• Local standards (competency-based/criterion-referenced): Are students meeting our own standards?
• External standards (competency-based/criterion-referenced): Are students meeting the standards set by someone else?
• Internal Peer (comparative/norm-referenced): How do students compare to their peers within our course(s), program, or college?
• External Peer (comparative/norm-referenced): How do students compare to peers at other colleges?
• Best Practices (best-in-class, internal or external): How do students compare against the best of their peers?
• Value-Added (growth, longitudinal, improvement): How much have students grown as a result of our efforts? (comparison of two or more points within the same students) Are our students improving?
• Historical Trends (growth, longitudinal, improvement): How do students compare against the established historical trend? (comparison of two or more points between different students) Is our program improving?
• Strengths-and-Weaknesses (comparative, improvement): Where are students’ areas of relative strength and weakness? (comparison of subscores within the assessment of each student) Where should we focus our efforts?
• Capability (potential): How are students doing as compared to their capabilities? (avoids generalized student comparisons but relies on an accurate assessment of students’ starting capabilities)
• Productivity (return-on-investment): Are we (and students) getting the most out of the investment of money, time, and resources? (can draw attention away from educational effectiveness, but programs are often evaluated on their ROI by students, parents, administrators, and legislators)

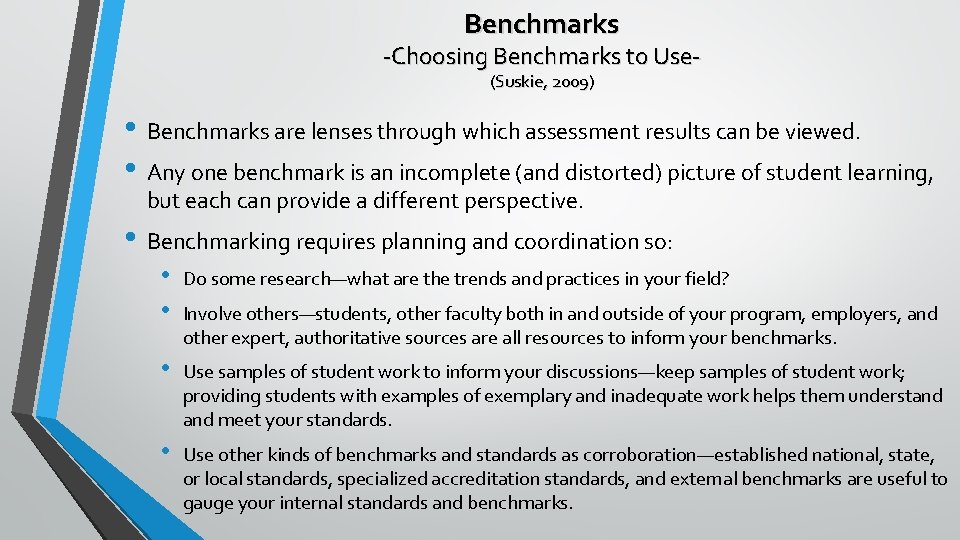

Benchmarks -Choosing Benchmarks to Use- (Suskie, 2009)
• Benchmarks are lenses through which assessment results can be viewed.
• Any one benchmark is an incomplete (and distorted) picture of student learning, but each can provide a different perspective.
• Benchmarking requires planning and coordination, so:
  • Do some research: what are the trends and practices in your field?
  • Use samples of student work to inform your discussions. Keep samples of student work; providing students with examples of exemplary and inadequate work helps them understand how to meet your standards.
  • Use other kinds of benchmarks and standards as corroboration. Established national, state, or local standards, specialized accreditation standards, and external benchmarks are useful to gauge your internal standards and benchmarks.
  • Involve others. Students, other faculty both in and outside of your program, employers, and other expert, authoritative sources are all resources to inform your benchmarks.

Sources and Additional Information
• Many useful texts offer additional information. Here are the sources referenced in these slides:
American Association for Higher Education. (1992, Dec.). Principles of good practice for assessing student learning. https://www.learningoutcomesassessment.org/wp-content/uploads/2019/08/AAHE-Principles.pdf
Anderson, L. W., & Krathwohl, D. R. (2001). A taxonomy for learning, teaching, and assessing: A revision of Bloom’s taxonomy of educational objectives. New York, NY: Longman.
Angelo, T. (2015, Oct. 27). Doing assessment as if teaching and learning matter most [Conference session]. Assessment Institute, Indianapolis, IN, United States.
Suskie, L. (2009). Assessing student learning: A common sense guide. Jossey-Bass.
• Call the Director of Assessment at ext. 5369 or email cbebee@vinu.edu, or contact the Office of Institutional Effectiveness at institutionaleffectiveness@vinu.edu.

Questions?
