Learning Non-Linear Kernel Combinations Subject to General Regularization

Learning Non-Linear Kernel Combinations Subject to General Regularization: Theory and Applications
IIIT Hyderabad
Rakesh Babu (200402007)
Advisors: Prof. C. V. Jawahar, IIIT Hyderabad; Dr. Manik Varma, Microsoft Research

Overview
• Introduction to Support Vector Machines (SVM)
• Multiple Kernel Learning (MKL)
• Problem Statement
• Literature Survey
• Generalized Multiple Kernel Learning (GMKL)
• Applications
• Conclusion

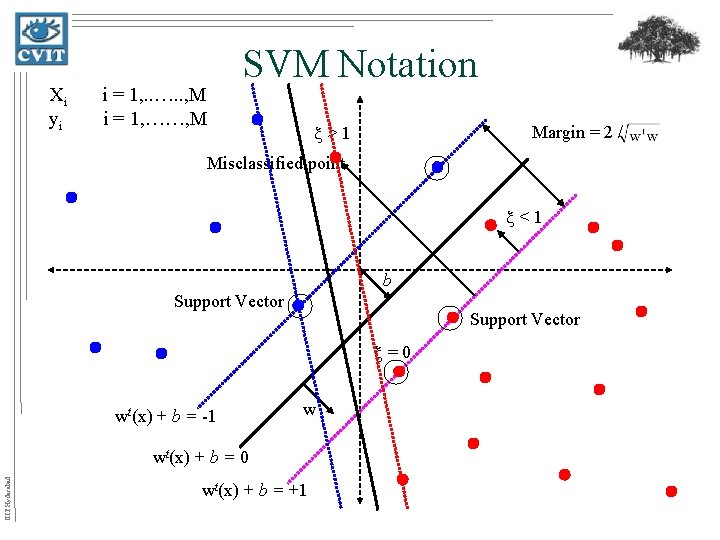

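SVM Notation
(Figure: training points $(x_i, y_i)$, $i = 1, \ldots, M$, separated by the hyperplane $w^t x + b = 0$, with margin hyperplanes $w^t x + b = -1$ and $w^t x + b = +1$; Margin $= 2/\lVert w \rVert$; support vectors have $\xi = 0$, a point with $\xi < 1$ lies inside the margin, and a misclassified point has $\xi > 1$.)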

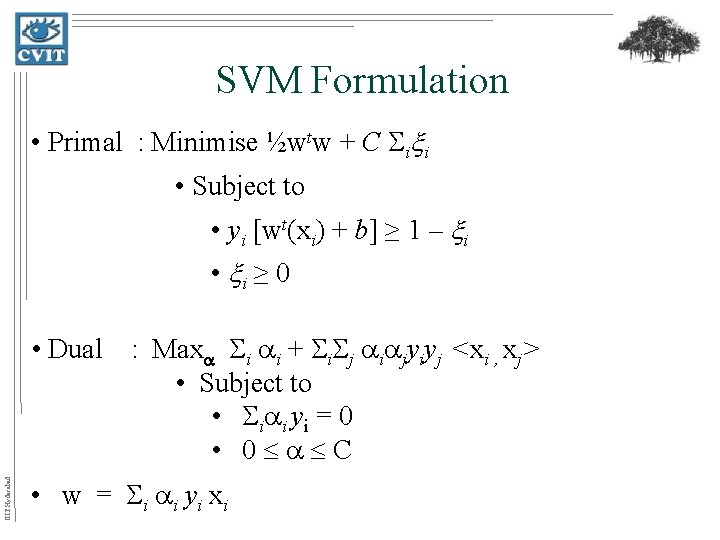

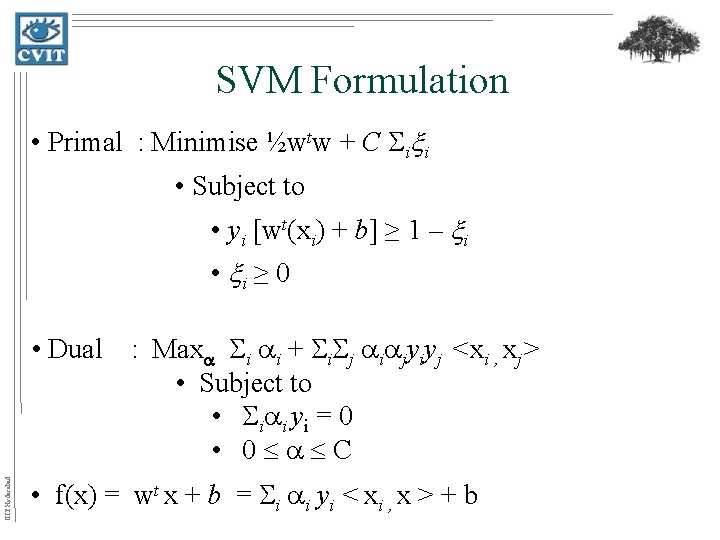

SVM Formulation
• Primal: minimise $\frac{1}{2}w^t w + C\sum_i \xi_i$
  subject to $y_i\,[w^t x_i + b] \ge 1 - \xi_i$ and $\xi_i \ge 0$
• Dual: maximise $\sum_i \alpha_i - \frac{1}{2}\sum_{i,j} \alpha_i \alpha_j y_i y_j \langle x_i, x_j \rangle$
  subject to $\sum_i \alpha_i y_i = 0$ and $0 \le \alpha_i \le C$
• $w = \sum_i \alpha_i y_i x_i$, so $f(x) = w^t x + b = \sum_i \alpha_i y_i \langle x_i, x \rangle + b$
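The identity $w = \sum_i \alpha_i y_i x_i$ means the primal form $w^t x + b$ and the dual form $\sum_i \alpha_i y_i \langle x_i, x\rangle + b$ give the same decision value. A minimal pure-Python sketch checks this numerically; `alpha`, `b` and the toy data are hypothetical stand-ins for the output of an SVM solver (they are only chosen to satisfy $\sum_i \alpha_i y_i = 0$).

```python
def dot(u, v):
    """Inner product <u, v>."""
    return sum(a * b for a, b in zip(u, v))


def primal_weights(alpha, y, X):
    """w = sum_i alpha_i y_i x_i (only support vectors, alpha_i > 0, contribute)."""
    w = [0.0] * len(X[0])
    for a_i, y_i, x_i in zip(alpha, y, X):
        for k, x_ik in enumerate(x_i):
            w[k] += a_i * y_i * x_ik
    return w


def f_primal(w, b, x):
    """Decision value in primal form: w^t x + b."""
    return dot(w, x) + b


def f_dual(alpha, y, X, b, x):
    """Decision value in dual form: sum_i alpha_i y_i <x_i, x> + b."""
    return sum(a_i * y_i * dot(x_i, x) for a_i, y_i, x_i in zip(alpha, y, X)) + b


# Hypothetical toy problem; alpha satisfies sum_i alpha_i y_i = 0 (and 0 <= alpha_i <= C for C >= 0.5).
X = [[1.0, 2.0], [2.0, 0.5], [-1.0, -1.0], [-2.0, 0.0]]
y = [1, 1, -1, -1]
alpha = [0.5, 0.0, 0.3, 0.2]
b = 0.1

w = primal_weights(alpha, y, X)
x_new = [0.5, -1.0]
print(w, f_primal(w, b, x_new), f_dual(alpha, y, X, b, x_new))
```

The dual form touches the inputs only through inner products, which is what makes the kernel substitution possible.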

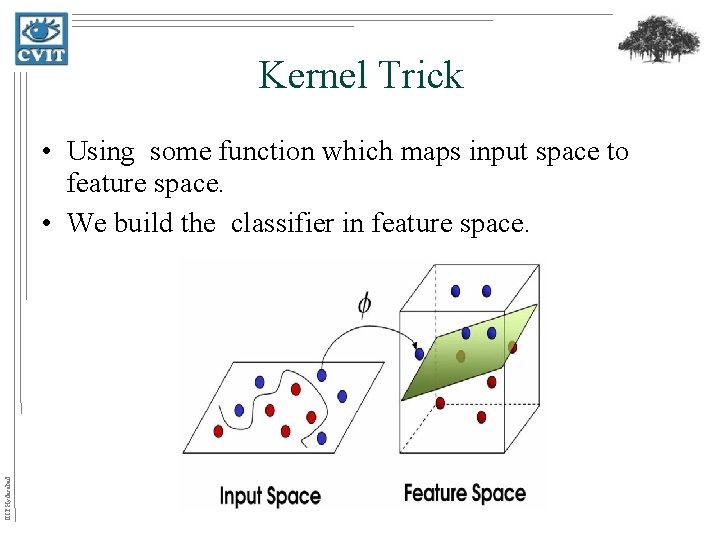

Kernel Trick
• Use a function $\phi$ that maps the input space to a feature space.
• Build the classifier in the feature space.

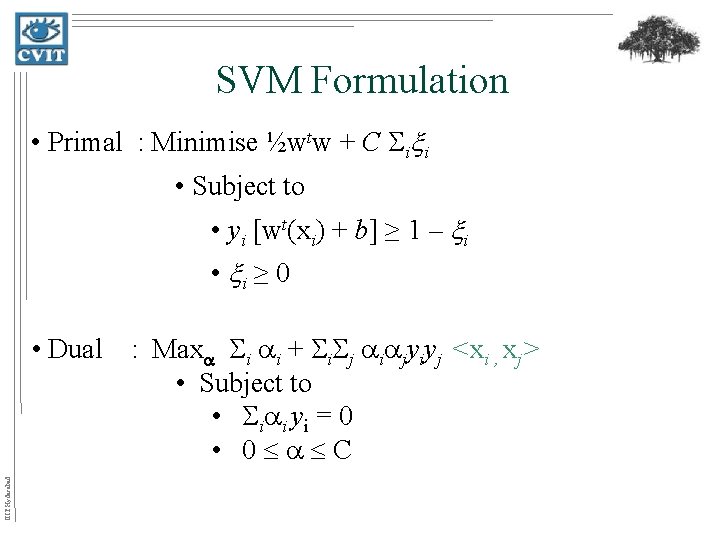


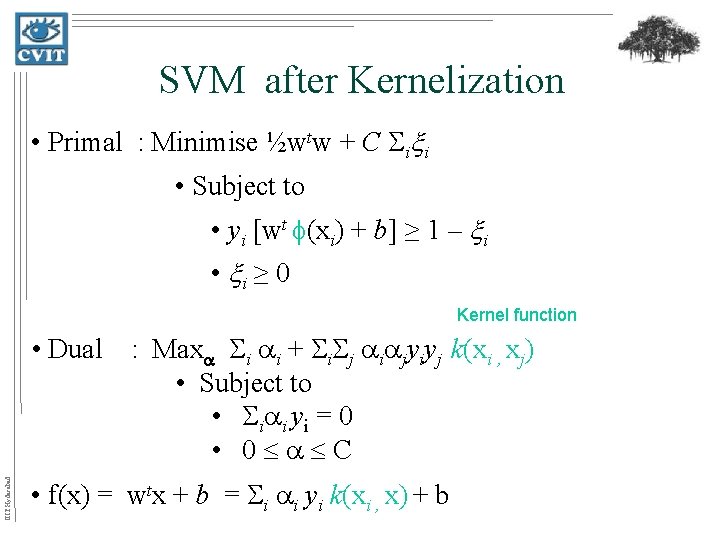

SVM after Kernelization
• Primal: minimise $\frac{1}{2}w^t w + C\sum_i \xi_i$
  subject to $y_i\,[w^t \phi(x_i) + b] \ge 1 - \xi_i$ and $\xi_i \ge 0$
• Dual: maximise $\sum_i \alpha_i - \frac{1}{2}\sum_{i,j} \alpha_i \alpha_j y_i y_j \langle \phi(x_i), \phi(x_j) \rangle = \sum_i \alpha_i - \frac{1}{2}\sum_{i,j} \alpha_i \alpha_j y_i y_j\, k(x_i, x_j)$
  subject to $\sum_i \alpha_i y_i = 0$ and $0 \le \alpha_i \le C$
• The dot product in feature space is replaced by the kernel function $k(x_i, x_j) = \langle \phi(x_i), \phi(x_j) \rangle$.
• $f(x) = w^t \phi(x) + b = \sum_i \alpha_i y_i k(x_i, x) + b$
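The kernel substitution can be checked on a small case. Assuming the homogeneous quadratic kernel $k(x, z) = (x^t z)^2$ on 2-D inputs (chosen here only because its feature map is short to write down), the explicit map $\phi(x) = (x_1^2, \sqrt{2}\,x_1 x_2, x_2^2)$ satisfies $\langle\phi(x), \phi(z)\rangle = k(x, z)$, so the kernelised decision function gives the same value whether or not $\phi$ is ever formed. The dual variables below are hypothetical.

```python
import math


def phi(x):
    """Explicit feature map for k(x, z) = (x^t z)^2 on 2-D inputs."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2.0) * x1 * x2, x2 * x2]


def k_quad(x, z):
    """The same kernel evaluated directly in input space."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def f_feature_space(alpha, y, X, b, x):
    """sum_i alpha_i y_i <phi(x_i), phi(x)> + b, with phi computed explicitly."""
    return sum(a * yi * dot(phi(xi), phi(x)) for a, yi, xi in zip(alpha, y, X)) + b


def f_kernel(alpha, y, X, b, x):
    """sum_i alpha_i y_i k(x_i, x) + b, without ever forming phi."""
    return sum(a * yi * k_quad(xi, x) for a, yi, xi in zip(alpha, y, X)) + b


# Hypothetical dual solution on toy data.
X = [[1.0, 2.0], [3.0, -1.0], [0.5, 0.5]]
y = [1, -1, 1]
alpha = [0.4, 0.6, 0.2]
b = -0.3
x_new = [2.0, 1.0]
print(f_feature_space(alpha, y, X, b, x_new), f_kernel(alpha, y, X, b, x_new))
```

For kernels such as the RBF the feature space is infinite-dimensional, so only the kernel route is available; this toy case just makes the equivalence visible.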

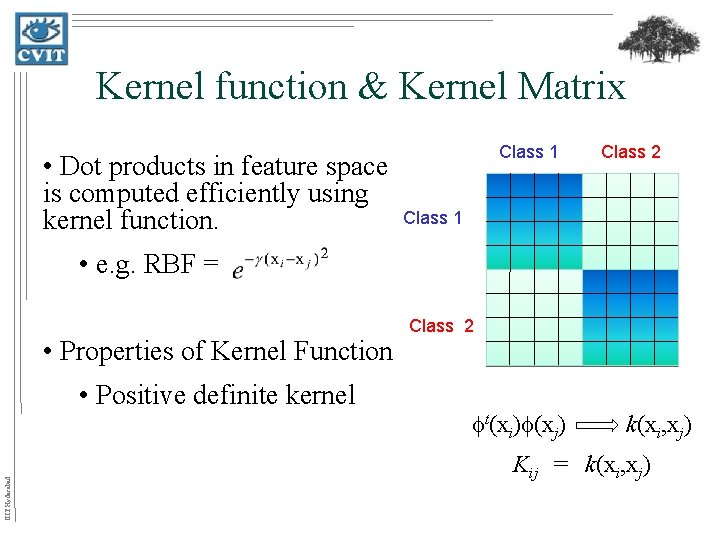

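Kernel Function & Kernel Matrix
• Dot products in feature space are computed efficiently using a kernel function: $\phi^t(x_i)\phi(x_j) = k(x_i, x_j)$.
• e.g. RBF: $k(x_i, x_j) = \exp(-\gamma \lVert x_i - x_j \rVert^2)$
• Kernel matrix: $K_{ij} = k(x_i, x_j)$
• Property of a kernel function: it must be a positive definite kernel.
(Figure: Class 1 and Class 2 points in input space and in feature space.)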
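Positive definiteness can be checked numerically on a finite sample: a symmetric matrix $K$ admits a Cholesky factorisation $K = LL^t$ exactly when it is positive definite. A minimal pure-Python sketch builds $K_{ij} = k(x_i, x_j)$ for the RBF kernel (with an assumed $\gamma = 1$) and runs that test. It is a sanity check on one sample, not a proof that a function is a valid kernel, and a kernel that is only positive semi-definite can fail this strict test on a singular matrix.

```python
import math


def rbf(x, z, gamma=1.0):
    """RBF kernel exp(-gamma * ||x - z||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))


def kernel_matrix(X, k):
    """K_ij = k(x_i, x_j)."""
    return [[k(xi, xj) for xj in X] for xi in X]


def is_symmetric(K, tol=1e-12):
    n = len(K)
    return all(abs(K[i][j] - K[j][i]) <= tol for i in range(n) for j in range(n))


def is_positive_definite(K):
    """Cholesky test: True iff the symmetric matrix K is positive definite."""
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = K[i][j] - sum(L[i][m] * L[j][m] for m in range(j))
            if i == j:
                if s <= 0.0:
                    return False  # a non-positive pivot: K is not positive definite
                L[i][i] = math.sqrt(s)
            else:
                L[i][j] = s / L[j][j]
    return True


X = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
K = kernel_matrix(X, rbf)
print(is_symmetric(K), is_positive_definite(K))
print(is_positive_definite([[1.0, 2.0], [2.0, 1.0]]))  # symmetric but indefinite
```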

Some Popular Kernels
• Linear: $k(x_i, x_j) = x_i^t x_j$
• Polynomial: $k(x_i, x_j) = (x_i^t x_j + c)^d$
• Gaussian (RBF): $k(x_i, x_j) = \exp(-\gamma \lVert x_i - x_j \rVert^2)$
• Chi-Squared: $k(x_i, x_j) = \exp(-\gamma \chi^2(x_i, x_j))$
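The four kernels above translate directly into code. In this sketch `c`, `d` and `gamma` are free hyperparameters with illustrative defaults, and the $\chi^2$ distance is assumed to be $\sum_k (x_k - z_k)^2/(x_k + z_k)$ over non-negative histogram entries. Conventions differ (some authors include a factor of $\tfrac{1}{2}$), so this is one common choice rather than necessarily the one used in the thesis.

```python
import math


def linear(x, z):
    """k(x, z) = x^t z."""
    return sum(a * b for a, b in zip(x, z))


def polynomial(x, z, c=1.0, d=2):
    """k(x, z) = (x^t z + c)^d."""
    return (linear(x, z) + c) ** d


def gaussian_rbf(x, z, gamma=1.0):
    """k(x, z) = exp(-gamma ||x - z||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))


def chi2_distance(x, z, eps=1e-12):
    """Assumed chi^2 distance between non-negative histograms; eps avoids 0/0 on empty bins."""
    return sum((a - b) ** 2 / (a + b + eps) for a, b in zip(x, z))


def chi2_kernel(x, z, gamma=1.0):
    """k(x, z) = exp(-gamma * chi^2(x, z))."""
    return math.exp(-gamma * chi2_distance(x, z))


x, z = [1.0, 2.0], [3.0, 4.0]
print(linear(x, z), polynomial(x, z), gaussian_rbf(x, z, gamma=0.1))
h1, h2 = [0.5, 0.5, 0.0], [0.25, 0.25, 0.5]
print(chi2_kernel(h1, h2))
```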

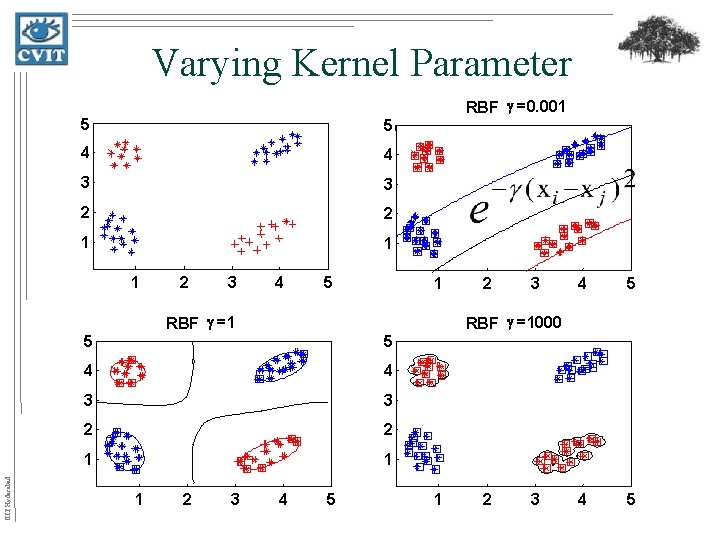

Varying Kernel Parameter
(Figure: decision boundaries obtained with an RBF kernel on a 2-D toy dataset for $\gamma = 0.001$, $\gamma = 1$ and $\gamma = 1000$.)

Learning the Kernel
• Valid kernels can be combined into new valid kernels:
  – $k = \alpha_1 k_1 + \alpha_2 k_2$ (with $\alpha_1, \alpha_2 \ge 0$)
  – $k = k_1 k_2$
• Learning the kernel function: $k(x_i, x_j) = \sum_l d_l k_l(x_i, x_j)$
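The two closure rules can be written as functions that build new kernels from old ones. This is a minimal sketch; `weighted_sum` and `product` are hypothetical helper names, and the check on the weights reflects the requirement of non-negative weights (a negative weight can destroy positive definiteness).

```python
import math


def linear(x, z):
    return sum(a * b for a, b in zip(x, z))


def rbf(x, z, gamma=1.0):
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))


def weighted_sum(kernels, d):
    """k(x, z) = sum_l d_l k_l(x, z); valid when every d_l >= 0."""
    if any(dl < 0 for dl in d):
        raise ValueError("kernel weights must be non-negative")
    return lambda x, z: sum(dl * kl(x, z) for dl, kl in zip(d, kernels))


def product(k1, k2):
    """k(x, z) = k1(x, z) * k2(x, z); valid by the Schur product theorem."""
    return lambda x, z: k1(x, z) * k2(x, z)


k_mkl = weighted_sum([linear, rbf], [0.5, 2.0])
k_prod = product(linear, rbf)
x, z = [1.0, 0.0], [1.0, 1.0]
print(k_mkl(x, z), k_prod(x, z))
```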

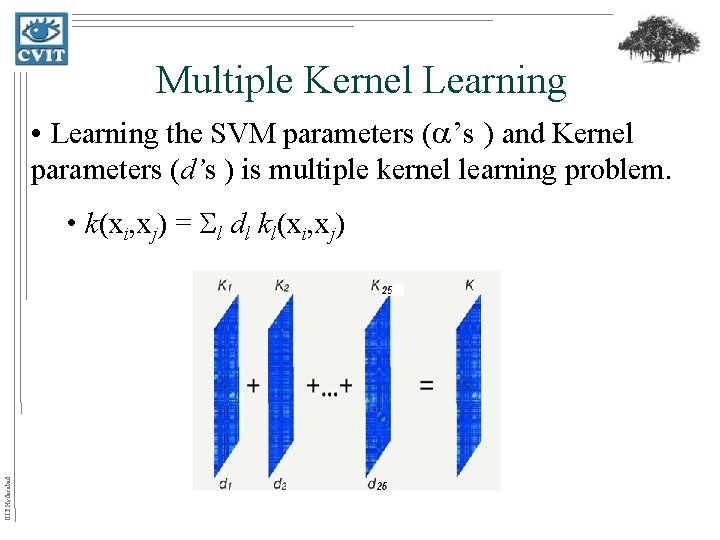

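Multiple Kernel Learning
• Learning the SVM parameters ($\alpha$'s) and the kernel parameters ($d$'s) jointly is the multiple kernel learning problem.
• $k(x_i, x_j) = \sum_l d_l k_l(x_i, x_j)$
(Figure: the combined kernel as a weighted sum, with weights $d_1, d_2, d_3$, of three base kernels.)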

Problem Statement
• Most multiple kernel learning formulations are restricted to linear combinations of kernels subject to either $l_1$ or $l_2$ regularization.
• In this thesis, we address the problem of learning the kernel as a non-linear combination of base kernels subject to general regularization.
• We investigate several applications that use non-linear kernel combinations.

Literature Survey
• Kernel Target Alignment
• Semi-Definite Programming MKL (SDP)
• Block $l_1$-MKL (M-Y regularization + SMO)
• Semi-Infinite Linear Programming MKL (SILP)
• SimpleMKL (gradient descent)
• Hyperkernels (SDP/SOCP)
• Multi-class MKL
• Hierarchical MKL
• Local MKL
• Mixed-norm MKL (mirror descent)

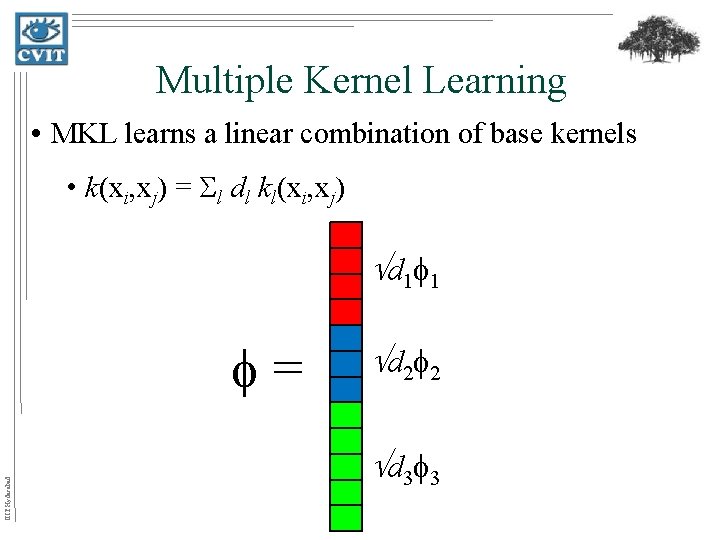

Multiple Kernel Learning
• MKL learns a linear combination of base kernels: $k(x_i, x_j) = \sum_l d_l k_l(x_i, x_j)$
(Figure: the combined kernel as a weighted sum, with weights $d_1, d_2, d_3$, of three base kernels.)

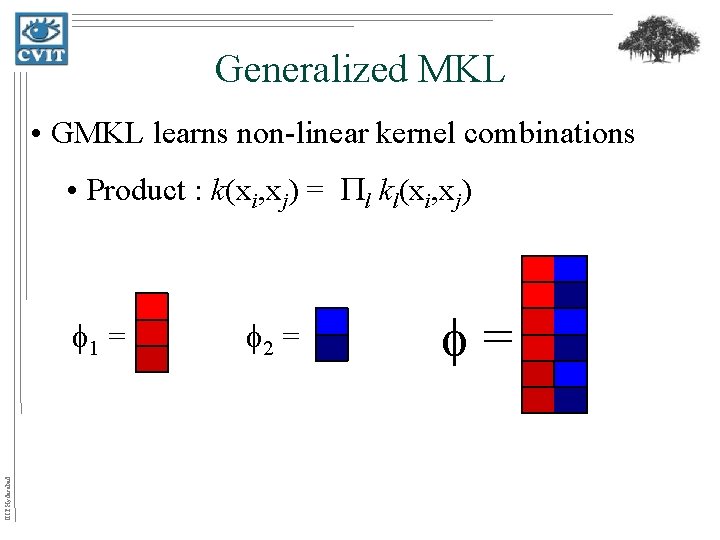

Generalized MKL
• GMKL learns non-linear kernel combinations.
• Product: $k(x_i, x_j) = \prod_l k_l(x_i, x_j)$
(Figure: the combined kernel formed as the product of base kernels.)
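At the level of kernel matrices, the linear combination adds base kernel matrices while the product combination multiplies them entry by entry (a Hadamard product, which keeps positive semi-definite matrices positive semi-definite). A minimal sketch on hypothetical $2\times 2$ base kernel matrices shows the practical difference: under the product, two points look similar only if every base kernel finds them similar, whereas in the sum one similar kernel can compensate for a dissimilar one.

```python
def combine_sum(Ks, d):
    """MKL-style combination: K = sum_l d_l K_l."""
    n = len(Ks[0])
    return [[sum(dl * K[i][j] for dl, K in zip(d, Ks)) for j in range(n)] for i in range(n)]


def combine_product(Ks):
    """GMKL-style product combination: K_ij = prod_l (K_l)_ij (Hadamard product)."""
    n = len(Ks[0])
    out = [[1.0] * n for _ in range(n)]
    for K in Ks:
        for i in range(n):
            for j in range(n):
                out[i][j] *= K[i][j]
    return out


# Hypothetical base kernel matrices for two points:
# kernel 1 finds them similar (0.9), kernel 2 finds them dissimilar (0.1).
K1 = [[1.0, 0.9], [0.9, 1.0]]
K2 = [[1.0, 0.1], [0.1, 1.0]]
K_sum = combine_sum([K1, K2], [0.5, 0.5])
K_prod = combine_product([K1, K2])
print(K_sum[0][1], K_prod[0][1])
```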

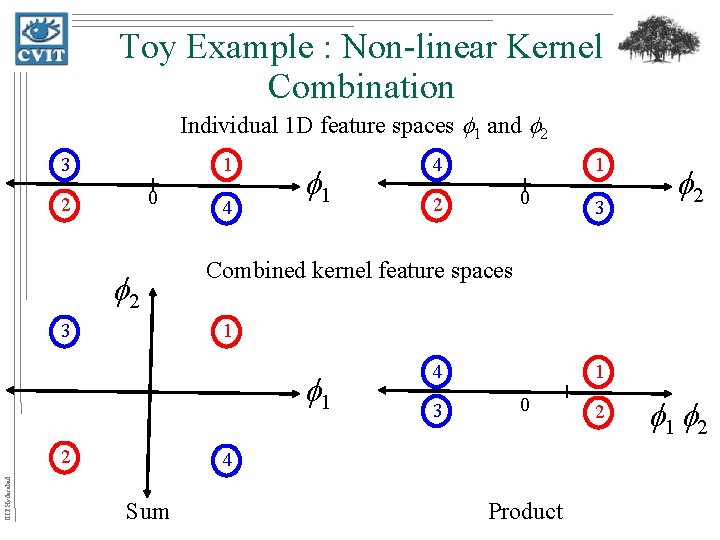

Toy Example: Non-linear Kernel Combination
(Figure: individual 1-D feature spaces $\phi_1$ and $\phi_2$, and the combined-kernel feature spaces obtained from their sum and from their product.)

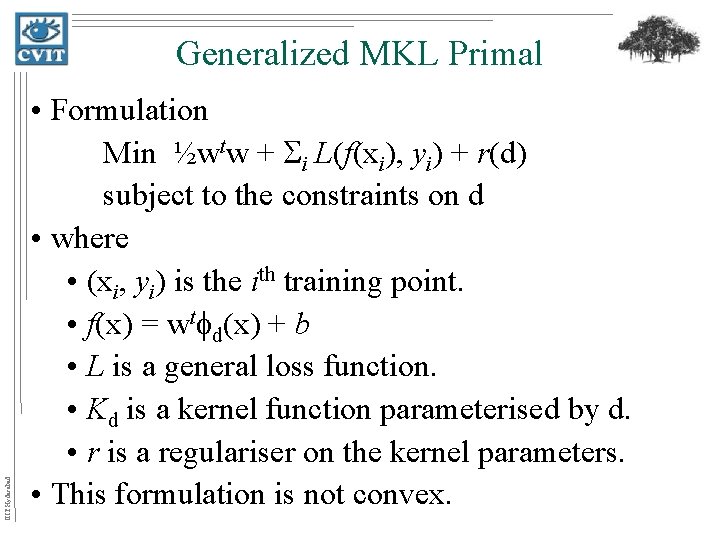

Generalized MKL Primal
• Formulation: $\min_{w, b, d} \frac{1}{2}w^t w + \sum_i L(f(x_i), y_i) + r(d)$, subject to the constraints on $d$,
• where
  – $(x_i, y_i)$ is the $i$th training point,
  – $f(x) = w^t \phi_d(x) + b$,
  – $L$ is a general loss function,
  – $K_d$ is a kernel function parameterised by $d$,
  – $r$ is a regulariser on the kernel parameters.
• This formulation is not convex.

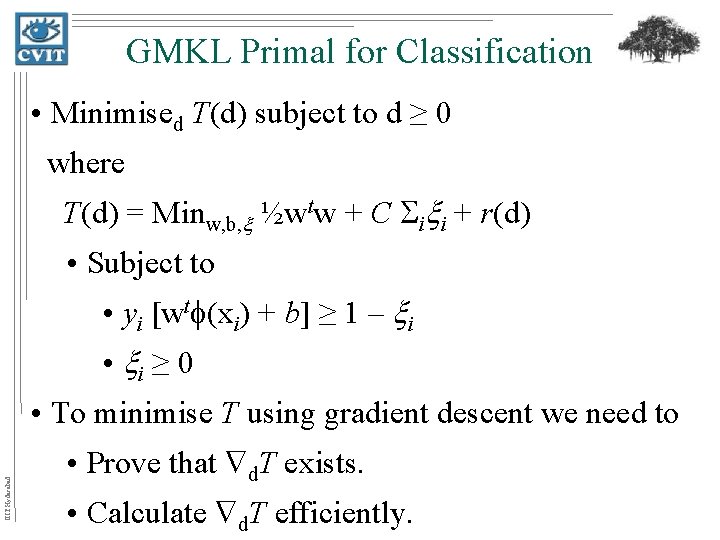

GMKL Primal for Classification
• Minimise $T(d)$ subject to $d \ge 0$, where
  $T(d) = \min_{w, b, \xi} \frac{1}{2}w^t w + C\sum_i \xi_i + r(d)$
  subject to $y_i\,[w^t \phi_d(x_i) + b] \ge 1 - \xi_i$ and $\xi_i \ge 0$
• To minimise $T$ using gradient descent we need to
  – prove that $\nabla_d T$ exists, and
  – calculate $\nabla_d T$ efficiently.
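For fixed $w$, $b$ and $d$, the optimal slack in $T(d)$ is $\xi_i = \max(0, 1 - y_i[w^t\phi_d(x_i) + b])$, so the inner objective reduces to a hinge-loss expression. The sketch below evaluates it under two assumptions that are not taken from the slides: the feature map $\phi_d(x) = (\sqrt{d_l}\,x_l)_l$, which corresponds to the weighted linear kernel $k_d(x, z) = \sum_l d_l x_l z_l$, and the regulariser $r(d) = \lambda \sum_l d_l$. It only evaluates the objective; minimising it over $w$ and $b$ is the SVM solver's job.

```python
import math


def phi_d(x, d):
    """Assumed feature map phi_d(x) = (sqrt(d_l) x_l)_l, i.e. k_d(x, z) = sum_l d_l x_l z_l."""
    return [math.sqrt(dl) * xl for dl, xl in zip(d, x)]


def primal_objective(w, b, d, X, y, C=1.0, lam=1.0):
    """1/2 w^t w + C sum_i xi_i + r(d), with each xi_i at its optimal (hinge) value
    and the assumed regulariser r(d) = lam * sum_l d_l."""
    if any(dl < 0 for dl in d):
        raise ValueError("d must satisfy d >= 0")
    slack = 0.0
    for xi, yi in zip(X, y):
        f = sum(wk * pk for wk, pk in zip(w, phi_d(xi, d))) + b
        slack += max(0.0, 1.0 - yi * f)  # xi_i = hinge loss of point i
    return 0.5 * sum(wk * wk for wk in w) + C * slack + lam * sum(d)


X = [[1.0, 0.0], [0.0, 1.0]]
y = [1, -1]
w, b = [1.0, -1.0], 0.0
print(primal_objective(w, b, [1.0, 1.0], X, y), primal_objective(w, b, [0.25, 1.0], X, y))
```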

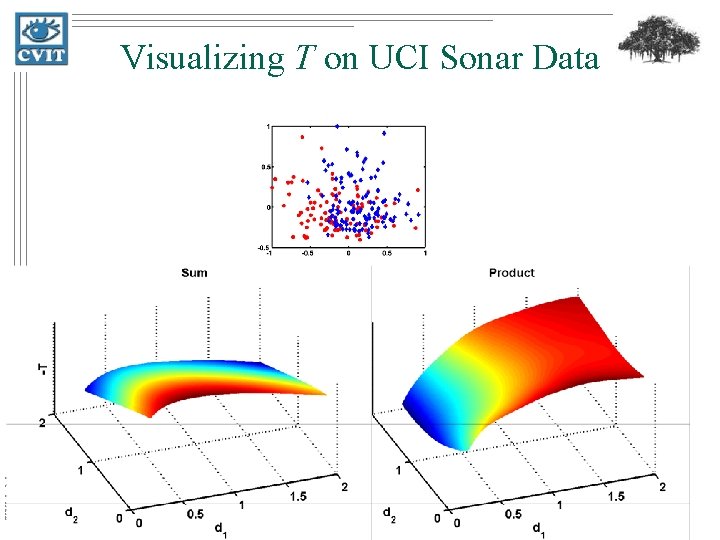

Visualizing $T$ on UCI Sonar Data

Dual: Differentiability
• $W(d) = r(d) + \max_\alpha \; 1^t \alpha - \frac{1}{2}\alpha^t Y K_d Y \alpha$, where $Y = \mathrm{diag}(y)$,
  subject to $1^t Y \alpha = 0$ and $0 \le \alpha \le C$
• $T(d) = W(d)$ by the principle of strong duality.
• Differentiability with respect to $d$ comes from Danskin's Theorem [Danskin 1947].

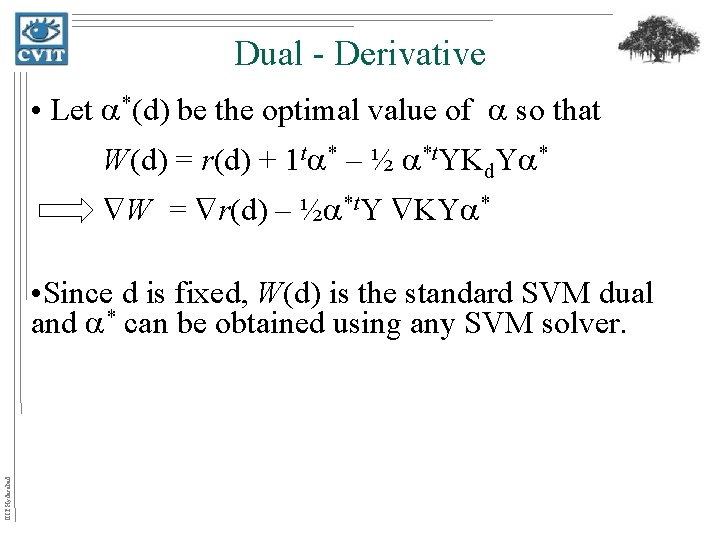

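Dual: Derivative
• Let $\alpha^*(d)$ be the optimal $\alpha$, so that $W(d) = r(d) + 1^t \alpha^* - \frac{1}{2}\alpha^{*t} Y K_d Y \alpha^*$
• $\dfrac{\partial W}{\partial d_k} = \dfrac{\partial r}{\partial d_k} - \frac{1}{2}\alpha^{*t} Y \dfrac{\partial K_d}{\partial d_k} Y \alpha^*$
• For a fixed $d$, $W(d)$ is the standard SVM dual, so $\alpha^*$ can be obtained using any SVM solver.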
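The derivative formula can be checked numerically. The sketch assumes the feature-selection kernel used later in the applications, $K_{ij} = \prod_l \exp(-d_l (x_{il} - x_{jl})^2)$, for which $\partial K_{ij}/\partial d_k = -(x_{ik} - x_{jk})^2 K_{ij}$, and the regulariser $r(d) = \lambda \sum_l d_l$ (so $\partial r/\partial d_k = \lambda$); both are illustrative choices. The vector `alpha` is a hypothetical stand-in for $\alpha^*$: Danskin's theorem is what justifies differentiating with $\alpha$ held fixed at the optimum, and the check compares the analytic gradient with a central finite difference taken at that fixed $\alpha$.

```python
import math


def product_rbf_matrix(X, d):
    """K_ij = prod_l exp(-d_l (x_il - x_jl)^2)."""
    return [[math.exp(-sum(dl * (a - b) ** 2 for dl, a, b in zip(d, xi, xj))) for xj in X]
            for xi in X]


def dual_objective(alpha, y, X, d, lam):
    """r(d) + 1^t alpha - 1/2 alpha^t Y K_d Y alpha at a fixed alpha, with r(d) = lam * sum(d)."""
    K = product_rbf_matrix(X, d)
    n = len(X)
    quad = sum(alpha[i] * alpha[j] * y[i] * y[j] * K[i][j] for i in range(n) for j in range(n))
    return lam * sum(d) + sum(alpha) - 0.5 * quad


def grad_W(alpha, y, X, d, lam):
    """dW/dd_k = dr/dd_k - 1/2 alpha^t Y (dK/dd_k) Y alpha, with alpha held at alpha*."""
    K = product_rbf_matrix(X, d)
    n = len(X)
    grad = []
    for k in range(len(d)):
        # dK_ij/dd_k = -(x_ik - x_jk)^2 * K_ij for this kernel
        quad = sum(alpha[i] * alpha[j] * y[i] * y[j] * (-(X[i][k] - X[j][k]) ** 2) * K[i][j]
                   for i in range(n) for j in range(n))
        grad.append(lam - 0.5 * quad)
    return grad


X = [[0.0, 1.0], [1.0, 0.5], [2.0, -1.0]]
y = [1, -1, 1]
alpha = [0.3, 0.5, 0.2]  # hypothetical stand-in for alpha*
d = [0.5, 1.5]
lam = 0.1
print(grad_W(alpha, y, X, d, lam))
```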

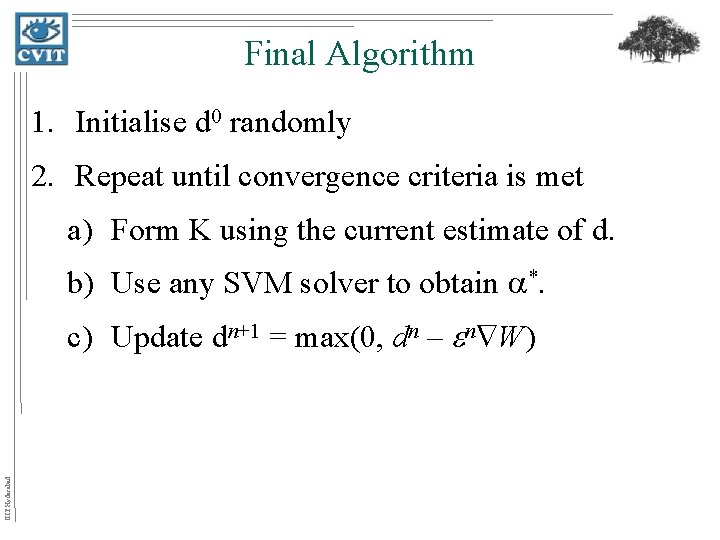

Final Algorithm
1. Initialise $d^0$ randomly.
2. Repeat until the convergence criterion is met:
   a) Form $K$ using the current estimate of $d$.
   b) Use any SVM solver to obtain $\alpha^*$.
   c) Update $d^{n+1} = \max(0, d^n - s_n \nabla_d W)$, where $s_n$ is the step size.
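The loop above is projected gradient descent on $d$. A minimal sketch, with the SVM solver and the gradient passed in as callables (`solve_svm_dual` and `grad_W` are hypothetical names) so that any solver can be plugged in, as the slide suggests. A fixed step size stands in for $s_n$ (a practical implementation would usually choose $s_n$ by a line search), and the stopping rule, largest change in $d$ below `tol`, is also an assumption.

```python
def gmkl_projected_gradient(d0, solve_svm_dual, grad_W, step=0.1, max_iter=1000, tol=1e-6):
    """Projected gradient descent on the kernel weights d (sketch).

    solve_svm_dual(d) -> alpha_star : solves the standard SVM dual for the kernel K_d
    grad_W(d, alpha_star) -> list   : gradient of W at d with alpha fixed at alpha_star
    """
    d = list(d0)
    for _ in range(max_iter):
        alpha_star = solve_svm_dual(d)  # steps (a) and (b): form K_d, solve the SVM
        g = grad_W(d, alpha_star)
        # step (c): gradient step followed by projection onto d >= 0
        d_new = [max(0.0, dl - step * gl) for dl, gl in zip(d, g)]
        if max(abs(a - b) for a, b in zip(d_new, d)) < tol:
            return d_new
        d = d_new
    return d


# Stub check without an SVM solver: W is replaced by the hypothetical quadratic
# (d_1 - 1)^2 + (d_2 + 1)^2, whose unconstrained minimiser has d_2 < 0,
# so the projection should clamp d_2 at zero.
d_final = gmkl_projected_gradient(
    [3.0, 3.0],
    solve_svm_dual=lambda d: None,
    grad_W=lambda d, alpha: [2.0 * (d[0] - 1.0), 2.0 * (d[1] + 1.0)],
)
print(d_final)
```

In a real run, `solve_svm_dual` would build $K_d$ and call an SVM package that accepts a precomputed kernel, and `grad_W` would implement the derivative from the previous slide.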

Applications
• Feature selection
• Learning discriminative parts/pixels for object categorization
• Recognition of characters in images of natural scenes

MKL & Its Applications
• In general, applications exploit one of the following views of MKL:
  – obtaining the optimal weights of the different features used for a task;
  – interpreting the sparsity of the learnt kernel weights;
  – combining multiple heterogeneous data sources.

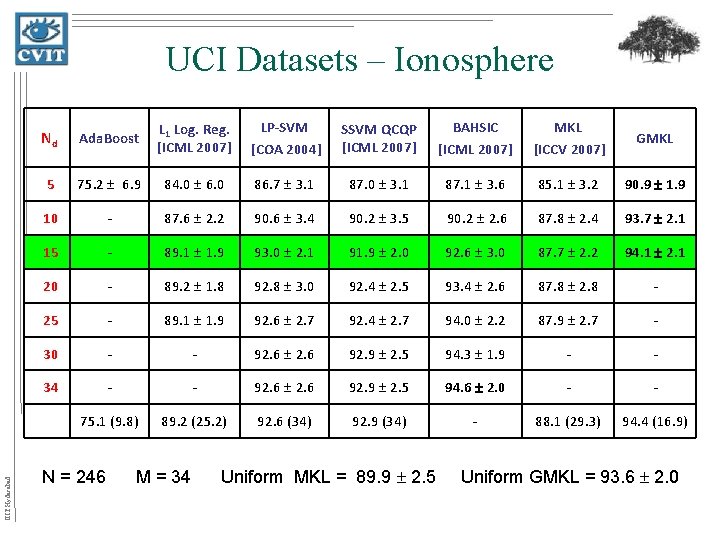

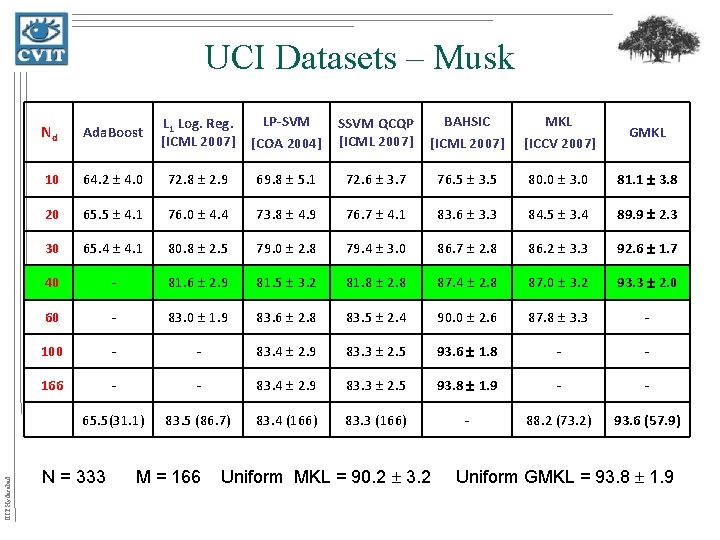

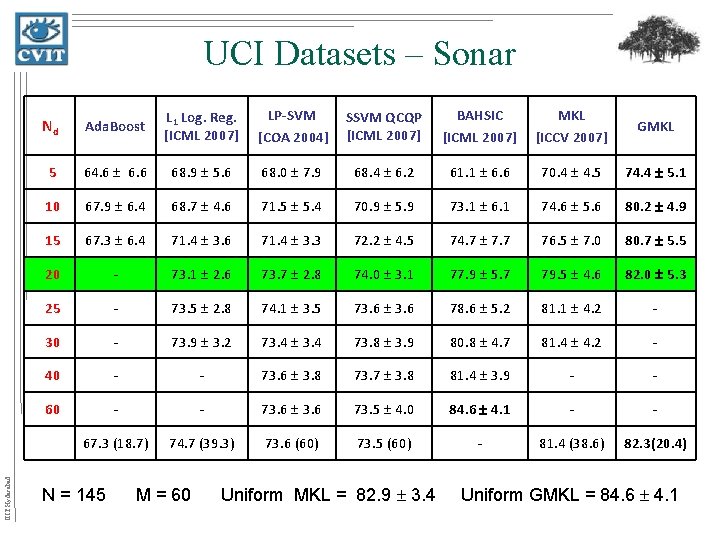

Applications: Feature Selection
• UCI datasets
• $k(x_i, x_j) = \prod_l \exp(-d_l (x_{il} - x_{jl})^2)$
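Because the product of per-feature Gaussians equals $\exp(-\sum_l d_l (x_{il} - x_{jl})^2)$, each weight $d_l$ acts as a learnt scale for feature $l$, and $d_l = 0$ removes the feature from the kernel entirely; the features that keep a non-zero weight are the selected ones. A minimal sketch of the kernel and of reading off the selection from a hypothetical learnt weight vector `d` (`math.prod` needs Python 3.8 or later):

```python
import math


def feature_selection_kernel(x, z, d):
    """k(x, z) = prod_l exp(-d_l (x_l - z_l)^2)."""
    return math.prod(math.exp(-dl * (xl - zl) ** 2) for dl, xl, zl in zip(d, x, z))


def selected_features(d, threshold=0.0):
    """Indices l with d_l > threshold, i.e. the features that still influence the kernel."""
    return [l for l, dl in enumerate(d) if dl > threshold]


d = [1.0, 0.0, 2.0]    # hypothetical learnt weights
x = [0.2, 5.0, -1.0]
z = [0.2, -3.0, -1.0]  # differs from x only in feature 1, whose weight is 0
print(feature_selection_kernel(x, z, d), selected_features(d))
```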

UCI Datasets – Ionosphere (N = 246, M = 34)
Accuracy (mean ± std) against Nd, the number of selected features; the last row gives the accuracy when Nd is learnt, with the average Nd in parentheses.

| Nd | AdaBoost | L1 LogReg [ICML 2007] | LP-SVM [COA 2004] | SSVM QCQP [ICML 2007] | BAHSIC [ICML 2007] | MKL [ICCV 2007] | GMKL |
|---|---|---|---|---|---|---|---|
| 5 | 75.2 ± 6.9 | 84.0 ± 6.0 | 86.7 ± 3.1 | 87.0 ± 3.1 | 87.1 ± 3.6 | 85.1 ± 3.2 | 90.9 ± 1.9 |
| 10 | - | 87.6 ± 2.2 | 90.6 ± 3.4 | 90.2 ± 3.5 | 90.2 ± 2.6 | 87.8 ± 2.4 | 93.7 ± 2.1 |
| 15 | - | 89.1 ± 1.9 | 93.0 ± 2.1 | 91.9 ± 2.0 | 92.6 ± 3.0 | 87.7 ± 2.2 | 94.1 ± 2.1 |
| 20 | - | 89.2 ± 1.8 | 92.8 ± 3.0 | 92.4 ± 2.5 | 93.4 ± 2.6 | 87.8 ± 2.8 | - |
| 25 | - | 89.1 ± 1.9 | 92.6 ± 2.7 | 92.4 ± 2.7 | 94.0 ± 2.2 | 87.9 ± 2.7 | - |
| 30 | - | - | 92.6 | 92.9 ± 2.5 | 94.3 ± 1.9 | - | - |
| 34 | - | - | 92.6 | 92.9 ± 2.5 | 94.6 ± 2.0 | - | - |
| Learnt | 75.1 (9.8) | 89.2 (25.2) | 92.6 (34) | 92.9 (34) | - | 88.1 (29.3) | 94.4 (16.9) |

Uniform MKL = 89.9 ± 2.5; Uniform GMKL = 93.6 ± 2.0


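UCI Datasets – Parkinson's (N = 136, M = 22)
Accuracy (mean ± std) against Nd, the number of selected features; the last row gives the accuracy when Nd is learnt, with the average Nd in parentheses.

| Nd | AdaBoost | L1 LogReg [ICML 2007] | LP-SVM [COA 2004] | SSVM QCQP [ICML 2007] | BAHSIC [ICML 2007] | MKL [ICCV 2007] | GMKL |
|---|---|---|---|---|---|---|---|
| 3 | 79.4 ± 6.5 | 81.7 ± 2.7 | 76.4 ± 4.5 | 76.1 ± 5.8 | 85.2 ± 4.5 | 83.7 ± 4.4 | 86.3 ± 4.1 |
| 7 | - | 82.6 ± 3.3 | 86.2 ± 2.7 | 86.1 ± 4.0 | 88.5 ± 3.6 | 84.7 ± 5.2 | 92.6 ± 2.9 |
| 11 | - | 83.5 ± 2.8 | 86.0 ± 3.5 | 86.1 ± 3.1 | 89.4 ± 3.6 | 86.3 ± 4.3 | - |
| 15 | - | - | 87.0 ± 3.3 | 86.3 ± 3.1 | 89.9 ± 3.5 | - | - |
| 22 | - | - | 87.2 ± 3.2 | 87.2 ± 3.0 | 91.0 ± 3.5 | - | - |
| Learnt | 80.2 (5.2) | 83.6 (11.1) | 87.2 (22) |  | - | 88.3 (14.6) | 92.7 (9.0) |

Uniform MKL = 87.3 ± 3.9; Uniform GMKL = 91.0 ± 3.5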

UCI Datasets – Musk (N = 333, M = 166)
Accuracy (mean ± std) against Nd, the number of selected features; the last row gives the accuracy when Nd is learnt, with the average Nd in parentheses.

| Nd | AdaBoost | L1 LogReg [ICML 2007] | LP-SVM [COA 2004] | SSVM QCQP [ICML 2007] | BAHSIC [ICML 2007] | MKL [ICCV 2007] | GMKL |
|---|---|---|---|---|---|---|---|
| 10 | 64.2 ± 4.0 | 72.8 ± 2.9 | 69.8 ± 5.1 | 72.6 ± 3.7 | 76.5 ± 3.5 | 80.0 ± 3.0 | 81.1 ± 3.8 |
| 20 | 65.5 ± 4.1 | 76.0 ± 4.4 | 73.8 ± 4.9 | 76.7 ± 4.1 | 83.6 ± 3.3 | 84.5 ± 3.4 | 89.9 ± 2.3 |
| 30 | 65.4 ± 4.1 | 80.8 ± 2.5 | 79.0 ± 2.8 | 79.4 ± 3.0 | 86.7 ± 2.8 | 86.2 ± 3.3 | 92.6 ± 1.7 |
| 40 | - | 81.6 ± 2.9 | 81.5 ± 3.2 | 81.8 ± 2.8 | 87.4 ± 2.8 | 87.0 ± 3.2 | 93.3 ± 2.0 |
| 60 | - | 83.0 ± 1.9 | 83.6 ± 2.8 | 83.5 ± 2.4 | 90.0 ± 2.6 | 87.8 ± 3.3 | - |
| 100 | - | - | 83.4 ± 2.9 | 83.3 ± 2.5 | 93.6 ± 1.8 | - | - |
| 166 | - | - | 83.4 ± 2.9 | 83.3 ± 2.5 | 93.8 ± 1.9 | - | - |
| Learnt | 65.5 (31.1) | 83.5 (86.7) | 83.4 (166) | 83.3 (166) | - | 88.2 (73.2) | 93.6 (57.9) |

Uniform MKL = 90.2 ± 3.2; Uniform GMKL = 93.8 ± 1.9

UCI Datasets – Sonar (N = 145, M = 60)
Accuracy (mean ± std) against Nd, the number of selected features; the last row gives the accuracy when Nd is learnt, with the average Nd in parentheses.

| Nd | AdaBoost | L1 LogReg [ICML 2007] | LP-SVM [COA 2004] | SSVM QCQP [ICML 2007] | BAHSIC [ICML 2007] | MKL [ICCV 2007] | GMKL |
|---|---|---|---|---|---|---|---|
| 5 | 64.6 ± 6.6 | 68.9 ± 5.6 | 68.0 ± 7.9 | 68.4 ± 6.2 | 61.1 ± 6.6 | 70.4 ± 4.5 | 74.4 ± 5.1 |
| 10 | 67.9 ± 6.4 | 68.7 ± 4.6 | 71.5 ± 5.4 | 70.9 ± 5.9 | 73.1 ± 6.1 | 74.6 ± 5.6 | 80.2 ± 4.9 |
| 15 | 67.3 ± 6.4 | 71.4 ± 3.6 | 71.4 ± 3.3 | 72.2 ± 4.5 | 74.7 ± 7.7 | 76.5 ± 7.0 | 80.7 ± 5.5 |
| 20 | - | 73.1 ± 2.6 | 73.7 ± 2.8 | 74.0 ± 3.1 | 77.9 ± 5.7 | 79.5 ± 4.6 | 82.0 ± 5.3 |
| 25 | - | 73.5 ± 2.8 | 74.1 ± 3.5 | 73.6 | 78.6 ± 5.2 | 81.1 ± 4.2 | - |
| 30 | - | 73.9 ± 3.2 | 73.4 | 73.8 ± 3.9 | 80.8 ± 4.7 | 81.4 ± 4.2 | - |
| 40 | - | - | 73.6 ± 3.8 | 73.7 ± 3.8 | 81.4 ± 3.9 | - | - |
| 60 | - | - | 73.6 | 73.5 ± 4.0 | 84.6 ± 4.1 | - | - |
| Learnt | 67.3 (18.7) | 74.7 (39.3) | 73.6 (60) | 73.5 (60) | - | 81.4 (38.6) | 82.3 (20.4) |

Uniform MKL = 82.9 ± 3.4; Uniform GMKL = 84.6 ± 4.1

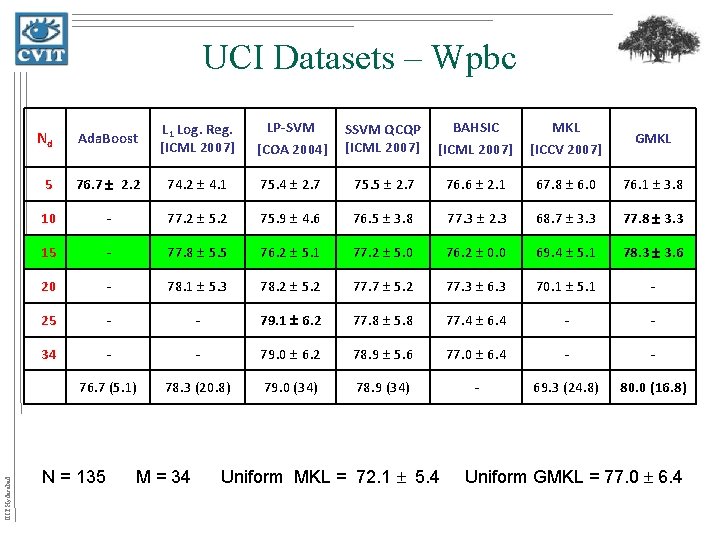

UCI Datasets – Wpbc (N = 135, M = 34)
Accuracy (mean ± std) against Nd, the number of selected features; the last row gives the accuracy when Nd is learnt, with the average Nd in parentheses.

| Nd | AdaBoost | L1 LogReg [ICML 2007] | LP-SVM [COA 2004] | SSVM QCQP [ICML 2007] | BAHSIC [ICML 2007] | MKL [ICCV 2007] | GMKL |
|---|---|---|---|---|---|---|---|
| 5 | 76.7 ± 2.2 | 74.2 ± 4.1 | 75.4 ± 2.7 | 75.5 ± 2.7 | 76.6 ± 2.1 | 67.8 ± 6.0 | 76.1 ± 3.8 |
| 10 | - | 77.2 ± 5.2 | 75.9 ± 4.6 | 76.5 ± 3.8 | 77.3 ± 2.3 | 68.7 ± 3.3 | 77.8 ± 3.3 |
| 15 | - | 77.8 ± 5.5 | 76.2 ± 5.1 | 77.2 ± 5.0 | 76.2 ± 0.0 | 69.4 ± 5.1 | 78.3 ± 3.6 |
| 20 | - | 78.1 ± 5.3 | 78.2 ± 5.2 | 77.7 ± 5.2 | 77.3 ± 6.3 | 70.1 ± 5.1 | - |
| 25 | - | - | 79.1 ± 6.2 | 77.8 ± 5.8 | 77.4 ± 6.4 | - | - |
| 34 | - | - | 79.0 ± 6.2 | 78.9 ± 5.6 | 77.0 ± 6.4 | - | - |
| Learnt | 76.7 (5.1) | 78.3 (20.8) | 79.0 (34) | 78.9 (34) | - | 69.3 (24.8) | 80.0 (16.8) |

Uniform MKL = 72.1 ± 5.4; Uniform GMKL = 77.0 ± 6.4

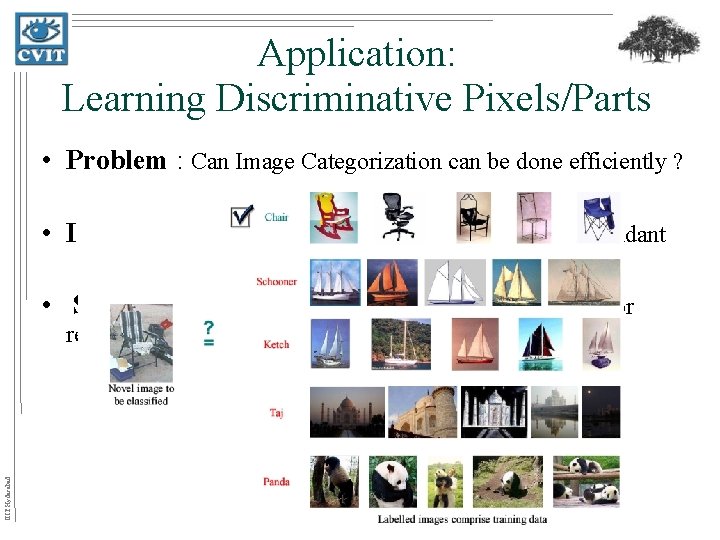

Application: Learning Discriminative Pixels/Parts
• Problem: can image categorization be done efficiently?
• Idea: the information present in an image is often redundant.
• Solution: yes, by focusing on only a subset of the pixels or regions in an image.

Solution
• A kernel is associated with each part.

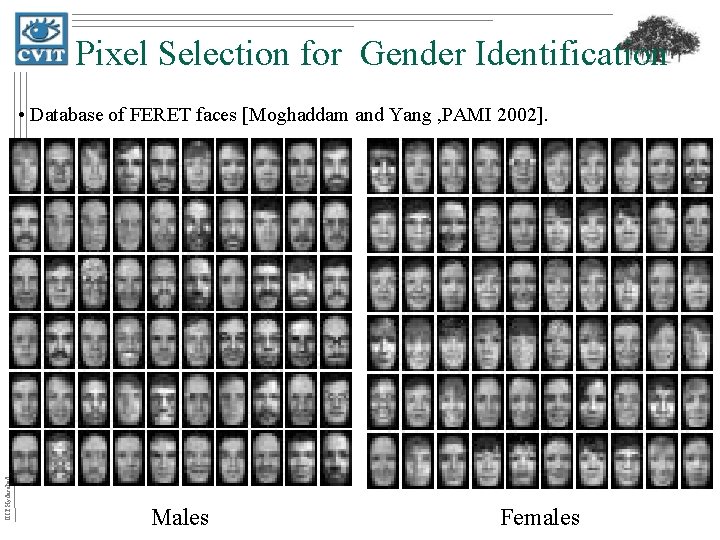

Pixel Selection for Gender Identification
• Database of FERET faces [Moghaddam and Yang, PAMI 2002]
(Figure: example male and female faces.)

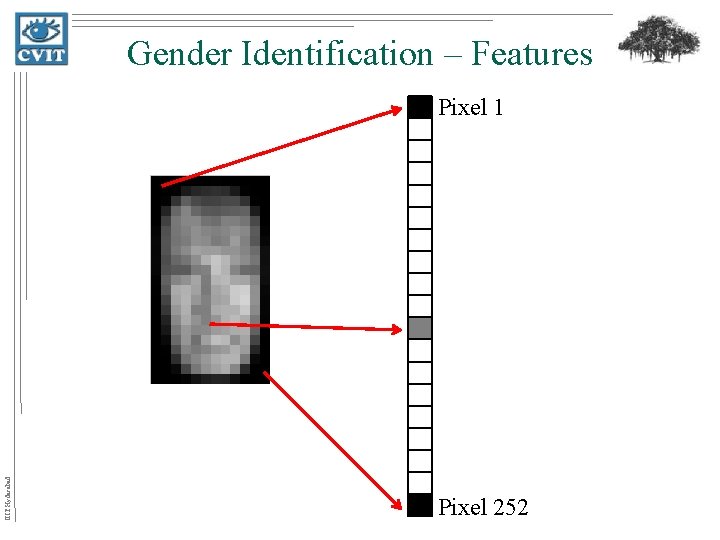

Gender Identification – Features
(Figure: the individual pixels, Pixel 1 to Pixel 252, used as features.)

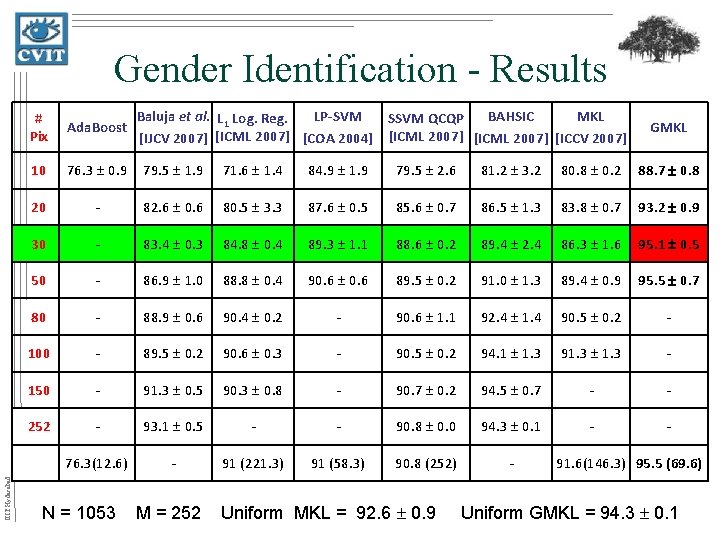

Gender Identification – Results (N = 1053, M = 252)
Accuracy (mean ± std) against the number of selected pixels; the last row gives the accuracy when the number of pixels is learnt, with the average number in parentheses.

| # Pix | AdaBoost | Baluja et al. [IJCV 2007] | L1 LogReg [ICML 2007] | LP-SVM [COA 2004] | SSVM QCQP [ICML 2007] | BAHSIC [ICML 2007] | MKL [ICCV 2007] | GMKL |
|---|---|---|---|---|---|---|---|---|
| 10 | 76.3 ± 0.9 | 79.5 ± 1.9 | 71.6 ± 1.4 | 84.9 ± 1.9 | 79.5 ± 2.6 | 81.2 ± 3.2 | 80.8 ± 0.2 | 88.7 ± 0.8 |
| 20 | - | 82.6 ± 0.6 | 80.5 ± 3.3 | 87.6 ± 0.5 | 85.6 ± 0.7 | 86.5 ± 1.3 | 83.8 ± 0.7 | 93.2 ± 0.9 |
| 30 | - | 83.4 ± 0.3 | 84.8 ± 0.4 | 89.3 ± 1.1 | 88.6 ± 0.2 | 89.4 ± 2.4 | 86.3 ± 1.6 | 95.1 ± 0.5 |
| 50 | - | 86.9 ± 1.0 | 88.8 ± 0.4 | 90.6 | 89.5 ± 0.2 | 91.0 ± 1.3 | 89.4 ± 0.9 | 95.5 ± 0.7 |
| 80 | - | 88.9 ± 0.6 | 90.4 ± 0.2 | - | 90.6 ± 1.1 | 92.4 ± 1.4 | 90.5 ± 0.2 | - |
| 100 | - | 89.5 ± 0.2 | 90.6 ± 0.3 | - | 90.5 ± 0.2 | 94.1 ± 1.3 | 91.3 | - |
| 150 | - | 91.3 ± 0.5 | 90.3 ± 0.8 | - | 90.7 ± 0.2 | 94.5 ± 0.7 | - | - |
| 252 | - | 93.1 ± 0.5 | - | - | 90.8 ± 0.0 | 94.3 ± 0.1 | - | - |
| Learnt | 76.3 (12.6) | - | 91 (221.3) | 91 (58.3) | 90.8 (252) | - | 91.6 (146.3) | 95.5 (69.6) |

Uniform MKL = 92.6 ± 0.9; Uniform GMKL = 94.3 ± 0.1

Caltech 101
• Task: object recognition
• Number of classes: 102
• Collected by Fei-Fei et al. [PAMI 2006]
• Problem: the images are not perfectly aligned, but they are roughly aligned.

Approach
• Feature extraction: GIST
(Figure: the image divided into parts, each with its own kernel, Kernel 1 to Kernel 64.)

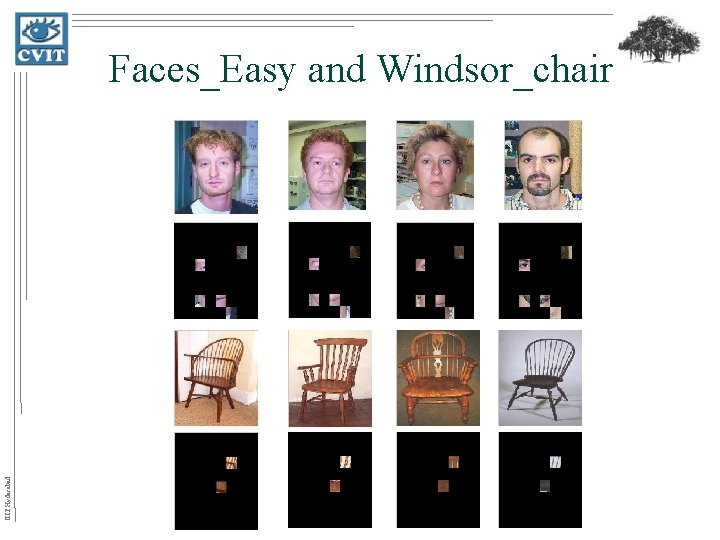

Faces_Easy and Windsor_chair

Car_Side and Leopards

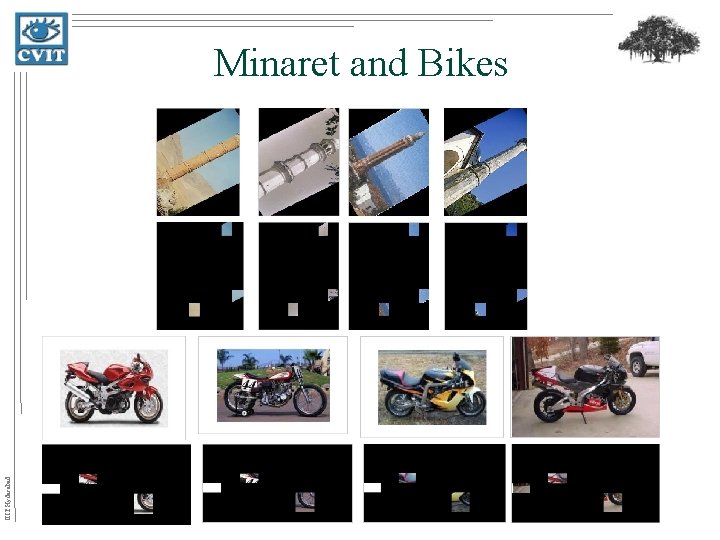

Minaret and Bikes

Problem
• Objective: recognition of (English) characters in images taken in natural scenes.

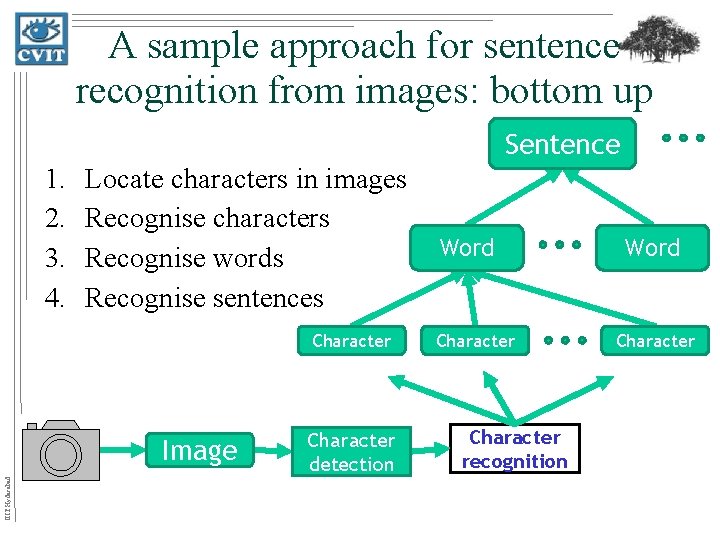

A Sample Approach for Sentence Recognition from Images: Bottom-Up
1. Locate characters in images
2. Recognise characters
3. Recognise words
4. Recognise sentences
(Figure: image → character detection → character recognition → word → sentence.)

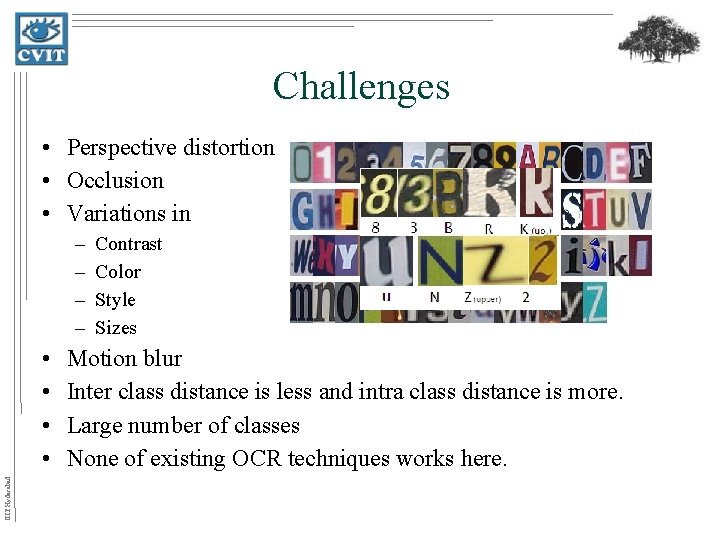

Challenges
• Perspective distortion
• Occlusion
• Variations in
  – contrast
  – color
  – style
  – size
• Motion blur
• Inter-class distance is small and intra-class distance is large.
• Large number of classes
• None of the existing OCR techniques works here.

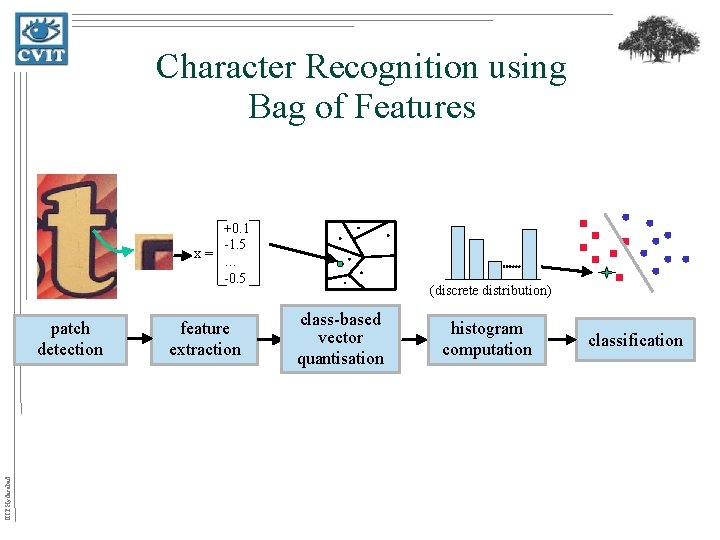

Character Recognition using Bag of Features
(Figure: pipeline of patch detection → feature extraction (each patch described by a feature vector x) → class-based vector quantisation → histogram computation (a discrete distribution) → classification.)
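The quantisation and histogram steps of the pipeline can be sketched in a few lines. The sketch assumes the codebook is already available; with class-based vector quantisation it would be the concatenation of cluster centres learnt separately for each class (the clustering itself is omitted). `bag_of_features` and the toy codebook are hypothetical. The resulting normalised histogram is a discrete distribution, which is why histogram kernels such as the $\chi^2$ kernel suit the classification step.

```python
def nearest_codeword(desc, codebook):
    """Index of the codeword with the smallest squared Euclidean distance to desc."""
    return min(range(len(codebook)),
               key=lambda c: sum((a - b) ** 2 for a, b in zip(desc, codebook[c])))


def bag_of_features(descriptors, codebook):
    """Normalised histogram of codeword assignments (a discrete distribution)."""
    hist = [0.0] * len(codebook)
    for desc in descriptors:
        hist[nearest_codeword(desc, codebook)] += 1.0
    total = sum(hist)
    return [h / total for h in hist] if total > 0 else hist


# Hypothetical 2-D patch descriptors and a 3-word codebook.
codebook = [[0.0, 0.0], [10.0, 10.0], [0.0, 10.0]]
descriptors = [[1.0, 1.0], [9.0, 9.0], [0.0, 0.5], [1.0, 9.0]]
print(bag_of_features(descriptors, codebook))
```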


Feature Extraction Methods
• Geometric Blur [Berg]
• Shape Contexts [Belongie et al.]
• SIFT [Lowe]
• Patches [Varma & Zisserman 07]
• SPIN [Lazebnik et al., Johnson]
• MR8 (maximum response of 8 filters) [Varma & Zisserman 05]

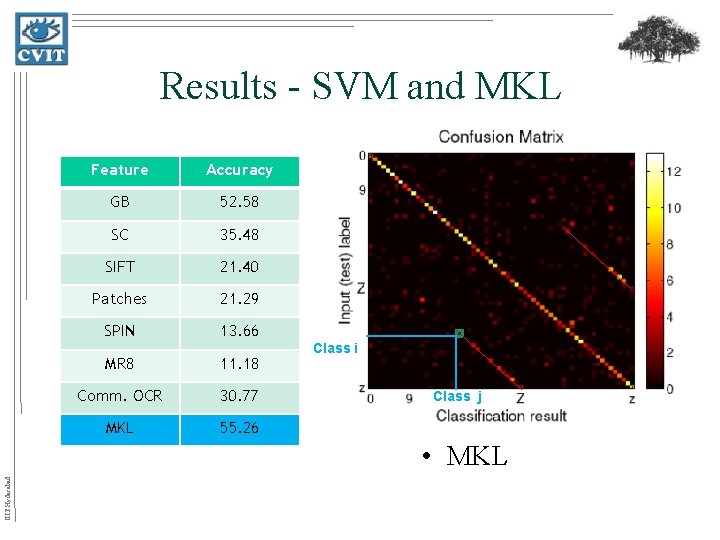

Results: SVM and MKL

| Feature / method | Accuracy |
|---|---|
| Geometric Blur (GB) | 52.58 |
| Shape Contexts (SC) | 35.48 |
| SIFT | 21.40 |
| Patches | 21.29 |
| SPIN | 13.66 |
| MR8 | 11.18 |
| Commercial OCR | 30.77 |
| MKL | 55.26 |

Conclusions
• We presented a formulation that accepts non-linear kernel combinations.
• GMKL results can be significantly better than those of standard MKL.
• We showed several applications in which the proposed formulation performs better than state-of-the-art methods.

Publications
• B. Rakesh Babu, M. Varma and C. V. Jawahar. Learning discriminative parts for object categorization. Unpublished.
• M. Varma and B. Rakesh Babu. More generality in efficient multiple kernel learning. In Proceedings of the International Conference on Machine Learning (ICML), Montreal, Canada, June 2009. (Already cited 9 times.)
• T. E. de Campos, B. Rakesh Babu and M. Varma. Character recognition in natural images. In Proceedings of the International Conference on Computer Vision Theory and Applications (VISAPP), Lisbon, Portugal, February 2009.

Thank You
rakeshbabu@research.iiit.net
