Learning Individual Models for Imputation, Aoqian Zhang

- Slides: 20

Learning Individual Models for Imputation

Aoqian Zhang, Shaoxu Song, Yu Sun, Jianmin Wang

Tsinghua University, China

ICDE 2019

Outline

- Motivation
- Existing methods
- Solution
- Experiments
- Conclusion

Missing Numerical Data

- Missing values over numerical data
  - Failures of sensor reading devices
  - Poor handling of overflow during calculation
  - Mismatches when integrating heterogeneous sources
- How to handle the missing data
  - Simply discarding the incomplete tuples with missing values makes the data even more incomplete
  - Loss of information
  - Imputing the missing data can be useful

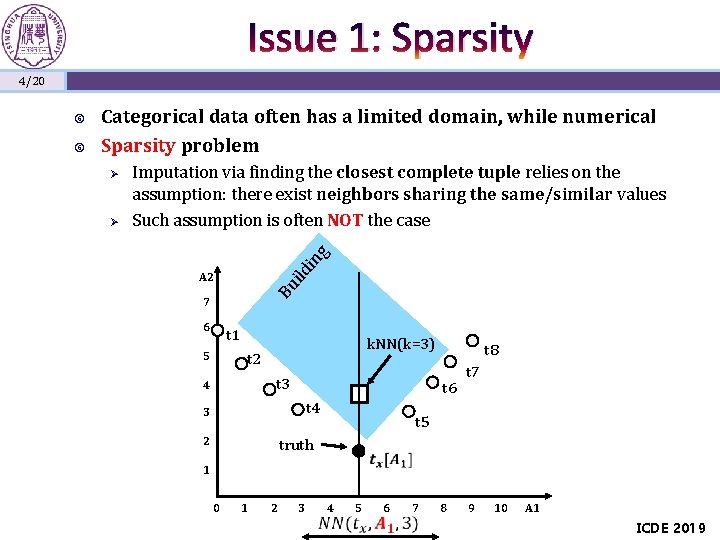

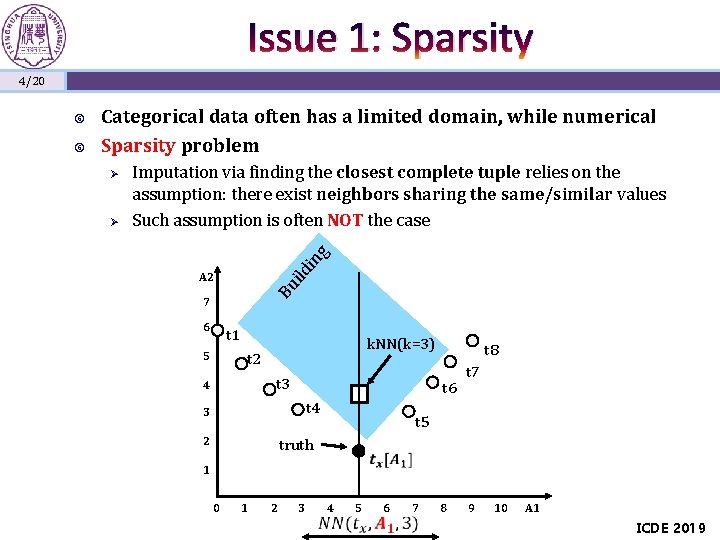

Issue 1: Sparsity

- Imputation via finding the closest complete tuple relies on an assumption: there exist neighbors sharing the same or similar values
- This assumption often does NOT hold
- Sparsity problem: categorical data often has a limited domain, while numerical data does not

[Figure: tuples t1 to t8 plotted over A1 (x-axis) and A2 (y-axis), showing the kNN (k=3) imputation against the truth]

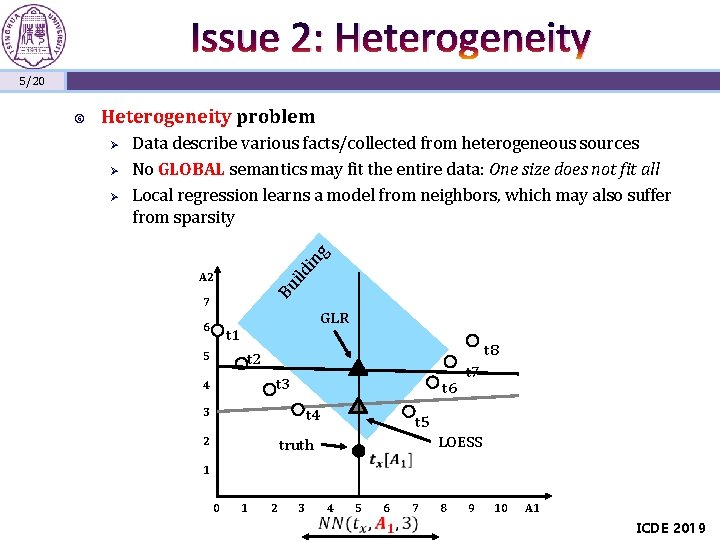

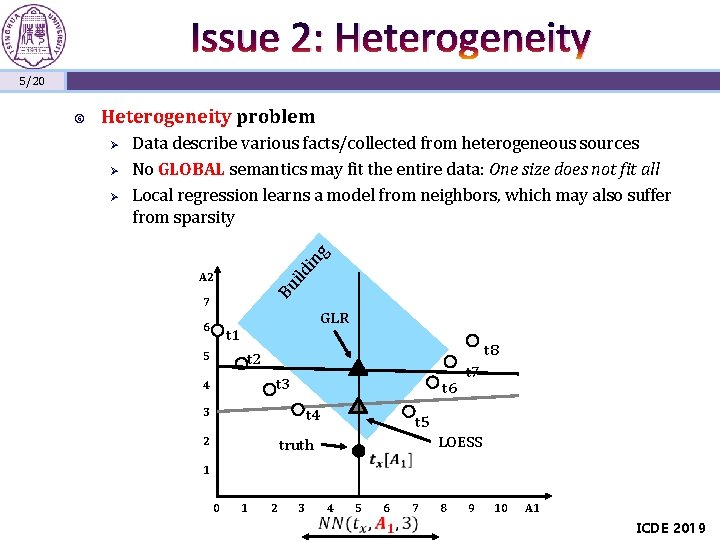
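The kNN imputation discussed on this slide can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `knn_impute`, the Euclidean distance, and the toy data are all assumptions. It imputes the last attribute by averaging the values of the k closest complete tuples, which goes wrong when no complete tuple lies near the incomplete one.

```python
import numpy as np

def knn_impute(complete, x_query, k=3):
    """Impute the missing last attribute of one tuple by the kNN local average.

    complete: (n, m) array of complete tuples; the last column is the
              attribute to impute (A2 in the slide's figure).
    x_query:  (m-1,) array holding the observed attributes of the incomplete tuple.
    """
    X, y = complete[:, :-1], complete[:, -1]
    # Euclidean distance on the observed attributes only (an assumption).
    dist = np.linalg.norm(X - x_query, axis=1)
    nearest = np.argsort(dist, kind="stable")[:k]
    return float(y[nearest].mean())

# Hypothetical data: A2 = 2 * A1, and no complete tuple lies near A1 = 10.
complete = np.array([[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]])
estimate = knn_impute(complete, np.array([10.0]), k=3)
```

On this toy data the three nearest neighbors have A2 values 8, 6 and 4, so the estimate is their average even though the trend in the data points to a much larger value at A1 = 10.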

Issue 2: Heterogeneity

- Heterogeneity problem: data describe various facts or are collected from heterogeneous sources
- No GLOBAL semantics may fit the entire data: one size does not fit all
- Local regression learns a model from neighbors, which may also suffer from sparsity

[Figure: tuples t1 to t8 plotted over A1 (x-axis) and A2 (y-axis), comparing the GLR and LOESS imputations against the truth]

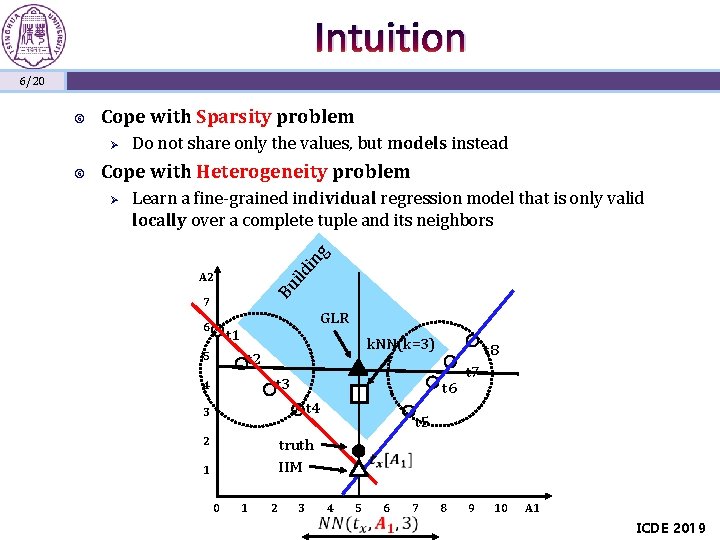

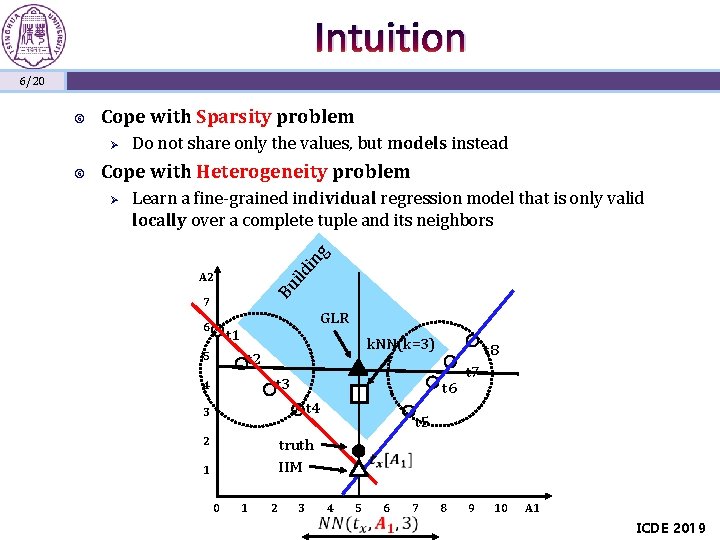
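To make the contrast between a global and a local model concrete, here is a small sketch under stated assumptions. `glr_impute` fits one least-squares line over all complete tuples. `local_impute` fits an unweighted line over the k nearest complete tuples only, which is a simplified stand-in for LOESS (real LOESS weights neighbors by distance). The function names and the two-regime toy data are hypothetical.

```python
import numpy as np

def fit_linear(X, y):
    """Least-squares fit of y = phi[0] + X @ phi[1:]; returns phi."""
    design = np.column_stack([np.ones(len(X)), X])
    phi, *_ = np.linalg.lstsq(design, y, rcond=None)
    return phi

def predict(phi, x):
    return float(phi[0] + np.dot(phi[1:], x))

def glr_impute(complete, x_query):
    """Global regression: one model learned over all complete tuples."""
    return predict(fit_linear(complete[:, :-1], complete[:, -1]), x_query)

def local_impute(complete, x_query, k):
    """Local regression: a model learned over the k nearest complete tuples."""
    X, y = complete[:, :-1], complete[:, -1]
    dist = np.linalg.norm(X - x_query, axis=1)
    nearest = np.argsort(dist, kind="stable")[:k]
    return predict(fit_linear(X[nearest], y[nearest]), x_query)

# Hypothetical heterogeneous data: A2 rises with A1 on the left, falls on the right.
complete = np.array([[1.0, 1.0], [2.0, 2.0], [3.0, 3.0],
                     [7.0, 3.0], [8.0, 2.0], [9.0, 1.0]])
query = np.array([3.0])
global_estimate = glr_impute(complete, query)
local_estimate = local_impute(complete, query, k=3)
```

On this data the global line is flat, because the two regimes cancel out, while the local fit follows the rising regime around the query. The local fit still depends on having enough close neighbors, which is the sparsity caveat stated on the slide.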

Intuition

- Cope with the heterogeneity problem: learn a fine-grained individual regression model that is only valid locally over a complete tuple and its neighbors
- Cope with the sparsity problem: do not share only the values, but models instead

[Figure: tuples t1 to t8 plotted over A1 (x-axis) and A2 (y-axis), comparing the GLR, kNN (k=3) and IIM imputations against the truth]

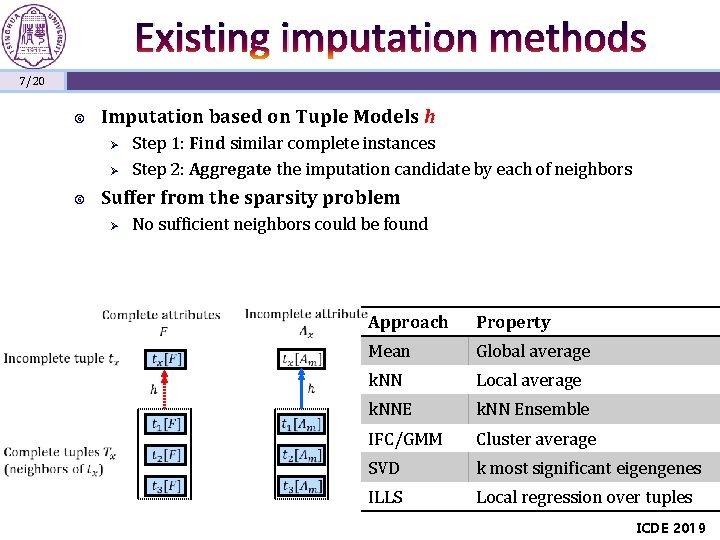

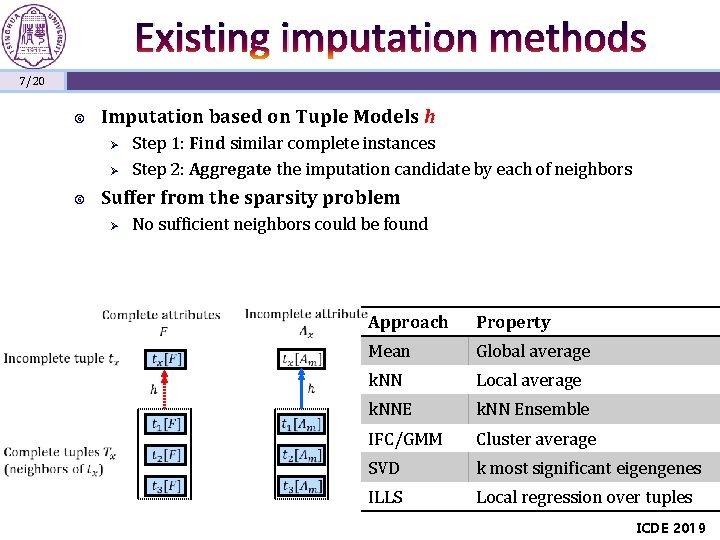

Existing imputation methods

Imputation based on Tuple Models

- Step 1: Find similar complete instances
- Step 2: Aggregate the imputation candidates given by each of the neighbors
- Suffers from the sparsity problem: not enough neighbors can be found

| Approach | Property |
| --- | --- |
| Mean | Global average |
| kNN | Local average |
| kNNE | kNN Ensemble |
| IFC/GMM | Cluster average |
| SVD | k most significant eigengenes |
| ILLS | Local regression over tuples |

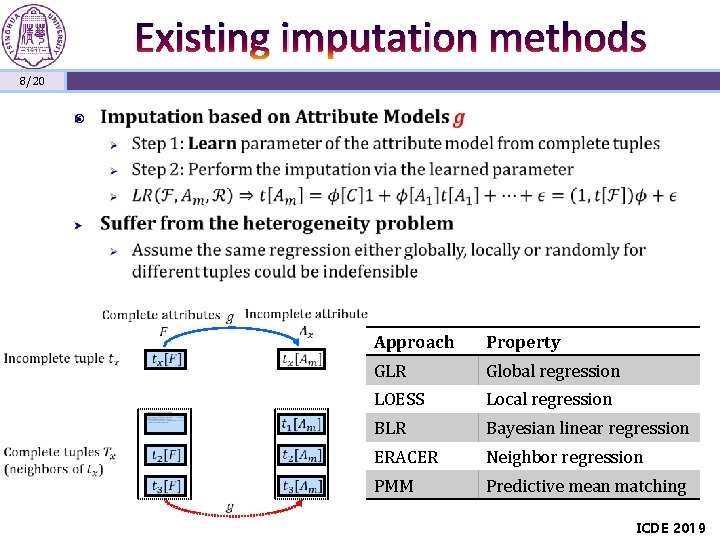

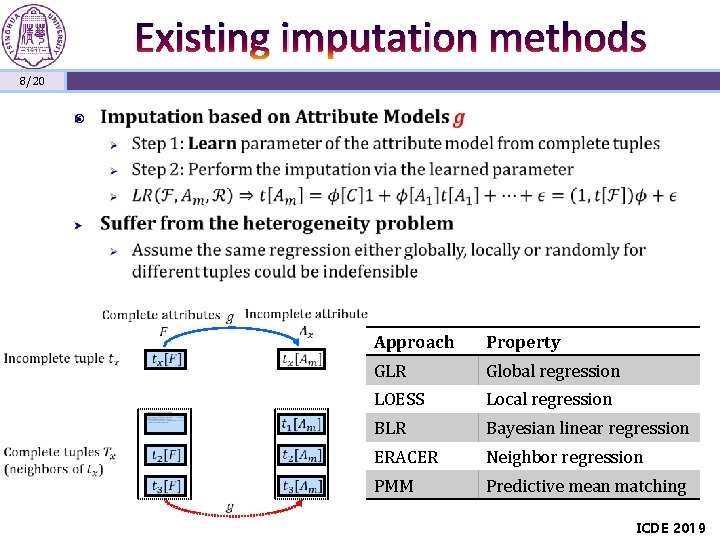

Existing imputation methods

| Approach | Property |
| --- | --- |
| GLR | Global regression |
| LOESS | Local regression |
| BLR | Bayesian linear regression |
| ERACER | Neighbor regression |
| PMM | Predictive mean matching |

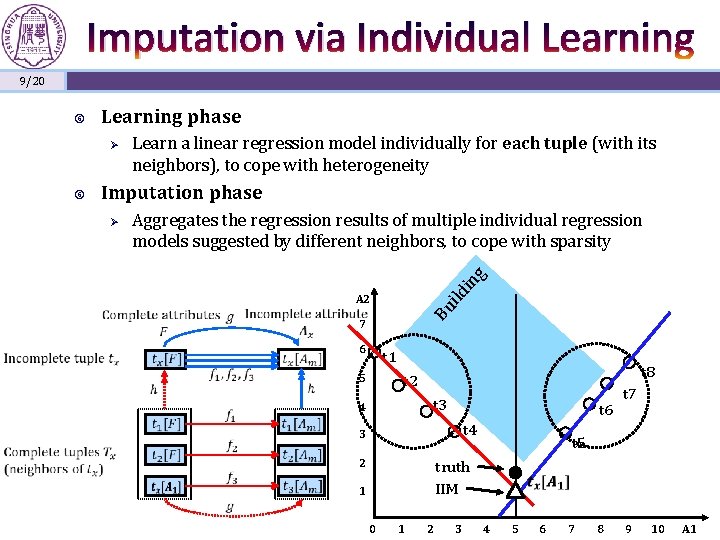

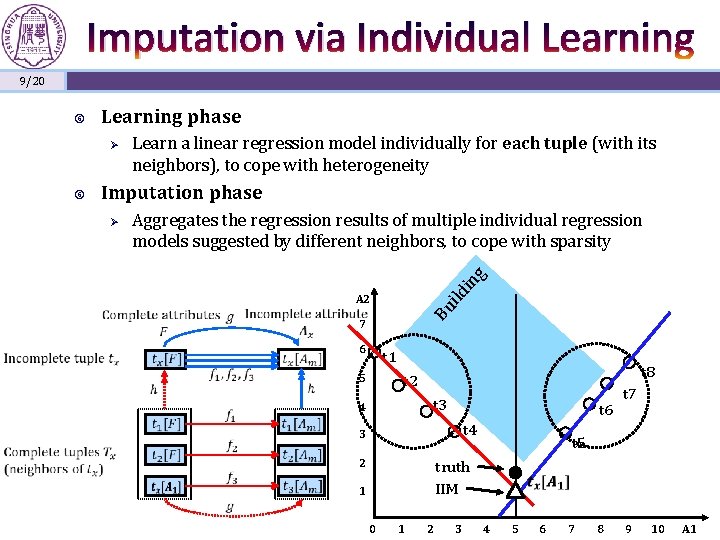

Imputation via Individual Learning

- Learning phase: learn a linear regression model individually for each tuple (with its neighbors), to cope with heterogeneity
- Imputation phase: aggregate the regression results of multiple individual regression models suggested by different neighbors, to cope with sparsity

[Figure: tuples t1 to t8 plotted over A1 (x-axis) and A2 (y-axis), showing the IIM imputation against the truth]

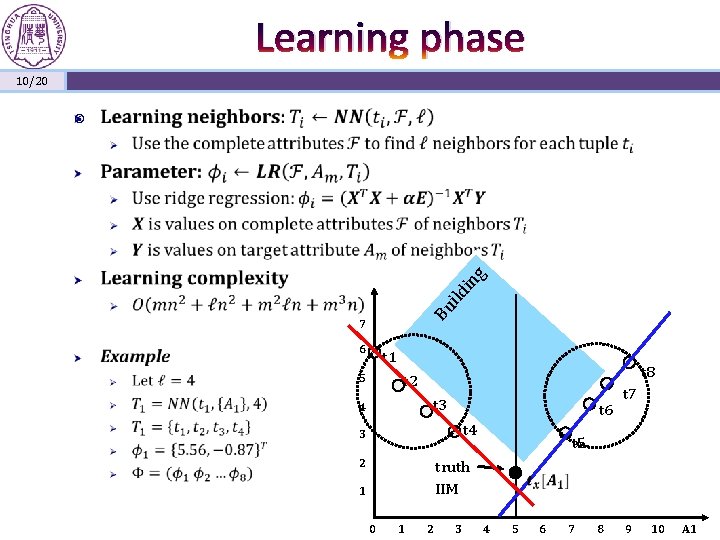

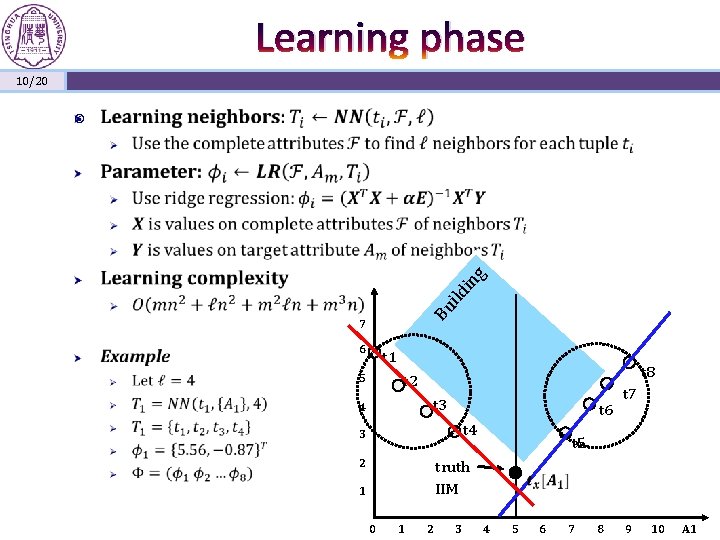
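The two phases on this slide can be sketched roughly as below. This is an illustration under assumptions, not the authors' implementation: the function names, the parameter `n_learn` (number of learning neighbors), the tiny ridge term that keeps each fit solvable, and the plain mean used to aggregate candidates are all hypothetical choices. The slide text only says that candidates are aggregated, without giving the combination rule.

```python
import numpy as np

def nearest_indices(X, x, k):
    """Indices of the k rows of X closest to x (Euclidean distance, assumed)."""
    dist = np.linalg.norm(X - x, axis=1)
    return np.argsort(dist, kind="stable")[:k]

def fit_ridge(X, y, alpha=1e-6):
    """Linear fit with a small ridge term (an assumption, for numerical stability)."""
    design = np.column_stack([np.ones(len(X)), X])
    gram = design.T @ design + alpha * np.eye(design.shape[1])
    return np.linalg.solve(gram, design.T @ y)

def learn_individual_models(complete, n_learn):
    """Learning phase: one regression model per complete tuple and its neighbors."""
    X, y = complete[:, :-1], complete[:, -1]
    models = []
    for i in range(len(X)):
        idx = nearest_indices(X, X[i], n_learn)  # includes tuple i itself
        models.append(fit_ridge(X[idx], y[idx]))
    return np.array(models)

def impute(complete, models, x_query, k):
    """Imputation phase: each of the k nearest tuples suggests a candidate
    through its own model; candidates are averaged (assumed aggregation)."""
    idx = nearest_indices(complete[:, :-1], x_query, k)
    candidates = models[idx, 0] + models[idx, 1:] @ x_query
    return float(candidates.mean())

# Hypothetical data: A2 = 2 * A1, with the incomplete tuple far away at A1 = 10.
complete = np.array([[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]])
models = learn_individual_models(complete, n_learn=3)
estimate = impute(complete, models, np.array([10.0]), k=3)
```

Because the neighbors contribute their models rather than their values, the estimate on this toy data follows the local trend out to A1 = 10 instead of being pulled back toward the neighbors' own A2 values.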

Learning phase

[Figure: tuples t1 to t8 plotted over A1 (x-axis) and A2 (y-axis), showing the IIM imputation against the truth]

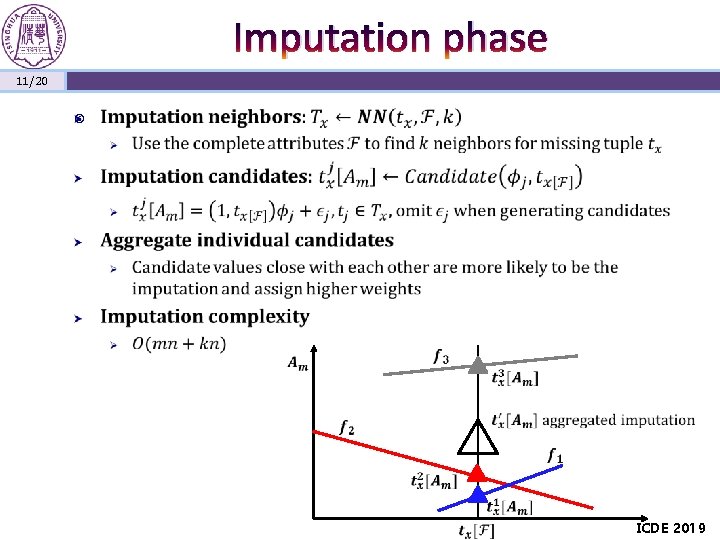

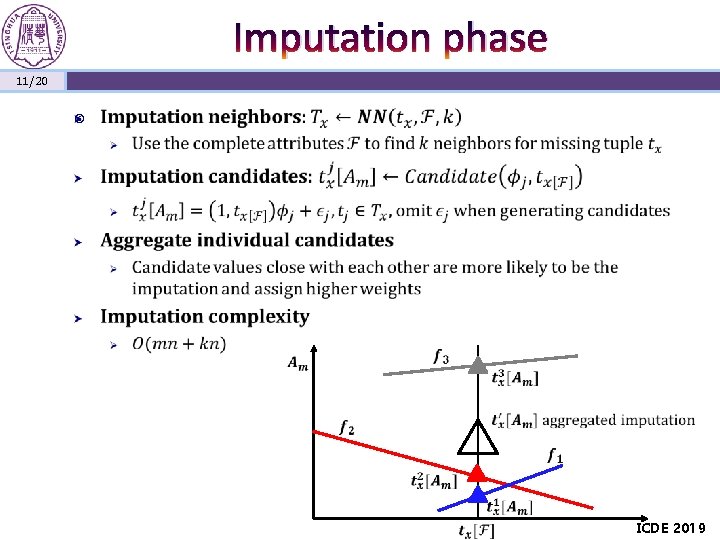

Imputation phase

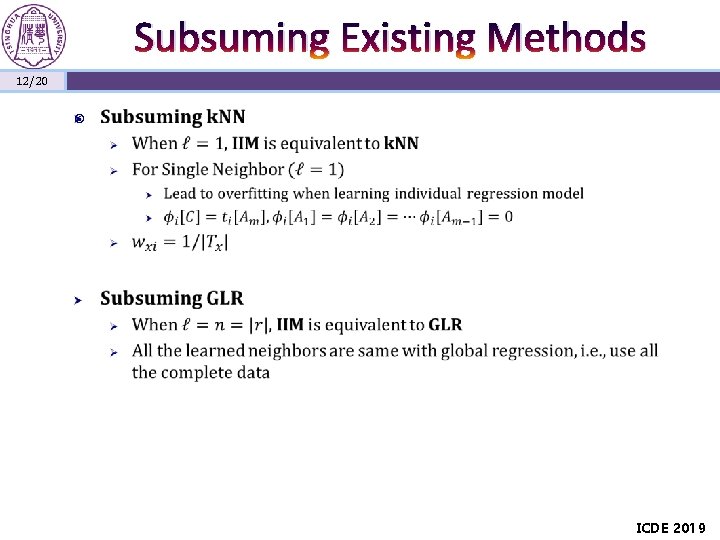

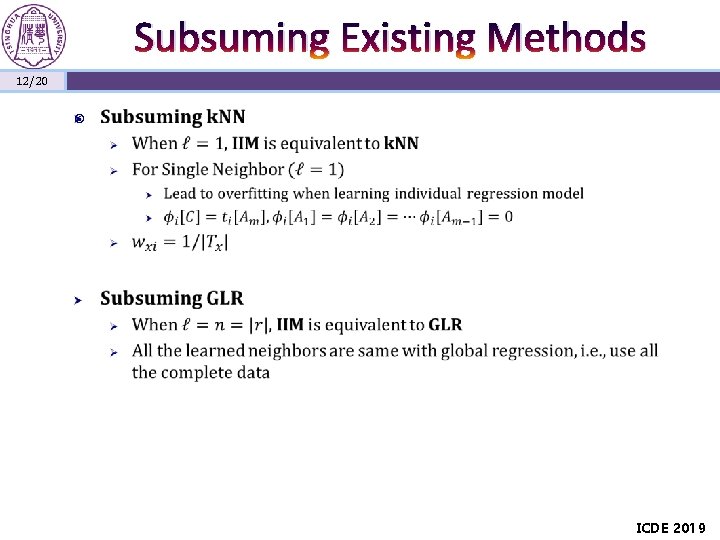

Subsuming Existing Methods

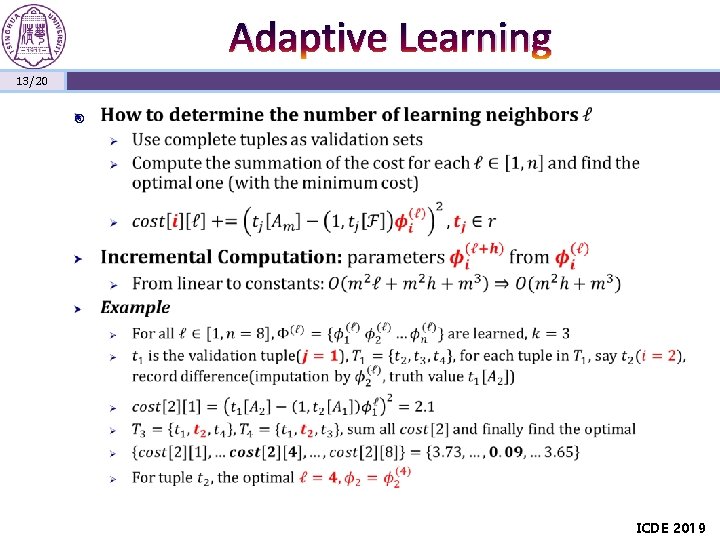

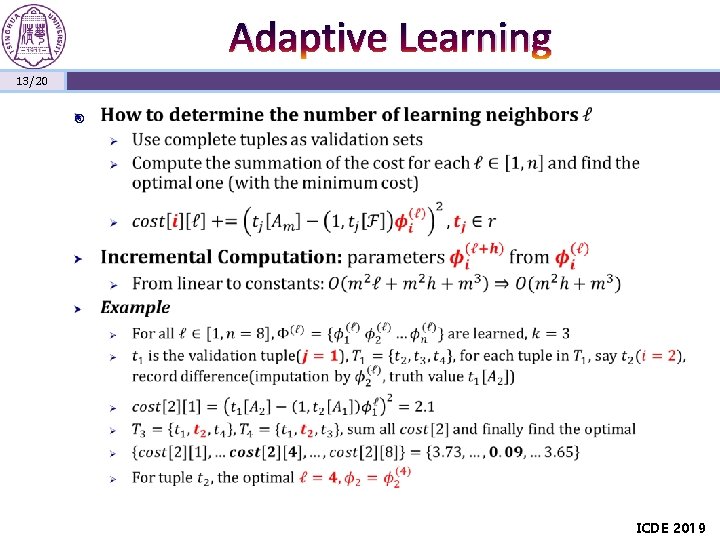

Adaptive Learning

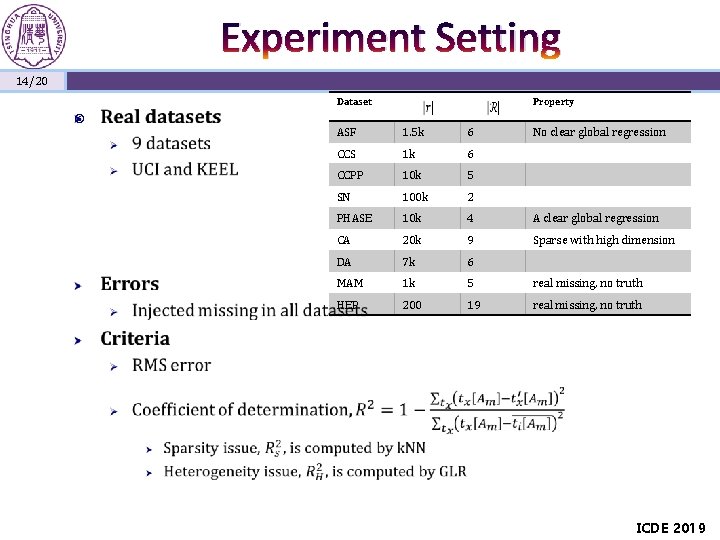

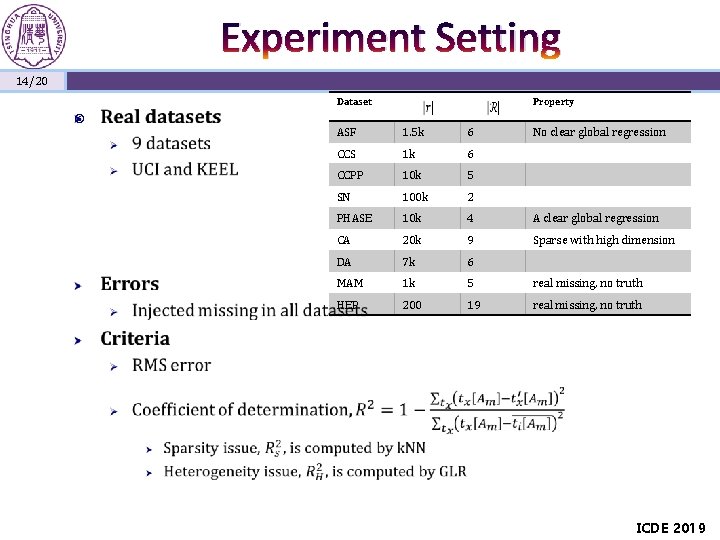

Experiment Setting

| Dataset | # tuples | # attributes | Property |
| --- | --- | --- | --- |
| ASF | 1.5k | 6 | No clear global regression |
| CCS | 1k | 6 | |
| CCPP | 10k | 5 | |
| SN | 100k | 2 | |
| PHASE | 10k | 4 | A clear global regression |
| CA | 20k | 9 | Sparse with high dimension |
| DA | 7k | 6 | |
| MAM | 1k | 5 | real missing, no truth |
| HEP | 200 | 19 | real missing, no truth |

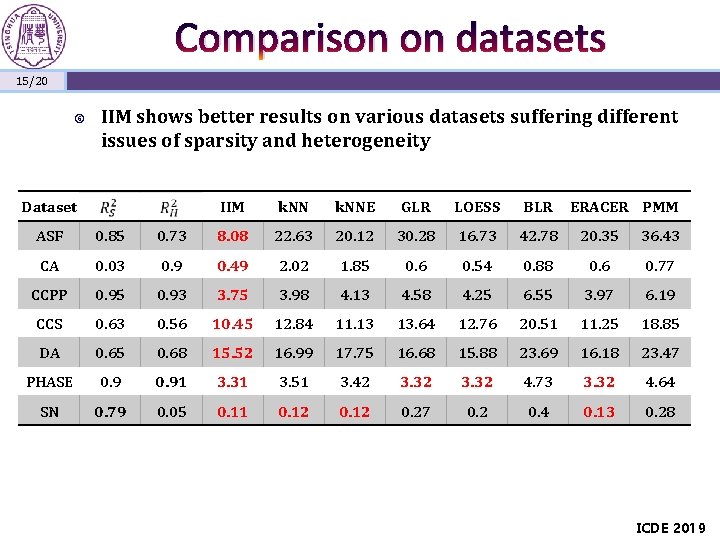

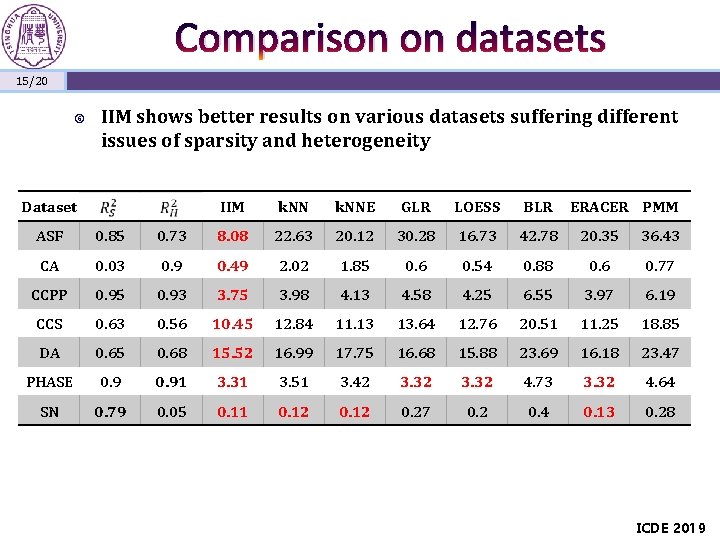

Comparison on datasets

IIM shows better results on various datasets suffering from different issues of sparsity and heterogeneity.

Columns named on the slide: Dataset, IIM, kNNE, GLR, LOESS, BLR, ERACER, PMM. The rows carry more values than there are named columns, so each row is listed in its extracted order:

- ASF: 0.85, 0.73, 8.08, 22.63, 20.12, 30.28, 16.73, 42.78, 20.35, 36.43
- CA: 0.03, 0.9, 0.49, 2.02, 1.85, 0.6, 0.54, 0.88, 0.6, 0.77
- CCPP: 0.95, 0.93, 3.75, 3.98, 4.13, 4.58, 4.25, 6.55, 3.97, 6.19
- CCS: 0.63, 0.56, 10.45, 12.84, 11.13, 13.64, 12.76, 20.51, 11.25, 18.85
- DA: 0.65, 0.68, 15.52, 16.99, 17.75, 16.68, 15.88, 23.69, 16.18, 23.47
- PHASE: 0.91, 3.31, 3.51, 3.42, 3.32, 4.73, 3.32, 4.64
- SN: 0.79, 0.05, 0.11, 0.12, 0.27, 0.2, 0.4, 0.13, 0.28

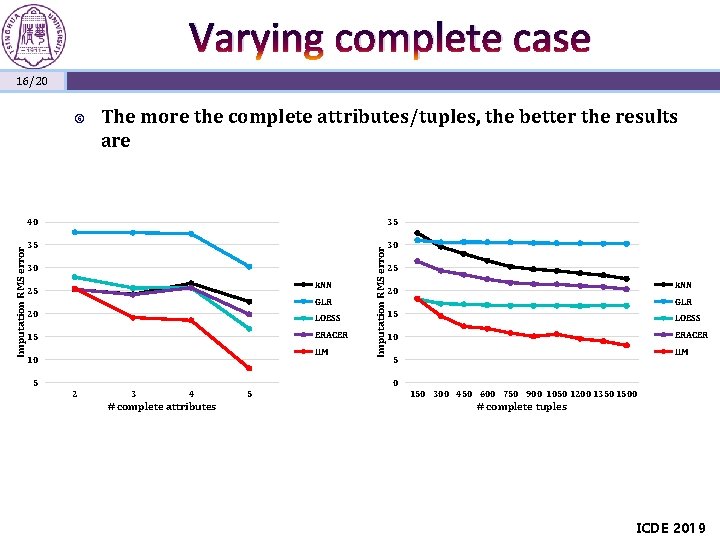

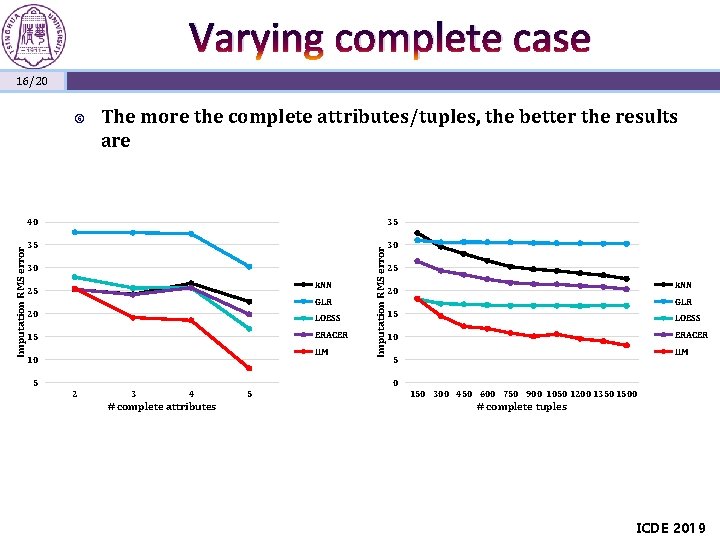

Varying complete case

The more complete attributes/tuples there are, the better the results.

[Figure: imputation RMS error versus # complete attributes (2 to 5) and versus # complete tuples (150 to 1500), for kNN, GLR, LOESS, ERACER and IIM]

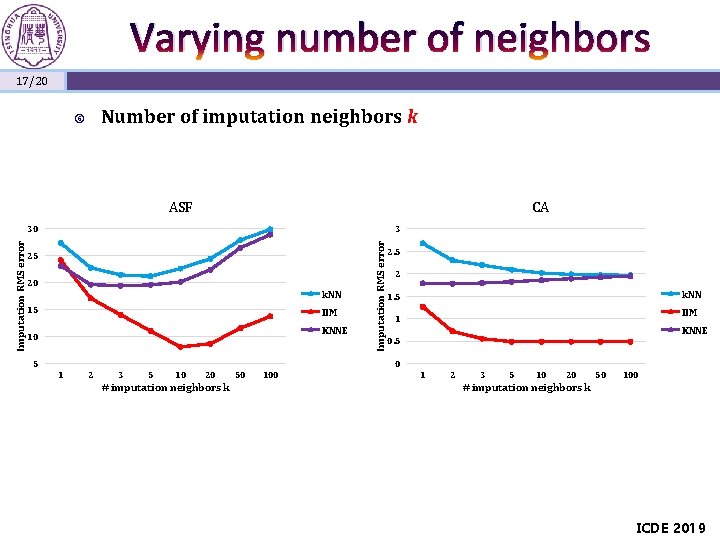

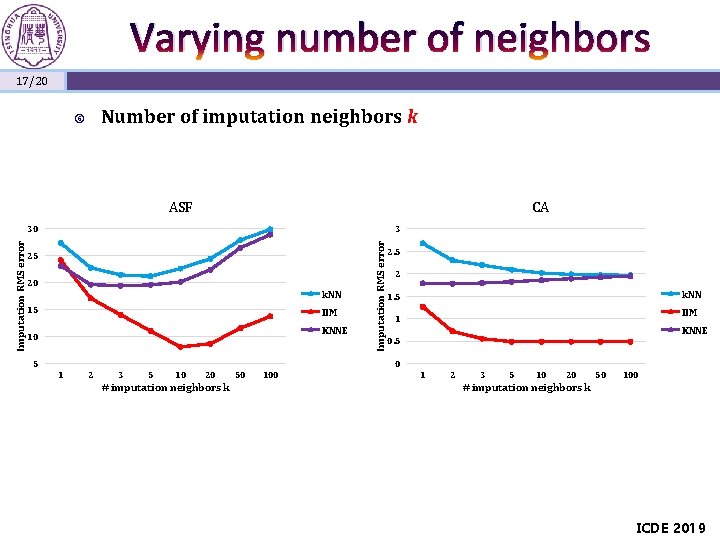
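The plots report an imputation RMS error. The slides do not spell out the formula, so the sketch below assumes the standard root-mean-square error between the withheld true values and the imputed ones. The function name `rms_error` and the sample numbers are hypothetical.

```python
import math

def rms_error(truth, imputed):
    """Root-mean-square error between true and imputed values (assumed metric)."""
    if len(truth) != len(imputed) or not truth:
        raise ValueError("truth and imputed must be non-empty and of equal length")
    squared = sum((t - v) ** 2 for t, v in zip(truth, imputed))
    return math.sqrt(squared / len(truth))

# Hypothetical example: two exact imputations and one that is off by 2.
error = rms_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0])
```

A lower value means the imputed values sit closer to the truth, which matches how the curves in the figures are read.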

Varying number of neighbors

Number of imputation neighbors k

[Figure: imputation RMS error versus # imputation neighbors k (1 to 100) on ASF and CA, for kNN, kNNE and IIM]

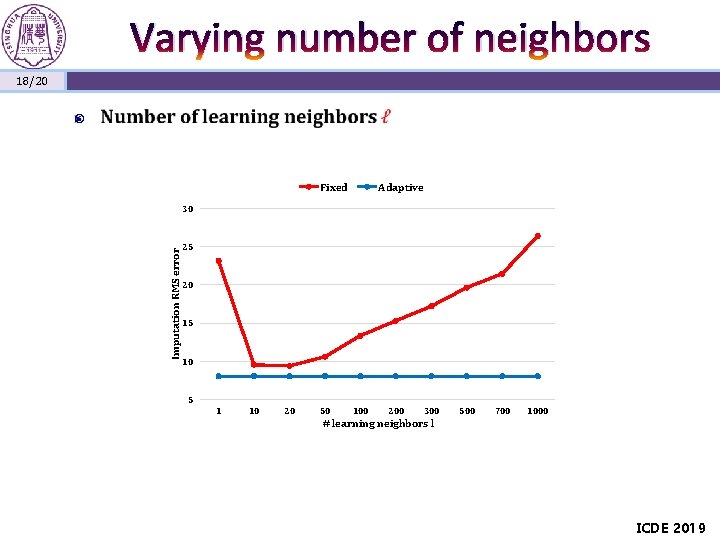

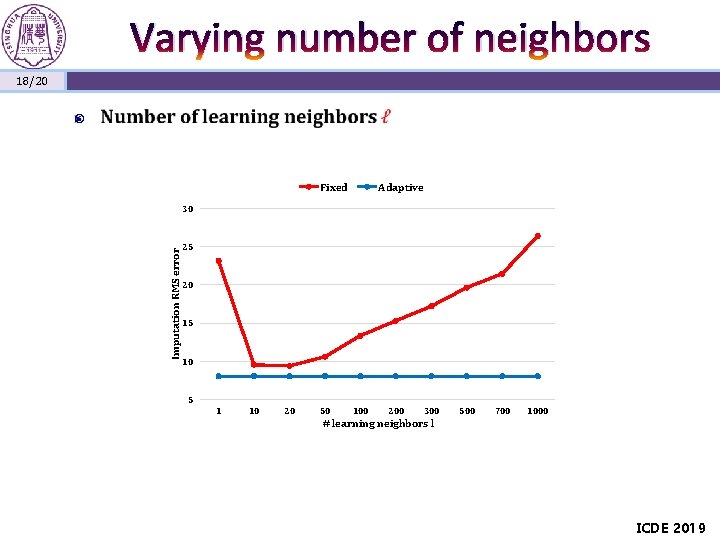

Varying number of neighbors

[Figure: imputation RMS error versus # learning neighbors l (1 to 1000), comparing Fixed and Adaptive]

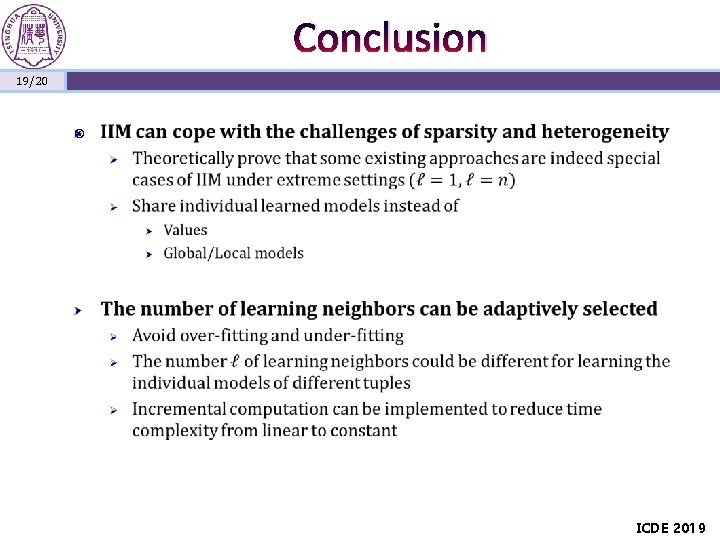

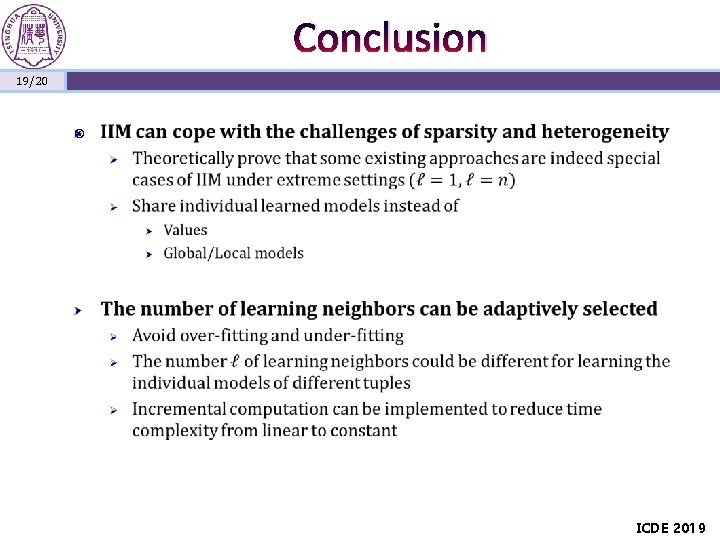

Conclusion

Q&A Thanks!