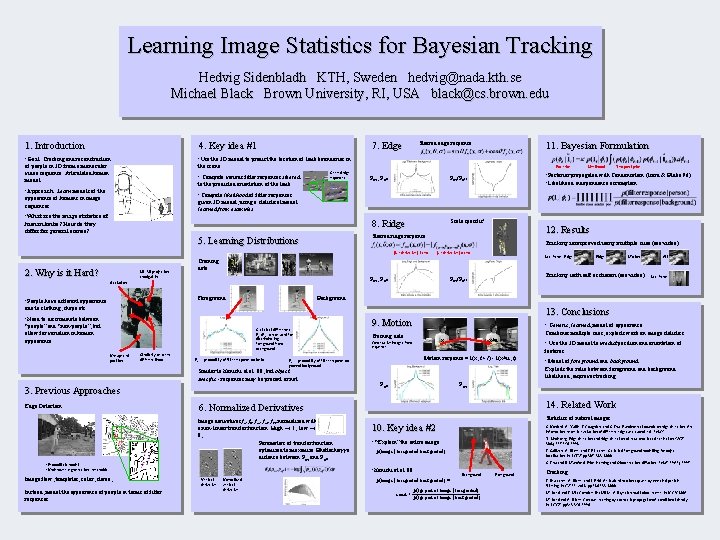

Learning Image Statistics for Bayesian Tracking

Hedvig Sidenbladh, KTH, Sweden, hedvig@nada.kth.se
Michael Black, Brown University, RI, USA, black@cs.brown.edu

1. Introduction

• Goal: Tracking and reconstruction of people in 3D from a monocular video sequence. Articulated human model.
• Approach: Learn models of the appearance of humans in image sequences.
• What are the image statistics of human limbs? How do they differ from general scenes?
• Need to discriminate between "people" and "non-people", but allow for variation in human appearance.

2. Why is it Hard?

• 2D-3D projection ambiguities
• Occlusion
• People have different appearance due to clothing, shape etc.
• Similarity between different limbs
• Unexpected position

3. Previous Approaches

• Edge detection: Probabilistic model? Under/over-segmentation, thresholds, ...
• Image flow, templates, color, stereo, ...

Instead, model the appearance of people in terms of filter responses.

4. Key idea #1

• Use the 3D model to predict the location of limb boundaries in the scene.
• Compute various filter responses steered to the predicted orientation of the limb.
• Compute likelihood of filter responses given 3D model, using a statistical model learned from examples.

5. Learning Distributions

• Pon = probability of filter response on limbs
• Poff = probability of filter response on general background
• Similar to Konishi et al. 00, but object specific - responses may be present or not.
• Statistical differences: Pon/Poff can be used for discriminating foreground from background.

6. Normalized Derivatives

• Image derivatives fx, fy, fxx, fxy, fyy normalized with a non-linear transfer function (high 1, low 0).
• Parameters of transfer function optimized to maximize Bhattacharyya distance between Pon and Poff.

[Figure: vertical derivative; normalized vertical derivative]

7. Edge

• Steered edge response. Scale specific!

[Figure: training data; steered edge responses; Pon, Poff; Pon/Poff]

8. Ridge

• Steered ridge response, with terms labeled |2nd derivative ⊥ arm| and |2nd derivative // arm|.

[Figure: Pon, Poff; Pon/Poff]

9. Motion

• Training data: consecutive images from sequence, with corresponding points x and x+u.
• Motion response = I(x, t+1) - I(x+u, t)

10. Key idea #2

• "Explain" the entire image: p(image | foreground, background)
• p(image | foreground, background) = const × p(fgr part of image | foreground) / p(fgr part of image | background)

[Figure: All; Background; Foreground]

11. Bayesian Formulation

[Equation labels: Posterior; Likelihood; Temporal prior]

• Posterior propagated with Condensation (Isard & Blake 96)
• Likelihood independence assumption

12. Results

• Tracking is improved using multiple cues (see video)
• Tracking with self occlusion (see video)

[Figure: last frame; Edge; Ridge; Motion]

13. Conclusions

• Generic, learned, model of appearance. Combines multiple cues, exploits work on image statistics.
• Use the 3D model to predict position and orientation of features.
• Model of foreground and background. Exploits the ratio between foreground and background likelihood, improves tracking.

14. Related Work

Statistics of natural images:

• S. Konishi, A. Yuille, J. Coughlan, and S. Zhu. Fundamental bounds on edge detection: An information theoretic evaluation of different edge cues. Submitted: PAMI.
• T. Lindeberg. Edge detection and ridge detection with automatic scale selection. IJCV, 30(2):117-156, 1998.
• J. Sullivan, A. Blake, and J. Rittscher. Statistical foreground modelling for object localization. In ECCV, pp 307-323, 2000.
• S. Zhu and D. Mumford. Prior learning and Gibbs reaction-diffusion. PAMI, 19(11), 1997.

Tracking:

• J. Deutscher, A. Blake, and I. Reid. Articulated motion capture by annealed particle filtering. In CVPR, vol 2, pp 126-133, 2000.
• M. Isard and J. MacCormick. BraMBLe: A Bayesian multi-blob tracker. In ICCV, 2001.
• M. Isard and A. Blake. Contour tracking by stochastic propagation of conditional density. In ECCV, pp 343-356, 1996.
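The Pon/Poff idea from sections 5, 6 and 10 can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names (`learn_histogram`, `bhattacharyya_distance`, `log_likelihood_ratio`), the histogram binning, and the synthetic Beta-distributed "filter responses" are all assumptions made for illustration. The sketch learns Pon and Poff as normalized histograms, measures how well they separate with the Bhattacharyya distance, and scores a set of responses by summing log(Pon/Poff), treating the responses as independent.

```python
import numpy as np


def learn_histogram(responses, bins, eps=1e-6):
    """Normalized histogram of filter responses; eps avoids empty bins."""
    counts, _ = np.histogram(responses, bins=bins)
    p = counts.astype(float) + eps
    return p / p.sum()


def bhattacharyya_distance(p, q):
    """Negative log of the Bhattacharyya coefficient of two histograms."""
    return -np.log(np.sum(np.sqrt(p * q)))


def log_likelihood_ratio(responses, p_on, p_off, bins):
    """Sum of log(Pon/Poff) over responses, assuming independence."""
    idx = np.clip(np.digitize(responses, bins) - 1, 0, len(p_on) - 1)
    return float(np.sum(np.log(p_on[idx]) - np.log(p_off[idx])))


# Synthetic stand-ins for normalized filter responses in [0, 1] (assumption):
# responses on limb boundaries tend to be high, background responses low.
rng = np.random.default_rng(0)
bins = np.linspace(0.0, 1.0, 21)
on_train = rng.beta(5, 2, size=5000)
off_train = rng.beta(2, 5, size=5000)

p_on = learn_histogram(on_train, bins)
p_off = learn_histogram(off_train, bins)
separation = bhattacharyya_distance(p_on, p_off)

# Score two hypothetical pose predictions: one whose sampled responses look
# like limb responses, one whose responses look like background.
score_good = log_likelihood_ratio(rng.beta(5, 2, size=50), p_on, p_off, bins)
score_bad = log_likelihood_ratio(rng.beta(2, 5, size=50), p_on, p_off, bins)

print(f"Bhattacharyya distance: {separation:.3f}")
print(f"log-ratio, limb-like responses: {score_good:.1f}")
print(f"log-ratio, background-like responses: {score_bad:.1f}")
```

In a tracker along the lines the poster describes, a score of this kind would be evaluated for each pose hypothesis, with responses sampled at the limb locations predicted by the 3D model. Working with the ratio means only the foreground part of the image has to be evaluated, since the rest contributes a constant.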
