Learning from Examples: Standard Methodology for Evaluation


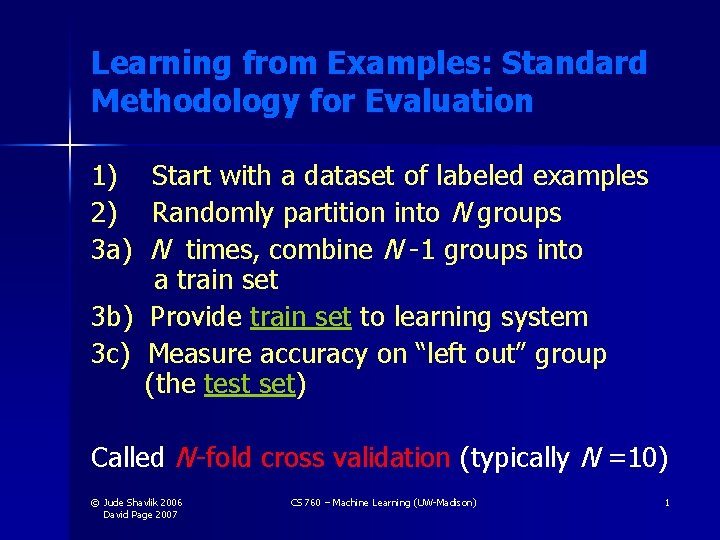

Learning from Examples: Standard Methodology for Evaluation

1) Start with a dataset of labeled examples
2) Randomly partition into N groups
3) N times:
   a) Combine N-1 groups into a train set
   b) Provide the train set to the learning system
   c) Measure accuracy on the “left out” group (the test set)

Called N-fold cross validation (typically N = 10)

© Jude Shavlik 2006, David Page 2007, CS 760 – Machine Learning (UW-Madison)
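The procedure above can be sketched in a few lines of Python. This is a minimal illustration, not code from the course: the function names, the `train_fn` interface (takes a train set, returns a prediction function) and the toy majority-class learner are all assumptions made for the example.

```python
import random

def n_fold_cross_validation(examples, train_fn, n_folds=10, seed=0):
    """Return the mean test-set accuracy over n_folds train/test splits."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)            # random partition
    folds = [shuffled[i::n_folds] for i in range(n_folds)]
    accuracies = []
    for i, test_set in enumerate(folds):
        # Combine the other N-1 groups into the train set.
        train_set = [ex for j, fold in enumerate(folds) if j != i for ex in fold]
        predict = train_fn(train_set)                # give train set to the learner
        correct = sum(predict(x) == y for x, y in test_set)
        accuracies.append(correct / len(test_set))   # accuracy on the left-out group
    return sum(accuracies) / n_folds

def majority_learner(train_set):
    """Hypothetical toy learner: always predicts the most common train label."""
    labels = [y for _, y in train_set]
    majority = max(set(labels), key=labels.count)
    return lambda x: majority

# Toy dataset: 75 examples labeled True, 25 labeled False.
data = [(i, i % 4 != 0) for i in range(100)]
cv_accuracy = n_fold_cross_validation(data, majority_learner)
```

With this toy learner every fold predicts the majority label, so the cross-validated accuracy equals the fraction of majority-class examples in the data.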

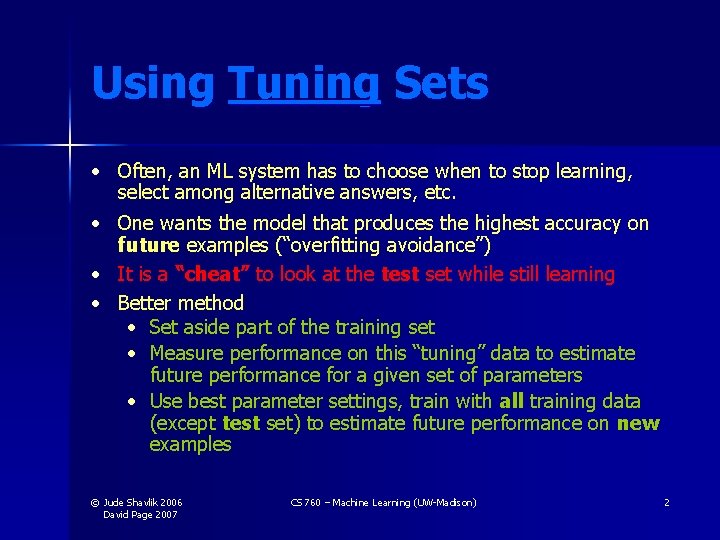

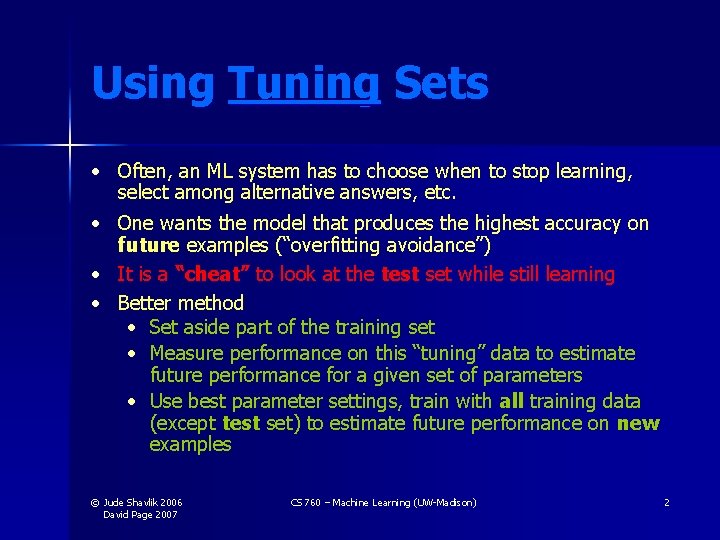

Using Tuning Sets

- Often, an ML system has to choose when to stop learning, select among alternative answers, etc.
- One wants the model that produces the highest accuracy on future examples (“overfitting avoidance”)
- It is a “cheat” to look at the test set while still learning
- Better method
  - Set aside part of the training set
  - Measure performance on this “tuning” data to estimate future performance for a given set of parameters
  - Use the best parameter settings and train with all training data (everything except the test set); the test set is then used to estimate future performance on new examples

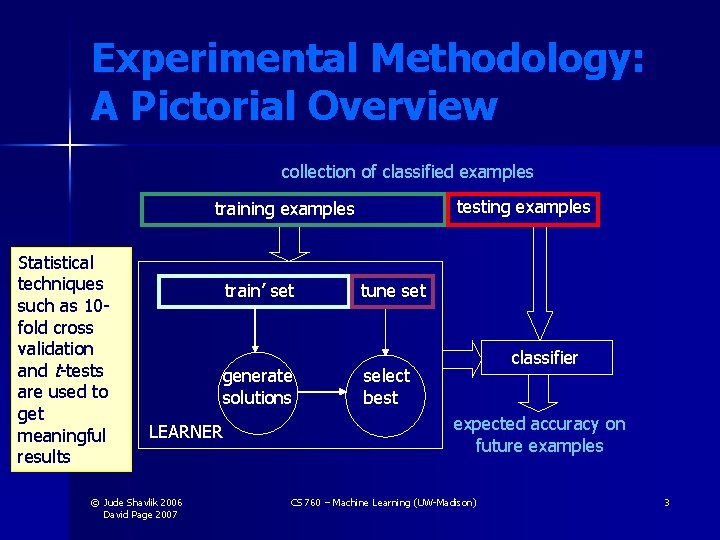

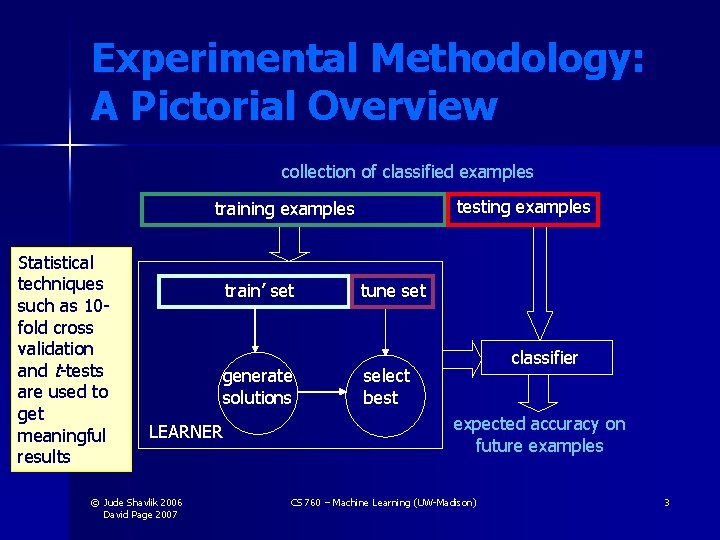

Experimental Methodology: A Pictorial Overview

[Figure: a collection of classified examples is split into training examples and testing examples. The training examples are split into a train’ set and a tune set. The LEARNER generates solutions from the train’ set, the tune set is used to select the best classifier, and the testing examples give the expected accuracy on future examples.]

Statistical techniques such as 10-fold cross validation and t-tests are used to get meaningful results

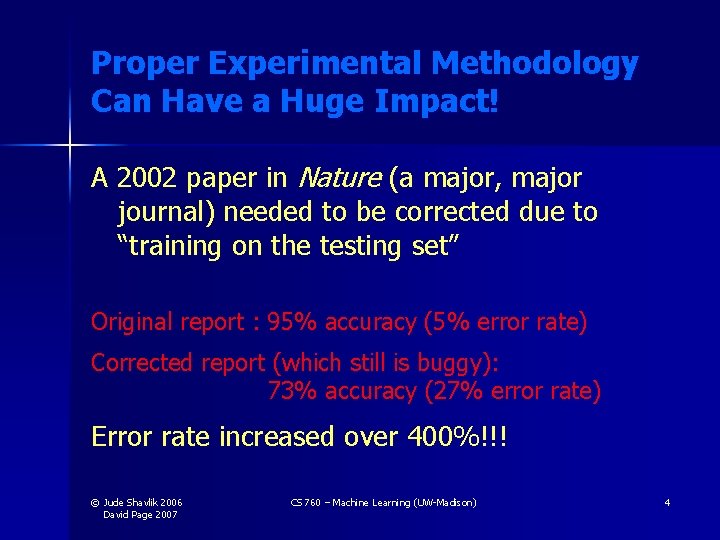

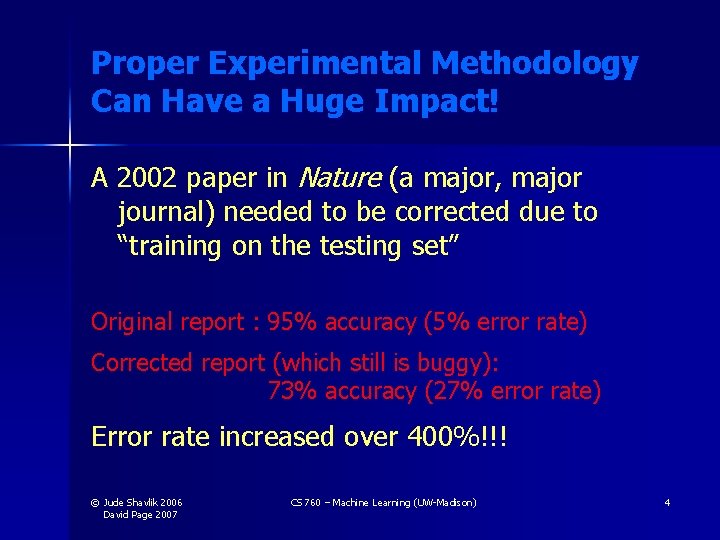

Proper Experimental Methodology Can Have a Huge Impact!

A 2002 paper in Nature (a major, major journal) needed to be corrected due to “training on the testing set”

- Original report: 95% accuracy (5% error rate)
- Corrected report (which still is buggy): 73% accuracy (27% error rate)

Error rate increased over 400%!!!

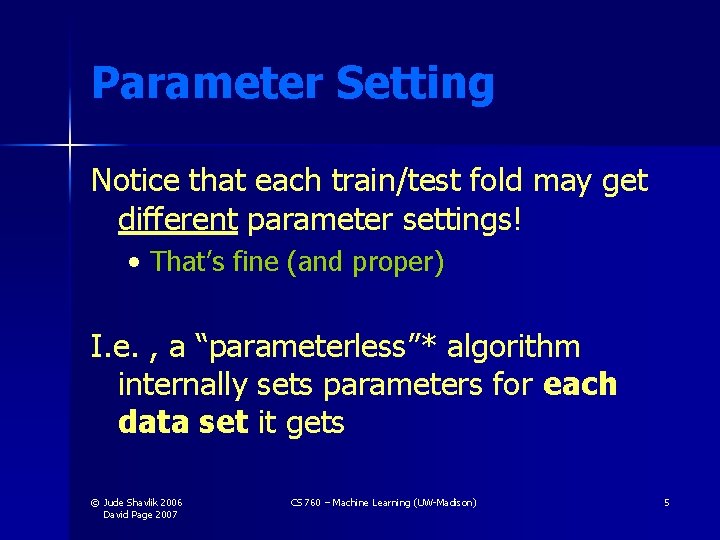

Parameter Setting

Notice that each train/test fold may get different parameter settings!

- That’s fine (and proper)

I.e., a “parameterless”* algorithm internally sets parameters for each data set it gets

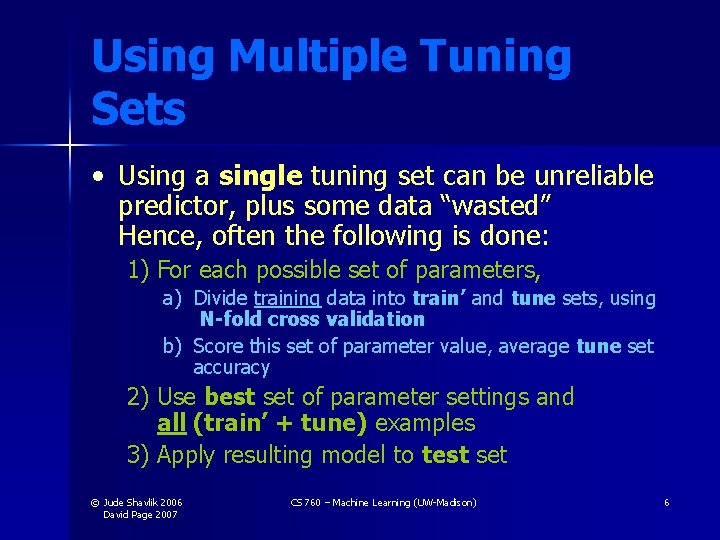

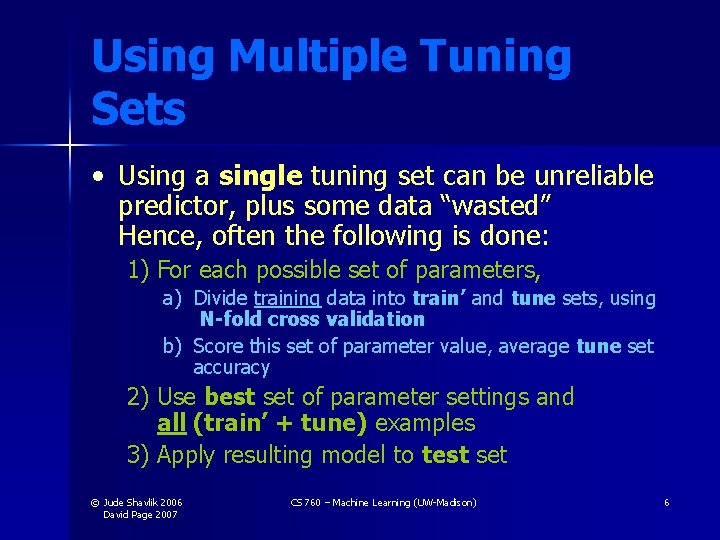

Using Multiple Tuning Sets

- Using a single tuning set can be an unreliable predictor, plus some data is “wasted”

Hence, often the following is done:

1) For each possible set of parameters,
   a) Divide training data into train’ and tune sets, using N-fold cross validation
   b) Score this set of parameter values by its average tune-set accuracy
2) Use the best set of parameter settings and all (train’ + tune) examples
3) Apply the resulting model to the test set

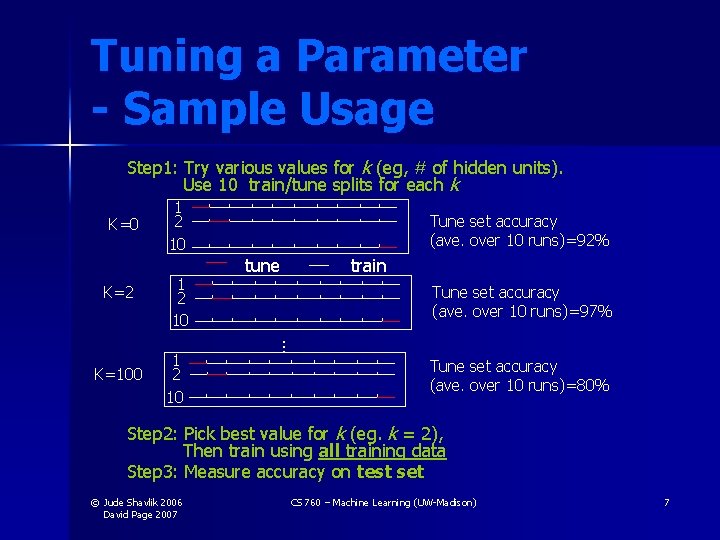

Tuning a Parameter: Sample Usage

Step 1: Try various values for k (e.g., # of hidden units). Use 10 train/tune splits for each k

- k = 0: tune set accuracy (ave. over 10 runs) = 92%
- k = 2: tune set accuracy (ave. over 10 runs) = 97%
- …
- k = 100: tune set accuracy (ave. over 10 runs) = 80%

Step 2: Pick the best value for k (e.g., k = 2), then train using all training data

Step 3: Measure accuracy on the test set
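A rough sketch of these three steps, under stated assumptions: the learner here is a 1-D k-nearest-neighbor classifier chosen only because it has a single parameter k, and `knn_learner`, `accuracy`, `tune_and_train` and the synthetic data are hypothetical names and values invented for the illustration.

```python
import random

def knn_learner(train_set, k):
    """Toy 1-D k-NN: majority vote among the k nearest training points."""
    def predict(x):
        nearest = sorted(train_set, key=lambda ex: abs(ex[0] - x))[:k]
        votes = sum(1 for _, y in nearest if y)
        return votes * 2 > len(nearest)
    return predict

def accuracy(predict, examples):
    return sum(predict(x) == y for x, y in examples) / len(examples)

def tune_and_train(train_data, candidate_ks, n_folds=10, seed=0):
    data = list(train_data)
    random.Random(seed).shuffle(data)
    folds = [data[i::n_folds] for i in range(n_folds)]
    scores = {}
    for k in candidate_ks:                       # Step 1: score each k
        fold_scores = []
        for i, tune_set in enumerate(folds):
            train_prime = [ex for j, f in enumerate(folds) if j != i for ex in f]
            fold_scores.append(accuracy(knn_learner(train_prime, k), tune_set))
        scores[k] = sum(fold_scores) / n_folds   # average tune-set accuracy
    best_k = max(candidate_ks, key=lambda k: scores[k])
    # Step 2: retrain on all training data (train' + tune) with the best k.
    return best_k, knn_learner(data, best_k), scores

# Synthetic data: label is (x > 0.5) with 10% label noise.
rng = random.Random(1)
examples = []
for _ in range(120):
    x = rng.random()
    examples.append((x, (x > 0.5) != (rng.random() < 0.1)))
train_data, test_set = examples[:100], examples[100:]

best_k, model, tune_scores = tune_and_train(train_data, candidate_ks=[1, 3, 5, 9])
test_accuracy = accuracy(model, test_set)        # Step 3: touch the test set once
```

The test set is never consulted while choosing k, which is the point of the methodology: only the final model is scored on it.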

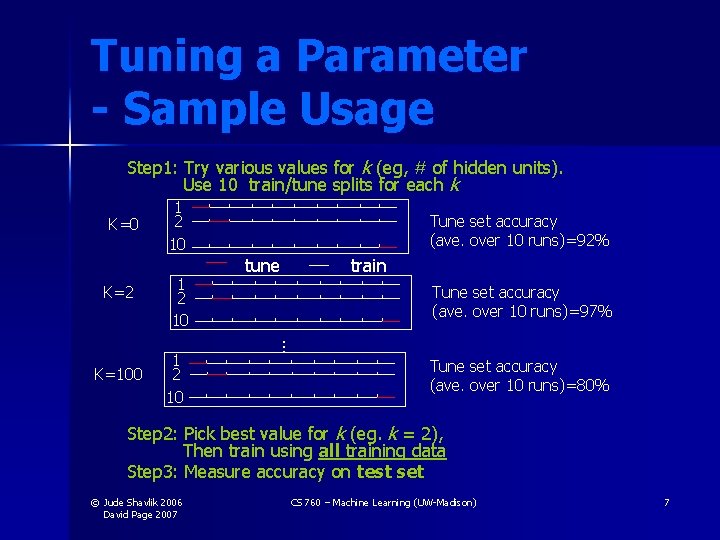

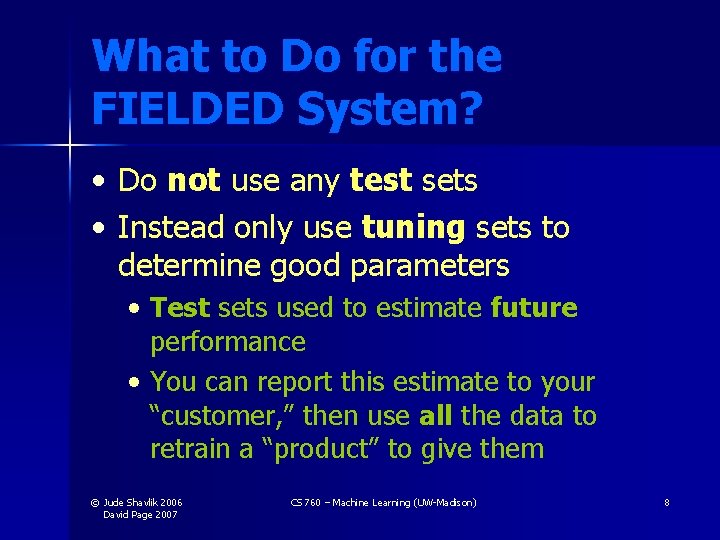

What to Do for the FIELDED System?

- Do not use any test sets
- Instead only use tuning sets to determine good parameters
- Test sets are used to estimate future performance
- You can report this estimate to your “customer,” then use all the data to retrain a “product” to give them

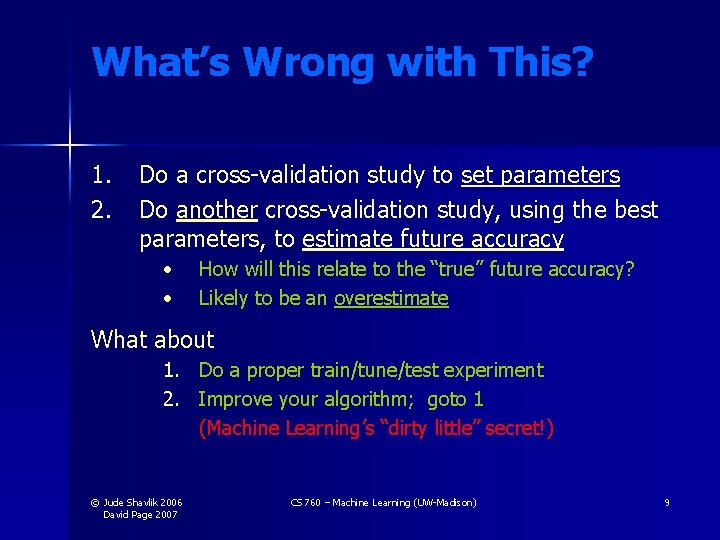

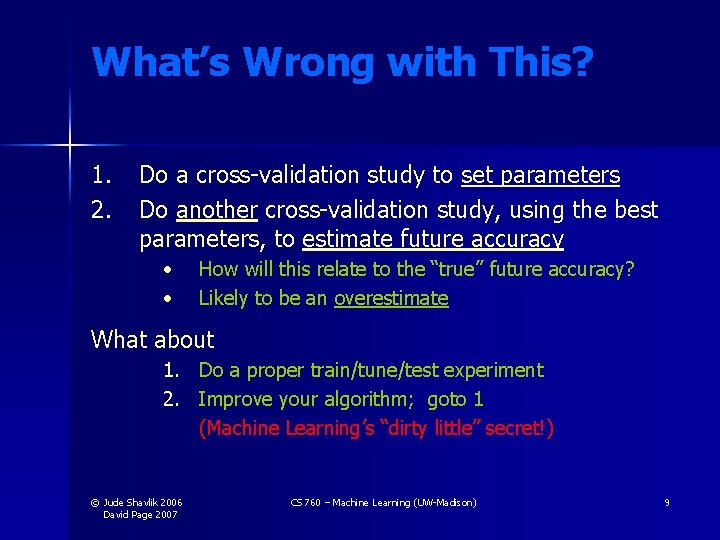

What’s Wrong with This?

1. Do a cross-validation study to set parameters
2. Do another cross-validation study, using the best parameters, to estimate future accuracy

- How will this relate to the “true” future accuracy?
- Likely to be an overestimate

What about

1. Do a proper train/tune/test experiment
2. Improve your algorithm; goto 1

(Machine Learning’s “dirty little” secret!)

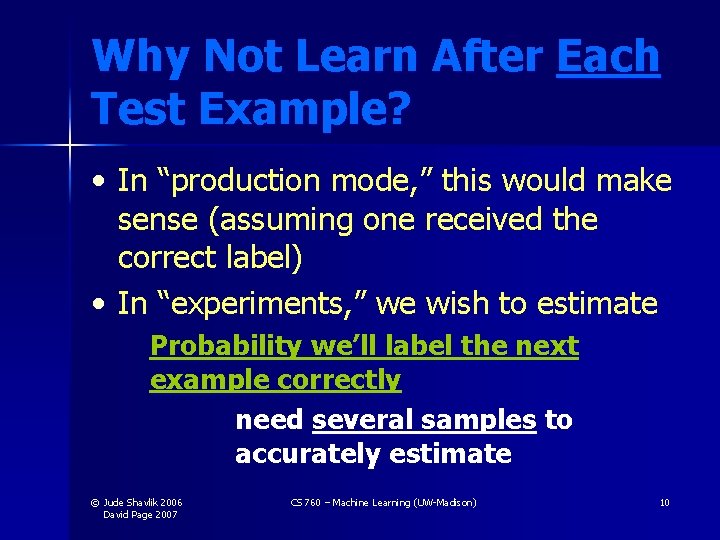

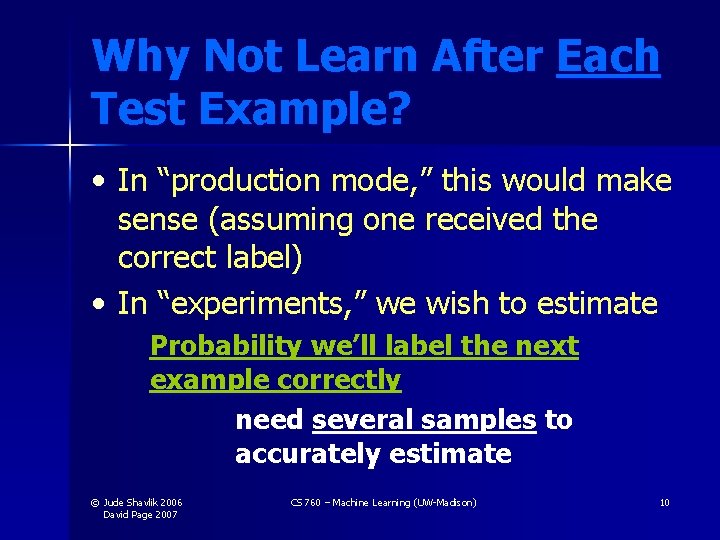

Why Not Learn After Each Test Example?

- In “production mode,” this would make sense (assuming one received the correct label)
- In “experiments,” we wish to estimate the probability we’ll label the next example correctly; we need several samples to estimate it accurately

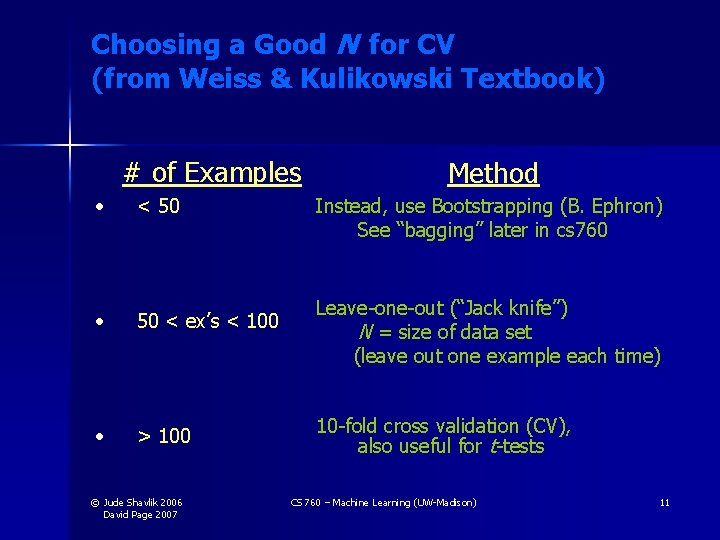

Choosing a Good N for CV (from Weiss & Kulikowski Textbook)

| # of Examples | Method |
|---|---|
| < 50 | Instead, use bootstrapping (B. Efron); see “bagging” later in CS 760 |
| 50 < ex’s < 100 | Leave-one-out (“jackknife”): N = size of data set (leave out one example each time) |
| > 100 | 10-fold cross validation (CV), also useful for t-tests |

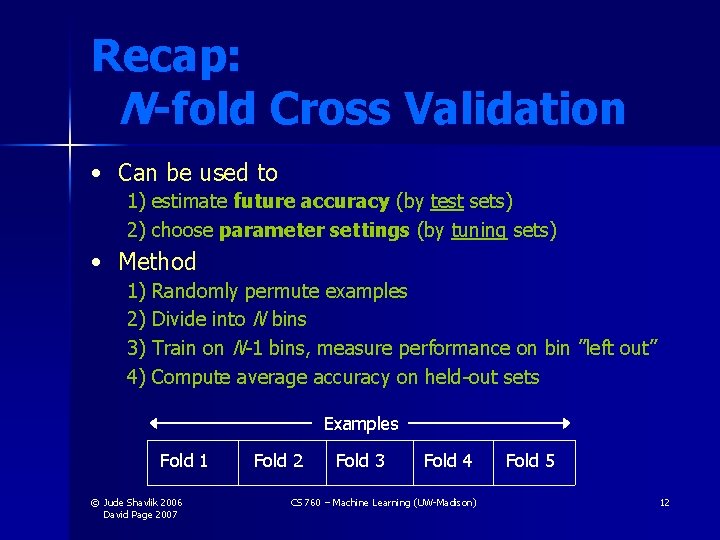

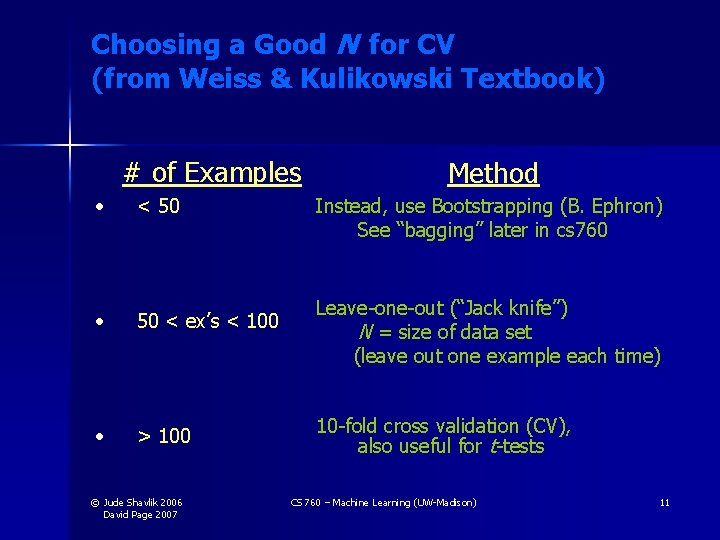

Recap: N-fold Cross Validation

- Can be used to
  1) estimate future accuracy (by test sets)
  2) choose parameter settings (by tuning sets)
- Method
  1) Randomly permute examples
  2) Divide into N bins
  3) Train on N-1 bins, measure performance on the bin “left out”
  4) Compute average accuracy on held-out sets

[Figure: the examples divided into Fold 1 through Fold 5]

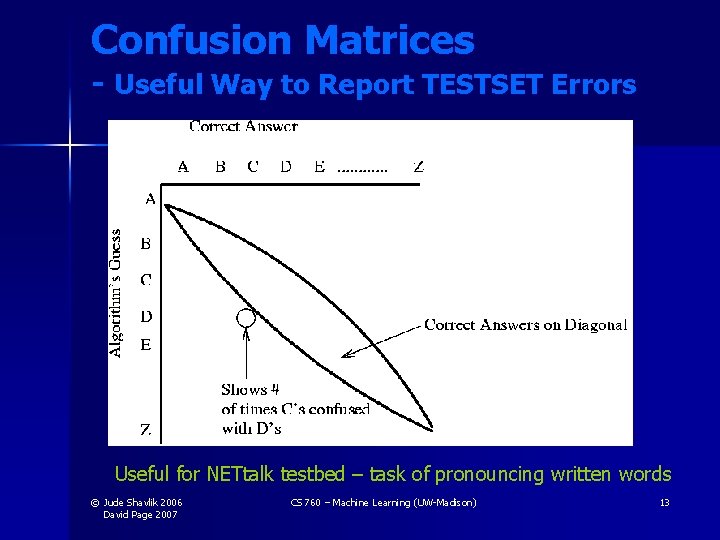

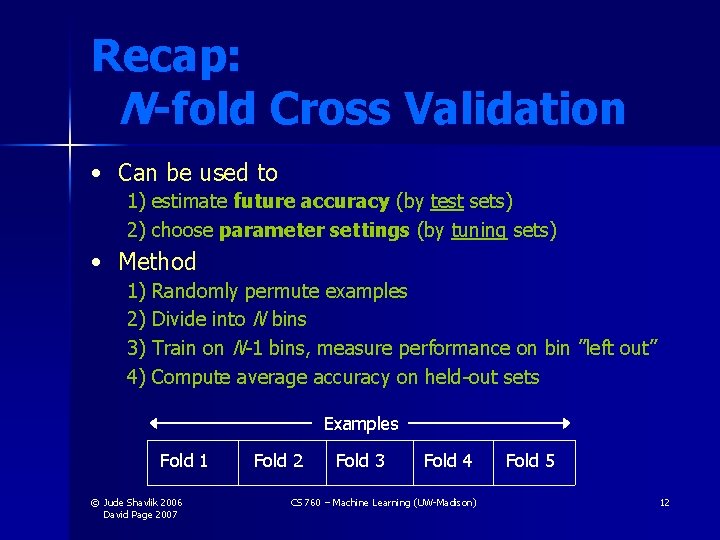

Confusion Matrices: Useful Way to Report TESTSET Errors

Useful for NETtalk testbed (task of pronouncing written words)

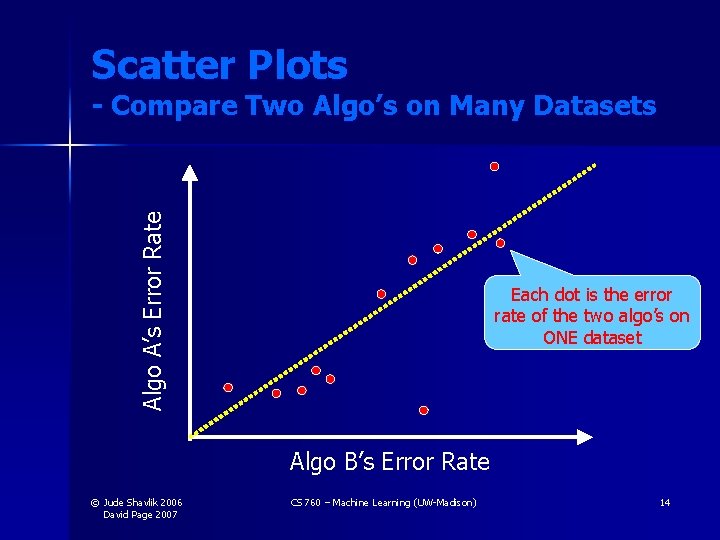

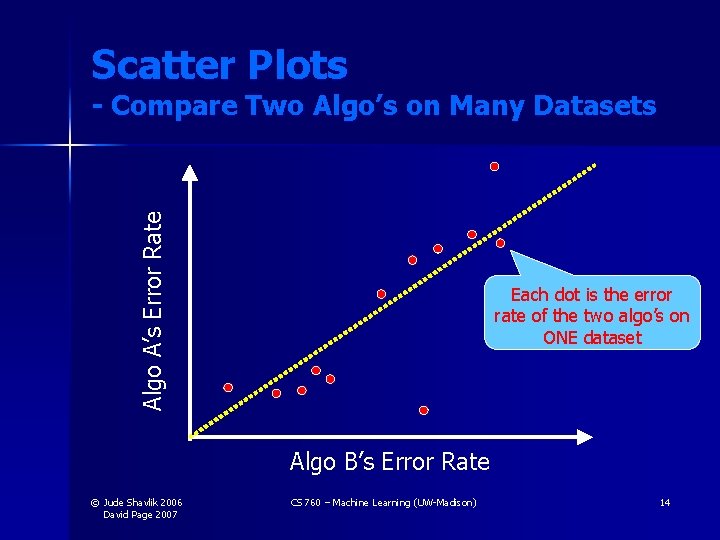

Scatter Plots: Compare Two Algo’s on Many Datasets

[Figure: scatter plot with Algo A’s error rate on one axis and Algo B’s error rate on the other]

Each dot is the error rate of the two algo’s on ONE dataset

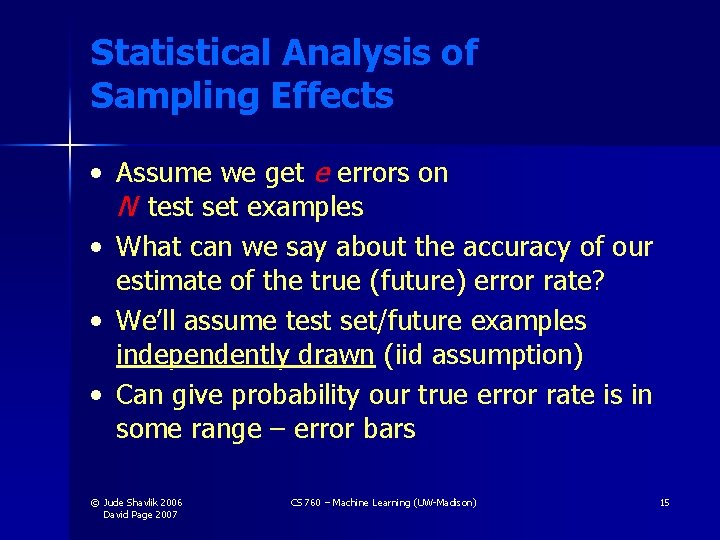

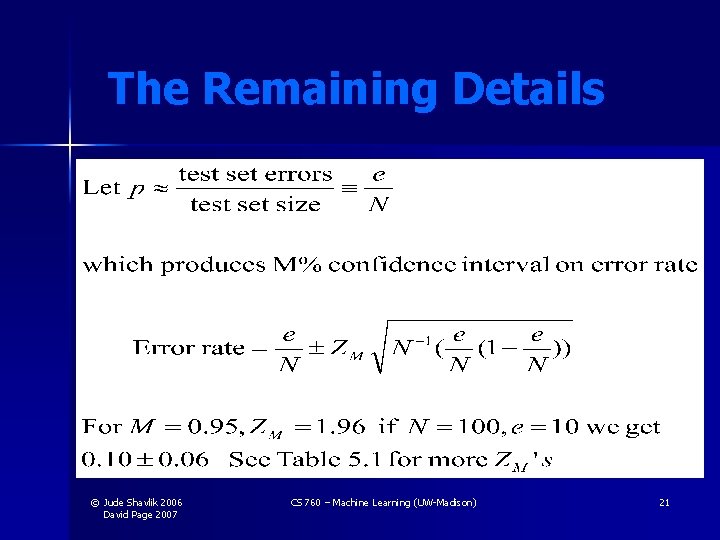

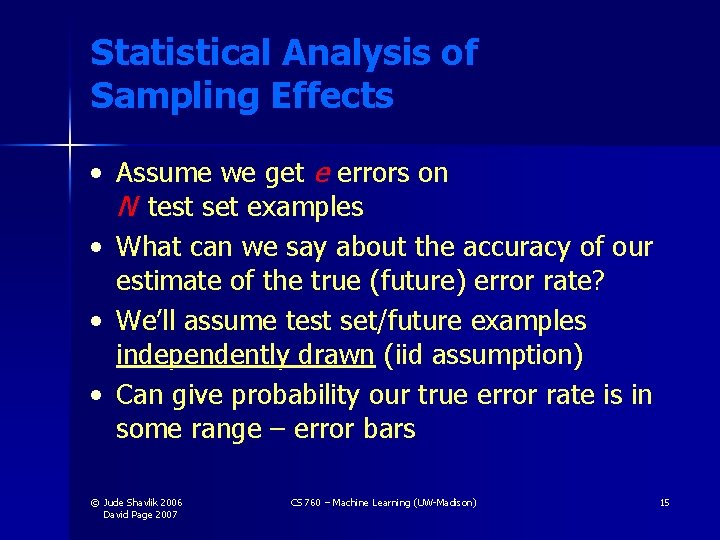

Statistical Analysis of Sampling Effects

- Assume we get e errors on N test set examples
- What can we say about the accuracy of our estimate of the true (future) error rate?
- We’ll assume test set/future examples are independently drawn (iid assumption)
- Can give the probability our true error rate is in some range (error bars)

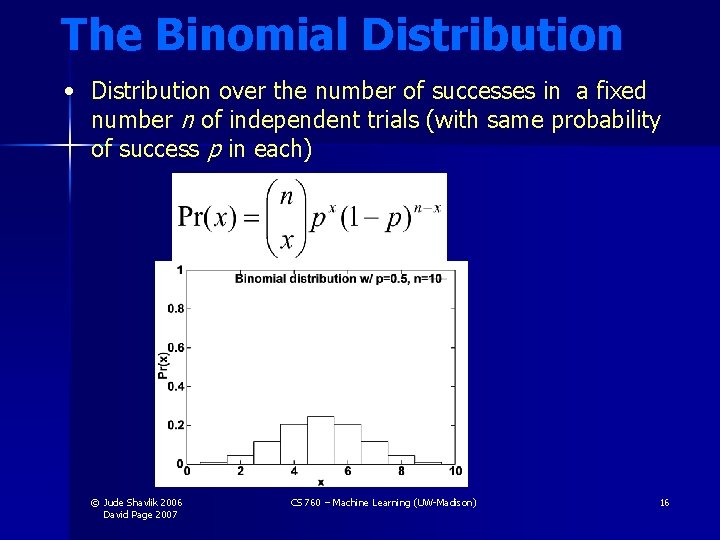

The Binomial Distribution

- Distribution over the number of successes in a fixed number n of independent trials (with the same probability of success p in each)

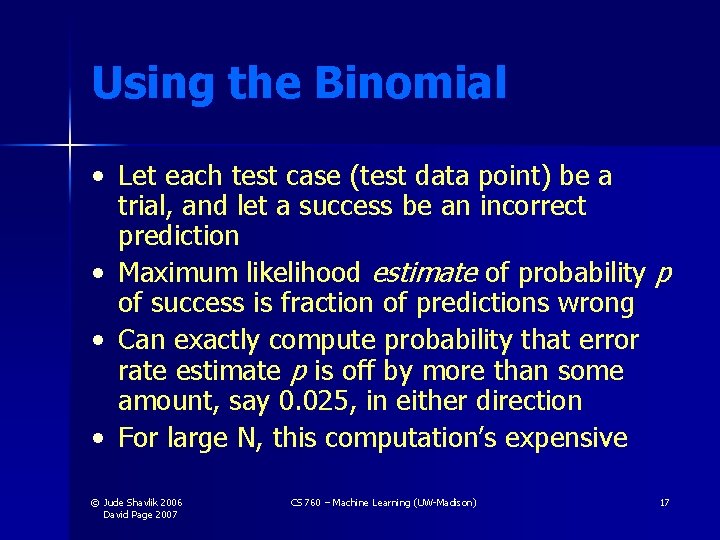

Using the Binomial

- Let each test case (test data point) be a trial, and let a success be an incorrect prediction
- Maximum likelihood estimate of probability p of success is the fraction of predictions wrong
- Can exactly compute the probability that the error rate estimate p is off by more than some amount, say 0.025, in either direction
- For large N, this computation’s expensive
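As an illustration of the exact computation mentioned above, the following sketch sums binomial probabilities over every error count whose observed rate misses the true rate by more than the tolerance. The function names and the example values (true error rate 0.2, test sets of 100 and 1000 examples) are assumptions for the example only.

```python
from math import comb

def binomial_pmf(k, n, p):
    """P(exactly k successes in n independent trials with success prob p)."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def prob_estimate_off(n, p_true, tolerance=0.025):
    """P(|k/n - p_true| > tolerance) when k ~ Binomial(n, p_true)."""
    return sum(binomial_pmf(k, n, p_true)
               for k in range(n + 1)
               if abs(k / n - p_true) > tolerance)

# Chance the measured error rate misses a true rate of 0.2 by more than 0.025.
off_small = prob_estimate_off(n=100, p_true=0.2)
off_large = prob_estimate_off(n=1000, p_true=0.2)
```

The loop visits every possible error count, which is why the slide calls the exact computation expensive for large N and motivates the Gaussian approximation.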

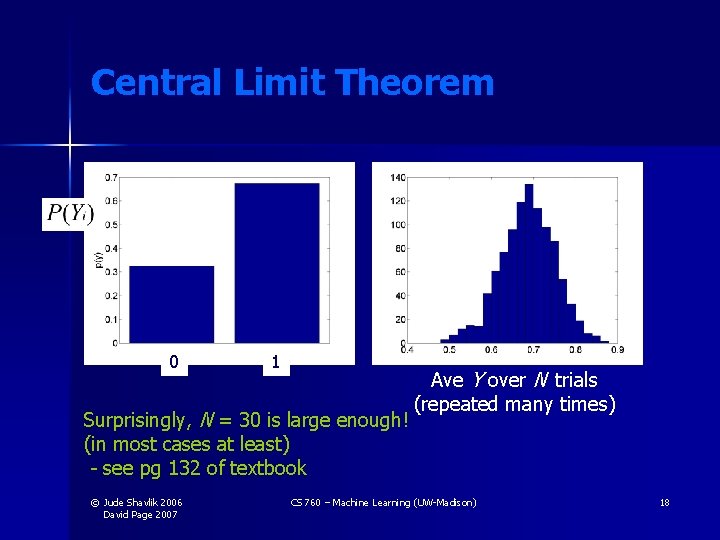

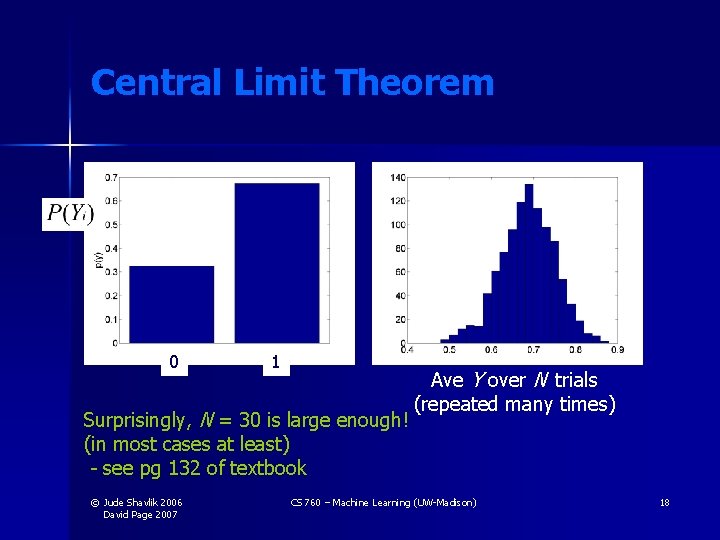

Central Limit Theorem

- Roughly, for large enough N, all distributions look Gaussian when summing/averaging N values

[Figure: distribution of the average of Y over N trials (repeated many times), on an axis from 0 to 1]

Surprisingly, N = 30 is large enough! (in most cases at least); see pg 132 of textbook

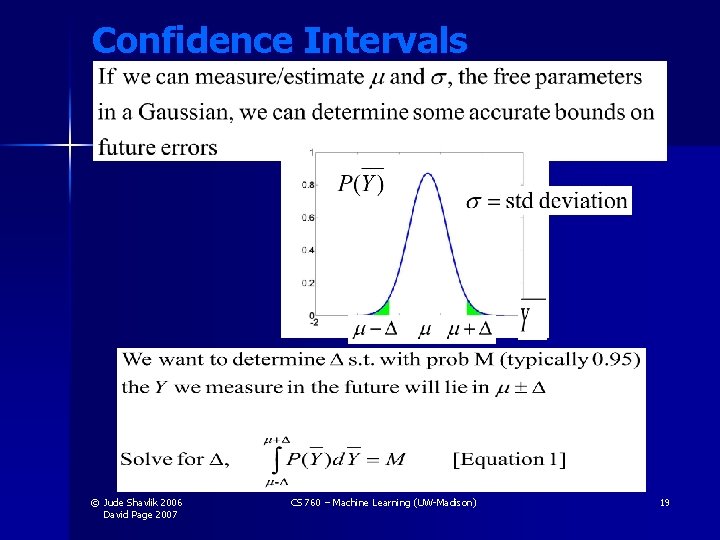

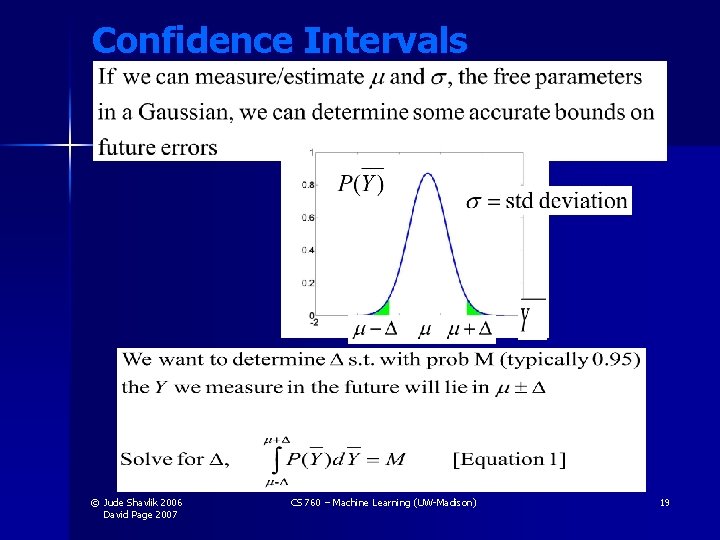

Confidence Intervals

As You Already Learned in “Stat 101”

If we estimate μ (mean error rate) and σ (std dev), we can say our ML algo’s error rate is

$\mu \pm Z_M \sigma$

$Z_M$: value you looked up in a table of $N(0, 1)$ for desired confidence; e.g., for 95% confidence it’s 1.96
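A small sketch of this interval for a measured error rate. It assumes the usual Gaussian approximation to the binomial, in which the standard deviation of the estimate is sqrt(p(1 - p)/N); that formula, the function name and the example counts are assumptions added here, not taken from the slide.

```python
from math import sqrt

def error_rate_interval(errors, n_test, z=1.96):
    """Approximate confidence interval for the true error rate.

    z is the N(0, 1) table value for the desired confidence (1.96 for 95%).
    """
    p_hat = errors / n_test                       # estimated mean error rate
    std_err = sqrt(p_hat * (1 - p_hat) / n_test)  # assumed std dev of the estimate
    return p_hat - z * std_err, p_hat + z * std_err

# 20 errors on 100 test examples: 0.2 +/- 1.96 * 0.04
low, high = error_rate_interval(errors=20, n_test=100)
```

The approximation is only reasonable when the test set is large enough for the Central Limit Theorem to apply.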

The Remaining Details

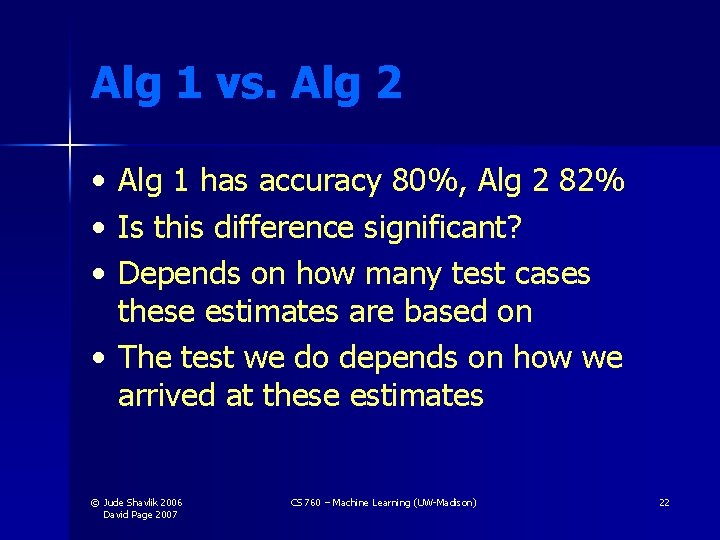

Alg 1 vs. Alg 2

- Alg 1 has accuracy 80%, Alg 2 82%
- Is this difference significant?
- Depends on how many test cases these estimates are based on
- The test we do depends on how we arrived at these estimates

Leave-One-Out: Sign Test

- Suppose we ran leave-one-out cross validation on a data set of 100 cases
- Divide the cases into (1) Alg 1 won, (2) Alg 2 won, (3) ties (both wrong or both right); throw out the ties
- Suppose 10 ties and 50 wins for Alg 1 (hence 40 wins for Alg 2)
- Ask: under the (null) binomial(90, 0.5), what is the probability of 50 or more, or 40 or fewer, successes?
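The question in the last bullet can be answered exactly with a short computation. This is a sketch with a hypothetical function name; it treats the test as two-sided, as the "50 or more, or 40 or fewer" wording suggests.

```python
from math import comb

def sign_test_p_value(wins_a, wins_b):
    """Two-sided sign test: ties are assumed to have been thrown out already."""
    n = wins_a + wins_b
    extreme = max(wins_a, wins_b)
    # P(X >= extreme) under Binomial(n, 0.5); by symmetry the other tail is equal.
    tail = sum(comb(n, k) for k in range(extreme, n + 1)) / 2**n
    return min(1.0, 2 * tail)

p_value = sign_test_p_value(wins_a=50, wins_b=40)
```

For the 50-versus-40 split the p-value comes out well above 0.05, so the sign test would not call the difference significant.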

What about 10-fold?

- Difficult to get significance from a sign test of 10 cases
- We’re throwing out the numbers (accuracy estimates) for each fold, and just asking which is larger
- Use the numbers: the t-test is designed to test for a difference of means

Paired Student t-tests

- Given
  - 10 training/test sets
  - 2 ML algorithms
  - Results of the 2 ML algo’s on the 10 test-sets
- Determine
  - Which algorithm is better on this problem?
  - Is the difference statistically significant?

Paired Student t-Tests (cont.)

Example: accuracies on test sets

| Test set | 1 | 2 | 3 | … | 10 |
|---|---|---|---|---|---|
| Algorithm 1 | 80% | 50 | 75 | … | 99 |
| Algorithm 2 | 79 | 49 | 74 | … | 98 |
| δi | +1 | +1 | +1 | … | +1 |

- Algorithm 1’s mean is better, but the two std. deviations will clearly overlap
- But algorithm 1 is always better than algorithm 2

The Random Variable in the t-Test

Consider random variable

δi = Algo A’s test-set i error minus Algo B’s test-set i error

Notice we’re “factoring out” test-set difficulty by looking at relative performance

In general, one tries to explain variance in results across experiments. Here we’re saying that

Variance = f(Problem difficulty) + g(Algorithm strength)

More on the Paired t-Test

Our NULL HYPOTHESIS is that the two ML algorithms have equivalent average accuracies

- i.e., differences (in the scores) are due to “random fluctuations” about the mean of zero

We compute the probability that the observed δ arose from the null hypothesis

- If this probability is low, we reject the null hypothesis and say that the two algo’s appear different
- “Low” is usually taken as prob ≤ 0.05

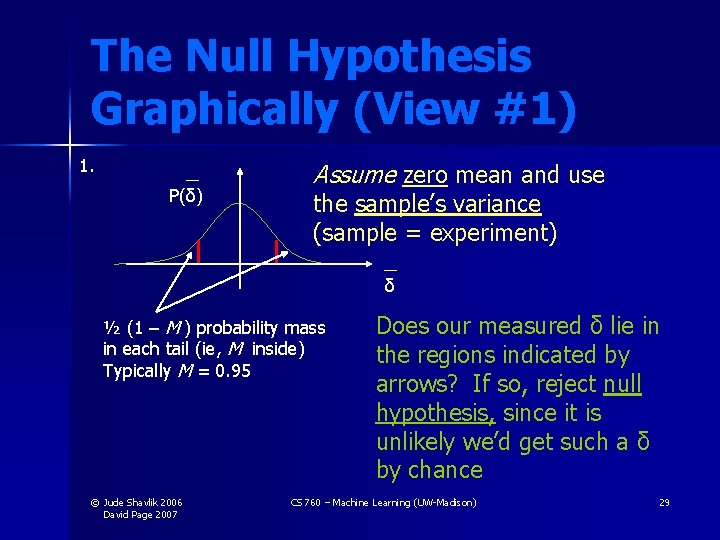

The Null Hypothesis Graphically (View #1)

1. Assume zero mean and use the sample’s variance (sample = experiment)

[Figure: P(δ) plotted against δ, with ½(1 – M) probability mass in each tail (i.e., M inside); typically M = 0.95]

Does our measured δ lie in the regions indicated by arrows? If so, reject the null hypothesis, since it is unlikely we’d get such a δ by chance

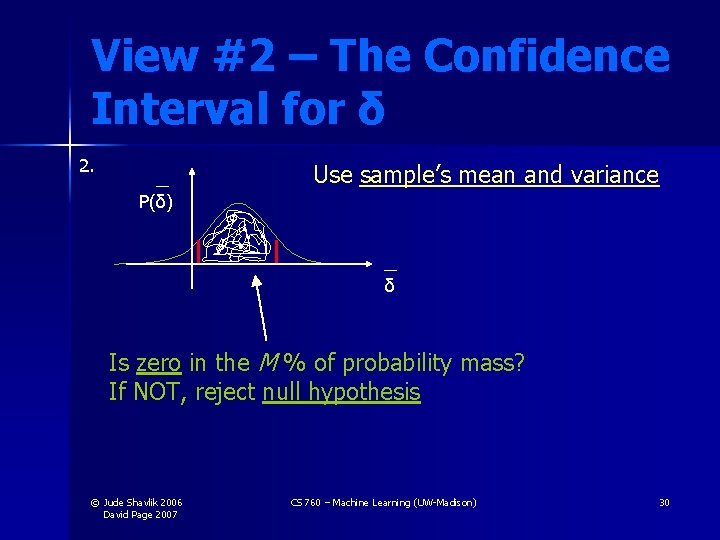

View #2: The Confidence Interval for δ

2. Use the sample’s mean and variance

[Figure: P(δ) plotted against δ]

Is zero in the M% of probability mass? If NOT, reject the null hypothesis

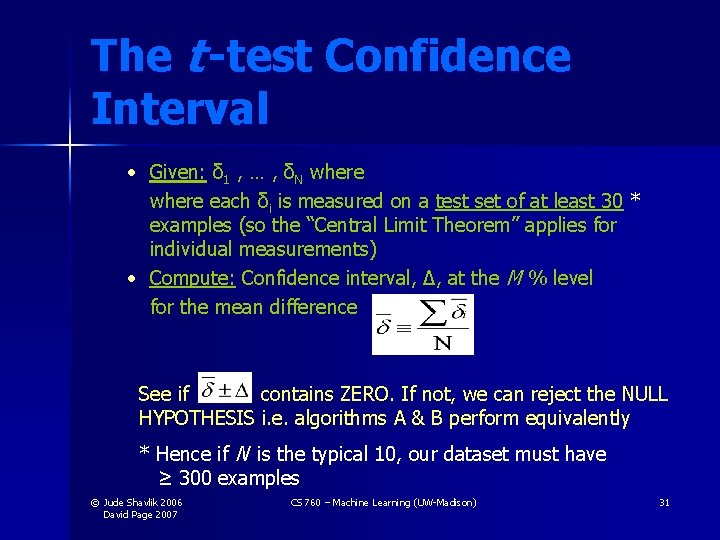

The t-test Confidence Interval

- Given: δ1, …, δN, where each δi is measured on a test set of at least 30* examples (so the “Central Limit Theorem” applies for individual measurements)
- Compute: confidence interval, Δ, at the M% level for the mean difference
- See if it contains ZERO. If not, we can reject the NULL HYPOTHESIS (i.e., that algorithms A & B perform equivalently)

\* Hence if N is the typical 10, our dataset must have ≥ 300 examples

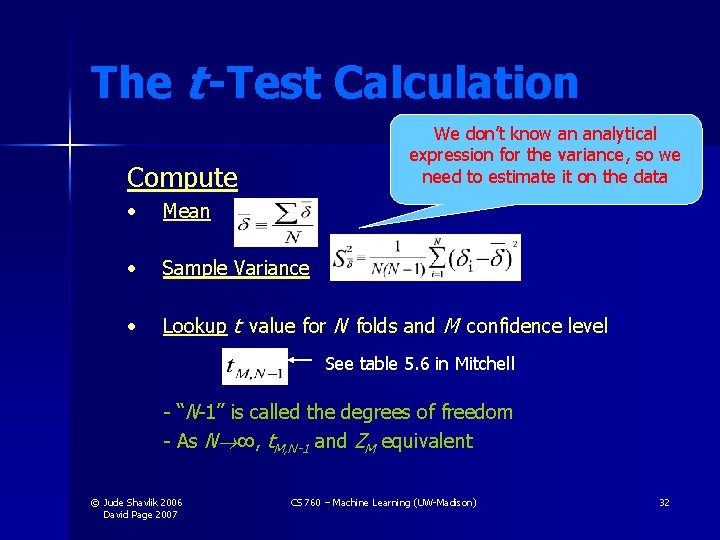

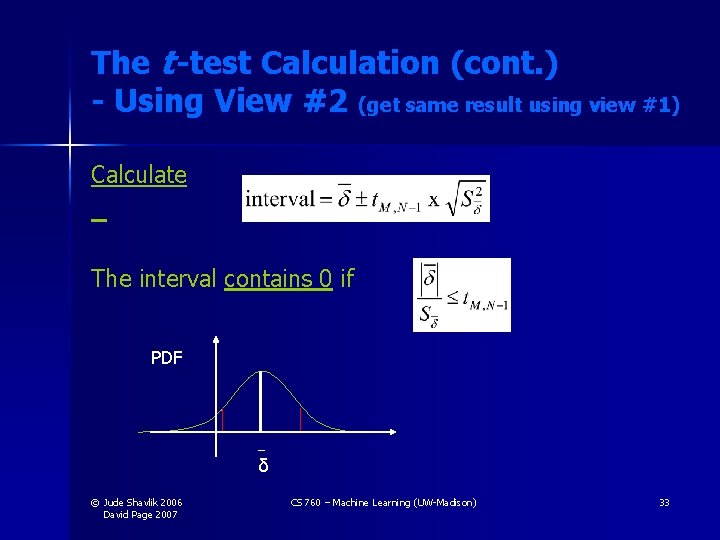

The t-Test Calculation

We don’t know an analytical expression for the variance, so we need to estimate it on the data

Compute

- Mean: $\bar{\delta} = \frac{1}{N} \sum_{i=1}^{N} \delta_i$
- Sample variance: $s^2 = \frac{1}{N-1} \sum_{i=1}^{N} (\delta_i - \bar{\delta})^2$
- Look up the t value for N folds and M confidence level; see table 5.6 in Mitchell
  - “N-1” is called the degrees of freedom
  - As N → ∞, $t_{M,N-1}$ and $Z_M$ become equivalent

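The t-test Calculation (cont.): Using View #2 (get same result using view #1)

Calculate

$\bar{\delta} \pm t_{M,N-1} \frac{s}{\sqrt{N}}$

where $\bar{\delta}$ is the mean and $s^2$ the sample variance of the δi. The interval contains 0 if

$|\bar{\delta}| \le t_{M,N-1} \frac{s}{\sqrt{N}}$

[Figure: PDF over δ]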
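A minimal sketch of the paired t-test interval, assuming the standard form (mean difference plus or minus the t value times the sample standard deviation divided by sqrt(N)). The function name and the ten per-fold accuracies are hypothetical; the critical value 2.262 is the two-sided 95% table entry for 9 degrees of freedom and must be looked up again for a different N or confidence level.

```python
from math import sqrt

def paired_t_interval(scores_a, scores_b, t_crit):
    """Confidence interval for the mean per-fold difference (A minus B)."""
    deltas = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(deltas)
    mean = sum(deltas) / n
    sample_var = sum((d - mean) ** 2 for d in deltas) / (n - 1)
    half_width = t_crit * sqrt(sample_var / n)
    return mean - half_width, mean + half_width

# Hypothetical accuracies (%) of two algorithms on the same 10 test sets.
algo_a = [80, 50, 75, 62, 91, 70, 85, 66, 77, 99]
algo_b = [79, 49, 73, 62, 89, 70, 84, 64, 77, 98]

low, high = paired_t_interval(algo_a, algo_b, t_crit=2.262)
significant = not (low <= 0.0 <= high)   # reject the null if 0 is outside
```

The two accuracy lists vary widely from fold to fold, yet the differences vary little, which is why pairing lets the test detect a one-point gap.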

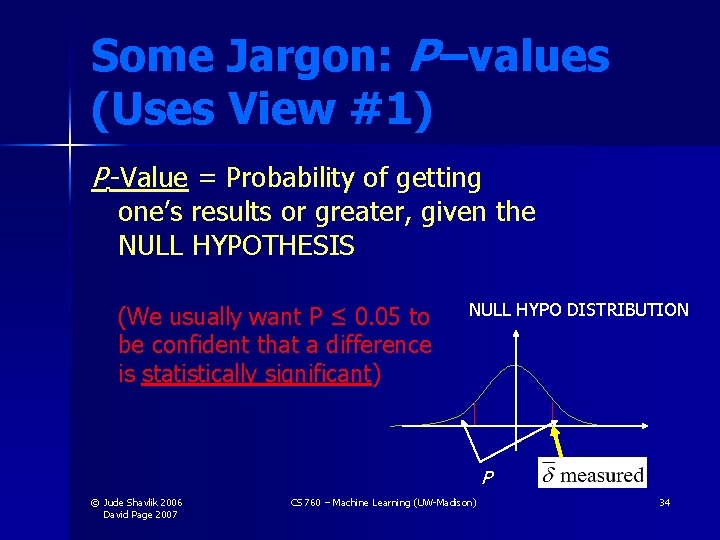

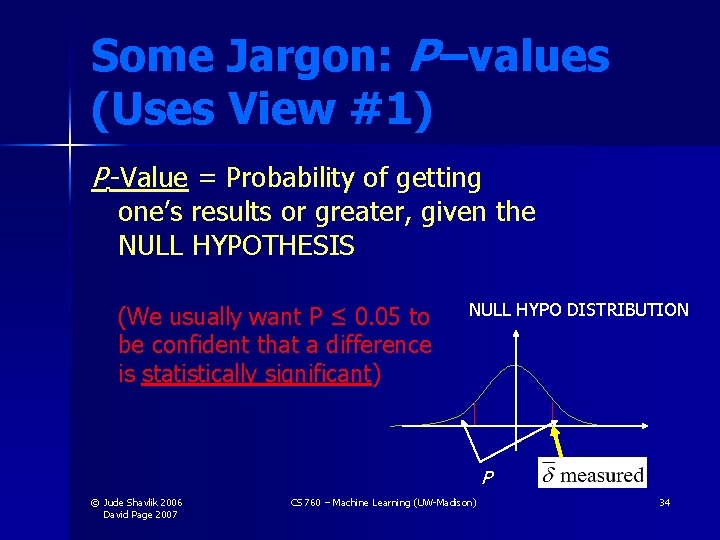

Some Jargon: P-values (Uses View #1)

P-value = probability of getting one’s results or greater, given the NULL HYPOTHESIS

(We usually want P ≤ 0.05 to be confident that a difference is statistically significant)

[Figure: the null hypothesis distribution, with the area P marked]

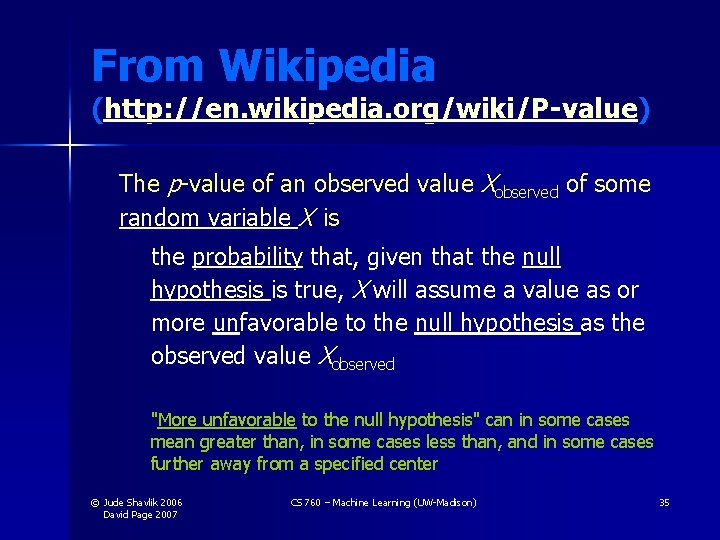

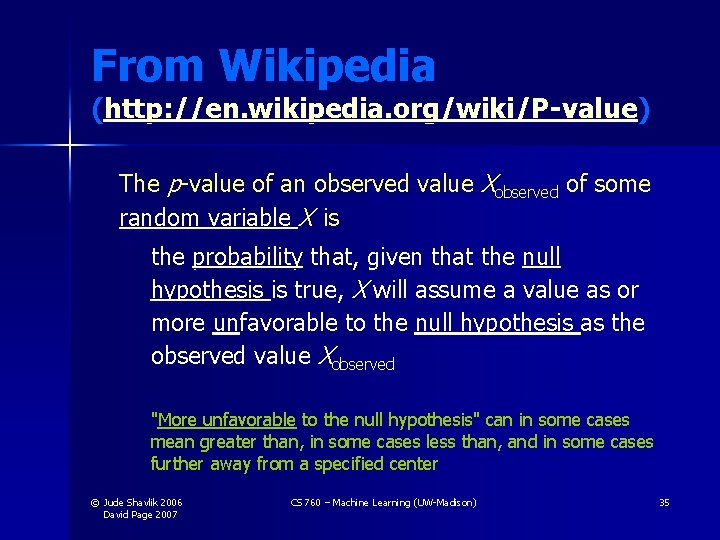

From Wikipedia (http://en.wikipedia.org/wiki/P-value)

The p-value of an observed value Xobserved of some random variable X is the probability that, given that the null hypothesis is true, X will assume a value as or more unfavorable to the null hypothesis as the observed value Xobserved

"More unfavorable to the null hypothesis" can in some cases mean greater than, in some cases less than, and in some cases further away from a specified center

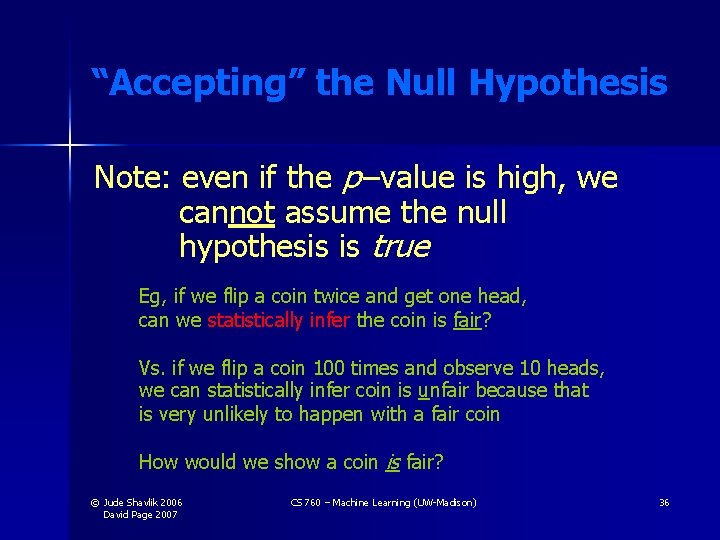

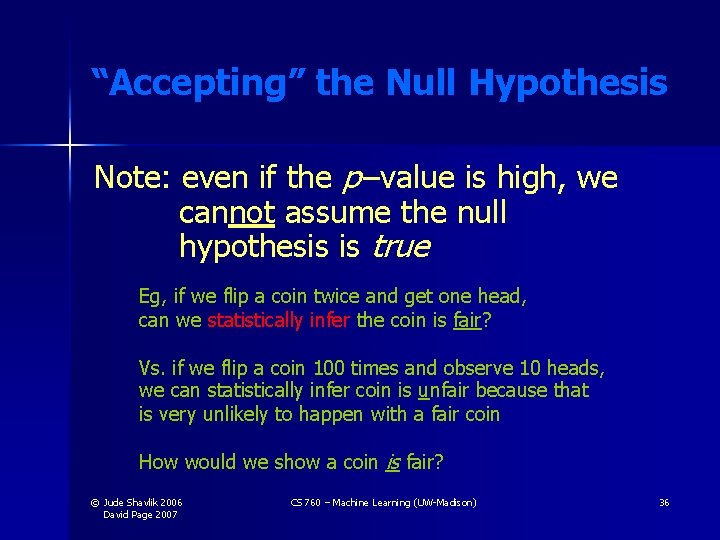

“Accepting” the Null Hypothesis

Note: even if the p-value is high, we cannot assume the null hypothesis is true

E.g., if we flip a coin twice and get one head, can we statistically infer the coin is fair?

Vs. if we flip a coin 100 times and observe 10 heads, we can statistically infer the coin is unfair, because that is very unlikely to happen with a fair coin

How would we show a coin is fair?

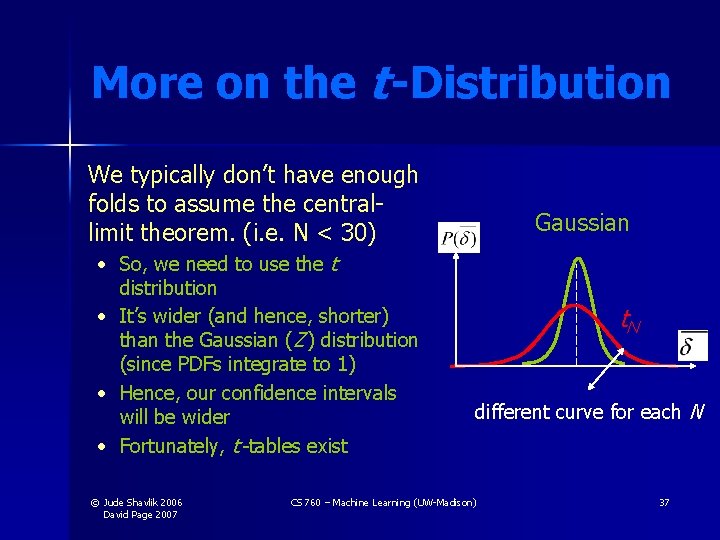

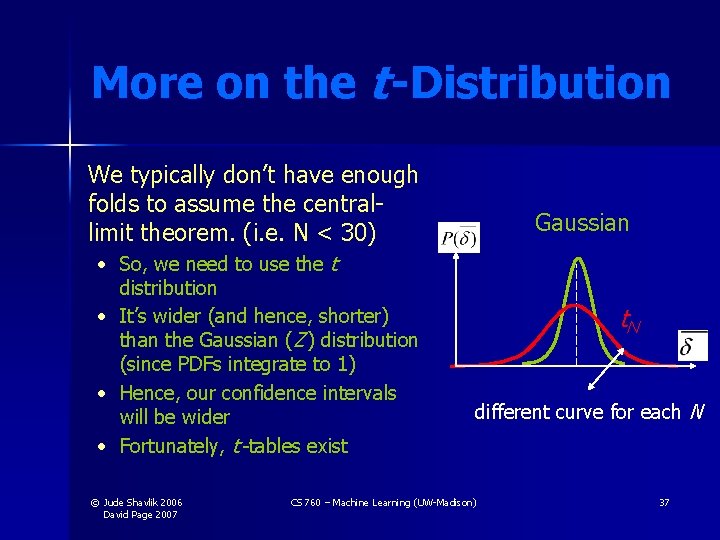

More on the t-Distribution

We typically don’t have enough folds to assume the central limit theorem (i.e., N < 30)

- So, we need to use the t distribution
- It’s wider (and hence, shorter) than the Gaussian (Z) distribution (since PDFs integrate to 1)
- Hence, our confidence intervals will be wider
- Fortunately, t-tables exist

[Figure: the Gaussian curve compared with the t_N curve; there is a different curve for each N]

Some Assumptions Underlying our Calculations

General

- Central Limit Theorem applies (i.e., ≥ 30 measurements averaged)

ML-Specific

- #errors/#tests accurately estimates p, the prob of error on 1 ex.
  - used in the formula for s, which characterizes expected future deviations about the mean (p)
- Using an independent sample space of possible instances
  - representative of future examples
  - individual ex’s iid drawn
- For paired t-tests, the learned classifier is the same for each fold (“stability”), since we are combining results across folds

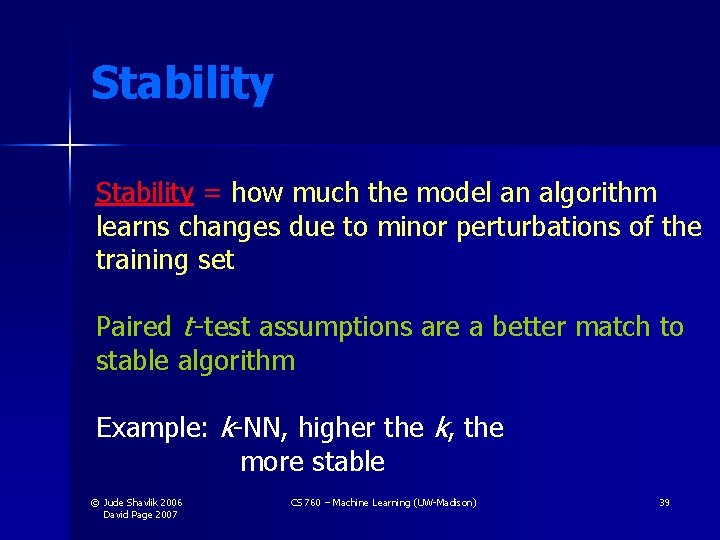

Stability = how much the model an algorithm learns changes due to minor perturbations of the training set

Paired t-test assumptions are a better match to stable algorithms

Example: k-NN; the higher the k, the more stable

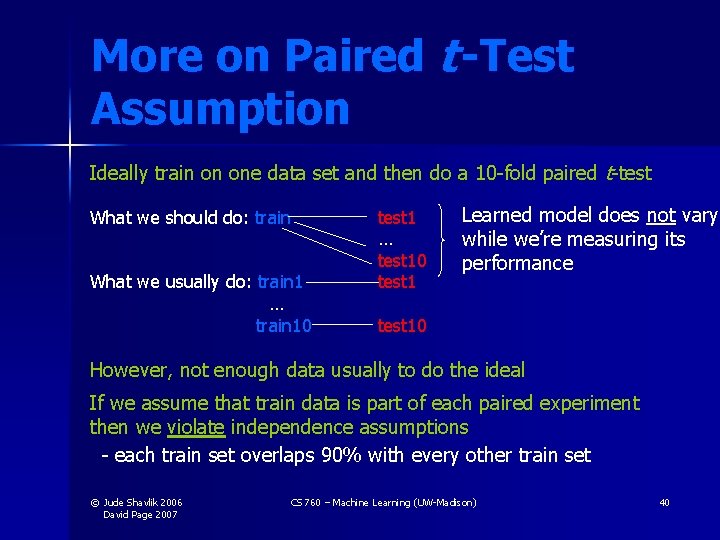

More on Paired t-Test Assumption

Ideally, train on one data set and then do a 10-fold paired t-test

- What we should do: train once, then measure on test 1 … test 10. The learned model does not vary while we’re measuring its performance
- What we usually do: train 1 … train 10, each paired with test 1 … test 10

However, there is usually not enough data to do the ideal

If we assume that the train data is part of each paired experiment, then we violate independence assumptions: each train set overlaps 90% with every other train set

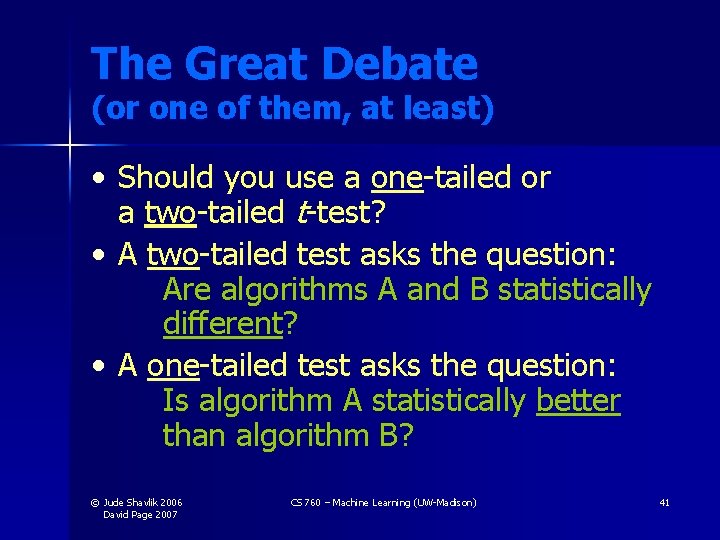

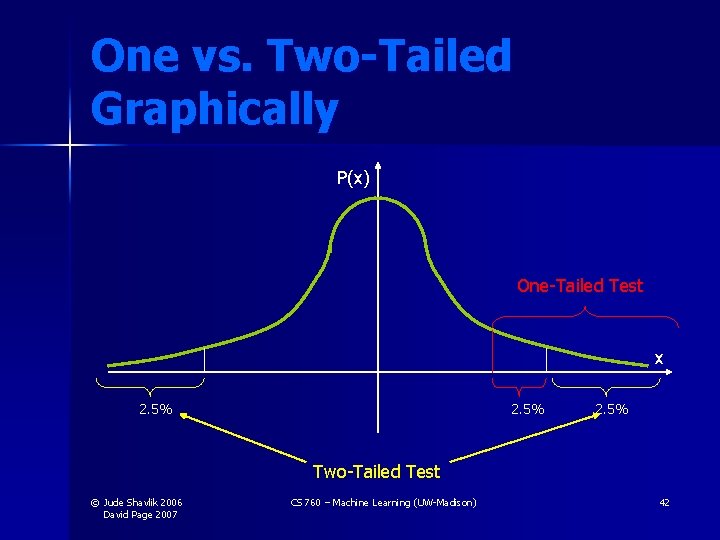

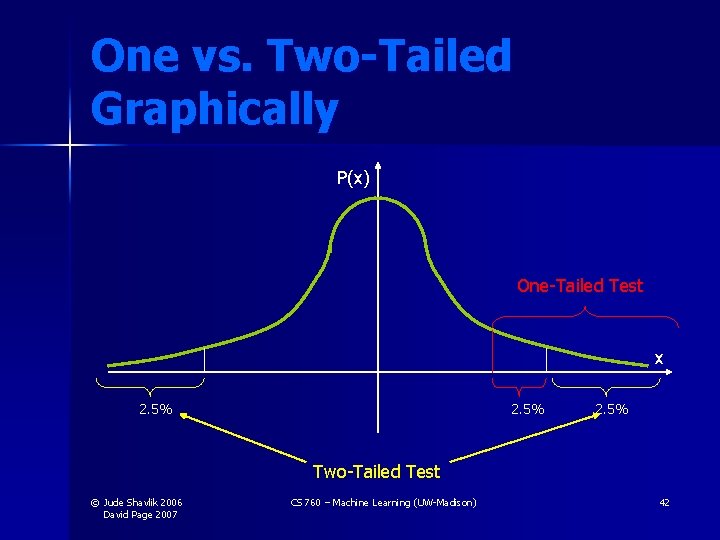

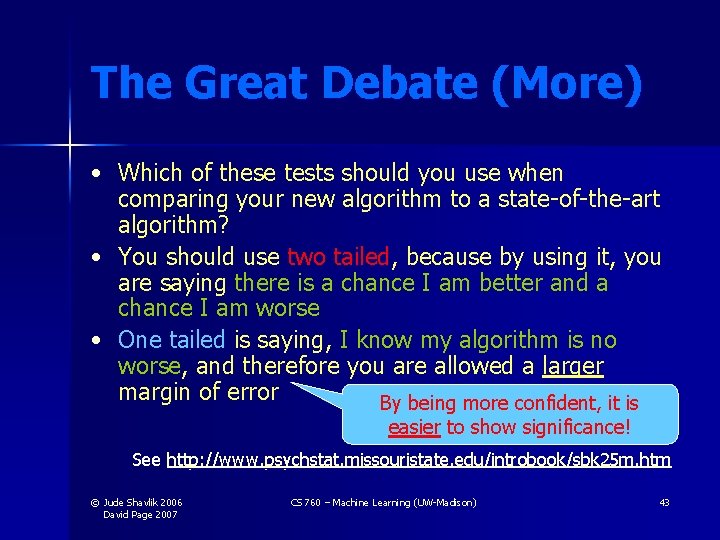

The Great Debate (or one of them, at least)

- Should you use a one-tailed or a two-tailed t-test?
- A two-tailed test asks the question: Are algorithms A and B statistically different?
- A one-tailed test asks the question: Is algorithm A statistically better than algorithm B?

One vs. Two-Tailed Graphically

[Figure: P(x) plotted against x, showing the rejection regions of the one-tailed test and the two-tailed test; a 2.5% label marks a tail]

The Great Debate (More)

- Which of these tests should you use when comparing your new algorithm to a state-of-the-art algorithm?
- You should use two-tailed, because by using it, you are saying there is a chance I am better and a chance I am worse
- One-tailed is saying, I know my algorithm is no worse, and therefore you are allowed a larger margin of error

By being more confident, it is easier to show significance!

See http://www.psychstat.missouristate.edu/introbook/sbk25m.htm

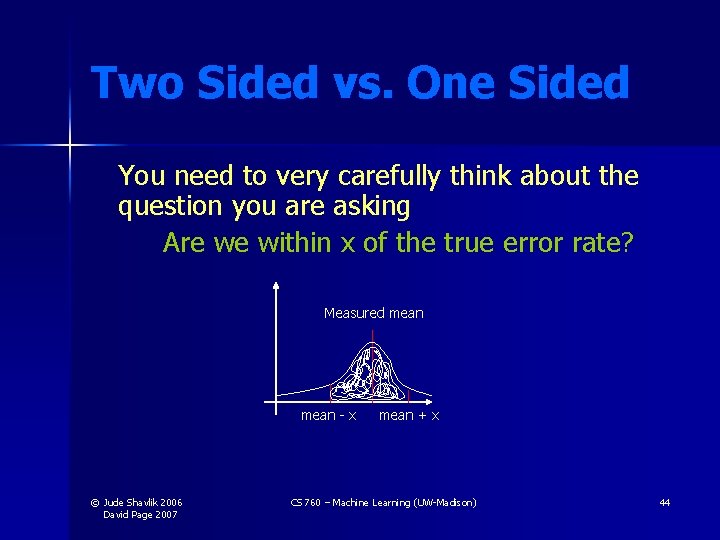

Two Sided vs. One Sided

You need to think very carefully about the question you are asking

Are we within x of the true error rate?

[Figure: distribution with the interval from (measured mean - x) to (measured mean + x) marked]

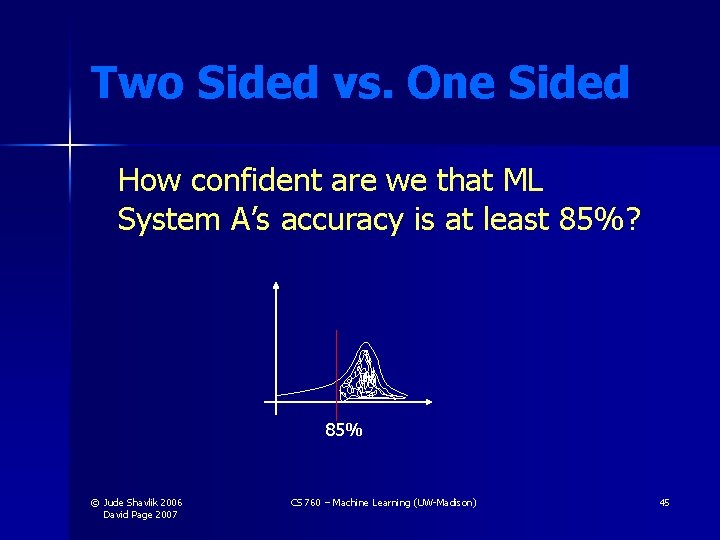

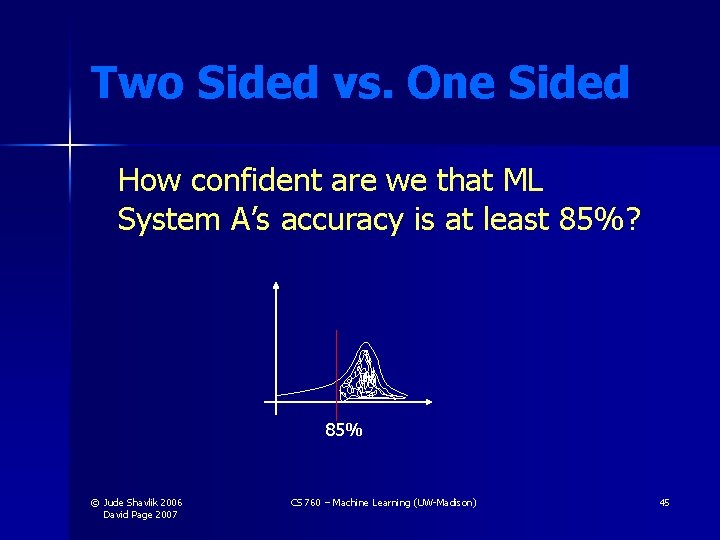

Two Sided vs. One Sided

How confident are we that ML System A’s accuracy is at least 85%?

[Figure: distribution with the 85% point marked]

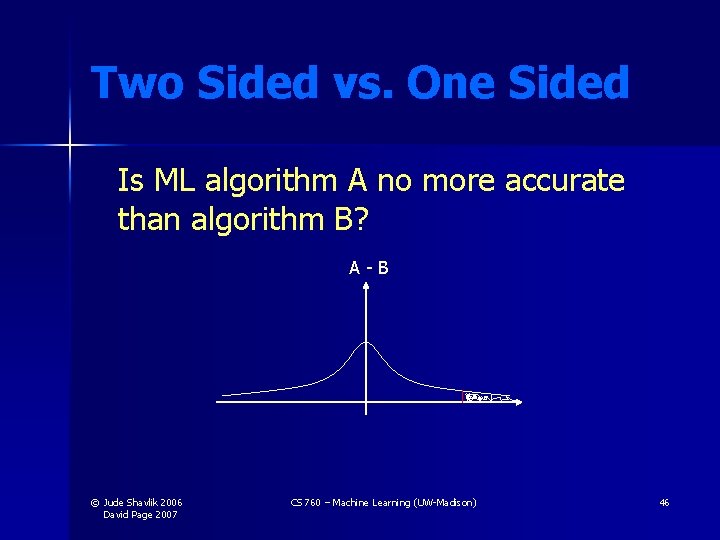

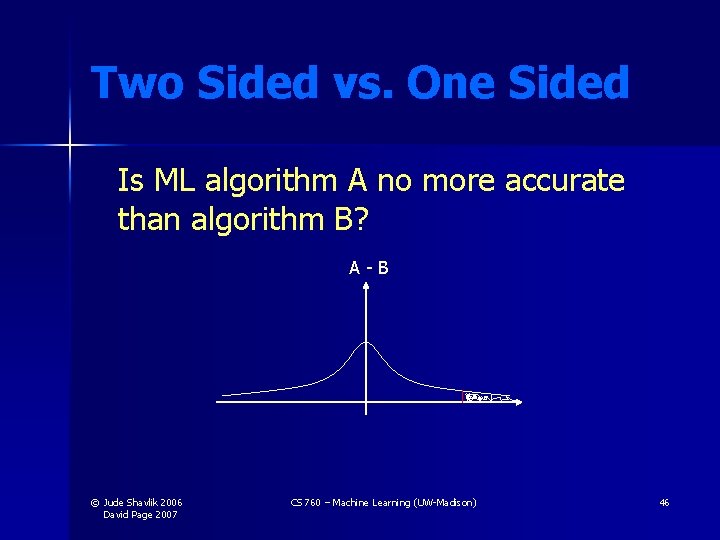

Two Sided vs. One Sided

Is ML algorithm A no more accurate than algorithm B?

[Figure: distribution of A - B]

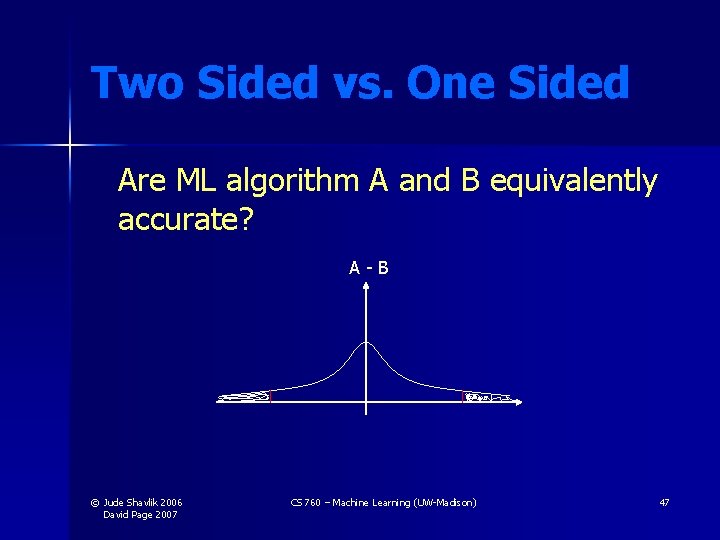

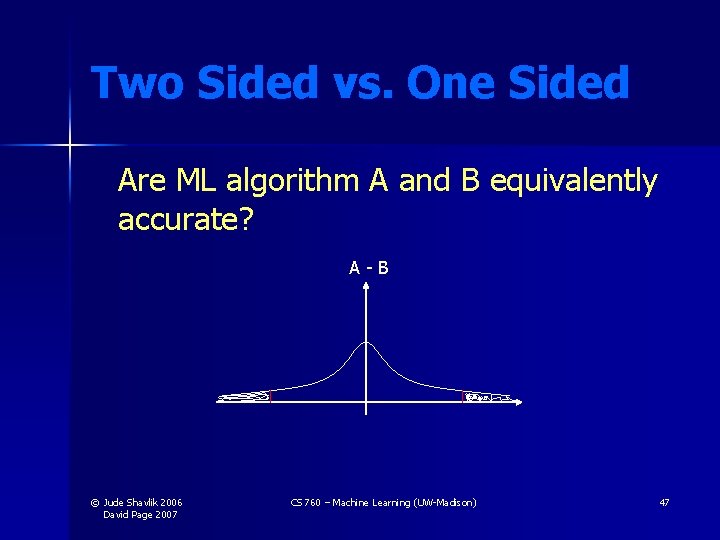

Two Sided vs. One Sided

Are ML algorithm A and B equivalently accurate?

[Figure: distribution of A - B]

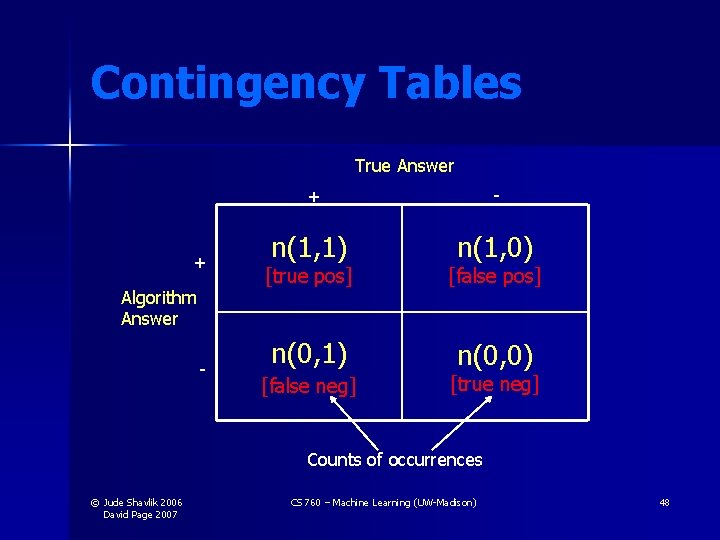

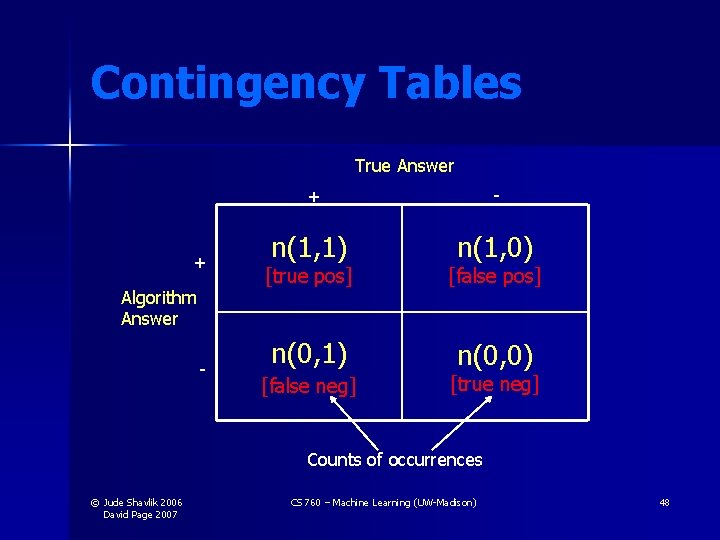

Contingency Tables

| | True Answer + | True Answer - |
|---|---|---|
| Algorithm Answer + | n(1,1) [true pos] | n(1,0) [false pos] |
| Algorithm Answer - | n(0,1) [false neg] | n(0,0) [true neg] |

Entries are counts of occurrences

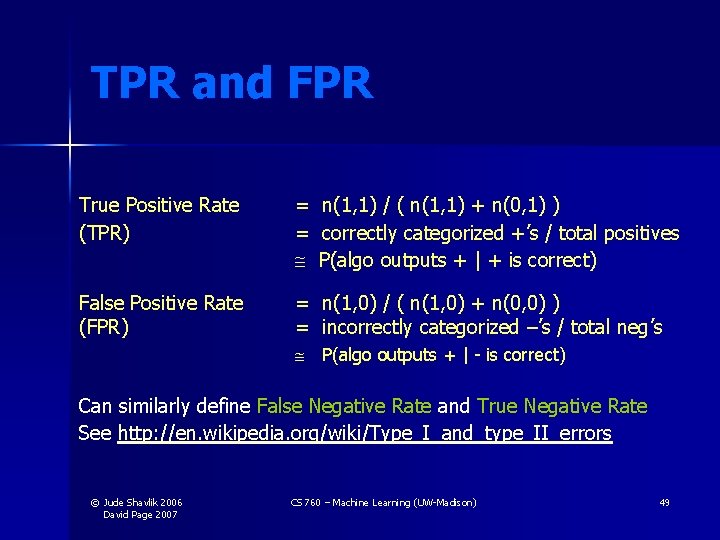

TPR and FPR

- True Positive Rate (TPR) = n(1,1) / (n(1,1) + n(0,1)) = correctly categorized +’s / total positives = P(algo outputs + | + is correct)
- False Positive Rate (FPR) = n(1,0) / (n(1,0) + n(0,0)) = incorrectly categorized –’s / total neg’s = P(algo outputs + | - is correct)

Can similarly define False Negative Rate and True Negative Rate

See http://en.wikipedia.org/wiki/Type_I_and_type_II_errors
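The four rates can be read straight off the contingency counts. In this sketch the argument names follow the n(algorithm answer, true answer) convention used above; the function name and the example counts are assumptions for illustration.

```python
def rates(n11, n10, n01, n00):
    """Rates from contingency counts n(algorithm answer, true answer)."""
    total_pos = n11 + n01   # examples whose true answer is +
    total_neg = n10 + n00   # examples whose true answer is -
    return {
        "TPR": n11 / total_pos,   # P(algo outputs + | + is correct)
        "FPR": n10 / total_neg,   # P(algo outputs + | - is correct)
        "FNR": n01 / total_pos,   # P(algo outputs - | + is correct)
        "TNR": n00 / total_neg,   # P(algo outputs - | - is correct)
    }

# Hypothetical counts: 100 positives and 1000 negatives in the test set.
r = rates(n11=75, n10=100, n01=25, n00=900)
```

Note that TPR + FNR = 1 and FPR + TNR = 1, so two of the four rates carry all the information.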

ROC Curves

- ROC: Receiver Operating Characteristics
- Originated in radar research during WWII
- Judging algorithms on accuracy alone may not be good enough when getting a positive wrong costs more than getting a negative wrong (or vice versa)
  - E.g., medical tests for serious diseases
  - E.g., a movie-recommender (à la Netflix) system

ROC Curves Graphically

[Figure: ROC space. Vertical axis: true positives rate, Prob(alg outputs + | + is correct), up to 1.0. Horizontal axis: false positives rate, Prob(alg outputs + | - is correct), up to 1.0. The “Ideal Spot” is marked, and curves for Alg 1 and Alg 2 are shown.]

Different algorithms can work better in different parts of ROC space. This depends on the cost of false + vs. false -

Creating an ROC Curve: the Standard Approach

- You need an ML algorithm that outputs NUMERIC results such as prob(example is +)
- You can use ensembles (later) to get this from a model that only provides Boolean outputs
  - E.g., have 100 models vote & count votes

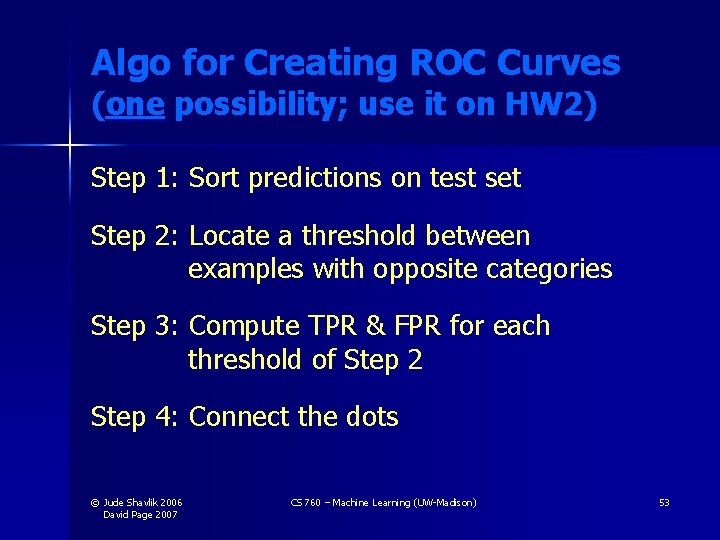

Algo for Creating ROC Curves (one possibility; use it on HW 2)

- Step 1: Sort predictions on test set
- Step 2: Locate a threshold between examples with opposite categories
- Step 3: Compute TPR & FPR for each threshold of Step 2
- Step 4: Connect the dots

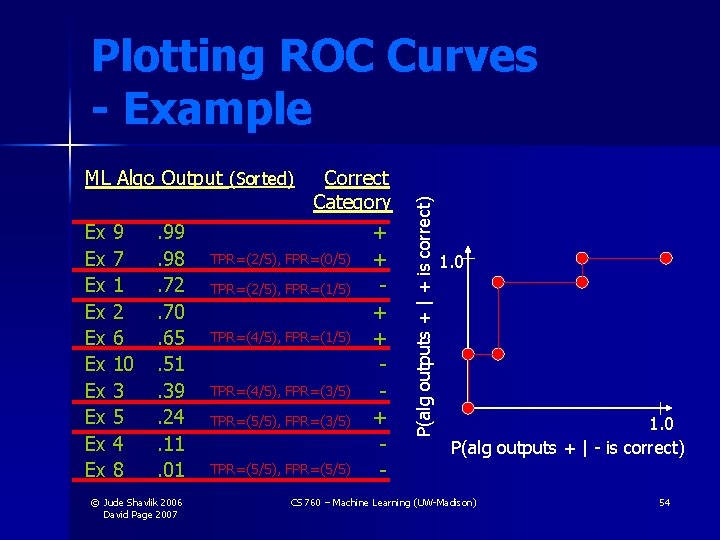

Plotting ROC Curves: Example

| Example | ML Algo Output (Sorted) |
|---|---|
| Ex 9 | .99 |
| Ex 7 | .98 |
| Ex 1 | .72 |
| Ex 2 | .70 |
| Ex 6 | .65 |
| Ex 10 | .51 |
| Ex 3 | .39 |
| Ex 5 | .24 |
| Ex 4 | .11 |
| Ex 8 | .01 |

Each example also has a correct category (+ or -). Thresholds placed between examples of opposite categories give these points:

- TPR = 2/5, FPR = 0/5
- TPR = 2/5, FPR = 1/5
- TPR = 4/5, FPR = 3/5
- TPR = 5/5, FPR = 3/5
- TPR = 5/5, FPR = 5/5

[Figure: the resulting ROC plot, P(alg outputs + | + is correct) against P(alg outputs + | - is correct), each axis up to 1.0]
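The four-step algorithm can be sketched as follows. The scores are the ten sorted outputs from the example; the +/- labels are an assumed assignment (five positives, five negatives) chosen to be consistent with the listed TPR/FPR points, not values recovered from the slide. The function name is hypothetical, and tied scores are not handled.

```python
def roc_points(scores, labels):
    """Return (FPR, TPR) points, one per threshold between opposite categories."""
    # Step 1: sort predictions, highest score first.
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    n_pos = sum(1 for _, is_pos in ranked if is_pos)
    n_neg = len(ranked) - n_pos
    points = [(0.0, 0.0)]       # threshold above every score
    tp = fp = 0
    for i, (_, is_pos) in enumerate(ranked):
        if is_pos:
            tp += 1
        else:
            fp += 1
        is_last = i == len(ranked) - 1
        # Step 2: a threshold goes wherever the next example's category differs.
        if is_last or ranked[i + 1][1] != is_pos:
            points.append((fp / n_neg, tp / n_pos))   # Step 3
    return points               # Step 4: connect these dots in order

scores = [.99, .98, .72, .70, .65, .51, .39, .24, .11, .01]
labels = [True, True, False, True, True, False, False, True, False, False]  # assumed
curve = roc_points(scores, labels)
```

Placing thresholds only where the category changes keeps the corner points of the staircase and drops the redundant points along each straight run.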

ROC’s and Many Models (not in the ensemble sense)

- It is not necessary that we learn one model and then threshold its output to produce an ROC curve
- You could learn different models for different regions of ROC space
- E.g., see Goadrich, Oliphant, & Shavlik, ILP ’04 and MLJ ’06

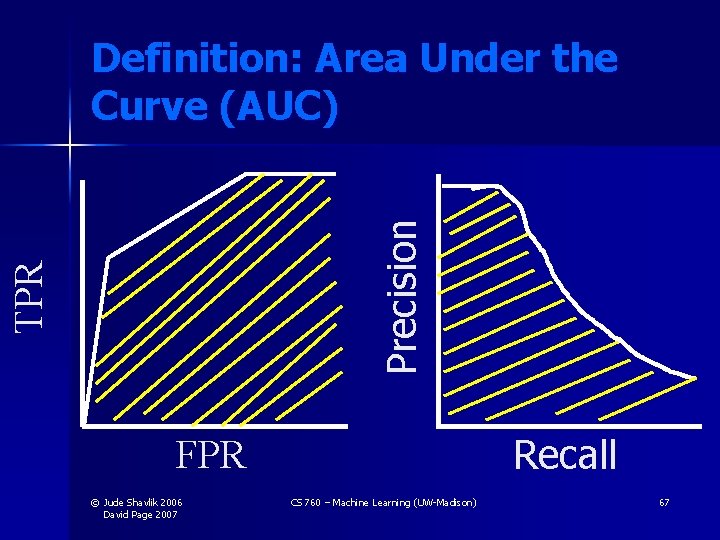

Area Under ROC Curve

A common metric for experiments is to numerically integrate the ROC curve

[Figure: ROC curve, true positives (up to 1.0) against false positives (up to 1.0), with the area under the curve as the metric]
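One simple way to integrate an ROC curve numerically is the trapezoid rule over its (FPR, TPR) points. This is a sketch with a hypothetical function name and a made-up list of points; it assumes the points describe a curve that never moves left as TPR rises.

```python
def area_under_curve(points):
    """Trapezoid-rule area under a curve given as (x, y) points."""
    pts = sorted(points)   # order by x, then y
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(pts, pts[1:]))

# Illustrative (FPR, TPR) points, including the (0, 0) and (1, 1) end points.
roc = [(0.0, 0.0), (0.0, 0.4), (0.2, 0.4), (0.2, 0.8),
       (0.6, 0.8), (0.6, 1.0), (1.0, 1.0)]
auc = area_under_curve(roc)
```

A curve along the diagonal from (0, 0) to (1, 1) scores 0.5 under this measure, and a curve through the top-left corner scores 1.0.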

Asymmetric Error Costs

- Assume that cost(FP) != cost(FN)
- You would like to pick a threshold that minimizes

  E(total cost) = cost(FP) × prob(FP) × (# of -) + cost(FN) × prob(FN) × (# of +)

- You could also have (maybe negative) costs for TP and TN (assumed zero in above)
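A sketch of picking the operating point that minimizes this expected cost. It reads prob(FP) as the false positive rate and prob(FN) as 1 - TPR, which is an interpretation rather than something the slide states; the function names, the ROC points and the costs are all made up for the example.

```python
def expected_cost(fpr, tpr, n_neg, n_pos, cost_fp, cost_fn):
    """cost(FP) * prob(FP) * (# of -) + cost(FN) * prob(FN) * (# of +)."""
    fnr = 1 - tpr   # assumed reading of prob(FN)
    return cost_fp * fpr * n_neg + cost_fn * fnr * n_pos

def best_operating_point(roc, n_neg, n_pos, cost_fp, cost_fn):
    """Return the (FPR, TPR) point, i.e. threshold, with the lowest cost."""
    return min(roc, key=lambda pt: expected_cost(pt[0], pt[1], n_neg, n_pos,
                                                 cost_fp, cost_fn))

roc = [(0.0, 0.0), (0.0, 0.4), (0.2, 0.8), (0.6, 1.0), (1.0, 1.0)]

# Missing a positive is 10x worse than a false alarm: accept more false positives.
fn_costly = best_operating_point(roc, n_neg=5, n_pos=5, cost_fp=1, cost_fn=10)
# A false alarm is 10x worse: choose a conservative threshold instead.
fp_costly = best_operating_point(roc, n_neg=5, n_pos=5, cost_fp=10, cost_fn=1)
```

The same ROC curve yields different best thresholds as the cost ratio changes, which is why accuracy alone can be a poor guide.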

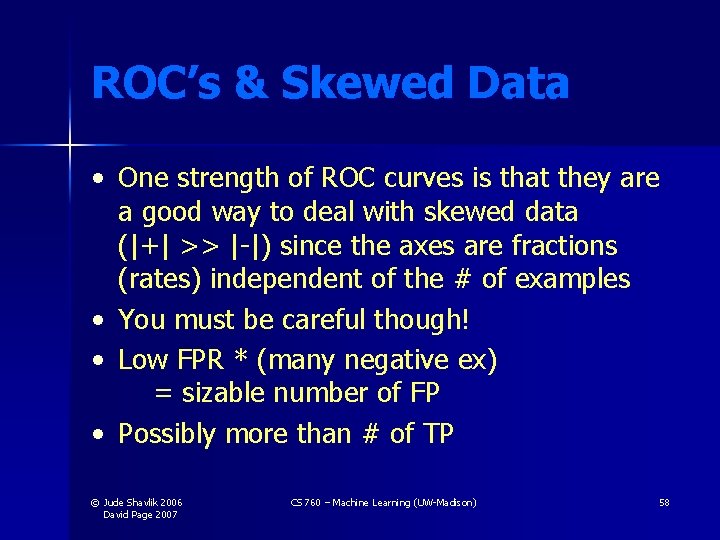

ROC’s & Skewed Data

- One strength of ROC curves is that they are a good way to deal with skewed data (|+| >> |-|), since the axes are fractions (rates) independent of the # of examples
- You must be careful though!
  - Low FPR × (many negative ex) = sizable number of FP
  - Possibly more than # of TP

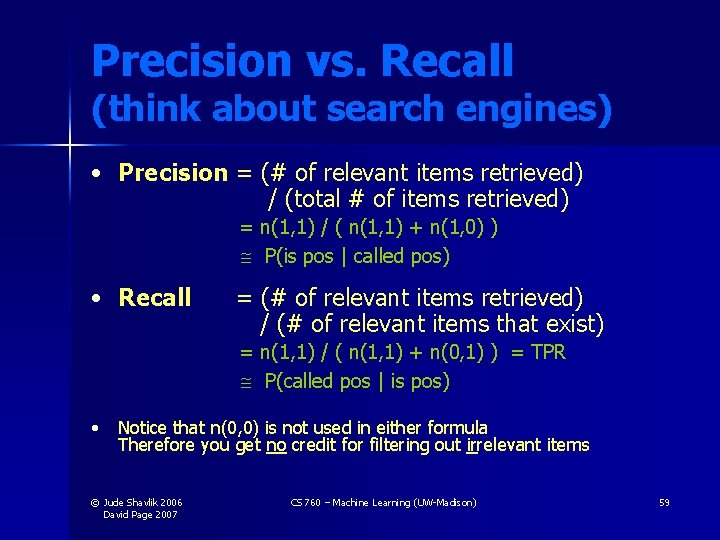

Precision vs. Recall (think about search engines)

- Precision = (# of relevant items retrieved) / (total # of items retrieved) = n(1,1) / (n(1,1) + n(1,0)) = P(is pos | called pos)
- Recall = (# of relevant items retrieved) / (# of relevant items that exist) = n(1,1) / (n(1,1) + n(0,1)) = TPR = P(called pos | is pos)
- Notice that n(0,0) is not used in either formula. Therefore you get no credit for filtering out irrelevant items
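The two formulas in code, as a small sketch with hypothetical function names and made-up counts. Neither function takes the true-negative count n(0,0) as an argument, which mirrors the observation above.

```python
def precision(n11, n10):
    """P(is pos | called pos): relevant retrieved / total retrieved."""
    return n11 / (n11 + n10)

def recall(n11, n01):
    """P(called pos | is pos): relevant retrieved / relevant that exist."""
    return n11 / (n11 + n01)

# Hypothetical counts: 75 true positives, 100 false positives, 25 false negatives.
prec = precision(n11=75, n10=100)
rec = recall(n11=75, n01=25)
```

Here recall is high while precision is below one half, because the false positives outnumber the true positives.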

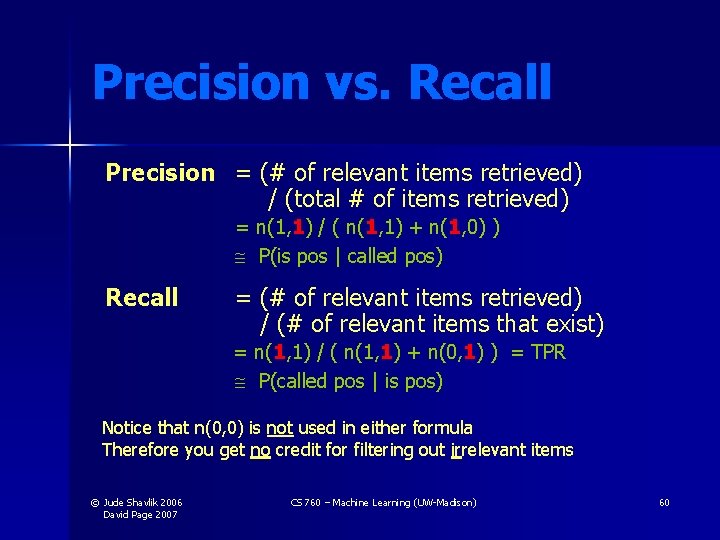


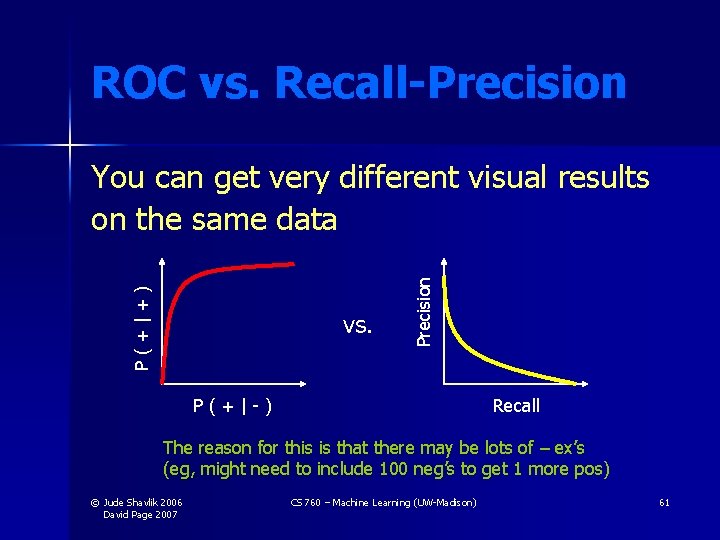

ROC vs. Recall-Precision

You can get very different visual results on the same data

[Figures: an ROC curve, P(+|+) against P(+|-), next to a recall-precision curve, precision against recall]

The reason for this is that there may be lots of – ex’s (e.g., might need to include 100 neg’s to get 1 more pos)

Recall-Precision Curves

You cannot simply connect the dots in Recall-Precision curves (OK to do in ROC’s)

[Figure: precision plotted against recall]

See Goadrich, Oliphant, & Shavlik, ILP ’04 or MLJ ’06

Interpolating in PR Space

- Would like to interpolate correctly, then remove points that lie below the interpolation
- Analogous to convex hull in ROC space
- Can you do it efficiently?
- Yes: convert to ROC space, take convex hull, convert back to PR space (Davis & Goadrich, ICML-06)

The Relationship between Precision-Recall and ROC Curves

Jesse Davis & Mark Goadrich, Department of Computer Sciences, University of Wisconsin

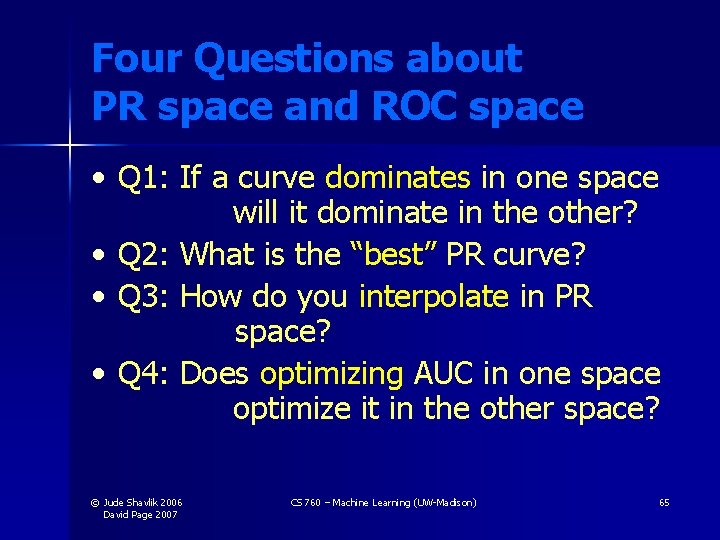

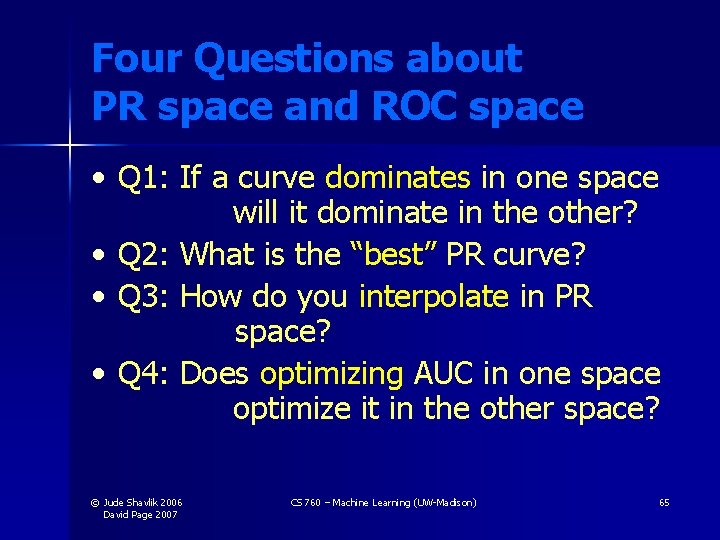

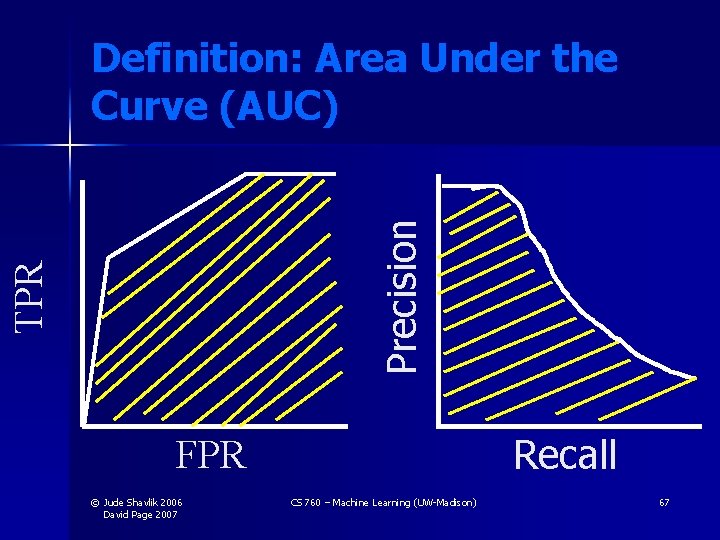

Four Questions about PR space and ROC space

- Q1: If a curve dominates in one space will it dominate in the other?
- Q2: What is the “best” PR curve?
- Q3: How do you interpolate in PR space?
- Q4: Does optimizing AUC in one space optimize it in the other space?

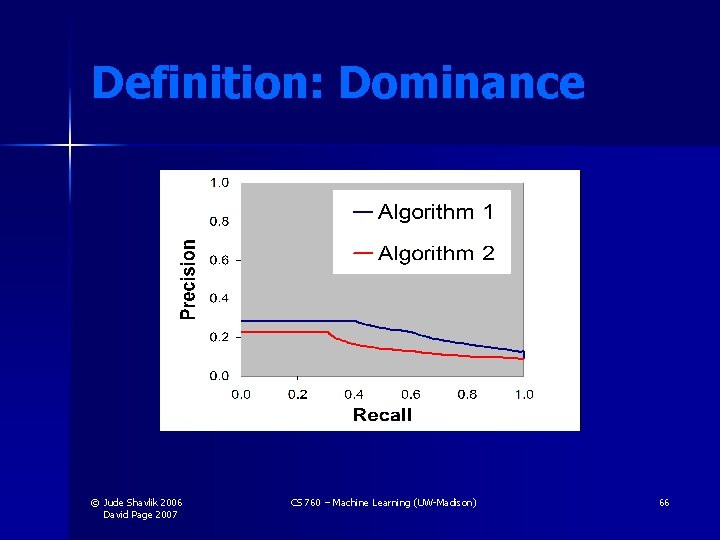

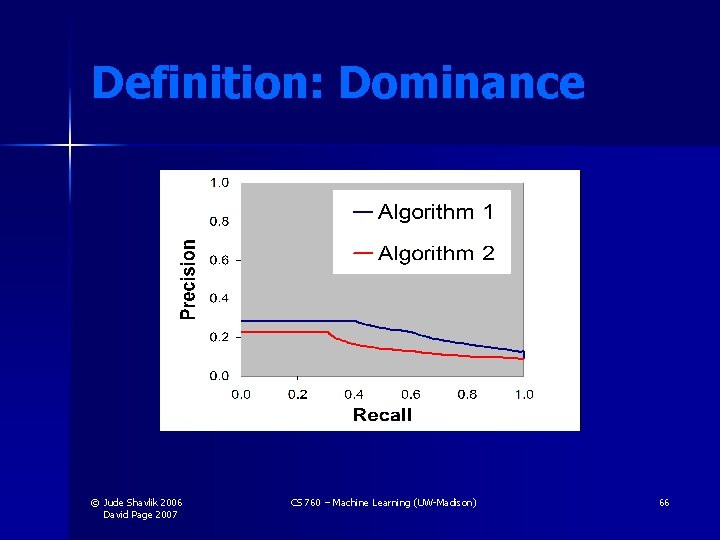

Definition: Dominance

Definition: Area Under the Curve (AUC)

[Figures: an ROC curve (TPR against FPR) and a PR curve (Precision against Recall)]

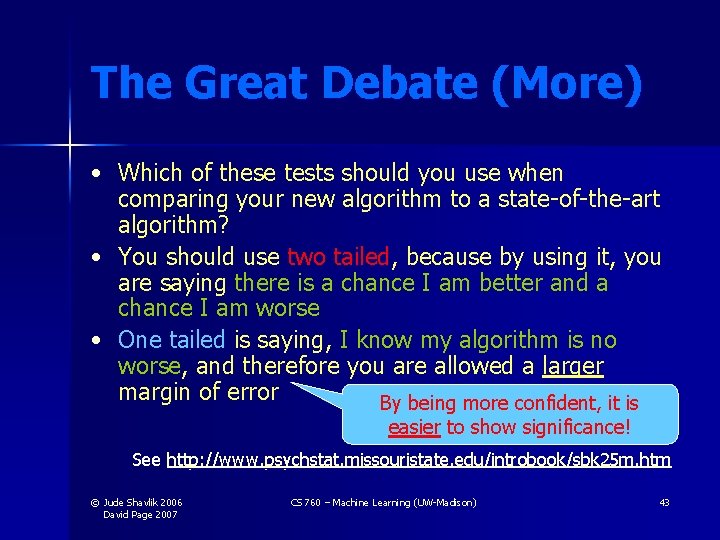

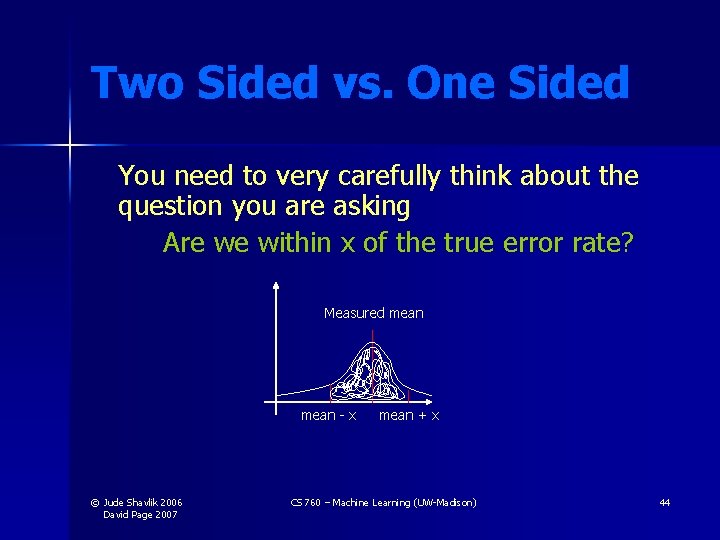

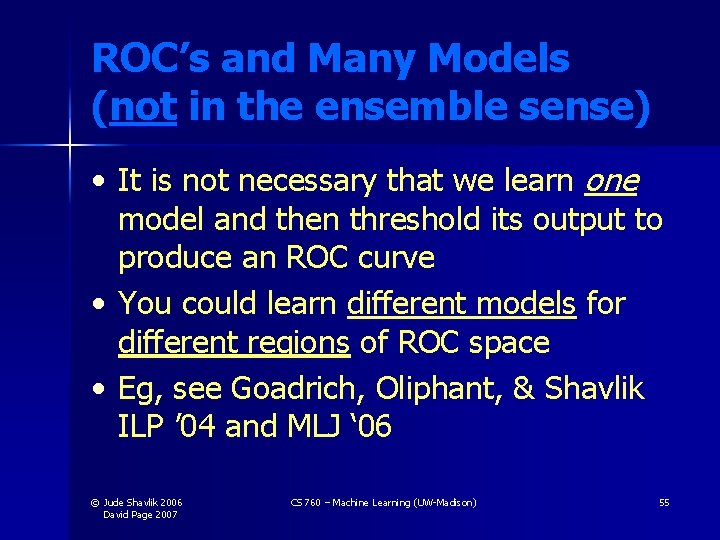

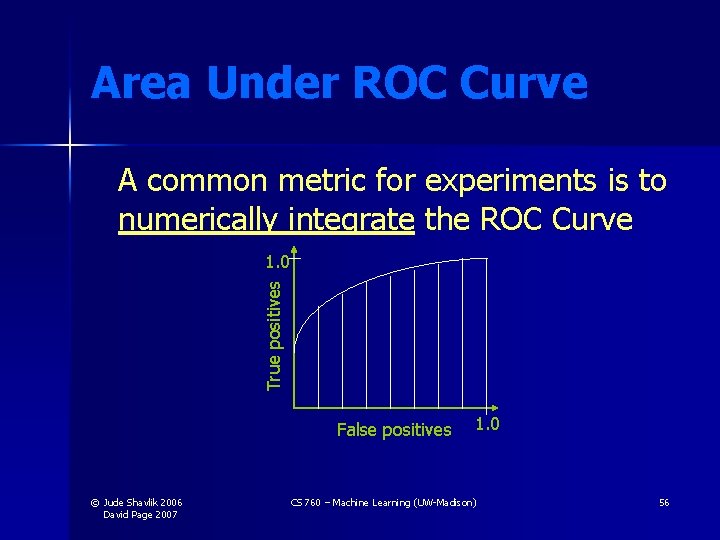

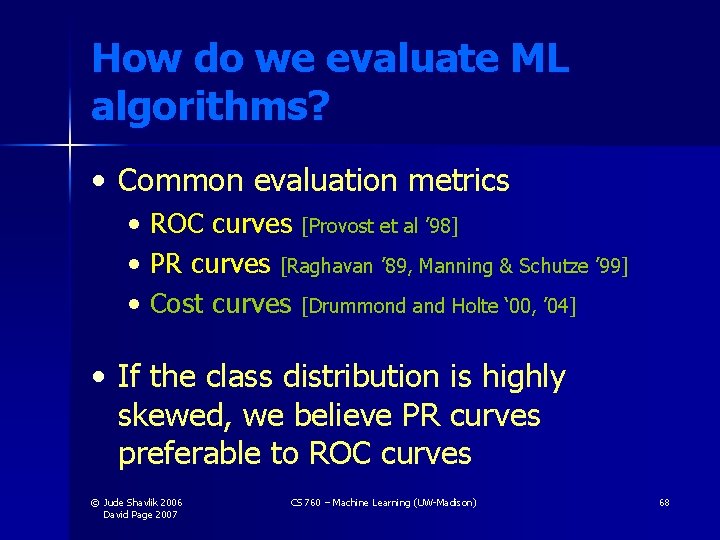

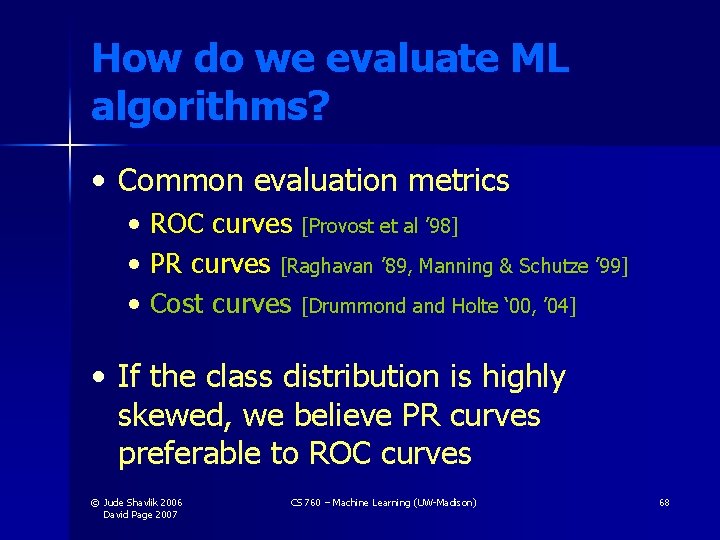

How do we evaluate ML algorithms?

- Common evaluation metrics
  - ROC curves [Provost et al ’98]
  - PR curves [Raghavan ’89, Manning & Schutze ’99]
  - Cost curves [Drummond and Holte ’00, ’04]
- If the class distribution is highly skewed, we believe PR curves are preferable to ROC curves

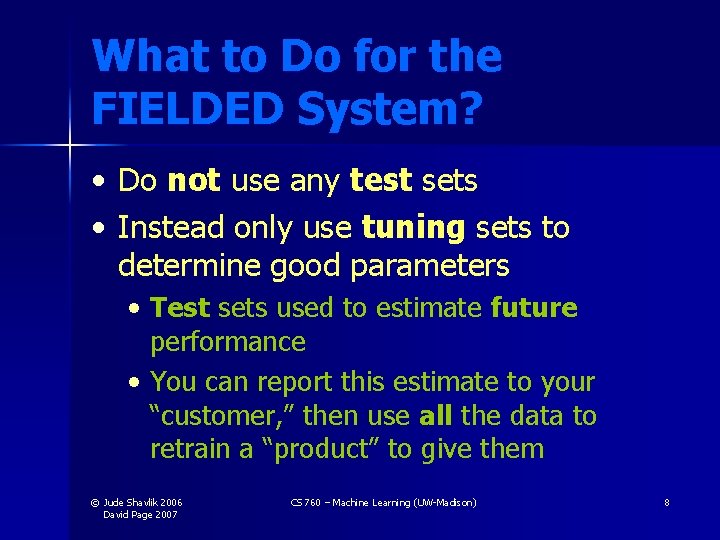

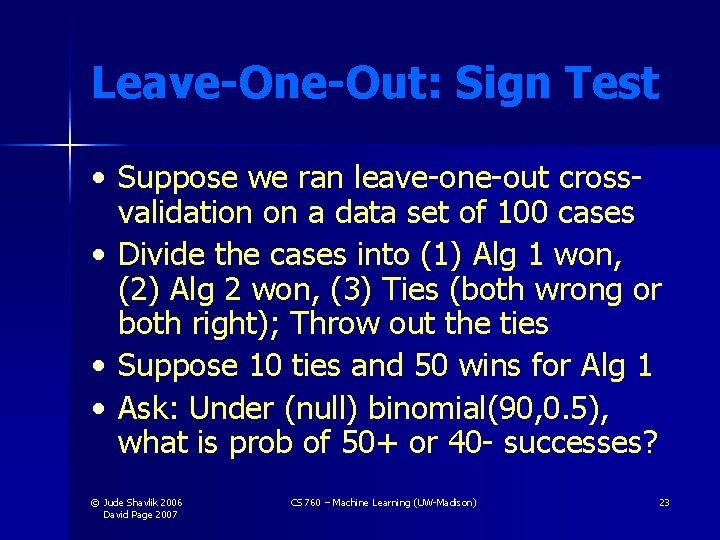

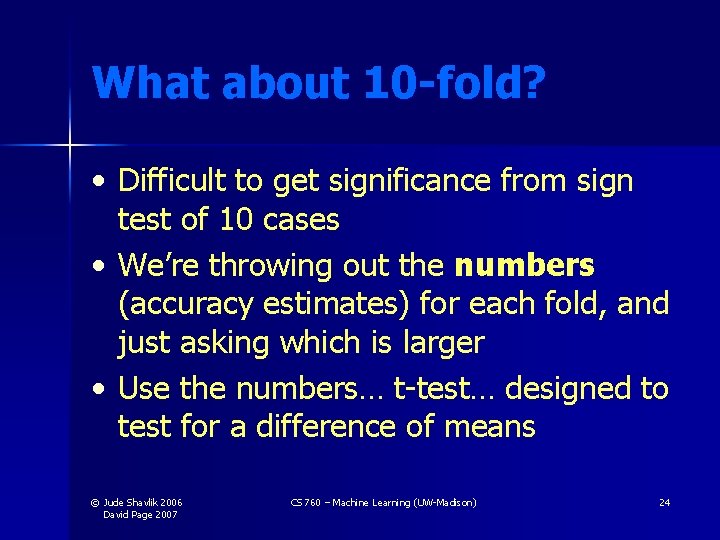

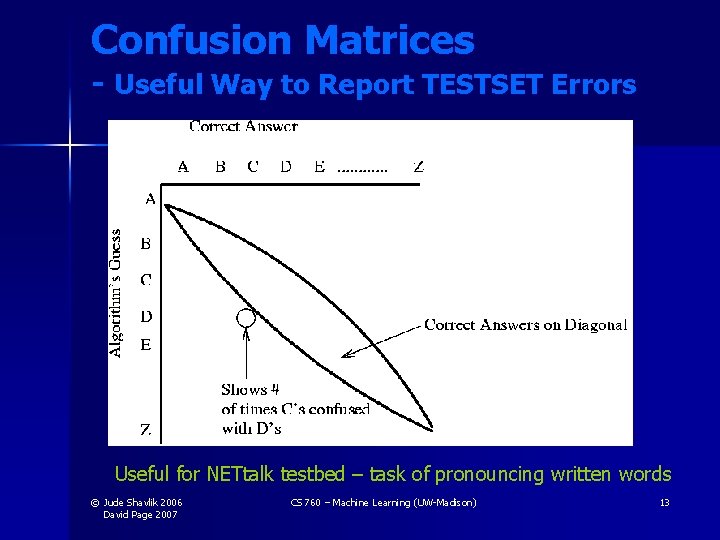

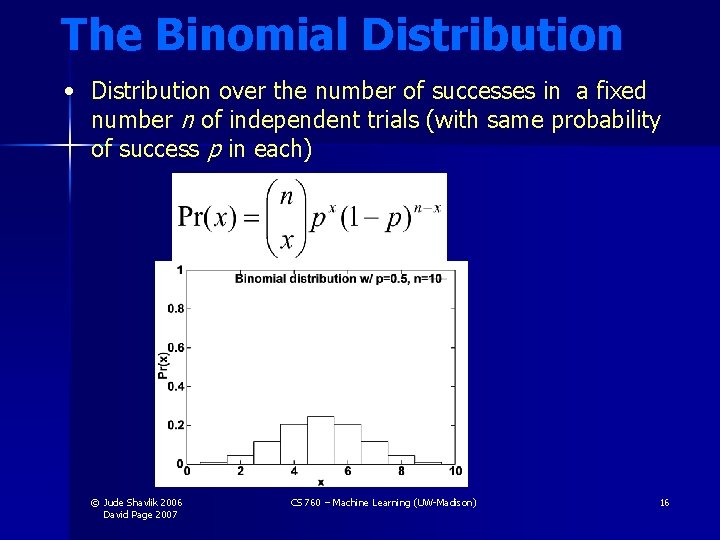

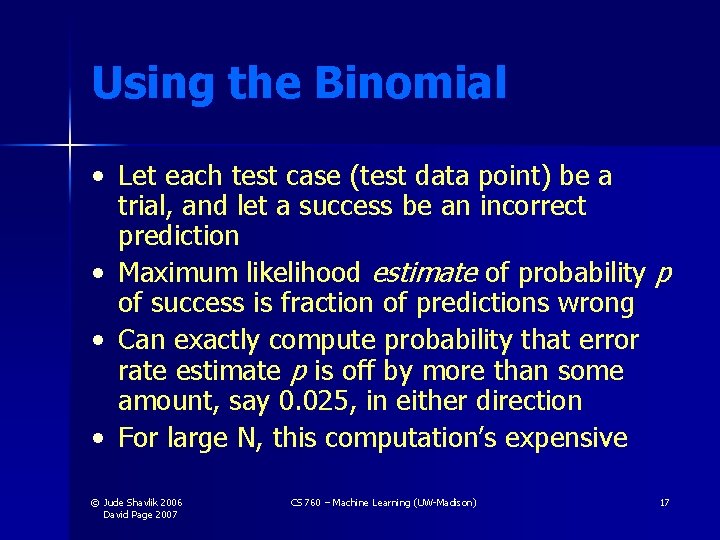

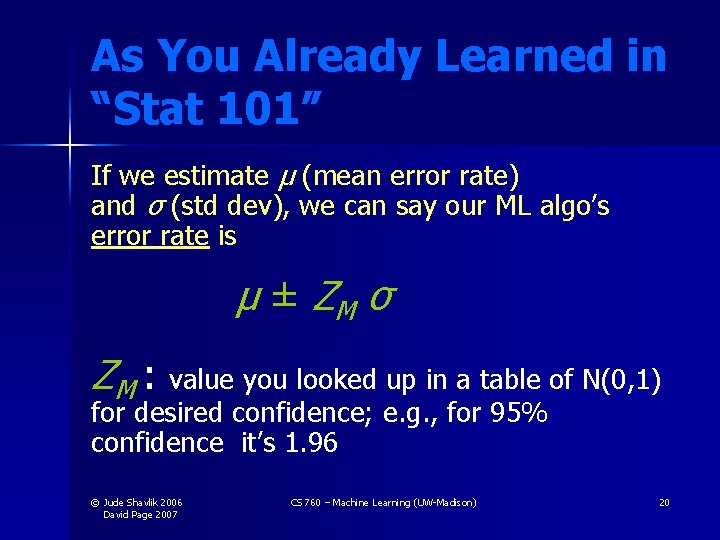

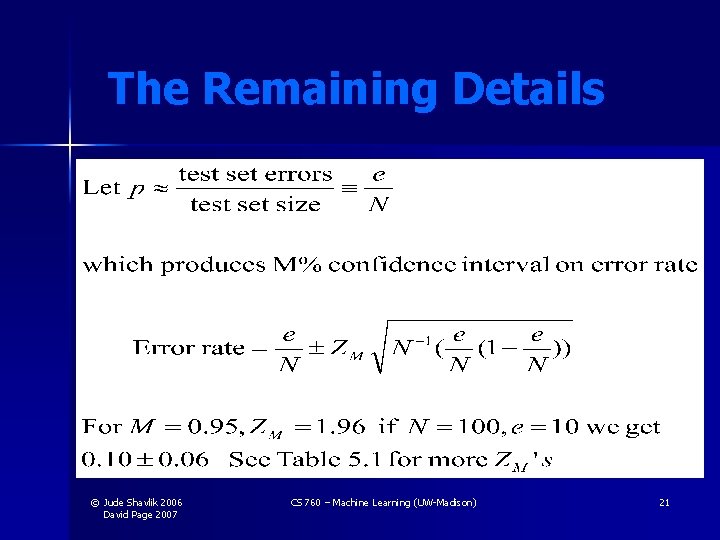

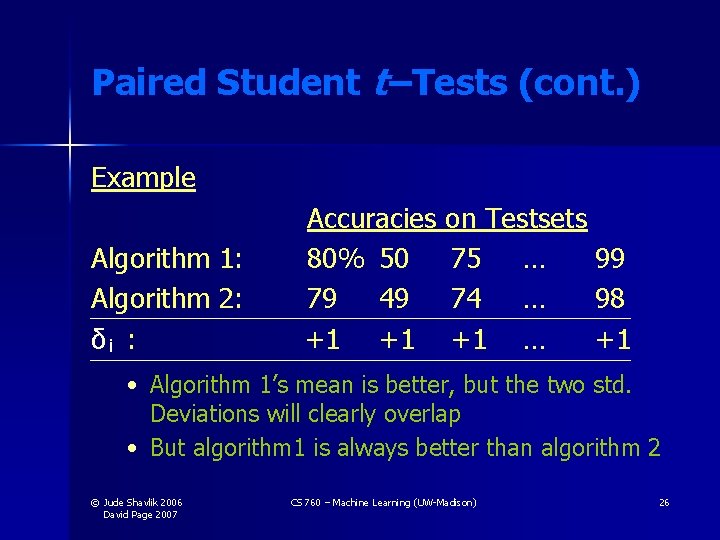

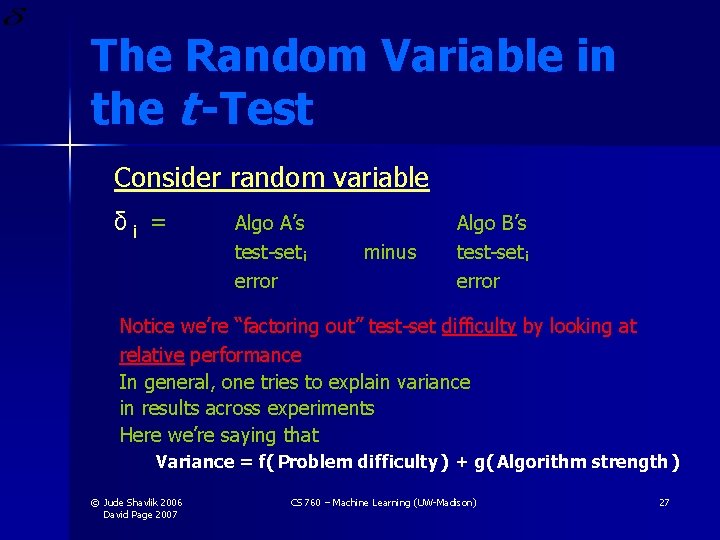

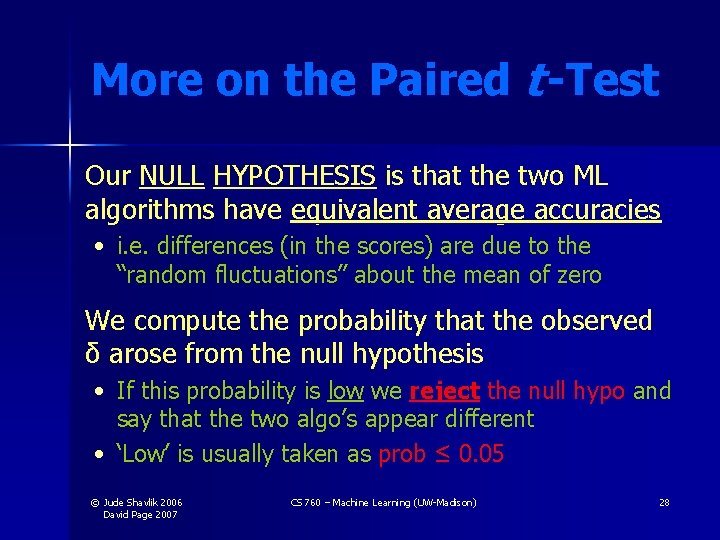

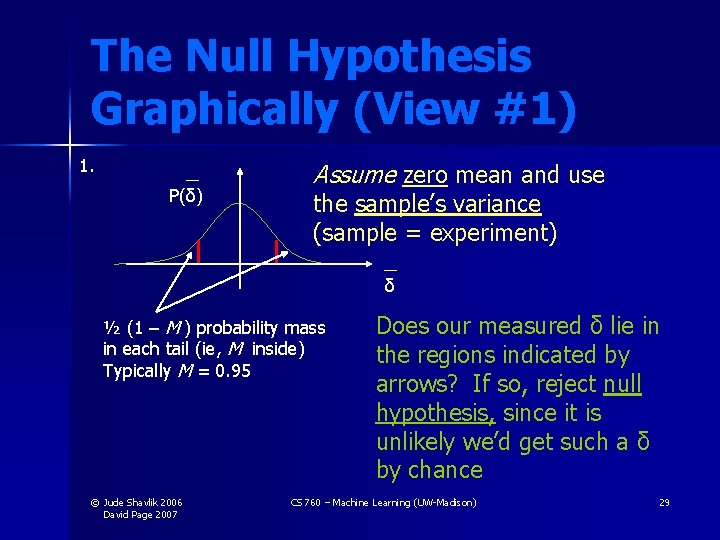

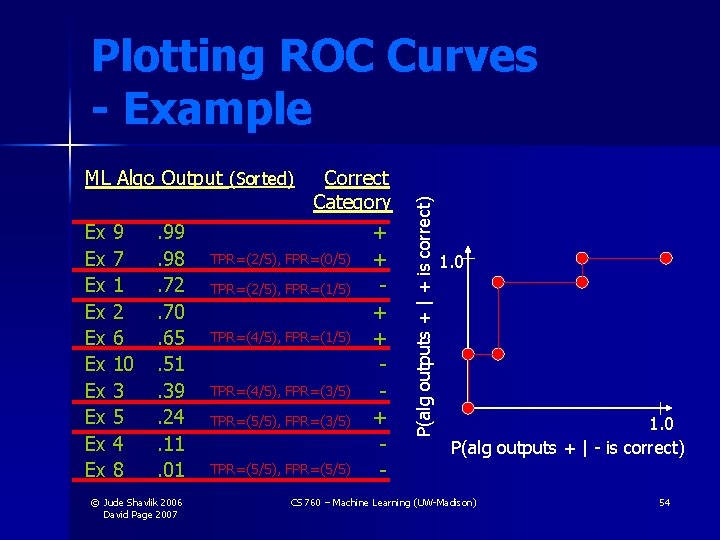

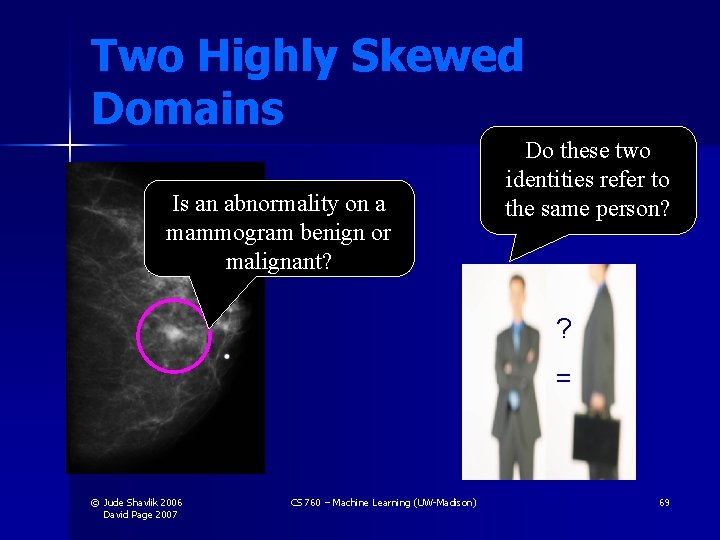

Two Highly Skewed Domains

- Is an abnormality on a mammogram benign or malignant?
- Do these two identities refer to the same person?

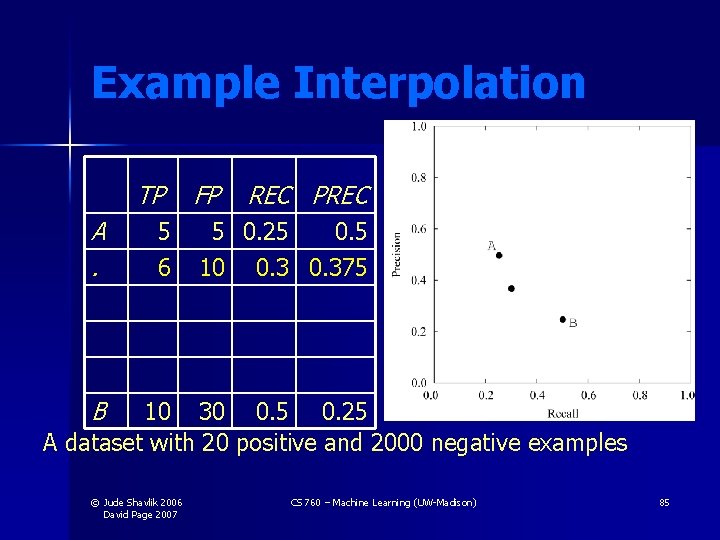

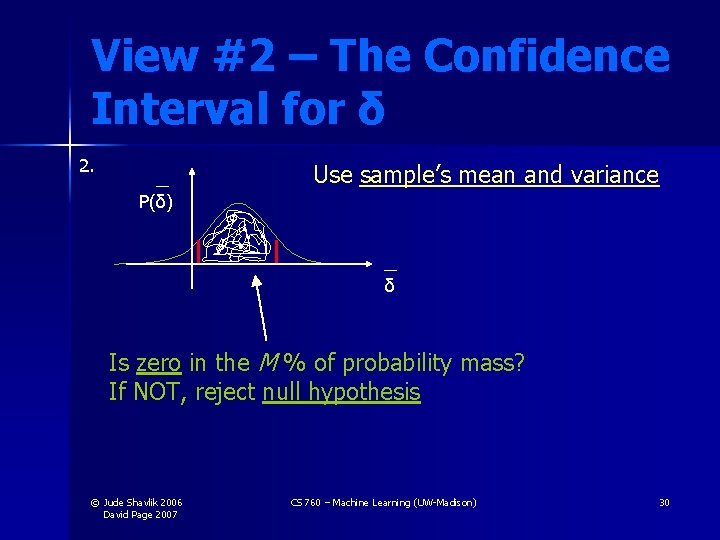

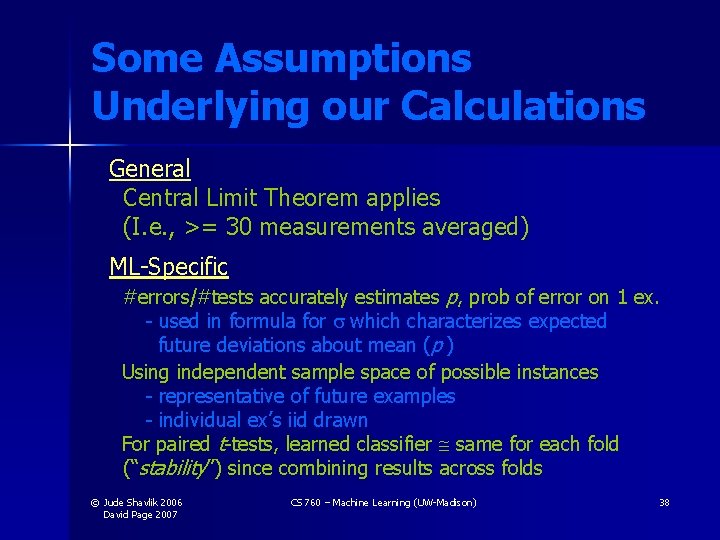

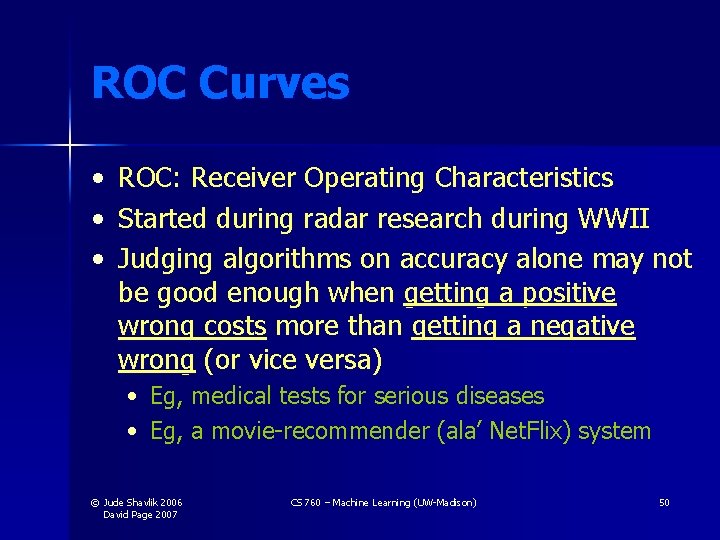

![Diagnosing Breast Cancer Real Data Davis et al IJCAI 2005 Jude Shavlik 2006 Diagnosing Breast Cancer [Real Data: Davis et al. IJCAI 2005] © Jude Shavlik 2006](https://slidetodoc.com/presentation_image_h2/f776afcae3c7d0f39443053fcbbf650c/image-70.jpg)

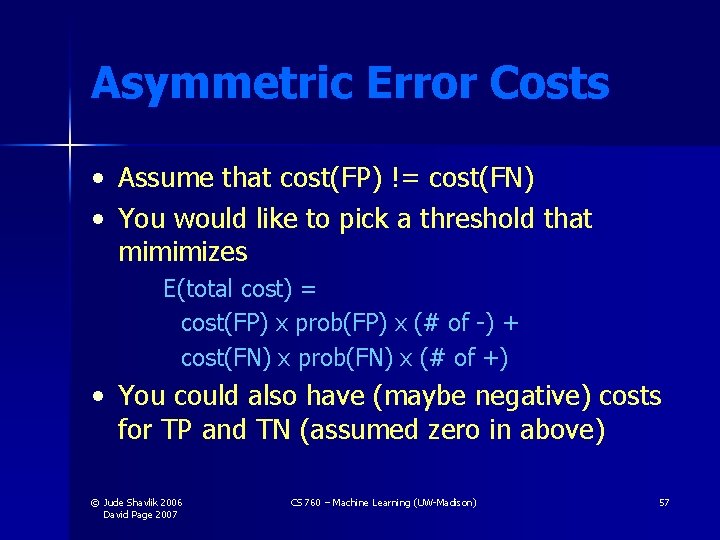

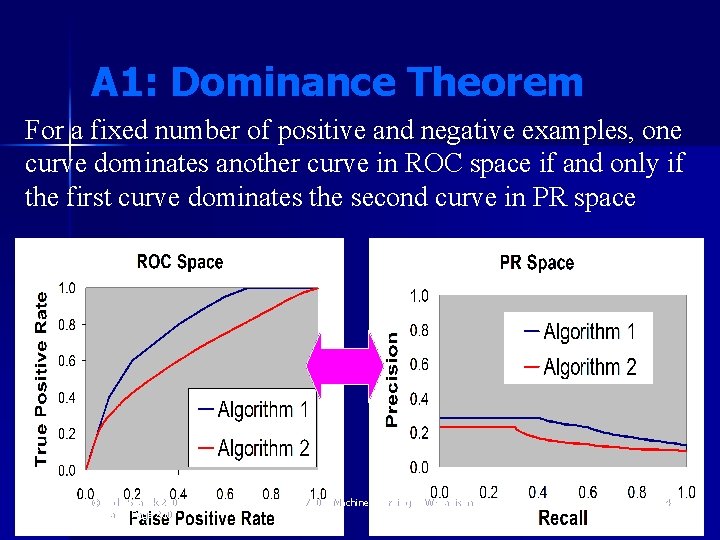

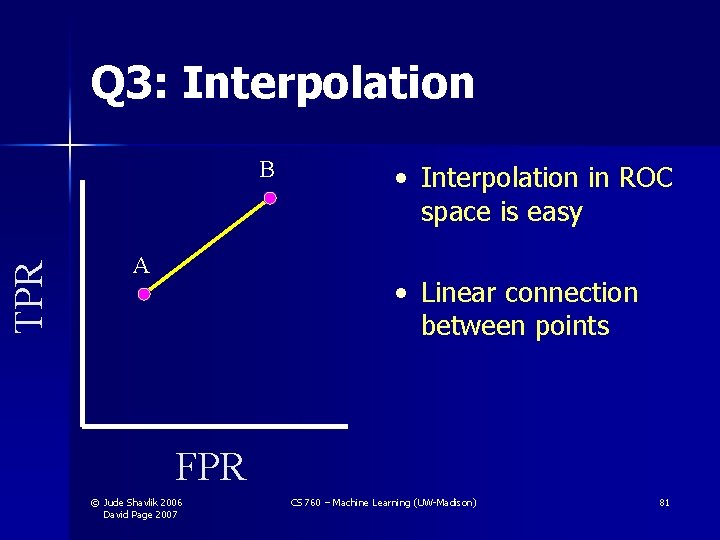

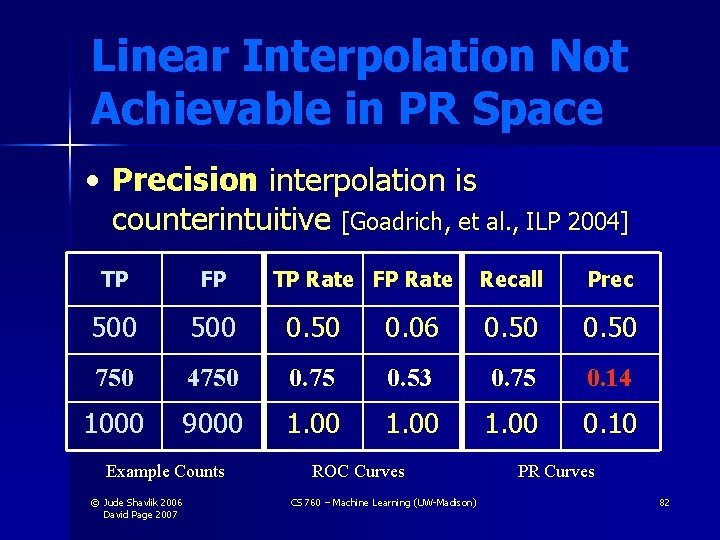

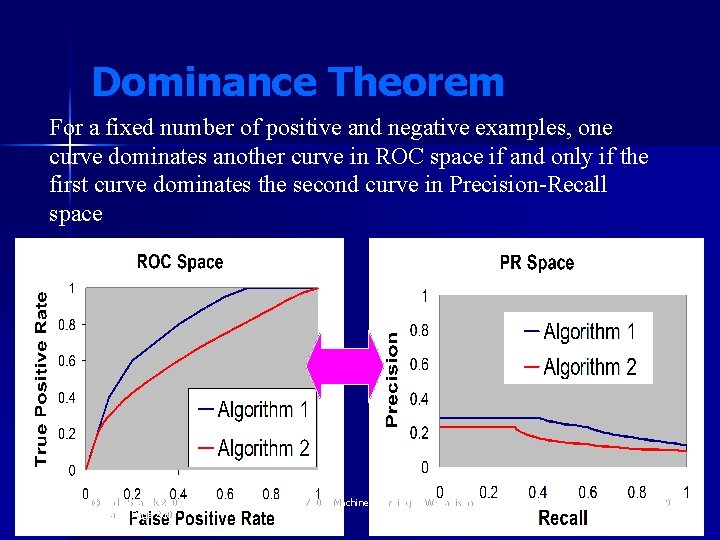

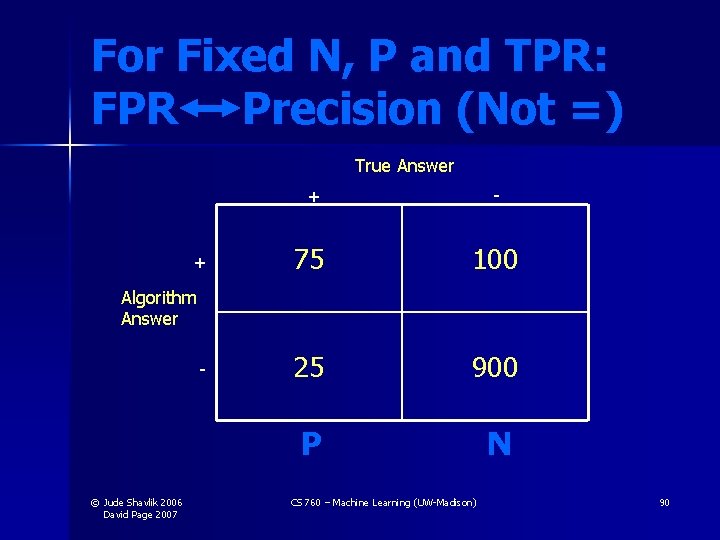

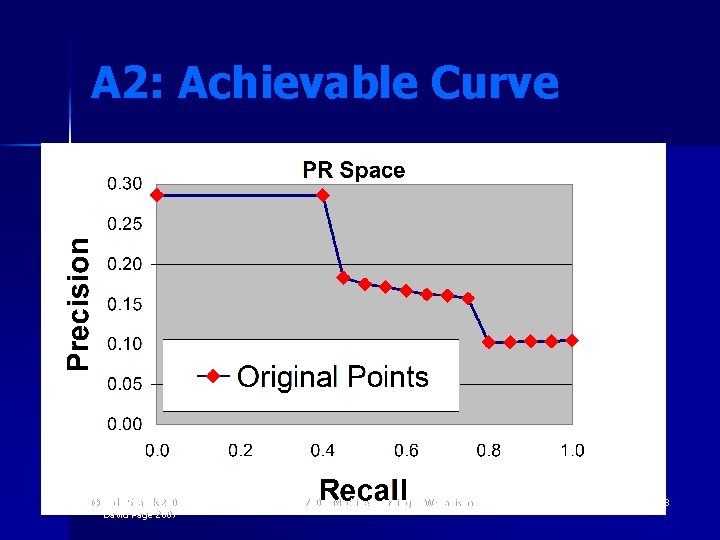

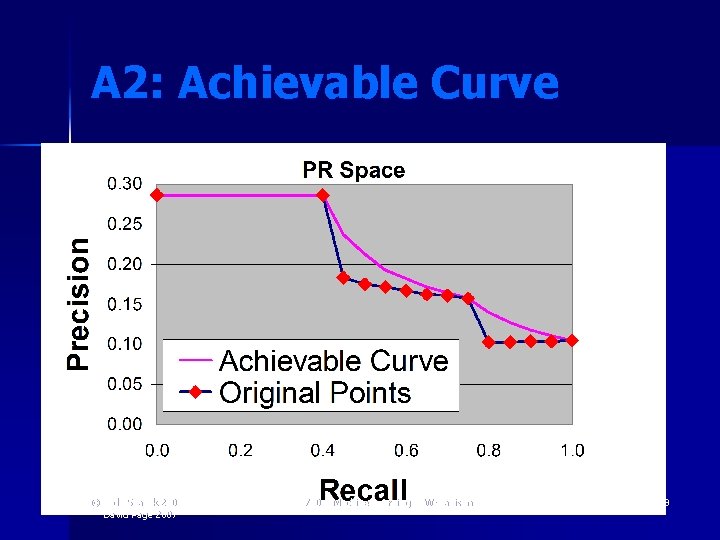

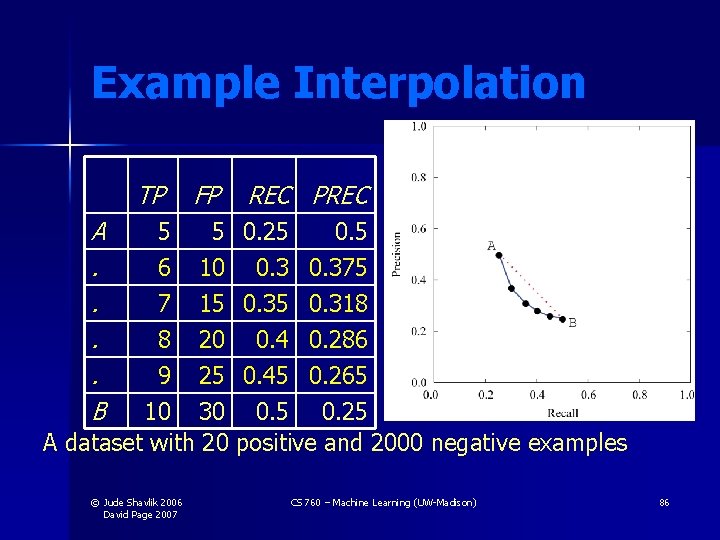

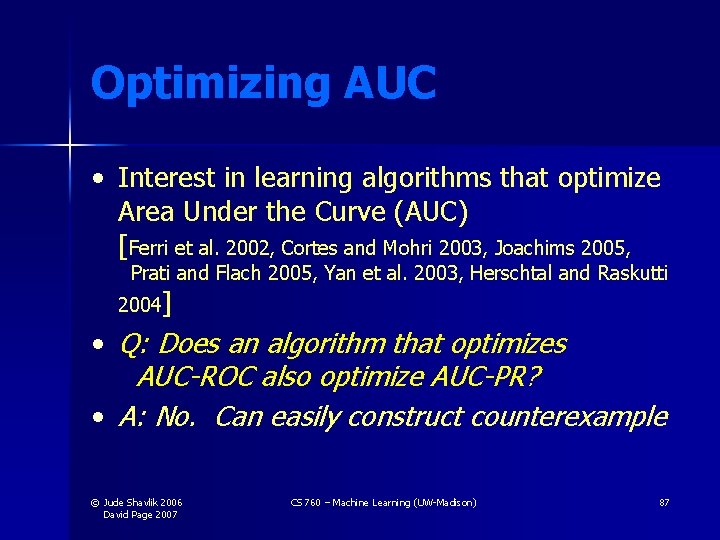

Diagnosing Breast Cancer [Real Data: Davis et al. IJCAI 2005]

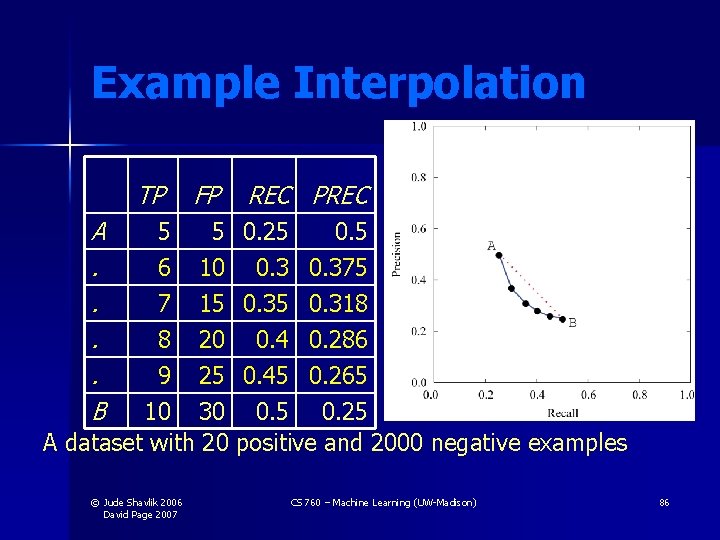

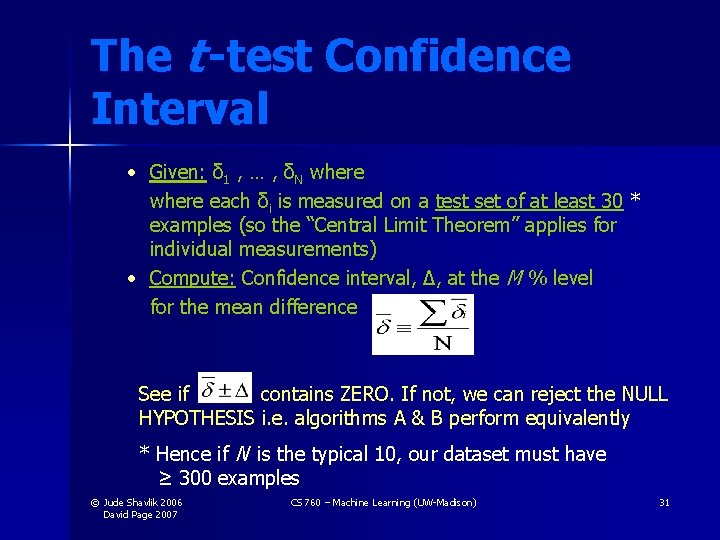

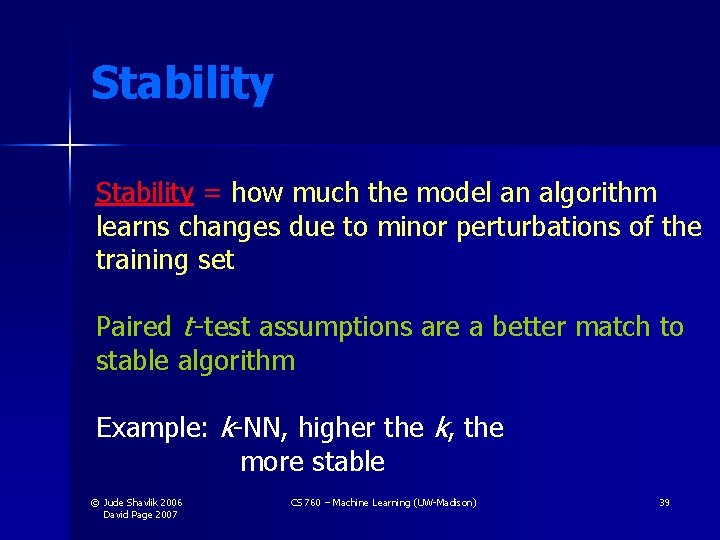

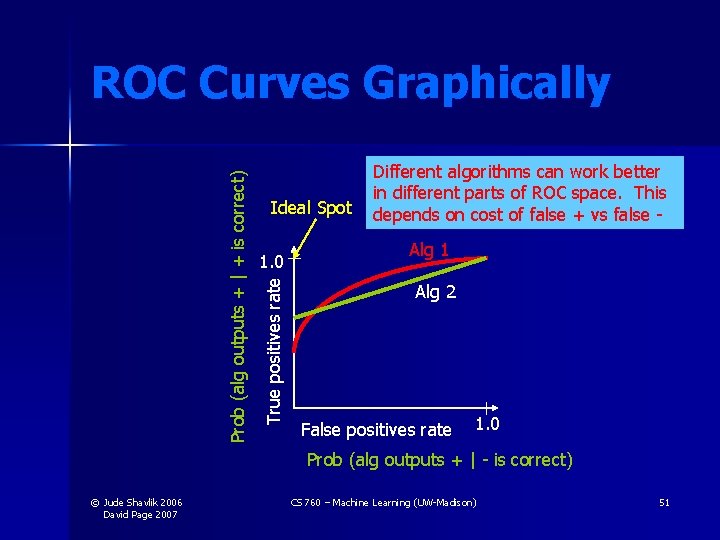

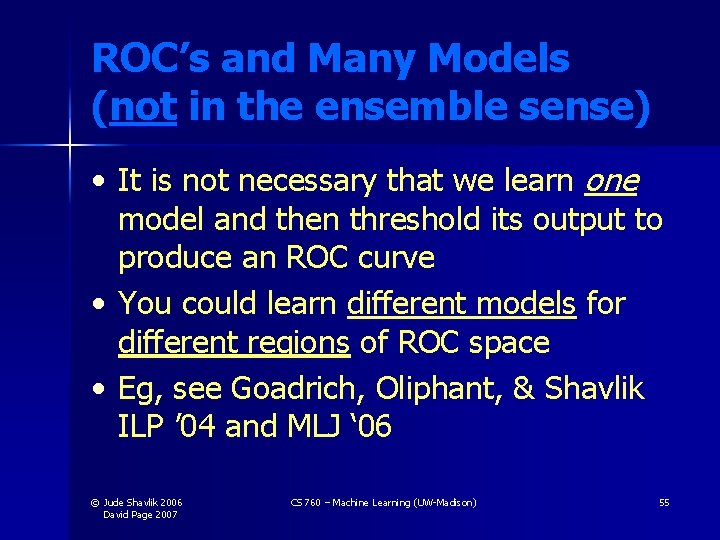

![Diagnosing Breast Cancer Real Data Davis et al IJCAI 2005 Jude Shavlik 2006 Diagnosing Breast Cancer [Real Data: Davis et al. IJCAI 2005] © Jude Shavlik 2006](https://slidetodoc.com/presentation_image_h2/f776afcae3c7d0f39443053fcbbf650c/image-71.jpg)

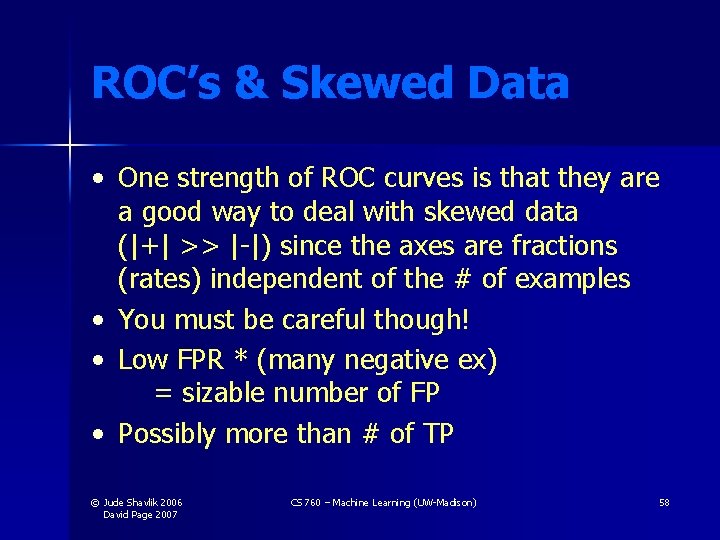

Diagnosing Breast Cancer [Real Data: Davis et al. IJCAI 2005]

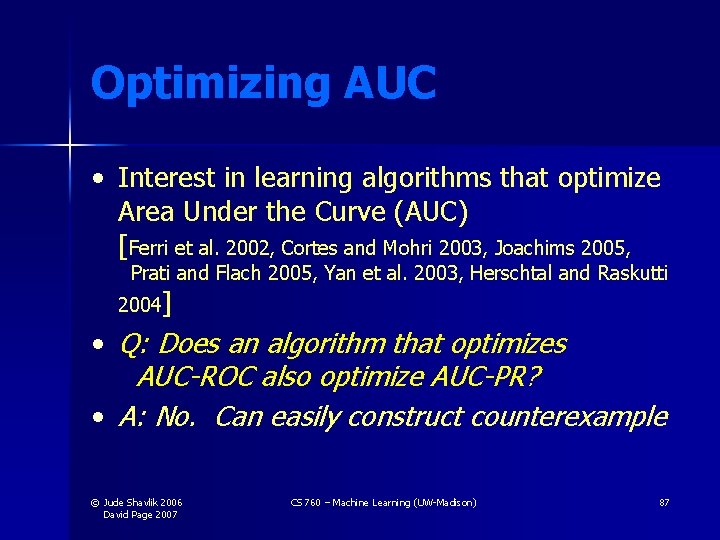

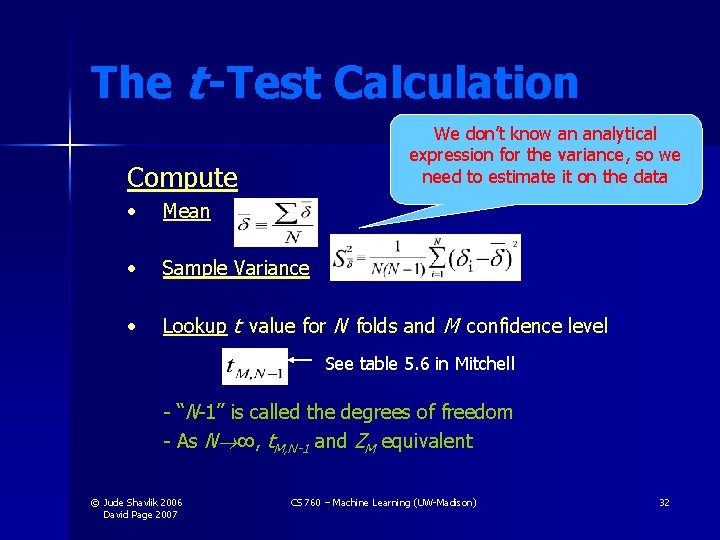

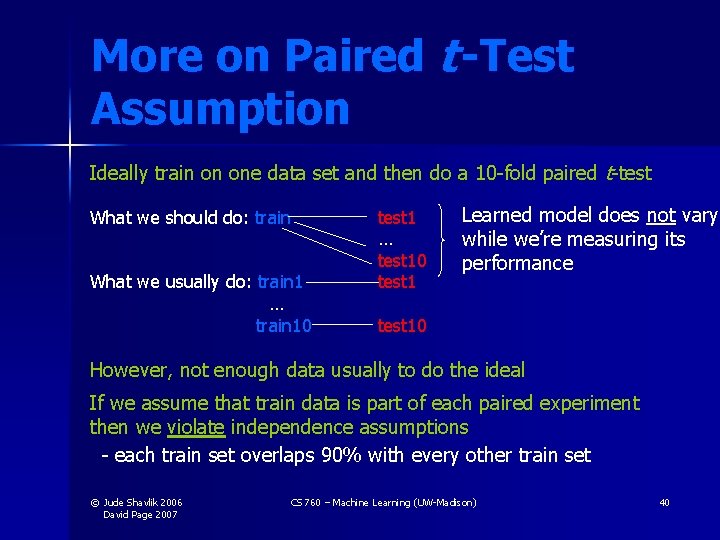

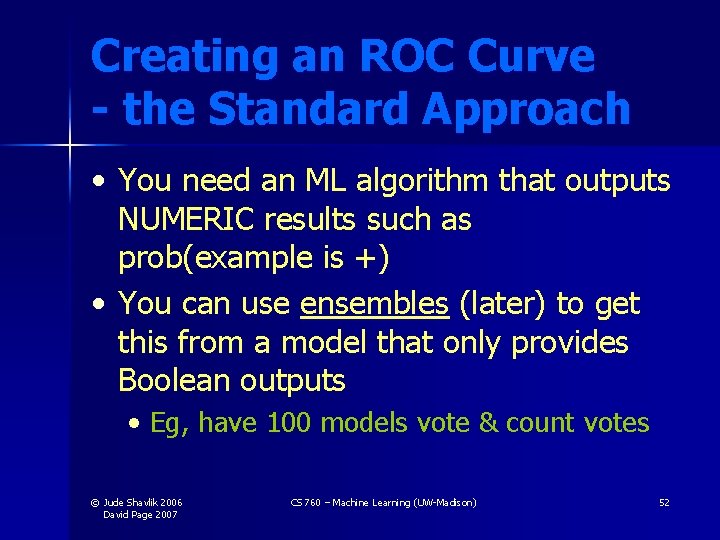

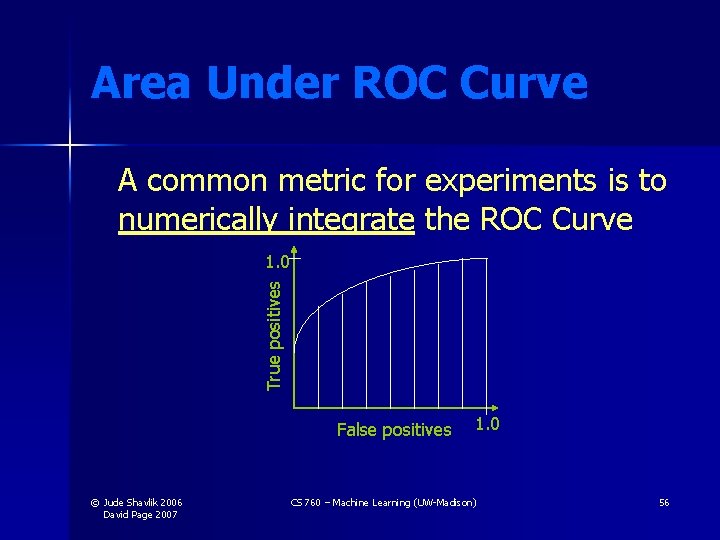

![Predicting Aliases Synthetic data Davis et al ICIA 2005 Jude Shavlik 2006 David Predicting Aliases [Synthetic data: Davis et al. ICIA 2005] © Jude Shavlik 2006 David](https://slidetodoc.com/presentation_image_h2/f776afcae3c7d0f39443053fcbbf650c/image-72.jpg)

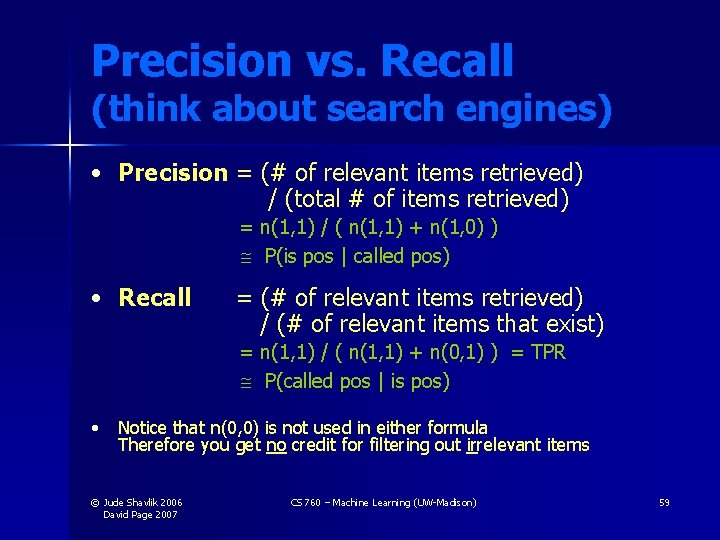

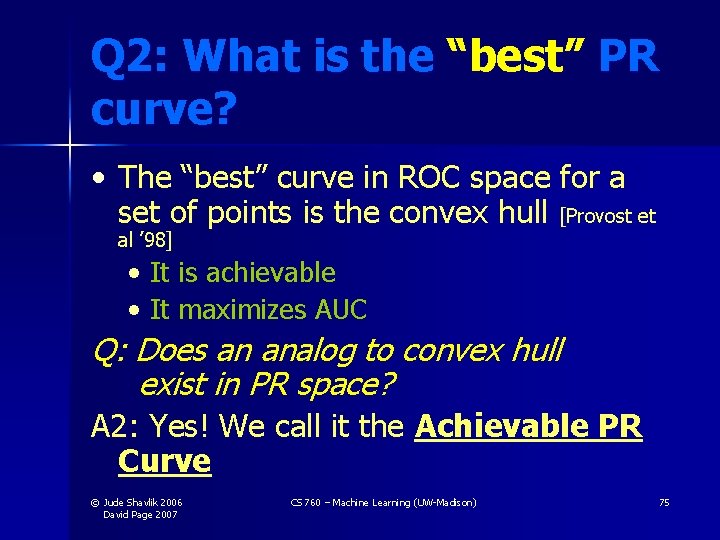

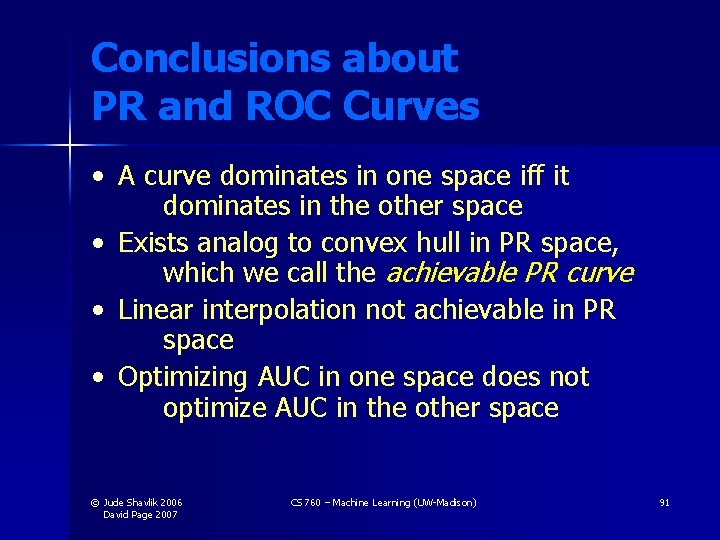

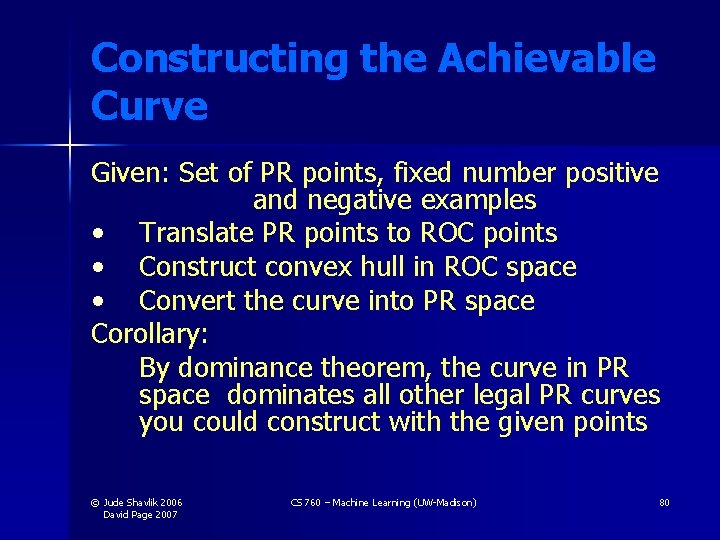

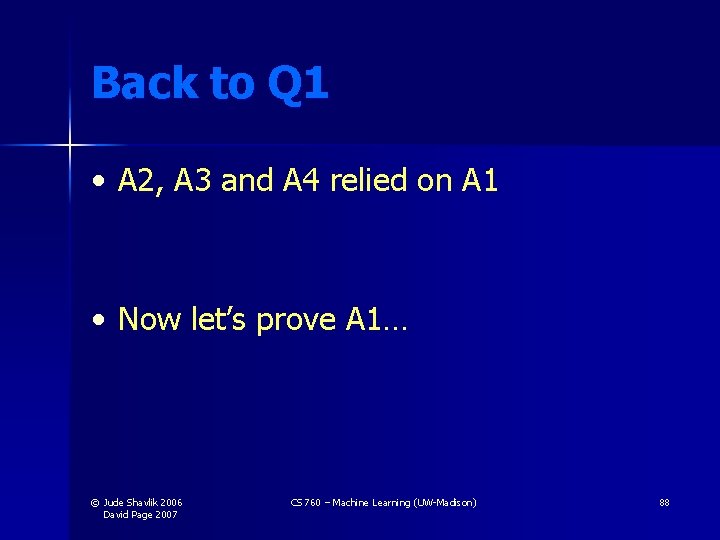

Predicting Aliases [Synthetic data: Davis et al. ICIA 2005]

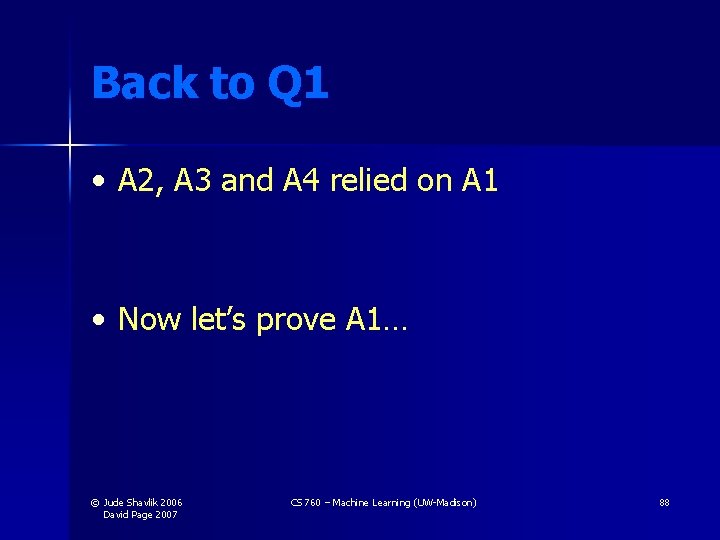

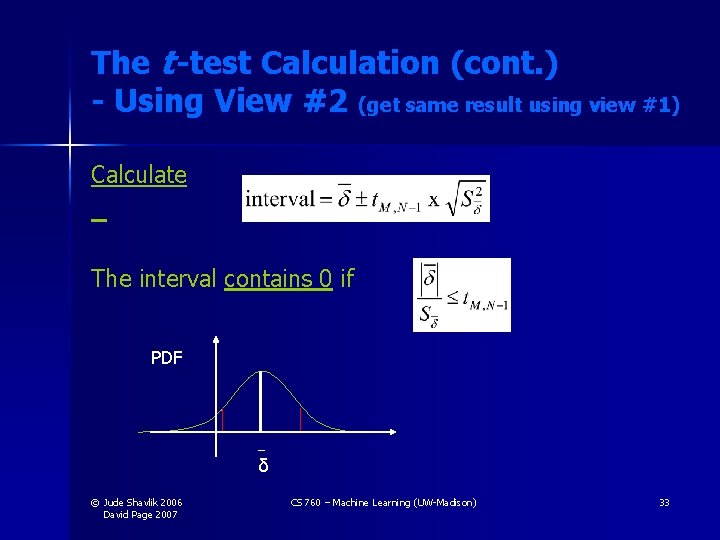

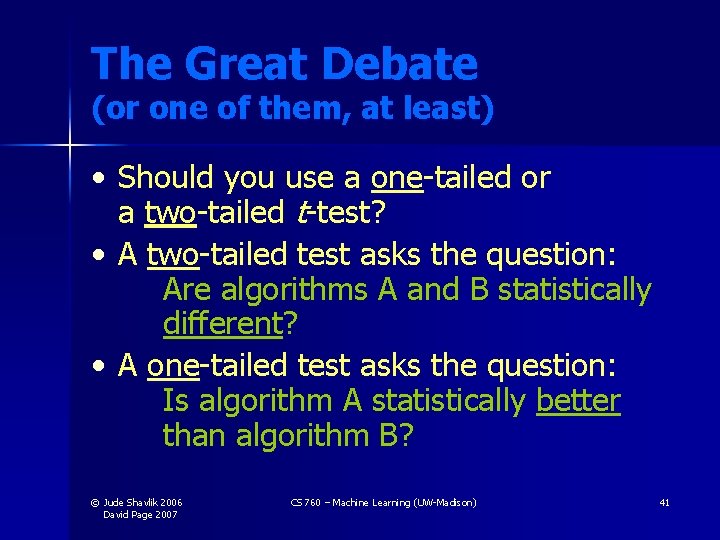

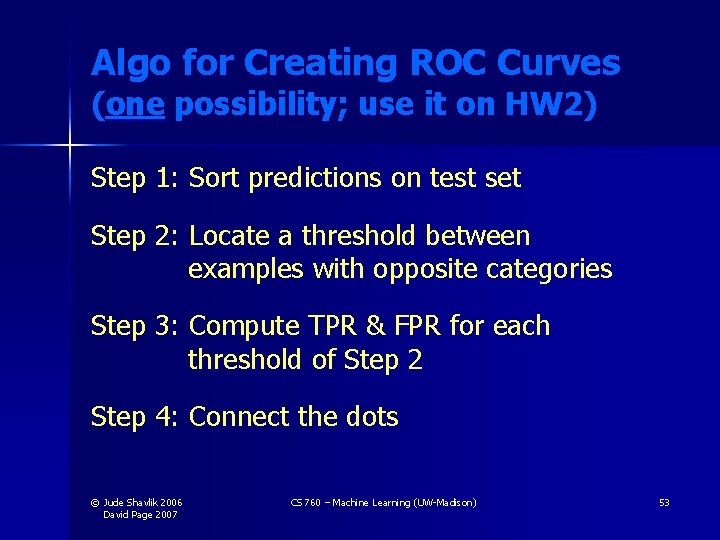

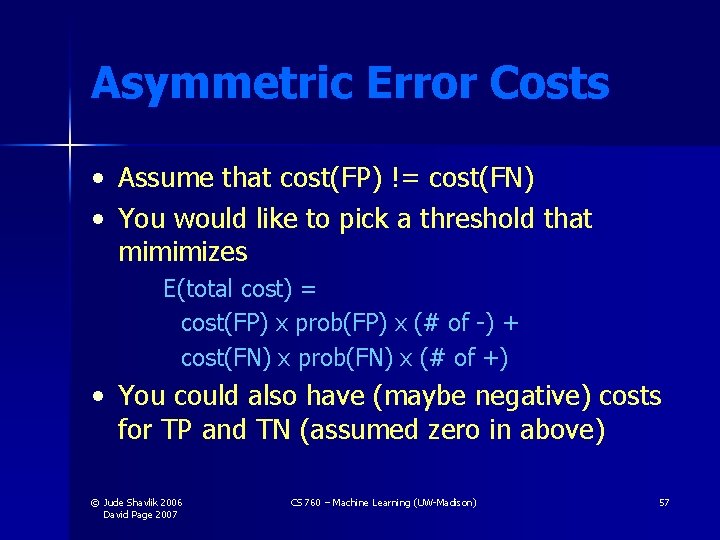

![Predicting Aliases Synthetic data Davis et al ICIA 2005 Jude Shavlik 2006 David Predicting Aliases [Synthetic data: Davis et al. ICIA 2005] © Jude Shavlik 2006 David](https://slidetodoc.com/presentation_image_h2/f776afcae3c7d0f39443053fcbbf650c/image-73.jpg)

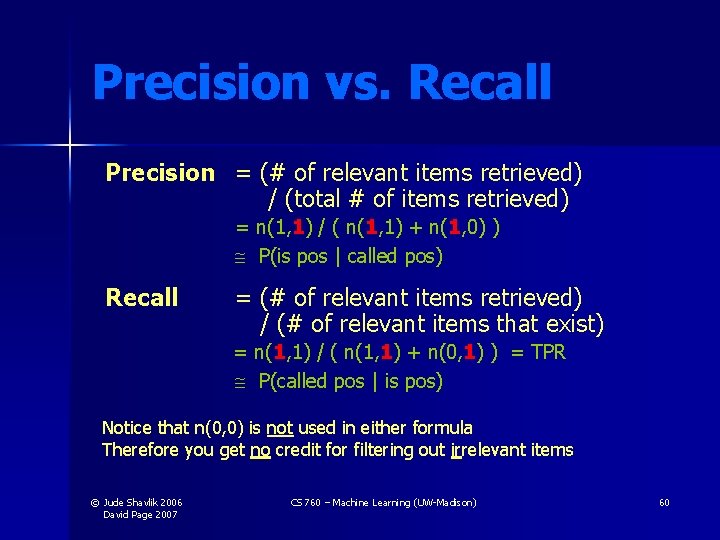

Predicting Aliases [Synthetic data: Davis et al. ICIA 2005]

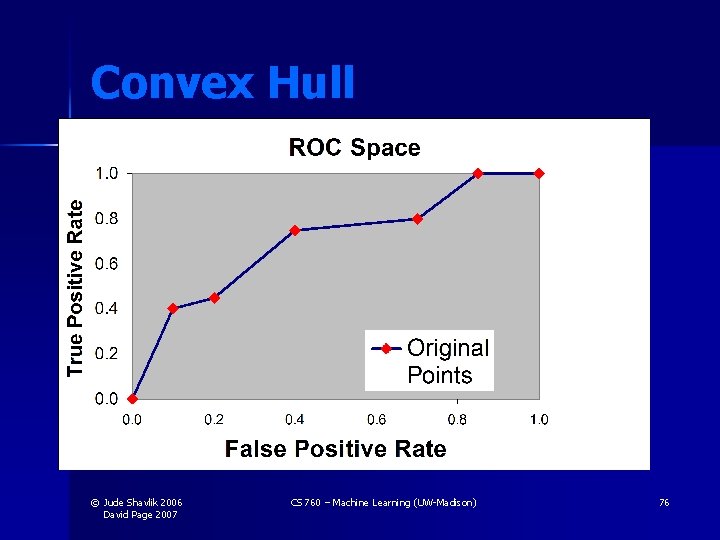

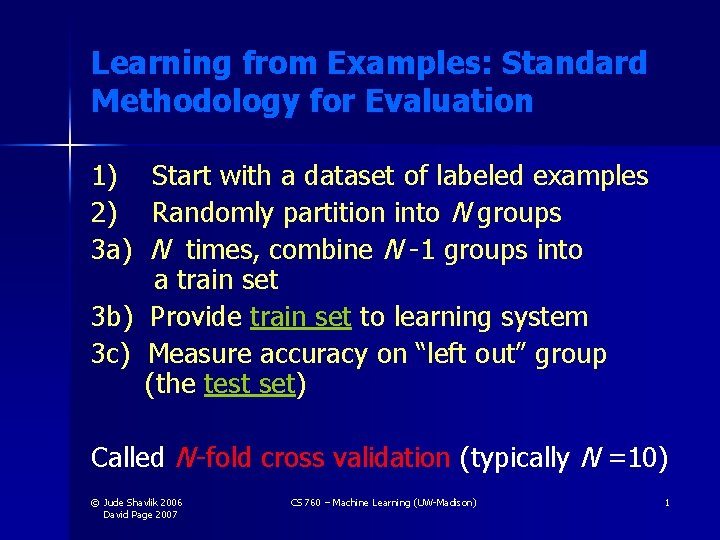

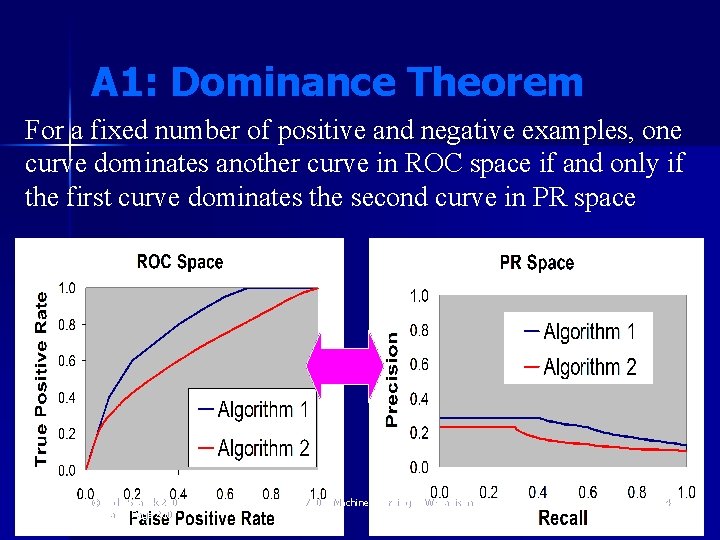

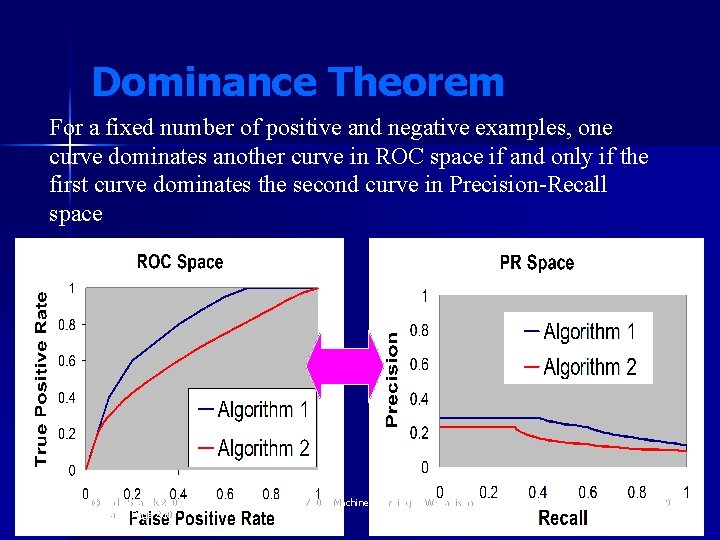

A1: Dominance Theorem

For a fixed number of positive and negative examples, one curve dominates another curve in ROC space if and only if the first curve dominates the second curve in PR space

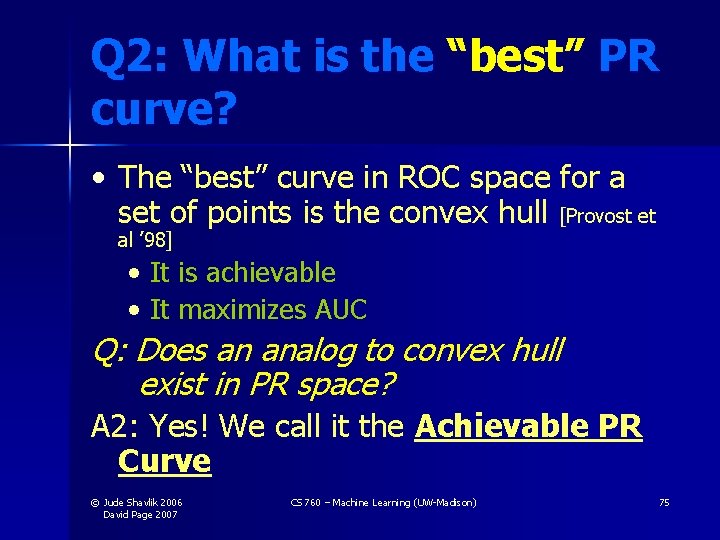

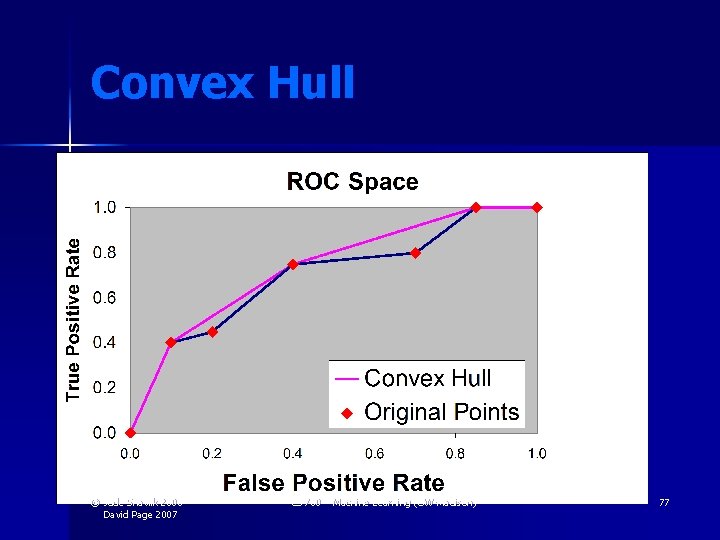

Q2: What is the “best” PR curve?

- The “best” curve in ROC space for a set of points is the convex hull [Provost et al ’98]
  - It is achievable
  - It maximizes AUC

Q: Does an analog to convex hull exist in PR space?

A2: Yes! We call it the Achievable PR Curve

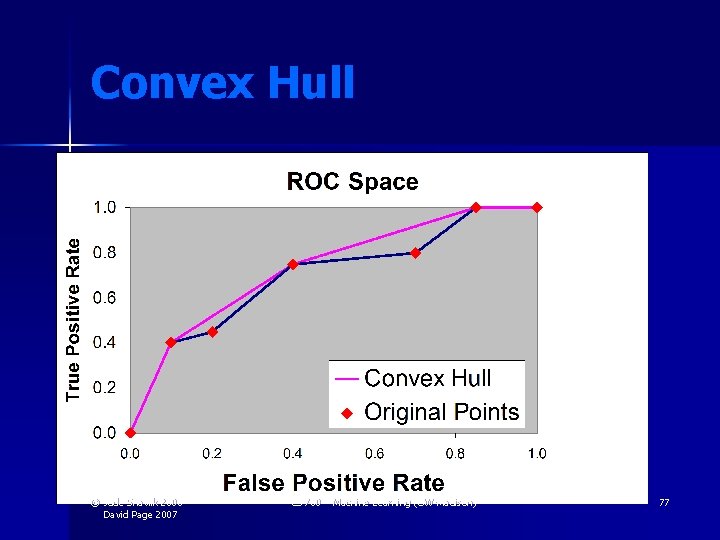

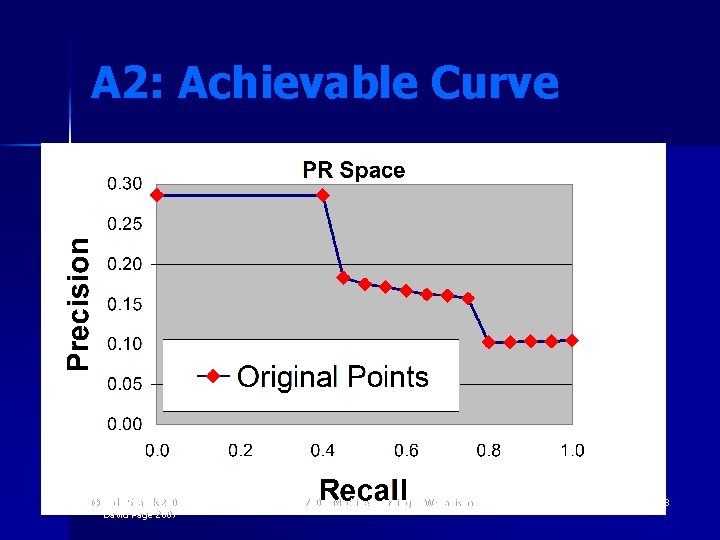

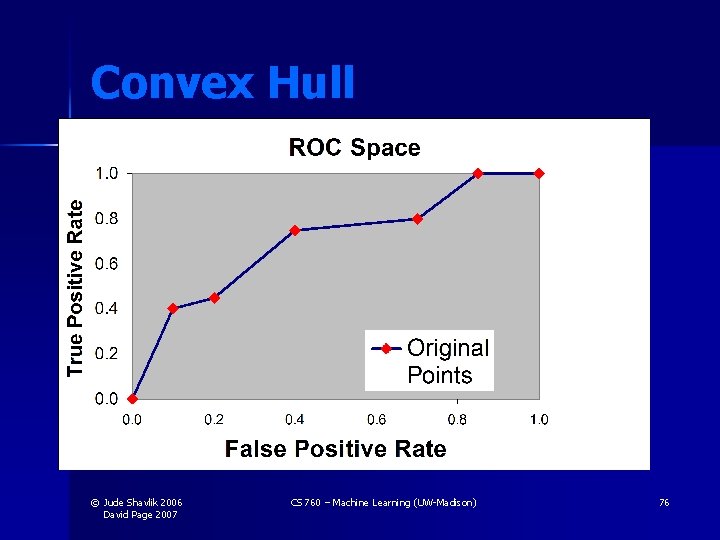

Convex Hull

Convex Hull

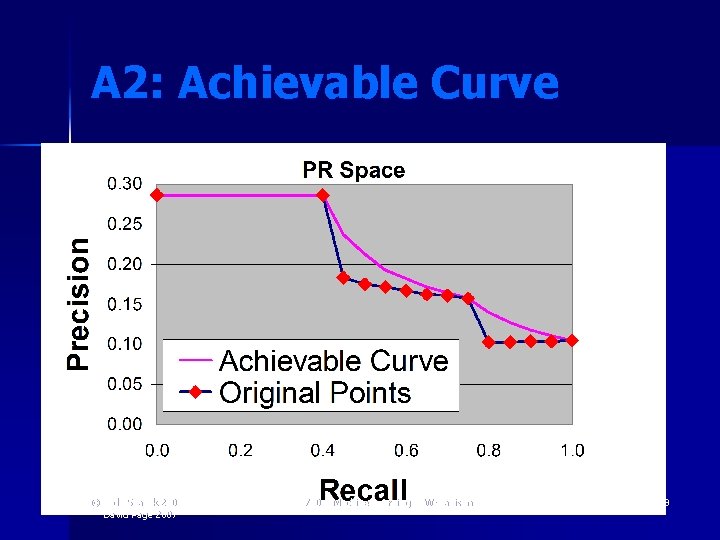

A2: Achievable Curve

A2: Achievable Curve

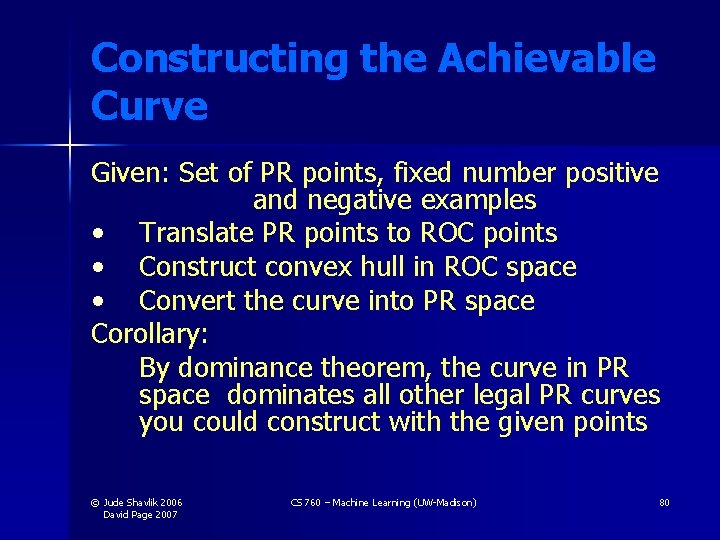

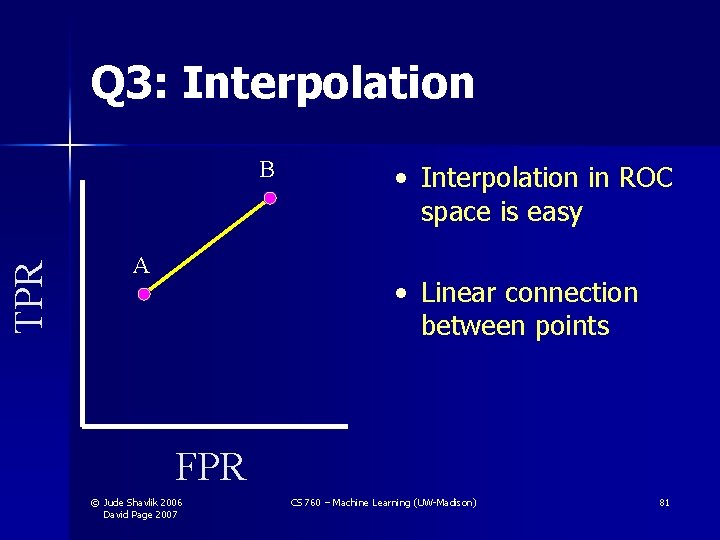

Constructing the Achievable Curve

Given: set of PR points, fixed number of positive and negative examples

- Translate PR points to ROC points
- Construct convex hull in ROC space
- Convert the curve into PR space

Corollary: By the dominance theorem, the curve in PR space dominates all other legal PR curves you could construct with the given points
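A rough sketch of the three steps, with hypothetical function names and made-up points. It returns only the hull vertices mapped back to PR space; points between vertices still need the non-linear PR interpolation, and the ROC origin is dropped because precision is undefined when nothing is called positive.

```python
def pr_to_roc(recall, precision, n_pos, n_neg):
    tp = recall * n_pos
    fp = tp * (1 - precision) / precision   # from precision = tp / (tp + fp)
    return fp / n_neg, recall               # (FPR, TPR); TPR equals recall

def roc_to_pr(fpr, tpr, n_pos, n_neg):
    tp, fp = tpr * n_pos, fpr * n_neg
    return tpr, tp / (tp + fp)              # (recall, precision)

def cross(o, a, b):
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def upper_hull(points):
    """Upper convex hull of ROC points, always including (0, 0) and (1, 1)."""
    pts = sorted(set(points) | {(0.0, 0.0), (1.0, 1.0)})
    hull = []
    for p in pts:
        # Drop the previous vertex while it lies on or below the new edge.
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) >= 0:
            hull.pop()
        hull.append(p)
    return hull

def achievable_pr_vertices(pr_points, n_pos, n_neg):
    roc = [pr_to_roc(r, p, n_pos, n_neg) for r, p in pr_points]
    hull = upper_hull(roc)
    return [roc_to_pr(f, t, n_pos, n_neg) for f, t in hull if (f, t) != (0.0, 0.0)]

# Hypothetical (recall, precision) points; the third is dominated by the second.
pr_points = [(0.25, 0.5), (0.5, 0.25), (0.3, 6 / 46)]
vertices = achievable_pr_vertices(pr_points, n_pos=20, n_neg=2000)
```

The dominated point disappears from the result, and the final vertex is the "call everything positive" point with recall 1.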

Q3: Interpolation

- Interpolation in ROC space is easy
- Linear connection between points

[Figure: points A and B in ROC space (TPR against FPR) joined by a straight line]

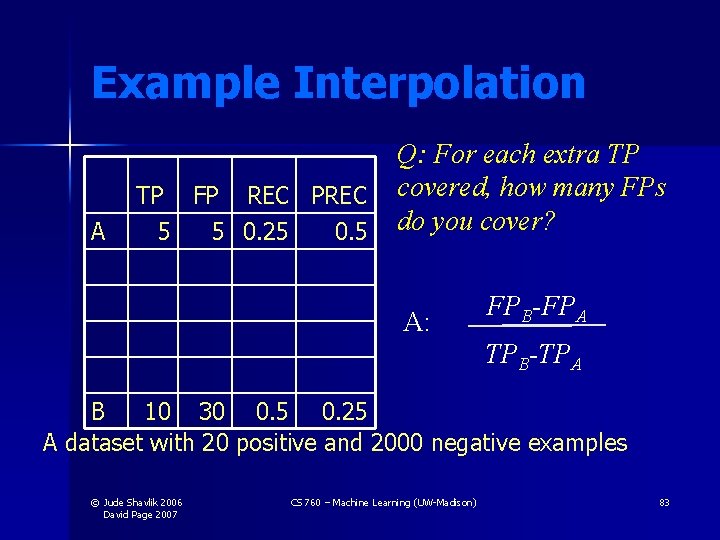

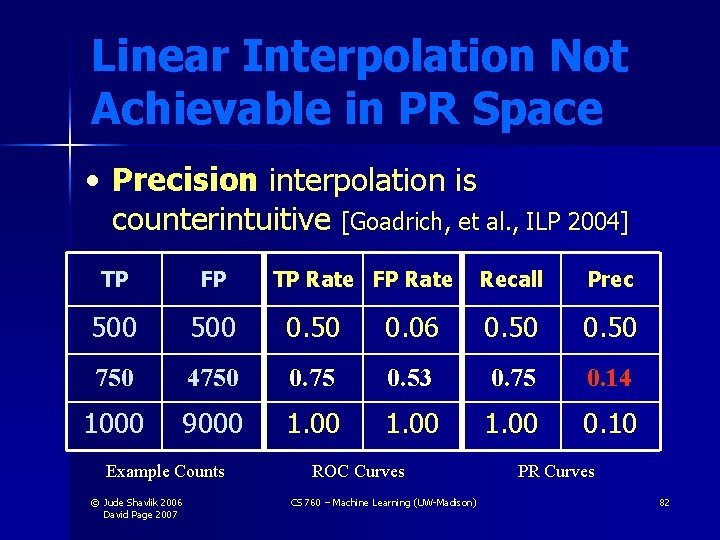

Linear Interpolation Not Achievable in PR Space

- Precision interpolation is counterintuitive [Goadrich, et al., ILP 2004]

| TP | FP | TP Rate | FP Rate | Recall | Prec |
|---|---|---|---|---|---|
| 500 | 500 | 0.50 | 0.06 | 0.50 | 0.50 |
| 750 | 4750 | 0.75 | 0.53 | 0.75 | 0.14 |
| 1000 | 9000 | 1.00 | 1.00 | 1.00 | 0.10 |

TP and FP are example counts; TP Rate and FP Rate define the ROC curve; Recall and Prec define the PR curve

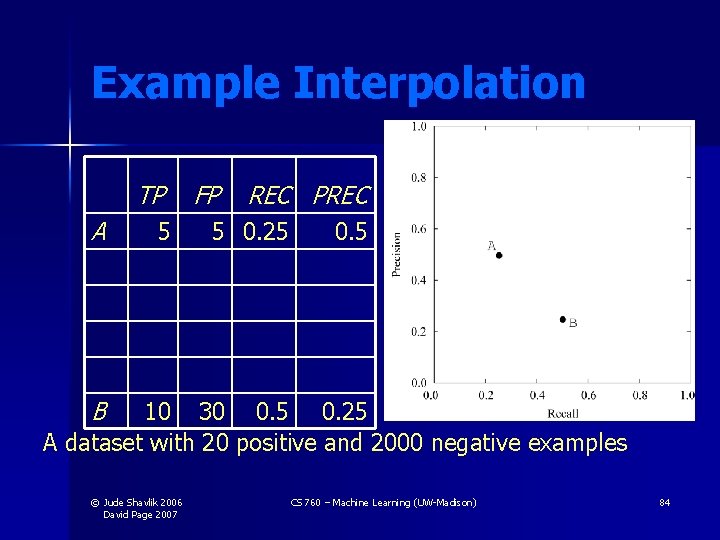

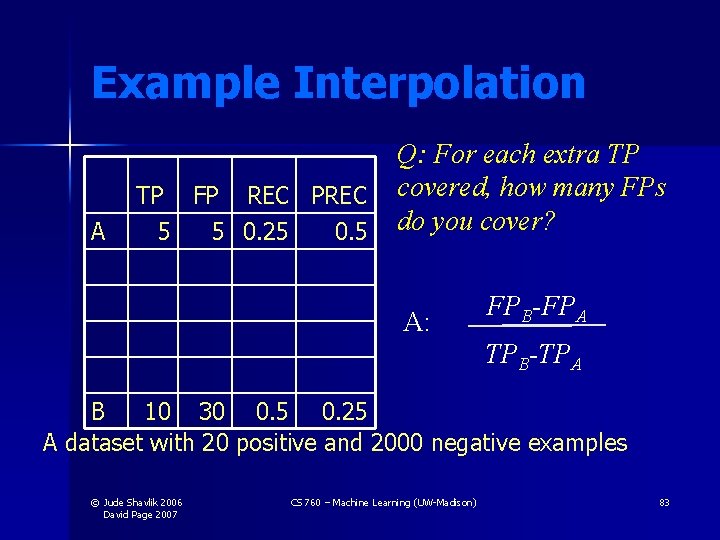

Example Interpolation

| | TP | FP | REC | PREC |
|---|---|---|---|---|
| A | 5 | 5 | 0.25 | 0.5 |
| B | 10 | 30 | 0.5 | 0.25 |

A dataset with 20 positive and 2000 negative examples

Q: For each extra TP covered, how many FPs do you cover?

A: (FP_B - FP_A) / (TP_B - TP_A)

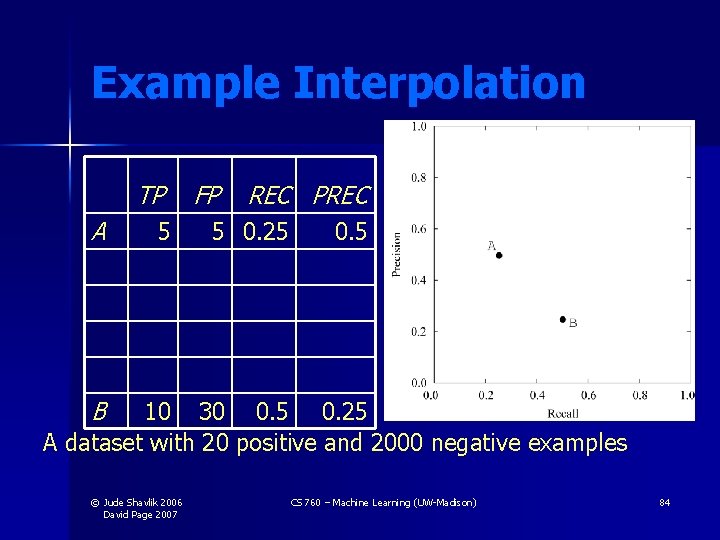

Example Interpolation

| | TP | FP | REC | PREC |
|---|---|---|---|---|
| A | 5 | 5 | 0.25 | 0.5 |
| B | 10 | 30 | 0.5 | 0.25 |

A dataset with 20 positive and 2000 negative examples

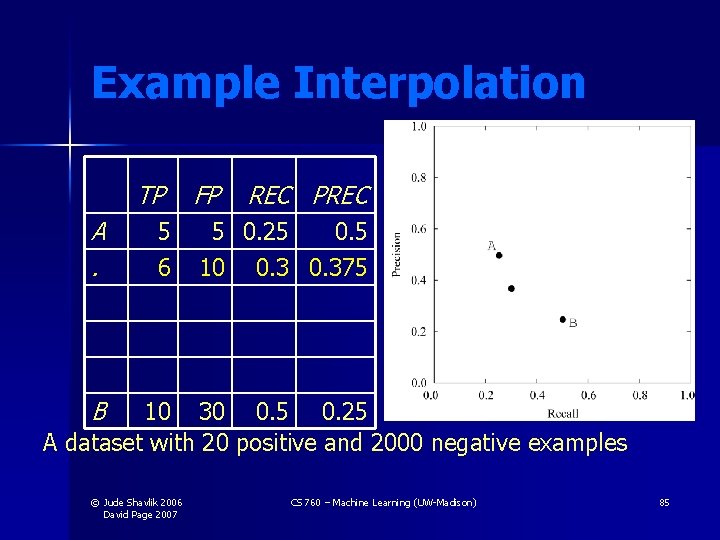

Example Interpolation

| | TP | FP | REC | PREC |
|---|---|---|---|---|
| A | 5 | 5 | 0.25 | 0.5 |
| . | 6 | 10 | 0.3 | 0.375 |
| B | 10 | 30 | 0.5 | 0.25 |

A dataset with 20 positive and 2000 negative examples

Example Interpolation

| | TP | FP | REC | PREC |
|---|---|---|---|---|
| A | 5 | 5 | 0.25 | 0.5 |
| . | 6 | 10 | 0.3 | 0.375 |
| . | 7 | 15 | 0.35 | 0.318 |
| . | 8 | 20 | 0.4 | 0.286 |
| . | 9 | 25 | 0.45 | 0.265 |
| B | 10 | 30 | 0.5 | 0.25 |

A dataset with 20 positive and 2000 negative examples
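The interpolated rows can be reproduced with a few lines of Python: step one true positive at a time from A to B and add the local FP-per-TP ratio each step. The function name is hypothetical; the inputs are the A and B counts and the 20 positives from the example.

```python
def interpolate_pr(tp_a, fp_a, tp_b, fp_b, n_pos):
    """Rows (TP, FP, recall, precision) from point A to point B, one TP per step."""
    fp_per_tp = (fp_b - fp_a) / (tp_b - tp_a)   # extra FPs covered per extra TP
    rows = []
    for step in range(tp_b - tp_a + 1):
        tp = tp_a + step
        fp = fp_a + fp_per_tp * step
        rows.append((tp, fp, tp / n_pos, tp / (tp + fp)))
    return rows

rows = interpolate_pr(tp_a=5, fp_a=5, tp_b=10, fp_b=30, n_pos=20)
precisions = [round(prec, 3) for _, _, _, prec in rows]
```

Precision falls quickly at first and then flattens, so the interpolated PR curve bends below the straight line between A and B.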

Optimizing AUC

- Interest in learning algorithms that optimize Area Under the Curve (AUC) [Ferri et al. 2002, Cortes and Mohri 2003, Joachims 2005, Prati and Flach 2005, Yan et al. 2003, Herschtal and Raskutti 2004]
- Q: Does an algorithm that optimizes AUC-ROC also optimize AUC-PR?
- A: No. Can easily construct a counterexample

Back to Q1

- A2, A3 and A4 relied on A1
- Now let’s prove A1…

Dominance Theorem

For a fixed number of positive and negative examples, one curve dominates another curve in ROC space if and only if the first curve dominates the second curve in Precision-Recall space

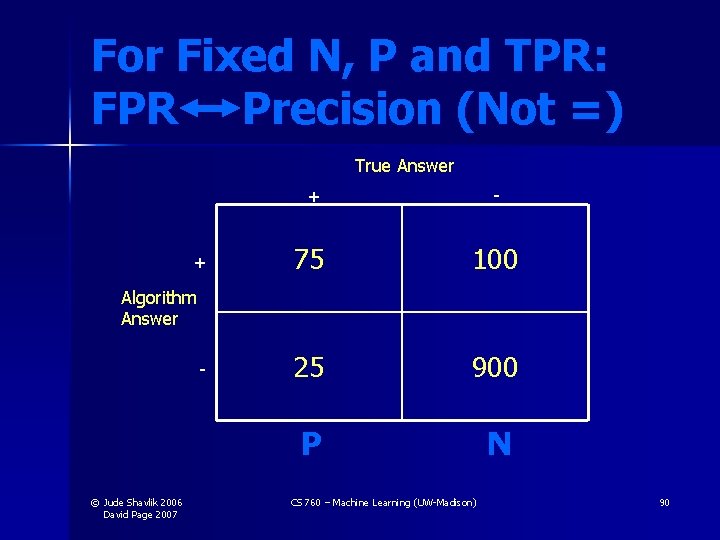

For Fixed N, P and TPR: FPR determines Precision (Not =)

| | True Answer + | True Answer - |
|---|---|---|
| Algorithm Answer + | 75 | 100 |
| Algorithm Answer - | 25 | 900 |
| Total | P | N |

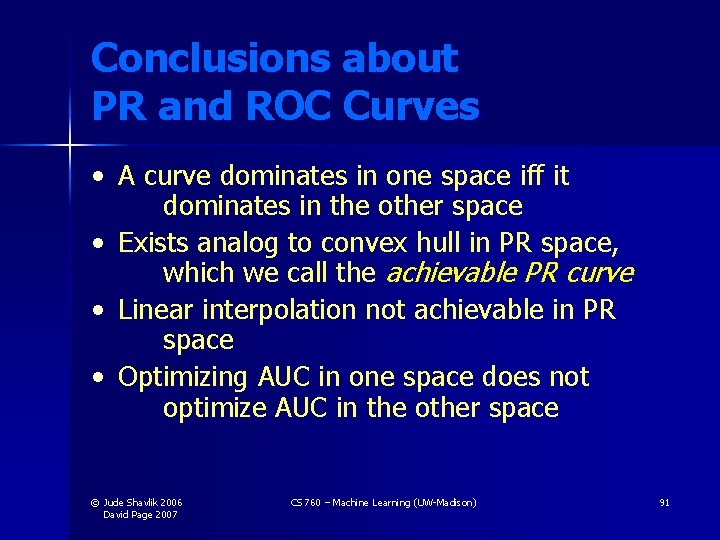

Conclusions about PR and ROC Curves

- A curve dominates in one space iff it dominates in the other space
- An analog to the convex hull exists in PR space, which we call the achievable PR curve
- Linear interpolation is not achievable in PR space
- Optimizing AUC in one space does not optimize AUC in the other space