Learning Bayesian Network Structure from Massive Datasets: The "Sparse Candidate" Algorithm

Nir Friedman, Dana Pe'er, Iftach Nachman
Institute of Computer Science, Hebrew University, Jerusalem

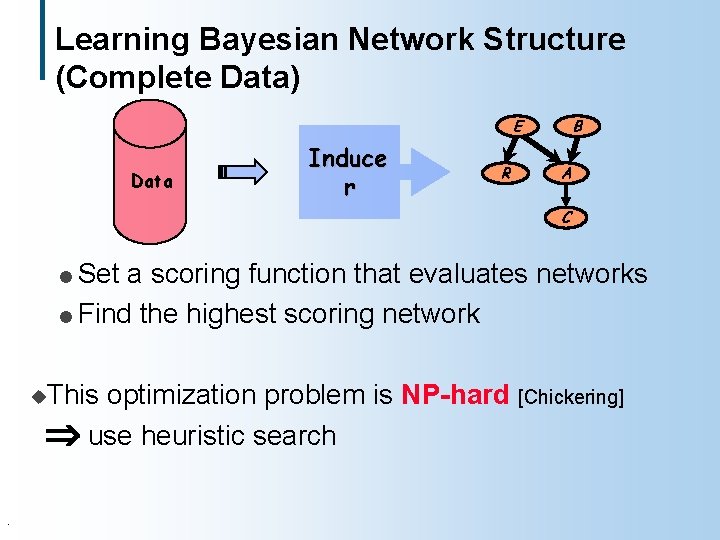

Learning Bayesian Network Structure (Complete Data)

[Figure: Data → Inducer → network over the variables B, E, R, A, C]

- Set a scoring function that evaluates networks
- Find the highest-scoring network
- This optimization problem is NP-hard [Chickering], so we use heuristic search

Our Contribution

- We suggest a new heuristic
  - Builds on simple ideas
  - Easy to implement
  - Can be combined with existing heuristic search procedures
  - Reduces learning time significantly
- We also gain some insight into the complexity of the learning problem

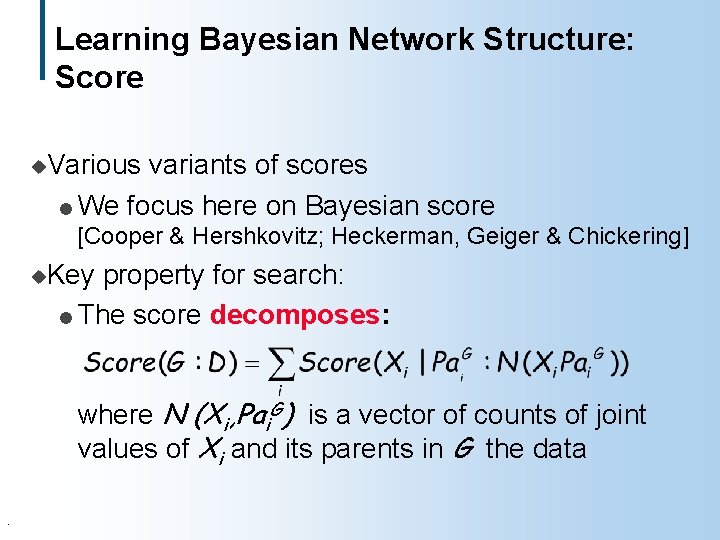

Learning Bayesian Network Structure: Score

- Various variants of scores
  - We focus here on the Bayesian score [Cooper & Herskovits; Heckerman, Geiger & Chickering]
- Key property for search
  - The score decomposes: $\mathrm{Score}(G : D) = \sum_i \mathrm{Score}(X_i \mid Pa_i^G : N(X_i, Pa_i^G))$, where $N(X_i, Pa_i^G)$ is a vector of counts of joint values of $X_i$ and its parents in $G$, collected from the data
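To make the decomposition concrete, here is a minimal Python sketch. It assumes discrete data stored as a list of dicts and uses a simple Dirichlet marginal likelihood with a uniform hyperparameter `alpha` as a stand-in for the Bayesian score; the function names, the `arity` table, and the choice of prior are illustrative assumptions, not the scoring code used in the talk.

```python
from collections import Counter
from math import lgamma

def family_counts(data, child, parents):
    """Counts N(X_i, Pa_i): joint values of `child` and its `parents`."""
    return Counter((tuple(row[p] for p in parents), row[child]) for row in data)

def family_score(data, child, parents, arity, alpha=1.0):
    """Log Dirichlet marginal likelihood of one family (assumed uniform prior)."""
    per_config = {}
    for (pa, x), n in family_counts(data, child, parents).items():
        per_config.setdefault(pa, {})[x] = n
    r = arity[child]  # number of values the child can take
    score = 0.0
    for value_counts in per_config.values():
        n_pa = sum(value_counts.values())
        score += lgamma(r * alpha) - lgamma(r * alpha + n_pa)
        for n in value_counts.values():
            score += lgamma(alpha + n) - lgamma(alpha)
    return score

def network_score(data, parents_of, arity):
    """Decomposable score: a sum of independent per-family terms."""
    return sum(family_score(data, x, sorted(pa), arity)
               for x, pa in parents_of.items())

data = [{"A": 0, "B": 0}, {"A": 1, "B": 1}, {"A": 0, "B": 0}, {"A": 1, "B": 1}]
arity = {"A": 2, "B": 2}
empty = network_score(data, {"A": set(), "B": set()}, arity)
with_arc = network_score(data, {"A": set(), "B": {"A"}}, arity)
```

Because each family term depends only on its own counts, changing the parents of one variable only requires rescoring that one family.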

Heuristic Search in Learning Networks

- Search over network structures
- Standard operations: add, delete, reverse
- Need to check acyclicity
- Use a standard search method in this space: greedy hill climbing, simulated annealing, etc.

[Figure: a network over A, B, C and the networks produced by the add, remove, and reverse operations]
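The three operators and the acyclicity check can be sketched as follows. This is an illustrative sketch only: the graph is assumed to be a dict mapping each node to the set of its parents, and `has_path` and `legal_moves` are hypothetical helper names.

```python
def has_path(parents_of, src, dst):
    """True if a directed path src -> ... -> dst exists (src is an ancestor of dst)."""
    stack, seen = [dst], set()
    while stack:
        node = stack.pop()
        if node == src:
            return True
        if node not in seen:
            seen.add(node)
            stack.extend(parents_of[node])  # walk backwards through parents
    return False

def legal_moves(parents_of):
    """List the add / delete / reverse moves that keep the graph acyclic."""
    moves = []
    for y in parents_of:
        for x in parents_of:
            if x == y:
                continue
            if y in parents_of[x]:
                moves.append(("delete", y, x))  # deleting an arc is always safe
                # Reversing y -> x is legal iff no other path leads from y to x.
                rest = {n: (ps - {y} if n == x else ps)
                        for n, ps in parents_of.items()}
                if not has_path(rest, y, x):
                    moves.append(("reverse", y, x))
            elif not has_path(parents_of, x, y):
                moves.append(("add", y, x))  # adding y -> x creates no cycle
    return moves

chain = {"A": set(), "B": {"A"}, "C": {"B"}}          # A -> B -> C
triangle = {"A": set(), "B": {"A"}, "C": {"A", "B"}}  # adds A -> C
chain_moves = legal_moves(chain)
triangle_moves = legal_moves(triangle)
```

A greedy hill climber would score every move in this list and apply the best one, which is where the per-iteration cost discussed next comes from.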

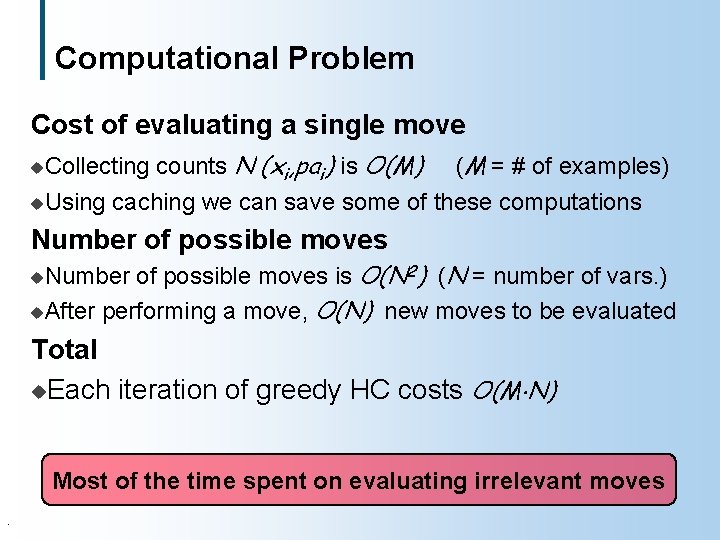

Computational Problem

- Cost of evaluating a single move
  - Collecting counts N(xi, pai) is O(M) (M = number of examples)
  - Using caching, we can save some of these computations
- Number of possible moves
  - Number of possible moves is O(N^2) (N = number of variables)
  - After performing a move, O(N) new moves need to be evaluated
- Total
  - Each iteration of greedy HC costs O(MN)
- Most of the time is spent on evaluating irrelevant moves

Idea #1: Restrict to Few Candidates

- For each X, select a small set of candidates C(X)
  - Consider arcs Y → X only if Y is in C(X)
- Example: if C(A) = {B}, C(B) = {A}, and C(C) = {A, B}, then the arc A → C is allowed, but C → B is not
- If we restrict to k candidates for each variable, then
  - There are only O(kN) possible moves for each network
  - In greedy HC, only O(k) new moves need to be evaluated in each iteration
  - The cost of each iteration is O(Mk)
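A small sketch of how candidate sets shrink the move list, using the candidate sets from the slide's example. The helper name `candidate_add_moves` is hypothetical, and the acyclicity check is deliberately left out to keep the illustration short.

```python
def candidate_add_moves(candidates, parents_of):
    """Arc additions Y -> X allowed by the candidate sets (acyclicity not checked)."""
    return [(y, x)
            for x, cands in candidates.items()
            for y in sorted(cands)
            if y not in parents_of[x]]

# Candidate sets from the example above.
candidates = {"A": {"B"}, "B": {"A"}, "C": {"A", "B"}}
empty_network = {"A": set(), "B": set(), "C": set()}

restricted = candidate_add_moves(candidates, empty_network)
unrestricted = [(y, x) for x in empty_network for y in empty_network if x != y]
```

Here the restricted list has 4 arcs instead of the 6 unrestricted ones; with N variables and k candidates each, the gap is between kN and N(N-1).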

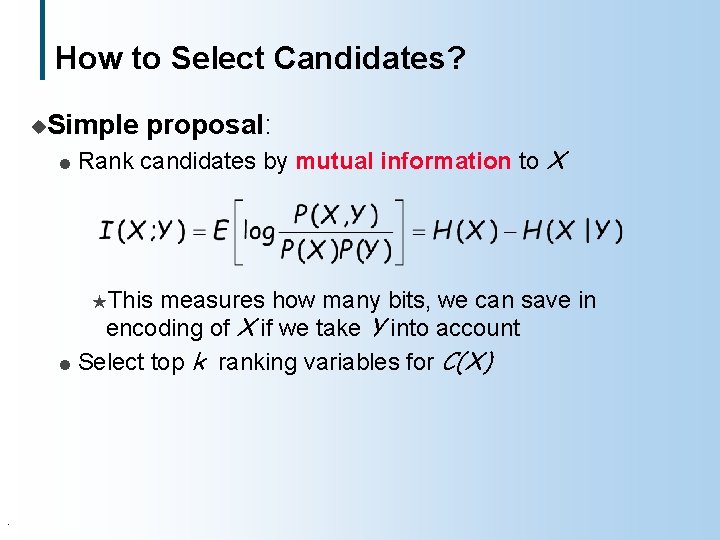

How to Select Candidates?

- Simple proposal: rank candidates by mutual information to X
  - This measures how many bits we can save in the encoding of X if we take Y into account
- Select the top k ranking variables for C(X)
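The ranking step can be sketched in a few lines of Python. The sketch assumes discrete data as a list of dicts and estimates mutual information from empirical frequencies; `mutual_information` and `select_candidates` are illustrative names, not code from the talk.

```python
from collections import Counter
from math import log2

def mutual_information(data, x, y):
    """Empirical mutual information I(X; Y) in bits."""
    m = len(data)
    joint = Counter((row[x], row[y]) for row in data)
    px = Counter(row[x] for row in data)
    py = Counter(row[y] for row in data)
    # p(a,b) * log2( p(a,b) / (p(a) p(b)) ), written in terms of raw counts
    return sum((n / m) * log2(n * m / (px[a] * py[b]))
               for (a, b), n in joint.items())

def select_candidates(data, variables, k):
    """For each X, keep the k variables with the highest I(X; Y)."""
    return {x: sorted((y for y in variables if y != x),
                      key=lambda y: mutual_information(data, x, y),
                      reverse=True)[:k]
            for x in variables}

# B copies A exactly; C is independent of both.
data = [{"A": 0, "B": 0, "C": 0}, {"A": 0, "B": 0, "C": 1},
        {"A": 1, "B": 1, "C": 0}, {"A": 1, "B": 1, "C": 1}]
candidates = select_candidates(data, ["A", "B", "C"], k=1)
```

Note that this computes all pairwise statistics up front, which is the quadratic initial cost mentioned under future work.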

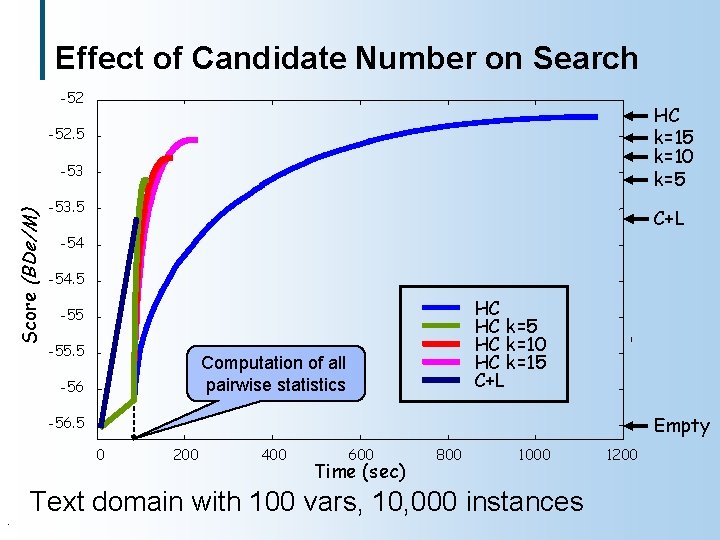

Effect of Candidate Number on Search

[Plot: Score (BDe/M) versus Time (sec) for HC, HC k=5, HC k=10, HC k=15, and C+L, starting from the empty network; the initial interval is labeled "Computation of all pairwise statistics". Text domain with 100 variables, 10,000 instances.]

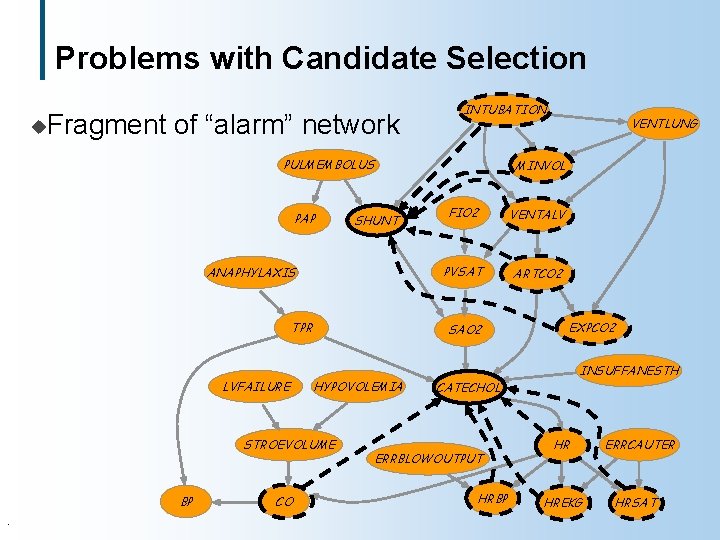

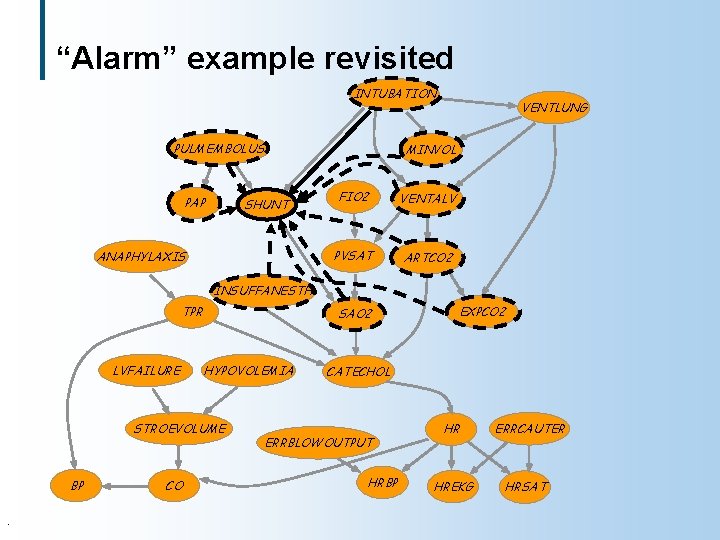

Problems with Candidate Selection

- Fragment of the “alarm” network

[Figure: network fragment over the nodes INTUBATION, PULMEMBOLUS, PAP, SHUNT, ANAPHYLAXIS, TPR, LVFAILURE, STROKEVOLUME, BP, CO, MINVOL, FIO2, VENTALV, PVSAT, ARTCO2, SAO2, HYPOVOLEMIA, VENTLUNG, EXPCO2, INSUFFANESTH, CATECHOL, ERRBLOWOUTPUT, HRBP, HR, HREKG, ERRCAUTER, HRSAT]

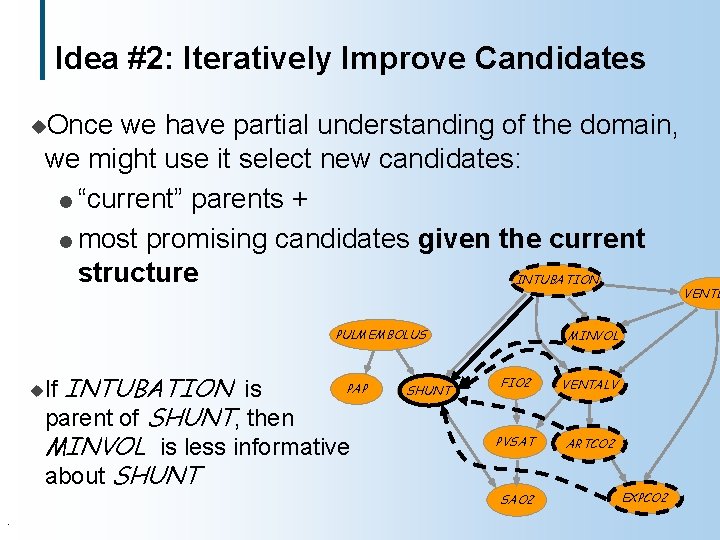

Idea #2: Iteratively Improve Candidates

- Once we have a partial understanding of the domain, we might use it to select new candidates:
  - “current” parents +
  - most promising candidates given the current structure
- If INTUBATION is a parent of SHUNT, then MINVOL is less informative about SHUNT

[Figure: fragment of the “alarm” network around INTUBATION, SHUNT, and MINVOL]
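The iteration described above can be sketched as a loop that alternates between choosing candidates and searching within them. Everything here is an assumption made for illustration: the callback signatures (`measure`, `maximize`, `score`), the stopping rule, and the name `sparse_candidate` are not taken from the slides.

```python
def sparse_candidate(variables, k, measure, maximize, score, max_iters=10):
    """Alternate between restricting candidates and maximizing the score.

    measure(x, y, parents_of)        -> relevance of y as a parent of x
    maximize(candidates, parents_of) -> new structure respecting the candidates
    score(parents_of)                -> score of a structure
    """
    parents_of = {x: set() for x in variables}
    best = score(parents_of)
    for _ in range(max_iters):
        candidates = {}
        for x in variables:
            current = set(parents_of[x])  # always keep the current parents
            others = sorted((y for y in variables if y != x and y not in current),
                            key=lambda y: measure(x, y, parents_of),
                            reverse=True)
            candidates[x] = current | set(others[:max(0, k - len(current))])
        new_parents = maximize(candidates, parents_of)
        new_score = score(new_parents)
        if new_score <= best:  # assumed stopping rule: no improvement
            break
        parents_of, best = new_parents, new_score
    return parents_of, best

# Toy stand-ins, just to exercise the loop.
def toy_maximize(candidates, parents_of):
    return {"A": set(), "B": {"A"} if "A" in candidates["B"] else set()}

def toy_score(parents_of):
    return sum(len(pa) for pa in parents_of.values())

result, result_score = sparse_candidate(
    ["A", "B"], k=1, measure=lambda x, y, g: 1.0,
    maximize=toy_maximize, score=toy_score)
```

Keeping the current parents inside each candidate set means the maximization step can always reproduce the current structure, so under this stopping rule the score never decreases between iterations.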

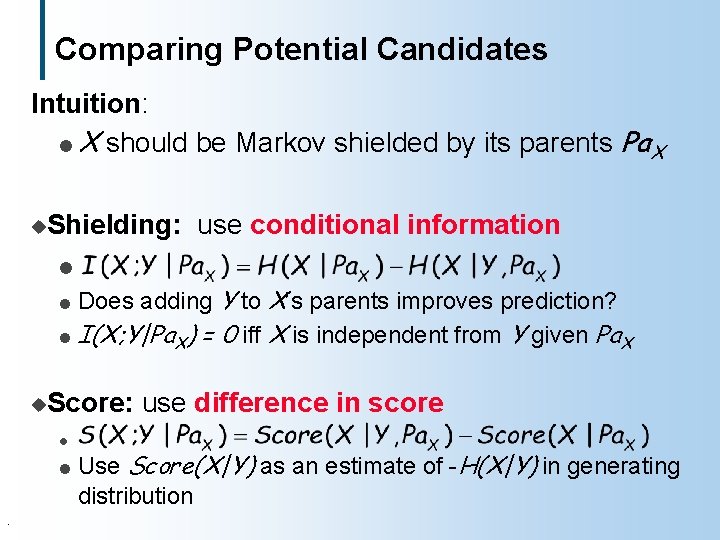

Comparing Potential Candidates

Intuition: X should be Markov shielded by its parents Pa_X.

- Shielding: use conditional information
  - Does adding Y to X’s parents improve prediction?
  - I(X; Y | Pa_X) = 0 iff X is independent of Y given Pa_X
- Score: use the difference in score
  - Use Score(X | Y) as an estimate of -H(X | Y) in the generating distribution
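The shielding measure can be illustrated with an empirical conditional mutual information estimate. This is a sketch under the assumption of discrete data stored as a list of dicts; the function name and the toy data are illustrative only.

```python
from collections import Counter
from math import log2

def conditional_mutual_information(data, x, y, given):
    """Empirical I(X; Y | Z) in bits, where Z is the list of variables `given`."""
    m = len(data)
    def z(row):
        return tuple(row[g] for g in given)
    n_xyz = Counter((row[x], row[y], z(row)) for row in data)
    n_xz = Counter((row[x], z(row)) for row in data)
    n_yz = Counter((row[y], z(row)) for row in data)
    n_z = Counter(z(row) for row in data)
    # p(a,b,c) * log2( p(a,b|c) / (p(a|c) p(b|c)) ), written with raw counts
    return sum((n / m) * log2(n * n_z[c] / (n_xz[(a, c)] * n_yz[(b, c)]))
               for (a, b, c), n in n_xyz.items())

# X and Y are both copies of Z, so Z shields X from Y.
data = ([{"X": 0, "Y": 0, "Z": 0}] * 2) + ([{"X": 1, "Y": 1, "Z": 1}] * 2)
unshielded = conditional_mutual_information(data, "X", "Y", [])
shielded = conditional_mutual_information(data, "X", "Y", ["Z"])
```

With no parents, Y looks fully informative about X (1 bit); once Z is a parent of X, the measure drops to 0, which mirrors the INTUBATION / SHUNT / MINVOL intuition.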

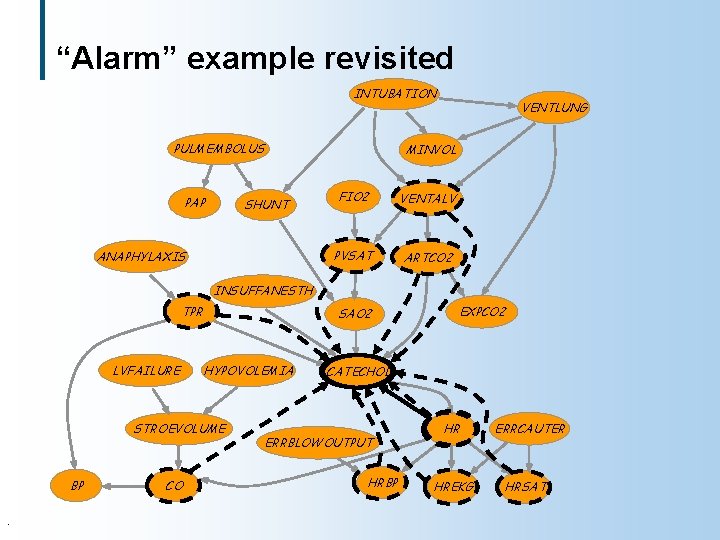

“Alarm” example revisited

[Figure: network fragment over the nodes INTUBATION, PULMEMBOLUS, PAP, SHUNT, ANAPHYLAXIS, VENTLUNG, MINVOL, FIO2, VENTALV, PVSAT, ARTCO2, INSUFFANESTH, TPR, LVFAILURE, SAO2, HYPOVOLEMIA, STROKEVOLUME, BP, CO, EXPCO2, CATECHOL, ERRBLOWOUTPUT, HRBP, HR, HREKG, ERRCAUTER, HRSAT]

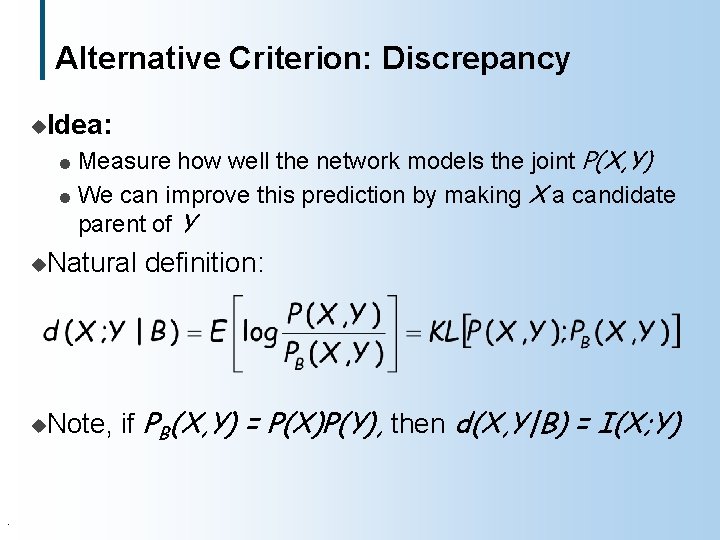

Alternative Criterion: Discrepancy

- Idea: measure how well the network models the joint P(X, Y)
  - We can improve this prediction by making X a candidate parent of Y
- Natural definition: $d(X, Y \mid B) = D_{KL}(P(X, Y) \,\|\, P_B(X, Y))$
- Note: if $P_B(X, Y) = P(X)P(Y)$, then $d(X, Y \mid B) = I(X; Y)$
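A short sketch of the discrepancy measure, assuming it is the KL divergence between the empirical pairwise joint and the network's pairwise joint (an assumption consistent with the note that it reduces to mutual information when the network treats X and Y as independent). Both distributions are passed as plain dicts; the function name is illustrative.

```python
from math import log2

def discrepancy(p_joint, p_model):
    """KL divergence (bits) between the empirical P(X, Y) and the model's P_B(X, Y)."""
    return sum(p * log2(p / p_model[xy])
               for xy, p in p_joint.items() if p > 0)

# Empirical joint: X and Y always agree.
p_joint = {(0, 0): 0.5, (0, 1): 0.0, (1, 0): 0.0, (1, 1): 0.5}
# A network with no path between X and Y models them as independent.
p_independent = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.25, (1, 1): 0.25}

d_independent = discrepancy(p_joint, p_independent)  # equals I(X; Y)
d_exact = discrepancy(p_joint, p_joint)              # model already matches
```

In this toy case the discrepancy under the independent model is exactly the 1 bit of mutual information between X and Y, and it falls to 0 once the model captures the dependence.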

Text with 100 words

[Plot: Score (BDe/M) versus Time (sec) for Greedy HC, Disc k=15, Score k=15, and Shld k=15]

Text with 200 words

[Plot: Score (BDe/L) versus Time (sec) for Greedy HC, Disc k=15, Score k=15, and Shld k=15]

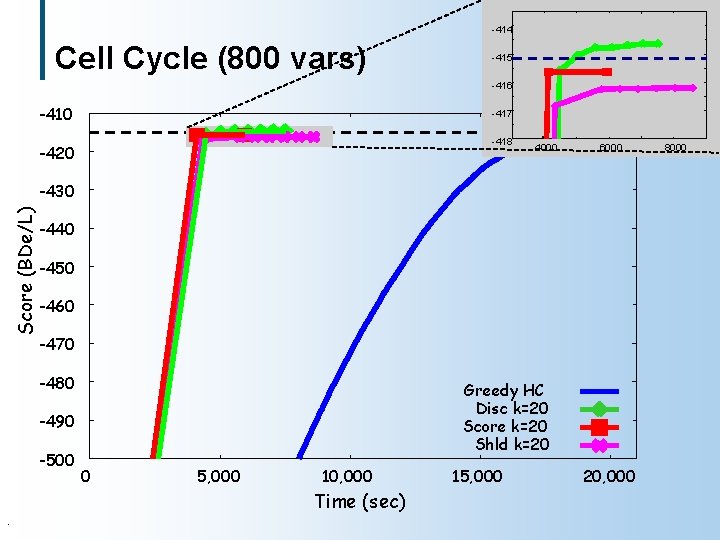

Cell Cycle (800 vars)

[Plot: Score (BDe/L) versus Time (sec) for Greedy HC, Disc k=20, Score k=20, and Shld k=20]

Complexity of Structure Learning

Without restriction of the candidate sets:

- Restricting |Pa_i| ≤ 1: the problem is easy [Chow+Liu; Heckerman+al]
- No restriction: the problem is NP-hard [Chickering]
  - Even when restricting |Pa_i| ≤ 2
  - We do not know of interesting intermediate problems
- Such behavior is often called the “exponential cliff”

Complexity with Small Candidate Sets

In each iteration, we solve an optimization problem:

- Given candidate sets C(X1), …, C(XN), find the best-scoring network that respects these candidates

Is this problem easier than unconstrained structure learning?

Complexity with Small Candidate Sets

Theorem: If |C(Xi)| > 1, finding the best-scoring structure is NP-hard.

But…

- The complexity function is gradually growing
- There is a parameter c such that the time complexity is
  - Exponential in c
  - Linear in N
- Fix d. There is a polynomial procedure that can solve all instances with c < d
- Similar situation in inference: exponential in the size of the largest clique in the triangulated graph, linear in N

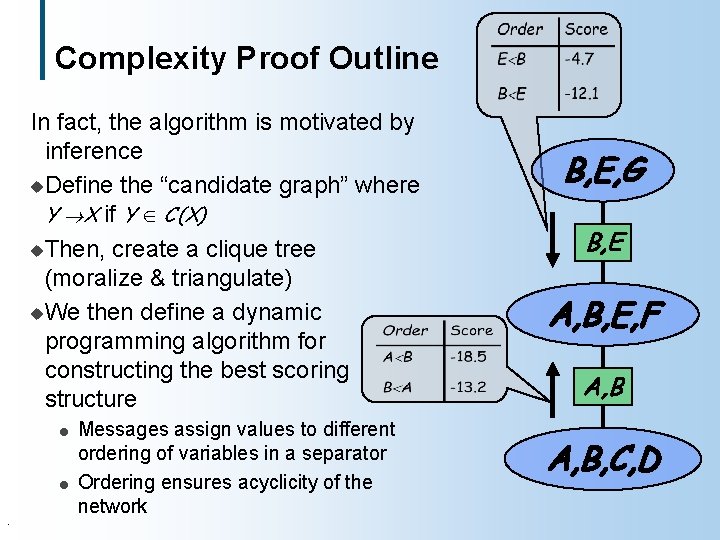

Complexity Proof Outline

In fact, the algorithm is motivated by inference.

- Define the “candidate graph”, where Y → X if Y ∈ C(X)
- Then, create a clique tree (moralize & triangulate)
- We then define a dynamic programming algorithm for constructing the best-scoring structure
  - Messages assign values to different orderings of the variables in a separator
  - Ordering ensures acyclicity of the network

[Figure: clique tree with node labels (B, E, G), (B, E), (A, B, E, F), (A, B, C, D)]

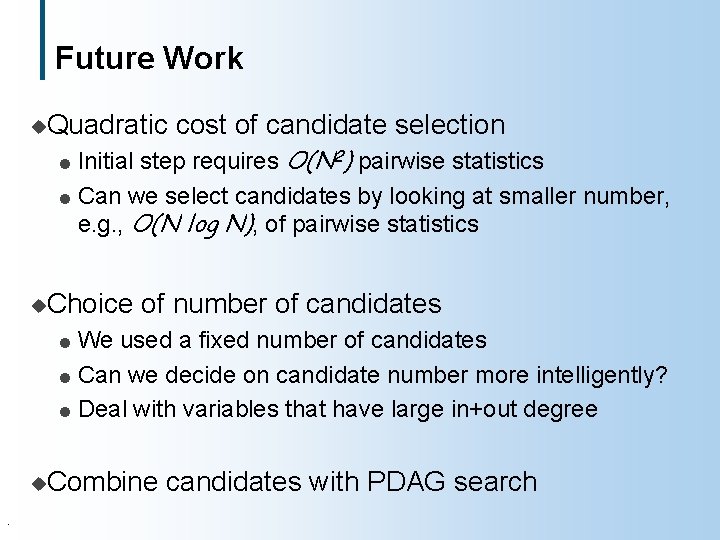

Future Work

- Quadratic cost of candidate selection
  - Initial step requires O(N^2) pairwise statistics
  - Can we select candidates by looking at a smaller number, e.g., O(N log N), of pairwise statistics?
- Choice of number of candidates
  - We used a fixed number of candidates
  - Can we decide on the candidate number more intelligently?
  - Deal with variables that have large in+out degree
- Combine candidates with PDAG search

Summary

- Heuristic for structure search
  - Incorporates understanding of BNs into blind search
  - Drastically reduces the size of the search space: faster search that requires fewer statistics
- Empirical evaluation
  - We present evaluation on several datasets
  - Variants of the algorithm used in
    - [Boyen, Friedman & Koller] for temporal models with SEM
    - [Friedman, Getoor, Koller & Pfeffer] for relational models
- Complexity analysis
  - Computational subproblem where structure search might be tractable even beyond trees
