Learning abductive reasoning using random examples Brendan Juba

Learning abductive reasoning using random examples Brendan Juba Washington University in St. Louis

Outline 1. Models of abductive reasoning 2. An abductive reasoning algorithm 3. “Not-easiness” of abducing conjunctions

Abductive reasoning: making plausible guesses • Abductive reasoning: Given a conclusion c, find a “plausible” h that implies/leads to/… c • Two varieties of “plausibility” in common use – Logical plausibility: a small h from which c follows – Bayesian plausibility: a h which has large posterior probability when given c In symbols… Pr [h|c true] > … Requires a prior distribution over representations…

Why might we want a new model? • Existing models only tractable in simple cases – E. g. Horn rules (a⋀b⋀c⇒d …no negations), “nice” (conjugate) priors • The choice of prior distribution really matters – And, it’s difficult to specify by hand

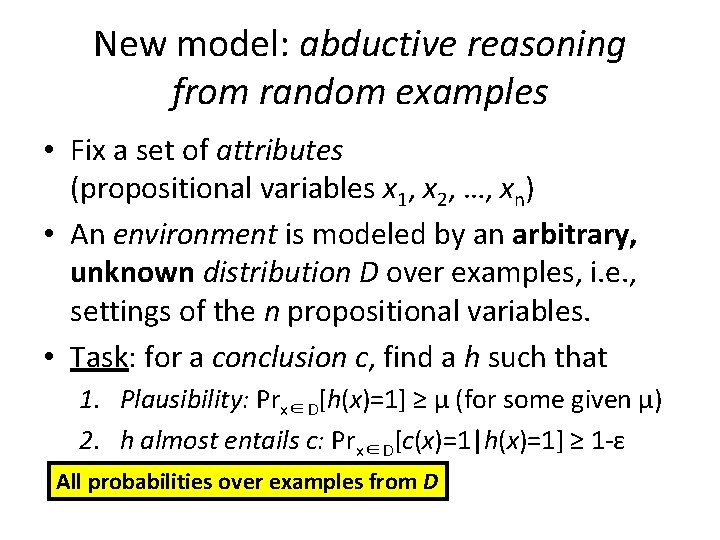

New model: abductive reasoning from random examples • Fix a set of attributes (propositional variables x 1, x 2, …, xn) • An environment is modeled by an arbitrary, unknown distribution D over examples, i. e. , settings of the n propositional variables. • Task: for a conclusion c, find a h such that 1. Plausibility: Prx∈D[h(x)=1] ≥ μ (for some given μ) 2. h almost entails c: Prx∈D[c(x)=1|h(x)=1] ≥ 1 -ε All probabilities over examples from D

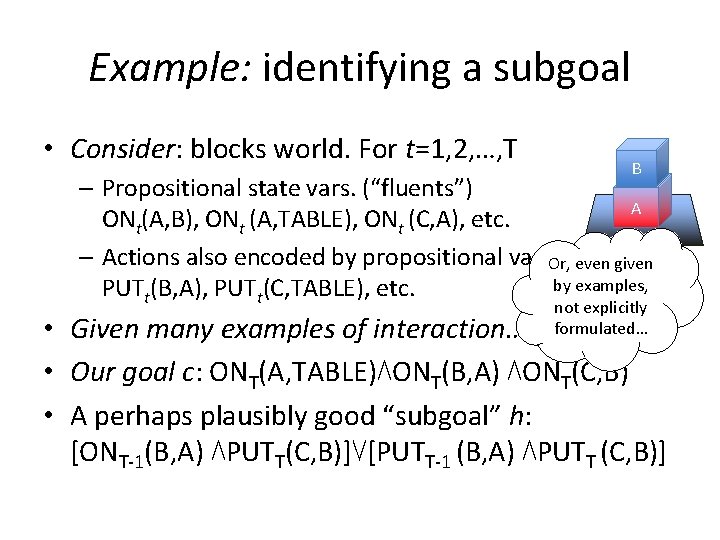

Example: identifying a subgoal • Consider: blocks world. For t=1, 2, …, T B – Propositional state vars. (“fluents”) A ONt(A, B), ONt (A, TABLE), ONt (C, A), etc. – Actions also encoded by propositional vars. Or, even given by examples, PUTt(B, A), PUTt(C, TABLE), etc. not explicitly formulated… • Given many examples of interaction… • Our goal c: ONT(A, TABLE)⋀ONT(B, A) ⋀ONT(C, B) • A perhaps plausibly good “subgoal” h: [ONT-1(B, A) ⋀PUTT(C, B)]⋁[PUTT-1 (B, A) ⋀PUTT (C, B)]

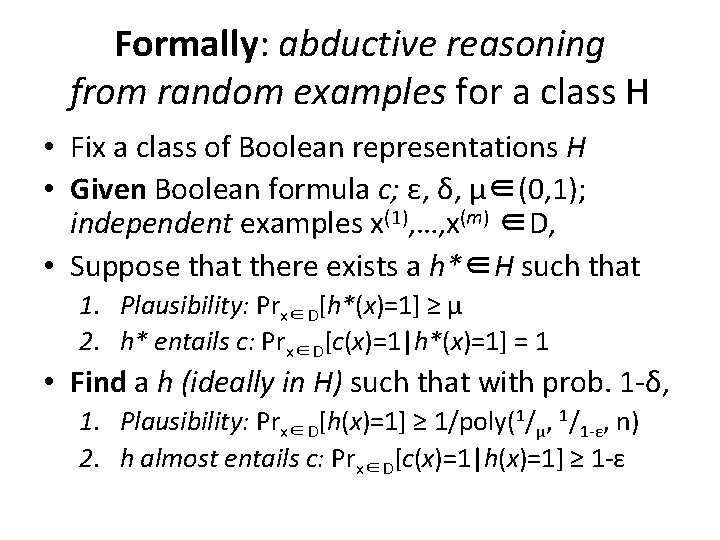

Formally: abductive reasoning from random examples for a class H • Fix a class of Boolean representations H • Given Boolean formula c; ε, δ, μ∈(0, 1); independent examples x(1), …, x(m) ∈D, • Suppose that there exists a h*∈H such that 1. Plausibility: Prx∈D[h*(x)=1] ≥ μ 2. h* entails c: Prx∈D[c(x)=1|h*(x)=1] = 1 • Find a h (ideally in H) such that with prob. 1 -δ, 1. Plausibility: Prx∈D[h(x)=1] ≥ 1/poly(1/μ, 1/1 -ε, n) 2. h almost entails c: Prx∈D[c(x)=1|h(x)=1] ≥ 1 -ε

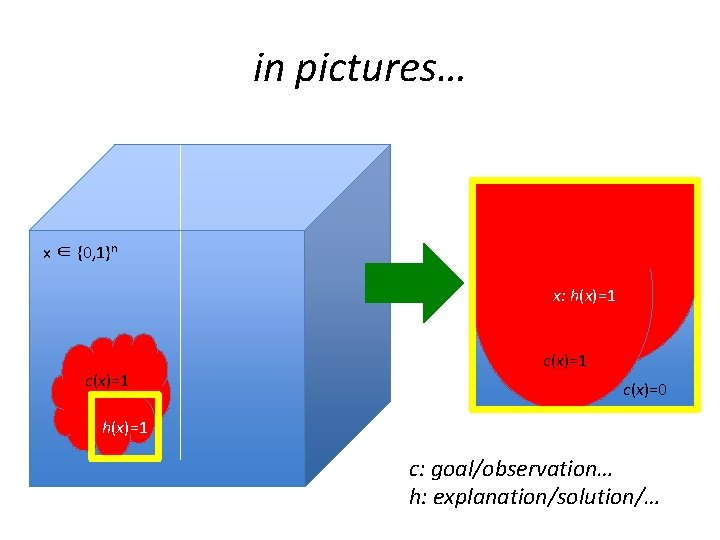

in pictures… x ∈ {0, 1}n x: h(x)=1 c(x)=1 c(x)=0 h(x)=1 c: goal/observation… h: explanation/solution/…

Outline 1. Models of abductive reasoning 2. An abductive reasoning algorithm 3. “Not-easiness” of abducing conjunctions

![Theorem 1. If there is a k-DNF h* such that 1. Plausibility: Prx∈D[h*(x)=1] ≥ Theorem 1. If there is a k-DNF h* such that 1. Plausibility: Prx∈D[h*(x)=1] ≥](http://slidetodoc.com/presentation_image/22953ed37423f14e01149f067c1042cd/image-10.jpg)

Theorem 1. If there is a k-DNF h* such that 1. Plausibility: Prx∈D[h*(x)=1] ≥ μ 2. h* entails c: Prx∈D[c(x)=1|h*(x)=1] = 1 then using m = O(1/με (nk+log 1/δ)) examples, in time O(mnk) we can find a k-DNF h such that with probability 1 -δ, 1. Plausibility: Prx∈D[h(x)=1] ≥ μ 2. h almost entails c: Prx∈D[c(x)=1|h(x)=1] ≥ 1 -ε k-DNF: an OR of “terms of size k” – ANDs of at most k “literals” – attributes or their negations

Algorithm for k-DNF abduction • Start with h as an OR over all terms of size k • For each example x(1), …, x(m) – If c(x(i)) = 0, delete all terms T from h such that T(x(i)) = 1 • Return h Simple algorithm, 1 st proposed by J. S. Mill, 1843 Running time is clearly O(mnk)

![Analysis pt 1: Prx∈D[h(x)=1] ≥ μ • We are given that some k-DNF h* Analysis pt 1: Prx∈D[h(x)=1] ≥ μ • We are given that some k-DNF h*](http://slidetodoc.com/presentation_image/22953ed37423f14e01149f067c1042cd/image-12.jpg)

Analysis pt 1: Prx∈D[h(x)=1] ≥ μ • We are given that some k-DNF h* has 1. Plausibility: Prx∈D[h*(x)=1] ≥ μ 2. h* entails c: Prx∈D[c(x)=1|h*(x)=1] = 1 • Initially, every term of h* is in h • Terms of h* are never true when c(x)=0 by 2. ☞every term of h* remains in h ☞h* implies h, so Prx[h(x)=1]≥Prx[h*(x)=1]≥μ.

![Analysis pt 2: Prx[c(x)=1|h(x)=1] ≥ 1 -ε • Rewrite conditional probability: Prx∈D [c(x)=0⋀h(x)=1] ≤ Analysis pt 2: Prx[c(x)=1|h(x)=1] ≥ 1 -ε • Rewrite conditional probability: Prx∈D [c(x)=0⋀h(x)=1] ≤](http://slidetodoc.com/presentation_image/22953ed37423f14e01149f067c1042cd/image-13.jpg)

Analysis pt 2: Prx[c(x)=1|h(x)=1] ≥ 1 -ε • Rewrite conditional probability: Prx∈D [c(x)=0⋀h(x)=1] ≤ εPrx∈D [h(x)=1] • We’ll show: Prx∈D [c(x)=0⋀h(x)=1] ≤ εμ ( ≤ εPrx∈D [h(x)=1] by part 1) • Consider any h’ s. t. Prx[c(x)=0⋀h’(x)=1] > εμ – Since each x(i) is drawn independently from D Prx∈D [no i has c(x(i))=0 ⋀ h’(x(i))=1] < (1 -εμ)m – A term of h’ is deleted when c=0 and h’ =1 – So, h’ is only possibly output w. p. < (1 -εμ)m

![Analysis pt 2, cont’d: Prx[c(x)=1|h(x)=1] ≥ 1 -ε • We’ll show: Prx∈D [c(x)=0⋀h(x)=1] ≤ Analysis pt 2, cont’d: Prx[c(x)=1|h(x)=1] ≥ 1 -ε • We’ll show: Prx∈D [c(x)=0⋀h(x)=1] ≤](http://slidetodoc.com/presentation_image/22953ed37423f14e01149f067c1042cd/image-14.jpg)

Analysis pt 2, cont’d: Prx[c(x)=1|h(x)=1] ≥ 1 -ε • We’ll show: Prx∈D [c(x)=0⋀h(x)=1] ≤ εμ • Consider any h’ s. t. Prx[c(x)=0⋀h’(x)=1] > εμ – h’ is only possibly output w. p. < (1 -εμ)m • There are only 2 O(nk) possible k-DNF h’ • Since (1 -1/x)x ≤ 1/e, m = O(1/με (nk+log 1/δ)) ex’s suffice to guarantee that each such h’ k) O(n is only possible to output w. p. < δ/2 ☞w. p. >1 -δ, our h has Prx[c(x)=0⋀h(x)=1] ≤ εμ

![Theorem 1. If there is a k-DNF h* such that 1. Plausibility: Prx∈D[h*(x)=1] ≥ Theorem 1. If there is a k-DNF h* such that 1. Plausibility: Prx∈D[h*(x)=1] ≥](http://slidetodoc.com/presentation_image/22953ed37423f14e01149f067c1042cd/image-15.jpg)

Theorem 1. If there is a k-DNF h* such that 1. Plausibility: Prx∈D[h*(x)=1] ≥ μ 2. h* entails c: Prx∈D[c(x)=1|h*(x)=1] = 1 then using m = O(1/με (nk+log 1/δ)) examples, in time O(mnk) we can find a k-DNF h such that with probability 1 -δ, 1. Plausibility: Prx∈D[h(x)=1] ≥ μ 2. h almost entails c: Prx∈D[c(x)=1|h(x)=1] ≥ 1 -ε k-DNF: an OR of “terms of size k” – ANDs of at most k “literals” – attributes or their negations A version that tolerates exceptions is also possible–see paper…

Outline 1. Models of abductive reasoning 2. An abductive reasoning algorithm 3. “Not-easiness” of abducing conjunctions

Theorem 2. Suppose that a polynomial-time algorithm exists for learning abduction from random examples for conjunctions. Then there is a polynomial-time algorithm for PAC-learning DNF.

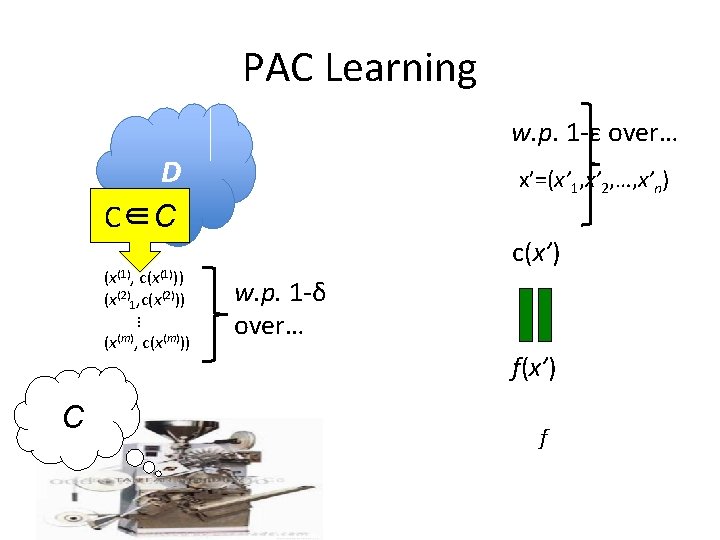

PAC Learning w. p. 1 -ε over… D C∈C (x(1), c(x(1))) (x(2)1, c(x(2))) … (x(m), c(x(m))) C x’=(x’ 1, x’ 2, …, x’n) c(x’) w. p. 1 -δ over… f(x’) f

Theorem 2. Suppose that a polynomial-time algorithm exists for learning abduction from random examples for conjunctions. Then there is a polynomial-time algorithm for PAC-learning DNF. Theorem (Daniely & Shalev-Shwartz’ 14). If there is a polynomial-time algorithm for PAClearning DNF, then for every f(k)�∞ there is a polynomial-time algorithm for refuting random k -SAT formulas of nf(k) clauses. ☞ This is a new hardness assumption. Use at your discretion.

Key learning technique: Boosting (Schapire, 1990) • Suppose that there is a polynomial-time algorithm that, given examples of c∈C, w. p. 1 -δ produces a circuit f s. t. Prx[f(x)=c(x)] > ½+1/poly(n). Then there is a polynomial-time PAC-learning algorithm for the class C. – i. e. , using the ability to produce such f’s, we produce a g for which Prx[g(x)=c(x)] is “boosted” to any 1 -ε we require.

Sketch of learning DNF using conjunctive abduction • If c is a DNF and Pr[c(x)=1]> ¼, then some term T of c has Pr[T(x)=1]>1/4|c| – …and otherwise f≡ 0 satisfies Pr[f(x)=c(x)]>½+¼ • Note: Pr[c(x)=1|T(x)=1]=1, T is a conjunction ☞Abductive reasoning finds some h such that Pr[h(x)=1]>1/poly(n) and Pr[c(x)=1|h(x)=1]>¾ • Return f: f(x)=1 whenever h(x)=1; if h(x)=0, f(x)=majx: h(x)=0{c(x)}—Pr[f(x)=c(x)]>½+1/4 poly(n)

Recap: learning abduction from random examples For goal condition c, if there is a h* such that Prx∈D[h*(x)=1] ≥ μ & Prx∈D[c(x)=1|h*(x)=1] = 1 using examples x from D, find a h such that Prx∈D[h(x)=1] ≥ μ’ & Prx∈D[c(x)=1|h(x)=1] ≥ 1 -ε Thm 1. An efficient algorithm exists for k-DNF h Thm 2. Unless DNF is PAC-learnable, there is no efficient algorithm for conjunctions

- Slides: 22