
Middleware Re-engineering: Status and Plans
Erwin Laure, EGEE Deputy Middleware Manager
LCG PEB, 27-April-2004
www.eu-egee.org
EGEE is a project funded by the European Union under contract IST-2003-508833

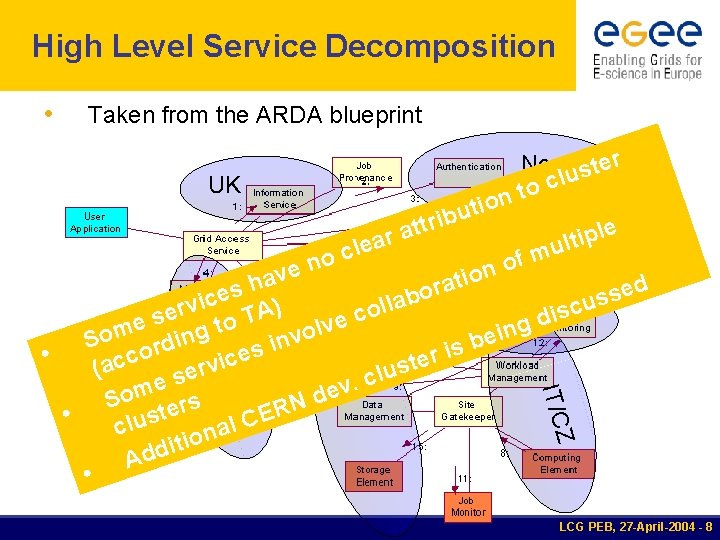

Development Clusters
• 4 development clusters:
  § UK (Steve Fisher)
  § CERN/DM (Peter Kunszt)
  § IT/CZ (Francesco Prelz)
  § Nordic (security, JRA3)
• Clusters have a reasonably sized (distributed) development testbed
  § Taken over from EDG
  § Nordic cluster to be finalized
  § Milestone MJRA1.2 (PM3) almost reached now
• Collaboration with integration & tools cluster established
• Clusters up and running!

Design Team
• Formed in December 2003
• Current members:
  § UK: Steve Fisher
  § IT/CZ: Francesco Prelz
  § Nordic: David Groep
  § VDT: Miron Livny
  § CERN: Predrag Buncic, Peter Kunszt, Frederic Hemmer, Erwin Laure
• Started service design based on the component breakdown defined by ARDA
• Leverage experience and existing components from AliEn, VDT, and EDG
• A working document:
  § Overall design & APIs
  § https://edms.cern.ch/document/458972
  § New version (0.17) incorporating feedback from experiments (and others) being produced now

Guiding Principles
• Lightweight (existing) services
  § Easily and quickly deployable
• Interoperability
  § Allow for multiple implementations
• Resilience and fault tolerance
• Co-existence with deployed infrastructure
  § Run as an application
• Service-oriented approach
  § Follow WSRF standardization
  § No mature WSRF implementations exist to date, hence: start with plain WS
    – WSRF compliance is not an immediate goal

Initial Focus
• Data management
  § Storage Element
    • SRM based; allow POSIX-like access
• Workload management
  § Computing Element
    • Allow pull and push mode
    • Leverage Condor-G for managing jobs on the CE
• Information and monitoring
• Security
  § Need to integrate components with quite different security models
  § Start with a minimalist approach based on VOMS and myProxy

Towards a prototype
• Focus on key services discussed; exploit existing components
  § Initially an ad-hoc installation at CERN and Wisconsin
• Aim to have first instance ready by end of April (might slip by one week)
  § Open only to a small user community (via ARDA + biomed)
  § Expect frequent changes (also API changes) based on user feedback and integration of further services
• Enter a rapid feedback cycle
  § Continue with the design of remaining services
  § Enrich/harden existing services based on early user feedback
• Progress tracked on a weekly basis
  – https://edms.cern.ch/document/457150/

Initial prototype components for April’04 (this is an ad-hoc installation! To be extended/changed, e.g. WMS)
• Access service:
  § AliEn shell, APIs
• Information & Monitoring:
  § R-GMA
• CE:
  § AliEn CE, Globus gatekeeper, Condor-G (with LSF/PBS backend)
• Security:
  § VOMS, myProxy
• Workload mgmt:
  § AliEn task queue
• SE:
  § SRM (Castor; dCache?), GridFTP, GFAL, aiod
• File Transfer Service:
  § AliEn FTD
• File and Replica Catalog:
  § AliEn File Catalog, RLS

Planning
• Evolution of the prototype
  § Envisaged status at end of 2004:
    • Key services need to fulfill all requirements (application, operation, quality, security, …) and form a deployable release
    • Remaining services available as prototype
  § Need to develop a roadmap
    • Incremental changes to prototype (where possible)
    • Early user feedback through ARDA (and other sciences) and early deployment on SA1 pre-production service
    • Detailed release plan being produced (mid May)
  § Converge prototype work with integration & testing activities
    • Need to get rolling now!
    • First components will start using SCM in May

High Level Service Decomposition
• Taken from the ARDA blueprint
[Figure: ARDA service decomposition, annotated with the development clusters responsible for each service (UK, IT/CZ, CERN, Nordic)]

American Involvement in JRA1
• UWisc
  § Miron Livny is part of the Design Team
  § Condor team actively involved in re-engineering resource access (CE)
    • In collaboration with the Italian cluster and AliEn
• ISI
  § Identification of potential contributions started (e.g. RLS)
  § Focused discussions being planned
• Argonne
  § Collaboration on testing started
  § Support for enhancements to key Globus components being discussed

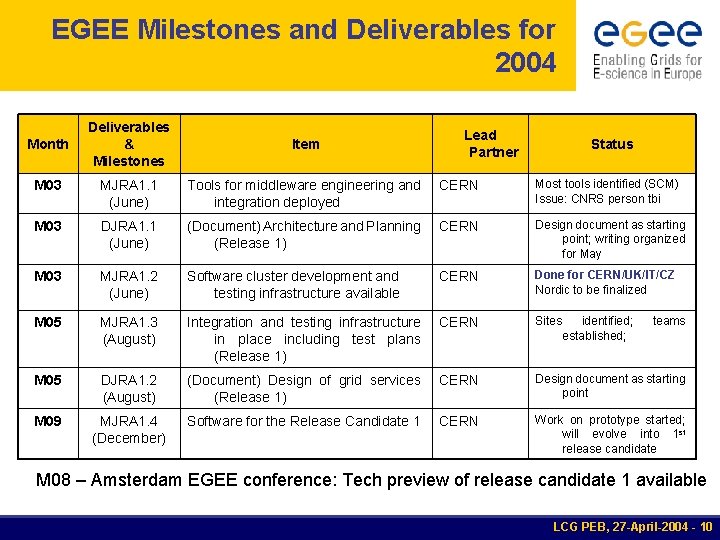

EGEE Milestones and Deliverables for 2004

| Month | Deliverable/Milestone | Lead partner | Status |
|---|---|---|---|
| M03 (June) | MJRA1.1: Tools for middleware engineering and integration deployed | CERN | Most tools identified (SCM); issue: CNRS person tbi |
| M03 (June) | DJRA1.1: (Document) Architecture and Planning (Release 1) | CERN | Design document as starting point; writing organized for May |
| M03 (June) | MJRA1.2: Software cluster development and testing infrastructure available | CERN | Done for CERN/UK/IT/CZ; Nordic to be finalized |
| M05 (August) | MJRA1.3: Integration and testing infrastructure in place including test plans (Release 1) | CERN | Sites identified; teams established |
| M05 (August) | DJRA1.2: (Document) Design of grid services (Release 1) | CERN | Design document as starting point |
| M09 (December) | MJRA1.4: Software for the Release Candidate 1 | CERN | Work on prototype started; will evolve into 1st release candidate |

M08: Amsterdam EGEE conference: tech preview of release candidate 1 available

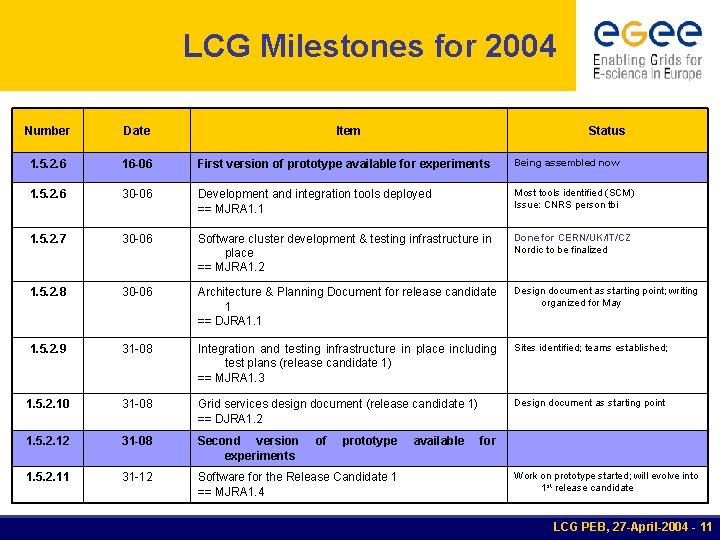

LCG Milestones for 2004

| Number | Date | Item | Status |
|---|---|---|---|
| 1.5.2.6 | 16-06 | First version of prototype available for experiments | Being assembled now |
| 1.5.2.6 | 30-06 | Development and integration tools deployed (= MJRA1.1) | Most tools identified (SCM); issue: CNRS person tbi |
| 1.5.2.7 | 30-06 | Software cluster development & testing infrastructure in place (= MJRA1.2) | Done for CERN/UK/IT/CZ; Nordic to be finalized |
| 1.5.2.8 | 30-06 | Architecture & Planning Document for release candidate 1 (= DJRA1.1) | Design document as starting point; writing organized for May |
| 1.5.2.9 | 31-08 | Integration and testing infrastructure in place including test plans (release candidate 1) (= MJRA1.3) | Sites identified; teams established |
| 1.5.2.10 | 31-08 | Grid services design document (release candidate 1) (= DJRA1.2) | Design document as starting point |
| 1.5.2.12 | 31-08 | Second version of prototype available for experiments | |
| 1.5.2.11 | 31-12 | Software for the Release Candidate 1 (= MJRA1.4) | Work on prototype started; will evolve into 1st release candidate |

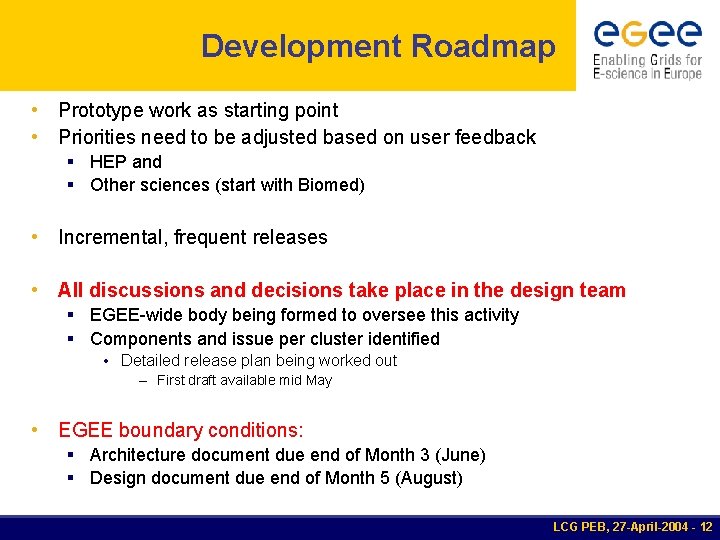

Development Roadmap
• Prototype work as starting point
• Priorities need to be adjusted based on user feedback from
  § HEP and
  § other sciences (starting with Biomed)
• Incremental, frequent releases
• All discussions and decisions take place in the design team
  § EGEE-wide body being formed to oversee this activity
  § Components and issues per cluster identified
• Detailed release plan being worked out
  – First draft available mid May
• EGEE boundary conditions:
  § Architecture document due end of Month 3 (June)
  § Design document due end of Month 5 (August)

Summary
• Work started; all partners participate
  § Prototype is the first tangible outcome
  § Architectural and design work started
  § Incremental changes to prototype
• Feedback from experiments (via ARDA) essential!
• Detailed release plan being worked out
• Continuous integration and testing scheme defined and being adopted (first components in May)
• Technology risk
  § Will WS allow for all upcoming requirements?
  § Divergence from standards

Additional slides
• Responsibilities and issues for dev clusters
• Some details on key components
  § SE
  § Catalogs
  § CE
  § Information & Monitoring
  § Security

UK Cluster
• R-GMA
  § Interface to various graphical tools
  § Monitoring largely driven by application and infrastructure needs
  § Information system: clarify role in job-submission/data mgmt cycles (e.g. role of GLUE)
  § Interface to other monitoring systems (e.g. Grid3)
  § Understand R-GMA role in
    • Accounting
    • Job provenance
    • Logging & bookkeeping
    • …

IT/CZ Cluster
• Resource Access (aka ‘CE’)
  § Interface to various batch systems
  § Starting with the integration of Condor-G
  § JobFetcher (implementing ‘pull’ model)
  § Site policy mgmt, enforcement, and advertisement
• WMS
  § High-level optimizer components at TaskQueue
    • Matchmaking
    • Job adjustment
  § VO policy management and enforcement
  § TaskQueue interactions
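The ‘pull’ model and matchmaking at the TaskQueue can be illustrated with a small sketch. In this sketch a JobFetcher advertises what its site can offer, and the TaskQueue hands out the oldest queued job whose requirements that offer satisfies. The requirement fields (VO, memory, CPU time), the oldest-first policy and all class names are assumptions made for the example. They are not AliEn, Condor or EGEE interfaces.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Job:
    """A queued job with illustrative resource requirements (hypothetical fields)."""
    job_id: str
    vo: str
    min_memory_mb: int
    cpu_time_min: int


@dataclass
class SiteOffer:
    """What a JobFetcher might advertise when it asks for work (hypothetical)."""
    site: str
    supported_vos: List[str]
    free_memory_mb: int
    max_cpu_time_min: int


@dataclass
class TaskQueue:
    """Central VO queue: sites pull work instead of having it pushed to them."""
    jobs: List[Job] = field(default_factory=list)

    def submit(self, job: Job) -> None:
        self.jobs.append(job)

    @staticmethod
    def matches(job: Job, offer: SiteOffer) -> bool:
        # Matchmaking: every job requirement must be satisfied by the offer.
        return (job.vo in offer.supported_vos
                and job.min_memory_mb <= offer.free_memory_mb
                and job.cpu_time_min <= offer.max_cpu_time_min)

    def fetch(self, offer: SiteOffer) -> Optional[Job]:
        """Pull model: remove and return the oldest job that fits, else None."""
        for i, job in enumerate(self.jobs):
            if self.matches(job, offer):
                return self.jobs.pop(i)
        return None


if __name__ == "__main__":
    tq = TaskQueue()
    tq.submit(Job("j1", vo="alice", min_memory_mb=4000, cpu_time_min=600))
    tq.submit(Job("j2", vo="biomed", min_memory_mb=1000, cpu_time_min=60))
    offer = SiteOffer("site-a", ["biomed"], free_memory_mb=2000, max_cpu_time_min=120)
    print(tq.fetch(offer))  # j1 is skipped (wrong VO, too much memory); j2 matches
```

Because the site asks only when it has free capacity, site policy can be enforced locally before the request is sent. This is one motivation often given for pull over push.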

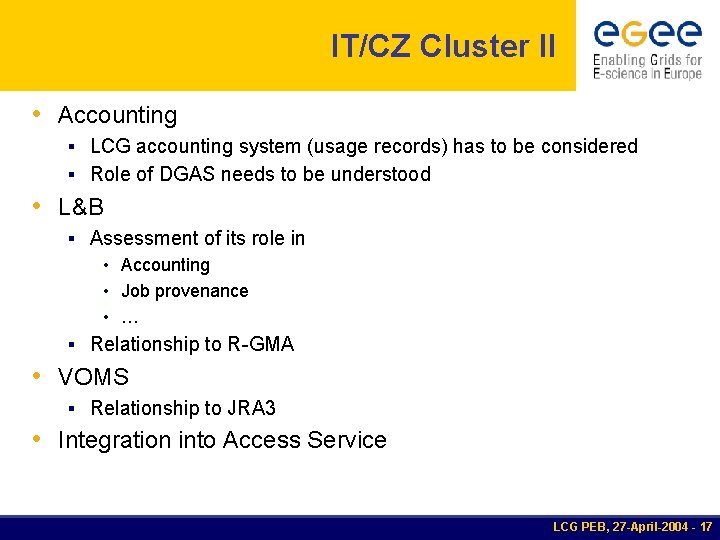

IT/CZ Cluster II
• Accounting
  § LCG accounting system (usage records) has to be considered
  § Role of DGAS needs to be understood
• L&B
  § Assessment of its role in
    • Accounting
    • Job provenance
    • …
  § Relationship to R-GMA
• VOMS
  § Relationship to JRA3
  § Integration into Access Service

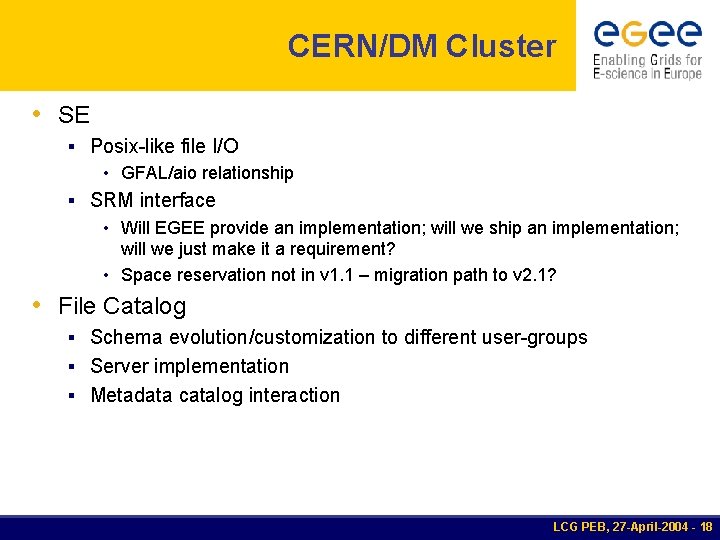

CERN/DM Cluster
• SE
  § POSIX-like file I/O
    • GFAL/aio relationship
  § SRM interface
    • Will EGEE provide an implementation, will we ship an implementation, or will we just make it a requirement?
    • Space reservation not in v1.1: migration path to v2.1?
• File Catalog
  § Schema evolution/customization for different user groups
  § Server implementation
  § Metadata catalog interaction

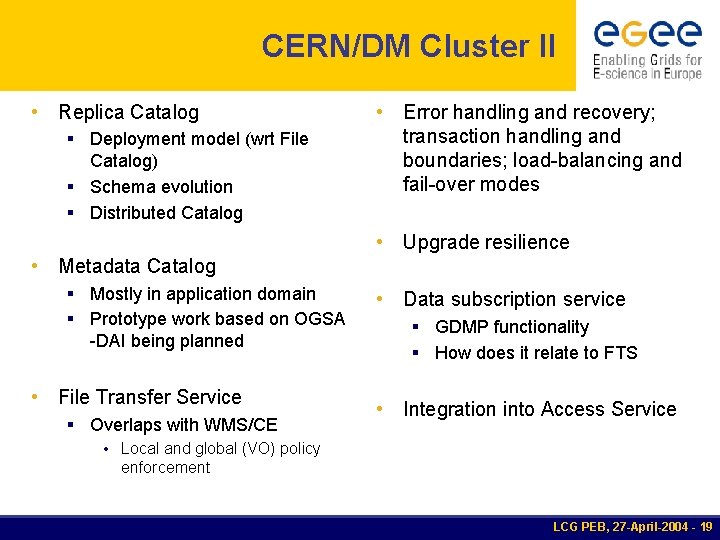

CERN/DM Cluster II
• Replica Catalog
  § Deployment model (wrt File Catalog)
  § Schema evolution
  § Distributed catalog
• Metadata Catalog
  § Mostly in application domain
  § Prototype work based on OGSA-DAI being planned
• File Transfer Service
  § Overlaps with WMS/CE
    • Local and global (VO) policy enforcement
    • Error handling and recovery; transaction handling and boundaries; load-balancing and fail-over modes
    • Upgrade resilience
• Data subscription service
  § GDMP functionality
  § How does it relate to FTS?
• Integration into Access Service
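The error-handling and fail-over requirements listed for the File Transfer Service can be sketched as a retry loop over the known replica sources of a file. This is only a minimal illustration built on several assumptions:
- the injected `copy` callable stands in for a real transfer (for example GridFTP);
- the split into `TransientError` (retry the same source) and `PermanentError` (move on to the next source) is a hypothetical classification;
- `transfer_with_failover` is an invented name, not part of any EGEE or FTS interface.

```python
from typing import Callable, List, Tuple


class TransientError(Exception):
    """A failure worth retrying on the same source (e.g. a timeout)."""


class PermanentError(Exception):
    """A failure that makes this source unusable (e.g. file missing)."""


def transfer_with_failover(
    sources: List[str],
    destination: str,
    copy: Callable[[str, str], None],
    retries_per_source: int = 2,
) -> Tuple[str, int]:
    """Try each source in order: retry transient errors, fail over otherwise.

    Returns (source_used, total_attempts). Raises RuntimeError if every
    source is exhausted, listing the errors seen along the way.
    """
    attempts = 0
    errors: List[str] = []
    for src in sources:
        for _ in range(retries_per_source + 1):
            attempts += 1
            try:
                copy(src, destination)
                return src, attempts
            except TransientError as exc:
                errors.append(f"{src}: transient: {exc}")  # retry same source
            except PermanentError as exc:
                errors.append(f"{src}: permanent: {exc}")
                break  # give up on this source, fail over to the next one
    raise RuntimeError("all sources failed: " + "; ".join(errors))
```

Transaction boundaries and load balancing, also listed on the slide, are left out. A fuller design would have to decide, for instance, whether a partially written destination is cleaned up before the next attempt.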

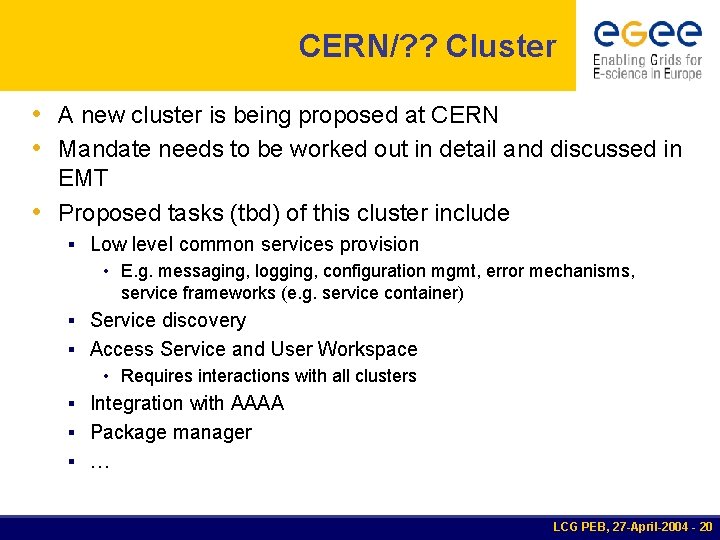

CERN/?? Cluster
• A new cluster is being proposed at CERN
• Mandate needs to be worked out in detail and discussed in EMT
• Proposed tasks (tbd) of this cluster include
  § Low-level common services provision
    • E.g. messaging, logging, configuration mgmt, error mechanisms, service frameworks (e.g. service container)
  § Service discovery
  § Access Service and User Workspace
    • Requires interactions with all clusters
  § Integration with AAAA
  § Package manager
  § …

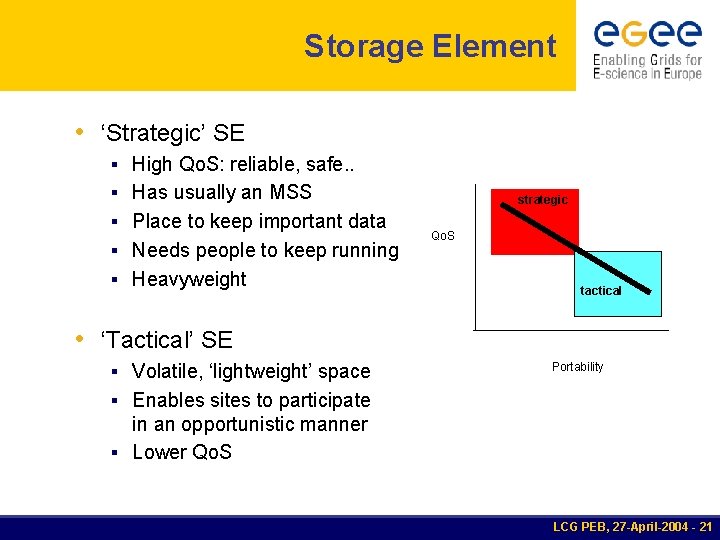

Storage Element
• ‘Strategic’ SE
  § High QoS: reliable, safe, …
  § Usually has an MSS
  § Place to keep important data
  § Needs people to keep it running
  § Heavyweight
• ‘Tactical’ SE
  § Volatile, ‘lightweight’ space
  § Enables sites to participate in an opportunistic manner
  § Lower QoS
[Figure: strategic and tactical SEs compared along QoS and portability axes]

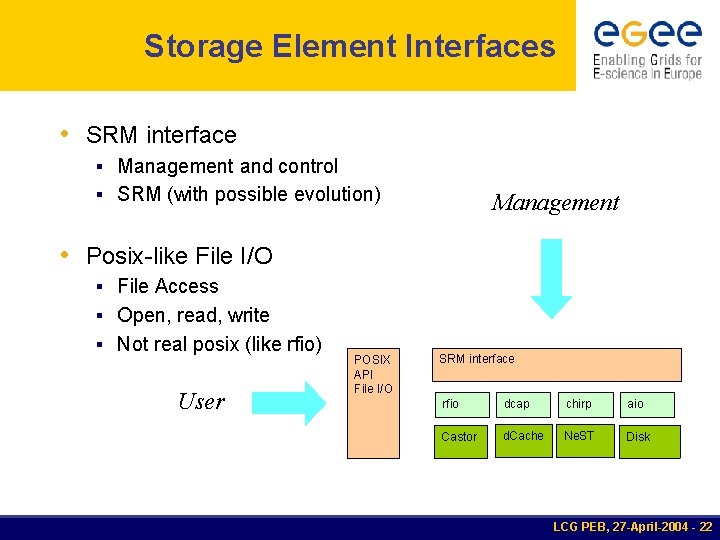

Storage Element Interfaces
• SRM interface
  § Management and control
  § SRM (with possible evolution)
• POSIX-like file I/O
  § File access
  § Open, read, write
  § Not real POSIX (like rfio)
[Figure: the user sees a POSIX API for file I/O and the SRM interface for management; underneath, the access protocols rfio, dcap, chirp and aio lead to Castor, dCache, NeST and plain disk respectively]
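The layering on this slide, one POSIX-like file I/O entry point over several access protocols, can be sketched as a dispatcher keyed on the URL scheme. This is an assumption-laden illustration, not GFAL:
- `grid_open` and the `OPENERS` table are hypothetical names;
- only local disk access is actually implemented;
- rfio, dcap and chirp are registered as placeholders, because their client libraries are outside the scope of this sketch.

The same table-of-implementations shape also reflects the "allow for multiple implementations" interoperability principle.

```python
from typing import IO, Callable, Dict
from urllib.parse import urlparse

Opener = Callable[[str, str], IO]


def _open_local(path: str, mode: str) -> IO:
    """Plain disk access: delegate to Python's built-in open()."""
    return open(path, mode)


def _unavailable(scheme: str) -> Opener:
    """Placeholder for a protocol whose client library is not present here."""
    def opener(turl: str, mode: str) -> IO:
        raise NotImplementedError(f"no {scheme} client in this sketch")
    return opener


# Access protocols named on the slide; only local disk is implemented.
OPENERS: Dict[str, Opener] = {
    "file": _open_local,
    "rfio": _unavailable("rfio"),
    "dcap": _unavailable("dcap"),
    "chirp": _unavailable("chirp"),
}


def grid_open(turl: str, mode: str = "rb") -> IO:
    """POSIX-like open(): choose the access protocol from the URL scheme."""
    parsed = urlparse(turl)
    scheme = parsed.scheme or "file"  # bare paths are treated as local files
    if scheme not in OPENERS:
        raise ValueError(f"unsupported access protocol: {scheme}")
    target = parsed.path if scheme == "file" else turl
    return OPENERS[scheme](target, mode)
```

Callers only ever see file-like objects with read/write. The protocol choice, and later the SRM negotiation that would produce the transport URL, stays behind the single entry point.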

Catalogs
• File Catalog
  § Filesystem-like view on logical file names
• Replica Catalog
  § Keeps track of replicas of the same file
• (Metadata Catalog)
  § Attributes of files on the logical level
  § Boundary between generic middleware and application layer

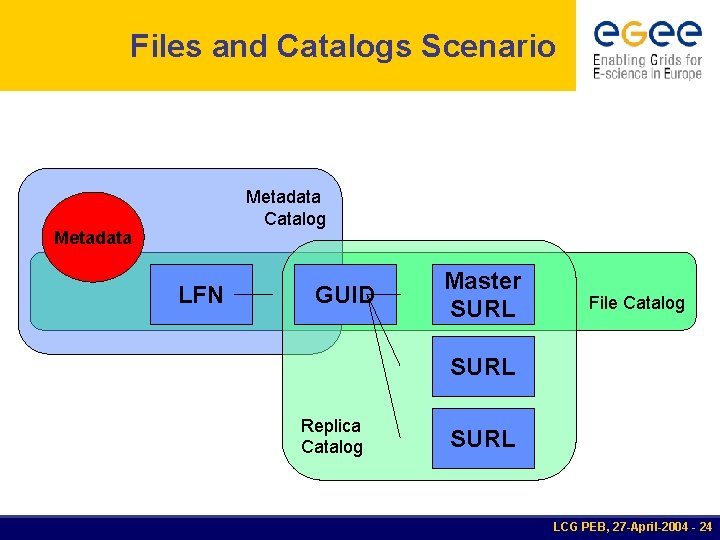

Files and Catalogs Scenario
[Figure: Metadata Catalog (metadata → LFN); File Catalog (LFN → GUID, master SURL); Replica Catalog (SURLs of the replicas)]
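The lookup chain in this scenario can be sketched with in-memory dictionaries. A metadata query yields logical file names (LFNs). The File Catalog maps an LFN to its GUID and master SURL and offers a filesystem-like listing. The Replica Catalog maps the GUID to the SURLs of all copies. The sketch makes two assumptions:
- the master SURL is also listed in the Replica Catalog;
- the class and method names are hypothetical.

Real catalogs (e.g. the AliEn File Catalog or RLS) have their own schemas and APIs.

```python
import posixpath
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Catalogs:
    # Metadata Catalog: LFN -> application-level attributes.
    metadata: Dict[str, Dict[str, str]] = field(default_factory=dict)
    # File Catalog: LFN -> (GUID, master SURL), browsable like a filesystem.
    file_catalog: Dict[str, Tuple[str, str]] = field(default_factory=dict)
    # Replica Catalog: GUID -> SURLs of all copies (master included here).
    replicas: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, lfn: str, guid: str, master_surl: str, **attrs: str) -> None:
        self.file_catalog[lfn] = (guid, master_surl)
        self.replicas[guid] = [master_surl]
        self.metadata[lfn] = dict(attrs)

    def add_replica(self, lfn: str, surl: str) -> None:
        guid, _ = self.file_catalog[lfn]
        self.replicas[guid].append(surl)

    def query(self, **attrs: str) -> List[str]:
        """Metadata lookup: LFNs whose attributes match all given values."""
        return sorted(lfn for lfn, md in self.metadata.items()
                      if all(md.get(k) == v for k, v in attrs.items()))

    def ls(self, directory: str) -> List[str]:
        """Filesystem-like view: LFNs directly inside `directory`."""
        d = directory.rstrip("/") or "/"
        return sorted(name for name in self.file_catalog
                      if posixpath.dirname(name) == d)

    def master(self, lfn: str) -> str:
        return self.file_catalog[lfn][1]

    def surls(self, lfn: str) -> List[str]:
        """Resolve LFN -> GUID -> all replica SURLs."""
        guid, _ = self.file_catalog[lfn]
        return list(self.replicas.get(guid, []))
```

Keying replicas by GUID rather than by LFN means that renaming or re-cataloguing a logical file does not touch the replica entries.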

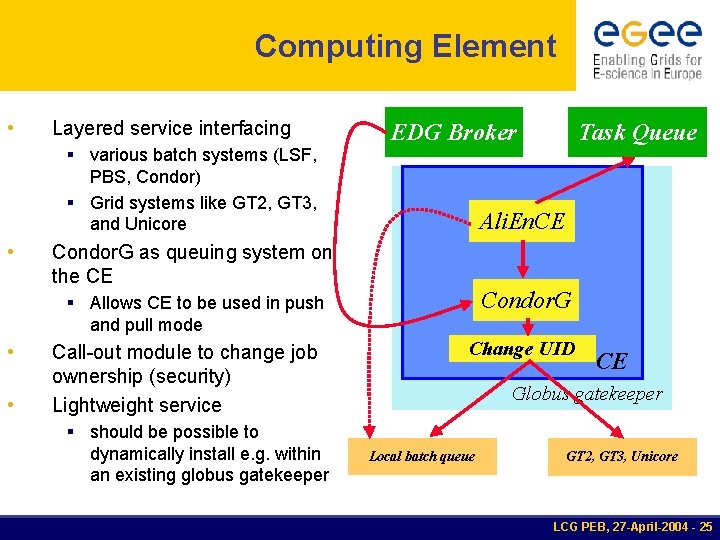

Computing Element
• Layered service interfacing
  § various batch systems (LSF, PBS, Condor)
  § Grid systems like GT2, GT3, and Unicore
• Condor-G as queuing system on the CE
  § Allows the CE to be used in push and pull mode
• Call-out module to change job ownership (security)
• Lightweight service
  § Should be possible to install dynamically, e.g. within an existing Globus gatekeeper
[Figure: the EDG Broker and the AliEn Task Queue feed the CE (AliEn CE, Globus gatekeeper); Condor-G queues the jobs, a “Change UID” step switches job ownership, and jobs are passed to a local batch queue or to GT2, GT3, Unicore]
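One way to picture "push and pull mode through one queue" is a CE that accepts jobs pushed by a broker and also fetches jobs from a task queue. In both cases the job runs through the same ownership call-out and lands in the same local queue. This is a toy model under stated assumptions:
- a `deque` stands in for the role the slide gives Condor-G;
- the call-out is modelled as a plain function from a grid identity to a local account;
- `ComputingElement`, `GridJob` and their methods are hypothetical names.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Optional


@dataclass
class GridJob:
    job_id: str
    owner_dn: str         # grid identity of the submitter
    local_user: str = ""  # set by the ownership call-out


class ComputingElement:
    """Toy CE: one local queue fed by both push and pull."""

    def __init__(self, change_owner: Callable[[str], str]) -> None:
        self.queue: Deque[GridJob] = deque()
        self.change_owner = change_owner  # security call-out (hypothetical)

    def _enqueue(self, job: GridJob) -> None:
        # Every job, however it arrived, gets a local identity before queuing.
        job.local_user = self.change_owner(job.owner_dn)
        self.queue.append(job)

    def submit(self, job: GridJob) -> None:
        """Push mode: a broker sends the job to this CE."""
        self._enqueue(job)

    def pull(self, fetch: Callable[[], Optional[GridJob]]) -> bool:
        """Pull mode: ask a task queue for work; True if a job was obtained."""
        job = fetch()
        if job is None:
            return False
        self._enqueue(job)
        return True

    def dispatch(self) -> Optional[GridJob]:
        """Hand the next job to the local batch system (LSF, PBS, ...)."""
        return self.queue.popleft() if self.queue else None
```

Routing both modes through `_enqueue` keeps the security call-out in one place, so a pulled job cannot bypass the identity change that a pushed job goes through.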

Information Service
• Adopt a common approach to information and monitoring infrastructure.
• There may be a need for specialised information services
  § e.g. accounting, package management, grid information, monitoring, provenance, logging
  § these may be built on an underlying information service
• A range of visualisation tools may be used
• Using R-GMA
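R-GMA presents information as relational tables: producers publish tuples into them and consumers query them with SQL. The toy below imitates that idea with an in-memory SQLite database. It shows how a specialised service, here a hypothetical per-VO accounting summary, can be built on one underlying information service. It does not use the R-GMA API; the class, table and column names are invented for illustration. The sketch also omits the registry that R-GMA uses to connect distributed producers and consumers.

```python
import sqlite3
from typing import Dict, List, Tuple


class InfoService:
    """Toy relational information service in the spirit of R-GMA (assumption)."""

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")

    def create_table(self, name: str, columns: str) -> None:
        # Table and column names are trusted input in this sketch.
        self.db.execute(f"CREATE TABLE {name} ({columns})")

    def publish(self, table: str, row: tuple) -> None:
        """Producer side: insert one tuple into a named table."""
        placeholders = ", ".join("?" for _ in row)
        self.db.execute(f"INSERT INTO {table} VALUES ({placeholders})", row)

    def query(self, sql: str, params: tuple = ()) -> List[Tuple]:
        """Consumer side: run an SQL query over the published tuples."""
        return self.db.execute(sql, params).fetchall()


def cpu_hours_per_vo(info: InfoService) -> Dict[str, float]:
    """A 'specialised' accounting view layered on the generic service."""
    rows = info.query(
        "SELECT vo, SUM(cpu_hours) FROM job_usage GROUP BY vo ORDER BY vo")
    return dict(rows)
```

Other specialised services listed on the slide (provenance, logging, package management) would be further tables and queries over the same store, rather than separate systems.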

Authentication/Authorization
• Different models and mechanisms
• Authentication based on Globus/GSI, AFS, SSH, X.509, tokens
• Authorization
  § AliEn: exploits mechanism of RDBMS backend
  § EDG: gridmap file; VOMS credentials and LCAS/LCMAPS
  § VDT: gridmap file; CAS, VOMS (client)
• Security and protection at a level acceptable to fabric managers and end users need to be discussed and “blessed” in advance.

A minimalist approach to security
• Need to integrate components with quite different security models
• Start with a minimalist approach
  § Based on VOMS (proxy issuing) and myProxy (proxy store)
  § User stores a proxy in myProxy, from where it can be retrieved by access services and sent to other services
  § Credential chain needs to be preserved
    • Allows services to authenticate the client
  § Local authorization could be done via LCAS if required
  § User is mapped to group accounts, or components like LCMAPS are used to assign a local user identity
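The bookkeeping side of this minimalist scheme can be sketched as follows:
1. A proxy is deposited in a store.
2. An access service retrieves it.
3. A receiving service checks that the credential chain is still intact, from proxy to user certificate to a trusted CA.
4. The VO attribute carried by the proxy is mapped to a group account.

No signatures are checked here; a real deployment relies on GSI/X.509 verification. `Cert`, `ProxyStore`, `chain_is_intact` and `map_to_local_account` are hypothetical names, and the `vo` field is a stand-in for a VOMS attribute. None of this is the myProxy, VOMS, LCAS or LCMAPS API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass(frozen=True)
class Cert:
    subject: str
    issuer: str
    vo: Optional[str] = None  # stand-in for a VOMS attribute (hypothetical)


Chain = List[Cert]  # ordered [proxy, ..., end-entity certificate]


class ProxyStore:
    """Stand-in for a myProxy server: users deposit proxies, services fetch them."""

    def __init__(self) -> None:
        self._store: Dict[str, Chain] = {}

    def put(self, user_dn: str, chain: Chain) -> None:
        self._store[user_dn] = list(chain)

    def get(self, user_dn: str) -> Chain:
        return list(self._store[user_dn])  # copy, so the chain is passed on unchanged


def chain_is_intact(chain: Chain, trusted_cas: Set[str]) -> bool:
    """Each certificate must be issued by the next; the last by a trusted CA."""
    if not chain:
        return False
    for child, parent in zip(chain, chain[1:]):
        if child.issuer != parent.subject:
            return False
    return chain[-1].issuer in trusted_cas


def map_to_local_account(chain: Chain, group_accounts: Dict[str, str]) -> str:
    """Map the VO carried by the proxy to a shared group account."""
    vo = chain[0].vo
    if vo is None or vo not in group_accounts:
        raise PermissionError("no VO attribute, or VO not authorised at this site")
    return group_accounts[vo]
```

Passing the whole chain along, rather than just the user's name, is what lets a downstream service re-authenticate the client instead of trusting the intermediary.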
