Lattices Definition and Related Problems

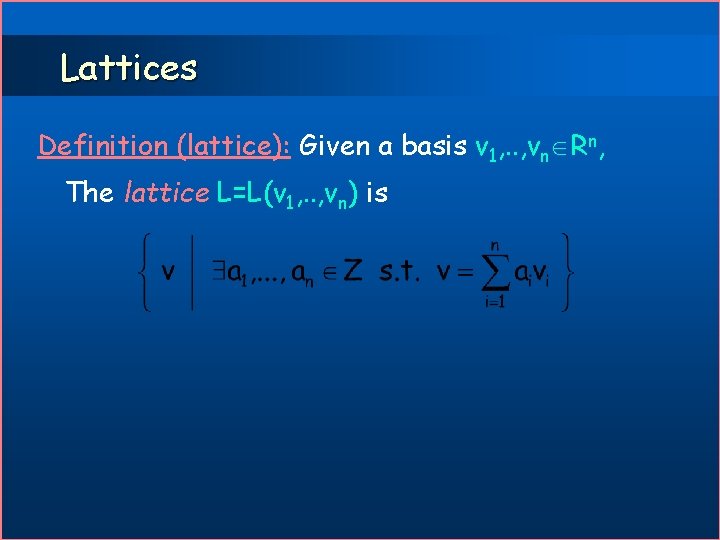

Lattices. Definition (lattice): Given a basis $v_1, \dots, v_n \in \mathbb{R}^n$, the lattice $L = L(v_1, \dots, v_n)$ is $\{\sum_i a_i v_i \mid a_i \in \mathbb{Z}\}$.

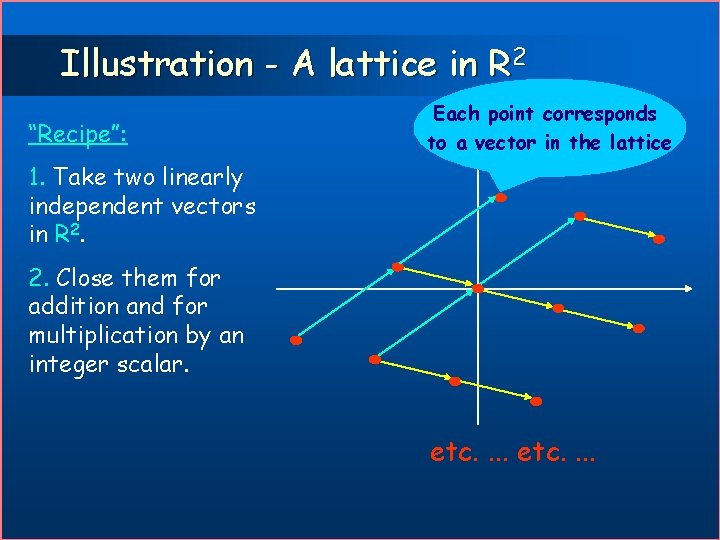

Illustration - A lattice in $\mathbb{R}^2$. Each point corresponds to a vector in the lattice.

"Recipe":

1. Take two linearly independent vectors in $\mathbb{R}^2$.
2. Close them under addition and under multiplication by an integer scalar.

Shortest Vector Problem. SVP (Shortest Vector Problem): Given a lattice $L$, find $s \in L$, $s \neq 0$, s.t. for any $x \in L$, $x \neq 0$: $\|x\| \ge \|s\|$.
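To make the definition concrete, here is a minimal brute-force sketch for tiny lattices. It only searches integer coefficients in a bounded box (the `bound` parameter is an assumption of this sketch, not part of the problem), so it illustrates the definition rather than solving SVP in general.

```python
from itertools import product

def shortest_vector_bruteforce(basis, bound=3):
    """Return (squared_length, vector) of the shortest non-zero integer
    combination of `basis` with coefficients in [-bound, bound]."""
    dim = len(basis[0])
    best = None
    for coeffs in product(range(-bound, bound + 1), repeat=len(basis)):
        if not any(coeffs):
            continue  # SVP asks for a non-zero vector
        vec = [sum(c * b[k] for c, b in zip(coeffs, basis)) for k in range(dim)]
        sq = sum(x * x for x in vec)
        if best is None or sq < best[0]:
            best = (sq, vec)
    return best

# Toy basis chosen for illustration: (3, 1) and (2, 2).
sq_len, vec = shortest_vector_bruteforce([[3, 1], [2, 2]])
```

Working with squared lengths keeps the arithmetic in integers; the running time is exponential in the number of basis vectors.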

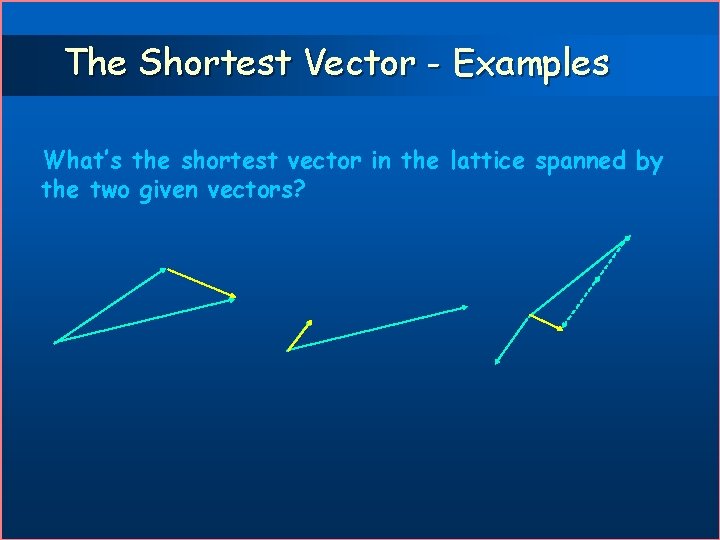

The Shortest Vector - Examples What’s the shortest vector in the lattice spanned by the two given vectors?

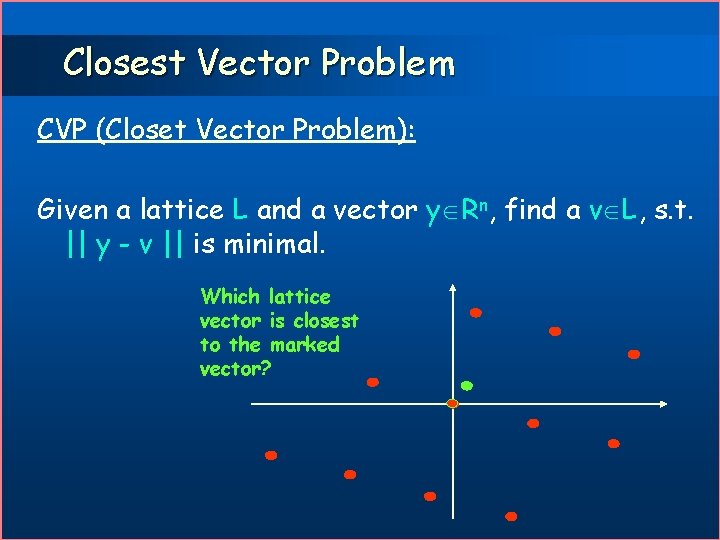

Closest Vector Problem. CVP (Closest Vector Problem): Given a lattice $L$ and a vector $y \in \mathbb{R}^n$, find a $v \in L$ s.t. $\|y - v\|$ is minimal. Which lattice vector is closest to the marked vector?

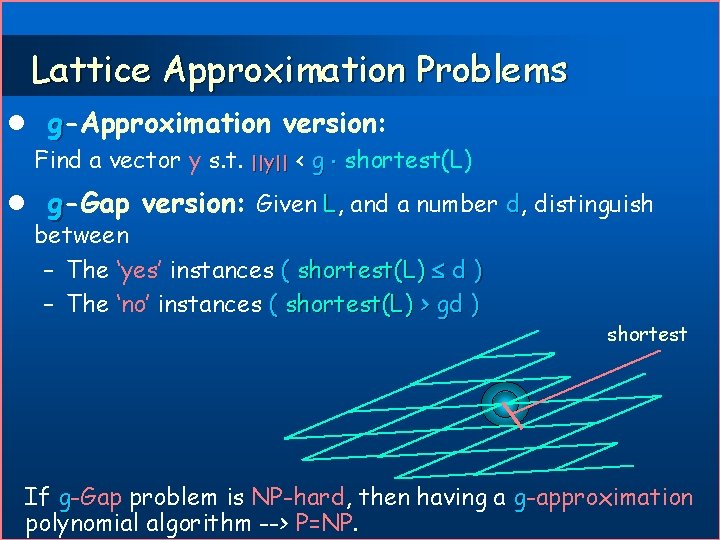

Lattice Approximation Problems

- g-Approximation version: Find a non-zero lattice vector $y$ s.t. $\|y\| < g \cdot \mathrm{shortest}(L)$.
- g-Gap version: Given $L$ and a number $d$, distinguish between the 'yes' instances ($\mathrm{shortest}(L) \le d$) and the 'no' instances ($\mathrm{shortest}(L) > g \cdot d$).

If the g-Gap problem is NP-hard, then a polynomial-time g-approximation algorithm would imply P=NP.

![Lattice Problems - Brief History [Dirichlet, Minkowski] no CVP algorithms… l [LLL] Approximation algorithm Lattice Problems - Brief History [Dirichlet, Minkowski] no CVP algorithms… l [LLL] Approximation algorithm](http://slidetodoc.com/presentation_image/50a5a49f6be88339935df1f29941e7ca/image-9.jpg)

Lattice Problems - Brief History

- [Dirichlet, Minkowski]: no CVP algorithms…
- [LLL]: Approximation algorithm for SVP, factor $2^{n/2}$.
- [Babai]: Extension to CVP.
- [Schnorr]: Improved factor, $2^{n/\lg n}$, for both CVP and SVP.
- [v.EB]: CVP is NP-hard.
- [ABSS]: Approximating CVP is NP-hard to within any constant, and almost NP-hard to within an almost polynomial factor.

![Lattice Problems - Recent History [Ajtai 96]: worst-case/average-case reduction for SVP. l [Ajtai-Dwork 96]: Lattice Problems - Recent History [Ajtai 96]: worst-case/average-case reduction for SVP. l [Ajtai-Dwork 96]:](http://slidetodoc.com/presentation_image/50a5a49f6be88339935df1f29941e7ca/image-10.jpg)

Lattice Problems - Recent History

- [Ajtai 96]: worst-case/average-case reduction for SVP.
- [Ajtai-Dwork 96]: Cryptosystem.
- [Ajtai 97]: SVP is NP-hard (for randomized reductions).
- [Micc 98]: SVP is NP-hard to approximate to within some constant factor.
- [DKRS]: CVP is NP-hard to within an almost polynomial factor.
- [LLS]: Approximating CVP to within $n^{1.5}$ is in coNP.
- [GG]: Approximating SVP and CVP to within $\sqrt{n}$ is in coAM ∩ NP.

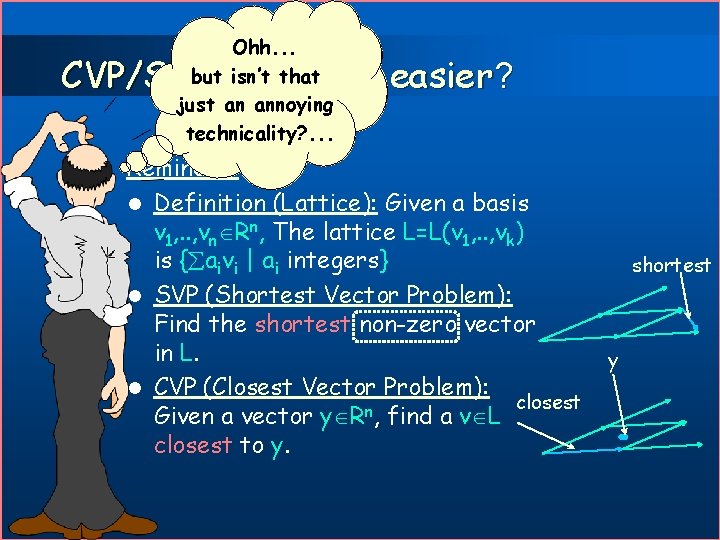

CVP/SVP - which is easier?

"Why is SVP not the same as CVP with y=0?" "Ohh... but isn't that just an annoying technicality?"

Reminder:

- Definition (Lattice): Given a basis $v_1, \dots, v_n \in \mathbb{R}^n$, the lattice $L = L(v_1, \dots, v_n)$ is $\{\sum_i a_i v_i \mid a_i \text{ integers}\}$.
- SVP (Shortest Vector Problem): Find the shortest non-zero vector in $L$.
- CVP (Closest Vector Problem): Given a vector $y \in \mathbb{R}^n$, find a $v \in L$ closest to $y$.

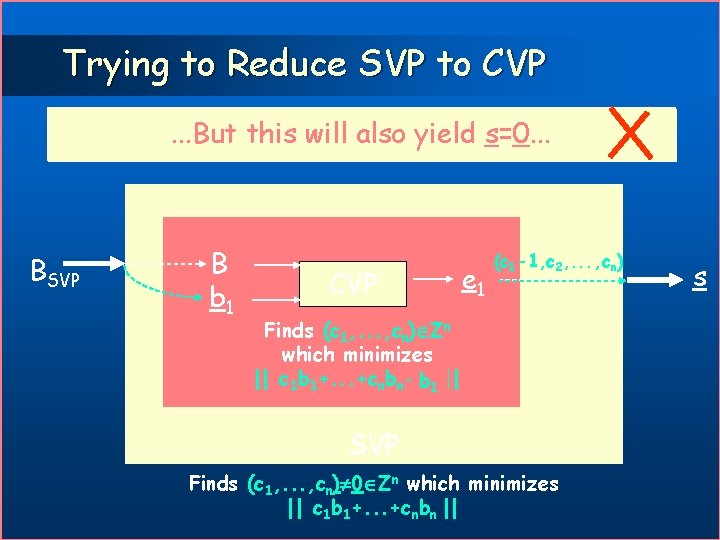

Trying to Reduce SVP to CVP...

- SVP: given $B$, finds $(c_1, \dots, c_n) \neq 0$ in $\mathbb{Z}^n$ which minimizes $\|c_1 b_1 + \dots + c_n b_n\|$.
- CVP: given $B$ and $y$, finds $(c_1, \dots, c_n) \in \mathbb{Z}^n$ which minimizes $\|c_1 b_1 + \dots + c_n b_n - y\|$.
- #1 try: $y = 0$. But this will yield $c = 0$, i.e. $s = 0$...
- Note that we can similarly try $y = b_1$ and read off $(c_1 - 1, c_2, \dots, c_n)$. But this will also yield $s = 0$ (with $c = e_1$).

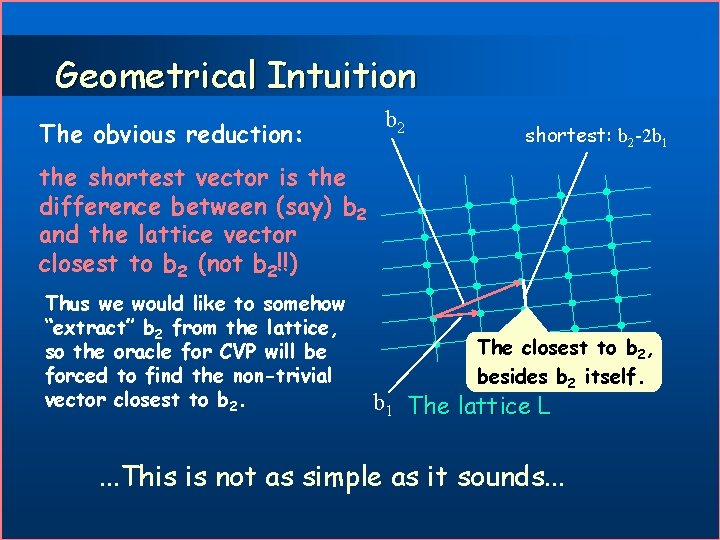

Geometrical Intuition. The obvious reduction: the shortest vector is the difference between (say) $b_2$ and the lattice vector closest to $b_2$ (not $b_2$!!), that is, the closest to $b_2$ besides $b_2$ itself. In the illustrated lattice $L$ the shortest vector is $b_2 - 2b_1$.

Thus we would like to somehow "extract" $b_2$ from the lattice, so the oracle for CVP will be forced to find the non-trivial vector closest to $b_2$. This is not as simple as it sounds...

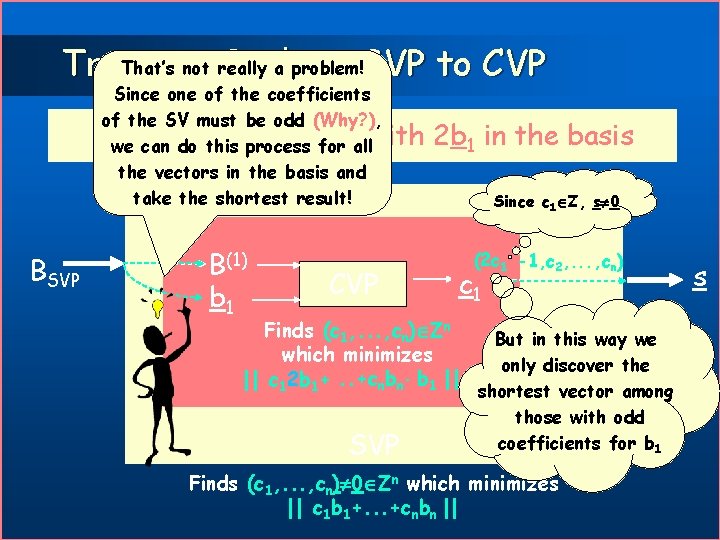

Trying to Reduce SVP to CVP

The trick: replace $b_1$ with $2b_1$ in the basis, i.e. call the CVP oracle on $B^{(1)}$ with $y = b_1$. It finds $(c_1, \dots, c_n) \in \mathbb{Z}^n$ which minimizes $\|c_1 \cdot 2b_1 + c_2 b_2 + \dots + c_n b_n - b_1\|$, which gives the candidate $s = (2c_1 - 1) b_1 + c_2 b_2 + \dots + c_n b_n$. Since $c_1 \in \mathbb{Z}$, $2c_1 - 1 \neq 0$ and therefore $s \neq 0$.

But in this way we only discover the shortest vector among those with odd coefficients for $b_1$. That's not really a problem! Since one of the coefficients of the shortest vector must be odd (Why? If all were even, half of it would be a shorter non-zero lattice vector), we can do this process for all the vectors in the basis and take the shortest result!

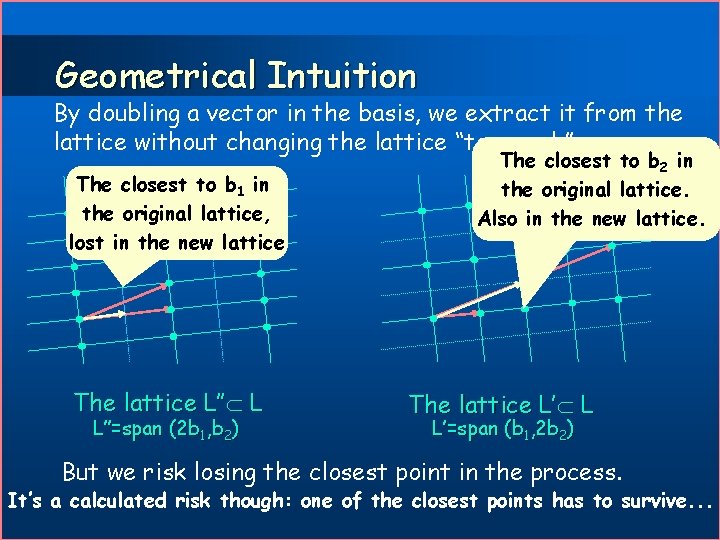

Geometrical Intuition. By doubling a vector in the basis, we extract it from the lattice without changing the lattice "too much". But we risk losing the closest point in the process. It's a calculated risk though: one of the closest points has to survive...

- The lattice $L'' \subset L$, $L'' = \mathrm{span}(2b_1, b_2)$: the closest to $b_1$ in the original lattice is lost in the new lattice.
- The lattice $L' \subset L$, $L' = \mathrm{span}(b_1, 2b_2)$: the closest to $b_2$ in the original lattice is also in the new lattice.

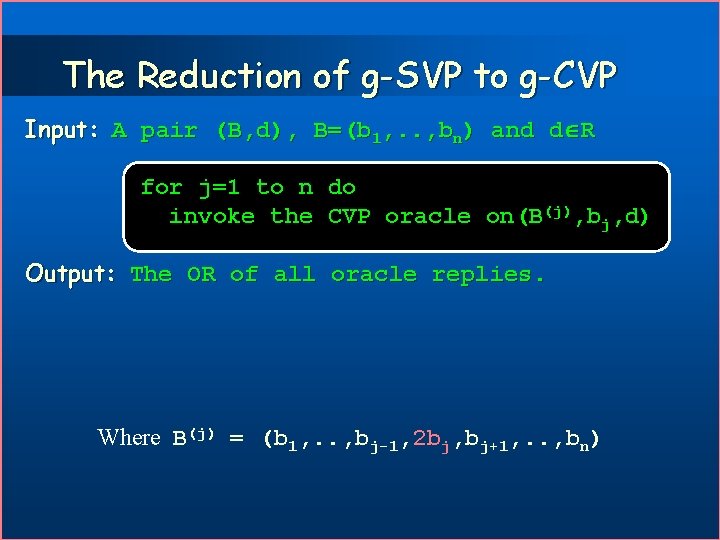

The Reduction of g-SVP to g-CVP

Input: A pair $(B, d)$, $B = (b_1, \dots, b_n)$ and $d \in \mathbb{R}$.

for $j = 1$ to $n$ do: invoke the CVP oracle on $(B^{(j)}, b_j, d)$, where $B^{(j)} = (b_1, \dots, b_{j-1}, 2b_j, b_{j+1}, \dots, b_n)$.

Output: The OR of all oracle replies.
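The doubling trick can be sketched in a few lines. The version below is the search variant (it returns a short vector instead of the OR of yes/no replies), and the CVP "oracle" is a hypothetical brute-force stand-in that only tries coefficients in a bounded box, so this is an illustration on toy inputs, not an efficient reduction.

```python
from itertools import product

def cvp_bruteforce(basis, target, bound=4):
    """Toy CVP oracle (assumption: coefficients in [-bound, bound] suffice).
    Returns (squared_distance, lattice_point - target)."""
    dim = len(target)
    best = None
    for coeffs in product(range(-bound, bound + 1), repeat=len(basis)):
        point = [sum(c * b[k] for c, b in zip(coeffs, basis)) for k in range(dim)]
        diff = [p - t for p, t in zip(point, target)]
        dist2 = sum(d * d for d in diff)
        if best is None or dist2 < best[0]:
            best = (dist2, diff)
    return best

def svp_via_cvp(basis, bound=4):
    """For each j, double b_j, ask for the point of L(B^(j)) closest to b_j,
    and keep the shortest difference found."""
    best = None
    for j, bj in enumerate(basis):
        doubled = [[2 * x for x in b] if i == j else list(b)
                   for i, b in enumerate(basis)]
        cand = cvp_bruteforce(doubled, bj, bound)
        if best is None or cand[0] < best[0]:
            best = cand
    return best

# Toy basis chosen for illustration: (2, 0) and (1, 2).
sq_len, vec = svp_via_cvp([[2, 0], [1, 2]])
```

Each difference is a non-zero vector of the original lattice, because $b_j$ itself is not in the lattice spanned by $B^{(j)}$.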

Hardness of SVP & applications

- Finding and even approximating the shortest vector is hard.
- Next we will see how this fact can be exploited for cryptography.
- We start by explaining the general framework: a well-known cryptographic method called a public-key cryptosystem.

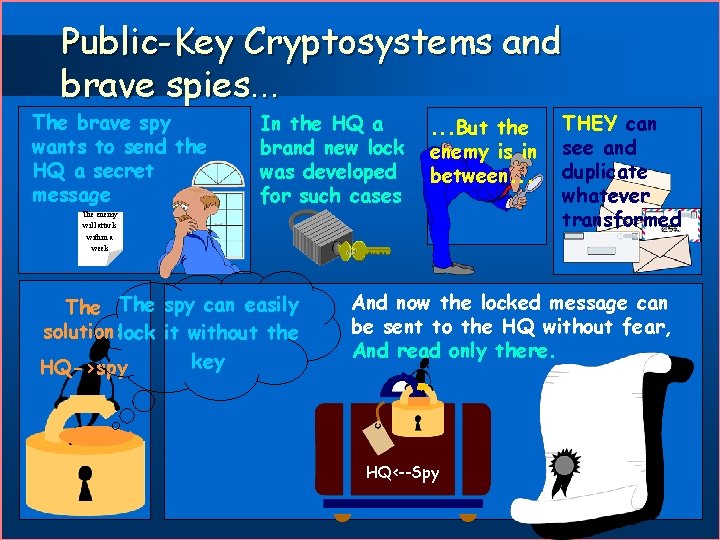

Public-Key Cryptosystems and brave spies...

The brave spy wants to send the HQ a secret message ("The enemy will attack within a week"). But the enemy is in between... THEY can see and duplicate whatever is transferred.

Solution: In the HQ a brand new lock was developed for such cases (HQ → spy). The spy can easily lock it without the key. And now the locked message can be sent to the HQ without fear, and read only there (HQ ← spy).

Public-Key Cryptosystem (76)

Requirements: Two poly-time computable functions Encr and Decr, s.t.:

1. $\forall x$: Decr(Encr(x)) = x
2. Given Encr(x) only, it is hard to find x.

Usage: Make Encr public so anyone can send you messages, keep Decr private.

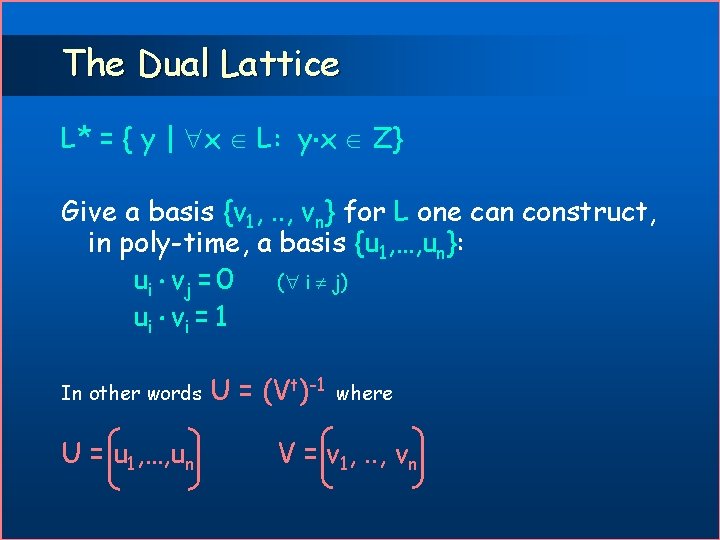

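The Dual Lattice. $L^* = \{ y \mid \forall x \in L: y \cdot x \in \mathbb{Z} \}$

Given a basis $\{v_1, \dots, v_n\}$ for $L$ one can construct, in poly-time, a basis $\{u_1, \dots, u_n\}$ for $L^*$: $u_i \cdot v_j = 0$ ($i \neq j$), $u_i \cdot v_i = 1$.

In other words $U = (V^t)^{-1}$, where $V = [v_1, \dots, v_n]$ and $U = [u_1, \dots, u_n]$.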
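The construction $U = (V^t)^{-1}$ can be checked on a small example. The sketch below stores basis vectors as rows (a convention chosen here; the formula is the same for rows or columns) and uses exact rational arithmetic with a hand-written Gauss-Jordan inverse; the helper names are hypothetical.

```python
from fractions import Fraction

def invert(matrix):
    """Gauss-Jordan inverse over the rationals (assumes the matrix is invertible)."""
    n = len(matrix)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [x / p for x in aug[col]]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]

def dual_basis(basis):
    """Rows u_i of the result satisfy u_i . v_j = 1 if i == j else 0."""
    transposed = [list(col) for col in zip(*basis)]
    return invert(transposed)

# Toy basis chosen for illustration.
V = [[2, 0], [1, 2]]
U = dual_basis(V)
```

For this basis the dual vectors are $(1/2, -1/4)$ and $(0, 1/2)$; their inner products with $v_1, v_2$ form the identity matrix.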

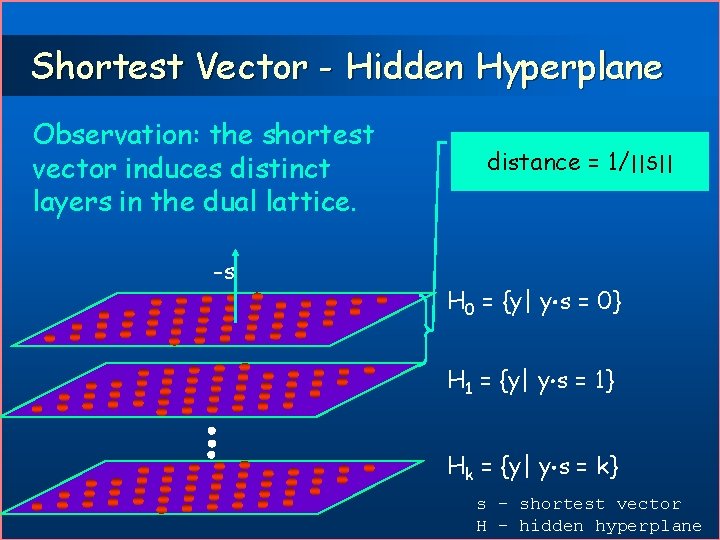

Shortest Vector - Hidden Hyperplane. Observation: the shortest vector induces distinct layers in the dual lattice.

$H_0 = \{y \mid y \cdot s = 0\}$, $H_1 = \{y \mid y \cdot s = 1\}$, ..., $H_k = \{y \mid y \cdot s = k\}$; the distance between adjacent layers is $1/\|s\|$.

s - shortest vector; H - hidden hyperplane

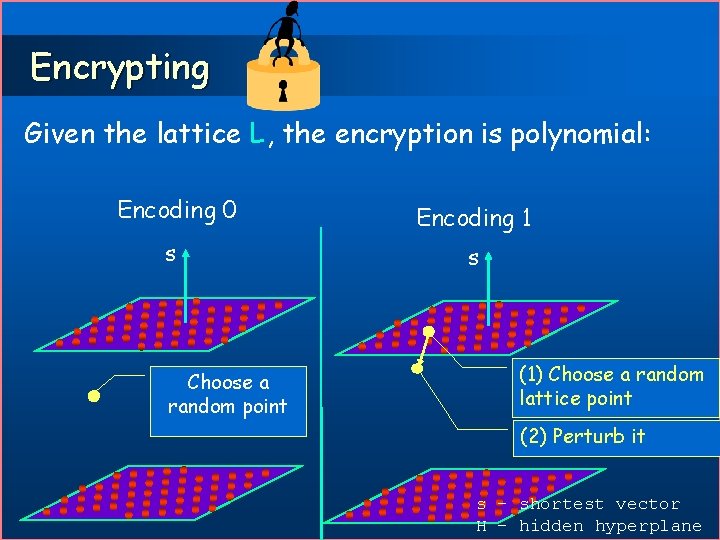

Encrypting. Given the lattice L, the encryption is polynomial:

- Encoding 0: choose a random point.
- Encoding 1: (1) choose a random lattice point, (2) perturb it.

s - shortest vector; H - hidden hyperplane

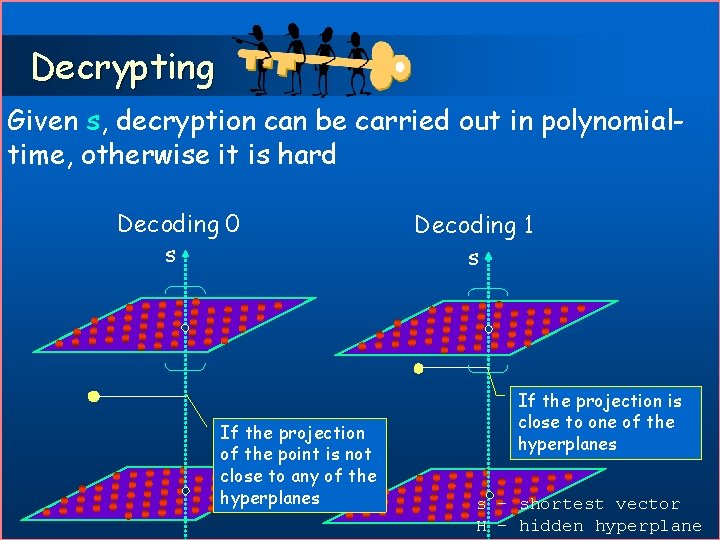

Decrypting. Given s, decryption can be carried out in polynomial time; otherwise it is hard.

- Decoding 0: if the projection of the point is not close to any of the hyperplanes.
- Decoding 1: if the projection is close to one of the hyperplanes.

s - shortest vector; H - hidden hyperplane

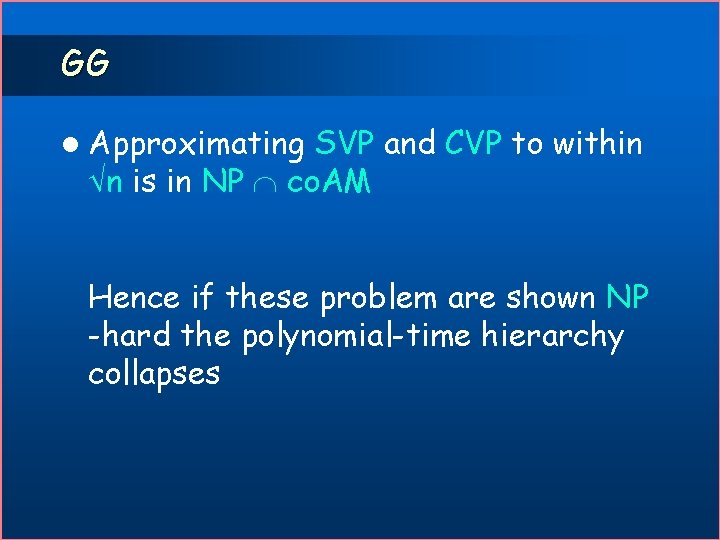

GG

- Approximating SVP and CVP to within $\sqrt{n}$ is in NP ∩ coAM.
- Hence if these problems are shown NP-hard, the polynomial-time hierarchy collapses.

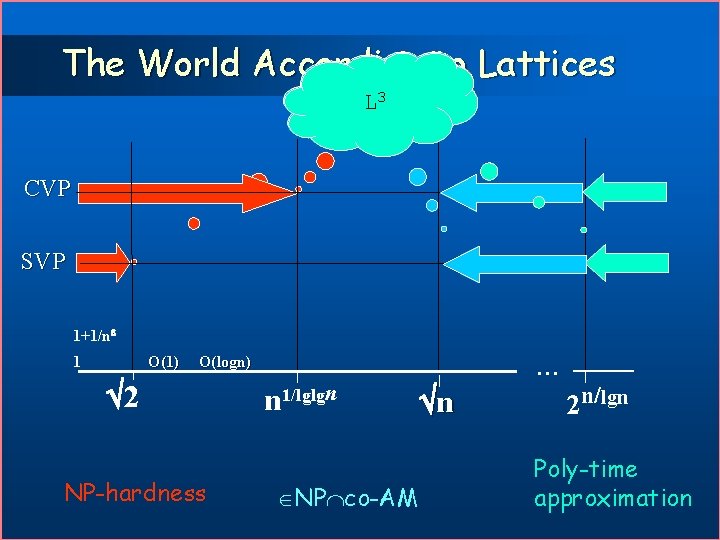

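The World According to Lattices. [Diagram: CVP and SVP on a scale of approximation factors labeled $1$, $1+1/n$, $O(1)$, $O(\log n)$, $2$, $n^{1/\lg\lg n}$, $\sqrt{n}$, $2^{n/\lg n}$, with regions marked NP-hardness, NP ∩ co-AM and poly-time approximation, and the results of Ajtai-Micciancio, DKRS, GG and L³ placed on it.]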

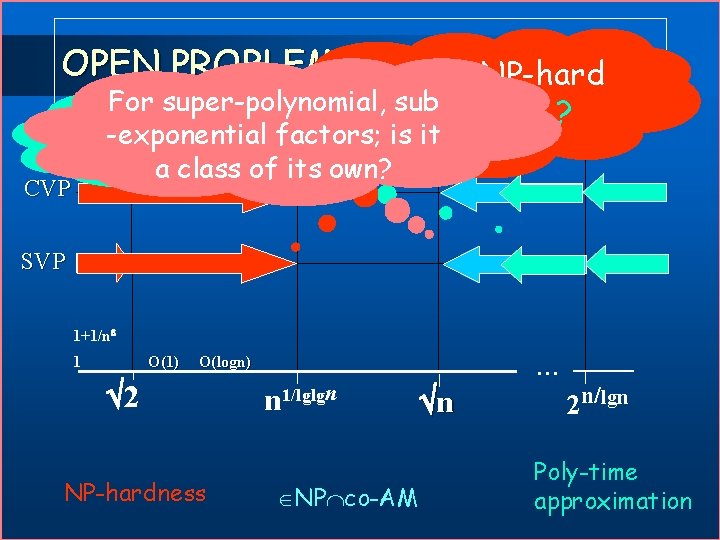

OPEN PROBLEMS

- Is g-SVP NP-hard to within $\sqrt{n}$?
- Can LLL be improved?
- For super-polynomial, sub-exponential factors: is it a class of its own?

[Diagram: the CVP/SVP scale of approximation factors from "The World According to Lattices".]

Approximating SVP in Poly-Time The LLL Algorithm

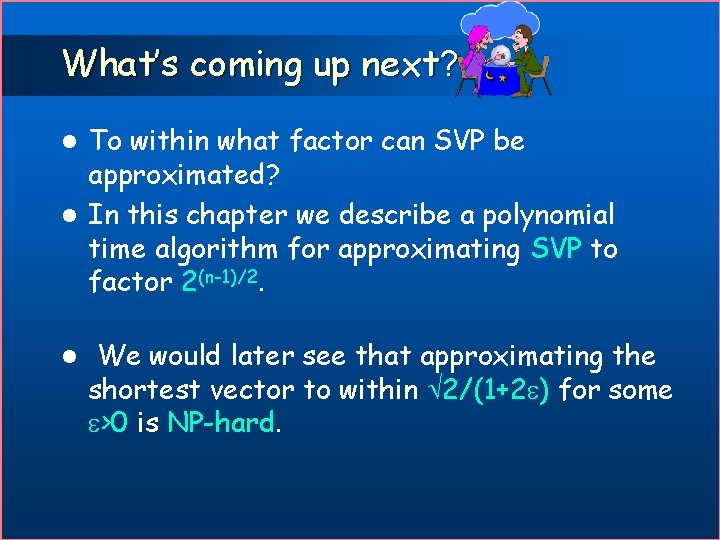

What's coming up next? To within what factor can SVP be approximated?

- In this chapter we describe a polynomial-time algorithm for approximating SVP to within a factor of $2^{(n-1)/2}$.
- We will later see that approximating the shortest vector to within $\sqrt{2/(1+2\varepsilon)}$ for some $\varepsilon > 0$ is NP-hard.

The Fundamental Insight(? ) Assume an orthogonal basis for a lattice. The shortest vector in this lattice is

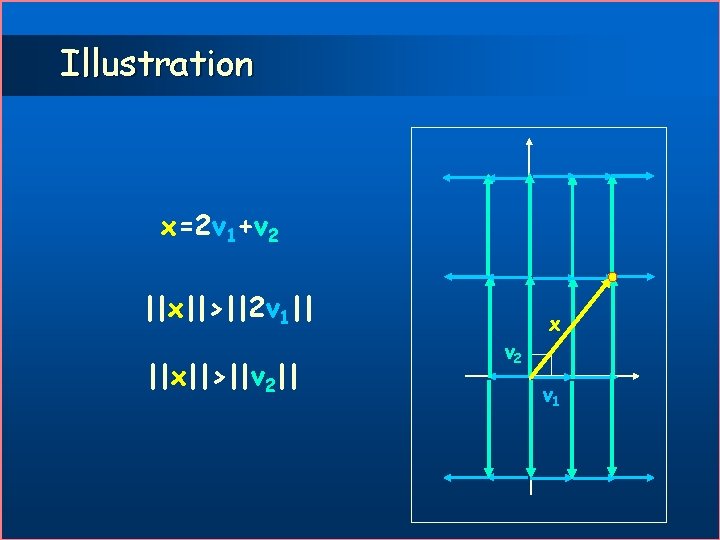

Illustration: $x = 2v_1 + v_2$, so $\|x\| > \|2v_1\|$ and $\|x\| > \|v_2\|$.

The Fundamental Insight(!) Assume an orthogonal basis for a lattice. The shortest vector in this lattice is the shortest basis vector

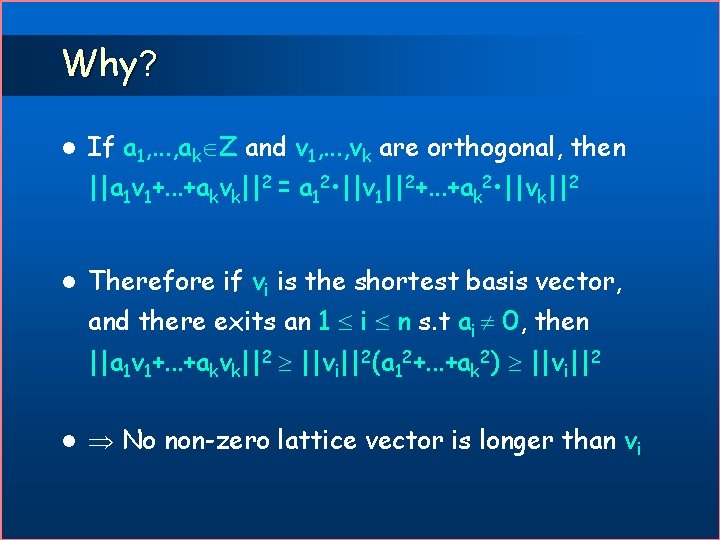

Why?

- If $a_1, \dots, a_k \in \mathbb{Z}$ and $v_1, \dots, v_k$ are orthogonal, then $\|a_1 v_1 + \dots + a_k v_k\|^2 = a_1^2 \|v_1\|^2 + \dots + a_k^2 \|v_k\|^2$.
- Therefore if $v_i$ is the shortest basis vector, and there exists $1 \le j \le k$ s.t. $a_j \neq 0$, then $\|a_1 v_1 + \dots + a_k v_k\|^2 \ge \|v_i\|^2 (a_1^2 + \dots + a_k^2) \ge \|v_i\|^2$.
- No non-zero lattice vector is shorter than $v_i$.

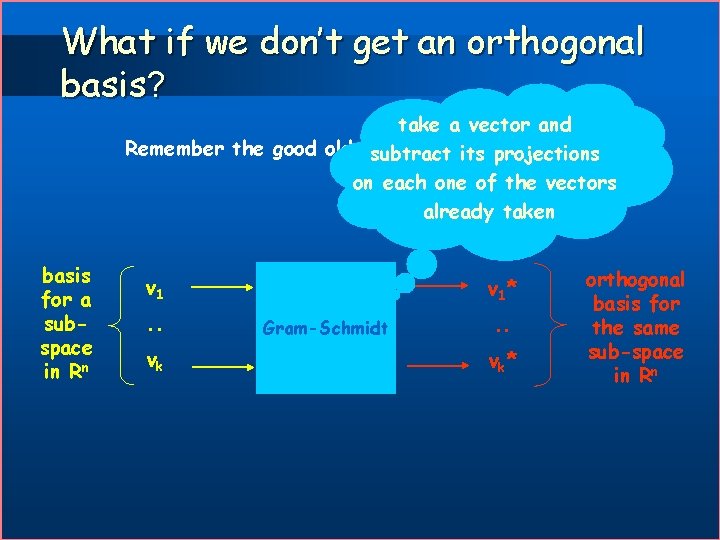

What if we don't get an orthogonal basis? Remember the good old Gram-Schmidt procedure: take a vector and subtract its projections on each one of the vectors already taken.

$v_1, \dots, v_k$ (basis for a subspace in $\mathbb{R}^n$) → Gram-Schmidt → $v_1^*, \dots, v_k^*$ (orthogonal basis for the same subspace in $\mathbb{R}^n$)

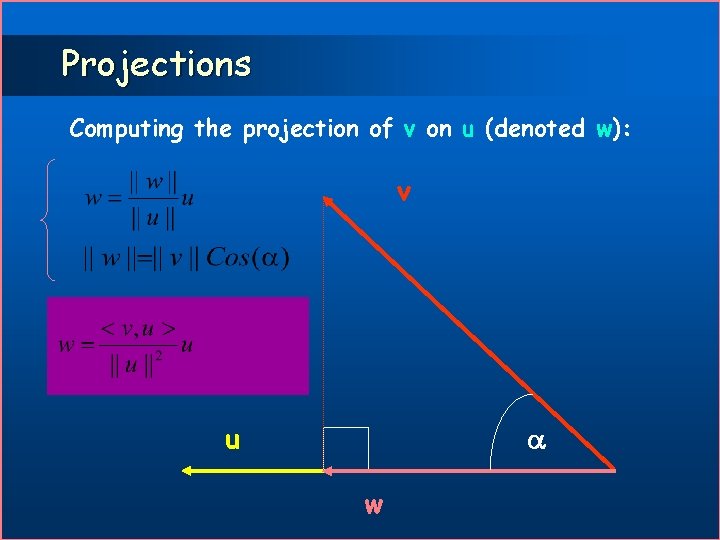

Projections Computing the projection of v on u (denoted w): v u w

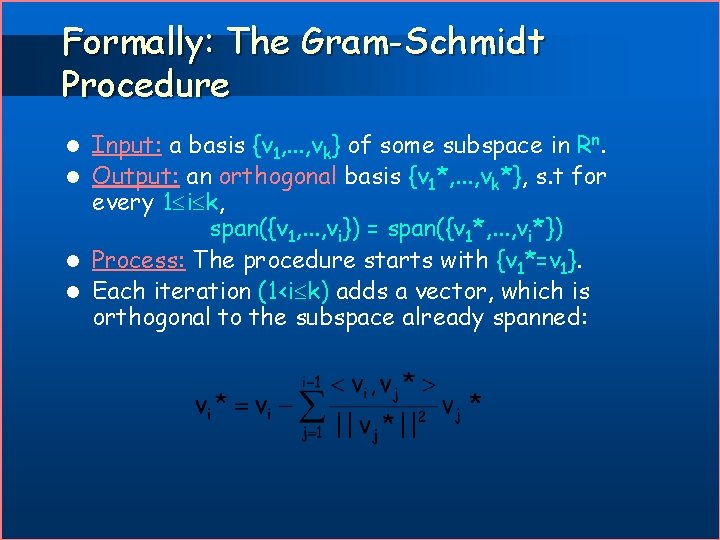

Formally: The Gram-Schmidt Procedure

- Input: a basis $\{v_1, \dots, v_k\}$ of some subspace in $\mathbb{R}^n$.
- Output: an orthogonal basis $\{v_1^*, \dots, v_k^*\}$, s.t. for every $1 \le i \le k$, $\mathrm{span}(\{v_1, \dots, v_i\}) = \mathrm{span}(\{v_1^*, \dots, v_i^*\})$.
- Process: The procedure starts with $\{v_1^* = v_1\}$. Each iteration ($1 < i \le k$) adds a vector, which is orthogonal to the subspace already spanned:

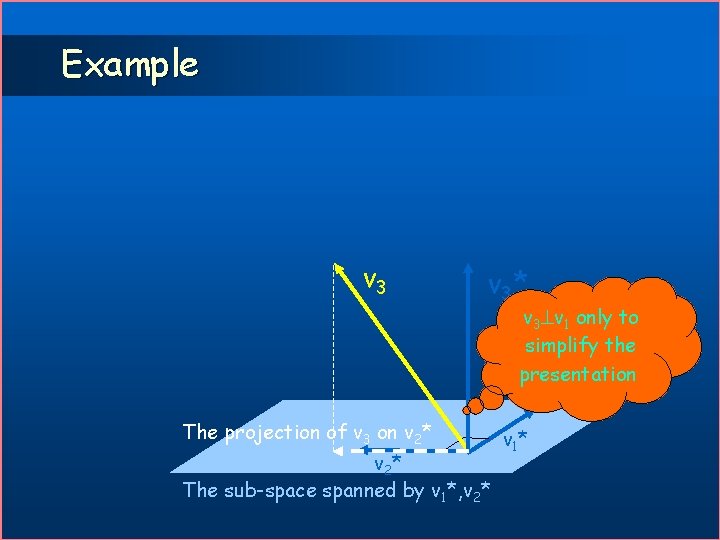
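A minimal sketch of the procedure in exact rational arithmetic, assuming the input vectors are linearly independent. It also returns the projection coefficients $\mu_{ij} = \langle v_i, v_j^* \rangle / \langle v_j^*, v_j^* \rangle$, which are used again in the definition of a reduced basis; the function names are illustrative.

```python
from fractions import Fraction

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(basis):
    """Return (ortho, mu): ortho[i] is v_i*, and mu[i][j] (for j < i) is the
    coefficient of the projection of v_i on v_j*."""
    ortho, mu = [], []
    for v in basis:
        coeffs = [Fraction(dot(v, w)) / dot(w, w) for w in ortho]
        w_new = [Fraction(x) for x in v]
        for c, w in zip(coeffs, ortho):
            # subtract the projection of v on each vector already taken
            w_new = [a - c * b for a, b in zip(w_new, w)]
        ortho.append(w_new)
        mu.append(coeffs)
    return ortho, mu

# Toy basis chosen for illustration.
ortho, mu = gram_schmidt([[3, 1], [2, 2]])
```

Here $v_1^* = (3, 1)$, $\mu_{21} = 4/5$ and $v_2^* = (-2/5, 6/5)$, which is orthogonal to $v_1^*$ but is not a lattice vector.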

Example. [Figure labels: $v_3$, $v_3^*$, $v_1^*$, the projection of $v_3$ on $v_2^*$, and the subspace spanned by $v_1^*, v_2^*$; one detail is drawn that way only to simplify the presentation.]

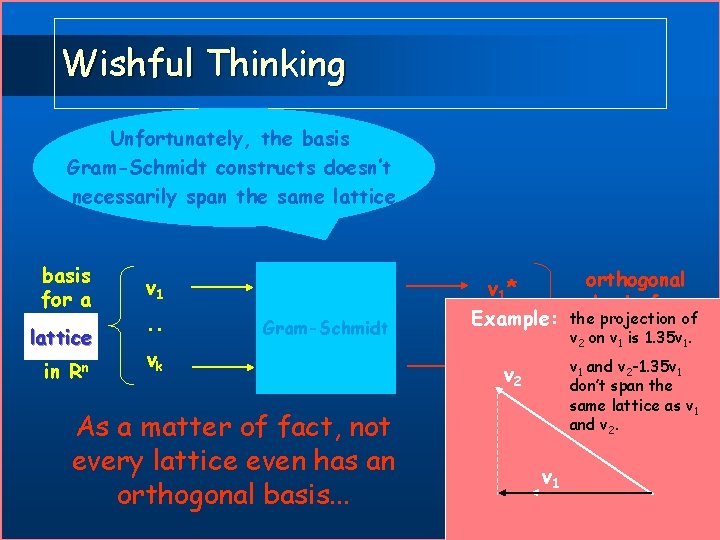

Wishful Thinking. Unfortunately, the basis Gram-Schmidt constructs doesn't necessarily span the same lattice: given a basis $v_1, \dots, v_k$ for a lattice in $\mathbb{R}^n$, it produces an orthogonal basis $v_1^*, \dots, v_k^*$ for the same subspace, not for the same lattice.

Example: the projection of $v_2$ on $v_1$ is $1.35 v_1$. $v_1$ and $v_2 - 1.35 v_1$ don't span the same lattice as $v_1$ and $v_2$.

As a matter of fact, not every lattice even has an orthogonal basis...

Nevertheless... Invoking Gram-Schmidt on a lattice basis produces a lower bound on the length of the shortest vector in this lattice.

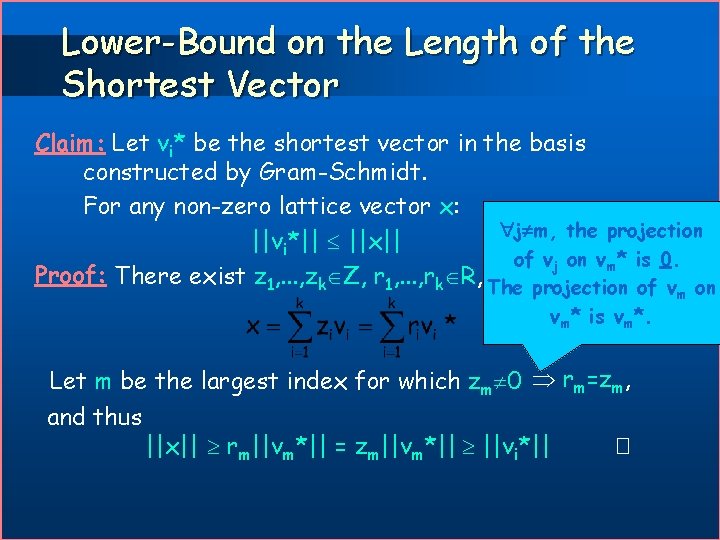

Lower-Bound on the Length of the Shortest Vector

Claim: Let $v_i^*$ be the shortest vector in the basis constructed by Gram-Schmidt. For any non-zero lattice vector $x$: $\|v_i^*\| \le \|x\|$.

Proof: There exist $z_1, \dots, z_k \in \mathbb{Z}$ and $r_1, \dots, r_k \in \mathbb{R}$ such that $x = \sum_j z_j v_j = \sum_j r_j v_j^*$. Let $m$ be the largest index for which $z_m \neq 0$. For $j < m$, the projection of $v_j$ on $v_m^*$ is 0, and the projection of $v_m$ on $v_m^*$ is $v_m^*$. Hence $r_m = z_m$, and thus $\|x\| \ge |r_m| \, \|v_m^*\| = |z_m| \, \|v_m^*\| \ge \|v_i^*\|$. ∎

Compromise

- Still, we'll have to settle for less than an orthogonal basis:
- We'll construct a reduced basis.
- Reduced bases are composed of "almost" orthogonal and relatively short vectors.
- They will therefore suffice for our purpose.

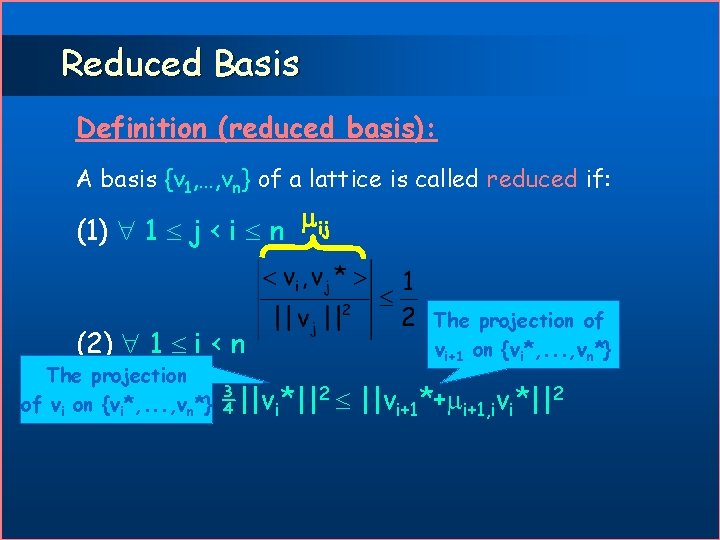

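Reduced Basis. Definition (reduced basis): A basis $\{v_1, \dots, v_n\}$ of a lattice is called reduced if, with $\mu_{ij} = \langle v_i, v_j^* \rangle / \langle v_j^*, v_j^* \rangle$:

(1) $\forall\, 1 \le j < i \le n$: $|\mu_{ij}| \le \tfrac12$

(2) $\forall\, 1 \le i < n$: $\tfrac34 \|v_i^*\|^2 \le \|v_{i+1}^* + \mu_{i+1,i} v_i^*\|^2$

In (2), $v_i^*$ is the projection of $v_i$ on $\mathrm{span}\{v_i^*, \dots, v_n^*\}$, and $v_{i+1}^* + \mu_{i+1,i} v_i^*$ is the projection of $v_{i+1}$ on $\mathrm{span}\{v_i^*, \dots, v_n^*\}$.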

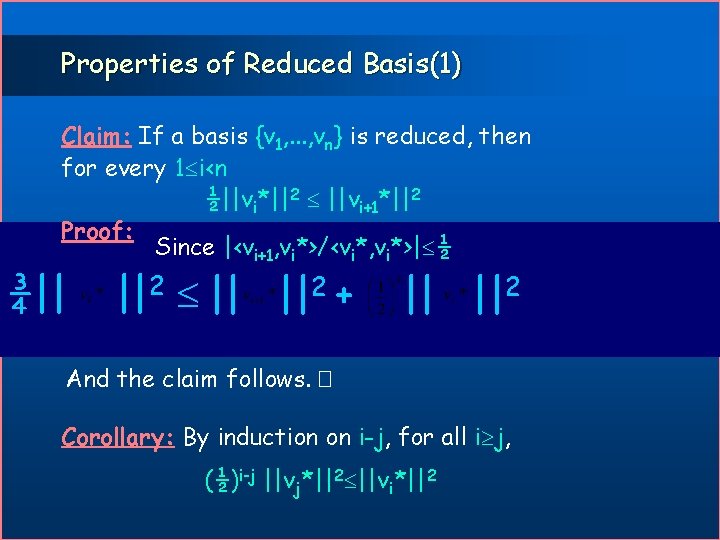

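Properties of Reduced Basis (1)

Claim: If a basis $\{v_1, \dots, v_n\}$ is reduced, then for every $1 \le i < n$: $\tfrac12 \|v_i^*\|^2 \le \|v_{i+1}^*\|^2$.

Proof:

- $\tfrac34 \|v_i^*\|^2 \le \|v_{i+1}^* + \mu_{i+1,i} v_i^*\|^2$, since $\{v_1, \dots, v_n\}$ is reduced.
- $\|v_{i+1}^* + \mu_{i+1,i} v_i^*\|^2 = \|v_{i+1}^*\|^2 + \mu_{i+1,i}^2 \|v_i^*\|^2$, since $v_i^*$ and $v_{i+1}^*$ are orthogonal.
- $\mu_{i+1,i}^2 \|v_i^*\|^2 \le \tfrac14 \|v_i^*\|^2$, since $|\langle v_{i+1}, v_i^* \rangle / \langle v_i^*, v_i^* \rangle| \le \tfrac12$.

And the claim follows. ∎

Corollary: By induction on $i - j$, for all $i \ge j$: $(\tfrac12)^{i-j} \|v_j^*\|^2 \le \|v_i^*\|^2$.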

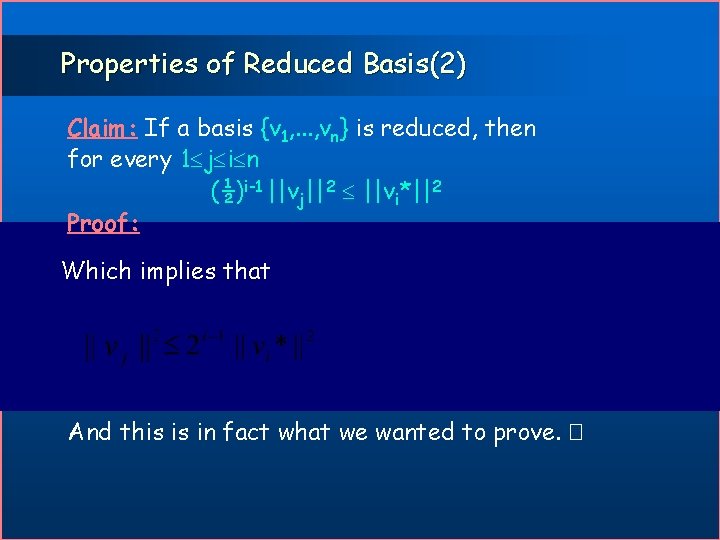

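Properties of Reduced Basis (2)

Claim: If a basis $\{v_1, \dots, v_n\}$ is reduced, then for every $1 \le j \le i \le n$: $(\tfrac12)^{i-1} \|v_j\|^2 \le \|v_i^*\|^2$.

Proof:

- Since $\{v_1^*, \dots, v_n^*\}$ is an orthogonal basis: $\|v_j\|^2 = \|v_j^*\|^2 + \sum_{k=1}^{j-1} \mu_{jk}^2 \|v_k^*\|^2$.
- Since $|\langle v_j, v_k^* \rangle / \langle v_k^*, v_k^* \rangle| \le \tfrac12$ and, for $1 \le k \le j-1$, $(\tfrac12)^{j-k} \|v_k^*\|^2 \le \|v_j^*\|^2$: $\|v_j\|^2 \le \|v_j^*\|^2 \left(1 + \tfrac14 \sum_{k=1}^{j-1} 2^{j-k}\right)$.
- Geometric sum and some arithmetic: $1 + \tfrac14 (2^j - 2) = 2^{j-2} + \tfrac12 \le 2^{j-1}$, which implies that $\|v_j\|^2 \le 2^{j-1} \|v_j^*\|^2$.
- By the previous corollary, $\|v_j^*\|^2 \le 2^{i-j} \|v_i^*\|^2$, so $\|v_j\|^2 \le 2^{i-1} \|v_i^*\|^2$.

And this is in fact what we wanted to prove. ∎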

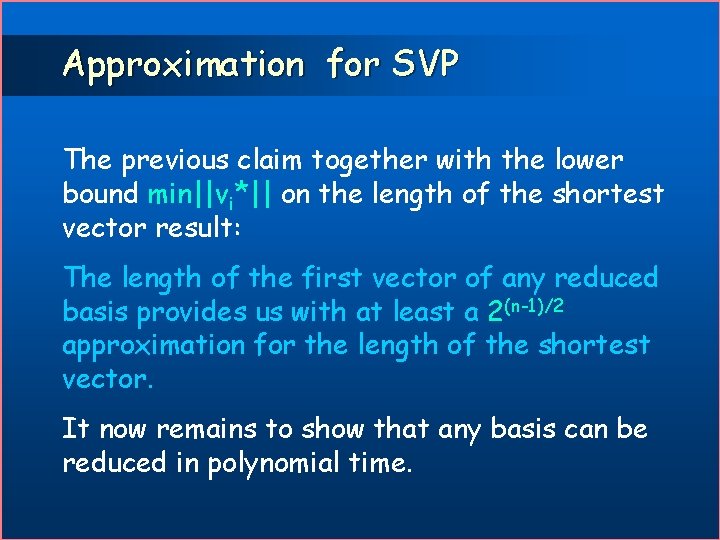

Approximation for SVP. The previous claim, together with the lower bound $\min_i \|v_i^*\|$ on the length of the shortest vector, gives the result: the length of the first vector of any reduced basis is at most $2^{(n-1)/2}$ times the length of the shortest vector. It now remains to show that any basis can be reduced in polynomial time.
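Spelled out, the factor follows by taking $j = 1$ in the previous claim and then using the Gram-Schmidt lower bound. The chain below is a short worked version, where $x$ denotes any non-zero lattice vector:

$$
\|v_1\|^2 \le 2^{i-1} \|v_i^*\|^2 \le 2^{n-1} \|v_i^*\|^2 \quad (1 \le i \le n)
\;\Longrightarrow\;
\|v_1\|^2 \le 2^{n-1} \min_i \|v_i^*\|^2 \le 2^{n-1} \|x\|^2 ,
$$

and taking square roots gives $\|v_1\| \le 2^{(n-1)/2} \|x\|$.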

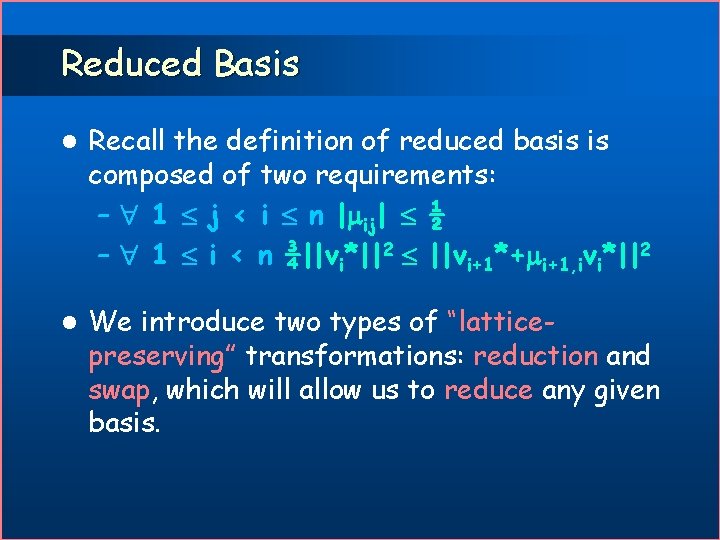

Reduced Basis

- Recall that the definition of a reduced basis is composed of two requirements:
  - $\forall\, 1 \le j < i \le n$: $|\mu_{ij}| \le \tfrac12$
  - $\forall\, 1 \le i < n$: $\tfrac34 \|v_i^*\|^2 \le \|v_{i+1}^* + \mu_{i+1,i} v_i^*\|^2$
- We introduce two types of "lattice-preserving" transformations: reduction and swap, which will allow us to reduce any given basis.

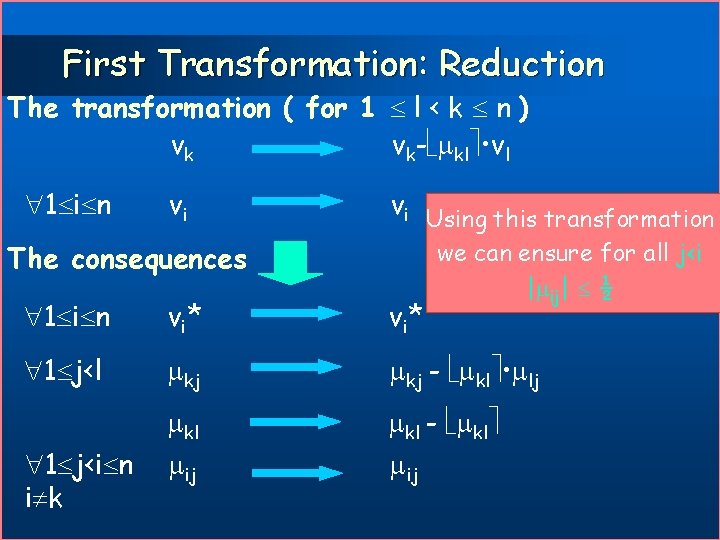

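First Transformation: Reduction

The transformation (for $1 \le l < k \le n$): $v_k \leftarrow v_k - \lceil \mu_{kl} \rfloor \cdot v_l$, and $v_i \leftarrow v_i$ for all $i \neq k$, where $\lceil \cdot \rfloor$ rounds to the nearest integer.

The consequences:

- $\forall\, 1 \le i \le n$: $v_i^* \leftarrow v_i^*$
- $\forall\, 1 \le j < l$: $\mu_{kj} \leftarrow \mu_{kj} - \lceil \mu_{kl} \rfloor \cdot \mu_{lj}$
- $\mu_{kl} \leftarrow \mu_{kl} - \lceil \mu_{kl} \rfloor$
- All other $\mu_{ij}$ ($1 \le j < i \le n$) are unchanged.

Using this transformation we can ensure that $|\mu_{ij}| \le \tfrac12$ for all $j < i$.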

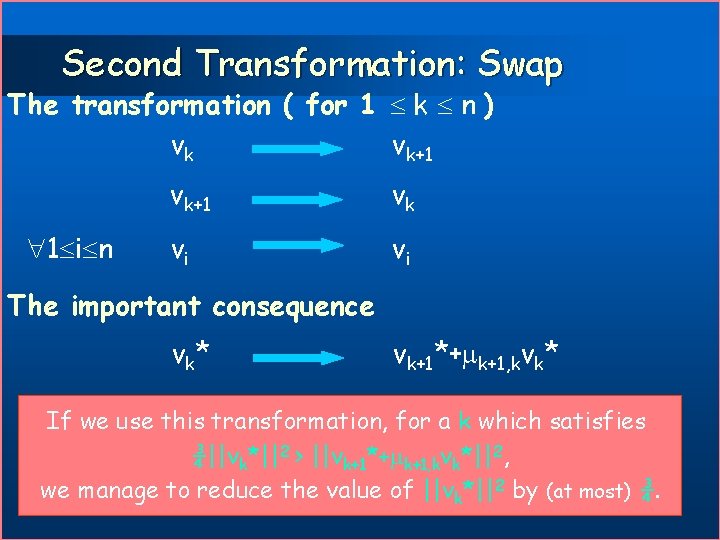

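Second Transformation: Swap

The transformation (for $1 \le k < n$): $v_k \leftarrow v_{k+1}$, $v_{k+1} \leftarrow v_k$, and $v_i \leftarrow v_i$ for all $i \neq k, k+1$.

The important consequence: $v_k^* \leftarrow v_{k+1}^* + \mu_{k+1,k} v_k^*$.

If we use this transformation for a $k$ which satisfies $\tfrac34 \|v_k^*\|^2 > \|v_{k+1}^* + \mu_{k+1,k} v_k^*\|^2$, we reduce the value of $\|v_k^*\|^2$ to less than ¾ of what it was.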

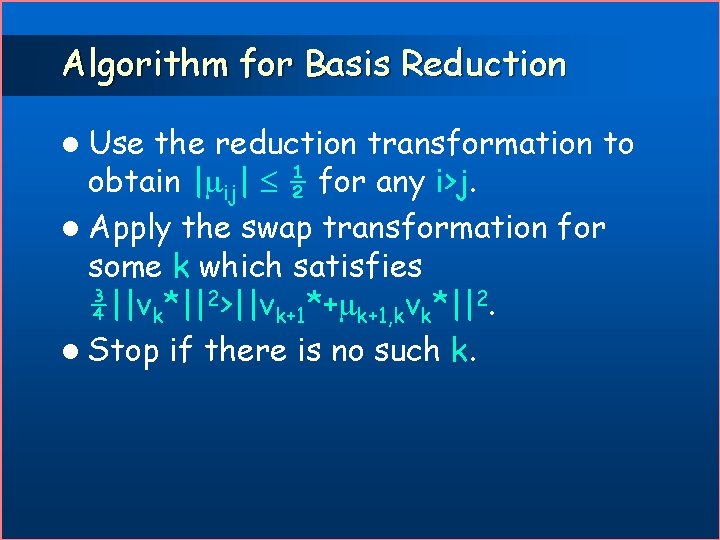

Algorithm for Basis Reduction

- Use the reduction transformation to obtain $|\mu_{ij}| \le \tfrac12$ for any $i > j$.
- Apply the swap transformation for some $k$ which satisfies $\tfrac34 \|v_k^*\|^2 > \|v_{k+1}^* + \mu_{k+1,k} v_k^*\|^2$.
- Stop if there is no such $k$.
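The three steps above can be sketched directly. This is a deliberately naive version, assuming an integer basis of linearly independent rows: it recomputes Gram-Schmidt from scratch after every change, which keeps the code close to the slides but is far slower than a real implementation. The helper names are illustrative.

```python
from fractions import Fraction

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(basis):
    """Return (ortho, mu) with mu[i][j] = <v_i, v_j*> / <v_j*, v_j*> for j < i."""
    ortho, mu = [], []
    for v in basis:
        coeffs = [Fraction(dot(v, w)) / dot(w, w) for w in ortho]
        w_new = [Fraction(x) for x in v]
        for c, w in zip(coeffs, ortho):
            w_new = [a - c * b for a, b in zip(w_new, w)]
        ortho.append(w_new)
        mu.append(coeffs)
    return ortho, mu

def lll(basis):
    basis = [list(v) for v in basis]
    n = len(basis)
    while True:
        # Reduction: make every |mu_kl| <= 1/2, working from l = k-1 down to 0.
        for k in range(1, n):
            for l in range(k - 1, -1, -1):
                _, mu = gram_schmidt(basis)
                q = round(mu[k][l])  # nearest integer to mu_kl
                if q:
                    basis[k] = [a - q * b for a, b in zip(basis[k], basis[l])]
        # Swap: look for a k violating the second requirement.
        ortho, mu = gram_schmidt(basis)
        for k in range(n - 1):
            proj = [a + mu[k + 1][k] * b for a, b in zip(ortho[k + 1], ortho[k])]
            if Fraction(3, 4) * dot(ortho[k], ortho[k]) > dot(proj, proj):
                basis[k], basis[k + 1] = basis[k + 1], basis[k]
                break
        else:
            return basis  # no such k: the basis is reduced

# Toy 2-dimensional input chosen for illustration.
reduced = lll([[201, 37], [1648, 297]])
```

On this input the sketch performs two swaps and returns the basis $(1, 32), (40, 1)$.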

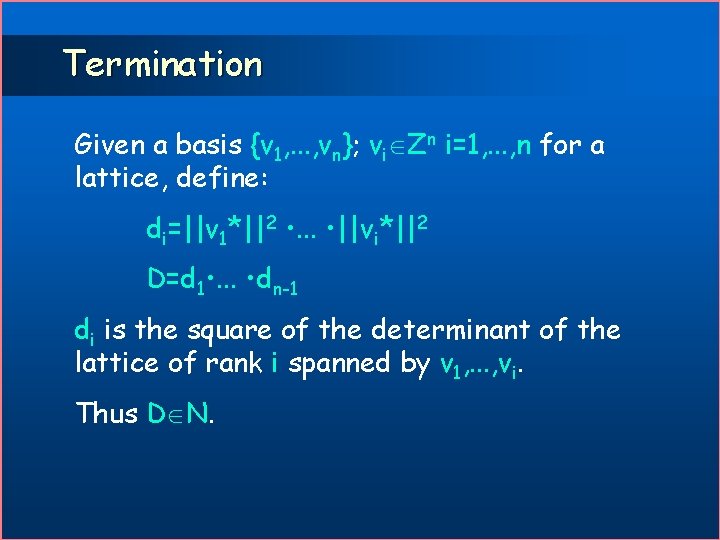

Termination. Given a basis $\{v_1, \dots, v_n\}$ for a lattice, with $v_i \in \mathbb{Z}^n$ for $i = 1, \dots, n$, define:

$d_i = \|v_1^*\|^2 \cdot \ldots \cdot \|v_i^*\|^2$ and $D = d_1 \cdot \ldots \cdot d_{n-1}$.

$d_i$ is the square of the determinant of the lattice of rank $i$ spanned by $v_1, \dots, v_i$. Thus $D \in \mathbb{N}$.

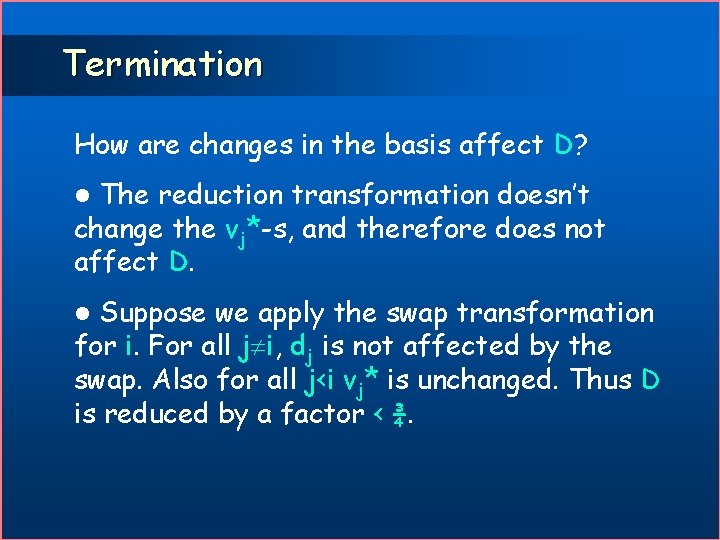

Termination. How do changes in the basis affect D?

- The reduction transformation doesn't change the $v_j^*$-s, and therefore does not affect D.
- Suppose we apply the swap transformation for $i$. For all $j \neq i$, $d_j$ is not affected by the swap. Also, for all $j < i$, $v_j^*$ is unchanged. Since $\|v_i^*\|^2$ shrinks by a factor $< ¾$, so does $d_i$. Thus D is reduced by a factor $< ¾$.

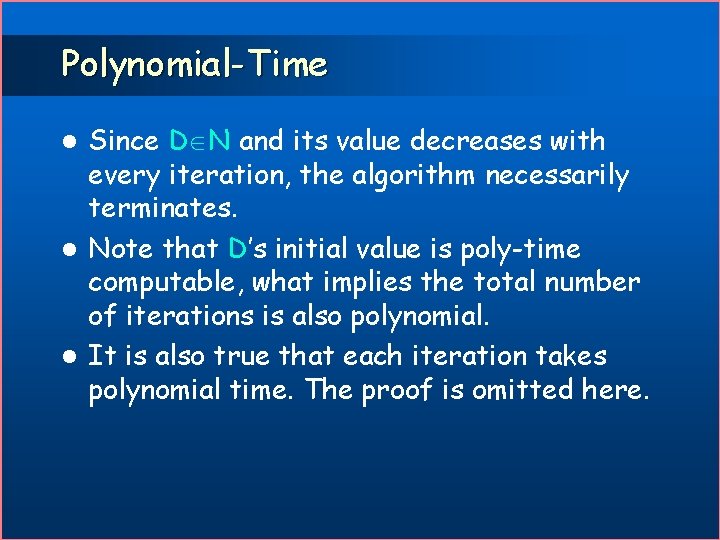

Polynomial-Time

- Since $D \in \mathbb{N}$ and its value decreases by a factor $< ¾$ with every swap, the algorithm necessarily terminates.
- Note that D's initial value is poly-time computable, which implies the total number of iterations is also polynomial.
- It is also true that each iteration takes polynomial time. The proof is omitted here.

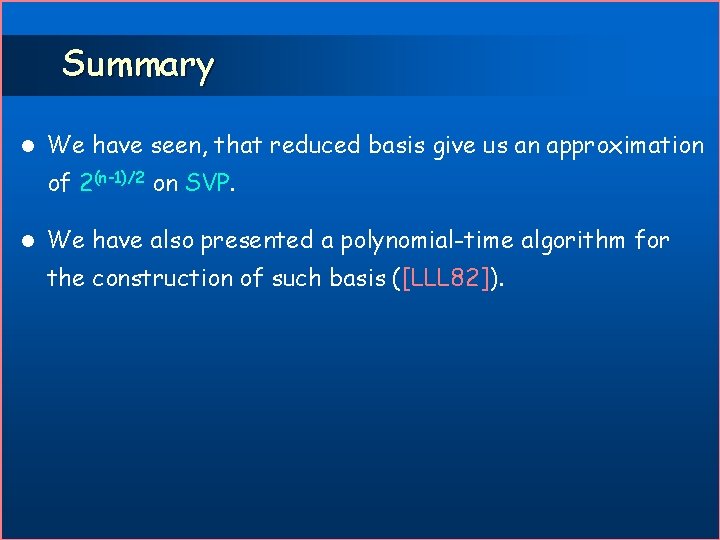

Summary

- We have seen that a reduced basis gives us a $2^{(n-1)/2}$ approximation for SVP.
- We have also presented a polynomial-time algorithm for the construction of such a basis ([LLL 82]).

![Hardness of Approx. SVP [MICC] Gap. SVPg: Input: (B, d) where B is a Hardness of Approx. SVP [MICC] Gap. SVPg: Input: (B, d) where B is a](http://slidetodoc.com/presentation_image/50a5a49f6be88339935df1f29941e7ca/image-57.jpg)

Hardness of Approx. SVP [MICC]

GapSVP$_g$: Input: $(B, d)$ where $B$ is a basis for a lattice in $\mathbb{R}^n$ and $d \in \mathbb{R}$. Yes instances: $(B, d)$ s.t. …. No instances: $(B, d)$ s.t. ….

GapCVP'$_g$: Input: $(B, y, d)$ where $B \in \mathbb{Z}^{k \times n}$, $y \in \mathbb{Z}^k$, and $d \in \mathbb{R}$. Yes instances: $(B, y, d)$ s.t. …. No instances: $(B, y, d)$ s.t. ….

Reducing CVP to SVP. We will use the fact that GapCVP'$_c$ is NP-hard for every constant $c$, and give a reduction from GapCVP'$_{\sqrt{2/\varepsilon}}$ to GapSVP$_{\sqrt{2/(1+2\varepsilon)}}$, for every $\varepsilon > 0$.

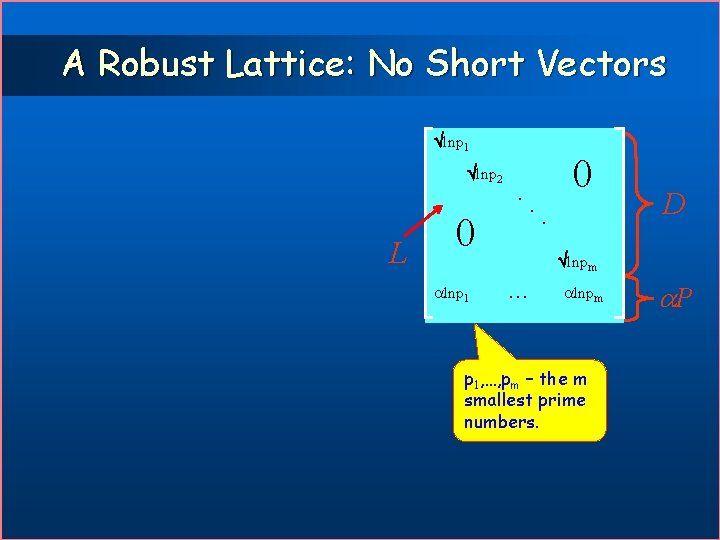

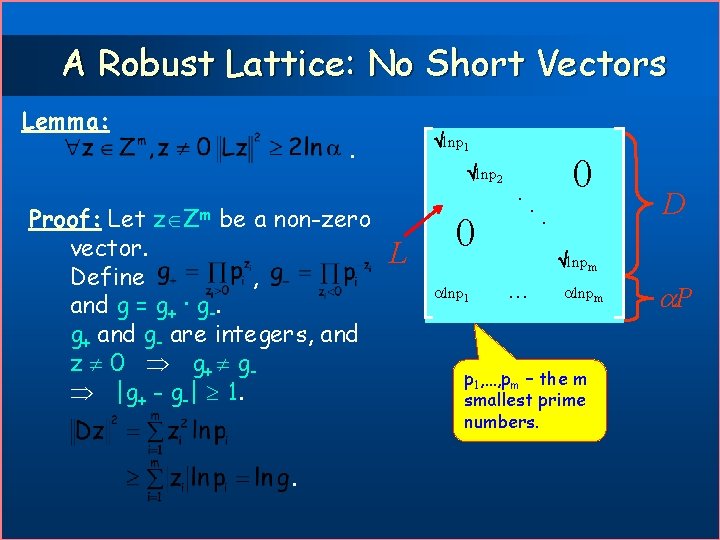

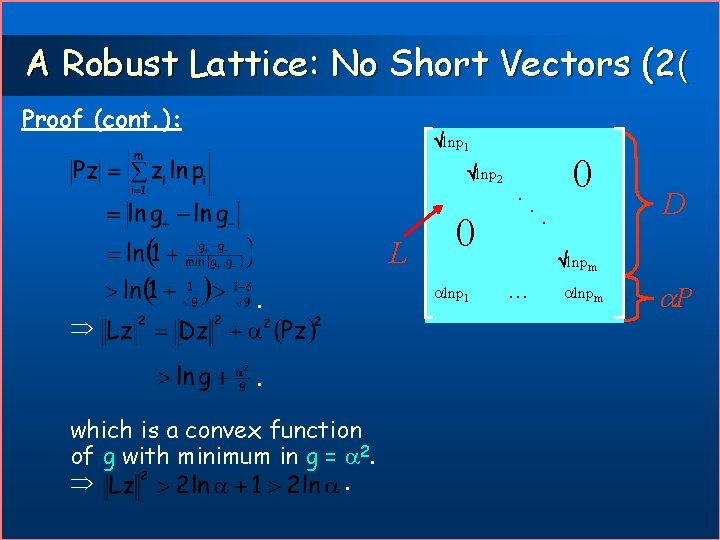

A Robust Lattice: No Short Vectors. [Matrix figure: the lattice basis $L$, with diagonal entries built from $\ln p_1, \dots, \ln p_m$ (zeros elsewhere) above a row built from $\ln p_1, \dots, \ln p_m$.] $p_1, \dots, p_m$ are the $m$ smallest prime numbers.

A Robust Lattice: No Short Vectors. Lemma: $\forall z \in \mathbb{Z}^m$, $z \neq 0$: …. Proof: Let $z \in \mathbb{Z}^m$ be a non-zero vector. Define …, and $g = g^+ \cdot g^-$. $g^+$ and $g^-$ are integers, and $z \neq 0 \Rightarrow g^+ \neq g^- \Rightarrow |g^+ - g^-| \ge 1$.

A Robust Lattice: No Short Vectors (2). Proof (cont.): … which is a convex function of $g$ with minimum in $g = 2$….

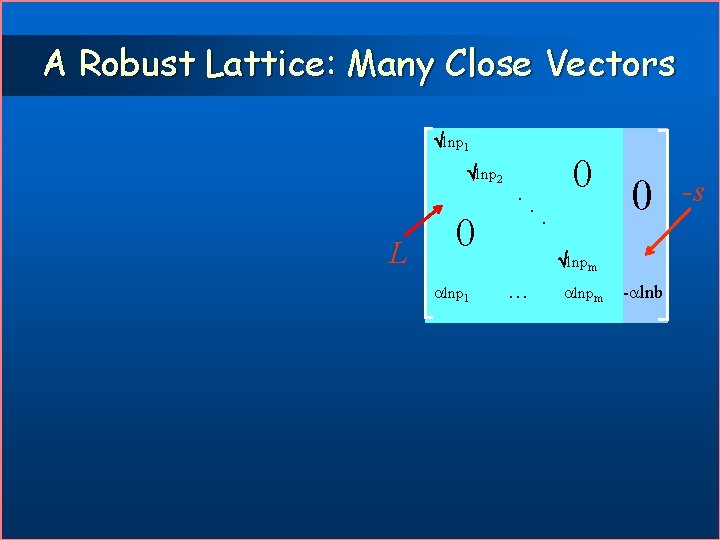

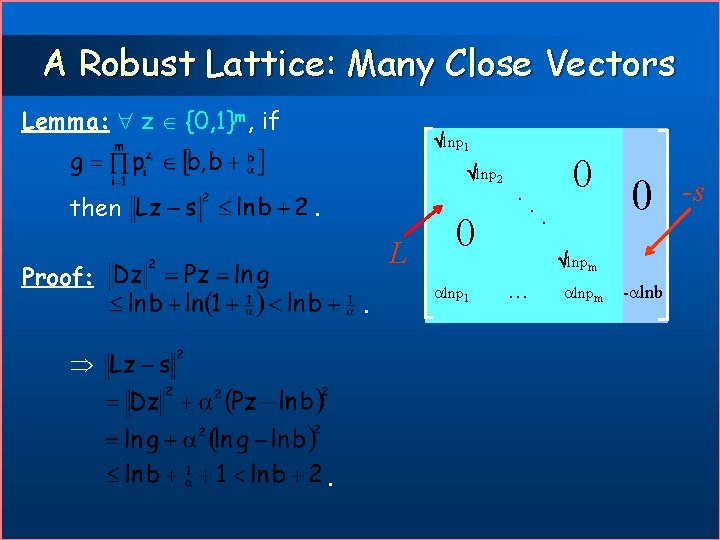

A Robust Lattice: Many Close Vectors. [Matrix figure: the lattice basis $L$ as before, together with the target vector $s$, whose entries are $0$ except for a last entry built from $\ln b$.]

A Robust Lattice: Many Close Vectors. Lemma: $\forall z \in \{0, 1\}^m$, if … then …. Proof: …

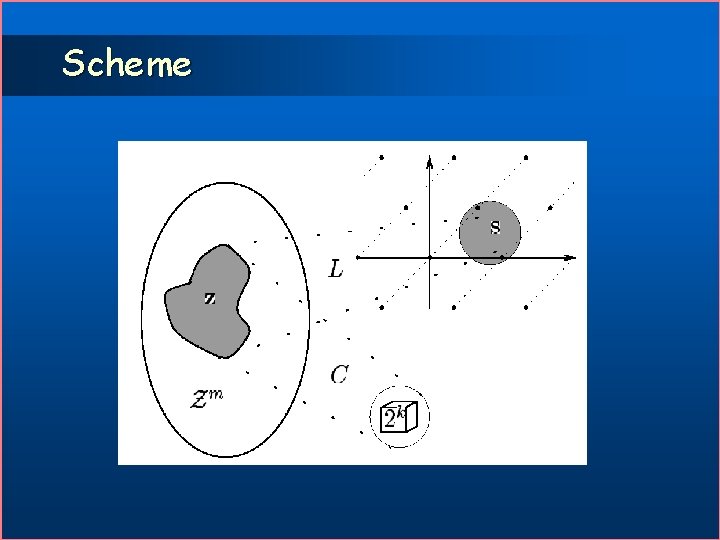

Scheme

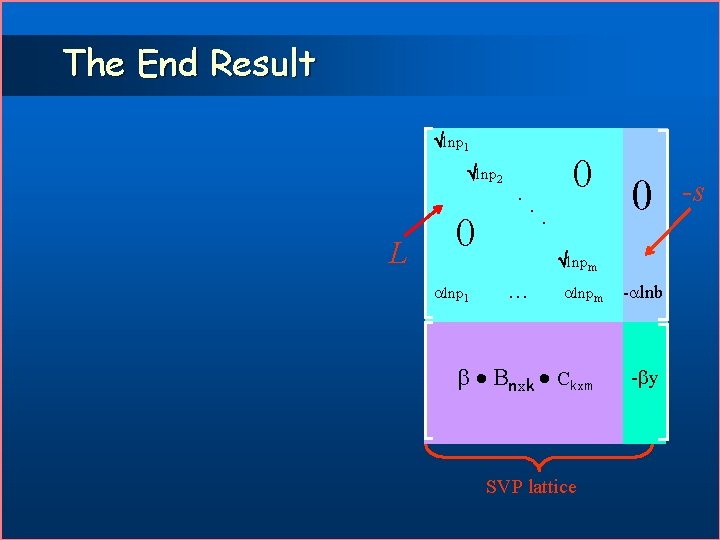

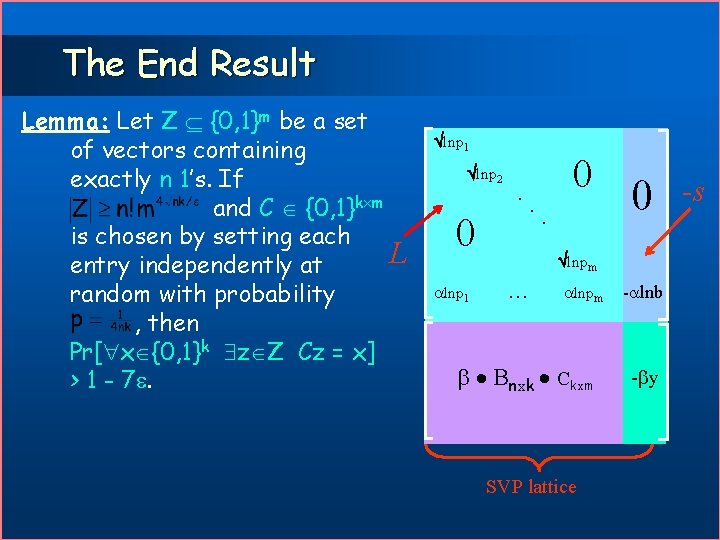

The End Result. [Matrix figure: the SVP lattice, assembled from $L$, $s$, $B_{n \times k}$, $C_{k \times m}$ and $y$.]

The End Result. Lemma: Let $Z \subseteq \{0, 1\}^m$ be a set of vectors containing exactly $n$ 1's. If … and $C \in \{0, 1\}^{k \times m}$ is chosen by setting each entry independently at random with probability …, then $\Pr[\forall x \in \{0, 1\}^k \; \exists z \in Z: Cz = x] > 1 - 7\varepsilon$.

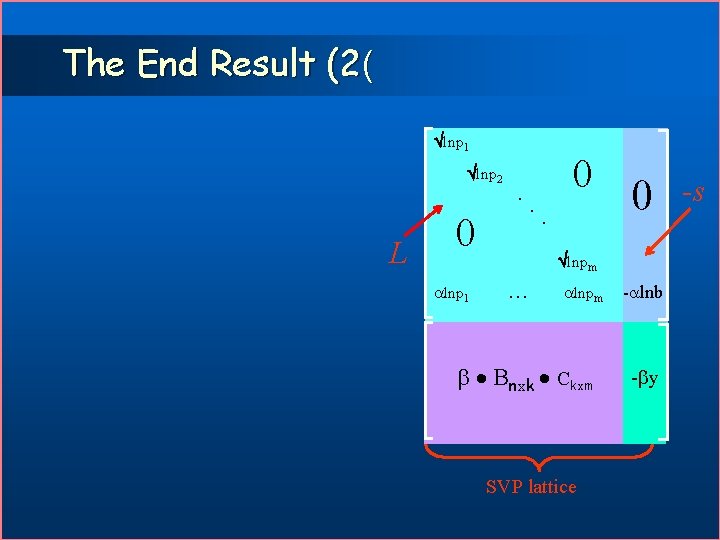

The End Result (2)

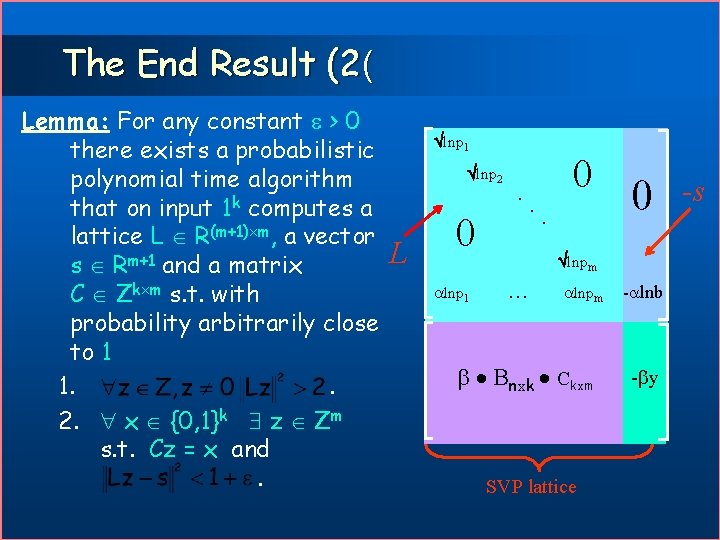

The End Result (2). Lemma: For any constant $\varepsilon > 0$ there exists a probabilistic polynomial-time algorithm that on input $1^k$ computes a lattice $L \in \mathbb{R}^{(m+1) \times m}$, a vector $s \in \mathbb{R}^{m+1}$ and a matrix $C \in \mathbb{Z}^{k \times m}$ s.t. with probability arbitrarily close to 1:

1. …
2. $\forall x \in \{0, 1\}^k \; \exists z \in \mathbb{Z}^m$ s.t. $Cz = x$ and ….

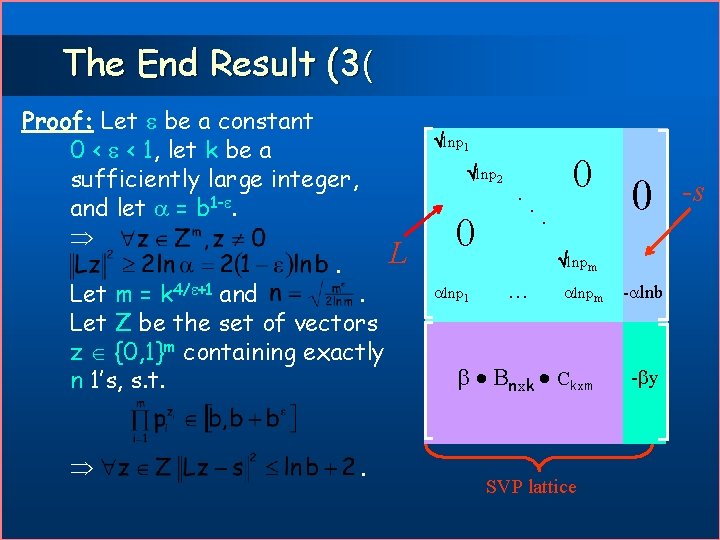

The End Result (3). Proof: Let $\varepsilon$ be a constant, $0 < \varepsilon < 1$, let $k$ be a sufficiently large integer, and let … $= b^{1-\varepsilon}$. Let $m = k^{4/\varepsilon + 1}$ and …. Let $Z$ be the set of vectors $z \in \{0, 1\}^m$ containing exactly $n$ 1's, s.t. ….

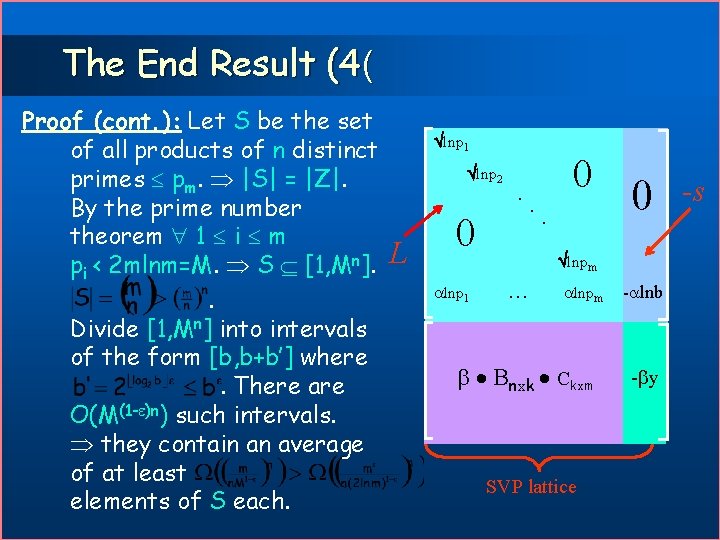

The End Result (4). Proof (cont.): Let $S$ be the set of all products of $n$ distinct primes $\le p_m$. $|S| = |Z|$. By the prime number theorem, $\forall\, 1 \le i \le m$: $p_i < 2m \ln m = M$, so $S \subseteq [1, M^n]$. Divide $[1, M^n]$ into intervals of the form $[b, b + b']$ where …. There are $O(M^{(1-\varepsilon)n})$ such intervals, so they contain an average of at least … elements of $S$ each.

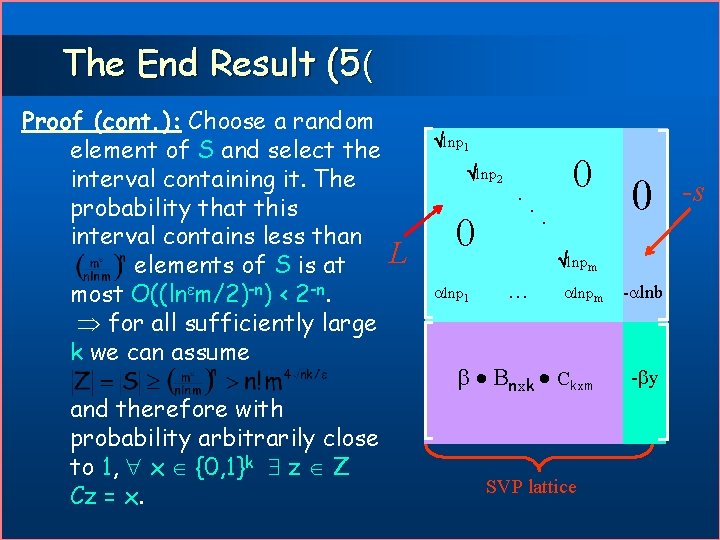

The End Result (5). Proof (cont.): Choose a random element of $S$ and select the interval containing it. The probability that this interval contains less than … elements of $S$ is at most $O((\ln m / 2)^{-n}) < 2^{-n}$. For all sufficiently large $k$ we can assume …, and therefore, with probability arbitrarily close to 1, $\forall x \in \{0, 1\}^k \; \exists z \in Z: Cz = x$.

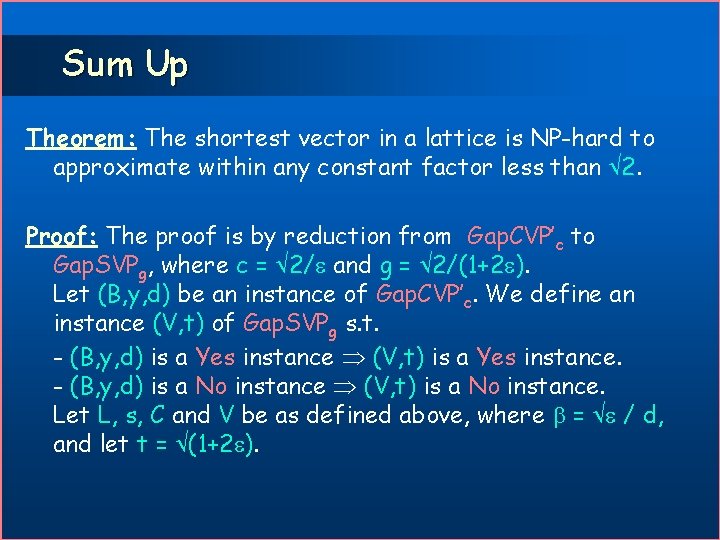

Sum Up. Theorem: The shortest vector in a lattice is NP-hard to approximate within any constant factor less than $\sqrt{2}$.

Proof: The proof is by reduction from GapCVP'$_c$ to GapSVP$_g$, where $c = \sqrt{2/\varepsilon}$ and $g = \sqrt{2/(1+2\varepsilon)}$. Let $(B, y, d)$ be an instance of GapCVP'$_c$. We define an instance $(V, t)$ of GapSVP$_g$ s.t.

- $(B, y, d)$ is a Yes instance ⇒ $(V, t)$ is a Yes instance.
- $(B, y, d)$ is a No instance ⇒ $(V, t)$ is a No instance.

Let $L$, $s$, $C$ and $V$ be as defined above, where … $=$ …$/d$, and let $t = \sqrt{1+2\varepsilon}$.

Completeness. Proof (cont.):

- Assume that $(B, y, d)$ is a Yes instance of GapCVP'$_c$: ….
- From the previous lemma, $\exists z \in \mathbb{Z}^m$ s.t. $Cz = x$ and …. Define a vector …. ⇒ $(V, t)$ is a Yes instance of GapSVP$_g$.

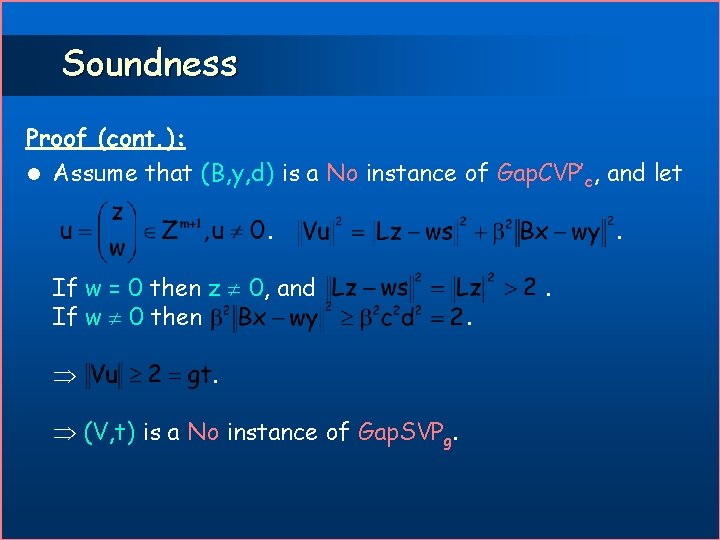

Soundness. Proof (cont.):

- Assume that $(B, y, d)$ is a No instance of GapCVP'$_c$, and let …. If $w = 0$ then $z \neq 0$, and …. If $w \neq 0$ then …. ⇒ $(V, t)$ is a No instance of GapSVP$_g$.

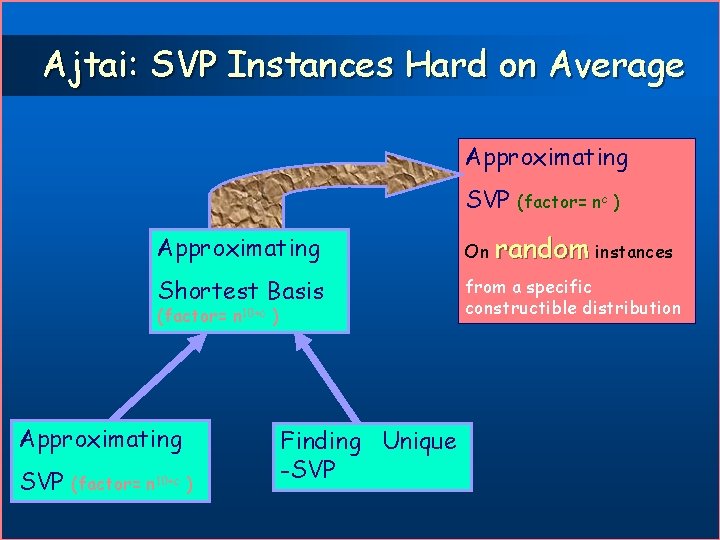

Ajtai: SVP Instances Hard on Average. [Diagram of reductions between four problems: Approximating SVP on random instances from a specific constructible distribution; Approximating Shortest Basis; Approximating SVP; Finding Unique-SVP. The factors shown are $n^c$ and $n^{10+c}$.]

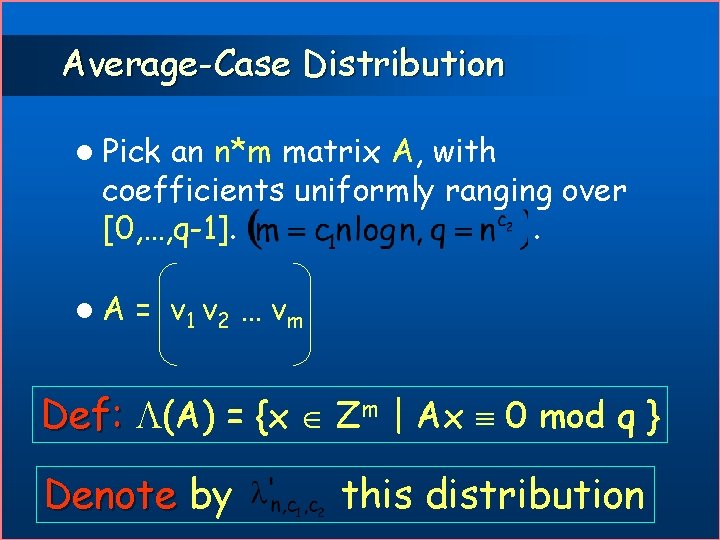

Average-Case Distribution

- Pick an $n \times m$ matrix $A$, with coefficients uniformly ranging over $[0, \dots, q-1]$: $A = (v_1 \; v_2 \; \dots \; v_m)$.
- Def: $\Lambda(A) = \{x \in \mathbb{Z}^m \mid Ax \equiv 0 \bmod q\}$
- Denote by … this distribution.
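A small sketch of this definition: sampling a matrix with uniform entries in $[0, q-1]$ and testing whether an integer vector lies in the mod-$q$ lattice it defines. The function names, the seed parameter and the toy values below are illustrative assumptions.

```python
import random

def sample_matrix(n, m, q, seed=None):
    """n x m matrix with entries drawn uniformly from {0, ..., q-1}."""
    rng = random.Random(seed)
    return [[rng.randrange(q) for _ in range(m)] for _ in range(n)]

def in_mod_q_lattice(A, x, q):
    """True iff A x = 0 (mod q), i.e. x belongs to the mod-q lattice of A."""
    return all(sum(a * xi for a, xi in zip(row, x)) % q == 0 for row in A)

# Fixed toy example with q = 5 (hand-picked, not sampled).
A = [[1, 2, 3],
     [4, 0, 1]]
member = in_mod_q_lattice(A, [1, 3, 1], 5)      # 1+6+3 = 10 and 4+0+1 = 5
non_member = in_mod_q_lattice(A, [1, 0, 0], 5)  # first row gives 1
```

Every vector of the form $q \cdot e_i$ is in the lattice regardless of $A$, which is why the lattice always has full rank $m$.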

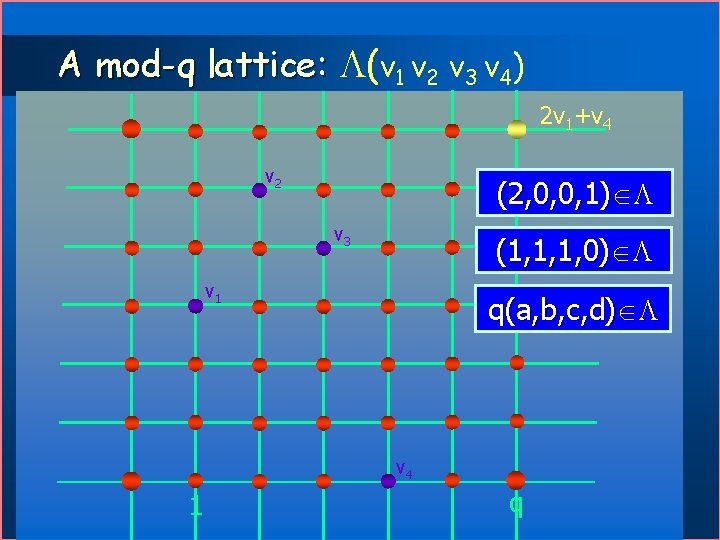

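A mod-q lattice. [Figure: the mod-$q$ lattice of $(v_1 \; v_2 \; v_3 \; v_4)$; labeled points include $(2, 0, 0, 1)$, next to the combination $2v_1 + v_4$, the point $(1, 1, 1, 0)$, and the multiples $q(a, b, c, d)$.]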

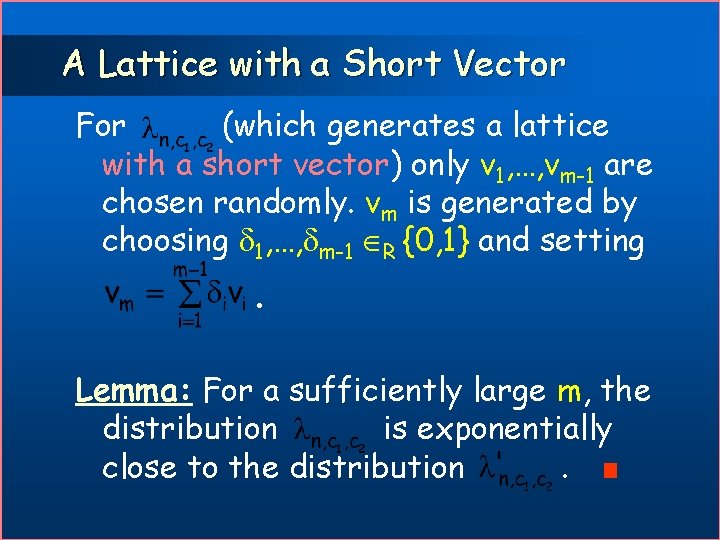

A Lattice with a Short Vector. For … (which generates a lattice with a short vector) only $v_1, \dots, v_{m-1}$ are chosen randomly. $v_m$ is generated by choosing $\delta_1, \dots, \delta_{m-1} \in_R \{0, 1\}$ and setting ….

Lemma: For a sufficiently large $m$, the distribution … is exponentially close to the distribution ….

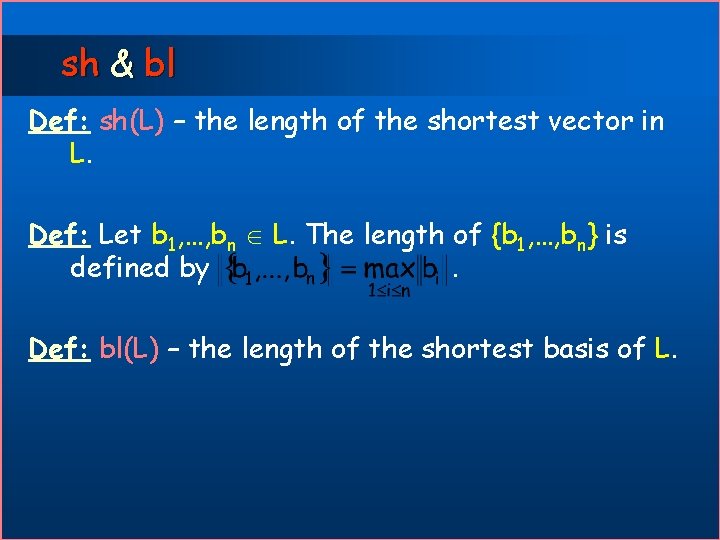

sh & bl

- Def: sh(L) is the length of the shortest vector in $L$.
- Def: Let $b_1, \dots, b_n \in L$. The length of $\{b_1, \dots, b_n\}$ is defined by ….
- Def: bl(L) is the length of the shortest basis of $L$.

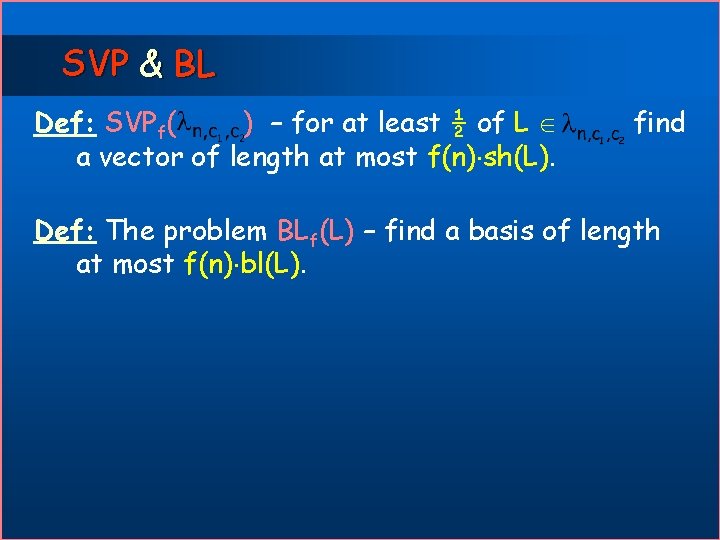

SVP & BL

- Def: SVP$_f$(…): for at least ½ of $L \in$ …, find a vector of length at most $f(n) \cdot \mathrm{sh}(L)$.
- Def: The problem BL$_f(L)$: find a basis of length at most $f(n) \cdot \mathrm{bl}(L)$.

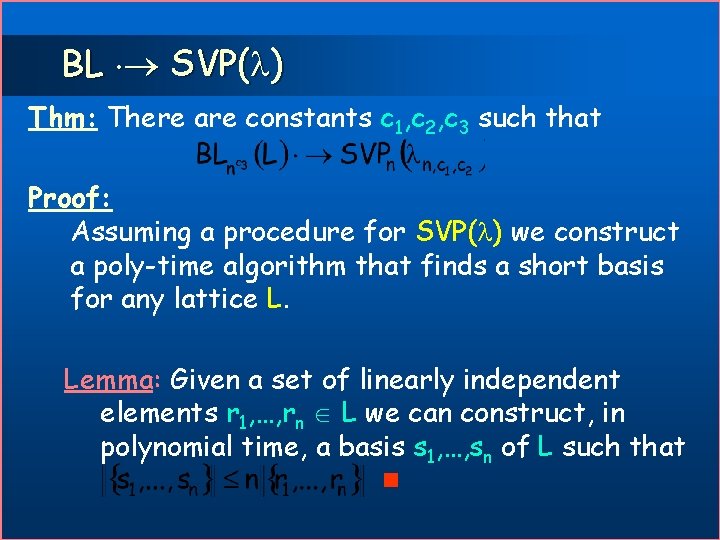

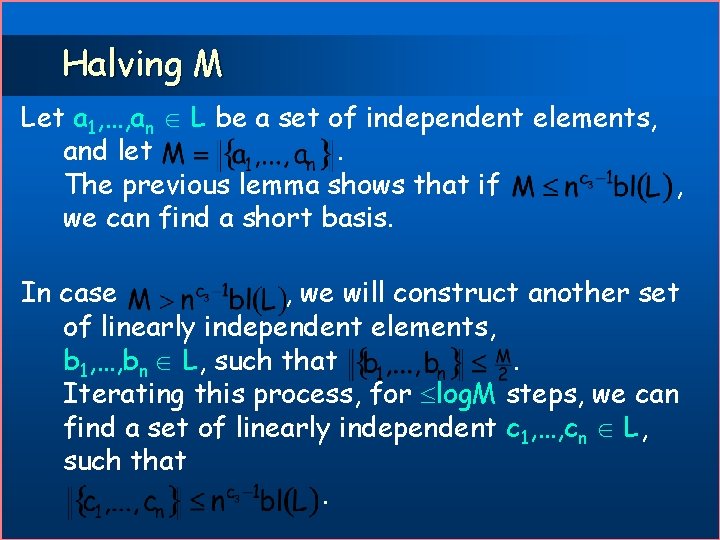

Reducing BL to SVP(…). Thm: There are constants $c_1, c_2, c_3$ such that ….

Proof: Assuming a procedure for SVP(…), we construct a poly-time algorithm that finds a short basis for any lattice $L$.

Lemma: Given a set of linearly independent elements $r_1, \dots, r_n \in L$ we can construct, in polynomial time, a basis $s_1, \dots, s_n$ of $L$ such that ….

Halving M. Let $a_1, \dots, a_n \in L$ be a set of independent elements, and let $M =$ …. The previous lemma shows that if …, we can find a short basis. In case …, we will construct another set of linearly independent elements, $b_1, \dots, b_n \in L$, such that …. Iterating this process for $\log M$ steps, we can find a set of linearly independent $c_1, \dots, c_n \in L$ such that ….

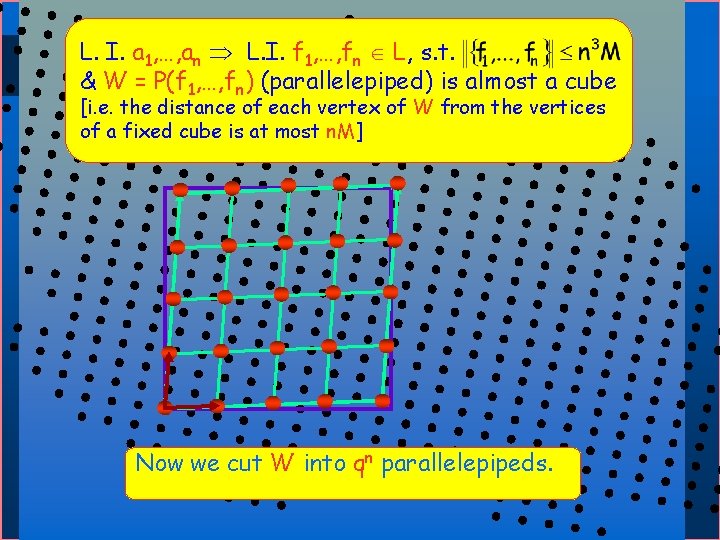

Linearly independent $a_1, \dots, a_n \in L$ ⇒ linearly independent $f_1, \dots, f_n \in L$, s.t. … and $W = P(f_1, \dots, f_n)$ (parallelepiped) is almost a cube [i.e. the distance of each vertex of $W$ from the vertices of a fixed cube is at most $nM$]. Now we cut $W$ into $q^n$ parallelepipeds.

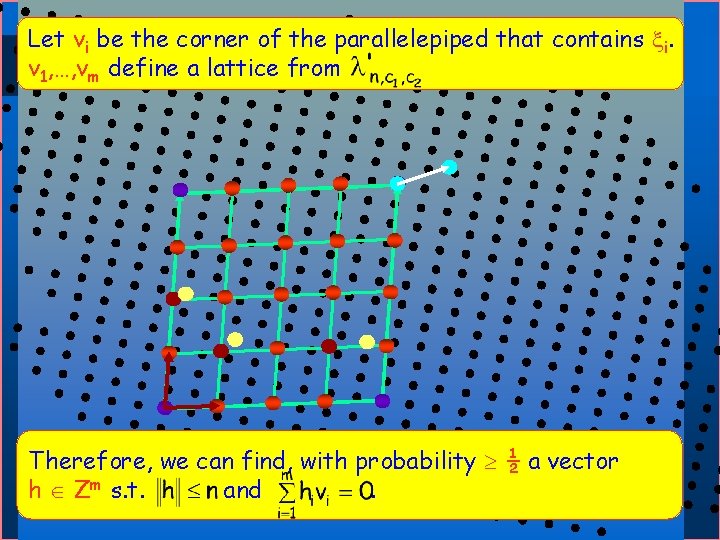

We take a random sequence of lattice points $\xi_1, \dots, \xi_m$, and find for each $1 \le i \le m$ the parallelepiped that contains $\xi_i$. Let $v_i$ be the corner of the parallelepiped that contains $\xi_i$. $v_1, \dots, v_m$ define a lattice from …. Therefore, we can find, with probability ½, a vector $h \in \mathbb{Z}^m$ s.t. … and ….

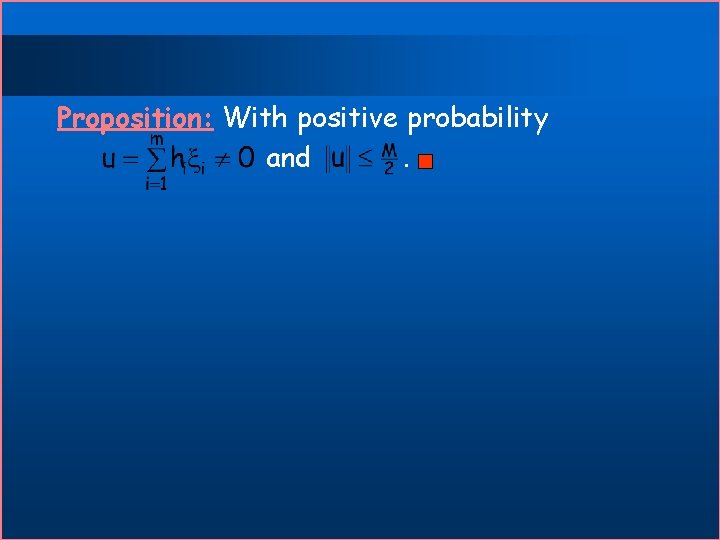

Proposition: With positive probability … and ….

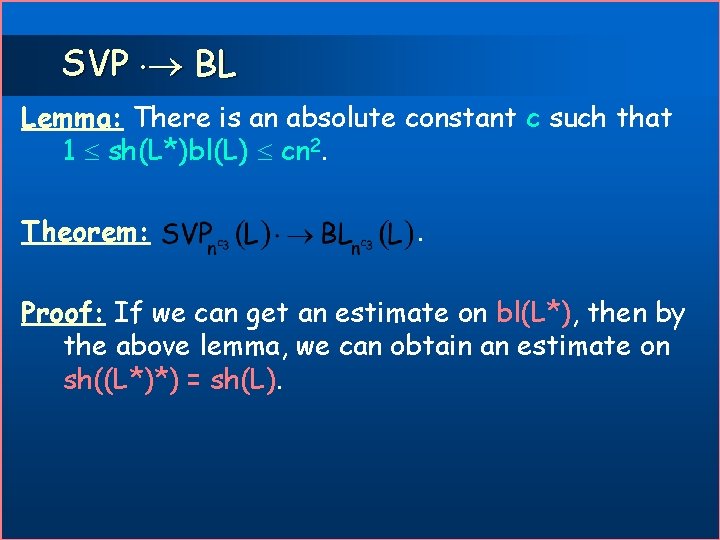

Reducing SVP to BL. Lemma: There is an absolute constant $c$ such that $1 \le \mathrm{sh}(L^*) \cdot \mathrm{bl}(L) \le c n^2$.

Theorem: ….

Proof: If we can get an estimate on $\mathrm{bl}(L^*)$, then by the above lemma, we can obtain an estimate on $\mathrm{sh}((L^*)^*) = \mathrm{sh}(L)$.

![Hardness of approx. CVP [DKRS] g-CVP is NP-hard for g=n 1/loglog n n - Hardness of approx. CVP [DKRS] g-CVP is NP-hard for g=n 1/loglog n n -](http://slidetodoc.com/presentation_image/50a5a49f6be88339935df1f29941e7ca/image-89.jpg)

Hardness of approx. CVP [DKRS]. g-CVP is NP-hard for $g = n^{1/\log\log n}$, where $n$ is the lattice dimension.

Improving:

- Hardness (NP-hardness instead of quasi-NP-hardness)
- Non-approximation factor (from $2^{(\log n)^{1-\varepsilon}}$)

![l [ABSS] reduction: uses PCP to show – NP-hard for g=O(1) – Quasi-NP-hard g=2(logn)1 l [ABSS] reduction: uses PCP to show – NP-hard for g=O(1) – Quasi-NP-hard g=2(logn)1](http://slidetodoc.com/presentation_image/50a5a49f6be88339935df1f29941e7ca/image-90.jpg)

- [ABSS] reduction: uses PCP to show NP-hardness for $g = O(1)$, and quasi-NP-hardness for $g = 2^{(\log n)^{1-\varepsilon}}$ by repeated blow-up.
- Barrier: $2^{(\log n)^{1-\varepsilon}}$, const $\varepsilon > 0$.
- SSAT: a new non-PCP characterization of NP. NP-hard to approximate to within $g = n^{1/\log\log n}$.

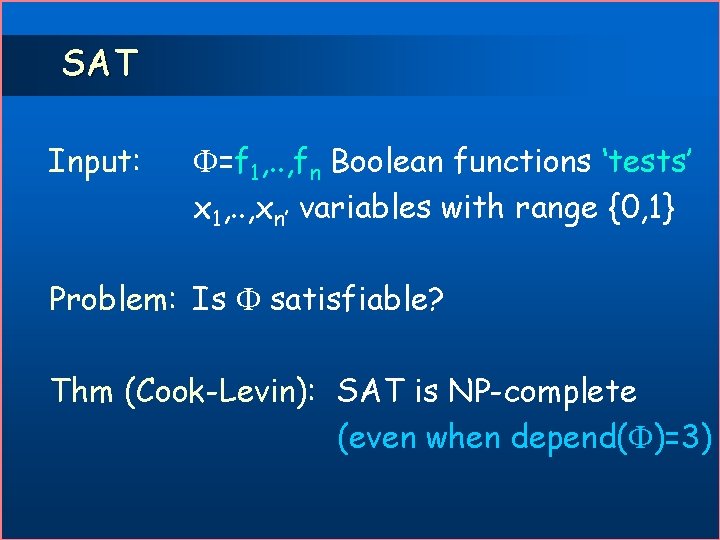

SAT

- Input: $\Psi = f_1, \dots, f_n$, Boolean functions ('tests'); $x_1, \dots, x_{n'}$, variables with range $\{0, 1\}$.
- Problem: Is $\Psi$ satisfiable?
- Thm (Cook-Levin): SAT is NP-complete (even when depend($\Psi$) = 3).

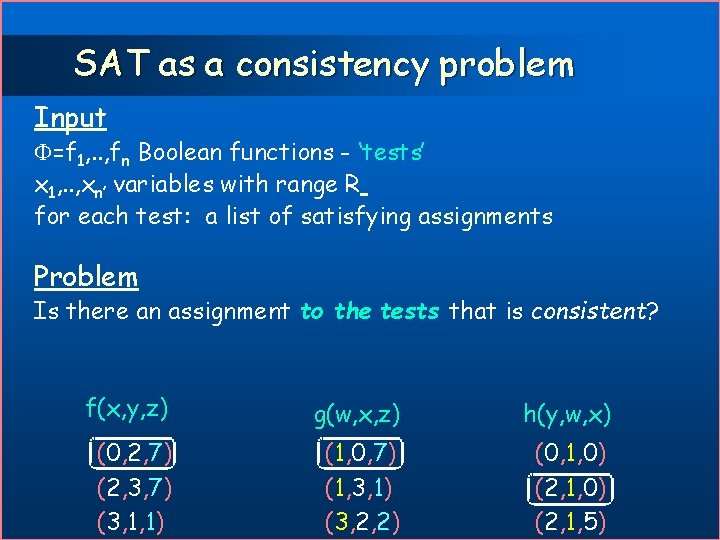

SAT as a consistency problem

- Input: $\Psi = f_1, \dots, f_n$, Boolean functions ('tests'); $x_1, \dots, x_{n'}$, variables with range $R$; for each test, a list of satisfying assignments.
- Problem: Is there an assignment to the tests that is consistent?

Example: tests $f(x, y, z)$, $g(w, x, z)$, $h(y, w, x)$, with satisfying-assignment lists containing (0, 2, 7), (2, 3, 7), (3, 1, 1), (1, 0, 7), (1, 3, 1), (3, 2, 2), (0, 1, 0), (2, 1, 5).

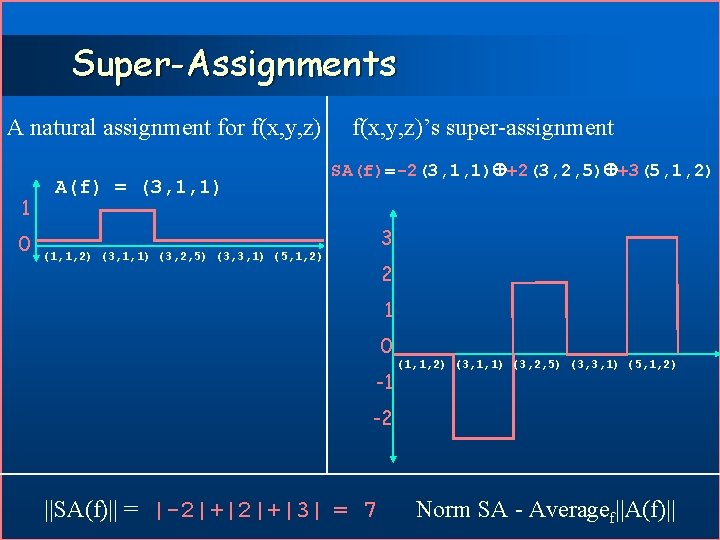

Super-Assignments

- A natural assignment for $f(x, y, z)$: $A(f) = (3, 1, 1)$, i.e. coefficient 1 on (3, 1, 1) and 0 on every other satisfying assignment.
- $f(x, y, z)$'s super-assignment: $SA(f) = -2(3, 1, 1) + 2(3, 2, 5) + 3(5, 1, 2)$, an integer coefficient for each satisfying assignment in the list (1, 1, 2), (3, 1, 1), (3, 2, 5), (3, 3, 1), (5, 1, 2).
- $\|SA(f)\| = |-2| + |2| + |3| = 7$
- Norm of SA: the average over $f$ of $\|SA(f)\|$.

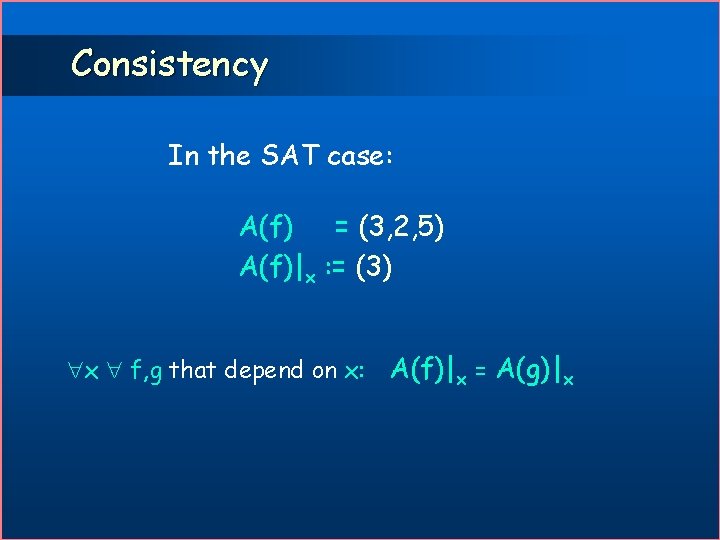

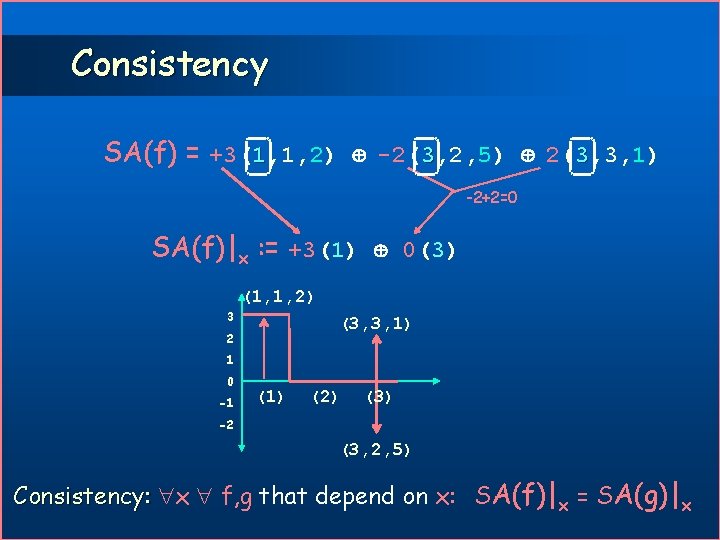

Consistency. In the SAT case: $A(f) = (3, 2, 5)$, $A(f)|_x := (3)$. Consistency: $\forall x$, $\forall f, g$ that depend on $x$: $A(f)|_x = A(g)|_x$.

Consistency. $SA(f) = +3(1, 1, 2) - 2(3, 2, 5) + 2(3, 3, 1)$. Projecting on $x$, the coefficients of the assignments with $x = 3$ cancel ($-2 + 2 = 0$), so $SA(f)|_x := +3(1) + 0(3)$. Consistency: $\forall x$, $\forall f, g$ that depend on $x$: $SA(f)|_x = SA(g)|_x$.
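The norm and the projection of a super-assignment are easy to compute mechanically. The sketch below represents a super-assignment as a dictionary from satisfying assignments (tuples) to integer coefficients; this representation and the function names are assumptions made for illustration.

```python
from collections import defaultdict

def sa_norm(sa):
    """Norm of a super-assignment: sum of absolute values of its coefficients."""
    return sum(abs(c) for c in sa.values())

def project(sa, variables, x):
    """Projection SA(f)|x: add up coefficients of assignments that agree on x,
    dropping values whose coefficients cancel to 0."""
    idx = variables.index(x)
    out = defaultdict(int)
    for assignment, coeff in sa.items():
        out[assignment[idx]] += coeff
    return {value: c for value, c in out.items() if c != 0}

# The two examples from the slides, for a test f(x, y, z).
sa_first = {(3, 1, 1): -2, (3, 2, 5): 2, (5, 1, 2): 3}
sa_second = {(1, 1, 2): 3, (3, 2, 5): -2, (3, 3, 1): 2}

norm_first = sa_norm(sa_first)                           # |-2| + |2| + |3|
proj_second = project(sa_second, ("x", "y", "z"), "x")   # x = 3 cancels out
```

Checking consistency between two tests $f$ and $g$ then amounts to comparing the dictionaries returned by `project` for every variable they share.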

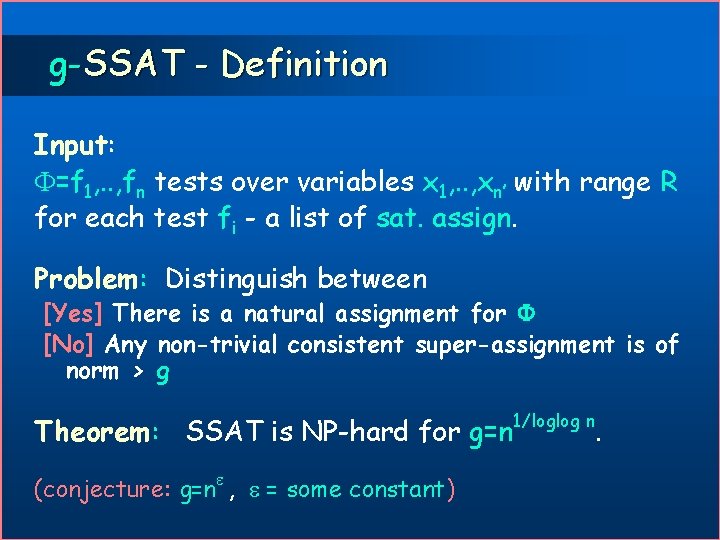

g-SSAT - Definition

- Input: $\Psi = f_1, \dots, f_n$, tests over variables $x_1, \dots, x_{n'}$ with range $R$; for each test $f_i$, a list of satisfying assignments.
- Problem: Distinguish between [Yes] there is a natural assignment for $\Psi$, and [No] any non-trivial consistent super-assignment is of norm $> g$.

Theorem: SSAT is NP-hard for $g = n^{1/\log\log n}$ (conjecture: $g = n^{\varepsilon}$, $\varepsilon$ = some constant).

SSAT is NP-hard to approximate to within $g = n^{1/\log\log n}$

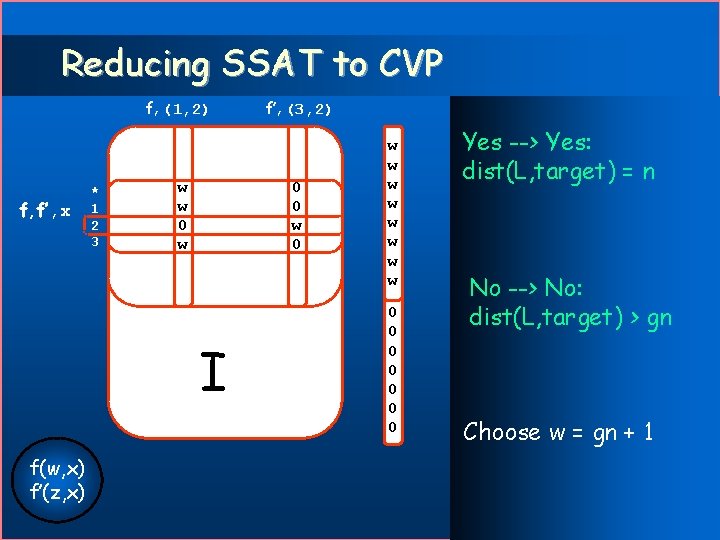

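Reducing SSAT to CVP. [Matrix figure: a lattice basis with columns labeled by (test, assignment) pairs such as $(f, (1, 2))$ and $(f', (3, 2))$ for tests $f(w, x)$ and $f'(z, x)$, consistency rows with entries $w$ and $0$, and an identity block $I$.]

- Yes → Yes: dist(L, target) = n
- No → No: dist(L, target) > gn
- Choose w = gn + 1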

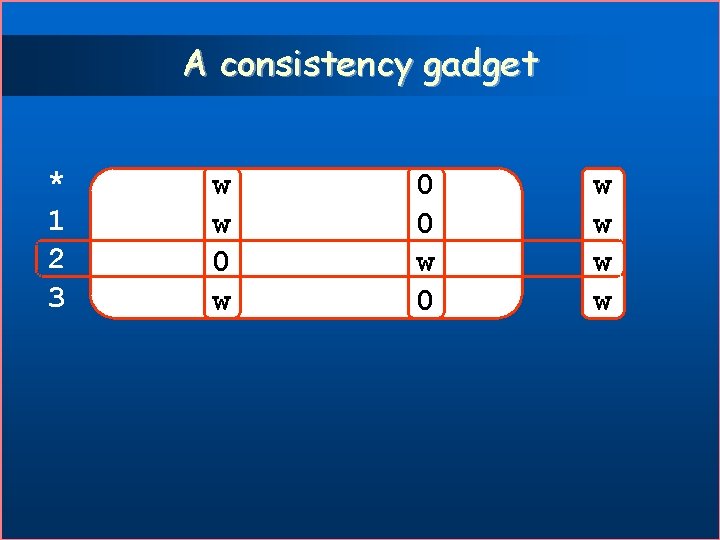

A consistency gadget. [Matrix figure: rows for the values 1, 2, 3 of a variable, with entries $w$ and $0$.]

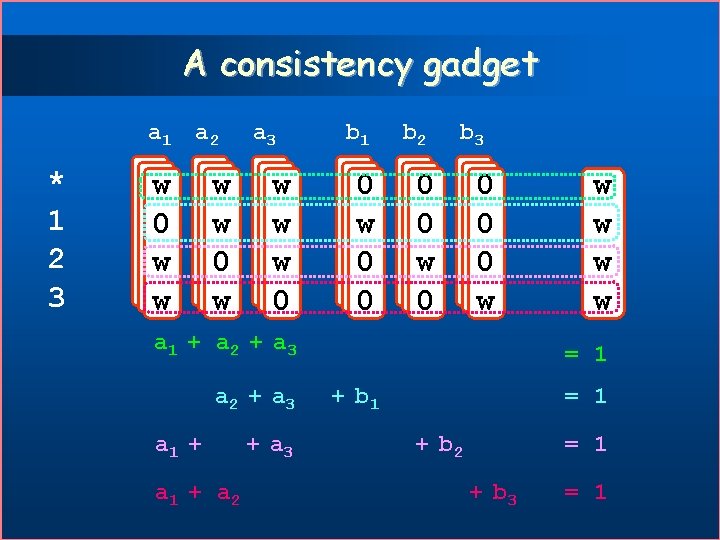

A consistency gadget. [Matrix figure: the gadget with columns $a_1, a_2, a_3$ and $b_1, b_2, b_3$, entries $w$ and $0$, and the resulting constraints relating $a_1 + a_2 + a_3$ and $b_1, b_2, b_3$ to 1.]
