Large Vocabulary Continuous Speech Recognition (LVCSR)

Automatic Speech Recognition, Spring 2016

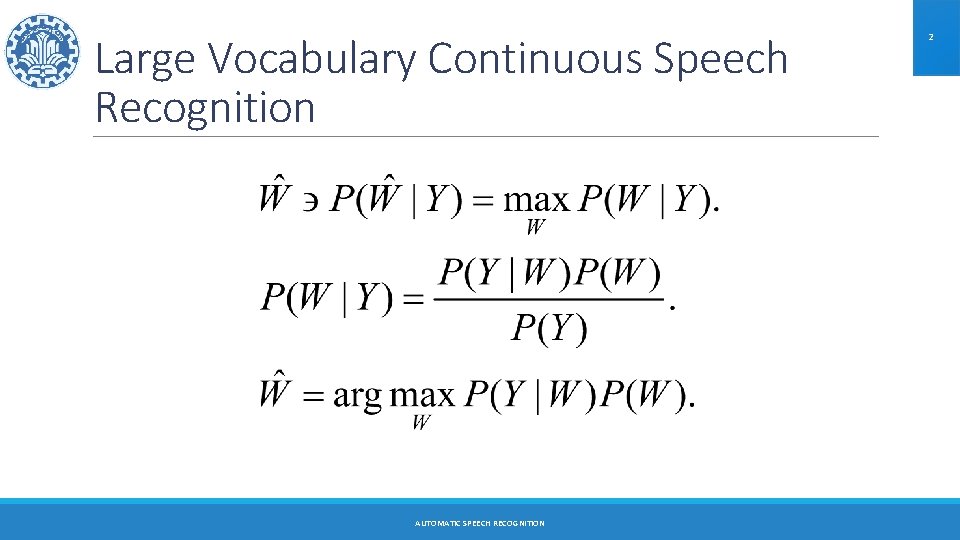

Large Vocabulary Continuous Speech Recognition

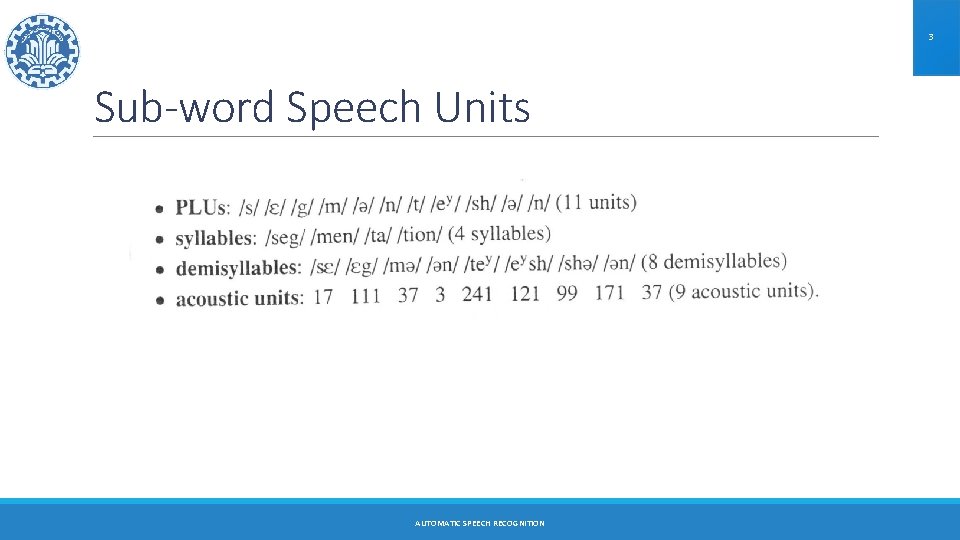

Sub-word Speech Units

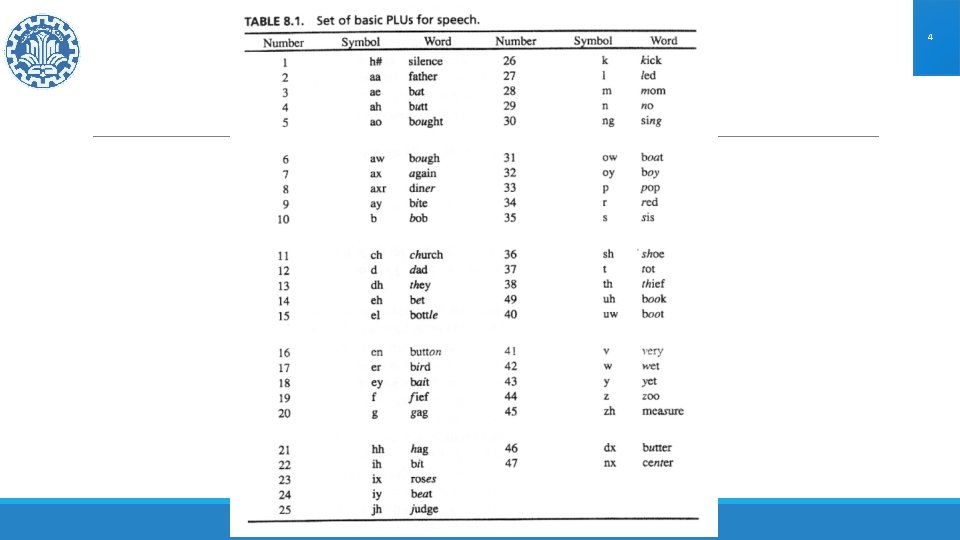

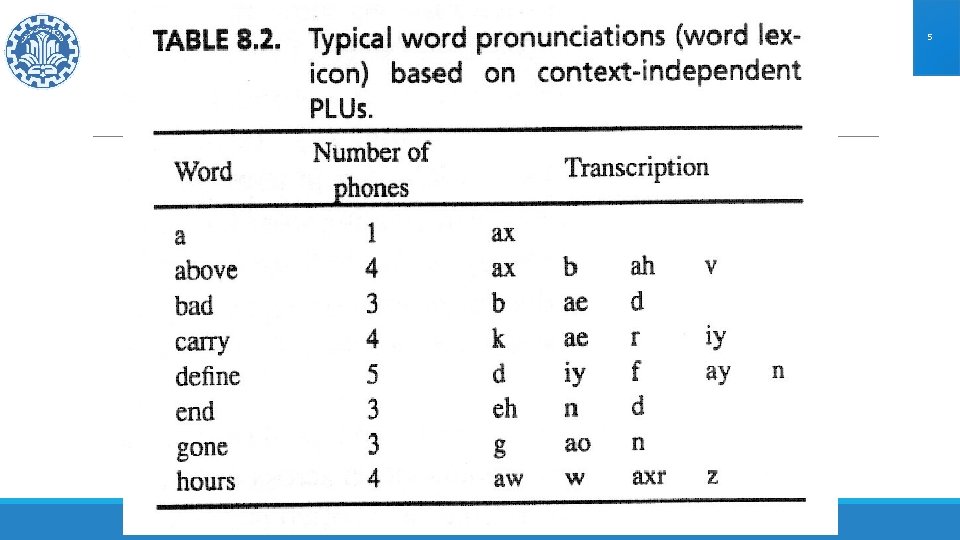

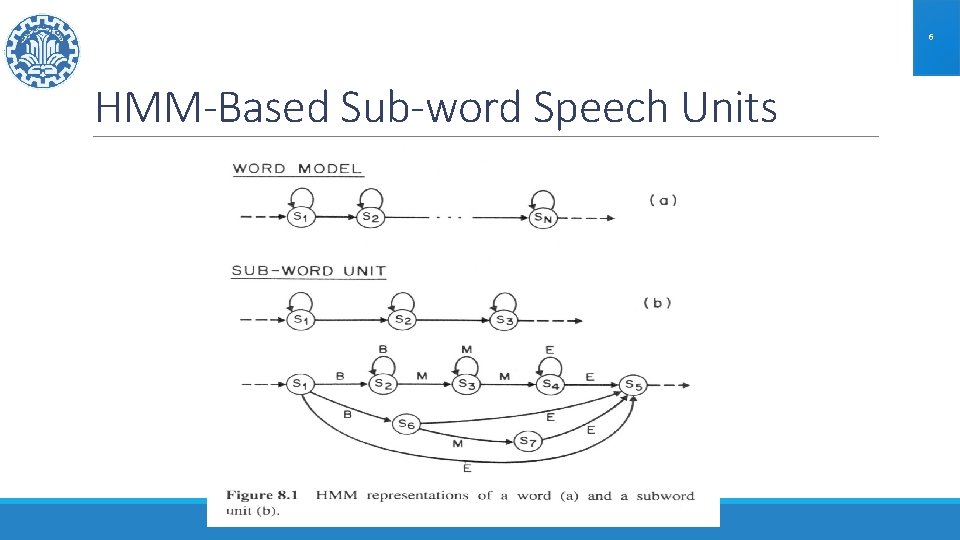

HMM-Based Sub-word Speech Units

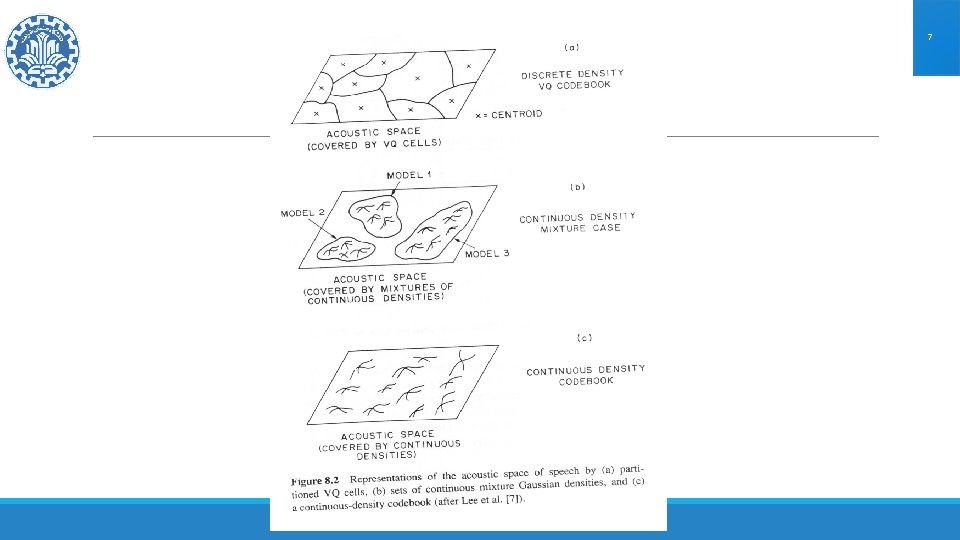

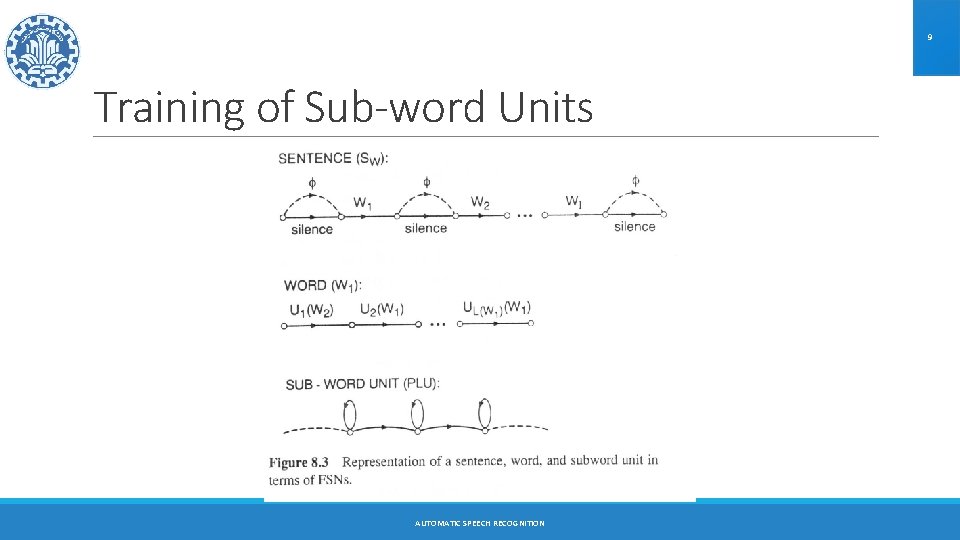

Training of Sub-word Units

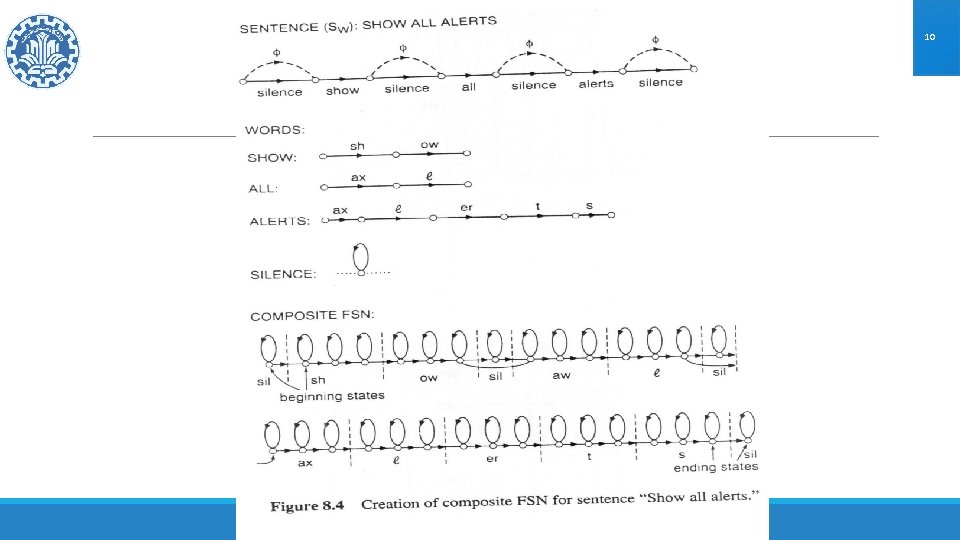

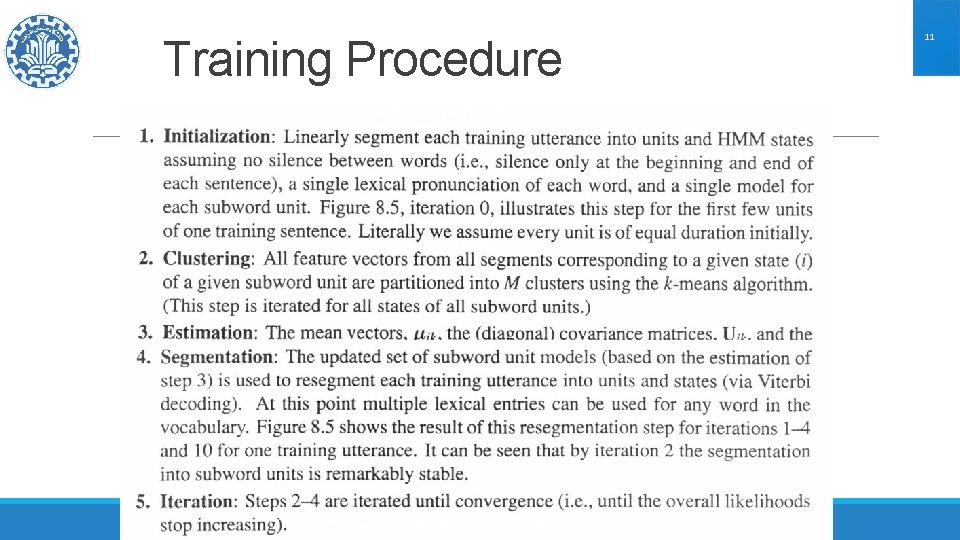

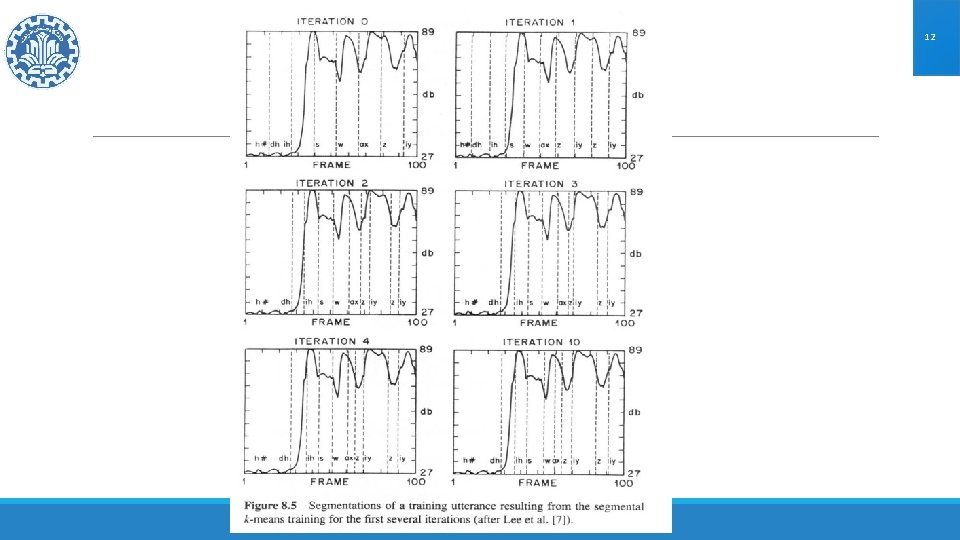

Training Procedure

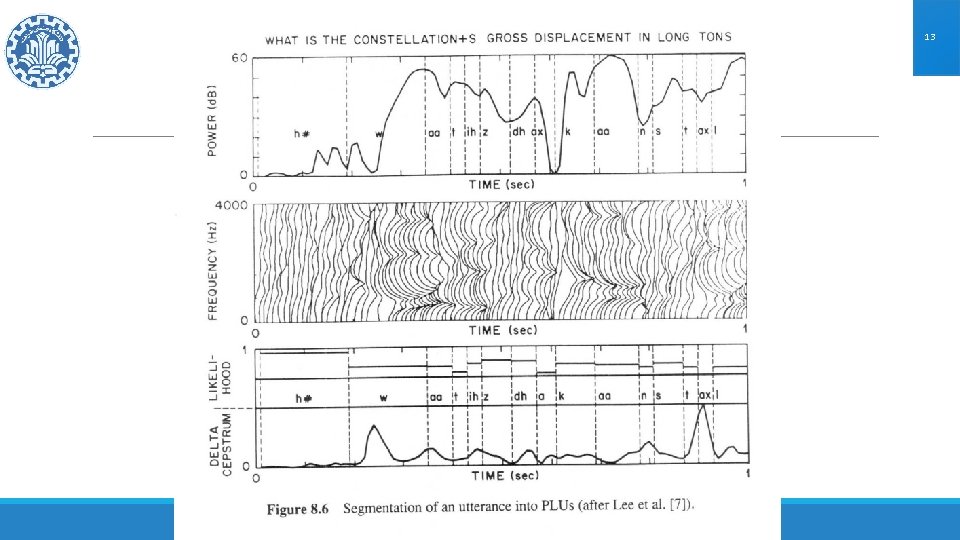

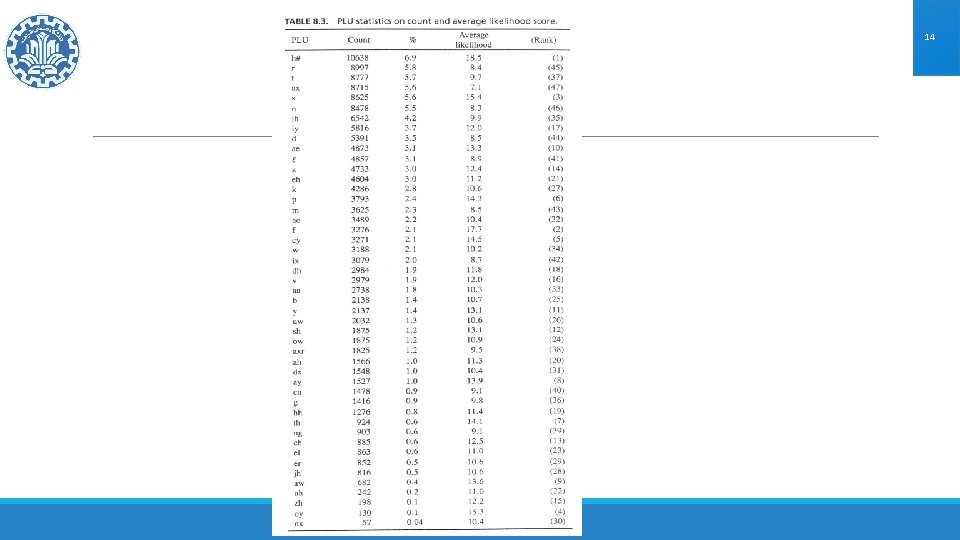

Errors and performance evaluation in PLU (phone-like unit) recognition

- Substitution error (s)
- Deletion error (d)
- Insertion error (i)
- Performance evaluation: if the total number of PLUs is N, we define:
  - Correctness rate: (N - s - d) / N
  - Accuracy rate: (N - s - d - i) / N
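Both rates need the counts s, d, and i, which are usually obtained by aligning the recognized PLU string against the reference string with a minimum-edit-distance dynamic program. The sketch below is a minimal version of that alignment; the phone labels are hypothetical, and when several alignments have the same total error count, the tie is broken arbitrarily (fewest substitutions first).

```python
def count_errors(ref, hyp):
    """Minimum-edit-distance alignment of hyp against ref; returns (s, d, i)."""
    n, m = len(ref), len(hyp)
    # best[r][h] = (total_errors, s, d, i) for aligning ref[:r] with hyp[:h]
    best = [[(0, 0, 0, 0)] * (m + 1) for _ in range(n + 1)]
    for r in range(1, n + 1):
        best[r][0] = (r, 0, r, 0)            # only deletions
    for h in range(1, m + 1):
        best[0][h] = (h, 0, 0, h)            # only insertions
    for r in range(1, n + 1):
        for h in range(1, m + 1):
            e, s, d, i = best[r - 1][h - 1]
            miss = int(ref[r - 1] != hyp[h - 1])
            candidates = [(e + miss, s + miss, d, i)]   # match or substitution
            e, s, d, i = best[r - 1][h]
            candidates.append((e + 1, s, d + 1, i))     # deletion
            e, s, d, i = best[r][h - 1]
            candidates.append((e + 1, s, d, i + 1))     # insertion
            best[r][h] = min(candidates)
    return best[n][m][1:]


def plu_rates(ref, hyp):
    """Return (correctness, accuracy) as defined above."""
    s, d, i = count_errors(ref, hyp)
    n = len(ref)
    return (n - s - d) / n, (n - s - d - i) / n


ref = ["sil", "k", "ae", "t", "sil"]
hyp = ["sil", "k", "ah", "t", "t", "sil"]
print(count_errors(ref, hyp))  # (1, 0, 1)
print(plu_rates(ref, hyp))     # (0.8, 0.6)
```

Insertions lower the accuracy rate but not the correctness rate, which is why a recognizer that over-generates units can look good on correctness alone.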

Language Models for LVCSR

- Word pair model: specifies which word pairs are valid

Statistical Language Modeling

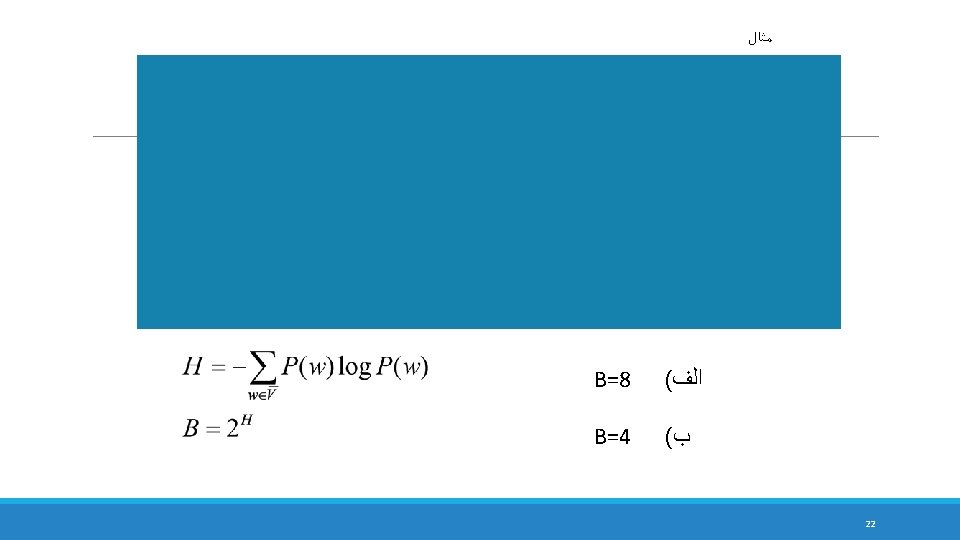
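An N-gram model factors the probability of a word sequence into conditional probabilities of each word given the previous N-1 words. As a minimal sketch for N = 2, the code below estimates bigram probabilities by maximum likelihood, P(w2 | w1) = C(w1, w2) / C(w1). The `<s>` / `</s>` sentence markers and the toy sentences are assumptions for illustration, and there is no smoothing, so an unseen pair raises a `KeyError`.

```python
import math
from collections import Counter


def train_bigram(sentences):
    """Maximum-likelihood bigram estimates P(w2 | w1) = C(w1, w2) / C(w1)."""
    history, pairs = Counter(), Counter()
    for words in sentences:
        padded = ["<s>"] + words + ["</s>"]
        for w1, w2 in zip(padded, padded[1:]):
            history[w1] += 1
            pairs[(w1, w2)] += 1
    return {pair: c / history[pair[0]] for pair, c in pairs.items()}


def sentence_log2prob(model, words):
    """log2 P(W) as a sum of bigram log probabilities (no smoothing)."""
    padded = ["<s>"] + words + ["</s>"]
    return sum(math.log2(model[(w1, w2)]) for w1, w2 in zip(padded, padded[1:]))


model = train_bigram([["show", "ships"], ["show", "ports"]])
print(model[("show", "ships")])                  # 0.5
print(sentence_log2prob(model, ["show", "ships"]))  # -1.0
```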

Perplexity of the Language Model

- Entropy of the source:
- Assuming independent generation of words:
- Then H is called the first-order entropy of the source.
- If the source is ergodic, meaning its statistical properties can be completely characterized by a sufficiently long sequence that the source puts out,

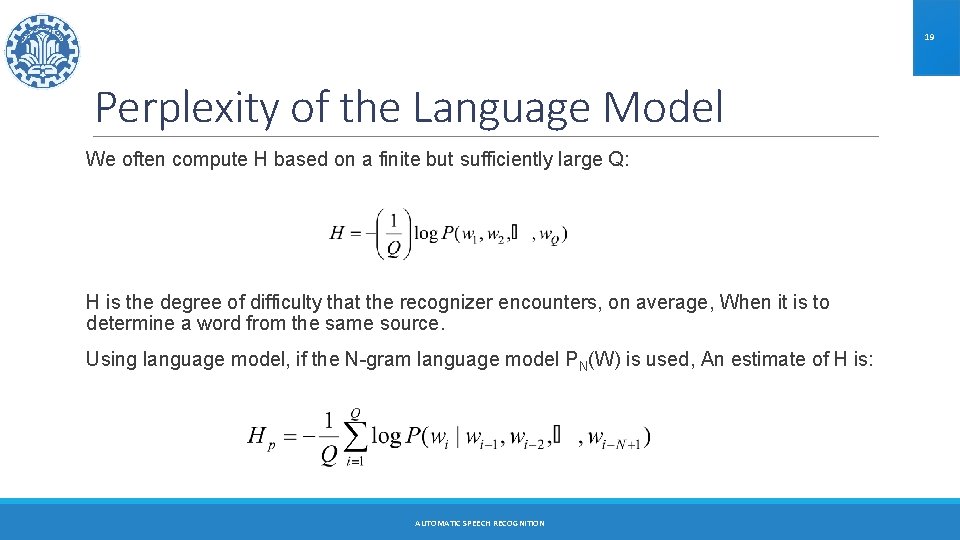

Perplexity of the Language Model

- We often compute H from a finite but sufficiently large Q:
- H is the average degree of difficulty the recognizer encounters when it must determine a word from the same source.
- Using a language model: if the N-gram language model P_N(W) is used, an estimate of H is:

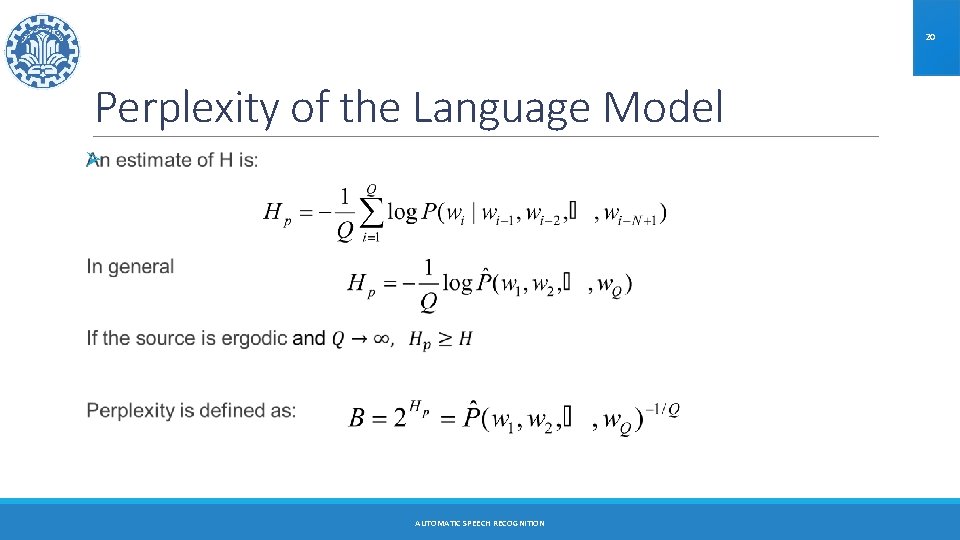
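For readers without the slide images, the standard definitions behind these slides can be written as follows. This is a reconstruction under the usual assumptions (base-2 logarithms, vocabulary V, a word sequence w_1, ..., w_Q); the notation on the original slides may differ.

$$
\begin{aligned}
H &= -\lim_{Q\to\infty} \frac{1}{Q} \sum_{w_1,\dots,w_Q} P(w_1,\dots,w_Q)\,\log_2 P(w_1,\dots,w_Q) \\
H &= -\sum_{w \in V} P(w)\,\log_2 P(w) && \text{(independent words: first-order entropy)} \\
H &= -\lim_{Q\to\infty} \frac{1}{Q}\,\log_2 P(w_1,\dots,w_Q) && \text{(ergodic source)} \\
\hat{H} &= -\frac{1}{Q}\,\log_2 P_N(w_1,\dots,w_Q), \quad P_N(w_1,\dots,w_Q) = \prod_{i=1}^{Q} P(w_i \mid w_{i-N+1},\dots,w_{i-1}) && \text{(N-gram estimate)} \\
B &= 2^{\hat{H}} = P_N(w_1,\dots,w_Q)^{-1/Q} && \text{(perplexity)}
\end{aligned}
$$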

Perplexity of the Language Model

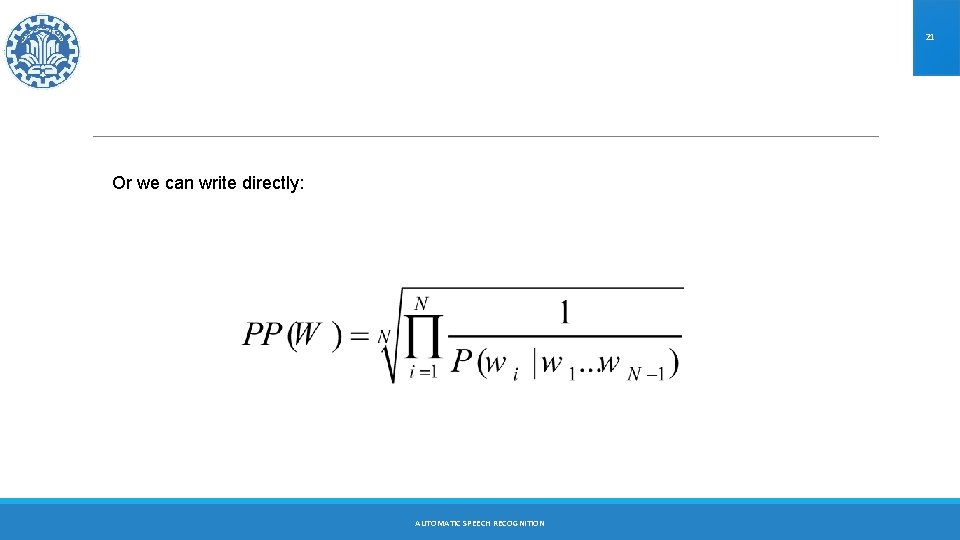

Or we can write directly:

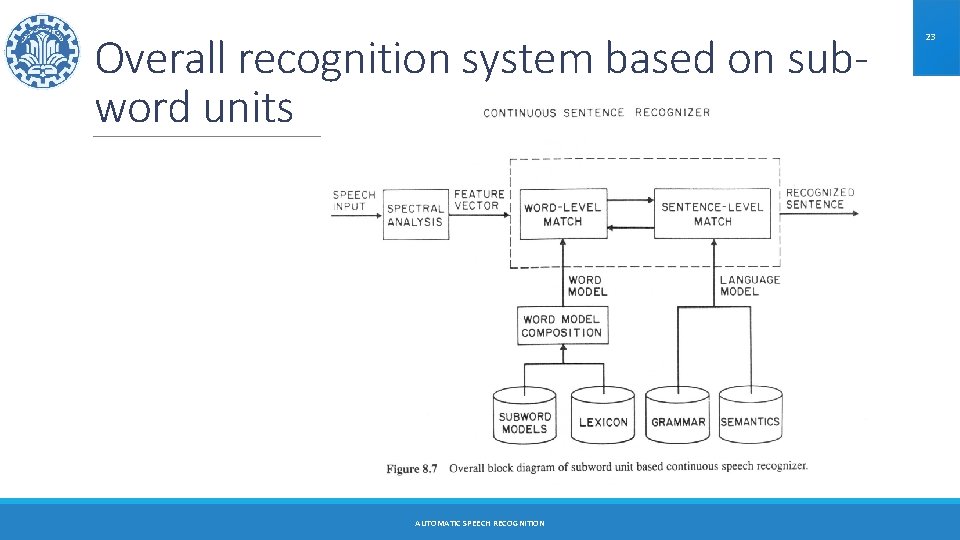
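Perplexity is 2 raised to the estimated entropy per word, which equals the inverse of the sequence probability normalized by its length, P_N(w_1, ..., w_Q)^(-1/Q). The sketch below computes both forms for a bigram model (N = 2). It assumes `<s>` / `</s>` padding and counts the end marker as a predicted token; the toy model is hypothetical.

```python
import math


def perplexity(model, words):
    """B = 2 ** H_hat, with H_hat = -(1/Q) log2 P_N(w_1..w_Q) under a bigram model.
    model: {(w_prev, w): P(w | w_prev)}; '<s>'/'</s>' padding is an assumed convention."""
    padded = ["<s>"] + words + ["</s>"]
    probs = [model[pair] for pair in zip(padded, padded[1:])]
    q = len(probs)                                 # predicted tokens, including '</s>'
    h_hat = -sum(math.log2(p) for p in probs) / q  # estimated entropy per word
    return 2 ** h_hat


def perplexity_direct(model, words):
    """The same quantity written directly as P_N(w_1..w_Q) ** (-1/Q)."""
    padded = ["<s>"] + words + ["</s>"]
    probs = [model[pair] for pair in zip(padded, padded[1:])]
    return math.prod(probs) ** (-1 / len(probs))


# Every prediction has probability 1/4, so the recognizer faces an effective
# choice among 4 equally likely words at each step.
toy = {("<s>", "a"): 0.25, ("a", "b"): 0.25, ("b", "</s>"): 0.25}
print(perplexity(toy, ["a", "b"]))  # 4.0
```

Working in the log domain (the first function) avoids underflow on long test sets, where the product in the direct form quickly becomes too small to represent.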

Overall recognition system based on sub-word units

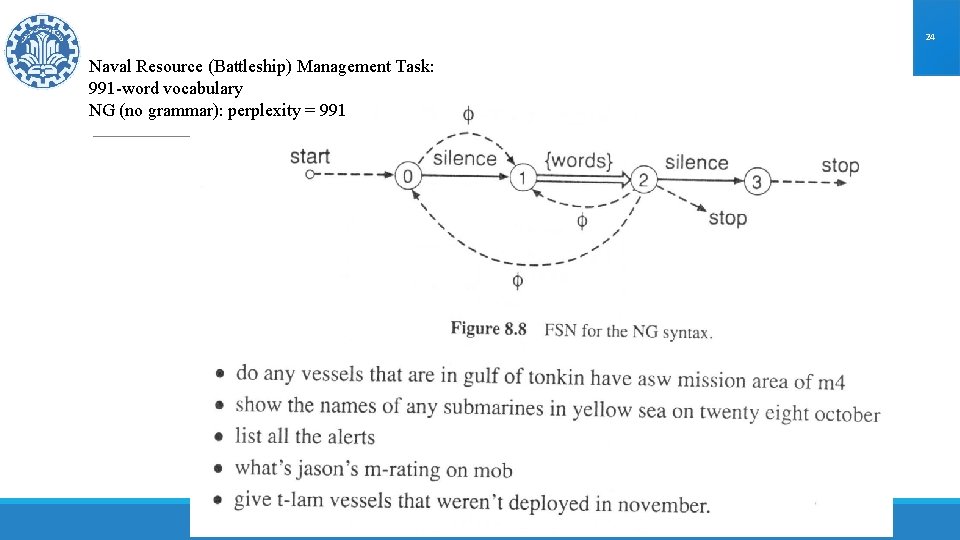

Naval Resource (Battleship) Management task

- 991-word vocabulary
- NG (no grammar): perplexity = 991

Word pair grammar

- We can partition the vocabulary into four non-overlapping sets of words:
- The overall FSN (finite-state network) allows recognition of sentences of the form:

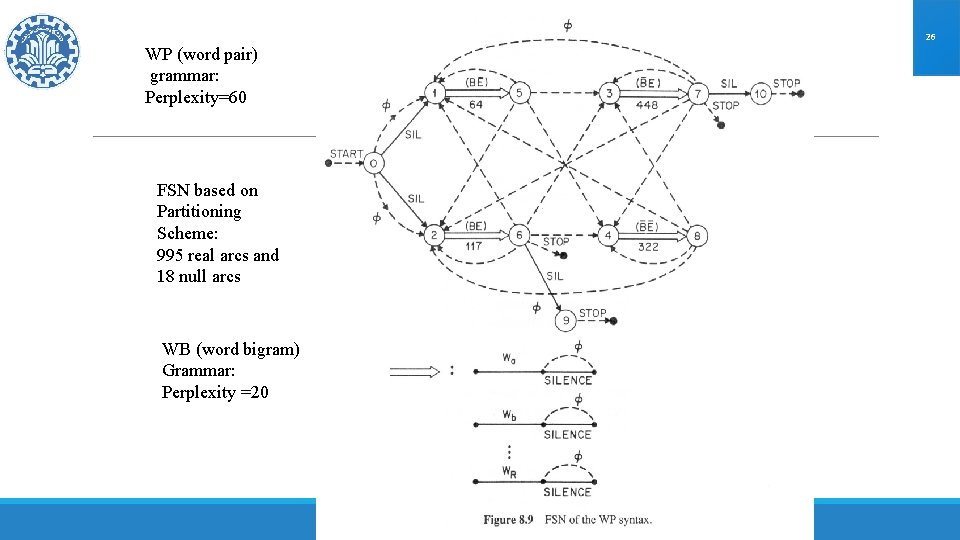

- WP (word pair) grammar: perplexity = 60
  - FSN based on the partitioning scheme: 995 real arcs and 18 null arcs
- WB (word bigram) grammar: perplexity = 20
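A word pair grammar only says which successors are allowed; it assigns no probabilities. One common way to give it a perplexity, assumed here, is to treat every allowed successor as equally likely, so the perplexity is the geometric mean of the branching factors along a sentence. With no grammar, every one of the 991 words may follow any word, so the branching factor, and hence the perplexity, is 991. The toy grammar and function names below are hypothetical.

```python
import math

# Hypothetical toy word-pair grammar: word -> set of words allowed to follow it.
WORD_PAIRS = {
    "<s>": {"show", "list"},
    "show": {"ships", "ports"},
    "list": {"ships"},
    "ships": {"</s>"},
    "ports": {"</s>"},
}


def is_valid(sentence, pairs=WORD_PAIRS):
    """True if every adjacent word pair (including sentence markers) is allowed."""
    padded = ["<s>"] + sentence + ["</s>"]
    return all(w2 in pairs.get(w1, set()) for w1, w2 in zip(padded, padded[1:]))


def wp_perplexity(sentence, pairs=WORD_PAIRS):
    """Geometric-mean branching factor, assuming allowed successors are equally likely."""
    padded = ["<s>"] + sentence + ["</s>"]
    branching = [len(pairs[w]) for w in padded[:-1]]
    return 2 ** (sum(math.log2(b) for b in branching) / len(branching))


print(is_valid(["show", "ships"]))  # True
print(is_valid(["list", "ports"]))  # False
```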

Control of word insertion/deletion rate

- In the structure discussed so far, there is no control over sentence length.
- We therefore introduce a word insertion penalty into the Viterbi decoding.
- To do this, a fixed negative quantity is added to the (log-)likelihood score at the end of each word arc.

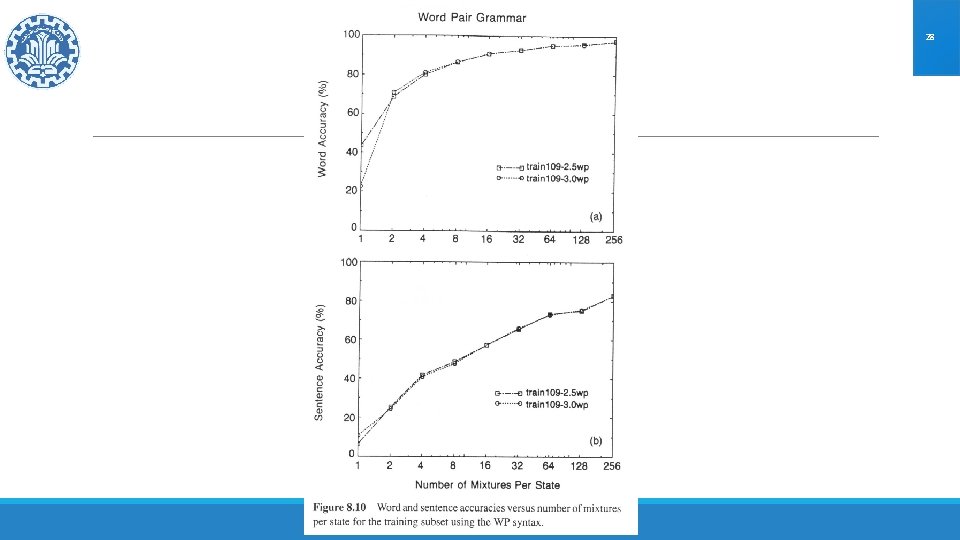
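The effect of the penalty can be seen without a full decoder. The sketch below scores complete hypotheses instead of running Viterbi search; in a real decoder the same constant would be added each time a path leaves a word-final state. The per-word log-likelihoods and the penalty value are made-up numbers for illustration.

```python
def best_hypothesis(hypotheses, word_penalty):
    """Pick the hypothesis with the highest total log score.
    hypotheses: {name: [per-word log-likelihoods]};
    word_penalty (<= 0) is added once per word, i.e. at the end of each word arc."""
    def score(word_scores):
        return sum(word_scores) + word_penalty * len(word_scores)
    return max(hypotheses, key=lambda name: score(hypotheses[name]))


hyps = {
    "A": [-50.0, -48.0],          # two words, total -98.0
    "B": [-40.0, -30.0, -27.0],   # three words (one short inserted word), total -97.0
}
print(best_hypothesis(hyps, 0.0))   # B
print(best_hypothesis(hyps, -5.0))  # A
```

A penalty that is too small lets short spurious words slip in (insertions); one that is too large starts merging or dropping words (deletions), so the value is typically tuned on held-out data to balance the two.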

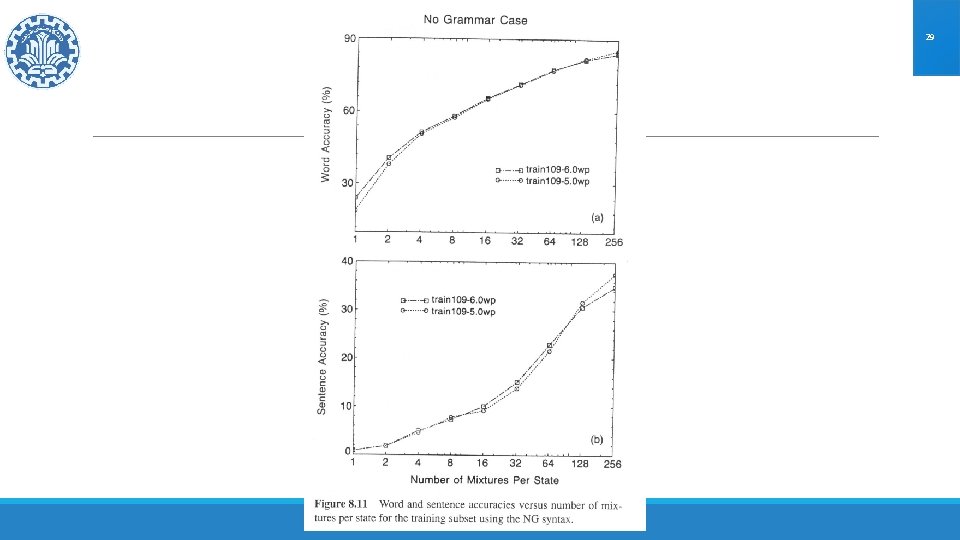

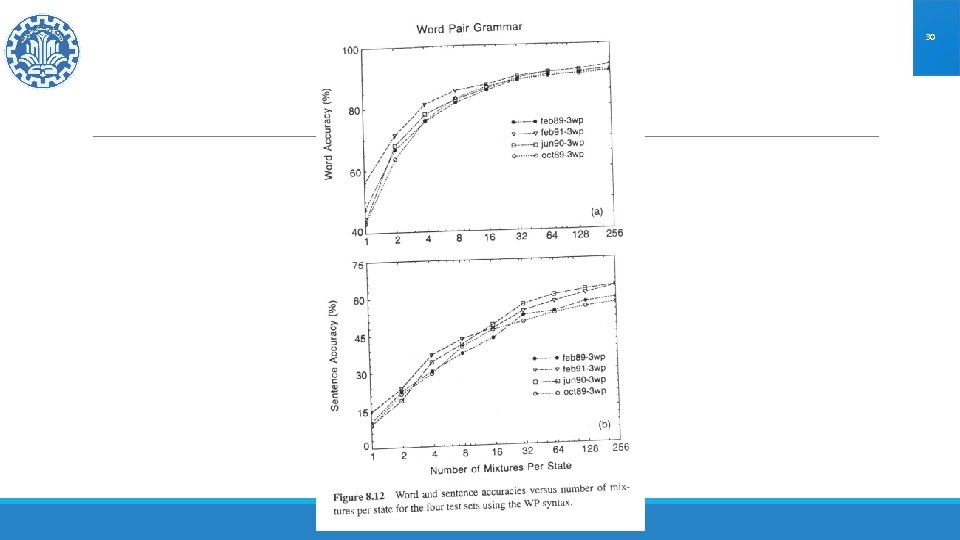

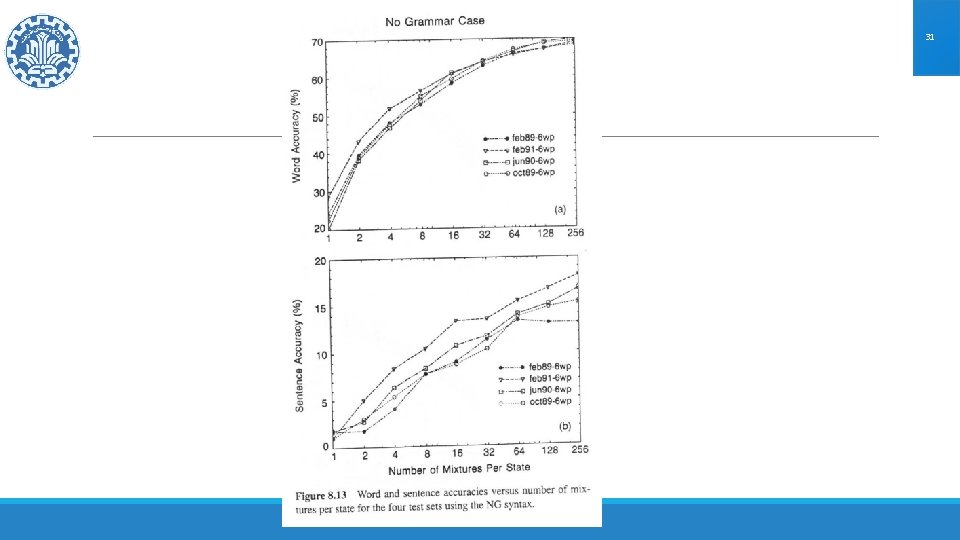

State Tying

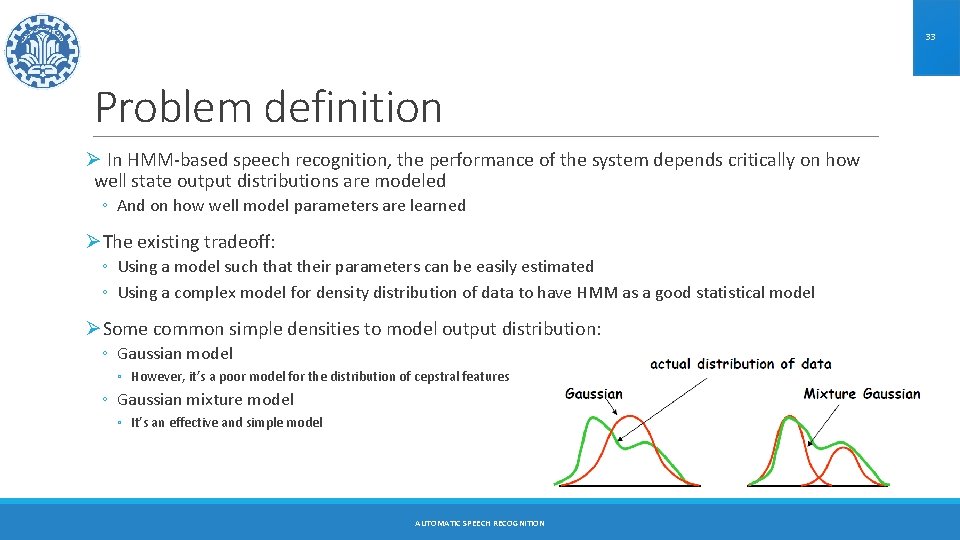

Problem definition

- In HMM-based speech recognition, system performance depends critically on how well the state output distributions are modeled,
  - and on how well the model parameters are learned.
- The existing tradeoff:
  - using a model whose parameters can be easily estimated, versus
  - using a complex model of the data density so that the HMM is a good statistical model.
- Some common simple densities for modeling the output distribution:
  - Gaussian model: however, it is a poor model for the distribution of cepstral features.
  - Gaussian mixture model: an effective and simple model.

Problem definition

- The parameters required to specify a mixture of K Gaussians include K mean vectors, K covariance matrices, and K mixture weights.
- A recognizer with tens (or hundreds) of thousands of HMM states will require hundreds of thousands (or millions) of parameters to specify all state output densities.
- Most training corpora cannot provide enough training data to learn all these parameters effectively.
  - Parameters for the state output densities of sub-word units that never appear in the training data cannot be learned at all.
- The key problem: maintaining the balance between model complexity and the available training data.
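To make the parameter list concrete, the sketch below evaluates the log output probability of one HMM state whose density is a K-component Gaussian mixture. It assumes diagonal covariances (so each covariance matrix reduces to a vector of D variances), which is a common simplification rather than something the slide prescribes. The log-sum-exp step keeps the computation stable for 39-dimensional features, where individual component likelihoods can underflow.

```python
import numpy as np


def gmm_log_likelihood(x, weights, means, variances):
    """log b_j(x) for one HMM state j whose output density is a K-component
    Gaussian mixture with diagonal covariances.
    x: (D,), weights: (K,), means and variances: (K, D)."""
    d = x.shape[0]
    # log N(x; mu_k, diag(var_k)) for every component k
    log_norm = -0.5 * (d * np.log(2 * np.pi) + np.sum(np.log(variances), axis=1))
    log_exp = -0.5 * np.sum((x - means) ** 2 / variances, axis=1)
    log_comp = np.log(weights) + log_norm + log_exp
    # log-sum-exp over components avoids underflow
    top = np.max(log_comp)
    return top + np.log(np.sum(np.exp(log_comp - top)))


x = np.zeros(1)
print(gmm_log_likelihood(x, np.array([1.0]), np.zeros((1, 1)), np.ones((1, 1))))
```

With K components and D-dimensional features, this state needs K*D means, K*D variances, and K weights; multiplying by the number of states gives the totals discussed above.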

Training the HMM

- To train the HMM for a sub-word unit, data from all instances of the unit in the training corpus are used to estimate the parameters.
- This process can be:
  - context-independent, or
  - context-dependent.

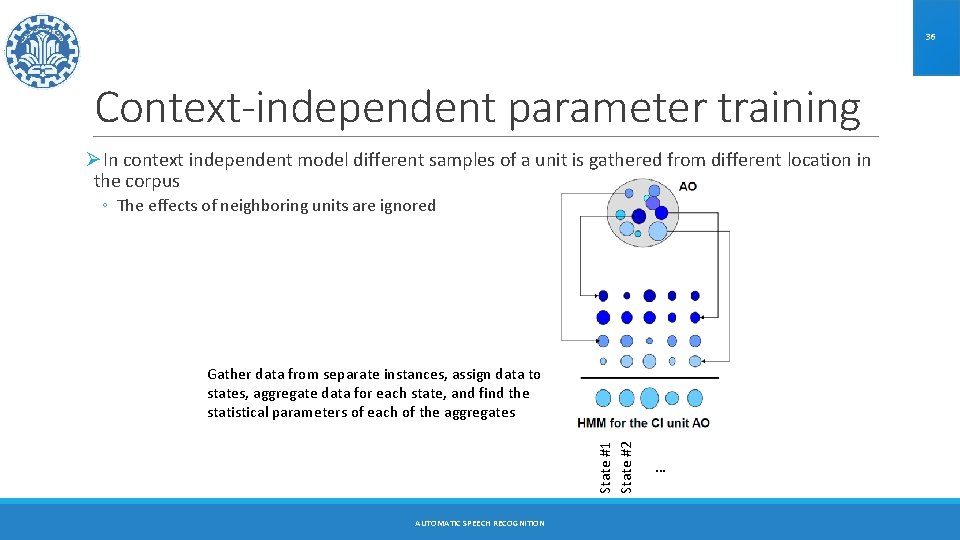

Context-independent parameter training

- In a context-independent model, different samples of a unit are gathered from different locations in the corpus.
  - The effects of neighboring units are ignored.
- Gather data from separate instances, assign the data to states, aggregate the data for each state, and find the statistical parameters of each aggregate.

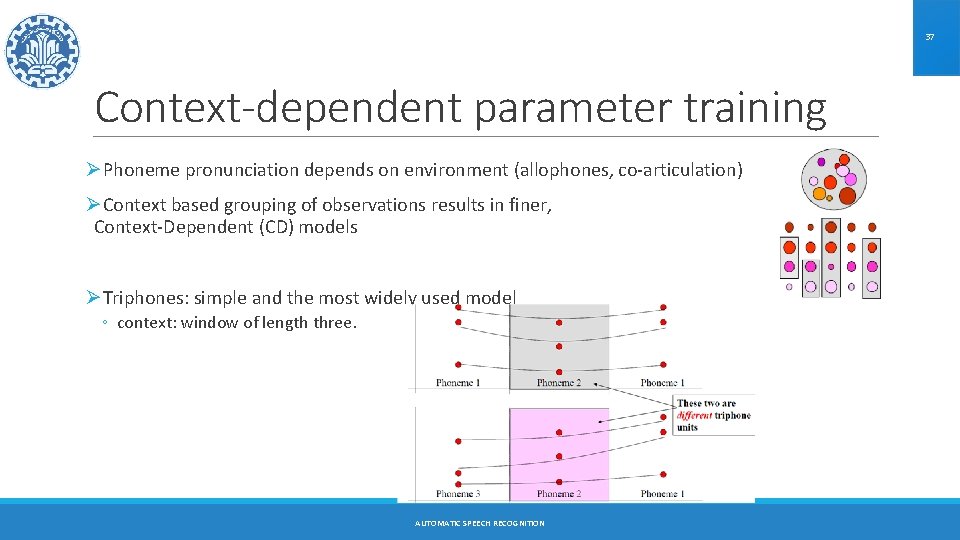
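The four steps (gather, assign, aggregate, estimate) can be sketched as follows for a single unit with single-Gaussian, diagonal-covariance states. The state assignment here is a uniform segmentation of each instance, which is an assumption made to keep the example short; a real trainer would use Viterbi or Baum-Welch alignment and iterate.

```python
import numpy as np


def train_ci_states(instances, n_states=3):
    """Estimate (mean, variance) for each state of one context-independent unit.
    instances: list of (T_i, D) feature arrays, one per occurrence of the unit,
    gathered from anywhere in the corpus (neighboring units are ignored)."""
    pooled = [[] for _ in range(n_states)]
    for frames in instances:
        # Assign frames to states: split each instance into n_states contiguous,
        # roughly equal segments (uniform segmentation, an assumption).
        for state, segment in enumerate(np.array_split(frames, n_states)):
            pooled[state].append(segment)
    params = []
    for segments in pooled:
        data = np.concatenate(segments, axis=0)   # aggregate all data for this state
        params.append((data.mean(axis=0), data.var(axis=0)))
    return params


instances = [np.arange(6.0).reshape(6, 1), np.arange(3.0).reshape(3, 1)]
for mean, var in train_ci_states(instances):
    print(mean, var)
```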

Context-dependent parameter training

- Phoneme pronunciation depends on the environment (allophones, co-articulation).
- Grouping observations by context results in finer, context-dependent (CD) models.
- Triphones: simple and the most widely used model
  - context: a window of length three.

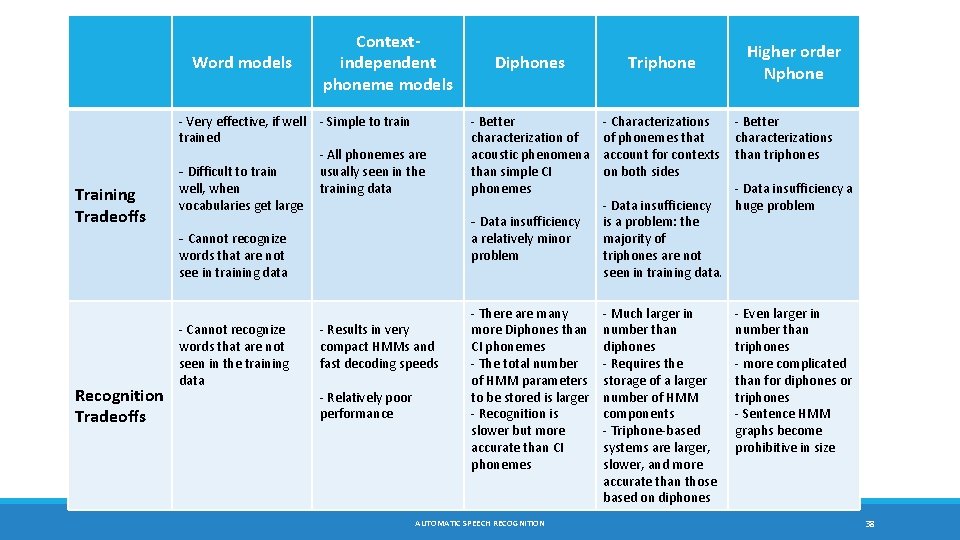

| Unit | Training tradeoffs | Recognition tradeoffs |
|---|---|---|
| Word models | Very effective, if well trained; difficult to train well when vocabularies get large | Cannot recognize words that are not seen in the training data |
| Context-independent phoneme models | Simple to train; all phonemes are usually seen in the training data | Results in very compact HMMs and fast decoding speeds; relatively poor performance |
| Diphones | Better characterization of acoustic phenomena than simple CI phonemes; data insufficiency a relatively minor problem | There are many more diphones than CI phonemes; the total number of HMM parameters to be stored is larger; recognition is slower but more accurate than with CI phonemes |
| Triphones | Characterizations of phonemes that account for contexts on both sides; data insufficiency is a problem: the majority of triphones are not seen in training data | Much larger in number than diphones; requires the storage of a larger number of HMM components; triphone-based systems are larger, slower, and more accurate than those based on diphones |
| Higher-order N-phones | Better characterizations than triphones; data insufficiency a huge problem | Even larger in number than triphones; more complicated than for diphones or triphones; sentence HMM graphs become prohibitive in size |

What to use?

- Word models are best when the vocabulary is small (e.g., digits).
- CI phoneme-based models are rarely used.
- Where accuracy is of prime importance, triphone models are usually used.
- If a reduced memory footprint and speed are important, e.g., in embedded recognizers, diphone models are often used.
- Higher-order N-phone models are rarely used.

Triphones

- To build the HMM for a word, we simply concatenate the HMMs for the individual triphones in it.
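Concatenation can be sketched at the level of transition matrices. The example below assumes strictly left-to-right unit HMMs without skip transitions, where each emitting state either loops on itself or moves to the next state; the exit transition of a unit's last state becomes the entry into the next unit's first state. The self-loop probabilities are made-up values, and a single non-emitting exit state is appended at the end of the word.

```python
import numpy as np


def concatenate_left_to_right(unit_self_loops):
    """Transition matrix of a word HMM built by chaining unit HMMs.
    unit_self_loops: one list per unit (e.g. per triphone) with the self-loop
    probability of each emitting state. No skip transitions (an assumption)."""
    loops = [p for unit in unit_self_loops for p in unit]
    n = len(loops)
    a = np.zeros((n + 1, n + 1))
    for i, p in enumerate(loops):
        a[i, i] = p             # stay in the current state
        a[i, i + 1] = 1.0 - p   # advance; a unit's last state feeds the next unit's first state
    a[n, n] = 1.0               # extra exit state (absorbing here, for a valid stochastic matrix)
    return a


# Three triphones with three emitting states each -> 9 emitting states + 1 exit state.
word = concatenate_left_to_right([[0.6, 0.6, 0.6], [0.5, 0.5, 0.5], [0.7, 0.7, 0.7]])
print(word.shape)  # (10, 10)
```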

Triphones

- Triphones at word boundaries depend on the neighboring words.
  - Cross-word triphones: context spanning word boundaries, important for accurate modeling.
- A triphone in the middle of a word sounds different from the same triphone at a word boundary,
  - e.g., the word-internal triphone AX(G, T) from GUT: G AX T
  - vs. the cross-word triphone AX(G, T) in BIG ATTEMPT.
- This significantly complicates the HMM for the language (through which we find the best path for recognition),
  - resulting in larger HMMs and slower search.
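The sketch below expands a word sequence into triphone labels in the P(L, R) notation used above, either letting the context cross word boundaries or stopping it there. The lexicon pronunciations are simplified and hypothetical, and marking a missing context with `-` is an assumed convention. It reproduces the slide's example: the AX at the start of ATTEMPT gets left context G only when cross-word context is used.

```python
# Hypothetical, simplified pronunciation lexicon.
LEXICON = {
    "GUT": ["G", "AX", "T"],
    "BIG": ["B", "IH", "G"],
    "ATTEMPT": ["AX", "T", "EH", "M", "P", "T"],
}


def triphones(words, lexicon, cross_word=True):
    """Label every phone as P(L, R). With cross_word=False, the context stops
    at word boundaries and is written as '-' (an assumed convention)."""
    phones = [(w_idx, p) for w_idx, w in enumerate(words) for p in lexicon[w]]
    labels = []
    for k, (w_idx, p) in enumerate(phones):
        left = phones[k - 1] if k > 0 else None
        right = phones[k + 1] if k + 1 < len(phones) else None
        if not cross_word:
            # Keep a neighbor only if it belongs to the same word
            left = left if left and left[0] == w_idx else None
            right = right if right and right[0] == w_idx else None
        labels.append(f"{p}({left[1] if left else '-'}, {right[1] if right else '-'})")
    return labels


print(triphones(["GUT"], LEXICON))                                    # word-internal AX(G, T)
print(triphones(["BIG", "ATTEMPT"], LEXICON)[3])                      # AX(G, T)
print(triphones(["BIG", "ATTEMPT"], LEXICON, cross_word=False)[3])    # AX(-, T)
```

With cross-word contexts, the label of a word's first and last phones depends on which word is hypothesized next to it, which is exactly what enlarges the search graph.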

Problems with triphones

- Parameters: very large numbers for VLVR.
  - Number of phones: about 50
  - Number of CD phones: possibly 50^3 = 125,000,
  - but not all of them occur (phonotactic constraints). In practice, about 60,000.
- Number of HMM parameters:
  - with 3 states per triphone, 16 mixture components per state, diagonal covariances, and 39-dimensional feature vectors: 60,000 × 3 × (39 × 16 × 2 + 16) ≈ 228 M
- Data sparsity:
  - some triphones, particularly cross-word triphones, do not appear in the training sample.
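The parameter count can be checked directly. Each diagonal-covariance component has 39 means and 39 variances, and each state adds 16 mixture weights; transition probabilities are ignored, as in the formula above. The second call uses a hypothetical figure of 5,000 tied states only to show the scale of reduction that tying can bring.

```python
def hmm_param_count(n_models, states_per_model, n_mix, dim):
    """Output-density parameters with diagonal-covariance GMMs:
    per state, n_mix * (dim means + dim variances) + n_mix weights."""
    per_state = dim * n_mix * 2 + n_mix
    return n_models * states_per_model * per_state


untied = hmm_param_count(60_000, 3, 16, 39)
print(untied)        # 227520000, about 228 M
print(50 ** 3)       # 125000 possible triphones before phonotactic constraints
tied = hmm_param_count(5_000, 1, 16, 39)   # hypothetical 5,000 tied states
print(tied)          # 6320000
```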

Solution

- Parameter sharing: cluster parameters with similar characteristics ("parameter tying"),
  - e.g., by clustering HMM states.
- Parameter sharing is a technique by which several similar HMM states share a common set of HMM parameters.
- Since the shared HMM parameters are now trained on the data from all similar states, more data are available to train each HMM parameter.

Parameter sharing types

- Continuous density HMMs:
  - individual states may share the same mixture distributions.
- Semi-continuous density HMMs:
  - all states may share the same Gaussians, but with different (state-specific) mixture weights;
  - the mixture weights can then be shared as well.
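In a semi-continuous HMM, a single codebook of M Gaussians is shared by all states, and each state keeps only its own weight vector over that codebook. A practical consequence, sketched below, is that each Gaussian is evaluated once per frame and every state's likelihood is then a weighted sum. Diagonal covariances and all numeric values are assumptions for illustration; sharing the weights as well simply means that several states point to the same row of `state_weights`.

```python
import numpy as np


def shared_gaussian_logliks(x, means, variances):
    """log N(x; mu_k, diag(var_k)) for each of the M shared codebook Gaussians."""
    return -0.5 * (np.sum(np.log(2 * np.pi * variances), axis=1)
                   + np.sum((x - means) ** 2 / variances, axis=1))


def semicontinuous_state_logliks(x, means, variances, state_weights):
    """state_weights: (S, M) state-specific weights over the same M Gaussians.
    Returns log b_s(x) for every state s, evaluating each Gaussian only once."""
    g = shared_gaussian_logliks(x, means, variances)  # (M,)
    top = g.max()
    # b_s(x) = sum_k c_{s,k} N_k(x), computed in a scaled domain for stability
    return top + np.log(state_weights @ np.exp(g - top))


means = np.array([[0.0], [3.0]])
variances = np.array([[1.0], [1.0]])
state_weights = np.array([[1.0, 0.0], [0.5, 0.5]])
print(semicontinuous_state_logliks(np.array([0.0]), means, variances, state_weights))
```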

Two techniques

- Data-driven clustering
  - Groups HMM states together based on the similarity of their distributions, until all groups have sufficient data.
  - The densities used for grouping are poorly estimated in the first place.
  - Has no estimates for unseen sub-word units.
  - Places no restrictions on HMM topologies, etc.
- Decision trees
  - Clustering is based on expert-specified rules; the selection of rules is data-driven.
  - Based on externally provided rules; very robust if the rules are good.
  - Provides a mechanism for estimating unseen sub-word units.
  - Restricts HMM topologies.

Decision Tree

- Basic principle:
  - Recursively partition a data set to maximize a pre-specified objective function.
- The actual objective function depends on the specific decision tree algorithm.
- The objective is to separate the data into increasingly "pure" subsets, such that most of the data in any subset belongs to a single class.
  - In our case, the "classes" are HMM states.
- Most commonly used tools for inducing decision trees:
  - CART (classification and regression trees)
  - C4.5

Decision Tree in our problem

- Algorithm:
  - Initially, group together all triphones of the same phoneme.
  - Split each group according to decision tree questions about the left or right phonetic context.
  - All triphones (HMM states) at the same leaf are clustered (tied).
- Advantage:
  - even unseen triphones are assigned to a cluster and thus to a model.
- Questions:
  - Which decision tree questions? Which criterion?
  - Examples of predefined binary questions:
    - Is the phoneme to the left an /l/?
    - Is the phoneme to the right a nasal?
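Once a tree has been grown, finding the tied state for any triphone, seen or unseen, is just a walk from the root answering yes/no questions about its context. The sketch below hand-builds a tiny tree for one state of the phoneme /AX/ using the two example questions above; the tree shape, the nasal set, and the leaf names are hypothetical.

```python
NASALS = {"M", "N", "NG"}


def right_is_nasal(left, right):
    return right in NASALS          # "Is the phoneme to the right a nasal?"


def left_is_l(left, right):
    return left == "L"              # "Is the phoneme to the left an /l/?"


# Internal node: (question, yes_subtree, no_subtree); leaf: tied-state name.
TREE = (right_is_nasal, (left_is_l, "AX_s2_1", "AX_s2_2"), "AX_s2_3")


def tied_state(tree, left, right):
    """Walk the tree with a triphone's contexts until a leaf (tied state) is reached."""
    while not isinstance(tree, str):
        question, yes, no = tree
        tree = yes if question(left, right) else no
    return tree


print(tied_state(TREE, "L", "N"))  # AX_s2_1
print(tied_state(TREE, "G", "T"))  # AX_s2_3, even if AX(G, T) never occurred in training
```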

Clustering context-dependent phones

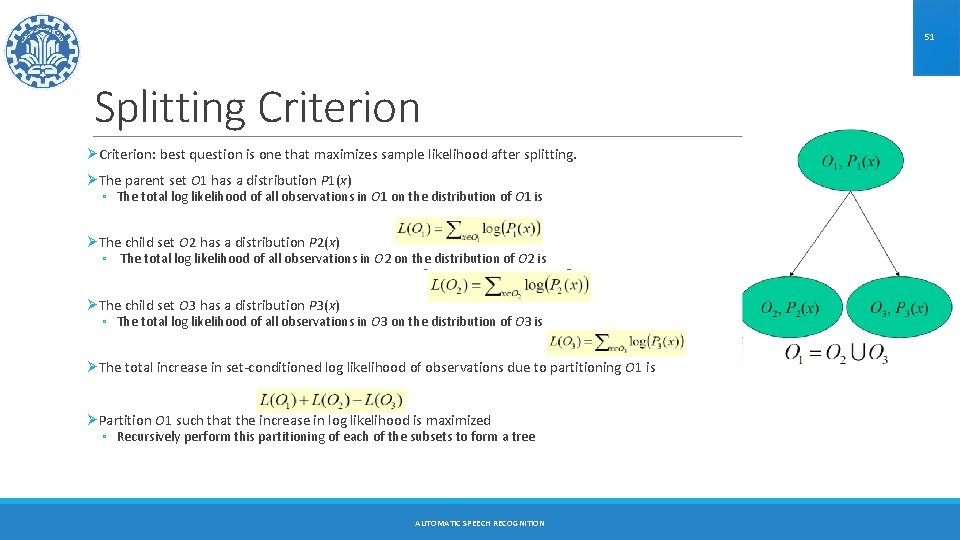

Splitting Criterion

- Criterion: the best question is the one that maximizes the sample likelihood after splitting.
- The parent set $O_1$ has a distribution $P_1(x)$.
  - The total log likelihood of all observations in $O_1$ under the distribution of $O_1$ is $L_1 = \sum_{x \in O_1} \log P_1(x)$.
- The child set $O_2$ has a distribution $P_2(x)$.
  - The total log likelihood of all observations in $O_2$ under the distribution of $O_2$ is $L_2 = \sum_{x \in O_2} \log P_2(x)$.
- The child set $O_3$ has a distribution $P_3(x)$.
  - The total log likelihood of all observations in $O_3$ under the distribution of $O_3$ is $L_3 = \sum_{x \in O_3} \log P_3(x)$.
- The total increase in set-conditioned log likelihood of the observations due to partitioning $O_1$ is $\Delta L = L_2 + L_3 - L_1$.
- Partition $O_1$ such that the increase in log likelihood is maximized.
  - Recursively perform this partitioning on each of the subsets to form a tree.

Stopping Criteria

- Grow-then-prune strategy with cross-validation on a held-out data set.
- Heuristics in VLVR:
  - the question does not yield a significant increase in log likelihood;
  - there is insufficient data for the question;
  - computational limitations.

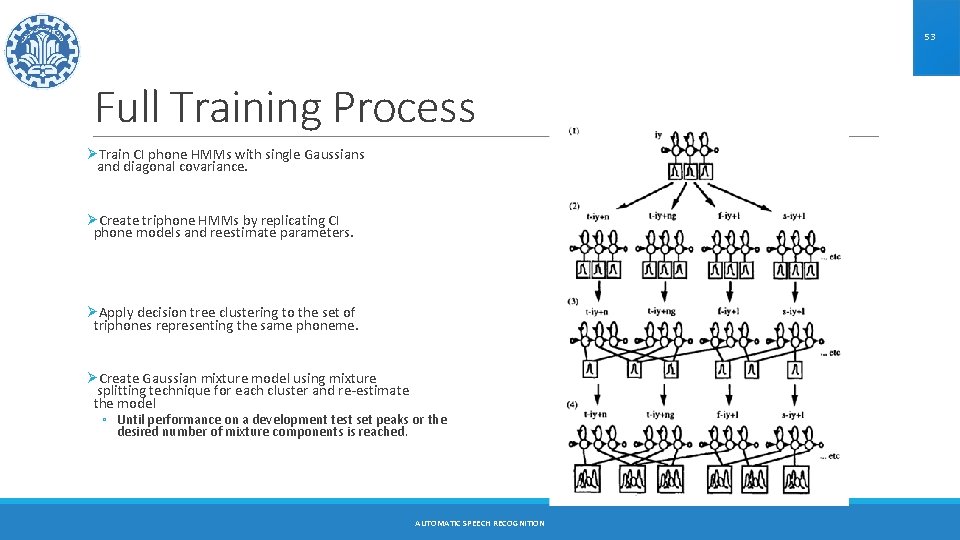
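The splitting criterion and two of the stopping heuristics can be combined in one step of tree growth. The sketch assumes each set is modeled by its own maximum-likelihood diagonal Gaussian, for which the total log likelihood has the closed form -N/2 (D(1 + log 2π) + Σ_d log σ_d²), so ΔL = L_2 + L_3 - L_1 needs only counts and variances. The thresholds, context keys, questions, and data are hypothetical; a zero variance in a child would make the log likelihood infinite, so real systems usually floor variances.

```python
import numpy as np

NASALS = {"M", "N", "NG"}


def gaussian_loglik(data):
    """Total log likelihood of data (N, D) under its own ML diagonal Gaussian."""
    n, d = data.shape
    return -0.5 * n * (d * (1.0 + np.log(2 * np.pi)) + np.sum(np.log(data.var(axis=0))))


def best_split(frames_by_context, questions, min_gain=0.0, min_count=2):
    """frames_by_context: {(left, right): (N_c, D) array} for one state of one phone.
    questions: {name: predicate(left, right) -> bool}.
    Returns (question name, gain), or None if no question passes the stopping criteria."""
    base = gaussian_loglik(np.concatenate(list(frames_by_context.values())))   # L_1
    best = None
    for name, q in questions.items():
        yes = [f for ctx, f in frames_by_context.items() if q(*ctx)]
        no = [f for ctx, f in frames_by_context.items() if not q(*ctx)]
        if not yes or not no:
            continue
        yes, no = np.concatenate(yes), np.concatenate(no)
        if len(yes) < min_count or len(no) < min_count:
            continue                                   # insufficient data for this question
        gain = gaussian_loglik(yes) + gaussian_loglik(no) - base   # L_2 + L_3 - L_1
        if gain > min_gain and (best is None or gain > best[1]):
            best = (name, gain)
    return best


frames = {
    ("L", "N"): np.array([[0.0], [0.2]]), ("L", "M"): np.array([[0.1], [0.3]]),
    ("K", "T"): np.array([[5.0], [5.2]]), ("G", "T"): np.array([[5.1], [5.3]]),
}
questions = {"right_is_nasal": lambda l, r: r in NASALS, "left_is_K": lambda l, r: l == "K"}
print(best_split(frames, questions)[0])  # right_is_nasal
```

Applying `best_split` recursively to each child until it returns `None` grows the tree; the `min_gain` and `min_count` thresholds play the role of the "no significant increase" and "insufficient data" heuristics.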

Full Training Process

- Train CI phone HMMs with single Gaussians and diagonal covariances.
- Create triphone HMMs by replicating the CI phone models, and re-estimate the parameters.
- Apply decision tree clustering to the set of triphones representing the same phoneme.
- Create a Gaussian mixture model for each cluster using the mixture-splitting technique, and re-estimate the model
  - until performance on a development test set peaks or the desired number of mixture components is reached.
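A minimal sketch of one mixture-splitting step: every Gaussian is replaced by two copies with halved weights and means offset by plus and minus a fraction of a standard deviation. The offset of 0.2 standard deviations is an assumed, commonly used value, and splitting all components at once is a simplification (some toolkits split the heaviest component first). In the full procedure, each split is followed by re-estimation, which is not shown here.

```python
import numpy as np


def split_mixtures(weights, means, variances, perturb=0.2):
    """Double the number of components of a diagonal-covariance GMM.
    weights: (K,), means and variances: (K, D). Returns (2K,), (2K, D), (2K, D)."""
    std = np.sqrt(variances)
    new_means = np.concatenate([means + perturb * std, means - perturb * std])
    new_vars = np.concatenate([variances, variances])
    new_weights = np.concatenate([weights, weights]) / 2.0   # weights still sum to 1
    return new_weights, new_means, new_vars


w, m, v = np.array([1.0]), np.array([[0.0]]), np.array([[4.0]])
for _ in range(4):          # 1 -> 2 -> 4 -> 8 -> 16 components
    w, m, v = split_mixtures(w, m, v)
    # (re-estimate w, m, v on the cluster's data here before the next split)
print(len(w))               # 16
```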
