Languages and Compilers (SProg og Oversættere) Bent Thomsen

Languages and Compilers (SProg og Oversættere) Bent Thomsen Department of Computer Science Aalborg University With acknowledgement to Norm Hutchinson whose slides this lecture is based on. 1

Curricula (Studie ordning) The purpose of the course is for the student to gain knowledge of important principles in programming languages and for the student to gain an understanding of techniques for describing and compiling programming languages. 2

What was this course about? • Programming Language Design – Concepts and Paradigms – Ideas and philosophy – Syntax and Semantics • Compiler Construction – Tools and Techniques – Implementations – The nuts and bolts 3

The principal paradigms • Imperative Programming (C) • Object-Oriented Programming (C++) • Logic/Declarative Programming (Prolog) • Functional/Applicative Programming (Lisp) • New paradigms? – Agent Oriented Programming – Business Process Oriented (Web computing) – Grid Oriented – Aspect Oriented Programming 4

Criteria in a good language design • Writability: The quality of a language that enables a programmer to use it to express a computation clearly, correctly, concisely, and quickly. • Readability: The quality of a language that enables a programmer to understand the nature of a computation easily and accurately. • Orthogonality: The quality of a language whose features have as few restrictions as possible and can be combined in any meaningful way. • Reliability: The quality of a language that assures a program will not behave in unexpected or disastrous ways during execution. • Maintainability: The quality of a language that makes it easy to find and correct errors and to add new features. 5

Criteria (Continued) • Generality: The quality of a language that avoids special cases in the availability or use of constructs and combines closely related constructs into a single more general one. • Uniformity: The quality of a language in which similar features look similar and behave similarly. • Extensibility: The quality of a language that provides some general mechanism for the user to add new constructs to the language. • Standardability: The quality of a language that allows programs written in it to be transported from one computer to another without significant change in language structure. • Implementability: The quality of a language for which a translator or interpreter can be written. This relates to the complexity of the language definition. 6

Important! • Syntax is the visible part of a programming language – Programming Language designers can waste a lot of time discussing unimportant details of syntax • The language paradigm is the next most visible part – The choice of paradigm, and therefore language, depends on how humans best think about the problem – There are no right models of computations – just different models of computations, some more suited for certain classes of problems than others • The most invisible part is the language semantics – Clear semantics usually leads to simple and efficient implementations 7

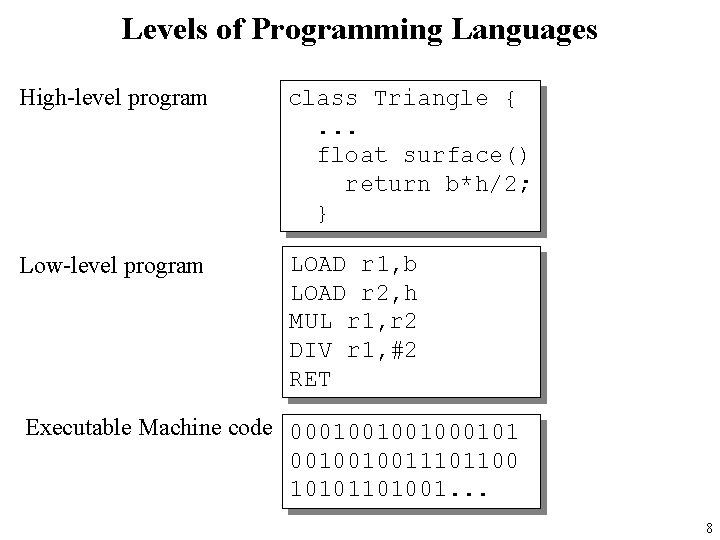

Levels of Programming Languages • High-level program: class Triangle { ... float surface() { return b*h/2; } } • Low-level program: LOAD r1, b; LOAD r2, h; MUL r1, r2; DIV r1, #2; RET • Executable machine code: 000100100101 0010010011101100 10101101001... 8

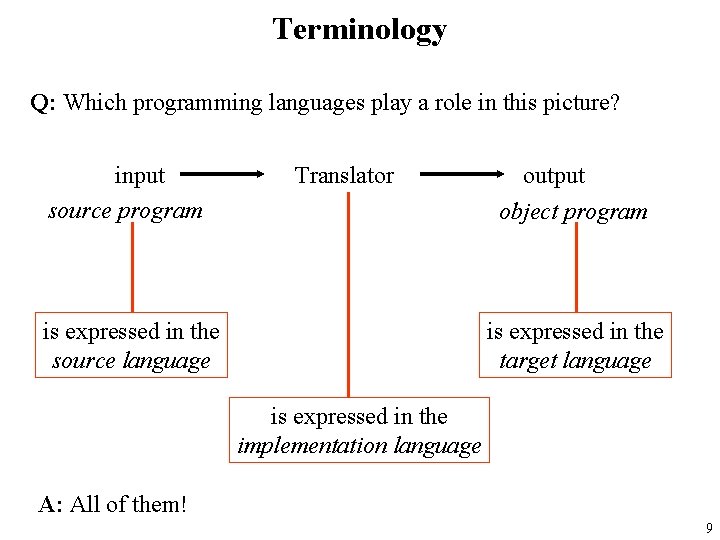

Terminology Q: Which programming languages play a role in this picture? A translator takes a source program as input and produces an object program as output. The source program is expressed in the source language, the object program is expressed in the target language, and the translator itself is expressed in the implementation language. A: All of them! 9

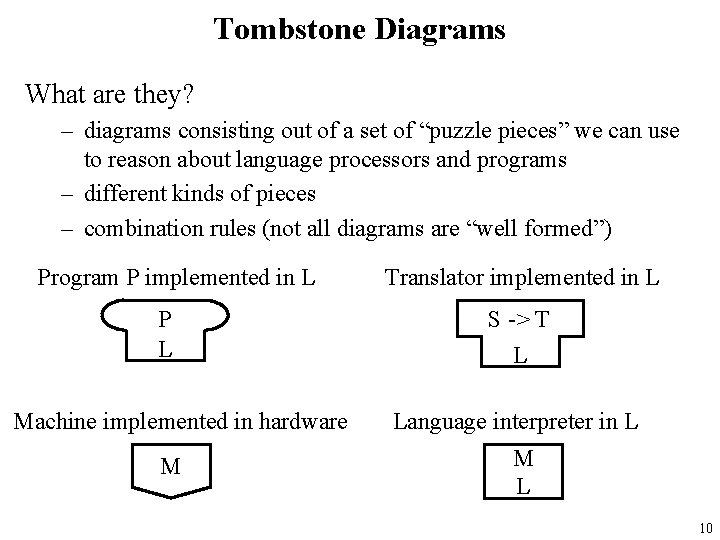

Tombstone Diagrams What are they? – diagrams consisting of a set of “puzzle pieces” we can use to reason about language processors and programs – different kinds of pieces – combination rules (not all diagrams are “well formed”) Kinds of pieces: • Program P implemented in L (P over L) • Machine M implemented in hardware • Translator from S to T implemented in L (S -> T over L) • Interpreter for language M implemented in L (M over L) 10

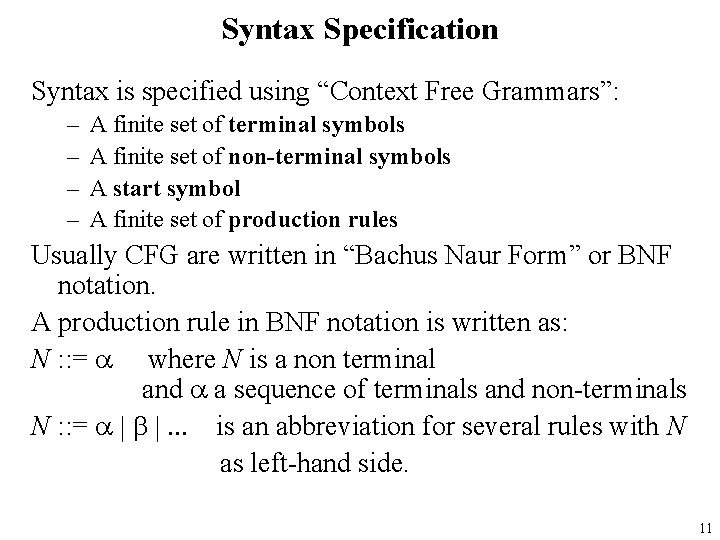

Syntax Specification Syntax is specified using “Context Free Grammars”: – A finite set of terminal symbols – A finite set of non-terminal symbols – A start symbol – A finite set of production rules Usually CFGs are written in “Backus-Naur Form” or BNF notation. A production rule in BNF notation is written as: N ::= α where N is a non-terminal and α is a sequence of terminals and non-terminals. N ::= α | β | ... is an abbreviation for several rules with N as left-hand side. 11

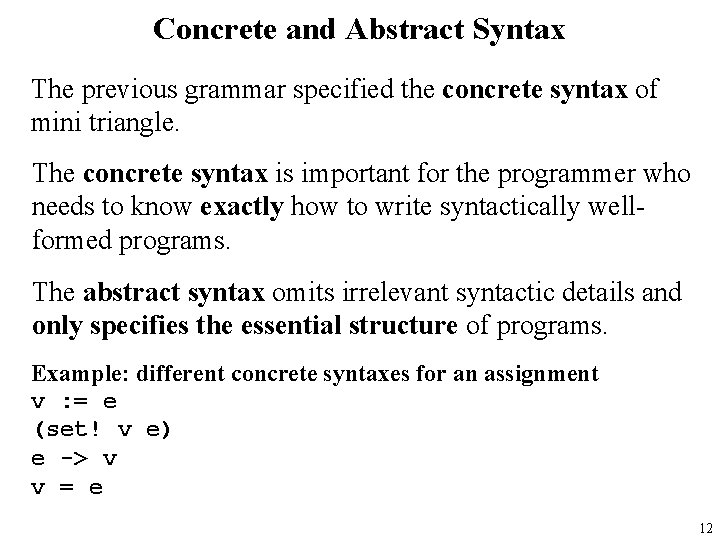

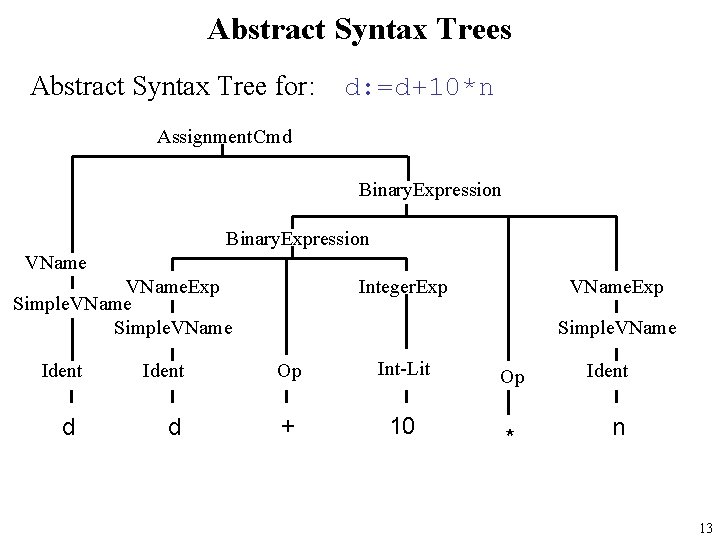

Concrete and Abstract Syntax The previous grammar specified the concrete syntax of Mini Triangle. The concrete syntax is important for the programmer who needs to know exactly how to write syntactically well-formed programs. The abstract syntax omits irrelevant syntactic details and only specifies the essential structure of programs. Example: different concrete syntaxes for an assignment • v := e • (set! v e) • e -> v • v = e 12

Abstract Syntax Trees Abstract Syntax Tree for: d := d+10*n [Figure: AST rooted at AssignmentCmd, with node labels BinaryExpression, VNameExp, SimpleVName, IntegerExp, Op (+, *), Int-Lit (10) and Ident (d, n)] 13
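A tree like the one on this slide can be sketched as plain Java objects. This is a minimal illustration, not the course's Triangle compiler: the class names and fields are hypothetical, and the grouping assumes Mini Triangle's grammar, where all binary operators have the same precedence and associate to the left, so d+10*n is read as (d+10)*n.

```java
// Hypothetical AST classes; names loosely follow the node labels on the slide.
abstract class Expression { }

class IntegerExpression extends Expression {
    final int value;
    IntegerExpression(int value) { this.value = value; }
    @Override public String toString() { return Integer.toString(value); }
}

class VnameExpression extends Expression {
    final String ident;
    VnameExpression(String ident) { this.ident = ident; }
    @Override public String toString() { return ident; }
}

class BinaryExpression extends Expression {
    final Expression left;
    final String op;
    final Expression right;
    BinaryExpression(Expression left, String op, Expression right) {
        this.left = left;
        this.op = op;
        this.right = right;
    }
    @Override public String toString() { return "(" + left + " " + op + " " + right + ")"; }
}

class AssignCommand {
    final String vname;
    final Expression expr;
    AssignCommand(String vname, Expression expr) {
        this.vname = vname;
        this.expr = expr;
    }
    @Override public String toString() { return vname + " := " + expr; }
}

class AstDemo {
    // Builds the tree for d := d+10*n, grouping left to right (assumed Mini Triangle rule).
    static AssignCommand example() {
        Expression sum = new BinaryExpression(
                new VnameExpression("d"), "+", new IntegerExpression(10));
        Expression product = new BinaryExpression(sum, "*", new VnameExpression("n"));
        return new AssignCommand("d", product);
    }

    public static void main(String[] args) {
        System.out.println(example());
    }
}
```

Printing the tree shows the structure that the concrete syntax leaves implicit: the parentheses in the output come from the tree shape, not from the source text.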

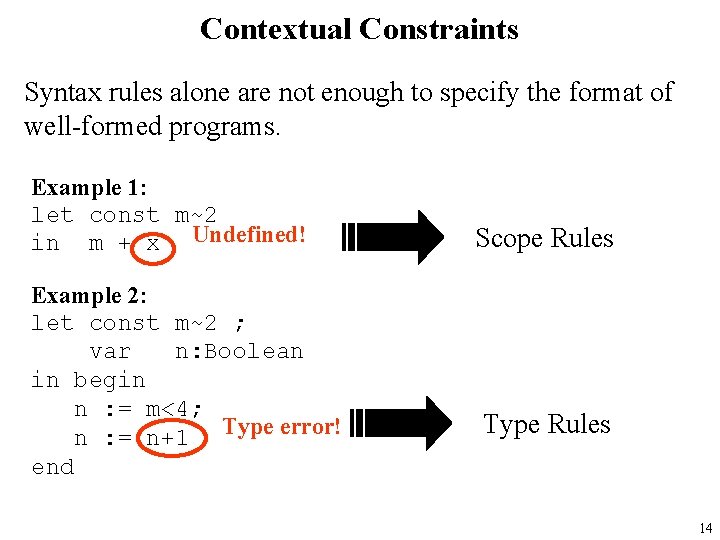

Contextual Constraints Syntax rules alone are not enough to specify the format of well-formed programs. Example 1 (Scope Rules): let const m~2 in m + x (x is undefined!) Example 2 (Type Rules): let const m~2 ; var n: Boolean in begin n := m<4; n := n+1 end (n+1 is a type error!) 14

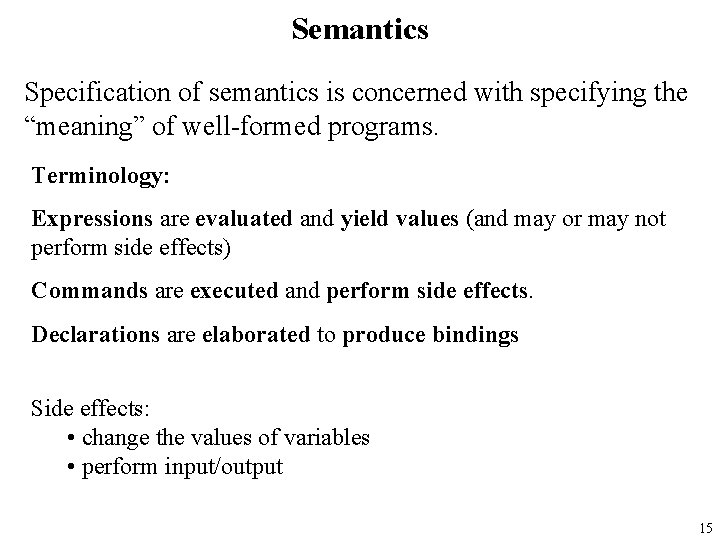

Semantics Specification of semantics is concerned with specifying the “meaning” of well-formed programs. Terminology: Expressions are evaluated and yield values (and may or may not perform side effects) Commands are executed and perform side effects. Declarations are elaborated to produce bindings Side effects: • change the values of variables • perform input/output 15

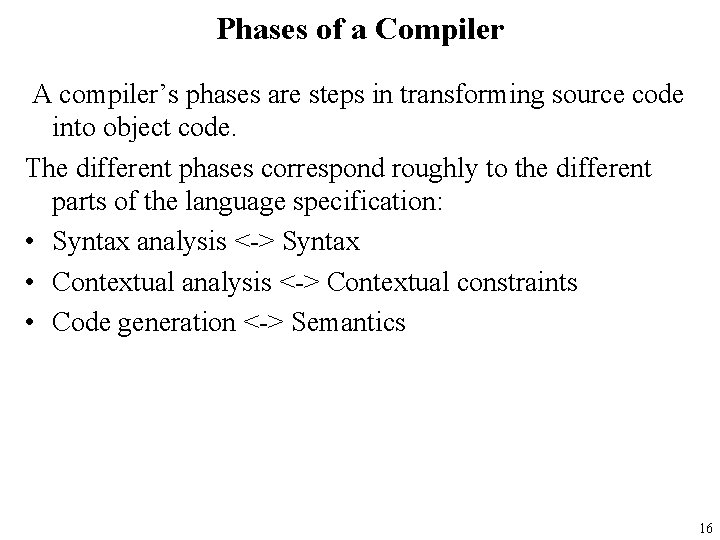

Phases of a Compiler A compiler’s phases are steps in transforming source code into object code. The different phases correspond roughly to the different parts of the language specification: • Syntax analysis <-> Syntax • Contextual analysis <-> Contextual constraints • Code generation <-> Semantics 16

The “Phases” of a Compiler Source Program -> Syntax Analysis (Error Reports) -> Abstract Syntax Tree -> Contextual Analysis (Error Reports) -> Decorated Abstract Syntax Tree -> Code Generation -> Object Code 17

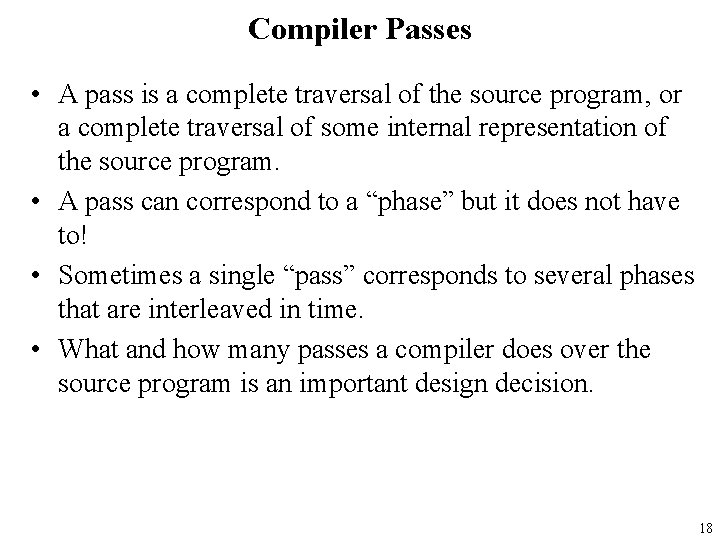

Compiler Passes • A pass is a complete traversal of the source program, or a complete traversal of some internal representation of the source program. • A pass can correspond to a “phase” but it does not have to! • Sometimes a single “pass” corresponds to several phases that are interleaved in time. • What and how many passes a compiler does over the source program is an important design decision. 18

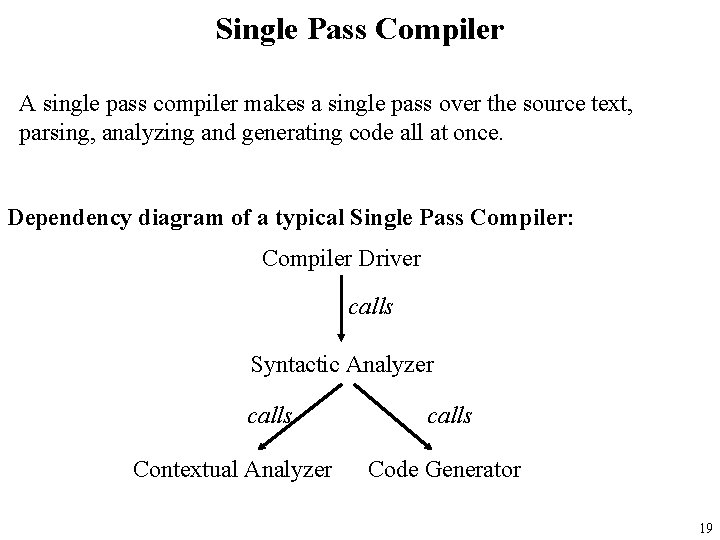

Single Pass Compiler A single pass compiler makes a single pass over the source text, parsing, analyzing and generating code all at once. Dependency diagram of a typical Single Pass Compiler: Compiler Driver calls Syntactic Analyzer calls Contextual Analyzer calls Code Generator 19

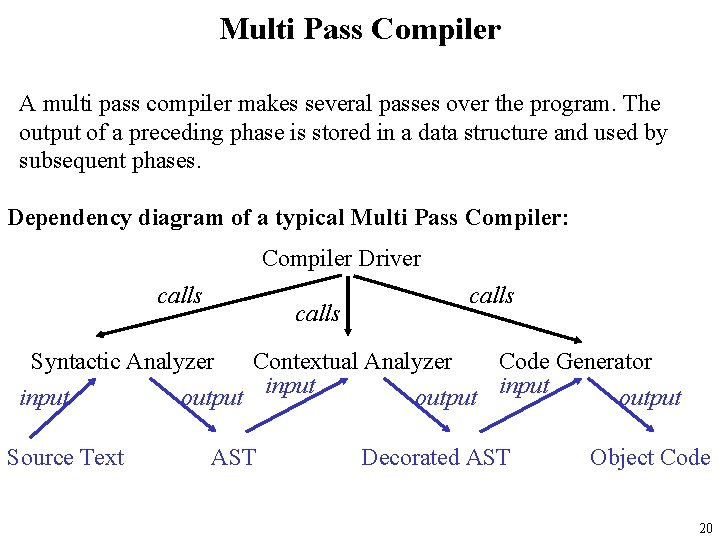

Multi Pass Compiler A multi pass compiler makes several passes over the program. The output of a preceding phase is stored in a data structure and used by subsequent phases. Dependency diagram of a typical Multi Pass Compiler: the Compiler Driver calls, in turn, • Syntactic Analyzer (input: Source Text, output: AST) • Contextual Analyzer (input: AST, output: Decorated AST) • Code Generator (input: Decorated AST, output: Object Code) 20

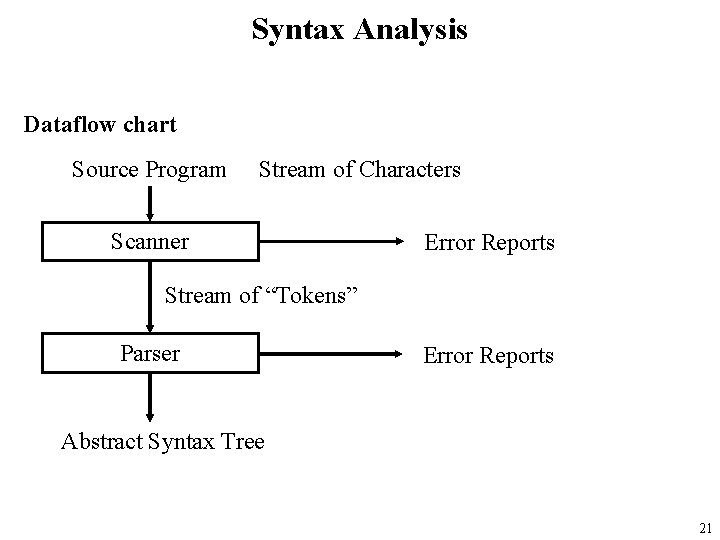

Syntax Analysis Dataflow chart: Source Program (Stream of Characters) -> Scanner (Error Reports) -> Stream of “Tokens” -> Parser (Error Reports) -> Abstract Syntax Tree 21

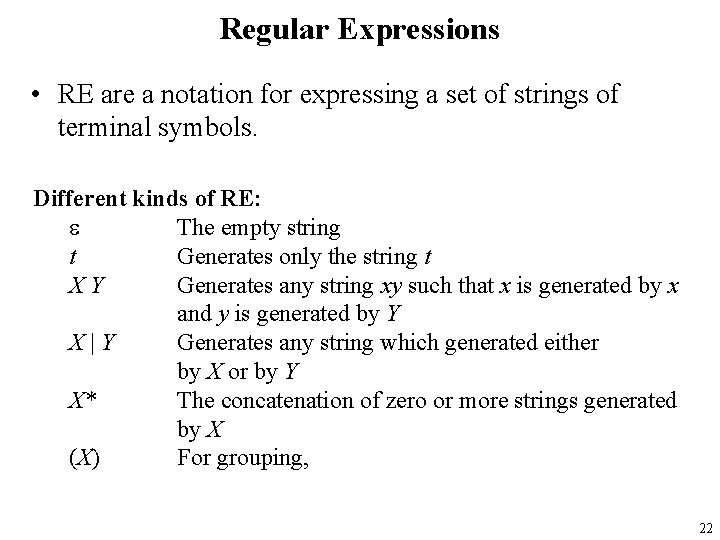

Regular Expressions • RE are a notation for expressing a set of strings of terminal symbols. Different kinds of RE: – ε: the empty string – t: generates only the string t – X Y: generates any string xy such that x is generated by X and y is generated by Y – X | Y: generates any string which is generated either by X or by Y – X*: the concatenation of zero or more strings generated by X – (X): for grouping 22

FA and the implementation of Scanners • Regular expressions, NDFA-ε, NDFA and DFA are all equivalent formalisms in terms of what languages can be defined with them. • Regular expressions are a convenient notation for describing the “tokens” of programming languages. • Regular expressions can be converted into FAs (the algorithm for conversion into NDFA-ε is straightforward) • DFAs can be easily implemented as computer programs. 23
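The operators listed above map directly onto the syntax of `java.util.regex`. The sketch below is only an illustration: the tiny identifier pattern (one of the letters a or b, followed by any mix of a, b, 0, 1) and the names `RegexDemo` and `isIdent` are made up for this example.

```java
import java.util.regex.Pattern;

class RegexDemo {
    // (a|b) uses alternation and grouping; juxtaposition is concatenation;
    // (a|b|0|1)* uses the star for "zero or more".
    static final Pattern IDENT = Pattern.compile("(a|b)(a|b|0|1)*");

    // True when the whole string is generated by the regular expression.
    static boolean isIdent(String s) {
        return IDENT.matcher(s).matches();
    }

    public static void main(String[] args) {
        System.out.println(isIdent("a0b1"));
        System.out.println(isIdent("0a"));
    }
}
```

Note that `matches()` tests the entire input, which corresponds to asking whether the string belongs to the set the RE denotes.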
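To make the last point concrete, here is a minimal hand-coded DFA in Java. It is a sketch under stated assumptions: the two token kinds (identifiers and integer literals), the state names and the `classify` helper are hypothetical and not taken from the course's scanner.

```java
class DfaDemo {
    // START is the initial state; IN_IDENT and IN_INT are accepting; ERROR is a trap state.
    enum State { START, IN_IDENT, IN_INT, ERROR }

    // The transition function of the DFA: exactly one next state per (state, character).
    static State step(State s, char c) {
        switch (s) {
            case START:
                if (Character.isLetter(c)) return State.IN_IDENT;
                if (Character.isDigit(c)) return State.IN_INT;
                return State.ERROR;
            case IN_IDENT:
                return Character.isLetterOrDigit(c) ? State.IN_IDENT : State.ERROR;
            case IN_INT:
                return Character.isDigit(c) ? State.IN_INT : State.ERROR;
            default:
                return State.ERROR;
        }
    }

    // Runs the DFA over the whole lexeme and reports which accepting state, if any, it ends in.
    static String classify(String lexeme) {
        State s = State.START;
        for (int i = 0; i < lexeme.length(); i++) {
            s = step(s, lexeme.charAt(i));
        }
        switch (s) {
            case IN_IDENT: return "IDENTIFIER";
            case IN_INT: return "INTLITERAL";
            default: return "ERROR";
        }
    }

    public static void main(String[] args) {
        System.out.println(classify("x1"));
        System.out.println(classify("42"));
    }
}
```

A real scanner would stop at the longest match and continue with the next token instead of classifying a whole string, but the state-plus-switch structure stays the same.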

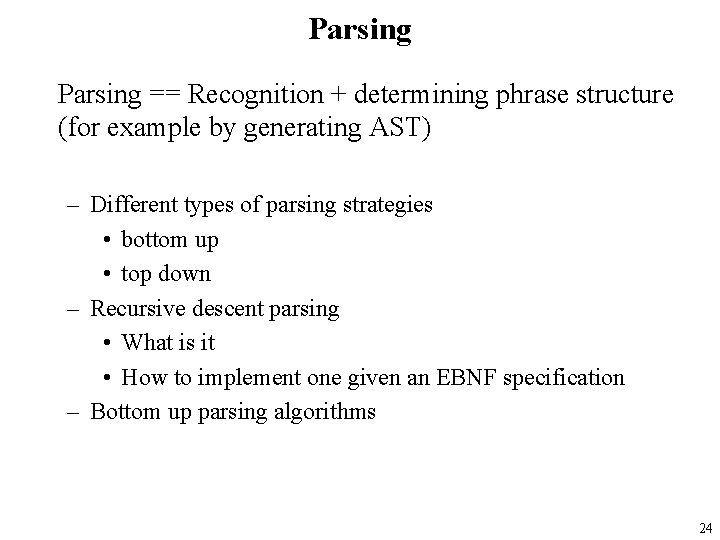

Parsing == Recognition + determining phrase structure (for example by generating AST) – Different types of parsing strategies • bottom up • top down – Recursive descent parsing • What is it • How to implement one given an EBNF specification – Bottom up parsing algorithms 24

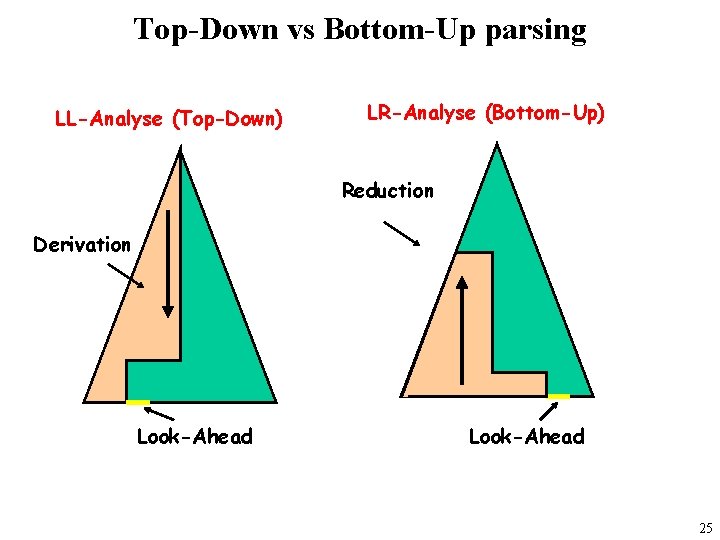

Top-Down vs Bottom-Up parsing • LL analysis (Top-Down): Derivation, guided by Look-Ahead • LR analysis (Bottom-Up): Reduction, guided by Look-Ahead 25

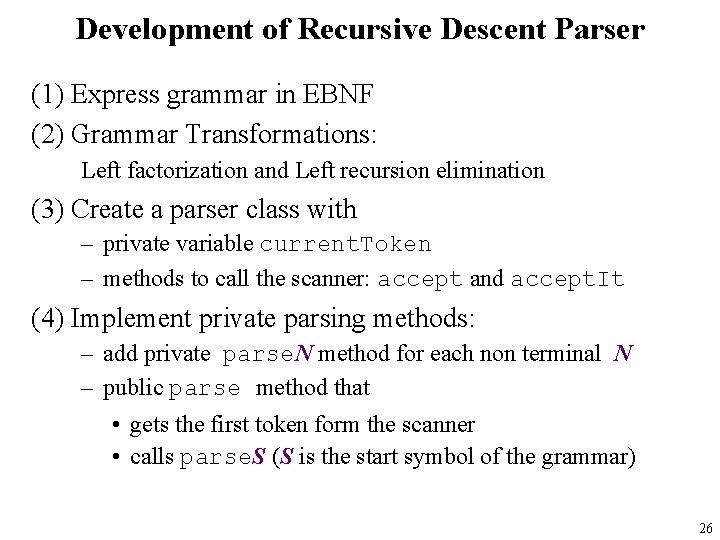

Development of Recursive Descent Parser (1) Express grammar in EBNF (2) Grammar Transformations: Left factorization and Left recursion elimination (3) Create a parser class with – private variable currentToken – methods to call the scanner: accept and acceptIt (4) Implement private parsing methods: – add private parseN method for each non-terminal N – public parse method that • gets the first token from the scanner • calls parseS (S is the start symbol of the grammar) 26

LL(1) Grammars • The presented algorithm to convert EBNF into a parser does not work for all possible grammars. • It only works for so called “LL(1)” grammars. • Basically, an LL(1) grammar is a grammar which can be parsed with a top-down parser with a lookahead (in the input stream of tokens) of one token. • What grammars are LL(1)? How can we recognize that a grammar is (or is not) LL(1)? ⇒ We can deduce the necessary conditions from the parser generation algorithm. ⇒ We can use a formal definition 27

Converting EBNF into RD parsers • The conversion of an EBNF specification into a Java implementation for a recursive descent parser is so “mechanical” that it can easily be automated! => JavaCC (“Java Compiler Compiler”) 28
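The recipe above can be followed step by step for a toy grammar. The sketch below assumes the EBNF `Expr ::= Term (('+' | '-') Term)*` and `Term ::= digit | '(' Expr ')'`, treats every character as one token, and evaluates the expression while parsing. The class name `MiniParser` and the `'$'` end marker are choices made for this example only.

```java
class MiniParser {
    private final String input;   // the "scanner": each character is one token
    private int pos = 0;
    private char currentToken;    // '$' marks the end of the input

    MiniParser(String input) { this.input = input; }

    private char nextToken() {
        return pos < input.length() ? input.charAt(pos++) : '$';
    }

    // Unconditionally moves on to the next token.
    private void acceptIt() { currentToken = nextToken(); }

    // Checks that the current token is the expected one, then moves on.
    private void accept(char expected) {
        if (currentToken != expected) {
            throw new IllegalStateException(
                    "expected '" + expected + "' but found '" + currentToken + "'");
        }
        acceptIt();
    }

    // Expr ::= Term (('+' | '-') Term)*
    private int parseExpr() {
        int value = parseTerm();
        while (currentToken == '+' || currentToken == '-') {
            char op = currentToken;
            acceptIt();
            int rhs = parseTerm();
            value = (op == '+') ? value + rhs : value - rhs;
        }
        return value;
    }

    // Term ::= digit | '(' Expr ')'
    private int parseTerm() {
        if (Character.isDigit(currentToken)) {
            int value = currentToken - '0';
            acceptIt();
            return value;
        }
        accept('(');
        int value = parseExpr();
        accept(')');
        return value;
    }

    // Gets the first token, parses the start symbol, and requires end of input.
    public int parse() {
        acceptIt();
        int value = parseExpr();
        accept('$');
        return value;
    }

    public static void main(String[] args) {
        System.out.println(new MiniParser("1+(2-3)+4").parse());
    }
}
```

Each non-terminal became one private method, the EBNF repetition became a `while` loop, and a single token of lookahead (`currentToken`) is enough to choose between alternatives.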

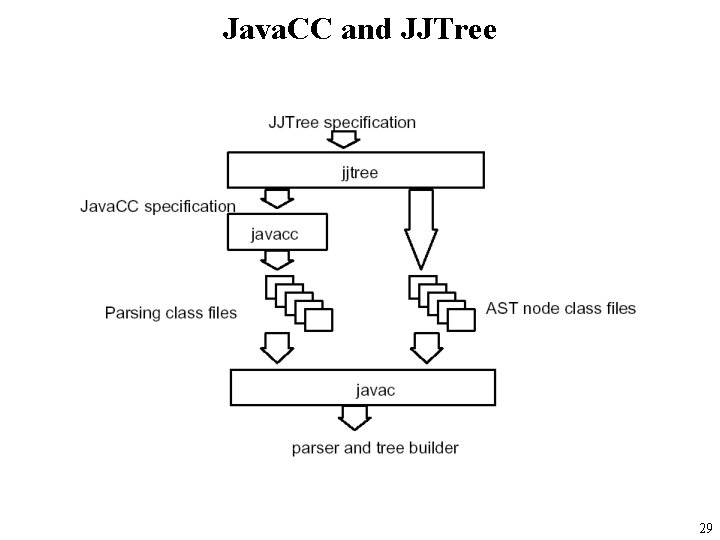

JavaCC and JJTree 29

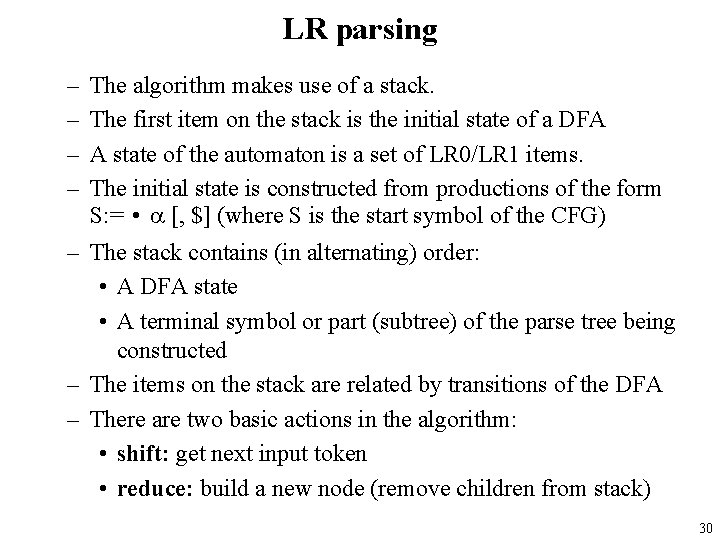

LR parsing – The algorithm makes use of a stack. – The first item on the stack is the initial state of a DFA – A state of the automaton is a set of LR(0)/LR(1) items. – The initial state is constructed from productions of the form S ::= • α [, $] (where S is the start symbol of the CFG) – The stack contains (in alternating order): • A DFA state • A terminal symbol or part (subtree) of the parse tree being constructed – The items on the stack are related by transitions of the DFA – There are two basic actions in the algorithm: • shift: get next input token • reduce: build a new node (remove children from stack) 30

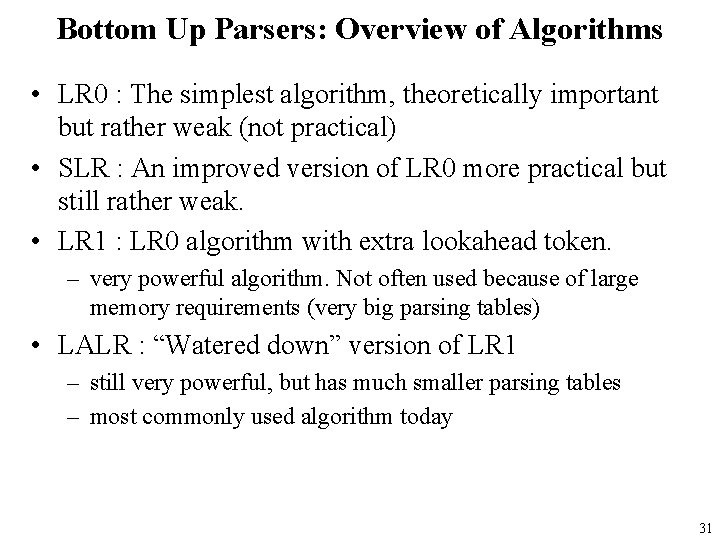

Bottom Up Parsers: Overview of Algorithms • LR(0): The simplest algorithm, theoretically important but rather weak (not practical) • SLR: An improved version of LR(0), more practical but still rather weak. • LR(1): LR(0) algorithm with extra lookahead token. – very powerful algorithm. Not often used because of large memory requirements (very big parsing tables) • LALR: “Watered down” version of LR(1) – still very powerful, but has much smaller parsing tables – most commonly used algorithm today 31

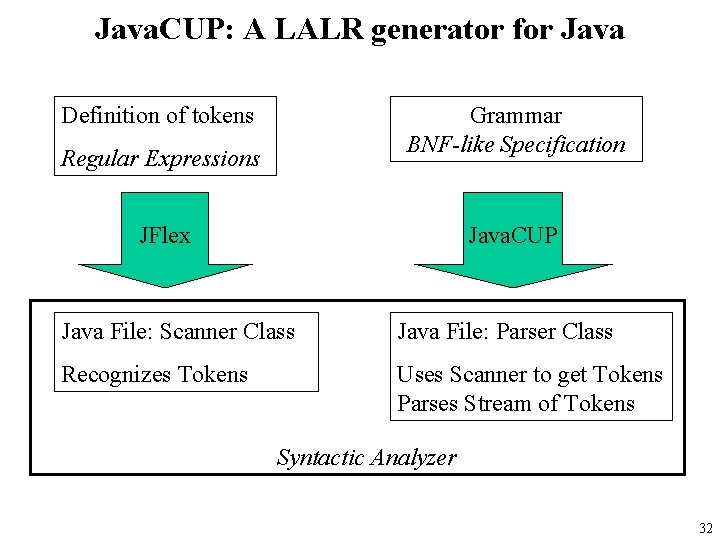

JavaCUP: A LALR generator for Java • Definition of tokens (Regular Expressions) -> JFlex -> Java File: Scanner Class (Recognizes Tokens) • Grammar (BNF-like Specification) -> JavaCUP -> Java File: Parser Class (Uses Scanner to get Tokens, Parses Stream of Tokens) • Scanner and Parser together form the Syntactic Analyzer 32

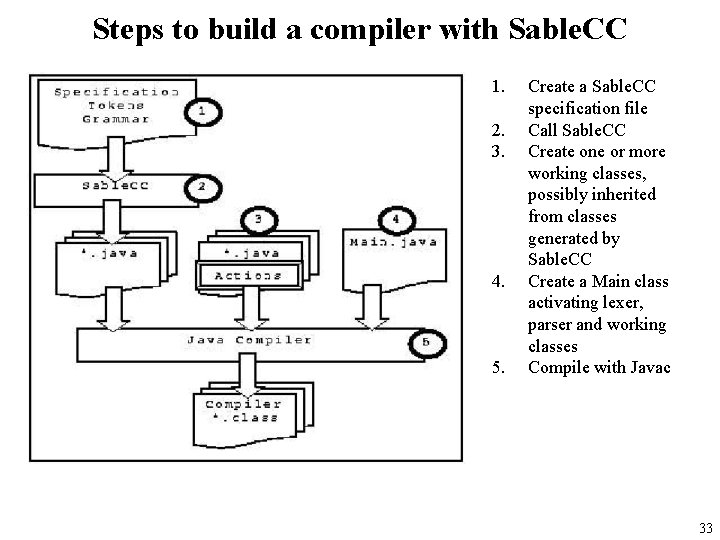

Steps to build a compiler with SableCC 1. Create a SableCC specification file 2. Call SableCC 3. Create one or more working classes, possibly inherited from classes generated by SableCC 4. Create a Main class activating lexer, parser and working classes 5. Compile with javac 33

Contextual Analysis Phase • Purposes: – Finish syntax analysis by deriving context-sensitive information – Associate semantic routines with individual productions of the context free grammar or subtrees of the AST – Start to interpret meaning of program based on its syntactic structure – Prepare for the final stage of compilation: Code generation 34

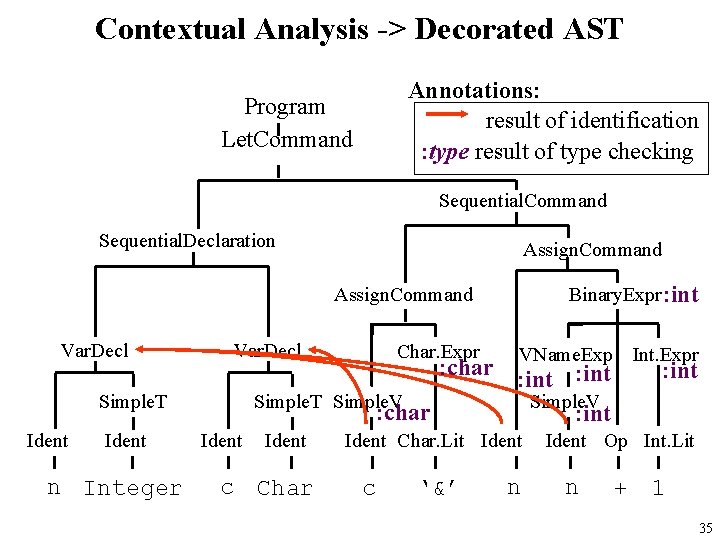

Contextual Analysis -> Decorated AST Annotations: links from applied occurrences to declarations are the result of identification; “: type” labels are the result of type checking. [Figure: decorated AST of a program with declarations var n: Integer and var c: Char and commands c := ‘&’ and n := n+1; expression nodes are annotated with : char and : int] 35

Nested Block Structure A language exhibits nested block structure if blocks may be nested one within another (typically with no upper bound on the level of nesting that is allowed). There can be any number of scope levels (depending on the level of nesting of blocks). Typical scope rules: • no identifier may be declared more than once within the same block (at the same level). • for any applied occurrence there must be a corresponding declaration, either within the same block or in a block in which it is nested. 36
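The two scope rules above suggest an identification table organised as a stack of scopes. The following is a minimal sketch, not the Triangle compiler's table: the class `IdTable`, its method names, and the use of plain strings as attributes are assumptions made for illustration.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

class IdTable {
    // One map per open block; the innermost block is at the head of the deque.
    private final Deque<Map<String, String>> scopes = new ArrayDeque<>();

    IdTable() { openScope(); }          // the outermost (global) block

    void openScope() { scopes.push(new HashMap<>()); }

    void closeScope() { scopes.pop(); }

    // Rule 1: returns false if the identifier is already declared in the same block.
    boolean enter(String id, String attribute) {
        Map<String, String> current = scopes.peek();
        if (current.containsKey(id)) {
            return false;
        }
        current.put(id, attribute);
        return true;
    }

    // Rule 2: searches from the innermost block outwards; null means "undeclared".
    String retrieve(String id) {
        for (Map<String, String> scope : scopes) {
            String attribute = scope.get(id);
            if (attribute != null) {
                return attribute;
            }
        }
        return null;
    }

    public static void main(String[] args) {
        IdTable table = new IdTable();
        table.enter("n", "int");
        table.openScope();
        table.enter("n", "char");       // hides the outer n inside this block
        System.out.println(table.retrieve("n"));
        table.closeScope();
        System.out.println(table.retrieve("n"));
    }
}
```

Iterating an `ArrayDeque` used as a stack visits the most recently pushed scope first, which is what gives inner declarations priority over outer ones.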

Type Checking For most statically typed programming languages, a bottom up algorithm over the AST: • Types of expression AST leaves are known immediately: – literals => obvious – variables => from the ID table – named constants => from the ID table • Types of internal nodes are inferred from the type of the children and the type rule for that kind of expression 37
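A bottom-up type checker of this kind can be sketched in a few lines of Java. Everything here is an assumption for illustration: the node classes `IntLit`, `VarRef` and `BinOp`, the string-valued types, and the single type rule (both operands must be `int`; `<` yields `bool`, any other operator yields `int`).

```java
import java.util.HashMap;
import java.util.Map;

abstract class Ast { }

class IntLit extends Ast {
    final int value;
    IntLit(int value) { this.value = value; }
}

class VarRef extends Ast {
    final String name;
    VarRef(String name) { this.name = name; }
}

class BinOp extends Ast {
    final Ast left;
    final String op;
    final Ast right;
    BinOp(Ast left, String op, Ast right) {
        this.left = left;
        this.op = op;
        this.right = right;
    }
}

class TypeChecker {
    private final Map<String, String> idTable;   // identifier -> declared type

    TypeChecker(Map<String, String> idTable) { this.idTable = idTable; }

    String typeOf(Ast e) {
        if (e instanceof IntLit) {
            return "int";                         // leaf: a literal's type is obvious
        }
        if (e instanceof VarRef) {
            String name = ((VarRef) e).name;      // leaf: look the variable up in the ID table
            String type = idTable.get(name);
            if (type == null) {
                throw new IllegalStateException("undeclared identifier: " + name);
            }
            return type;
        }
        BinOp b = (BinOp) e;                      // internal node: check the children first
        String l = typeOf(b.left);
        String r = typeOf(b.right);
        if (!l.equals("int") || !r.equals("int")) {
            throw new IllegalStateException("type error: " + l + " " + b.op + " " + r);
        }
        return b.op.equals("<") ? "bool" : "int";
    }

    public static void main(String[] args) {
        Map<String, String> table = new HashMap<>();
        table.put("m", "int");
        table.put("n", "bool");
        TypeChecker checker = new TypeChecker(table);
        System.out.println(checker.typeOf(new BinOp(new VarRef("m"), "<", new IntLit(4))));
    }
}
```

With `m: int` and `n: bool` in the table, `m < 4` is accepted, while `n + 1` is rejected, mirroring the earlier contextual-constraints example.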

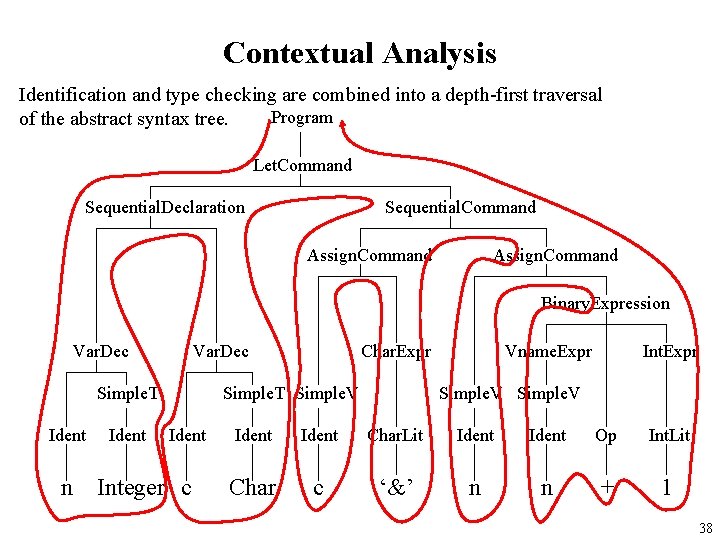

Contextual Analysis Identification and type checking are combined into a depth-first traversal of the abstract syntax tree. [Figure: AST of a program with declarations var n: Integer and var c: Char and commands c := ‘&’ and n := n+1, traversed depth-first] 38

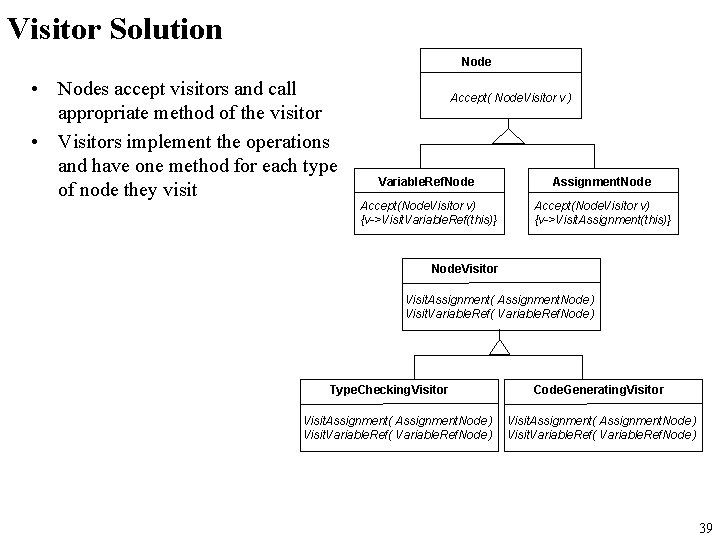

Visitor Solution • Nodes accept visitors and call the appropriate method of the visitor • Visitors implement the operations and have one method for each type of node they visit Class diagram: – Node: Accept(NodeVisitor v) – VariableRefNode: Accept(NodeVisitor v) { v->VisitVariableRef(this) } – AssignmentNode: Accept(NodeVisitor v) { v->VisitAssignment(this) } – NodeVisitor: VisitAssignment(AssignmentNode), VisitVariableRef(VariableRefNode) – TypeCheckingVisitor: VisitAssignment(AssignmentNode), VisitVariableRef(VariableRefNode) – CodeGeneratingVisitor: VisitAssignment(AssignmentNode), VisitVariableRef(VariableRefNode) 39
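The class diagram on this slide translates almost directly into Java. The sketch below keeps the slide's class names but uses Java's lower-case method naming; the fields of `AssignmentNode` and the concrete `PrintingVisitor` are hypothetical additions used only to show the double dispatch at work.

```java
interface NodeVisitor {
    void visitAssignment(AssignmentNode node);
    void visitVariableRef(VariableRefNode node);
}

abstract class Node {
    abstract void accept(NodeVisitor v);
}

class VariableRefNode extends Node {
    final String name;
    VariableRefNode(String name) { this.name = name; }
    @Override void accept(NodeVisitor v) { v.visitVariableRef(this); }
}

class AssignmentNode extends Node {
    final VariableRefNode target;   // assumed shape: target := source
    final Node source;
    AssignmentNode(VariableRefNode target, Node source) {
        this.target = target;
        this.source = source;
    }
    @Override void accept(NodeVisitor v) { v.visitAssignment(this); }
}

// One operation over the tree, written without touching the node classes.
class PrintingVisitor implements NodeVisitor {
    final StringBuilder out = new StringBuilder();

    @Override public void visitAssignment(AssignmentNode node) {
        node.target.accept(this);
        out.append(" := ");
        node.source.accept(this);
    }

    @Override public void visitVariableRef(VariableRefNode node) {
        out.append(node.name);
    }
}

class VisitorDemo {
    public static void main(String[] args) {
        Node tree = new AssignmentNode(new VariableRefNode("a"), new VariableRefNode("b"));
        PrintingVisitor printer = new PrintingVisitor();
        tree.accept(printer);
        System.out.println(printer.out);
    }
}
```

A type checker or code generator would be another class implementing `NodeVisitor`; the node classes stay unchanged, which is the point of the pattern.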

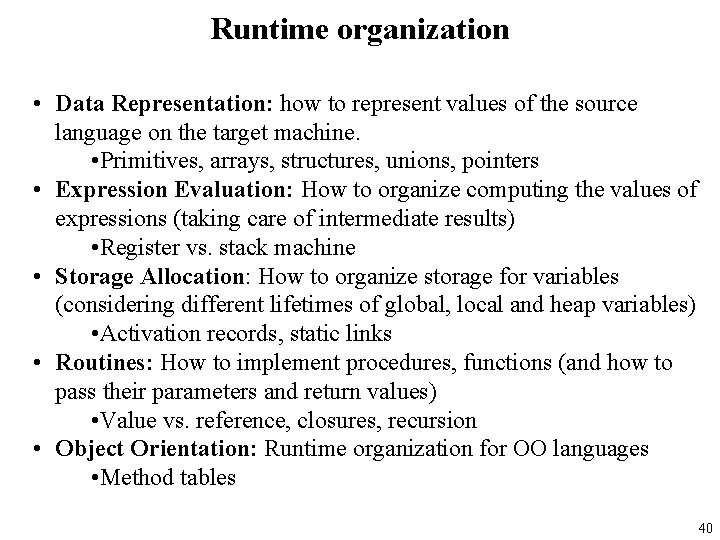

Runtime organization • Data Representation: how to represent values of the source language on the target machine. – Primitives, arrays, structures, unions, pointers • Expression Evaluation: How to organize computing the values of expressions (taking care of intermediate results) – Register vs. stack machine • Storage Allocation: How to organize storage for variables (considering different lifetimes of global, local and heap variables) – Activation records, static links • Routines: How to implement procedures, functions (and how to pass their parameters and return values) – Value vs. reference, closures, recursion • Object Orientation: Runtime organization for OO languages – Method tables 40

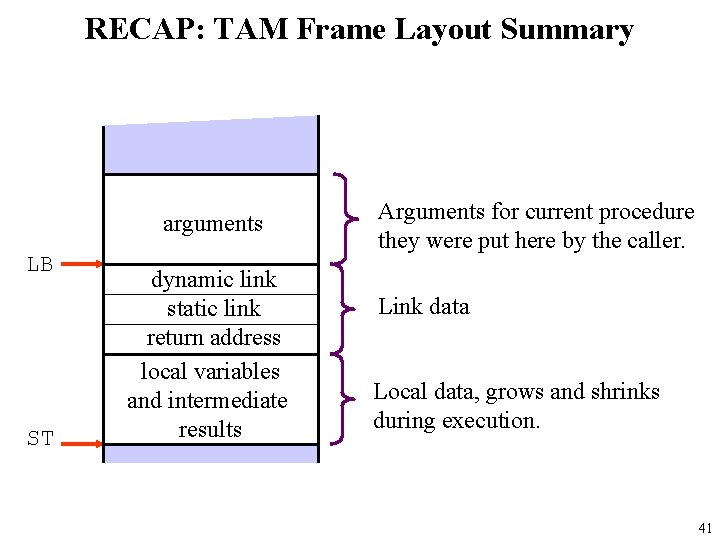

RECAP: TAM Frame Layout Summary (from the bottom of the frame to the stack top) • arguments: Arguments for the current procedure; they were put here by the caller. • Link data (LB points here): dynamic link, static link, return address • local variables and intermediate results (ST marks the stack top): Local data, grows and shrinks during execution. 41

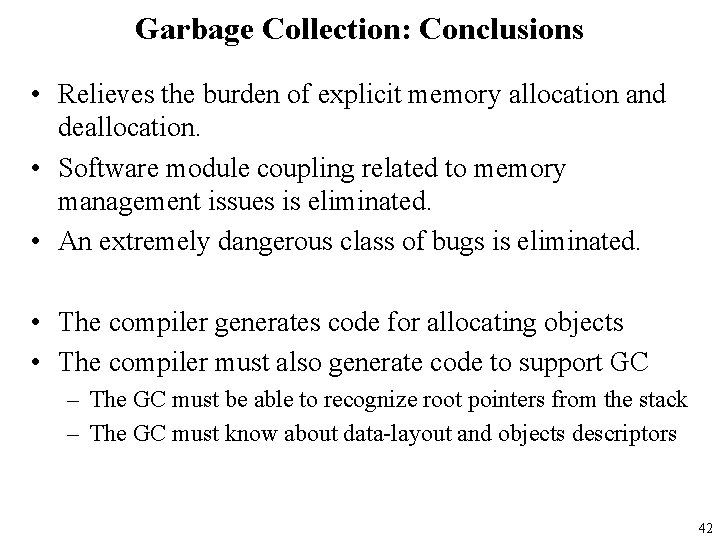

Garbage Collection: Conclusions • Relieves the burden of explicit memory allocation and deallocation. • Software module coupling related to memory management issues is eliminated. • An extremely dangerous class of bugs is eliminated. • The compiler generates code for allocating objects • The compiler must also generate code to support GC – The GC must be able to recognize root pointers from the stack – The GC must know about data layout and object descriptors 42

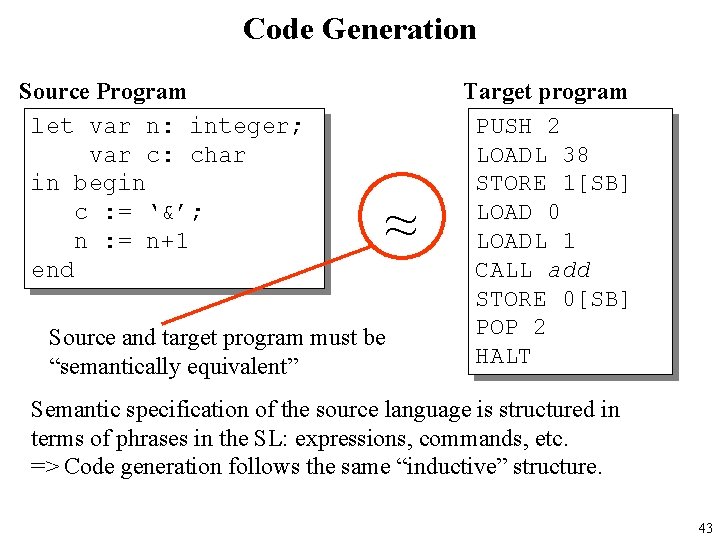

Code Generation Source program: let var n: integer; var c: char in begin c := ‘&’; n := n+1 end ~ Target program: PUSH 2; LOADL 38; STORE 1[SB]; LOAD 0; LOADL 1; CALL add; STORE 0[SB]; POP 2; HALT. Source and target program must be “semantically equivalent”. The semantic specification of the source language is structured in terms of phrases in the SL: expressions, commands, etc. => Code generation follows the same “inductive” structure. 43

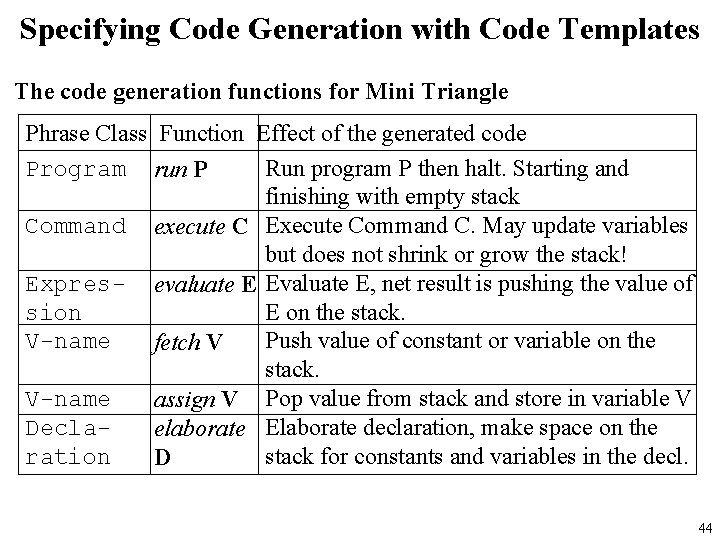

Specifying Code Generation with Code Templates The code generation functions for Mini Triangle (phrase class: function: effect of the generated code) – Program: run P: Run program P then halt. Starting and finishing with empty stack. – Command: execute C: Execute command C. May update variables but does not shrink or grow the stack! – Expression: evaluate E: Evaluate E; net result is pushing the value of E on the stack. – V-name: fetch V: Push value of constant or variable on the stack. – V-name: assign V: Pop value from stack and store in variable V. – Declaration: elaborate D: Elaborate declaration; make space on the stack for constants and variables in the declaration. 44

![Code Generation with Code Templates](http://slidetodoc.com/presentation_image/22f1a842be6803e92b78eec81a080783/image-45.jpg)

Code Generation with Code Templates While command: execute [while E do C] = JUMP h; g: execute [C]; h: evaluate [E]; JUMPIF(1) g 45
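The forward jump to h is the interesting part of this template, because the address of h is not yet known when the JUMP is emitted. A common way to handle this is backpatching, sketched below. This is a simplified illustration: instructions are plain strings, addresses are list indices, and `compileWhile` and its parameters are hypothetical names rather than the TAM encoder's interface.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class WhileTemplateDemo {
    // Instantiates: JUMP h; g: execute[C]; h: evaluate[E]; JUMPIF(1) g
    static List<String> compileWhile(List<String> evaluateE, List<String> executeC) {
        List<String> code = new ArrayList<>();

        int jumpAddr = code.size();
        code.add("JUMP ?");                  // target h is not known yet

        int g = code.size();                 // g: start of the loop body
        code.addAll(executeC);

        int h = code.size();                 // h: start of the condition
        code.set(jumpAddr, "JUMP " + h);     // backpatch the forward jump

        code.addAll(evaluateE);
        code.add("JUMPIF(1) " + g);          // loop back while the condition is true
        return code;
    }

    public static void main(String[] args) {
        List<String> cond = Arrays.asList("LOAD n", "LOADL 0", "CALL gt");
        List<String> body = Arrays.asList("LOAD n", "CALL pred", "STORE n");
        for (String instruction : compileWhile(cond, body)) {
            System.out.println(instruction);
        }
    }
}
```

Placing the condition after the body means each iteration executes only one jump instruction (the JUMPIF), at the cost of one extra JUMP on entry.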

![Developing a Code Generator “Visitor”](http://slidetodoc.com/presentation_image/22f1a842be6803e92b78eec81a080783/image-46.jpg)

Developing a Code Generator “Visitor” execute [C1 ; C2] = execute [C1] execute [C2] public Object visitSequentialCommand(SequentialCommand com, Object arg) { com.C1.visit(this, arg); com.C2.visit(this, arg); return null; } LetCommand, IfCommand, WhileCommand => later. - LetCommand is more complex: memory allocation and addresses - IfCommand and WhileCommand: complications with jumps 46

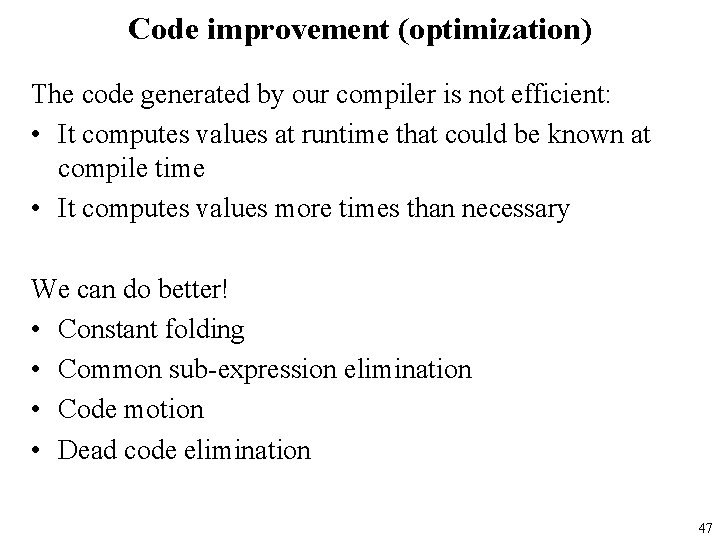

Code improvement (optimization) The code generated by our compiler is not efficient: • It computes values at runtime that could be known at compile time • It computes values more times than necessary We can do better! • Constant folding • Common sub-expression elimination • Code motion • Dead code elimination 47
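Constant folding, the first item in the list, is easy to sketch as a bottom-up rewrite of an expression tree. The node classes `Num`, `Name` and `Bin` and the `ConstantFolder` class are hypothetical; only `+` and `*` are folded here to keep the example short.

```java
abstract class FoldExpr { }

class Num extends FoldExpr {
    final int value;
    Num(int value) { this.value = value; }
    @Override public String toString() { return Integer.toString(value); }
}

class Name extends FoldExpr {
    final String id;
    Name(String id) { this.id = id; }
    @Override public String toString() { return id; }
}

class Bin extends FoldExpr {
    final char op;
    final FoldExpr left;
    final FoldExpr right;
    Bin(char op, FoldExpr left, FoldExpr right) {
        this.op = op;
        this.left = left;
        this.right = right;
    }
    @Override public String toString() { return "(" + left + " " + op + " " + right + ")"; }
}

class ConstantFolder {
    // Folds children first; if both become constants, computes the result at compile time.
    static FoldExpr fold(FoldExpr e) {
        if (!(e instanceof Bin)) {
            return e;                              // literals and variables are left alone
        }
        Bin b = (Bin) e;
        FoldExpr l = fold(b.left);
        FoldExpr r = fold(b.right);
        if (l instanceof Num && r instanceof Num) {
            int x = ((Num) l).value;
            int y = ((Num) r).value;
            switch (b.op) {
                case '+': return new Num(x + y);
                case '*': return new Num(x * y);
                default: break;                    // other operators are not folded in this sketch
            }
        }
        return new Bin(b.op, l, r);
    }

    public static void main(String[] args) {
        FoldExpr e = new Bin('+', new Name("n"), new Bin('*', new Num(2), new Num(3)));
        System.out.println(fold(e));
    }
}
```

The subtree `2 * 3` is replaced by the constant 6 before any code is generated, so the multiplication no longer happens at runtime.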

Optimization implementation • Is the optimization correct or safe? • Is the optimization an improvement? • What sort of analyses do we need to perform to get the required information? –Local –Global 48

Concurrency, distributed computing, the Internet • Traditional view: Let the OS deal with this => It is not a programming language issue! • End of Lecture • Wait-a-minute … Maybe “the traditional view” is getting out of date? 49

Languages with concurrency constructs Maybe the “traditional view” was always out of date? • Simula • Modula-3 • Occam • Concurrent Pascal • Ada • Linda • CML • Facile • JoCaml • Java • C# • … 50

What could languages provide? • Abstract model of system – abstract machine => abstract system • Example high-level constructs – Process as the value of an expression • Pass processes to functions • Create processes as the result of a function call – Communication abstractions • Synchronous communication • Buffered asynchronous channels that preserve msg order – Mutual exclusion, atomicity primitives • Most concurrent languages provide some form of locking • Atomicity is more complicated, less commonly provided 51

Programming Language Life cycle • The requirements for the new language are identified • The language syntax and semantics are designed – BNF or EBNF, experiments with front-end tools – Informal or formal semantics • An informal or formal specification is developed • Initial implementation – Prototype via interpreter or interpretive compiler • Language tested by designers, implementers and a few friends • Feedback on the design and possible reconsiderations • Improved implementation 52
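As one concrete instance of a buffered asynchronous channel that preserves message order, Java's standard library offers `BlockingQueue`. The sketch below is only an illustration of the idea on this slide: the class `ChannelDemo`, the buffer size of 2 and the five messages are arbitrary choices.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

class ChannelDemo {
    // A producer thread sends 1..5 over a bounded FIFO channel; the caller receives them.
    static List<Integer> run() {
        final BlockingQueue<Integer> channel = new ArrayBlockingQueue<>(2);

        Thread producer = new Thread(() -> {
            try {
                for (int i = 1; i <= 5; i++) {
                    channel.put(i);              // blocks while the buffer is full
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        producer.start();

        List<Integer> received = new ArrayList<>();
        try {
            for (int i = 0; i < 5; i++) {
                received.add(channel.take());    // blocks while the buffer is empty
            }
            producer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while receiving", e);
        }
        return received;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```

Here the channel is a library class rather than a language construct, which is exactly the design question the slide raises: how much of this should the language itself provide?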

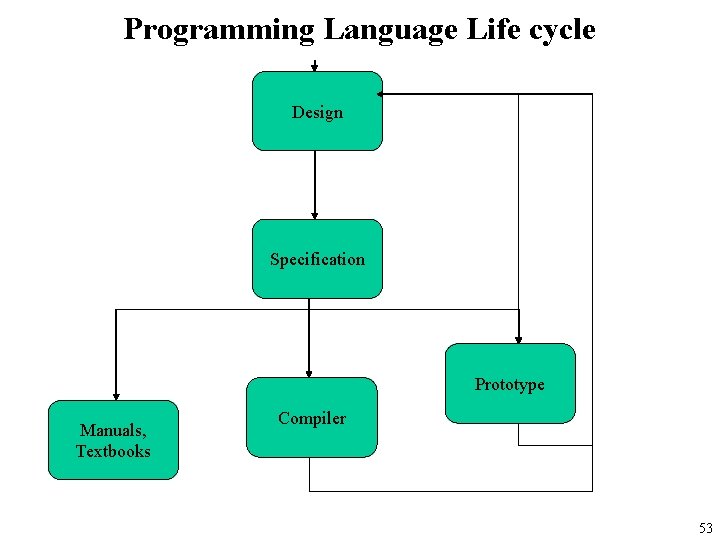

Programming Language Life cycle [Figure: life cycle diagram linking Design, Specification, Prototype, Compiler, and Manuals/Textbooks] 53

Programming Language Life cycle • Lots of research papers • Conference sessions dedicated to the new language • Textbooks and manuals • Used in large applications • Huge international user community • Dedicated conference • International standardisation efforts • Industry de facto standard • Programs written in the language become legacy code • Language enters “hall-of-fame” and features are taught in CS courses on Programming Language Design and Implementation 54

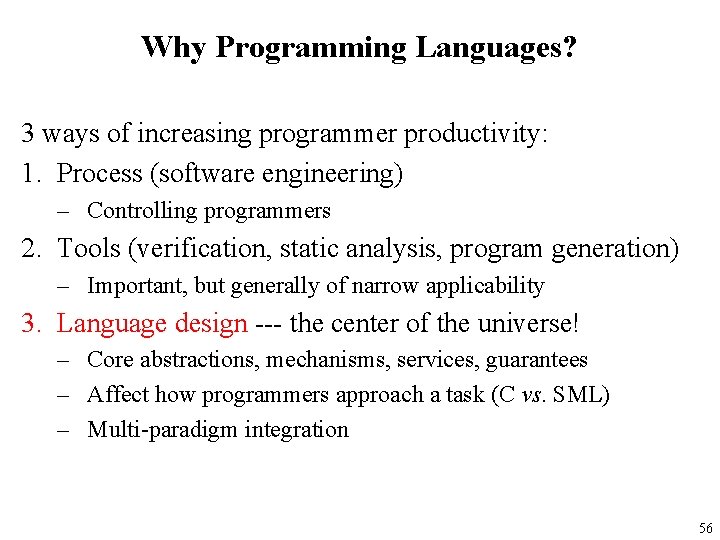

The Most Important Open Problem in Computing Increasing Programmer Productivity – Write programs correctly – Write programs quickly – Write programs easily • Why? – Decreases support cost – Decreases development cost – Decreases time to market – Increases satisfaction 55

Why Programming Languages? 3 ways of increasing programmer productivity: 1. Process (software engineering) – Controlling programmers 2. Tools (verification, static analysis, program generation) – Important, but generally of narrow applicability 3. Language design --- the center of the universe! – Core abstractions, mechanisms, services, guarantees – Affect how programmers approach a task (C vs. SML) – Multi-paradigm integration 56

Programming Languages and Compilers are at the core of Computing. All software is written in a programming language. Learning about compilers will teach you a lot about the programming languages you already know. Compilers are big – therefore you need to apply all your knowledge of software engineering. The compiler is the program from which all other programs arise. 57

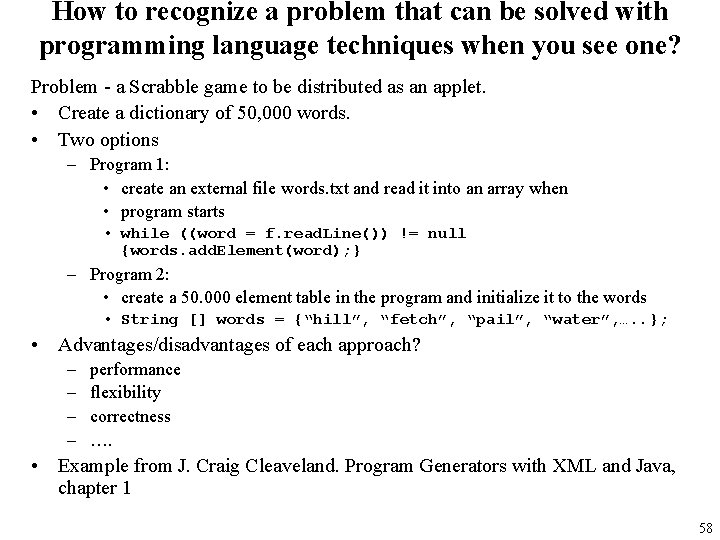

How to recognize a problem that can be solved with programming language techniques when you see one? Problem - a Scrabble game to be distributed as an applet. • Create a dictionary of 50,000 words. • Two options – Program 1: • create an external file words.txt and read it into an array when the program starts • while ((word = f.readLine()) != null) { words.addElement(word); } – Program 2: • create a 50,000-element table in the program and initialize it to the words • String[] words = {"hill", "fetch", "pail", "water", …}; • Advantages/disadvantages of each approach? – performance – flexibility – correctness – … • Example from J. Craig Cleaveland. Program Generators with XML and Java, chapter 1 58

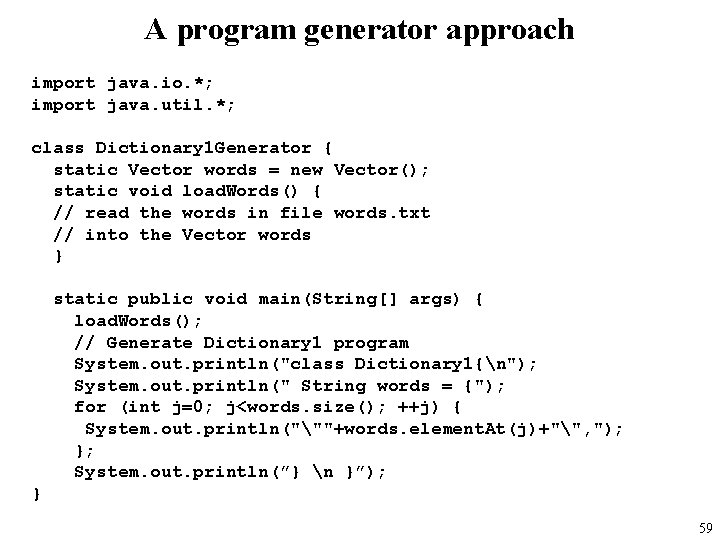

A program generator approach import java.io.*; import java.util.*; class Dictionary1Generator { static Vector<String> words = new Vector<>(); static void loadWords() { /* read the words in file words.txt into the Vector words */ } public static void main(String[] args) { loadWords(); /* Generate the Dictionary1 program */ System.out.println("class Dictionary1 {\n"); System.out.println(" String[] words = {"); for (int j = 0; j < words.size(); ++j) { System.out.println("\"" + words.elementAt(j) + "\", "); } System.out.println("};\n}"); } } 59

Typical program generator • Dictionary example • The data – simply a list of words • Analyzing/transforming data – duplicate word removal – sorting • Generate program – simply use print statements to write program text • General picture • The data – some more complex representation of data • formal specs, • grammar, • spreadsheet, • XML, • etc. • Analyzing/transforming data – parse, check for inconsistencies, transform to other data structures • Generate program – generate syntax tree, use templates, … 60

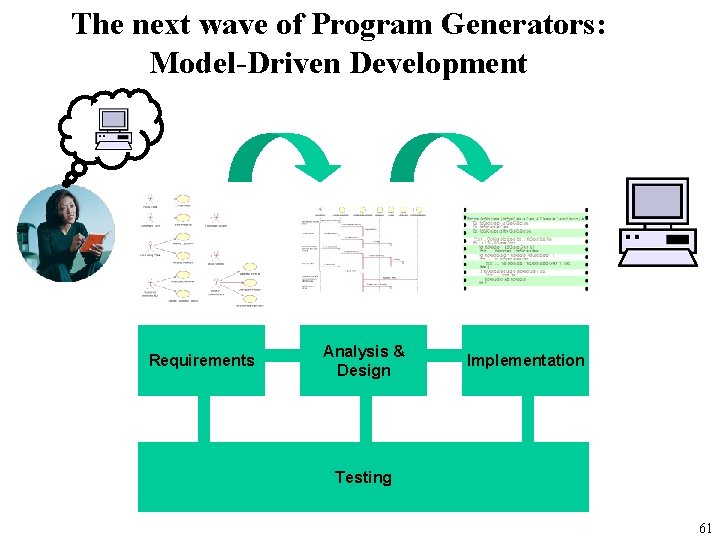

The next wave of Program Generators: Model-Driven Development [Figure: development phases Requirements, Analysis & Design, Implementation, Testing] 61

New Programming Language! Why Should I Care? • The problem is not designing a new language – It’s easy! Thousands of languages have been developed • The problem is how to get wide adoption of the new language – It’s hard! Challenges include • Competition • Usefulness • Interoperability • Fear “It’s a good idea, but it’s a new idea; therefore, I fear it and must reject it.” --- Homer Simpson • The financial rewards are low, but … 62

Famous Danish Computer Scientists • Peter Naur – BNF and Algol • Per Brinch Hansen – Monitors and Concurrent Pascal • Dines Bjørner – VDM and Ada • Bjarne Stroustrup – C++ • Mads Tofte – SML • Rasmus Lerdorf – PHP • Anders Hejlsberg – Turbo Pascal and C# • Jakob Nielsen 63

Fancy joining this crowd? • Join the Programming Language Technology Research Group when you get to DAT5/DAT6 or SW8/SW9 • New Research Programme underway • How would you like to programme in 20 years? – Languages for testability, verifiability, specifiability – Java vs. .NET – Aspect Oriented Programming on .NET – Business Process Management Language – Multiple dispatch in C# – XML as program representation – Java on Mobile Phones 64

Finally Keep in mind, the compiler is the program from which all other programs arise. If your compiler is under par, all programs created by the compiler will also be under par. No matter the purpose or use -- your own enlightenment about compilers or commercial applications -- you want to be patient and do a good job with this program; in other words, don't try to throw this together on a weekend. Asking a computer programmer to tell you how to write a compiler is like saying to Picasso, "Teach me to paint like you." *Sigh* Well, Picasso tried. 65

What I promised you at the start of the course Ideas, principles and techniques to help you – Design your own programming language or design your own extensions to an existing language – Tools and techniques to implement a compiler or an interpreter – Lots of knowledge about programming I hope you feel you got what I promised 66

Top 10 reasons COMPILERS must be female 10. Picky, picky. 9. They hear what you say, but not what you mean. 8. Beauty is only shell deep. 7. When you ask what's wrong, they say "nothing". 6. Can produce incorrect results with alarming speed. 5. Always turning simple statements into big productions. 4. Small talk is important. 3. You do the same thing for years, and suddenly it's wrong. 2. They make you take the garbage out. 1. Miss a period and they go wild. 67

- Slides: 67