
Landmark-Based Speech Recognition: The Marriage of High-Dimensional Machine Learning Techniques with Modern Linguistic Representations

Mark Hasegawa-Johnson, jhasegaw@uiuc.edu

Research performed in collaboration with James Baker (Carnegie Mellon), Sarah Borys (Illinois), Ken Chen (Illinois), Emily Coogan (Illinois), Steven Greenberg (Berkeley), Amit Juneja (Maryland), Katrin Kirchhoff (Washington), Karen Livescu (MIT), Srividya Mohan (Johns Hopkins), Jen Muller (Dept. of Defense), Kemal Sonmez (SRI), and Tianyu Wang (Georgia Tech)

What are Landmarks? • Time-frequency regions of high mutual information between phone and signal (maxima of I(phone label; acoustics(t, f)) ) • Acoustic events with similar importance in all languages, and across all speaking styles • Acoustic events that can be detected even in extremely noisy environments Where do these things happen? • Syllable Onset ≈ Consonant Release • Syllable Nucleus ≈ Vowel Center • Syllable Coda ≈ Consonant Closure I(phone; acoustics) experiment: Hasegawa-Johnson, 2000

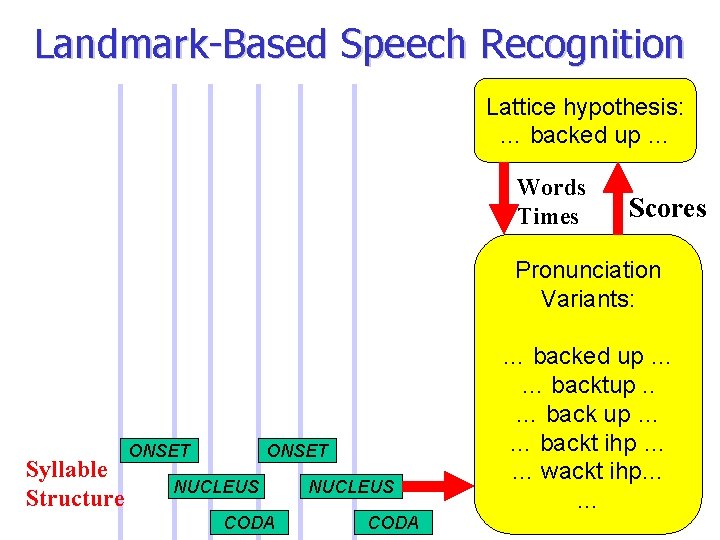

Landmark-Based Speech Recognition
• Lattice hypothesis: … backed up … (words, times, scores)
• Pronunciation variants: … backed up …, … backtup …, … back up …, … backt ihp …, … wackt ihp …
• Syllable structure: ONSET, NUCLEUS, CODA

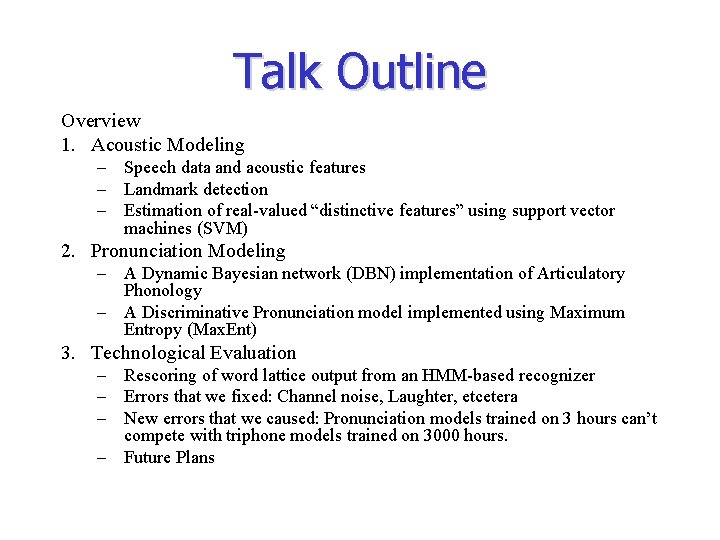

Talk Outline
Overview
1. Acoustic Modeling
   – Speech data and acoustic features
   – Landmark detection
   – Estimation of real-valued “distinctive features” using support vector machines (SVM)
2. Pronunciation Modeling
   – A dynamic Bayesian network (DBN) implementation of Articulatory Phonology
   – A discriminative pronunciation model implemented using Maximum Entropy (MaxEnt)
3. Technological Evaluation
   – Rescoring of word lattice output from an HMM-based recognizer
   – Errors that we fixed: channel noise, laughter, etc.
   – New errors that we caused: pronunciation models trained on 3 hours can’t compete with triphone models trained on 3000 hours
Future Plans

Overview • History – Research described in this talk was performed between June 30 and August 17, 2004, at the Johns Hopkins summer workshop WS04 • Scientific Goal – To use high-dimensional machine learning technologies (SVM, DBN) to create representations capable of learning, from data, the types of speech knowledge that humans exhibit in psychophysical speech perception experiments • Technological Goal – Long-term: To create a better speech recognizer – Short-term: lattice rescoring, applied to word lattices produced by SRI’s NN/HMM hybrid

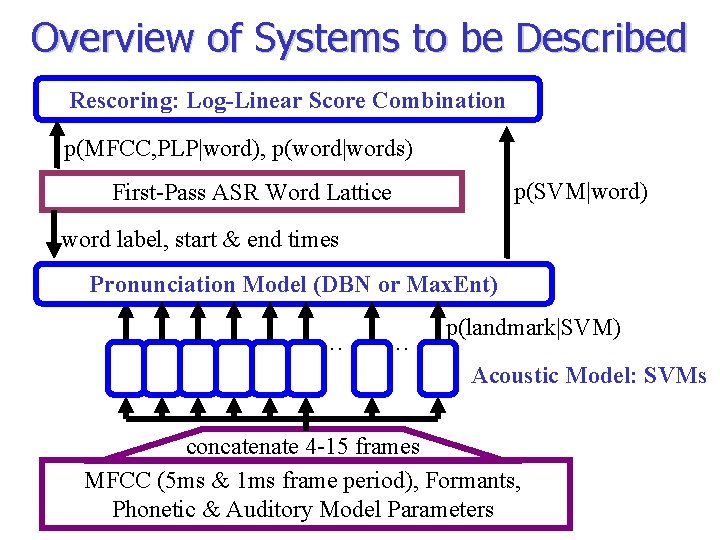

Overview of Systems to be Described
• First-pass ASR: produces a word lattice (word label, start & end times) with scores p(MFCC, PLP|word) and p(word|words)
• Acoustic model: SVMs over 4-15 concatenated frames of MFCC (5 ms & 1 ms frame period), formants, and phonetic & auditory model parameters, giving p(landmark|SVM)
• Pronunciation model (DBN or MaxEnt): gives p(SVM|word)
• Rescoring: log-linear score combination of p(MFCC, PLP|word), p(word|words), and p(SVM|word)

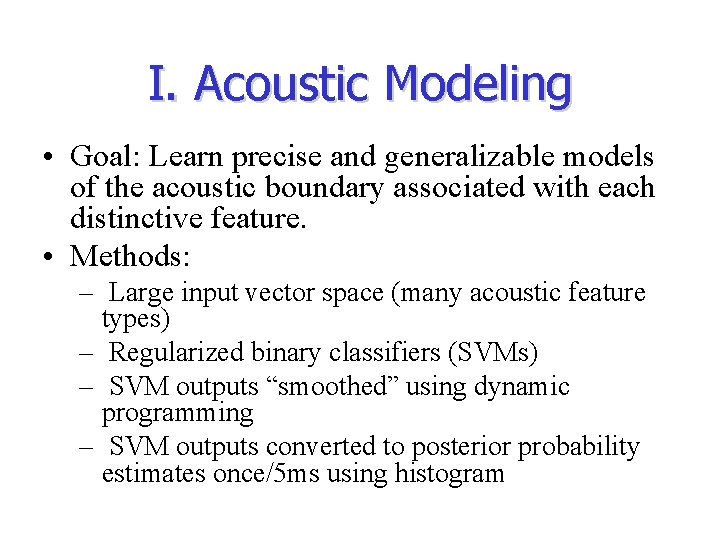

I. Acoustic Modeling • Goal: Learn precise and generalizable models of the acoustic boundary associated with each distinctive feature. • Methods: – Large input vector space (many acoustic feature types) – Regularized binary classifiers (SVMs) – SVM outputs “smoothed” using dynamic programming – SVM outputs converted to posterior probability estimates once/5 ms using histogram

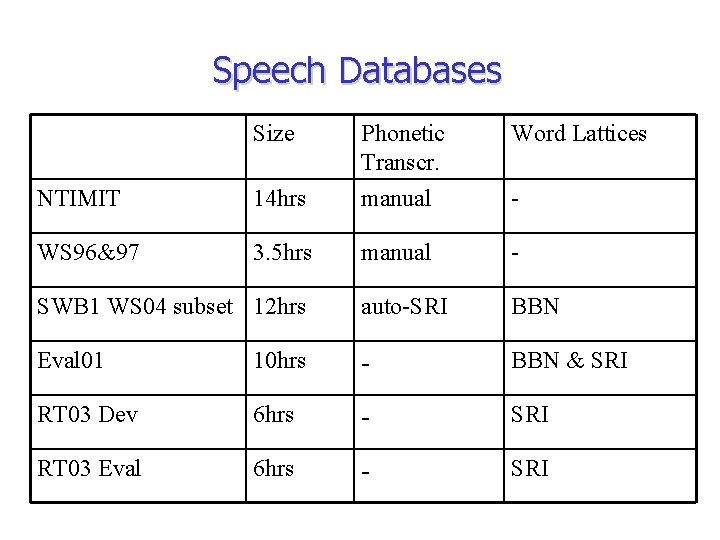

Speech Databases

| Database | Size | Phonetic Transcr. | Word Lattices |
|---|---|---|---|
| NTIMIT | 14 hrs | manual | - |
| WS96&97 | 3.5 hrs | manual | - |
| SWB1 WS04 subset | 12 hrs | auto-SRI | BBN |
| Eval01 | 10 hrs | - | BBN & SRI |
| RT03 Dev | 6 hrs | - | SRI |
| RT03 Eval | 6 hrs | - | SRI |

Acoustic and Auditory Features • MFCCs, 25 ms window (standard ASR features) • Spectral shape: energy, spectral tilt, and spectral compactness, once/millisecond • Noise-robust MUSIC-based formant frequencies, amplitudes, and bandwidths (Zheng & Hasegawa-Johnson, ICSLP 2004) • Acoustic-phonetic parameters (formant-based relative spectral measures and time-domain measures; Bitar & Espy-Wilson, 1996) • Rate-place model of neural response fields in the cat auditory cortex (Carlyon & Shamma, JASA 2003)

What are Distinctive Features? What are Landmarks? • Distinctive feature = – a binary partition of the phonemes (Jakobson, 1952) – … that compactly describes pronunciation variability (Halle) – … and correlates with distinct acoustic cues (Stevens) • Landmark = change in the value of a manner feature – [+sonorant] to [–sonorant], [–sonorant] to [+sonorant] – 5 manner features: [sonorant, consonantal, continuant, syllabic, silence] • Place and voicing features: SVMs are only trained at landmarks – Primary articulator: lips, tongue blade, or tongue body – Features of primary articulator: anterior, strident – Features of secondary articulator: nasal, voiced

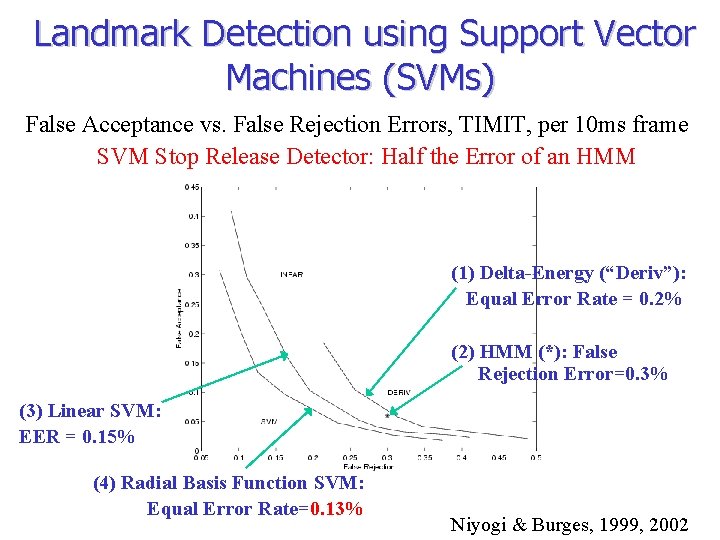

Landmark Detection using Support Vector Machines (SVMs)
False Acceptance vs. False Rejection Errors, TIMIT, per 10 ms frame. SVM Stop Release Detector: Half the Error of an HMM
(1) Delta-Energy (“Deriv”): Equal Error Rate = 0.2%
(2) HMM (*): False Rejection Error = 0.3%
(3) Linear SVM: EER = 0.15%
(4) Radial Basis Function SVM: Equal Error Rate = 0.13%
Niyogi & Burges, 1999, 2002

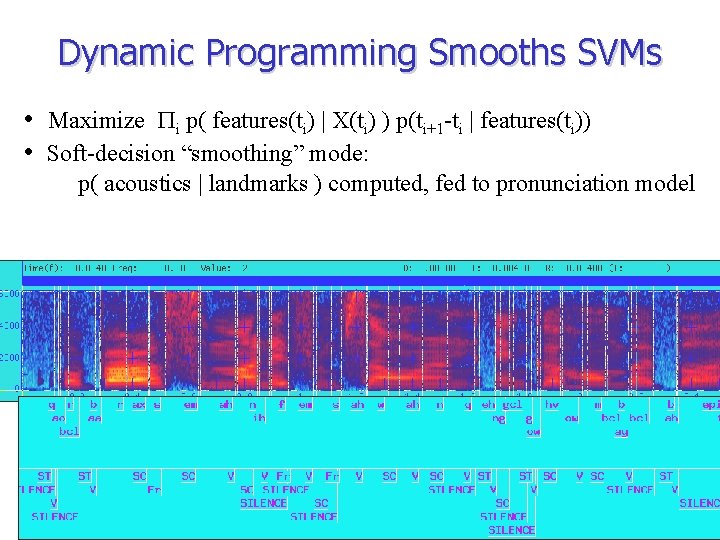

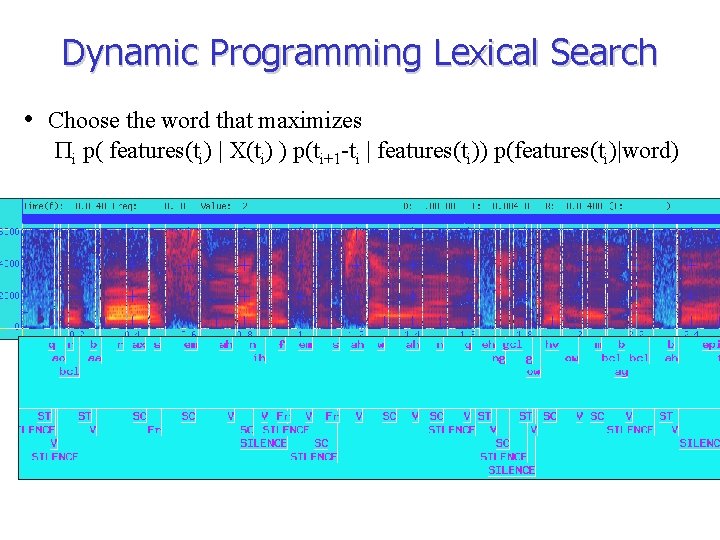
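The equal error rate (EER) reported for the stop release detectors is the operating point at which the false-acceptance and false-rejection rates coincide. A minimal sketch of how it could be estimated from per-frame detector scores follows; the function name, the threshold sweep over observed scores, and the toy data are assumptions for illustration, not the procedure used by Niyogi & Burges.

```python
def equal_error_rate(scores, labels):
    """Sweep thresholds over the observed scores and return
    (threshold, false_accept_rate, false_reject_rate) at the point
    where the two rates are closest. labels: 1 = landmark, 0 = not."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    best = None
    for thr in sorted(set(scores)):
        fa = sum(s >= thr for s in neg) / len(neg)  # non-landmarks accepted
        fr = sum(s < thr for s in pos) / len(pos)   # landmarks rejected
        if best is None or abs(fa - fr) < abs(best[1] - best[2]):
            best = (thr, fa, fr)
    return best

# Toy detector outputs (hypothetical values).
scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
labels = [0, 0, 0, 1, 0, 1, 1, 1]
threshold, fa_rate, fr_rate = equal_error_rate(scores, labels)
```

With real data the two rates rarely match exactly, so the sketch reports the closest pair rather than interpolating.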

Dynamic Programming Smooths SVMs • Maximize $\prod_i p(\mathrm{features}(t_i) \mid X(t_i))\, p(t_{i+1} - t_i \mid \mathrm{features}(t_i))$ • Soft-decision “smoothing” mode: p( acoustics | landmarks ) computed, fed to pronunciation model

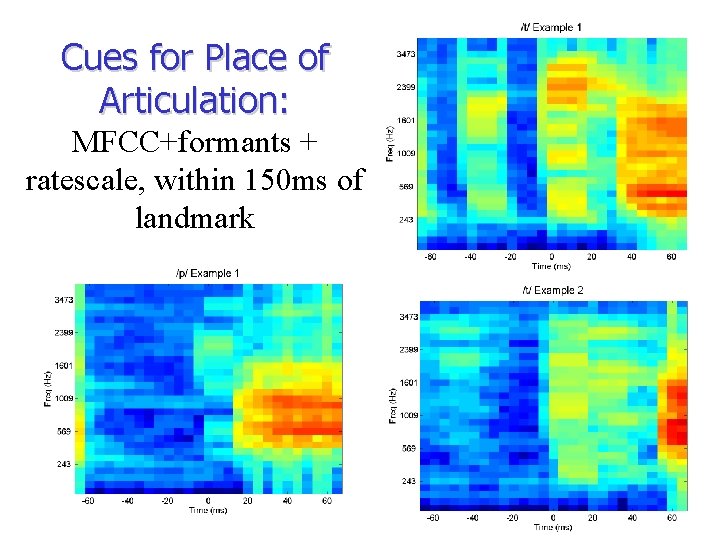
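The product maximized on the dynamic programming slide can be searched with a Viterbi-style recursion over candidate landmark times. The sketch below is a simplified illustration, assuming the landmark sequence is known in advance and only the times are free; `align_landmarks`, `frame_logp`, and `dur_logp` are hypothetical names, and the actual WS04 system is not described in enough detail here to claim this matches it.

```python
import math

def align_landmarks(frame_logp, labels, dur_logp):
    """Place the landmarks in `labels` (in order) at frames that maximize
    sum_i [log p(label_i | X(t_i)) + log p(t_{i+1} - t_i | label_i)]."""
    T, K = len(frame_logp), len(labels)
    best = [[-math.inf] * T for _ in range(K)]  # best[k][t]: landmark k at frame t
    back = [[-1] * T for _ in range(K)]         # time of the previous landmark
    for t in range(T):
        best[0][t] = frame_logp[t][labels[0]]
    for k in range(1, K):
        for t in range(k, T):
            for s in range(k - 1, t):
                cand = (best[k - 1][s] + dur_logp(t - s, labels[k - 1])
                        + frame_logp[t][labels[k]])
                if cand > best[k][t]:
                    best[k][t], back[k][t] = cand, s
    # Trace back from the best time of the final landmark.
    t = max(range(T), key=lambda u: best[K - 1][u])
    score, times = best[K - 1][t], [t]
    for k in range(K - 1, 0, -1):
        t = back[k][t]
        times.append(t)
    return times[::-1], score

# Toy per-frame posteriors for two landmark types (hypothetical values).
closure = [0.1, 0.9, 0.2, 0.1, 0.1, 0.1]
release = [0.1, 0.1, 0.1, 0.2, 0.8, 0.1]
frame_logp = [{"closure": math.log(c), "release": math.log(r)}
              for c, r in zip(closure, release)]
times, score = align_landmarks(frame_logp, ["closure", "release"],
                               lambda d, label: 0.0)
```

The duration term `dur_logp` is where prior knowledge about plausible landmark spacing enters; a flat duration model, as in the usage above, reduces the search to picking the best-scoring frames in order.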

Cues for Place of Articulation: MFCC + formants + rate-scale, within 150 ms of landmark

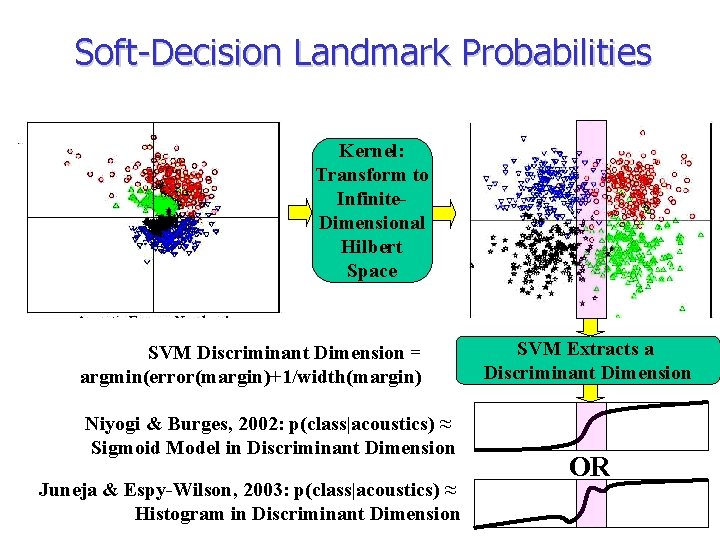

Soft-Decision Landmark Probabilities
• Kernel: transform to infinite-dimensional Hilbert space
• SVM extracts a discriminant dimension: SVM discriminant dimension = argmin( error(margin) + 1/width(margin) )
• Niyogi & Burges, 2002: p(class|acoustics) ≈ sigmoid model in discriminant dimension
OR
• Juneja & Espy-Wilson, 2003: p(class|acoustics) ≈ histogram in discriminant dimension

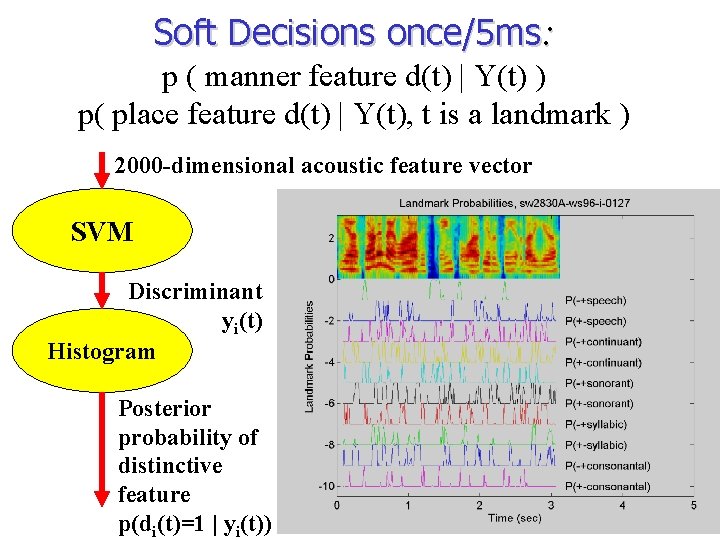

Soft Decisions once/5 ms: p( manner feature d(t) | Y(t) ), p( place feature d(t) | Y(t), t is a landmark )
Pipeline: 2000-dimensional acoustic feature vector → SVM → discriminant y_i(t) → histogram → posterior probability of distinctive feature p(d_i(t)=1 | y_i(t))

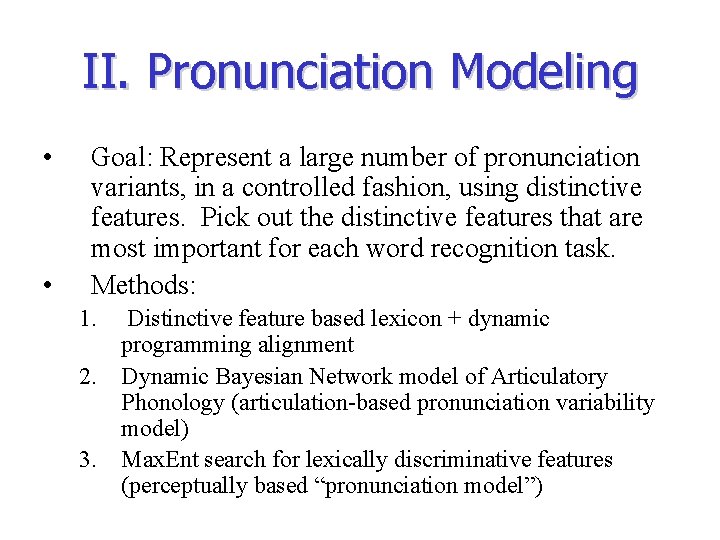
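The histogram step that maps an SVM discriminant value to a posterior probability can be sketched as a lookup table built from labeled training frames. This is a minimal illustration under stated assumptions: the bin edges, the add-one smoothing, and the function names are hypothetical choices, not details taken from Juneja & Espy-Wilson or from the WS04 system.

```python
import bisect

def fit_histogram_posterior(discriminants, labels, edges):
    """Estimate p(d=1 | y) per bin of the discriminant y.
    `edges` are interior bin boundaries; add-one smoothing keeps
    empty bins away from probabilities of exactly 0 or 1."""
    n_bins = len(edges) + 1
    pos = [0] * n_bins
    tot = [0] * n_bins
    for y, d in zip(discriminants, labels):
        b = bisect.bisect_right(edges, y)  # index of the bin containing y
        tot[b] += 1
        pos[b] += d
    return [(p + 1) / (n + 2) for p, n in zip(pos, tot)]

def posterior(y, edges, table):
    """Look up the estimated p(d=1 | y) for a new discriminant value."""
    return table[bisect.bisect_right(edges, y)]

# Toy training data (hypothetical discriminant values and feature labels).
edges = [-1.0, 0.0, 1.0]
train_y = [-2.0, -1.5, -0.5, -0.2, 0.3, 0.4, 1.5, 2.0]
train_d = [0, 0, 0, 1, 0, 1, 1, 1]
table = fit_histogram_posterior(train_y, train_d, edges)
p_new = posterior(1.2, edges, table)
```

A sigmoid fit in the same dimension (the Niyogi & Burges alternative) would replace the table with a two-parameter function; the histogram makes no assumption about the shape of the posterior.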

II. Pronunciation Modeling
• Goal: Represent a large number of pronunciation variants, in a controlled fashion, using distinctive features. Pick out the distinctive features that are most important for each word recognition task.
• Methods:
1. Distinctive feature based lexicon + dynamic programming alignment
2. Dynamic Bayesian Network model of Articulatory Phonology (articulation-based pronunciation variability model)
3. MaxEnt search for lexically discriminative features (perceptually based “pronunciation model”)

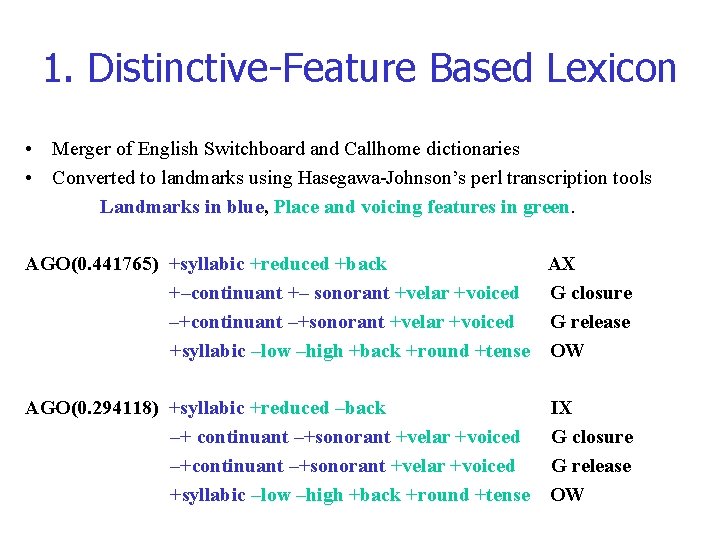

1. Distinctive-Feature Based Lexicon
• Merger of English Switchboard and Callhome dictionaries
• Converted to landmarks using Hasegawa-Johnson’s Perl transcription tools
Landmarks in blue, place and voicing features in green.
AGO (0.441765):
– AX: +syllabic +reduced +back
– G closure: +–continuant +–sonorant +velar +voiced
– G release: –+continuant –+sonorant +velar +voiced
– OW: +syllabic –low –high +back +round +tense
AGO (0.294118):
– IX: +syllabic +reduced –back
– G closure: –+continuant –+sonorant +velar +voiced
– G release: –+continuant –+sonorant +velar +voiced
– OW: +syllabic –low –high +back +round +tense

Dynamic Programming Lexical Search • Choose the word that maximizes $\prod_i p(\mathrm{features}(t_i) \mid X(t_i))\, p(t_{i+1} - t_i \mid \mathrm{features}(t_i))\, p(\mathrm{features}(t_i) \mid \mathrm{word})$

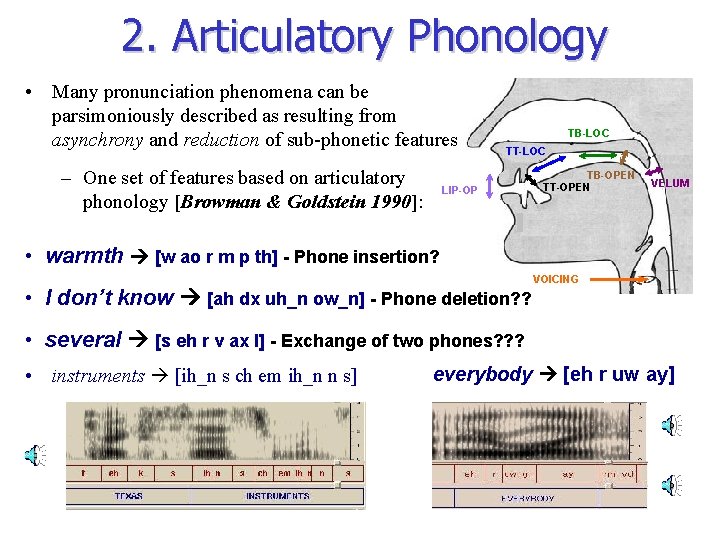

2. Articulatory Phonology
• Many pronunciation phenomena can be parsimoniously described as resulting from asynchrony and reduction of sub-phonetic features
– One set of features based on articulatory phonology [Browman & Goldstein 1990]: TB-LOC, TT-LOC, LIP-OP, TB-OPEN, TT-OPEN, VELUM, VOICING
• warmth [w ao r m p th]: phone insertion?
• I don’t know [ah dx uh_n ow_n]: phone deletion??
• several [s eh r v ax l]: exchange of two phones???
• instruments [ih_n s ch em ih_n n s]
• everybody [eh r uw ay]

Dynamic Bayesian Network Model (Livescu and Glass, 2004)
• The model is implemented as a dynamic Bayesian network (DBN):
– A representation, via a directed graph, of a distribution over a set of variables that evolve through time
• Example DBN with three features:
– Pr( async_{1;2} = a ) = Pr( |ind_1 − ind_2| = a )
– Table entries given by baseform pronunciations
[Figure: example DBN with its conditional probability tables; the table layout did not survive extraction]

The DBN-SVM Hybrid Developed at WS04
[Figure: DBN for the word sequence LIKE A, with layers Word, Canonical Form, Surface Form, Manner, Place, and SVM Outputs. Node values shown include tongue front, tongue closed, tongue open, tongue mid, semi-closed, vowel, glide, front, and palatal. SVM outputs include p( g_PGR(x) | palatal glide release ) and p( g_GR(x) | glide release ), where x is a multi-frame observation including spectrum, formants, and auditory model.]

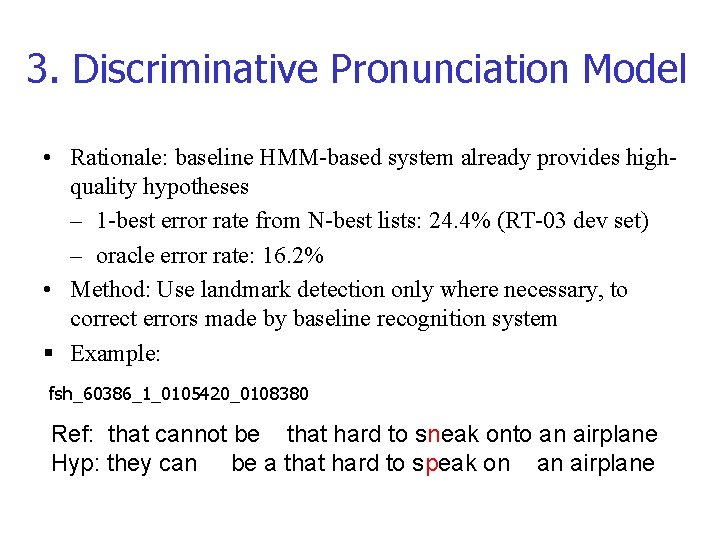

3. Discriminative Pronunciation Model
• Rationale: baseline HMM-based system already provides high-quality hypotheses
– 1-best error rate from N-best lists: 24.4% (RT-03 dev set)
– Oracle error rate: 16.2%
• Method: Use landmark detection only where necessary, to correct errors made by baseline recognition system
• Example: fsh_60386_1_0105420_0108380
Ref: that cannot be that hard to sneak onto an airplane
Hyp: they can be a that hard to speak on an airplane

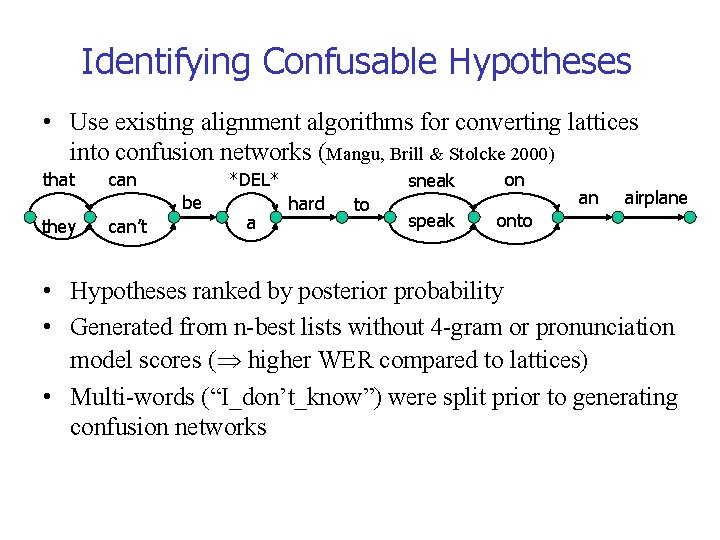
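The error rates quoted throughout the evaluation are word error rates: the minimum number of substitutions, insertions, and deletions needed to turn the hypothesis into the reference, divided by the reference length. A minimal sketch using the Ref/Hyp pair from the example above (the function name is illustrative; scoring tools used at the workshop may normalize text differently):

```python
def word_errors(ref, hyp):
    """Minimum number of word substitutions, insertions, and deletions
    between a reference and a hypothesis (Levenshtein distance on words)."""
    r, h = ref.split(), hyp.split()
    prev = list(range(len(h) + 1))  # distances against an empty reference
    for i, rw in enumerate(r, 1):
        cur = [i]
        for j, hw in enumerate(h, 1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (rw != hw)))  # match or substitution
        prev = cur
    return prev[-1]

ref = "that cannot be that hard to sneak onto an airplane"
hyp = "they can be a that hard to speak on an airplane"
errors = word_errors(ref, hyp)
wer = errors / len(ref.split())
```

For this utterance the alignment has four substitutions and one insertion, so the sketch gives 5 errors over 10 reference words.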

Identifying Confusable Hypotheses
• Use existing alignment algorithms for converting lattices into confusion networks (Mangu, Brill & Stolcke 2000)
• Example confusion network (words shown on the slide): that, can, *DEL*, be, they, can’t, a, hard, to, sneak, on, speak, onto, an, airplane
• Hypotheses ranked by posterior probability
• Generated from n-best lists without 4-gram or pronunciation model scores (higher WER compared to lattices)
• Multi-words (“I_don’t_know”) were split prior to generating confusion networks

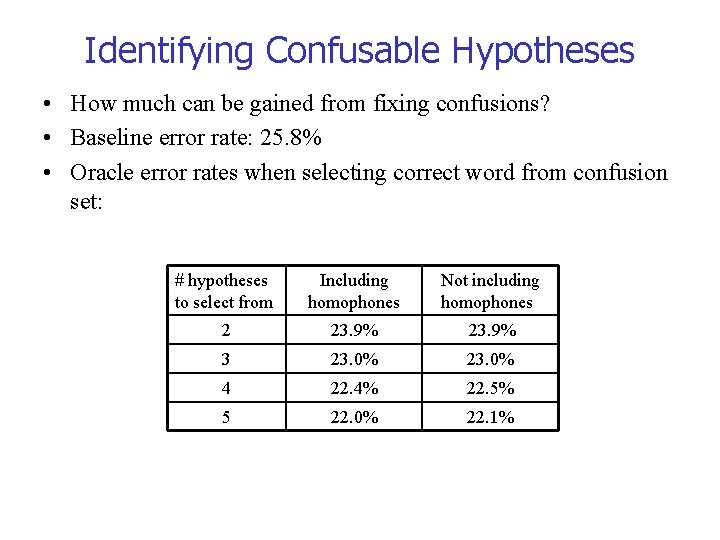

Identifying Confusable Hypotheses
• How much can be gained from fixing confusions?
• Baseline error rate: 25.8%
• Oracle error rates when selecting the correct word from the confusion set, by number of hypotheses to select from:
– 2 hypotheses: 23.9%
– 3 hypotheses: 23.0%
– 4 hypotheses: 22.4% (including homophones), 22.5% (not including homophones)
– 5 hypotheses: 22.0% (including homophones), 22.1% (not including homophones)

Selecting Relevant Landmarks • Not all landmarks are equally relevant for distinguishing between competing word hypotheses (e.g. vowel features are irrelevant for sneak vs. speak) • Using all available landmarks might deteriorate performance when irrelevant landmarks have weak scores (but: redundancy might be useful) • Automatic selection algorithm – Should optimally distinguish set of confusable words (discriminative) – Should rank landmark features according to their relevance for distinguishing words (i.e. output should be interpretable in phonetic terms) – Should be extendable to features beyond landmarks

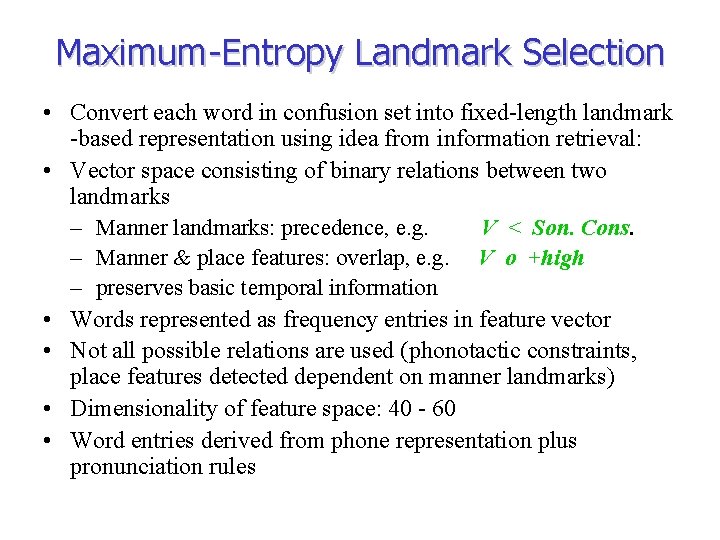

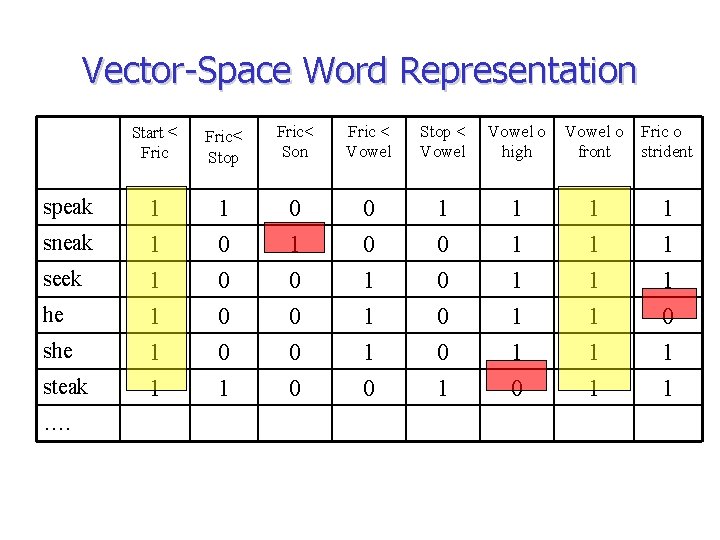

Maximum-Entropy Landmark Selection • Convert each word in confusion set into fixed-length landmark-based representation using idea from information retrieval: • Vector space consisting of binary relations between two landmarks – Manner landmarks: precedence, e.g. V < SonCons – Manner & place features: overlap, e.g. V o +high – preserves basic temporal information • Words represented as frequency entries in feature vector • Not all possible relations are used (phonotactic constraints, place features detected dependent on manner landmarks) • Dimensionality of feature space: 40-60 • Word entries derived from phone representation plus pronunciation rules

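Vector-Space Word Representation
• Words (rows): speak, sneak, seek, he, steak, …
• Landmark relations (columns): Start < Fric, Fric < Stop, Fric < Son, Fric < Vowel, Stop < Vowel, Vowel o high, Vowel o front, Fric o strident
• Binary entries in extracted order (row and column alignment lost): 1 1 1 1 0 0 0 0 1 1 1 0 0 0 0 1 1 1 1 1 0 1 1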

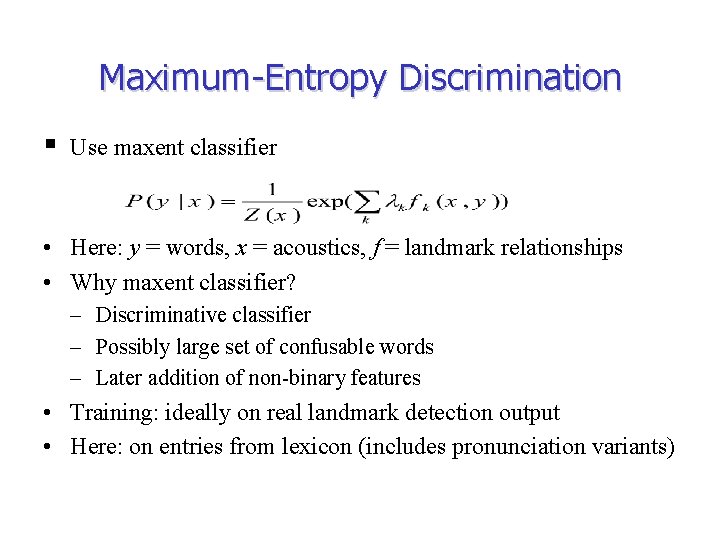
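The vector-space representation can be sketched as counting precedence relations between adjacent manner landmarks and overlap relations between a manner landmark and a place feature. In the example below the landmark sequences for speak and sneak, the vocabulary subset, and the function name are assumptions made for illustration; the actual entries were derived from the lexicon plus pronunciation rules.

```python
from collections import Counter

def relation_vector(manner_seq, overlaps, vocabulary):
    """Count 'A < B' precedence relations between adjacent manner landmarks
    and 'A o f' overlap relations, then read the counts off in vocabulary order."""
    counts = Counter(f"{a} < {b}" for a, b in zip(manner_seq, manner_seq[1:]))
    counts.update(f"{m} o {p}" for m, p in overlaps)
    return [counts[r] for r in vocabulary]

# Hypothetical subset of the relation vocabulary named on the slide.
vocabulary = ["Start < Fric", "Fric < Stop", "Fric < Son",
              "Stop < Vowel", "Vowel o high"]

# Hypothetical manner-landmark sequences for two confusable words.
speak = relation_vector(["Start", "Fric", "Stop", "Vowel", "Stop"],
                        [("Vowel", "high")], vocabulary)
sneak = relation_vector(["Start", "Fric", "Son", "Vowel", "Stop"],
                        [("Vowel", "high")], vocabulary)
```

The two vectors differ only in the relations involving the segment after the fricative, which is the kind of contrast the selection step is meant to find.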

Maximum-Entropy Discrimination • Use maxent classifier • Here: y = words, x = acoustics, f = landmark relationships • Why maxent classifier? – Discriminative classifier – Possibly large set of confusable words – Later addition of non-binary features • Training: ideally on real landmark detection output • Here: on entries from lexicon (includes pronunciation variants)
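For reference, the standard conditional maximum-entropy (log-linear) model has the form below, with y, x, and f as defined on this slide. The weights $\lambda_k$ and the feature index k are notation introduced here; the slide's own formula is not preserved in the transcript, so this is the textbook form rather than a confirmed copy of it.

$$p(y \mid x) = \frac{\exp\left(\sum_k \lambda_k f_k(x, y)\right)}{\sum_{y'} \exp\left(\sum_k \lambda_k f_k(x, y')\right)}$$

The sum in the denominator runs over the words y' in the confusion set, so the model only has to discriminate among the competing hypotheses.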

Maximum-Entropy Discrimination
• Example: sneak vs. speak, feature weights:
– sneak: SC ○ +blade 2.47; FR < SC 2.47; FR < SIL -2.11; SIL < ST -1.75; …
– speak: SC ○ +blade -2.47; FR < SC -2.47; FR < SIL 2.11; SIL < ST 1.75; …
• A different model is trained for each confusion set, so landmarks can have different weights in different contexts

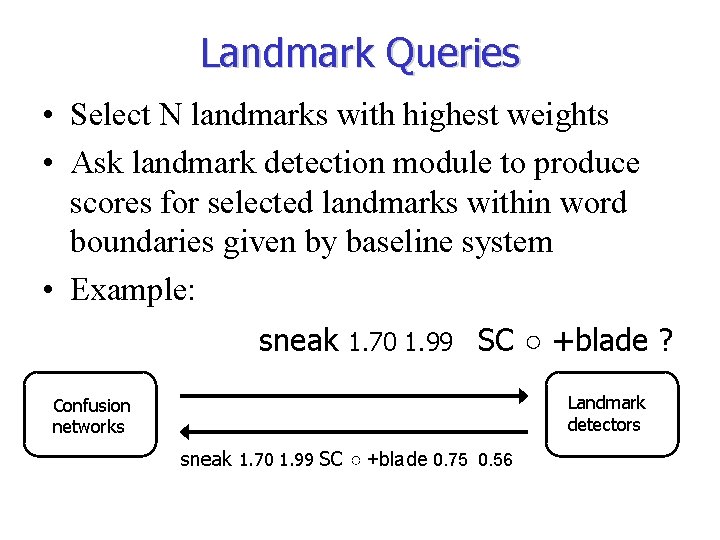

Landmark Queries
• Select N landmarks with highest weights
• Ask landmark detection module to produce scores for selected landmarks within word boundaries given by baseline system
• Example: for sneak, the confusion network side sends a query for its selected landmarks (weights 1.70 and 1.99, including SC ○ +blade); the landmark detectors return scores 0.75 and 0.56
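Selecting the N highest-weighted landmarks for a query is a simple ranking step. The sketch below uses the speak weights from the sneak vs. speak example; the function name and the dictionary layout are assumptions, not the interface of the WS04 landmark detection module.

```python
def select_landmarks(weights, n):
    """Return the n landmark relations with the highest maxent weights."""
    return sorted(weights, key=weights.get, reverse=True)[:n]

# Weights for the hypothesis "speak" in the sneak vs. speak confusion set.
speak_weights = {
    "SC ○ +blade": -2.47,
    "FR < SC": -2.47,
    "FR < SIL": 2.11,
    "SIL < ST": 1.75,
}
queries = select_landmarks(speak_weights, 2)
```

Only the selected relations are then sent to the landmark detectors, restricted to the word boundaries supplied by the baseline system.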

III. Evaluation

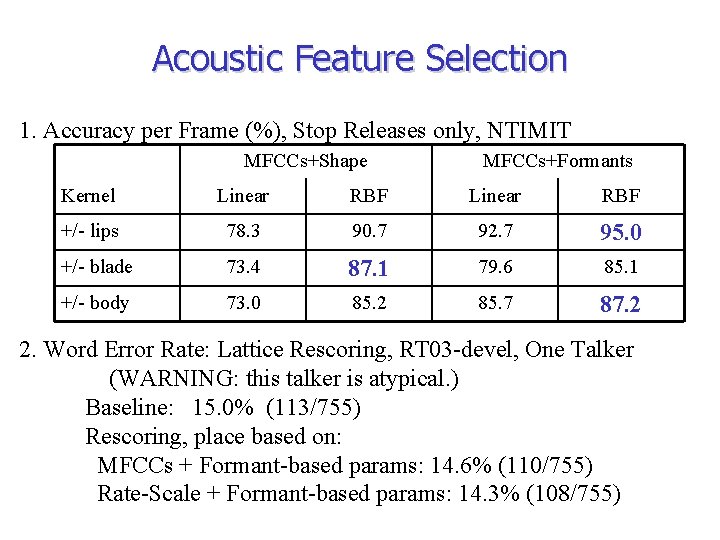

Acoustic Feature Selection

1. Accuracy per Frame (%), Stop Releases only, NTIMIT

| Feature | MFCCs+Shape, Linear | MFCCs+Shape, RBF | MFCCs+Formants, Linear | MFCCs+Formants, RBF |
|---|---|---|---|---|
| +/- lips | 78.3 | 90.7 | 92.7 | 95.0 |
| +/- blade | 73.4 | 87.1 | 79.6 | 85.1 |
| +/- body | 73.0 | 85.2 | 85.7 | 87.2 |

2. Word Error Rate: Lattice Rescoring, RT03-devel, One Talker (WARNING: this talker is atypical.)
• Baseline: 15.0% (113/755)
• Rescoring, place based on MFCCs + formant-based params: 14.6% (110/755)
• Rescoring, place based on Rate-Scale + formant-based params: 14.3% (108/755)

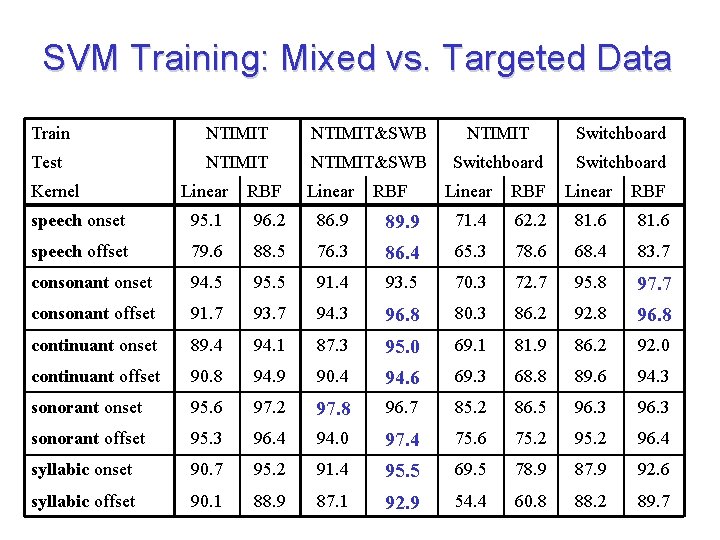

SVM Training: Mixed vs. Targeted Data
Accuracy (%) per landmark detector. Header as extracted: Train: NTIMIT&SWB, NTIMIT, Switchboard; Test: NTIMIT&SWB, Switchboard; Kernel: Linear, RBF for each train/test condition. Values are listed in column order; some rows are missing a cell.
• speech onset: 95.1, 96.2, 86.9, 89.9, 71.4, 62.2, 81.6
• speech offset: 79.6, 88.5, 76.3, 86.4, 65.3, 78.6, 68.4, 83.7
• consonant onset: 94.5, 95.5, 91.4, 93.5, 70.3, 72.7, 95.8, 97.7
• consonant offset: 91.7, 93.7, 94.3, 96.8, 80.3, 86.2, 92.8, 96.8
• continuant onset: 89.4, 94.1, 87.3, 95.0, 69.1, 81.9, 86.2, 92.0
• continuant offset: 90.8, 94.9, 90.4, 94.6, 69.3, 68.8, 89.6, 94.3
• sonorant onset: 95.6, 97.2, 97.8, 96.7, 85.2, 86.5, 96.3
• sonorant offset: 95.3, 96.4, 94.0, 97.4, 75.6, 75.2, 96.4
• syllabic onset: 90.7, 95.2, 91.4, 95.5, 69.5, 78.9, 87.9, 92.6
• syllabic offset: 90.1, 88.9, 87.1, 92.9, 54.4, 60.8, 88.2, 89.7

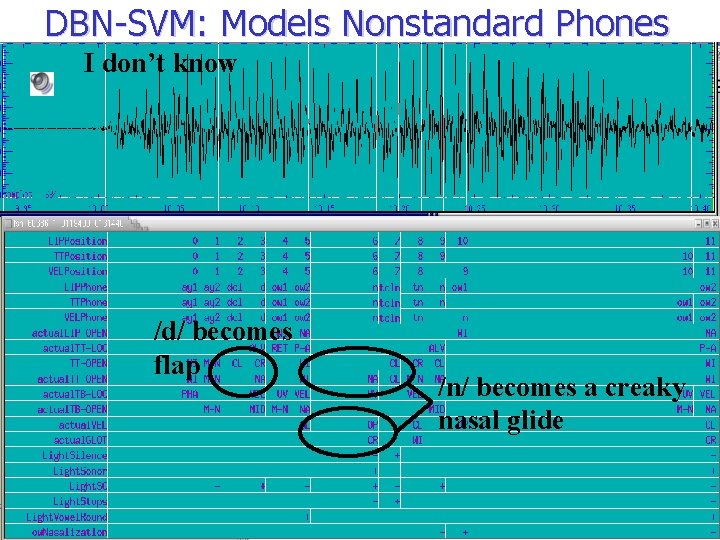

DBN-SVM: Models Nonstandard Phones
Example: “I don’t know”: /d/ becomes a flap; /n/ becomes a creaky nasal glide

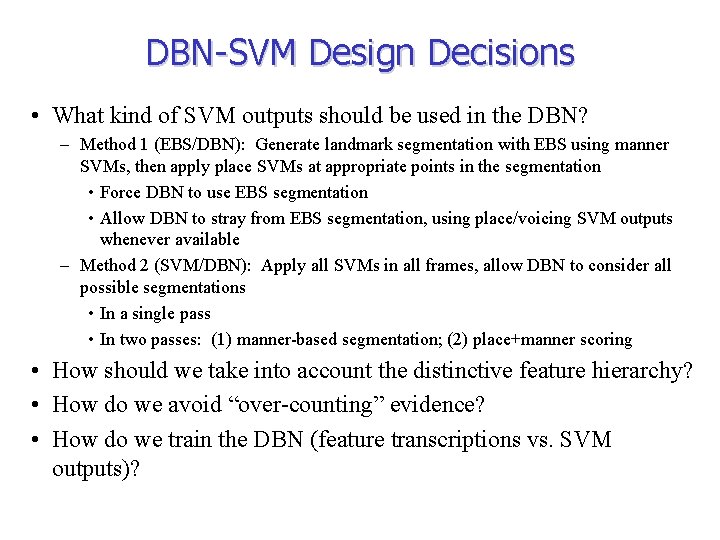

DBN-SVM Design Decisions • What kind of SVM outputs should be used in the DBN? – Method 1 (EBS/DBN): Generate landmark segmentation with EBS using manner SVMs, then apply place SVMs at appropriate points in the segmentation • Force DBN to use EBS segmentation • Allow DBN to stray from EBS segmentation, using place/voicing SVM outputs whenever available – Method 2 (SVM/DBN): Apply all SVMs in all frames, allow DBN to consider all possible segmentations • In a single pass • In two passes: (1) manner-based segmentation; (2) place+manner scoring • How should we take into account the distinctive feature hierarchy? • How do we avoid “over-counting” evidence? • How do we train the DBN (feature transcriptions vs. SVM outputs)?

DBN-SVM Rescoring Experiments
• For each lattice edge:
– SVM probabilities computed over edge duration and used as soft evidence in DBN
– DBN computes a score S ∝ P(word | evidence)
– Final edge score is a weighted interpolation of baseline scores and EBS/DBN or SVM/DBN score
• Results table, columns listed in slide order (row alignment between columns was lost in extraction):
– Date: -, Jul 31_0, Aug 1_19, Aug 2_19, Aug 4_2, Aug 6_20, Aug 7_3, Aug 8_19, Aug 11_19, Aug 14_0, Aug 14_20
– Experimental setup: Baseline; EBS/DBN, “hierarchically-normalized” SVM output probabilities, DBN trained on subset of ICSI transcriptions; + improved silence modeling; EBS/DBN, unnormalized SVM probs + fricative lip feature; + DBN trained using SVM outputs; + full feature hierarchy in DBN; + reduction probabilities depend on word frequency; + retrained SVMs + nasal classifier + DBN bug fixes; SVM/DBN, 1 pass; SVM/DBN, 2 pass, using only high-accuracy SVMs
– 3-speaker WER (# errors), with RT03 dev WER after the slash where given: 27.7 (550) / 26.8; 27.6 (549); 27.3 (543) / 26.8; 27.3 (543); 27.4 (545); 27.4 (544); Miserable failure!; 27.3 (542); 27.2 (541)

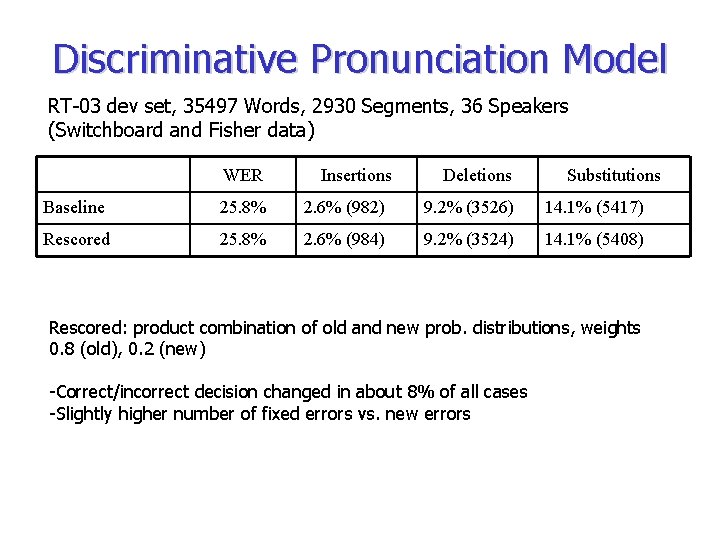

Discriminative Pronunciation Model
RT-03 dev set, 35497 words, 2930 segments, 36 speakers (Switchboard and Fisher data)

| System | WER | Insertions | Deletions | Substitutions |
|---|---|---|---|---|
| Baseline | 25.8% | 2.6% (982) | 9.2% (3526) | 14.1% (5417) |
| Rescored | 25.8% | 2.6% (984) | 9.2% (3524) | 14.1% (5408) |

• Rescored: product combination of old and new probability distributions, weights 0.8 (old), 0.2 (new)
• Correct/incorrect decision changed in about 8% of all cases
• Slightly higher number of fixed errors vs. new errors
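The product combination with weights 0.8 (old) and 0.2 (new) can be sketched as a weighted geometric mean of the two distributions over a confusion set. The renormalization over the set, the function name, and the example probabilities are assumptions for illustration; the slide gives only the weights.

```python
def product_combination(p_old, p_new, w_old=0.8, w_new=0.2):
    """Combine two distributions over the same confusion set as
    p_old**w_old * p_new**w_new, renormalized to sum to 1."""
    unnorm = {w: (p_old[w] ** w_old) * (p_new[w] ** w_new) for w in p_old}
    z = sum(unnorm.values())
    return {w: v / z for w, v in unnorm.items()}

# Hypothetical posteriors: the baseline prefers "speak",
# the landmark-based model strongly prefers "sneak".
baseline = {"sneak": 0.4, "speak": 0.6}
landmark = {"sneak": 0.9, "speak": 0.1}
combined = product_combination(baseline, landmark)
best_word = max(combined, key=combined.get)
```

With a small weight on the new model, a decision flips only when the landmark evidence is strong relative to the baseline margin, which is consistent with decisions changing in a minority of cases.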

Analysis
• When does it work?
– Detectors give high probability for the correct distinguishing feature: mean (correct) vs. me (false): V < +nasal 0.76
• When does it not work?
– Problems in lexicon representation: once (correct) vs. what (false): Sil ○ +blade 0.87; can’t [kæ t] (correct) vs. cat (false): SC ○ +nasal 0.26
– Landmark detectors are confident but wrong: like (correct) vs. liked (false): Sil ○ +blade 0.95

Analysis • Incorrect landmark scores are often due to word boundary effects, e.g.: much he she • Word boundaries given by baseline system may exclude relevant landmarks or include parts of neighbouring words • DBN-SVM system also failed when word boundaries were grossly misaligned

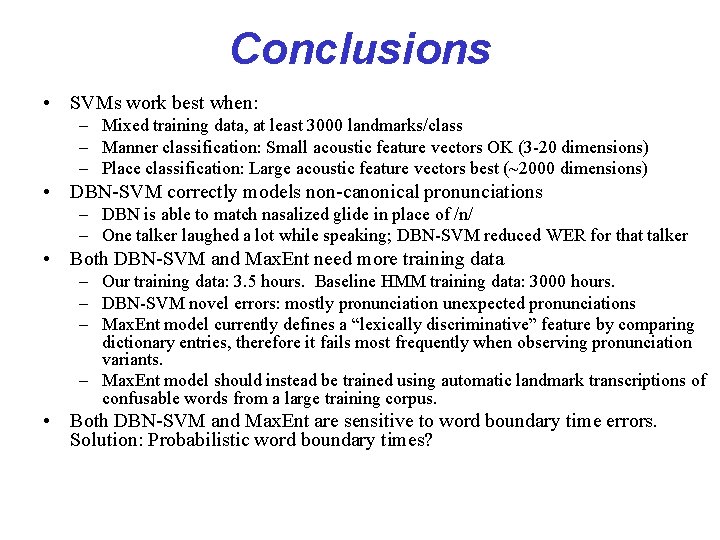

Conclusions • SVMs work best when: – Mixed training data, at least 3000 landmarks/class – Manner classification: Small acoustic feature vectors OK (3-20 dimensions) – Place classification: Large acoustic feature vectors best (~2000 dimensions) • DBN-SVM correctly models non-canonical pronunciations – DBN is able to match nasalized glide in place of /n/ – One talker laughed a lot while speaking; DBN-SVM reduced WER for that talker • Both DBN-SVM and MaxEnt need more training data – Our training data: 3.5 hours. Baseline HMM training data: 3000 hours. – DBN-SVM novel errors: mostly unexpected pronunciations – MaxEnt model currently defines a “lexically discriminative” feature by comparing dictionary entries; therefore it fails most frequently when observing pronunciation variants. – MaxEnt model should instead be trained using automatic landmark transcriptions of confusable words from a large training corpus. • Both DBN-SVM and MaxEnt are sensitive to word boundary time errors. Solution: Probabilistic word boundary times?
