KNOWLEDGE GRAPH AND CORPUS DRIVEN SEGMENTATION AND ANSWER INFERENCE FOR TELEGRAPHIC ENTITY-SEEKING QUERIES

- Slides: 28

KNOWLEDGE GRAPH AND CORPUS DRIVEN SEGMENTATION AND ANSWER INFERENCE FOR TELEGRAPHIC ENTITY-SEEKING QUERIES

EMNLP 2014

- MANDAR JOSHI, IBM RESEARCH (mandarj90@in.ibm.com)
- UMA SAWANT, IIT BOMBAY, YAHOO LABS (uma@cse.iitb.ac.in)
- SOUMEN CHAKRABARTI, IIT BOMBAY (soumen@cse.iitb.ac.in)

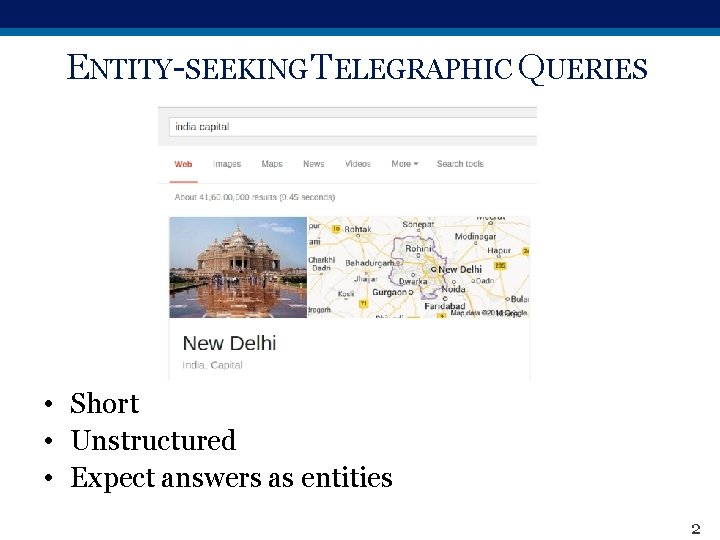

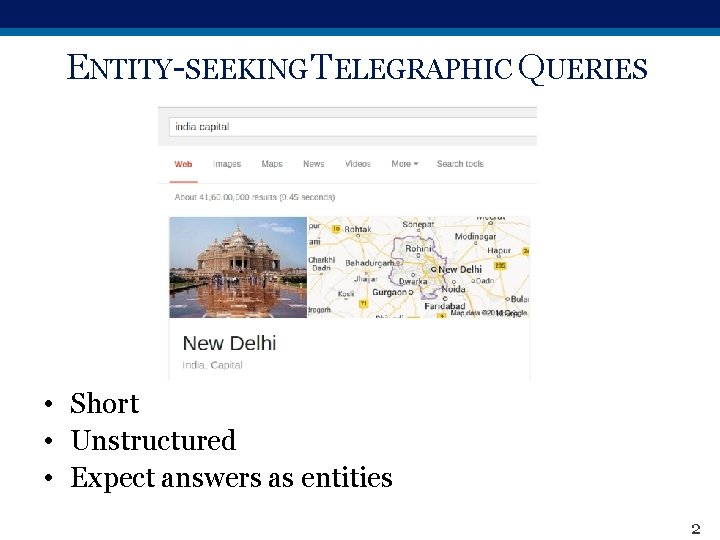

ENTITY-SEEKING TELEGRAPHIC QUERIES

- Short
- Unstructured
- Expect answers as entities

CHALLENGES

- No reliable syntax clues
  - Free word order
  - No or rare capitalization
  - Quoted phrases are rare
- Ambiguous
  - Multiple interpretations
  - aamir khan films
    - Aamir Khan: the Indian actor or British boxer
    - Films: appeared in, directed by, or about
- Difficult to exploit redundancy

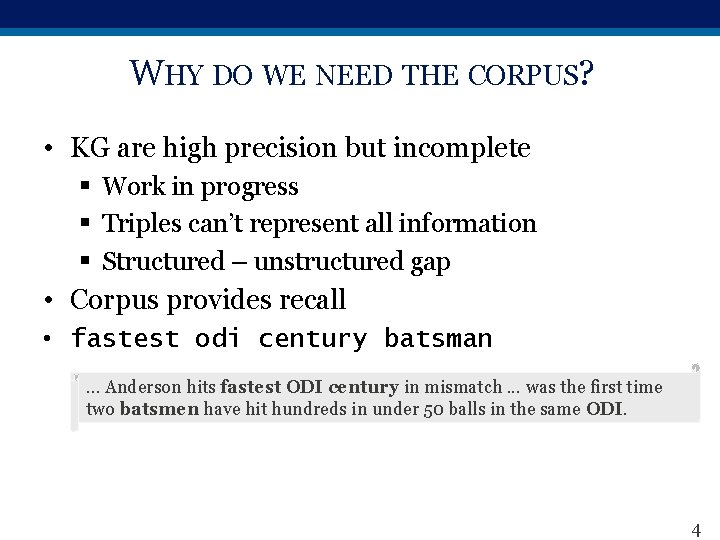

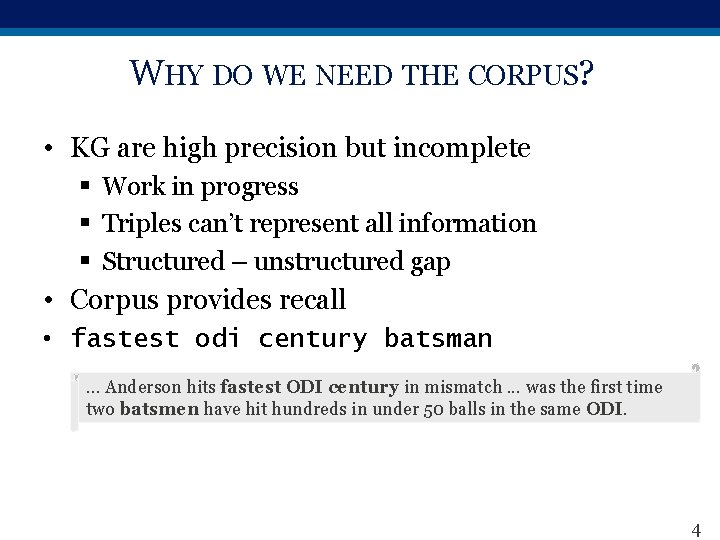

WHY DO WE NEED THE CORPUS?

- KGs are high precision but incomplete
  - Work in progress
  - Triples can’t represent all information
  - Structured – unstructured gap
- Corpus provides recall
- fastest odi century batsman
  - "… Anderson hits fastest ODI century in mismatch ... was the first time two batsmen have hit hundreds in under 50 balls in the same ODI."

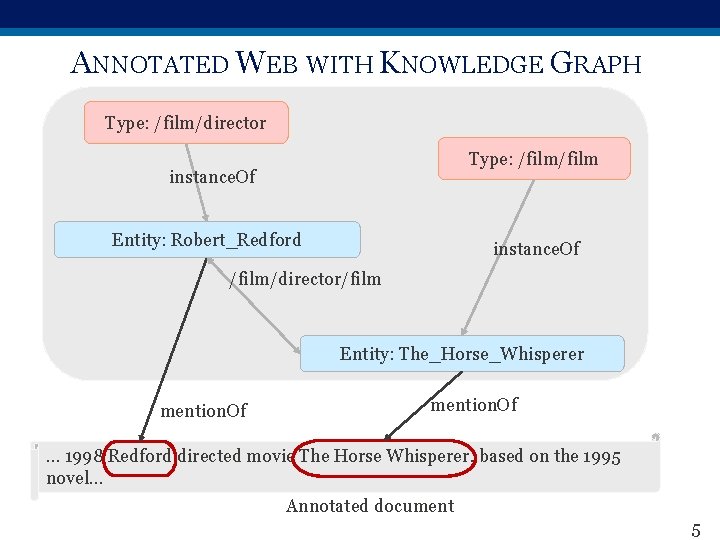

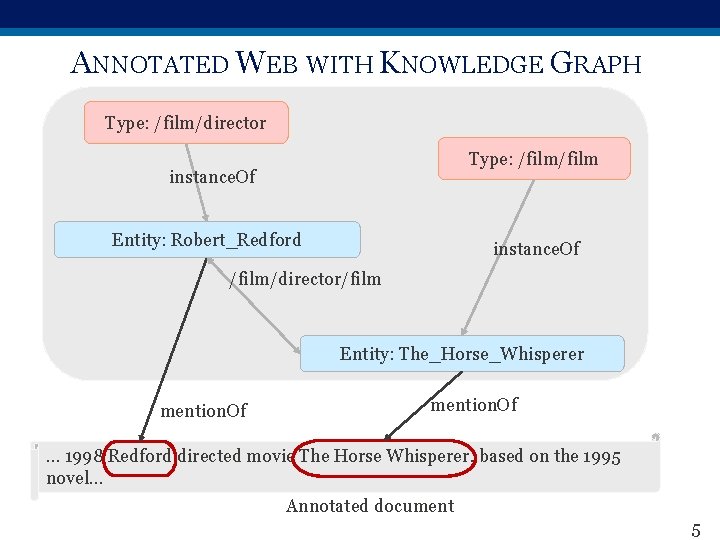

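ANNOTATED WEB WITH KNOWLEDGE GRAPH

[Figure: knowledge graph linked to an annotated document. Entity: Robert_Redford is instanceOf Type: /film/director; Entity: The_Horse_Whisperer is instanceOf Type: /film; the relation /film/director/film connects the two entities. Both entities are linked by mentionOf to the annotated document text "… 1998 Redford directed movie The Horse Whisperer, based on the 1995 novel…".]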
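The annotated-web picture can be sketched as two small record types: KG triples and entity mentions anchored to character offsets in a document. This is only an illustration of the data shape; the class names `Triple` and `Mention`, the helper functions, and the offset-based representation are assumptions, not the authors' storage format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Triple:
    subject: str
    predicate: str
    obj: str

@dataclass(frozen=True)
class Mention:
    entity: str   # KG entity the text span refers to (mentionOf)
    start: int    # character offset where the span begins
    end: int      # character offset just past the span

# Triples taken from the slide's example.
kg = [
    Triple("Robert_Redford", "instanceOf", "/film/director"),
    Triple("The_Horse_Whisperer", "instanceOf", "/film"),
    Triple("Robert_Redford", "/film/director/film", "The_Horse_Whisperer"),
]

text = "1998 Redford directed movie The Horse Whisperer, based on the 1995 novel"

def annotate(entity, surface):
    """Locate the first occurrence of `surface` and tie it to `entity`."""
    start = text.index(surface)
    return Mention(entity, start, start + len(surface))

mentions = [
    annotate("Robert_Redford", "Redford"),
    annotate("The_Horse_Whisperer", "The Horse Whisperer"),
]

def types_of(entity):
    """All types the KG asserts for an entity via instanceOf."""
    return [t.obj for t in kg if t.subject == entity and t.predicate == "instanceOf"]
```

With this shape, a corpus snippet can be joined to the graph by looking up each mention's entity and then its types or relations.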

INTERPRETATION VIA SEGMENTATION

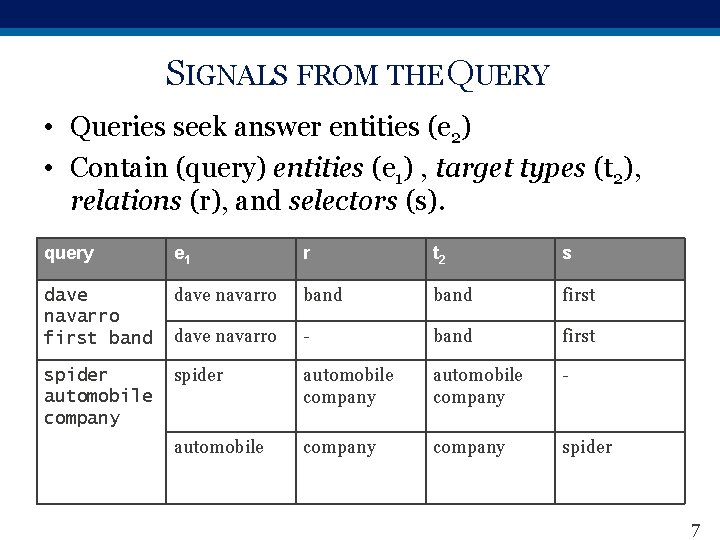

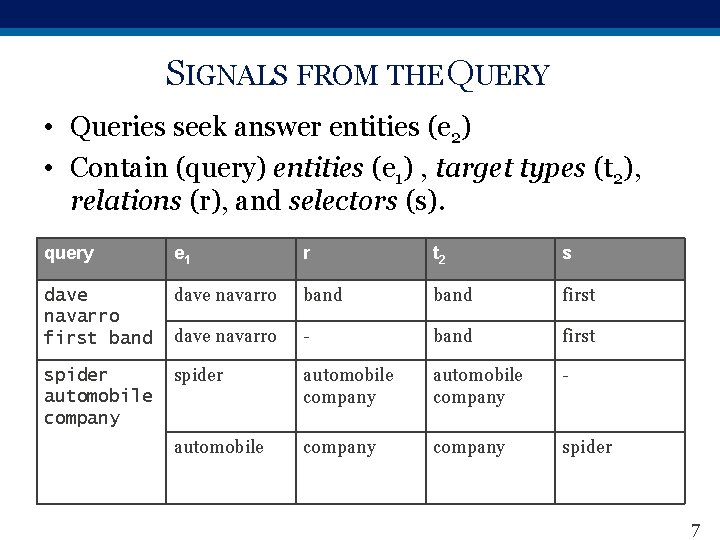

SIGNALS FROM THE QUERY

- Queries seek answer entities (e2)
- Queries contain query entities (e1), target types (t2), relations (r), and selectors (s)

query e1 r t2 s dave navarro first band dave navarro band first dave navarro - band first spider automobile company - automobile company spider

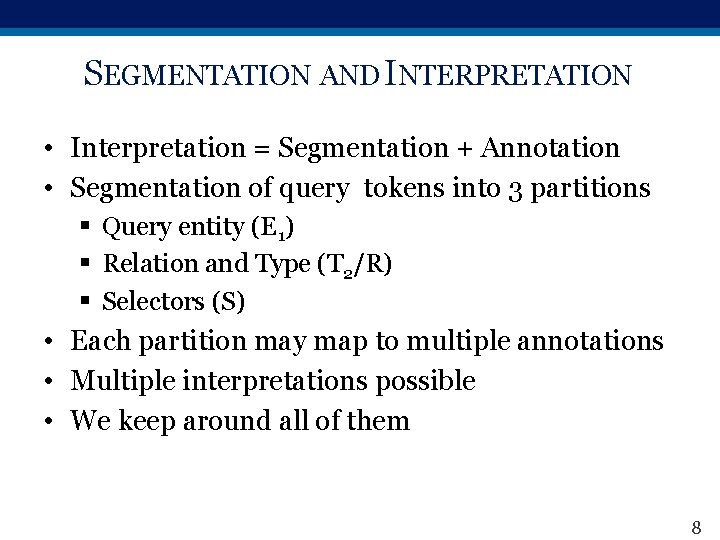

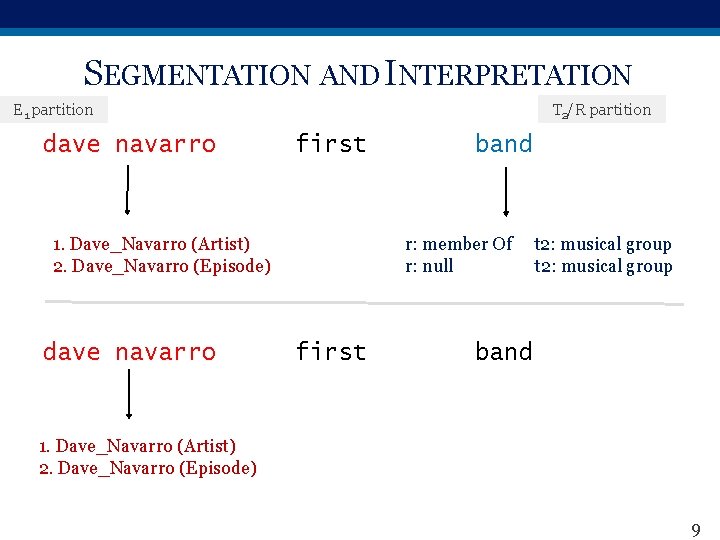

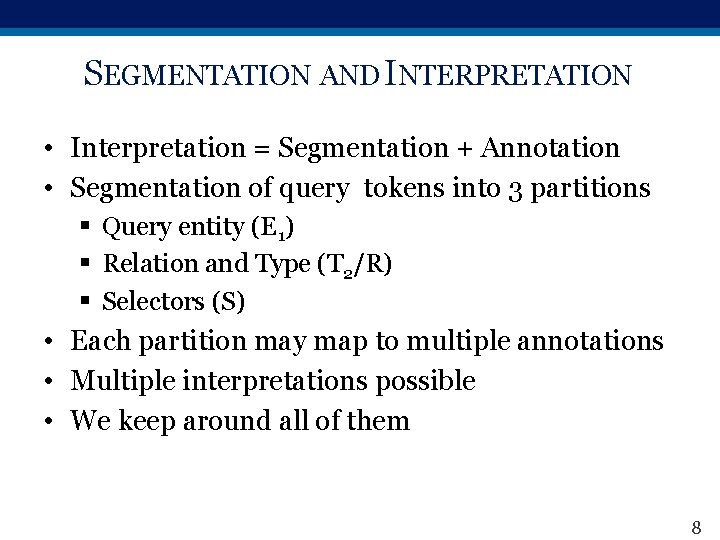

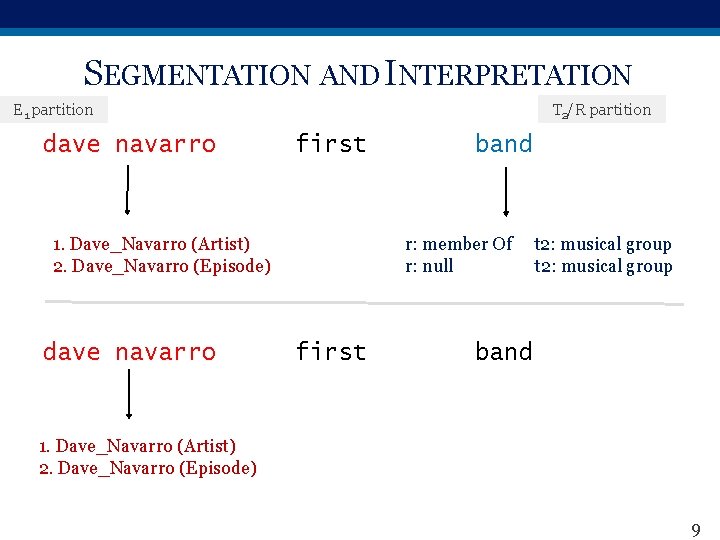

SEGMENTATION AND INTERPRETATION

- Interpretation = Segmentation + Annotation
- Segmentation of query tokens into 3 partitions
  - Query entity (E1)
  - Relation and type (T2/R)
  - Selectors (S)
- Each partition may map to multiple annotations
- Multiple interpretations are possible
- We keep all of them
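As a rough illustration of the segmentation step, the sketch below enumerates every way of assigning query tokens to the three partitions. The requirement that E1 tokens form one contiguous span is an assumption made here to keep the space small; the function names are hypothetical, and no annotation (mapping partitions to KG entities, types, or relations) is attempted.

```python
from itertools import product

PARTITIONS = ("E1", "T2/R", "S")

def is_contiguous(labels, target):
    """True if positions labelled `target` form one unbroken run (or none exist)."""
    idx = [i for i, lab in enumerate(labels) if lab == target]
    return not idx or idx[-1] - idx[0] + 1 == len(idx)

def segmentations(tokens):
    """Yield each assignment of tokens to partitions as a dict of token lists.

    Assumption: the query entity mention (E1) must be a contiguous span.
    """
    for labels in product(PARTITIONS, repeat=len(tokens)):
        if not is_contiguous(labels, "E1"):
            continue
        seg = {p: [] for p in PARTITIONS}
        for tok, lab in zip(tokens, labels):
            seg[lab].append(tok)
        yield seg

all_segs = list(segmentations("dave navarro first band".split()))

# One of the enumerated segmentations matches the slide's running example.
example = {"E1": ["dave", "navarro"], "T2/R": ["band"], "S": ["first"]}
found = example in all_segs
```

Keeping every segmentation, rather than committing to one, mirrors the slide's point that all interpretations are retained for later scoring.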

SEGMENTATION AND INTERPRETATION

[Figure: two interpretations of the query tokens "dave navarro first band". E1 partition: "dave navarro", with candidate entities 1. Dave_Navarro (Artist) and 2. Dave_Navarro (Episode). T2/R partition: "band", read either as relation r: memberOf, or as r: null with target type t2: musical group. Selector: "first".]

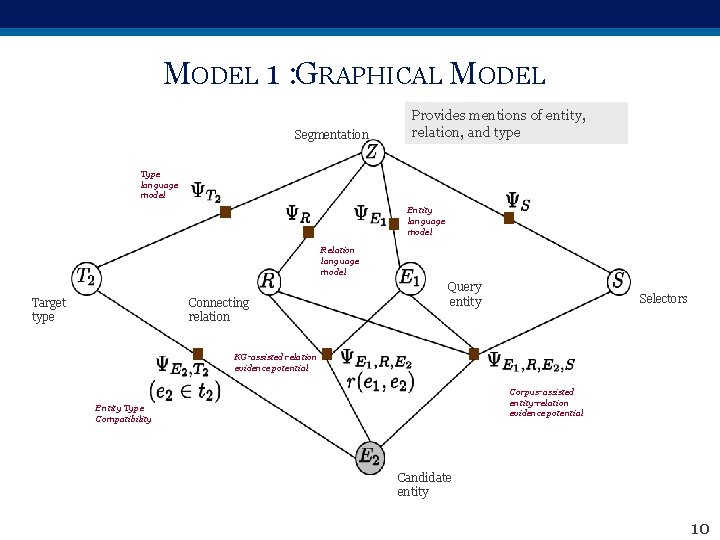

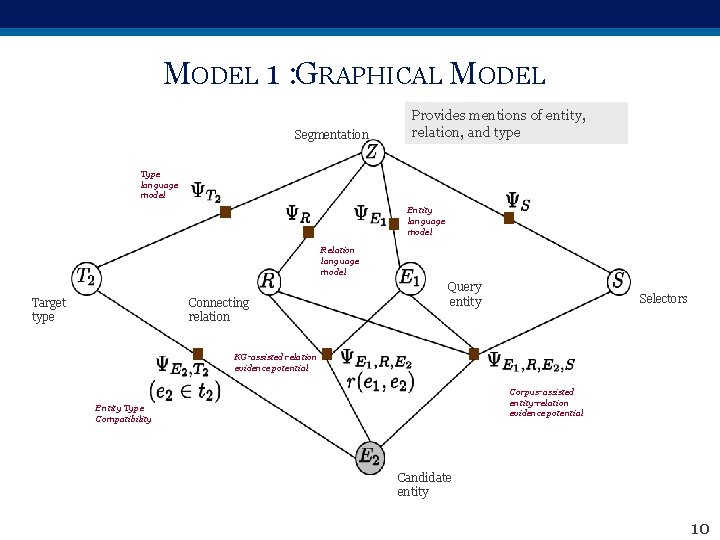

MODEL 1: GRAPHICAL MODEL

[Figure: graphical model. The segmentation provides mentions of entity, relation, and type. Variables: query entity, connecting relation, target type, selectors, candidate entity. Potentials: entity language model, relation language model, type language model, KG-assisted relation evidence potential, corpus-assisted entity-relation evidence potential, entity-type compatibility.]

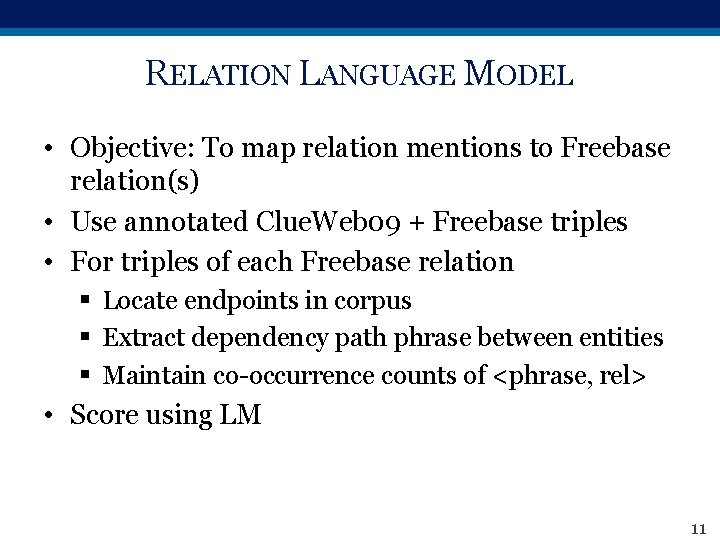

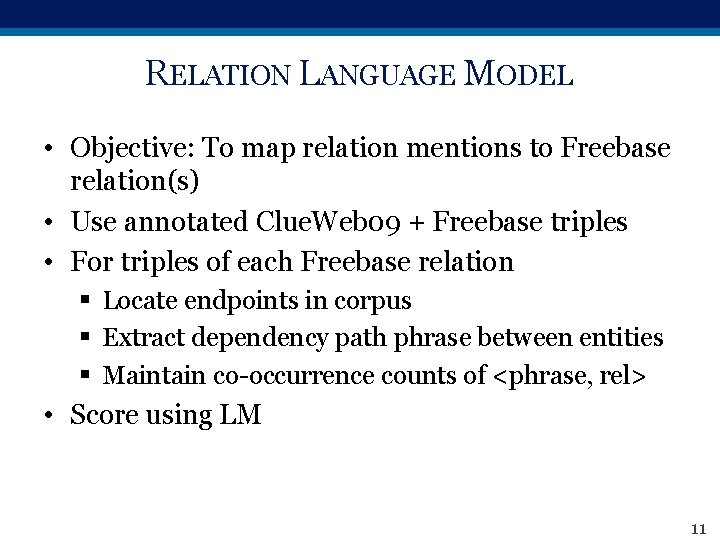

RELATION LANGUAGE MODEL

- Objective: map relation mentions to Freebase relation(s)
- Use annotated ClueWeb09 + Freebase triples
- For triples of each Freebase relation
  - Locate endpoints in corpus
  - Extract dependency path phrase between entities
  - Maintain co-occurrence counts of <phrase, rel>
- Score using LM
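The counting and scoring steps above can be sketched as follows. The slide does not say which smoothing the language model uses, so additive smoothing with `alpha` is an assumption, as are the function names and the toy relation labels `REL_MEMBER_OF` and `REL_DIRECTED` (placeholders, not real Freebase identifiers). Dependency-path extraction is assumed to have happened already.

```python
from collections import Counter, defaultdict

def build_counts(pairs):
    """Accumulate co-occurrence counts of <phrase, rel> pairs."""
    counts = defaultdict(Counter)
    for phrase, rel in pairs:
        counts[rel][phrase] += 1
    return counts

def phrase_prob(phrase, rel, counts, vocab_size, alpha=1.0):
    """Additively smoothed estimate of P(phrase | rel); alpha is an assumption."""
    rel_counts = counts[rel]
    total = sum(rel_counts.values())
    return (rel_counts[phrase] + alpha) / (total + alpha * vocab_size)

def rank_relations(phrase, counts, vocab_size):
    """Relations ordered by how well they explain the phrase."""
    return sorted(
        counts,
        key=lambda rel: phrase_prob(phrase, rel, counts, vocab_size),
        reverse=True,
    )

# Made-up observations standing in for phrases extracted from the corpus.
pairs = (
    [("member of", "REL_MEMBER_OF")] * 3
    + [("band", "REL_MEMBER_OF")] * 2
    + [("directed", "REL_DIRECTED")] * 4
)
counts = build_counts(pairs)
vocab_size = len({phrase for phrase, _ in pairs})
ranking = rank_relations("band", counts, vocab_size)
```

On this toy data the hint "band" ranks `REL_MEMBER_OF` first, because that relation's phrase counts include it while the other relation only receives smoothing mass.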

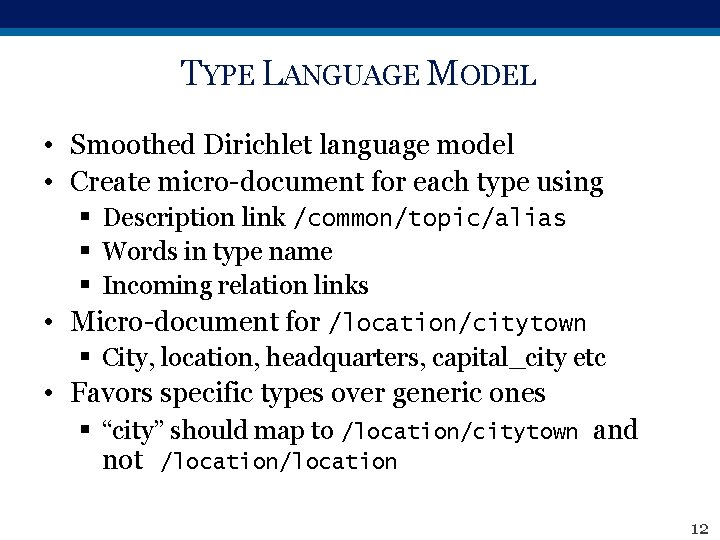

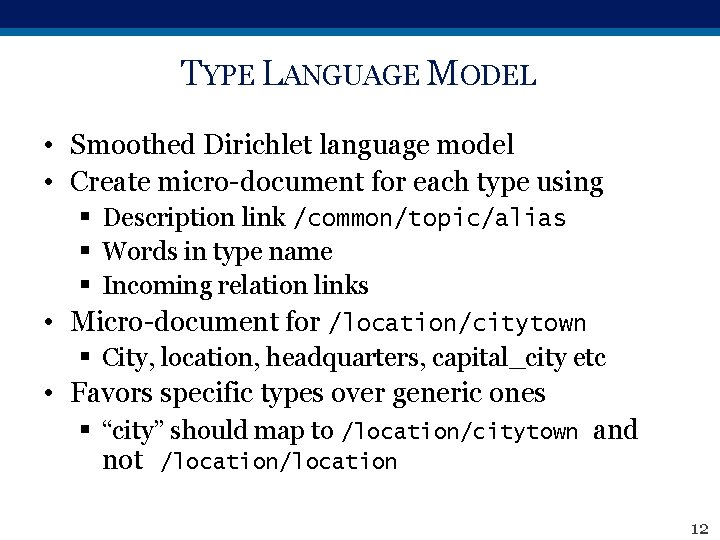

TYPE LANGUAGE MODEL

- Smoothed Dirichlet language model
- Create micro-document for each type using
  - Description link /common/topic/alias
  - Words in type name
  - Incoming relation links
- Micro-document for /location/citytown
  - City, location, headquarters, capital_city etc
- Favors specific types over generic ones
  - “city” should map to /location/citytown and not /location
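A minimal sketch of scoring a type hint against micro-documents with Dirichlet smoothing, P(w | d) = (c(w, d) + mu * P(w | C)) / (|d| + mu). The micro-document for /location is invented for illustration, `mu = 10` is an arbitrary choice, and the add-one floor on the collection model is an assumption added only to avoid a zero probability for unseen words.

```python
import math
from collections import Counter

# Toy micro-documents; the /location word list is hypothetical.
MICRO_DOCS = {
    "/location/citytown": ["city", "location", "headquarters", "capital_city"],
    "/location": ["location", "place", "contains", "region"],
}

def dirichlet_log_score(hint, doc_words, collection, mu=10.0):
    """Log-probability of the hint words under a Dirichlet-smoothed document model."""
    doc = Counter(doc_words)
    coll_total = sum(collection.values())
    vocab = len(collection) + 1  # one extra slot for unseen words (assumption)
    log_p = 0.0
    for w in hint:
        p_coll = (collection[w] + 1) / (coll_total + vocab)
        log_p += math.log((doc[w] + mu * p_coll) / (len(doc_words) + mu))
    return log_p

collection = Counter(w for words in MICRO_DOCS.values() for w in words)
scores = {
    t: dirichlet_log_score(["city"], words, collection)
    for t, words in MICRO_DOCS.items()
}
best_type = max(scores, key=scores.get)
```

Here the hint "city" scores higher for /location/citytown because the word occurs in that micro-document, while /location only receives the smoothed background probability.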

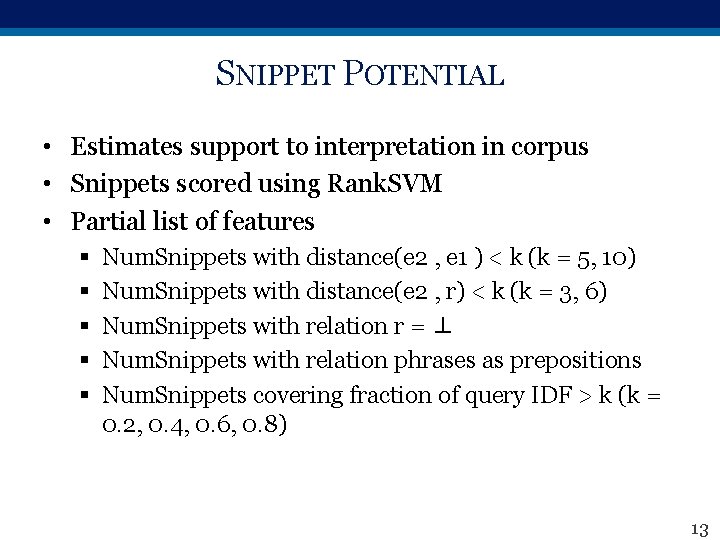

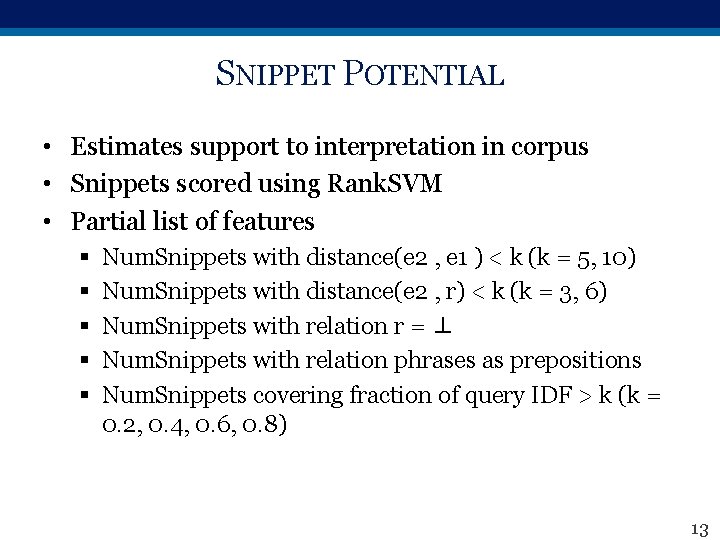

SNIPPET POTENTIAL

- Estimates support for an interpretation in the corpus
- Snippets scored using RankSVM
- Partial list of features
  - Number of snippets with distance(e2, e1) < k (k = 5, 10)
  - Number of snippets with distance(e2, r) < k (k = 3, 6)
  - Number of snippets with relation r = ⊥
  - Number of snippets with relation phrases as prepositions
  - Number of snippets covering fraction of query IDF > k (k = 0.2, 0.4, 0.6, 0.8)
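The two distance features in the list can be sketched as simple counts over snippets. The snippet representation (a dict of token positions for the e1, e2, and r mentions, with `None` when a mention is absent) and all names are hypothetical; the resulting feature values would then be passed to a ranker such as RankSVM, which is not shown.

```python
def count_close_snippets(snippets, key_a, key_b, k):
    """Number of snippets whose two annotated positions are fewer than k tokens apart."""
    n = 0
    for s in snippets:
        a, b = s.get(key_a), s.get(key_b)
        if a is not None and b is not None and abs(a - b) < k:
            n += 1
    return n

def distance_features(snippets):
    """Distance-based counts, using the thresholds listed on the slide."""
    feats = {}
    for k in (5, 10):
        feats[f"e2_e1_dist<{k}"] = count_close_snippets(snippets, "e2", "e1", k)
    for k in (3, 6):
        feats[f"e2_r_dist<{k}"] = count_close_snippets(snippets, "e2", "r", k)
    return feats

# Made-up token positions for three snippets.
snippets = [
    {"e1": 0, "e2": 3, "r": 1},
    {"e1": 2, "e2": 10, "r": None},   # no relation phrase found
    {"e1": 0, "e2": 20, "r": 15},
]
features = distance_features(snippets)
```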

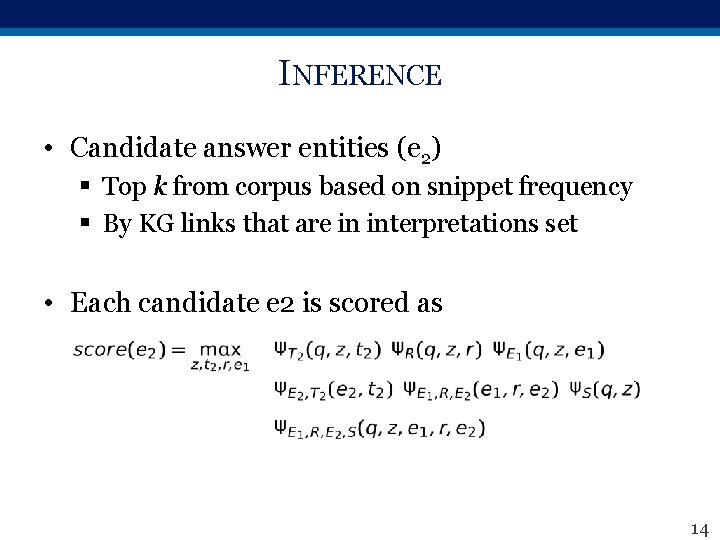

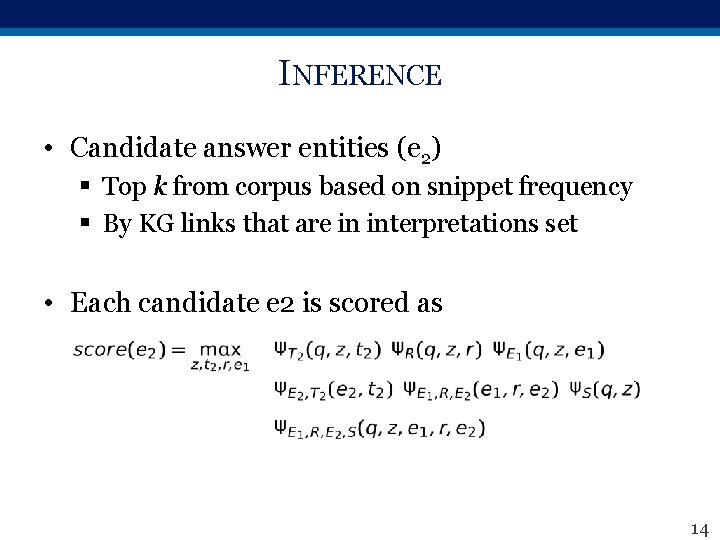

INFERENCE

- Candidate answer entities (e2)
  - Top k from corpus based on snippet frequency
  - Reached via KG links that appear in the interpretation set
- Each candidate e2 is scored as

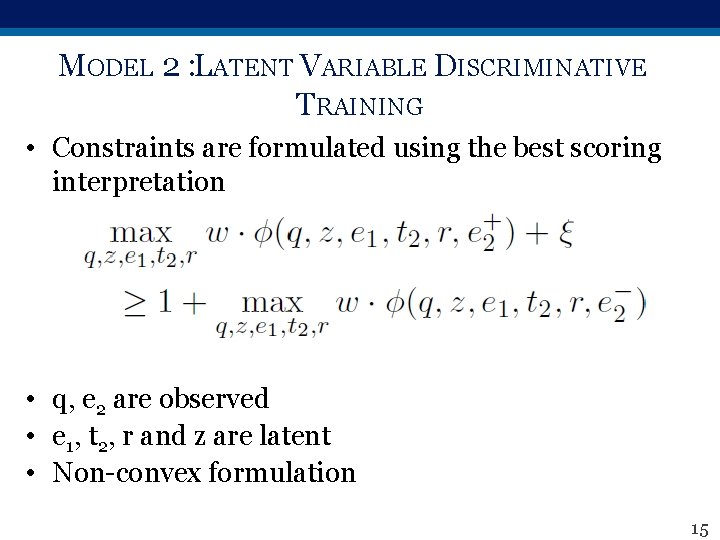

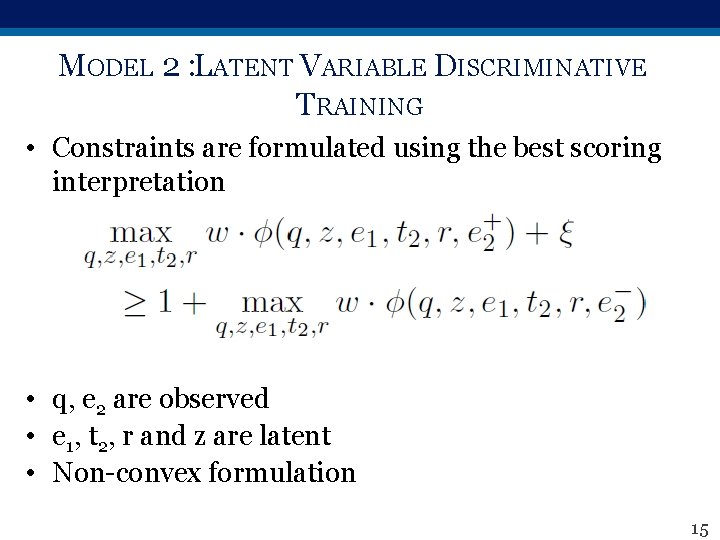

MODEL 2: LATENT VARIABLE DISCRIMINATIVE TRAINING

- Constraints are formulated using the best scoring interpretation
- q, e2 are observed
- e1, t2, r and z are latent
- Non-convex formulation
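One common way to write a latent-variable max-margin objective of this kind is sketched below. It is a generic form under stated assumptions, not necessarily the authors' exact formulation: the weight vector w, feature map φ, slack variables ξ_q, trade-off constant C, and the relevant/irrelevant candidates e2+ and e2- are all notation introduced here, and h stands for the latent tuple (e1, t2, r, z).

```latex
\begin{aligned}
\min_{w,\ \xi \ge 0}\quad & \tfrac{1}{2}\lVert w \rVert^2 + C \sum_{q} \xi_q \\
\text{s.t.}\quad & \max_{h}\, w^\top \phi(q, h, e_2^{+})
  \;\ge\; 1 - \xi_q + \max_{h}\, w^\top \phi(q, h, e_2^{-})
  \qquad \forall q,\ e_2^{+},\ e_2^{-}
\end{aligned}
```

Each side of the constraint uses the best scoring interpretation for its candidate. The max over h on the left-hand side is what makes a formulation of this shape non-convex in w.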

EXPERIMENTS

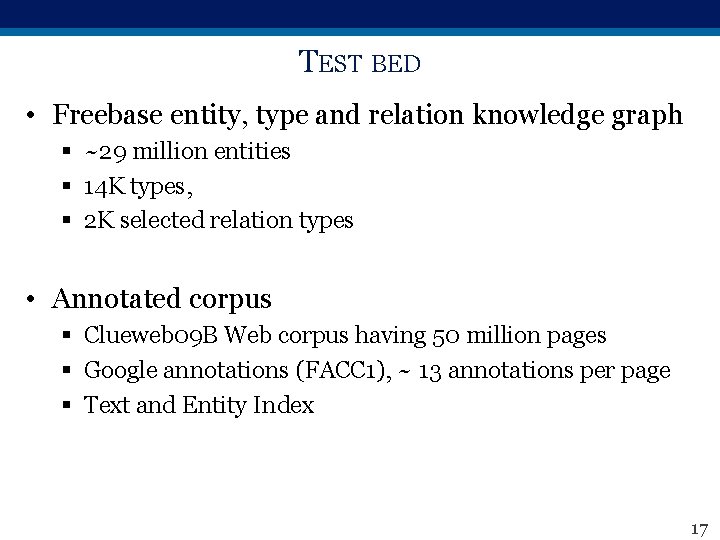

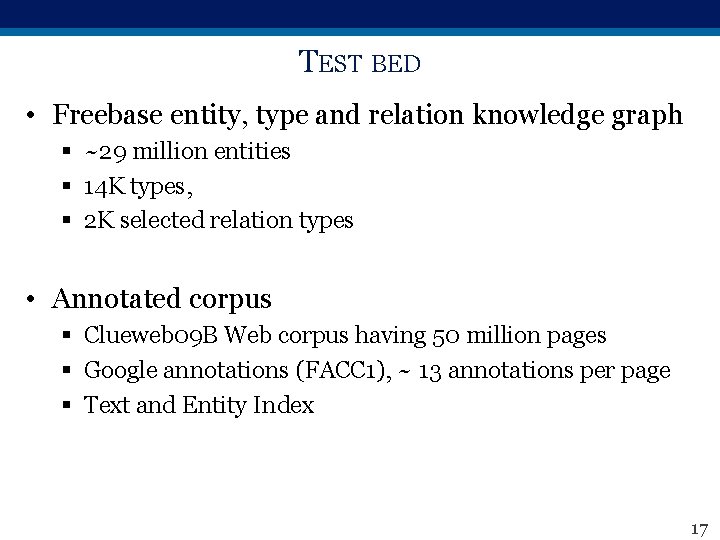

TEST BED

- Freebase entity, type and relation knowledge graph
  - ~29 million entities
  - 14K types
  - 2K selected relation types
- Annotated corpus
  - ClueWeb09B Web corpus with 50 million pages
  - Google annotations (FACC1), ~13 annotations per page
  - Text and entity index

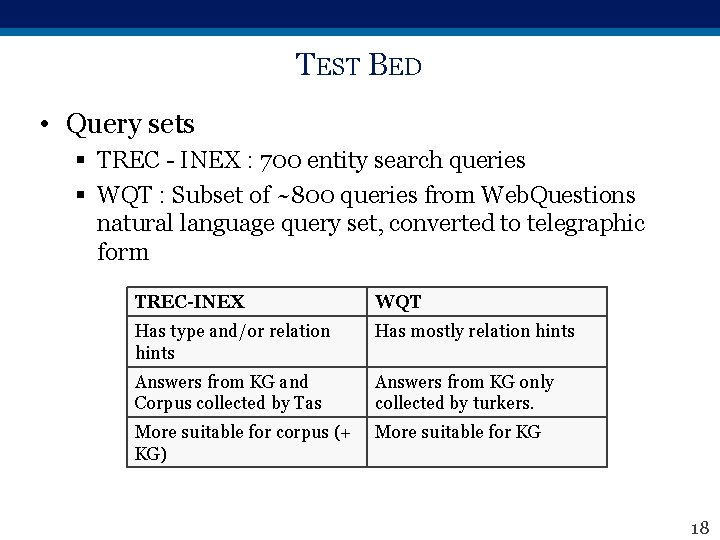

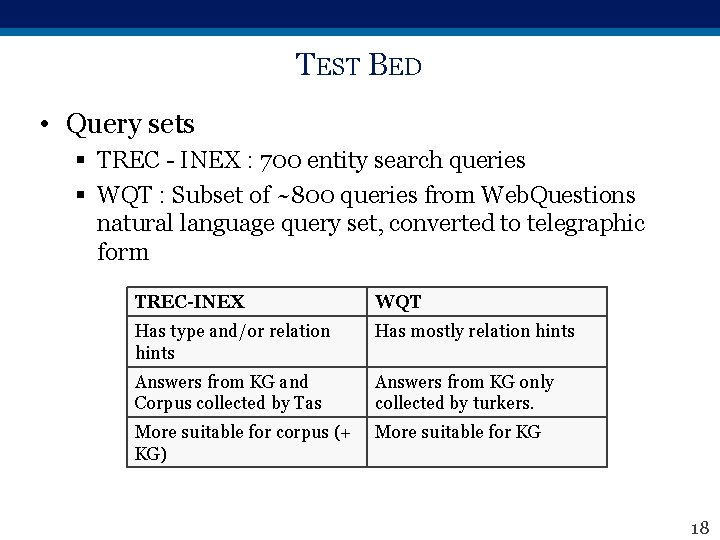

TEST BED

- Query sets
  - TREC-INEX: 700 entity search queries
  - WQT: subset of ~800 queries from the WebQuestions natural language query set, converted to telegraphic form

| TREC-INEX | WQT |
| --- | --- |
| Has type and/or relation hints | Has mostly relation hints |
| Answers from KG and corpus, collected by TAs | Answers from KG only, collected by turkers |
| More suitable for corpus (+ KG) | More suitable for KG |

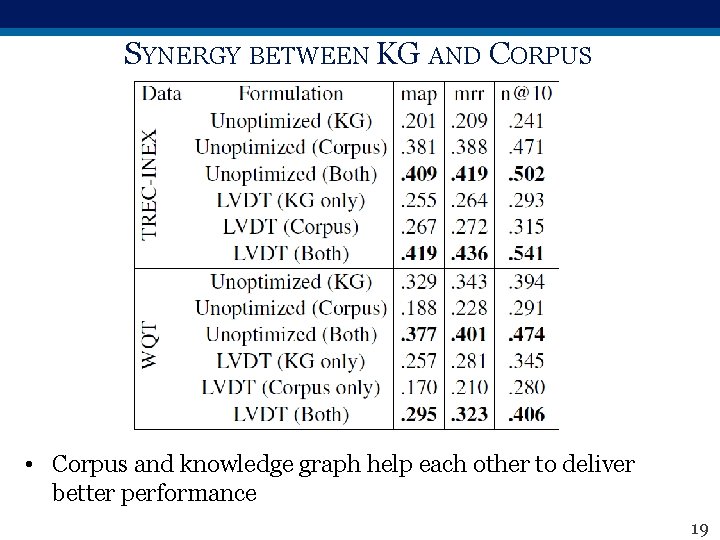

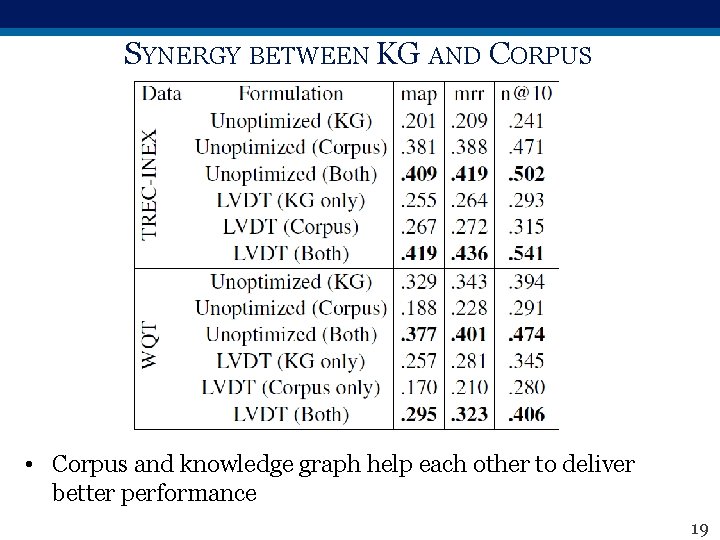

SYNERGY BETWEEN KG AND CORPUS

- Corpus and knowledge graph help each other to deliver better performance

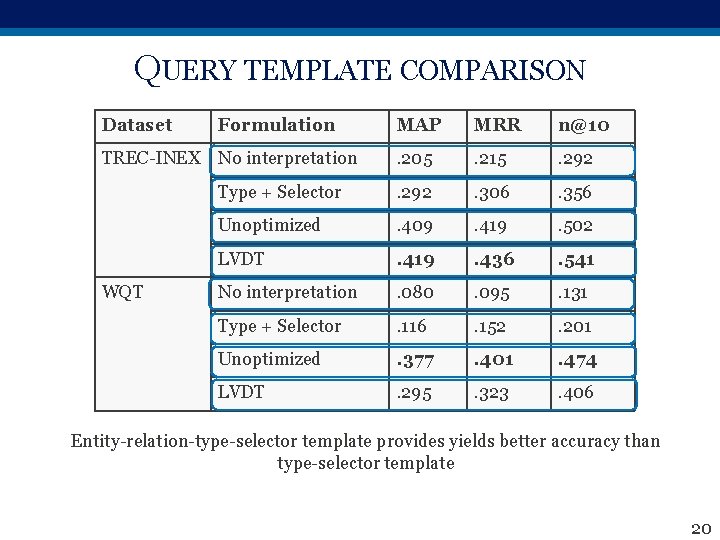

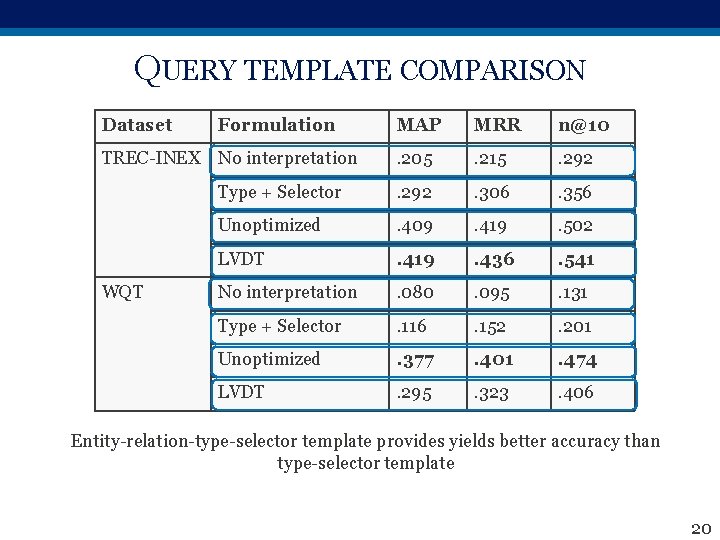

QUERY TEMPLATE COMPARISON

| Dataset | Formulation | MAP | MRR | n@10 |
| --- | --- | --- | --- | --- |
| TREC-INEX | No interpretation | .205 | .215 | .292 |
| TREC-INEX | Type + Selector | .292 | .306 | .356 |
| TREC-INEX | Unoptimized | .409 | .419 | .502 |
| TREC-INEX | LVDT | .419 | .436 | .541 |
| WQT | No interpretation | .080 | .095 | .131 |
| WQT | Type + Selector | .116 | .152 | .201 |
| WQT | Unoptimized | .377 | .401 | .474 |
| WQT | LVDT | .295 | .323 | .406 |

Entity-relation-type-selector template yields better accuracy than type-selector template
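For readers unfamiliar with the MAP and MRR columns, the sketch below shows the standard per-query quantities (average precision and reciprocal rank) that are averaged over queries to obtain them. The ranked list and relevant set are toy values, and this is the textbook definition rather than the authors' evaluation script.

```python
def average_precision(ranked, relevant):
    """Mean of precision values at each rank where a relevant item appears."""
    hits, total = 0, 0.0
    for rank, item in enumerate(ranked, start=1):
        if item in relevant:
            hits += 1
            total += hits / rank
    return total / len(relevant) if relevant else 0.0

def reciprocal_rank(ranked, relevant):
    """1 / rank of the first relevant item, or 0 if none is retrieved."""
    for rank, item in enumerate(ranked, start=1):
        if item in relevant:
            return 1.0 / rank
    return 0.0

def mean(values):
    values = list(values)
    return sum(values) / len(values) if values else 0.0

# Toy run: one query, relevant entities found at ranks 2 and 4.
ranked = ["a", "b", "c", "d"]
relevant = {"b", "d"}
ap = average_precision(ranked, relevant)
rr = reciprocal_rank(ranked, relevant)
map_score = mean([ap])   # MAP is the mean of AP over all queries
mrr_score = mean([rr])   # MRR is the mean of RR over all queries
```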

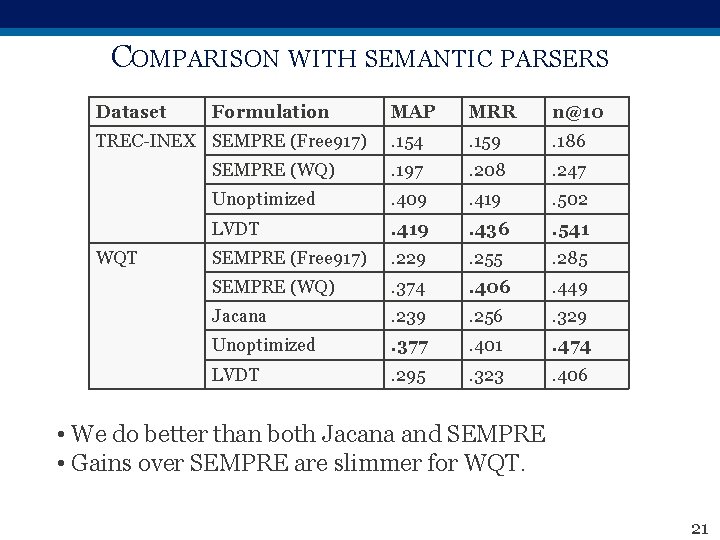

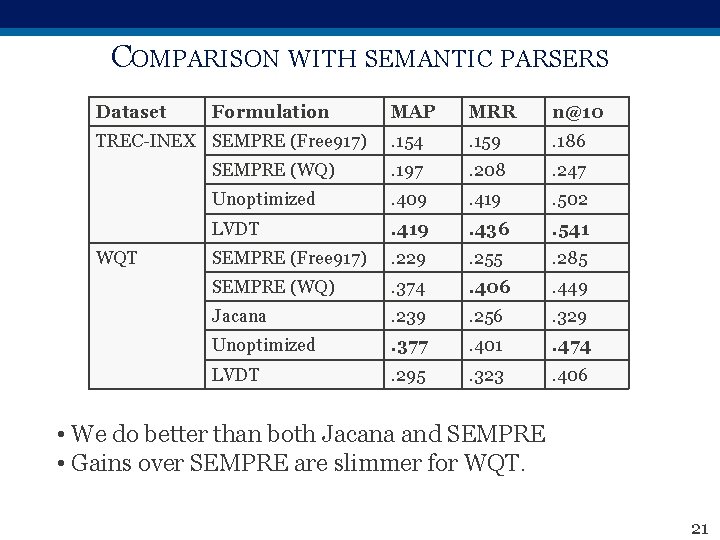

COMPARISON WITH SEMANTIC PARSERS

| Dataset | Formulation | MAP | MRR | n@10 |
| --- | --- | --- | --- | --- |
| TREC-INEX | SEMPRE (Free917) | .154 | .159 | .186 |
| TREC-INEX | SEMPRE (WQ) | .197 | .208 | .247 |
| TREC-INEX | Unoptimized | .409 | .419 | .502 |
| TREC-INEX | LVDT | .419 | .436 | .541 |
| WQT | SEMPRE (Free917) | .229 | .255 | .285 |
| WQT | SEMPRE (WQ) | .374 | .406 | .449 |
| WQT | Jacana | .239 | .256 | .329 |
| WQT | Unoptimized | .377 | .401 | .474 |
| WQT | LVDT | .295 | .323 | .406 |

- We do better than both Jacana and SEMPRE
- Gains over SEMPRE are slimmer for WQT

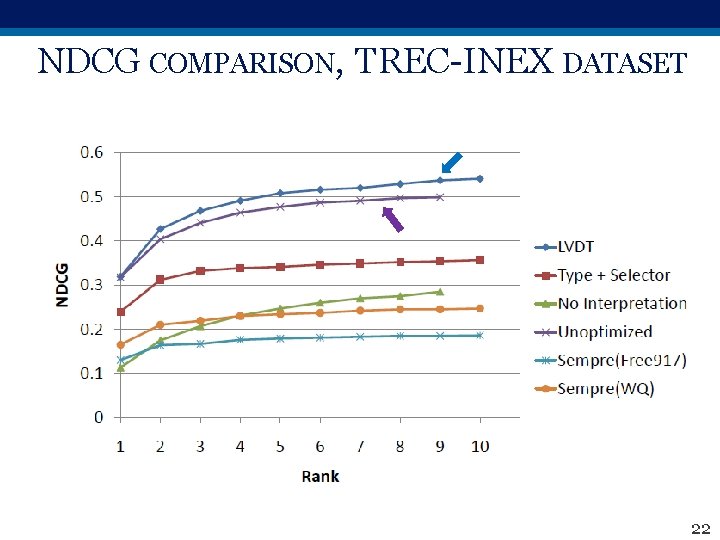

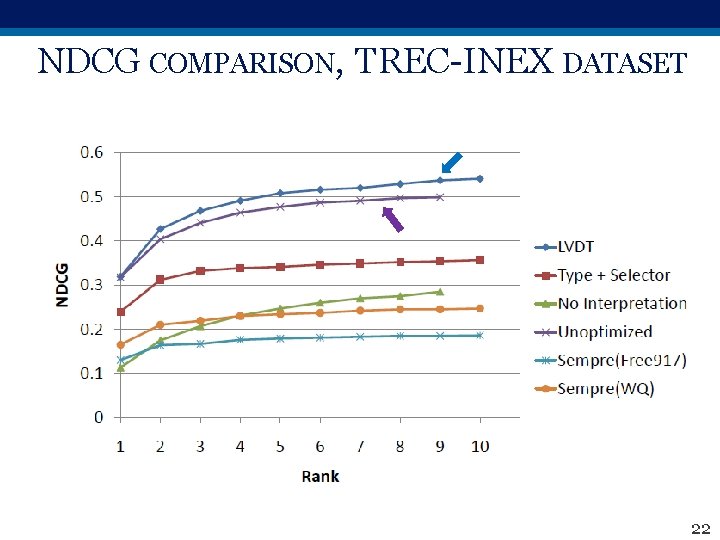

NDCG COMPARISON, TREC-INEX DATASET

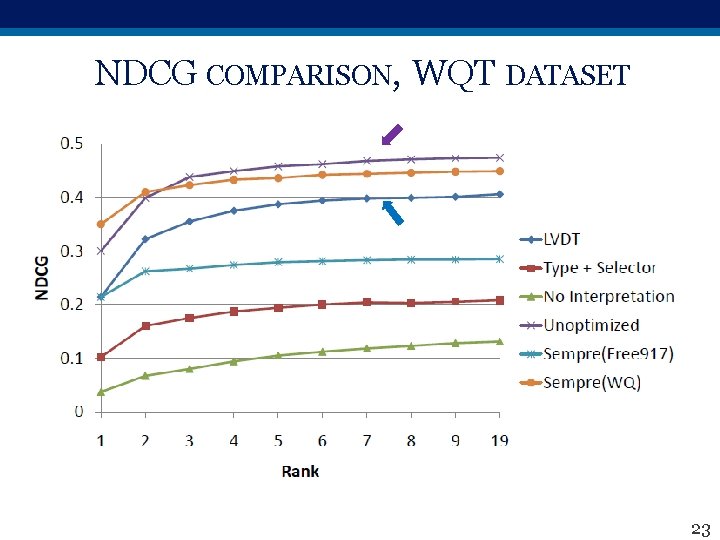

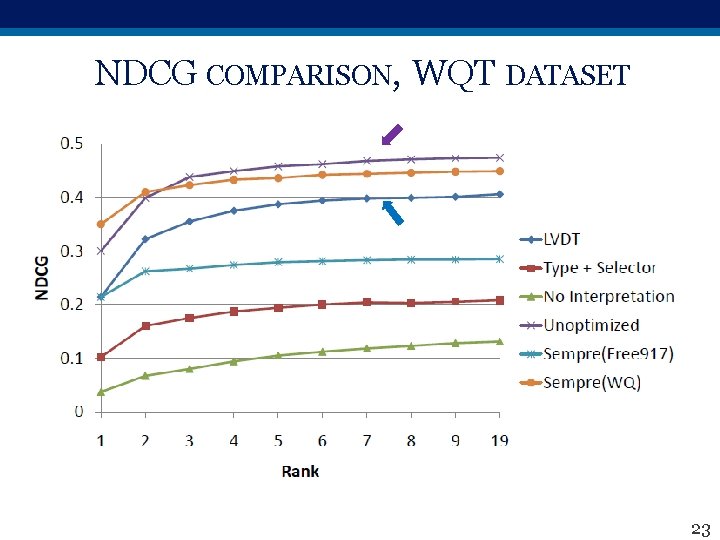

NDCG COMPARISON, WQT DATASET

SUMMARY

- Query interpretation is rewarding, but non-trivial
- Semantic parsers do not work well with syntax-less telegraphic queries; segmentation-based models work better
- Entity-relation-type-selector template is better than type-selector template
- Knowledge graph and corpus provide complementary benefits

FUTURE WORK

- Extend to NL queries
  - More natural type and relation hints
  - More filler words; need better word selection for corpus match
- Handle relation joins
  - Requires more complex relation model
  - Large interpretation space

THANK YOU!

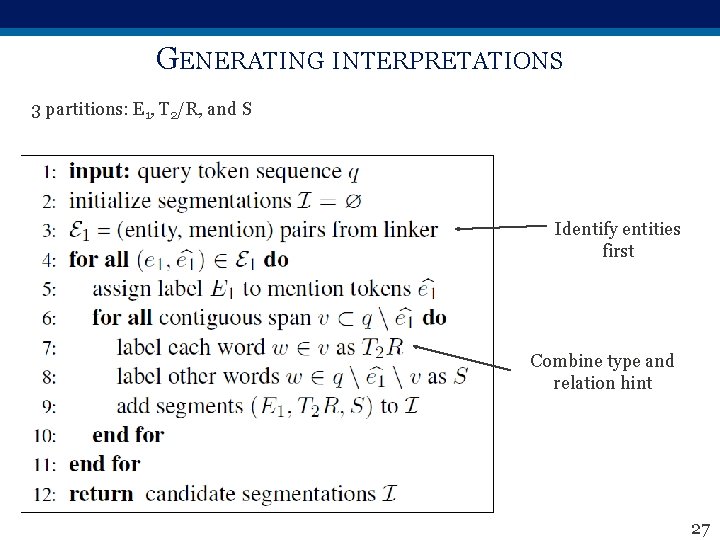

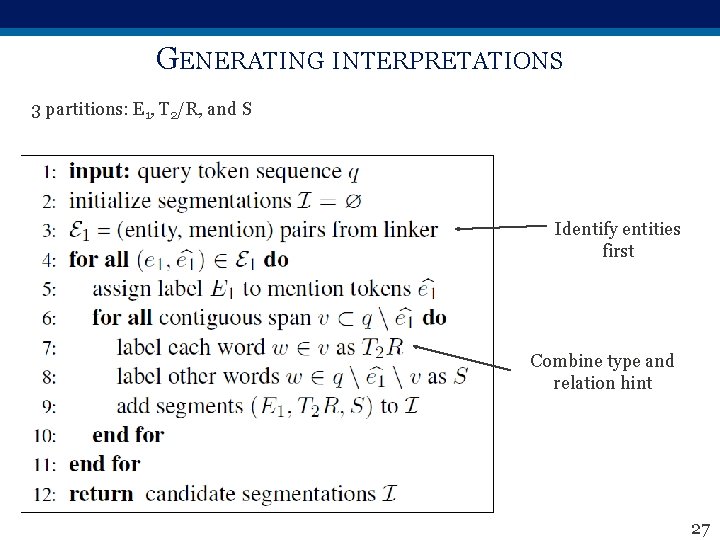

GENERATING INTERPRETATIONS

- 3 partitions: E1, T2/R, and S
- Identify entities first
- Combine type and relation hint

KG VS. CORPUS

- KG
  - Better quality content than corpus
  - Generally incomplete
- Corpus
  - Has better recall but more noise
  - Dependence on annotation algorithm
- Complementary benefits!