Kinect SDK Tutorial: Skeleton and Camera (RGB)

Anant Bhardwaj

Skeleton Tracking

- Getting skeleton data
- Getting joint positions
- Scaling (uses the Coding4Fun Kinect Toolkit)
- Fine-tuning
  - Using TransformSmooth parameters

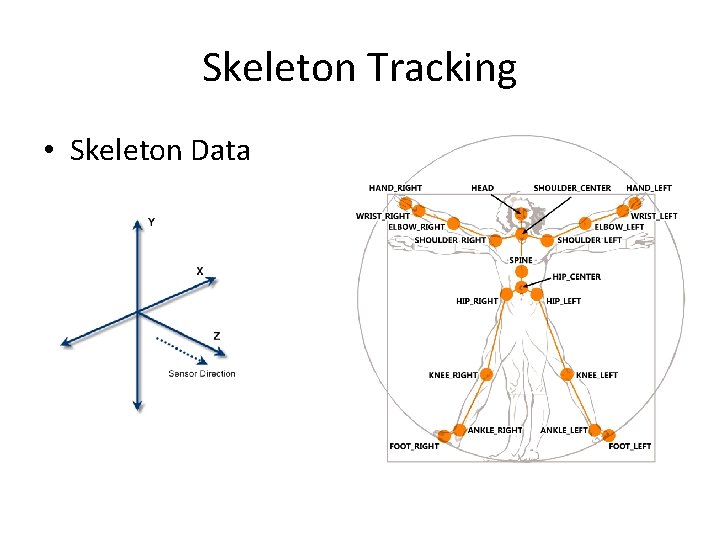

Skeleton Tracking

- Skeleton data

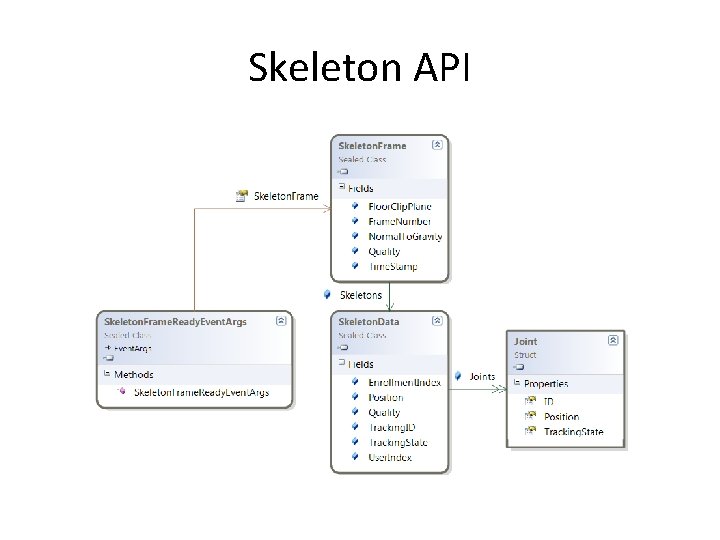

Skeleton API

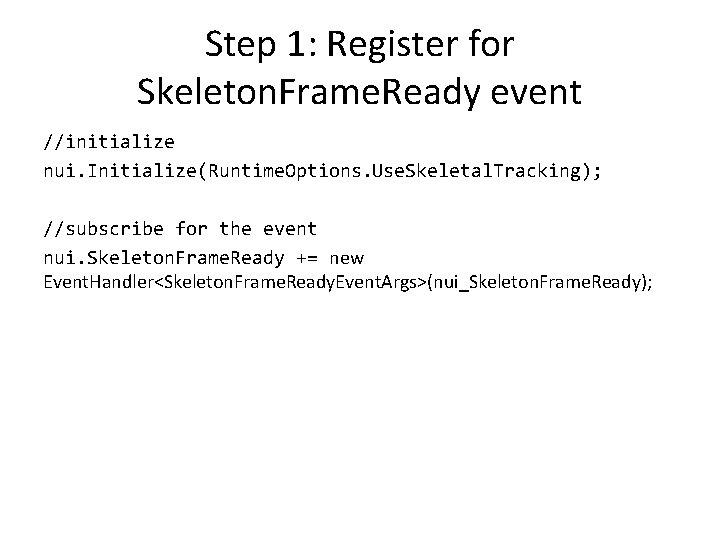

Step 1: Register for the SkeletonFrameReady event

    // initialize
    nui.Initialize(RuntimeOptions.UseSkeletalTracking);

    // subscribe to the event
    nui.SkeletonFrameReady += new EventHandler<SkeletonFrameReadyEventArgs>(nui_SkeletonFrameReady);

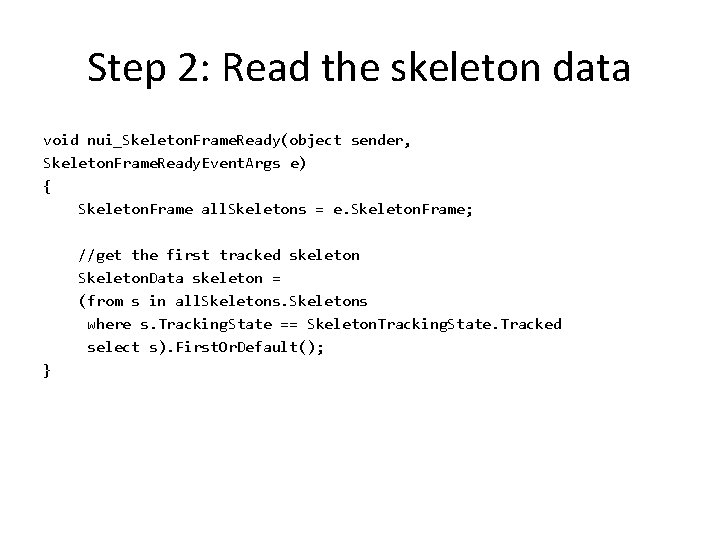

Step 2: Read the skeleton data

    void nui_SkeletonFrameReady(object sender, SkeletonFrameReadyEventArgs e)
    {
        SkeletonFrame allSkeletons = e.SkeletonFrame;

        // get the first tracked skeleton (null if nobody is tracked in this frame)
        SkeletonData skeleton = (from s in allSkeletons.Skeletons
                                 where s.TrackingState == SkeletonTrackingState.Tracked
                                 select s).FirstOrDefault();
    }

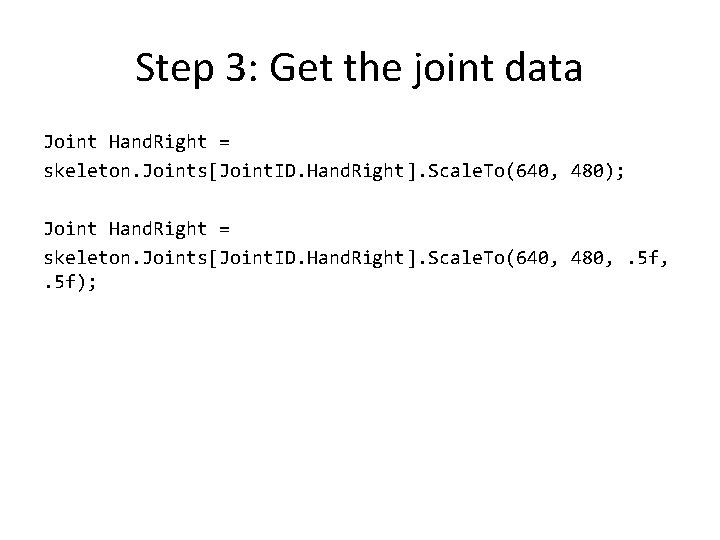

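Step 3: Get the joint data

    // scale the right-hand joint to a 640 x 480 area
    Joint HandRight = skeleton.Joints[JointID.HandRight].ScaleTo(640, 480);

Alternatively, pass the skeleton-space extent that should map to the edge of the area, so that smaller movements cover the whole screen:

    Joint HandRight = skeleton.Joints[JointID.HandRight].ScaleTo(640, 480, .5f, .5f);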
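To make the scaling step concrete, here is a minimal, self-contained sketch of the kind of mapping a scaling call performs: a skeleton-space coordinate in meters is mapped linearly onto a pixel range and clamped to it. `JointScaling`, `Scale`, and `ToScreen` are hypothetical names used only for illustration; this is not the toolkit's source, and it assumes skeleton space is centered at 0 with Y pointing up.

```csharp
using System;

// Hypothetical helper, for illustration only (not part of the Kinect SDK
// or the Coding4Fun toolkit).
public static class JointScaling
{
    // Maps a position in [-maxSkeleton, +maxSkeleton] meters onto [0, maxPixel],
    // clamping values that fall outside that range.
    public static float Scale(int maxPixel, float maxSkeleton, float position)
    {
        float value = (maxPixel / maxSkeleton / 2f) * position + maxPixel / 2f;
        return Math.Max(0f, Math.Min(maxPixel, value));
    }

    // Converts a skeleton-space (x, y) pair to screen coordinates.
    // Y is negated because screen Y grows downward (assumption stated above).
    public static (float X, float Y) ToScreen(float x, float y, int width, int height,
                                             float maxX = 1.0f, float maxY = 1.0f)
    {
        return (Scale(width, maxX, x), Scale(height, maxY, -y));
    }
}
```

Under these assumptions, the skeleton-space center (0, 0) lands in the middle of a 640 x 480 surface, and halving `maxX`/`maxY` to 0.5 means the hand only has to travel half as far to reach the edge.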

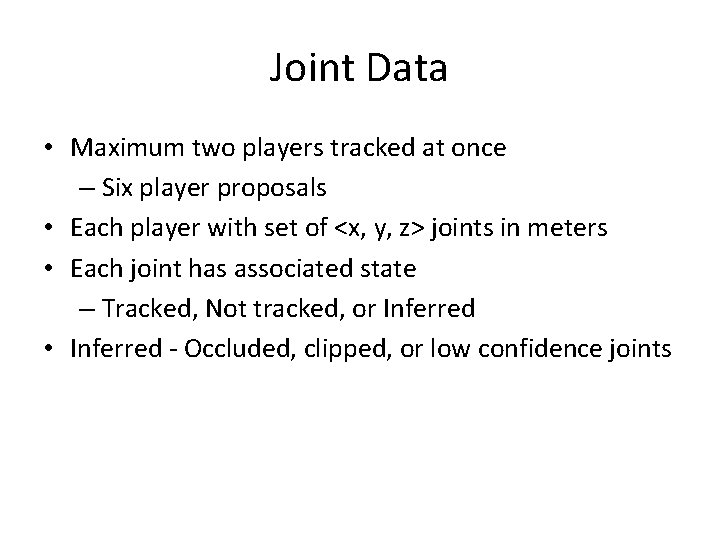

Joint Data

- Maximum of two players tracked at once
  - Six player proposals
- Each player has a set of <x, y, z> joints, in meters
- Each joint has an associated state
  - Tracked, Not tracked, or Inferred
- Inferred: occluded, clipped, or low-confidence joints

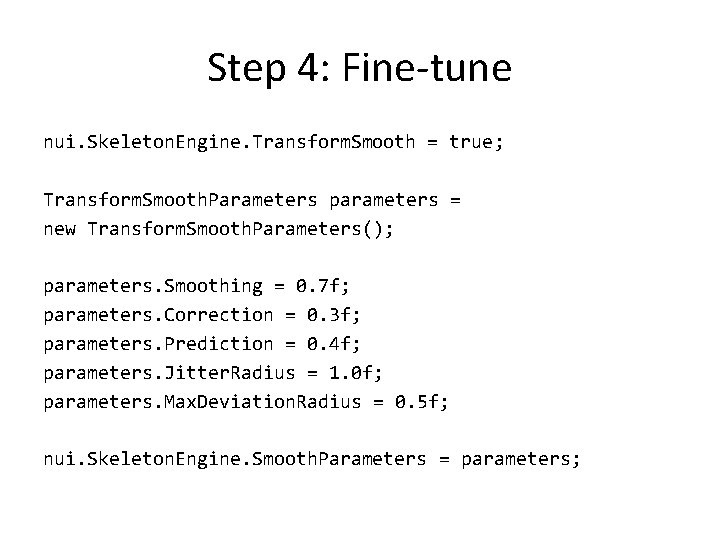

Step 4: Fine-tune

    nui.SkeletonEngine.TransformSmooth = true;

    TransformSmoothParameters parameters = new TransformSmoothParameters();
    parameters.Smoothing = 0.7f;
    parameters.Correction = 0.3f;
    parameters.Prediction = 0.4f;
    parameters.JitterRadius = 1.0f;
    parameters.MaxDeviationRadius = 0.5f;

    nui.SkeletonEngine.SmoothParameters = parameters;
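As a rough intuition for what a smoothing factor trades off, the sketch below applies plain single exponential smoothing to one coordinate. This is a swapped-in, simplified technique, not the SDK's filter (which also takes correction, prediction, and jitter settings, as the parameters in Step 4 show); `ExponentialSmoother` and `Next` are hypothetical names.

```csharp
// Hypothetical illustration: single exponential smoothing of one coordinate.
// Not the Kinect SDK's filter; it only shows the smoothing/latency trade-off.
public sealed class ExponentialSmoother
{
    private readonly float smoothing; // 0 = raw data, close to 1 = heavy smoothing
    private float? previous;          // last filtered value, null before the first sample

    public ExponentialSmoother(float smoothing)
    {
        this.smoothing = smoothing;
    }

    // Blends the new raw sample with the previous filtered value.
    public float Next(float raw)
    {
        // The first sample has nothing to blend with, so it passes through.
        float filtered = previous.HasValue
            ? smoothing * previous.Value + (1f - smoothing) * raw
            : raw;
        previous = filtered;
        return filtered;
    }
}
```

A higher factor suppresses jitter more strongly, but the filtered value also takes longer to catch up with a real movement, which is why the value is usually tuned per application.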

Camera: RGB Data

- Getting RGB camera data
- Converting into an image
- Getting RGB values for each pixel

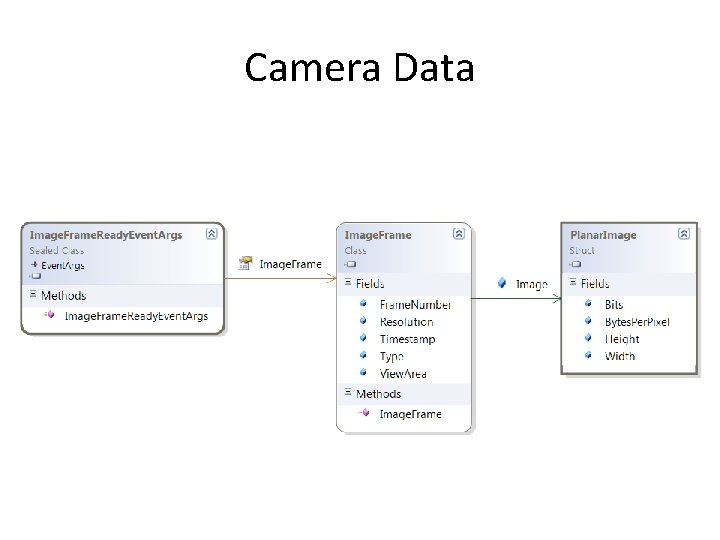

Camera Data

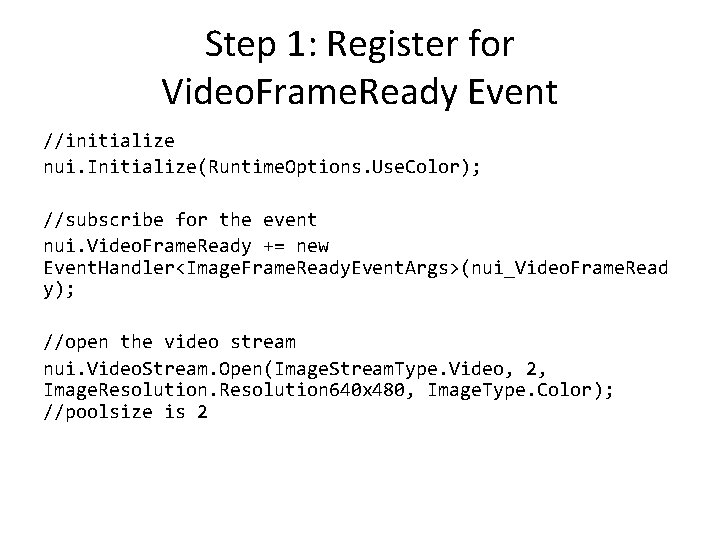

Step 1: Register for the VideoFrameReady event

    // initialize
    nui.Initialize(RuntimeOptions.UseColor);

    // subscribe to the event
    nui.VideoFrameReady += new EventHandler<ImageFrameReadyEventArgs>(nui_VideoFrameReady);

    // open the video stream (pool size is 2)
    nui.VideoStream.Open(ImageStreamType.Video, 2, ImageResolution.Resolution640x480, ImageType.Color);

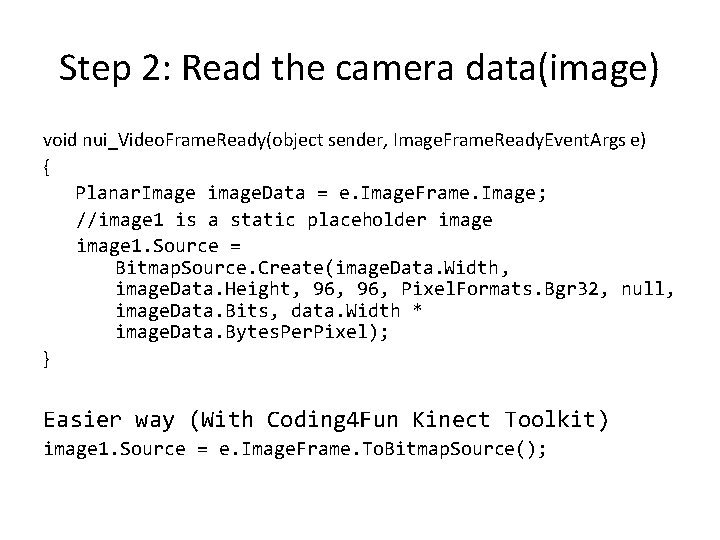

Step 2: Read the camera data (image)

    void nui_VideoFrameReady(object sender, ImageFrameReadyEventArgs e)
    {
        PlanarImage imageData = e.ImageFrame.Image;

        // image1 is a static placeholder; the last argument is the stride (bytes per row)
        image1.Source = BitmapSource.Create(imageData.Width, imageData.Height, 96, 96,
            PixelFormats.Bgr32, null, imageData.Bits, imageData.Width * imageData.BytesPerPixel);
    }

Easier way (with the Coding4Fun Kinect Toolkit):

    image1.Source = e.ImageFrame.ToBitmapSource();

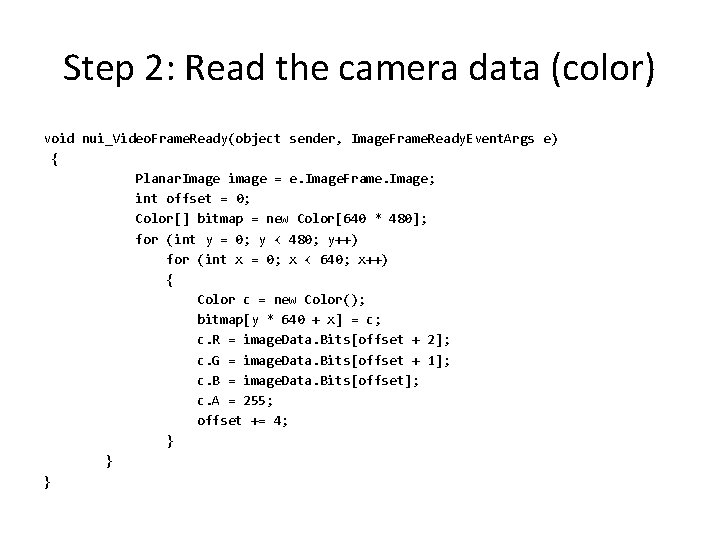

Step 2: Read the camera data (color)

    void nui_VideoFrameReady(object sender, ImageFrameReadyEventArgs e)
    {
        PlanarImage image = e.ImageFrame.Image;
        int offset = 0;
        Color[] bitmap = new Color[640 * 480];

        for (int y = 0; y < 480; y++)
            for (int x = 0; x < 640; x++)
            {
                // each pixel is 4 bytes, stored in B, G, R order
                Color c = new Color();
                c.R = image.Bits[offset + 2];
                c.G = image.Bits[offset + 1];
                c.B = image.Bits[offset];
                c.A = 255;

                // Color is a struct, so store it only after its fields are set
                bitmap[y * 640 + x] = c;
                offset += 4;
            }
    }
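The running offset in the loop above is equivalent to a direct index computation, which is handy when only a few pixels are needed. The sketch below is self-contained and works on any tightly packed 4-bytes-per-pixel buffer in B, G, R order; `Bgr32` and `GetPixel` are hypothetical names, and it assumes rows carry no padding.

```csharp
using System;

// Hypothetical helper, for illustration only.
public static class Bgr32
{
    // Returns (R, G, B) of pixel (x, y) from a tightly packed BGR32 buffer.
    public static (byte R, byte G, byte B) GetPixel(byte[] bits, int width, int x, int y)
    {
        const int bytesPerPixel = 4;

        // Row-major layout: skip y full rows, then x pixels within the row.
        int offset = (y * width + x) * bytesPerPixel;

        if (x < 0 || x >= width || y < 0 || offset + bytesPerPixel > bits.Length)
            throw new ArgumentOutOfRangeException();

        // Bytes are stored blue first, so red sits at offset + 2.
        return (bits[offset + 2], bits[offset + 1], bits[offset]);
    }
}
```

For a 640 x 480 frame, a call such as `Bgr32.GetPixel(bits, 640, x, y)` reads the same bytes that the nested loop visits when it reaches that pixel.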

Questions
