- Slides: 43

KEG PARTY!!!!! ® Keg Party tomorrow night ® Prof. Markov will give out extra credit to anyone who attends* *Note: This statement is a lie

Trugenberger’s Quantum Optimization Algorithm Overview and Application

Overview ® Inspiration ® Basic Idea ® Mathematical and Circuit Realizations ® Limitations ® Future Work

Two Main Sources of Inspiration ® Exploiting Quantum Parallelism ® Analogy of Simulated Annealing

What is quantum parallelism? ® We can represent super-positions of specific instances of data in a single quantum state ® We can then apply a single operator to this quantum state and thereby change all instances of data in a single step
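As a classical illustration of this idea, the sketch below stores a super-position as a list of amplitudes and applies one diagonal operator to every basis state in a single pass. The helper names `uniform_superposition` and `apply_diagonal` are illustrative only. A classical simulation like this still touches all 2^n amplitudes explicitly, which is exactly the work a quantum device would avoid.

```python
import cmath
import math

def uniform_superposition(n_qubits):
    """Amplitudes of the equal super-position over all 2**n basis states."""
    size = 2 ** n_qubits
    return [1 / math.sqrt(size)] * size

def apply_diagonal(state, phase_of):
    """Apply the operator diag(exp(i * phase_of(k))) to every basis state k."""
    return [amp * cmath.exp(1j * phase_of(k)) for k, amp in enumerate(state)]

state = uniform_superposition(3)
# One operator application updates the amplitude of every instance.
shifted = apply_diagonal(state, lambda k: 0.1 * k)
```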

What is Simulated Annealing? ® Comes from physical annealing ® Iteratively heat and cool a material until there’s a high probability of obtaining a crystalline structure ® Can be represented as a computational algorithm ® Iteratively make changes to your data until there is a high probability of ending up with the data you want
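For readers who have not seen the classical algorithm, here is a minimal Metropolis-style sketch. It is a generic textbook form, not something taken from Trugenberger's paper; the geometric cooling schedule, the parameter names, and the toy cost function are all assumptions made for illustration.

```python
import math
import random

def simulated_annealing(cost, neighbor, start, steps=1000,
                        t_start=1.0, t_end=0.01, seed=0):
    """Return the lowest-cost state seen while slowly lowering the temperature."""
    rng = random.Random(seed)
    current, best = start, start
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (step / max(steps - 1, 1))
        candidate = neighbor(current, rng)
        delta = cost(candidate) - cost(current)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current = candidate
        if cost(current) < cost(best):
            best = current
    return best

# Toy usage: minimize (x - 3)^2 over the integers, starting far from the optimum.
best = simulated_annealing(
    cost=lambda x: (x - 3) ** 2,
    neighbor=lambda x, rng: x + rng.choice([-1, 1]),
    start=20,
)
```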

Overview ® Inspiration ® Basic Idea ® Mathematical and Circuit Realizations ® Limitations ® Future Work

Basic Idea ® Use this inspiration to come up with a more generalized quantum searching algorithm ® Trugenberger’s algorithm does a heuristic search on the entire data set by applying a cost function to each element in the data set ® Goal is to find a minimal cost solution

The high-level algorithm ® Use quantum parallelism to apply the cost function to all elements of the data set simultaneously in one step ® Iteratively apply this cost function to the data set ® Number of iterations is analogous to an instance of simulated annealing

Overview ® Inspiration ® Basic Idea ® Mathematical and Circuit Realizations ® Limitations ® Future Work

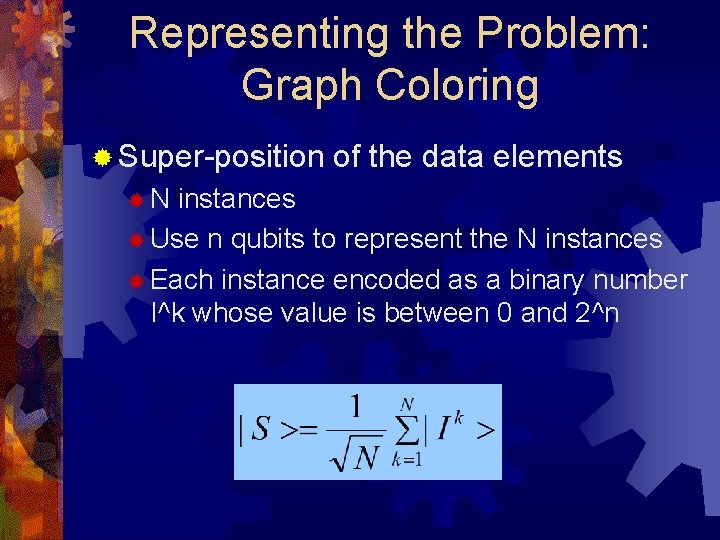

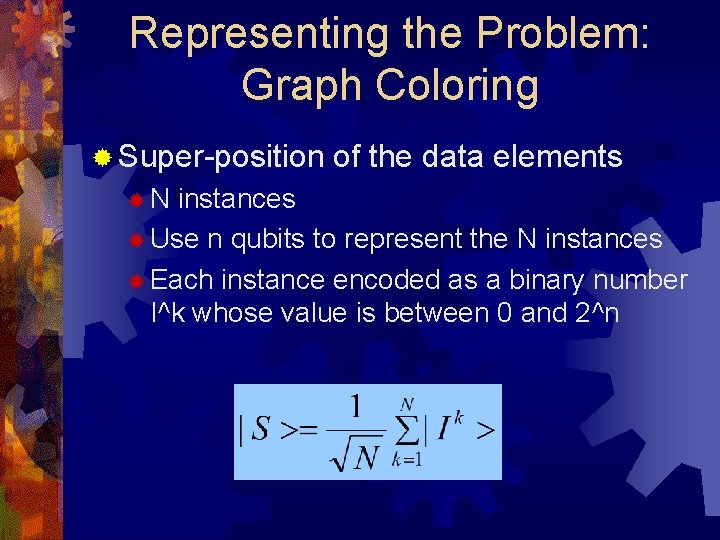

Representing the Problem: Graph Coloring ® Super-position of the N data element instances ® Use n qubits to represent the N instances ® Each instance is encoded as a binary number I^k whose value is between 0 and 2^n - 1

Cost Functions ® In general, a cost function should return a cost for each data element ® In this algorithm we will want to minimize cost ® Data elements with lower cost are better solutions

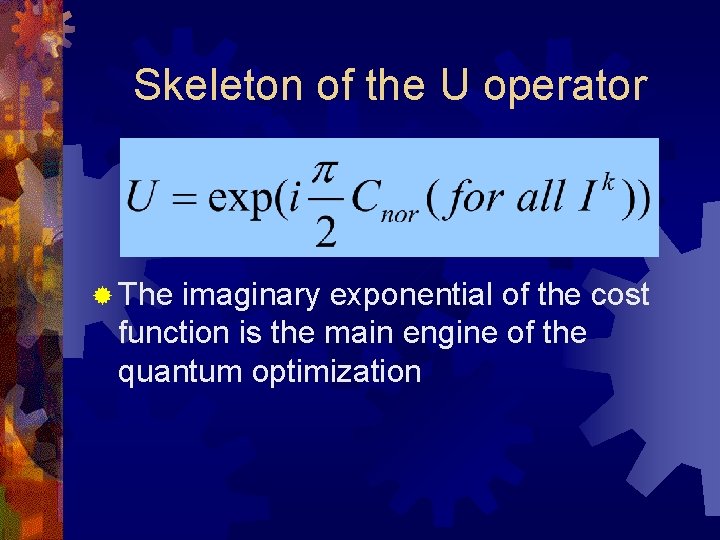

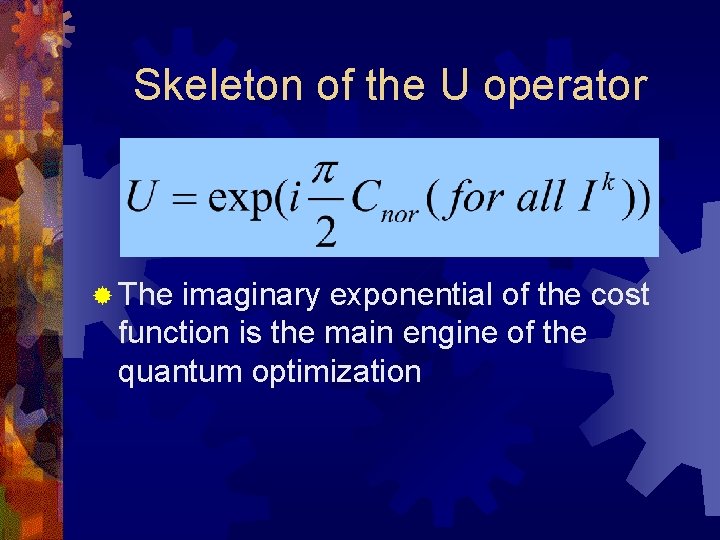

Skeleton of the U operator ® The imaginary exponential of the cost function is the main engine of the quantum optimization

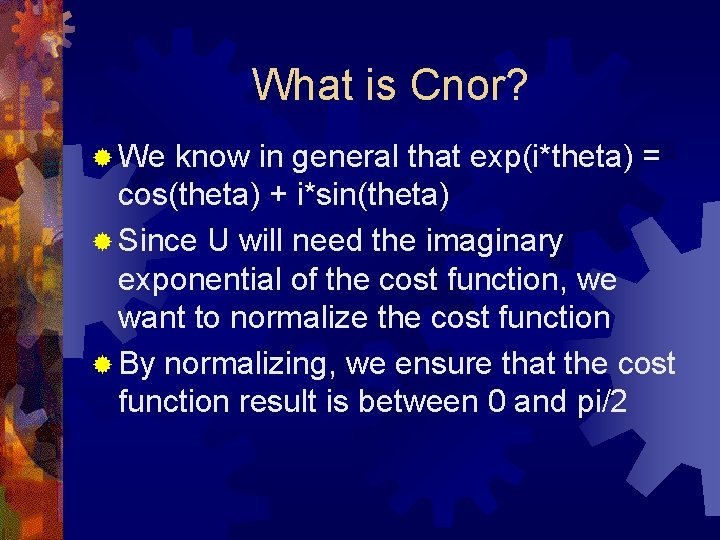

What is Cnor? ® We know in general that exp(i*theta) = cos(theta) + i*sin(theta) ® Since U will need the imaginary exponential of the cost function, we want to normalize the cost function ® By normalizing, we ensure that the phase theta fed into the exponential stays between 0 and pi/2

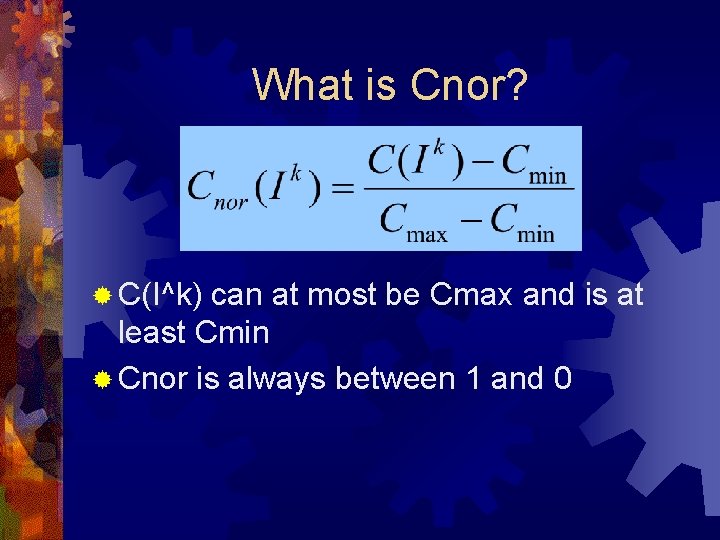

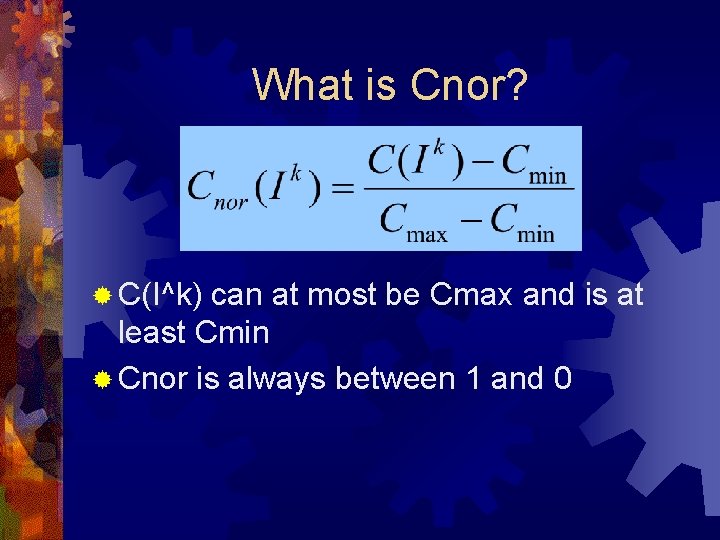

What is Cnor? ® C(I^k) can at most be Cmax and is at least Cmin ® Cnor is therefore always between 0 and 1

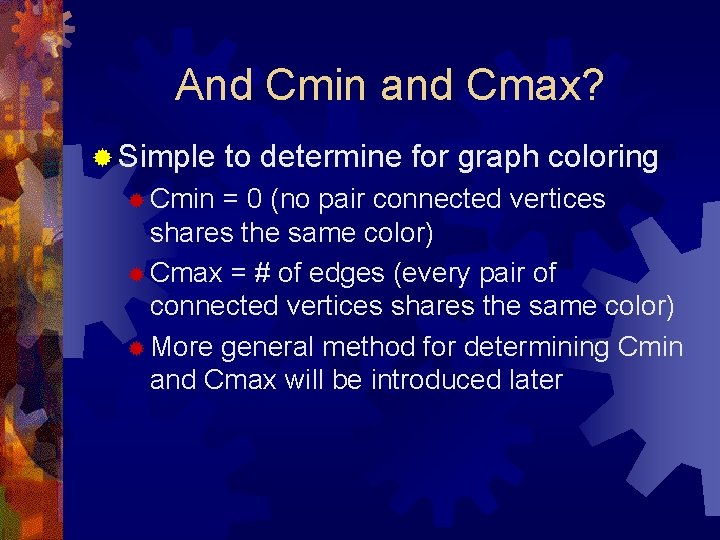

And Cmin and Cmax? ® Simple to determine for graph coloring ® Cmin = 0 (no pair of connected vertices shares the same color) ® Cmax = # of edges (every pair of connected vertices shares the same color) ® A more general method for determining Cmin and Cmax will be introduced later
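A small sketch of the cost function and its normalization may help here. The slides do not state which graph is used, so the instance below (a three-vertex path graph, two colors, one bit per vertex) is an assumption, chosen because it is consistent with the amplitudes on the Matlab results slide. The linear rescaling in `c_nor` is likewise the natural reading of the bounds above, not a formula copied from the slides.

```python
# Hypothetical instance: path graph 0-1-2, two colors, one bit per vertex.
EDGES = [(0, 1), (1, 2)]
N_VERTICES = 3

def coloring(k):
    """Decode integer k into one color bit per vertex (most significant bit first)."""
    return [(k >> (N_VERTICES - 1 - v)) & 1 for v in range(N_VERTICES)]

def cost(k):
    """Number of edges whose two endpoints share a color."""
    colors = coloring(k)
    return sum(1 for u, v in EDGES if colors[u] == colors[v])

C_MIN = 0            # no connected pair shares a color
C_MAX = len(EDGES)   # every connected pair shares a color

def c_nor(k):
    """Cost rescaled linearly to the interval [0, 1] (assumed form)."""
    return (cost(k) - C_MIN) / (C_MAX - C_MIN)

for k in range(2 ** N_VERTICES):
    print(format(k, "03b"), cost(k), c_nor(k))
```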

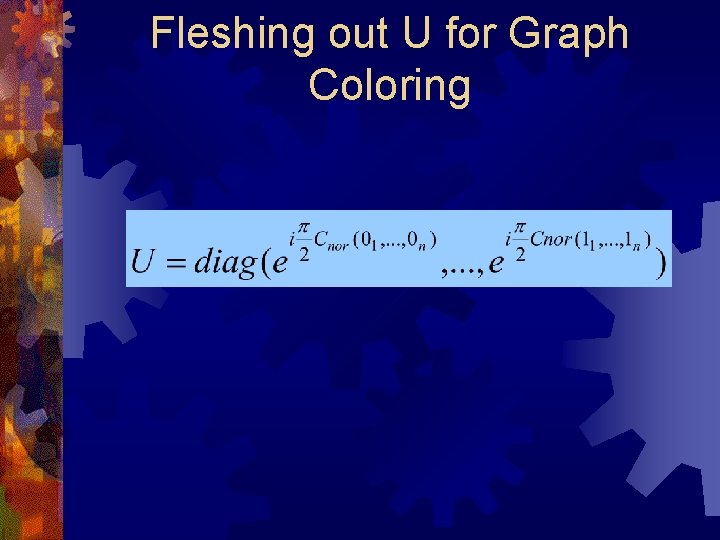

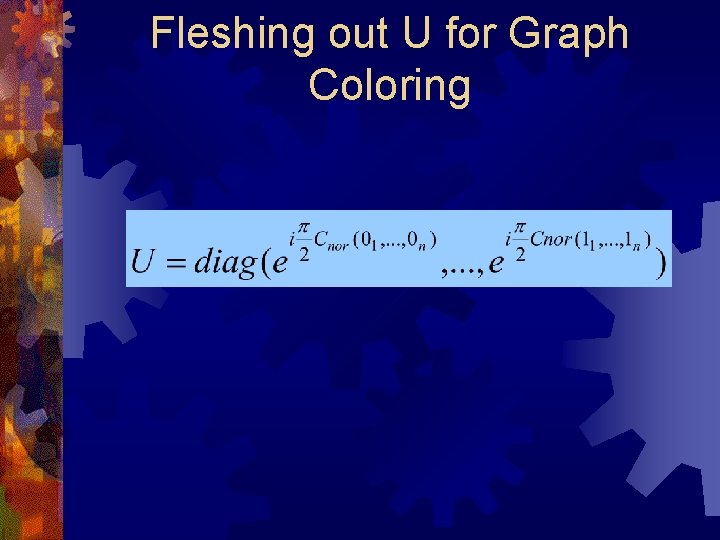

Fleshing out U for Graph Coloring

Still don’t quite have our magic operator ® As written, U by itself will not lower the probability amplitude of bad states and increase the amplitude of good states ® If we apply U now, the probability amplitudes of both the best and worst data elements will be the same and differ only in phase
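The point that U alone only changes phases can be checked numerically. This sketch assumes U acts diagonally as exp(i*pi/2*Cnor) on each data element and reuses a hypothetical list of normalized costs (a three-vertex path graph with two colors); neither is spelled out in the slide text.

```python
import cmath
import math

# Assumed normalized costs for the 8 data elements 000..111.
C_NOR = [1.0, 0.5, 0.0, 0.5, 0.5, 0.0, 0.5, 1.0]

state = [1 / math.sqrt(len(C_NOR))] * len(C_NOR)
# U is diagonal: each basis state only picks up the phase exp(i*pi/2*Cnor).
after_u = [amp * cmath.exp(1j * math.pi / 2 * c) for amp, c in zip(state, C_NOR)]

magnitudes = [abs(a) for a in after_u]   # identical for best and worst elements
phases = [cmath.phase(a) for a in after_u]  # this is where the cost now lives
```

Every entry of `magnitudes` stays at 1/sqrt(8), so a measurement right after U would still return each data element with equal probability.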

Take Advantage of Phase Differences ® We can accomplish the proper amplitude modifications by using a controlled form of the U gate ® Can’t be an ordinary controlled gate though

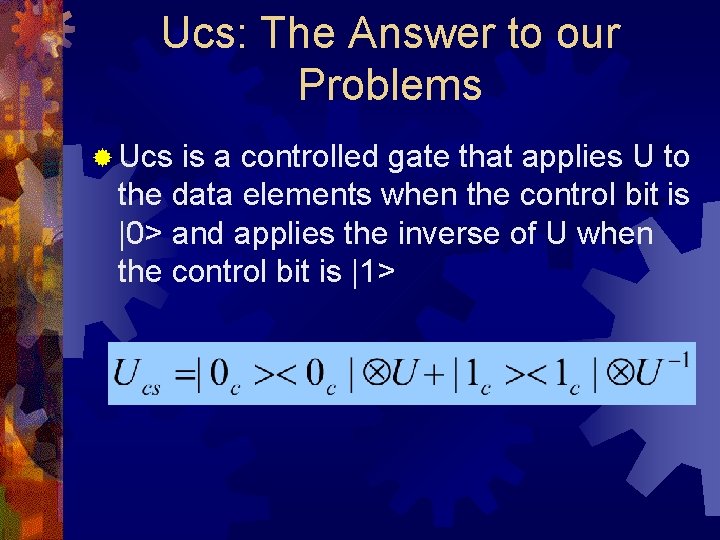

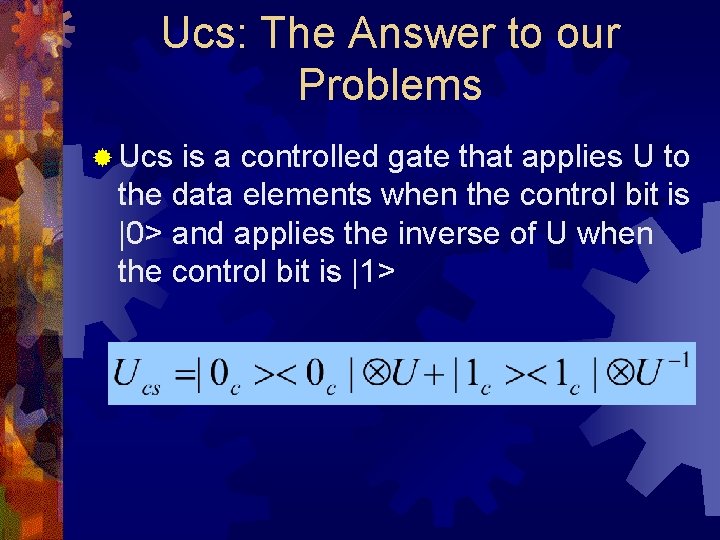

Ucs: The Answer to our Problems ® Ucs is a controlled gate that applies U to the data elements when the control bit is |0> and applies the inverse of U when the control bit is |1>

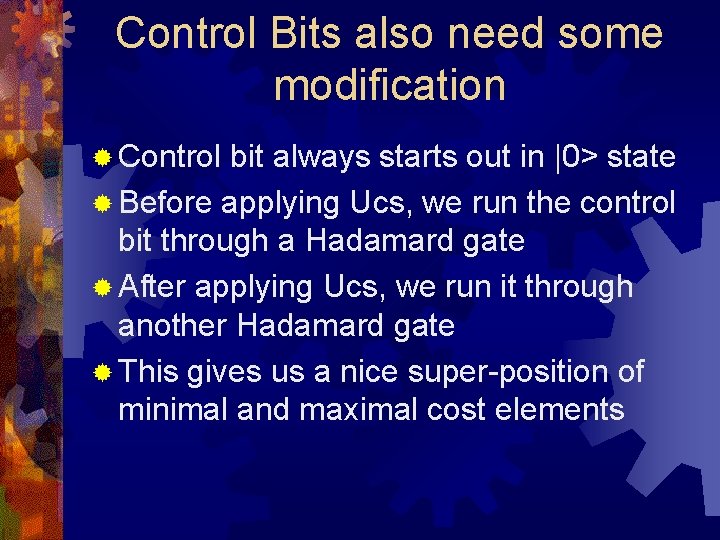

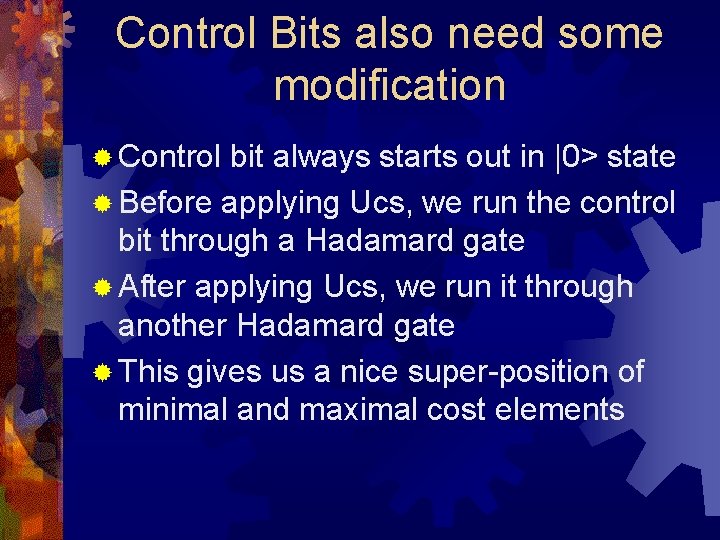

Control Bits also need some modification ® Control bit always starts out in |0> state ® Before applying Ucs, we run the control bit through a Hadamard gate ® After applying Ucs, we run it through another Hadamard gate ® This gives us a nice super-position of minimal and maximal cost elements
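The effect of the H, Ucs, H sequence on one data element can be worked out by hand. The derivation below assumes that U acts on a data element as a pure phase, $U|I\rangle = e^{i\theta_I}|I\rangle$ with $\theta_I = \tfrac{\pi}{2} C_{nor}(I)$; the symbol $\theta_I$ is introduced here for convenience.

$$
\begin{aligned}
|0\rangle|I\rangle
&\xrightarrow{\;H\;} \tfrac{1}{\sqrt{2}}\bigl(|0\rangle + |1\rangle\bigr)|I\rangle \\
&\xrightarrow{\;U_{cs}\;} \tfrac{1}{\sqrt{2}}\bigl(e^{i\theta_I}|0\rangle + e^{-i\theta_I}|1\rangle\bigr)|I\rangle \\
&\xrightarrow{\;H\;} \tfrac{1}{2}\Bigl[\bigl(e^{i\theta_I} + e^{-i\theta_I}\bigr)|0\rangle + \bigl(e^{i\theta_I} - e^{-i\theta_I}\bigr)|1\rangle\Bigr]|I\rangle \\
&= \bigl(\cos\theta_I\,|0\rangle + i\sin\theta_I\,|1\rangle\bigr)|I\rangle
\end{aligned}
$$

Low-cost elements ($\theta_I$ near 0) end up almost entirely on the $|0\rangle$ branch of the control qubit, and high-cost elements ($\theta_I$ near $\pi/2$) on the $|1\rangle$ branch.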

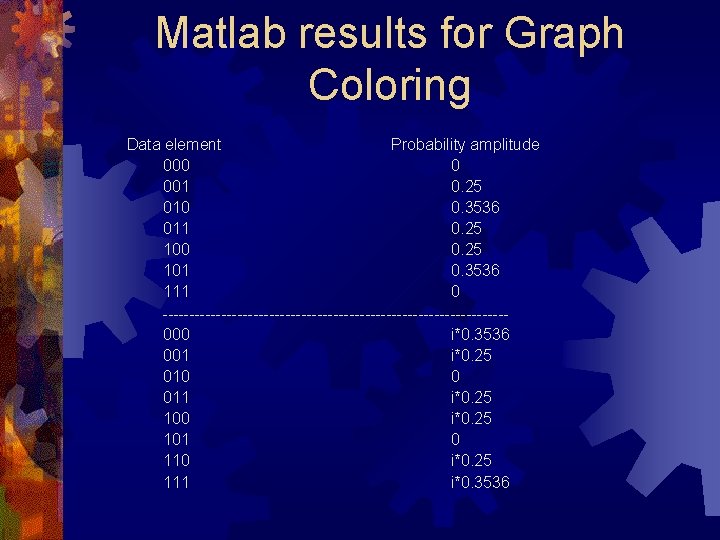

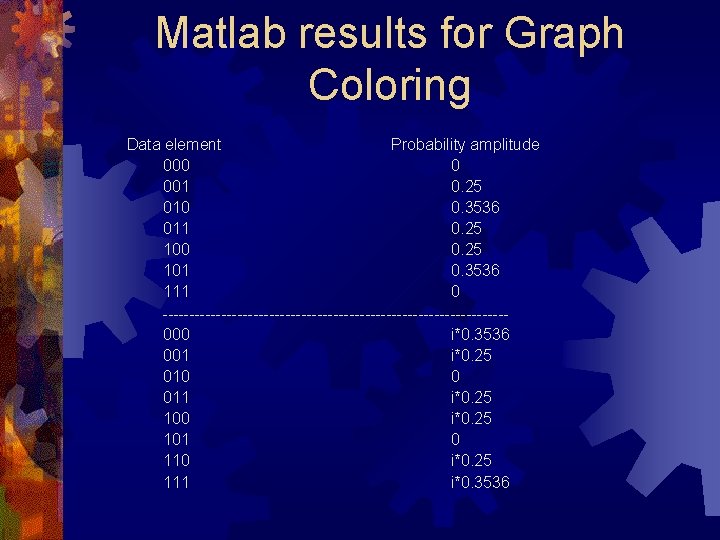

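Matlab results for Graph Coloring

Control qubit |0>:

| Data element | Probability amplitude |
|---|---|
| 000 | 0 |
| 001 | 0.25 |
| 010 | 0.3536 |
| 011 | 0.25 |
| 100 | 0.25 |
| 101 | 0.3536 |
| 111 | 0 |

Control qubit |1>:

| Data element | Probability amplitude |
|---|---|
| 000 | i*0.3536 |
| 001 | i*0.25 |
| 010 | 0 |
| 011 | i*0.25 |
| 100 | i*0.25 |
| 101 | 0 |
| 110 | i*0.25 |
| 111 | i*0.3536 |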
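These numbers can be reproduced with a few lines of Python. This is a sketch under stated assumptions rather than the original Matlab code: it assumes U contributes the phase exp(i*pi/2*Cnor), and it uses a hypothetical instance (three-vertex path graph, two colors, one bit per vertex) whose normalized costs are hard-coded in `C_NOR`. Under these assumptions the 110 element, which the first half of the slide's table does not list, comes out as 0.25 on the control |0> branch.

```python
import cmath
import math

# Assumed normalized costs for the 8 data elements 000..111.
C_NOR = [1.0, 0.5, 0.0, 0.5, 0.5, 0.0, 0.5, 1.0]
N = len(C_NOR)
S = 1 / math.sqrt(2)

def h_ucs_h(c):
    """Control-qubit amplitudes (on |0>, on |1>) for one element with normalized cost c."""
    theta = math.pi / 2 * c
    a0, a1 = S, S                      # first Hadamard on control |0>
    a0 *= cmath.exp(1j * theta)        # Ucs applies U when the control is |0>
    a1 *= cmath.exp(-1j * theta)       # ... and the inverse of U when it is |1>
    return S * (a0 + a1), S * (a0 - a1)  # second Hadamard

rows = []
for k, c in enumerate(C_NOR):
    a0, a1 = h_ucs_h(c)
    # The data register starts in the uniform super-position, hence 1/sqrt(N).
    rows.append((format(k, "03b"), a0 / math.sqrt(N), a1 / math.sqrt(N)))

for label, a0, a1 in rows:
    print(label, f"{a0.real:.4f}", f"i*{a1.imag:.4f}")
```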

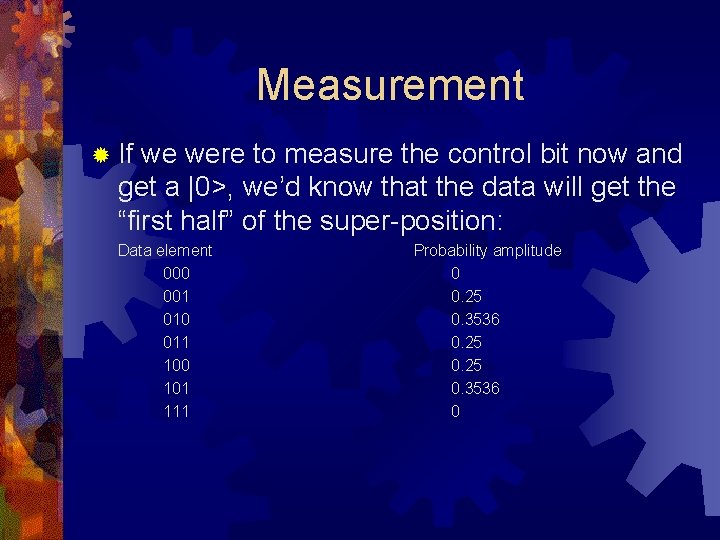

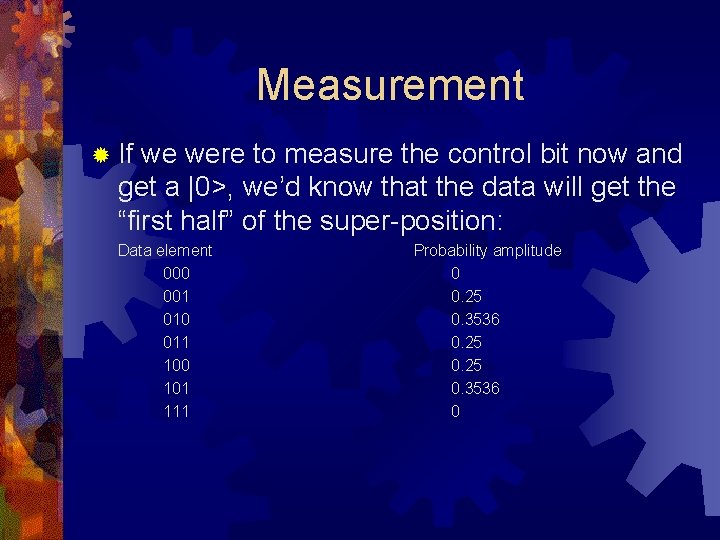

Measurement ® If we were to measure the control bit now and get a |0>, we’d know that the data will get the “first half” of the super-position:

| Data element | Probability amplitude |
|---|---|
| 000 | 0 |
| 001 | 0.25 |
| 010 | 0.3536 |
| 011 | 0.25 |
| 100 | 0.25 |
| 101 | 0.3536 |
| 111 | 0 |

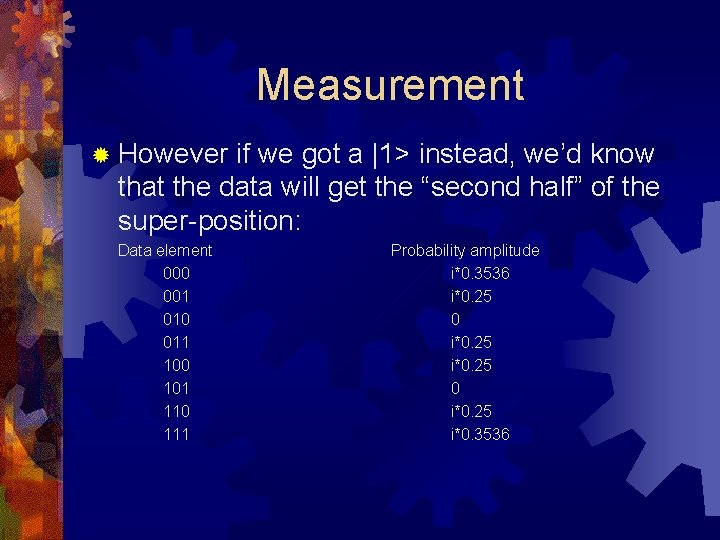

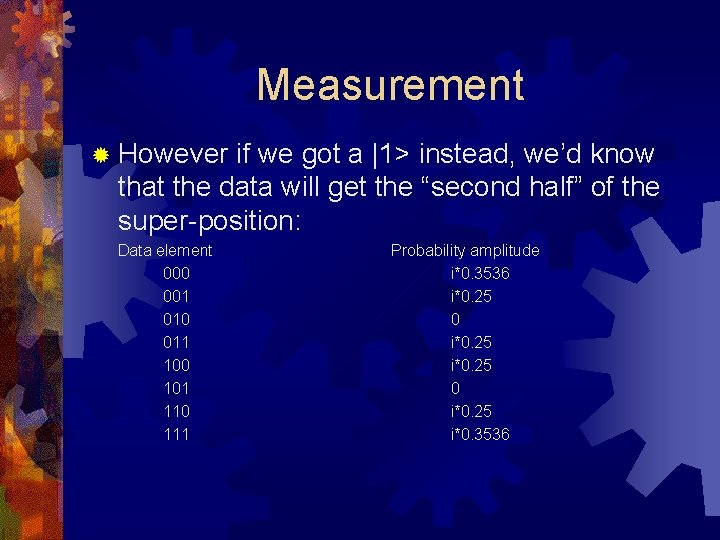

Measurement ® However if we got a |1> instead, we’d know that the data will get the “second half” of the super-position:

| Data element | Probability amplitude |
|---|---|
| 000 | i*0.3536 |
| 001 | i*0.25 |
| 010 | 0 |
| 011 | i*0.25 |
| 100 | i*0.25 |
| 101 | 0 |
| 110 | i*0.25 |
| 111 | i*0.3536 |

Measurement ® A control qubit measurement of |0> means we have a better chance of getting a lower cost state (a good solution) ® A control qubit measurement of |1> means we have a better chance of getting a higher cost state (a bad solution)

Measurement ® Assume the world is perfect and we always get a |0> when we measure the control qubit ® We can effectively increase our probability of getting good solutions and decrease the probability of getting bad solutions by iterating the H, Ucs, H operations ® We iterate by duplicating the circuit and adding more control qubits

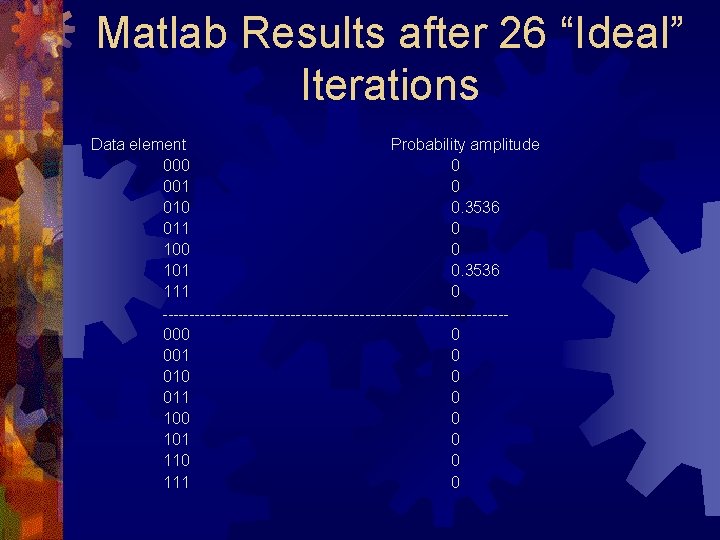

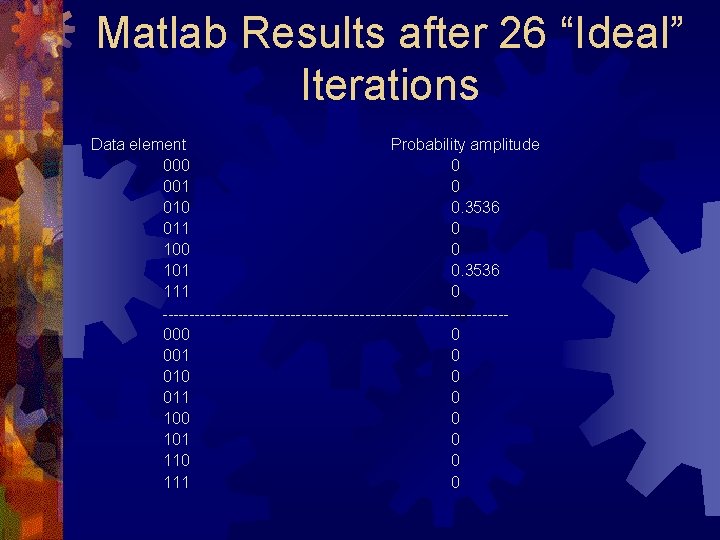

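Matlab Results after 26 “Ideal” Iterations

Control qubit |0>:

| Data element | Probability amplitude |
|---|---|
| 000 | 0 |
| 001 | 0 |
| 010 | 0.3536 |
| 011 | 0 |
| 100 | 0 |
| 101 | 0.3536 |
| 111 | 0 |

Control qubit |1>:

| Data element | Probability amplitude |
|---|---|
| 000 | 0 |
| 001 | 0 |
| 010 | 0 |
| 011 | 0 |
| 100 | 0 |
| 101 | 0 |
| 110 | 0 |
| 111 | 0 |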
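The ideal case is easy to sketch: if every control qubit is read as |0>, each H, Ucs, H round multiplies an element's amplitude by the cosine of its phase, so b rounds give a factor of cos^b. The code below assumes the phase is pi/2*Cnor and reuses a hypothetical instance (three-vertex path graph, two colors); the amplitudes are left unnormalized, which appears to match the convention of the table above.

```python
import math

# Assumed normalized costs for the 8 data elements 000..111.
C_NOR = [1.0, 0.5, 0.0, 0.5, 0.5, 0.0, 0.5, 1.0]
N = len(C_NOR)

def ideal_amplitude(c, b):
    """Unnormalized amplitude after b rounds with every control read as |0>."""
    return math.cos(math.pi / 2 * c) ** b / math.sqrt(N)

b = 26
amps = [ideal_amplitude(c, b) for c in C_NOR]

for k, a in enumerate(amps):
    print(format(k, "03b"), f"{a:.4f}")
```

Only the zero-cost elements keep their full amplitude; elements with intermediate cost are suppressed by a factor of cos(pi/4)^26, which rounds to zero at four decimal places.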

Life Isn’t Fair ® We don’t always get a |0> for all the control qubits when we measure ® Some of the qubits are bound to be measured in the |1> state ® Upon measuring the control qubits we can at least know the quality of our computation

The Tradeoff ® If we increase the number of control qubits (b), then we have a chance of bumping up the probability amplitudes of the lower cost solutions and canceling out the probability amplitudes of the higher cost solutions

The Tradeoff ® However, if we increase the number of control qubits (b), we ALSO lower our chances of getting more control qubits in the |0> state
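Both sides of the tradeoff can be put into numbers. In this sketch, `p_all_zero` is the probability that all b control qubits are measured as |0>, and `p_optimal_given_all_zero` is the probability of then reading out a minimal-cost element. The function names, the phase pi/2*Cnor, and the hard-coded costs (three-vertex path graph, two colors) are assumptions for illustration.

```python
import math

# Assumed normalized costs for the 8 data elements 000..111.
C_NOR = [1.0, 0.5, 0.0, 0.5, 0.5, 0.0, 0.5, 1.0]
N = len(C_NOR)

def weight(c, b):
    """Relative probability of an element surviving b rounds on the |0> branch."""
    return math.cos(math.pi / 2 * c) ** (2 * b)

def p_all_zero(b):
    """Probability that all b control qubits are measured as |0>."""
    return sum(weight(c, b) for c in C_NOR) / N

def p_optimal_given_all_zero(b):
    """Probability of a minimal-cost element, given all controls read |0>."""
    best = min(C_NOR)
    good = sum(weight(c, b) for c in C_NOR if c == best)
    return good / sum(weight(c, b) for c in C_NOR)

for b in (1, 2, 5, 10):
    print(b, round(p_all_zero(b), 4), round(p_optimal_given_all_zero(b), 4))
```

As b grows, the first number falls while the second rises, which is the tradeoff described on these two slides.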

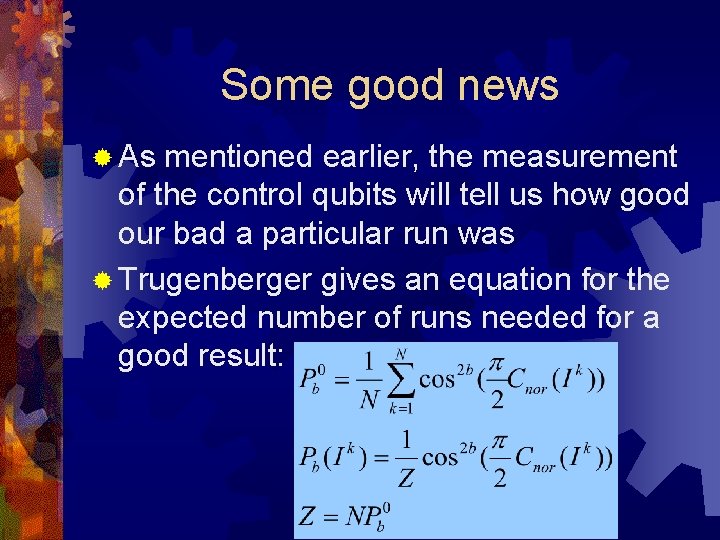

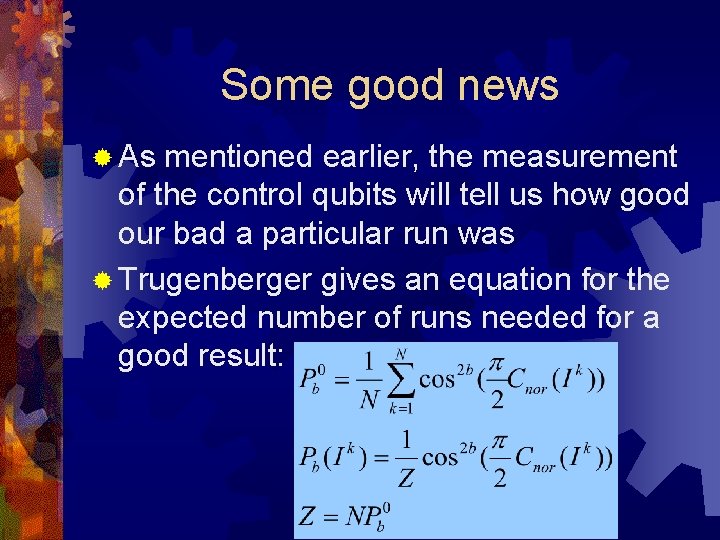

Some good news ® As mentioned earlier, the measurement of the control qubits will tell us how good or bad a particular run was ® Trugenberger gives an equation for the expected number of runs needed for a good result:

Analogy to Simulated Annealing ® Can view b, the number of control qubits, as a sort of temperature parameter ® Trugenberger gives some energy distributions based on the “effective temperature” being equal to 1/b ® Simply an analogy to the number of iterations needed for a probabilistically good solution

A Whole New Meaning for k ® k can be seen as a certain subset of the |S> super-position of data elements ® For the graph coloring problem, k=3 ® More generally for other problems, k can vary from 1 to K where K > 1

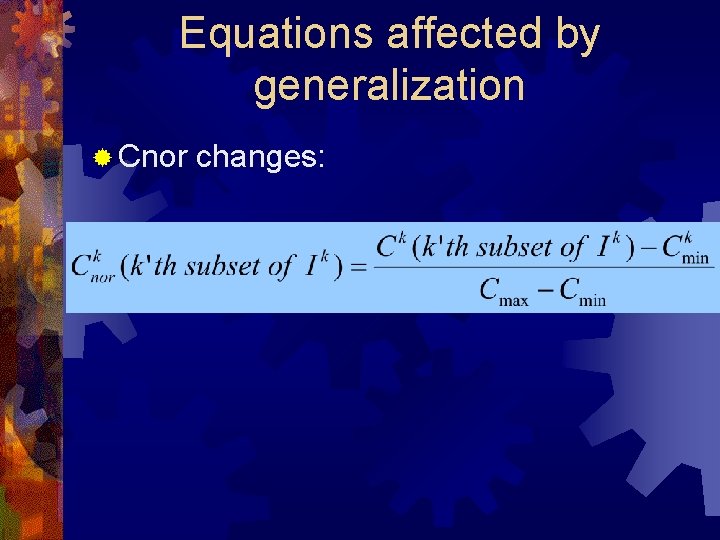

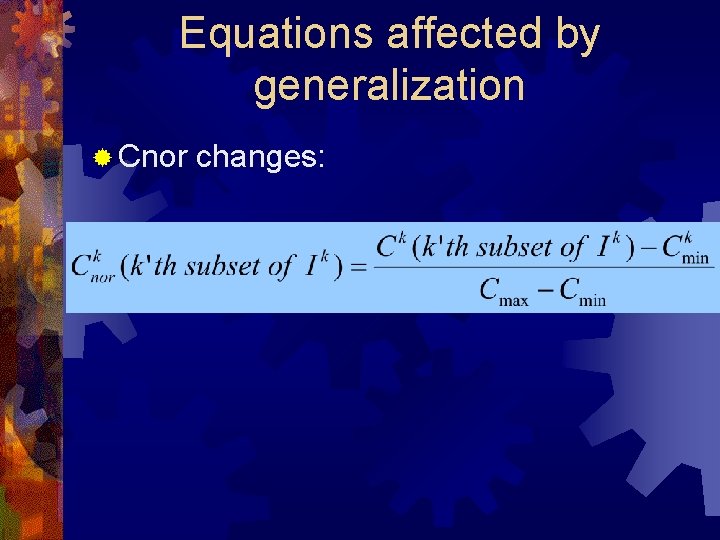

Equations affected by generalization ® Cnor changes:

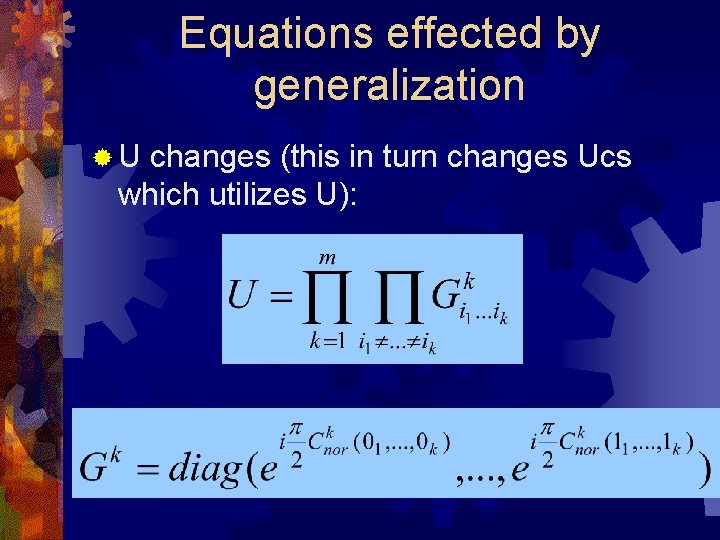

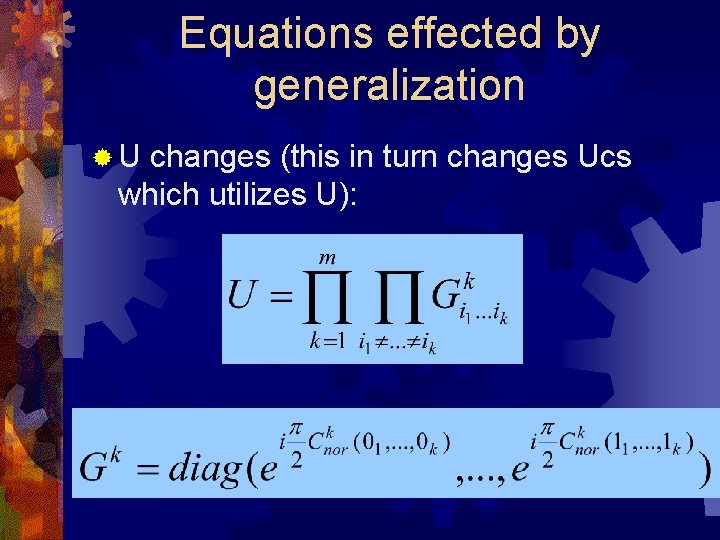

Equations affected by generalization ® U changes (this in turn changes Ucs, which utilizes U):

Overview ® Inspiration ® Basic Idea ® Mathematical and Circuit Realizations ® Limitations ® Future Work

U operator ® Constructing the U operator may itself be exponential in the number of qubits ® Perhaps some physical process to get around this

Cost Function Oracle? ® Trugenberger glosses over the implementation of the cost function (in fact no implementation is suggested) ® Some problems may still be intractable if cost function is too complicated

Only a Heuristic ® Trugenberger’s algorithm may not get the exact minimal solution ® Although, keeping in mind the tradeoff, more control qubits can be added to increase the odds of a good solution

Overview ® Inspiration ® Basic Idea ® Mathematical and Circuit Realizations ® Limitations ® Future Work

Future Work ® Look into physical feasibility of cost function and construction of Ucs ® Run more simulations on various problems and compare against classical heuristics ® Compare with Grover’s algorithm

Reference ® Quantum Optimization by C. A. Trugenberger, July 22, 2001 (can be found on LANL archive)