Artificial Intelligence: Decision Theory

Decision trees

A decision tree can be defined as a map of the reasoning process.

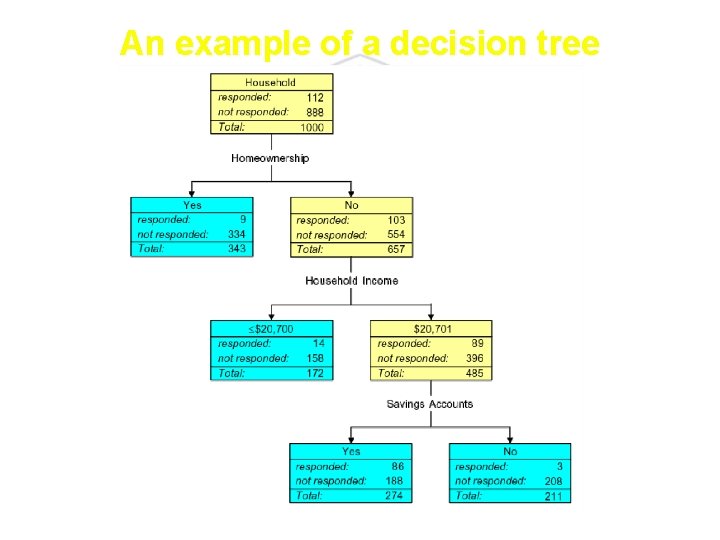

An example of a decision tree

- A decision tree consists of nodes, branches and leaves.
- The top node is called the root node. All nodes are connected by branches.
- Nodes that are at the end of branches are called terminal nodes, or leaves.

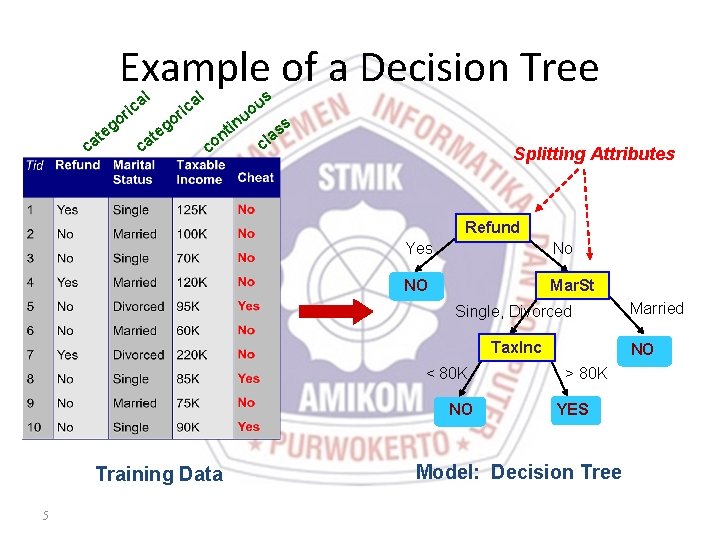

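Example of a Decision Tree

Training data (shown in the figure): two categorical attributes, one continuous attribute, and a class label.

Model: decision tree. The splitting attributes are Refund, Mar. St (marital status) and Tax. Inc (taxable income):

- Refund = Yes: NO
- Refund = No: test Mar. St
  - Mar. St = Married: NO
  - Mar. St = Single, Divorced: test Tax. Inc
    - Tax. Inc < 80K: NO
    - Tax. Inc > 80K: YES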

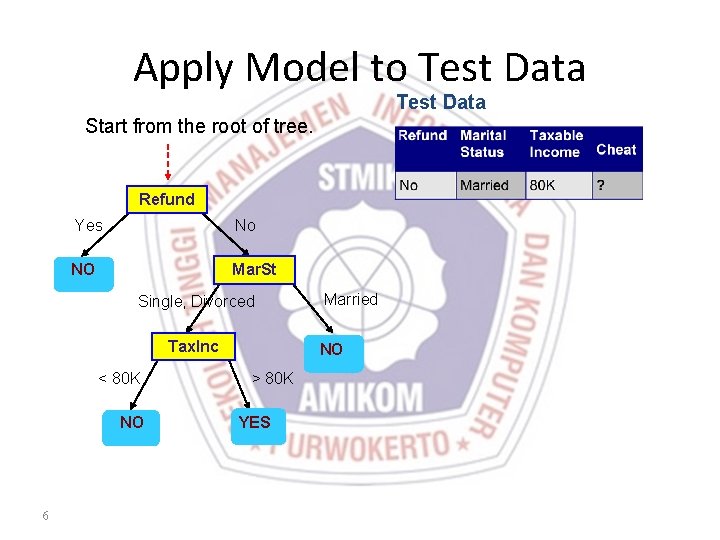

Apply Model to Test Data

Start from the root of the tree.

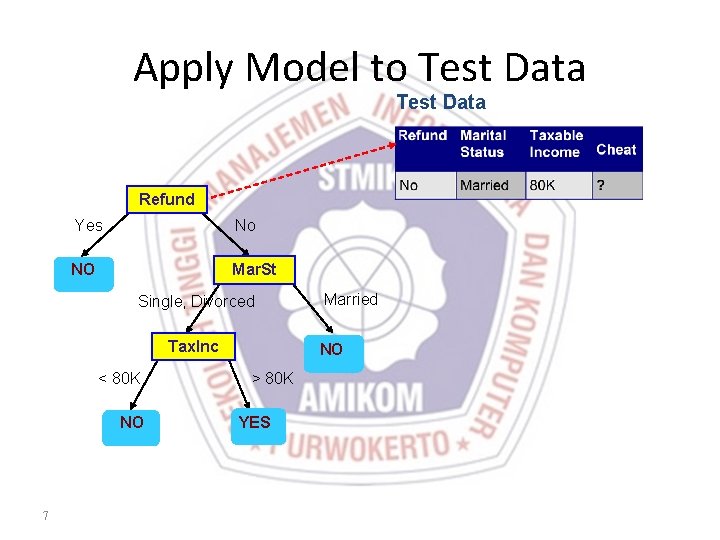

Apply Model to Test Data (continued)

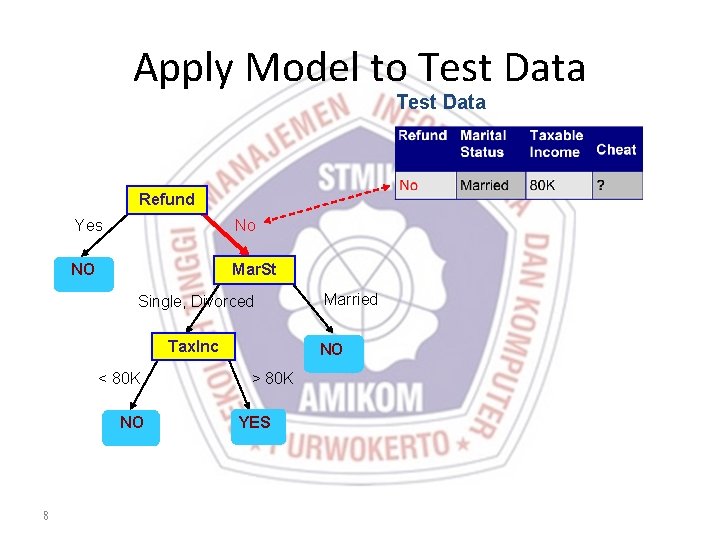

Apply Model to Test Data (continued)

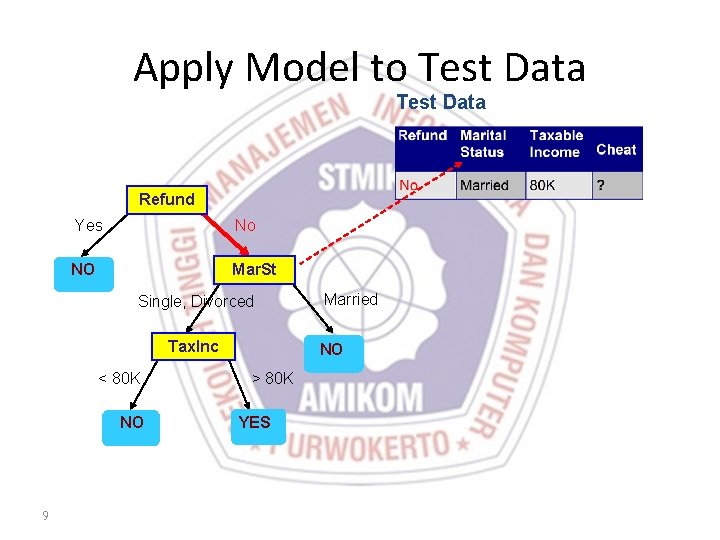

Apply Model to Test Data (continued)

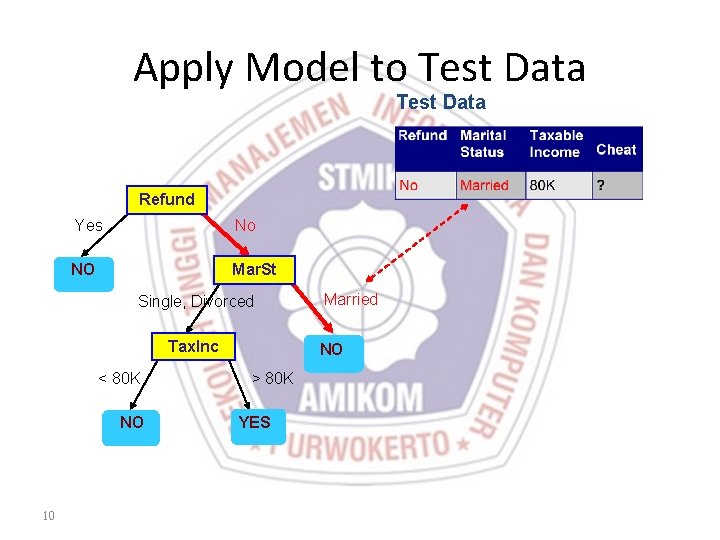

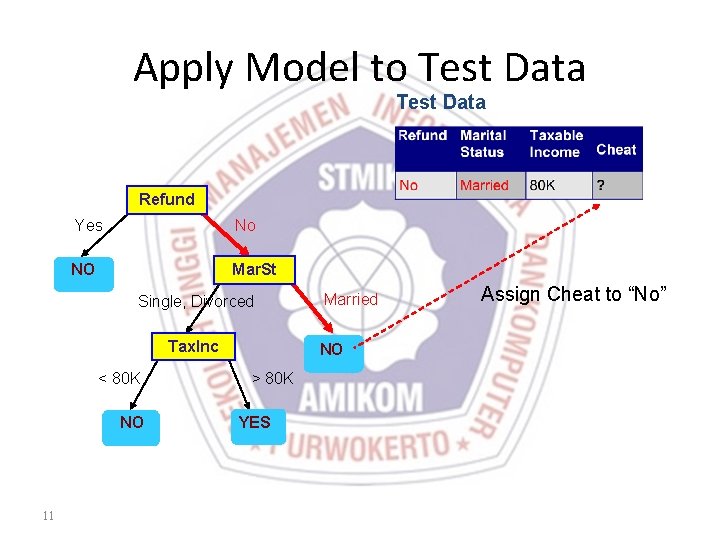

Apply Model to Test Data (continued)

Apply Model to Test Data: the path ends at a leaf labelled NO, so assign Cheat to “No”.
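Walking a test record down the Refund / Mar. St / Tax. Inc tree can be sketched in a few lines of Python. This is only an illustration: the field names (`refund`, `marital_status`, `taxable_income_k`) and the sample record are assumptions, not taken from the slides, and the slide does not say which branch a taxable income of exactly 80K follows.

```python
# Sketch of the Refund / Mar. St / Tax. Inc tree from the slides.
# Field names and the sample record are illustrative assumptions.

def classify_cheat(record: dict) -> str:
    """Walk the tree from the root and return the leaf label ("Yes" or "No")."""
    if record["refund"] == "Yes":
        return "No"
    if record["marital_status"] == "Married":
        return "No"
    # Single or Divorced: split on taxable income (in thousands).
    # The slide labels the branches "< 80K" and "> 80K"; sending exactly
    # 80K to the "Yes" side is an assumption of this sketch.
    return "No" if record["taxable_income_k"] < 80 else "Yes"


# Hypothetical test record, not taken from the slides.
test_record = {"refund": "No", "marital_status": "Married", "taxable_income_k": 80}
print(classify_cheat(test_record))  # prints: No
```

Each `if` corresponds to one internal node, and each `return` to one leaf, so the order of the tests mirrors the path from the root.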

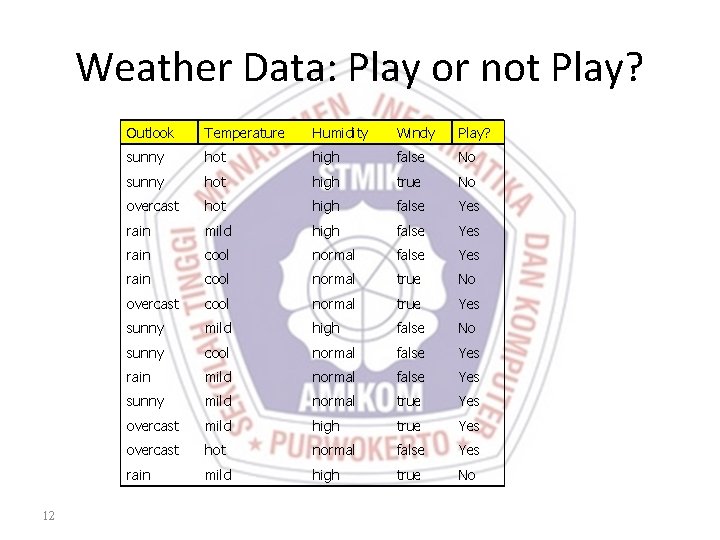

Weather Data: Play or not Play?

| Outlook | Temperature | Humidity | Windy | Play? |
|---|---|---|---|---|
| sunny | hot | high | false | No |
| sunny | hot | high | true | No |
| overcast | hot | high | false | Yes |
| rain | mild | high | false | Yes |
| rain | cool | normal | true | No |
| overcast | cool | normal | true | Yes |
| sunny | mild | high | false | No |
| sunny | cool | normal | false | Yes |
| rain | mild | normal | false | Yes |
| sunny | mild | normal | true | Yes |
| overcast | mild | high | true | Yes |
| overcast | hot | normal | false | Yes |
| rain | mild | high | true | No |

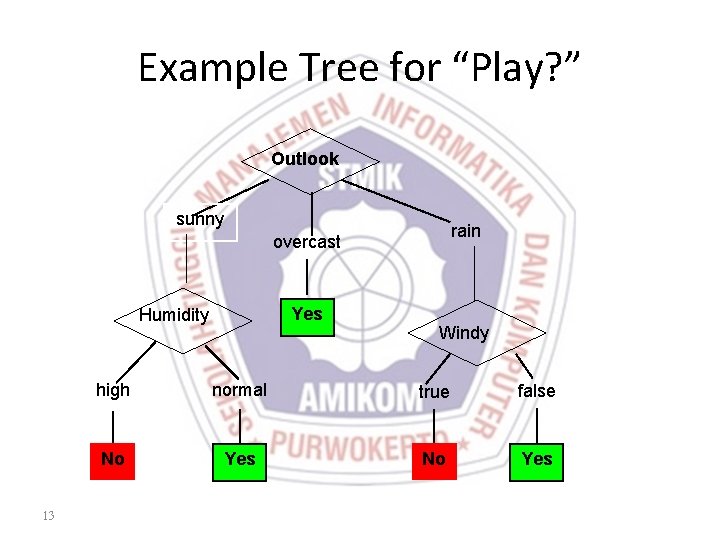

Example Tree for “Play?”

- Outlook = sunny: test Humidity
  - Humidity = high: No
  - Humidity = normal: Yes
- Outlook = overcast: Yes
- Outlook = rain: test Windy
  - Windy = true: No
  - Windy = false: Yes
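As a rough check, the “Play?” tree can be written as nested dictionaries and run over the weather rows listed in this document. The representation (an inner node maps an attribute name to its branches, a leaf is a plain label) and the names `PLAY_TREE`, `classify` and `ROWS` are choices made for this sketch only.

```python
# The "Play?" tree as nested dicts: an inner node maps one attribute name
# to its branches; a leaf is just the class label string.
PLAY_TREE = {
    "outlook": {
        "sunny": {"humidity": {"high": "No", "normal": "Yes"}},
        "overcast": "Yes",
        "rain": {"windy": {"true": "No", "false": "Yes"}},
    }
}

COLUMNS = ("outlook", "temperature", "humidity", "windy", "play")
# The 13 rows of the weather table as they appear in this document.
ROWS = [
    ("sunny", "hot", "high", "false", "No"),
    ("sunny", "hot", "high", "true", "No"),
    ("overcast", "hot", "high", "false", "Yes"),
    ("rain", "mild", "high", "false", "Yes"),
    ("rain", "cool", "normal", "true", "No"),
    ("overcast", "cool", "normal", "true", "Yes"),
    ("sunny", "mild", "high", "false", "No"),
    ("sunny", "cool", "normal", "false", "Yes"),
    ("rain", "mild", "normal", "false", "Yes"),
    ("sunny", "mild", "normal", "true", "Yes"),
    ("overcast", "mild", "high", "true", "Yes"),
    ("overcast", "hot", "normal", "false", "Yes"),
    ("rain", "mild", "high", "true", "No"),
]


def classify(tree, record):
    """Follow branches from the root until a leaf (a plain string) is reached."""
    while isinstance(tree, dict):
        attribute, branches = next(iter(tree.items()))
        tree = branches[record[attribute]]
    return tree


records = [dict(zip(COLUMNS, row)) for row in ROWS]
correct = sum(classify(PLAY_TREE, r) == r["play"] for r in records)
print(f"{correct} of {len(records)} rows classified correctly")  # 13 of 13
```

Temperature never appears in the tree, which is why the classifier ignores that column.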

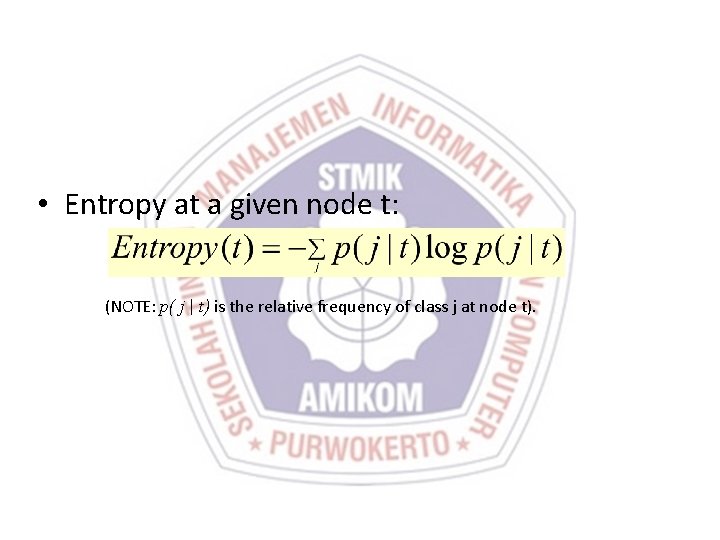

• Entropy at a given node t:

$$\mathrm{Entropy}(t) = -\sum_{j} p(j \mid t)\,\log_2 p(j \mid t)$$

(NOTE: p(j | t) is the relative frequency of class j at node t.)

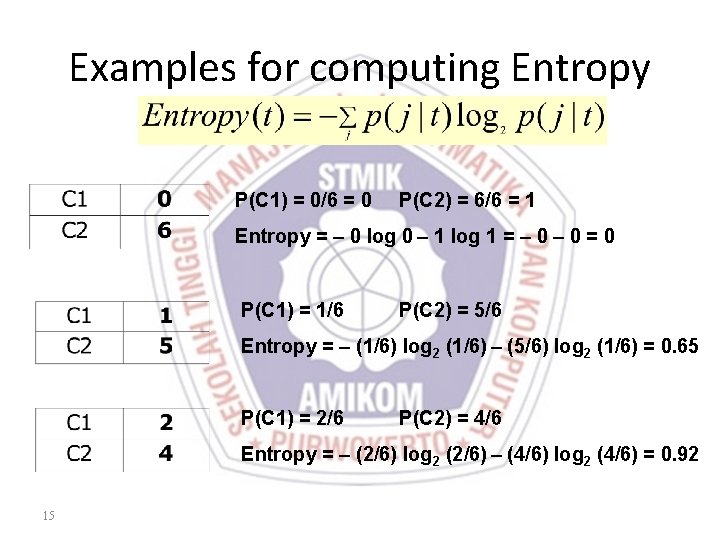

Examples for computing Entropy (6 records, two classes C1 and C2):

- P(C1) = 0/6 = 0, P(C2) = 6/6 = 1: Entropy = $-0 \log_2 0 - 1 \log_2 1 = 0 - 0 = 0$ (taking $0 \log 0 = 0$)
- P(C1) = 1/6, P(C2) = 5/6: Entropy = $-(1/6)\log_2(1/6) - (5/6)\log_2(5/6) = 0.65$
- P(C1) = 2/6, P(C2) = 4/6: Entropy = $-(2/6)\log_2(2/6) - (4/6)\log_2(4/6) = 0.92$
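The three entropy values above can be reproduced with a short Python function. This is a minimal sketch: the function name `entropy` and the choice to pass raw class counts are assumptions of the example, and classes with a zero count are skipped so that 0 log 0 contributes nothing.

```python
from math import log2


def entropy(counts):
    """Entropy (base 2) of a node, given the number of records in each class."""
    total = sum(counts)
    # p * log2(1/p) equals -p * log2(p); zero counts are skipped (0 log 0 = 0).
    return sum((c / total) * log2(total / c) for c in counts if c > 0)


for counts in ([0, 6], [1, 5], [2, 4]):
    print(counts, f"{entropy(counts):.2f}")
# [0, 6] 0.00
# [1, 5] 0.65
# [2, 4] 0.92
```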

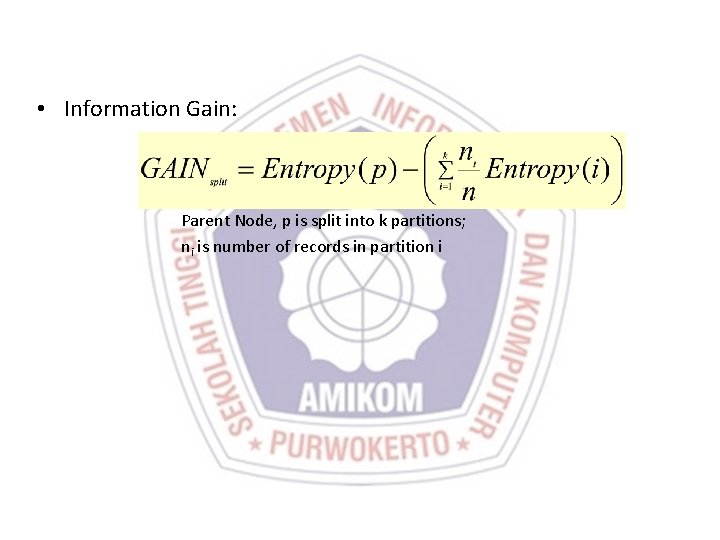

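• Information Gain:

$$\mathrm{GAIN}_{\mathrm{split}} = \mathrm{Entropy}(p) - \sum_{i=1}^{k} \frac{n_i}{n}\,\mathrm{Entropy}(i)$$

The parent node p is split into k partitions; n_i is the number of records in partition i and n is the total number of records at p.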
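Information gain, the parent's entropy minus the size-weighted entropy of its partitions, could be computed along the following lines. The function names and the class counts in the usage lines are illustrative assumptions, not values from the slides.

```python
from math import log2


def entropy(counts):
    """Entropy (base 2) from per-class record counts; 0 log 0 is taken as 0."""
    total = sum(counts)
    return sum((c / total) * log2(total / c) for c in counts if c > 0)


def information_gain(parent_counts, partition_counts):
    """Parent entropy minus the weighted entropy of the k partitions.

    parent_counts:    class counts at the parent node p
    partition_counts: one list of class counts per partition i
    """
    n = sum(parent_counts)
    weighted = sum(sum(part) / n * entropy(part) for part in partition_counts)
    return entropy(parent_counts) - weighted


# Illustrative counts: 6 records at the parent, 3 in each class.
pure_split = information_gain([3, 3], [[3, 0], [0, 3]])   # both children pure
mixed_split = information_gain([3, 3], [[1, 1], [2, 2]])  # children as mixed as the parent
print(f"{pure_split:.3f} {mixed_split:.3f}")
```

A split that separates the classes completely recovers the full parent entropy as gain, while a split whose children have the same class mix as the parent gains essentially nothing.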

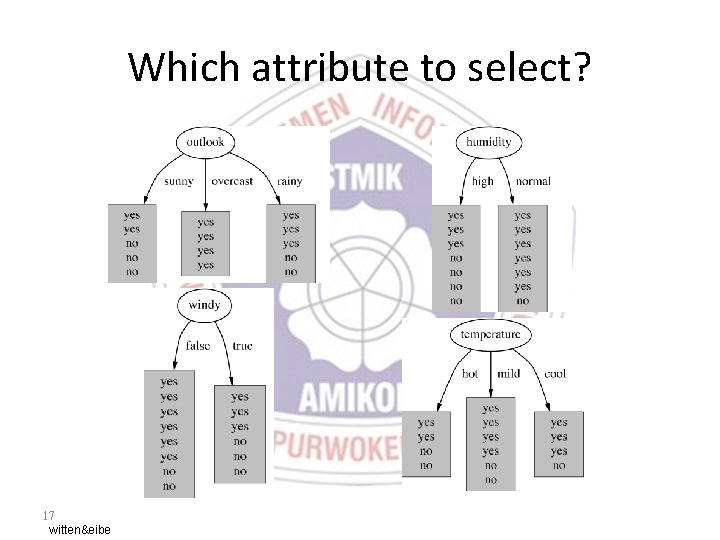

Which attribute to select? (witten&eibe)

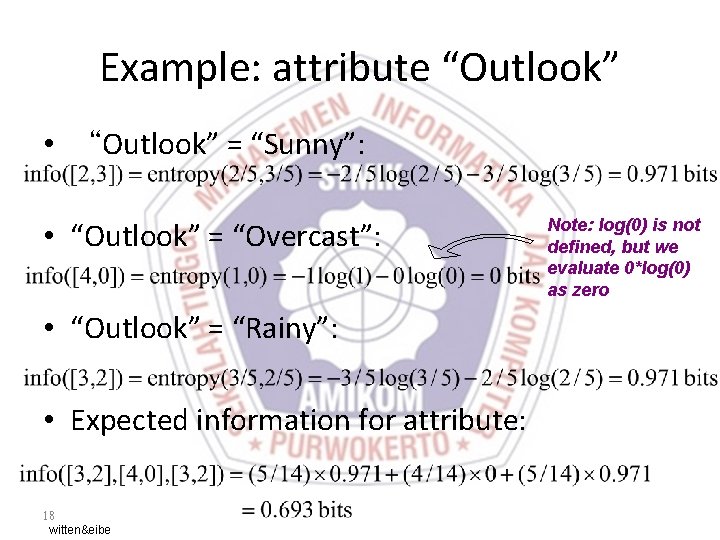

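Example: attribute “Outlook” (witten&eibe)

Compute the information (entropy) of the class distribution in each branch, then the expected information for the attribute as the average over the branches, weighted by branch size:

- “Outlook” = “Sunny”
- “Outlook” = “Overcast”
- “Outlook” = “Rainy”
- Expected information for the attribute

Note: log(0) is not defined, but 0 × log(0) is evaluated as zero.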
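The attribute-selection step can be sketched in Python by computing the expected information and the gain for every attribute. The sketch uses the 13 weather rows as they appear in this document, so the numbers it prints may differ from those on the original slide; all function and variable names are assumptions of the example.

```python
from collections import Counter, defaultdict
from math import log2

COLUMNS = ("outlook", "temperature", "humidity", "windy", "play")
# The 13 rows of the weather table as they appear in this document.
ROWS = [
    ("sunny", "hot", "high", "false", "No"),
    ("sunny", "hot", "high", "true", "No"),
    ("overcast", "hot", "high", "false", "Yes"),
    ("rain", "mild", "high", "false", "Yes"),
    ("rain", "cool", "normal", "true", "No"),
    ("overcast", "cool", "normal", "true", "Yes"),
    ("sunny", "mild", "high", "false", "No"),
    ("sunny", "cool", "normal", "false", "Yes"),
    ("rain", "mild", "normal", "false", "Yes"),
    ("sunny", "mild", "normal", "true", "Yes"),
    ("overcast", "mild", "high", "true", "Yes"),
    ("overcast", "hot", "normal", "false", "Yes"),
    ("rain", "mild", "high", "true", "No"),
]


def entropy(labels):
    """Entropy (base 2) of a list of class labels.

    Counter only holds classes that occur, so the 0 * log(0) case never arises.
    """
    n = len(labels)
    return sum(c / n * log2(n / c) for c in Counter(labels).values())


def expected_information(rows, column):
    """Size-weighted average entropy of the branches created by `column`."""
    idx = COLUMNS.index(column)
    branches = defaultdict(list)
    for row in rows:
        branches[row[idx]].append(row[-1])  # collect the class label per branch
    return sum(len(b) / len(rows) * entropy(b) for b in branches.values())


def gain(rows, column):
    """Information gain of splitting `rows` on `column`."""
    return entropy([row[-1] for row in rows]) - expected_information(rows, column)


gains = {column: gain(ROWS, column) for column in COLUMNS[:-1]}
best = max(gains, key=gains.get)
for column, value in gains.items():
    print(f"{column:12s} gain = {value:.3f}")
print("best attribute:", best)
```

On these rows “Outlook” has the largest gain, which is consistent with it sitting at the root of the “Play?” tree.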
