Journalism 614 Reliability and Validity Criteria of Measurement

Journalism 614: Reliability and Validity

Criteria of Measurement Quality ¨ How do we judge the relative success (or failure) in measuring various concepts? – Reliability • Consistency over time – Validity • Reflects the real meaning

Reliability and Validity ¨ Reliability focuses on measurement ¨ Validity is important to measurement too – Validity also extends to: • Internal features of the study (Internal Validity) • Generalizations made from study (External Validity)

Key to Reliable Valid Measures ¨ Precise conceptual and operational definitions of concepts - tight fit – Conceptual definitions: abstract sense of the idea – Operational definitions: measuring the concept

Reliability ¨ Consistency of Measurement – Reproducibility over time, across different indicators, and across different interviewers ¨ Estimates of Reliability – Statistical coefficients that tell us how consistently we measured something

Three Aspects of Reliability: ¨ 1. Stability ¨ 2. Reproducibility ¨ 3. Homogeneity ¨ These three factors = precision

1. Stability ¨ Consistency across time – repeating a measure at a later time to examine the consistency – Compare time 1 and time 2
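
As a minimal sketch of the time 1 versus time 2 comparison, the snippet below correlates two waves of scores from the same respondents. The slide does not name a statistic, so using the Pearson correlation is an assumption, and the scores and the helper name `pearson_r` are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Hypothetical scores for the same five respondents at two points in time.
time_1 = [12, 15, 9, 20, 17]
time_2 = [13, 14, 10, 19, 18]

# A value near 1 suggests the measure is stable across time.
test_retest_r = pearson_r(time_1, time_2)
print(round(test_retest_r, 2))
```

A low correlation would not by itself show that the measure is unstable; the underlying concept may have genuinely changed between the two measurements.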

2. Reproducibility ¨ Consistency between observers ¨ Equivalent application of measuring device – Do observers using the same measuring tools reach the same conclusion? – If we don’t get the same results, what are we measuring? • Lack of reliability can compromise validity
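
One way to check whether two observers reach the same conclusion is to compare their codes case by case. The sketch below computes simple percent agreement and Cohen's kappa, which corrects agreement for chance. Kappa is not named on the slide and is brought in here as one common choice; the coders and their codes are hypothetical.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of cases on which both coders assigned the same category."""
    return sum(a == b for a, b in zip(coder_a, coder_b)) / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Agreement between two coders, corrected for chance agreement."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    counts_a = Counter(coder_a)
    counts_b = Counter(coder_b)
    categories = set(coder_a) | set(coder_b)
    # Chance agreement: product of each coder's marginal proportions.
    expected = sum((counts_a[c] / n) * (counts_b[c] / n) for c in categories)
    return (observed - expected) / (1 - expected)


# Hypothetical tone codes assigned to the same eight news stories.
coder_a = ["pos", "neg", "neg", "pos", "neu", "pos", "neg", "neu"]
coder_b = ["pos", "neg", "pos", "pos", "neu", "pos", "neg", "neg"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(agreement, round(kappa, 2))
```

Kappa is lower than raw agreement here because some matches would be expected even if the coders assigned categories at random.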

3. Homogeneity ¨ Consistency between different measures of the same concept – Different items used to tap a given concept show similar results ¨ Homogeneity of measures: – 1. Cronbach’s Alpha coefficient – 2. Mean Inter-item Correlation
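
The two homogeneity measures named above can be sketched as follows. This is an illustrative calculation using the standard formula for Cronbach's alpha with sample variances; the item scores and function names are hypothetical.

```python
from itertools import combinations
from math import sqrt
from statistics import mean, variance


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mean_x, mean_y = mean(x), mean(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def cronbach_alpha(items):
    """items: one list of scores per item, all for the same respondents."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # scale score per person
    item_variance_sum = sum(variance(item) for item in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


def mean_inter_item_r(items):
    """Average correlation across every pair of items."""
    return mean(pearson_r(a, b) for a, b in combinations(items, 2))


# Hypothetical 1-5 ratings from five respondents on three items
# intended to tap the same concept.
items = [
    [4, 3, 5, 2, 4],
    [5, 3, 4, 2, 4],
    [4, 2, 5, 1, 3],
]

alpha = cronbach_alpha(items)
mean_r = mean_inter_item_r(items)
print(round(alpha, 2), round(mean_r, 2))
```

Alpha rises with both the average inter-item correlation and the number of items, so a long scale can show a high alpha even when its items are only modestly related.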

Indicators of Reliability ¨ Test-retest – Make measurements more than once and see if they yield the same result ¨ Split-half – If you have multiple measures of a concept, split items into two scales, which should then be correlated ¨ Cronbach’s Alpha or Item-total Correlation
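
The split-half procedure can be sketched as below: items are divided into two half-scales, each half is summed per respondent, and the two sets of scores are correlated. The Spearman-Brown step at the end is not mentioned on the slide; it is a commonly used adjustment added here as an assumption. The responses and the odd/even split are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Hypothetical data: one row per respondent, four items per row.
responses = [
    [4, 5, 4, 5],
    [2, 1, 2, 2],
    [3, 3, 4, 3],
    [5, 4, 5, 5],
    [1, 2, 1, 1],
    [3, 4, 3, 4],
]

# Split the items into two half-scales (1st and 3rd items vs. 2nd and 4th).
half_a = [row[0] + row[2] for row in responses]
half_b = [row[1] + row[3] for row in responses]

# Correlation between the two halves.
split_half_r = pearson_r(half_a, half_b)

# Spearman-Brown adjustment: estimates reliability of the full-length scale,
# since each half contains only half the items.
full_scale_estimate = 2 * split_half_r / (1 + split_half_r)
print(round(split_half_r, 2), round(full_scale_estimate, 2))
```

The result depends on how the items are split, which is one reason Cronbach's alpha is often reported instead.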

Relationship to Validity ¨ Reliability is a necessary condition for validity - consistency as an indicator ¨ Reliability is not a sufficient condition for validity - consistency does not = accuracy – E.g., Grocery Scale. Must be consistent to have any hope of being valid, but could still be off the mark (1 lb always measures 1.1 lb).
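
The grocery scale example can be put in numbers. In the sketch below, the spread of repeated readings stands in for reliability and the average offset from the true weight stands in for validity. Both are simplifying assumptions made for illustration, and the readings are hypothetical.

```python
from statistics import mean, stdev

# A known 1 lb weight is placed on the scale five times.
true_weight_lb = 1.0
readings_lb = [1.10, 1.10, 1.11, 1.09, 1.10]  # hypothetical readings

# Small spread: the scale gives nearly the same answer every time.
spread = stdev(readings_lb)

# Nonzero bias: the readings are consistently off the mark.
bias = mean(readings_lb) - true_weight_lb

print(f"spread = {spread:.3f} lb, bias = {bias:+.2f} lb")
```

The readings barely vary, yet every one overstates the weight by about a tenth of a pound: consistent, but not accurate.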

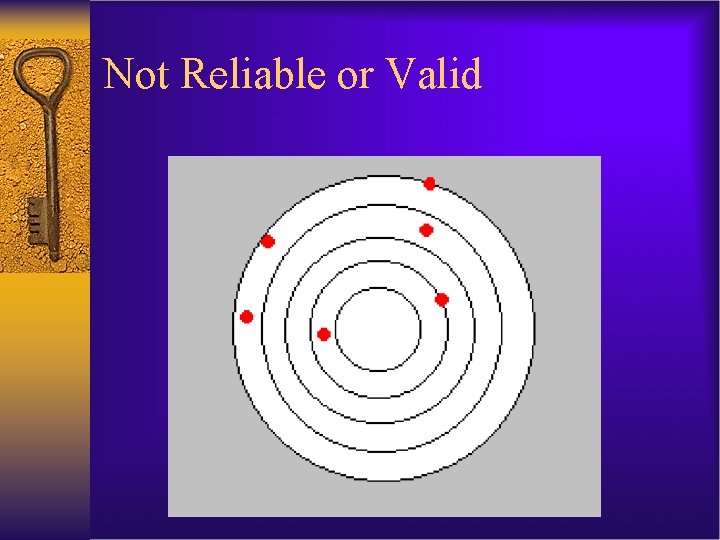

Not Reliable or Valid

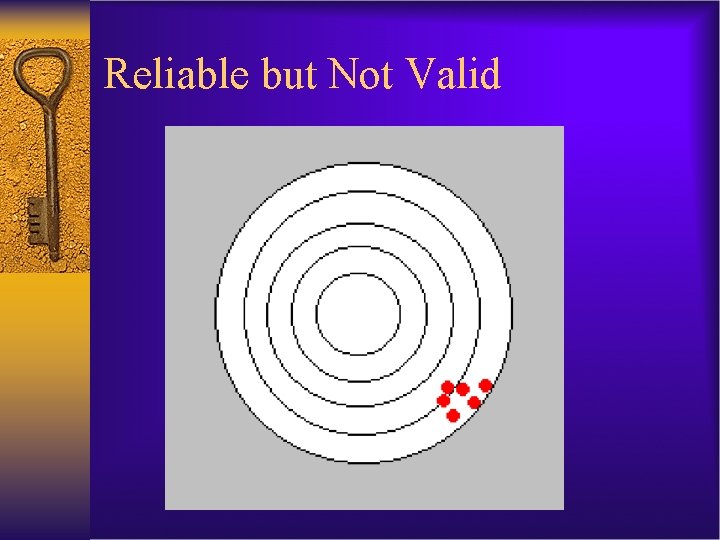

Reliable but Not Valid

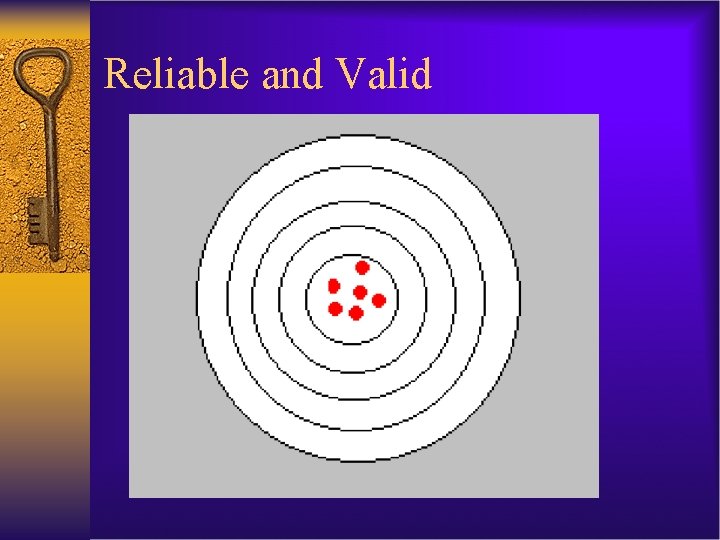

Reliable and Valid

Types of Validity ¨ 1. Face validity ¨ 2. Content validity ¨ 3. Pragmatic (criterion) validity – A. Concurrent validity – B. Predictive validity ¨ 4. Construct validity – A. Convergent validity – B. Discriminant validity

Face Validity ¨ Subjective expert judgment about “what’s there” ¨ Compare each item to conceptual definition – If an item does not fit the definition, it should be dropped – Is the measure valid “on its face”? – E.g., Measuring race prejudice by asking about people’s affinity for ethnic cuisine: does the item reflect the concept on its face?

Content Validity ¨ Subjective expert judgment of “what’s not there” – Start with conceptual definition and see if all dimensions and traits are represented at the operational level – Are some over- or underrepresented? ¨ If current indicators are insufficient, develop more indicators - cycle of face and content validity ¨ Example - Civic Participation questions: – Did you vote in the last election? – Do you belong to any advocacy groups? – Have you ever volunteered in your community?

Pragmatic (Criterion-Related) Validity ¨ Uses empirical evidence to test validity ¨ 1. Concurrent validity – Does the measure correlate with a pre-existing measure that has previously been deemed valid? • E.g., Does a new version of an IQ test correlate with past versions? ¨ 2. Predictive validity – Does the measure predict the future outcomes it is supposed to predict? • E.g., SAT scores: Do they predict college GPA?

Construct Validity ¨ Overall validity encompassing other elements ¨ Do measurements: – A. Represent all dimensions of the concept – B. Distinguish concept from other similar concepts ¨ Tied to meaning analysis of the concept – Specifies the dimensions and indicators to be tested ¨ Assessing construct validity: – A. Convergent validity – B. Discriminant validity

Convergent Validity ¨ Convergent validity: – Measuring the same concept with very different methods – If different methods yield the same results, then convergent validity is supported – E.g., Different survey items used to measure decision-making style - closed and open-ended • Code for decision-making style from open-ended responses • High score on scale = more compensatory responses

Discriminant (Divergent) Validity ¨ Discriminant validity: – Ability of a measure of a concept to discriminate that concept from other closely related concepts – E.g., Measuring Maternalism and Altruism as distinct concepts. The two measures may be correlated, but if the correlation is too high, the measures are not distinguishing the concepts.

Validity & Research Design ¨ Internal – Controlling for other factors in the design • Validity of structure, sampling, measures, procedures • Claims regarding what happened in the study ¨ External – Looking beyond the design to other cases • Validity of inferences made from the conclusions • Claims regarding what happens in the real world
