Joint Capability Technology Demonstration OSD RFD USSTRATCOM NRL

Joint Capability Technology Demonstration OSD (RFD) – USSTRATCOM – NRL – NGA – INSCOM – DISA

Agenda
▪ Warfighter Problem
▪ Large Data Concept of Operations
▪ Operational Utility Assessment
▪ Why LD JCTD Works
▪ Transition
▪ Summary

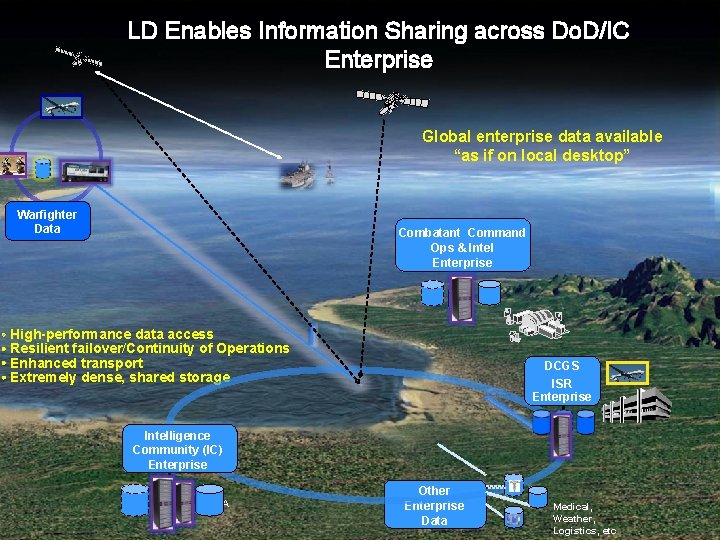

LD Enables Information Sharing across DoD/IC Enterprise
Global enterprise data available “as if on local desktop”
• High-performance data access
• Resilient failover/Continuity of Operations
• Enhanced transport
• Extremely dense, shared storage
Warfighter Data sources shown: Combatant Command Ops & Intel Enterprise; DCGS ISR Enterprise; Intelligence Community (IC) Enterprise; CALA; Other Enterprise Data (Medical, Weather, Logistics, etc.)

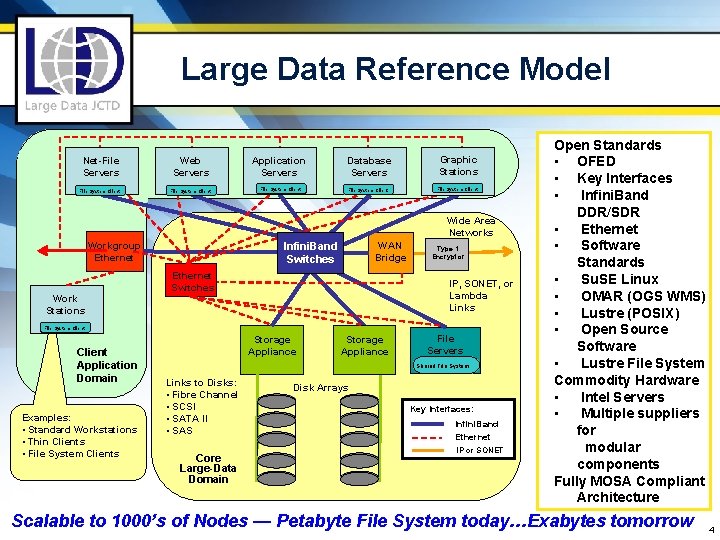

Large Data Reference Model
Diagram labels: Net-File Servers; Web Servers; Application Servers; Database Servers; File System Clients; Graphic Stations; Work Stations; Wide Area Networks; WAN Bridge; InfiniBand Switches; Workgroup Ethernet Switches; Type 1 Encryptor; IP, SONET, or Lambda Links; Storage Appliance; File Servers; Shared File System; Core Large-Data Domain; Disk Arrays
Application Domain Examples:
• Standard Workstations
• Thin Clients
• File System Clients
Links to Disks:
• Fibre Channel
• SCSI
• SATA II
• SAS
Key Interfaces: InfiniBand, Ethernet, IP or SONET
Open Standards
• OFED
• Key Interfaces
▫ InfiniBand DDR/SDR
▫ Ethernet
• Software Standards
▫ SuSE Linux
▫ OMAR (OGC WMS)
▫ Lustre (POSIX)
• Open Source Software
▫ Lustre File System
Commodity Hardware
• Intel Servers
• Multiple suppliers for modular components
Fully MOSA Compliant Architecture
Scalable to 1000’s of Nodes: Petabyte File System today… Exabytes tomorrow

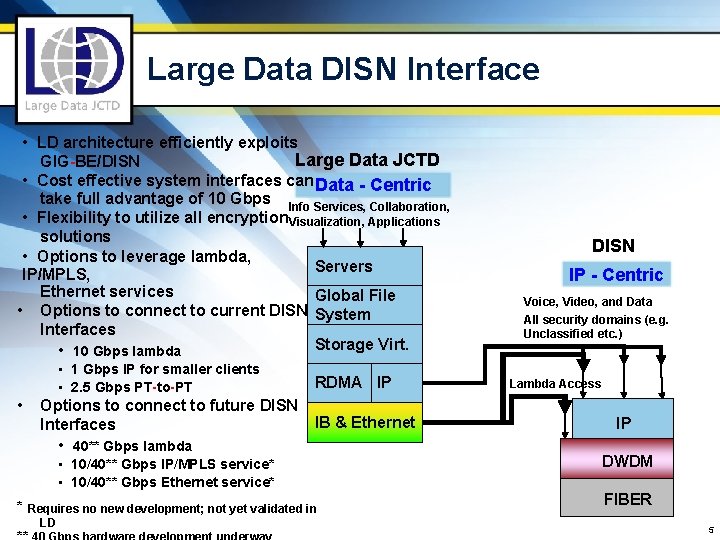

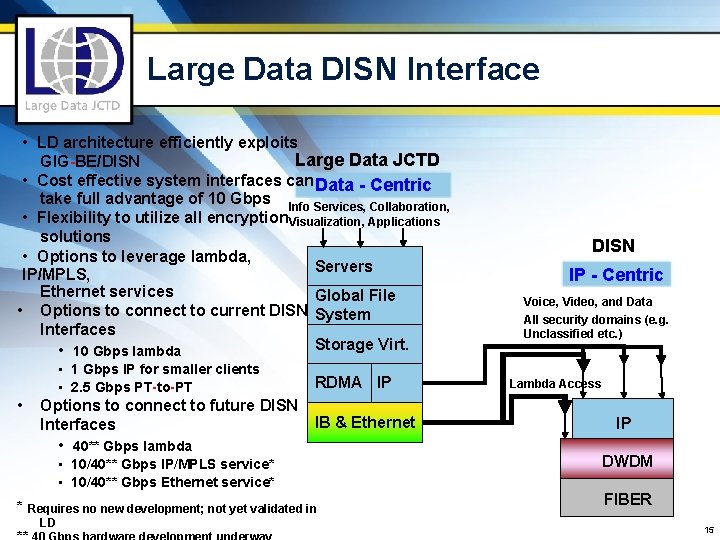

Large Data DISN Interface
• LD architecture efficiently exploits GIG-BE/DISN
• Cost effective system interfaces can take full advantage of 10 Gbps
• Flexibility to utilize all encryption solutions
• Options to leverage lambda, IP/MPLS, Ethernet services
• Options to connect to current DISN interfaces
▫ 10 Gbps lambda
▫ 1 Gbps IP for smaller clients
▫ 2.5 Gbps PT-to-PT
▫ RDMA IP
• Options to connect to future DISN interfaces
▫ 40** Gbps lambda
▫ 10/40** Gbps IP/MPLS service*
▫ 10/40** Gbps Ethernet service*
* Requires no new development; not yet validated in LD
Diagram labels, Large Data JCTD (Data-Centric): Info Services, Collaboration, Visualization, Applications; Servers; Global File System; Storage Virt.; IB & Ethernet Interfaces
Diagram labels, DISN (IP-Centric): Voice, Video, and Data; All security domains (e.g. Unclassified etc.); Lambda Access; IP; DWDM; FIBER

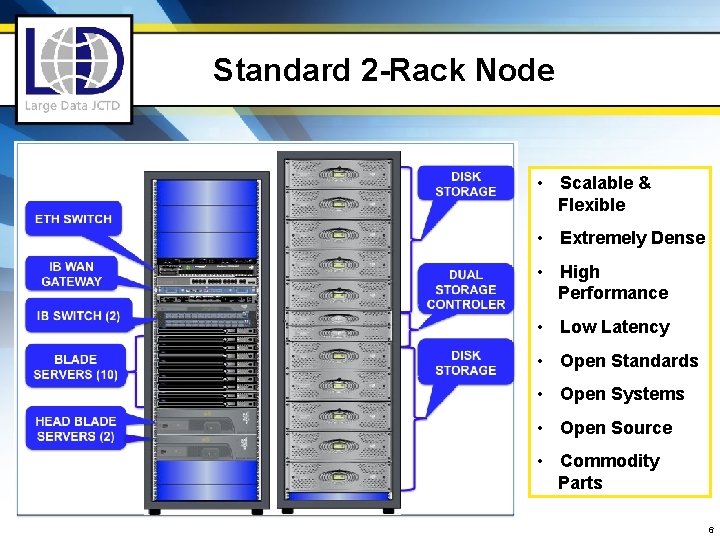

Standard 2-Rack Node
• Scalable & Flexible
• Extremely Dense
• High Performance
• Low Latency
• Open Standards
• Open Systems
• Open Source
• Commodity Parts

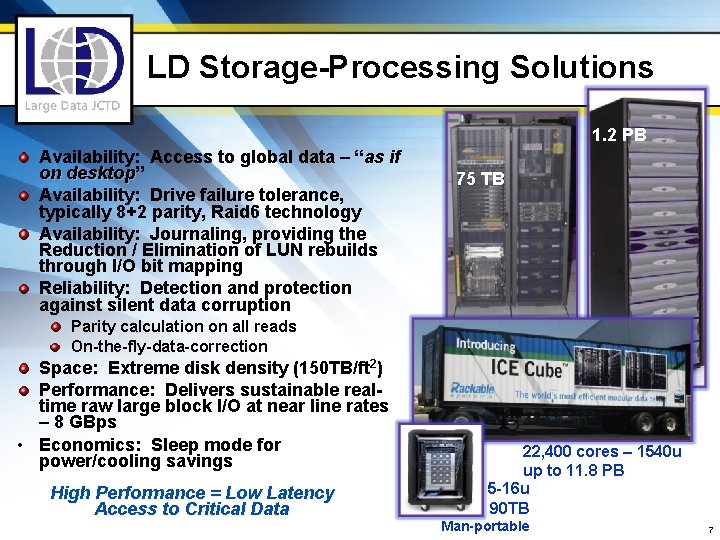

LD Storage-Processing Solutions
• Availability: Access to global data – “as if on desktop”
• Availability: Drive failure tolerance, typically 8+2 parity, RAID 6 technology
• Availability: Journaling, providing the reduction/elimination of LUN rebuilds through I/O bit mapping
• Reliability: Detection and protection against silent data corruption
▫ Parity calculation on all reads
▫ On-the-fly data correction
• Space: Extreme disk density (150 TB/ft²)
• Performance: Delivers sustainable real-time raw large-block I/O at near line rates – 8 GBps
• Economics: Sleep mode for power/cooling savings
High Performance = Low Latency Access to Critical Data
Diagram labels: 1.2 PB; 75 TB; 22,400 cores – 1540 u, up to 11.8 PB; 5-16 u; 90 TB; Man-portable

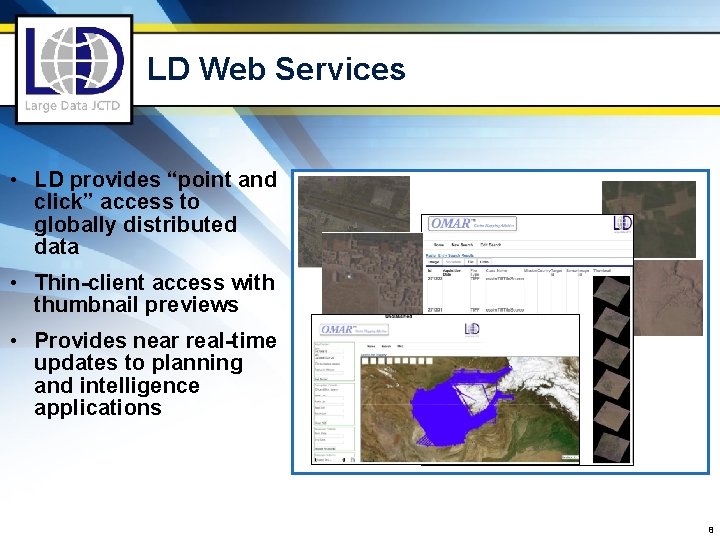

LD Web Services
• LD provides “point and click” access to globally distributed data
• Thin-client access with thumbnail previews
• Provides near real-time updates to planning and intelligence applications

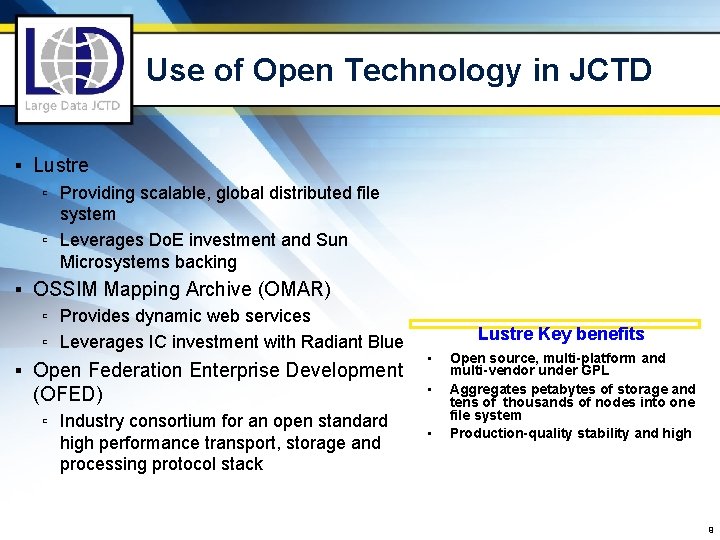

Use of Open Technology in JCTD
▪ Lustre
▫ Providing scalable, global distributed file system
▫ Leverages DoE investment and Sun Microsystems backing
▪ OSSIM Mapping Archive (OMAR)
▫ Provides dynamic web services
▫ Leverages IC investment with Radiant Blue
▪ OpenFabrics Enterprise Distribution (OFED)
▫ Industry consortium for an open standard high performance transport, storage and processing protocol stack
Lustre key benefits
• Open source, multi-platform and multi-vendor under GPL
• Aggregates petabytes of storage and tens of thousands of nodes into one file system
• Production-quality stability and high

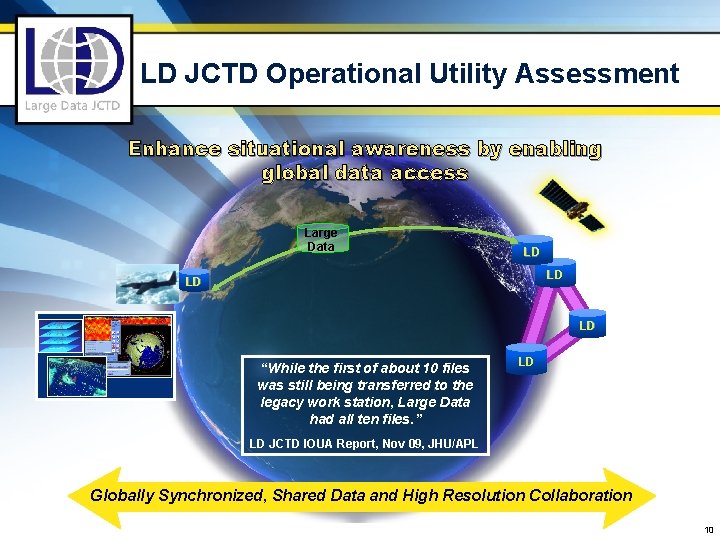

LD JCTD Operational Utility Assessment
Enhance situational awareness by enabling global data access
“While the first of about 10 files was still being transferred to the legacy work station, Large Data had all ten files.” – LD JCTD IOUA Report, Nov 09, JHU/APL
Globally Synchronized, Shared Data and High Resolution Collaboration

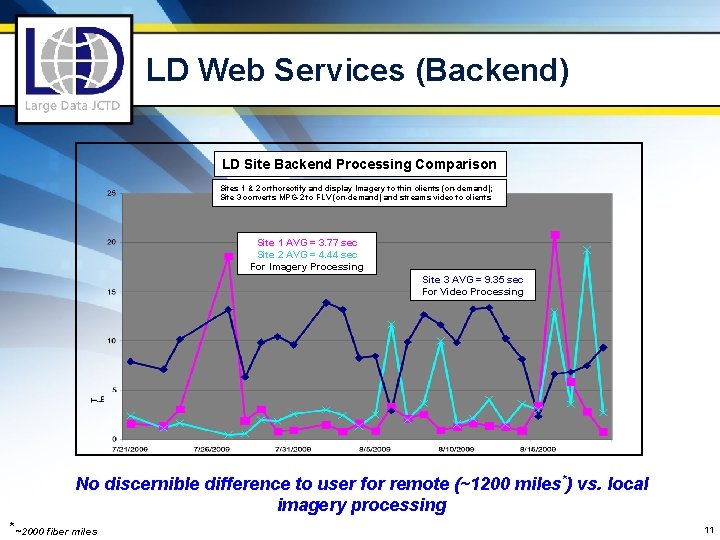

LD Web Services (Backend)
LD Site Backend Processing Comparison
Sites 1 & 2 orthorectify and display imagery to thin clients (on-demand); Site 3 converts MPEG-2 to FLV (on-demand) and streams video to clients
• Site 1 AVG = 3.77 sec (imagery processing)
• Site 2 AVG = 4.44 sec (imagery processing)
• Site 3 AVG = 9.35 sec (video processing)
No discernible difference to user for remote (~1200 miles*) vs. local imagery processing
* ~2000 fiber miles

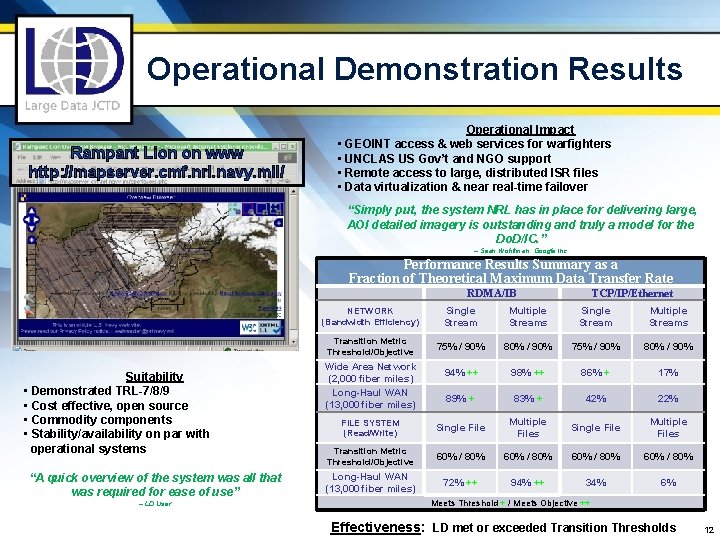

Operational Demonstration Results
Rampant Lion on www: http://mapserver.cmf.nrl.navy.mil/
Operational Impact
• GEOINT access & web services for warfighters
• UNCLAS US Gov’t and NGO support
• Remote access to large, distributed ISR files
• Data virtualization & near real-time failover
“Simply put, the system NRL has in place for delivering large, AOI detailed imagery is outstanding and truly a model for the DoD/IC.” – Sean Wohltman, Google Inc.
Suitability
• Demonstrated TRL-7/8/9
• Cost effective, open source
• Commodity components
• Stability/availability on par with operational systems
“A quick overview of the system was all that was required for ease of use” – LD User
Performance Results Summary as a Fraction of Theoretical Maximum Data Transfer Rate (RDMA/IB and TCP/IP/Ethernet)
• NETWORK (Bandwidth Efficiency): Single Stream, Multiple Streams
▫ Transition Metric Threshold/Objective: 75% / 90%, 80% / 90%
▫ Reported values, in slide order: 94% ++, 98% ++, 86% +, 17%, 89% +, 83% +, 42%, 22%
• FILE SYSTEM (Read/Write): Single File, Multiple Files
▫ Transition Metric Threshold/Objective: 60% / 80%
▫ Reported values, in slide order, Long-Haul WAN (13,000 fiber miles): 72% ++, 94% ++, 34%, 6%
• Networks tested: Wide Area Network (2,000 fiber miles); Long-Haul WAN (13,000 fiber miles)
• Legend: Meets Threshold + / Meets Objective ++
Effectiveness: LD met or exceeded Transition Thresholds

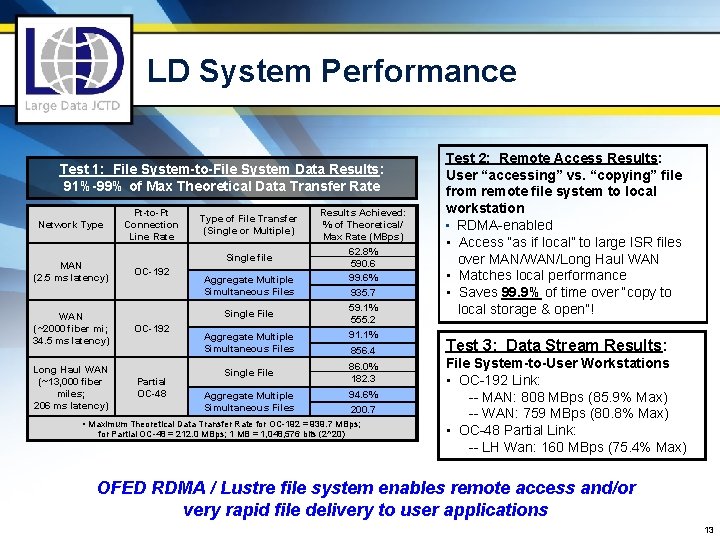

LD System Performance
Test 1: File System-to-File System Data
Results: 91%-99% of Max Theoretical Data Transfer Rate

| Network Type | Pt-to-Pt Connection Line Rate | Type of File Transfer | % of Theoretical Max | Rate (MBps) |
|---|---|---|---|---|
| MAN (2.5 ms latency) | OC-192 | Single File | 62.8% | 590.6 |
| MAN (2.5 ms latency) | OC-192 | Aggregate Multiple Simultaneous Files | 99.6% | 935.7 |
| WAN (~2000 fiber miles; 34.5 ms latency) | OC-192 | Single File | 59.1% | 555.2 |
| WAN (~2000 fiber miles; 34.5 ms latency) | OC-192 | Aggregate Multiple Simultaneous Files | 91.1% | 856.4 |
| Long Haul WAN (~13,000 fiber miles; 206 ms latency) | Partial OC-48 | Single File | 86.0% | 182.3 |
| Long Haul WAN (~13,000 fiber miles; 206 ms latency) | Partial OC-48 | Aggregate Multiple Simultaneous Files | 94.6% | 200.7 |

• Maximum Theoretical Data Transfer Rate for OC-192 = 939.7 MBps; for Partial OC-48 = 212.0 MBps; 1 MB = 1,048,576 bytes (2^20)

Test 2: Remote Access
Results: User “accessing” vs. “copying” file from remote file system to local workstation
• RDMA-enabled
• Access “as if local” to large ISR files over MAN/WAN/Long Haul WAN
• Matches local performance
• Saves 99.9% of time over “copy to local storage & open”!

Test 3: Data Stream
Results: File System-to-User Workstations
• OC-192 Link:
-- MAN: 808 MBps (85.9% Max)
-- WAN: 759 MBps (80.8% Max)
• OC-48 Partial Link:
-- LH WAN: 160 MBps (75.4% Max)

OFED RDMA / Lustre file system enables remote access and/or very rapid file delivery to user applications
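The percentages reported for Test 1 follow from dividing each measured rate by the theoretical maximum quoted for the link. The Python sketch below reproduces that arithmetic from the figures on the slide. The names `MAX_RATE_MBPS`, `TEST_1`, `percent_of_max`, and `efficiency_table` are illustrative only and are not part of any LD JCTD tooling.

```python
# Theoretical maximum data transfer rates quoted on the slide, in MBps.
MAX_RATE_MBPS = {"OC-192": 939.7, "Partial OC-48": 212.0}

# (network, link, transfer type, measured rate in MBps) as reported for Test 1.
TEST_1 = [
    ("MAN", "OC-192", "single file", 590.6),
    ("MAN", "OC-192", "aggregate multiple files", 935.7),
    ("WAN", "OC-192", "single file", 555.2),
    ("WAN", "OC-192", "aggregate multiple files", 856.4),
    ("Long Haul WAN", "Partial OC-48", "single file", 182.3),
    ("Long Haul WAN", "Partial OC-48", "aggregate multiple files", 200.7),
]


def percent_of_max(measured_mbps: float, link: str) -> float:
    """Return the measured rate as a percentage of the link's theoretical maximum."""
    return 100.0 * measured_mbps / MAX_RATE_MBPS[link]


def efficiency_table(rows):
    """Attach the (unrounded) percentage of theoretical maximum to each row."""
    return [
        (network, link, kind, rate, percent_of_max(rate, link))
        for network, link, kind, rate in rows
    ]


if __name__ == "__main__":
    for network, link, kind, rate, pct in efficiency_table(TEST_1):
        print(f"{network:14s} {link:14s} {kind:26s} {rate:6.1f} MBps  {pct:5.1f}%")
```

The computed values agree with the slide to within about 0.1 percentage point; the small differences are consistent with rounding or truncation in the published figures.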

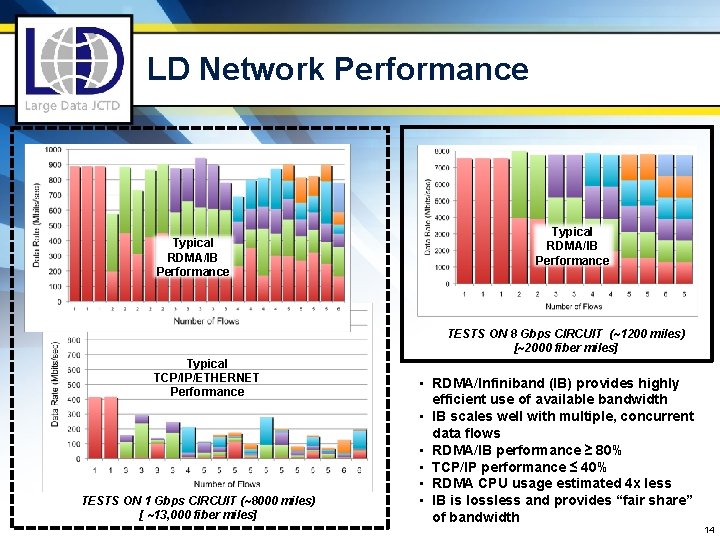

LD Network Performance
Typical RDMA/IB Performance: tests on 8 Gbps circuit (~1200 miles) [~2000 fiber miles]
Typical TCP/IP/Ethernet Performance: tests on 1 Gbps circuit (~8000 miles) [~13,000 fiber miles]
• RDMA/InfiniBand (IB) provides highly efficient use of available bandwidth
• IB scales well with multiple, concurrent data flows
• RDMA/IB performance ≥ 80%
• TCP/IP performance ≤ 40%
• RDMA CPU usage estimated 4x less
• IB is lossless and provides “fair share” of bandwidth

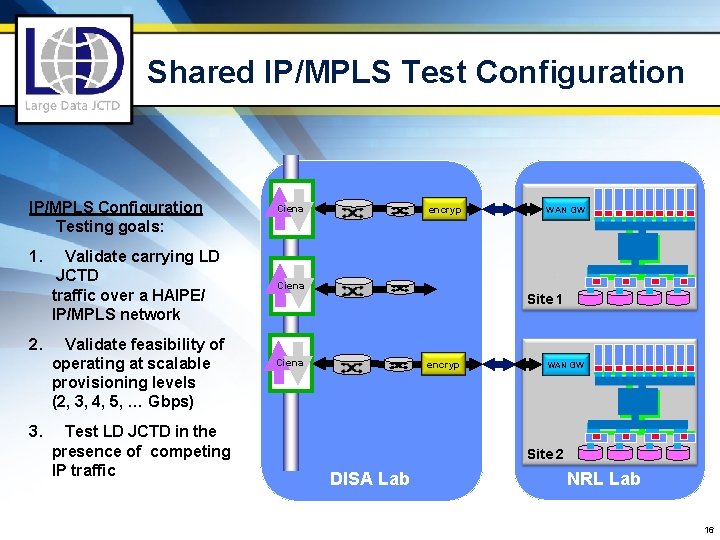

Shared IP/MPLS Test Configuration
IP/MPLS Configuration Testing goals:
1. Validate carrying LD JCTD traffic over a HAIPE/IP/MPLS network
2. Validate feasibility of operating at scalable provisioning levels (2, 3, 4, 5, … Gbps)
3. Test LD JCTD in the presence of competing IP traffic
Diagram labels: Site 1 (WAN GW, Ciena encryp); Site 2 (WAN GW, Ciena encryp); Ciena; DISA Lab; NRL Lab

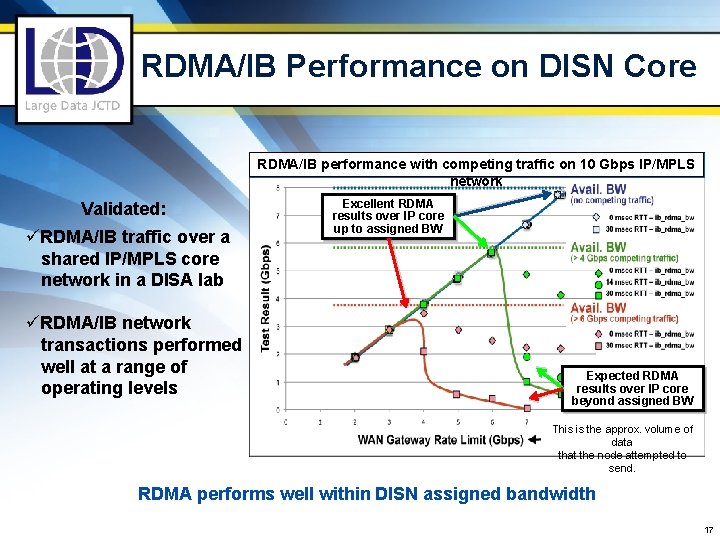

RDMA/IB Performance on DISN Core
RDMA/IB performance with competing traffic on 10 Gbps IP/MPLS network
Validated:
✓ RDMA/IB traffic over a shared IP/MPLS core network in a DISA lab
✓ RDMA/IB network transactions performed well at a range of operating levels
Chart annotations:
• Excellent RDMA results over IP core up to assigned BW
• Expected RDMA results over IP core beyond assigned BW
• This is the approx. volume of data that the node attempted to send.
RDMA performs well within DISN assigned bandwidth

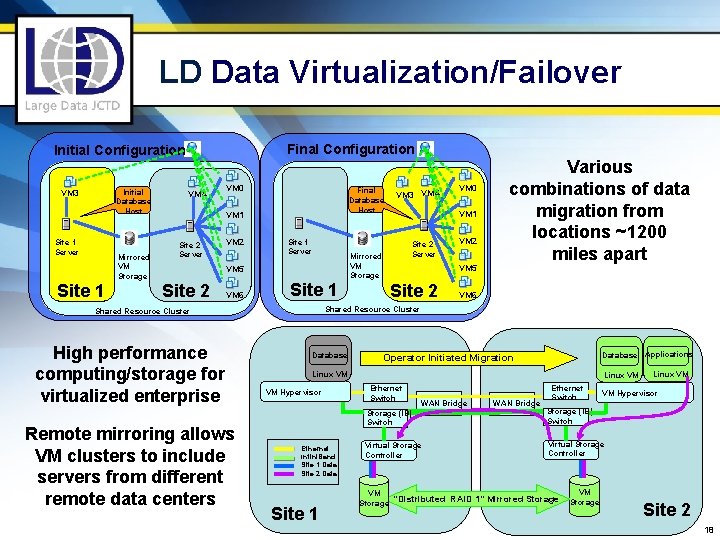

LD Data Virtualization/Failover
• Remote mirroring allows VM clusters to include servers from different remote data centers
• Shared Resource Cluster: High performance computing/storage for virtualized enterprise
• Various combinations of data migration from locations ~1200 miles apart
• Database Applications: Operator Initiated Migration
• “Distributed RAID 1” Mirrored Storage
Diagram labels: Final Configuration; Initial Database Host; Final Database Host; Site 1 Server; Site 2 Server; VM 0 through VM 6; Mirrored VM Storage (Site 1, Site 2); Site 1 Data; Site 2 Data; Linux VM; VM Hypervisor; Ethernet; InfiniBand; Ethernet Switch; WAN Bridge; Storage (IB) Switch; Virtual Storage Controller; VM Storage

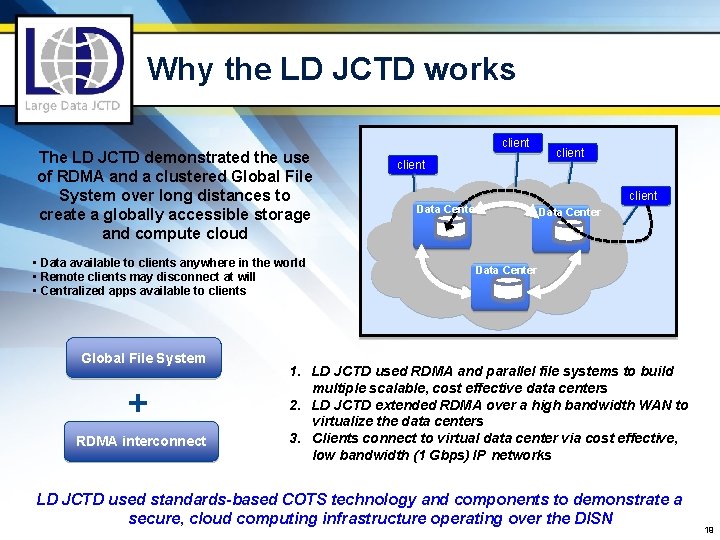

Why the LD JCTD works
The LD JCTD demonstrated the use of RDMA and a clustered Global File System over long distances to create a globally accessible storage and compute cloud
• Data available to clients anywhere in the world
• Remote clients may disconnect at will
• Centralized apps available to clients
1. LD JCTD used RDMA and parallel file systems to build multiple scalable, cost effective data centers
2. LD JCTD extended RDMA over a high bandwidth WAN to virtualize the data centers
3. Clients connect to virtual data center via cost effective, low bandwidth (1 Gbps) IP networks
LD JCTD used standards-based COTS technology and components to demonstrate a secure, cloud computing infrastructure operating over the DISN
Diagram labels: Global File System + RDMA interconnect; client; Data Center

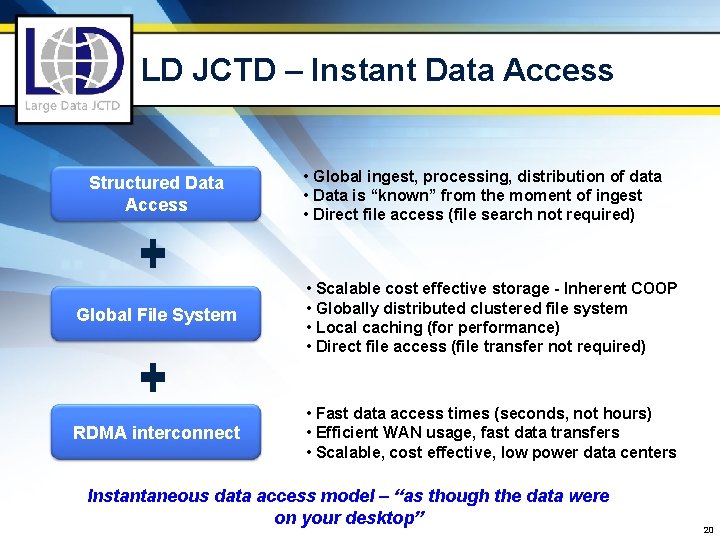

LD JCTD – Instant Data Access
Structured Data Access
• Global ingest, processing, distribution of data
• Data is “known” from the moment of ingest
• Direct file access (file search not required)
Global File System
• Scalable cost effective storage - Inherent COOP
• Globally distributed clustered file system
• Local caching (for performance)
• Direct file access (file transfer not required)
RDMA interconnect
• Fast data access times (seconds, not hours)
• Efficient WAN usage, fast data transfers
• Scalable, cost effective, low power data centers
Instantaneous data access model – “as though the data were on your desktop”

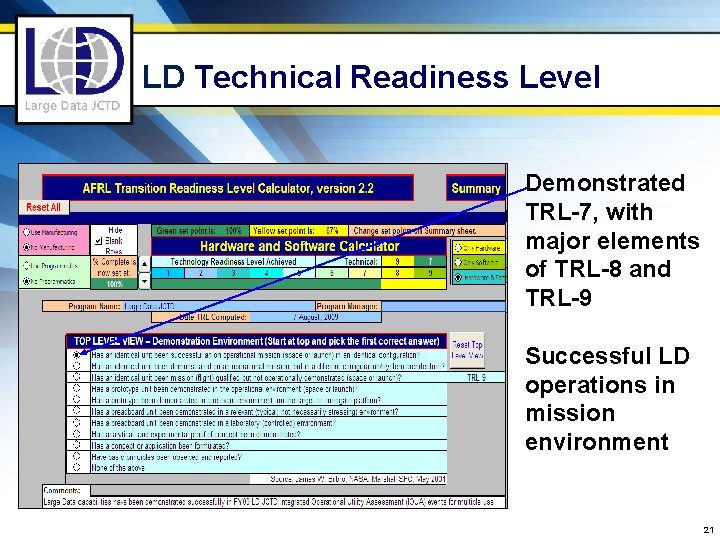

LD Technical Readiness Level
Demonstrated TRL-7, with major elements of TRL-8 and TRL-9
Successful LD operations in mission environment

LD Transition
▪ DoD and IC programs of record are adopting LD benefits and capabilities in FY10/11 for:
▫ Rapid, global data access and federated exploitation for very large files such as imagery and wide area persistent surveillance
▫ Operationally responsive data dissemination/transfer
▫ Data federation & synchronization for planning
▫ Support to global intelligence operations
▫ Enhancing net-centric data delivery to warfighters

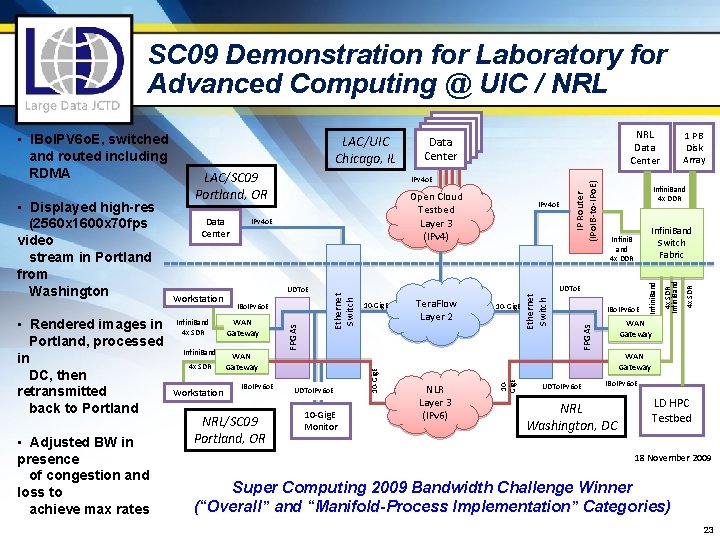

SC09 Demonstration for Laboratory for Advanced Computing @ UIC / NRL
18 November 2009
• Adjusted BW in presence of congestion and loss to achieve max rates
• Rendered images in Portland, processed in DC, then retransmitted back to Portland
• Displayed high-res (2560 x 1600 x 70 fps) video stream in Portland from Washington
• IBoIPv6oE, switched and routed including RDMA
Sites: NRL/SC09 Portland, OR; LAC/SC09 Portland, OR; LAC/UIC Chicago, IL; NRL Washington, DC (LD HPC Testbed)
Diagram labels: InfiniBand 4x SDR; InfiniBand 4x DDR; InfiniBand Switch Fabric; Workstation; WAN Gateway; FPGAs; IBoIPv6oE; UDToIPv6oE; UDToE; IPv4oE; 10-GigE; Monitor; TeraFlow; Layer 2; NLR; Layer 3 (IPv6); Layer 3 (IPv4); IP Router (IPoIB-to-IPoE); Ethernet Switch; 1 PB Disk Array; Open Cloud Testbed; Data Center; NRL Data Center
Super Computing 2009 Bandwidth Challenge Winner (“Overall” and “Manifold-Process Implementation” Categories)

Dr. Hank Dardy, 1943-2010

Questions?

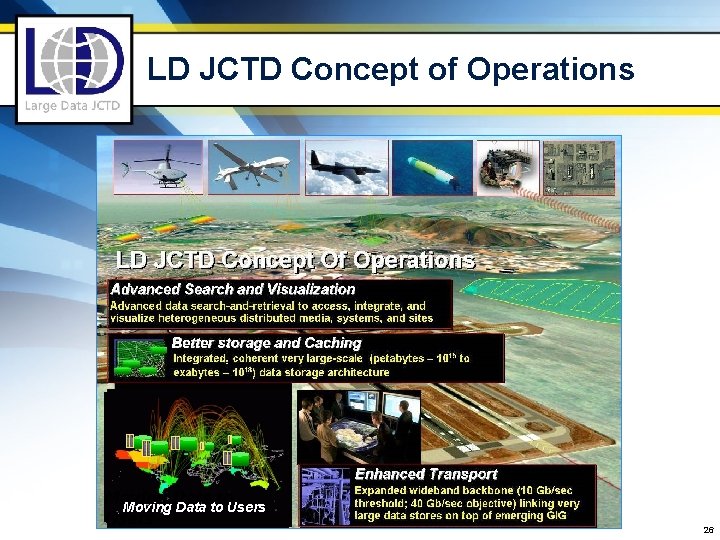

LD JCTD Concept of Operations
Moving Data to Users

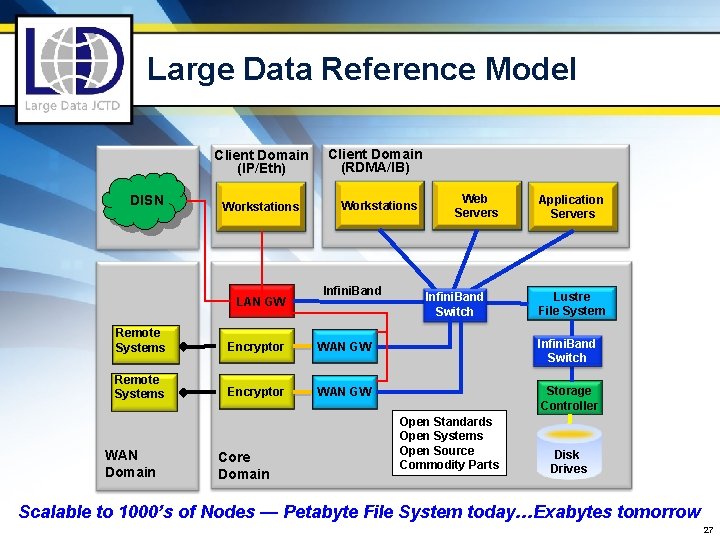

Large Data Reference Model
Domains: Client Domain (IP/Eth); Client Domain (RDMA/IB); WAN Domain; Core Domain
Diagram labels: DISN; Workstations; LAN GW; InfiniBand; InfiniBand Switch; Web Servers; Application Servers; Lustre File System; Remote Systems; Encryptor; WAN GW; Storage Controller; Disk Drives
• Open Standards
• Open Systems
• Open Source
• Commodity Parts
Scalable to 1000’s of Nodes: Petabyte File System today… Exabytes tomorrow

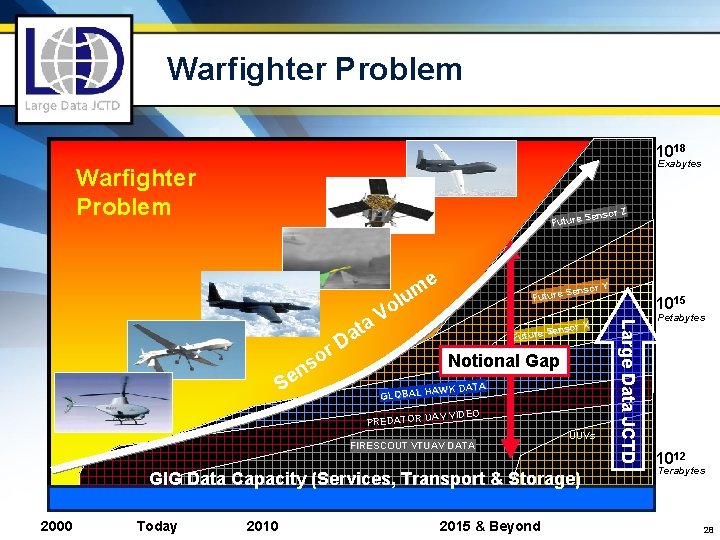

Warfighter Problem
Chart: sensor data volume versus GIG data capacity (services, transport & storage) over time
• Time axis: 2000, Today, 2010, 2015 & Beyond
• Volume axis: 10^12 (Terabytes), 10^15 (Petabytes), 10^18 (Exabytes)
• Data sources: Global Hawk data; Predator UAV video; Firescout VTUAV data; UUVs; Future Sensor X; Future Sensor Y; Future Sensor Z
• Annotations: Sensor Data Volume; Notional Gap; Large Data JCTD

Summary
▪ LD underpins net-centric warfighting by providing a data-centric DoD information enterprise
▫ LD seeded in key programs
▫ Next generation performance (scalable to exabytes) in smaller footprint at lower cost
▪ Working with Transition Partners to ensure integrated enterprise implementation

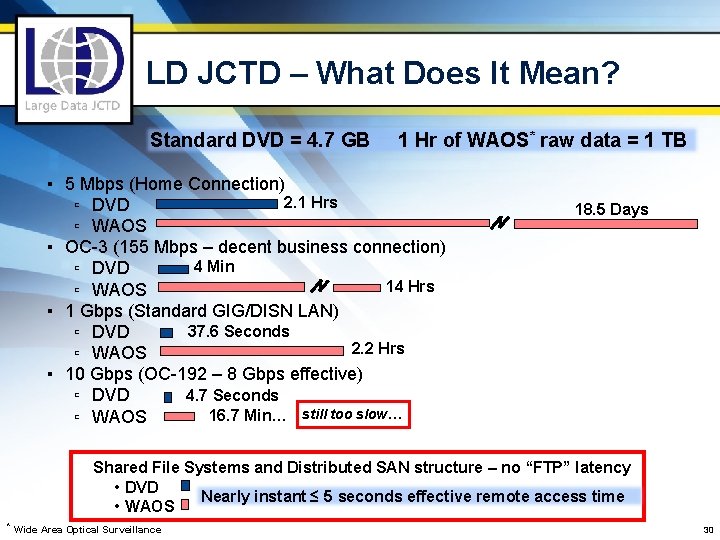

LD JCTD – What Does It Mean?
Standard DVD = 4.7 GB; 1 Hr of WAOS* raw data = 1 TB
▪ 5 Mbps (Home Connection)
▫ DVD: 2.1 Hrs
▫ WAOS: 18.5 Days
▪ OC-3 (155 Mbps – decent business connection)
▫ DVD: 4 Min
▫ WAOS: 14 Hrs
▪ 1 Gbps (Standard GIG/DISN LAN)
▫ DVD: 37.6 Seconds
▫ WAOS: 2.2 Hrs
▪ 10 Gbps (OC-192 – 8 Gbps effective)
▫ DVD: 4.7 Seconds
▫ WAOS: 16.7 Min… still too slow…
▪ Shared File Systems and Distributed SAN structure – no “FTP” latency
▫ DVD and WAOS: nearly instant, ≤ 5 seconds effective remote access time
* Wide Area Optical Surveillance
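The transfer times on this slide can be checked with the basic relation time = size × 8 / link rate. The Python sketch below is a rough check under stated assumptions: decimal units are assumed (1 GB = 10^9 bytes, 1 TB = 10^12 bytes), protocol overhead is ignored, and the 10 Gbps link is evaluated at the 8 Gbps effective rate given on the slide. All names in the code are illustrative.

```python
# Payload sizes from the slide, assuming decimal units (an assumption).
DVD_BYTES = 4.7e9          # standard DVD, 4.7 GB
WAOS_HOUR_BYTES = 1e12     # one hour of WAOS raw data, 1 TB

# Link rates in bits per second; OC-192 uses the slide's 8 Gbps effective rate.
LINKS_BPS = {
    "5 Mbps home connection": 5e6,
    "OC-3 (155 Mbps)": 155e6,
    "1 Gbps LAN": 1e9,
    "OC-192 (8 Gbps effective)": 8e9,
}


def transfer_seconds(size_bytes: float, rate_bps: float) -> float:
    """Ideal transfer time in seconds, ignoring latency and protocol overhead."""
    return size_bytes * 8 / rate_bps


def humanize(seconds: float) -> str:
    """Format a duration using the largest unit that keeps the value readable."""
    if seconds < 60:
        return f"{seconds:.1f} s"
    if seconds < 3600:
        return f"{seconds / 60:.1f} min"
    if seconds < 86400:
        return f"{seconds / 3600:.1f} h"
    return f"{seconds / 86400:.1f} days"


if __name__ == "__main__":
    for name, rate in LINKS_BPS.items():
        dvd = humanize(transfer_seconds(DVD_BYTES, rate))
        waos = humanize(transfer_seconds(WAOS_HOUR_BYTES, rate))
        print(f"{name:28s} DVD: {dvd:>10s}   WAOS hour: {waos:>10s}")
```

Under these assumptions the results line up with the slide's figures to within rounding, which suggests the slide uses the same idealized calculation.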

Large Data JCTD
Global Access, Global Visualization
OE: Fritz Schultz, 703.697.3443, Fritz.Schultz@osd.mil
OM: Randy Heth, 402.232.2122, hethr@stratcom.mil
TM: Jim Hofmann, 202.404.3132, jhofmann@cmf.nrl.navy.mil
XM: Mike O’Brien, 703.735.2721, Michael.A.Obrien@nga.mil
XM: Mike Laurine, 703.882.1358, Michael.Laurine@disa.mil
