
ITI-CERTH Augmented and Virtual Reality Laboratory (AVRLab)

The AVRLab of the Informatics and Telematics Institute conducts substantial research, both basic and industry-oriented, as well as technology transfer actions, in the area of virtual, augmented and mixed reality. This area includes modeling and simulation of complex virtual environments, applied research in haptic force feedback, 3D modeling and compression, deformable objects and cloth modeling, collaborative virtual environments, new sensor development for head/hands/body tracking, multimedia analysis/synthesis, gesture recognition, head motion modelling and tracking, and advanced applications of virtual/augmented/mixed reality in education and training, e-health, assistive technologies, culture, industry and e-business.

The ITI-CERTH AVRLab runs a fully operational and well-equipped Virtual Reality Laboratory that serves as a reference for augmented and virtual reality applications and demonstrations in Greece. It employs a highly qualified scientific team for the design, development and demonstration of advanced augmented and virtual reality applications and services. It participates in research networks with various institutes and industrial partners in Greece and Europe, and has established a spin-off company to commercially exploit the research results of the Laboratory. Some significant applied research fields in which ITI is active are:

• Haptics
• Computer Graphics
• 3D Semantics
• Mixed and Augmented Reality
• Virtual, Augmented and Mixed Reality Applications

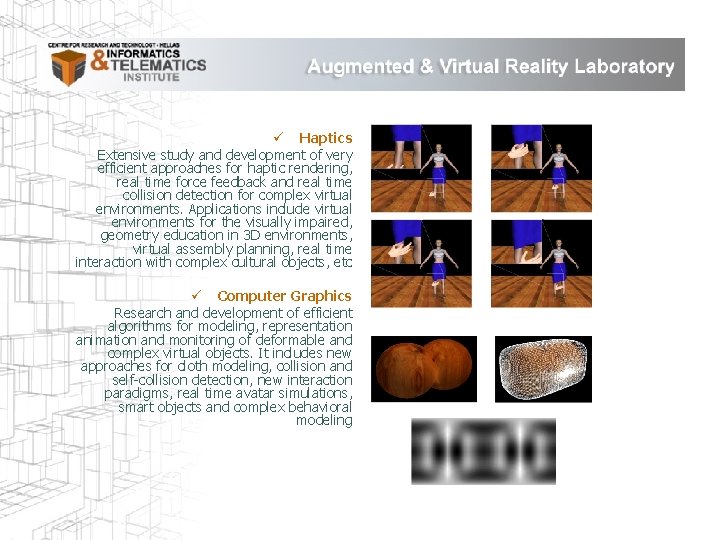

• Haptics
Extensive study and development of very efficient approaches for haptic rendering, real time force feedback and real time collision detection for complex virtual environments. Applications include virtual environments for the visually impaired, geometry education in 3D environments, virtual assembly planning, real time interaction with complex cultural objects, etc.

• Computer Graphics
Research and development of efficient algorithms for modeling, representation, animation and monitoring of deformable and complex virtual objects. It includes new approaches for cloth modeling, collision and self-collision detection, new interaction paradigms, real time avatar simulations, smart objects and complex behavioral modeling.

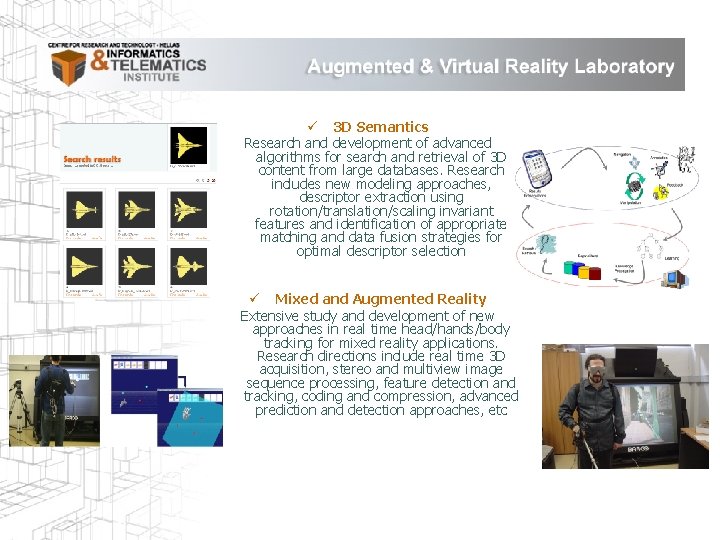

• 3D Semantics
Research and development of advanced algorithms for search and retrieval of 3D content from large databases. Research includes new modeling approaches, descriptor extraction using rotation/translation/scaling invariant features and identification of appropriate matching and data fusion strategies for optimal descriptor selection.

• Mixed and Augmented Reality
Extensive study and development of new approaches in real time head/hands/body tracking for mixed reality applications. Research directions include real time 3D acquisition, stereo and multiview image sequence processing, feature detection and tracking, coding and compression, advanced prediction and detection approaches, etc.
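As an illustration of what a rotation/translation/scaling invariant feature can look like, the sketch below computes one very simple descriptor for a 3D point set: the sorted distances of the points from their centroid, divided by the mean distance. This is not the AVRLab's descriptor; the function names and the sample shape are assumptions chosen only to show the invariance property.

```python
import math


def invariant_descriptor(points):
    """Sorted centroid distances, normalised by their mean.

    Subtracting the centroid removes translation, distances ignore rotation,
    and dividing by the mean distance removes uniform scale.
    """
    n = len(points)
    centroid = [sum(p[i] for p in points) / n for i in range(3)]
    dists = [math.dist(p, centroid) for p in points]
    mean = sum(dists) / n
    return sorted(d / mean for d in dists)


def transform(points, angle, scale, shift):
    """Rotate about the z axis, scale uniformly, then translate."""
    c, s = math.cos(angle), math.sin(angle)
    return [
        (scale * (c * x - s * y) + shift[0],
         scale * (s * x + c * y) + shift[1],
         scale * z + shift[2])
        for x, y, z in points
    ]


# Hypothetical sample shape and an arbitrary similarity transform of it.
shape = [(0, 0, 0), (1, 0, 0), (0, 2, 0), (0, 0, 3), (1, 1, 1)]
moved = transform(shape, angle=0.7, scale=2.5, shift=(4.0, -1.0, 0.5))

a = invariant_descriptor(shape)
b = invariant_descriptor(moved)
same = all(math.isclose(x, y, rel_tol=1e-9) for x, y in zip(a, b))
print("descriptors match:", same)
```

A descriptor this coarse would not discriminate well between real models; it only shows why matching can be done on features rather than on raw coordinates.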

• Virtual, Augmented and Mixed Reality Applications
Application-oriented research in areas such as education and training, e-health, assistive technologies, culture, industry, e-business, phobia treatment, etc. Includes various approaches utilizing and integrating appropriate virtual/augmented/mixed reality strategies for enhanced application realism.

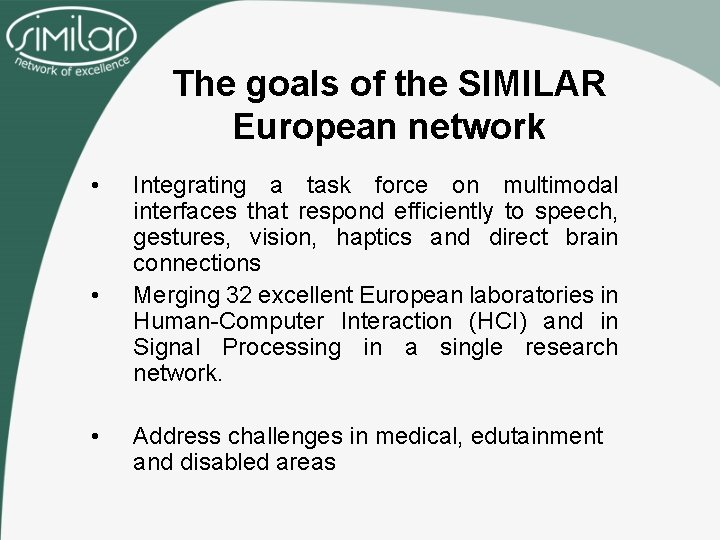

The goals of the SIMILAR European network

• Integrate a task force on multimodal interfaces that respond efficiently to speech, gestures, vision, haptics and direct brain connections
• Merge 32 excellent European laboratories in Human-Computer Interaction (HCI) and in Signal Processing into a single research network
• Address challenges in medical applications, edutainment and applications for disabled people

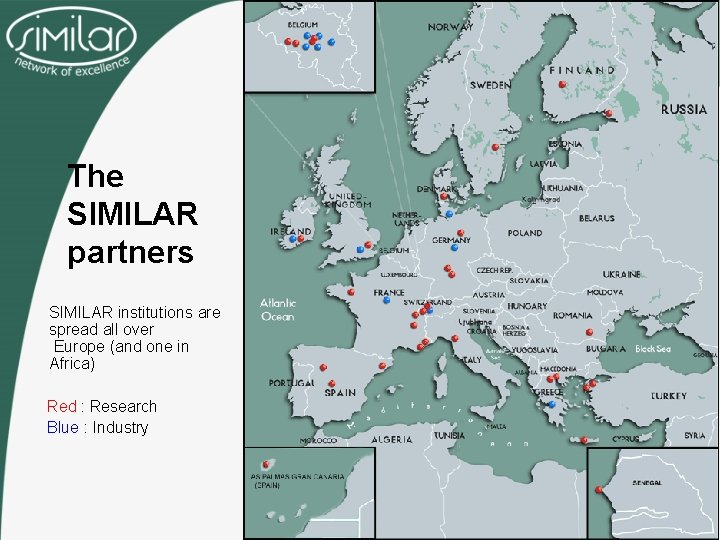

The SIMILAR partners

SIMILAR institutions are spread all over Europe (and one in Africa).
Map legend: red = research, blue = industry.

Secondary Objectives

– SIMILAR will develop a common theoretical framework for fusion and fission of multimodal information using Signal Processing tools constrained by Human-Computer Interaction rules.
– SIMILAR will develop a network of usability test facilities.
– SIMILAR will manage an International Journal, organize special sessions in conferences and summer schools, interact with industrial partners and promote new research activities.
– SIMILAR will address a series of grand challenges in edutainment, applications for disabled people and medical applications, and will develop new interfaces for environments where users cannot use their hands, such as surgical operating rooms or cars.

The 7 SIGs (Special Interest Groups)

– Information Fusion and Fission
– Multimodal Analysis and Synthesis
– Context Aware Adaptation
– Usability
– Medical Applications
– Edutainment Applications
– Disabled People Applications

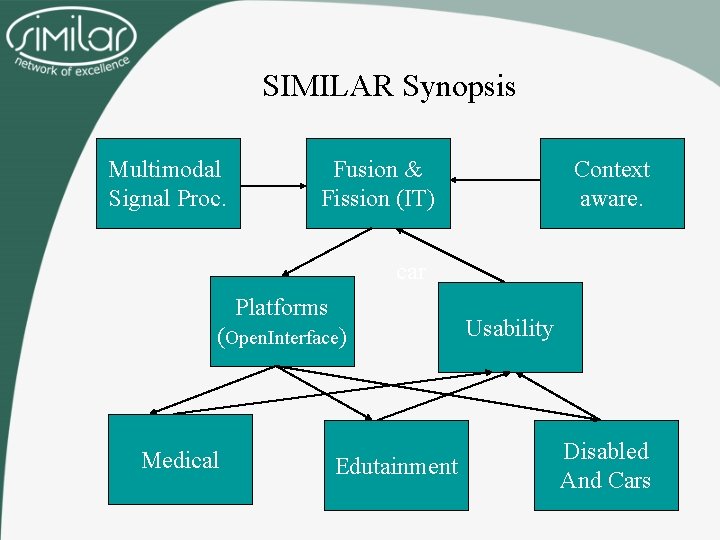

SIMILAR Synopsis

Diagram labels: Multimodal Signal Processing; Fusion & Fission (IT); Context aware; Platforms (OpenInterface); Usability; Medical; Edutainment; Disabled; Cars.

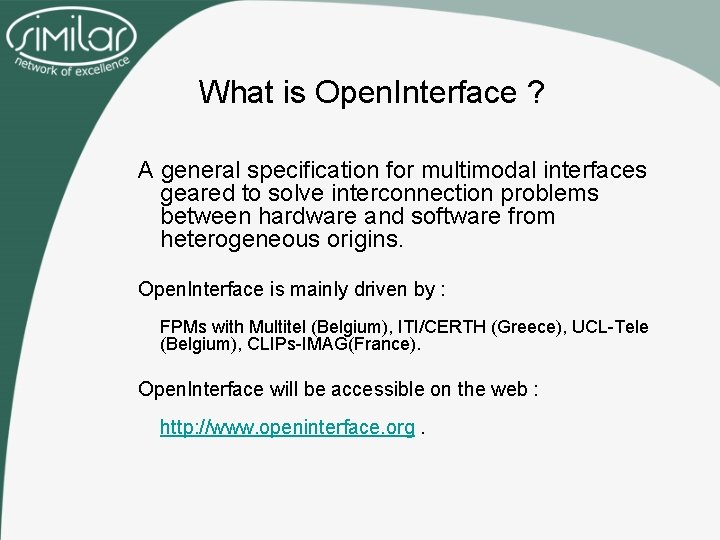

What is OpenInterface?

A general specification for multimodal interfaces, geared to solve interconnection problems between hardware and software from heterogeneous origins. OpenInterface is mainly driven by FPMs with Multitel (Belgium), ITI/CERTH (Greece), UCL-Tele (Belgium) and CLIPs-IMAG (France). OpenInterface will be accessible on the web: http://www.openinterface.org.

Interconnection problems (1)

Collaborative research between a large number of partners leads to interconnection problems…

Interconnection problems (2)

• John is working on a voice recognition algorithm and would like to test it in a real application…
• Brenda has a 3D visualisation system that would be interesting to drive with voice…

… but the programming interfaces are not the same!

Interconnection problems (3)

• Brenda’s 3D visualisation system looks very promising and William would like to use it to inspect his 3D segmented medical structures…

… but the programming interfaces are not the same!

Interconnection problems (4)

• Solution: SIMILAR Platforms (OpenInterface)

Creation of a common language, using XML, that will be understood by anyone and used to describe modules’ interfaces.
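To make the idea of an XML interface description concrete, here is a minimal sketch in Python. The element and attribute names (`module`, `function`, `input`, `output`, `type`) are invented for illustration and are not the actual SIMILARPlatforms schema; the two modules loosely mirror John's voice recognizer and Brenda's 3D viewer from the earlier slides.

```python
import xml.etree.ElementTree as ET

# Hypothetical descriptions; the real SIMILARPlatforms language may differ.
RECOGNIZER_XML = """
<module name="VoiceRecognizer" type="speech">
  <function name="recognize">
    <input name="audio" type="pcm16"/>
    <output name="command" type="string"/>
  </function>
</module>
"""

VIEWER_XML = """
<module name="Viewer3D" type="visualization">
  <function name="execute">
    <input name="command" type="string"/>
    <output name="frame" type="image"/>
  </function>
</module>
"""


def parse_module(xml_text):
    """Turn an XML description into a plain dictionary."""
    root = ET.fromstring(xml_text.strip())
    functions = {}
    for fn in root.findall("function"):
        functions[fn.get("name")] = {
            "inputs": [(p.get("name"), p.get("type")) for p in fn.findall("input")],
            "outputs": [(p.get("name"), p.get("type")) for p in fn.findall("output")],
        }
    return {"name": root.get("name"), "type": root.get("type"), "functions": functions}


def can_connect(producer, prod_fn, consumer, cons_fn):
    """True if the producer's output types equal the consumer's input types."""
    out_types = [t for _, t in producer["functions"][prod_fn]["outputs"]]
    in_types = [t for _, t in consumer["functions"][cons_fn]["inputs"]]
    return out_types == in_types


recognizer = parse_module(RECOGNIZER_XML)
viewer = parse_module(VIEWER_XML)
print(can_connect(recognizer, "recognize", viewer, "execute"))
```

Because the check works only on the descriptions, neither module needs to know the other's programming interface in advance.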

Interconnection problems (5)

• Once a common language has been created, everybody will be able to drive a partner’s plugin easily, and in a way that can be re-used to drive other partners’ modules of the same type.

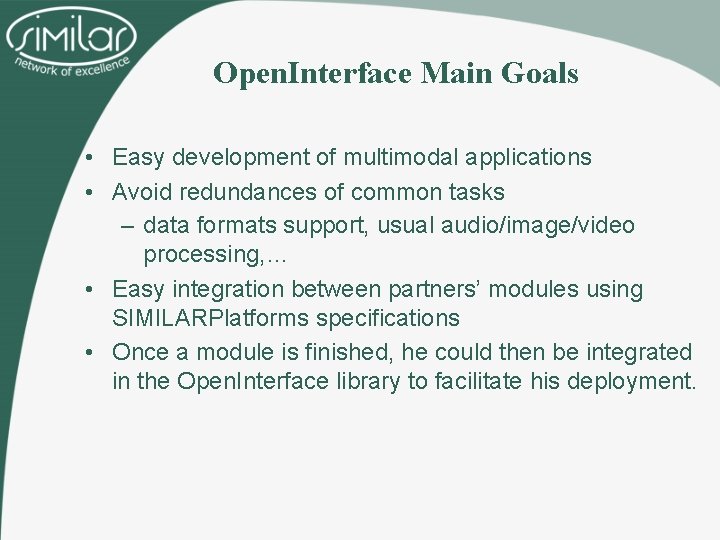

OpenInterface Main Goals

• Easy development of multimodal applications
• Avoid redundant implementation of common tasks
  – data format support, usual audio/image/video processing, …
• Easy integration between partners’ modules using the SIMILARPlatforms specifications
• Once a module is finished, it can be integrated into the OpenInterface library to facilitate its deployment.

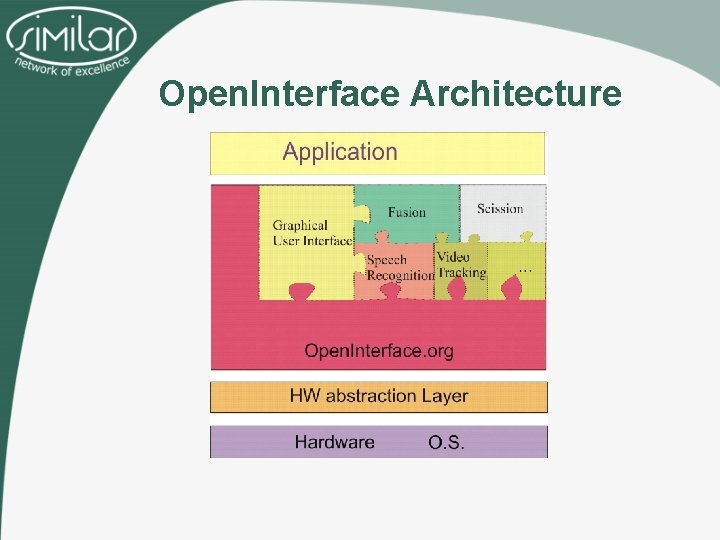

OpenInterface Architecture

OpenInterface: Component based architecture (1)

• Each functionality is a component
• Components are grouped into plugins (shared libraries)
• The content of each plugin is described using the SimilarPlatforms language
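The component/plugin grouping above can be sketched roughly as follows. This is an assumption-laden toy in Python, not the OpenInterface implementation: real plugins are shared libraries, whereas here a plugin is just an object holding named functions, and the `Plugin` and `Kernel` classes and their methods are hypothetical names.

```python
class Plugin:
    """A named group of components (stands in for a shared library)."""

    def __init__(self, name):
        self.name = name
        self.components = {}

    def component(self, name):
        """Decorator that registers a function as a component of this plugin."""
        def register(func):
            self.components[name] = func
            return func
        return register


class Kernel:
    """Keeps track of loaded plugins and dispatches calls to their components."""

    def __init__(self):
        self.plugins = {}

    def load(self, plugin):
        self.plugins[plugin.name] = plugin

    def call(self, plugin_name, component_name, *args):
        return self.plugins[plugin_name].components[component_name](*args)


# Hypothetical image plugin with two components.
image = Plugin("image")


@image.component("invert")
def invert(pixels):
    return [255 - p for p in pixels]


@image.component("threshold")
def threshold(pixels, level=128):
    return [255 if p >= level else 0 for p in pixels]


kernel = Kernel()
kernel.load(image)
result = kernel.call("image", "invert", [0, 100, 255])
print(result)
```

Keeping each functionality behind a name lets an application be assembled from a description of which components to call, rather than from hard-coded imports.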

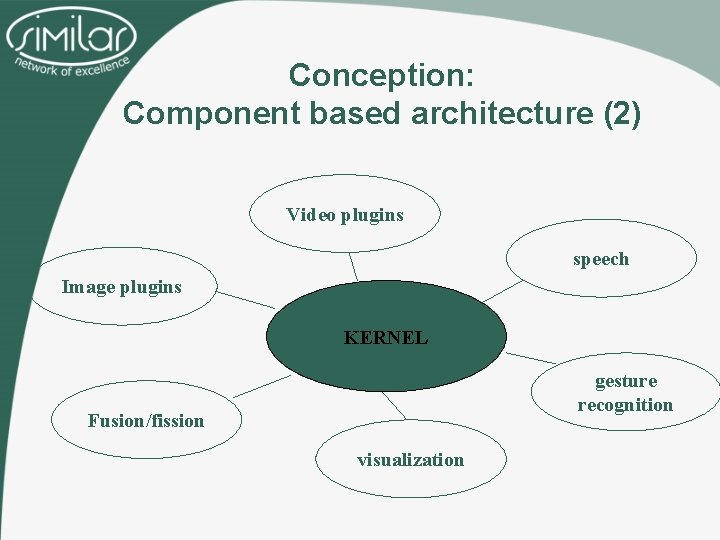

Conception: Component based architecture (2)

Diagram: a central KERNEL connected to video plugins, image plugins, speech, gesture recognition, fusion/fission and visualization.

OpenInterface Editor

• The OpenInterface Editor will
  – Facilitate the generation of new multimodal applications by simple drag and drop of partners’ modules in an intuitive graphical user interface,
  – Use the OpenInterface library and the SIMILARPlatforms language to automatically add new modules,
  – Allow rapid creation of prototypes to test new multimodal interfaces.

SIMILAR eNTERFACE workshops

• SIMILAR organizes annual workshops on multimodal signal processing and interaction
  – Graduate, master's, Ph.D. and postdoctoral students as well as senior researchers meet for four weeks, work together in common or complementary research areas and develop novel applications!
• eNTERFACE 2005 in Mons, Belgium was a complete success
• eNTERFACE 2006 in Dubrovnik was also successful
• Hopefully eNTERFACE projects will continue far beyond SIMILAR

eNTERFACE 2005 projects

• Project 1: Combined Gesture-Speech Analysis and Synthesis
• Project 2: Multimodal Caricatural Mirror
• Project 3: Biologically-driven musical instrument
• Project 4: Multimodal Focus Attention Detection in an Augmented Driver Simulator
• Project 5: Multilingual Multimodal Biometric Identification/Verification
• Project 6: Speech Conductor
• Project 7: A Multimodal (Gesture+Speech) Interface for 3D Model Search and Retrieval Integrated in a Virtual Assembly Application

eNTERFACE 2006 projects

• Project 1: An Agent Based Multicultural User Interface in a Customer Service Application
• Project 2: Multimodal tools and interfaces for the intercommunication between visually impaired and “deaf and mute” people
• Project 3: Sign Language Tutoring Tool
• Project 4: Multimodal Character Morphing
• Project 5: Introducing Network-Awareness for Networked Multimedia and Multi-modal Applications
• Project 6: An instrument of sound and visual creation driven by biological signals
• Project 7: Emotion Detection in the Loop from Brain Signals and Facial Images
• Project 8: Realtime and Accurate Control of Expression in Singing Synthesis
• Project 9: Multimodal Driving Simulator

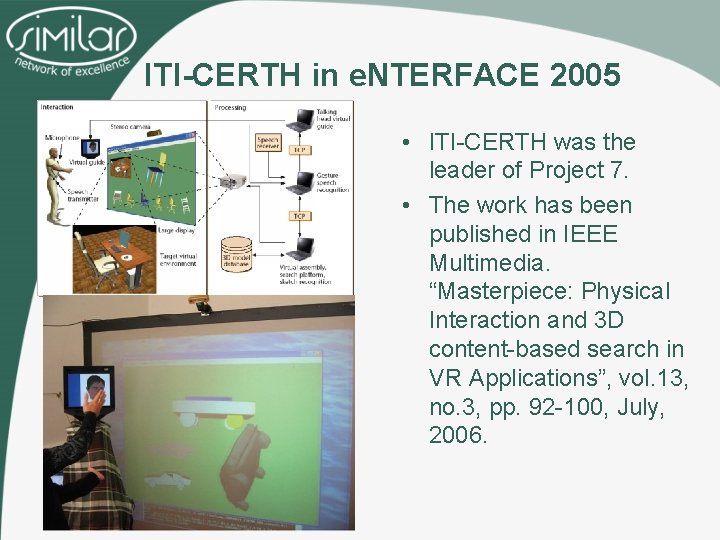

ITI-CERTH in eNTERFACE 2005

• ITI-CERTH was the leader of Project 7.
• The work has been published in IEEE Multimedia: “Masterpiece: Physical Interaction and 3D content-based search in VR Applications”, vol. 13, no. 3, pp. 92-100, July 2006.

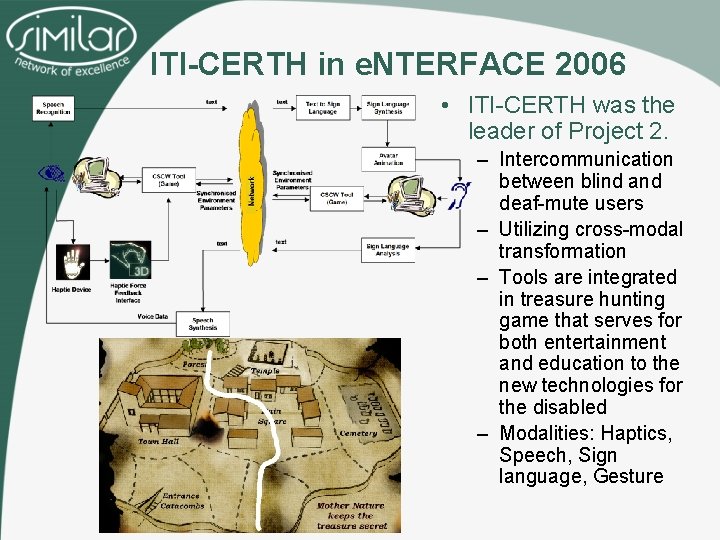

ITI-CERTH in eNTERFACE 2006

• ITI-CERTH was the leader of Project 2.
  – Intercommunication between blind and deaf-mute users
  – Utilizing cross-modal transformation
  – Tools are integrated in a treasure hunting game that serves both as entertainment and as an introduction to the new technologies for the disabled
  – Modalities: Haptics, Speech, Sign language, Gesture

THANK YOU!

INFORMATICS & TELEMATICS INSTITUTE
1st km Thermi-Panorama Road
PO BOX 361, 57001 THERMI
THESSALONIKI, GREECE
TEL: +30 2310 464160
FAX: +30 2310 464164
http://www.iti.gr

Konstantinos Moustakas
Email: moustak@iti.gr
