Iterative Soft-Decision Decoding of Algebraic-Geometric Codes

Li Chen, Associate Professor

School of Information Science and Technology, Sun Yat-sen University, Guangzhou, China

Email: chenli55@mail.sysu.edu.cn; website: sist.sysu.edu.cn/~chenli

Institute of Network Coding and Department of Information Engineering, the Chinese University of Hong Kong, 1 August 2012

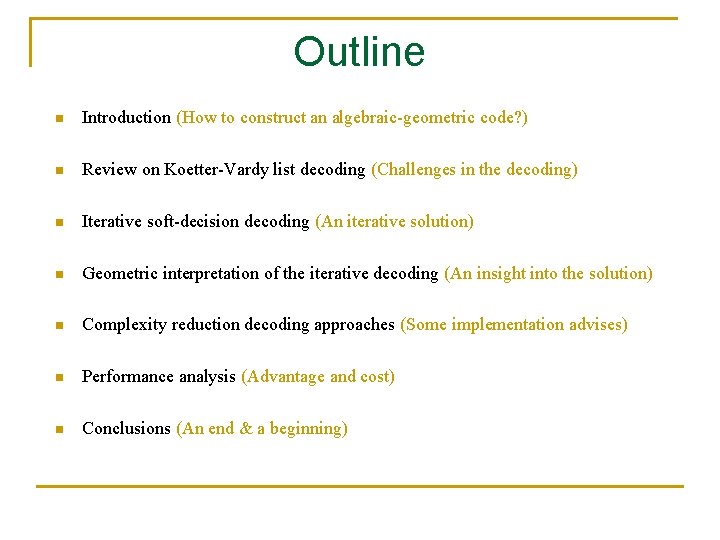

Outline

- Introduction (How to construct an algebraic-geometric code?)
- Review on Koetter-Vardy list decoding (Challenges in the decoding)
- Iterative soft-decision decoding (An iterative solution)
- Geometric interpretation of the iterative decoding (An insight into the solution)
- Complexity reduction decoding approaches (Some implementation advice)
- Performance analysis (Advantage and cost)
- Conclusions (An end and a beginning)

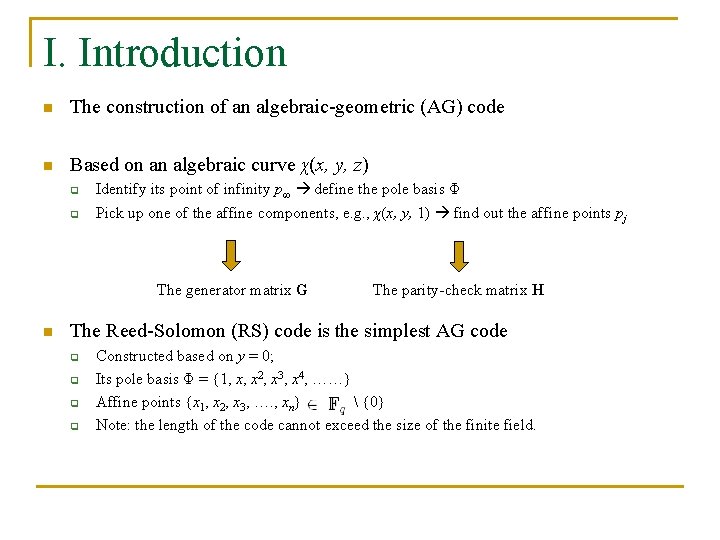

I. Introduction

- The construction of an algebraic-geometric (AG) code
  - Based on an algebraic curve χ(x, y, z)
  - Identify its point of infinity p∞ → define the pole basis Φ
  - Pick one of the affine components, e.g., χ(x, y, 1) → find the affine points p_j
  - The generator matrix G → the parity-check matrix H
- The Reed-Solomon (RS) code is the simplest AG code
  - Constructed based on y = 0
  - Its pole basis Φ = {1, x, x^2, x^3, x^4, …}
  - Affine points {x_1, x_2, x_3, …, x_n} ⊆ F_q \ {0}
- Note: the length of the code cannot exceed the size of the finite field.

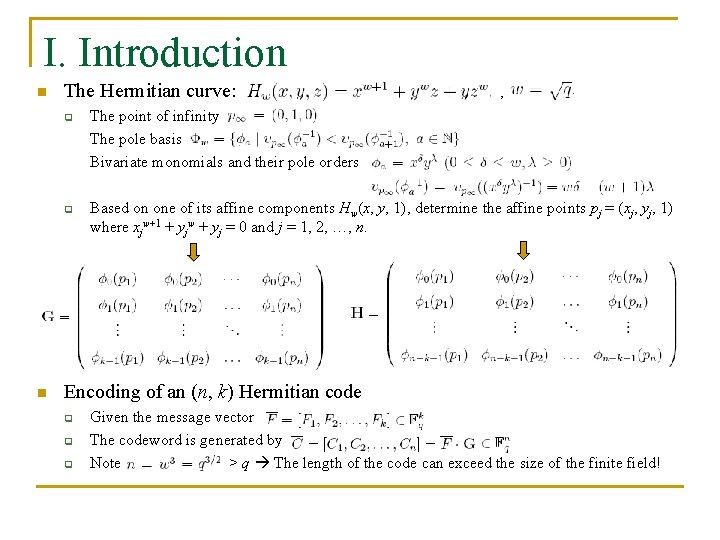

- The Hermitian curve over F_q with q = w^2: $H_w(x, y, z) = x^{w+1} + y^w z + y z^w$
  - The point of infinity p∞ = (0, 1, 0)
  - The pole basis: bivariate monomials in x and y, ordered by their pole orders
  - Based on one of its affine components H_w(x, y, 1), determine the affine points p_j = (x_j, y_j, 1), where x_j^{w+1} + y_j^w + y_j = 0 and j = 1, 2, …, n
- Encoding of an (n, k) Hermitian code
  - Given the message vector, the codeword is generated by evaluating the message polynomial, built on the pole basis, at the n affine points
- Note: the length of the code can exceed the size of the finite field!

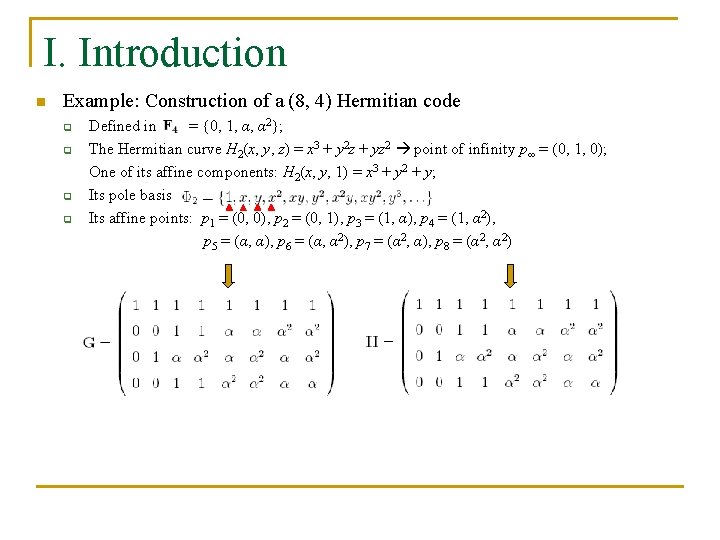

- Example: construction of an (8, 4) Hermitian code
  - Defined in F_4 = {0, 1, α, α^2}
  - The Hermitian curve H_2(x, y, z) = x^3 + y^2 z + y z^2, with point of infinity p∞ = (0, 1, 0)
  - One of its affine components: H_2(x, y, 1) = x^3 + y^2 + y
  - Its pole basis: the monomials in x and y, ordered by pole order
  - Its affine points: p_1 = (0, 0), p_2 = (0, 1), p_3 = (1, α), p_4 = (1, α^2), p_5 = (α, α), p_6 = (α, α^2), p_7 = (α^2, α), p_8 = (α^2, α^2)
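The eight affine points listed above can be reproduced by brute force. The sketch below is only an illustration: the integer encoding of F_4 (2 for α, 3 for α^2) and the helper names are assumptions, not part of the slides. It uses α^2 = α + 1, which follows from the primitive polynomial 1 + x + x^2.

```python
# Hypothetical encoding of F_4: 0, 1, 2 = alpha, 3 = alpha^2 = alpha + 1.
EXP = [1, 2, 3]            # alpha^0, alpha^1, alpha^2
LOG = {1: 0, 2: 1, 3: 2}   # discrete logarithm of the nonzero elements


def gf4_add(a, b):
    """Addition in F_4 is bitwise XOR of the polynomial coefficients."""
    return a ^ b


def gf4_mul(a, b):
    """Multiplication in F_4 through log/antilog tables."""
    if a == 0 or b == 0:
        return 0
    return EXP[(LOG[a] + LOG[b]) % 3]


def gf4_pow(a, e):
    """Raise a to the non-negative integer power e by repeated multiplication."""
    result = 1
    for _ in range(e):
        result = gf4_mul(result, a)
    return result


def hermitian_affine_points():
    """All (x, y) in F_4 x F_4 with x^3 + y^2 + y = 0."""
    points = []
    for x in range(4):
        for y in range(4):
            value = gf4_add(gf4_add(gf4_pow(x, 3), gf4_pow(y, 2)), y)
            if value == 0:
                points.append((x, y))
    return points


points = hermitian_affine_points()
print(len(points), points)
```

With this encoding the points come out in the same order as p_1, …, p_8 on the slide.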

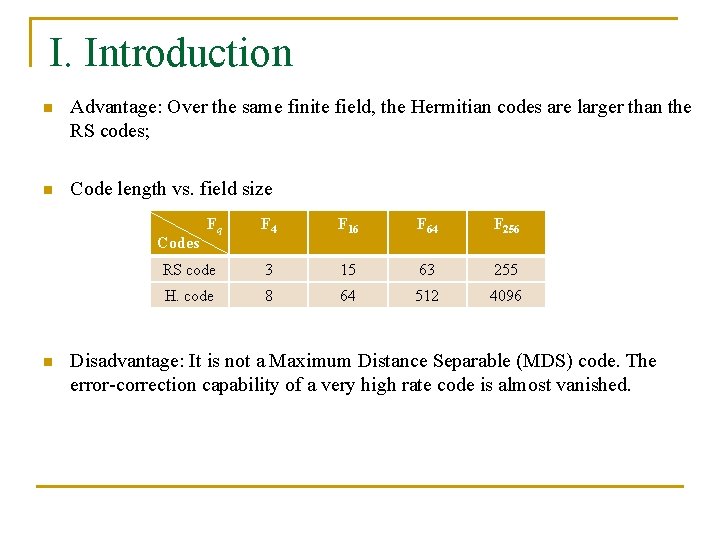

- Advantage: over the same finite field, Hermitian codes are longer than RS codes.
- Code length vs. field size:

| Code | F_4 | F_16 | F_64 | F_256 |
|---|---|---|---|---|
| RS code | 3 | 15 | 63 | 255 |
| Hermitian code | 8 | 64 | 512 | 4096 |

- Disadvantage: a Hermitian code is not a Maximum Distance Separable (MDS) code. The error-correction capability of a very high rate code almost vanishes.
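The lengths in this comparison follow a simple pattern: q − 1 for the RS code and w^3 for the Hermitian code over F_q with q = w^2. The short sketch below regenerates the numbers under that reading; the function name is hypothetical.

```python
from math import isqrt


def code_lengths(q):
    """Return (RS length, Hermitian length) over F_q, assuming q = w^2."""
    w = isqrt(q)
    if w * w != q:
        raise ValueError("a Hermitian curve needs a field size q = w^2")
    return q - 1, w ** 3


for q in (4, 16, 64, 256):
    rs_len, herm_len = code_lengths(q)
    print(f"F_{q}: RS {rs_len}, Hermitian {herm_len}")
```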

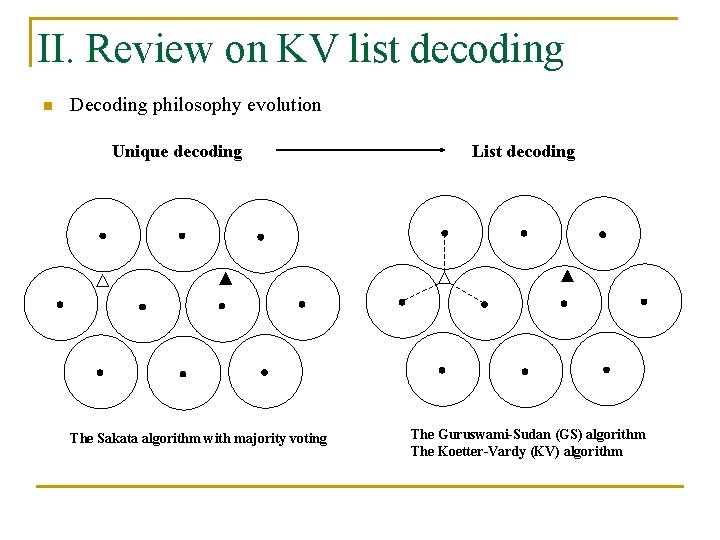

II. Review on KV list decoding

- Decoding philosophy evolution
  - Unique decoding: the Sakata algorithm with majority voting
  - List decoding: the Guruswami-Sudan (GS) algorithm and the Koetter-Vardy (KV) algorithm

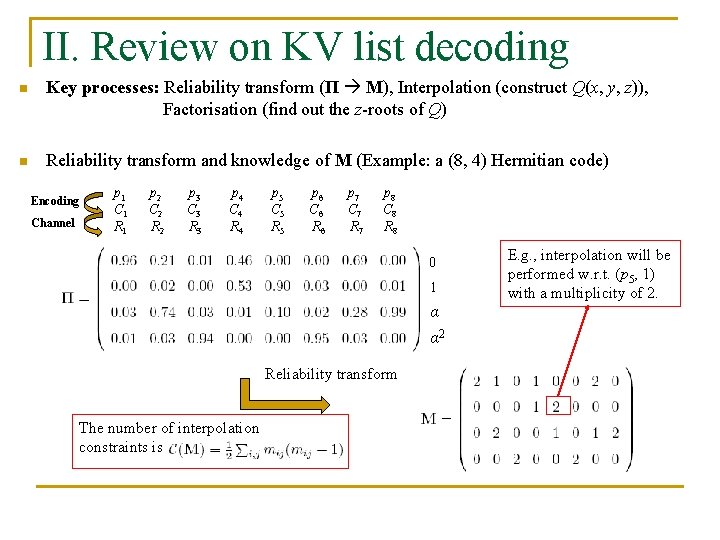

- Key processes: reliability transform (Π → M), interpolation (construct Q(x, y, z)), factorisation (find the z-roots of Q)
- Reliability transform and knowledge of M (example: an (8, 4) Hermitian code)
  - Encoding → channel: each affine point p_j carries a coded symbol C_j, which is received as R_j (j = 1, 2, …, 8)
  - The reliability matrix Π has one row per field element (0, 1, α, α^2) and one column per received symbol; the reliability transform maps Π to the multiplicity matrix M with the same layout
  - The number of interpolation constraints is $C(M) = \frac{1}{2} \sum_{i,j} m_{ij}(m_{ij} + 1)$
  - E.g., interpolation will be performed w.r.t. (p_5, 1) with a multiplicity of 2.
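To make the cost of a multiplicity matrix concrete, the sketch below counts interpolation constraints, using the standard fact that an entry with multiplicity m contributes m(m + 1)/2 constraints. The Π → M mapping shown (scale and round down) is a simplification chosen for illustration, not the KV multiplicity assignment itself, and the matrix values are made up.

```python
import math


def proportional_multiplicities(Pi, lam):
    """Simplified reliability transform: m_ij = floor(lam * pi_ij).

    This is an illustrative stand-in, not the KV greedy assignment.
    """
    return [[math.floor(lam * p) for p in row] for row in Pi]


def interpolation_cost(M):
    """Number of interpolation constraints: sum of m(m + 1) / 2 over all entries."""
    return sum(m * (m + 1) // 2 for row in M for m in row)


# Made-up 2 x 3 reliability matrix (each column sums to 1).
Pi = [[0.9, 0.1, 0.5],
      [0.1, 0.9, 0.5]]
M = proportional_multiplicities(Pi, 2.5)
cost = interpolation_cost(M)
print(M, cost)
```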

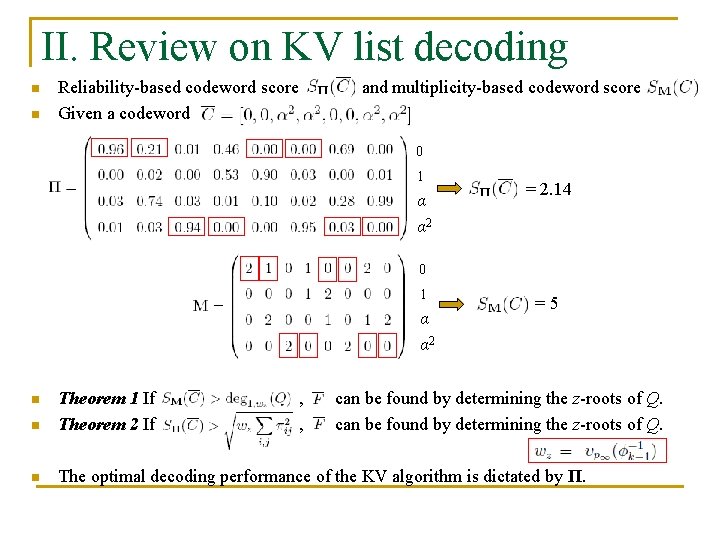

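- Reliability-based codeword score and multiplicity-based codeword score
  - Given a codeword, its reliability-based score sums the entries of Π selected by the codeword symbols (2.14 in the example), and its multiplicity-based score sums the corresponding entries of M (5 in the example)
- Theorem 1: a sufficient condition on the reliability-based codeword score for successful KV decoding
- Theorem 2: a sufficient condition on the multiplicity-based codeword score for successful KV decoding
- In either case, the message can be found by determining the z-roots of Q.
- The optimal decoding performance of the KV algorithm is dictated by Π.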
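Both codeword scores are computed the same way: for each position, pick the matrix entry in the row of the codeword symbol and add the entries up. The sketch below assumes rows are indexed by field element and columns by position; the matrices and the codeword are made-up values, not those of the slide.

```python
def codeword_score(matrix, codeword):
    """Sum of matrix[symbol][position] over the codeword positions.

    Works for the reliability matrix Pi and for the multiplicity matrix M.
    """
    return sum(matrix[symbol][j] for j, symbol in enumerate(codeword))


# Made-up example with 4 field symbols (rows) and 3 positions (columns).
Pi = [[0.7, 0.1, 0.2],
      [0.1, 0.6, 0.3],
      [0.1, 0.2, 0.4],
      [0.1, 0.1, 0.1]]
M = [[2, 0, 0],
     [0, 1, 0],
     [0, 0, 1],
     [0, 0, 0]]
codeword = [0, 1, 2]   # row index of the symbol sent at each position

print(codeword_score(Pi, codeword), codeword_score(M, codeword))
```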

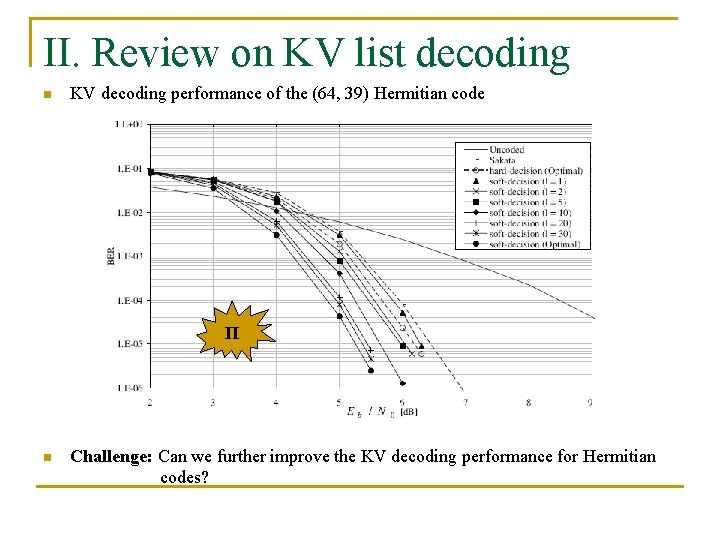

- KV decoding performance of the (64, 39) Hermitian code
- Challenge: can we further improve the KV decoding performance for Hermitian codes?

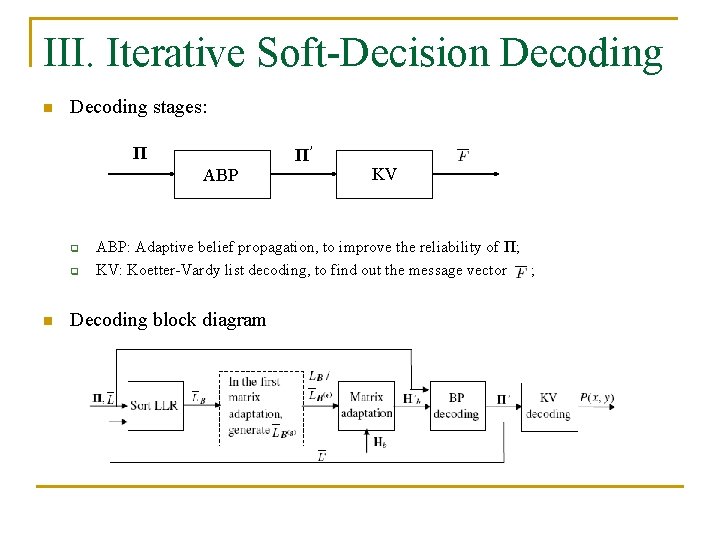

III. Iterative Soft-Decision Decoding

- Decoding stages: Π → ABP → Π’ → KV
  - ABP: adaptive belief propagation, to improve the reliability of Π
  - KV: Koetter-Vardy list decoding, to find the message vector
- Decoding block diagram

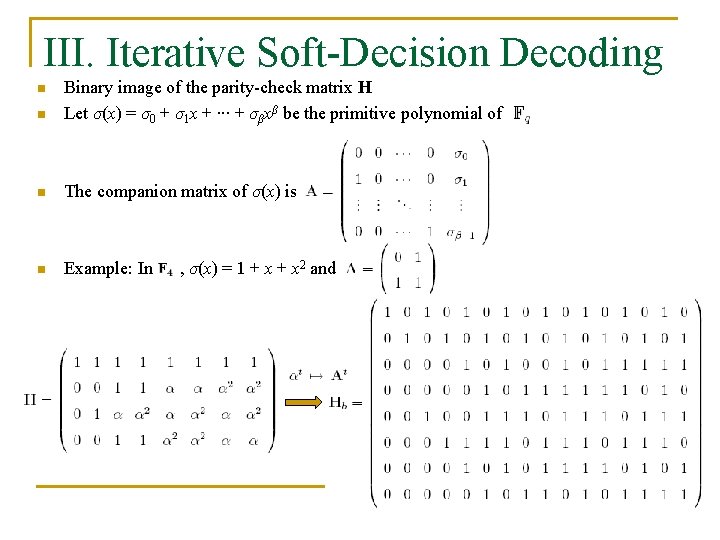

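- Binary image of the parity-check matrix H
  - Let σ(x) = σ_0 + σ_1 x + ∙∙∙ + σ_β x^β be the primitive polynomial of F_{2^β}
  - The companion matrix of σ(x) is a β × β binary matrix; replacing each entry α^i of H by the i-th power of the companion matrix (and each 0 by the all-zero block) gives the binary image H_b
  - Example: in F_4, σ(x) = 1 + x + x^2 and the companion matrix is $\begin{bmatrix} 0 & 1 \\ 1 & 1 \end{bmatrix}$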
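A minimal sketch of the binary image over F_4 follows. It assumes the usual construction in which each nonzero entry α^i is replaced by the i-th power of the 2 × 2 companion matrix of 1 + x + x^2 and each zero by an all-zero block. The integer encoding of F_4 and all function names are hypothetical.

```python
# Hypothetical encoding of F_4: 0, 1 = alpha^0, 2 = alpha^1, 3 = alpha^2.
COMPANION = [[0, 1],
             [1, 1]]   # companion matrix of 1 + x + x^2


def mat_mul_gf2(X, Y):
    """Multiply two square binary matrices over GF(2)."""
    n = len(X)
    return [[sum(X[i][k] & Y[k][j] for k in range(n)) % 2 for j in range(n)]
            for i in range(n)]


def block_for(symbol):
    """2 x 2 binary block that represents one F_4 symbol."""
    if symbol == 0:
        return [[0, 0], [0, 0]]
    block = [[1, 0], [0, 1]]          # alpha^0 maps to the identity
    for _ in range(symbol - 1):       # symbol s encodes alpha^(s - 1)
        block = mat_mul_gf2(block, COMPANION)
    return block


def binary_image(H):
    """Expand a matrix over F_4 into its binary image, block by block."""
    image = []
    for row in H:
        blocks = [block_for(s) for s in row]
        for r in range(2):
            image.append([bit for block in blocks for bit in block[r]])
    return image


print(binary_image([[1, 2], [0, 3]]))
```

A 4 × 8 parity-check matrix over F_4 expands to an 8 × 16 binary matrix this way, which matches the 16 bit indices used in the worked example of the talk.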

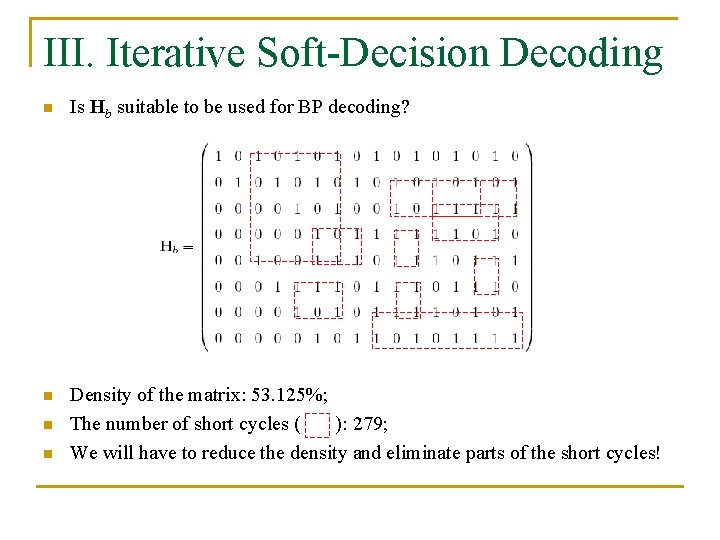

- Is H_b suitable to be used for BP decoding?
  - Density of the matrix: 53.125%
  - The number of short cycles: 279
- We will have to reduce the density and eliminate some of the short cycles!
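Both figures of merit can be computed directly from a binary matrix. The sketch below assumes that "short cycles" means length-4 cycles in the Tanner graph, which is an assumption, since the slide does not state the cycle length. Two rows that share t columns with ones contribute t(t − 1)/2 such cycles.

```python
from itertools import combinations


def density(H):
    """Fraction of ones in the binary matrix H."""
    return sum(map(sum, H)) / (len(H) * len(H[0]))


def count_4_cycles(H):
    """Number of length-4 cycles in the Tanner graph of H."""
    total = 0
    for row_a, row_b in combinations(H, 2):
        overlap = sum(a & b for a, b in zip(row_a, row_b))
        total += overlap * (overlap - 1) // 2
    return total


# Small made-up matrix: the two rows share two columns, hence one 4-cycle.
H = [[1, 1, 0],
     [1, 1, 1]]
print(density(H), count_4_cycles(H))
```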

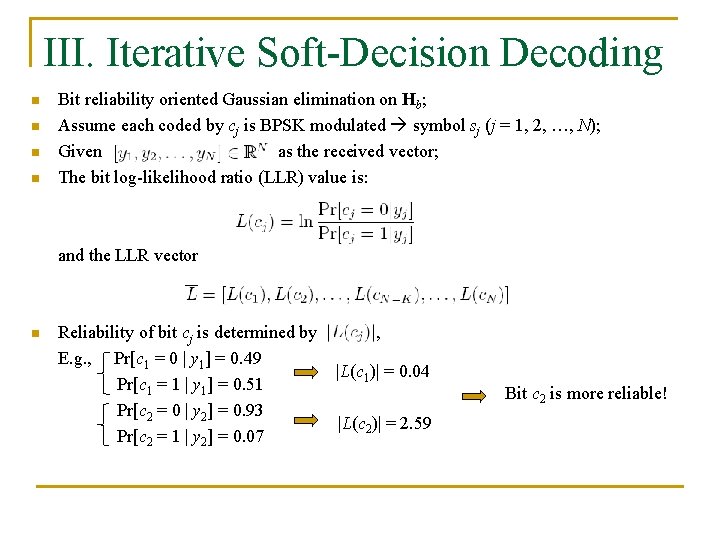

- Bit reliability oriented Gaussian elimination on H_b
- Assume each coded bit c_j is BPSK modulated to symbol s_j (j = 1, 2, …, N), and let (y_1, y_2, …, y_N) be the received vector
- The bit log-likelihood ratio (LLR) value is $L(c_j) = \ln \frac{\Pr[c_j = 0 \mid y_j]}{\Pr[c_j = 1 \mid y_j]}$, and the LLR vector collects L(c_1), L(c_2), …, L(c_N)
- The reliability of bit c_j is determined by |L(c_j)|
- E.g., Pr[c_1 = 0 | y_1] = 0.49 and Pr[c_1 = 1 | y_1] = 0.51 give |L(c_1)| = 0.04, while Pr[c_2 = 0 | y_2] = 0.93 and Pr[c_2 = 1 | y_2] = 0.07 give |L(c_2)| = 2.59. Bit c_2 is more reliable!
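The two reliabilities quoted in this example are consistent with a natural-logarithm LLR of the form ln(Pr[c_j = 0 | y_j] / Pr[c_j = 1 | y_j]). A quick check, with a hypothetical helper name:

```python
import math


def bit_llr(p0):
    """LLR of a bit from its posterior probability of being 0."""
    return math.log(p0 / (1.0 - p0))


for name, p0 in (("c_1", 0.49), ("c_2", 0.93)):
    llr = bit_llr(p0)
    print(f"{name}: L = {llr:+.2f}, |L| = {abs(llr):.2f}")
```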

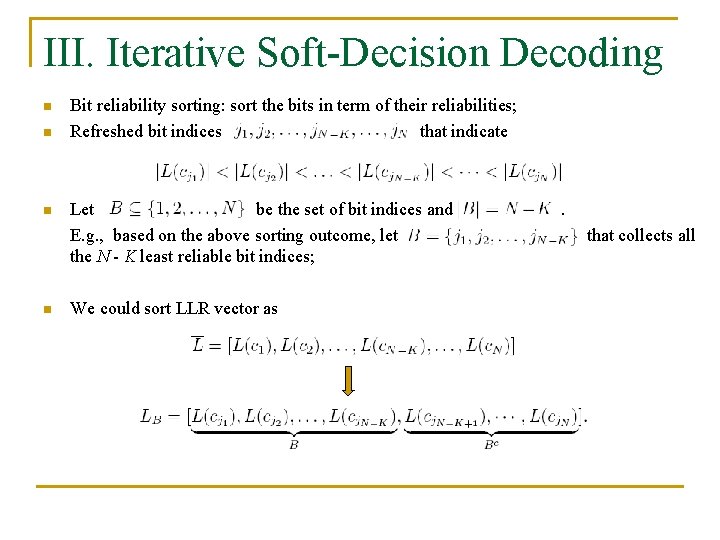

- Bit reliability sorting: sort the bits in terms of their reliabilities
  - The refreshed bit indices j_1, j_2, …, j_N indicate |L(c_{j_1})| ≤ |L(c_{j_2})| ≤ ∙∙∙ ≤ |L(c_{j_N})|
  - Let B be the set of bit indices that collects all the N − K least reliable bit indices, i.e., B = {j_1, j_2, …, j_{N−K}}
  - The LLR vector can be sorted in the same order
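The sorting step amounts to ordering bit indices by |L(c_j)| and keeping the first N − K of them as B. A minimal sketch with made-up LLR values and 0-based indices (both are assumptions of this example):

```python
def sort_by_reliability(llr):
    """Bit indices in ascending order of |LLR| (least reliable first)."""
    return sorted(range(len(llr)), key=lambda j: abs(llr[j]))


def unreliable_set(llr, n_minus_k):
    """The N - K least reliable bit indices, i.e., the set B."""
    return sort_by_reliability(llr)[:n_minus_k]


llr = [1.2, -0.1, 3.0, -0.7]      # made-up LLR values
order = sort_by_reliability(llr)
B = unreliable_set(llr, 2)
print(order, B)
```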

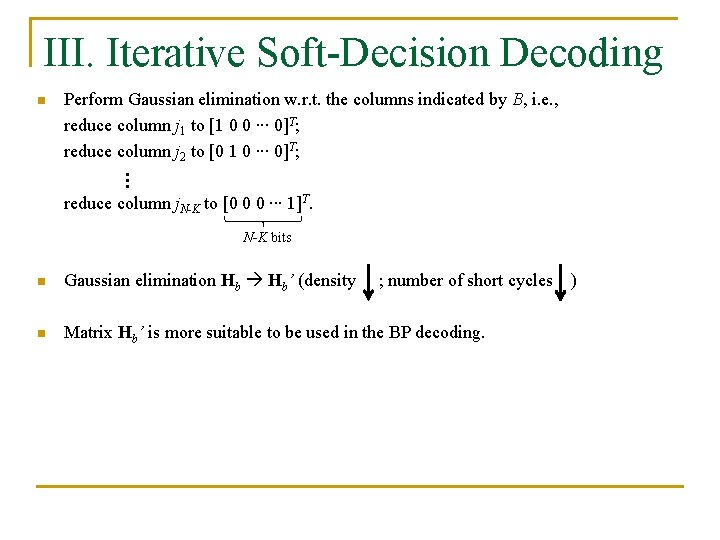

- Perform Gaussian elimination w.r.t. the columns indicated by B, i.e., reduce column j_1 to [1 0 0 ∙∙∙ 0]^T, column j_2 to [0 1 0 ∙∙∙ 0]^T, …, and column j_{N−K} to [0 0 0 ∙∙∙ 1]^T, so that the N − K least reliable bits correspond to an identity submatrix
- Gaussian elimination: H_b → H_b’ (the density and the number of short cycles are both reduced)
- Matrix H_b’ is more suitable to be used in the BP decoding.
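The elimination can be sketched as ordinary Gauss-Jordan reduction over GF(2) that visits columns in reliability order. One detail is an assumption of this sketch rather than something stated on the slide: a column that is linearly dependent on the columns already reduced is skipped and the next one in the order is tried.

```python
def adapt_matrix(H, order):
    """Reduce the first independent columns in `order` to unit vectors over GF(2).

    Returns the adapted matrix and the list of columns that became unit vectors.
    """
    H = [row[:] for row in H]            # work on a copy
    num_rows = len(H)
    pivots = []
    row = 0
    for col in order:
        if row == num_rows:
            break
        # Look for a row at or below `row` with a 1 in this column.
        pivot = next((r for r in range(row, num_rows) if H[r][col]), None)
        if pivot is None:
            continue                     # dependent column: skip it
        H[row], H[pivot] = H[pivot], H[row]
        # Clear the column in every other row (row addition is XOR).
        for r in range(num_rows):
            if r != row and H[r][col]:
                H[r] = [a ^ b for a, b in zip(H[r], H[row])]
        pivots.append(col)
        row += 1
    return H, pivots


H = [[1, 1, 0, 1],
     [0, 1, 1, 1]]
adapted, pivots = adapt_matrix(H, order=[3, 2, 0, 1])
print(adapted, pivots)
```

In the usage above, columns 3 and 2 play the role of the least reliable bits and end up as the unit vectors [1 0]^T and [0 1]^T.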

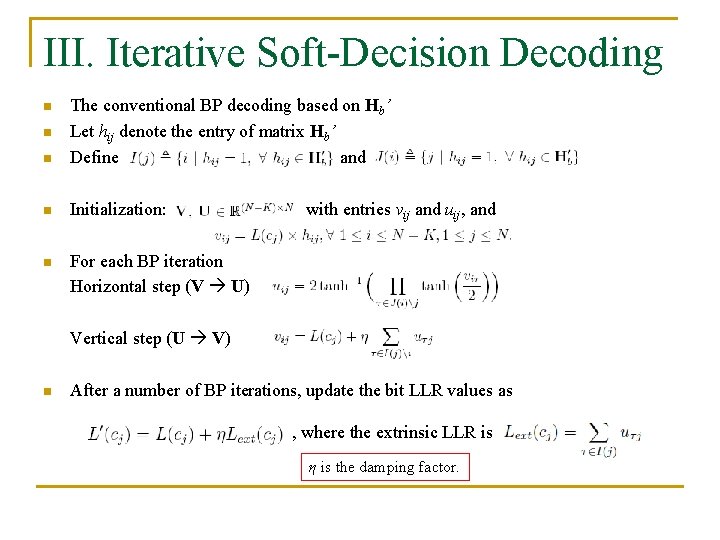

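- The conventional BP decoding based on H_b’
  - Let h_ij denote the entry of matrix H_b’, and define matrices V and U with entries v_ij and u_ij
  - Initialization: v_ij is set from the bit LLR L(c_j) wherever h_ij = 1
  - For each BP iteration:
    - Horizontal step (V → U): each u_ij is updated from the entries of V in row i
    - Vertical step (U → V): each v_ij is updated from L(c_j) and the entries of U in column j
  - After a number of BP iterations, update the bit LLR values as $L'(c_j) = L(c_j) + \eta L_{ext}(c_j)$, where L_ext(c_j) is the extrinsic LLR and η is the damping factor.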
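The sketch below shows one common way to realise these steps: the sum-product (tanh) rule for the horizontal step and a damped extrinsic update at the end. The exact update equations of the talk are not recoverable from the slide text, so the tanh rule, the clipping constant and the function name are assumptions of this example.

```python
import math


def bp_update(H, llr, iterations=3, eta=0.25):
    """Damped sum-product BP on a binary matrix H; returns updated bit LLRs."""
    m, n = len(H), len(H[0])
    rows = [[j for j in range(n) if H[i][j]] for i in range(m)]   # bits in check i
    cols = [[i for i in range(m) if H[i][j]] for j in range(n)]   # checks on bit j
    v = {(i, j): llr[j] for i in range(m) for j in rows[i]}       # bit-to-check
    u = {key: 0.0 for key in v}                                   # check-to-bit
    limit = 1.0 - 1e-12                                           # keeps atanh finite
    for _ in range(iterations):
        # Horizontal step (V -> U): tanh rule over the other bits of each check.
        for i in range(m):
            for j in rows[i]:
                prod = 1.0
                for j2 in rows[i]:
                    if j2 != j:
                        prod *= math.tanh(v[(i, j2)] / 2.0)
                prod = max(min(prod, limit), -limit)
                u[(i, j)] = 2.0 * math.atanh(prod)
        # Vertical step (U -> V): channel LLR plus the other checks' messages.
        for j in range(n):
            for i in cols[j]:
                v[(i, j)] = llr[j] + sum(u[(i2, j)] for i2 in cols[j] if i2 != i)
    # Extrinsic LLR of each bit, then the damped update.
    extrinsic = [sum(u[(i, j)] for i in cols[j]) for j in range(n)]
    return [llr[j] + eta * extrinsic[j] for j in range(n)]


updated = bp_update([[1, 1, 1]], [2.0, 3.0, -0.5], iterations=1, eta=0.5)
print(updated)
```

In this single-check example, the weak negative LLR of the third bit is pulled towards positive values by the two reliable bits, while their own magnitudes shrink slightly.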

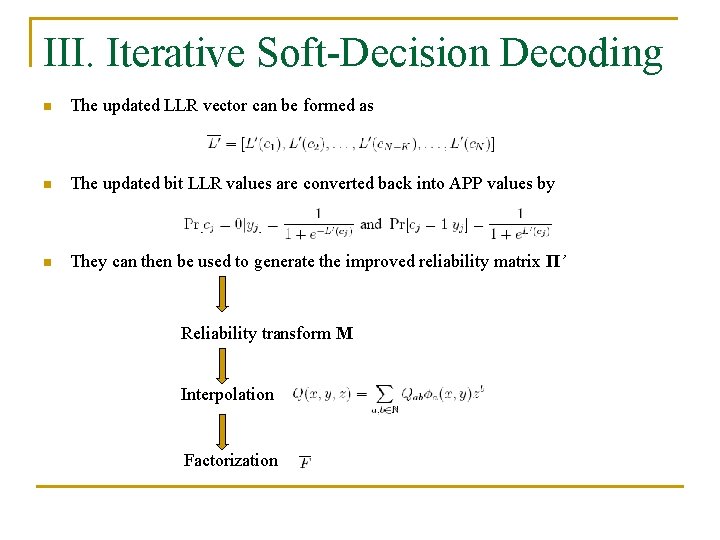

- The updated LLR vector collects L’(c_1), L’(c_2), …, L’(c_N)
- The updated bit LLR values are converted back into APP values by $\Pr[c_j = 0] = \frac{1}{1 + e^{-L'(c_j)}}$ and $\Pr[c_j = 1] = \frac{1}{1 + e^{L'(c_j)}}$
- They can then be used to generate the improved reliability matrix Π’, which feeds the KV decoding: reliability transform (Π’ → M) → interpolation → factorization
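The conversion from LLR back to probability inverts L = ln(Pr[c = 0] / Pr[c = 1]). The sketch below also forms symbol reliabilities as products of bit probabilities; the bit-to-symbol ordering (most significant bit first) and the function names are assumptions of this example.

```python
import math


def llr_to_app(l):
    """Return (Pr[c = 0], Pr[c = 1]) for an LLR defined as ln(P0 / P1)."""
    if l >= 0:
        p0 = 1.0 / (1.0 + math.exp(-l))
    else:                                  # avoids overflow for very negative l
        e = math.exp(l)
        p0 = e / (1.0 + e)
    return p0, 1.0 - p0


def symbol_reliabilities(bit_llrs, bits_per_symbol):
    """One column of symbol probabilities per group of `bits_per_symbol` bits."""
    columns = []
    for start in range(0, len(bit_llrs), bits_per_symbol):
        probs = [llr_to_app(l) for l in bit_llrs[start:start + bits_per_symbol]]
        column = []
        for pattern in range(2 ** bits_per_symbol):
            p = 1.0
            for b, (p0, p1) in enumerate(probs):
                bit = (pattern >> (bits_per_symbol - 1 - b)) & 1
                p *= p1 if bit else p0
            column.append(p)
        columns.append(column)
    return columns


columns = symbol_reliabilities([0.0, 0.0, 2.0, -1.0], bits_per_symbol=2)
print(columns)
```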

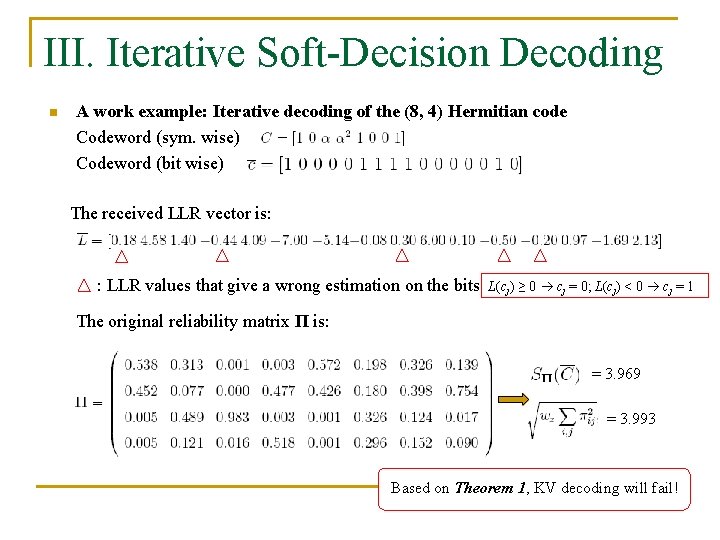

- A worked example: iterative decoding of the (8, 4) Hermitian code
  - The codeword is given symbol-wise and bit-wise, together with the received LLR vector; some LLR values give a wrong estimation of the bits
  - Hard decision rule: L(c_j) ≥ 0 → c_j = 0; L(c_j) < 0 → c_j = 1
  - For the original reliability matrix Π, the codeword score is 3.969 against a threshold of 3.993
  - Based on Theorem 1, KV decoding will fail!

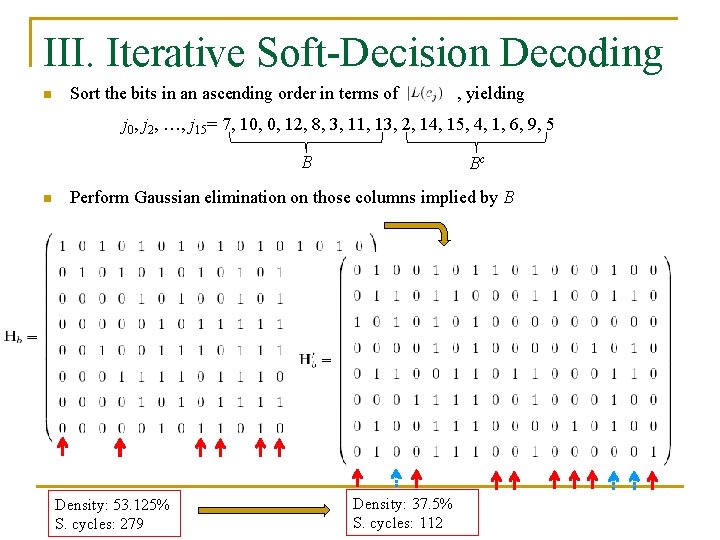

- Sort the bits in ascending order of |L(c_j)|, yielding j_0, j_1, …, j_15 = 7, 10, 0, 12, 8, 3, 11, 13, 2, 14, 15, 4, 1, 6, 9, 5
  - B = {7, 10, 0, 12, 8, 3, 11, 13} (the 8 least reliable bits) and B^c = {2, 14, 15, 4, 1, 6, 9, 5}
- Perform Gaussian elimination on the columns indicated by B
  - Before: density 53.125%, 279 short cycles
  - After: density 37.5%, 112 short cycles

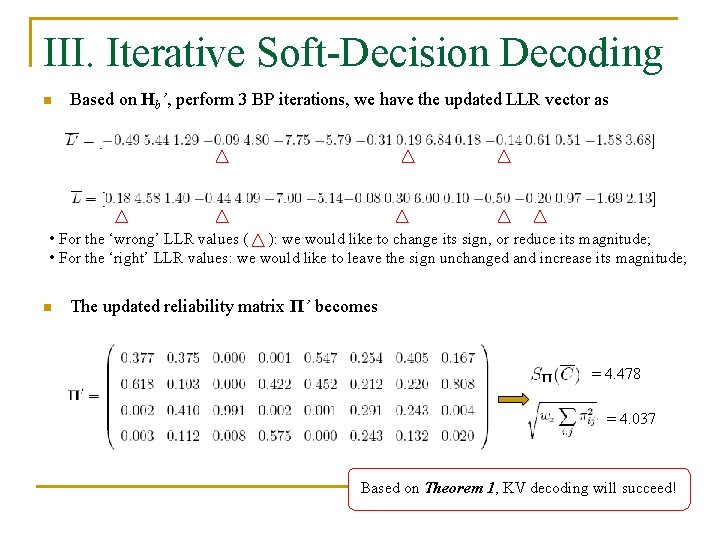

- Based on H_b’, performing 3 BP iterations gives the updated LLR vector
  - For the ‘wrong’ LLR values: we would like to change the sign or reduce the magnitude
  - For the ‘right’ LLR values: we would like to leave the sign unchanged and increase the magnitude
- For the updated reliability matrix Π’, the codeword score is 4.478 against a threshold of 4.037
- Based on Theorem 1, KV decoding will succeed!

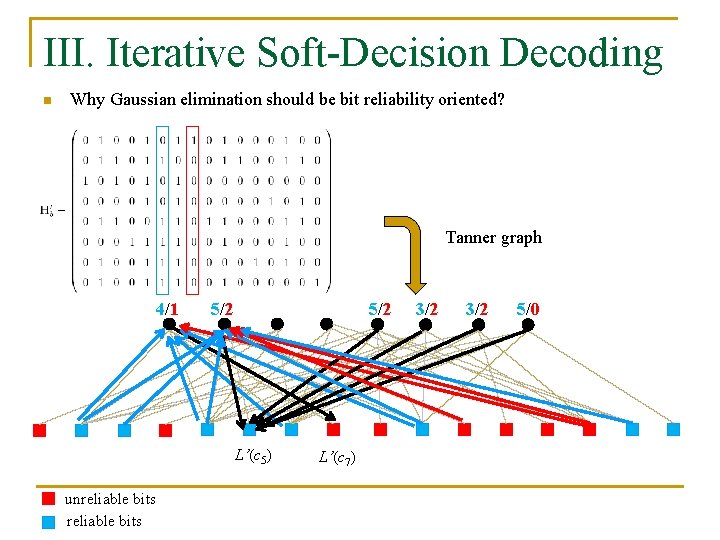

- Why should Gaussian elimination be bit-reliability oriented?
  - Figure: Tanner graph highlighting the unreliable bits, e.g., L’(c_5) and L’(c_7)

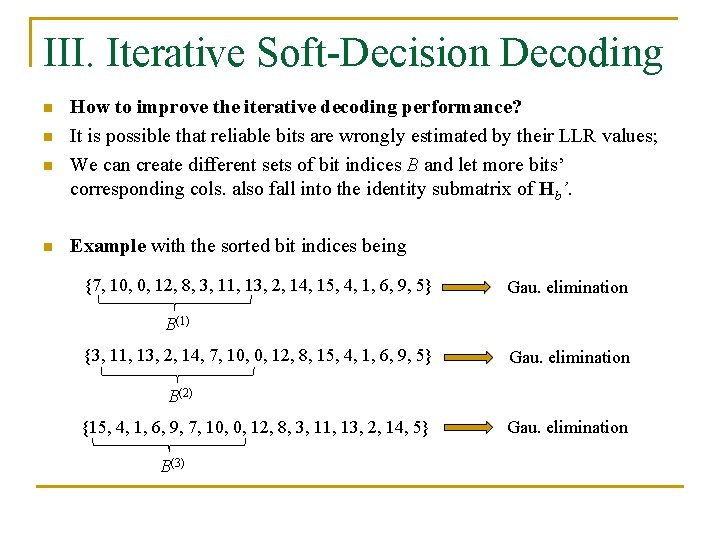

- How to improve the iterative decoding performance?
  - It is possible that reliable bits are wrongly estimated by their LLR values
  - We can create different sets of bit indices B and let more bits’ corresponding columns also fall into the identity submatrix of H_b’
- Example:
  - B(1) is taken from the sorted bit indices {7, 10, 0, 12, 8, 3, 11, 13, 2, 14, 15, 4, 1, 6, 9, 5} → Gaussian elimination
  - B(2) is taken from the reordered list {3, 11, 13, 2, 14, 7, 10, 0, 12, 8, 15, 4, 1, 6, 9, 5} → Gaussian elimination
  - B(3) is taken from the reordered list {15, 4, 1, 6, 9, 7, 10, 0, 12, 8, 3, 11, 13, 2, 14, 5} → Gaussian elimination
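The three index lists in this example are consistent with splitting the sorted indices into groups of five and moving one group at a time to the front. The group size of 5 is inferred from the lists themselves, not stated on the slide, and the function name is hypothetical.

```python
def reordered_index_lists(sorted_indices, group_size, num_groups):
    """Move each of the first `num_groups` groups to the front in turn."""
    groups = [sorted_indices[g * group_size:(g + 1) * group_size]
              for g in range(num_groups)]
    tail = sorted_indices[num_groups * group_size:]
    orders = []
    for g in range(num_groups):
        others = [idx for h, grp in enumerate(groups) if h != g for idx in grp]
        orders.append(groups[g] + others + tail)
    return orders


sorted_indices = [7, 10, 0, 12, 8, 3, 11, 13, 2, 14, 15, 4, 1, 6, 9, 5]
orders = reordered_index_lists(sorted_indices, group_size=5, num_groups=3)
for order in orders:
    print(order, "-> B =", order[:8])   # N - K = 8 columns for the identity part
```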

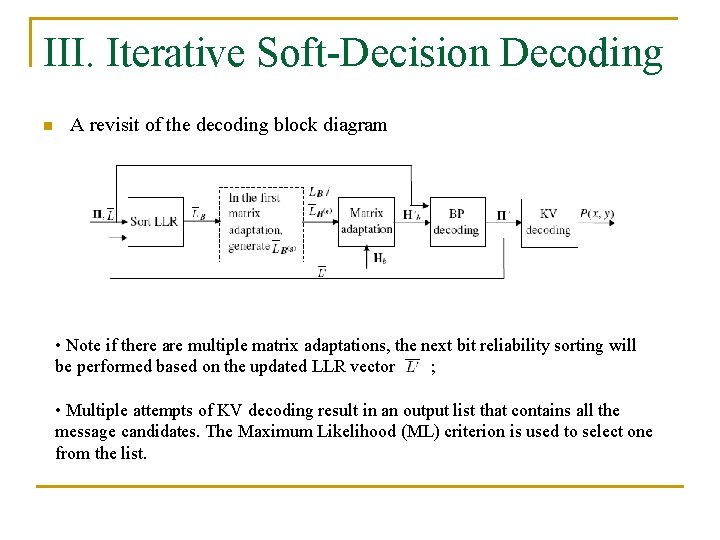

- A revisit of the decoding block diagram
  - Note: if there are multiple matrix adaptations, the next bit reliability sorting will be performed based on the updated LLR vector
  - Multiple attempts of KV decoding result in an output list that contains all the message candidates. The Maximum Likelihood (ML) criterion is used to select one from the list.

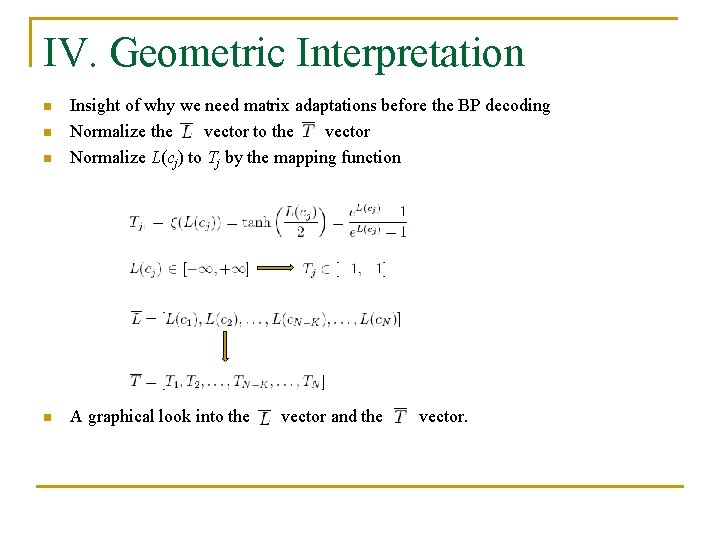

IV. Geometric Interpretation

- Insight into why we need matrix adaptations before the BP decoding
  - Normalize the LLR vector to the vector T, mapping each L(c_j) to T_j by a mapping function
  - A graphical look into the LLR vector and the vector T

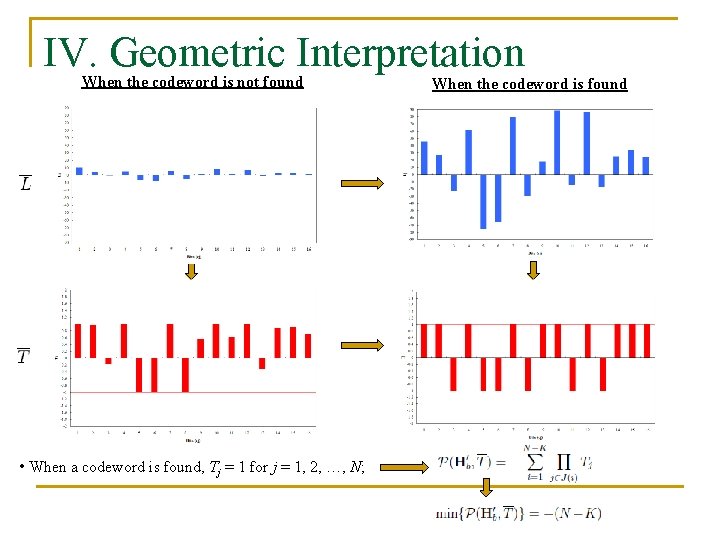

- When a codeword is found, T_j = 1 for j = 1, 2, …, N
- Figure panels: when the codeword is not found; when the codeword is found

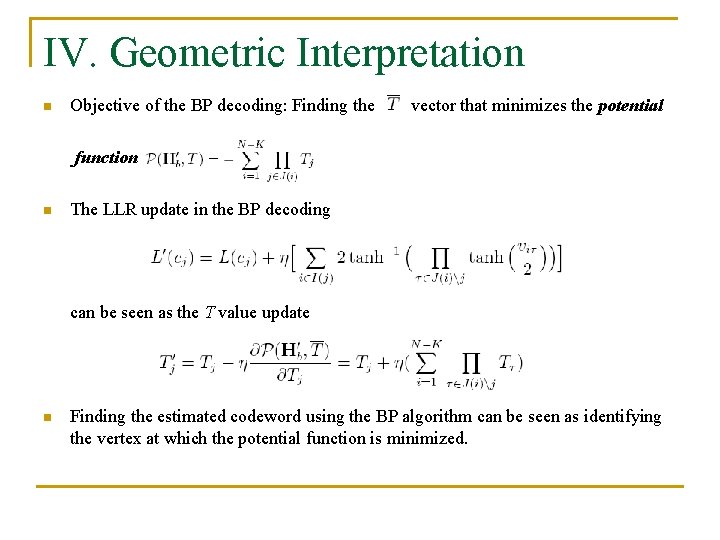

- Objective of the BP decoding: finding the vector T that minimizes the potential function
- The LLR update in the BP decoding can be seen as the T value update
- Finding the estimated codeword using the BP algorithm can be seen as identifying the vertex at which the potential function is minimized.
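The mapping and the potential function are not legible in the slide text. The sketch below therefore borrows a form commonly used in the adaptive BP literature, which is an assumption here: T_j = tanh(L(c_j) / 2), and a potential equal to minus the sum, over the rows of the parity-check matrix, of the product of the T_j taking part in that row.

```python
import math


def potential(H, llr):
    """Assumed potential: -sum over checks of the product of tanh(L_j / 2)."""
    T = [math.tanh(l / 2.0) for l in llr]
    return -sum(math.prod(T[j] for j in range(len(T)) if row[j]) for row in H)


H = [[1, 1, 0],
     [0, 1, 1]]
confident = potential(H, [40.0, 40.0, 40.0])    # all checks satisfied, T near 1
conflicting = potential(H, [40.0, -40.0, 40.0]) # both checks violated
uncertain = potential(H, [0.1, 0.1, 0.1])       # T near 0
print(confident, conflicting, uncertain)
```

Under this assumed form, the potential reaches its minimum, minus the number of checks, at a vertex of the hypercube where every check is satisfied, which is in line with the vertex picture described above.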

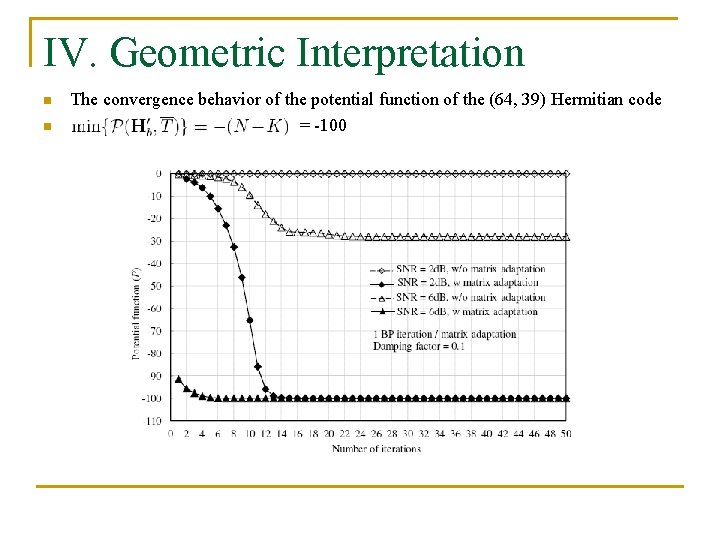

- The convergence behavior of the potential function of the (64, 39) Hermitian code (value marked on the plot: −100)

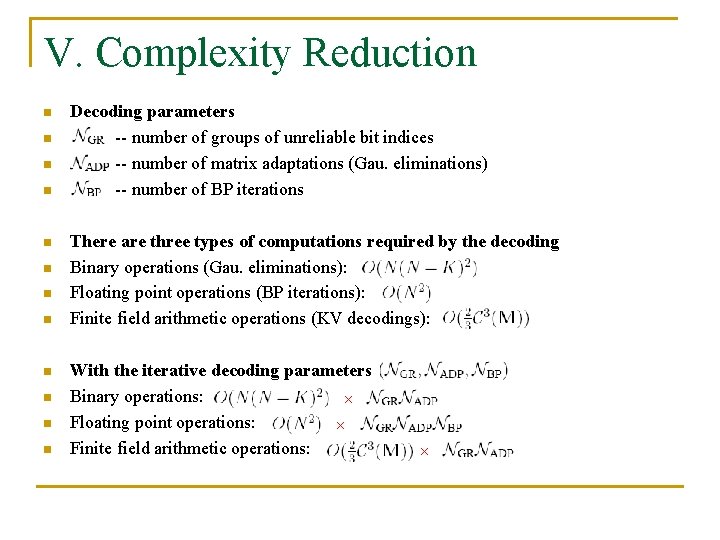

V. Complexity Reduction

- Decoding parameters
  - The number of groups of unreliable bit indices
  - The number of matrix adaptations (Gaussian eliminations)
  - The number of BP iterations
- There are three types of computations required by the decoding
  - Binary operations (Gaussian eliminations)
  - Floating point operations (BP iterations)
  - Finite field arithmetic operations (KV decodings)
- With the iterative decoding parameters, each of the three operation counts is scaled by a multiplicative factor set by these parameters.

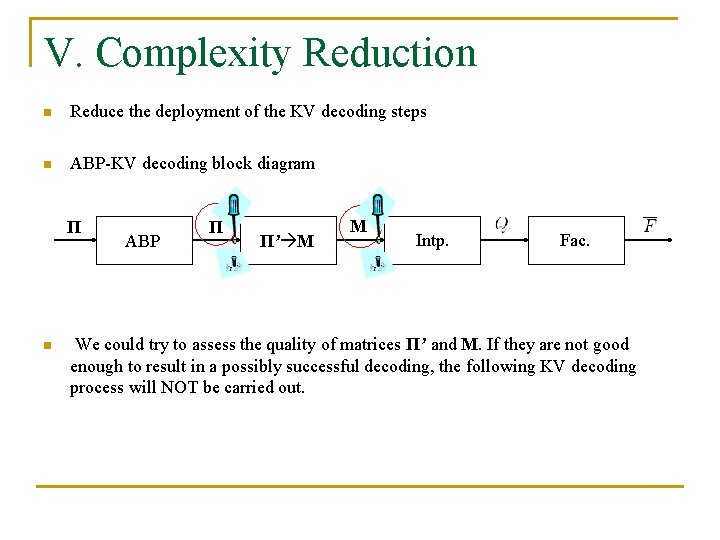

- Reduce the deployment of the KV decoding steps
- ABP-KV decoding block diagram: Π → ABP → Π’ → M → interpolation → factorization, with assessments of Π’ and M along the way
- We could try to assess the quality of matrices Π’ and M. If they are not good enough to result in a possibly successful decoding, the following KV decoding process will NOT be carried out.

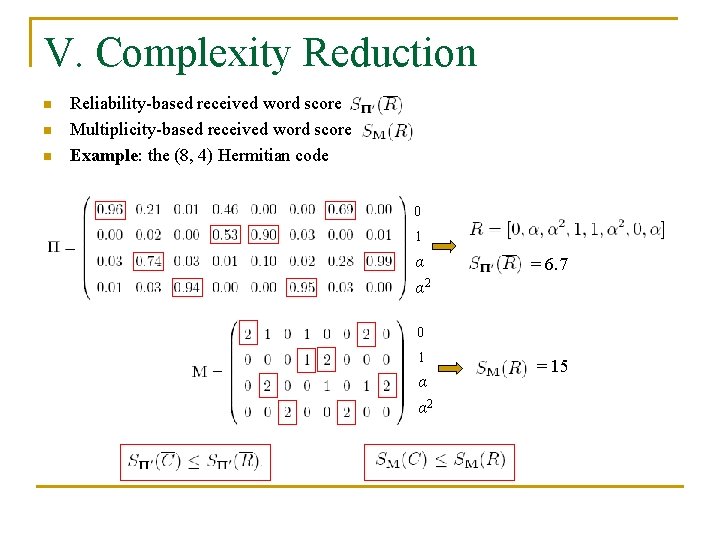

- Reliability-based received word score and multiplicity-based received word score
- Example: the (8, 4) Hermitian code
  - Reliability-based received word score, from Π: 6.7
  - Multiplicity-based received word score, from M: 15

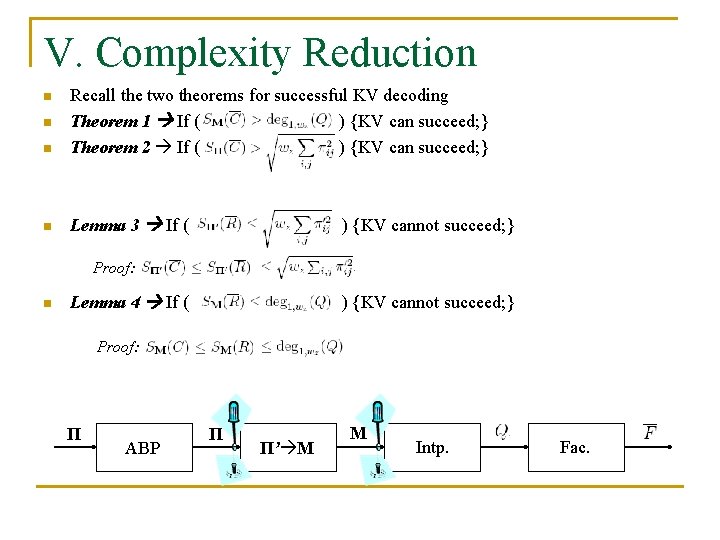

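- Recall the two theorems for successful KV decoding
  - Theorem 1: if its condition holds, KV can succeed
  - Theorem 2: if its condition holds, KV can succeed
- Lemma 3 (with proof on the slide): if its condition holds, KV cannot succeed
- Lemma 4 (with proof on the slide): if its condition holds, KV cannot succeed
- The lemmas are applied to Π’ and M in the chain Π → ABP → Π’ → M → interpolation → factorization.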
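The early-termination idea can be sketched as follows. Two assumptions are made, neither of which is legible in the slide text: the received word score is taken as the sum of the column maxima of the matrix (so no codeword can score higher), and the success threshold is passed in as a plain number because the lemma conditions themselves are not recoverable.

```python
def received_word_score(matrix):
    """Assumed definition: sum of the largest entry of every column."""
    return sum(max(column) for column in zip(*matrix))


def worth_running_kv(matrix, threshold):
    """Skip KV when even the best possible score cannot exceed the threshold."""
    return received_word_score(matrix) > threshold


# Made-up multiplicity matrix with 2 symbols (rows) and 3 positions (columns).
M = [[2, 0, 1],
     [1, 3, 1]]
print(received_word_score(M), worth_running_kv(M, threshold=7))
```

The same check applies to Π’ before the reliability transform and to M before interpolation, so that a hopeless attempt is dropped as early as possible.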

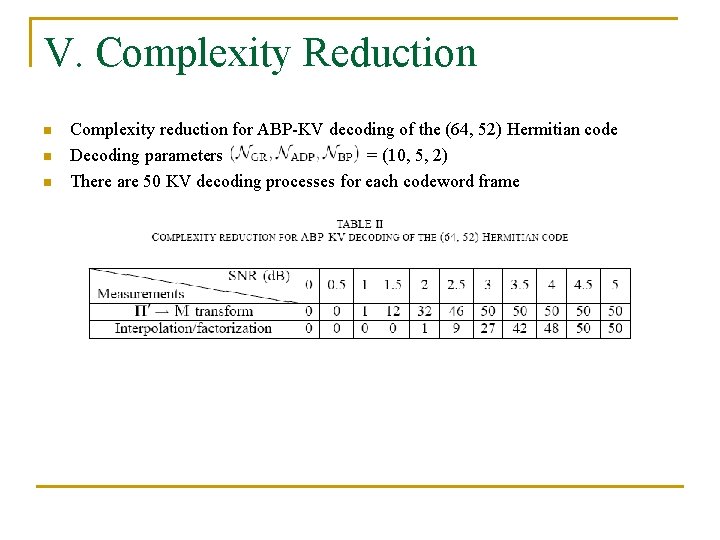

- Complexity reduction for ABP-KV decoding of the (64, 52) Hermitian code
  - Decoding parameters = (10, 5, 2)
  - There are 50 KV decoding processes for each codeword frame
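The figure of 50 KV decodings matches 10 groups times 5 matrix adaptations, assuming one KV attempt after every adaptation. The loop skeleton below counts the work per frame under that assumption; the parameter order (groups, adaptations, BP iterations) follows the order in which the decoding parameters are listed in this section, and the function name is hypothetical.

```python
def abp_kv_schedule(groups, adaptations, bp_iterations):
    """Count the decoding stages run for one frame when nothing stops early."""
    counts = {"gaussian_eliminations": 0, "bp_iterations": 0, "kv_decodings": 0}
    for _ in range(groups):                       # one pass per group of indices
        for _ in range(adaptations):              # matrix adaptations in that pass
            counts["gaussian_eliminations"] += 1
            counts["bp_iterations"] += bp_iterations
            counts["kv_decodings"] += 1           # one KV attempt per adaptation
    return counts


counts = abp_kv_schedule(groups=10, adaptations=5, bp_iterations=2)
print(counts)
```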

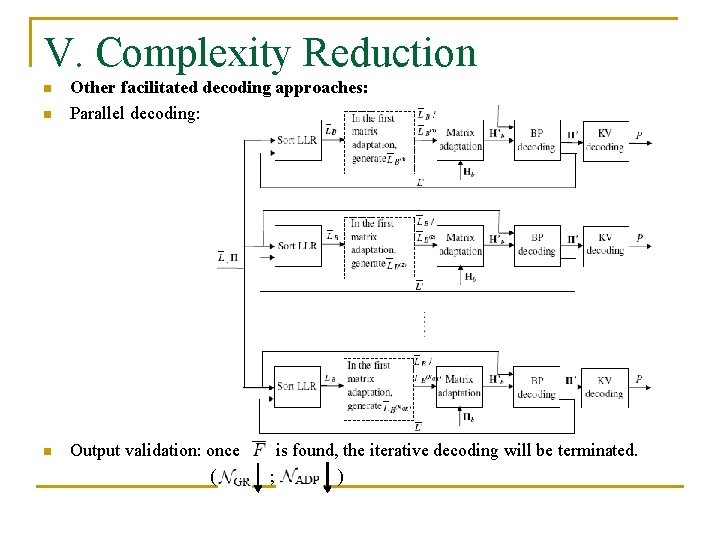

- Other facilitated decoding approaches
  - Parallel decoding
  - Output validation: once a valid output is found, the iterative decoding will be terminated

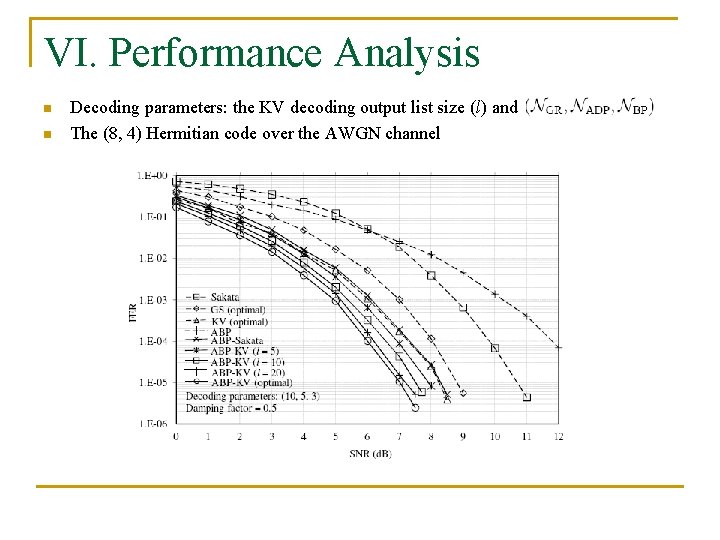

VI. Performance Analysis

- Decoding parameters: the KV decoding output list size (l) and the iterative decoding parameters
- The (8, 4) Hermitian code over the AWGN channel

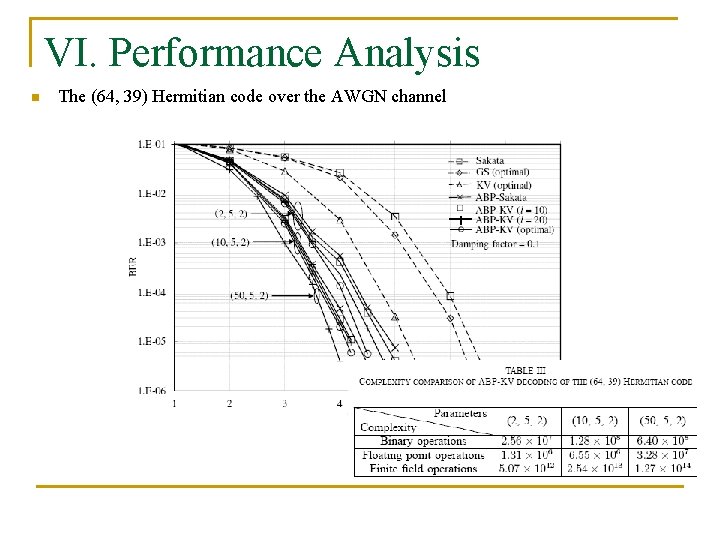

- The (64, 39) Hermitian code over the AWGN channel

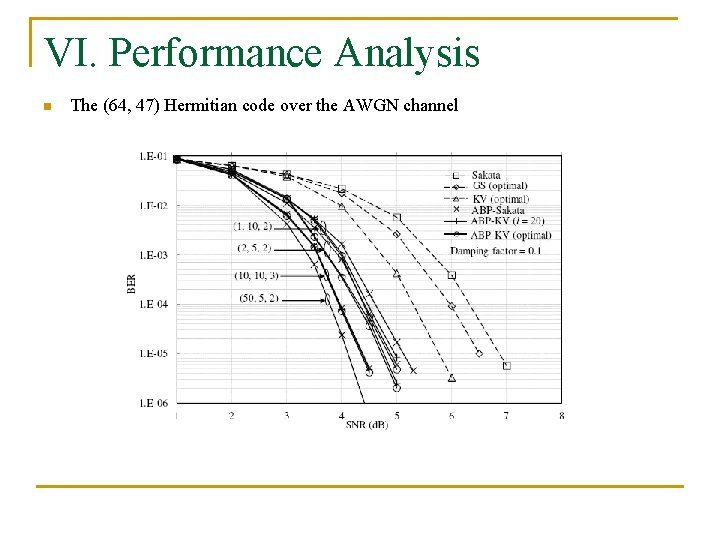

- The (64, 47) Hermitian code over the AWGN channel

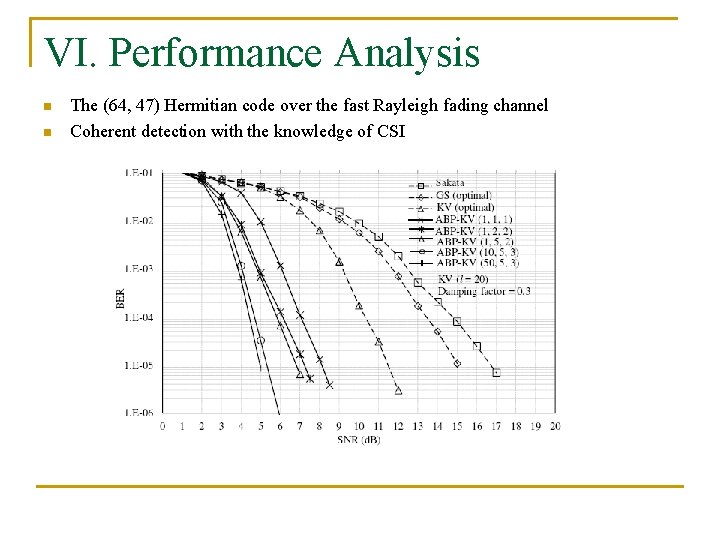

- The (64, 47) Hermitian code over the fast Rayleigh fading channel
- Coherent detection with the knowledge of CSI

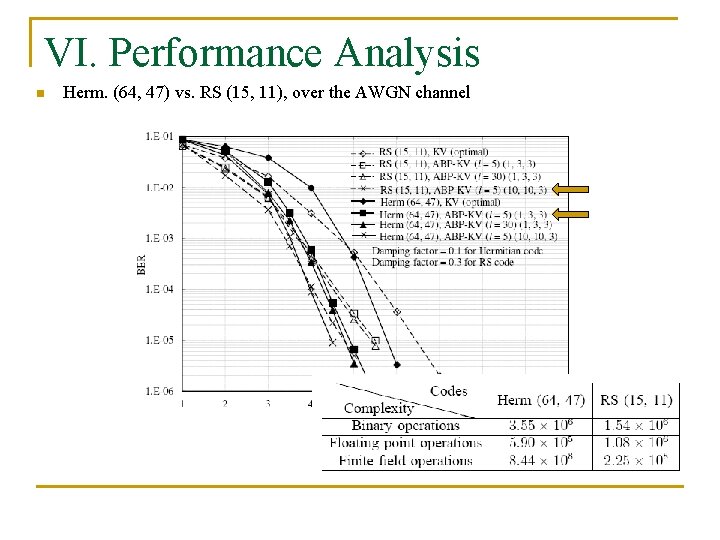

- Hermitian (64, 47) vs. RS (15, 11), over the AWGN channel

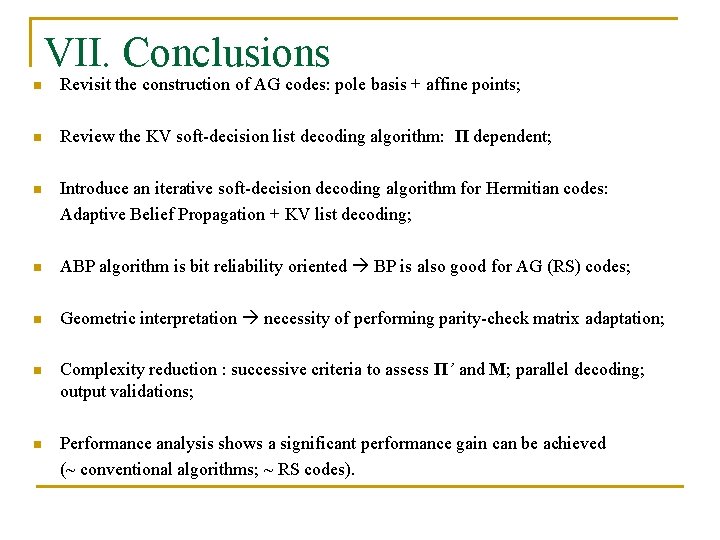

VII. Conclusions

- Revisited the construction of AG codes: pole basis + affine points
- Reviewed the KV soft-decision list decoding algorithm: its performance is Π dependent
- Introduced an iterative soft-decision decoding algorithm for Hermitian codes: Adaptive Belief Propagation + KV list decoding
- The ABP algorithm is bit-reliability oriented; BP is also good for AG (RS) codes
- Geometric interpretation: shows the necessity of performing parity-check matrix adaptation
- Complexity reduction: successive criteria to assess Π’ and M; parallel decoding; output validation
- Performance analysis shows that a significant performance gain can be achieved, both over conventional algorithms and over RS codes.

Acknowledgement

- National Natural Science Foundation of China; Project: Advanced coding technology for future storage devices; ID: 61001094; from 2011.1 to 2013.12.

Thank you!
