Iterative Improvement Techniques for Solving Tight Constraint Satisfaction Problems


Iterative Improvement Techniques for Solving Tight Constraint Satisfaction Problems

Hui Zou
Constraint Systems Laboratory
Department of Computer Science and Engineering
University of Nebraska-Lincoln
November 2003

Supported by NSF grant #EPS-0091900

Outline
- Motivation; related work; questions
- Approach: goal, context & model
- Solvers: designed & evaluated
  - Local search
  - Multi-agent based search
- Further improvements
- Directions for future research

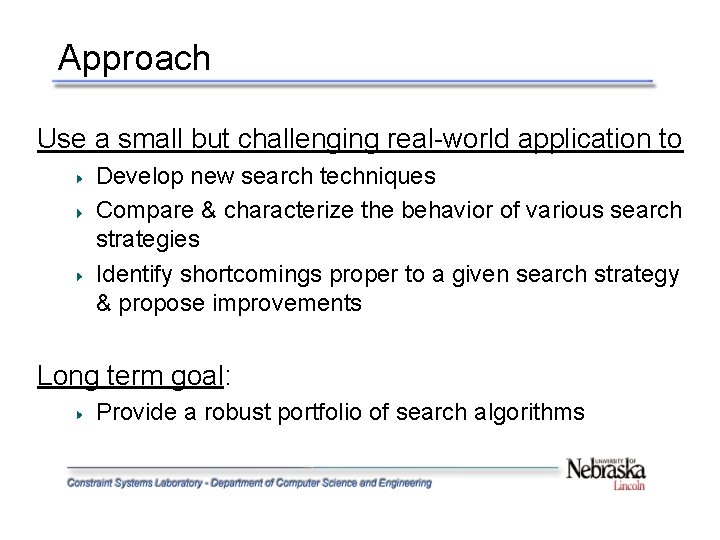

Approach

Use a small but challenging real-world application to
- Develop new search techniques
- Compare & characterize the behavior of various search strategies
- Identify shortcomings specific to a given search strategy & propose improvements

Long-term goal: provide a robust portfolio of search algorithms

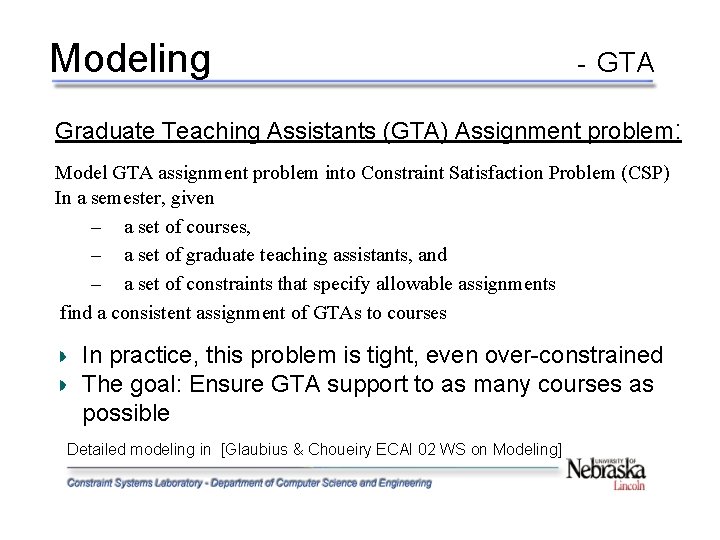

Modeling - GTA

Graduate Teaching Assistants (GTA) assignment problem, modeled as a Constraint Satisfaction Problem (CSP)
- In a semester, given
  - a set of courses,
  - a set of graduate teaching assistants, and
  - a set of constraints that specify allowable assignments,

  find a consistent assignment of GTAs to courses
- In practice, this problem is tight, even over-constrained
- The goal: ensure GTA support for as many courses as possible
- Detailed modeling in [Glaubius & Choueiry ECAI 02 WS on Modeling]

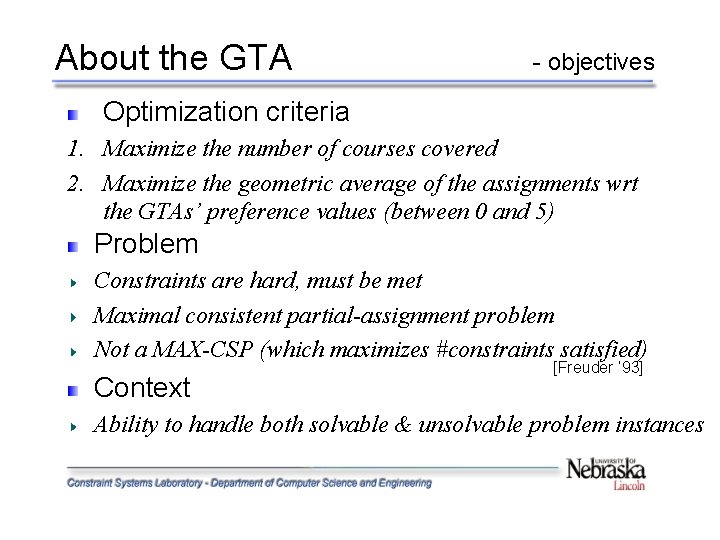

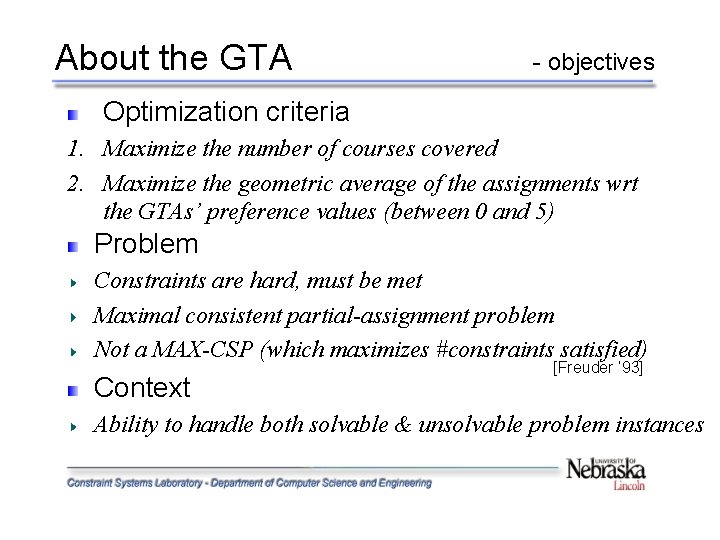

About the GTA - objectives

Optimization criteria
1. Maximize the number of courses covered
2. Maximize the geometric average of the assignments with respect to the GTAs’ preference values (between 0 and 5)

Problem
- Constraints are hard and must be met
- Maximal consistent partial-assignment problem
- Not a MAX-CSP (which maximizes the number of constraints satisfied)

Context [Freuder ’93]
- Ability to handle both solvable & unsolvable problem instances
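As an illustration of the second criterion, the sketch below computes the geometric average of a set of preference values. The function name and the sample values are hypothetical and are not taken from the GTA data; the sketch only shows why a geometric average penalizes a single poor match more than an arithmetic average does.

```python
import math

def geometric_mean(preferences):
    """Geometric mean: the n-th root of the product of n preference values."""
    if not preferences:
        raise ValueError("need at least one assignment")
    return math.prod(preferences) ** (1.0 / len(preferences))

# Hypothetical preference values (0-5 scale) for two candidate assignments.
assignment_a = [5, 5, 1]   # two excellent matches, one poor match
assignment_b = [4, 4, 3]   # uniformly good matches

quality_a = geometric_mean(assignment_a)
quality_b = geometric_mean(assignment_b)

# Both assignments have the same arithmetic mean (11/3), but the geometric
# mean ranks the balanced assignment_b higher.
print(quality_a, quality_b)
```

Note that a single preference value of 0 drives the geometric average of the whole assignment to 0.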

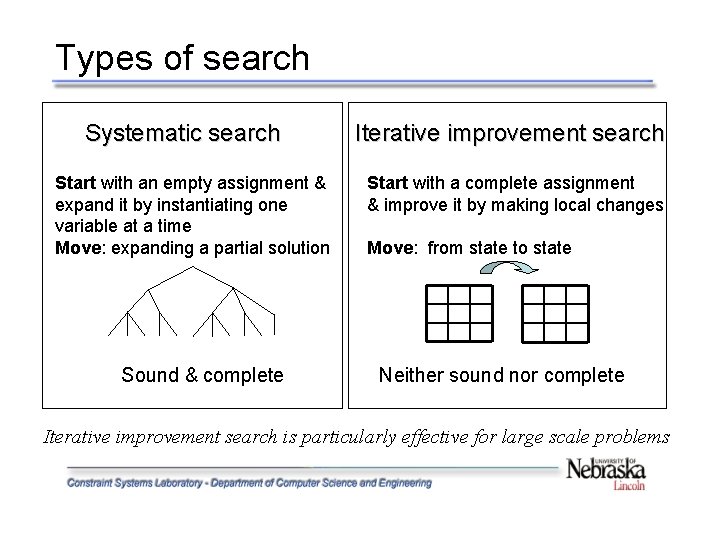

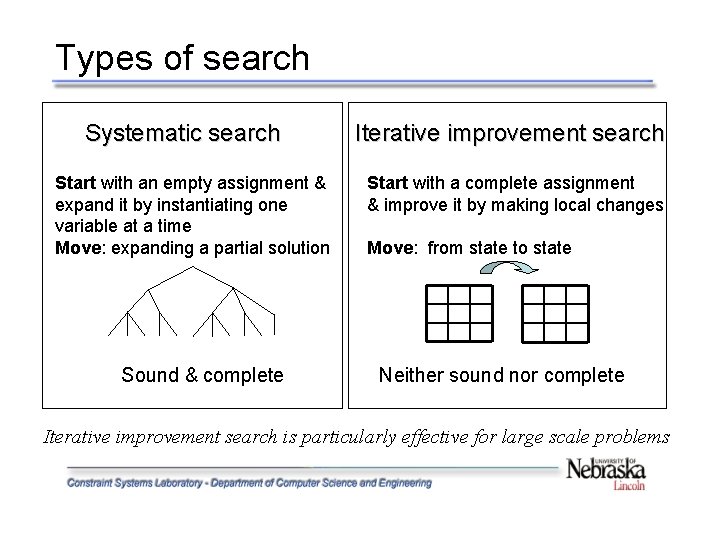

Types of search
- Systematic search
  - Start with an empty assignment & expand it by instantiating one variable at a time
  - Move: expanding a partial solution
  - Sound & complete
- Iterative improvement search
  - Start with a complete assignment & improve it by making local changes
  - Move: from state to state
  - Neither sound nor complete

Iterative improvement search is particularly effective for large-scale problems

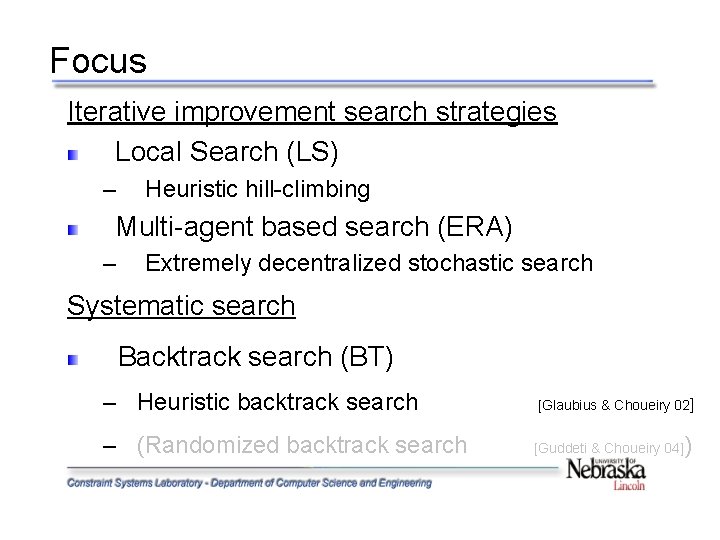

Focus
- Iterative improvement search strategies
  - Local Search (LS): heuristic hill-climbing
  - Multi-agent based search (ERA): extremely decentralized stochastic search
- Systematic search
  - Backtrack search (BT): heuristic backtrack search [Glaubius & Choueiry 02]
  - (Randomized backtrack search [Guddeti & Choueiry 04])

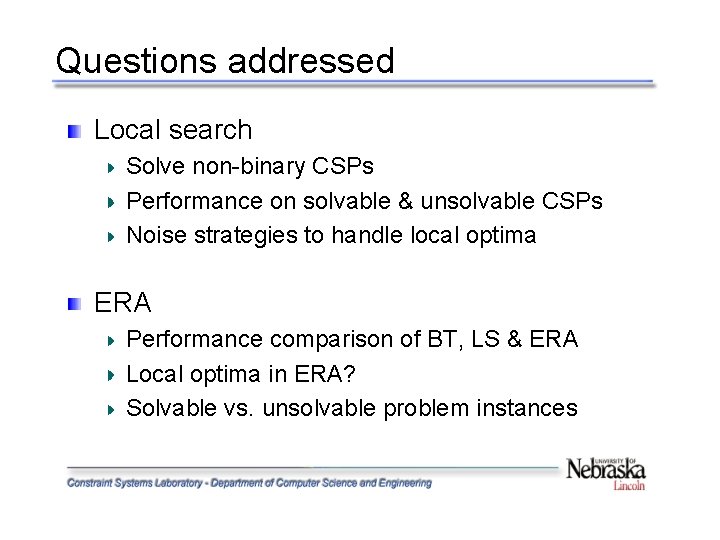

Questions addressed
- Local search
  - Solve non-binary CSPs
  - Performance on solvable & unsolvable CSPs
  - Noise strategies to handle local optima
- ERA
  - Performance comparison of BT, LS & ERA
  - Local optima in ERA?
  - Solvable vs. unsolvable problem instances

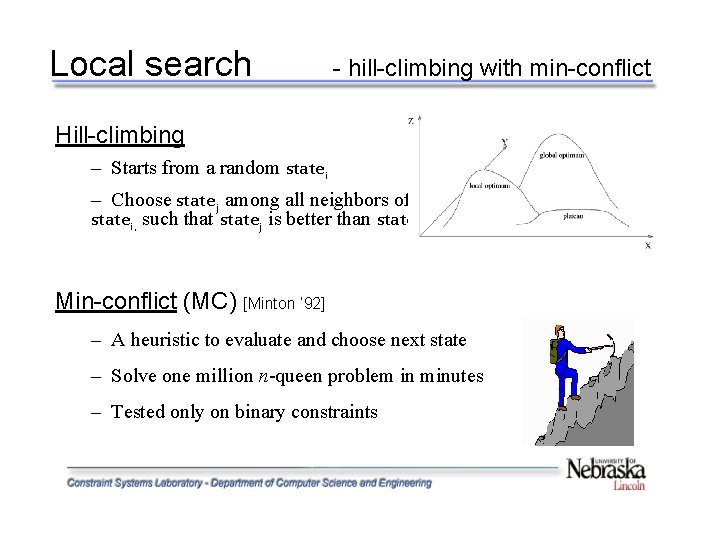

Heuristic hill-climbing (LS)
- Min-conflict heuristic for choosing the move
  - Adapted to non-binary CSPs
- Uses constraint propagation to handle global constraints (i.e., capacity constraints)
  - Drawback: nugatory move
- Random walk to avoid local optima
  - Studied the effect of the noise probability on performance
- Random restart to recover from local optima
  - Studied the effect of the number of restarts on performance

The resulting strategy operates as a greedy stochastic search

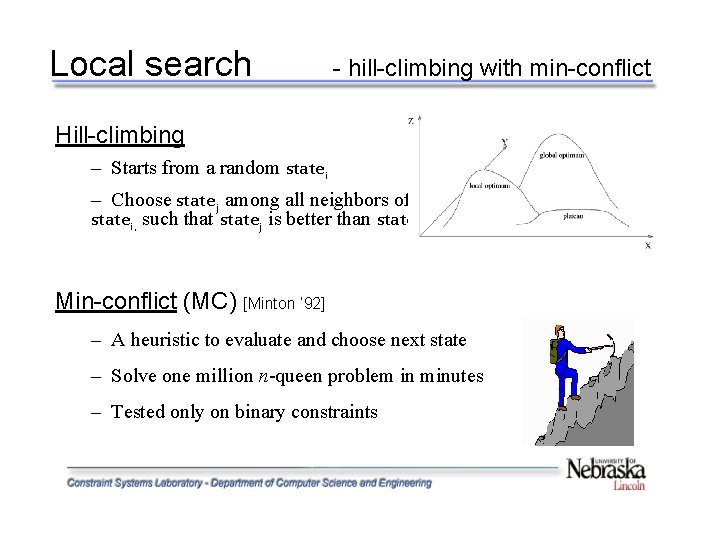

Local search - hill-climbing with min-conflict
- Hill-climbing
  - Starts from a random state_i
  - Chooses state_j among all neighbors of state_i, such that state_j is better than state_i
- Min-conflict (MC) [Minton ’92]
  - A heuristic to evaluate and choose the next state
  - Solves the one-million-queens problem in minutes
  - Tested only on binary constraints
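The following is a minimal sketch of min-conflict repair on the n-queens problem, the binary CSP mentioned above. It is not the solver used in this work: the function names, the step limit, and the choice to repair one randomly selected conflicted variable per step are assumptions made for illustration.

```python
import random

def conflicts(cols, row, col):
    """Number of queens in other rows that attack square (row, col)."""
    return sum(
        1
        for r, c in enumerate(cols)
        if r != row and (c == col or abs(c - col) == abs(r - row))
    )

def min_conflicts(n, max_steps=10000, seed=0):
    """Return one column per row for n queens, or None if the step limit is hit."""
    rng = random.Random(seed)
    cols = [rng.randrange(n) for _ in range(n)]   # random complete assignment
    for _ in range(max_steps):
        conflicted = [r for r in range(n) if conflicts(cols, r, cols[r]) > 0]
        if not conflicted:
            return cols                           # no violated constraint remains
        row = rng.choice(conflicted)              # pick a variable in conflict
        scores = [conflicts(cols, row, c) for c in range(n)]
        best = min(scores)                        # min-conflict value(s), ties broken randomly
        cols[row] = rng.choice([c for c, s in enumerate(scores) if s == best])
    return None                                   # neither sound nor complete: may fail

solution = min_conflicts(8)
print(solution)
```

A `None` result does not prove that no solution exists; it only means the step limit was reached.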

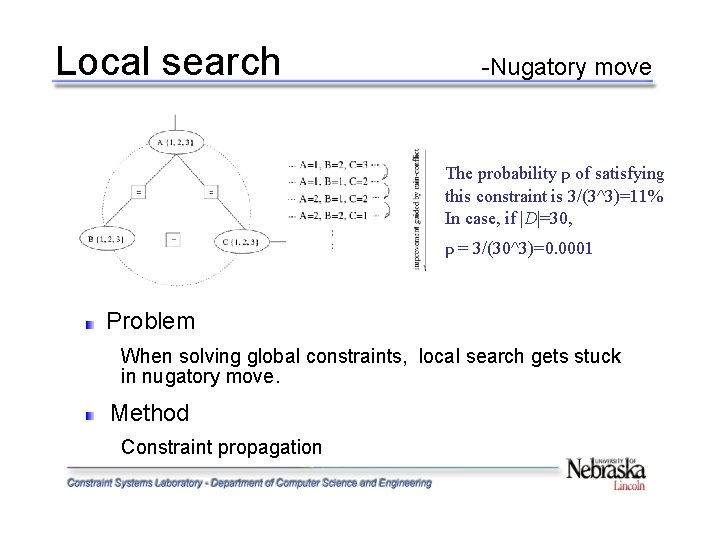

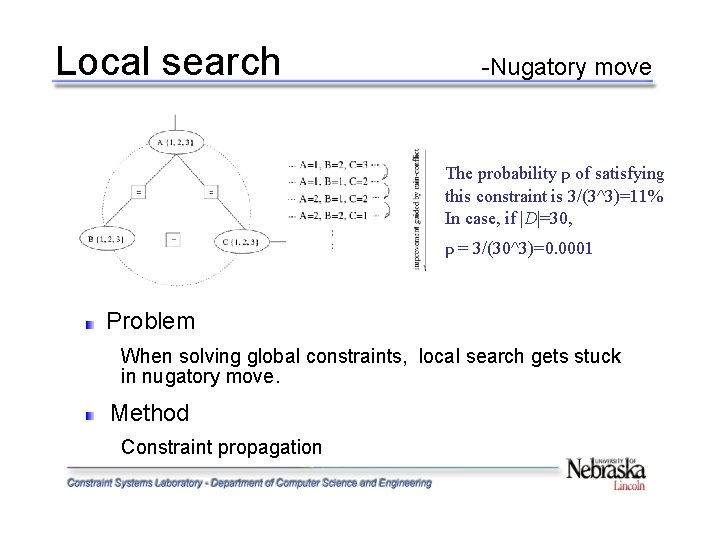

Local search - Nugatory move
- The probability p of satisfying this constraint is 3/3^3 ≈ 11%
- With |D| = 30, p = 3/30^3 ≈ 0.0001
- Problem: when handling global constraints, local search gets stuck in nugatory moves
- Method: constraint propagation
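The arithmetic above can be checked with a short sketch. It assumes a constraint over three variables that share a domain of size |D|, exactly three satisfying tuples (as in the slide's formula), and values drawn uniformly at random; the function name is hypothetical.

```python
def satisfaction_probability(num_satisfying, domain_size, arity):
    """Chance that a uniformly random assignment satisfies the constraint."""
    return num_satisfying / domain_size ** arity

p_small = satisfaction_probability(3, 3, 3)    # 3 / 27
p_large = satisfaction_probability(3, 30, 3)   # 3 / 27000

print(f"|D| = 3:  p = {p_small:.2%}")
print(f"|D| = 30: p = {p_large:.4%}")
```

The drop from roughly one chance in nine to roughly one in nine thousand illustrates why random local changes rarely satisfy such a constraint once domains grow.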

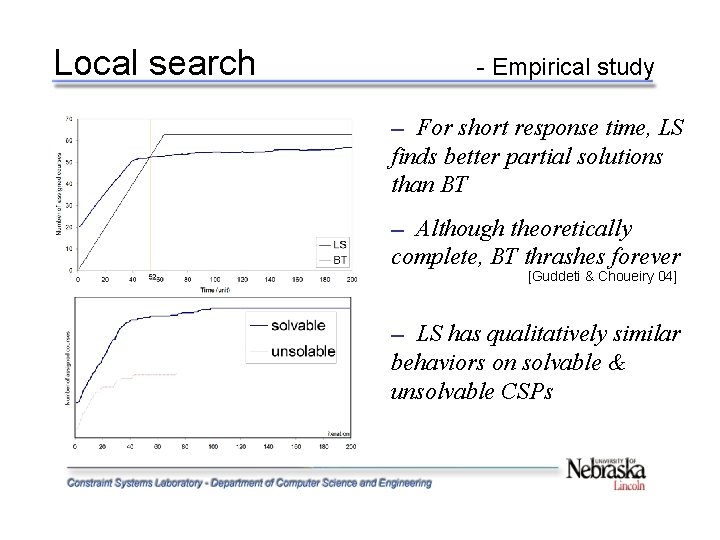

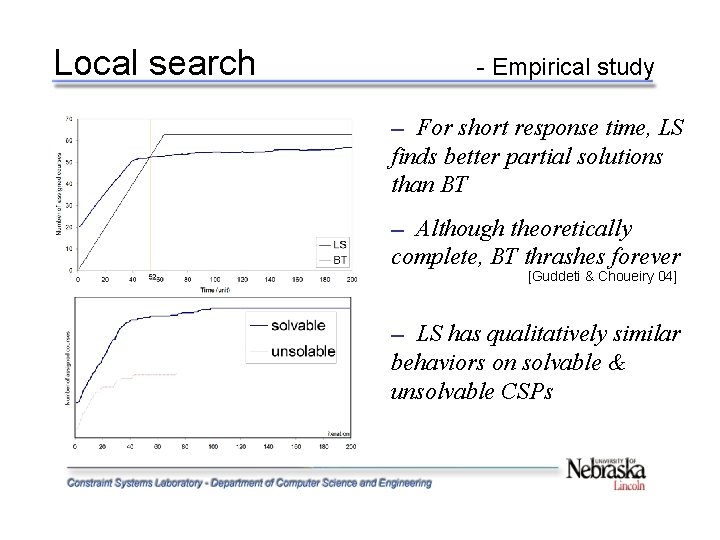

Local search - Empirical study
- For short response times, LS finds better partial solutions than BT
  - Although theoretically complete, BT thrashes forever [Guddeti & Choueiry 04]
- LS behaves in qualitatively similar ways on solvable & unsolvable CSPs

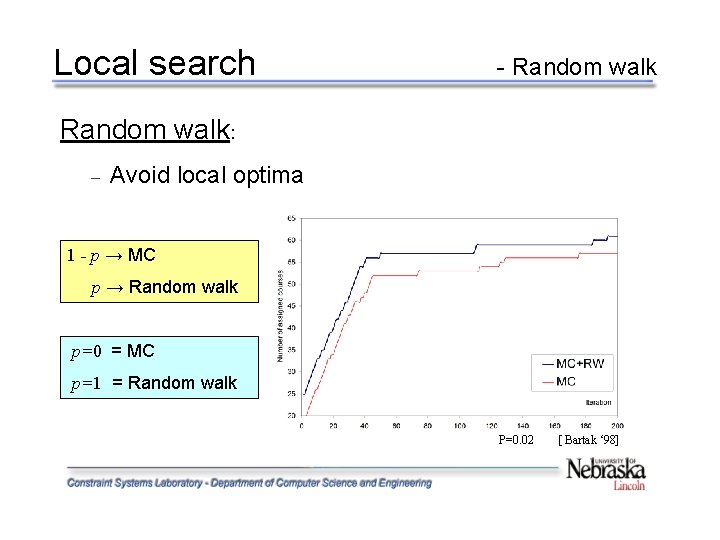

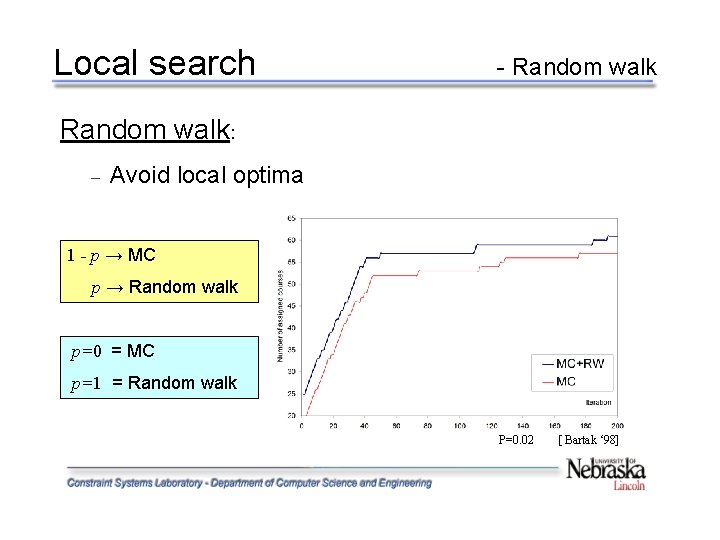

Local search - Random walk
- Purpose: avoid local optima
- With probability 1 - p, follow MC; with probability p, take a random-walk step
- p = 0: pure MC; p = 1: pure random walk
- p = 0.02 [Bartak ’98]
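A minimal sketch of this noise strategy, assuming each candidate value has already been scored by its number of conflicts. The function name and signature are hypothetical.

```python
import random

def choose_value(scores, p, rng=random):
    """Pick an index into `scores` (conflict counts, one per candidate value).

    With probability p take a random-walk step; otherwise take the
    min-conflict (MC) step, breaking ties at random.
    """
    if rng.random() < p:
        return rng.randrange(len(scores))            # random-walk step
    best = min(scores)
    return rng.choice([i for i, s in enumerate(scores) if s == best])  # MC step

# p = 0 reduces to pure MC; p = 1 reduces to a pure random walk.
print(choose_value([3, 0, 2], p=0.0))
print(choose_value([3, 0, 2], p=0.02))
```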

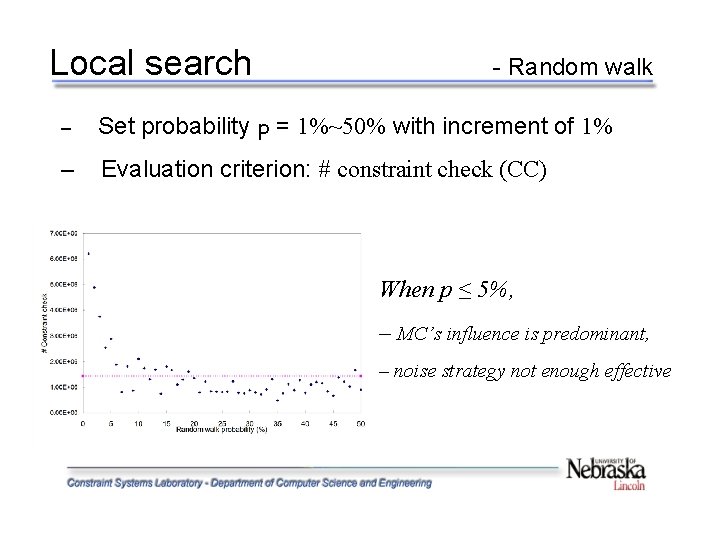

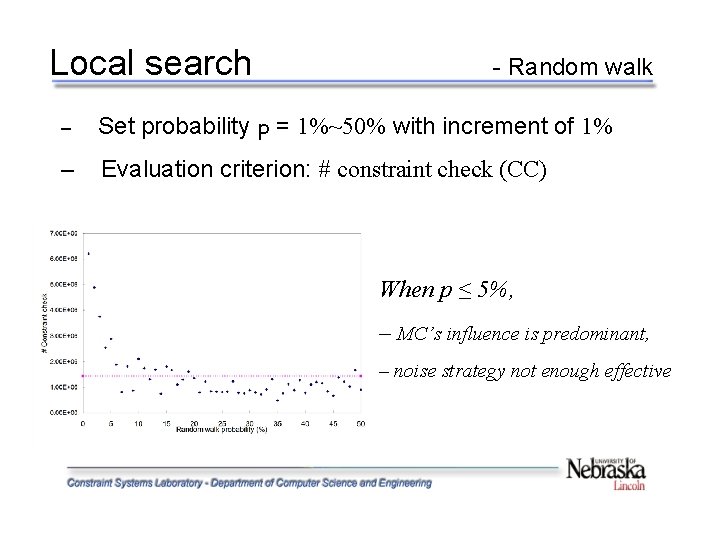

Local search - Random walk
- Probability p set from 1% to 50% in increments of 1%
- Evaluation criterion: number of constraint checks (CC)

When p ≤ 5%,
- MC’s influence is predominant
- the noise strategy is not effective enough

Local search - Random restart

Restart the search from a new, randomly selected state
- The number of restarts varies from 50 to 500 in increments of 50
- Evaluated by the percentage of unassigned courses

| Restarts | 50 | 100 | 150 | 200 | 250 | 300 | 350 | 400 | 450 | 500 | SD |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Solvable (%) | 17 | 11 | 13 | 15 | 14 | 13 | 12 | 15 | 11 | 13 | 1.89 |
| Unsolvable (%) | 23 | 26 | 30 | 21 | 20 | 24 | 23 | 26 | 28 | 29 | 3.36 |

SD: standard deviation
- The number of restarts does not significantly affect performance
- On average, a value of 300 to 400 is good enough
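The reported SD values can be reproduced from the percentages in the table. The sketch below assumes that SD denotes the sample standard deviation (n - 1 in the denominator), which matches the reported figures to within the last printed digit.

```python
import statistics

restarts   = [50, 100, 150, 200, 250, 300, 350, 400, 450, 500]
solvable   = [17, 11, 13, 15, 14, 13, 12, 15, 11, 13]   # % unassigned courses
unsolvable = [23, 26, 30, 21, 20, 24, 23, 26, 28, 29]   # % unassigned courses

# Sample standard deviation of each row of the table.
sd_solvable = statistics.stdev(solvable)
sd_unsolvable = statistics.stdev(unsolvable)

print(f"solvable:   mean {statistics.mean(solvable):.1f}, SD {sd_solvable:.3f}")
print(f"unsolvable: mean {statistics.mean(unsolvable):.1f}, SD {sd_unsolvable:.3f}")
```

The spread is small relative to the means (13.4% and 25%), which is consistent with the observation that the number of restarts does not significantly affect performance.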

Local search - Conclusions
- Binary vs. non-binary
  - Constraint propagation to handle global constraints
- Local search (LS) vs. systematic search (BT)
  - LS quickly finds a good partial solution
  - LS: monotonic improvement, quick stabilization, one-time repair
- Solvable vs. unsolvable
  - Similar behavior in both cases
  - Good performance, but local optima
- Handling local optima
  - Noise strategies: random walk and random restart
  - The setting of parameters is likely problem-dependent

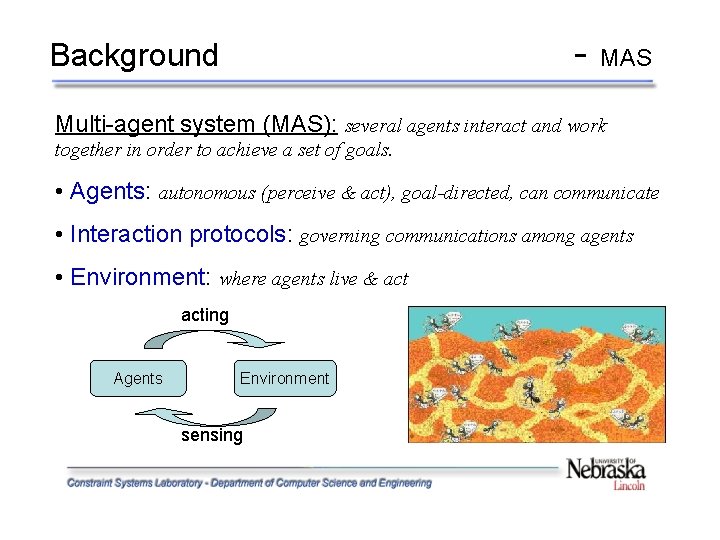

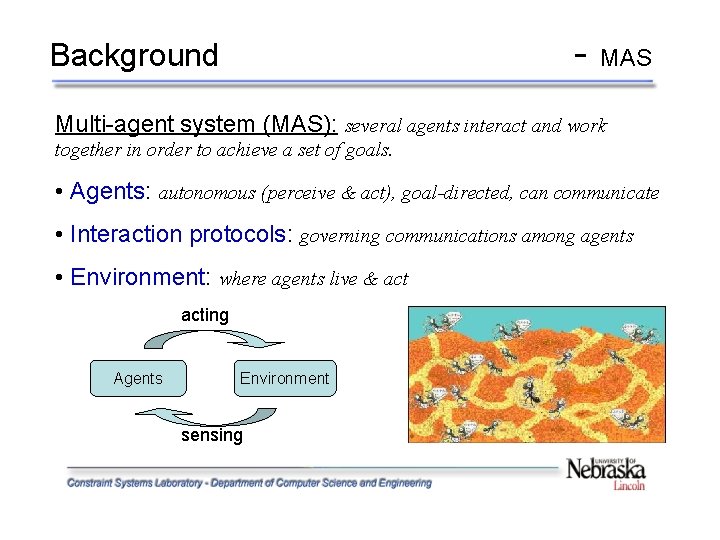

Background - MAS

Multi-agent system (MAS): several agents interact and work together to achieve a set of goals.
- Agents: autonomous (perceive & act), goal-directed, can communicate
- Interaction protocols: govern communications among agents
- Environment: where agents live & act

![ERA - ERA in general](https://slidetodoc.com/presentation_image/e121615b9345f6b09ce150ef630e2c27/image-18.jpg)

ERA - ERA in general

ERA [Liu & al. AIJ 2002]
- Environment, Reactive rules, and Agents
- A multi-agent formulation for solving a general CSP
- Transitions between states occur when agents move

ERA can be viewed as an extension of local search, but the two differ:
- Local search
  - only one evaluation value (state cost) for the whole state
  - one global goal, central control
- ERA
  - each agent has its own evaluation value (position value)
  - each agent has its own local goal, no central control

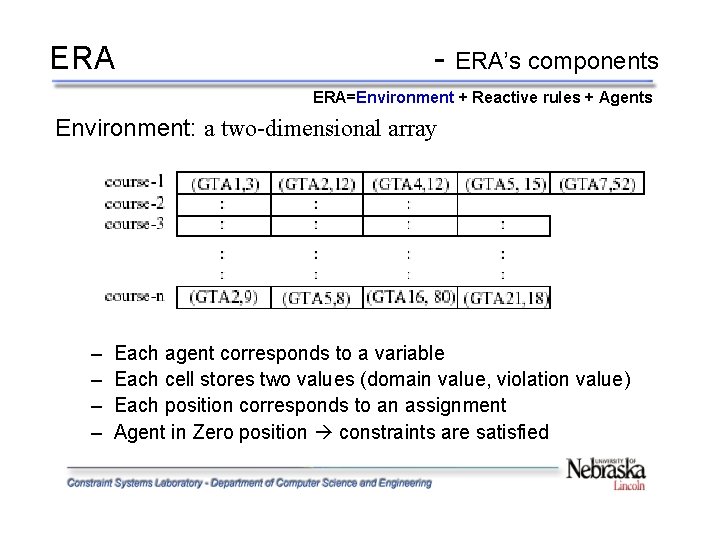

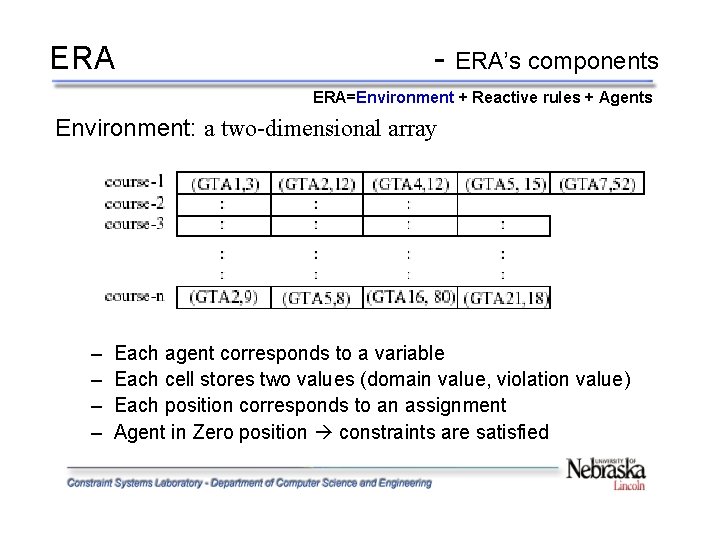

ERA - ERA’s components

ERA = Environment + Reactive rules + Agents

Environment: a two-dimensional array
- Each agent corresponds to a variable
- Each position corresponds to an assignment
- Each cell stores two values (domain value, violation value)
- An agent in a zero position has all its constraints satisfied

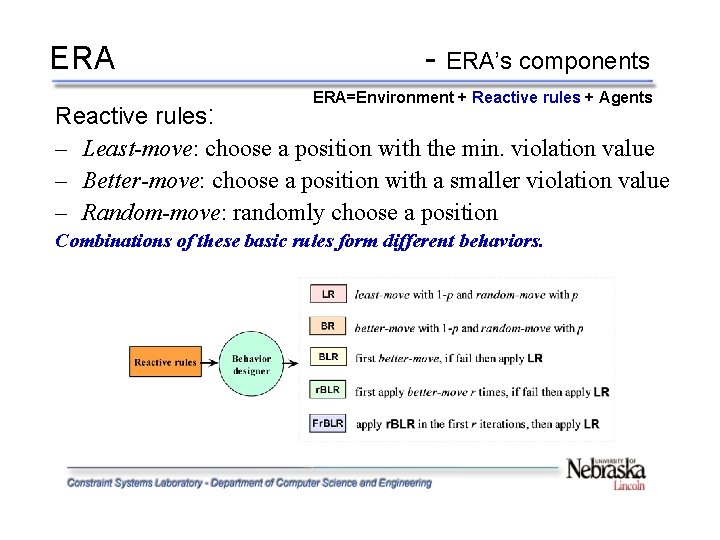

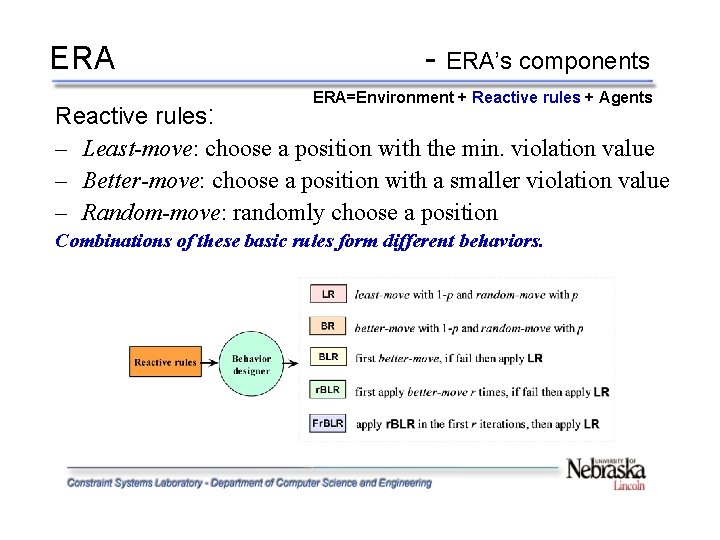

ERA - ERA’s components

ERA = Environment + Reactive rules + Agents

Reactive rules:
- Least-move: choose a position with the minimum violation value
- Better-move: choose a position with a smaller violation value
- Random-move: randomly choose a position

Combinations of these basic rules form different behaviors.
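The three reactive rules can be sketched as functions over one agent's row of violation values. All names and signatures here are hypothetical. The better-move sketch assumes the candidate position is sampled at random and accepted only if it improves on the current one, and `mixed_behavior` is just one illustrative combination of rules, not one of the named behaviors evaluated in this work.

```python
import random

def least_move(violations):
    """Index of a position with the minimum violation value (ties broken at random)."""
    best = min(violations)
    return random.choice([i for i, v in enumerate(violations) if v == best])

def better_move(violations, current):
    """Sample one position; move there only if its violation value is smaller."""
    candidate = random.randrange(len(violations))
    return candidate if violations[candidate] < violations[current] else current

def random_move(violations):
    """Index of a position chosen uniformly at random."""
    return random.randrange(len(violations))

def mixed_behavior(violations, p_random=0.1):
    """Illustrative combination: random-move with probability p_random, else least-move."""
    if random.random() < p_random:
        return random_move(violations)
    return least_move(violations)

row = [3, 0, 2]                 # violation values of the positions in one agent's row
print(least_move(row), better_move(row, current=0), random_move(row))
```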

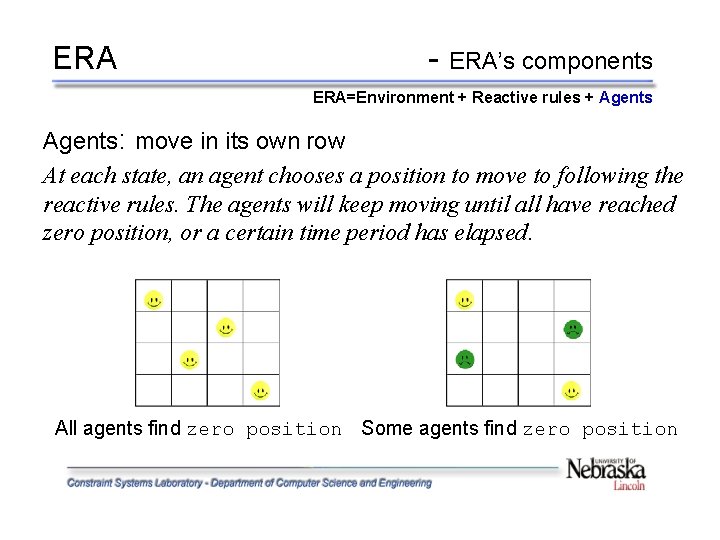

ERA - ERA’s components

ERA = Environment + Reactive rules + Agents

Agents: each agent moves within its own row
- At each state, an agent chooses a position to move to by following the reactive rules.
- The agents keep moving until all of them have reached a zero position or a certain time period has elapsed.
- Possible outcomes: all agents find a zero position (a solution), or only some agents find a zero position (a partial solution)

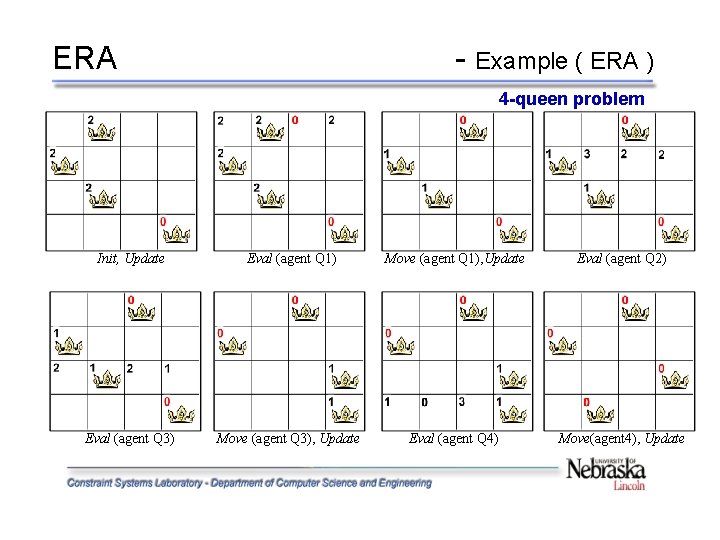

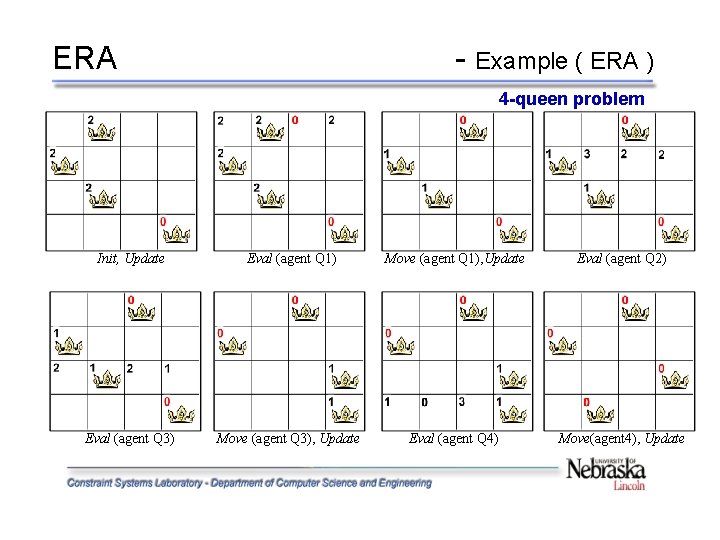

ERA - Example (ERA): 4-queen problem
1. Init, Update
2. Eval (agent Q1); Move (agent Q1), Update
3. Eval (agent Q2)
4. Eval (agent Q3); Move (agent Q3), Update
5. Eval (agent Q4); Move (agent Q4), Update
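The Init / Eval / Move / Update cycle of the example can be sketched for n queens as follows, with one agent per row and one position per column. This is an illustrative sketch, not the implementation evaluated in this work: the function names, the sweep limit, and the behavior (least-move with an occasional random-move) are assumptions.

```python
import random

def violation_values(cols, row):
    """Violation value of every position in `row`: attacks from the other queens."""
    n = len(cols)
    return [
        sum(
            1
            for r, c in enumerate(cols)
            if r != row and (c == col or abs(c - col) == abs(r - row))
        )
        for col in range(n)
    ]

def era_queens(n, max_sweeps=5000, p_random=0.1, seed=0):
    """Return one column per row once every agent is in a zero position, else None."""
    rng = random.Random(seed)
    cols = [rng.randrange(n) for _ in range(n)]       # Init: random placement
    for _ in range(max_sweeps):
        for row in range(n):                          # each agent acts in turn
            values = violation_values(cols, row)      # Eval
            if values[cols[row]] == 0:
                continue                              # already in a zero position
            if rng.random() < p_random:
                cols[row] = rng.randrange(n)          # random-move
            else:
                best = min(values)                    # least-move
                cols[row] = rng.choice(
                    [c for c, v in enumerate(values) if v == best]
                )
            # Update: violation values are recomputed at the next Eval
        if all(violation_values(cols, r)[cols[r]] == 0 for r in range(n)):
            return cols                               # all agents in zero positions
    return None                                       # time limit reached

print(era_queens(4))
```

Unlike the min-conflict hill-climber, there is no global cost and no central choice of which variable to repair: each agent only looks at the violation values in its own row.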

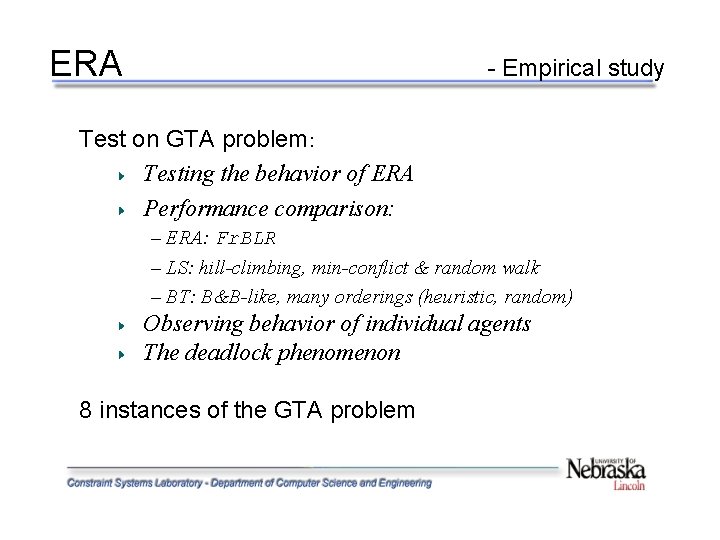

ERA - Empirical study

Tests on the GTA problem (8 instances):
- Testing the behavior of ERA
- Performance comparison
  - ERA: FrBLR
  - LS: hill-climbing, min-conflict & random walk
  - BT: B&B-like, many orderings (heuristic, random)
- Observing the behavior of individual agents
- The deadlock phenomenon

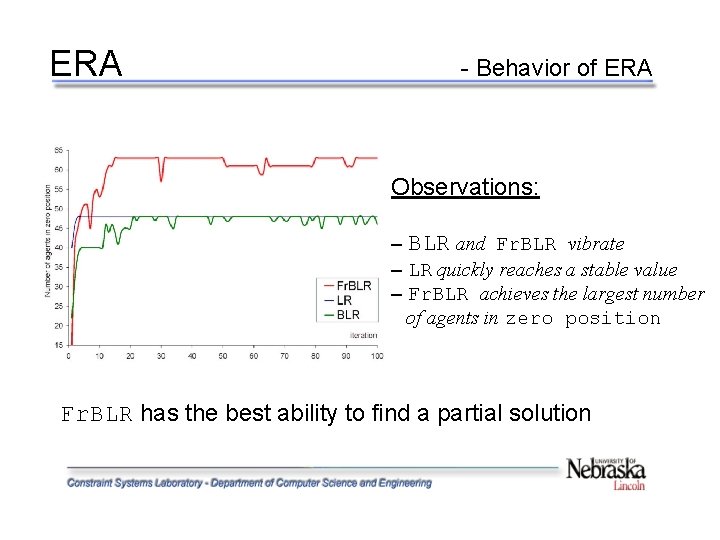

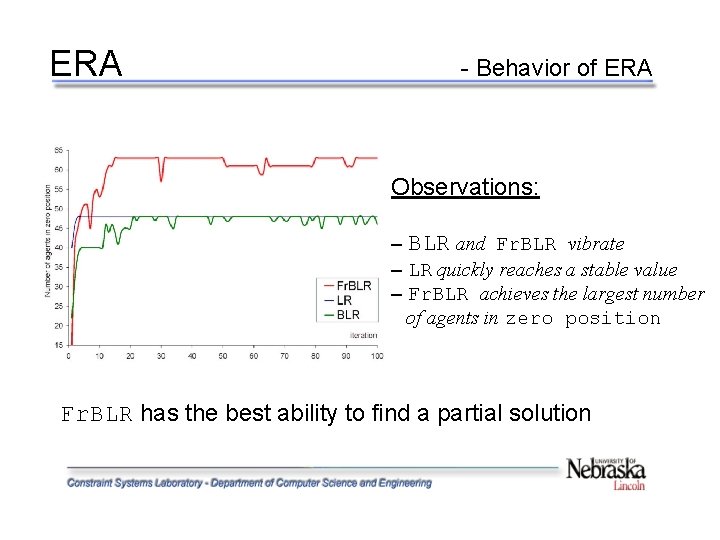

ERA - Behavior of ERA

Observations:
- BLR and FrBLR oscillate
- LR quickly reaches a stable value
- FrBLR achieves the largest number of agents in zero position

FrBLR has the best ability to find a partial solution

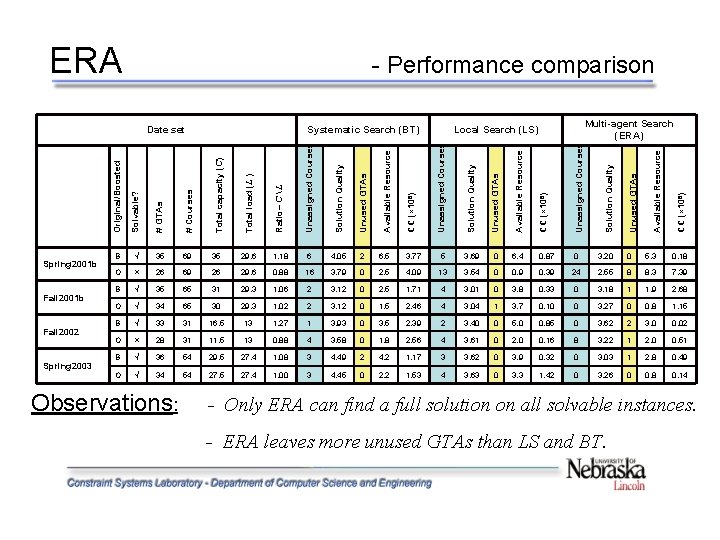

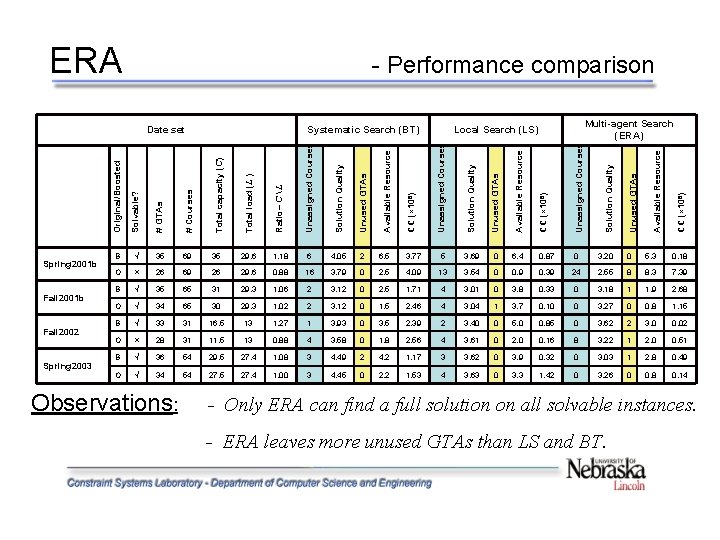

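ERA - Performance comparison

Problem instances (O = original, B = boosted)

| Data set | Original/Boosted | Solvable? | # GTAs | # Courses | Total capacity (C) | Total load (L) | Ratio = C/L |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Spring 2001b | B | √ | 35 | 69 | 35 | 29.6 | 1.18 |
| Spring 2001b | O | × | 26 | 69 | 26 | 29.6 | 0.88 |
| Fall 2001b | B | √ | 35 | 65 | 31 | 29.3 | 1.06 |
| Fall 2001b | O | √ | 34 | 65 | 30 | 29.3 | 1.02 |
| Fall 2002 | B | √ | 33 | 31 | 16.5 | 13 | 1.27 |
| Fall 2002 | O | × | 28 | 31 | 11.5 | 13 | 0.88 |
| Spring 2003 | B | √ | 36 | 54 | 29.5 | 27.4 | 1.08 |
| Spring 2003 | O | √ | 34 | 54 | 27.5 | 27.4 | 1.00 |

Systematic Search (BT)

| Data set | Original/Boosted | Unassigned Courses | Solution Quality | Unused GTAs | Available Resource | CC (×10^8) |
| --- | --- | --- | --- | --- | --- | --- |
| Spring 2001b | B | 6 | 4.05 | 2 | 6.5 | 3.77 |
| Spring 2001b | O | 16 | 3.79 | 0 | 2.5 | 4.09 |
| Fall 2001b | B | 2 | 3.12 | 0 | 2.5 | 1.71 |
| Fall 2001b | O | 2 | 3.12 | 0 | 1.5 | 2.46 |
| Fall 2002 | B | 1 | 3.93 | 0 | 3.5 | 2.39 |
| Fall 2002 | O | 4 | 3.58 | 0 | 1.8 | 2.56 |
| Spring 2003 | B | 3 | 4.49 | 2 | 4.2 | 1.17 |
| Spring 2003 | O | 3 | 4.45 | 0 | 2.2 | 1.53 |

Local Search (LS)

| Data set | Original/Boosted | Unassigned Courses | Solution Quality | Unused GTAs | Available Resource | CC (×10^8) |
| --- | --- | --- | --- | --- | --- | --- |
| Spring 2001b | B | 5 | 3.69 | 0 | 6.4 | 0.87 |
| Spring 2001b | O | 13 | 3.54 | 0 | 0.9 | 0.39 |
| Fall 2001b | B | 4 | 3.01 | 0 | 3.8 | 0.33 |
| Fall 2001b | O | 4 | 3.04 | 1 | 3.7 | 0.10 |
| Fall 2002 | B | 2 | 3.40 | 0 | 5.0 | 0.85 |
| Fall 2002 | O | 4 | 3.61 | 0 | 2.0 | 0.16 |
| Spring 2003 | B | 3 | 3.62 | 0 | 3.9 | 0.32 |
| Spring 2003 | O | 4 | 3.63 | 0 | 3.3 | 1.42 |

Multi-agent Search (ERA)

| Data set | Original/Boosted | Unassigned Courses | Solution Quality | Unused GTAs | Available Resource | CC (×10^8) |
| --- | --- | --- | --- | --- | --- | --- |
| Spring 2001b | B | 0 | 3.20 | 0 | 5.3 | 0.18 |
| Spring 2001b | O | 24 | 2.55 | 8 | 8.3 | 7.39 |
| Fall 2001b | B | 0 | 3.18 | 1 | 1.9 | 2.68 |
| Fall 2001b | O | 0 | 3.27 | 0 | 0.8 | 1.15 |
| Fall 2002 | B | 0 | 3.62 | 2 | 3.0 | 0.02 |
| Fall 2002 | O | 8 | 3.22 | 1 | 2.0 | 0.51 |
| Spring 2003 | B | 0 | 3.03 | 1 | 2.8 | 0.49 |
| Spring 2003 | O | 0 | 3.26 | 0 | 0.8 | 0.14 |

Observations:
- Only ERA can find a full solution on all solvable instances.
- ERA leaves more unused GTAs than LS and BT.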

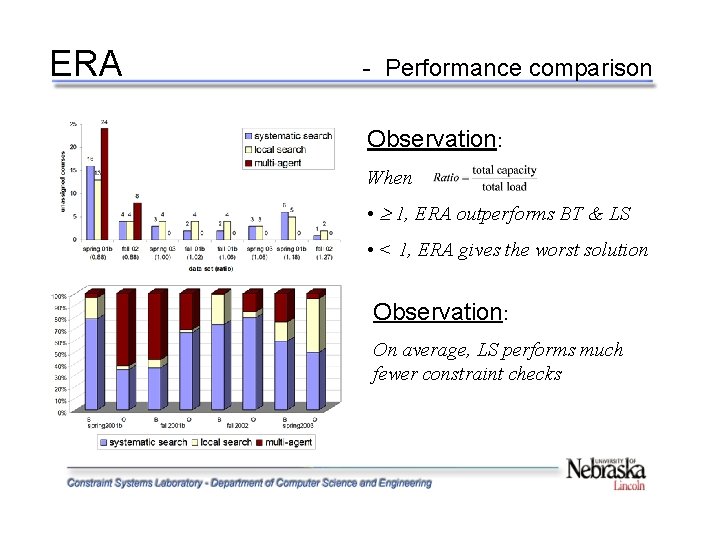

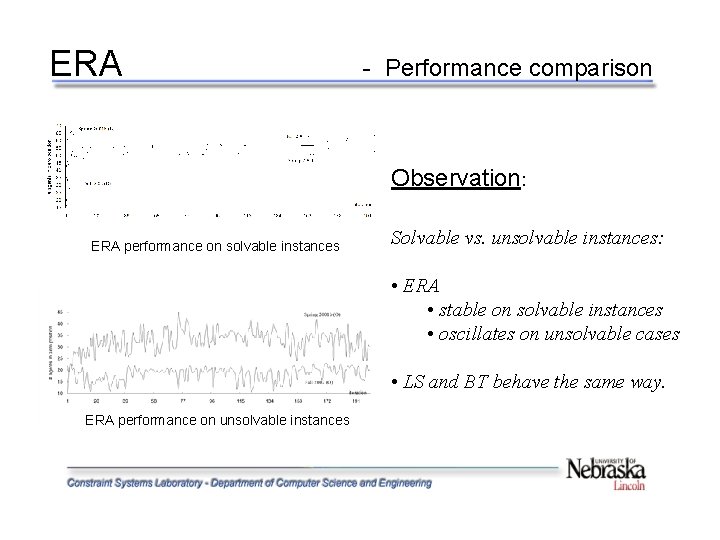

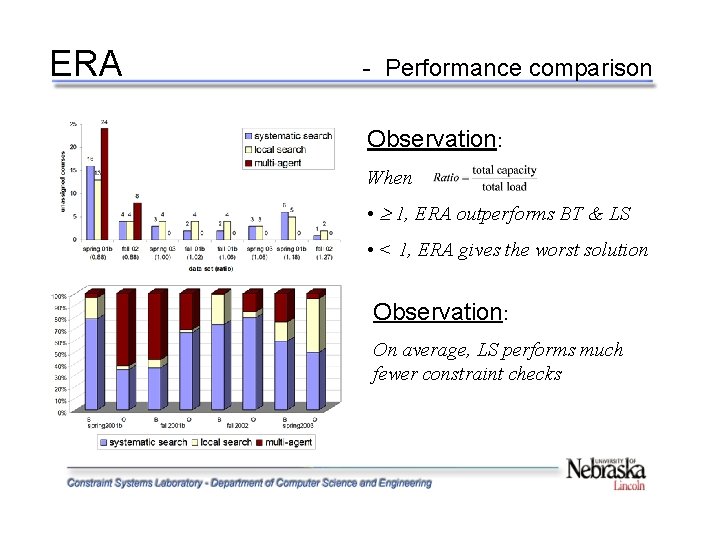

ERA - Performance comparison

Observation: when the ratio of total capacity to total load is
- ≥ 1, ERA outperforms BT & LS
- < 1, ERA gives the worst solution

Observation: on average, LS performs far fewer constraint checks
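The ratio of total capacity (C) to total load (L) can be recomputed from the eight instances in the comparison table. The sketch below lists the instances in table order; the tuple layout is an assumption made for illustration, and the link between the ratio and solvability is an observation on these eight instances only, not a general rule.

```python
# (total capacity C, total load L, solvable?) for the 8 GTA instances, in table order
instances = [
    (35.0, 29.6, True),
    (26.0, 29.6, False),
    (31.0, 29.3, True),
    (30.0, 29.3, True),
    (16.5, 13.0, True),
    (11.5, 13.0, False),
    (29.5, 27.4, True),
    (27.5, 27.4, True),
]

# Ratio = C / L, rounded to two decimals as in the table.
ratios = [round(capacity / load, 2) for capacity, load, _ in instances]
print(ratios)

# On these instances, the ones with C / L < 1 are exactly the unsolvable ones.
for capacity, load, solvable in instances:
    print(f"C/L = {capacity / load:.2f}  solvable: {solvable}")
```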

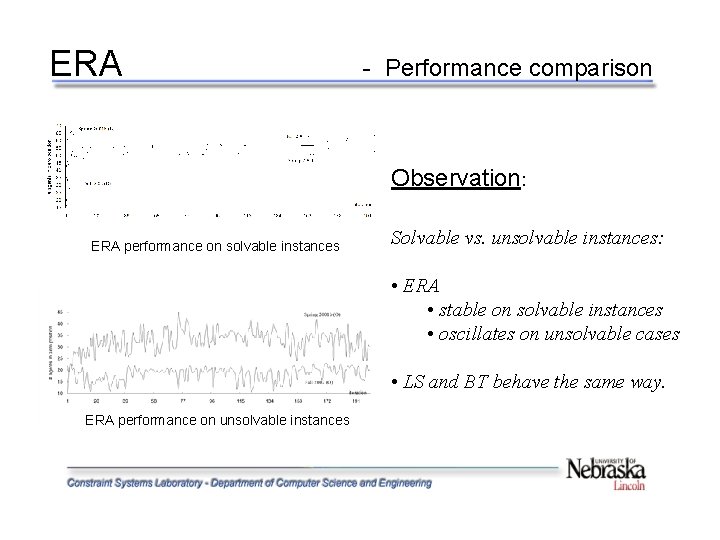

ERA - Performance comparison

Observation, solvable vs. unsolvable instances:
- ERA
  - is stable on solvable instances
  - oscillates on unsolvable instances
- LS and BT behave the same way.

Figure panels: ERA performance on solvable instances; ERA performance on unsolvable instances

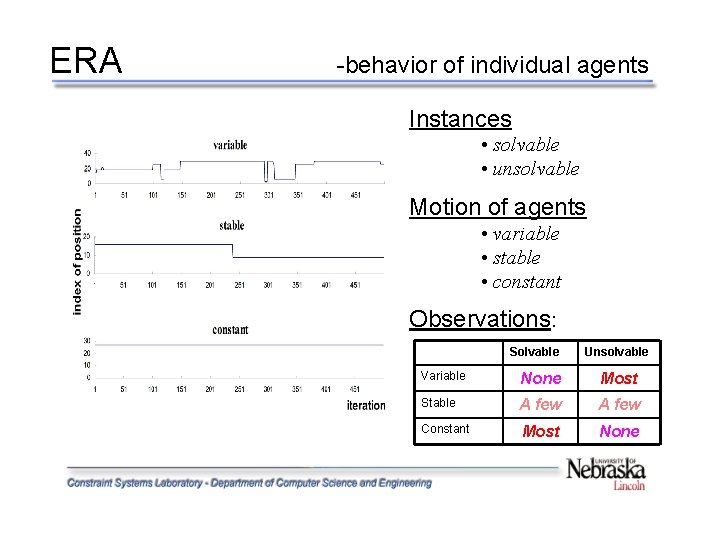

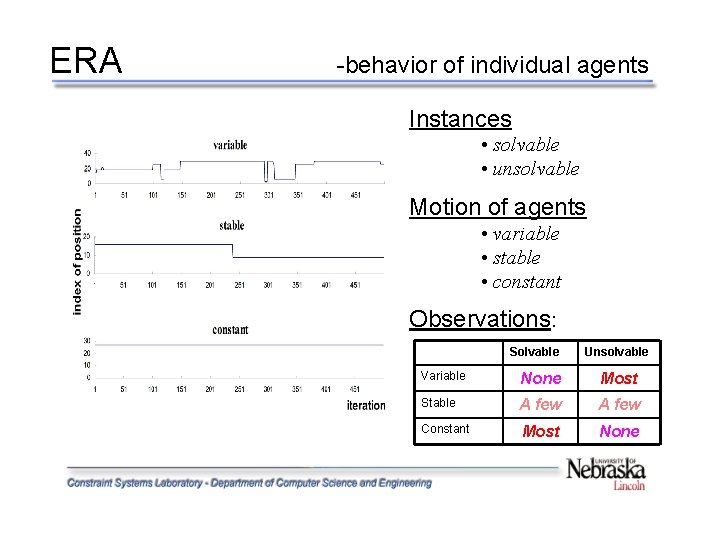

ERA - Behavior of individual agents
- Instances: solvable, unsolvable
- Motion of agents: variable, stable, constant

Observations:
- Variable: none on solvable instances, most on unsolvable instances
- Stable: a few
- Constant: most on solvable instances, none on unsolvable instances

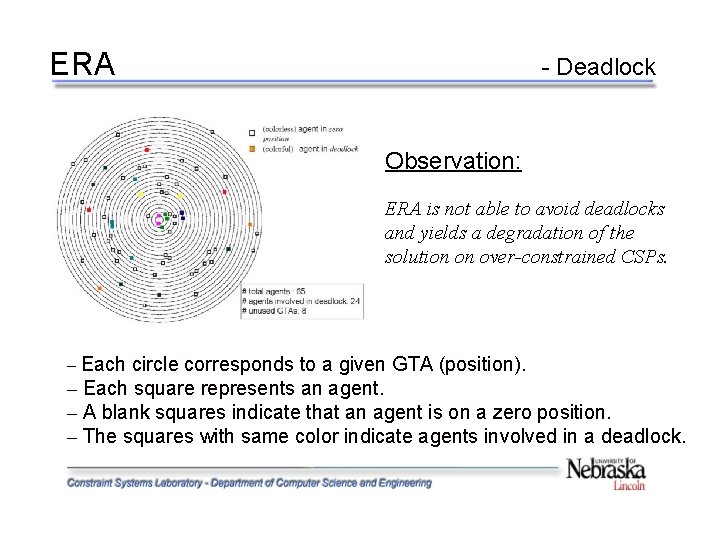

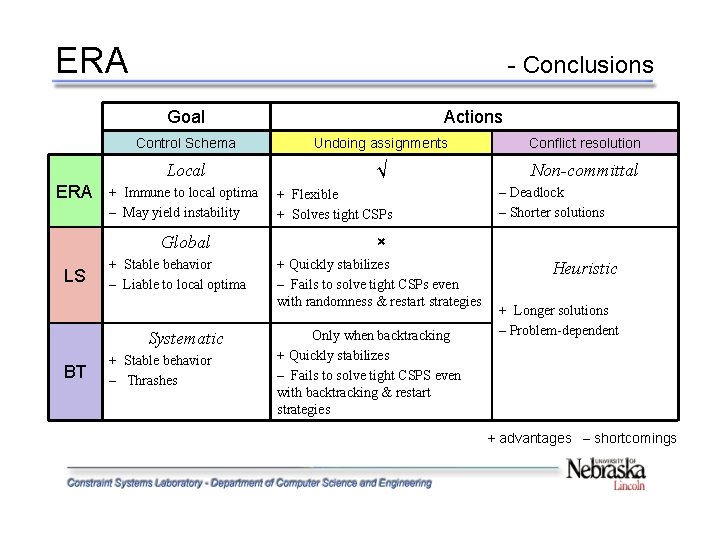

ERA - Deadlock

Observation: ERA is not able to avoid deadlocks, which degrades the solution on over-constrained CSPs.
- Each circle corresponds to a given GTA (position).
- Each square represents an agent.
- A blank square indicates that an agent is in a zero position.
- Squares of the same color indicate agents involved in a deadlock.

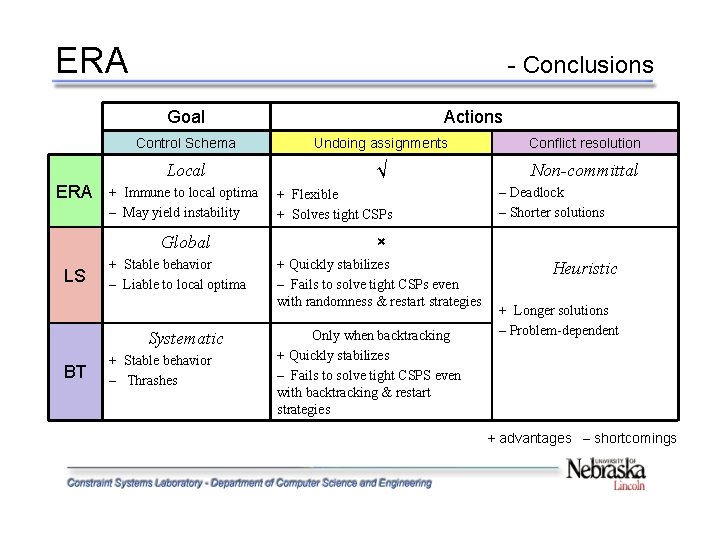

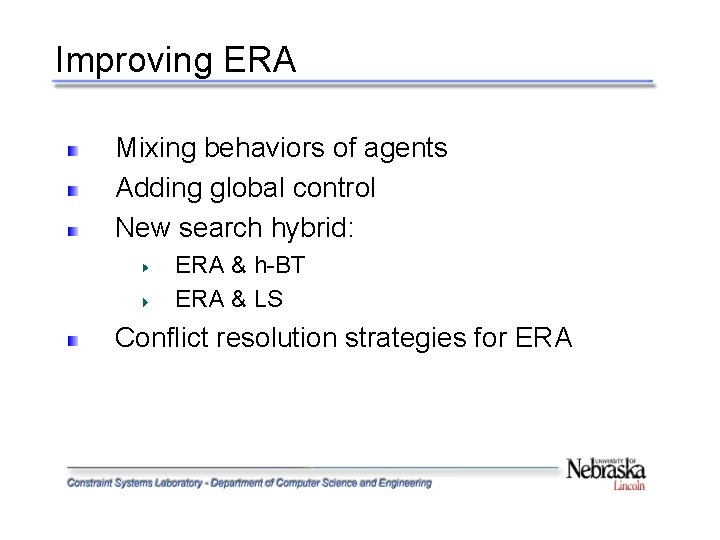

ERA - Conclusions

(+ advantages, – shortcomings)

| | ERA | LS | BT |
| --- | --- | --- | --- |
| Control schema | Local: + immune to local optima; – may yield instability | Global: + stable behavior; – liable to local optima | Systematic: + stable behavior; – thrashes |
| Undoing assignments | √: + flexible; + solves tight CSPs | ×: + quickly stabilizes; – fails to solve tight CSPs even with randomness & restart strategies | Only when backtracking: + quickly stabilizes; – fails to solve tight CSPs even with backtracking & restart strategies |
| Conflict resolution | Non-committal: – deadlock; – shorter solutions | Heuristic: + longer solutions; – problem-dependent | Heuristic: + longer solutions; – problem-dependent |

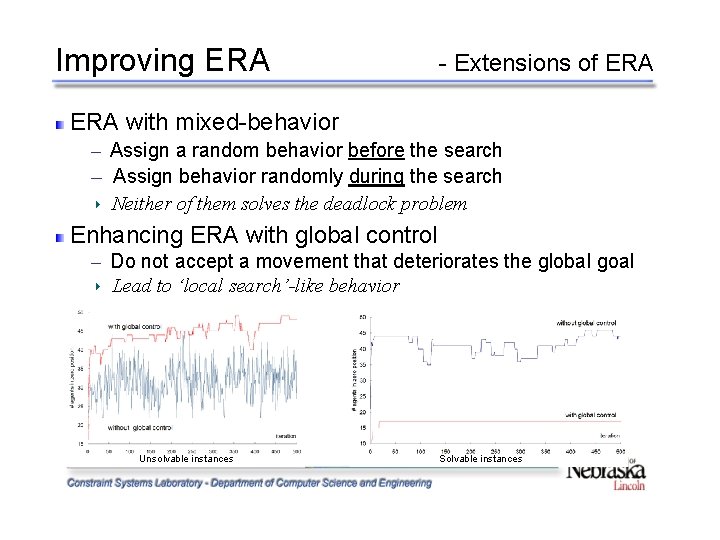

Improving ERA
- Mixing behaviors of agents
- Adding global control
- New search hybrids: ERA & h-BT, ERA & LS
- Conflict resolution strategies for ERA

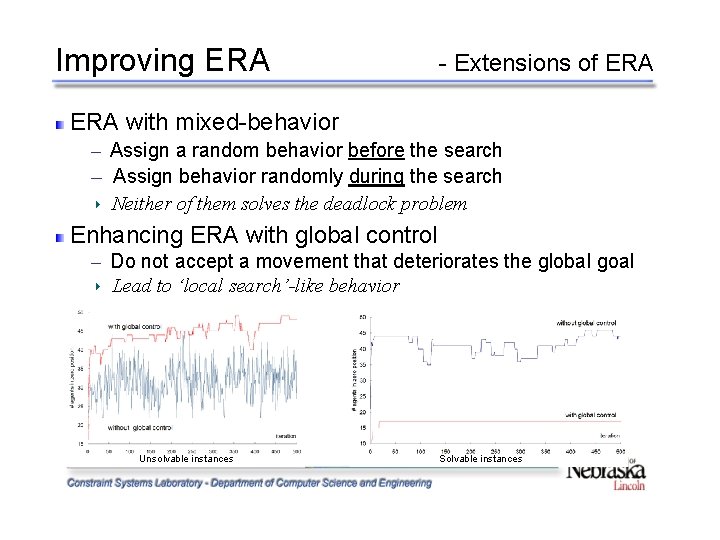

Improving ERA - Extensions of ERA
- ERA with mixed behaviors
  - Assign a random behavior before the search
  - Assign behaviors randomly during the search
  - Neither of them solves the deadlock problem
- Enhancing ERA with global control
  - Do not accept a move that deteriorates the global goal
  - Leads to ‘local search’-like behavior

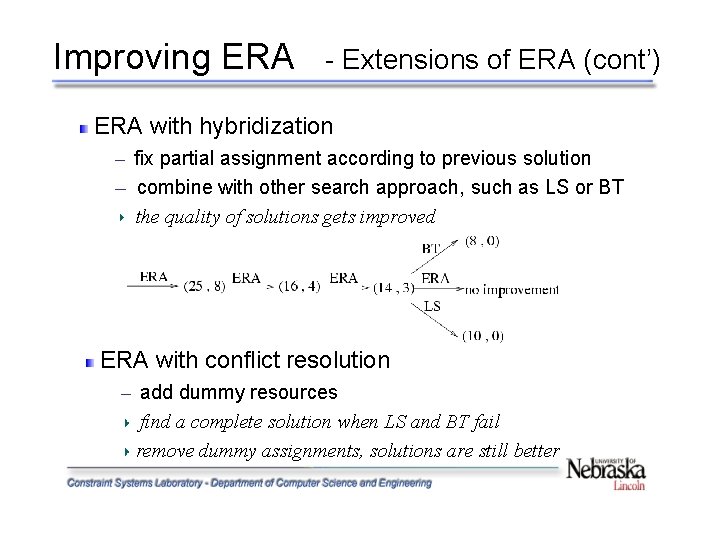

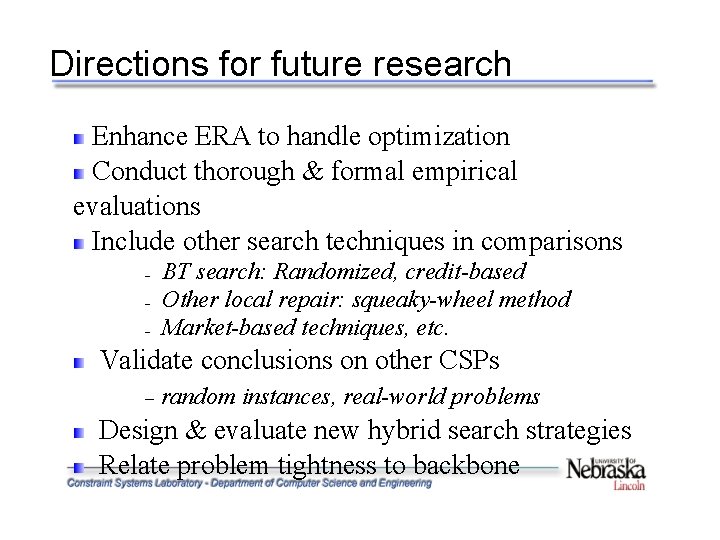

Improving ERA - Extensions of ERA (cont’d)
- ERA with hybridization
  - Fix a partial assignment according to a previous solution
  - Combine with another search approach, such as LS or BT
  - The quality of solutions improves
- ERA with conflict resolution
  - Add dummy resources
  - Finds a complete solution when LS and BT fail
  - After the dummy assignments are removed, solutions are still better

Directions for future research
- Enhance ERA to handle optimization
- Conduct thorough & formal empirical evaluations
- Include other search techniques in comparisons
  - BT search: randomized, credit-based
  - Other local repair: squeaky-wheel method
  - Market-based techniques, etc.
- Validate conclusions on other CSPs: random instances, real-world problems
- Design & evaluate new hybrid search strategies
- Relate problem tightness to backbone

Acknowledgements
- Dr. F. Fred Choobineh
- Dr. Berthe Y. Choueiry (advisor)
- Dr. Hong Jiang
- Dr. Peter Revesz
- Members of the Constraint Systems Laboratory
- My friends in Lincoln
- My parents and my wife

Questions