
Item Analysis Summary

Validity and Reliability

Validity is about
1. Assessing the “right” SLO at the “right” level
2. Choosing the “right” assessment task
3. Asking the “right” question
4. Proper sampling from learning outcomes and course content

Reliability is about
1. Asking the “right” question “concisely”
2. Grading the task “consistently”
3. Enough sampling

(Reliability presumes validity.)

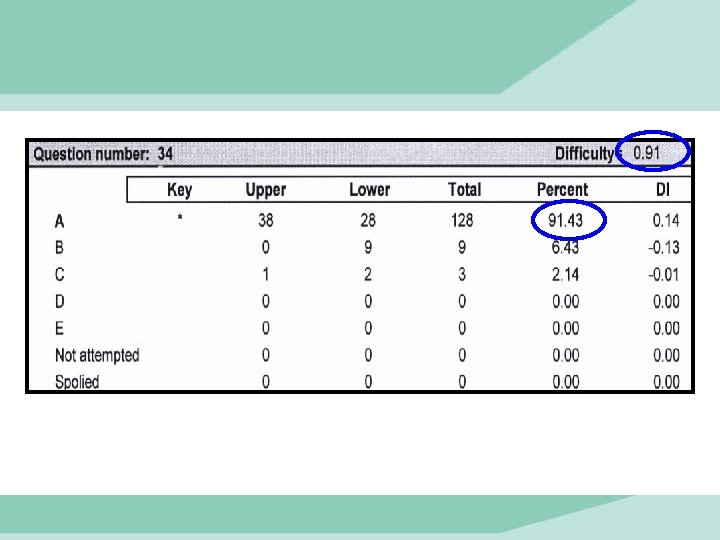

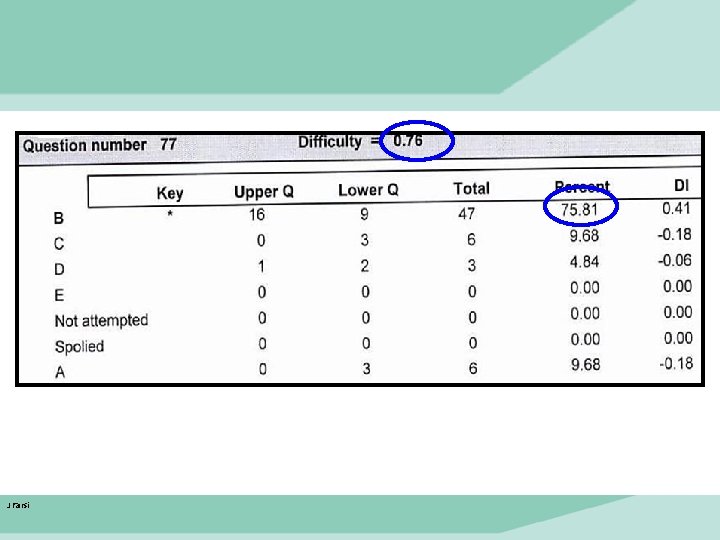

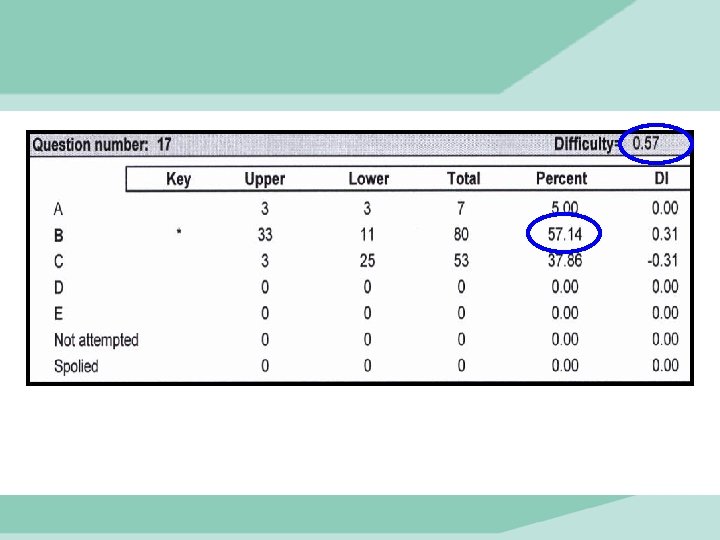

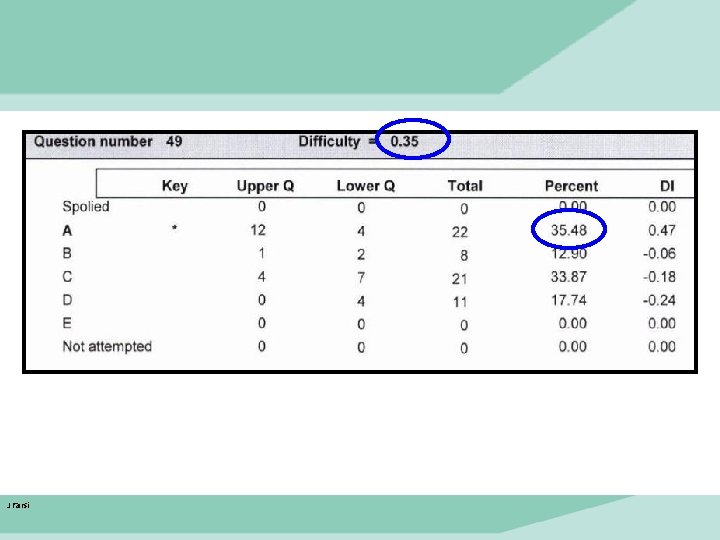

Item Difficulty

• Item difficulty measures the proportion of students who answered the item correctly (represented with a lowercase p).
• Most items should be of midrange difficulty, with only a few that are difficult or easy.

J Farsi 2016

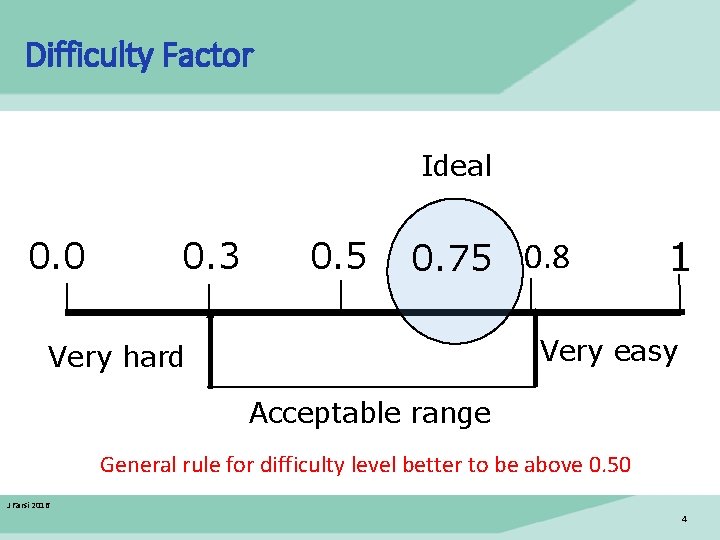

Difficulty Factor

• Scale: 0.0 (very hard) to 1 (very easy)
• Acceptable range: 0.3 to 0.8
• Ideal: 0.75
• General rule: a difficulty level above 0.50 is better
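Item difficulty p is the proportion of students who answered the item correctly. It can be computed with a few lines of Python. This is a minimal sketch: the function name `item_difficulty` and the sample responses are illustrative assumptions, not part of the slides.

```python
def item_difficulty(responses, key):
    """Return p, the proportion of students who chose the keyed option.

    responses: the option each student selected (hypothetical sample data)
    key: the correct (keyed) option
    """
    if not responses:
        raise ValueError("at least one response is required")
    correct = sum(1 for r in responses if r == key)
    return correct / len(responses)


# Ten students answer one item whose key is "A"; six of them choose "A".
responses = ["A", "B", "A", "A", "C", "A", "D", "A", "A", "B"]
p = item_difficulty(responses, key="A")
print(f"p = {p:.2f}")  # p = 0.60
```

A value of 0.60 sits above 0.50, in line with the general rule stated for difficulty.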


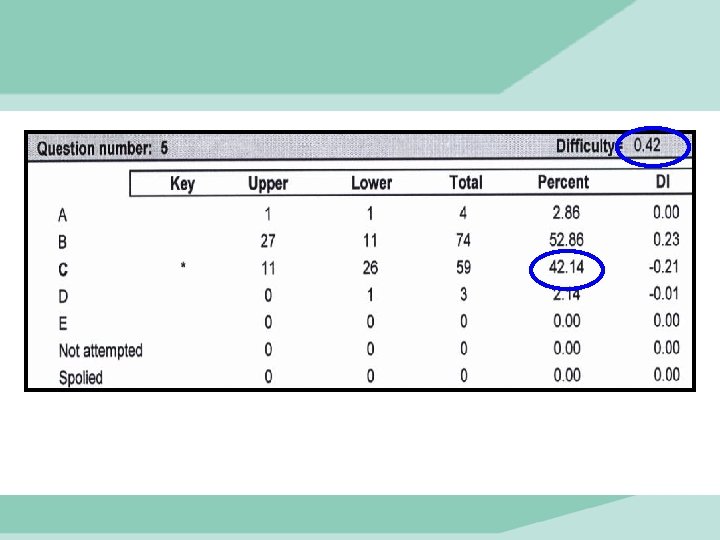

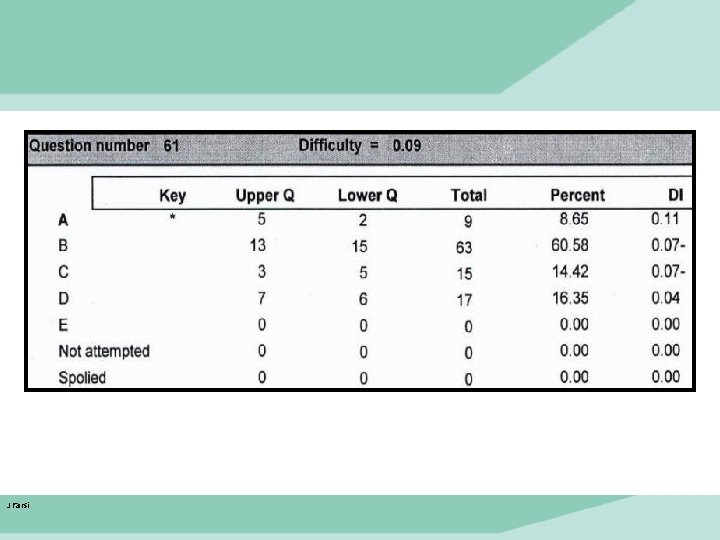

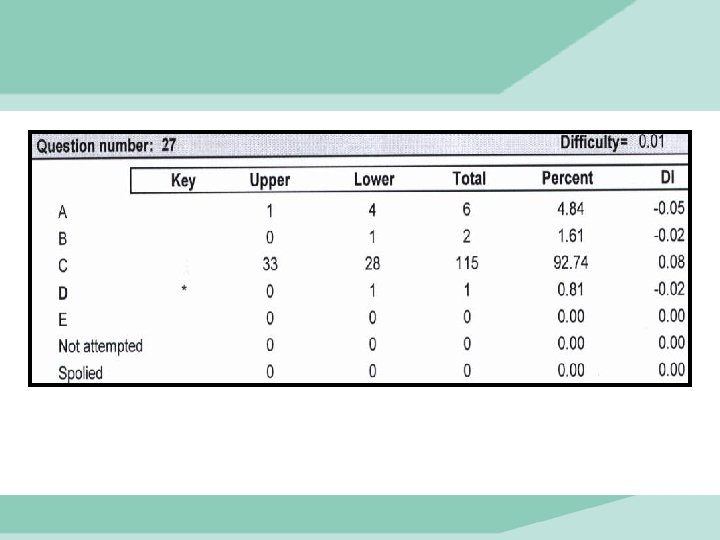


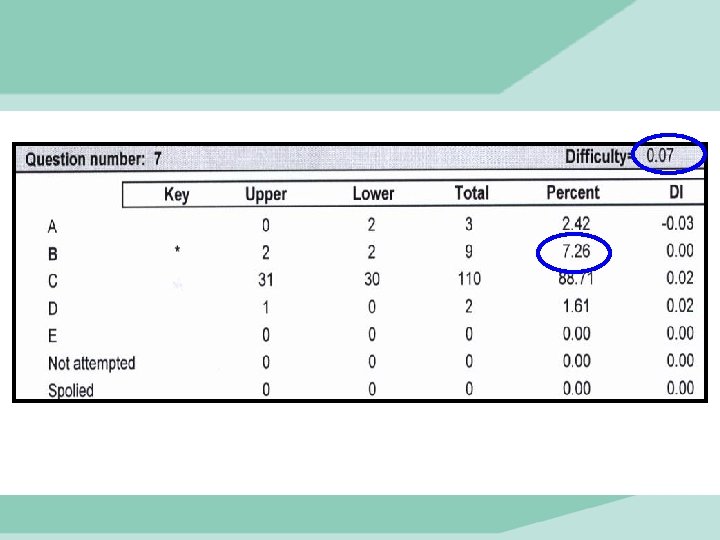

Difficult Item

• Ambiguity in the question
• Difficult material
• Missing skill
• Not enough practice
• Bad distractors
• Mis-keyed item

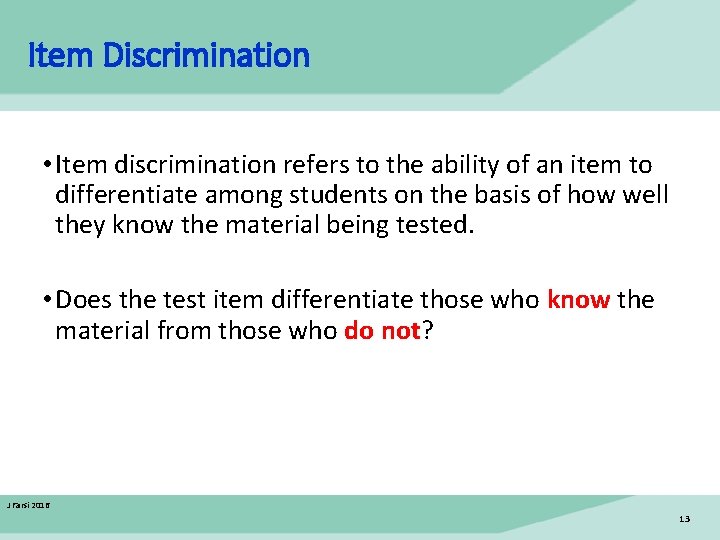

Item Discrimination

• Item discrimination refers to the ability of an item to differentiate among students on the basis of how well they know the material being tested.
• Does the test item differentiate those who know the material from those who do not?
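The slides do not give a formula for item discrimination. One common way to compute it, assumed here, is the upper-lower group method: rank students by total test score, take the top and bottom groups of equal size, and divide the difference in correct answers by the group size. The function name, the 27% group share, and the sample data below are all illustrative assumptions.

```python
def discrimination_index(scores, item_correct, fraction=0.27):
    """Upper-lower discrimination index for one item (assumed method).

    scores: total test score of each student
    item_correct: True/False per student for the item under review
    fraction: share of students in each group; 0.27 is a common
              convention, assumed here because the slides name none
    """
    if len(scores) != len(item_correct):
        raise ValueError("scores and item_correct must have equal length")
    if not 0 < fraction <= 0.5:
        raise ValueError("fraction must be in (0, 0.5]")
    n = max(1, round(len(scores) * fraction))
    # Student indices ordered from highest to lowest total score.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    upper, lower = order[:n], order[-n:]
    upper_correct = sum(item_correct[i] for i in upper)
    lower_correct = sum(item_correct[i] for i in lower)
    return (upper_correct - lower_correct) / n


scores = [95, 90, 85, 80, 70, 65, 60, 50, 45, 30]
item_correct = [True, True, True, False, True, False, True, False, True, False]
di = discrimination_index(scores, item_correct)
print(f"DI = {di:.2f}")  # DI = 0.67
```

With ten students each group holds three; all three top scorers and one of the three bottom scorers answered correctly, so the index is (3 - 1) / 3.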

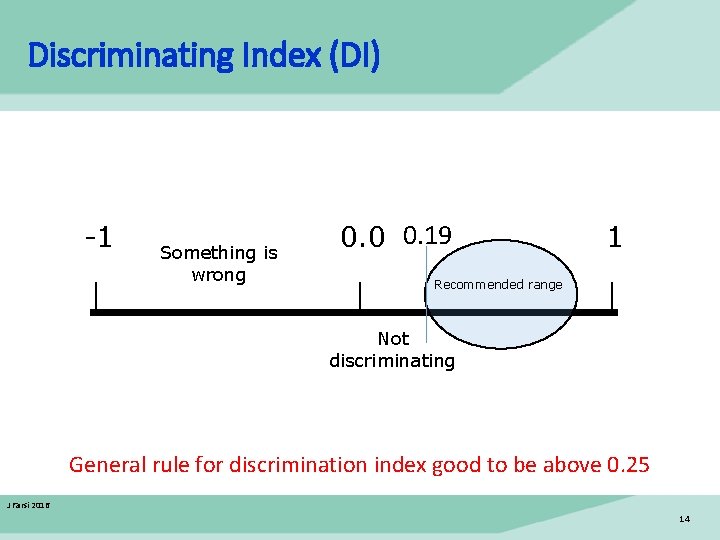

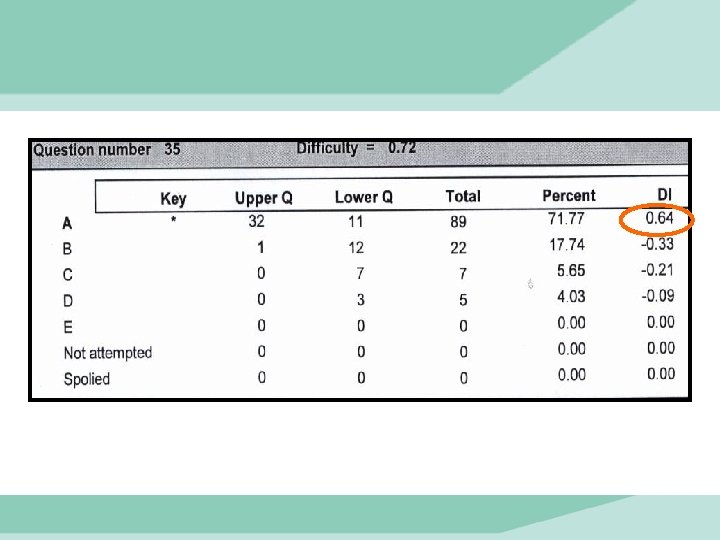

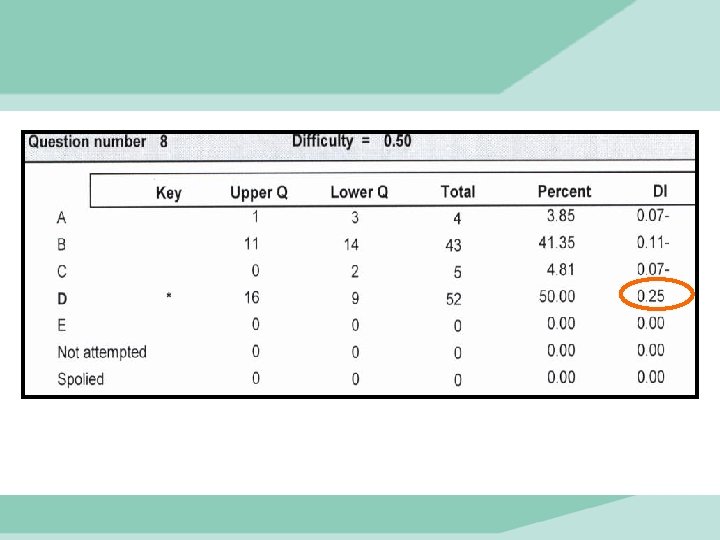

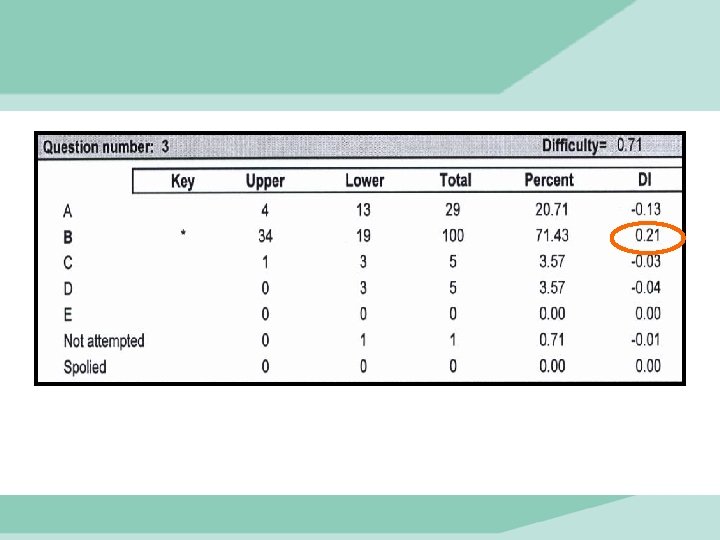

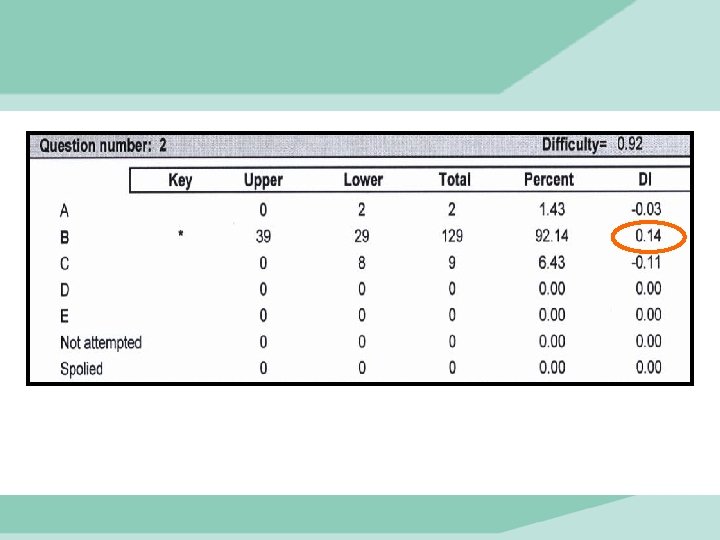

Discrimination Index (DI)

• Scale: -1 to 1
• Below 0.0: something is wrong
• 0.0 to 0.19: not discriminating
• 0.19 to 1: recommended range
• General rule: a discrimination index above 0.25 is good

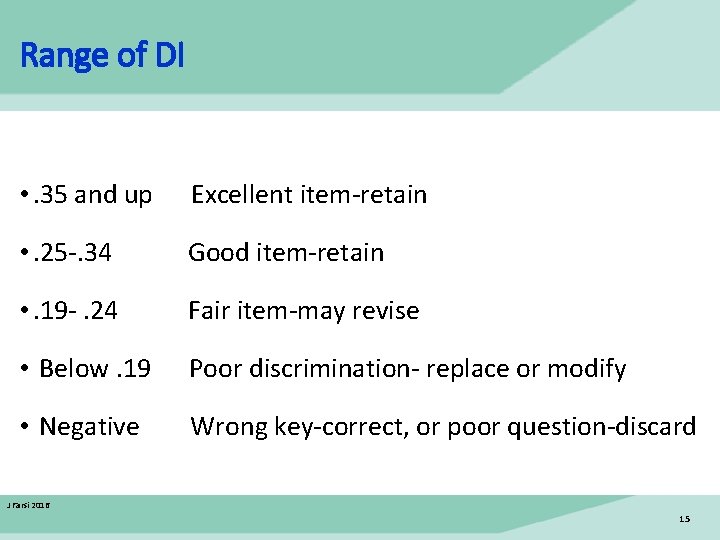

Range of DI

• .35 and up: excellent item, retain
• .25 to .34: good item, retain
• .19 to .24: fair item, may revise
• Below .19: poor discrimination, replace or modify
• Negative: wrong key (correct it) or poor question (discard)
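The DI ranges can be turned into a small lookup. This is a sketch only: the function name `classify_di` is hypothetical, and treating each band as half-open (so that a value such as .345 falls in the "good" band) is an assumption, since the slide leaves the gaps between bands unspecified.

```python
def classify_di(di):
    """Map a discrimination index to the (rating, action) bands.

    Band edges are treated as half-open intervals, which is an
    assumption; the slide lists .25-.34, .19-.24, and so on.
    """
    if not -1 <= di <= 1:
        raise ValueError("DI must lie between -1 and 1")
    if di < 0:
        return ("negative", "correct the key, or discard a poor question")
    if di < 0.19:
        return ("poor", "replace or modify")
    if di < 0.25:
        return ("fair", "may revise")
    if di < 0.35:
        return ("good", "retain")
    return ("excellent", "retain")


for value in (0.40, 0.30, 0.20, 0.10, -0.15):
    rating, action = classify_di(value)
    print(f"DI {value:+.2f}: {rating}, {action}")
```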

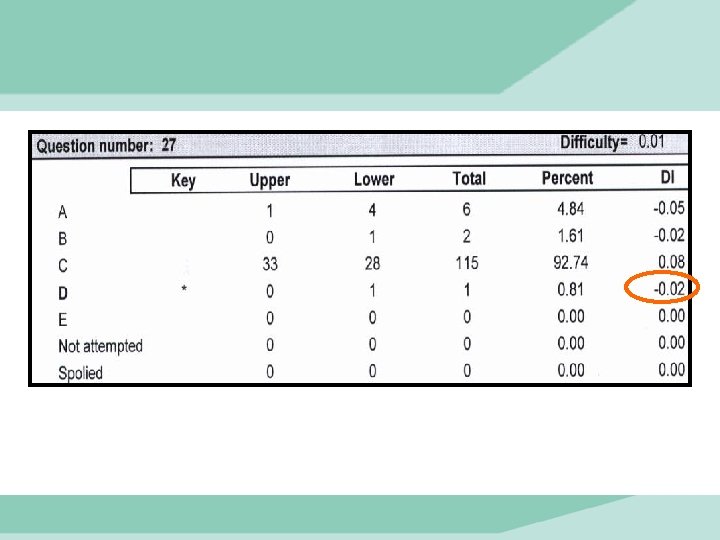

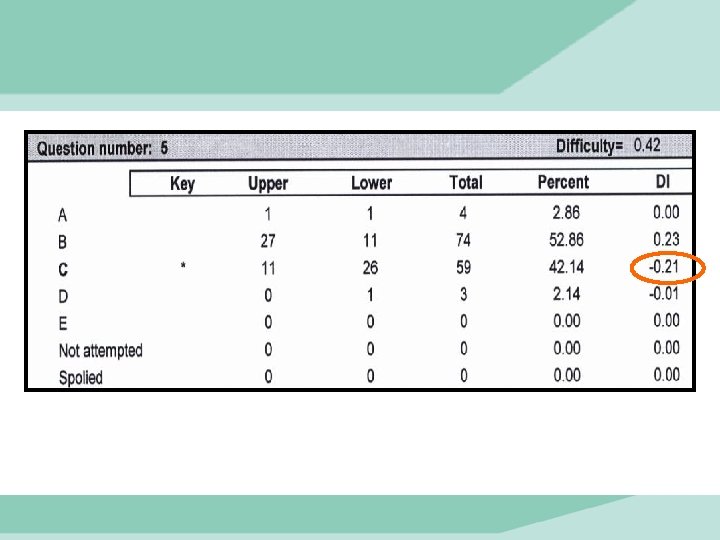

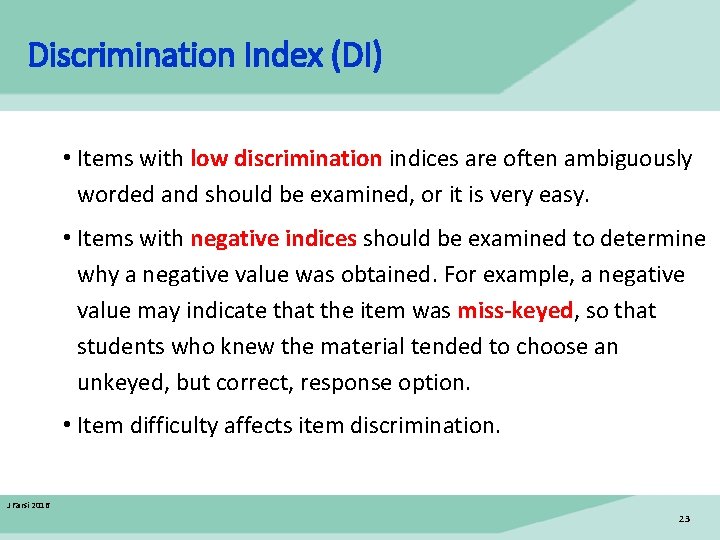

Discrimination Index (DI)

• Items with low discrimination indices are often ambiguously worded or very easy, and should be examined.
• Items with negative indices should be examined to determine why a negative value was obtained. For example, a negative value may indicate that the item was mis-keyed, so that students who knew the material tended to choose an unkeyed, but correct, response option.
• Item difficulty affects item discrimination.

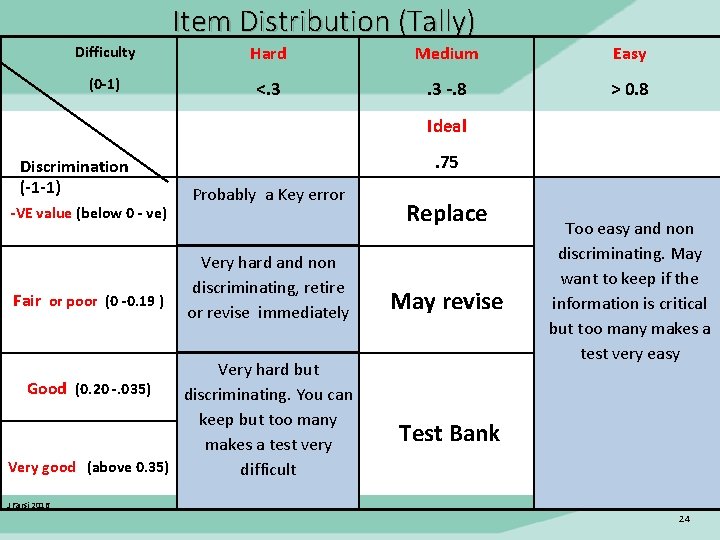

Item Distribution (Tally)

Difficulty (0 to 1)
• Hard: < .3
• Medium: .3 to .8 (ideal: .75)
• Easy: > .8

Discrimination (-1 to 1)
• Negative value (below 0)
• Fair or poor (0 to 0.19)
• Good (0.20 to 0.35)
• Very good (above 0.35)

Notes in the grid
• Negative discrimination: probably a key error.
• Very hard and non-discriminating: retire or revise immediately.
• Very hard but discriminating: you can keep the item, but too many such items make a test very difficult.
• Too easy and non-discriminating: you may want to keep the item if the information is critical, but too many such items make a test very easy.
• Remaining cell labels: Replace, May revise, Test Bank.

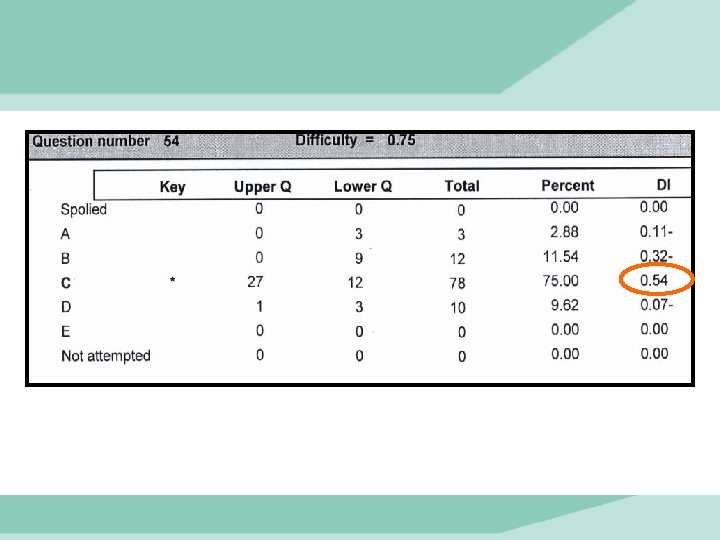

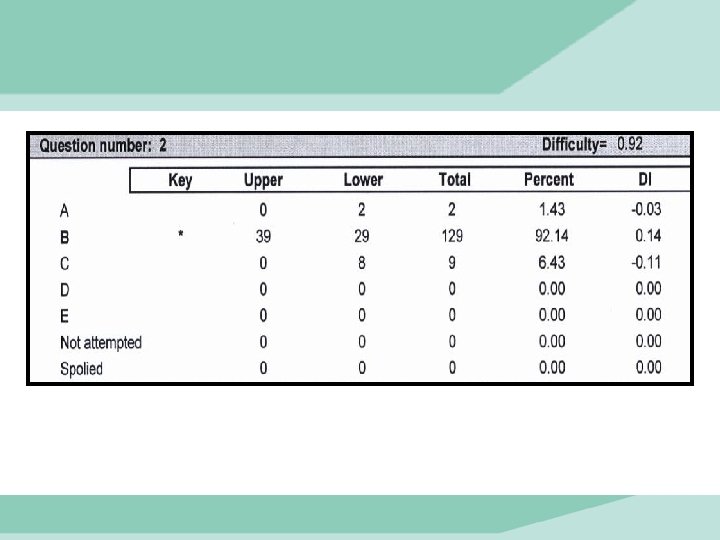

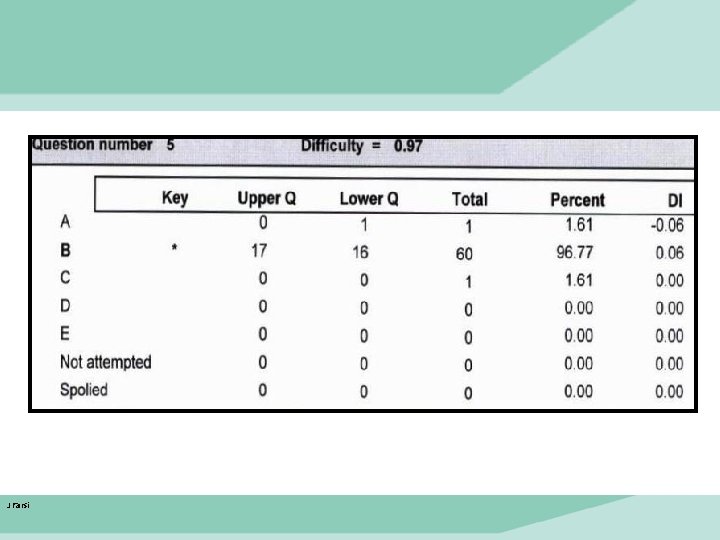

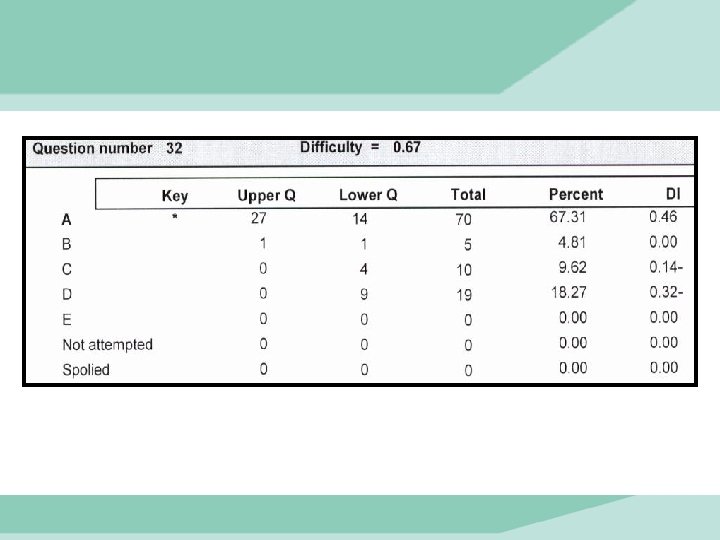

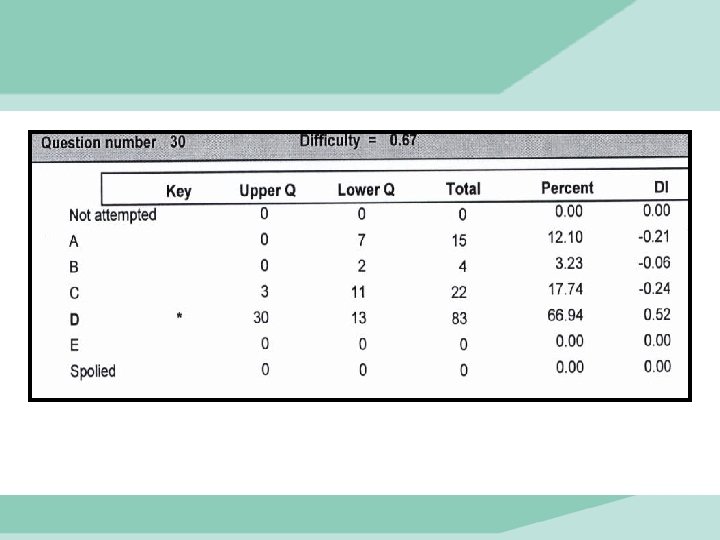

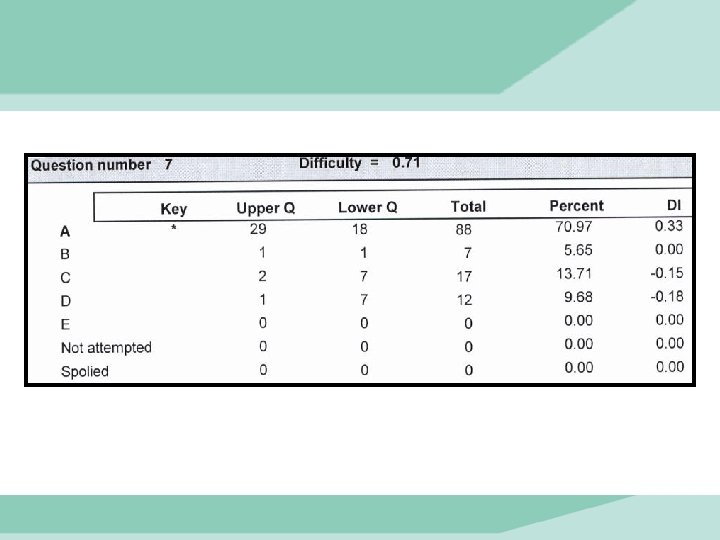

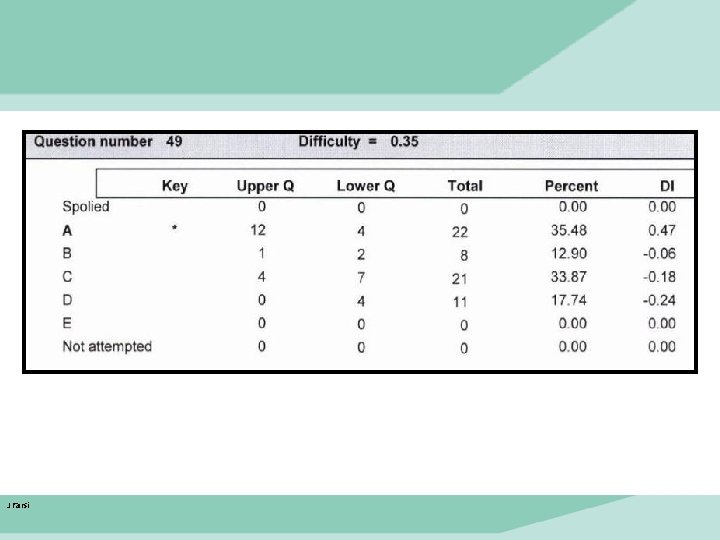

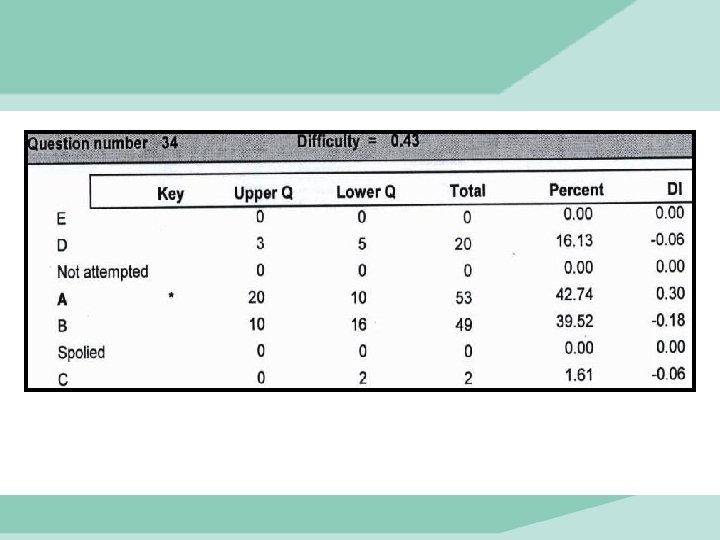

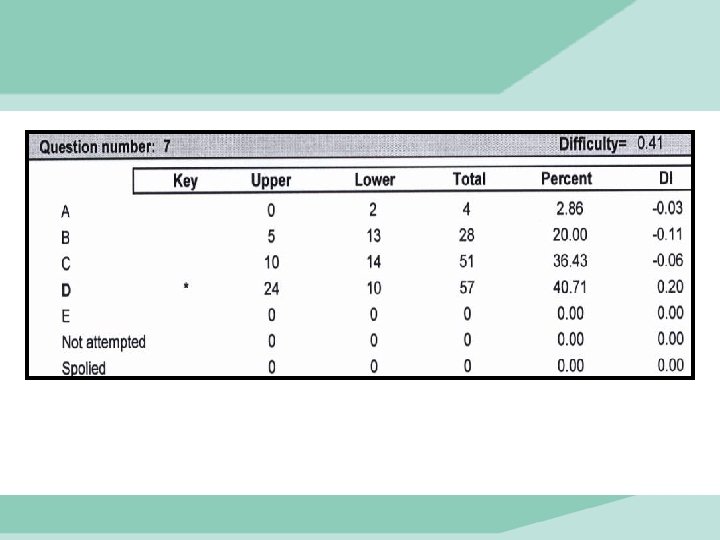

Distractor analysis is used to determine which distractors, or “wrong response options”, students find attractive, and which group of students (upper or lower) chooses each one more often.

Issues in distractor analysis

• Most students in the upper group fail to select the correct (or keyed) option.
• Students in the upper group are divided between two options (a wrong answer is selected by a similar number of students as the correct answer).
• More students in the upper group than in the lower group chose a wrong option.
• A distractor is not chosen by any student.
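The four issues listed above can be checked mechanically once each group's choices are tallied. The sketch below is one possible reading: the function name `distractor_flags`, the `tolerance` used to decide when two counts are "similar", and the sample data are all hypothetical.

```python
from collections import Counter


def distractor_flags(upper, lower, key, options, tolerance=1):
    """Flag distractor issues for one item.

    upper, lower: options chosen by the upper- and lower-scoring groups
    key: the correct (keyed) option
    tolerance: how close two counts must be to count as "similar"
               (an assumed threshold, not taken from the slides)
    """
    up, low = Counter(upper), Counter(lower)
    flags = []
    # Issue 1: fewer than half of the upper group picked the key.
    if up[key] < len(upper) / 2:
        flags.append((key, "most of the upper group missed the key"))
    for opt in options:
        if opt == key:
            continue
        # Issue 4: nobody in either group chose this distractor.
        if up[opt] + low[opt] == 0:
            flags.append((opt, "never chosen"))
            continue
        # Issue 2: the upper group is split between this option and the key.
        if abs(up[opt] - up[key]) <= tolerance:
            flags.append((opt, "upper group split with the key"))
        # Issue 3: the distractor attracts stronger students more.
        if up[opt] > low[opt]:
            flags.append((opt, "chosen more by upper than lower group"))
    return flags


upper = list("BBBBBCCCCA")  # 5 x B, 4 x C, 1 x A
lower = list("BBBCCAAAAA")  # 3 x B, 2 x C, 5 x A
for option, issue in distractor_flags(upper, lower, key="B", options="ABCD"):
    print(option, issue)
```

In this sample, option C is flagged twice (it nearly ties the key in the upper group and attracts more upper than lower students) and option D is flagged as never chosen.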

