IS MY ‘GOOD’ BETTER THAN YOURS? María Galache Ramos AIE Conference October 2019

Poor – Good – Excellent?

Poor – Good – Excellent? Harriet Hill, Rock of ages (2019): local materials, sense of community

Poor – Good – Excellent? Marina (4 years old): primary school, hobbies

Poor – Good – Excellent? Song lyrics, humour
“If I didn't have you to lie with at night / When I'm feeling blue / If I didn't have you to share my sighs / And to kiss me and dry my tears when I cry / Well… I really think that I would / Have somebody else. / If I didn't have you / Someone else would do.”
Tim Minchin, “If I didn’t have you”, Ready for this? (2009)

IS MY ‘GOOD’ BETTER THAN YOURS? UNDERSTANDING & INTERPRETING ASSESSMENT CRITERIA

WHY?
• High-stakes assessment is important for students and their families, schools, higher education institutions, employers, governments… and exam boards!
• Media coverage: Is assessment fair? – Stakeholders’ trust – Legal implications

RESEARCH: Fairness in assessment
• Extensive body of research from a theoretical approach
• Lack of empirical studies of less observable features, such as the role of examiner judgement

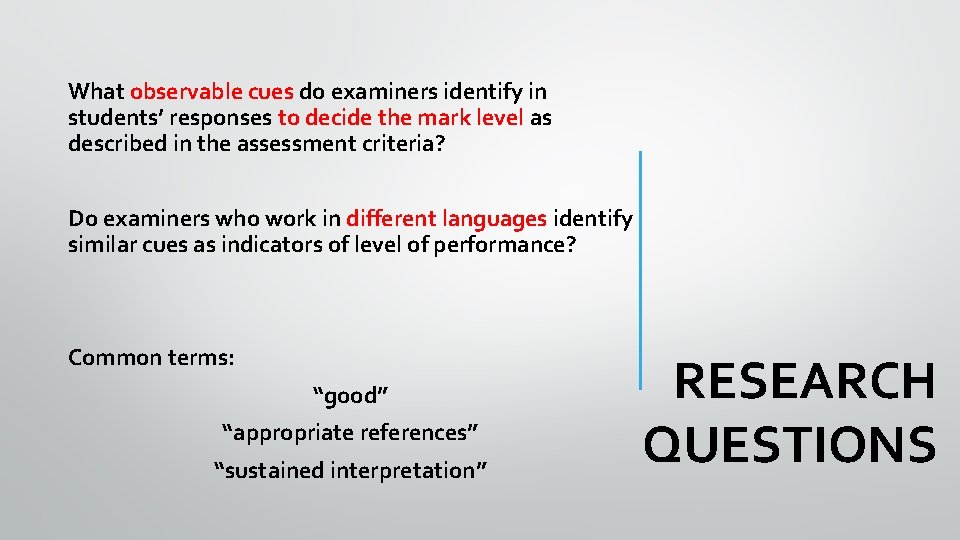

RESEARCH QUESTIONS
• What observable cues do examiners identify in students’ responses to decide the mark level as described in the assessment criteria?
• Do examiners who work in different languages identify similar cues as indicators of level of performance?
Common terms: “good”, “appropriate references”, “sustained interpretation”

LITERATURE REVIEW
• What are assessment standards? ‘A level of performance’ (Wiliam, 2000)
• Why is it important to set and maintain standards? Fairness: “the qualities of an assessment system that attempt to eliminate or avoid issues of inequity amongst students”
  - Poor design of assessment tasks
  - Inter-rater discrepancy

Standards and fairness
“the primary decisions in marking and grading are based on the judgement of student performance against expected standards” (IB, 2004)
• Where do we find the expected standard?
• The role of examiner judgement in criterion referencing
• Inter-rater agreement: inter-subjectivity can lead to objectivity (Wiliam, 2000)

Expected standard
• The exercise of examiner judgement is carried out by a “coherent community of practice” who develop a common understanding and interpretation of the assessment criteria
• A shared construct
• Construct-referenced assessment (Wiliam, 2000)

• Measures: recruitment, training, standardisation, grade award
• Comparability of standards: Who? Why? What?
• Exam boards compare cohorts, assessment tools and subject performance
• Implications (validity, reliability, reputational)

CONTEXT
• Assessment in the IB Diploma Programme
• Group 1: Studies in Language and Literature
• Language A Literature in 75+ languages
Are some subjects more difficult than others? Toze (2008), Matthews (2009)

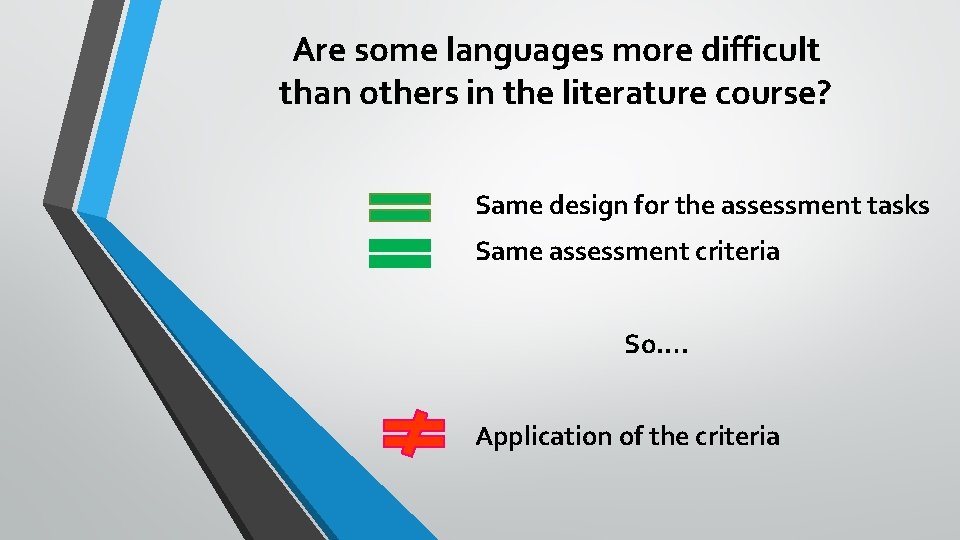

Are some languages more difficult than others in the literature course?
• Same design for the assessment tasks
• Same assessment criteria
So… application of the criteria

Empirical research

CONTEXT
• Assessment in the IB Diploma Programme
• Group 1: Studies in Language and Literature
• Language A Literature in 75+ languages
• Spanish A Literature: Higher Level Paper 1
• Open-ended response: essay based
• Assessment criteria

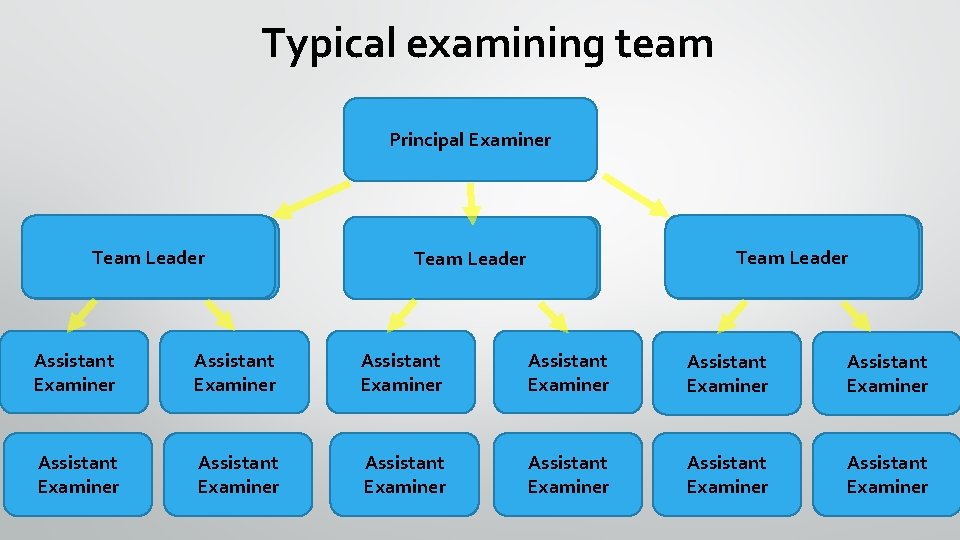

Typical examining team
• Principal Examiner
• Team Leader, Team Leader
• Assistant Examiner, Assistant Examiner, Assistant Examiner

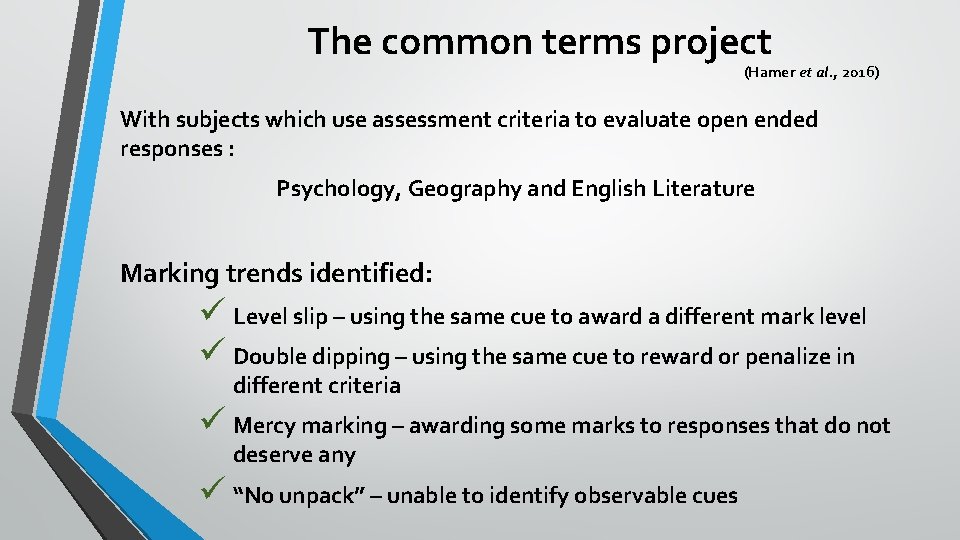

The common terms project (Hamer et al., 2016)
With subjects which use assessment criteria to evaluate open-ended responses: Psychology, Geography and English Literature
Marking trends identified:
• Level slip – using the same cue to award a different mark level
• Double dipping – using the same cue to reward or penalize in different criteria
• Mercy marking – awarding some marks to responses that do not deserve any
• “No unpack” – unable to identify observable cues
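As a rough illustration of what "level slip" means in data terms, the sketch below flags any cue that different examiners attach to more than one mark level. The records, examiner labels and the function name `find_level_slip` are hypothetical and are not taken from the study; only the cue wordings echo terms that appear in these slides.

```python
from collections import defaultdict

# Hypothetical coded data: (examiner, cue, mark level the examiner linked the cue to).
# These records are invented for illustration only.
coded_responses = [
    ("examiner_1", "literal meanings only", 1),
    ("examiner_2", "literal meanings only", 2),
    ("examiner_3", "literal meanings only", 1),
    ("examiner_1", "sustained interpretation", 4),
    ("examiner_2", "sustained interpretation", 4),
]


def find_level_slip(records):
    """Return the cues that were attached to more than one mark level."""
    levels_by_cue = defaultdict(set)
    for _examiner, cue, level in records:
        levels_by_cue[cue].add(level)
    # A cue shows level slip when it maps to two or more distinct levels.
    return {
        cue: sorted(levels)
        for cue, levels in levels_by_cue.items()
        if len(levels) > 1
    }


level_slip = find_level_slip(coded_responses)
print(level_slip)
```

In this made-up sample only "literal meanings only" is flagged, because it was linked to both level 1 and level 2.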

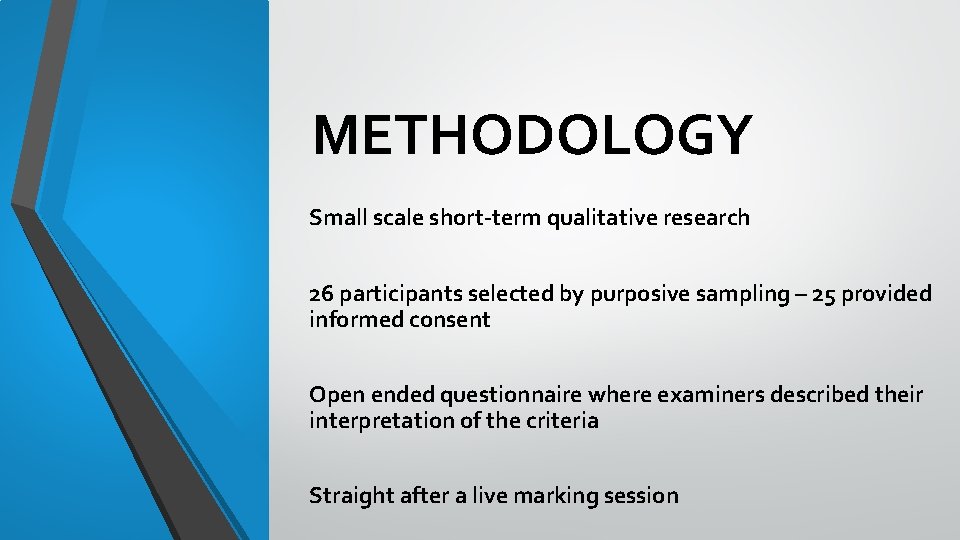

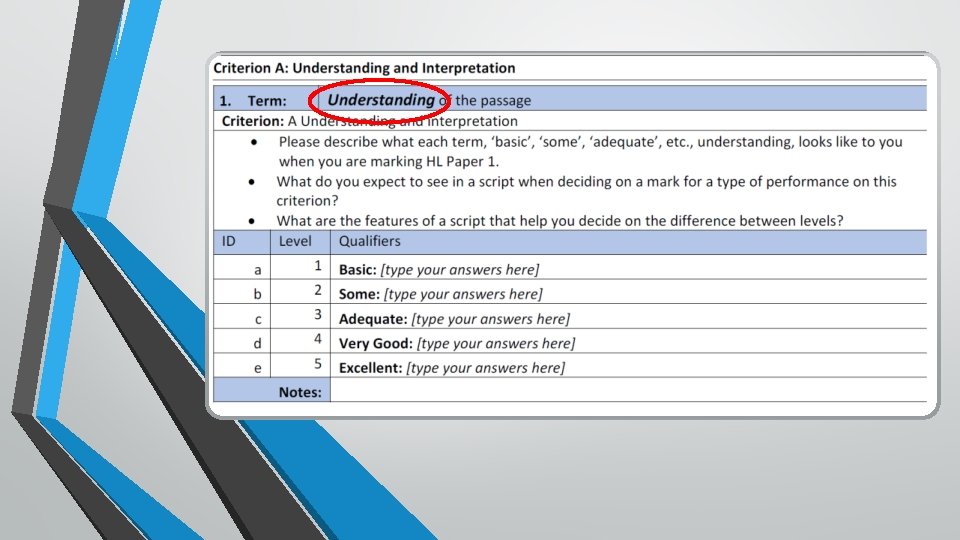

METHODOLOGY
• Small-scale, short-term qualitative research
• 26 participants selected by purposive sampling; 25 provided informed consent
• Open-ended questionnaire where examiners described their interpretation of the criteria
• Administered straight after a live marking session

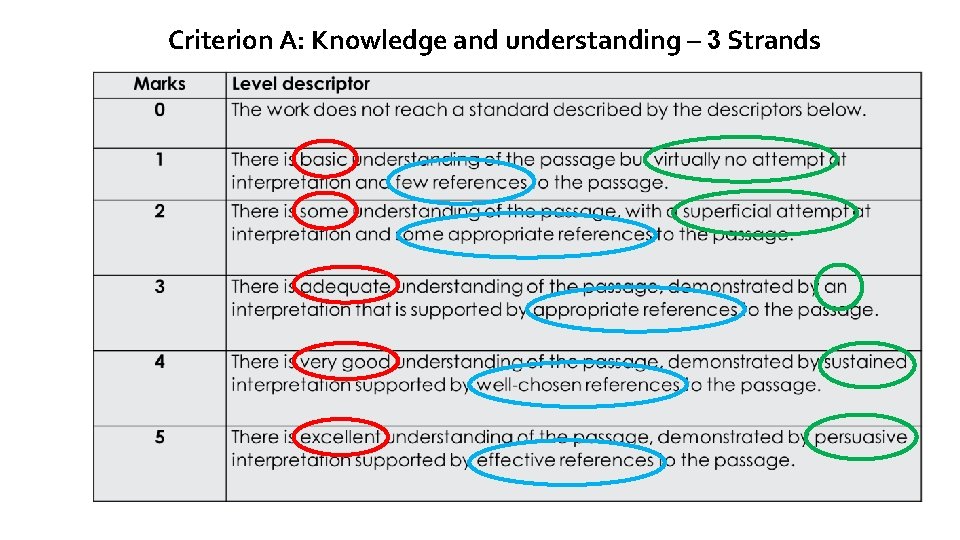

Criterion A: Knowledge and understanding – 3 Strands

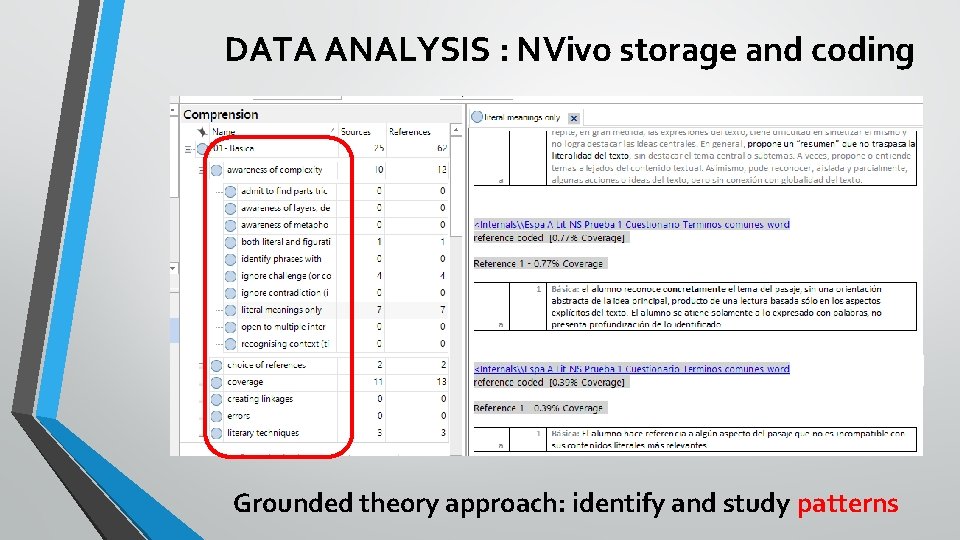

DATA ANALYSIS: NVivo storage and coding
Grounded theory approach: identify and study patterns

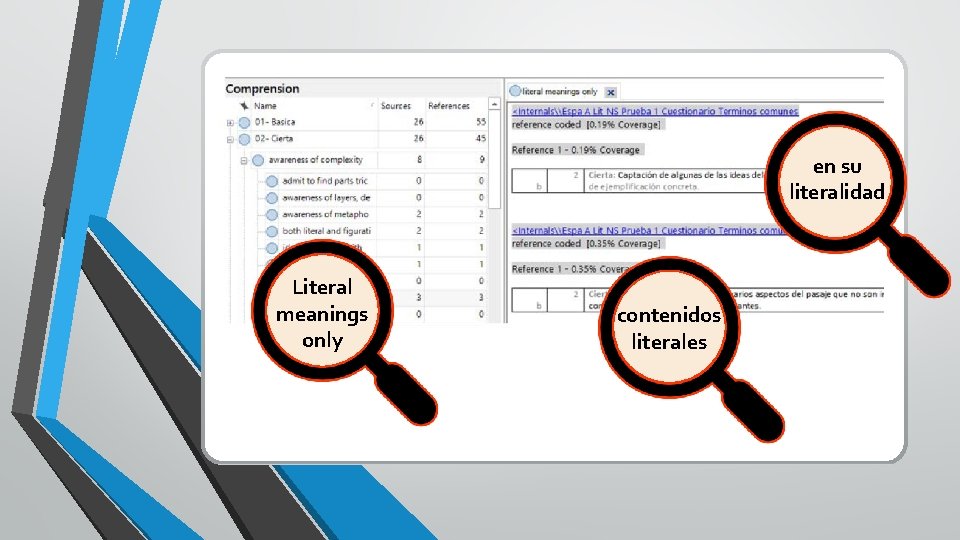

Coding example: “en su literalidad” (“in its literal sense”) and “contenidos literales” (“literal content”), coded as “Literal meanings only”

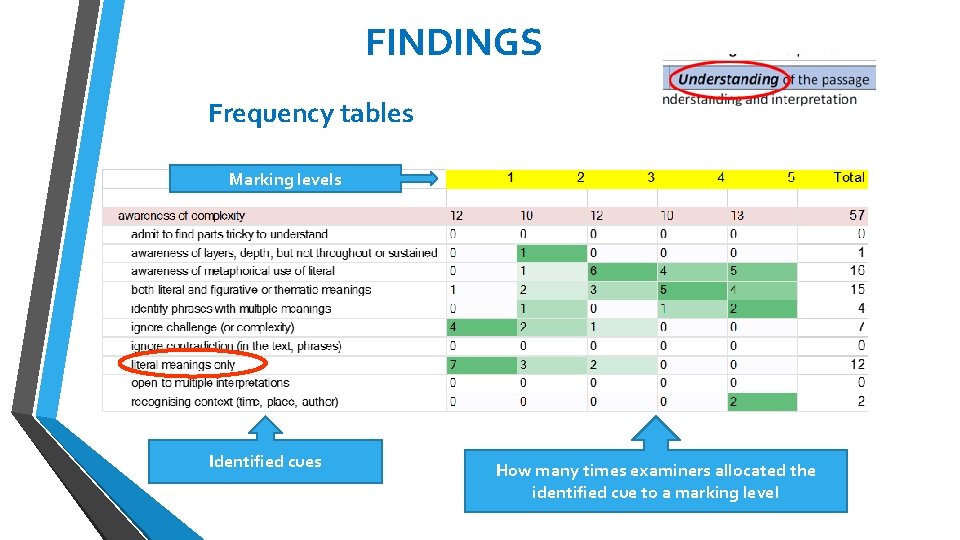

FINDINGS: Frequency tables
Marking levels × identified cues: how many times examiners allocated the identified cue to a marking level
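A frequency table of this kind can be sketched in a few lines. The example below is a minimal illustration, not the study's actual analysis (which used NVivo): the allocations are invented, the five mark levels are assumed from the tables later in the deck, and the helper names `frequency_table` and `rank_cues_for_level` are hypothetical.

```python
from collections import Counter

# Hypothetical (cue, mark level) allocations, invented for illustration.
allocations = (
    [("literal meanings only", 1)] * 3
    + [("literal meanings only", 2)]
    + [("appropriate references", 3)] * 2
    + [("appropriate references", 4)]
    + [("sustained interpretation", 4)] * 2
    + [("sustained interpretation", 5)] * 2
)


def frequency_table(pairs, levels=range(1, 6)):
    """Count how often each cue was allocated to each mark level (1 to 5)."""
    counts = Counter(pairs)
    cues = sorted({cue for cue, _level in pairs})
    return {cue: [counts[(cue, level)] for level in levels] for cue in cues}


def rank_cues_for_level(table, level):
    """List the cues allocated to one mark level, most frequent first."""
    column = level - 1
    ranked = [(cue, row[column]) for cue, row in table.items() if row[column] > 0]
    return sorted(ranked, key=lambda item: (-item[1], item[0]))


table = frequency_table(allocations)
for cue, row in table.items():
    print(f"{cue:<26}", row)
print(rank_cues_for_level(table, 4))
```

Each row lists the counts for levels 1 to 5, and ranking one level at a time mirrors the idea of exploring each marking level independently.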

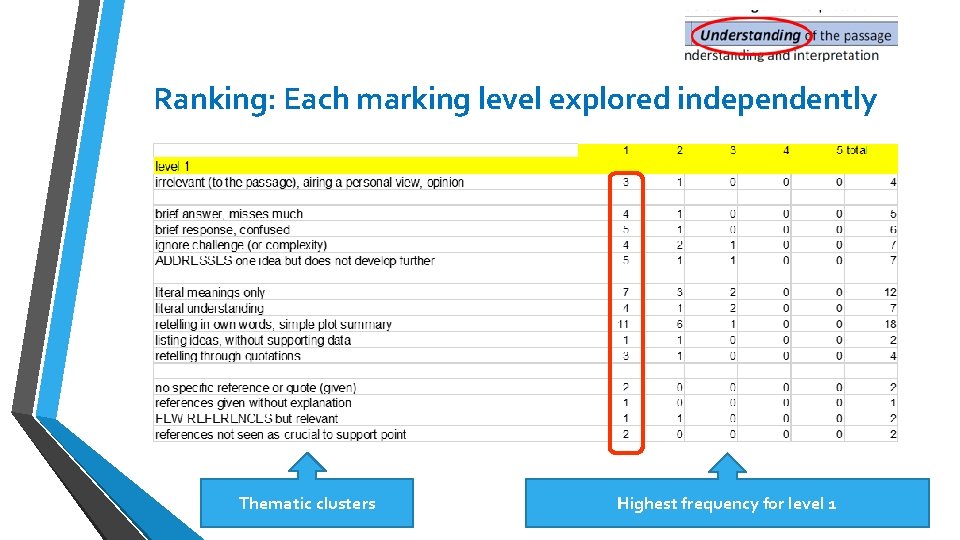

Ranking: Each marking level explored independently Thematic clusters Highest frequency for level 1

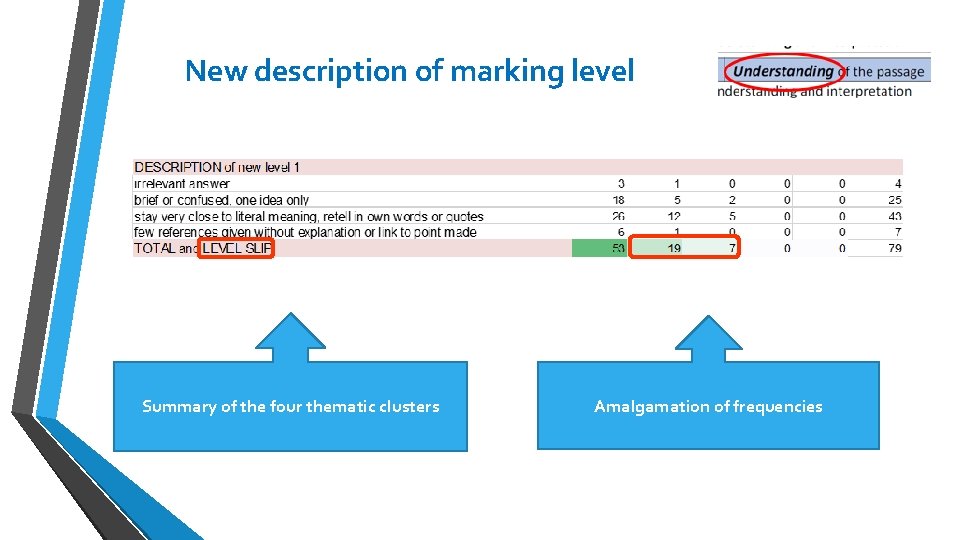

New description of marking level Summary of the four thematic clusters Amalgamation of frequencies

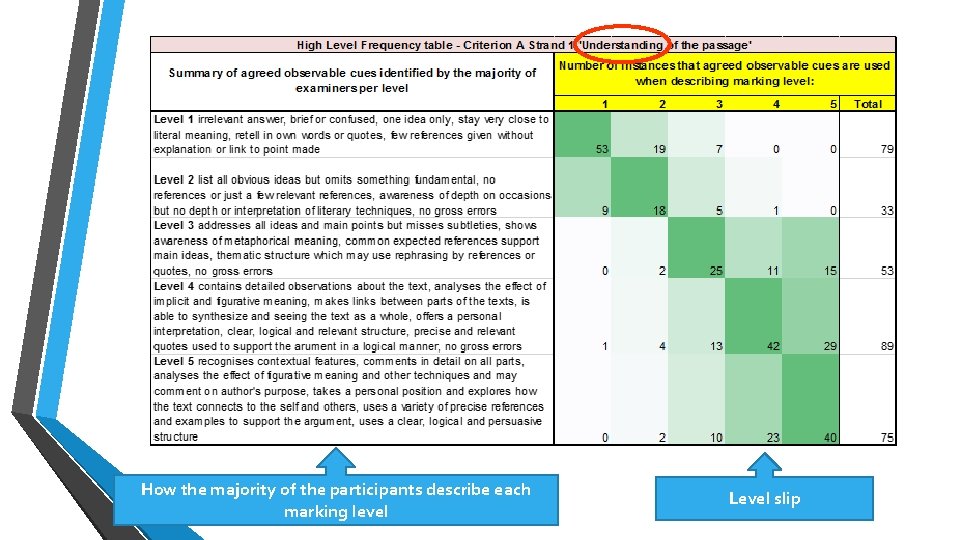

How the majority of the participants describe each marking level Level slip

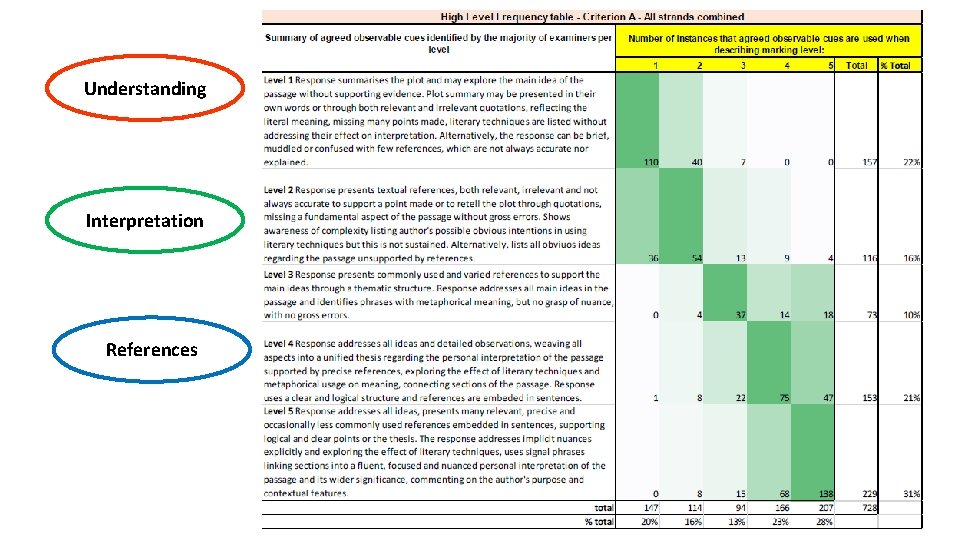

Understanding Interpretation References

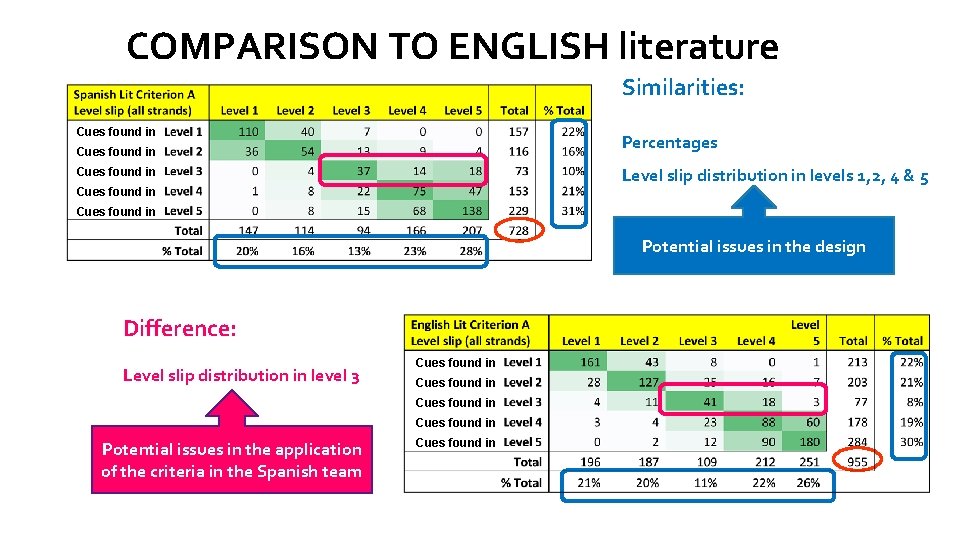

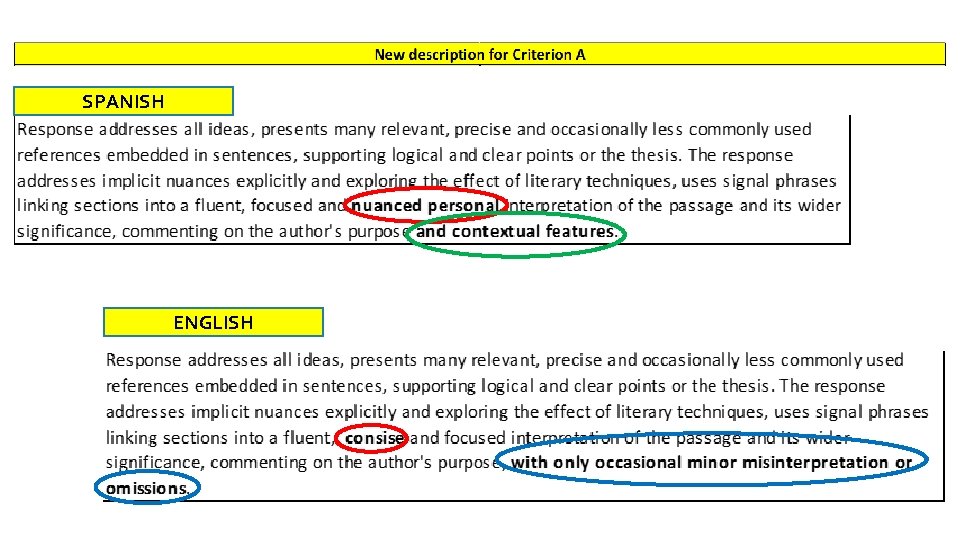

COMPARISON TO ENGLISH LITERATURE
Similarities:
• Percentages
• Level slip distribution in levels 1, 2, 4 & 5: potential issues in the design
Difference:
• Level slip distribution in level 3: potential issues in the application of the criteria in the Spanish team

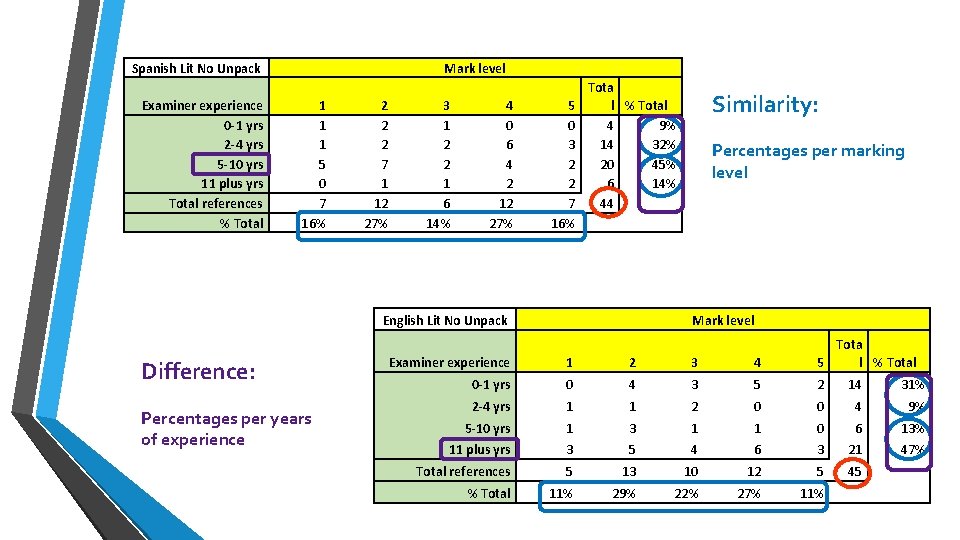

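Spanish Lit: No Unpack (mark level by examiner experience)

| Mark level | 0-1 yrs | 2-4 yrs | 5-10 yrs | 11 plus yrs | Total references | % Total |
|---|---|---|---|---|---|---|
| 1 | 1 | 1 | 5 | 0 | 7 | 16% |
| 2 | 2 | 2 | 7 | 1 | 12 | 27% |
| 3 | 1 | 2 | 2 | 1 | 6 | 14% |
| 4 | 0 | 6 | 4 | 2 | 12 | 27% |
| 5 | 0 | 3 | 2 | 2 | 7 | 16% |
| Total | 4 | 14 | 20 | 6 | 44 | |
| % Total | 9% | 32% | 45% | 14% | | |

English Lit: No Unpack (examiner experience by mark level)

| Examiner experience | Level 1 | Level 2 | Level 3 | Level 4 | Level 5 | Total | % Total |
|---|---|---|---|---|---|---|---|
| 0-1 yrs | 0 | 4 | 3 | 5 | 2 | 14 | 31% |
| 2-4 yrs | 1 | 1 | 2 | 0 | 0 | 4 | 9% |
| 5-10 yrs | 1 | 3 | 1 | 1 | 0 | 6 | 13% |
| 11 plus yrs | 3 | 5 | 4 | 6 | 3 | 21 | 47% |
| Total references | 5 | 13 | 10 | 12 | 5 | 45 | |
| % Total | 11% | 29% | 22% | 27% | 11% | | |

Difference: Percentages per years of experience
Similarity: Percentages per marking level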
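The totals and percentage figures for the Spanish Literature "No Unpack" data can be re-derived from the raw counts reported above. The sketch below does this, assuming the percentages were rounded to the nearest whole number; the variable and function names are illustrative only.

```python
# Spanish Literature "No Unpack" references, copied from the slide.
# Keys are mark levels; each list holds the counts per experience band.
experience_bands = ["0-1 yrs", "2-4 yrs", "5-10 yrs", "11 plus yrs"]
spanish_no_unpack = {
    1: [1, 1, 5, 0],
    2: [2, 2, 7, 1],
    3: [1, 2, 2, 1],
    4: [0, 6, 4, 2],
    5: [0, 3, 2, 2],
}


def percentage_shares(counts):
    """Share of each count in the overall total, rounded to a whole percent."""
    total = sum(counts)
    return [round(100 * count / total) for count in counts]


# Row totals: references per mark level.
level_totals = [sum(row) for row in spanish_no_unpack.values()]
# Column totals: references per experience band.
band_totals = [sum(column) for column in zip(*spanish_no_unpack.values())]

print("Total references:", sum(level_totals))
print("Per mark level:", dict(zip(spanish_no_unpack, percentage_shares(level_totals))))
print("Per experience band:", dict(zip(experience_bands, percentage_shares(band_totals))))
```

The same two helper lines applied to the English Literature counts would allow the per-level and per-experience percentages of the two teams to be compared side by side.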

SPANISH ENGLISH

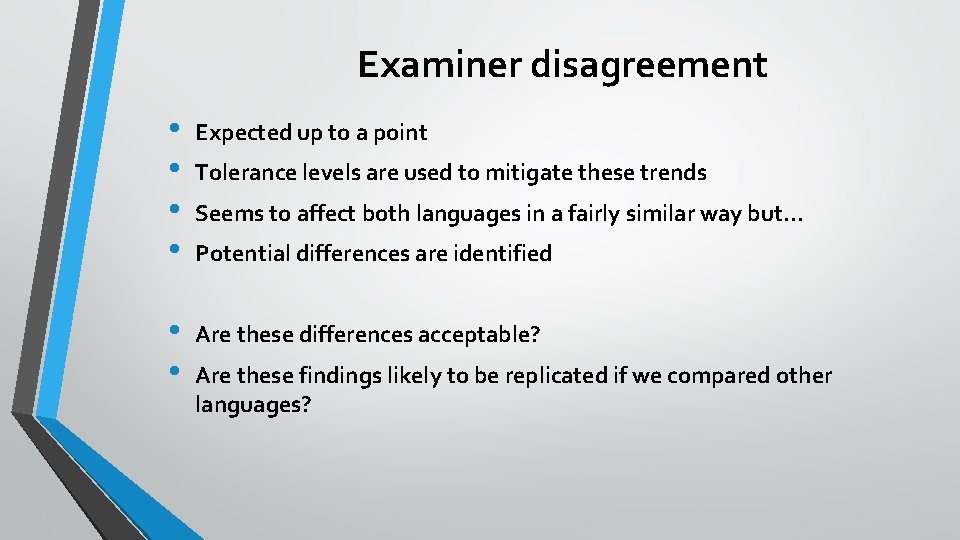

Examiner disagreement
• Expected up to a point
• Are these differences acceptable?
• Tolerance levels are used to mitigate these trends
• Seems to affect both languages in a fairly similar way, but potential differences are identified
Are these findings likely to be replicated if we compared other languages?

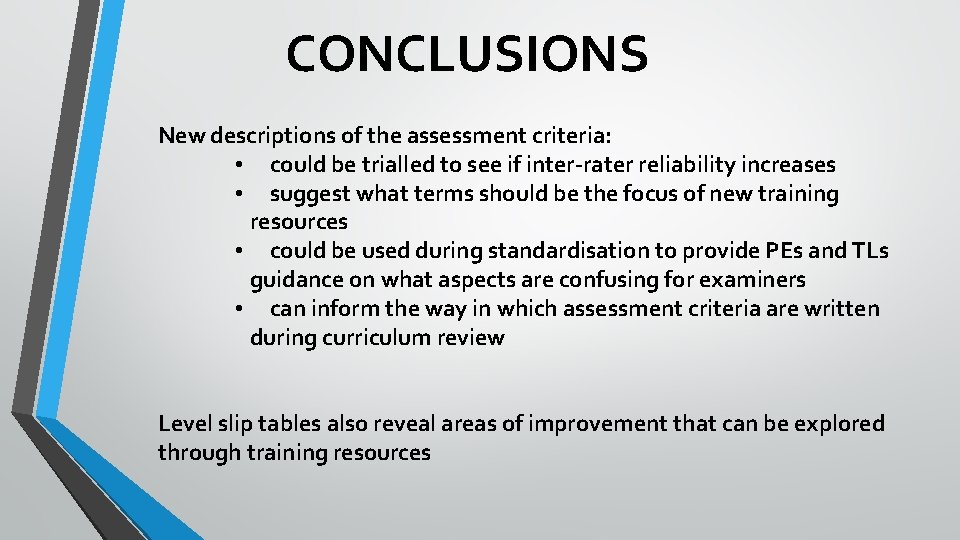

CONCLUSIONS
New descriptions of the assessment criteria:
• could be trialled to see if inter-rater reliability increases
• suggest what terms should be the focus of new training resources
• could be used during standardisation to give PEs and TLs guidance on which aspects are confusing for examiners
• can inform the way in which assessment criteria are written during curriculum review
Level slip tables also reveal areas of improvement that can be explored through training resources

Mitigations
• Implement cross-standardisation among different subjects
• Implement cross-paper authoring among different subjects
• Expand collaboration of different subjects across the DP and the MYP
• Enhance training resources for examiner training of the new courses

References
• Baird, J.-A., Cresswell, M. & Newton, P., 2000. Would the real gold standard please step forward? Research Papers in Education, 15(2), pp. 213-229.
• Bunnell, T., 2011. The Growth of the International Baccalaureate Diploma Program: Concerns About the Consistency and Reliability of the Assessments. The Educational Forum, 75(2), pp. 174-187.
• Galache Ramos, M., 2017. Is my ‘good’ better than yours? An exploration of examiners’ interpretation of ‘common terms’ in criterion-referenced assessment in the International Baccalaureate Diploma Programme. MA Education. Bath: University of Bath, Department of Education.
• Hamer, R., Galache Ramos, M. & Furlong, A., 2016. What examiners look for in awarding marks for higher order thinking in essay type responses. Cape Town, International Baccalaureate, IAEA Conference.
• Hill, H., 2019. Rock of ages. [Sculpture] Church of St. Michael the Archangel, Well: Sculpt: Art in the churches. Image taken from: www.thenorthernecho.co.uk (Accessed 25-10-2019).
• International Baccalaureate, 2004. Diploma Programme assessment: Principles and practice. Cardiff: International Baccalaureate.
• International Baccalaureate, 2011. Diploma Programme Language A: literature guide, First examinations 2015. Cardiff: International Baccalaureate.
• Matthews, M., 2009a. Another head weighs in on the IB issues raised in letter. The International Educator, 23(3), p. 28.
• Matthews, M., 2009b. The International Baccalaureate: Common Myths, Real Concerns. The International Educator, 24(1), pp. 11, 17.
• Matthews, M., 2009c. Challenging the IB: A Personal View, Post-Seville. The International Educator, 24(2), pp. 19-20.
• Minchin, T., 2009. If I didn’t have you. Ready for this? [DVD] London: Laughing Stock Productions.
• Toze, D., 2008. Concerns about IB exam results undermining confidence in program. The International Educator, 23(2), p. 6.
• Wiliam, D., 2000. Chapter 20: STANDARDS: What are they, what do they do and where do they live? In: B. Peretz, S. Brown & B. Moon, eds. Routledge International Companion to Education. London: Routledge, pp. 349-364.
