Ironing the Clouds: A Truly Performant Bare Metal OpenStack!

Jacob Anders (CSIRO), Erez Cohen (Mellanox), Moshe Levi (Mellanox)

OpenStack Summit Vancouver - May 2018

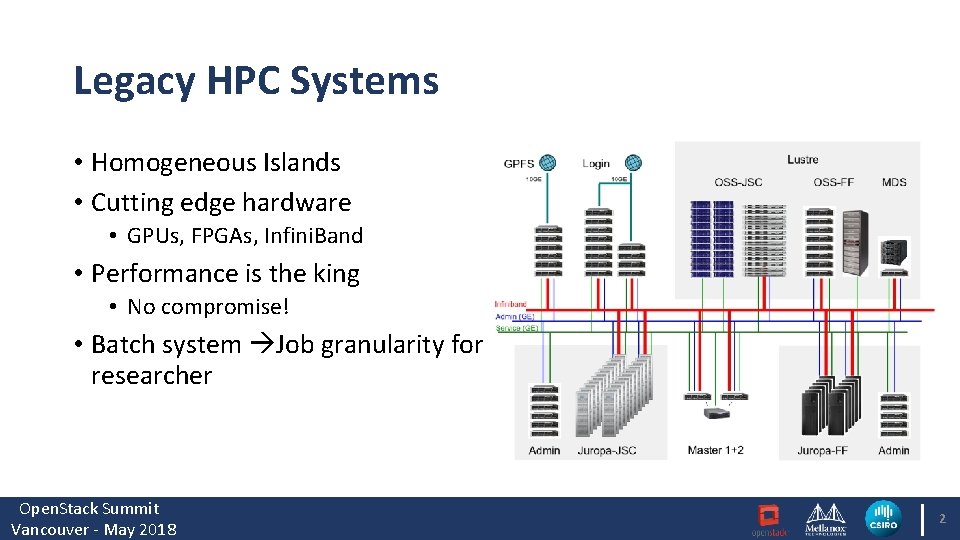

Legacy HPC Systems
• Homogeneous islands
• Cutting-edge hardware
  • GPUs, FPGAs, InfiniBand
• Performance is king
  • No compromise!
• Batch system: job granularity for researchers

Modern Data Centers: The Cloud Transformation
• Software-defined everything
  • Compute, storage, network, infrastructure
• Self-service
• Elastic, scalable
• Virtualization
• Container-packaged applications
• Portability
• Multi-tenancy
• Services-driven
  • IaaS, PaaS, SaaS, FaaS

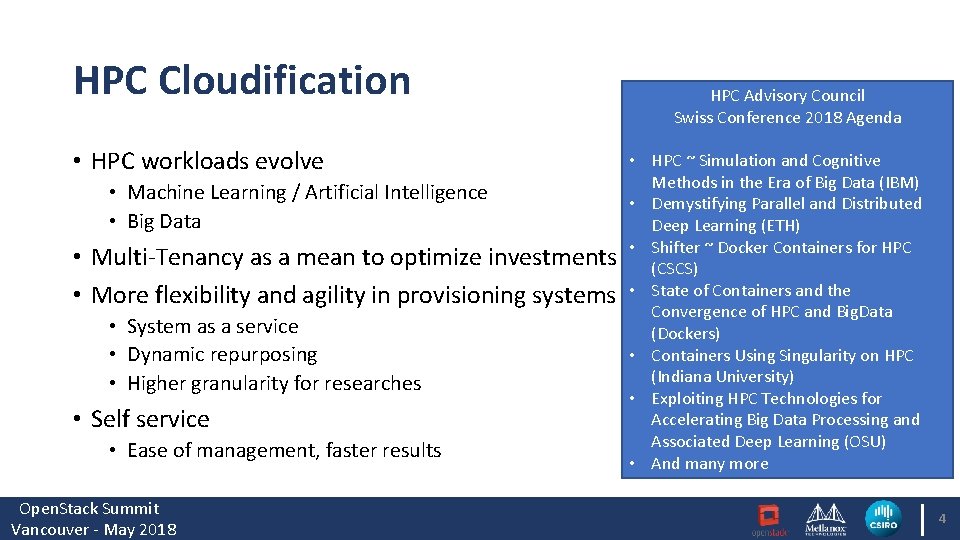

HPC Cloudification
• HPC workloads evolve
  • Machine Learning / Artificial Intelligence
  • Big Data
• Multi-tenancy as a means to optimize investments
• More flexibility and agility in provisioning systems
• System as a service
• Dynamic repurposing
• Higher granularity for researchers
• Self-service
• Ease of management, faster results

HPC Advisory Council Swiss Conference 2018 Agenda
• HPC ~ Simulation and Cognitive Methods in the Era of Big Data (IBM)
• Demystifying Parallel and Distributed Deep Learning (ETH)
• Shifter ~ Docker Containers for HPC (CSCS)
• State of Containers and the Convergence of HPC and Big Data (Docker)
• Containers Using Singularity on HPC (Indiana University)
• Exploiting HPC Technologies for Accelerating Big Data Processing and Associated Deep Learning (OSU)
• And many more

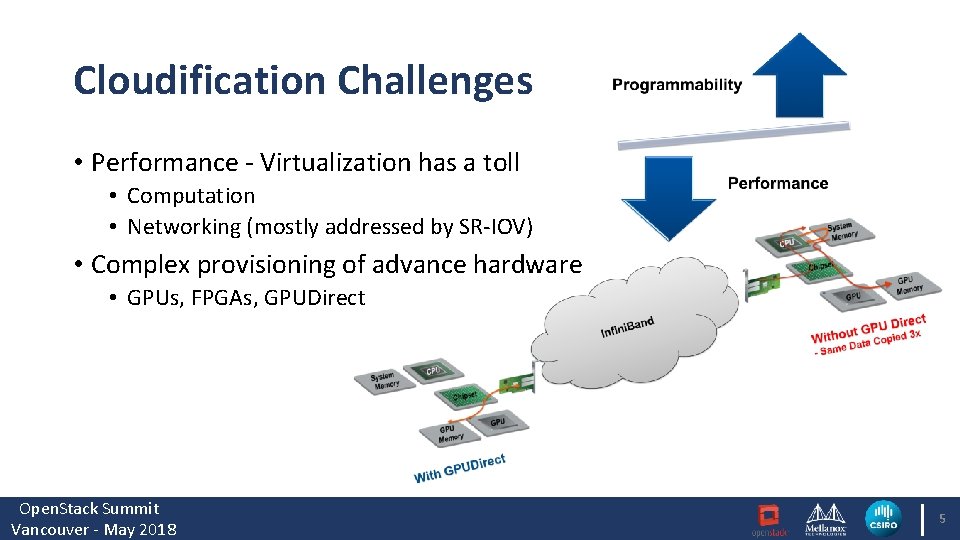

Cloudification Challenges
• Performance: virtualization takes a toll
  • Computation
  • Networking (mostly addressed by SR-IOV)
• Complex provisioning of advanced hardware
  • GPUs, FPGAs, GPUDirect

Ironic To The Rescue
• Ironic: the OpenStack Bare Metal Provisioning Program
• Initially developed to provision bare metal servers as part of an OpenStack deployment
• Provisions servers much like virtual machines
• API driven
• All hardware is exposed to the user, including GPUs, FPGAs etc.
• GPUDirect available
• Supports multi-tenancy
• InfiniBand support for Ironic enables HPC over OpenStack!
• Software-defined data center with bare metal performance!

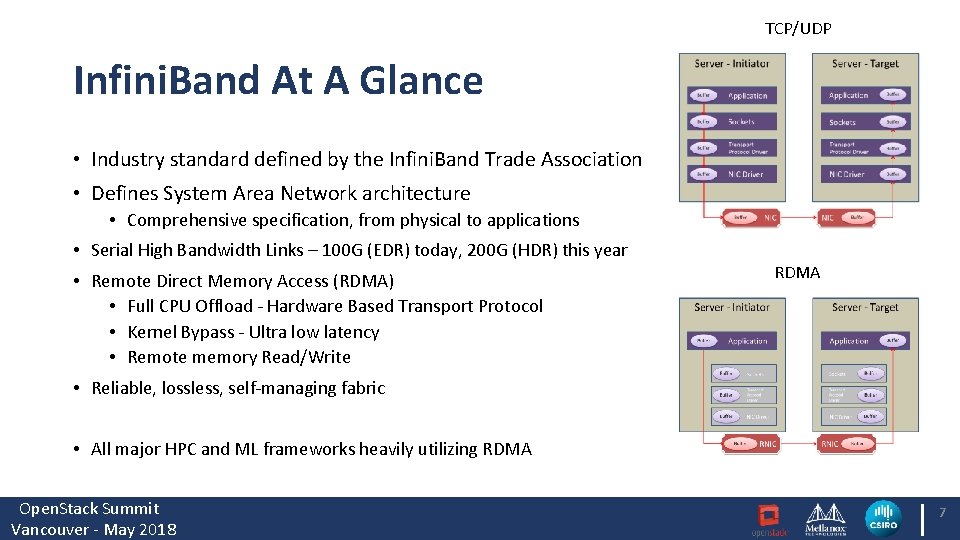

InfiniBand At A Glance
• Industry standard defined by the InfiniBand Trade Association
• Defines a System Area Network architecture
• Comprehensive specification, from physical layer to applications
• Serial high-bandwidth links: 100 Gb/s (EDR) today, 200 Gb/s (HDR) this year
• Remote Direct Memory Access (RDMA)
  • Full CPU offload: hardware-based transport protocol
  • Kernel bypass: ultra-low latency
  • Remote memory read/write
• Reliable, lossless, self-managing fabric
• All major HPC and ML frameworks heavily utilize RDMA

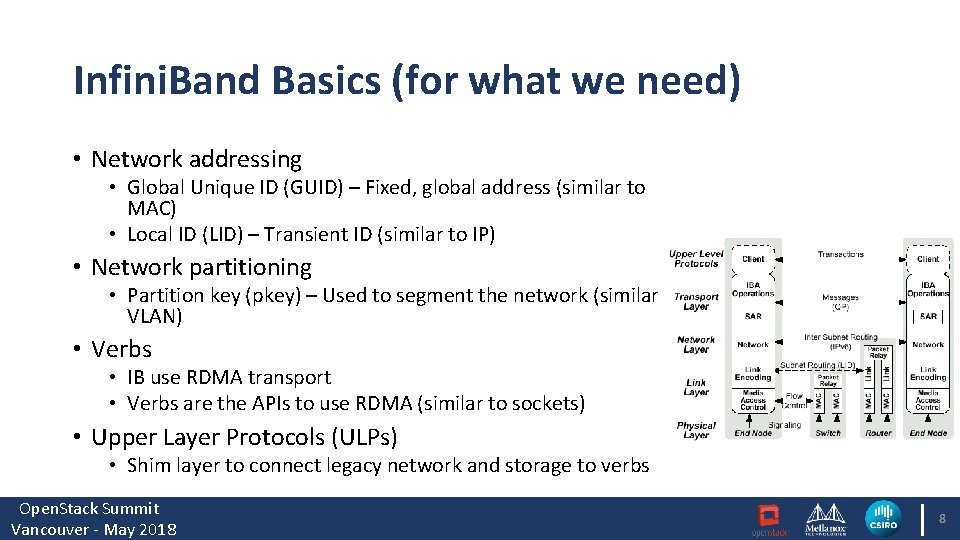

InfiniBand Basics (for what we need)
• Network addressing
  • Global Unique ID (GUID): fixed, global address (similar to MAC)
  • Local ID (LID): transient ID (similar to IP)
• Network partitioning
  • Partition key (pkey): used to segment the network (similar to VLAN)
• Verbs
  • IB uses RDMA transport
  • Verbs are the APIs for using RDMA (similar to sockets)
• Upper Layer Protocols (ULPs)
  • Shim layer that connects legacy network and storage protocols to verbs

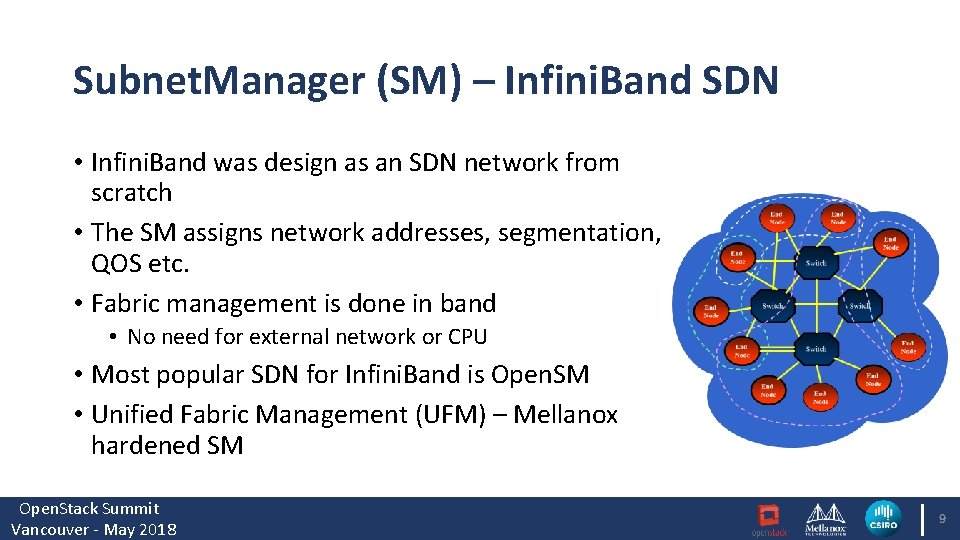

Subnet Manager (SM): InfiniBand SDN
• InfiniBand was designed as an SDN network from the start
• The SM assigns network addresses, segmentation, QoS etc.
• Fabric management is done in band
  • No need for an external network or CPU
• Most popular SDN for InfiniBand is OpenSM
• Unified Fabric Manager (UFM): Mellanox hardened SM

Ironic Services
• Ironic API: an admin-only RESTful API for interacting with the managed bare metal servers
• Ironic Conductor: interacts with the bare metal node and IPA
• Ironic Python Agent (IPA): provides control over hardware that is not available remotely to the Ironic Conductor
• Ironic Inspector: discovers hardware properties for a node managed by Ironic

Ironic Multi-Tenancy
• Provides isolation between bare metal nodes on different tenant networks
• Deploys the user image on the provision network
• Moves the node to the tenant network as soon as the deployment phase is completed

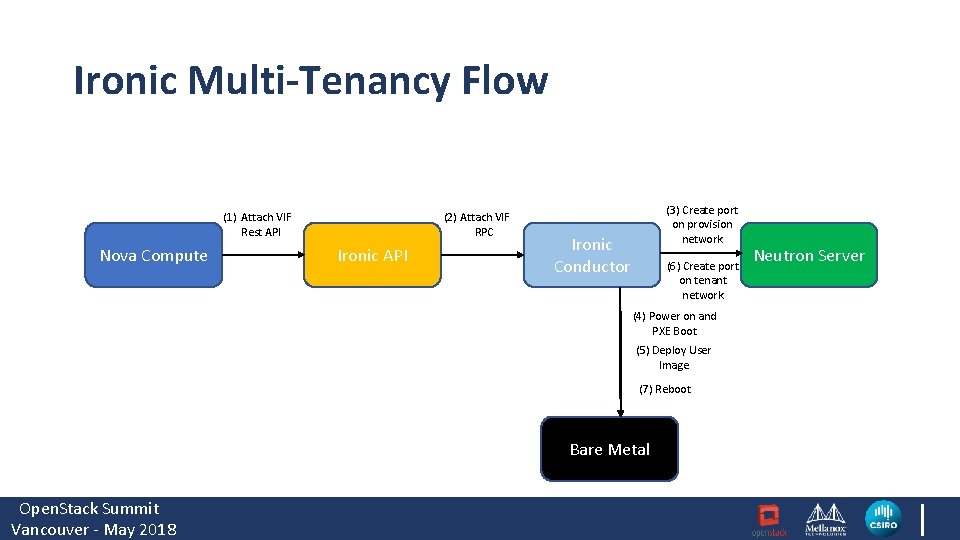

Ironic Multi-Tenancy Flow
(1) Attach VIF (REST API)
(2) Attach VIF (RPC)
(3) Create port on provision network
(4) Power on and PXE boot
(5) Deploy user image
(6) Create port on tenant network
(7) Reboot bare metal

Components shown in the diagram: Nova Compute, Ironic API, Ironic Conductor, Neutron Server

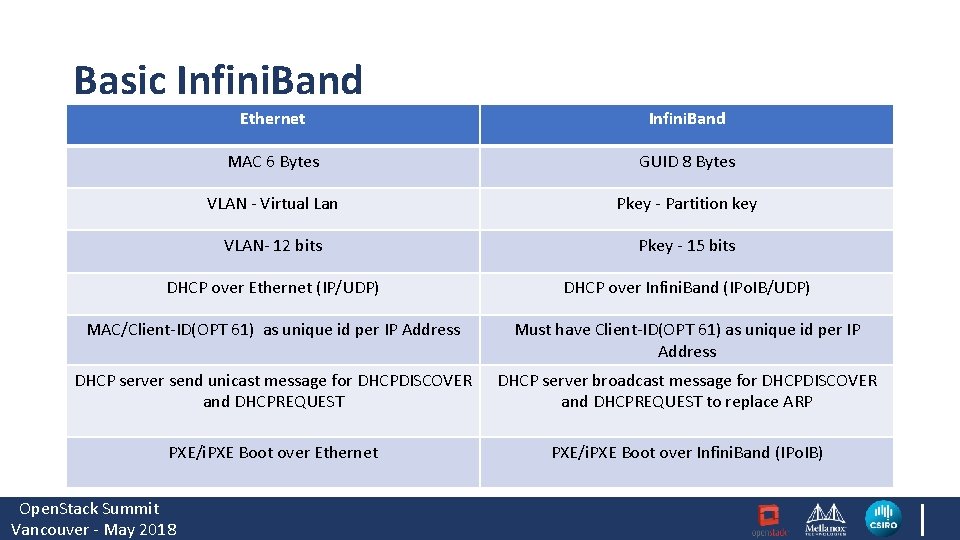

Basic InfiniBand

| Ethernet | InfiniBand |
| --- | --- |
| MAC: 6 bytes | GUID: 8 bytes |
| VLAN: virtual LAN | Pkey: partition key |
| VLAN: 12 bits | Pkey: 15 bits |
| DHCP over Ethernet (IP/UDP) | DHCP over InfiniBand (IPoIB/UDP) |
| MAC or Client-ID (option 61) as the unique ID per IP address | Client-ID (option 61) is mandatory as the unique ID per IP address |
| DHCP server replies to DHCPDISCOVER and DHCPREQUEST with unicast messages | DHCP server replies to DHCPDISCOVER and DHCPREQUEST with broadcast messages, to replace ARP |
| PXE/iPXE boot over Ethernet | PXE/iPXE boot over InfiniBand (IPoIB) |
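The 12-bit versus 15-bit row above determines how many network segments each fabric can address. The following is a minimal sketch of that arithmetic. It assumes the usual 16-bit P_Key layout, in which the top bit marks full or limited membership and the low 15 bits identify the partition; the helper names are hypothetical and not taken from the talk.

```python
from typing import Tuple

VLAN_ID_BITS = 12   # from the comparison table
PKEY_ID_BITS = 15   # from the comparison table
# Assumption: the top bit of the 16-bit P_Key is the membership flag.
FULL_MEMBERSHIP_BIT = 0x8000


def id_space(bits: int) -> int:
    """Number of distinct identifiers that fit in `bits` bits."""
    return 2 ** bits


def split_pkey(pkey: int) -> Tuple[int, bool]:
    """Split a 16-bit P_Key into (15-bit partition number, full-member flag)."""
    if not 0 <= pkey <= 0xFFFF:
        raise ValueError("P_Key must fit in 16 bits")
    return pkey & 0x7FFF, bool(pkey & FULL_MEMBERSHIP_BIT)


if __name__ == "__main__":
    print("VLAN IDs:", id_space(VLAN_ID_BITS))
    print("Partition keys:", id_space(PKEY_ID_BITS))
    print("0x8001 ->", split_pkey(0x8001))
```

Reserved values reduce the usable count on both sides, so these figures are upper bounds rather than deployable limits.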

Ironic Multitenancy with InfiniBand
• Available from the Pike release
• Supported with Mellanox interconnect
• Requires Mellanox FlexBoot FW
• Requires Mellanox NEO, UFM, OpenSM

Ironic Python Agent (IPA): upstream work
• Leverage the IPA Hardware Manager framework to detect Mellanox IB NICs
• Translate the InfiniBand interface GUID to a Client-ID
• Trim the GUID to a MAC
• Inspector/deploy images require the IPoIB kernel module
• Currently the solution supports Mellanox NICs, but other vendors can follow
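The two translations listed above can be sketched in a few lines of Python. This is an illustration and not the IPA hardware manager code: the function names are hypothetical, and the trimming rule (drop the two middle octets of the 8-byte GUID) is inferred from the inspector example in this deck, where the GUID f4:52:14:03:00:38:39:81 corresponds to the port address f4:52:14:38:39:81. The client-id prefix is left as a caller-supplied parameter because it is vendor specific.

```python
from typing import List


def _guid_octets(guid: str) -> List[str]:
    """Validate an 8-octet, colon-separated GUID and return its octets."""
    octets = guid.lower().split(":")
    if len(octets) != 8 or any(len(o) != 2 for o in octets):
        raise ValueError("expected 8 colon-separated octets, got %r" % guid)
    for octet in octets:
        int(octet, 16)  # raises ValueError if the octet is not hexadecimal
    return octets


def guid_to_mac(guid: str) -> str:
    """Trim an 8-byte GUID to a 6-byte MAC by dropping the two middle octets."""
    octets = _guid_octets(guid)
    return ":".join(octets[:3] + octets[5:])


def guid_to_client_id(guid: str, prefix: str) -> str:
    """Build a DHCP client-id as <prefix>:<guid>; the prefix is vendor specific."""
    return prefix.lower().rstrip(":") + ":" + ":".join(_guid_octets(guid))


if __name__ == "__main__":
    guid = "f4:52:14:03:00:38:39:81"
    # Prefix copied as printed in this deck's inspector example (illustrative only).
    prefix_from_slide = "ff:00:00:00:02:c9:00"
    print(guid_to_mac(guid))
    print(guid_to_client_id(guid, prefix_from_slide))
```

In a real deployment the client-id should be read from what ironic-inspector reports for the port rather than assembled by hand.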

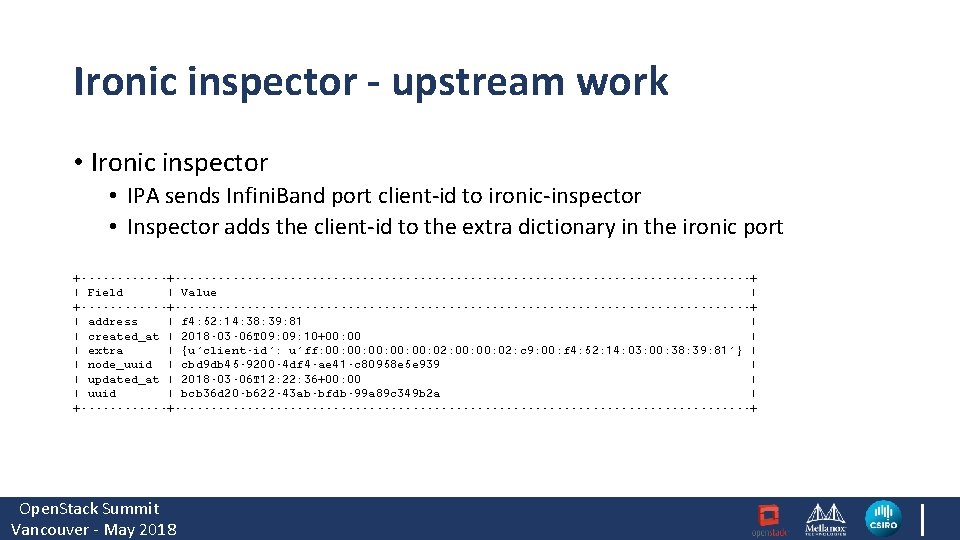

Ironic Inspector: upstream work
• IPA sends the InfiniBand port client-id to ironic-inspector
• Inspector adds the client-id to the extra dictionary in the Ironic port

    +------------+-----------------------------------------------------------------+
    | Field      | Value                                                           |
    +------------+-----------------------------------------------------------------+
    | address    | f4:52:14:38:39:81                                               |
    | created_at | 2018-03-06T09:10+00:00                                          |
    | extra      | {u'client-id': u'ff:00:00:00:02:c9:00:f4:52:14:03:00:38:39:81'} |
    | node_uuid  | cbd9db45-9200-4df4-ae41-c80958e5e939                            |
    | updated_at | 2018-03-06T12:22:36+00:00                                       |
    | uuid       | bcb36d20-b622-43ab-bfdb-99a89c349b2a                            |
    +------------+-----------------------------------------------------------------+

Ironic: upstream work
• Create the PXE/iPXE file with an indication of the InfiniBand interface
• Add the client-id DHCP option to the Neutron port on the provision network
• Add the client-id DHCP option to the Neutron port on the tenant network
• Example port creation:

    openstack baremetal port create $HW_MAC_ADDRESS --node $NODE_UUID --pxe-enabled true --extra client-id=$CLIENT_ID --physical-network physnet1
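When ports are enrolled for many nodes, the command above is usually generated by a script. The sketch below builds the argument list using only the flags shown on the slide; the helper name is hypothetical, and the sample values are copied from the inspector output earlier in this deck. It prints the command rather than running it.

```python
import shlex
from typing import List


def port_create_argv(mac: str, node_uuid: str, client_id: str,
                     physical_network: str = "physnet1") -> List[str]:
    """Build the argv for the port-create command shown on the slide."""
    return [
        "openstack", "baremetal", "port", "create", mac,
        "--node", node_uuid,
        "--pxe-enabled", "true",
        "--extra", "client-id=" + client_id,
        "--physical-network", physical_network,
    ]


if __name__ == "__main__":
    argv = port_create_argv(
        mac="f4:52:14:38:39:81",
        node_uuid="cbd9db45-9200-4df4-ae41-c80958e5e939",
        client_id="ff:00:00:00:02:c9:00:f4:52:14:03:00:38:39:81",
    )
    # Print a shell-safe rendering; pass argv to subprocess.run() to execute it.
    print(" ".join(shlex.quote(arg) for arg in argv))
```

Passing a list to `subprocess.run` instead of a joined string avoids shell quoting problems if a value ever contains unusual characters.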

Disk image builder: upstream work
• Added the ipoib module to the Mellanox element
• Added support for a configurable timeout for interface up
• Build the ironic-inspector/ironic deploy images:

    export DIB_DHCP_TIMEOUT=300
    disk-image-create deploy-baremetal mellanox devuser ironic-agent centos7 dhcp-all-interfaces hwdiscovery

Neutron: upstream work
• Added Neutron DHCP agent support for server broadcast (from Kilo)
• Added Neutron DHCP agent support for the Client-ID DHCP option (from Kilo)
• Configuration
  • In /etc/neutron/dhcp_agent.ini set dhcp_broadcast_reply = true
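The configuration step above can be applied programmatically. The sketch below uses Python's standard `configparser` on an in-memory string so that it is safe to run anywhere. Placing the option in the `[DEFAULT]` section is an assumption (the slide names only the file and the option), and both the helper name and the sample `interface_driver` line are illustrative.

```python
import configparser
import io


def enable_broadcast_reply(ini_text: str, section: str = "DEFAULT") -> str:
    """Return ini_text with dhcp_broadcast_reply = true set in `section`."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    # [DEFAULT] always exists in configparser; other sections may need creating.
    if section != "DEFAULT" and not parser.has_section(section):
        parser.add_section(section)
    parser[section]["dhcp_broadcast_reply"] = "true"
    out = io.StringIO()
    parser.write(out)
    return out.getvalue()


if __name__ == "__main__":
    sample = "[DEFAULT]\ninterface_driver = openvswitch\n"
    print(enable_broadcast_reply(sample))
```

Note that `configparser` drops comments when it rewrites a file, so for a hand-maintained dhcp_agent.ini a manual edit may be preferable.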

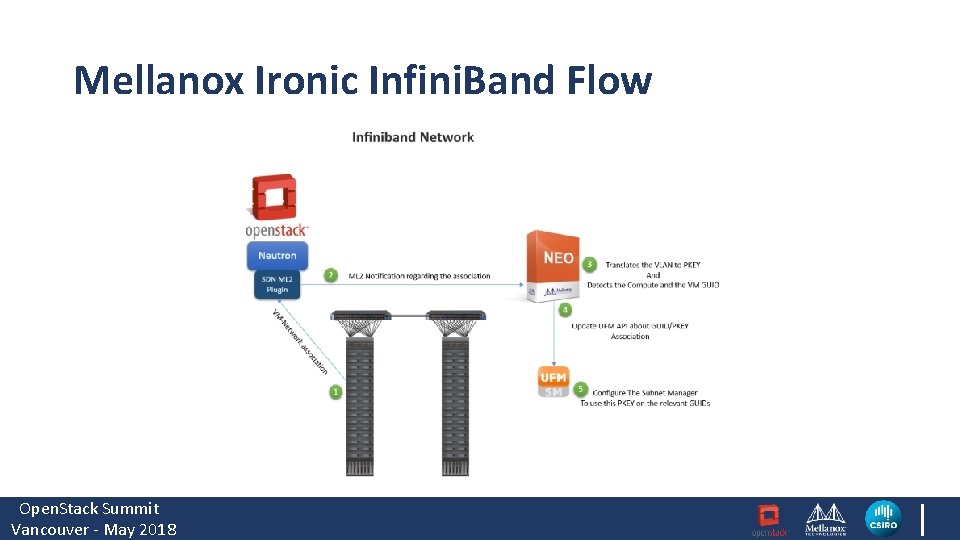

Mellanox Ironic InfiniBand Solution
• MLNX SDN Assist is a Mellanox ML2 mechanism driver supporting Ethernet and InfiniBand fabrics
• Mellanox NEO™ is a cloud networking orchestration and management software package
• Unified Fabric Manager (UFM®) is software for managing InfiniBand fabrics; it provides the Subnet Manager functionality

Mellanox Ironic InfiniBand Flow

Ironic/IB+SDN CSIRO case study

About CSIRO
● We are Australia's national science agency
● Our mission is to improve economic performance and the quality of life of people in Australia and overseas by means of scientific research
● Australia's biggest consumer of scientific computing resources
● ~2 PF of compute and ~50 PB of storage on premises
● Mellanox Centre of Excellence
● Headquarters in Canberra
● 5000+ employees over 50 sites in Australia and overseas
● Strong global presence and world-wide partnerships

Why do we need Private Cloud?
● Increasing demand for dedicated systems
● User interest in cloud-based solutions (growing EC2 userbase)
● Varied security requirements and investment in cybersecurity research
● Varying demand for services and the need to move capacity between systems
● Seeking the ability to turn systems into applications

High Performance Computing & Cloud Computing
● Batch-queue systems offer excellent performance, which our users highly value (and will never give up), but have limited flexibility
● Cloud systems offer the flexibility which our users increasingly value, but as of today (or rather, last week) provide significantly lower performance
● Implementing a solution which combines the benefits of both approaches would be a very strong asset to our organisation and our users

...so how do we have our cake and eat it, too? (credit to Dan)

Ironic/InfiniBand solution = flexibility + performance

OpenStack with Ironic/SDN+IB offers the best of both worlds, without any compromises:
● Easy, API-driven access to computing resources (DevOps / Infrastructure-as-Code paradigm)
● Unparalleled performance of bare metal and InfiniBand, with full support for advanced Neutron network configurations
● Access to high-performance RDMA filesystems at up to 10 GB/s per client and 200 GB/s+ aggregate bandwidth, with the ability to service millions of random 4k reads/writes

...but wait, there's more...
● Out-of-the-box support for any type of hardware supported on Linux, at full performance (GPU, FPGA, NVMe)
● Time-consuming hypervisor tuning (reducing overcommitment ratios, CPU pass-through/pinning, LVM ephemeral storage, SR-IOV, PCI pass-through, …) is no longer required
● MPI applications run at full performance, with no KVM-induced latency

Performance comparison: legacy vs Ironic/IB
● Bare-metal cloud: InfiniBand/RDMA transport, parallel filesystem
● Virtualised cloud: 10/40 GbE, TCP + NFS

(Photo credit: Qantas Airways & Bastien Boubee)

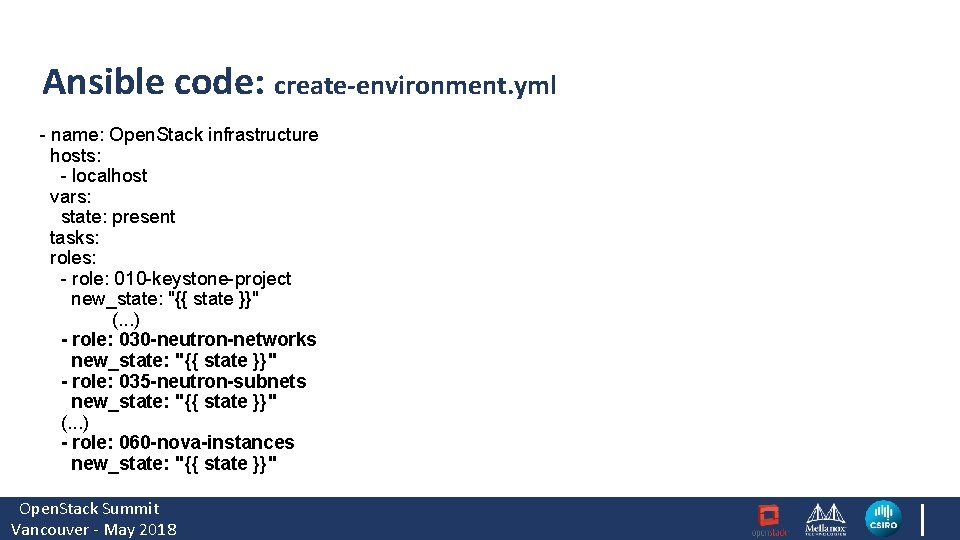

Ansible code: create-environment.yml

    - name: OpenStack infrastructure
      hosts:
        - localhost
      vars:
        state: present
      tasks:
      roles:
        - role: 010-keystone-project
          new_state: "{{ state }}"
        # (...)
        - role: 030-neutron-networks
          new_state: "{{ state }}"
        - role: 035-neutron-subnets
          new_state: "{{ state }}"
        # (...)
        - role: 060-nova-instances
          new_state: "{{ state }}"

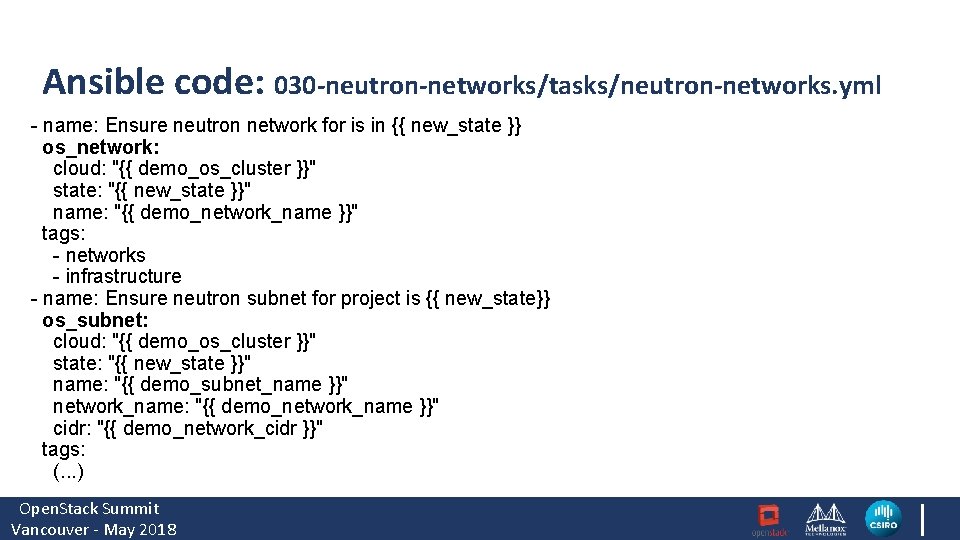

Ansible code: 030-neutron-networks/tasks/neutron-networks.yml

    # Create (or remove) the project network, depending on new_state
    - name: Ensure neutron network for project is {{ new_state }}
      os_network:
        cloud: "{{ demo_os_cluster }}"
        state: "{{ new_state }}"
        name: "{{ demo_network_name }}"
      tags:
        - networks
        - infrastructure

    # Create (or remove) the subnet on that network
    - name: Ensure neutron subnet for project is {{ new_state }}
      os_subnet:
        cloud: "{{ demo_os_cluster }}"
        state: "{{ new_state }}"
        name: "{{ demo_subnet_name }}"
        network_name: "{{ demo_network_name }}"
        cidr: "{{ demo_network_cidr }}"
      tags:  # (...)

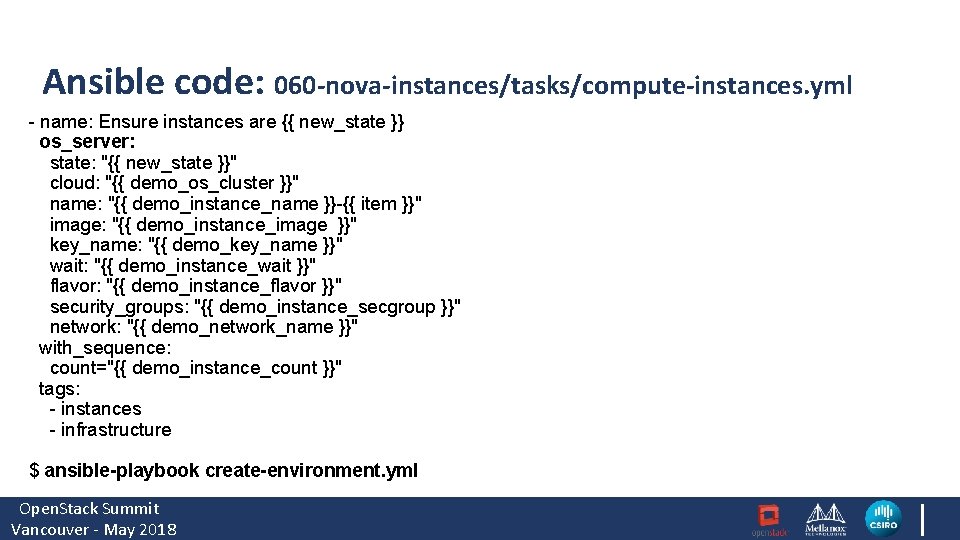

Ansible code: 060-nova-instances/tasks/compute-instances.yml

    - name: Ensure instances are {{ new_state }}
      os_server:
        state: "{{ new_state }}"
        cloud: "{{ demo_os_cluster }}"
        name: "{{ demo_instance_name }}-{{ item }}"
        image: "{{ demo_instance_image }}"
        key_name: "{{ demo_key_name }}"
        wait: "{{ demo_instance_wait }}"
        flavor: "{{ demo_instance_flavor }}"
        security_groups: "{{ demo_instance_secgroup }}"
        network: "{{ demo_network_name }}"
      with_sequence: count="{{ demo_instance_count }}"
      tags:
        - instances
        - infrastructure

Run the playbook:

    $ ansible-playbook create-environment.yml

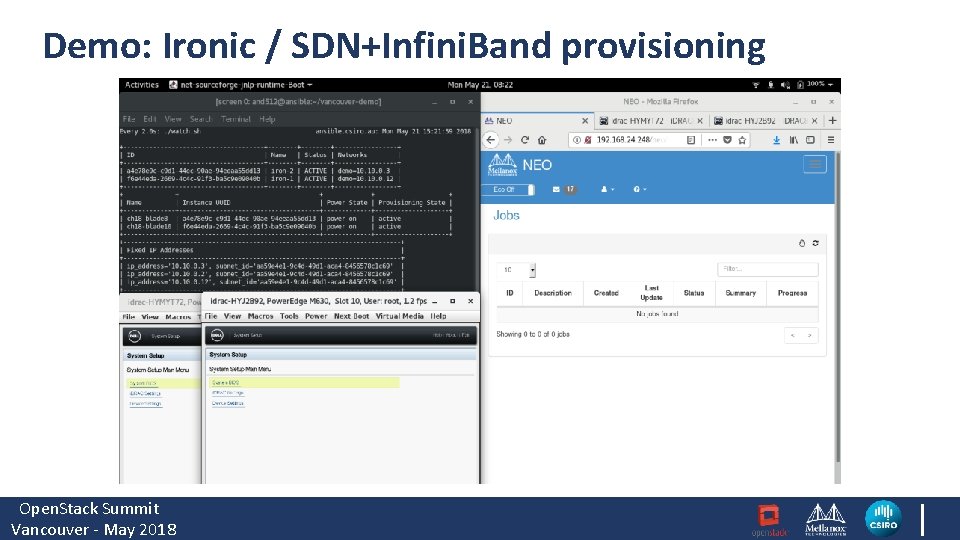

Demo: Ironic / SDN+InfiniBand provisioning

Summary
● OpenStack with Ironic/IB combines the flexibility of cloud with the raw performance of legacy HPC
● This enables us to apply DevOps and infrastructure-as-code methodology to scientific computing and to convert systems into applications
● Paving the way for lightweight, highly efficient next-gen scientific computing platforms
● Those new capabilities are of great value and will help CSIRO solve some of the most difficult scientific computing challenges


Future work
● Multi-port and/or multi-fabric configurations for even higher throughput and more flexible network topologies
● PCIe 3 bandwidth is becoming the bottleneck: can we have PCIe 4 please? :)
● Increasing the maximum network count (InfiniBand supports more network segments than Ethernet, but today's HCA firmware has some limitations)

“…line rate, or it didn’t
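The PCIe remark can be checked with back-of-the-envelope arithmetic. The sketch below assumes an x16 slot and counts only the 128b/130b line-encoding overhead; real throughput is somewhat lower once protocol overhead is included. The 100 Gb/s (EDR) and 200 Gb/s (HDR) link rates are the ones quoted earlier in this deck, and the function name is hypothetical.

```python
def pcie_usable_gbps(gt_per_s_per_lane: float, lanes: int = 16) -> float:
    """Approximate usable PCIe bandwidth in Gb/s after 128b/130b encoding."""
    return gt_per_s_per_lane * lanes * 128.0 / 130.0


PCIE3_X16 = pcie_usable_gbps(8.0)    # PCIe 3.0 signals at 8 GT/s per lane
PCIE4_X16 = pcie_usable_gbps(16.0)   # PCIe 4.0 signals at 16 GT/s per lane

EDR_GBPS = 100.0  # per port, from the deck
HDR_GBPS = 200.0  # per port, from the deck

if __name__ == "__main__":
    print("PCIe 3.0 x16: %.1f Gb/s" % PCIE3_X16)
    print("PCIe 4.0 x16: %.1f Gb/s" % PCIE4_X16)
    print("One HDR port fits in PCIe 3.0 x16:", HDR_GBPS <= PCIE3_X16)
    print("One HDR port fits in PCIe 4.0 x16:", HDR_GBPS <= PCIE4_X16)
```

Under these assumptions a single EDR port fits within a PCIe 3.0 x16 slot, while a single HDR port or a dual-port EDR adapter does not, which is consistent with the request for PCIe 4.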

Further Reading
• https://github.com/openstack/ironic/blob/master/doc/source/admin/multitenancy.rst
• https://community.mellanox.com/docs/DOC-2251
• https://specs.openstack.org/openstack/ironic-specs/6.2/add-infiniband-support.html
• https://tools.ietf.org/html/draft-kashyap-ipoib-dhcp-over-infiniband-00
