
INVESTIGATE AND PARALLEL PROCESSING USING E1350 IBM ESERVER CLUSTER

Ayaz ul Hassan Khan (g201002860)

OBJECTIVES

- Explore the architecture of the E1350 IBM eServer Cluster
- Parallel programming:
  - OpenMP
  - MPI
  - MPI+OpenMP
- Analyze the effects of the above programming models on speedup
- Find the overheads and optimize as much as possible

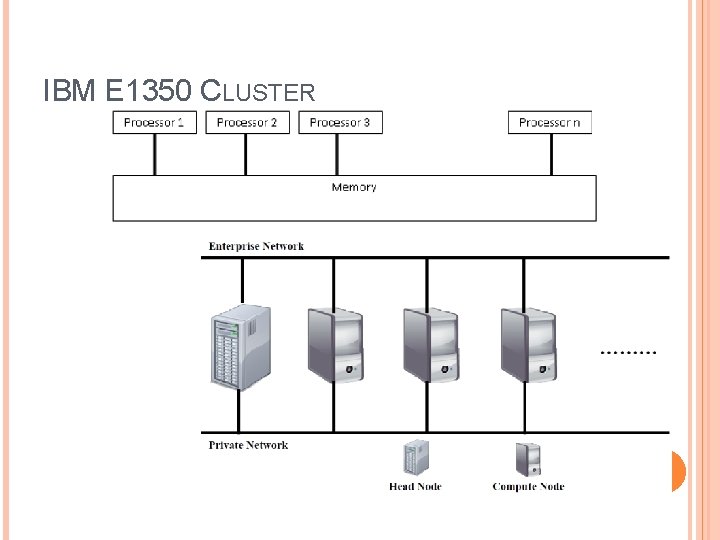

IBM E1350 CLUSTER

CLUSTER SYSTEM

- The cluster is unique in its dual-boot capability, with Microsoft Windows HPC Server 2008 and Red Hat Enterprise Linux 5 operating systems.
- The cluster has 3 master nodes: one for Red Hat Linux, one for Windows HPC Server 2008 and one for cluster management.
- The cluster has 128 compute nodes.
- Each compute node (x3550) is dual-processor, with two 2.0 GHz quad-core Xeon E5405 processors.
- The total number of cores in the cluster is 1024.
- Each master node has 1 TB of hard disk space and 8 GB of RAM.
- Each compute node has 500 GB of hard disk space and 4 GB of RAM.
- The interconnect is 10GBASE-SR.
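As a quick check, the stated core total follows directly from the node, processor and core counts given above:

$$128 \ \text{nodes} \times 2 \ \text{processors per node} \times 4 \ \text{cores per processor} = 1024 \ \text{cores}$$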

EXPERIMENTAL ENVIRONMENT

- Nodes: hpc081, hpc082, hpc083, hpc084
- Compilers:
  - icc: for sequential and OpenMP programs
  - mpiicc: for MPI and MPI+OpenMP programs
- Profiling tools:
  - ompP: for OpenMP profiling
  - mpiP: for MPI profiling

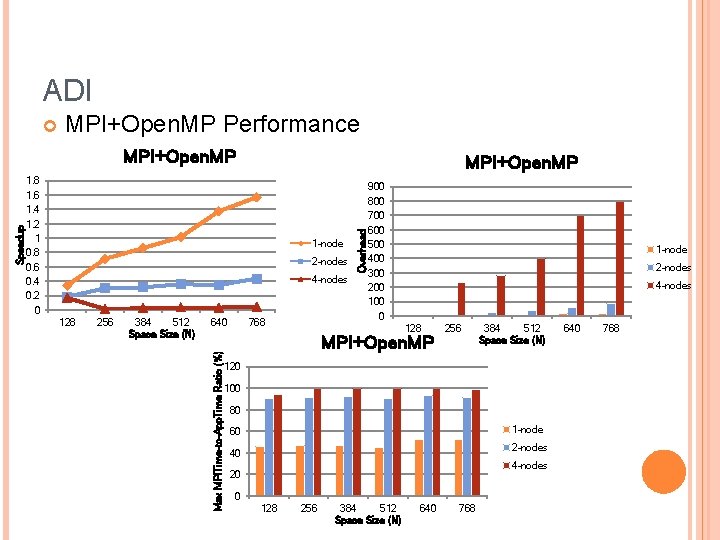

APPLICATIONS USED/IMPLEMENTED

- Jacobi Iterative Method
  - Max Speedup = 7.1 (OpenMP, Threads = 8)
  - Max Speedup = 3.7 (MPI, Nodes = 4)
  - Max Speedup = 9.3 (MPI+OpenMP, Nodes = 2, Threads = 8)
- Alternating Direction Integration (ADI)
  - Max Speedup = 5.0 (OpenMP, Threads = 8)
  - Max Speedup = 0.8 (MPI, Nodes = 1)
  - Max Speedup = 1.7 (MPI+OpenMP, Nodes = 1, Threads = 8)
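The slides report speedup figures without stating the formula. A minimal sketch, assuming the conventional definition (sequential time divided by parallel time) and the usual derived efficiency (speedup per worker); the function names `speedup` and `efficiency` are illustrative and do not come from the deck:

```c
#include <assert.h>

/* Speedup under the conventional definition: T_sequential / T_parallel.
   This definition is an assumption; the slides do not spell it out. */
double speedup(double t_seq, double t_par)
{
    assert(t_par > 0.0);
    return t_seq / t_par;
}

/* Parallel efficiency: speedup divided by the number of workers
   (threads or nodes). A value of 1.0 means perfect scaling. */
double efficiency(double t_seq, double t_par, int workers)
{
    assert(workers > 0);
    return speedup(t_seq, t_par) / workers;
}
```

Under this definition, the reported Jacobi OpenMP speedup of 7.1 on 8 threads corresponds to an efficiency of roughly 0.89, while any speedup below 1 (such as 0.8 for ADI with MPI) means the parallel version ran slower than the sequential one.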

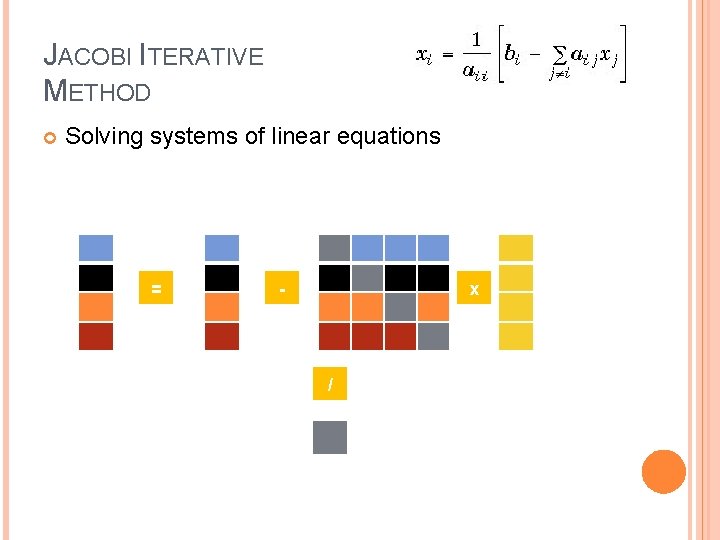

JACOBI ITERATIVE METHOD

Solving systems of linear equations $Ax = b$:

$$x_i^{(k+1)} = \frac{b_i - \sum_{j \ne i} a_{ij}\, x_j^{(k)}}{a_{ii}}$$

![JACOBI ITERATIVE METHOD Sequential Code](http://slidetodoc.com/presentation_image_h/df3162bd60e5c260ca14626022c07967/image-8.jpg)

JACOBI ITERATIVE METHOD Sequential Code

    for(i = 0; i < N; i++){
        x[i] = b[i];
    }
    for(i=0; i<N; i++){
        sum = 0.0;
        for(j=0; j<N; j++){
            if(i != j){
                sum += a[i][j] * x[j];
                new_x[i] = (b[i] - sum)/a[i][i];
            }
        }
    }
    for(i=0; i < N; i++)
        x[i] = new_x[i];

[Figure: sequential execution time (secs) vs. Space Size (N), N = 128 to 768]
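The sequential slide shows a single sweep. A self-contained sketch of the full method, assuming a fixed iteration count and a small fixed `N`; the function names `jacobi_sweep` and `jacobi_solve` are hypothetical, and the update is moved out of the inner loop, which gives the same result as the slide's code for `N > 1`:

```c
#include <assert.h>

#define N 3  /* illustrative problem size, not one of the sizes measured in the deck */

/* One Jacobi sweep: new_x[i] = (b[i] - sum over j != i of a[i][j]*x[j]) / a[i][i]. */
static void jacobi_sweep(double a[N][N], double b[N], double x[N], double new_x[N])
{
    for (int i = 0; i < N; i++) {
        double sum = 0.0;
        for (int j = 0; j < N; j++)
            if (j != i)
                sum += a[i][j] * x[j];
        assert(a[i][i] != 0.0);  /* Jacobi needs a nonzero diagonal */
        new_x[i] = (b[i] - sum) / a[i][i];
    }
}

/* Runs max_iter sweeps, starting from x = b as the slide's first loop does. */
void jacobi_solve(double a[N][N], double b[N], double x[N], int max_iter)
{
    double new_x[N];
    for (int i = 0; i < N; i++)
        x[i] = b[i];
    for (int k = 0; k < max_iter; k++) {
        jacobi_sweep(a, b, x, new_x);
        for (int i = 0; i < N; i++)
            x[i] = new_x[i];
    }
}
```

The iteration is only guaranteed to converge for suitable matrices, for example strictly diagonally dominant ones; a production version would normally also stop early once successive iterates are close enough.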

JACOBI ITERATIVE METHOD OpenMP Code

    #pragma omp parallel private(k, i, j, sum)
    {
        for(k = 0; k < MAX_ITER; k++){
            /* rows are divided among the threads */
            #pragma omp for
            for(i=0; i<N; i++){
                sum = 0.0;
                for(j=0; j<N; j++){
                    if(i != j){
                        sum += a[i][j] * x[j];
                        new_x[i] = (b[i] - sum)/a[i][i];
                    }
                }
            }
            /* copy the new iterate back into x */
            #pragma omp for
            for(i=0; i < N; i++)
                x[i] = new_x[i];
        }
    }
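The loop structure on this slide can be wrapped into a compilable function. The sketch below assumes a small fixed `N` and a hypothetical function name `jacobi_openmp`; it keeps the implicit barrier after each `omp for`, which corresponds to the "barrier" variant measured in the deck. Built without OpenMP support, the pragmas are ignored and the code runs sequentially with the same result.

```c
#include <assert.h>

#define N 4  /* illustrative problem size */

/* Jacobi iteration with the work-sharing layout of the slide:
   one parallel region, the k loop executed by every thread,
   and two work-shared loops per iteration. */
void jacobi_openmp(double a[N][N], double b[N], double x[N], int max_iter)
{
    double new_x[N];  /* declared outside the region, so shared by all threads */

    for (int i = 0; i < N; i++)
        x[i] = b[i];

    #pragma omp parallel
    {
        for (int k = 0; k < max_iter; k++) {
            #pragma omp for
            for (int i = 0; i < N; i++) {
                double sum = 0.0;  /* block-local, hence private per thread */
                for (int j = 0; j < N; j++)
                    if (j != i)
                        sum += a[i][j] * x[j];
                assert(a[i][i] != 0.0);
                new_x[i] = (b[i] - sum) / a[i][i];
            }   /* implicit barrier: new_x is complete before it is copied */

            #pragma omp for
            for (int i = 0; i < N; i++)
                x[i] = new_x[i];
            /* implicit barrier: x is complete before the next sweep reads it */
        }
    }
}
```

Declaring `sum` and the loop indices inside the region makes them private without a `private(...)` clause, which is an alternative to the slide's explicit clause rather than a change in behaviour.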

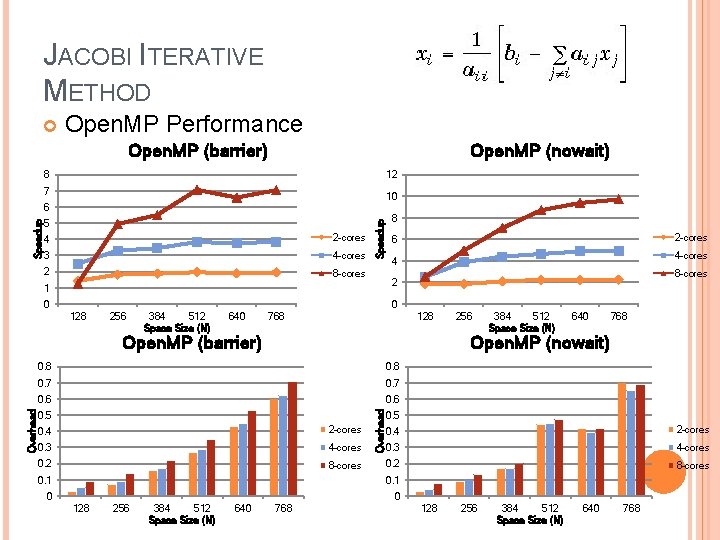

JACOBI ITERATIVE METHOD OpenMP Performance

[Figures: Speedup and Overhead vs. Space Size (N), N = 128 to 768, for 2, 4 and 8 cores; separate panels for OpenMP (barrier) and OpenMP (nowait)]

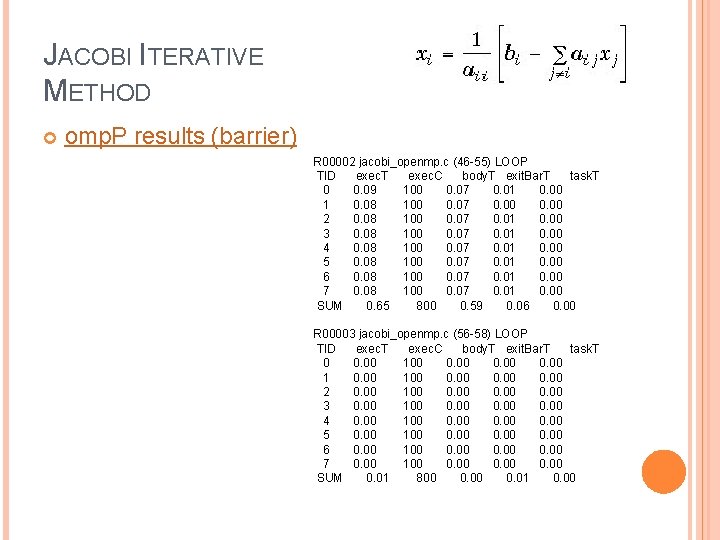

JACOBI ITERATIVE METHOD ompP results (barrier)

    R00002 jacobi_openmp.c (46-55) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.09  100  0.07  0.01  0.00
    1  0.08  100  0.07  0.00
    2  0.08  100  0.07  0.01  0.00
    3  0.08  100  0.07  0.01  0.00
    4  0.08  100  0.07  0.01  0.00
    5  0.08  100  0.07  0.01  0.00
    6  0.08  100  0.07  0.01  0.00
    7  0.08  100  0.07  0.01  0.00
    SUM  0.65  800  0.59  0.06  0.00

    R00003 jacobi_openmp.c (56-58) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.00  100  0.00
    2  0.00  100  0.00
    3  0.00  100  0.00
    4  0.00  100  0.00
    5  0.00  100  0.00
    6  0.00  100  0.00
    7  0.00  100  0.00
    SUM  0.01  800  0.01  0.00

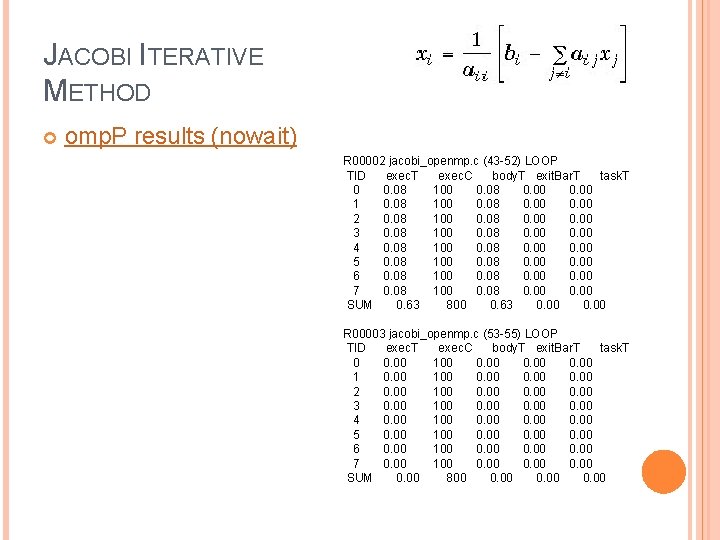

JACOBI ITERATIVE METHOD ompP results (nowait)

    R00002 jacobi_openmp.c (43-52) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.08  100  0.08  0.00
    1  0.08  100  0.08  0.00
    2  0.08  100  0.08  0.00
    3  0.08  100  0.08  0.00
    4  0.08  100  0.08  0.00
    5  0.08  100  0.08  0.00
    6  0.08  100  0.08  0.00
    7  0.08  100  0.08  0.00
    SUM  0.63  800  0.63  0.00

    R00003 jacobi_openmp.c (53-55) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.00  100  0.00
    2  0.00  100  0.00
    3  0.00  100  0.00
    4  0.00  100  0.00
    5  0.00  100  0.00
    6  0.00  100  0.00
    7  0.00  100  0.00
    SUM  0.00  800  0.00

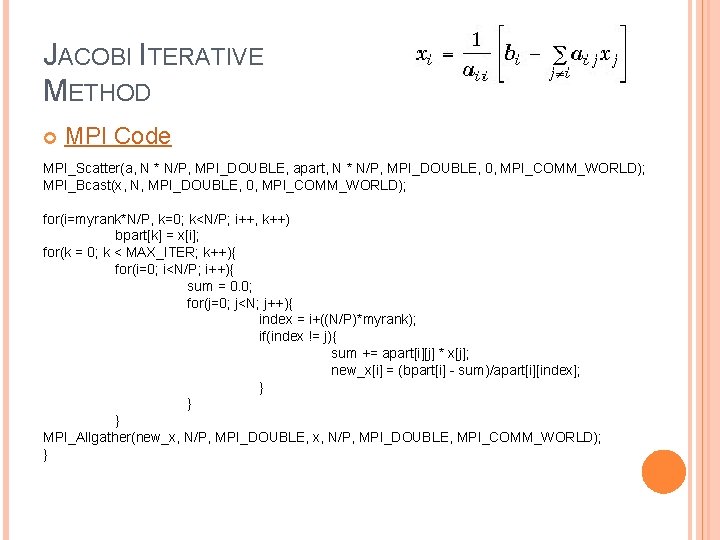

JACOBI ITERATIVE METHOD MPI Code

    /* each rank receives N/P rows of a; every rank gets the full x */
    MPI_Scatter(a, N * N/P, MPI_DOUBLE, apart, N * N/P, MPI_DOUBLE, 0, MPI_COMM_WORLD);
    MPI_Bcast(x, N, MPI_DOUBLE, 0, MPI_COMM_WORLD);
    for(i=myrank*N/P, k=0; k<N/P; i++, k++)
        bpart[k] = x[i];
    for(k = 0; k < MAX_ITER; k++){
        for(i=0; i<N/P; i++){
            sum = 0.0;
            for(j=0; j<N; j++){
                index = i+((N/P)*myrank);   /* global row of local row i */
                if(index != j){
                    sum += apart[i][j] * x[j];
                    new_x[i] = (bpart[i] - sum)/apart[i][index];
                }
            }
        }
        /* collect every rank's block of new_x into the full x for the next sweep */
        MPI_Allgather(new_x, N/P, MPI_DOUBLE, x, N/P, MPI_DOUBLE, MPI_COMM_WORLD);
    }
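The MPI version relies on a block-row decomposition: rank `myrank` owns `N/P` consecutive rows, and `index = i + (N/P)*myrank` maps a local row to its global row. A minimal sketch of that mapping in plain C, with no MPI calls; the helper names `block_start` and `global_row` are hypothetical, and `N` is assumed to be divisible by `P`, as the slide's `N/P` arithmetic implies:

```c
#include <assert.h>

/* First global row owned by `rank` when n rows are split evenly over p ranks. */
int block_start(int n, int p, int rank)
{
    assert(p > 0 && n % p == 0);      /* even split assumed, as on the slide */
    assert(rank >= 0 && rank < p);
    return rank * (n / p);
}

/* Global row index of local row i on `rank`; mirrors index = i + (N/P)*myrank. */
int global_row(int n, int p, int rank, int i)
{
    assert(i >= 0 && i < n / p);
    return block_start(n, p, rank) + i;
}
```

For example, with N = 768 and P = 4 each rank holds 192 rows, and the last rank's block starts at global row 576. The diagonal element needed by the Jacobi update for local row `i` is therefore `apart[i][global_row(N, P, myrank, i)]`.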

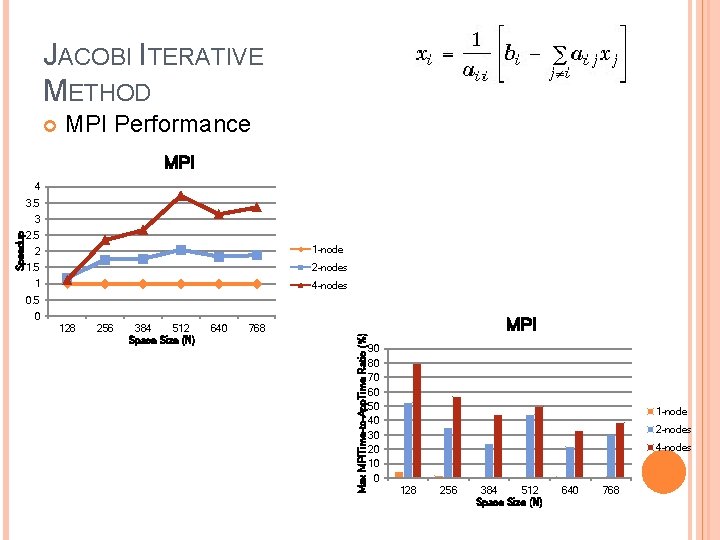

JACOBI ITERATIVE METHOD MPI Performance

[Figures: Speedup and Max MPITime-to-AppTime Ratio (%) vs. Space Size (N), N = 128 to 768, for 1, 2 and 4 nodes]

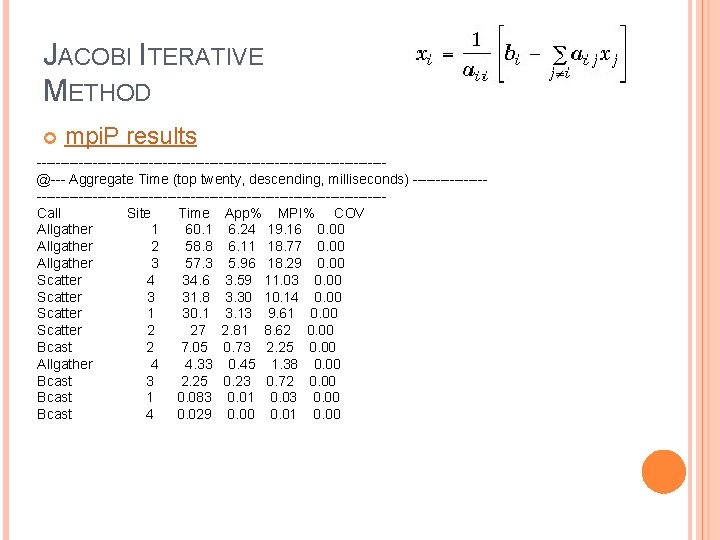

JACOBI ITERATIVE METHOD mpiP results

    @--- Aggregate Time (top twenty, descending, milliseconds) ---
    Call  Site  Time  App%  MPI%  COV
    Allgather  1  60.1  6.24  19.16  0.00
    Allgather  2  58.8  6.11  18.77  0.00
    Allgather  3  57.3  5.96  18.29  0.00
    Scatter  4  34.6  3.59  11.03  0.00
    Scatter  3  31.8  3.30  10.14  0.00
    Scatter  1  30.1  3.13  9.61  0.00
    Scatter  2  27  2.81  8.62  0.00
    Bcast  2  7.05  0.73  2.25  0.00
    Allgather  4  4.33  0.45  1.38  0.00
    Bcast  3  2.25  0.23  0.72  0.00
    Bcast  1  0.083  0.01  0.03  0.00
    Bcast  4  0.029  0.00  0.01  0.00

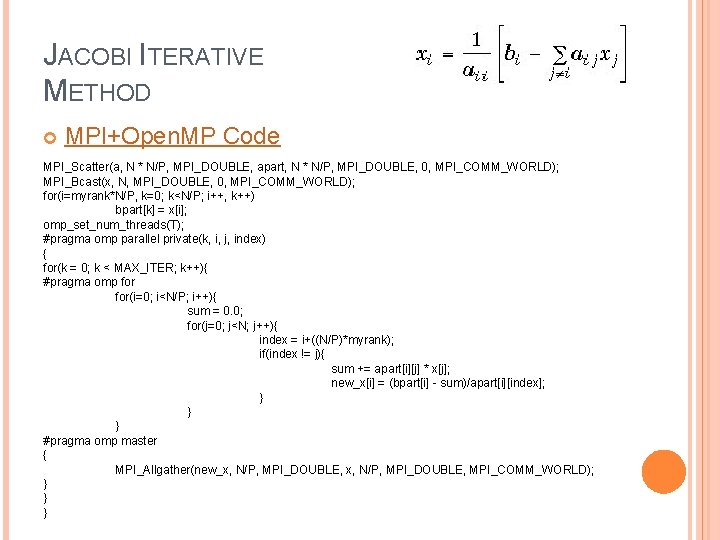

JACOBI ITERATIVE METHOD MPI+OpenMP Code

    MPI_Scatter(a, N * N/P, MPI_DOUBLE, apart, N * N/P, MPI_DOUBLE, 0, MPI_COMM_WORLD);
    MPI_Bcast(x, N, MPI_DOUBLE, 0, MPI_COMM_WORLD);
    for(i=myrank*N/P, k=0; k<N/P; i++, k++)
        bpart[k] = x[i];
    omp_set_num_threads(T);
    /* sum must be private as well, as in the OpenMP-only version */
    #pragma omp parallel private(k, i, j, index, sum)
    {
        for(k = 0; k < MAX_ITER; k++){
            #pragma omp for
            for(i=0; i<N/P; i++){
                sum = 0.0;
                for(j=0; j<N; j++){
                    index = i+((N/P)*myrank);
                    if(index != j){
                        sum += apart[i][j] * x[j];
                        new_x[i] = (bpart[i] - sum)/apart[i][index];
                    }
                }
            }
            /* only the master thread communicates */
            #pragma omp master
            {
                MPI_Allgather(new_x, N/P, MPI_DOUBLE, x, N/P, MPI_DOUBLE, MPI_COMM_WORLD);
            }
            /* note: master has no implied barrier; an explicit
               #pragma omp barrier here would be needed to guarantee that
               all threads read the gathered x in the next sweep */
        }
    }

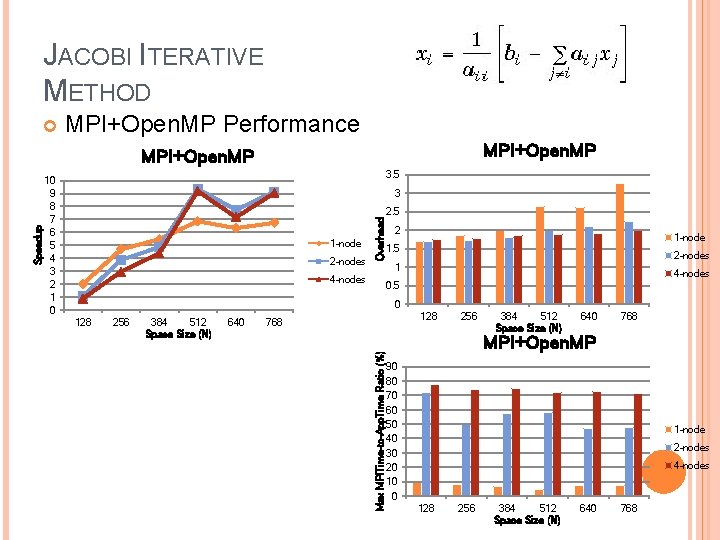

JACOBI ITERATIVE METHOD MPI+OpenMP Performance

[Figures: Speedup, Overhead and Max MPITime-to-AppTime Ratio (%) vs. Space Size (N), N = 128 to 768, for 1, 2 and 4 nodes]

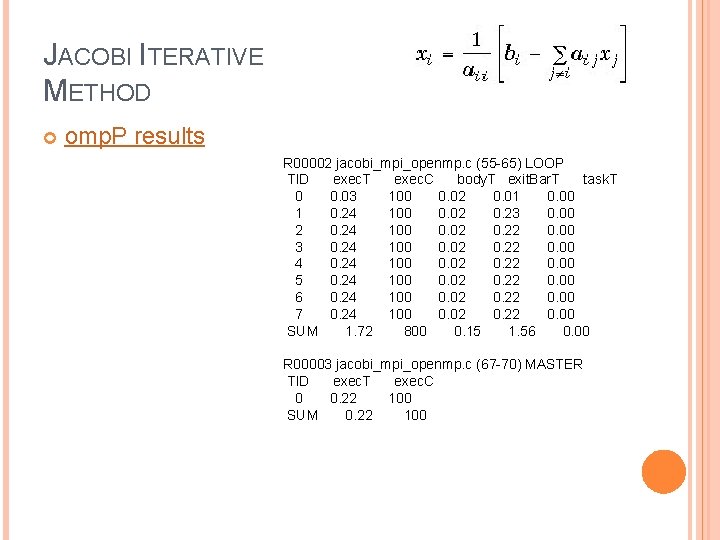

JACOBI ITERATIVE METHOD ompP results

    R00002 jacobi_mpi_openmp.c (55-65) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.03  100  0.02  0.01  0.00
    1  0.24  100  0.02  0.23  0.00
    2  0.24  100  0.02  0.22  0.00
    3  0.24  100  0.02  0.22  0.00
    4  0.24  100  0.02  0.22  0.00
    5  0.24  100  0.02  0.22  0.00
    6  0.24  100  0.02  0.22  0.00
    7  0.24  100  0.02  0.22  0.00
    SUM  1.72  800  0.15  1.56  0.00

    R00003 jacobi_mpi_openmp.c (67-70) MASTER
    TID  execT  execC
    0  0.22  100
    SUM  0.22  100

JACOBI ITERATIVE METHOD mpiP results

    @--- Aggregate Time (top twenty, descending, milliseconds) ---
    Call  Site  Time  App%  MPI%  COV
    Scatter  8  34.7  9.62  14.11  0.00
    Allgather  1  32.6  9.05  13.28  0.00
    Scatter  6  31.3  8.70  12.76  0.00
    Scatter  2  30.2  8.39  12.31  0.00
    Allgather  3  29.9  8.30  12.18  0.00
    Allgather  5  27.67  11.25  0.00
    Scatter  4  27.1  7.51  11.02  0.00
    Allgather  7  22.1  6.14  9.00  0.00
    Bcast  4  7.12  1.98  2.90  0.00
    Bcast  6  2.81  0.78  1.14  0.00
    Bcast  2  0.09  0.02  0.04  0.00
    Bcast  8  0.033  0.01  0.00

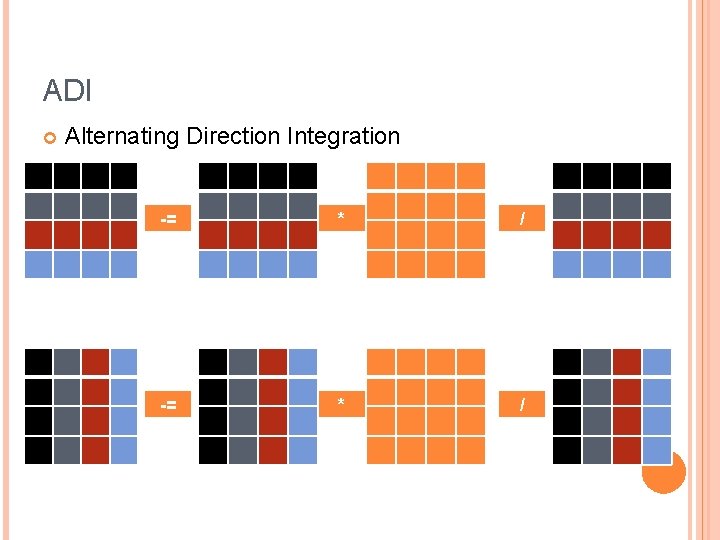

ADI

Alternating Direction Integration

$$x_{i,j} \leftarrow x_{i,j} - x_{i,j-1}\,\frac{a_{i,j}}{b_{i,j-1}}$$

ADI Sequential Code

    //////ADI forward & backward sweep along rows//////
    for (i = 0; i < N; i++){
        for (j = 1; j < N; j++){
            x[i][j] = x[i][j]-x[i][j-1]*a[i][j]/b[i][j-1];
            b[i][j]= b[i][j] - a[i][j]*a[i][j]/b[i][j-1];
        }
        x[i][N-1] = x[i][N-1]/b[i][N-1];
    }
    for (i = 0; i < N; i++)
        for (j = N-2; j > 1; j--)
            x[i][j]=(x[i][j]-a[i][j+1]*x[i][j+1])/b[i][j];
    ////// ADI forward & backward sweep along columns//////
    for (j = 0; j < N; j++){
        for (i = 1; i < N; i++){
            x[i][j] = x[i][j]-x[i-1][j]*a[i][j]/b[i-1][j];
            b[i][j]= b[i][j] - a[i][j]*a[i][j]/b[i-1][j];
        }
        x[N-1][j] = x[N-1][j]/b[N-1][j];
    }
    for (j = 0; j < N; j++)
        for (i = N-2; i > 1; i--)
            x[i][j]=(x[i][j]-a[i+1][j]*x[i+1][j])/b[i][j];

[Figure: sequential execution time (secs) vs. Space Size (N), N = 128 to 768]
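Each row sweep above is independent of the other rows, which is what makes the row loop parallelizable. A minimal sketch of the sweep for a single row, using 1-D arrays in place of one row of `x`, `a` and `b`; the function name `adi_row_sweep` and the small `N` are illustrative assumptions, and the loop bounds are copied from the slide as-is:

```c
#include <assert.h>

#define N 4  /* illustrative row length */

/* Forward elimination followed by backward substitution on one row.
   x, a and b stand for x[i][*], a[i][*] and b[i][*] of the slide. */
void adi_row_sweep(double x[N], double a[N], double b[N])
{
    /* forward sweep: each element depends on its left neighbour */
    for (int j = 1; j < N; j++) {
        assert(b[j - 1] != 0.0);
        x[j] = x[j] - x[j - 1] * a[j] / b[j - 1];
        b[j] = b[j] - a[j] * a[j] / b[j - 1];
    }
    x[N - 1] = x[N - 1] / b[N - 1];

    /* backward sweep: each element depends on its right neighbour;
       the bound j > 1 follows the slide, so elements 0 and 1 are not updated */
    for (int j = N - 2; j > 1; j--)
        x[j] = (x[j] - a[j + 1] * x[j + 1]) / b[j];
}
```

The dependence on `j-1` and `j+1` runs only along the row, so different rows can be handed to different threads or ranks. The column sweep has the same shape with the roles of `i` and `j` exchanged.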

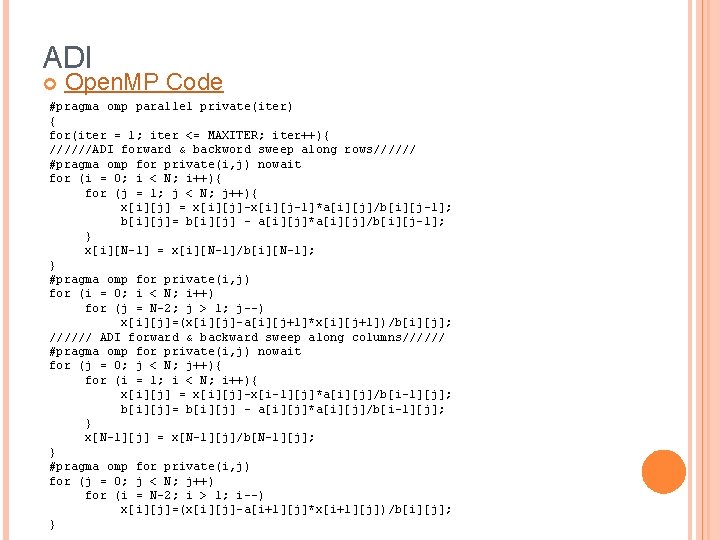

ADI OpenMP Code

    #pragma omp parallel private(iter)
    {
        for(iter = 1; iter <= MAXITER; iter++){
            //////ADI forward & backward sweep along rows//////
            #pragma omp for private(i, j) nowait
            for (i = 0; i < N; i++){
                for (j = 1; j < N; j++){
                    x[i][j] = x[i][j]-x[i][j-1]*a[i][j]/b[i][j-1];
                    b[i][j]= b[i][j] - a[i][j]*a[i][j]/b[i][j-1];
                }
                x[i][N-1] = x[i][N-1]/b[i][N-1];
            }
            #pragma omp for private(i, j)
            for (i = 0; i < N; i++)
                for (j = N-2; j > 1; j--)
                    x[i][j]=(x[i][j]-a[i][j+1]*x[i][j+1])/b[i][j];
            ////// ADI forward & backward sweep along columns//////
            #pragma omp for private(i, j) nowait
            for (j = 0; j < N; j++){
                for (i = 1; i < N; i++){
                    x[i][j] = x[i][j]-x[i-1][j]*a[i][j]/b[i-1][j];
                    b[i][j]= b[i][j] - a[i][j]*a[i][j]/b[i-1][j];
                }
                x[N-1][j] = x[N-1][j]/b[N-1][j];
            }
            #pragma omp for private(i, j)
            for (j = 0; j < N; j++)
                for (i = N-2; i > 1; i--)
                    x[i][j]=(x[i][j]-a[i+1][j]*x[i+1][j])/b[i][j];
        }
    }

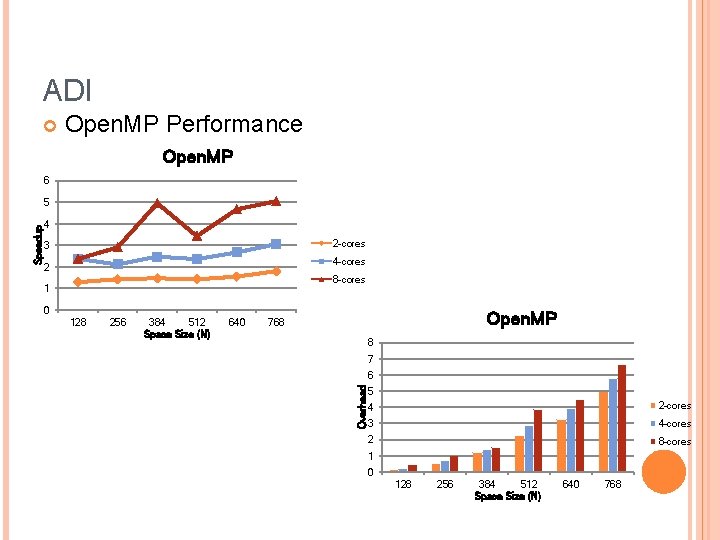

ADI OpenMP Performance

[Figures: Speedup and Overhead vs. Space Size (N), N = 128 to 768, for 2, 4 and 8 cores]

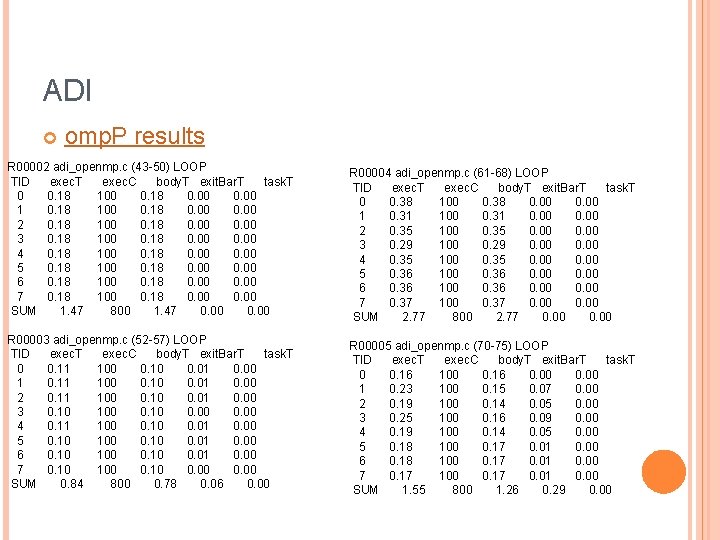

ADI ompP results

    R00002 adi_openmp.c (43-50) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.18  100  0.18  0.00
    1  0.18  100  0.18  0.00
    2  0.18  100  0.18  0.00
    3  0.18  100  0.18  0.00
    4  0.18  100  0.18  0.00
    5  0.18  100  0.18  0.00
    6  0.18  100  0.18  0.00
    7  0.18  100  0.18  0.00
    SUM  1.47  800  1.47  0.00

    R00004 adi_openmp.c (61-68) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.38  100  0.38  0.00
    1  0.31  100  0.31  0.00
    2  0.35  100  0.35  0.00
    3  0.29  100  0.29  0.00
    4  0.35  100  0.35  0.00
    5  0.36  100  0.36  0.00
    6  0.36  100  0.36  0.00
    7  0.37  100  0.37  0.00
    SUM  2.77  800  2.77  0.00

    R00003 adi_openmp.c (52-57) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.11  100  0.10  0.01  0.00
    1  0.11  100  0.10  0.01  0.00
    2  0.11  100  0.10  0.01  0.00
    3  0.10  100  0.10  0.00
    4  0.11  100  0.10  0.01  0.00
    5  0.10  100  0.10  0.01  0.00
    6  0.10  100  0.10  0.01  0.00
    7  0.10  100  0.10  0.00
    SUM  0.84  800  0.78  0.06  0.00

    R00005 adi_openmp.c (70-75) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.16  100  0.16  0.00
    1  0.23  100  0.15  0.07  0.00
    2  0.19  100  0.14  0.05  0.00
    3  0.25  100  0.16  0.09  0.00
    4  0.19  100  0.14  0.05  0.00
    5  0.18  100  0.17  0.01  0.00
    6  0.18  100  0.17  0.01  0.00
    7  0.17  100  0.17  0.01  0.00
    SUM  1.55  800  1.26  0.29  0.00

ADI MPI Code

    MPI_Bcast(a, N * N, MPI_FLOAT, 0, MPI_COMM_WORLD);
    MPI_Scatter(x, N * N/P, MPI_FLOAT, xpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
    MPI_Scatter(b, N * N/P, MPI_FLOAT, bpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
    for(i=myrank*(N/P), k=0; k<N/P; i++, k++)
        for(j=0; j<N; j++)
            apart[k][j] = a[i][j];
    /* 2*MAXITER passes: row sweep and, after the transpose, column sweep */
    for(iter = 1; iter <= 2*MAXITER; iter++){
        //////ADI forward & backward sweep along rows//////
        for (i = 0; i < N/P; i++){
            for (j = 1; j < N; j++){
                xpart[i][j] = xpart[i][j]-xpart[i][j-1]*apart[i][j]/bpart[i][j-1];
                bpart[i][j]= bpart[i][j] - apart[i][j]*apart[i][j]/bpart[i][j-1];
            }
            xpart[i][N-1] = xpart[i][N-1]/bpart[i][N-1];
        }
        for (i = 0; i < N/P; i++){
            for (j = N-2; j > 1; j--)
                xpart[i][j]=(xpart[i][j]-apart[i][j+1]*xpart[i][j+1])/bpart[i][j];
        }

ADI MPI Code (continued)

        MPI_Gather(xpart, N*N/P, MPI_FLOAT, x, N*N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
        MPI_Gather(bpart, N*N/P, MPI_FLOAT, b, N*N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
        //transpose matrices
        trans(x, N, N);
        trans(b, N, N);
        trans(a, N, N);
        MPI_Scatter(x, N * N/P, MPI_FLOAT, xpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
        MPI_Scatter(b, N * N/P, MPI_FLOAT, bpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
        for(i=myrank*(N/P), k=0; k<N/P; i++, k++)
            for(j=0; j<N; j++)
                apart[k][j] = a[i][j];
    }
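The MPI version turns the column sweep into a second row sweep by transposing the matrices between passes. The deck does not show the body of `trans`, so the following is only a sketch of what such a helper might look like, assuming a square matrix stored as a fixed-size 2-D `float` array and an in-place swap across the diagonal:

```c
#include <assert.h>

#define N 3  /* illustrative matrix size */

/* In-place transpose of a square matrix: swaps m[i][j] with m[j][i]
   for every pair above the diagonal. The (matrix, rows, cols) signature
   mirrors the calls on the slide; the body is an assumption. */
void trans(float m[N][N], int rows, int cols)
{
    assert(rows == cols && rows <= N);  /* in-place swap needs a square matrix */
    for (int i = 0; i < rows; i++) {
        for (int j = i + 1; j < cols; j++) {
            float tmp = m[i][j];
            m[i][j] = m[j][i];
            m[j][i] = tmp;
        }
    }
}
```

In the slide's scheme the transpose runs on the gathered full matrices between a gather and a scatter, so every pass moves the whole of `x` and `b` through rank 0. That matches the mpiP profile, where Gather and Scatter account for nearly all of the MPI time.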

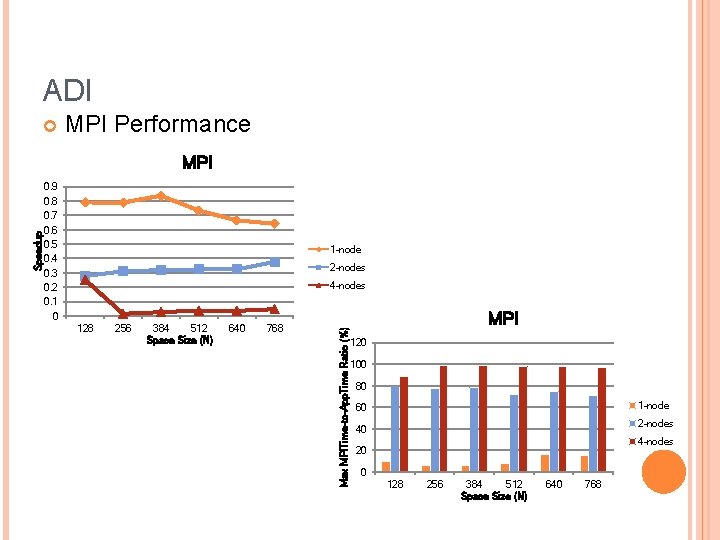

ADI MPI Performance

[Figures: Speedup and Max MPITime-to-AppTime Ratio (%) vs. Space Size (N), N = 128 to 768, for 1, 2 and 4 nodes]

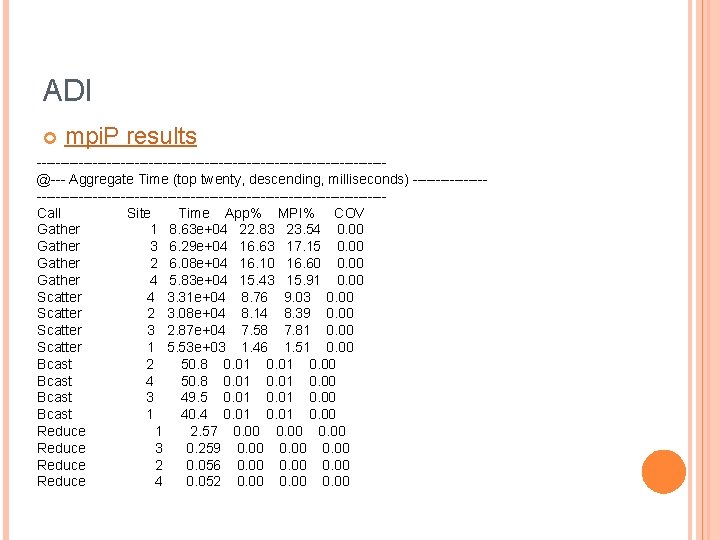

ADI mpiP results

    @--- Aggregate Time (top twenty, descending, milliseconds) ---
    Call  Site  Time  App%  MPI%  COV
    Gather  1  8.63e+04  22.83  23.54  0.00
    Gather  3  6.29e+04  16.63  17.15  0.00
    Gather  2  6.08e+04  16.10  16.60  0.00
    Gather  4  5.83e+04  15.43  15.91  0.00
    Scatter  4  3.31e+04  8.76  9.03  0.00
    Scatter  2  3.08e+04  8.14  8.39  0.00
    Scatter  3  2.87e+04  7.58  7.81  0.00
    Scatter  1  5.53e+03  1.46  1.51  0.00
    Bcast  2  50.8  0.01  0.00
    Bcast  4  50.8  0.01  0.00
    Bcast  3  49.5  0.01  0.00
    Bcast  1  40.4  0.01  0.00
    Reduce  1  2.57  0.00
    Reduce  3  0.259  0.00
    Reduce  2  0.056  0.00
    Reduce  4  0.052  0.00

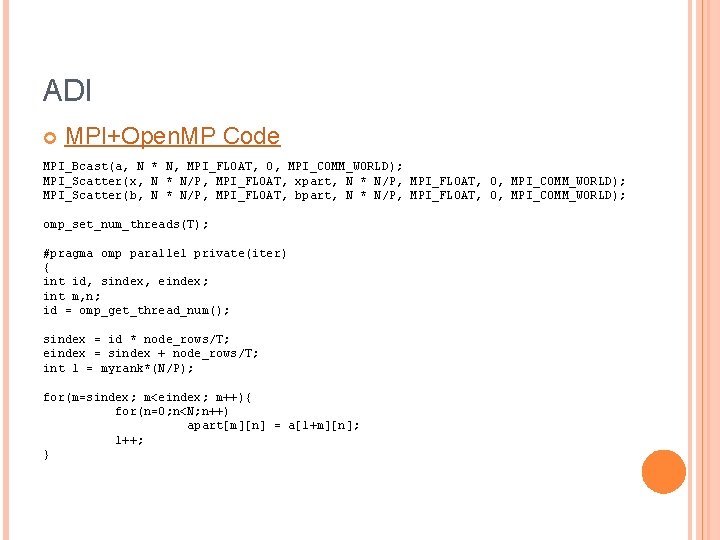

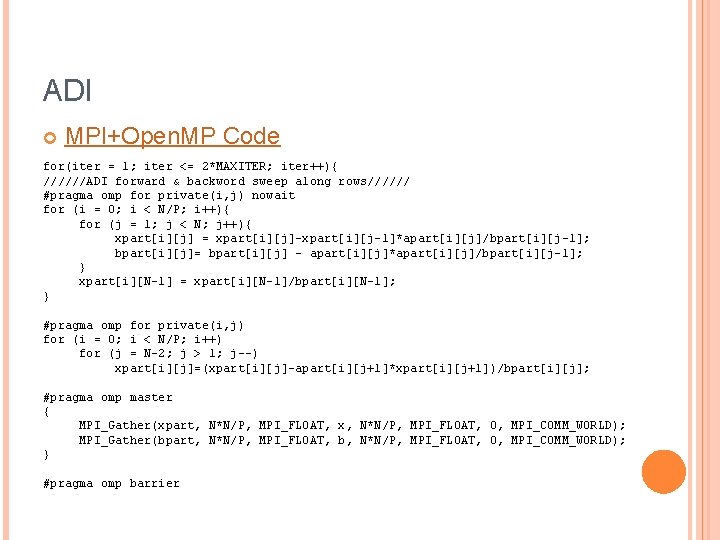

ADI MPI+OpenMP Code

    MPI_Bcast(a, N * N, MPI_FLOAT, 0, MPI_COMM_WORLD);
    MPI_Scatter(x, N * N/P, MPI_FLOAT, xpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
    MPI_Scatter(b, N * N/P, MPI_FLOAT, bpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
    omp_set_num_threads(T);
    #pragma omp parallel private(iter)
    {
        int id, sindex, eindex;
        int m, n;
        id = omp_get_thread_num();
        sindex = id * node_rows/T;
        eindex = sindex + node_rows/T;
        int l = myrank*(N/P);
        for(m=sindex; m<eindex; m++){
            for(n=0; n<N; n++)
                apart[m][n] = a[l+m][n];
            l++;
        }

ADI MPI+OpenMP Code

        for(iter = 1; iter <= 2*MAXITER; iter++){
            //////ADI forward & backward sweep along rows//////
            #pragma omp for private(i, j) nowait
            for (i = 0; i < N/P; i++){
                for (j = 1; j < N; j++){
                    xpart[i][j] = xpart[i][j]-xpart[i][j-1]*apart[i][j]/bpart[i][j-1];
                    bpart[i][j]= bpart[i][j] - apart[i][j]*apart[i][j]/bpart[i][j-1];
                }
                xpart[i][N-1] = xpart[i][N-1]/bpart[i][N-1];
            }
            #pragma omp for private(i, j)
            for (i = 0; i < N/P; i++)
                for (j = N-2; j > 1; j--)
                    xpart[i][j]=(xpart[i][j]-apart[i][j+1]*xpart[i][j+1])/bpart[i][j];
            #pragma omp master
            {
                MPI_Gather(xpart, N*N/P, MPI_FLOAT, x, N*N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
                MPI_Gather(bpart, N*N/P, MPI_FLOAT, b, N*N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
            }
            #pragma omp barrier

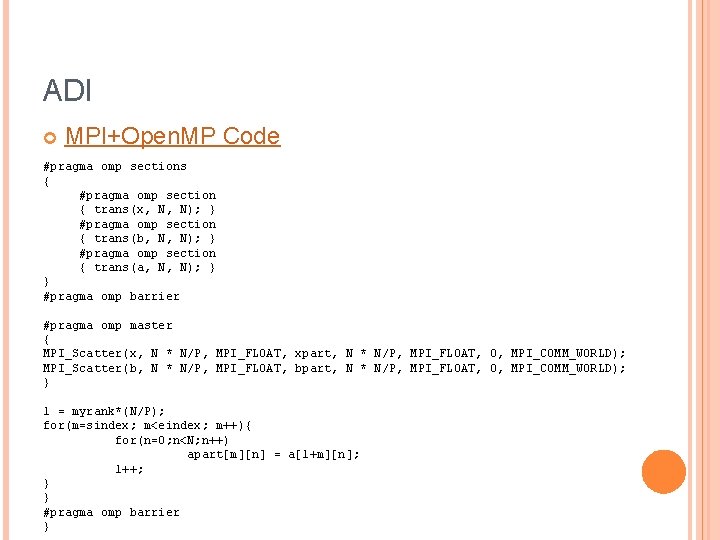

ADI MPI+OpenMP Code

            #pragma omp sections
            {
                #pragma omp section
                { trans(x, N, N); }
                #pragma omp section
                { trans(b, N, N); }
                #pragma omp section
                { trans(a, N, N); }
            }
            #pragma omp barrier
            #pragma omp master
            {
                MPI_Scatter(x, N * N/P, MPI_FLOAT, xpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
                MPI_Scatter(b, N * N/P, MPI_FLOAT, bpart, N * N/P, MPI_FLOAT, 0, MPI_COMM_WORLD);
            }
            l = myrank*(N/P);
            for(m=sindex; m<eindex; m++){
                for(n=0; n<N; n++)
                    apart[m][n] = a[l+m][n];
                l++;
            }
        }
        #pragma omp barrier
    }

ADI MPI+OpenMP Performance

[Figures: Speedup, Overhead and Max MPITime-to-AppTime Ratio (%) vs. Space Size (N), N = 128 to 768, for 1, 2 and 4 nodes]

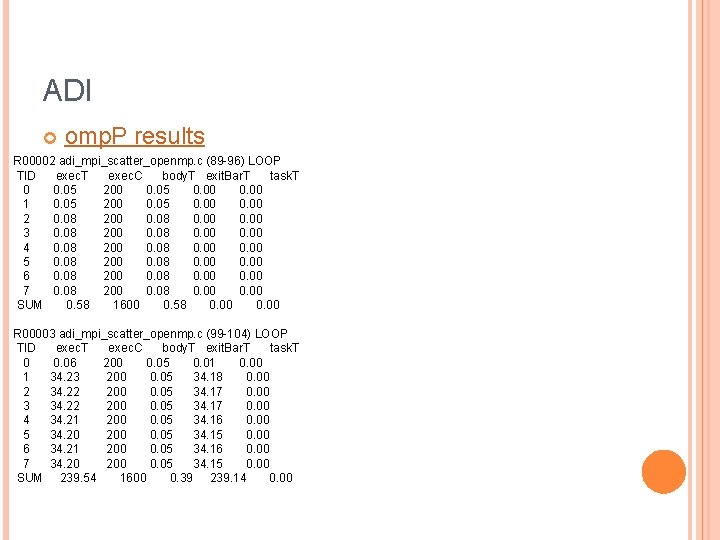

ADI ompP results

    R00002 adi_mpi_scatter_openmp.c (89-96) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.05  200  0.05  0.00
    1  0.05  200  0.05  0.00
    2  0.08  200  0.08  0.00
    3  0.08  200  0.08  0.00
    4  0.08  200  0.08  0.00
    5  0.08  200  0.08  0.00
    6  0.08  200  0.08  0.00
    7  0.08  200  0.08  0.00
    SUM  0.58  1600  0.58  0.00

    R00003 adi_mpi_scatter_openmp.c (99-104) LOOP
    TID  execT  execC  bodyT  exitBarT  taskT
    0  0.06  200  0.05  0.01  0.00
    1  34.23  200  0.05  34.18  0.00
    2  34.22  200  0.05  34.17  0.00
    3  34.22  200  0.05  34.17  0.00
    4  34.21  200  0.05  34.16  0.00
    5  34.20  200  0.05  34.15  0.00
    6  34.21  200  0.05  34.16  0.00
    7  34.20  200  0.05  34.15  0.00
    SUM  239.54  1600  0.39  239.14  0.00

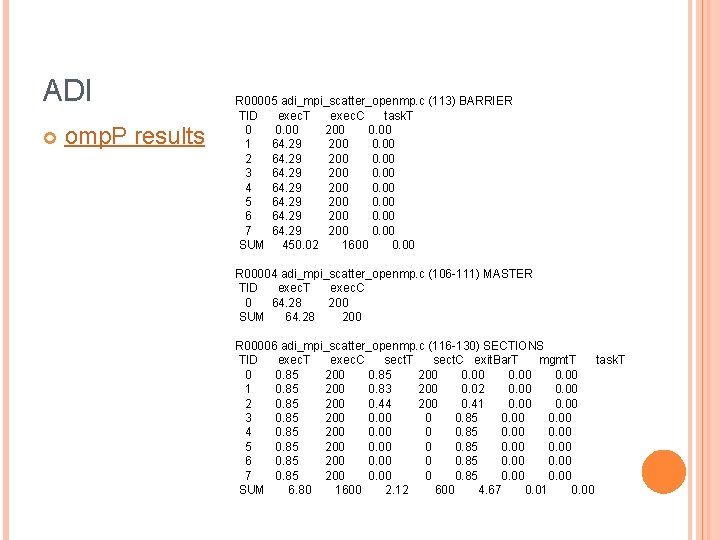

ADI ompP results

    R00005 adi_mpi_scatter_openmp.c (113) BARRIER
    TID  execT  execC  taskT
    0  0.00  200  0.00
    1  64.29  200  0.00
    2  64.29  200  0.00
    3  64.29  200  0.00
    4  64.29  200  0.00
    5  64.29  200  0.00
    6  64.29  200  0.00
    7  64.29  200  0.00
    SUM  450.02  1600  0.00

    R00004 adi_mpi_scatter_openmp.c (106-111) MASTER
    TID  execT  execC
    0  64.28  200
    SUM  64.28  200

    R00006 adi_mpi_scatter_openmp.c (116-130) SECTIONS
    TID  execT  execC  sectT  sectC  exitBarT  mgmtT  taskT
    0  0.85  200  0.00
    1  0.85  200  0.83  200  0.02  0.00
    2  0.85  200  0.44  200  0.41  0.00
    3  0.85  200  0  0.85  0.00
    4  0.85  200  0  0.85  0.00
    5  0.85  200  0  0.85  0.00
    6  0.85  200  0  0.85  0.00
    7  0.85  200  0  0.85  0.00
    SUM  6.80  1600  2.12  600  4.67  0.01  0.00

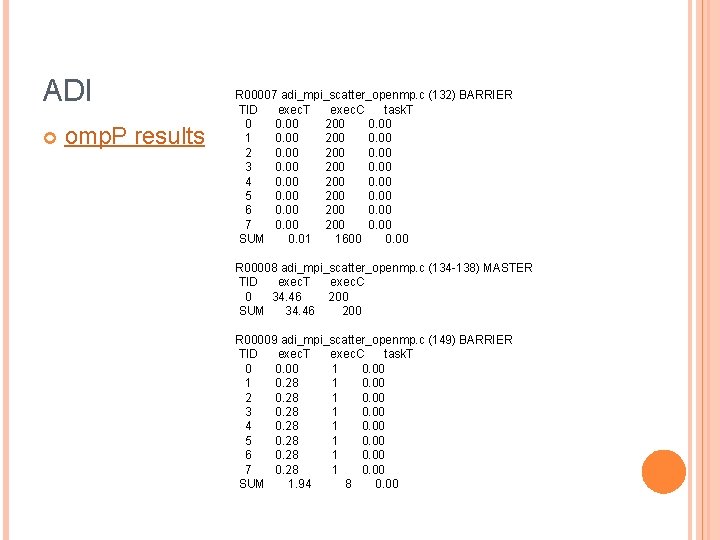

ADI ompP results

    R00007 adi_mpi_scatter_openmp.c (132) BARRIER
    TID  execT  execC  taskT
    0  0.00  200  0.00
    1  0.00  200  0.00
    3  0.00  200  0.00
    4  0.00  200  0.00
    5  0.00  200  0.00
    6  0.00  200  0.00
    7  0.00  200  0.00
    SUM  0.01  1600  0.00

    R00008 adi_mpi_scatter_openmp.c (134-138) MASTER
    TID  execT  execC
    0  34.46  200
    SUM  34.46  200

    R00009 adi_mpi_scatter_openmp.c (149) BARRIER
    TID  execT  execC  taskT
    0  0.00
    1  0.28  1  0.00
    2  0.28  1  0.00
    3  0.28  1  0.00
    4  0.28  1  0.00
    5  0.28  1  0.00
    6  0.28  1  0.00
    7  0.28  1  0.00
    SUM  1.94  8  0.00

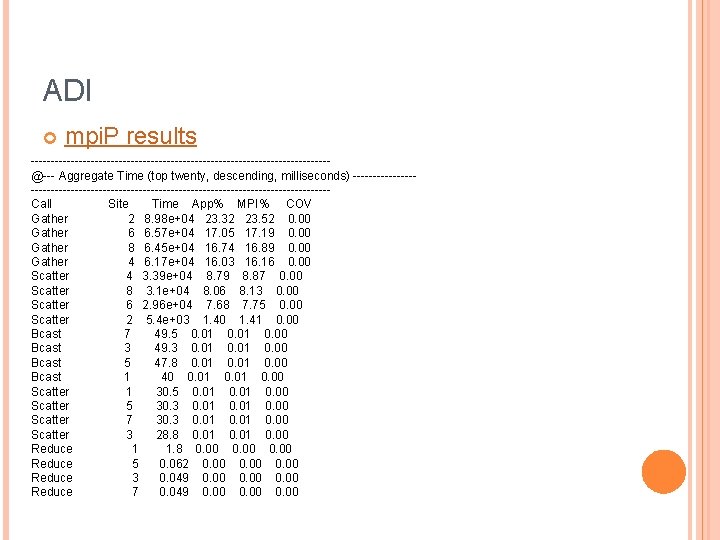

ADI mpiP results

    @--- Aggregate Time (top twenty, descending, milliseconds) ---
    Call  Site  Time  App%  MPI%  COV
    Gather  2  8.98e+04  23.32  23.52  0.00
    Gather  6  6.57e+04  17.05  17.19  0.00
    Gather  8  6.45e+04  16.74  16.89  0.00
    Gather  4  6.17e+04  16.03  16.16  0.00
    Scatter  4  3.39e+04  8.79  8.87  0.00
    Scatter  8  3.1e+04  8.06  8.13  0.00
    Scatter  6  2.96e+04  7.68  7.75  0.00
    Scatter  2  5.4e+03  1.40  1.41  0.00
    Bcast  7  49.5  0.01  0.00
    Bcast  3  49.3  0.01  0.00
    Bcast  5  47.8  0.01  0.00
    Bcast  1  40  0.01  0.00
    Scatter  1  30.5  0.01  0.00
    Scatter  5  30.3  0.01  0.00
    Scatter  7  30.3  0.01  0.00
    Scatter  3  28.8  0.01  0.00
    Reduce  1  1.8  0.00
    Reduce  5  0.062  0.00
    Reduce  3  0.049  0.00
    Reduce  7  0.049  0.00

THANKS Q&A Any Suggestions?
