
Introduction: What is machine learning? (Machine Learning, Andrew Ng)

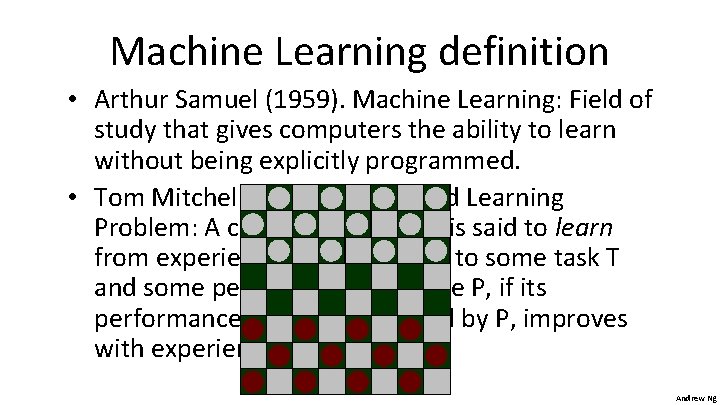

Machine Learning definition

- Arthur Samuel (1959). Machine Learning: Field of study that gives computers the ability to learn without being explicitly programmed.
- Tom Mitchell (1998). Well-posed Learning Problem: A computer program is said to learn from experience E with respect to some task T and some performance measure P, if its performance on T, as measured by P, improves with experience E.

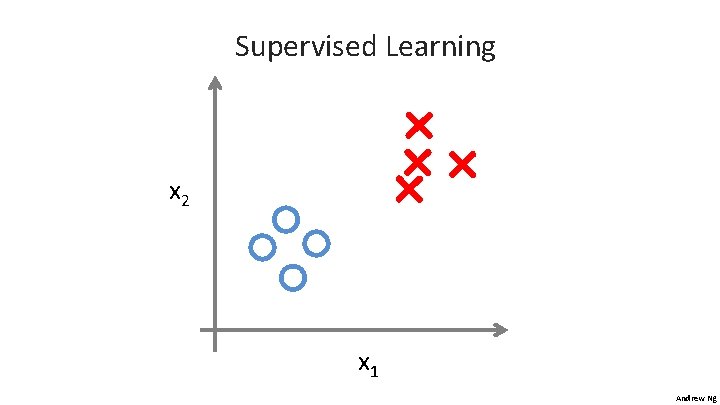

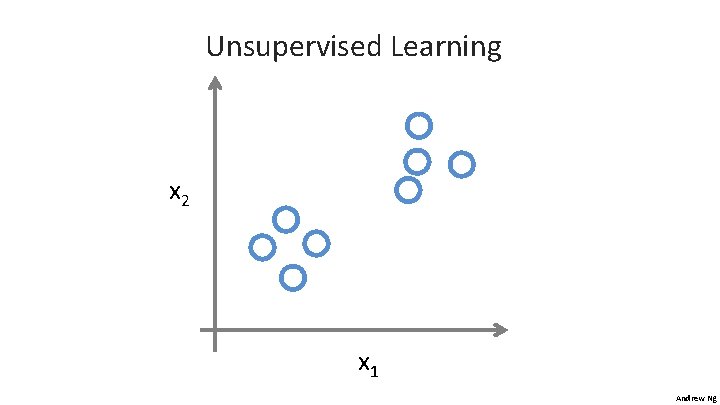

Machine learning algorithms:

- Supervised learning
- Unsupervised learning

Others: reinforcement learning, recommender systems.

Also covered: practical advice for applying learning algorithms.

Introduction: Supervised Learning

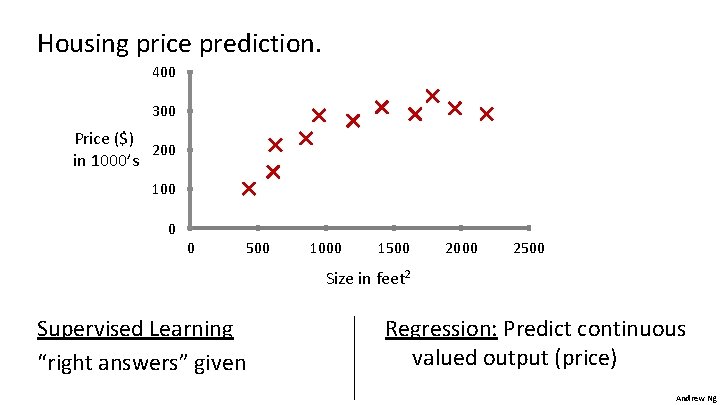

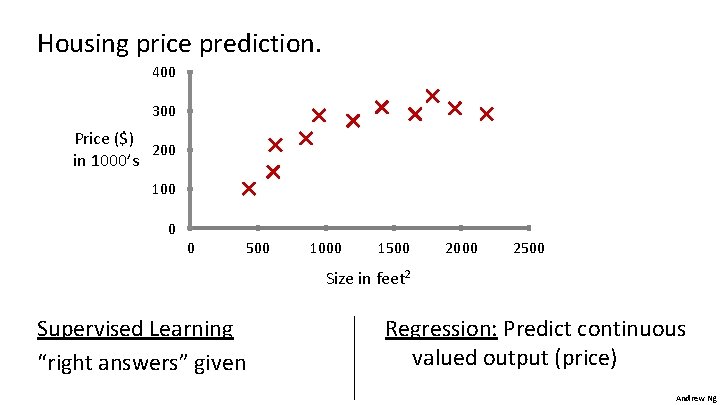

Housing price prediction (plot: price in 1000s of dollars vs. size in feet²).

Supervised learning: the “right answers” are given.

Regression: predict a continuous-valued output (price).

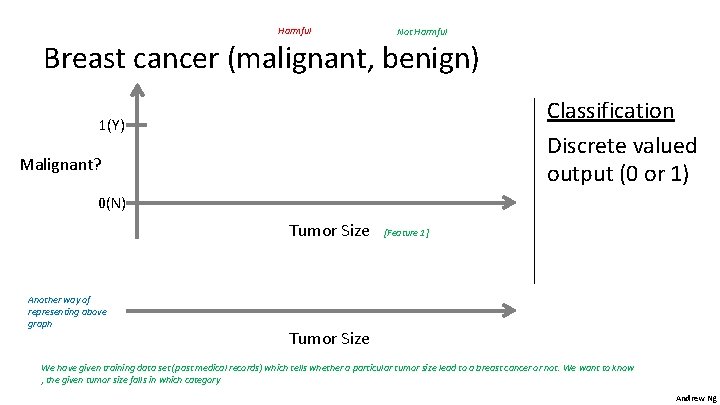

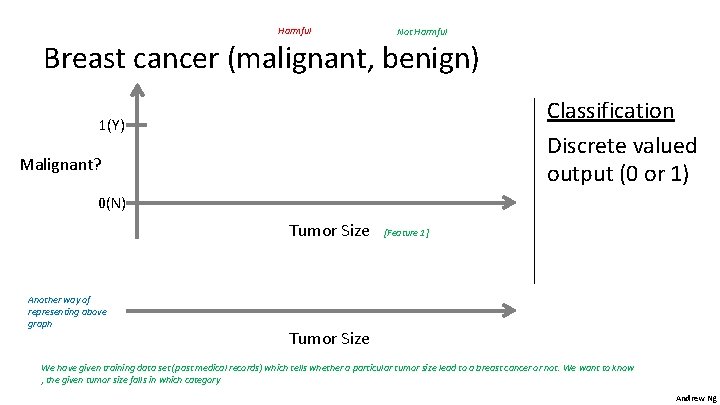

Breast cancer (malignant, benign)

Classification: discrete-valued output (0 or 1).

(Plot: Malignant? 1 (Y) / 0 (N) against tumor size. Another way of representing the same data is a single Tumor Size axis [Feature 1], with separate markers for harmful and not harmful.)

We are given a training set (past medical records) that tells us whether a tumor of a particular size turned out to be malignant. We want to know which category a given tumor size falls into.

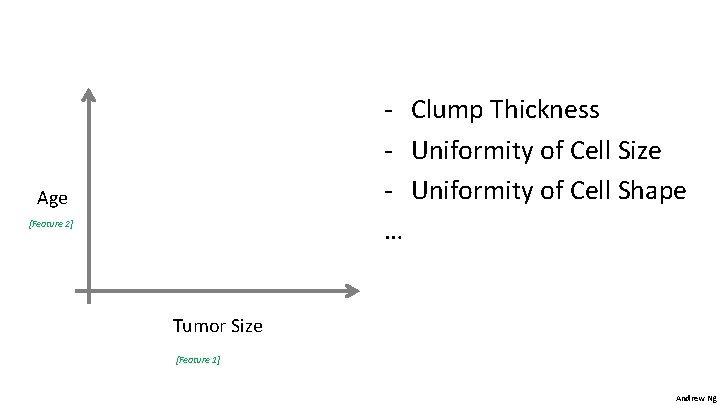

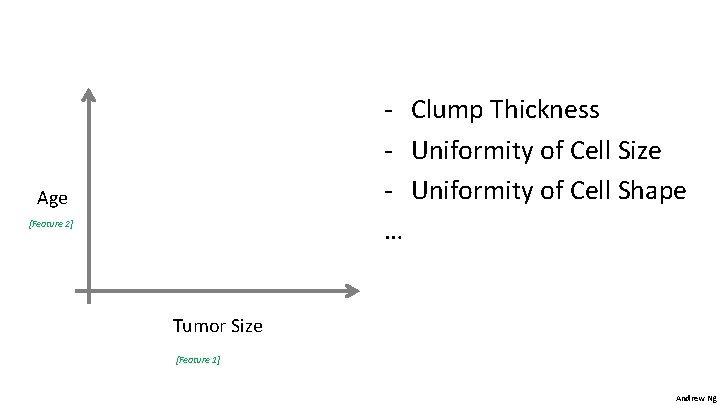

(Plot: Age [Feature 2] vs. Tumor Size [Feature 1].) Other possible features:

- Clump Thickness
- Uniformity of Cell Size
- Uniformity of Cell Shape
- …

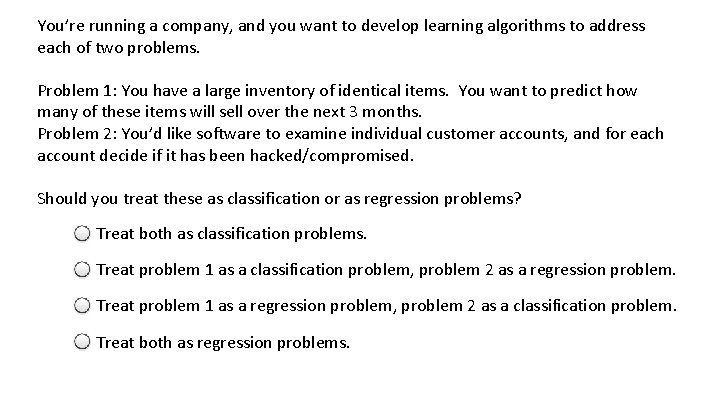

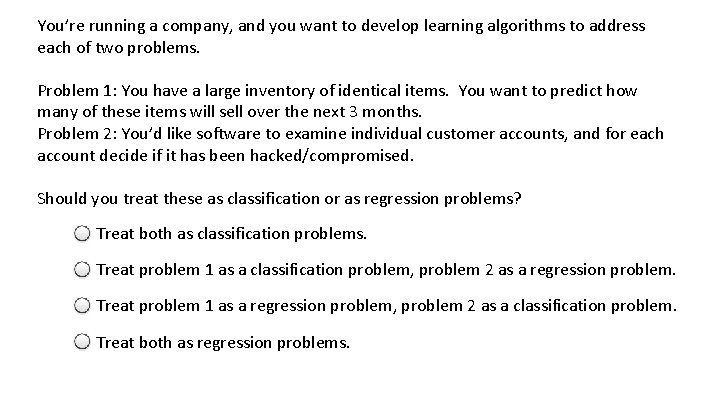

You’re running a company, and you want to develop learning algorithms to address each of two problems.

Problem 1: You have a large inventory of identical items. You want to predict how many of these items will sell over the next 3 months.

Problem 2: You’d like software to examine individual customer accounts, and for each account decide if it has been hacked/compromised.

Should you treat these as classification or as regression problems?

- Treat both as classification problems.
- Treat problem 1 as a classification problem, problem 2 as a regression problem.
- Treat problem 1 as a regression problem, problem 2 as a classification problem.
- Treat both as regression problems.

Introduction: Unsupervised Learning

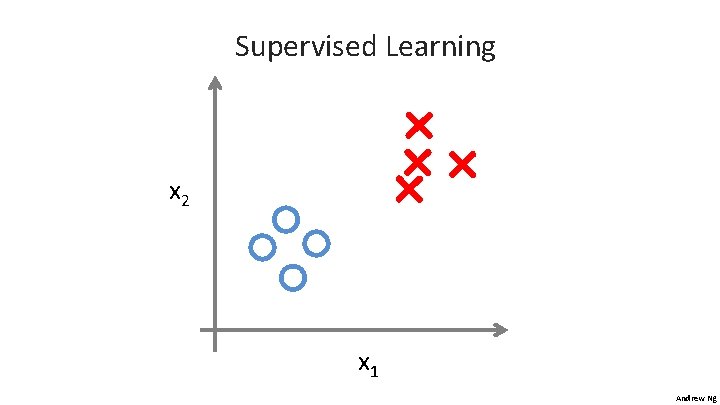

Supervised Learning (plot over the features $x_1$ and $x_2$)

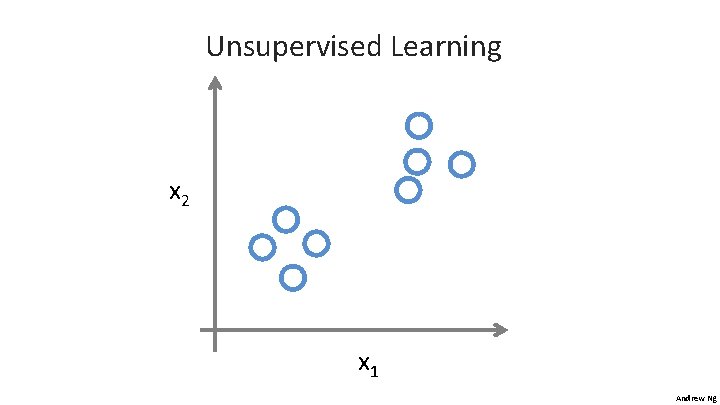

Unsupervised Learning (plot over the features $x_1$ and $x_2$)

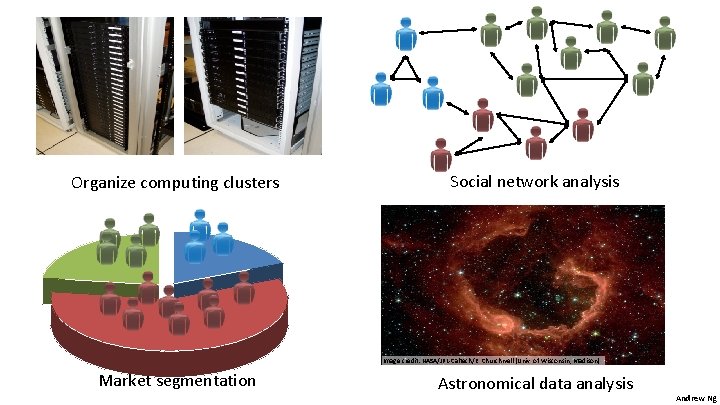

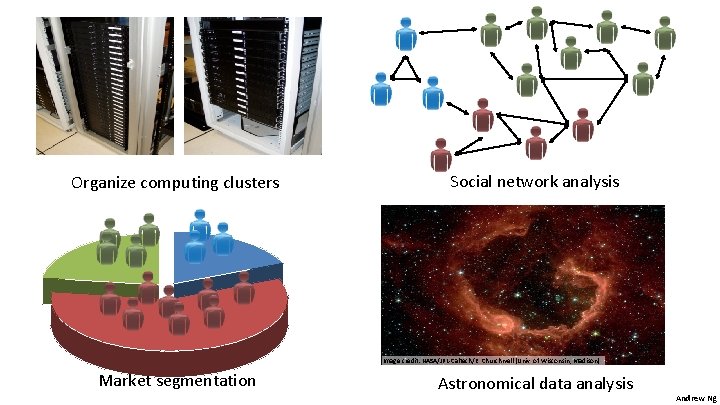

- Organize computing clusters
- Social network analysis
- Market segmentation
- Astronomical data analysis (image credit: NASA/JPL-Caltech/E. Churchwell, Univ. of Wisconsin, Madison)

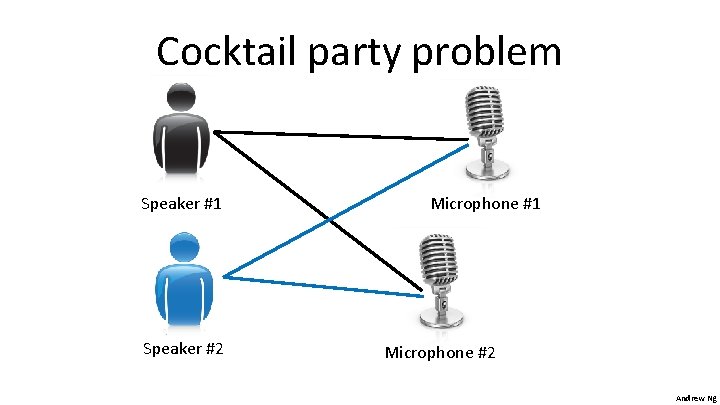

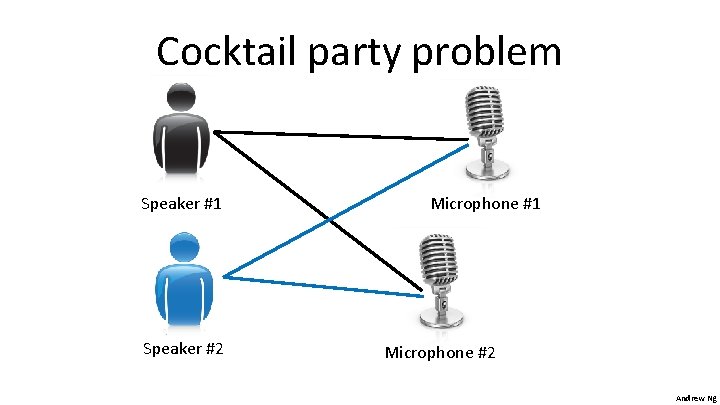

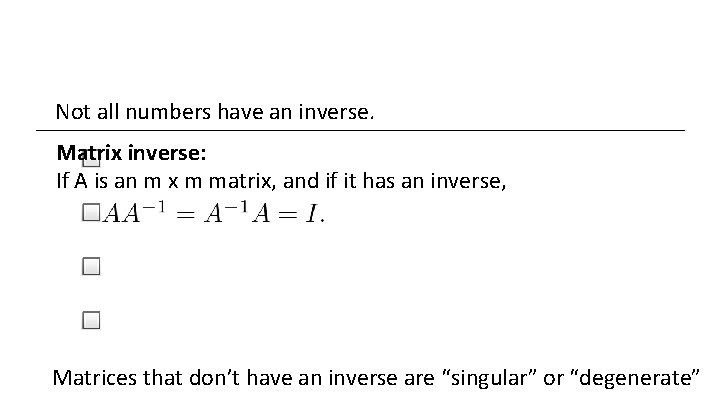

Cocktail party problem (figure: Speaker #1 and Speaker #2, recorded by Microphone #1 and Microphone #2)

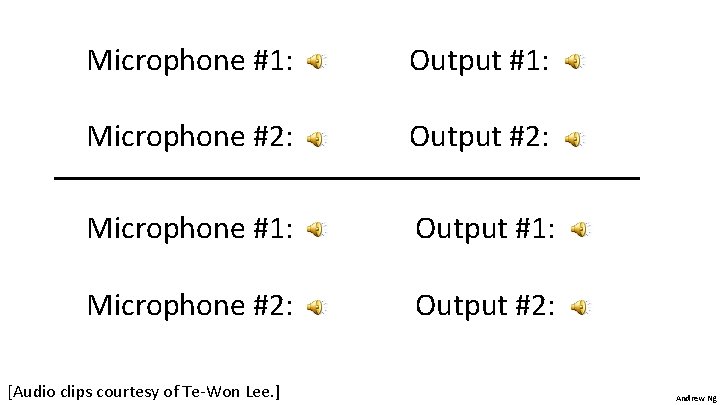

Audio clips: Microphone #1 and Output #1; Microphone #2 and Output #2. [Audio clips courtesy of Te-Won Lee.]

![Cocktail party problem algorithm](https://slidetodoc.com/presentation_image_h/1e8df3c39100c96bff25b8a2727fdee0/image-15.jpg)

Cocktail party problem algorithm (Octave):

`[W,s,v] = svd((repmat(sum(x.*x,1),size(x,1),1).*x)*x');`

[Source: Sam Roweis, Yair Weiss & Eero Simoncelli]
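For readers without Octave, here is a rough NumPy transcription of the one-liner, assuming the intended Octave line is `[W,s,v] = svd((repmat(sum(x.*x,1),size(x,1),1).*x)*x');` and that `x` holds one microphone recording per row. The function name `cocktail_party_svd` and the random stand-in signals are hypothetical; the sketch only reproduces the arithmetic and makes no claim about how well it separates real recordings.

```python
import numpy as np


def cocktail_party_svd(x):
    """Transcription of [W,s,v] = svd((repmat(sum(x.*x,1),size(x,1),1).*x)*x');

    x is assumed to have shape (n_mics, n_samples).
    """
    # sum(x.*x,1): squared norm of each column (one value per time sample)
    col_energy = np.sum(x * x, axis=0)
    # repmat(..., size(x,1), 1) .* x: weight every column of x by its energy
    weighted = np.tile(col_energy, (x.shape[0], 1)) * x
    # svd(... * x'): SVD of the (n_mics x n_mics) weighted correlation matrix
    W, s, vt = np.linalg.svd(weighted @ x.T)
    # Octave returns s as a diagonal matrix and v itself; NumPy returns the
    # singular values as a 1-D array and the transposed right factor.
    return W, s, vt


rng = np.random.default_rng(0)
mixed = rng.standard_normal((2, 1000))  # hypothetical stand-in for two mic tracks
W, s, vt = cocktail_party_svd(mixed)
print(W.shape, s.shape)
```

NumPy broadcasting would make the explicit `np.tile` unnecessary; it is kept here only to mirror `repmat` one to one.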

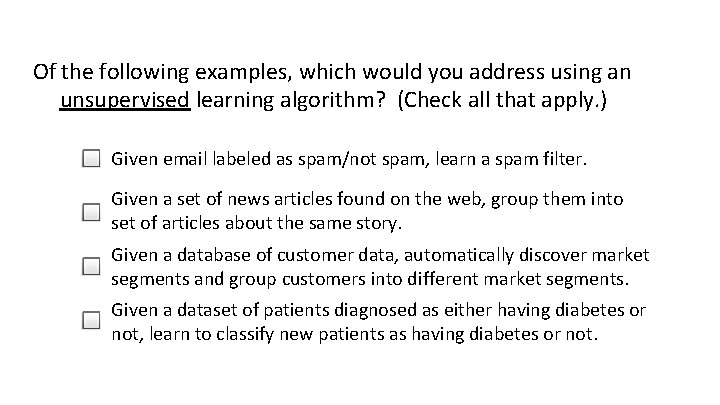

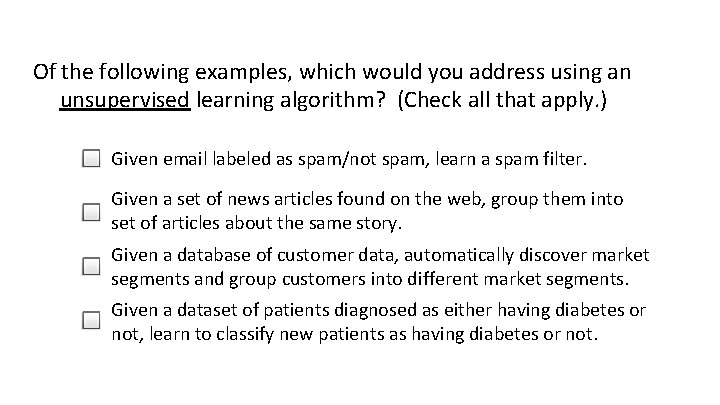

Of the following examples, which would you address using an unsupervised learning algorithm? (Check all that apply.)

- Given email labeled as spam/not spam, learn a spam filter.
- Given a set of news articles found on the web, group them into sets of articles about the same story.
- Given a database of customer data, automatically discover market segments and group customers into different market segments.
- Given a dataset of patients diagnosed as either having diabetes or not, learn to classify new patients as having diabetes or not.

Linear regression with one variable: Model representation

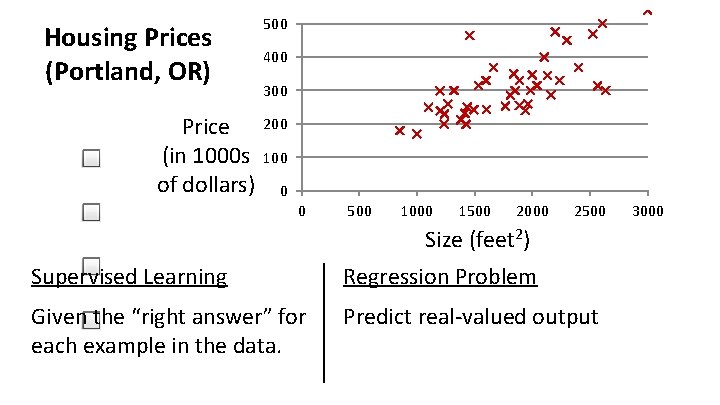

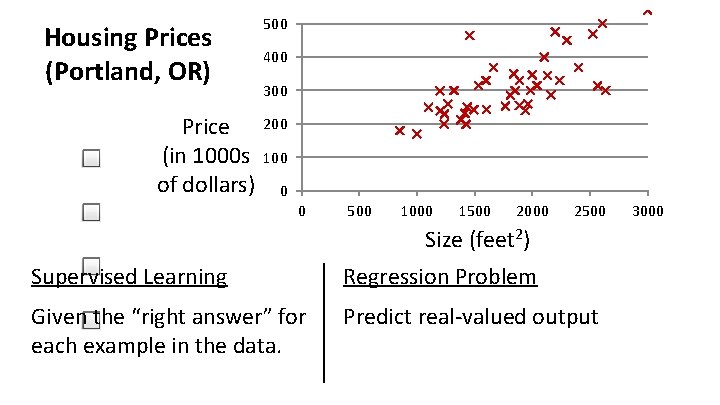

Housing Prices (Portland, OR) (plot: price in 1000s of dollars vs. size in feet²)

Supervised Learning: given the “right answer” for each example in the data.

Regression Problem: predict a real-valued output.

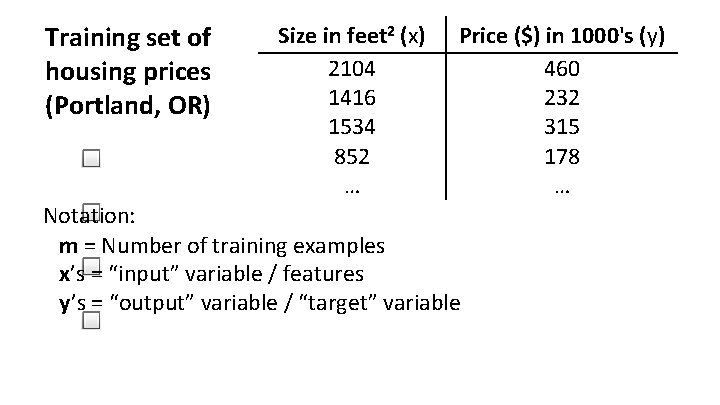

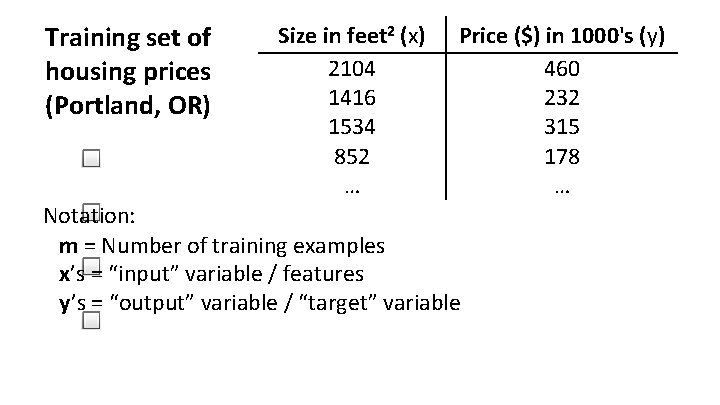

Training set of housing prices (Portland, OR)

| Size in feet² (x) | Price ($) in 1000's (y) |
|---|---|
| 2104 | 460 |
| 1416 | 232 |
| 1534 | 315 |
| 852 | 178 |
| … | … |

Notation:

- m = number of training examples
- x's = “input” variable / features
- y's = “output” variable / “target” variable

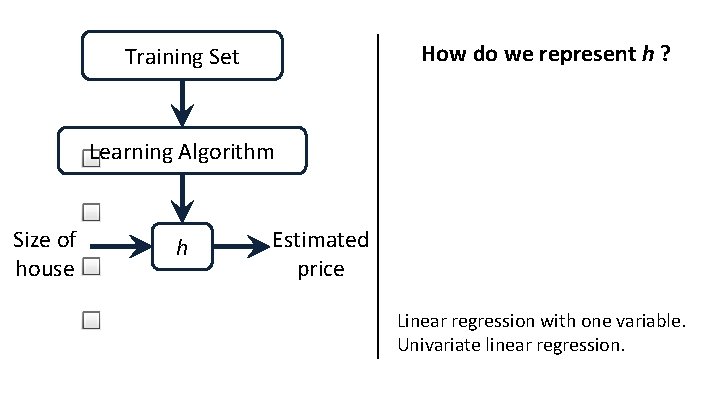

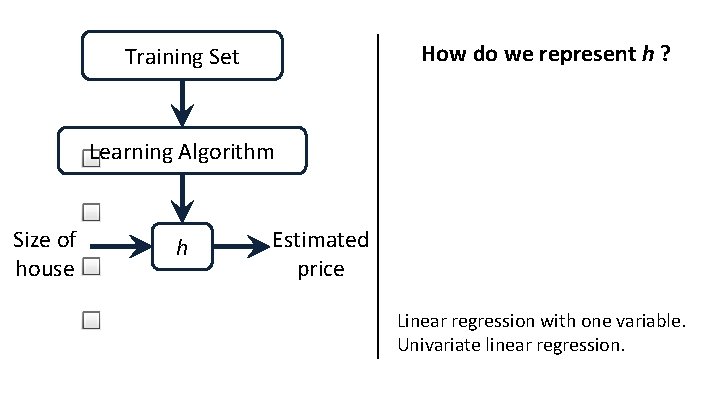

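Training Set → Learning Algorithm → $h$. Given the size of a house ($x$), the hypothesis $h$ outputs an estimated price.

How do we represent $h$? $h_\theta(x) = \theta_0 + \theta_1 x$

This is linear regression with one variable, also called univariate linear regression.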
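As a sketch, the univariate hypothesis $h_\theta(x) = \theta_0 + \theta_1 x$ is just a function of one input with two parameters. Below it is applied to the house sizes from the training set; the parameter values are arbitrary placeholders, not fitted values, and the name `hypothesis` is illustrative.

```python
def hypothesis(theta0, theta1, x):
    """Univariate linear regression hypothesis h_theta(x) = theta0 + theta1 * x."""
    return theta0 + theta1 * x


# Sizes (feet^2) from the Portland training set.
sizes = [2104, 1416, 1534, 852]

# Placeholder parameters chosen only for illustration (not learned).
theta0, theta1 = 50.0, 0.2
estimates = [hypothesis(theta0, theta1, x) for x in sizes]
print(estimates)  # estimated prices in 1000s of dollars
```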

Linear regression with one variable: Cost function

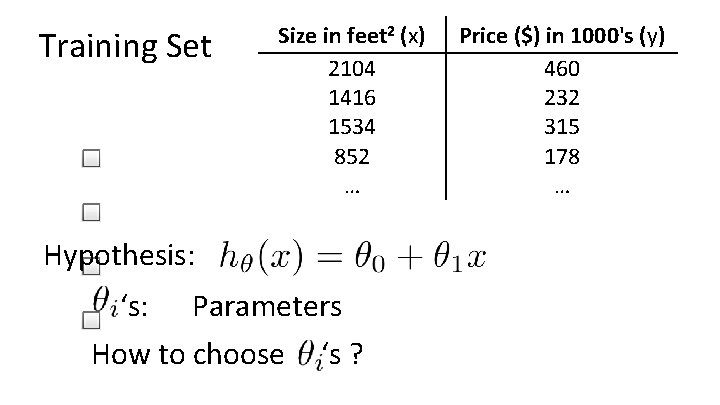

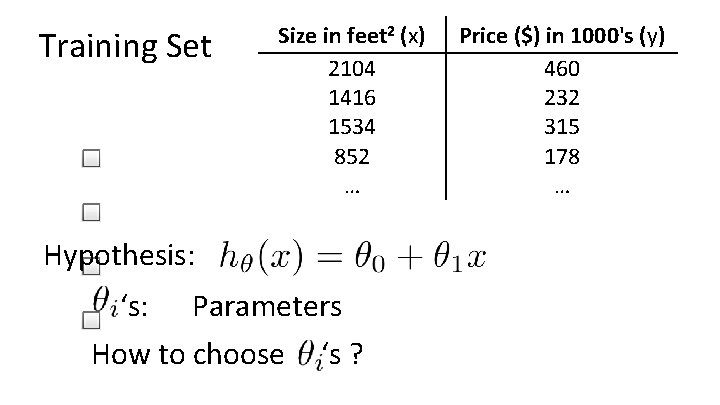

Training Set: the Portland housing data (size in feet², $x$; price in 1000s of dollars, $y$).

Hypothesis: $h_\theta(x) = \theta_0 + \theta_1 x$

$\theta_i$'s: Parameters

How to choose the $\theta_i$'s?

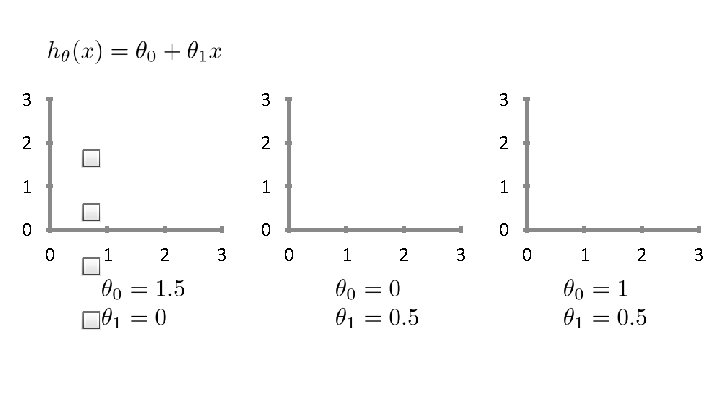

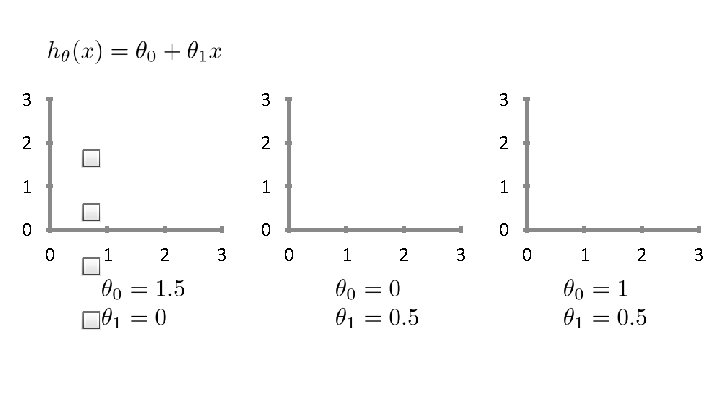


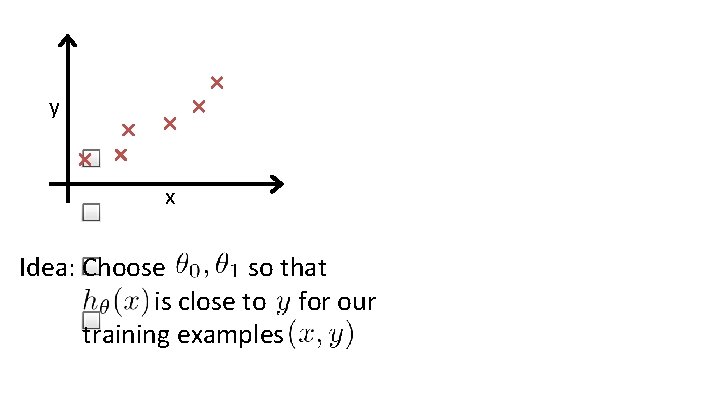

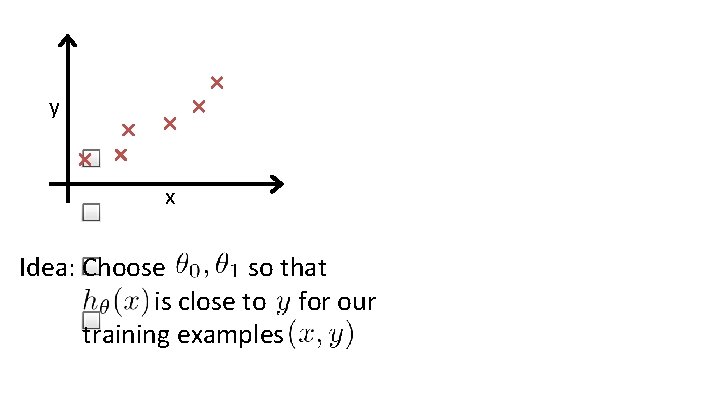

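(Plot: training examples, $y$ vs. $x$.)

Idea: choose $\theta_0, \theta_1$ so that $h_\theta(x)$ is close to $y$ for our training examples $(x, y)$, i.e. minimize the squared-error cost, where $(x^{(i)}, y^{(i)})$ is the $i$-th training example:

$$J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$$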
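A direct transcription of the squared-error cost $J(\theta_0, \theta_1) = \frac{1}{2m}\sum_{i=1}^{m}\left(h_\theta(x^{(i)}) - y^{(i)}\right)^2$, evaluated on the four listed housing examples. The function name `cost` and the parameter values tried are illustrative assumptions.

```python
def cost(theta0, theta1, xs, ys):
    """Squared-error cost J(theta0, theta1) = 1/(2m) * sum((h(x_i) - y_i)^2)."""
    m = len(xs)
    total = 0
    for x, y in zip(xs, ys):
        error = (theta0 + theta1 * x) - y  # h_theta(x) - y for one example
        total += error ** 2
    return total / (2 * m)


xs = [2104, 1416, 1534, 852]  # size in feet^2
ys = [460, 232, 315, 178]     # price in 1000s of dollars

print(cost(0, 0, xs, ys))     # predict 0 for every house -> 49541.625
print(cost(0, 0.2, xs, ys))   # a line through the origin with slope 0.2
```

A lower value means the line fits the training examples better; the slope-0.2 line already gives a far smaller cost than predicting zero.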

Linear regression with one variable: Cost function intuition I

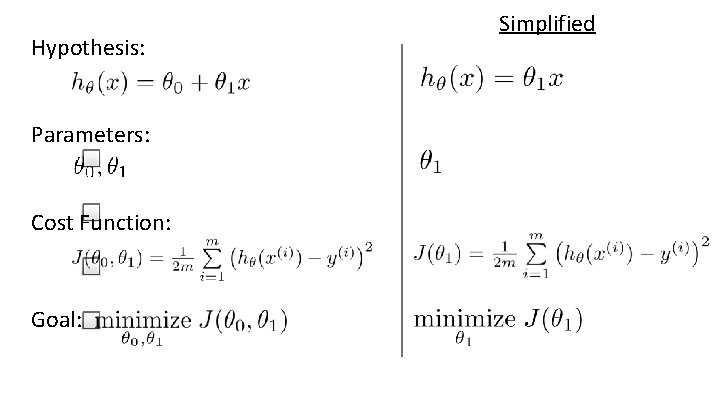

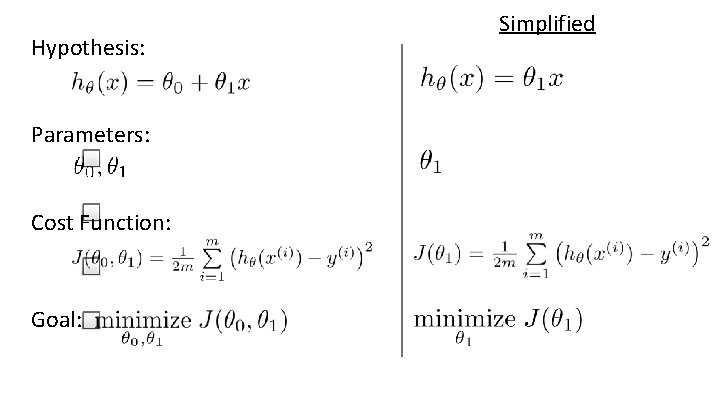

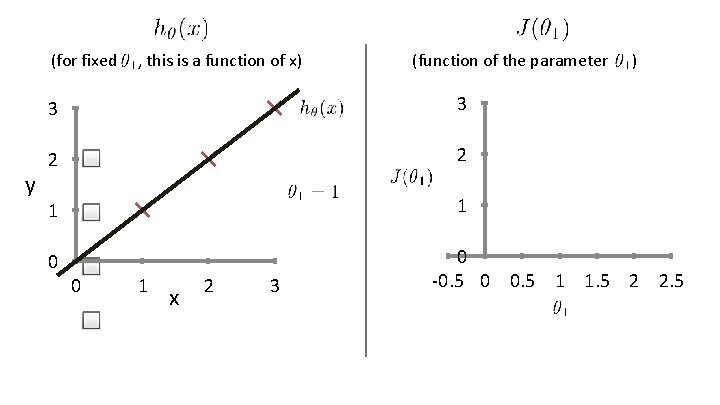

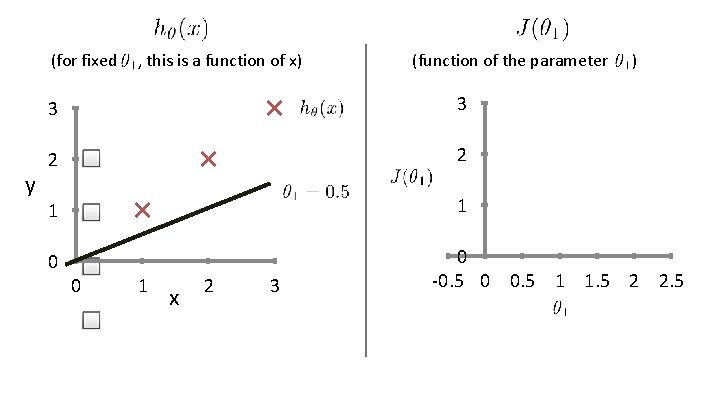

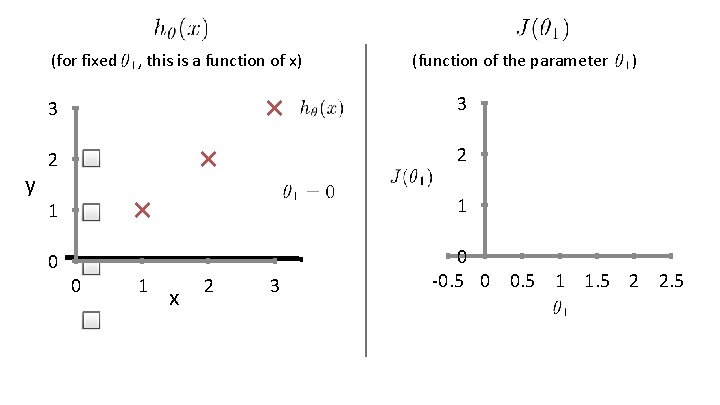

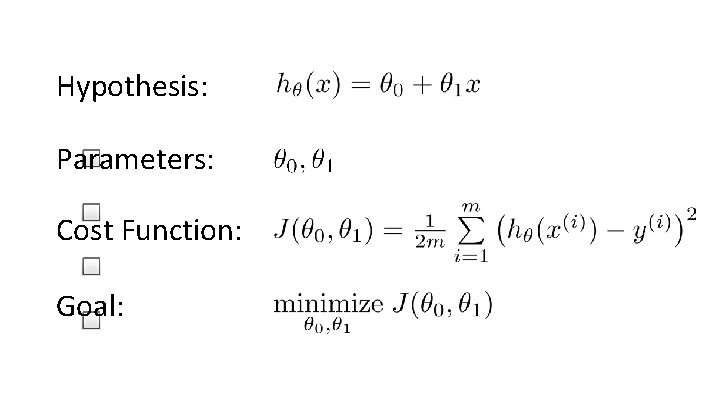

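|  | Full model | Simplified ($\theta_0 = 0$) |
|---|---|---|
| Hypothesis | $h_\theta(x) = \theta_0 + \theta_1 x$ | $h_\theta(x) = \theta_1 x$ |
| Parameters | $\theta_0, \theta_1$ | $\theta_1$ |
| Cost Function | $J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$ | $J(\theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( \theta_1 x^{(i)} - y^{(i)} \right)^2$ |
| Goal | $\min_{\theta_0, \theta_1} J(\theta_0, \theta_1)$ | $\min_{\theta_1} J(\theta_1)$ |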

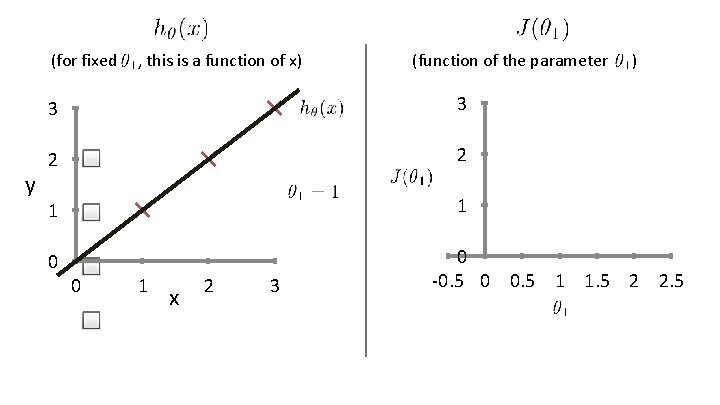

(Left: $h_\theta(x)$; for fixed $\theta_1$, this is a function of $x$. Right: $J(\theta_1)$, a function of the parameter $\theta_1$.)

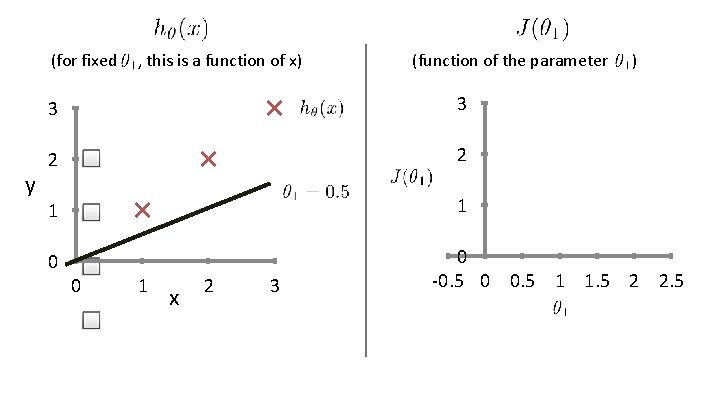


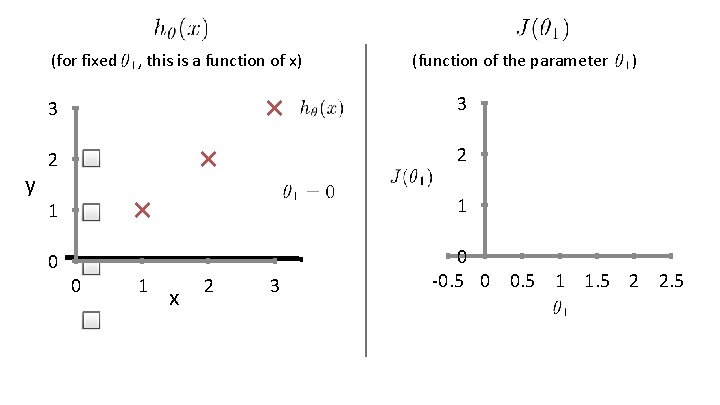

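To reproduce this intuition numerically, here is a sketch of the simplified cost $J(\theta_1)$ (with $\theta_0 = 0$) on a hypothetical toy training set $\{(1,1), (2,2), (3,3)\}$, chosen so that the line $y = x$ fits it exactly. The name `cost_simplified` is illustrative.

```python
def cost_simplified(theta1, xs, ys):
    """Simplified cost J(theta1) for h_theta(x) = theta1 * x (theta0 fixed at 0)."""
    m = len(xs)
    return sum((theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


# Hypothetical toy data lying exactly on y = x.
xs = [1, 2, 3]
ys = [1, 2, 3]

for theta1 in [0, 0.5, 1, 1.5]:
    print(theta1, cost_simplified(theta1, xs, ys))
```

The minimum, $J(1) = 0$, sits at $\theta_1 = 1$, and $J(0.5) = J(1.5)$, which matches the bowl shape of the $J(\theta_1)$ curve.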

Linear regression with one variable: Cost function intuition II

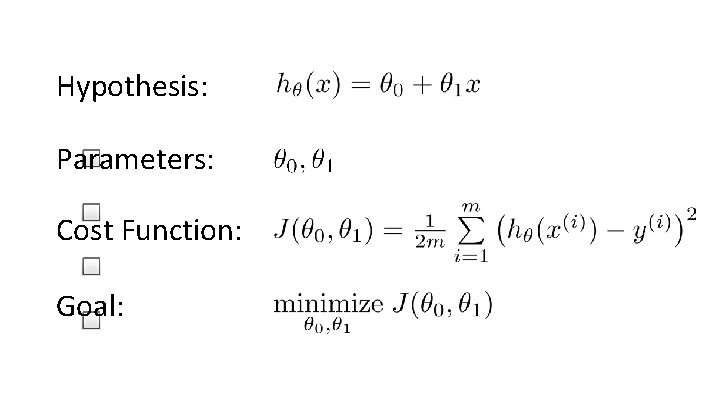

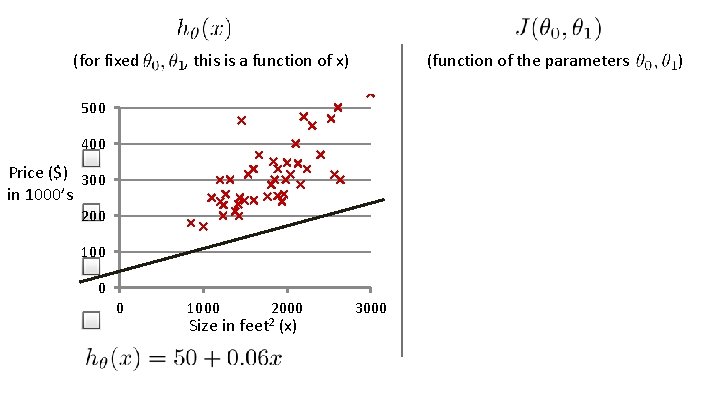

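Hypothesis: $h_\theta(x) = \theta_0 + \theta_1 x$

Parameters: $\theta_0, \theta_1$

Cost Function: $J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$

Goal: $\min_{\theta_0, \theta_1} J(\theta_0, \theta_1)$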

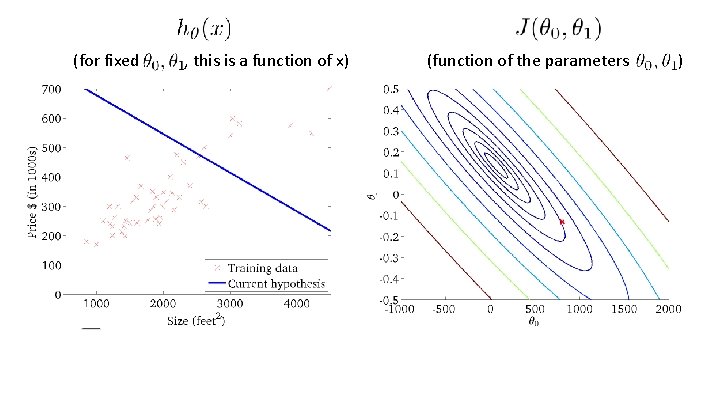

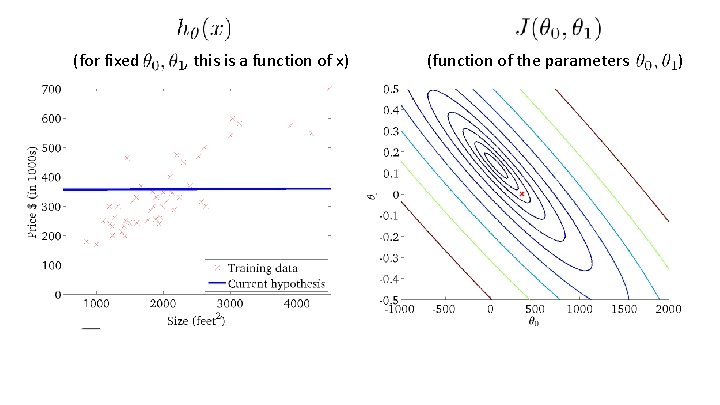

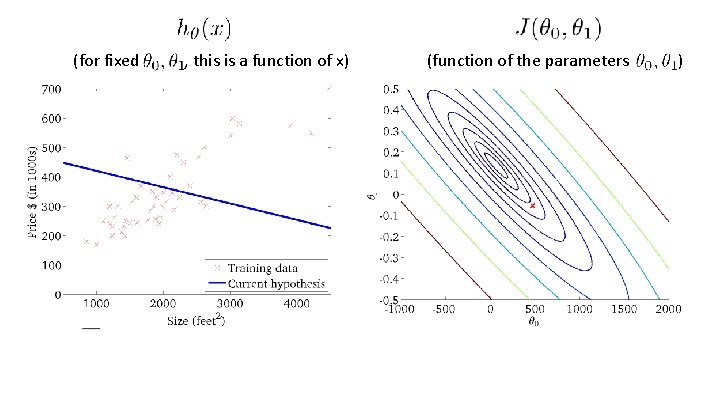

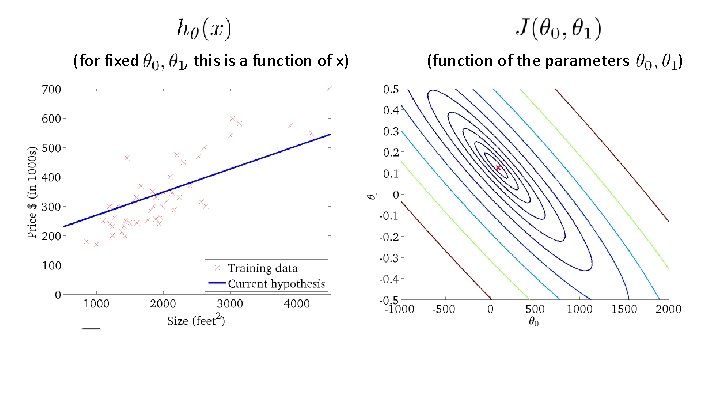

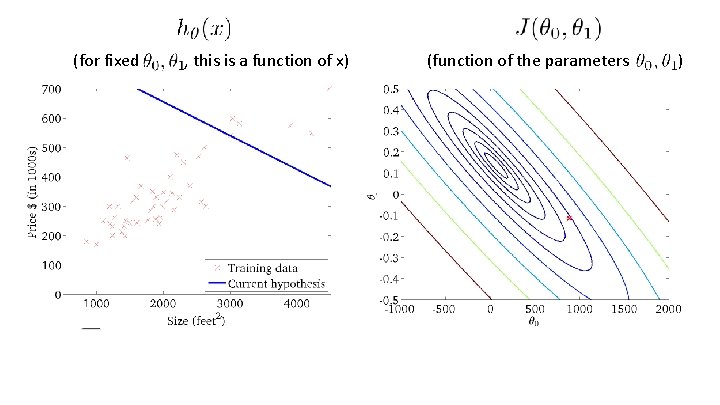

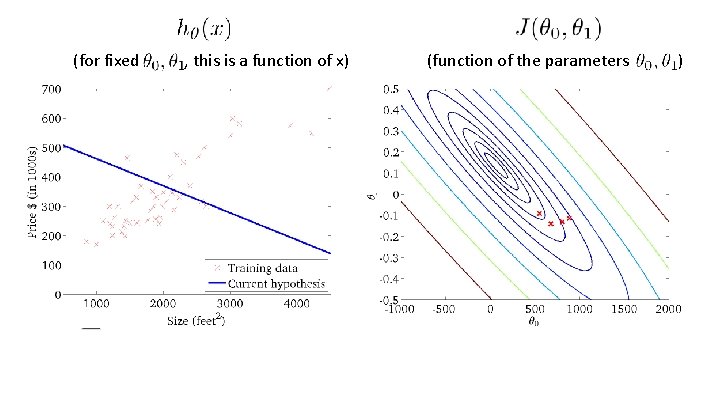

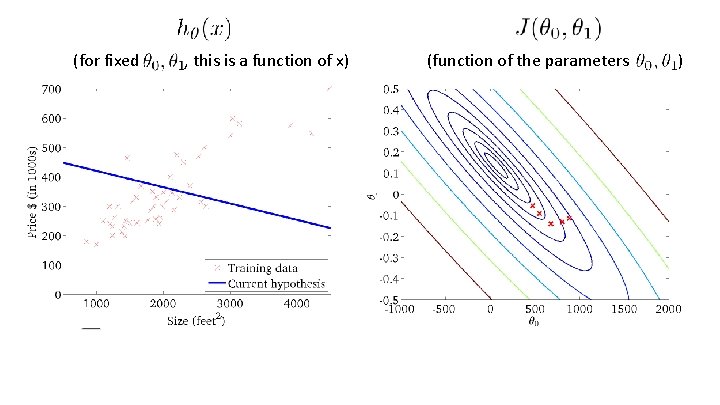

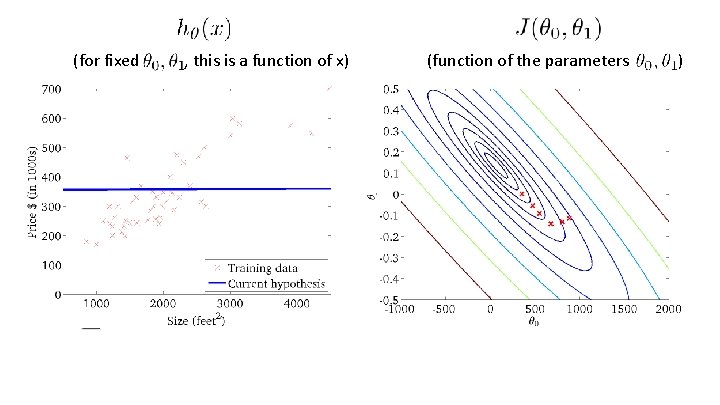

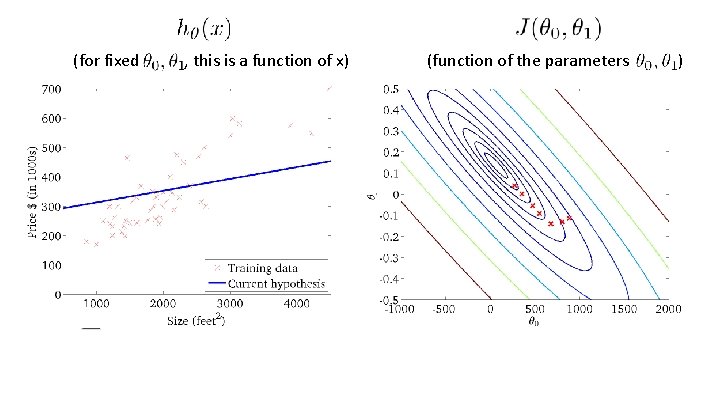

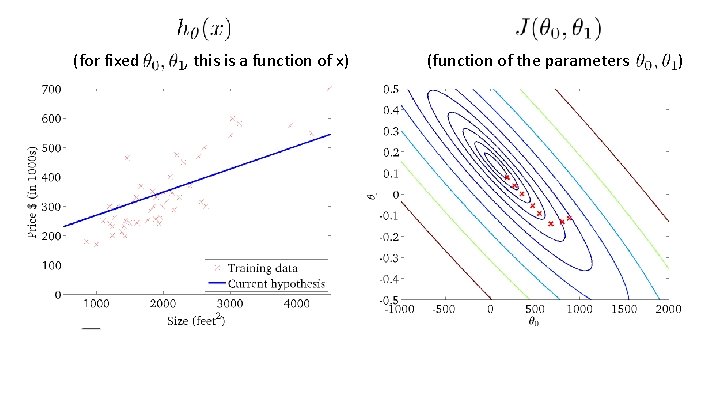

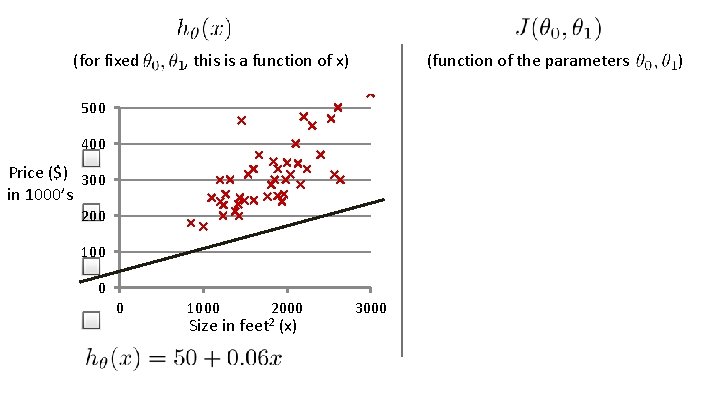

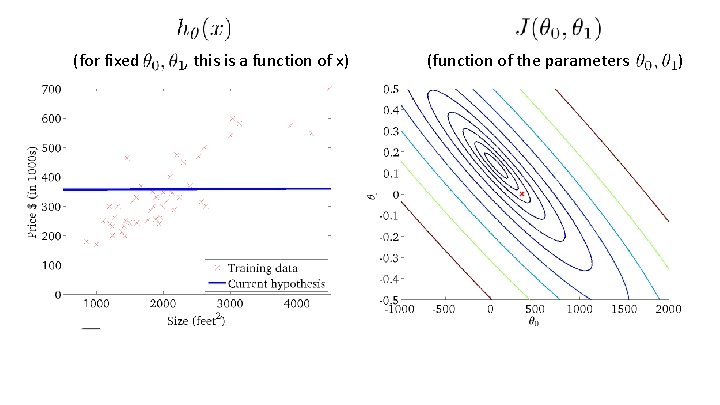

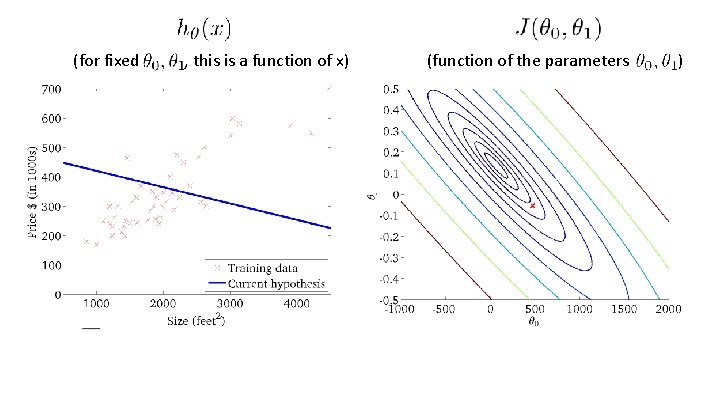

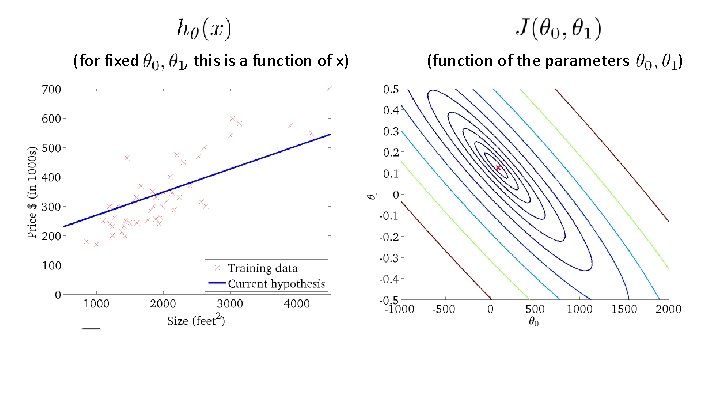

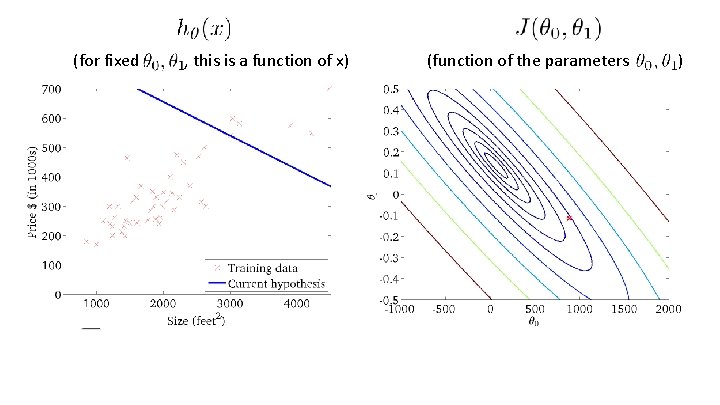

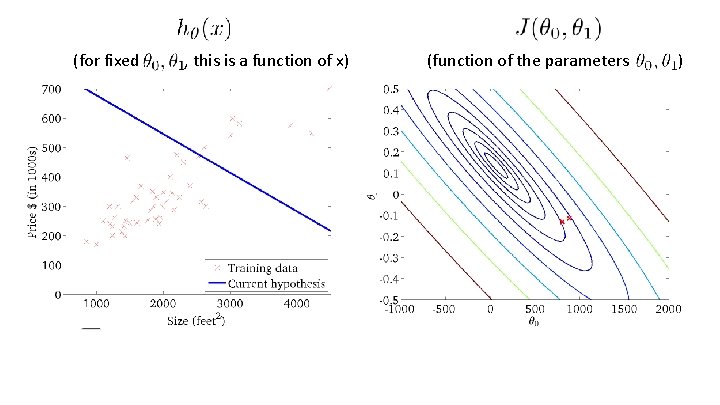

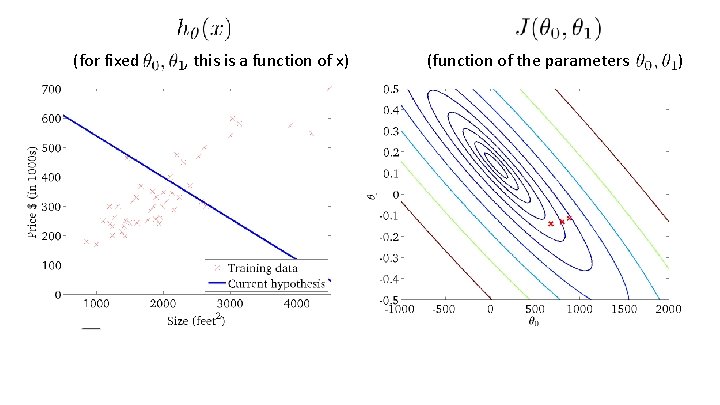

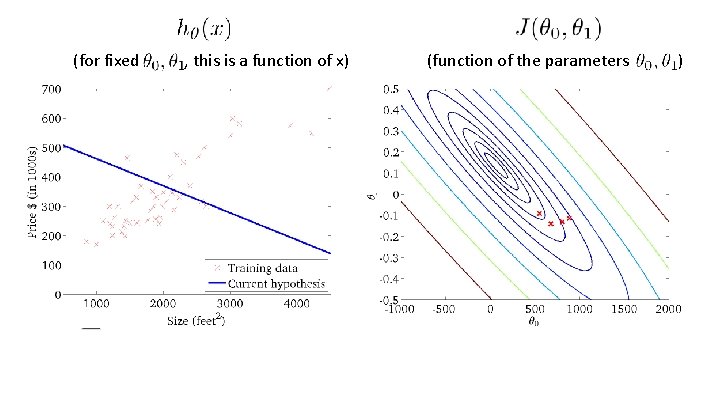

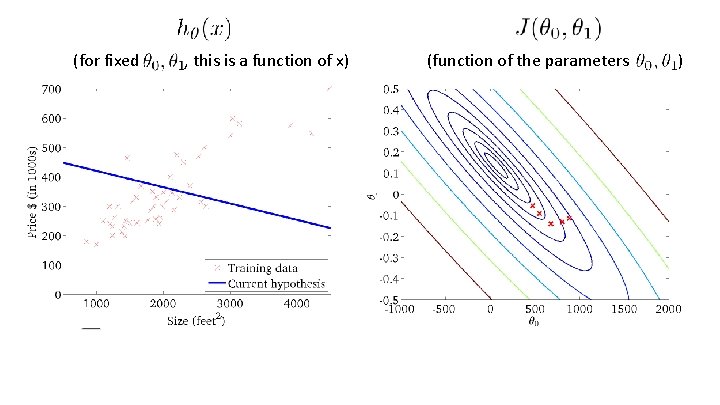

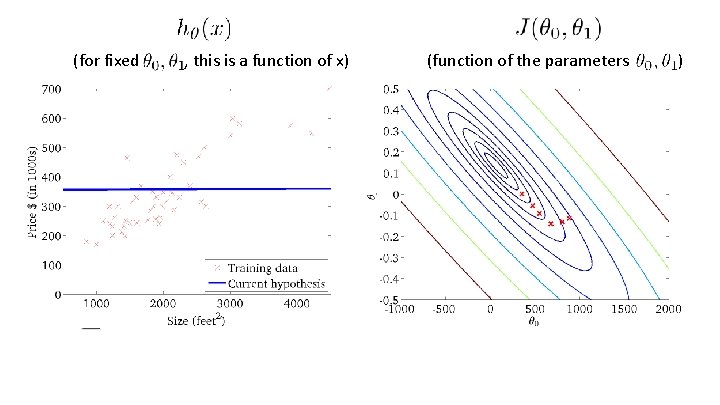

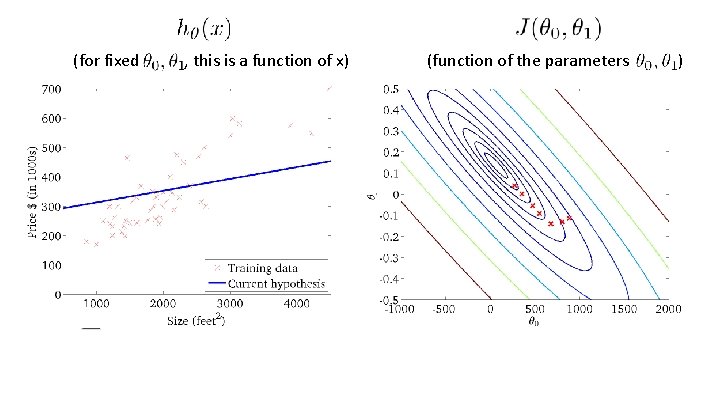

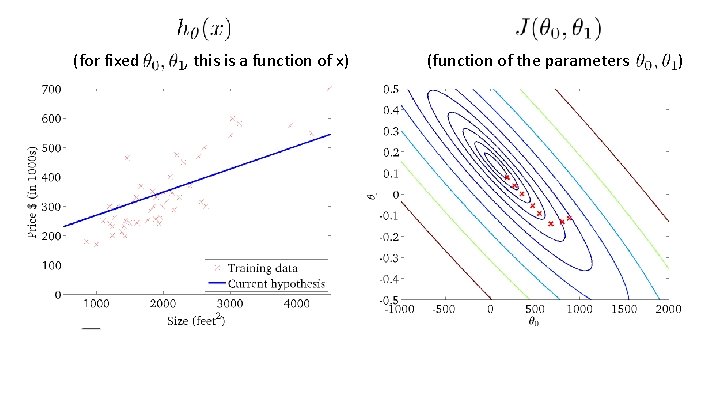

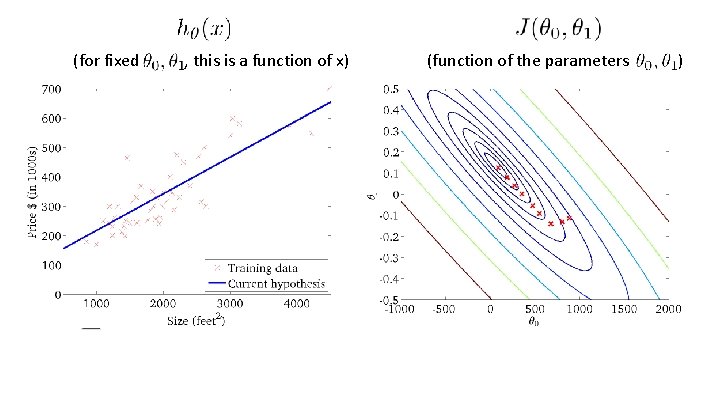

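(Left: $h_\theta(x)$; for fixed $\theta_0, \theta_1$, this is a function of $x$, plotted over the housing data as price in thousands of dollars vs. size in feet². Right: $J(\theta_0, \theta_1)$, a function of the parameters $\theta_0, \theta_1$.)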


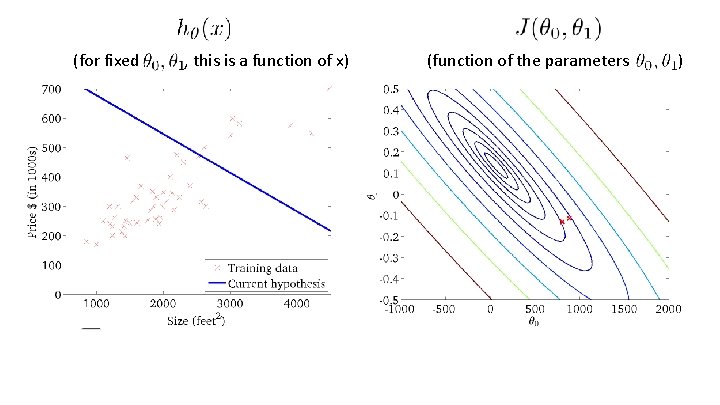


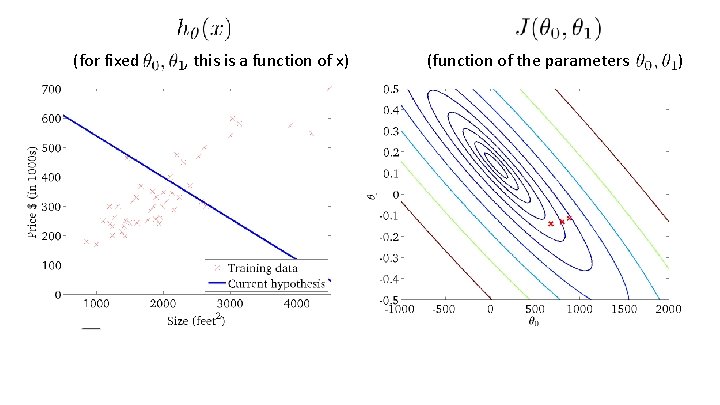


Linear regression with one variable: Gradient descent

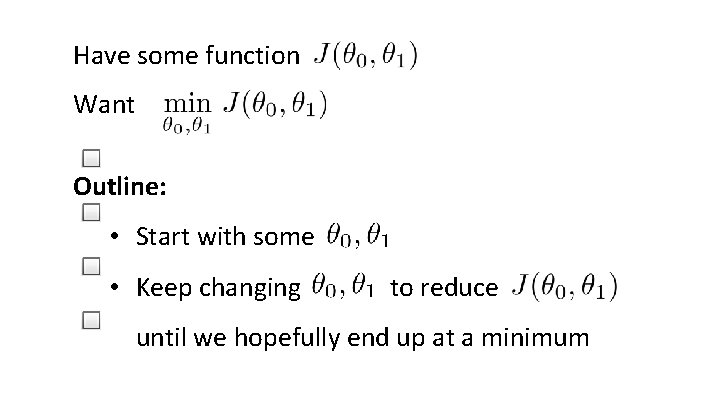

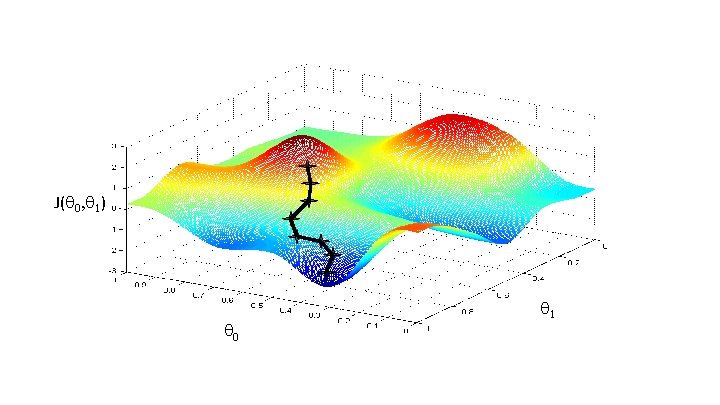

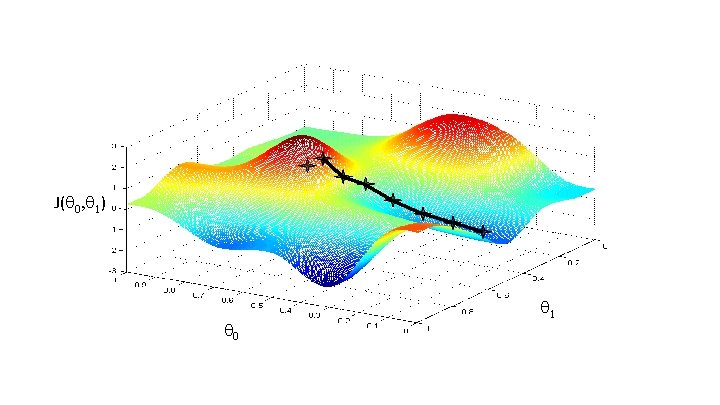

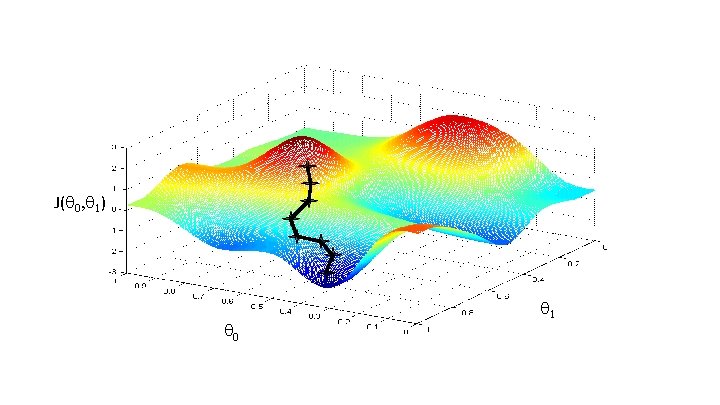

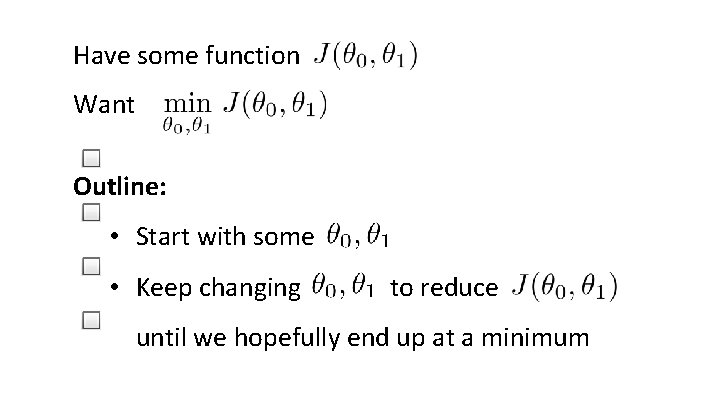

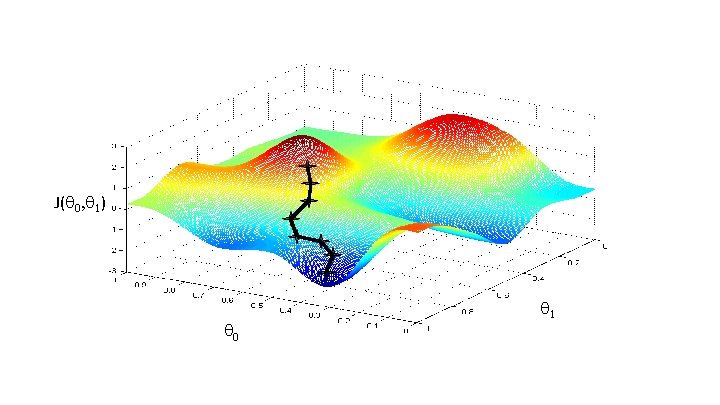

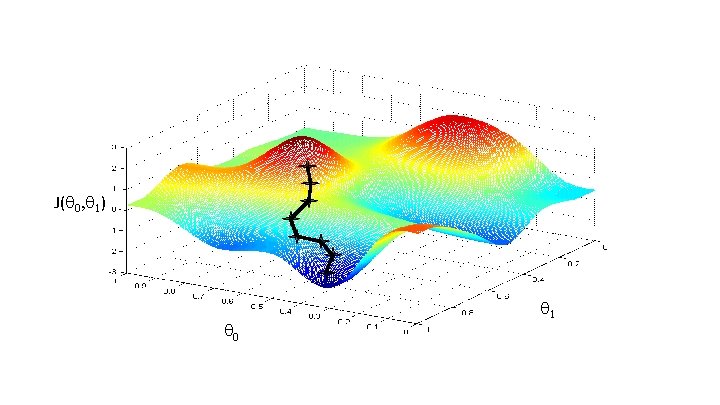

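Have some function $J(\theta_0, \theta_1)$

Want $\min_{\theta_0, \theta_1} J(\theta_0, \theta_1)$

Outline:

- Start with some $\theta_0, \theta_1$
- Keep changing $\theta_0, \theta_1$ to reduce $J(\theta_0, \theta_1)$ until we hopefully end up at a minimum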

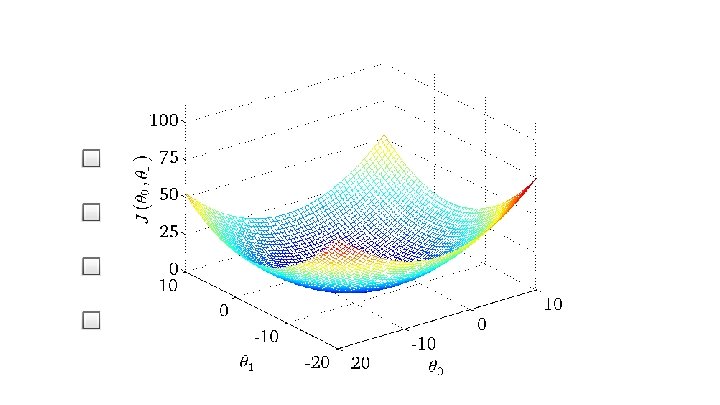

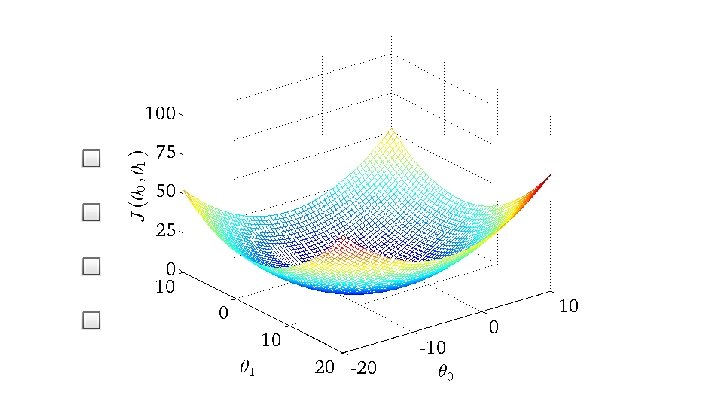

(Surface plot of $J(\theta_0, \theta_1)$ over the $\theta_0$ and $\theta_1$ axes.)


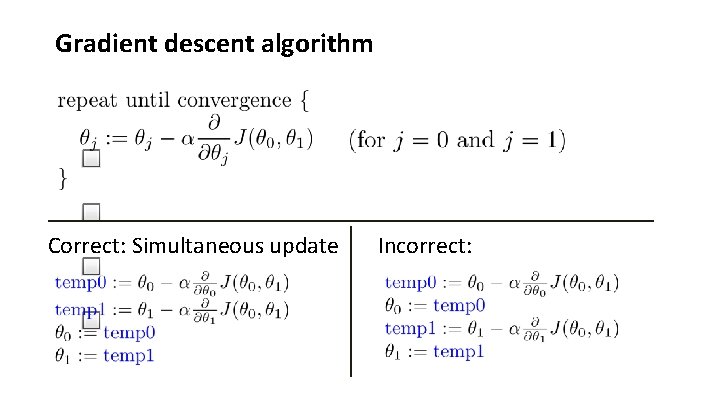

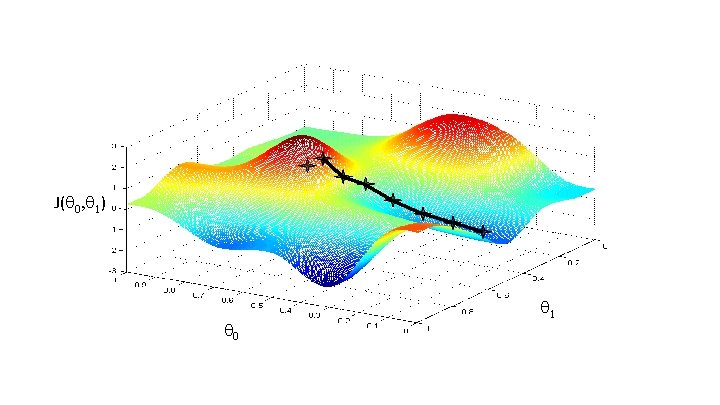

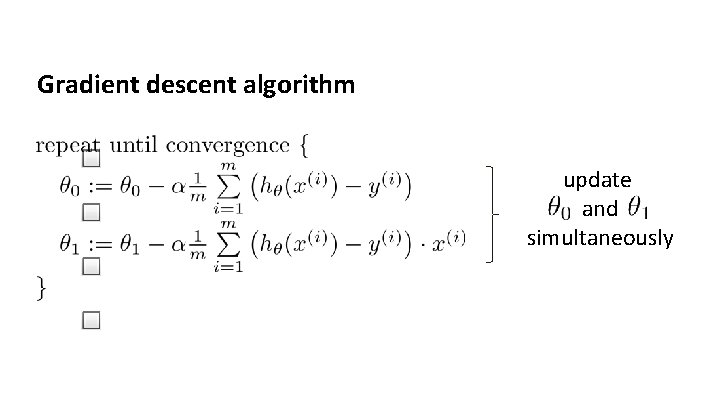

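Gradient descent algorithm: repeat until convergence {

$$\theta_j := \theta_j - \alpha \frac{\partial}{\partial \theta_j} J(\theta_0, \theta_1) \quad (\text{for } j = 0 \text{ and } j = 1)$$

}

Correct: simultaneous update

$$\text{temp0} := \theta_0 - \alpha \frac{\partial}{\partial \theta_0} J(\theta_0, \theta_1), \qquad \text{temp1} := \theta_1 - \alpha \frac{\partial}{\partial \theta_1} J(\theta_0, \theta_1)$$

$$\theta_0 := \text{temp0}, \qquad \theta_1 := \text{temp1}$$

Incorrect: assigning $\theta_0 := \text{temp0}$ before computing temp1, so that temp1 is evaluated at the already-updated $\theta_0$.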
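The correct and incorrect orderings only differ when each partial derivative depends on the other parameter. The sketch below uses a hypothetical cost $J(\theta_0, \theta_1) = (\theta_0 + \theta_1)^2$, whose partial derivatives are both $2(\theta_0 + \theta_1)$, to show that the two orderings take different steps from the same starting point. All function names are illustrative.

```python
def grad(theta0, theta1):
    """Partial derivatives of the toy cost J = (theta0 + theta1)^2."""
    g = 2 * (theta0 + theta1)
    return g, g  # dJ/dtheta0, dJ/dtheta1


def step_simultaneous(theta0, theta1, alpha):
    # Correct: compute both updates from the old values, then assign.
    d0, d1 = grad(theta0, theta1)
    temp0 = theta0 - alpha * d0
    temp1 = theta1 - alpha * d1
    return temp0, temp1


def step_incorrect(theta0, theta1, alpha):
    # Incorrect: theta0 is overwritten first, so theta1's derivative
    # is evaluated at the new theta0.
    d0, _ = grad(theta0, theta1)
    theta0 = theta0 - alpha * d0
    _, d1 = grad(theta0, theta1)
    theta1 = theta1 - alpha * d1
    return theta0, theta1


print(step_simultaneous(1.0, 1.0, 0.1))  # both parameters move to about 0.6
print(step_incorrect(1.0, 1.0, 0.1))     # theta1 only reaches about 0.68
```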

Linear regression with one variable: Gradient descent intuition

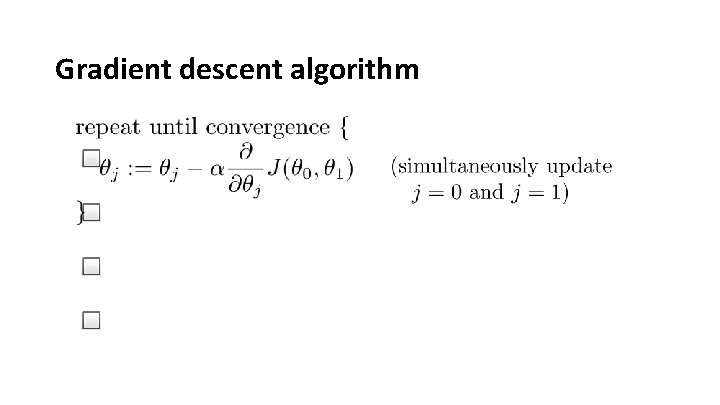

Gradient descent algorithm

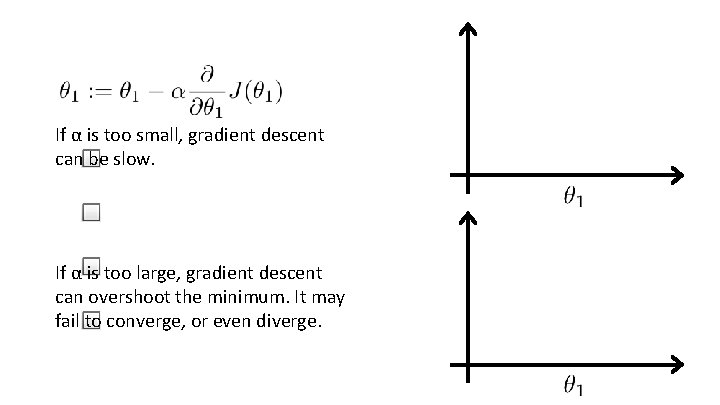

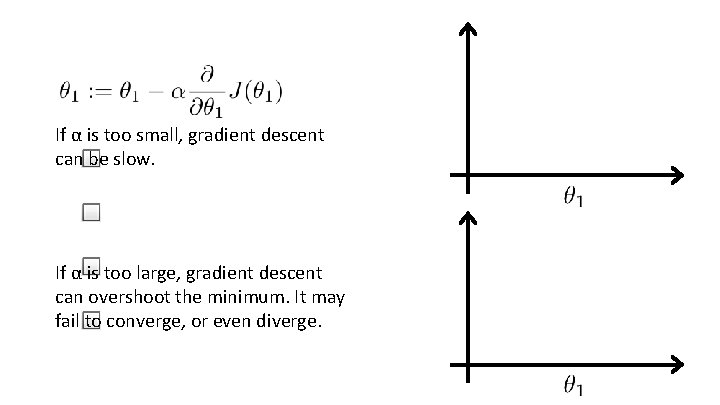

If α is too small, gradient descent can be slow. If α is too large, gradient descent can overshoot the minimum. It may fail to converge, or even diverge.
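A small experiment makes the effect of α concrete. On a hypothetical one-parameter cost $J(\theta_1) = \theta_1^2$ (derivative $2\theta_1$), each update is $\theta_1 := \theta_1 - 2\alpha\theta_1 = (1 - 2\alpha)\theta_1$, so the iterates shrink toward the minimum at 0 only when $|1 - 2\alpha| < 1$, i.e. $0 < \alpha < 1$. The three α values are arbitrary picks for "too small", "reasonable", and "too large".

```python
def run_gradient_descent(alpha, theta1=1.0, steps=20):
    """Minimize the toy cost J(theta1) = theta1**2 with a fixed learning rate."""
    for _ in range(steps):
        derivative = 2 * theta1          # dJ/dtheta1
        theta1 = theta1 - alpha * derivative
    return theta1


for alpha in [0.01, 0.3, 1.1]:
    print(alpha, run_gradient_descent(alpha))
```

With α = 0.01 the parameter is still far from 0 after 20 steps (slow), with α = 0.3 it is essentially at the minimum, and with α = 1.1 it overshoots back and forth with growing magnitude (diverges).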

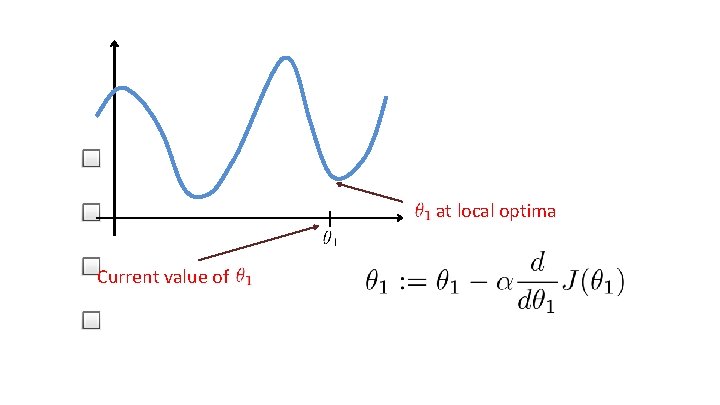

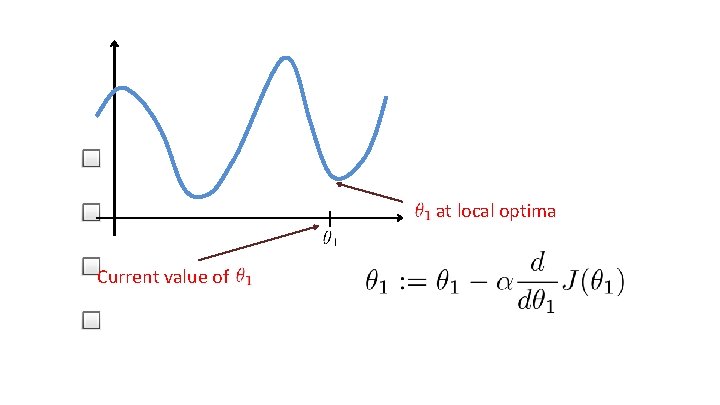

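If $\theta_1$ is already at a local optimum, the derivative term is zero, so the update $\theta_1 := \theta_1 - \alpha \cdot 0$ leaves the current value of $\theta_1$ unchanged.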

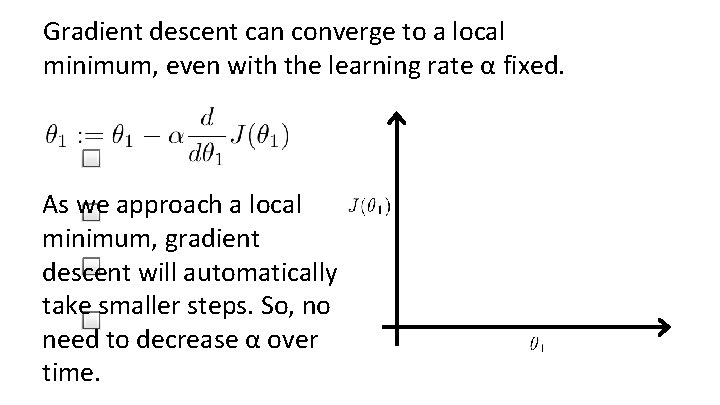

Gradient descent can converge to a local minimum even with the learning rate α fixed. As we approach a local minimum, the derivative term gets smaller, so gradient descent automatically takes smaller steps. So there is no need to decrease α over time.
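The shrinking-step behaviour can be checked directly. This sketch again assumes a hypothetical cost $J(\theta_1) = \theta_1^2$ and records the size of every step taken with a fixed α = 0.1; because the derivative $2\theta_1$ shrinks as $\theta_1$ approaches the minimum, so does each step, without changing α. The helper name `step_sizes` is illustrative.

```python
def step_sizes(alpha=0.1, theta1=2.0, steps=10):
    """Return |change in theta1| for each gradient descent step on J = theta1**2."""
    sizes = []
    for _ in range(steps):
        update = alpha * 2 * theta1      # alpha times dJ/dtheta1
        theta1 = theta1 - update
        sizes.append(abs(update))
    return sizes


print(step_sizes())  # each step is 0.8 times the previous one
```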

Linear regression with one variable: Gradient descent for linear regression

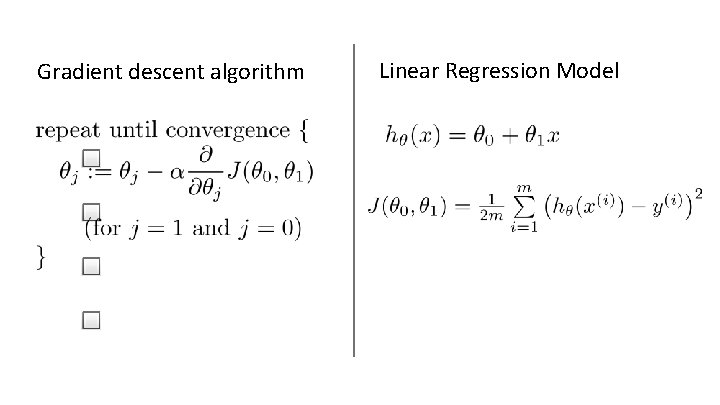

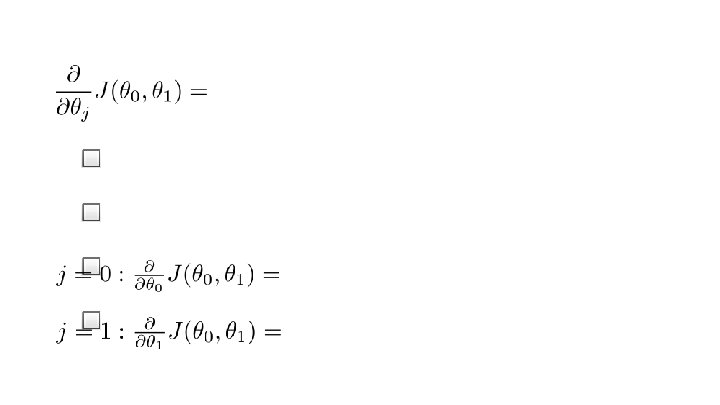

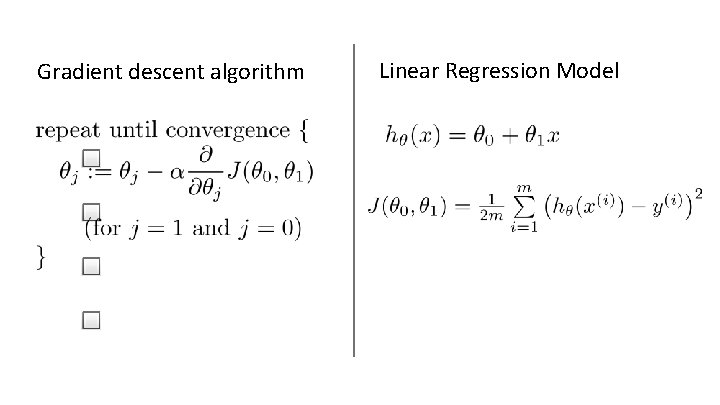

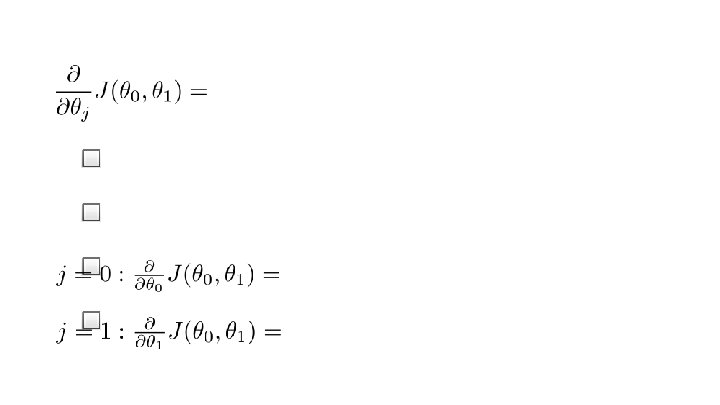

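Gradient descent algorithm: repeat until convergence { $\theta_j := \theta_j - \alpha \frac{\partial}{\partial \theta_j} J(\theta_0, \theta_1)$ (for $j = 1$ and $j = 0$) }

Linear Regression Model: $h_\theta(x) = \theta_0 + \theta_1 x$, with $J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$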

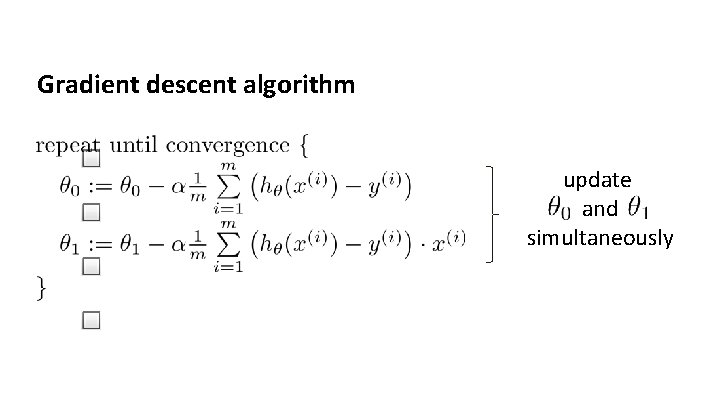

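Gradient descent algorithm, with the partial derivatives worked out for the linear regression model: repeat until convergence {

$$\theta_0 := \theta_0 - \alpha \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)$$

$$\theta_1 := \theta_1 - \alpha \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right) x^{(i)}$$

} and update $\theta_0$ and $\theta_1$ simultaneously.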
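A minimal sketch of batch gradient descent for univariate linear regression, implementing the updates $\theta_0 := \theta_0 - \alpha \frac{1}{m}\sum_{i}(h_\theta(x^{(i)}) - y^{(i)})$ and $\theta_1 := \theta_1 - \alpha \frac{1}{m}\sum_{i}(h_\theta(x^{(i)}) - y^{(i)})\,x^{(i)}$ with a simultaneous update. The toy data (points on the line $y = 1 + 2x$), the learning rate, and the iteration count are assumptions chosen so that the sketch converges quickly; the unscaled housing sizes would need a much smaller α.

```python
def gradient_descent(xs, ys, alpha=0.1, iterations=5000):
    """Batch gradient descent for h(x) = theta0 + theta1 * x."""
    m = len(xs)
    theta0, theta1 = 0.0, 0.0
    for _ in range(iterations):
        # Errors h(x_i) - y_i, all computed with the current (old) parameters.
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        grad0 = sum(errors) / m
        grad1 = sum(e * x for e, x in zip(errors, xs)) / m
        # Simultaneous update: both gradients are computed before assigning.
        theta0, theta1 = theta0 - alpha * grad0, theta1 - alpha * grad1
    return theta0, theta1


# Hypothetical toy data lying exactly on y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
print(gradient_descent(xs, ys))  # close to (1.0, 2.0)
```

Every iteration sums over all m examples, which is what the slides call "batch" gradient descent.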

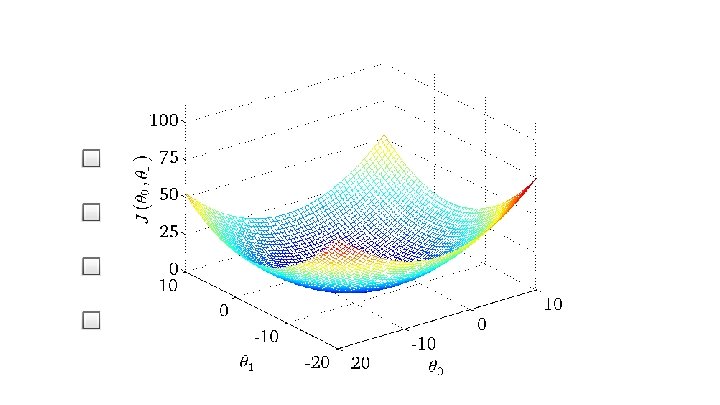

(Surface plot of $J(\theta_0, \theta_1)$ over the $\theta_0$ and $\theta_1$ axes.)

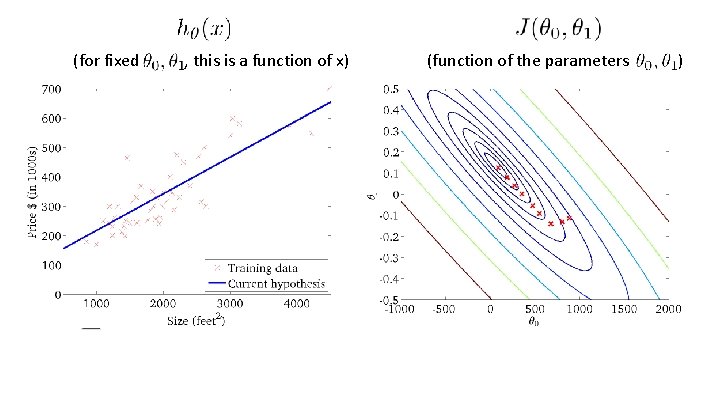

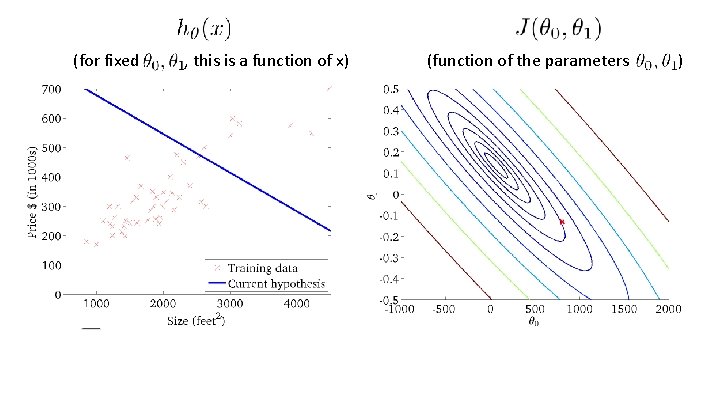

(Left: $h_\theta(x)$; for fixed $\theta_0, \theta_1$, this is a function of $x$. Right: $J(\theta_0, \theta_1)$, a function of the parameters $\theta_0, \theta_1$.)


“Batch” Gradient Descent

“Batch”: each step of gradient descent uses all the training examples.

Linear Algebra review (optional): Matrices and vectors

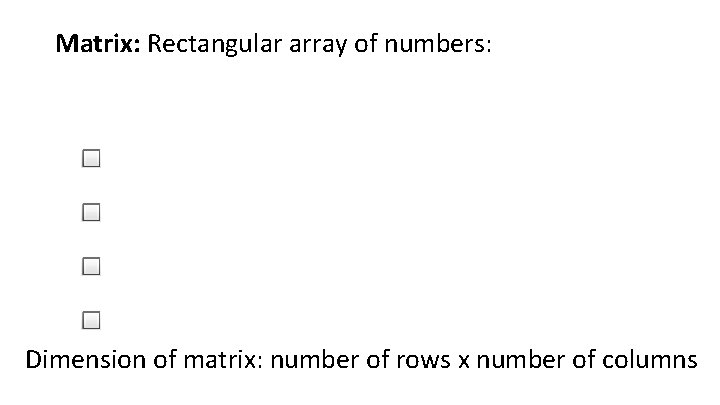

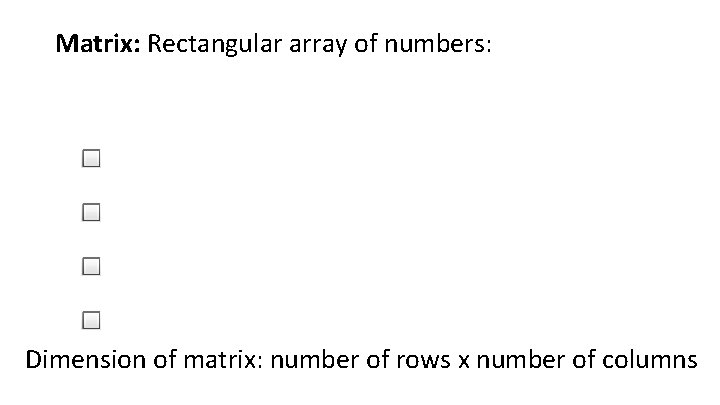

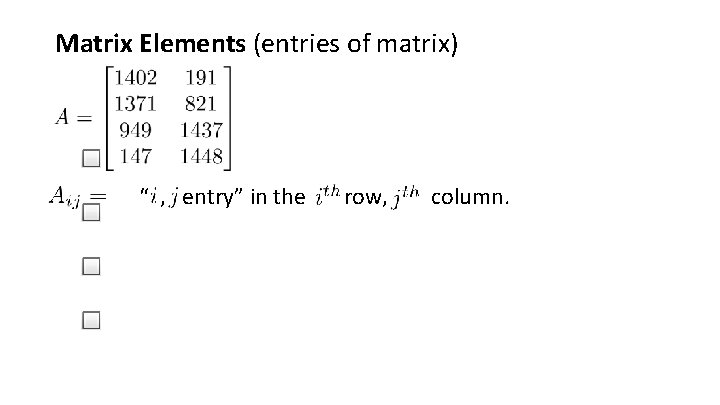

Matrix: rectangular array of numbers.

Dimension of matrix: number of rows x number of columns.

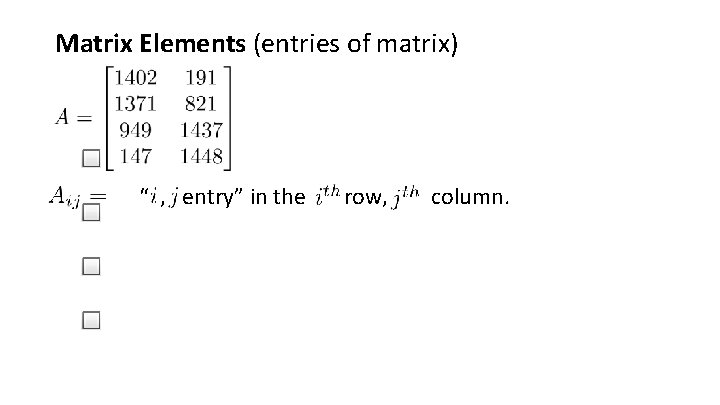

Matrix Elements (entries of matrix): $A_{ij}$ = “$i, j$ entry” in the $i^{th}$ row, $j^{th}$ column.

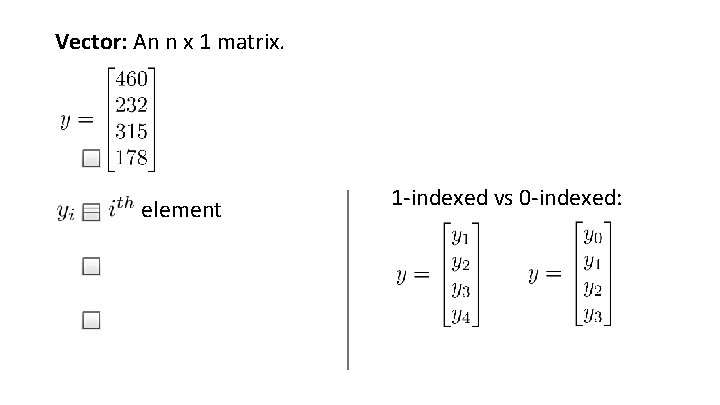

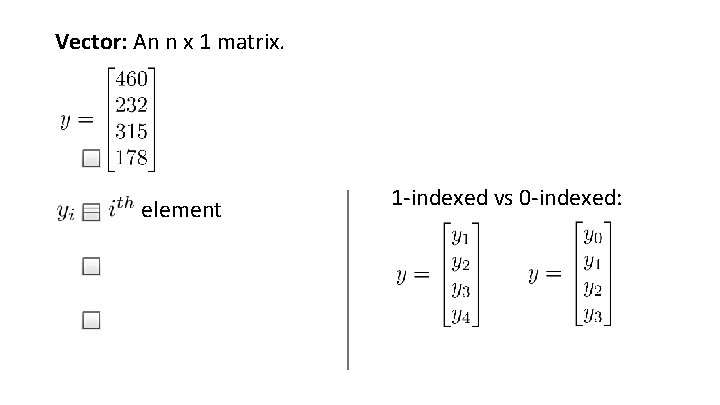

Vector: an n x 1 matrix. $y_i$ = $i^{th}$ element.

1-indexed vs 0-indexed:
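In code, a matrix can be sketched as a list of rows. The helpers below (hypothetical names) return the dimension as (rows, columns) and read entry $A_{ij}$ with the 1-indexed convention used on the slides, on top of Python lists, which are 0-indexed. The matrix and vector values are arbitrary.

```python
def dimension(A):
    """Return (number of rows, number of columns) of a list-of-rows matrix."""
    return len(A), len(A[0])


def entry(A, i, j):
    """1-indexed A_ij (math convention) on top of 0-indexed Python lists."""
    return A[i - 1][j - 1]


A = [[1, 2],
     [3, 4],
     [5, 6]]          # a 3 x 2 matrix
y = [10, 20, 30, 40]  # a 4-dimensional vector (a 4 x 1 matrix)

print(dimension(A))   # (3, 2)
print(entry(A, 3, 1)) # 5: row 3, column 1
print(y[0])           # 10: Python's 0-indexed access to y_1
```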

Linear Algebra review (optional): Addition and scalar multiplication

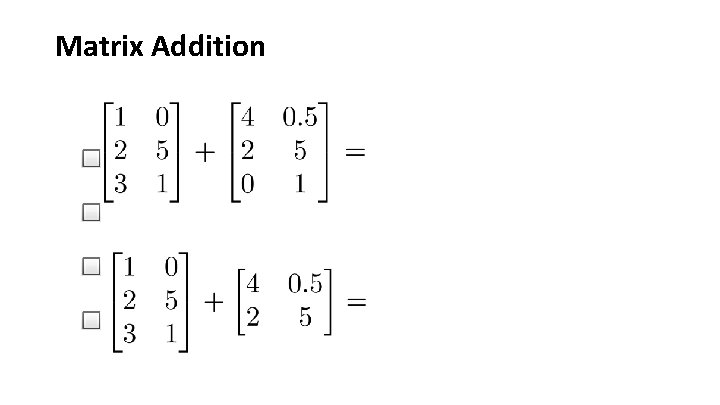

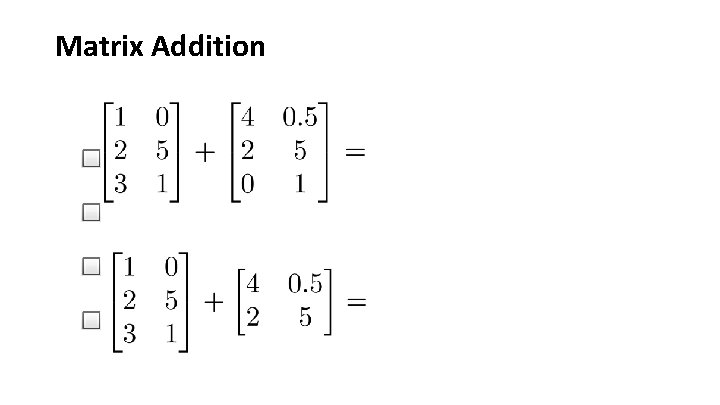

Matrix Addition

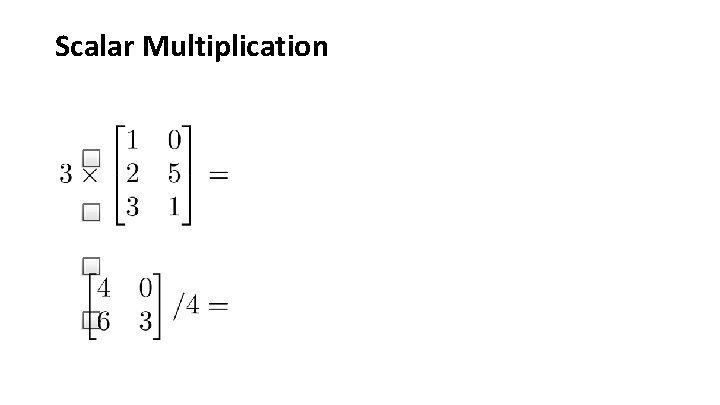

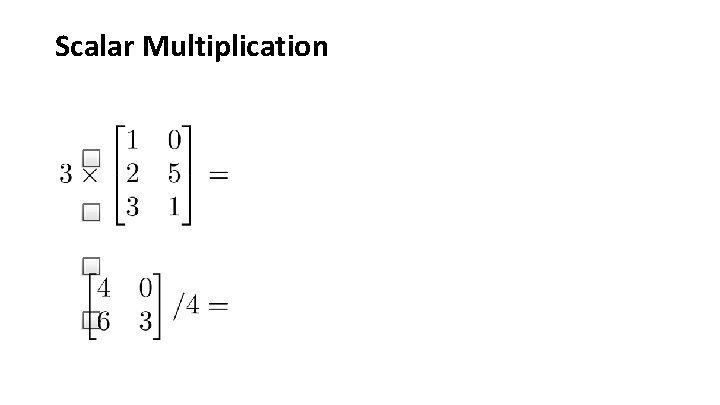

Scalar Multiplication

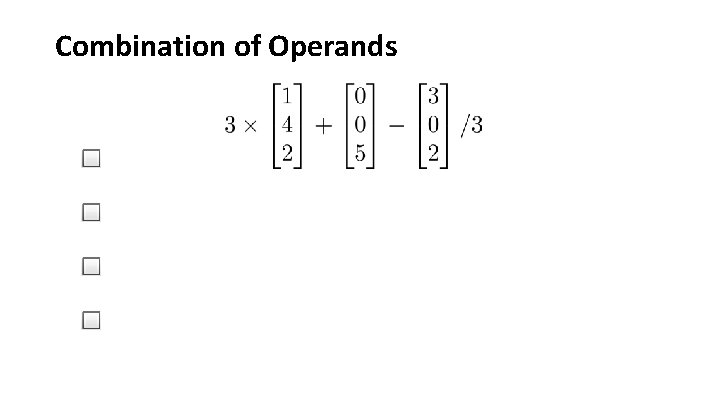

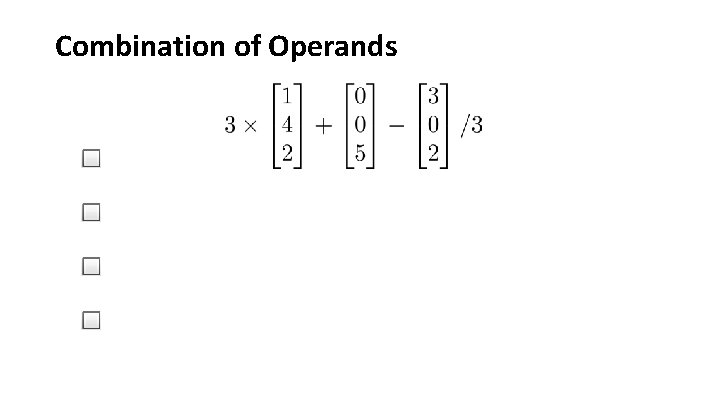

Combination of Operands
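Both operations act element by element. A sketch on list-of-rows matrices (helper names are illustrative), including a combination of operations on 3 x 1 vectors; addition requires both operands to have the same dimension. The matrix values are arbitrary.

```python
def add(A, B):
    """Element-wise sum; A and B must have the same dimension."""
    if len(A) != len(B) or any(len(ra) != len(rb) for ra, rb in zip(A, B)):
        raise ValueError("matrices must have the same dimension")
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def scale(c, A):
    """Multiply every entry of A by the scalar c."""
    return [[c * a for a in row] for row in A]


A = [[1, 0], [2, 5], [3, 1]]
B = [[4, 0.5], [2, 5], [0, 1]]
print(add(A, B))      # [[5, 0.5], [4, 10], [3, 2]]
print(scale(3, A))    # [[3, 0], [6, 15], [9, 3]]

# Combination of operations: 3*u + v - (1/2)*w for 3 x 1 vectors (columns)
u, v, w = [[1], [4], [2]], [[0], [0], [5]], [[3], [0], [2]]
print(add(add(scale(3, u), v), scale(-0.5, w)))
```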

Linear Algebra review (optional): Matrix-vector multiplication

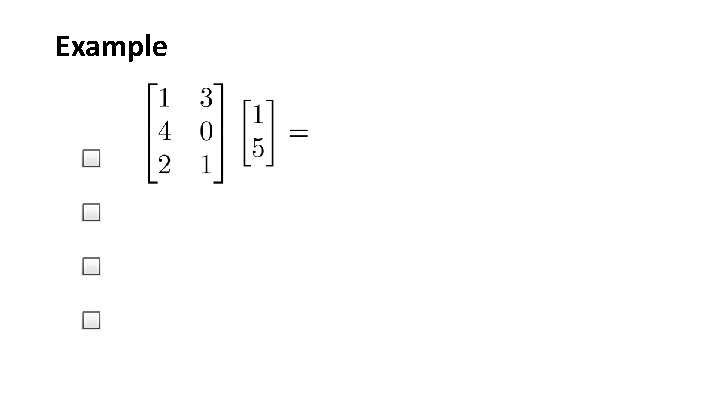

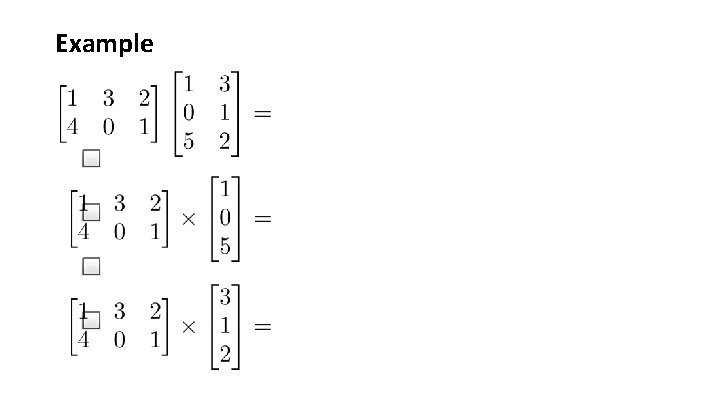

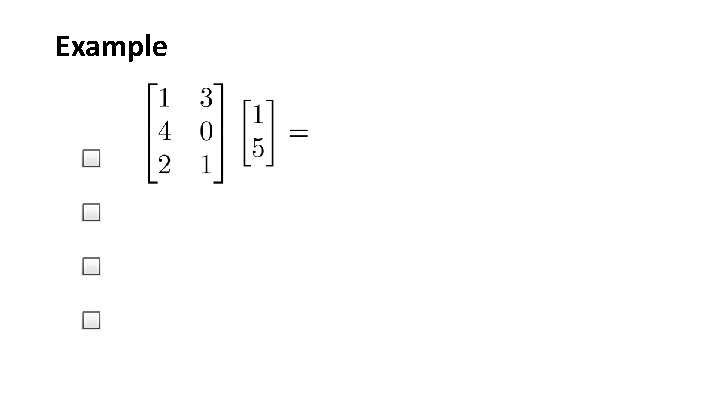

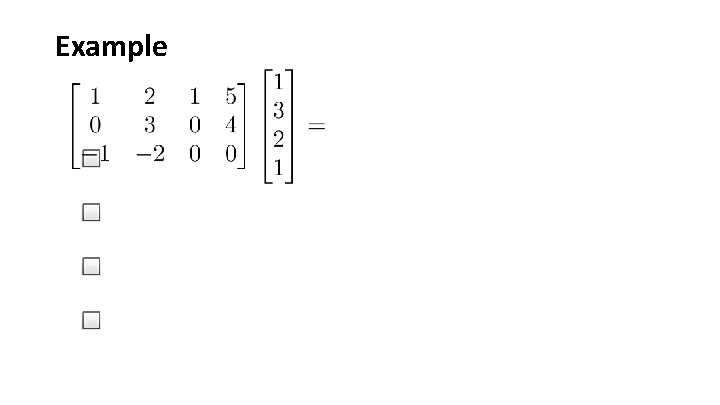

Example

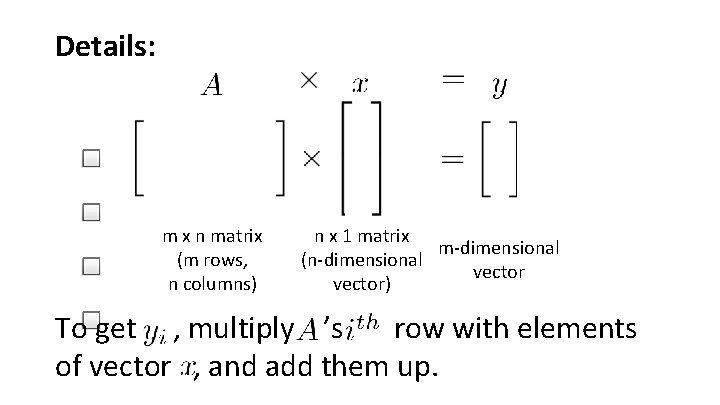

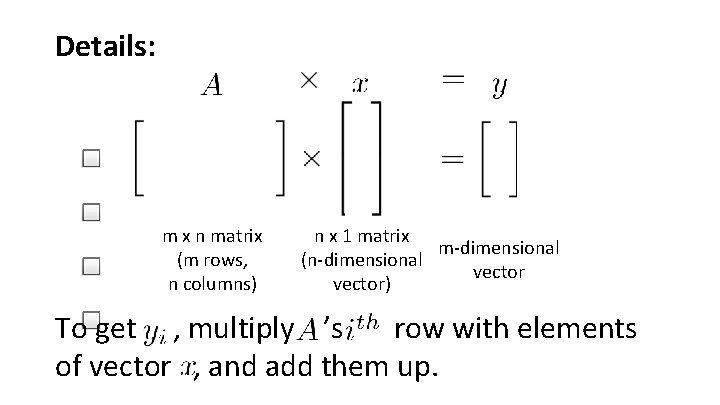

Details: $A$ (m x n matrix: m rows, n columns) × $x$ (n x 1 matrix, i.e. an n-dimensional vector) = $y$ (an m-dimensional vector). To get $y_i$, multiply $A$'s $i^{th}$ row with the elements of vector $x$, and add them up.
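The row-times-vector rule translates almost word for word into code. This is a plain-Python sketch (the name `mat_vec` and the example values are illustrative); a real project would normally use a numerical library instead.

```python
def mat_vec(A, x):
    """y = A x for an m x n matrix A (list of rows) and an n-dimensional vector x."""
    if any(len(row) != len(x) for row in A):
        raise ValueError("number of columns of A must equal the length of x")
    # y_i = (row i of A) . x : multiply element-wise and add them up
    return [sum(a * b for a, b in zip(row, x)) for row in A]


A = [[1, 3],
     [4, 0],
     [2, 1]]                # 3 x 2
print(mat_vec(A, [1, 5]))   # [16, 4, 7]
```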

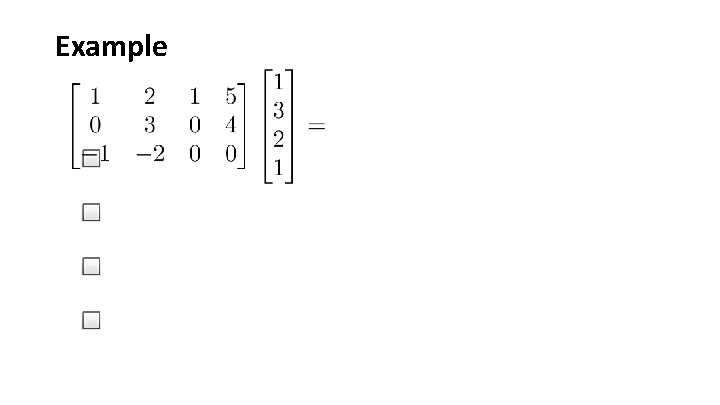

Example

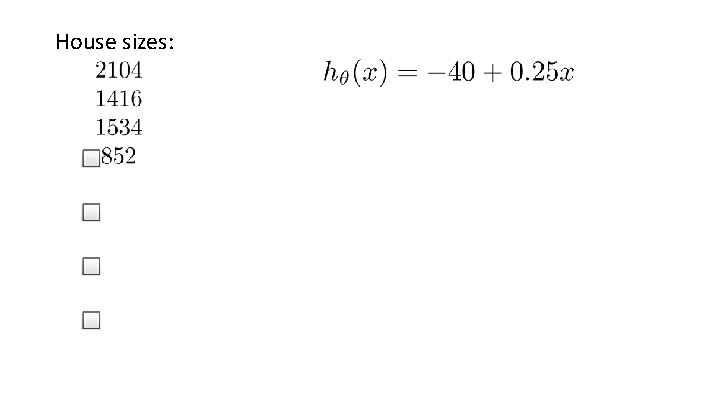

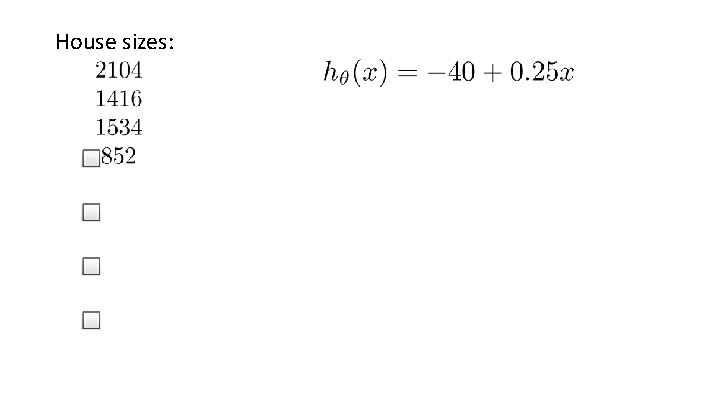

House sizes:
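The same product evaluates a hypothesis on every house at once: put a column of 1s next to the sizes so that each row is $[1, x^{(i)}]$, and multiply by the parameter vector $[\theta_0, \theta_1]$. The sizes come from the Portland training set; the hypothesis $h_\theta(x) = -40 + 0.25x$ is an assumed example, not a fitted one.

```python
def mat_vec(A, x):
    """y = A x, where y_i is row i of A dotted with x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]


sizes = [2104, 1416, 1534, 852]
data_matrix = [[1, size] for size in sizes]   # each row: [1, x_i]
theta = [-40, 0.25]                           # assumed h(x) = -40 + 0.25 x

predictions = mat_vec(data_matrix, theta)
print(predictions)   # [486.0, 314.0, 343.5, 173.0]
```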

Linear Algebra review (optional): Matrix-matrix multiplication

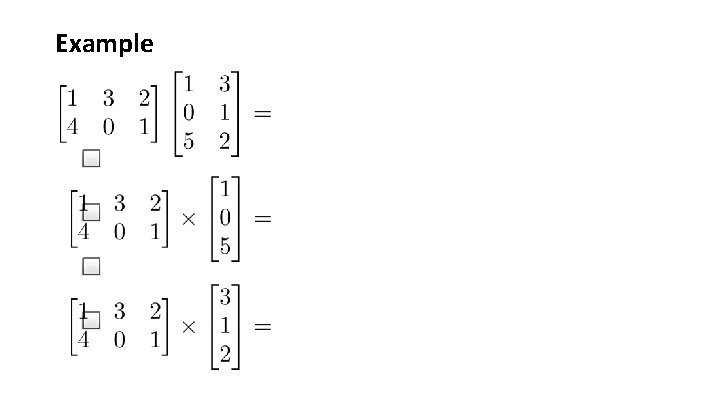

Example

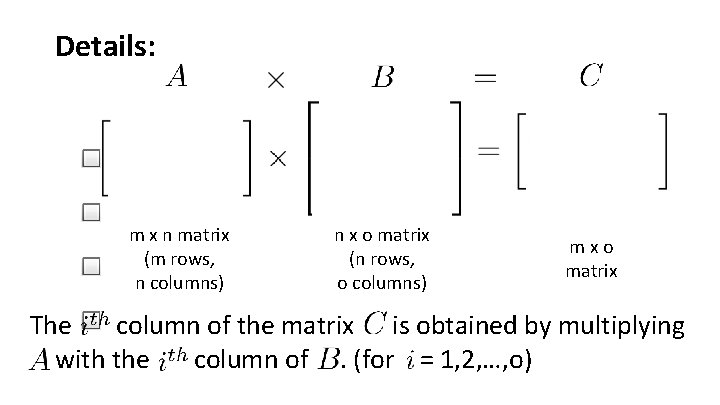

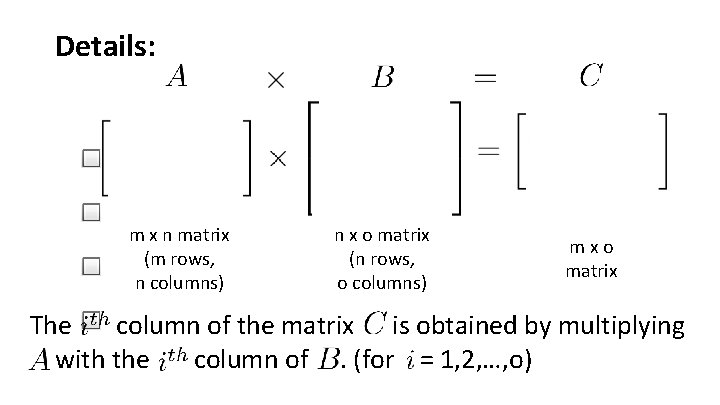

Details: $A$ (m x n matrix: m rows, n columns) × $B$ (n x o matrix: n rows, o columns) = $C$ (m x o matrix). The $i^{th}$ column of $C$ is obtained by multiplying $A$ with the $i^{th}$ column of $B$ (for $i$ = 1, 2, …, o).
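A sketch that follows the column-by-column description literally: each column of $C$ is a matrix-vector product of $A$ with the matching column of $B$. The helper name `mat_mul` and the example matrices are illustrative.

```python
def mat_mul(A, B):
    """C = A B: column i of C is A times column i of B."""
    n = len(B)
    if any(len(row) != n for row in A):
        raise ValueError("columns of A must equal rows of B")
    columns_of_B = [list(col) for col in zip(*B)]
    # Each column of C is the matrix-vector product A @ (that column of B).
    columns_of_C = [[sum(a * b for a, b in zip(row, col)) for row in A]
                    for col in columns_of_B]
    # Turn the list of columns back into a list of rows.
    return [list(row) for row in zip(*columns_of_C)]


A = [[1, 3, 2],
     [4, 0, 1]]        # 2 x 3
B = [[1, 3],
     [0, 1],
     [5, 2]]           # 3 x 2
print(mat_mul(A, B))   # [[11, 10], [9, 14]]
```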

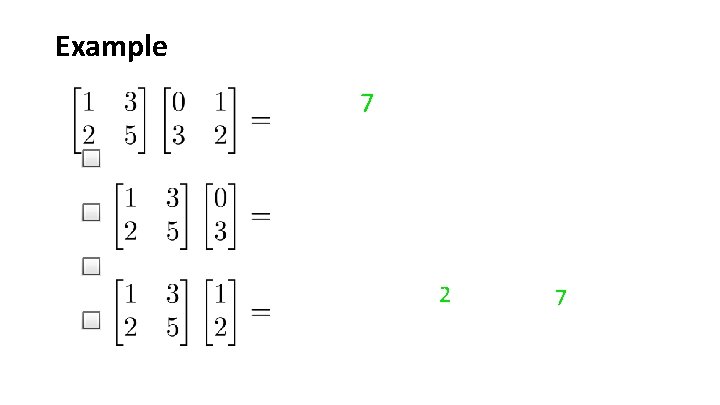

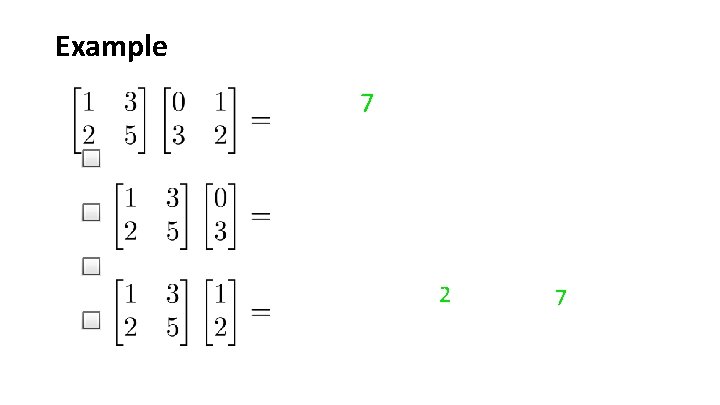

Example

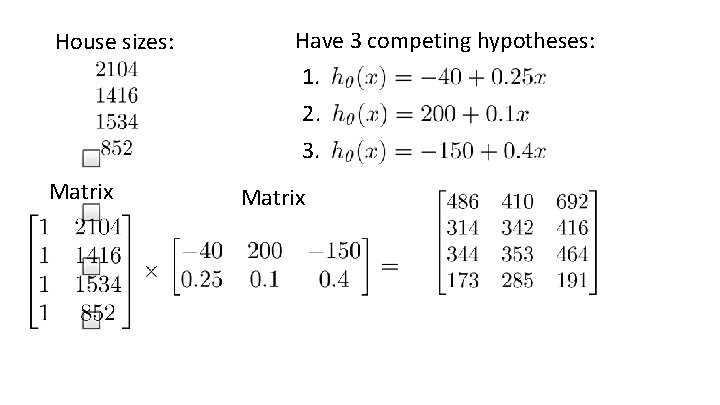

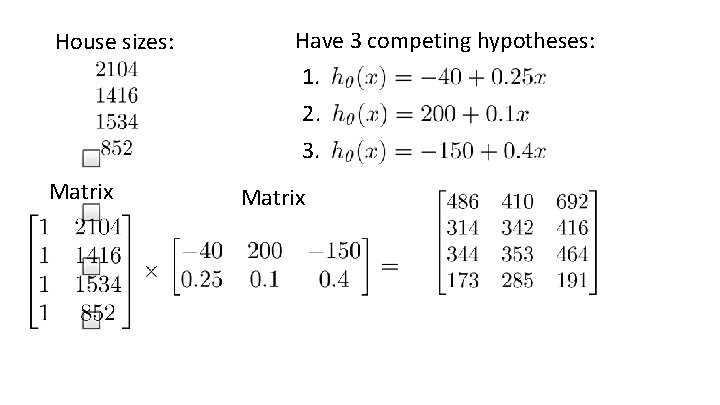

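House sizes, with 3 competing hypotheses (numbered 1 to 3). Multiplying the data matrix by a matrix whose columns hold each hypothesis's parameters gives every hypothesis's prediction for every house in one matrix-matrix product.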
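A sketch of that product: entry $(i, k)$ of the result is hypothesis $k$'s prediction for house $i$. The sizes come from the Portland training set; the three hypotheses are assumed examples, not values taken from the slide, and `mat_mul` is an illustrative helper.

```python
def mat_mul(A, B):
    """Row-by-column product of list-of-rows matrices."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


sizes = [2104, 1416, 1534, 852]
data_matrix = [[1, s] for s in sizes]        # 4 x 2, rows [1, x_i]

# Assumed hypotheses, one per column: theta0 in row 0, theta1 in row 1.
#   h1(x) = -40 + 0.25x,  h2(x) = 200 + 0.1x,  h3(x) = -150 + 0.4x
parameter_matrix = [[-40, 200, -150],
                    [0.25, 0.1, 0.4]]        # 2 x 3

predictions = mat_mul(data_matrix, parameter_matrix)  # 4 x 3
for size, row in zip(sizes, predictions):
    print(size, [round(p, 1) for p in row])
```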

Linear Algebra review (optional): Matrix multiplication properties

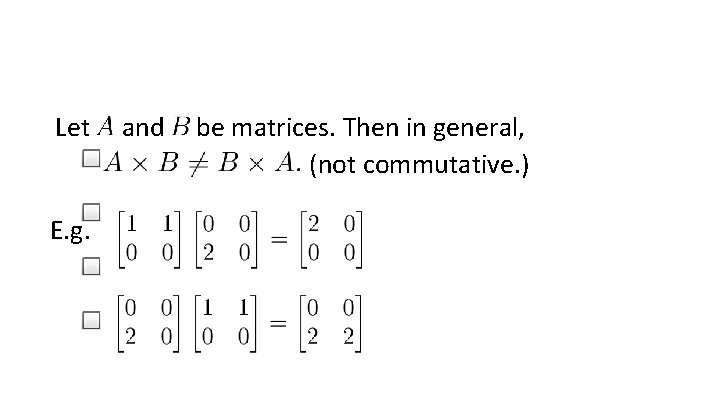

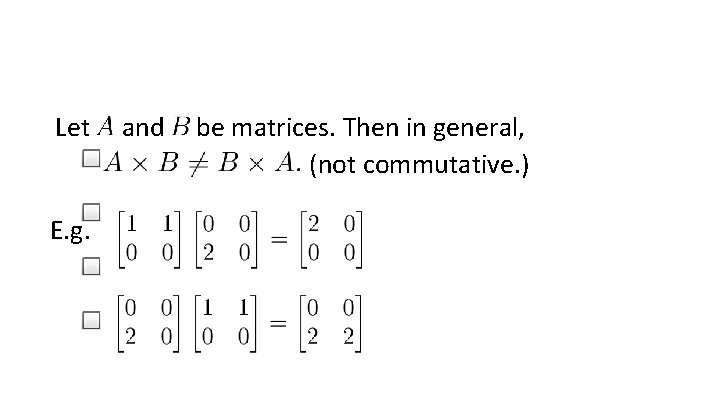

Let $A$ and $B$ be matrices. Then in general, $A \times B \neq B \times A$ (matrix multiplication is not commutative).

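Associativity: for $A \times B \times C$, let $D = B \times C$ and compute $A \times D$, or let $E = A \times B$ and compute $E \times C$; both give the same result.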

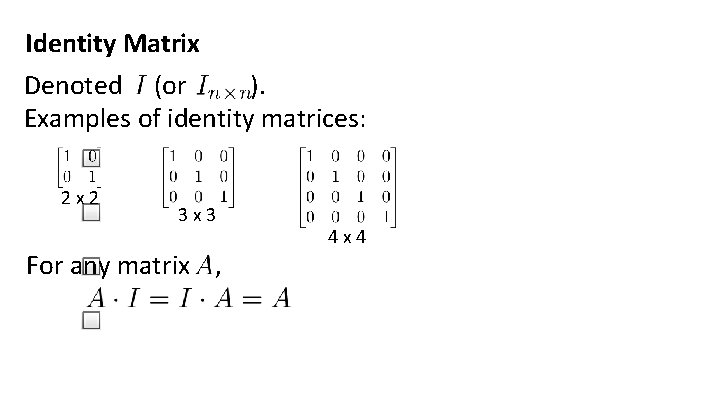

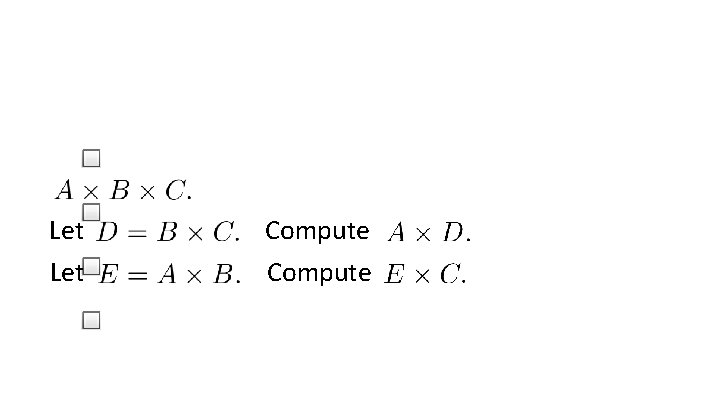

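Identity Matrix: denoted $I$ (or $I_{n \times n}$). Examples of identity matrices: 2 x 2, 3 x 3, 4 x 4. For any matrix $A$, $A \cdot I = I \cdot A = A$ (with $I$ of the matching size on each side).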
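A quick numerical check of the properties in this section, using small arbitrary matrices and illustrative helpers `mat_mul` and `identity`: multiplication is not commutative, it is associative, and multiplying by an identity matrix of the matching size leaves a matrix unchanged.

```python
def mat_mul(A, B):
    """Row-by-column product of list-of-rows matrices."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def identity(n):
    """n x n identity matrix: 1s on the diagonal, 0s elsewhere."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


A = [[1, 1], [0, 0]]
B = [[0, 0], [2, 0]]
C = [[1, 2], [3, 4]]

print(mat_mul(A, B))   # [[2, 0], [0, 0]]
print(mat_mul(B, A))   # [[0, 0], [2, 2]], so A*B != B*A
print(mat_mul(mat_mul(A, B), C) == mat_mul(A, mat_mul(B, C)))  # True

M = [[1, 2, 3], [4, 5, 6]]                 # 2 x 3
print(mat_mul(M, identity(3)) == M)        # True: M * I_3
print(mat_mul(identity(2), M) == M)        # True: I_2 * M
```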

Linear Algebra review (optional): Inverse and transpose

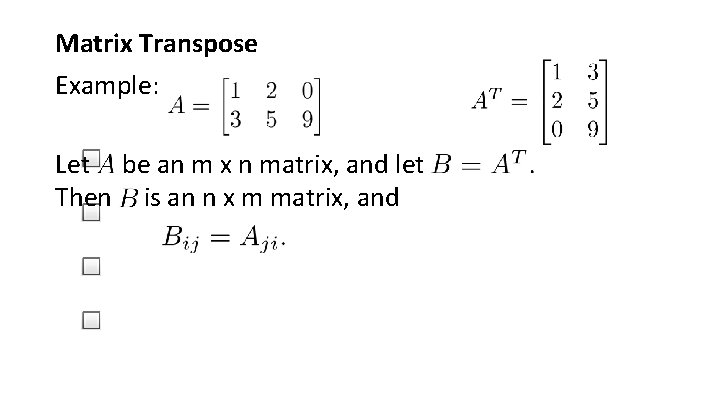

Not all numbers have an inverse (0 has no reciprocal). Matrix inverse: if $A$ is an m x m matrix and it has an inverse, then $A A^{-1} = A^{-1} A = I$. Matrices that don’t have an inverse are “singular” or “degenerate”.
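For the 2 x 2 case the inverse has a closed form, $\begin{pmatrix} a & b \\ c & d \end{pmatrix}^{-1} = \frac{1}{ad - bc}\begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$, which exists exactly when the determinant $ad - bc$ is nonzero. The sketch below uses that 2 x 2 formula only (a swapped-in technique for illustration; larger matrices are normally inverted with a numerical library), and the helper names and example matrix are illustrative.

```python
def inverse_2x2(A):
    """Inverse of a 2 x 2 matrix [[a, b], [c, d]] via the adjugate formula."""
    (a, b), (c, d) = A
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular (degenerate): no inverse")
    return [[d / det, -b / det], [-c / det, a / det]]


def mat_mul(A, B):
    """Row-by-column product of list-of-rows matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]


A = [[3, 4], [2, 16]]
A_inv = inverse_2x2(A)
print(A_inv)                 # [[0.4, -0.1], [-0.05, 0.075]]
print(mat_mul(A, A_inv))     # approximately the 2 x 2 identity
```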

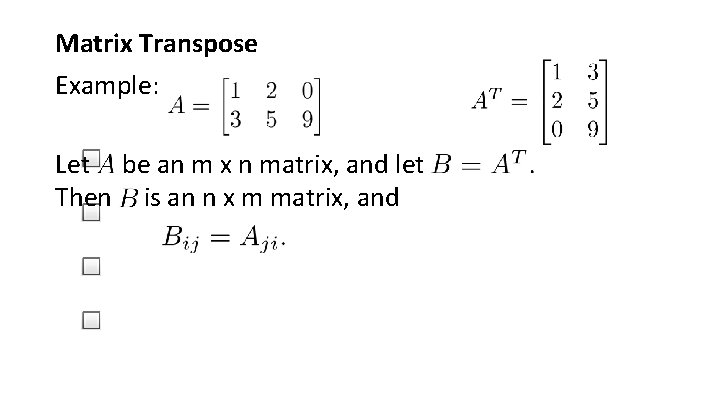

Matrix Transpose. Let $A$ be an m x n matrix, and let $B = A^T$. Then $B$ is an n x m matrix, and $B_{ij} = A_{ji}$.
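Transposing swaps rows and columns, so row $i$ of $A$ becomes column $i$ of $A^T$. In plain Python, `zip(*A)` walks the columns of a list-of-rows matrix, which gives a compact sketch (the helper name and example values are illustrative).

```python
def transpose(A):
    """B = A^T: B[i][j] = A[j][i]; an m x n matrix becomes n x m."""
    return [list(row) for row in zip(*A)]


A = [[1, 2, 0],
     [3, 5, 9]]        # 2 x 3
B = transpose(A)
print(B)               # [[1, 3], [2, 5], [0, 9]]
```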