INTRODUCTION TO VIRTUAL HIGH PERFORMANCE COMPUTING CLUSTERS
Thomas J. Hacker
Associate Professor, Computer & Information Technology
Co-Leader for Information Technology, Network for Earthquake Engineering Simulation (NEES)
July 30, 2012

OUTLINE
Motivation for the use of virtualization
Overview of virtualization technology
Overview of cloud computing technology
Relation of cloud computing to HPC
Practical notes on virtualization and cloud computing
Virtual HPC clusters
How to get started

MOTIVATION FOR VIRTUALIZATION
WHY VIRTUALIZATION, AND WHEN DOES IT MAKE SENSE?
• Clock speed increases that tracked Moore’s law have ceased
• Hardware is going to multicore with many cores
  – E.g. Intel MIC, now branded Xeon Phi (Knights Corner), with 50+ cores
• Memory capacity of systems is increasing
  – Max 512 GB on systems today

MOTIVATION FOR VIRTUALIZATION
Traditional approach has been to tie a single application to a single server
• An application runs in its own OS image on its own server for manageability and serviceability
This approach no longer makes sense if you have 50+ cores that a single application can’t use effectively
It’s also difficult to run multiple apps on the same system when their OS and library version requirements conflict
VMs are being used to partition large scale servers so that many OS instances run independently of each other

MOTIVATION FOR VIRTUALIZATION
Virtualization is now commodity technology
• Ideas were first developed in the 1960s at IBM for their mainframe computers
Virtualization is used frequently for administrative applications to reduce the hardware footprint in the data center and reduce costs
This is a commodity trend that, like other commodity trends, is worth exploiting for HPC
Especially useful as a substitute for small-scale lab clusters that are used early in the life cycle of a parallel application.

SOFTWARE ECOSYSTEM FOR APPLICATIONS
SOFTWARE REQUIRES A FUNCTIONAL ECOSYSTEM (SIMILAR TO MASLOW’S HIERARCHY OF NEEDS)
Basic “physiological” needs
• Reliable computing platform
• Functional operating system platform that is needed by the application
  – If software isn’t kept up to date, it can conflict with OS upgrades
• Adequate disk space, memory, and CPU cores
“Safety” needs
• Secure computing environment: no attackers, compromised accounts, etc.
• “Sense of security and predictability in the world”
• Predictability is essential for replicating results and debugging
“Sense of community”
• All of the nodes in the cluster need to be consistent
• Same OS version, libraries, etc.
• Especially critical for MPI applications
Meeting these basic needs ensures a consistent software ecosystem
• Stable platform facilitates software development, testing, and validation of results
• Developers and users can begin to trust the software, and results from software
• Provides a strong base for future growth and development of the application

SOFTWARE ECOSYSTEM FOR APPLICATIONS
Problem: it is difficult for users to control their computing environment for scientific applications
• Scientific apps used in projects such as CMS require a lot of specific packages and versions. It can be very difficult to get central IT organizations to customize and install the necessary software, because they need to provide a generic and reliable system for the rest of the user base.
• Scientific applications go through a life cycle in which they evolve from a single processor, to a few workstations, to small scale clusters, and finally scale up to very large systems.
• Building small scale physical clusters as a part of this life cycle is very expensive in equipment, time, and the grad student effort spent running these systems.
• Scientific users can really benefit from having root access on their own systems to get their codes working and install any necessary packages.

SOFTWARE ECOSYSTEM FOR APPLICATIONS
Virtual HPC clusters are an attractive and viable alternative to small scale lab clusters when applications that need these types of resources are still “young” and require a lot of customization.
• On larger systems, virtual clusters are a promising approach to provide system level checkpointing for large-scale applications.
• Imagine if you could use a virtualization system on your laptop to develop a 2 or 3 VM virtual cluster with all the packages and optimizations you needed, then transfer that VM image to a virtual cluster platform and instantiate dozens (or more) VM images to run a virtual cluster.
Fault tolerance is a critical problem for applications as they scale up.
• There are several levels of checkpointing:
  – Application level
  – “On the system” level (e.g. Condor, BLCR)
  – “Below the system” level using live migration or checkpointing/saving VM images

RELIABILITY
One of the “safety” needs of software in its ecosystem
• Problems with reliability and techniques to improve reliability
Large systems can fail often
Failures severely affect large and/or long running jobs
Very expensive to just restart computation from the beginning
• Lots of wasted time on the computer system, and wasted power and cooling

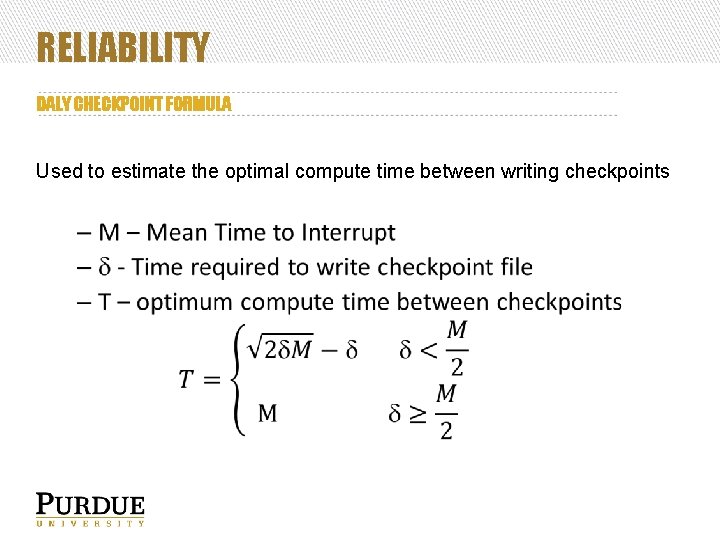

RELIABILITY
A technique to overcome this problem is to frequently save critical program data, which is called checkpointing
• When your program is restarted, it will need to read the saved data and resume computation from the saved state
There is some guidance on how often to checkpoint to find a good balance between spending time on saving state for “safety” and making forward progress in your computation
• Daly’s checkpoint formula is a good start

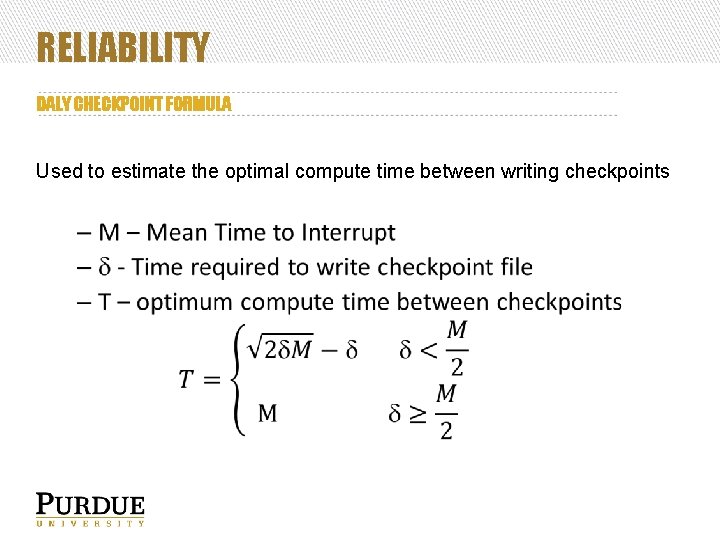

RELIABILITY
DALY CHECKPOINT FORMULA
Used to estimate the optimal compute time between writing checkpoints
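
The formula itself does not survive in this transcript. As a minimal sketch, assuming the slide refers to the higher-order estimate from Daly's 2006 paper, the optimal compute time between checkpoints can be computed from the time to write one checkpoint (delta) and the mean time between failures of the system (M). The function and parameter names here are illustrative, not taken from the slides.

```python
import math


def daly_interval(checkpoint_cost, mtbf):
    """Estimate the compute time between checkpoints.

    checkpoint_cost: time to write one checkpoint (delta)
    mtbf: mean time between failures of the whole system (M)
    Both values must use the same time unit; the result uses it too.
    Assumed form (Daly's higher-order estimate):
        sqrt(2*delta*M) * (1 + sqrt(delta/(2M))/3 + (delta/(2M))/9) - delta
    for delta < 2M, and M otherwise.
    """
    delta, m = checkpoint_cost, mtbf
    if delta >= 2 * m:
        return m
    x = delta / (2 * m)
    return math.sqrt(2 * delta * m) * (1 + math.sqrt(x) / 3 + x / 9) - delta


def young_interval(checkpoint_cost, mtbf):
    """Simpler first-order estimate sqrt(2*delta*M), shown for comparison."""
    return math.sqrt(2 * checkpoint_cost * mtbf)


if __name__ == "__main__":
    # Example: 5 minute checkpoints on a system that fails about once a day.
    seconds = daly_interval(5 * 60, 24 * 3600)
    print(f"checkpoint roughly every {seconds / 60:.0f} minutes of compute")
```

If node failures are assumed to be independent, a rough value for M on an N node system is the MTBF of a single node divided by N, which is why the interval shrinks as the job scales up.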

RELIABILITY
Research is exploring alternative methods of performing checkpoint operations
• System level checkpointing: BLCR
• MPI level checkpointing
• VM level checkpointing and live migration
  – Idea is to periodically save the VM state, or to live migrate the VM from sick to healthier systems

RELIABILITY
Be aware of the need to integrate reliability practices in your application as you design and write your code
At a minimum, structure your code so that you can periodically save the current state of computation, and develop a capability to resume computation from that saved state if your program is restarted
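
One way to structure code along these lines is sketched below. It is an illustration only: the state is a small dictionary, the "computation" is a running sum, and the names `save_checkpoint`, `load_checkpoint`, `run`, and `stop_after` are hypothetical. The checkpoint is written to a temporary file and then renamed, so a crash in the middle of a write does not destroy the previous checkpoint.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write state to path atomically (temp file, then rename)."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp, path)


def load_checkpoint(path, default):
    """Return the saved state, or default if no checkpoint exists yet."""
    try:
        with open(path) as f:
            return json.load(f)
    except FileNotFoundError:
        return default


def run(path, total_steps, checkpoint_every, stop_after=None):
    """Toy computation that resumes from the last checkpoint.

    stop_after simulates a failure: the loop returns early without
    saving, so work done since the last checkpoint is lost.
    """
    state = load_checkpoint(path, {"step": 0, "total": 0})
    while state["step"] < total_steps:
        if stop_after is not None and state["step"] >= stop_after:
            return state
        state["total"] += state["step"]  # stand-in for real work
        state["step"] += 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state
```

A real application would save its arrays and solver state instead of a counter, but the shape is the same: load if a checkpoint exists, compute, save periodically.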

OVERVIEW OF VIRTUALIZATION TECHNOLOGIES
Virtualization is a technique that separates the operating system from the physical computer hardware, and interposes a layer of controlling software (hypervisor) between the hardware and operating system.
Different types of virtualization systems (from Goldberg)
• Type 1: hypervisor between “bare metal” and guest operating systems
• Type 2: hypervisor between host operating system and guest operating systems
Type 1 examples
• VMware, Xen, KVM
• OpenVZ
Type 2 examples
• VirtualBox, VMware Workstation, Parallels for Mac

TYPE 1 VIRTUALIZATION
VMware
• High quality commercial product
• We use VMware extensively for NEES
• Very useful for transitioning IT infrastructure from SDSC to Purdue for the NEES project
  – Simply created VM images for each service/server on a few physical servers
  – We were able to archive the VM images of the services/servers when NEES brought up the NEEShub cyberinfrastructure
Windows
• Hyper-V

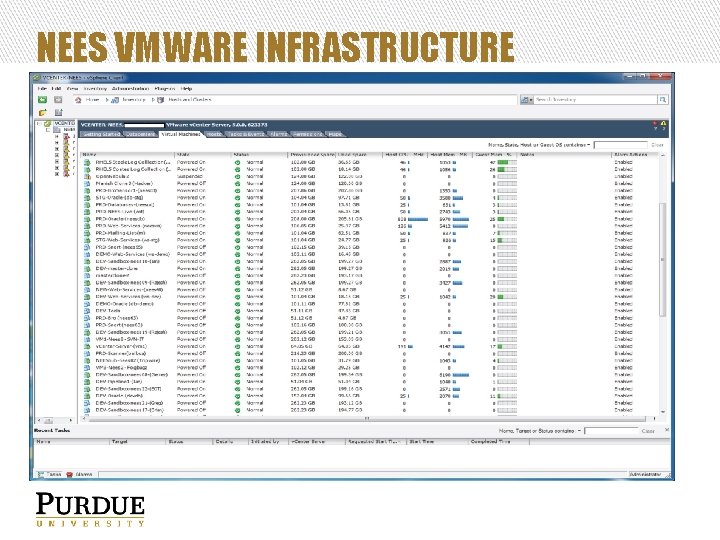

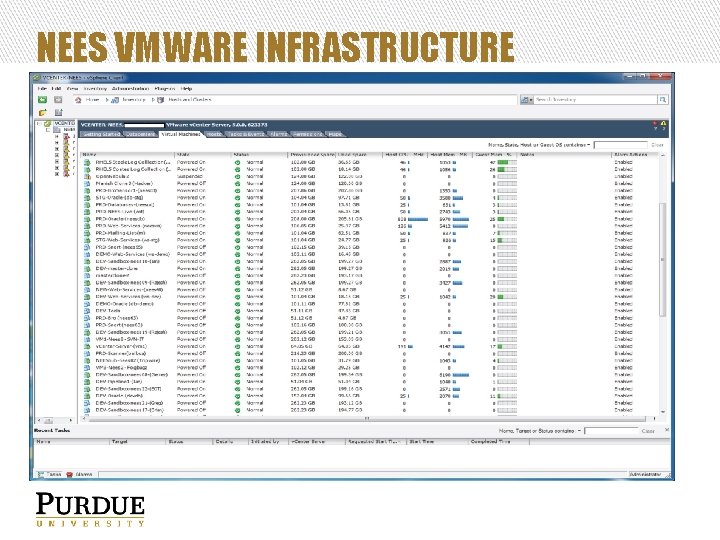

NEES VMWARE INFRASTRUCTURE

TYPE 1 VIRTUALIZATION
Virtualization systems for Linux
• Xen and KVM
• Open source virtualization systems based on Linux
Xen
• First major virtualization system
• Older, seems to be less reliable
KVM
• Kernel-based Virtual Machine
• Newer, supported by Red Hat
OpenVZ
• Container based virtualization system

XEN
First version in 2003, and the first popular Linux hypervisor
Integrated into the Linux kernel
• Uses paravirtualization
  – Guest OSs run a modified operating system to interact with the hypervisor
• Different from VMware, which uses a custom kernel you load on the bare hardware
Host OS runs as Domain 0
Guest OSs run as unprivileged guest domains
Used to be supported in a limited form in Red Hat and Ubuntu
• Has been replaced with KVM in Red Hat
• Citrix has a commercial version of Xen
Personal experiences using Xen
• Works OK for simple virtualization
• Complex operations didn’t work as well

KVM
Kernel-based Virtual Machine (KVM)
• Built into the Linux kernel
• Supported by Red Hat
• More recent than Xen
Uses QEMU for virtual processor emulation
• Allows you to emulate CPU architectures other than Intel
• E.g. ARM and SPARC
Supports a wide variety of guest operating systems
• Linux
• Windows
• Solaris
Provides a useful set of management utilities
• Virtual Machine Manager
• ConVirt

OPENVZ
Container based virtualization system
• Secure isolated Linux containers
• Think of this as a “cage” for an application running in an OpenVZ container
• OpenVZ terminology: Virtual Private Servers (VPS), Virtual Environments (VE)
Two major differences from Xen and KVM
• Guest OS shares kernel with host OS
• File system of the guest OS is visible on the host OS and is part of the directory tree on the host OS
  – Doesn’t use a virtual disk drive (no 15 GB files to manage)
Benefits compared with Xen and KVM
• Very fast container creation
• Very fast live migration
• Easy to externally modify container file system (e.g. install software in the container)
• Scales very well (no big virtual disk images)
Downsides
• Must use the same OS as the host OS
  – Sharing kernel with the host OS

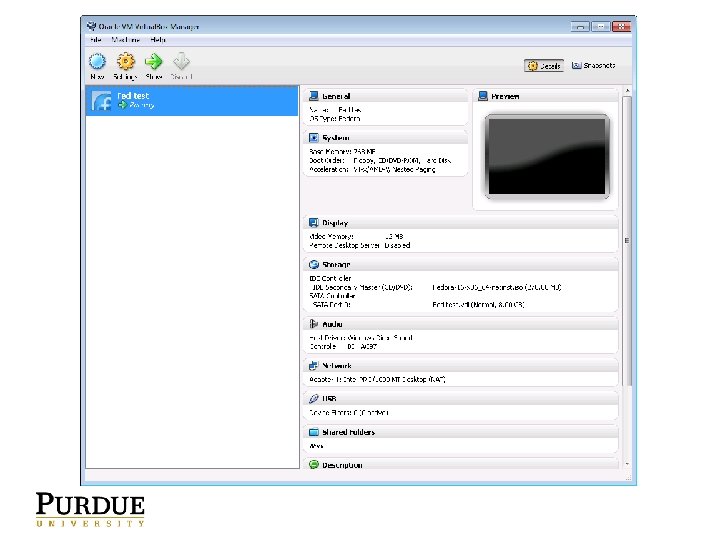

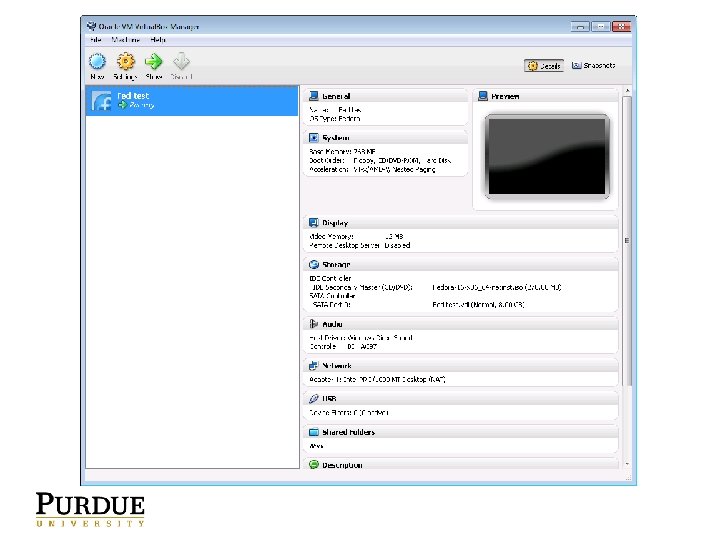

TYPE 2 EXAMPLES
Oracle VirtualBox
• Free VM environment that you can use on Windows, Linux, Mac OS X, and Solaris
• Simple to use, good way to get started
• VM images can be exported
  – In theory...
  – Depends on the ability of the target virtualization system to import VM disk images
Exports in OVF (Open Virtualization Format)
My personal experience is that you often need to use a Linux utility to try to convert the disk image and VM metadata to an acceptable format for another virtualization system (often complex).

TYPE 2 EXAMPLES
VMware Workstation
• Runs as an application on top of Windows
• NOT VMware ESX (which is a hypervisor)
• Another good way to get started in working with virtualization technology
Parallels for Mac
• Can be used to run Windows on a Mac
• Commercial software
• Personal experience: works “OK”, but Windows can be slow running on Parallels

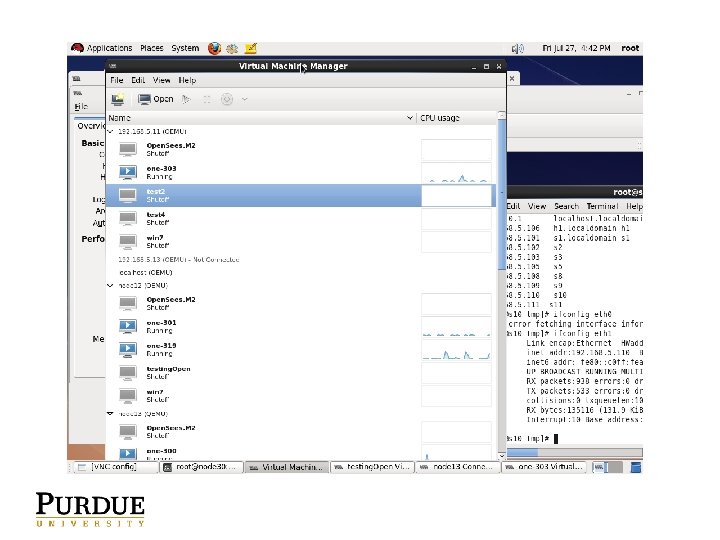

OPENVZ VS. KVM
I am using OpenVZ and KVM for two different projects
NEES / NEEShub
• Based on HUBzero
• Using OpenVZ as a virtual container or “jail” in which to run applications that interface with the user through a VNC window on a webpage
OpenNebula cluster to run parallel applications
• Distributed rendering using Maya
  – Batch rendering animations
• OpenSees building simulation program for NEES
  – Parallel version that uses parallel solvers and MPI
  – Running on a virtual cluster on OpenNebula in my lab and on FutureGrid
The choice depends on the type of application you wish to run and the environment in which it will be run.

VIRTUALIZATION ON LINUX
Additional mechanisms in Linux
• libvirt / virtio
  – Veneer library and utilities over virtualization systems
• brctl
  – Linux virtual network bridge control package
• cgroups
  – Linux feature for controlling resource use of processes
• Network virtualization
Network control is a constant problem
• VLANs are best, but hard to configure
• OpenFlow is supposed to simplify network management and make it scalable.

MOVING UP FROM VIRTUALIZATION
We talked about virtualization on a system level
How can we manage a collection of virtual machines on a single system?
How can we manage a distributed network of computers that host virtual machines?
How can we manage the network and storage for this distributed network of virtual machines?
This is the basis for one aspect of what is called “cloud computing” today
• Infrastructure-as-a-Service (IaaS)
The technology used for IaaS is the basis for building virtual HPC clusters. A virtual HPC cluster is a collection of virtual machines running on a distributed network of computers.

OVERVIEW OF CLOUD COMPUTING TECHNOLOGIES

CLOUD COMPUTING
Emerging technology that leverages virtualization
• Distributed computing of the 2010s
• Initial idea of a “computing utility” came from Multics in the 1960s
Computing utility that provides services over a network
• Computing
• Storage
• Pushes functionality from devices at the edge (e.g. laptops and mobile phones) to centralized servers

CLOUD COMPUTING ARCHITECTURE
User interface
• How users interact with the services running on the cloud
• Very simple client hardware
Resources and services index
• What services are in the cloud, and where they are located
System management and monitoring
Storage and servers

TYPES OF CLOUD COMPUTING SYSTEMS
Infrastructure as a service (IaaS)
Software as a service (SaaS)
Platform as a service (PaaS)
There are some fundamental differences between these approaches that lead to confusion when talking about “cloud computing”
• A cloud computing infrastructure can include one or all of these

INFRASTRUCTURE AS A SERVICE (IAAS)
Virtualization environment
• Cloud service provider offers the capability of hosting virtual machines as a service
• Cloud computing infrastructure for IaaS focuses on the systems software needed to load, start, and manage virtual machines
Amazon EC2 is one example of IaaS

IAAS
Enabling technologies used to provide IaaS
Virtualization layer
• VMware
• Xen/KVM
• OpenVZ
Networking layer
• Need to provide a VPN and network security for private VMs
Scheduling layer
• Managing the mapping of IaaS requests to physical and virtual infrastructure
• Amazon EC2 provides this
• OpenNebula, Eucalyptus, and Nimbus also provide scheduling services

IAAS BENEFITS
User doesn’t need to own infrastructure
• No servers, data center, etc. required
• Very low cost of entry
Pay-as-you-go computing
• No upfront capital investments needed
• Leasing a solution instead of a box
No systems administration staff/operations staff needed
• Cloud computing provider leverages economies of scale

EXAMPLES OF IAAS
BlueLock in Indianapolis
• Commercial IaaS provider
Eucalyptus
• Started as a research project at UCSB
• Based on Java and Web Services
OpenNebula
• Developed in Europe
• Leverages usual Linux technologies
  – ssh, NFS, etc.
• Uses a scheduler named Haizea
Nimbus
• Research project at Argonne National Lab
• Linked with Globus

PLATFORM AS A SERVICE (PAAS)
Builds on virtualization platform
Provides a software stack in addition to the virtualization service
• OS, web server, authentication, etc.
• APIs and middleware
Useful, for example, if you need a web server and don’t want to install Apache, Linux, etc. yourself

BENEFITS OF PAAS
Supported software stack
• Don’t need to focus efforts on getting software infrastructure working
• Pooled expertise in use of the software at the cloud computing provider
You can focus service and development efforts on just your product
Pay-as-you-go

EXAMPLES OF PAAS
Amazon Web Services
• Wikileaks was using this
• You buy a web service that runs on Amazon’s virtualization infrastructure
• Downside: outages can take out a lot of services.
• Netflix also uses Amazon EC2
Other examples
• Google App Engine
• Microsoft Azure

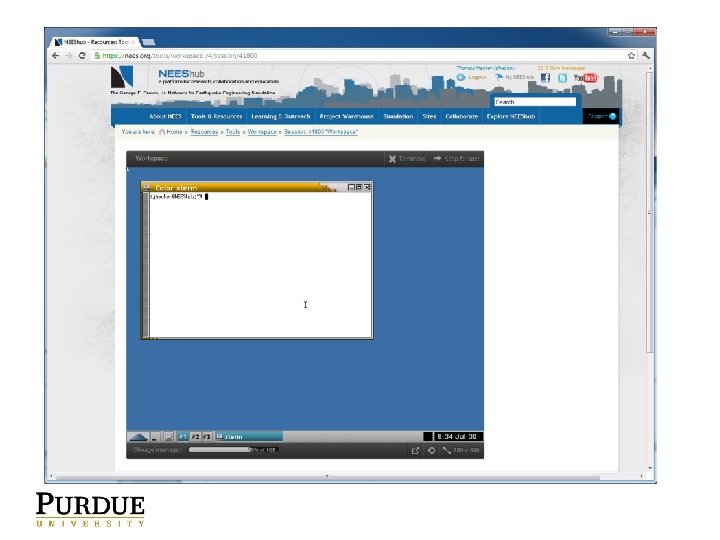

SOFTWARE AS A SERVICE (SAAS)
Provides access to software over the Internet
• No download/installation of the software is needed
• Users can lease or rent software
• Was a big idea about a decade ago, seems to be coming back
Software runs remotely and displays back to the user’s computer
• Think “vnc”
NEEShub is an example of this
• Researchers can run tools in a window without download/install

BENEFITS OF SAAS
No user download/install
• Many corporate users don’t have access on their computers to install software
Easier to support
• Control the computing environment centrally
Can be faster
• As long as server hardware is fast and users have a good network connection
Efficient use of centralized computing infrastructure

RELATION OF CLOUD COMPUTING TO HPC
Use of cloud computing depends on how the HPC application is used
SaaS
• NEEShub batchsubmit capability
• Allows users to run parallel applications through the NEEShub as a service
• Users don’t need to be concerned about underlying infrastructure
IaaS
• HPC clusters on an infrastructure level
• The problem here is to deploy, operate, and use a collection of VMs to constitute a virtual HPC cluster
• The capabilities in this area are focused on VM image and network management and deployment

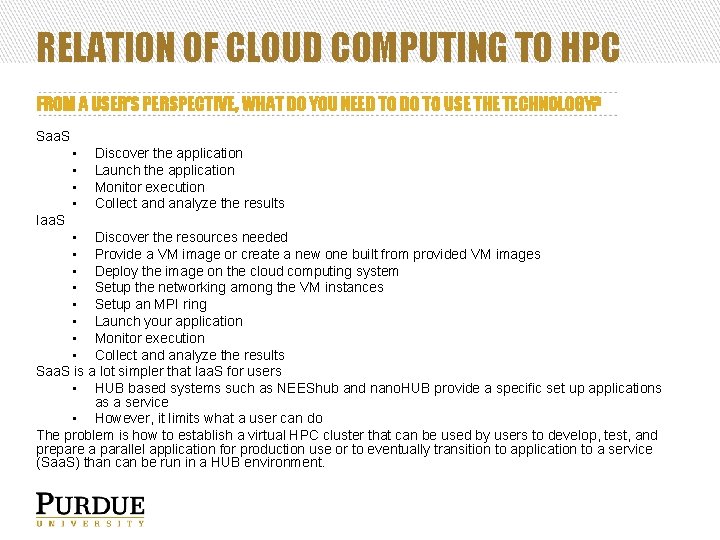

RELATION OF CLOUD COMPUTING TO HPC
FROM A USER’S PERSPECTIVE, WHAT DO YOU NEED TO DO TO USE THE TECHNOLOGY?
SaaS
• Discover the application
• Launch the application
• Monitor execution
• Collect and analyze the results
IaaS
• Discover the resources needed
• Provide a VM image or create a new one built from provided VM images
• Deploy the image on the cloud computing system
• Set up the networking among the VM instances
• Set up an MPI ring
• Launch your application
• Monitor execution
• Collect and analyze the results
SaaS is a lot simpler than IaaS for users
• HUB based systems such as NEEShub and nanoHUB provide a specific set of applications as a service
• However, this limits what a user can do
The problem is how to establish a virtual HPC cluster that users can use to develop, test, and prepare a parallel application for production use, or to eventually transition the application to a service (SaaS) that can be run in a HUB environment.

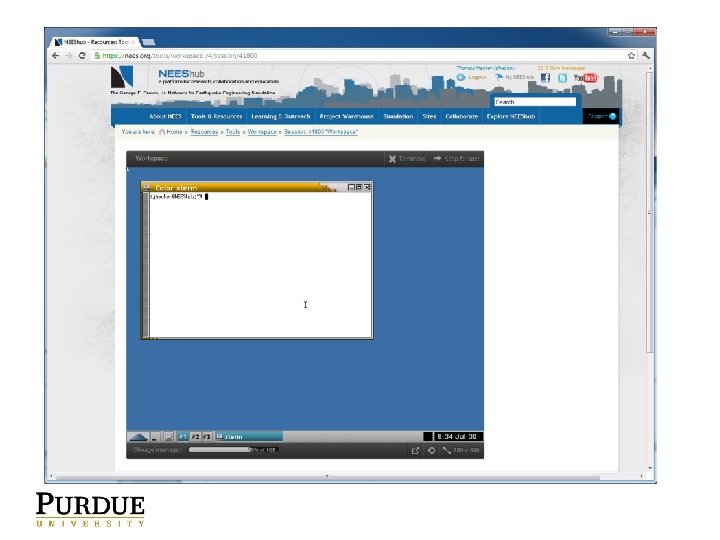

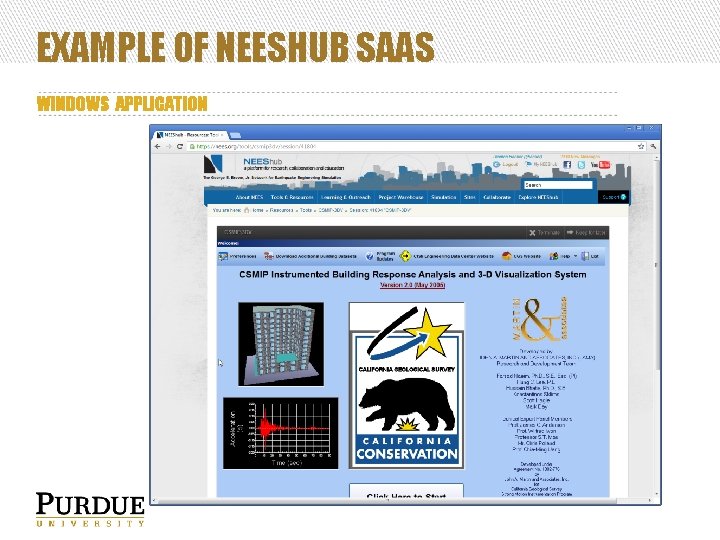

EXAMPLE OF NEESHUB SAAS WINDOWS APPLICATION

EXAMPLE OF NEESHUB SAAS LINUX APPLICATION
You can create an account on nees.org and try these tools

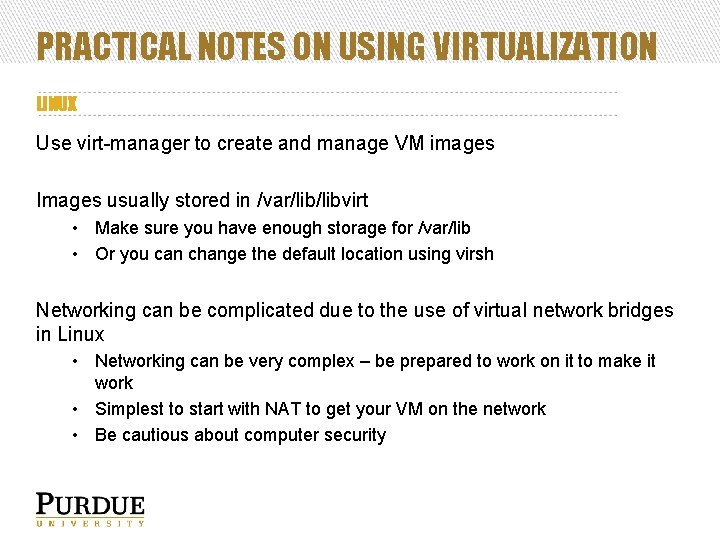

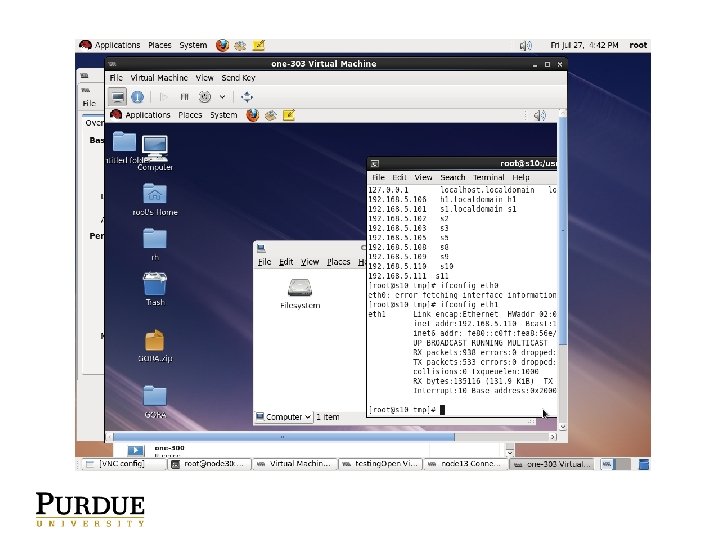

PRACTICAL NOTES ON USING VIRTUALIZATION
LINUX
Use virt-manager to create and manage VM images
Images are usually stored under /var/lib/libvirt
• Make sure you have enough storage for /var/lib
• Or you can change the default location using virsh
Networking can be complicated due to the use of virtual network bridges in Linux
• Be prepared to work on it to make it work
• Simplest to start with NAT to get your VM on the network
• Be cautious about computer security

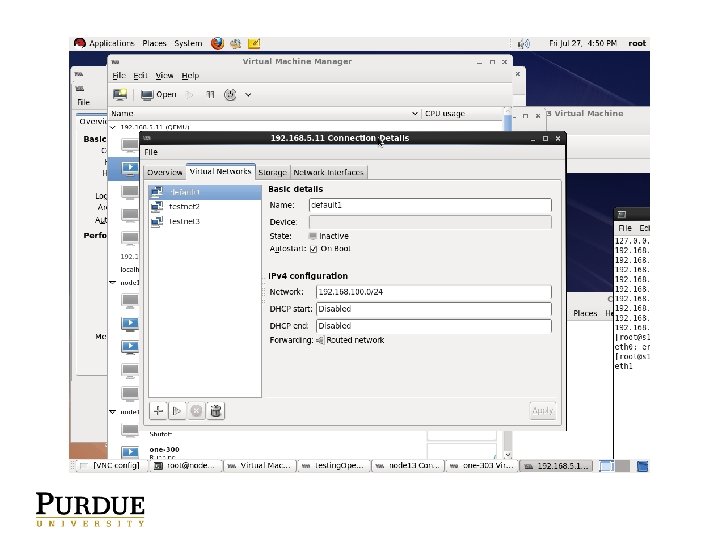

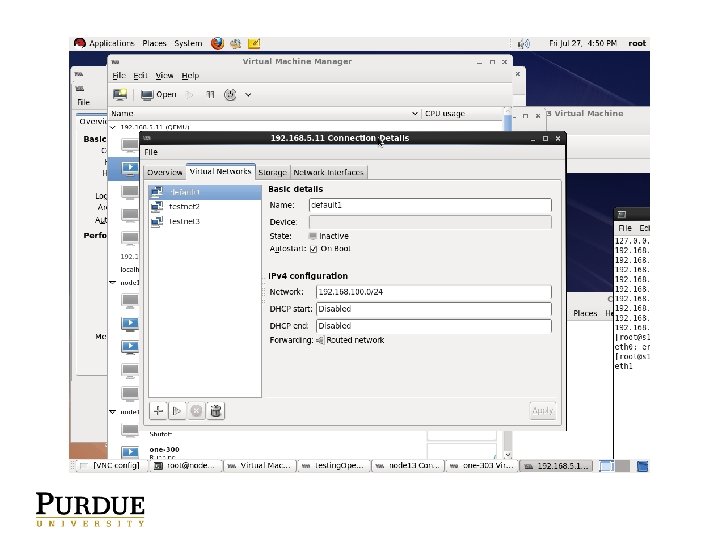

PRACTICAL NOTES ON USING VIRTUALIZATION
Managing the network can be tricky
• The bridge-utils yum package provides the brctl utility to create and manage virtual network switches and connections
External interface connects to the virtual network switch
• VMs connect to the virtual switch to share the connection
• virt-manager provides some functionality for this, but basically relies on what is created and managed by bridge-utils

PRACTICAL NOTES ON USING VIRTUALIZATION
Learn virsh
• libvirt is used to control the KVM virtualization system
• virsh is the CLI for libvirt
• The real power behind the GUIs
libvirt is supposed to be able to control Xen and KVM
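
Because virsh is a plain command line tool, it is easy to drive from scripts. The sketch below assumes the usual three column table printed by `virsh list --all` (Id, Name, State) and VM names without spaces; the helper names and the sample node names are made up for illustration.

```python
import subprocess


def parse_virsh_list(output):
    """Parse the table printed by `virsh list --all` into dictionaries.

    Assumes the usual layout: a header row, a dashed separator row,
    then one row per VM with Id, Name, and State columns.
    """
    domains = []
    for line in output.splitlines():
        parts = line.split(None, 2)
        if len(parts) < 3 or parts[0] == "Id":
            continue  # skip header, separator, and blank lines
        domains.append(
            {"id": parts[0], "name": parts[1], "state": parts[2].strip()}
        )
    return domains


def list_domains():
    """Run virsh on the local host and return the parsed VM list."""
    result = subprocess.run(
        ["virsh", "list", "--all"],
        capture_output=True, text=True, check=True,
    )
    return parse_virsh_list(result.stdout)


if __name__ == "__main__":
    for dom in list_domains():
        print(f"{dom['name']}: {dom['state']}")
```

The same pattern works for other virsh subcommands such as `virsh dumpxml`, which prints the VM metadata as XML.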

PRACTICAL NOTES ON CLOUD COMPUTING SYSTEMS
VMware vSphere is a commercial version of an IaaS controller
• Start, stop, migrate, shut down, and start up VM images and hardware servers
• Manage virtual network switches
• Good GUI and a clear way to learn to work with the technology

PRACTICAL NOTES ON CLOUD COMPUTING SYSTEMS
Linux based: Nimbus, Eucalyptus, OpenNebula, OpenStack
• My personal experience is that OpenNebula is the most straightforward system to set up and use
• Uses standard Linux facilities
• Command line interface is clear and logical

VIRTUAL HPC CLUSTERS
REVIEW
Talked about virtualization technology
Talked about cloud computing technology
• SaaS
• IaaS
Talked about setting up and controlling a collection of virtual machines
How can we use this technology for HPC?

VIRTUAL HPC CLUSTERS
You can create a virtual cluster built on a distributed collection of virtual machines controlled by a cloud computing system
Allows you to run different OSs and applications on a finite set of servers without the need to reload and update the hardware servers when you want to change the load
Very efficient use of hardware and space resources
• Sometimes the campus will provide VM space for you

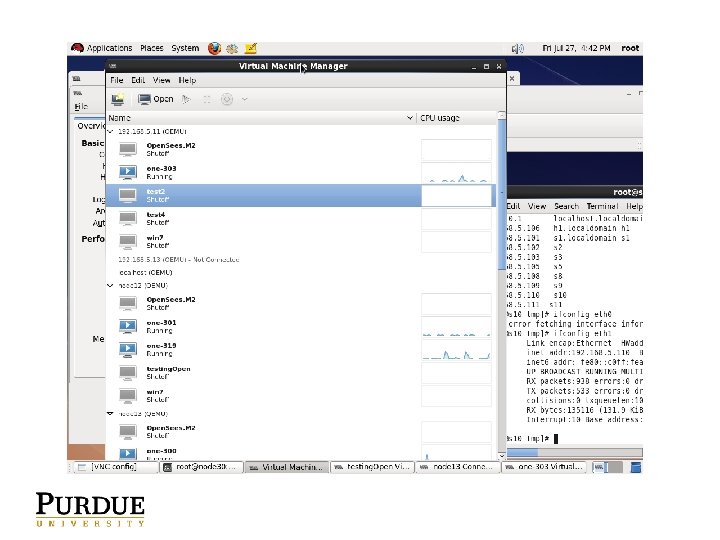

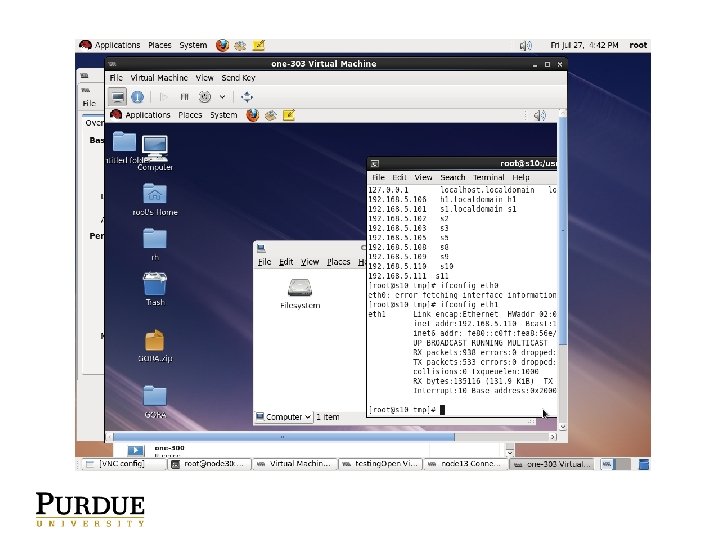

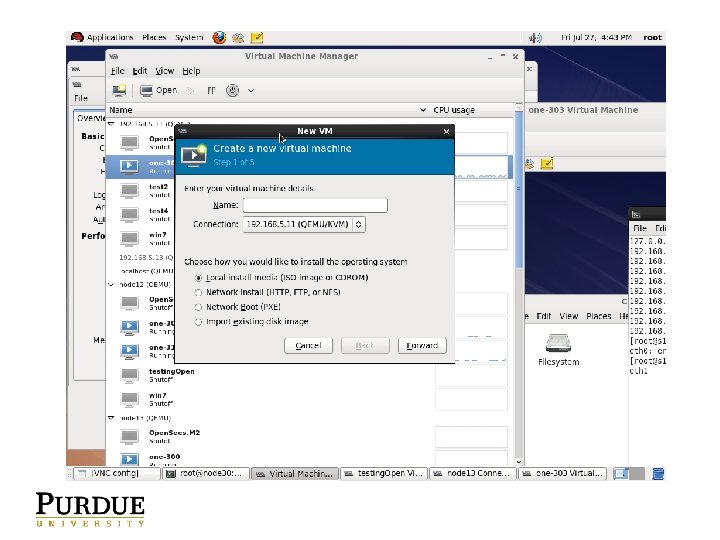

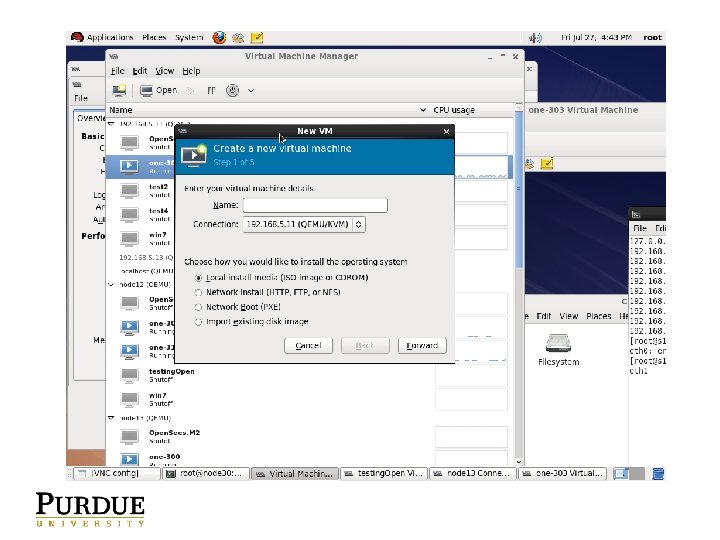

VIRTUAL CLUSTERS
Steps to create a virtual cluster
Select a Linux OS (or Windows) image you want to use
• May select one already provided by the cloud computing provider
• Or pick your own, but it might take more work to configure the VM image to work with the cloud computing system
Create first VM image from DVD or provided instance
• On Linux, use virt-manager
• Other cloud computing systems (e.g. Amazon, Nimbus) provide a VM image to start with
Connect it to the external network
• Protect your image from intrusion using iptables, or NAT with a local-only IP address (e.g. 192.168.X.X)
Customize the VM image with your application, libraries, and MPI
• Install the compilers, libraries, or utilities needed for your application
• yum, apt-get, or .tar.gz files
  – This is why it’s important to have access to the external network
• Pick an MPI implementation and install it
Compile/build your application
Ensure that you have installed all of the necessary libraries, tools, etc.
• The ldd command is useful to ensure that all dependent libraries are on the system
You now have a “golden master” VM image
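
The ldd check can be scripted so that it is easy to repeat before freezing the golden master image. This is a minimal sketch: ldd marks an unresolved library with "=> not found", and the helper names and the example binary path are hypothetical.

```python
import subprocess


def missing_libraries(ldd_output):
    """Return the library names that ldd reported as 'not found'."""
    missing = []
    for line in ldd_output.splitlines():
        if "=> not found" in line:
            missing.append(line.split("=>")[0].strip())
    return missing


def check_binary(path):
    """Run ldd on a binary and list its unresolved shared libraries."""
    result = subprocess.run(
        ["ldd", path], capture_output=True, text=True, check=False
    )
    return missing_libraries(result.stdout)


if __name__ == "__main__":
    # Hypothetical path to the application built inside the VM.
    for lib in check_binary("/opt/myapp/bin/myapp"):
        print("missing:", lib)
```

An empty result means every shared library the binary links against was found on the VM.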

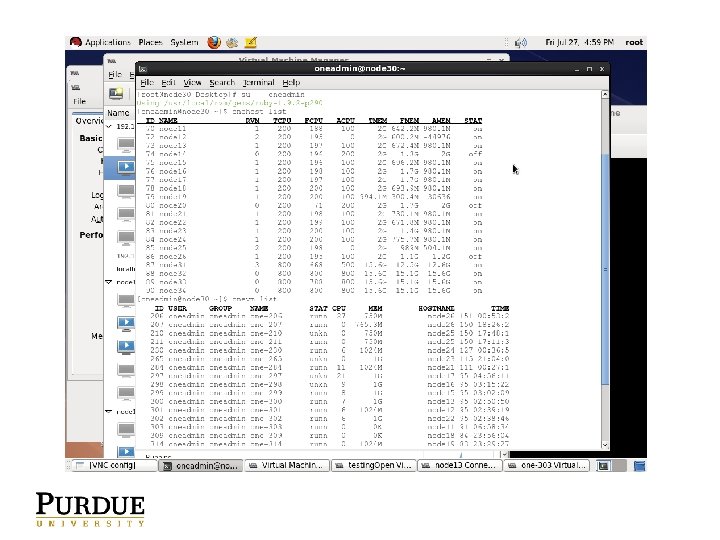

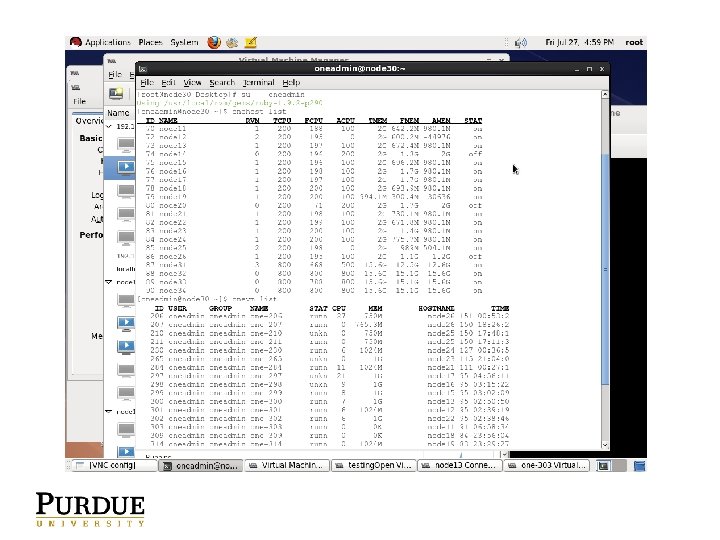

VIRTUAL CLUSTERS
Plan out your IP address space
• What range of IP addresses will you assign to the nodes on your virtual HPC cluster?
• These addresses will need to go into /etc/hosts, and each VM instance will need to be assigned an IP address and hostname
Import your “golden master” image into the cloud computing system
• This can be complicated and difficult
• If you are building your image on Linux, you will need to export the virtual disk image along with the VM metadata describing the number of cores, memory, NIC MAC address, etc. in the VM image
• virsh dumpxml
• OpenNebula: oneimage / onetemplate commands
Configure the cloud computing system to assign IP addresses from your range when it clones your “golden master” VM image
• Alternative is to manually configure each VM instance
Clone your “golden master” image N times to create an N node virtual HPC cluster
• Watch your disk space to ensure you have enough space for all the VM images
Once the cloud computing system has booted all of your VM images
• Make sure you can connect to the console or ssh to each image
• virt-manager can help with this
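
Planning the address range is easy to script. The sketch below uses Python's standard ipaddress module to hand out consecutive addresses from a private network and format them as /etc/hosts lines. The network 192.168.100.0/24, the "node" prefix, and the starting offset are example values, not settings from the slides.

```python
import ipaddress


def plan_hosts(network, count, prefix="node", first_offset=10):
    """Assign consecutive addresses in network to count nodes.

    Returns a list of (ip, hostname) pairs. Raises ValueError if the
    requested range does not fit inside the network.
    """
    net = ipaddress.ip_network(network)
    entries = []
    for i in range(count):
        addr = net.network_address + first_offset + i
        if addr not in net or addr == net.broadcast_address:
            raise ValueError(f"{count} nodes do not fit in {network}")
        entries.append((str(addr), f"{prefix}{i + 1:02d}"))
    return entries


def hosts_file(entries):
    """Format the plan as lines to append to /etc/hosts."""
    return "".join(f"{ip}\t{name}\n" for ip, name in entries)


def machine_file(entries):
    """Format the plan as one hostname per line for an MPI hosts file."""
    return "".join(f"{name}\n" for _, name in entries)


if __name__ == "__main__":
    plan = plan_hosts("192.168.100.0/24", 4)
    print(hosts_file(plan), end="")
```

Generating both files from one plan keeps /etc/hosts and the MPI hosts file consistent when the cluster is resized.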

VIRTUAL CLUSTERS
Create /etc/hosts and mpihosts files and copy them to all of the virtual nodes
Use either mpdboot or mpiexec (depending on the MPI system you use) to make sure MPI can communicate across the network
• You will need to open the appropriate ports using iptables
• Alternatively, if your virtual cluster is behind a firewall (like pfSense), you won’t need to use iptables and you can open all of the ports.
Set up a shared file space if you need it
• Set up one of the nodes to act as an NFS server, and mount a shared space on all of the virtual cluster nodes
Run your application
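
Before debugging MPI itself, it can help to confirm that each node accepts TCP connections on the ports you opened. This is a rough sketch using only the standard socket module; port 22 (ssh) is used as the default because the MPI launchers mentioned above typically start remote processes over ssh, and the node names are placeholders.

```python
import socket


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def unreachable_nodes(hosts, port=22, timeout=2.0):
    """Return the hosts that did not accept a connection on port."""
    return [h for h in hosts if not port_open(h, port, timeout)]


if __name__ == "__main__":
    # Placeholder names; use the hostnames from your own /etc/hosts.
    nodes = ["node01", "node02", "node03"]
    for name in unreachable_nodes(nodes):
        print("cannot reach", name)
```

A node that shows up here usually points to an iptables rule, a bridge misconfiguration, or a VM that did not get its planned IP address.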

VIRTUAL CLUSTERS
MY OWN EXPERIENCE
I’ve used OpenNebula to create and clone Windows 7 VMs and RHEL 6 VMs to run OpenSees serial and parallel versions developed for Windows and Linux
Seems to work pretty well once it’s set up
Working with FutureGrid now to create a larger virtual RHEL cluster for the parallel version of OpenSees
So far, I have created a virtual HPC cluster with ten 4-core VMs

BENEFITS OF VIRTUAL HPC CLUSTERS
Flexibility
User has root access
System level checkpointing
Potential for archiving and long term curation of scientific applications with the operating system images required for the applications to execute
You can save, share, and later retrieve whole virtual clusters (think versioning like you would do for software)
Cluster can be created and operated for you by system administrators, without the need to run your own cluster in a lab closet.

DRAWBACKS OF VIRTUAL HPC CLUSTERS
Performance penalty
Latency
• Not likely to have access to a high performance switch
IP address space can be difficult
• Often not well thought out in cloud computing systems
• Ideal would be to have a private IP address space that is firewalled from the rest of the hardware and the world
• Way to do this is to use VPNs or VLANs
  – VPNs impose a software overhead
  – VLANs require admin access to the network routers and switches (you are not likely to be granted this level of access)
• So, setting up /etc/hosts and the MPI ring is still a little clunky compared with the other aspects of managing the VM images through the cloud computing system
Getting private networking in place
Complexity of learning how to use the technology
Need for help from systems administrators to get initial VM image working
• Some cloud computing systems require you to start with a “canned” VM image that they know already works
• More helpful if you can create your *own* OS images with the necessary libraries and software that you can then hand off to the cloud computing system
• In my own experience, OpenNebula is the best for this

VIRTUAL HPC CLUSTERS IN USE
FutureGrid
• IU project to provide a testbed for virtual clusters and cloud computing apps
Nimbus
• Project led by Kate Keahey to develop software to support science clouds
NEEShub / HUBzero
• Purdue project working on the SaaS level (and to some degree IaaS using OpenVZ) to provide a complete cyberinfrastructure for science and engineering
Galaxy project
• Project led by James Taylor at Emory to provide a data cyberinfrastructure for biology. Users can create on demand virtual HPC clusters
iPlant
• Project to develop cyberinfrastructure for plant biology. Users can create persistent VM images using the Atmosphere system.
  – Uses Eucalyptus and OpenStack on the back end for a cloud controller
Condor
• Treats VM deployment as jobs

HOW TO GET STARTED
Using VMs is a different way of thinking about computing
Best way to start is to try some software on your computer, make a VM, and try it out to become familiar with the technology
• Linux – KVM
• Windows – Oracle VirtualBox
• Mac – Parallels
For an exercise, do the following:
• Pick your platform, install a virtualization system
• Grab the latest Fedora DVD image
• Install it on your virtualization system
• Get it working on the network (e.g. browse the web)
• Perform a system update on the VM image

QUESTIONS?
Contact: tjhacker@purdue.edu