
Introduction to variable selection I Qi Yu

Problems due to poor variable selection:

- The input dimension is too large, so the curse of dimensionality may occur.
- A poor model may be built, either with additional unrelated inputs or without enough relevant inputs.
- Complex models that contain too many inputs are more difficult to understand.

Two broad classes of variable selection methods: filter and wrapper

- The filter method is a pre-processing step that is independent of the learning algorithm.
- The input subset is chosen by an evaluation criterion that measures the relation of each subset of input variables with the output.
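A minimal sketch of a filter criterion, assuming a simplification that is not in the slides: each feature is scored on its own by the absolute Pearson correlation with the output, rather than scoring whole subsets. The names `pearson` and `filter_rank` and the toy data are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # a constant column carries no linear information
    return cov / math.sqrt(vx * vy)

def filter_rank(X, y):
    """Rank feature indices by |correlation| with y, best first.

    No learning algorithm is involved: the score depends only on the data.
    """
    n_features = len(X[0])
    scores = [abs(pearson([row[j] for row in X], y)) for j in range(n_features)]
    ranking = sorted(range(n_features), key=lambda j: scores[j], reverse=True)
    return ranking, scores

# Toy data: feature 0 tracks y, feature 1 is weakly related, feature 2 is constant.
X = [[1.0, 5.0, 2.0], [2.0, 1.0, 2.0], [3.0, 4.0, 2.0], [4.0, 2.0, 2.0]]
y = [1.1, 1.9, 3.2, 3.9]
ranking, scores = filter_rank(X, y)
```

Keeping the top entries of `ranking` gives the selected inputs; the learning model is trained only afterwards.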

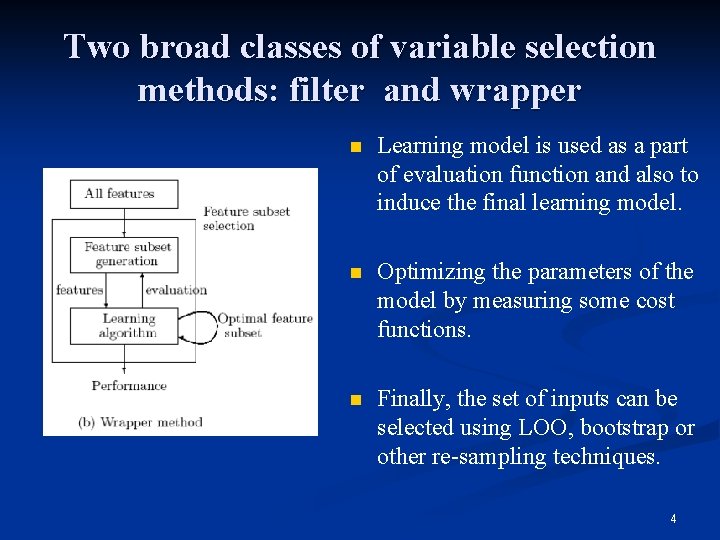

Wrapper method:

- The learning model is used as part of the evaluation function and also to induce the final learning model.
- The parameters of the model are optimized by measuring some cost function.
- Finally, the set of inputs can be selected using leave-one-out (LOO), bootstrap or other re-sampling techniques.
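The wrapper idea can be sketched as follows, under assumptions that are not in the slides: the learner is a nearest-centroid classifier, the cost is the leave-one-out error, and all subsets are enumerated (feasible only for a handful of inputs). All function names and the toy data are hypothetical.

```python
from itertools import combinations

def nearest_centroid_predict(train_X, train_y, x, features):
    """Predict the label whose class centroid (on `features`) is closest to x."""
    best_label, best_dist = None, float("inf")
    for label in sorted(set(train_y)):
        rows = [r for r, t in zip(train_X, train_y) if t == label]
        centroid = [sum(r[j] for r in rows) / len(rows) for j in features]
        dist = sum((x[j] - c) ** 2 for j, c in zip(features, centroid))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label

def loo_error(X, y, features):
    """Leave-one-out error of the learner restricted to `features`."""
    errors = 0
    for i in range(len(X)):
        train_X = X[:i] + X[i + 1:]
        train_y = y[:i] + y[i + 1:]
        if nearest_centroid_predict(train_X, train_y, X[i], features) != y[i]:
            errors += 1
    return errors / len(X)

def wrapper_select(X, y):
    """Return the subset with the lowest LOO error (smaller subsets win ties)."""
    n = len(X[0])
    candidates = [s for k in range(1, n + 1) for s in combinations(range(n), k)]
    return min(candidates, key=lambda s: (loo_error(X, y, s), len(s)))

# Feature 0 separates the classes; feature 1 is large-scale noise.
X = [[0.0, 10.0], [0.2, -8.0], [0.1, 9.0], [1.0, -9.0], [1.2, 8.0], [0.9, -10.0]]
y = [0, 0, 0, 1, 1, 1]
best = wrapper_select(X, y)
```

Every candidate subset costs one full re-sampling run of the learner, which is where the running time of wrapper methods comes from.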

Comparison of filter and wrapper:

- The wrapper method tries to solve the real problem, so the criterion can really be optimized; however, it is potentially very time consuming, since it typically needs to evaluate a cross-validation scheme at every iteration.
- The filter method is much faster, but it does not incorporate learning.

Embedded methods

- In contrast to filter and wrapper approaches, in embedded methods the feature selection part cannot be separated from the learning part.
- The structure of the class of functions under consideration plays a crucial role.
- Existing embedded methods are reviewed based on a unifying mathematical framework.

Embedded methods

- Forward-Backward Methods
- Optimization of scaling factors
- Sparsity term

Forward-Backward Methods

- Forward selection methods: these methods start with one or a few features selected according to a method-specific selection criterion. More features are iteratively added until a stopping criterion is met.
- Backward elimination methods: methods of this type start with all features and iteratively remove one feature or bunches of features.
- Nested methods: during an iteration, features can be added to as well as removed from the data.

Forward selection

- Forward selection with Least squares
- Grafting
- Decision trees

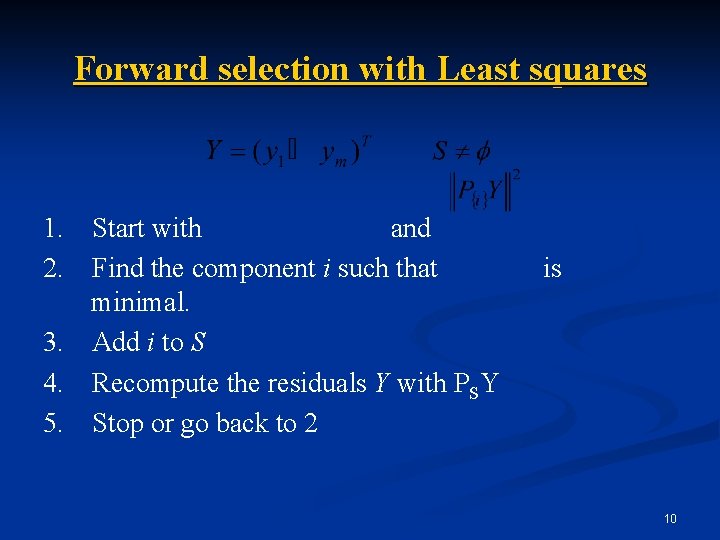

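Forward selection with Least squares

Let $P_S = I - X_S (X_S^\top X_S)^{-1} X_S^\top$ denote the projection that maps the outputs to their least-squares residuals when only the features in $S$ are used.

1. Start with $Y = (y_1, \dots, y_m)^\top$ and $S = \emptyset$.
2. Find the component $i$ such that $\|P_{\{i\}} Y\|^2$ is minimal.
3. Add $i$ to $S$.
4. Recompute the residuals $Y$ with $P_S Y$.
5. Stop or go back to 2.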
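The five steps can be sketched in plain Python. This is an illustrative reading, not the authors' implementation: the residual is recomputed by Gram-Schmidt orthogonalization against the selected columns, the stopping rule is a fixed number of features, and the names `forward_select_ls`, `columns` and `n_select` are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def forward_select_ls(columns, y, n_select):
    """Greedy forward selection for least squares.

    columns: list of feature columns, each a list of m values.
    Returns the selected indices (in order) and the final residual.
    """
    residual = list(y)        # step 1: residual starts as the outputs, S is empty
    selected, basis = [], []  # basis: orthonormal vectors spanning the selected columns
    for _ in range(n_select):
        best_i, best_norm = None, float("inf")
        for i, col in enumerate(columns):
            if i in selected or dot(col, col) == 0:
                continue
            # step 2: squared residual norm after regressing on column i alone
            norm = dot(residual, residual) - dot(residual, col) ** 2 / dot(col, col)
            if norm < best_norm:
                best_i, best_norm = i, norm
        if best_i is None:
            break
        selected.append(best_i)  # step 3
        # step 4: remove from the residual the part explained by the new column
        q = list(columns[best_i])
        for b in basis:
            c = dot(b, q)
            q = [qi - c * bi for qi, bi in zip(q, b)]
        q_norm = math.sqrt(dot(q, q))
        if q_norm > 1e-12:
            q = [qi / q_norm for qi in q]
            basis.append(q)
            c = dot(q, residual)
            residual = [r - c * qi for r, qi in zip(residual, q)]
    return selected, residual    # step 5: stop after n_select features

columns = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [1.0, 1.0, 1.0, 1.0]]
y = [3.0, 0.5, 0.0, 0.0]  # y = 3 * column 0 + 0.5 * column 1
selected, residual = forward_select_ls(columns, y, n_select=2)
```

On this toy input the column that explains most of `y` is picked first, and two columns are enough to drive the residual to zero.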

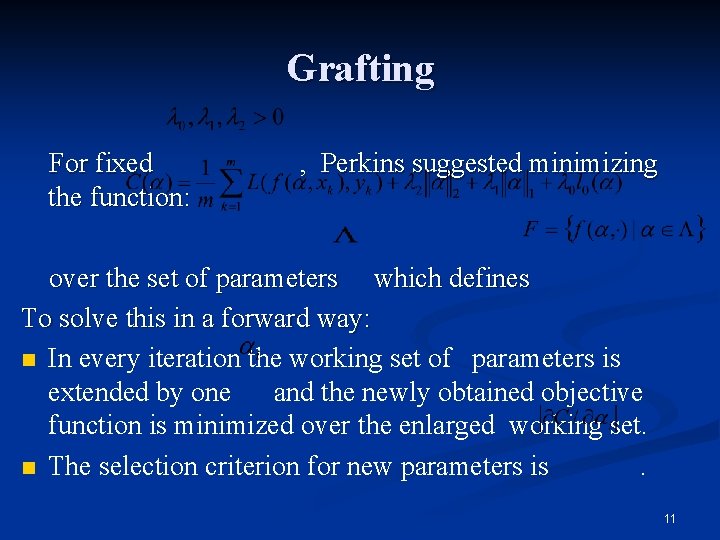

Grafting

For fixed regularization constants, Perkins et al. suggested minimizing a regularized objective function $C(\theta)$ over the set of parameters $\theta$ that defines the model $f_\theta$. To solve this in a forward way:

- In every iteration the working set of parameters is extended by one, and the newly obtained objective function is minimized over the enlarged working set.
- The selection criterion for new parameters is the magnitude of the gradient, $|\partial C / \partial \theta_i|$.
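A sketch of the selection step only, under simplifying assumptions that are not in the slides: a linear model, a mean squared loss with no regularization terms, and no re-optimization over the enlarged working set. The names `loss_gradient` and `next_parameter` are hypothetical.

```python
def loss_gradient(X, y, w):
    """Gradient of the mean squared loss (1/m) * sum((w.x - y)^2) with respect to w."""
    m, n = len(X), len(w)
    grad = [0.0] * n
    for row, target in zip(X, y):
        err = sum(wj * xj for wj, xj in zip(w, row)) - target
        for j in range(n):
            grad[j] += 2.0 * err * row[j] / m
    return grad

def next_parameter(X, y, w, working_set):
    """Pick the parameter outside the working set with the largest |gradient|."""
    grad = loss_gradient(X, y, w)
    candidates = [j for j in range(len(w)) if j not in working_set]
    return max(candidates, key=lambda j: abs(grad[j]))

X = [[1.0, 0.1, 0.0], [2.0, -0.1, 1.0], [3.0, 0.2, 0.0], [4.0, -0.2, 1.0]]
y = [2.0, 4.0, 6.0, 8.0]
w = [0.0, 0.0, 0.0]          # all parameters start outside the working set
first = next_parameter(X, y, w, working_set=set())
```

The parameter with the steepest gradient is the one whose inclusion promises the fastest decrease of the objective, which is why it is added first.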

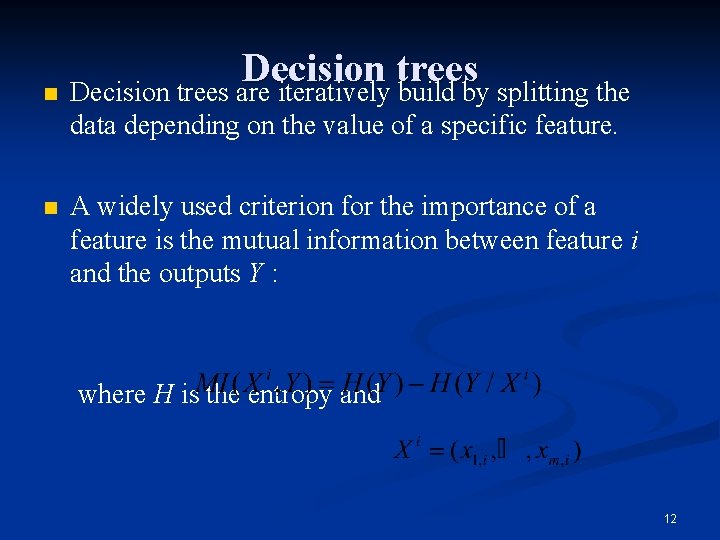

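Decision trees

- Decision trees are iteratively built by splitting the data depending on the value of a specific feature.
- A widely used criterion for the importance of a feature is the mutual information between feature $i$ and the outputs $Y$: $MI(X_i, Y) = H(Y) - H(Y \mid X_i)$, where $H$ is the entropy and $H(Y \mid X_i)$ is the conditional entropy of the outputs given feature $i$.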
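For discrete features and labels, the mutual information criterion can be computed directly from counts. The sketch below assumes the standard definition $MI(X_i, Y) = H(Y) - H(Y \mid X_i)$ with entropies in bits; the function names and toy data are hypothetical.

```python
import math
from collections import Counter

def entropy(values):
    """Shannon entropy (in bits) of a sequence of discrete values."""
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in Counter(values).values())

def mutual_information(feature, labels):
    """H(Y) - H(Y | X_i) for discrete feature values and labels."""
    n = len(labels)
    h_cond = 0.0
    for value, count in Counter(feature).items():
        subset = [y for x, y in zip(feature, labels) if x == value]
        h_cond += (count / n) * entropy(subset)  # weight by P(X_i = value)
    return entropy(labels) - h_cond

labels = [0, 0, 1, 1]
informative = ["a", "a", "b", "b"]    # determines the label exactly
uninformative = ["a", "b", "a", "b"]  # independent of the label
mi_good = mutual_information(informative, labels)
mi_bad = mutual_information(uninformative, labels)
```

A tree-growing procedure would split on the feature with the larger score, here `informative`.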

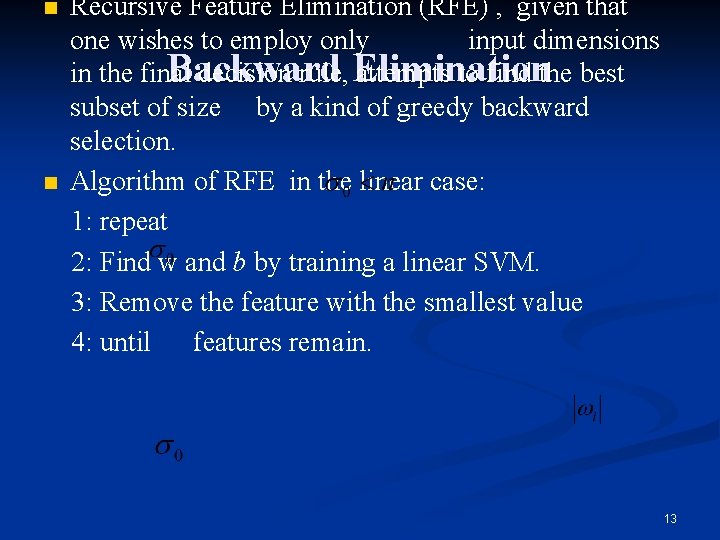

Backward Elimination: Recursive Feature Elimination (RFE)

Given that one wishes to employ only $r < n$ input dimensions in the final decision rule, RFE attempts to find the best subset of size $r$ by a kind of greedy backward selection.

Algorithm of RFE in the linear case:

1. repeat
2. Find $w$ and $b$ by training a linear SVM.
3. Remove the feature with the smallest value $|w_i|$.
4. until $r$ features remain.
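The loop can be sketched as below. As an assumption for illustration, `train_linear_svm` is a hypothetical stand-in that runs full-batch subgradient descent on the L2-regularized hinge loss; it is not a proper SVM solver, and the feature removed at each round is the one with the smallest $|w_i|$.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Approximate a linear SVM by subgradient descent on the regularized hinge loss."""
    m, n = len(X), len(X[0])
    w, b = [0.0] * n, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]  # gradient of (lam / 2) * ||w||^2
        grad_b = 0.0
        for row, label in zip(X, y):
            margin = label * (sum(wj * xj for wj, xj in zip(w, row)) + b)
            if margin < 1:  # the hinge term is active for this point
                for j in range(n):
                    grad_w[j] -= label * row[j] / m
                grad_b -= label / m
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def rfe(X, y, n_keep):
    """Recursive feature elimination: drop the smallest |w_i| until n_keep remain."""
    remaining = list(range(len(X[0])))
    while len(remaining) > n_keep:
        X_sub = [[row[j] for j in remaining] for row in X]
        w, _ = train_linear_svm(X_sub, y)
        weakest = min(range(len(remaining)), key=lambda k: abs(w[k]))
        del remaining[weakest]
    return remaining

# Feature 0 carries the class signal; features 1 and 2 are small perturbations.
X = [[2.0, 0.5, 0.3], [1.5, 0.2, -0.3], [2.5, -0.1, 0.2],
     [-2.0, -0.4, 0.3], [-1.5, 0.1, -0.2], [-2.5, -0.3, -0.3]]
y = [1, 1, 1, -1, -1, -1]
kept = rfe(X, y, n_keep=1)
```

Because the model is retrained after every removal, the weights of the surviving features are re-estimated without the discarded ones, which is what distinguishes RFE from a one-shot ranking by $|w_i|$.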


Optimization of scaling factors

- Scaling Factors for SVM
- Automatic Relevance Determination
- Variable Scaling: Extension to Maximum Entropy Discrimination

Scaling Factors for SVM

Feature selection is performed by scaling the input parameters by a vector $\sigma \in [0, 1]^n$. Larger values of $\sigma_i$ indicate more useful features. Thus the problem is now one of choosing the best kernel of the form:

$k_\sigma(x, x') = k(\sigma * x, \sigma * x')$

where $*$ denotes element-wise multiplication. We wish to find the optimal parameters $\sigma$, which can be optimized by many criteria, e.g. gradient descent on the $R^2 W^2$ bound, the span bound or a validation error.
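A small sketch of a scaled kernel, assuming the form $k_\sigma(x, x') = k(\sigma * x, \sigma * x')$ with element-wise scaling and, as an illustrative choice not taken from the slides, an RBF base kernel. The function names are hypothetical.

```python
import math

def rbf_kernel(x, z, gamma=1.0):
    """Gaussian RBF kernel exp(-gamma * ||x - z||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))

def scaled_kernel(x, z, sigma, base_kernel=rbf_kernel):
    """k_sigma(x, z) = k(sigma * x, sigma * z) with element-wise scaling."""
    sx = [s * a for s, a in zip(sigma, x)]
    sz = [s * b for s, b in zip(sigma, z)]
    return base_kernel(sx, sz)

x = [1.0, 5.0]
z = [1.0, -3.0]  # differs from x only in feature 1
k_full = scaled_kernel(x, z, sigma=[1.0, 1.0])    # both features active
k_masked = scaled_kernel(x, z, sigma=[1.0, 0.0])  # feature 1 switched off
```

With the scaling factor of feature 1 set to zero, the two points become indistinguishable to the kernel, so optimizing the scaling vector amounts to a soft form of feature selection.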


Automatic Relevance Determination

- In a probabilistic framework, a model of the likelihood of the data, $P(y \mid w)$, is chosen, as well as a prior on the weight vector, $P(w)$.
- To predict the output of a test point $x$, the average of $f_w(x)$ over the posterior distribution $P(w \mid y)$ is computed. A common simplification is to predict using the function $f_{w_{\mathrm{MAP}}}$, where $w_{\mathrm{MAP}}$ is the vector of parameters called the Maximum a Posteriori (MAP), i.e. $w_{\mathrm{MAP}} = \arg\max_w P(w \mid y) = \arg\max_w P(y \mid w)\,P(w)$.
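As a worked instance of the MAP estimate, consider assumptions that are not in the slides: a linear model $f_w(x) = w \cdot x$ with Gaussian noise of variance $s^2$, and an independent zero-mean Gaussian prior with variance $\tau_i^2$ on each weight $w_i$. Taking negative logarithms and dropping constants turns the MAP problem into a weighted ridge regression:

```latex
\begin{aligned}
w_{\mathrm{MAP}}
  &= \arg\max_{w} P(w \mid y)
   = \arg\min_{w} \bigl[ -\log P(y \mid w) - \log P(w) \bigr] \\
  &= \arg\min_{w} \; \frac{1}{2 s^2} \sum_{k=1}^{m} (y_k - w \cdot x_k)^2
     + \sum_{i=1}^{n} \frac{w_i^2}{2 \tau_i^2}
\end{aligned}
```

In this setting a small prior variance $\tau_i^2$ penalizes $w_i$ heavily and pushes it toward zero, so the per-weight variances act as relevance parameters for the individual features.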

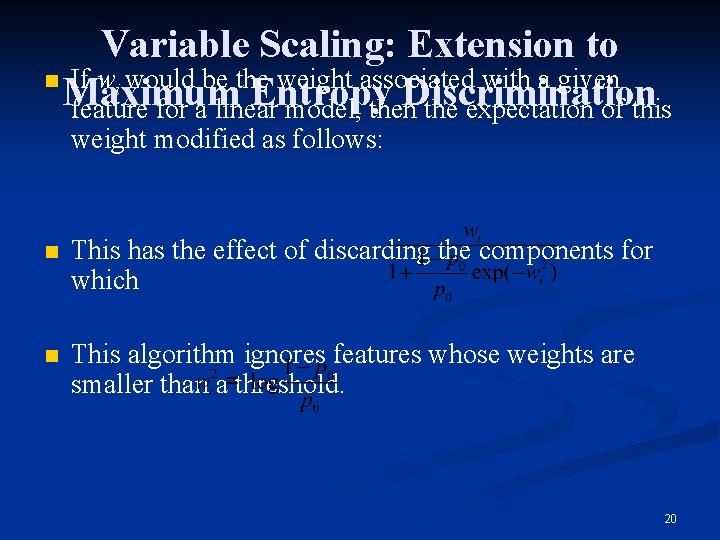

Variable Scaling: Extension to Maximum Entropy Discrimination

- The Maximum Entropy Discrimination (MED) framework is a probabilistic model in which one does not learn the parameters of a model, but distributions over them.
- Feature selection can be easily integrated into this framework. For this purpose, one has to specify a prior probability $p_0$ that a feature is active.

Variable Scaling: Extension to Maximum Entropy Discrimination

- If $w_i$ is the weight associated with a given feature for a linear model, then the expectation of this weight is modified by a factor that depends on the prior $p_0$ and on the weight itself.
- This has the effect of discarding the components whose weights are small: the algorithm ignores features whose weights are smaller than a threshold.

Sparsity term

In the case of linear models, indicator variables are not necessary, as feature selection can be enforced on the parameters of the model directly. This is generally done by adding a sparsity term to the objective function that the model minimizes.

- Feature Selection as an Optimization Problem
- Concave Minimization

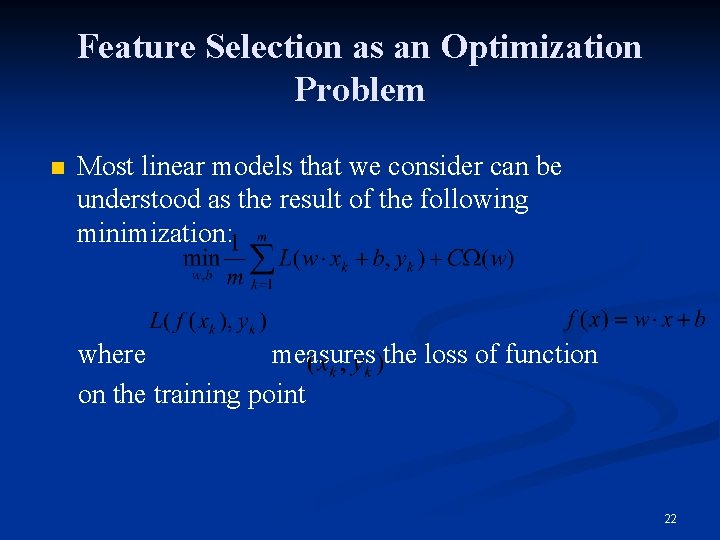

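Feature Selection as an Optimization Problem

Most linear models that we consider can be understood as the result of the following minimization:

$\min_{w, b} \; \frac{1}{m} \sum_{k=1}^{m} L(w \cdot x_k + b, y_k) + C\,\Omega(w)$

where $L(f(x_k), y_k)$ measures the loss of the function $f(x) = w \cdot x + b$ on the training point $(x_k, y_k)$, and $\Omega(w)$ is the sparsity term weighted by the constant $C$.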

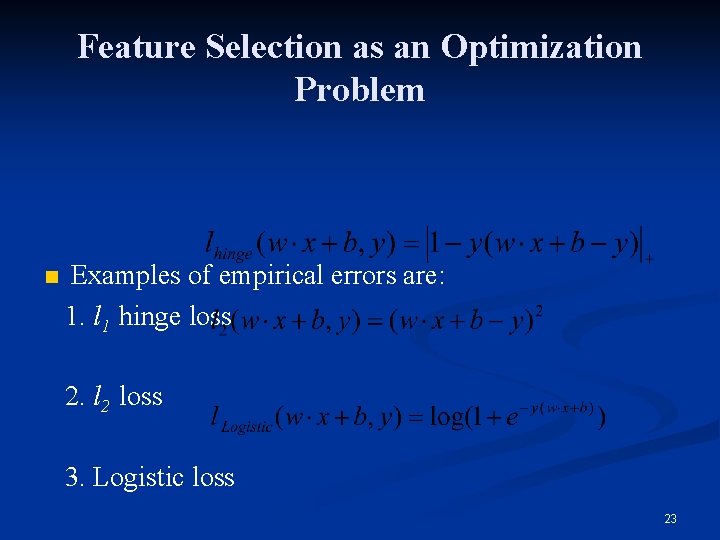

Feature Selection as an Optimization Problem

Examples of empirical errors are:

1. $\ell_1$ hinge loss
2. $\ell_2$ loss
3. Logistic loss
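The three losses can be written out as below, using their standard textbook forms for a real-valued score and a label in $\{-1, +1\}$; these forms are assumed here, since the formulas themselves are not recoverable from the slide text. The `objective` helper, the `l1_penalty` sparsity term and the constant `C` are likewise illustrative names.

```python
import math

def hinge_loss(score, label):
    """l1 hinge loss: max(0, 1 - y * f(x))."""
    return max(0.0, 1.0 - label * score)

def squared_loss(score, label):
    """l2 loss: (f(x) - y)^2."""
    return (score - label) ** 2

def logistic_loss(score, label):
    """Logistic loss: log(1 + exp(-y * f(x)))."""
    return math.log(1.0 + math.exp(-label * score))

def l1_penalty(w):
    """A sparsity term: the l1 norm of the weights."""
    return sum(abs(v) for v in w)

def objective(w, b, X, y, loss, penalty, C):
    """(1/m) * sum_k loss(w.x_k + b, y_k) + C * penalty(w)."""
    m = len(X)
    total = sum(
        loss(sum(wj * xj for wj, xj in zip(w, row)) + b, label)
        for row, label in zip(X, y)
    )
    return total / m + C * penalty(w)

X = [[2.0, 1.0], [-2.0, 3.0]]
y = [1, -1]
value = objective([1.0, 0.0], 0.0, X, y, hinge_loss, l1_penalty, C=0.1)
```

Both training points are classified with margin 2, so the hinge term vanishes and `value` consists of the sparsity penalty alone.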

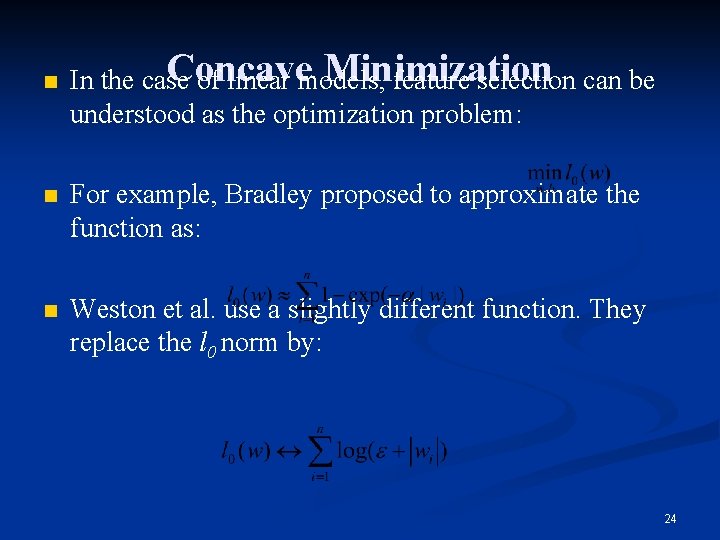

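Concave Minimization

- In the case of linear models, feature selection can be understood as the optimization problem: $\min_{w, b} \|w\|_0$ subject to zero hinge loss on every training point, i.e. $y_k (w \cdot x_k + b) \ge 1$ for all $k$.
- For example, Bradley proposed to approximate the function $\|w\|_0$ as: $\|w\|_0 \approx \sum_{i=1}^{n} \bigl(1 - \exp(-\alpha |w_i|)\bigr)$.
- Weston et al. use a slightly different function. They replace the $\ell_0$ norm by: $\sum_{i=1}^{n} \log(\epsilon + |w_i|)$.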
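To see how concave surrogates stand in for the $\ell_0$ norm, the sketch below evaluates two commonly cited forms: the exponential approximation $\sum_i (1 - e^{-\alpha |w_i|})$ attributed to Bradley and Mangasarian, and the logarithmic surrogate $\sum_i \log(\epsilon + |w_i|)$ attributed to Weston et al. The parameter values `alpha` and `eps` are arbitrary choices for illustration.

```python
import math

def l0_norm(w):
    """Number of non-zero weights."""
    return sum(1 for v in w if v != 0)

def exp_approx(w, alpha=5.0):
    """sum_i (1 - exp(-alpha * |w_i|)): tends to the l0 norm as alpha grows."""
    return sum(1.0 - math.exp(-alpha * abs(v)) for v in w)

def log_approx(w, eps=1e-3):
    """sum_i log(eps + |w_i|): strongly rewards weights that are exactly zero."""
    return sum(math.log(eps + abs(v)) for v in w)

sparse = [0.0, 2.0, -3.0]
dense = [1.0, 2.0, -3.0]
```

Both surrogates are concave in $|w_i|$, so unlike the $\ell_1$ norm they keep penalizing small non-zero weights almost as much as large ones, which mimics the counting behaviour of the $\ell_0$ norm.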

Summary of embedded methods

- Embedded methods are built upon the concept of scaling factors.
- We discussed embedded methods according to how they approximate the proposed optimization problems:
  - Explicit removal or addition of features: the scaling factors are optimized over the discrete set $\{0, 1\}^n$ in a greedy iteration;
  - Optimization of scaling factors over the compact interval $[0, 1]^n$; and
  - Linear approaches that directly enforce sparsity of the model parameters.

Thank you !

- Slides: 26