INTRODUCTION TO MATLAB PARALLEL COMPUTING TOOLBOX Kadin Tseng Boston University Scientific Computing and Visualization

MATLAB Parallel Computing Toolbox

Log On To PC For Hands-on Practice
§ Log on with your BU userid and Kerberos password
§ If you don't have a BU userid, use this:
    userid: tuta1 ... tuta18
    password: SCVsummer12
  The number after tuta should match the number affixed to the front of your PC tower
§ Start MATLAB

M-Files on T drive
There are some files that you can copy over to your local folder for hands-on practice:
    >> copyfile('T: kadinpct*', '.')

What is the PCT?
• The Parallel Computing Toolbox (PCT) is a MATLAB toolbox.
• It provides parallel utility functions that let users run MATLAB operations or procedures in parallel to reduce processing time.

Where To Run The PCT?
§ Run on a desktop or laptop
  § MATLAB must be installed on the local machine
  § Starting with R2011b, up to 12 processors can be used; up to 8 processors for older versions
  § Must have multiple cores to gain speedup
  § The thin client you are using has a dual-core processor
  § Requires BU userid (to access MATLAB and PCT licenses)
§ Run on a Katana Cluster node (as if it were a multi-core desktop)
  § Requires SCV userid
§ Run on multiple Katana nodes (for up to 32 processors)
  § Requires SCV userid

Types of Parallel Jobs
There are two types of parallel applications:
§ Distributed jobs: task parallel
§ Parallel jobs: data parallel

Distributed Jobs
A distributed job consists of multiple tasks running independently on multiple workers, with no information passed among them. On Katana, a distributed job is a series of single-processor batch jobs. Such jobs are also known as task-parallel, or "embarrassingly parallel", jobs.
Examples of distributed jobs: Monte Carlo simulations, image processing
Parallel utility function: dfeval
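To make "independent tasks" concrete, here is a minimal sketch of a Monte Carlo estimate of pi split into tasks that share nothing. It uses parfor rather than dfeval purely for brevity; the task count, sample count, and variable names are assumptions made for this illustration.

```matlab
% Task-parallel Monte Carlo estimate of pi (illustrative sketch).
% nTasks and nSamples are hypothetical values chosen for this example.
nTasks   = 4;        % number of independent tasks
nSamples = 1e5;      % random points drawn by each task
hits = zeros(1, nTasks);

parfor t = 1:nTasks
    % Each task draws its own points; nothing is passed between tasks.
    p = rand(nSamples, 2);
    hits(t) = sum(sum(p.^2, 2) <= 1);   % points inside the unit quarter circle
end

piEstimate = 4 * sum(hits) / (nTasks * nSamples);
fprintf('Estimated pi = %.4f\n', piEstimate);
```

Because each iteration only writes its own element of hits, the tasks can run in any order and on any worker.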

Parallel Jobs
A parallel job is a single task running concurrently on multiple workers that may communicate with each other. On Katana, this results in one batch job with multiple processors. This is also known as a data-parallel job.
Examples of parallel jobs include many linear algebra applications: matrix multiply, solvers for linear algebraic systems of equations, and eigensolvers. Some may run efficiently in parallel and others may not; it depends on the underlying algorithms and operations. This category also includes jobs that mix serial and parallel processing.
Parallel utility functions: spmd, drange, parfor, ...

How much work to parallelize my code?
§ The amount of effort depends on the code and the parallel paradigm used.
§ Many MATLAB functions you are familiar with are overloaded to handle parallel operations based on the variables' data type.

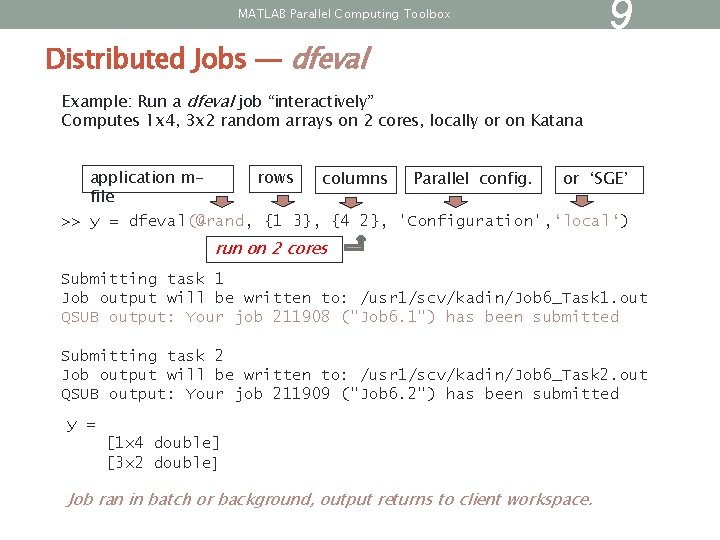

Distributed Jobs: dfeval
Example: run a dfeval job "interactively". It computes 1 x 4 and 3 x 2 random arrays on 2 cores, locally or on Katana.

    >> y = dfeval(@rand, {1 3}, {4 2}, 'Configuration', 'local')

§ @rand: application m-file
§ {1 3}: rows
§ {4 2}: columns
§ 'Configuration': parallel config.; 'local' or 'SGE'
§ Run on 2 cores

    Submitting task 1
    Job output will be written to: /usr1/scv/kadin/Job6_Task1.out
    QSUB output: Your job 211908 ("Job6.1") has been submitted
    Submitting task 2
    Job output will be written to: /usr1/scv/kadin/Job6_Task2.out
    QSUB output: Your job 211909 ("Job6.2") has been submitted
    y =
        [1x4 double]
        [3x2 double]

The job runs in batch or in the background; output returns to the client workspace.

Distributed Jobs: SCV script
For task-parallel applications on the Katana Cluster, we strongly recommend using an SCV script instead of dfeval. This script does not use the PCT. For details, see
http://www.bu.edu/tech/research/computation/linux-cluster/katanacluster/runningjobs/multiple_matlab_tasks/
If your application fits the description of a distributed job, you don't need to know anything beyond this point.

How Do I Run Parallel Jobs?
There are two ways to run parallel jobs: pmode or matlabpool. Either one turns parallelism on (off) and allocates (deallocates) resources.
§ pmode is a special mode of application, useful for learning the PCT and for prototyping parallel programs interactively.
§ matlabpool is the general mode of application; it can be used for interactive and batch processing.

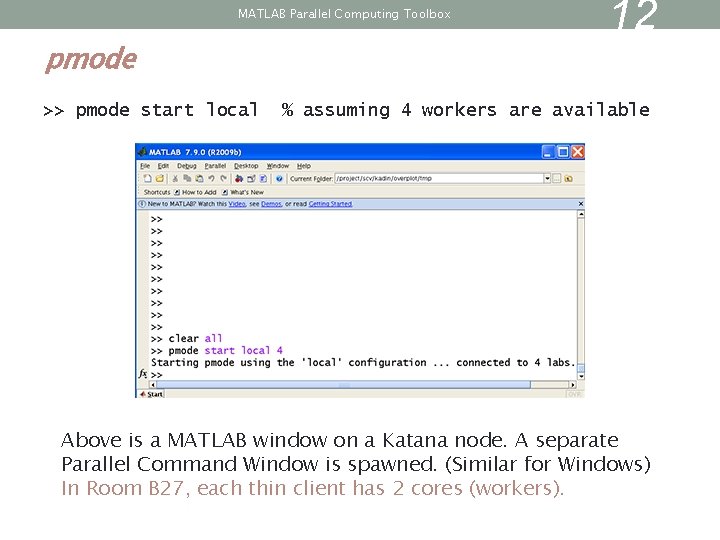

pmode
    >> pmode start local    % assuming 4 workers are available
The screenshot shows a MATLAB window on a Katana node. A separate Parallel Command Window is spawned. (Windows behaves similarly.) In Room B27, each thin client has 2 cores (workers).

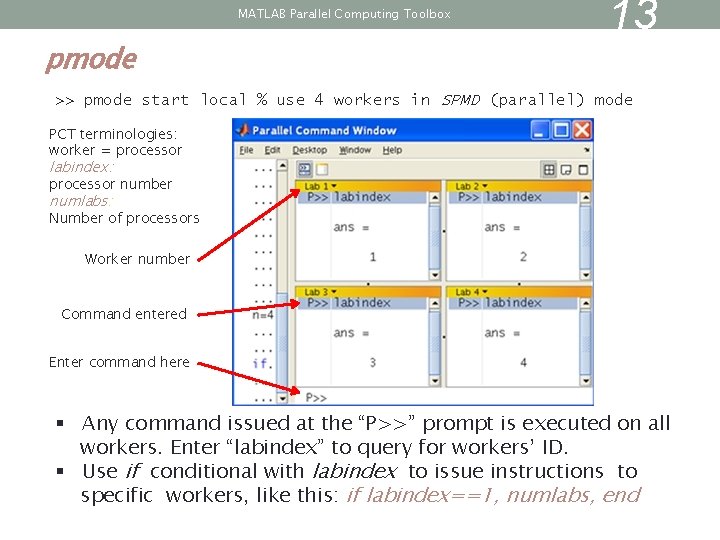

pmode
    >> pmode start local    % use 4 workers in SPMD (parallel) mode
PCT terminology:
§ worker = processor
§ labindex: processor number
§ numlabs: number of processors
Parallel Command Window callouts: worker number, command entered, enter command here.
§ Any command issued at the "P>>" prompt is executed on all workers. Enter "labindex" to query the workers' IDs.
§ Use an if conditional with labindex to issue instructions to specific workers, like this:
    if labindex==1, numlabs, end

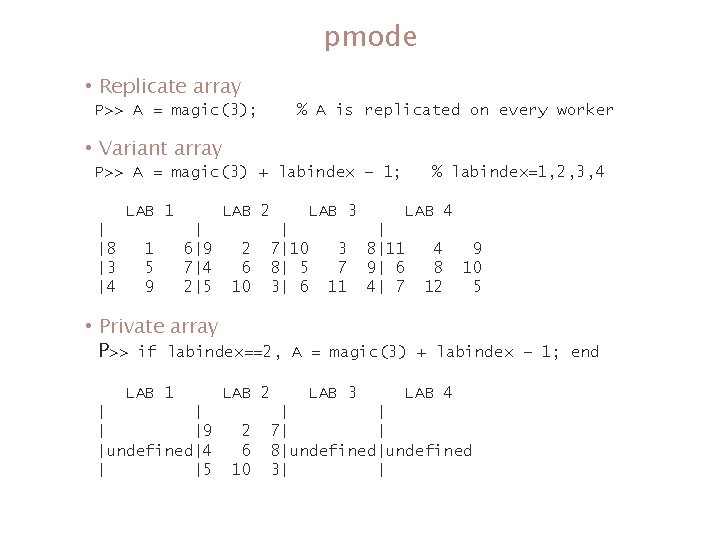

pmode
• Replicated array
    P>> A = magic(3);    % A is replicated on every worker
• Variant array
    P>> A = magic(3) + labindex - 1;    % labindex = 1, 2, 3, 4

    LAB 1      |  LAB 2      |  LAB 3      |  LAB 4
     8  1  6   |   9  2  7   |  10  3  8   |  11  4  9
     3  5  7   |   4  6  8   |   5  7  9   |   6  8 10
     4  9  2   |   5 10  3   |   6 11  4   |   7 12  5

• Private array
    P>> if labindex==2, A = magic(3) + labindex - 1; end

    LAB 1      |  LAB 2      |  LAB 3      |  LAB 4
               |   9  2  7   |             |
    undefined  |   4  6  8   |  undefined  |  undefined
               |   5 10  3   |             |

Spring 2012
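The three kinds of arrays can be reproduced without the PCT by looping over the lab numbers serially. This is only an emulation for study purposes: labIdx stands in for labindex, nLabs for numlabs, and the cell arrays are hypothetical containers, one cell per lab.

```matlab
% Serial emulation of the pmode session above (no PCT needed).
% labIdx stands in for labindex; nLabs = 4 is assumed, as on the slide.
nLabs = 4;
replicatedA = cell(1, nLabs);   % same value on every lab
variantA    = cell(1, nLabs);   % same name, different value on each lab
privateA    = cell(1, nLabs);   % defined on lab 2 only; [] marks "undefined"

for labIdx = 1:nLabs
    replicatedA{labIdx} = magic(3);
    variantA{labIdx}    = magic(3) + labIdx - 1;
    if labIdx == 2
        privateA{labIdx} = magic(3) + labIdx - 1;
    end
end

disp(variantA{3});   % what lab 3 would hold for the variant array
```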

Switch from pmode to matlabpool
If you are running MATLAB pmode, exit it:
    P>> exit
• MATLAB allows only one parallel environment at a time.
• pmode may be started with the keyword open or start.
• matlabpool can only be started with the keyword open.
You can also close pmode from the MATLAB window:
    >> pmode close
Now, open matlabpool:
    >> matlabpool open local    % rely on default worker size

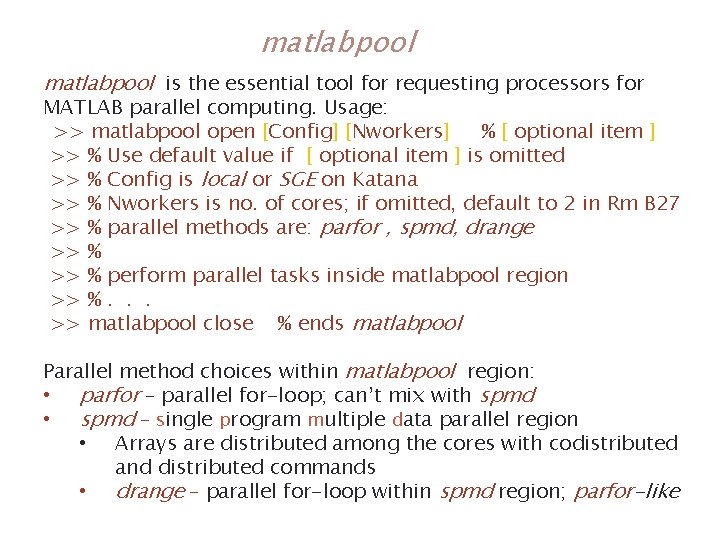

matlabpool is the essential tool for requesting processors for MATLAB parallel computing. Usage:
    >> matlabpool open [Config] [Nworkers]   % [ optional item ]
    >> % Use default value if [ optional item ] is omitted
    >> % Config is local or SGE on Katana
    >> % Nworkers is no. of cores; if omitted, defaults to 2 in Rm B27
    >> % parallel methods are: parfor, spmd, drange
    >> % perform parallel tasks inside matlabpool region
    >> % ...
    >> matlabpool close   % ends matlabpool
Parallel method choices within the matlabpool region:
• parfor: parallel for-loop; can't mix with spmd
• spmd: single program multiple data parallel region
  • Arrays are distributed among the cores with the codistributed and distributed commands
  • drange: parallel for-loop within spmd region; parfor-like

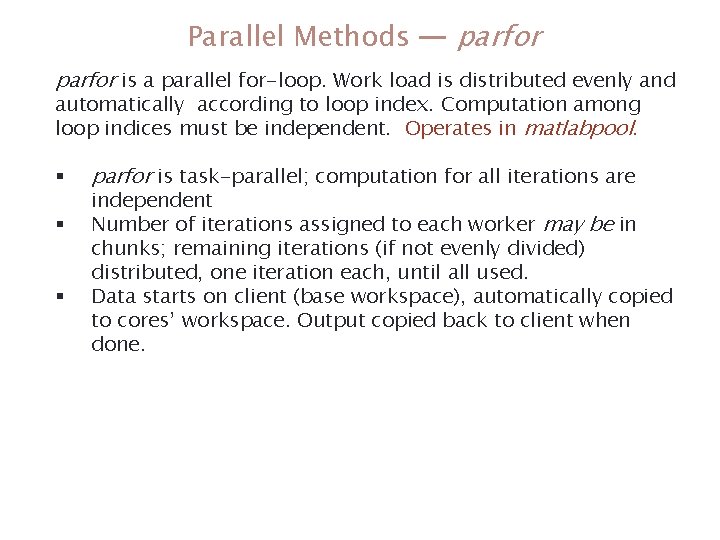

Parallel Methods: parfor
parfor is a parallel for-loop. The workload is distributed evenly and automatically according to the loop index. Computations for different loop indices must be independent of one another. parfor operates within matlabpool.
§ parfor is task-parallel; the computations for all iterations are independent.
§ Iterations may be assigned to each worker in chunks; any remaining iterations (if the count does not divide evenly) are distributed one at a time until all are used.
§ Data starts on the client (base workspace) and is automatically copied to the cores' workspaces. Output is copied back to the client when done.

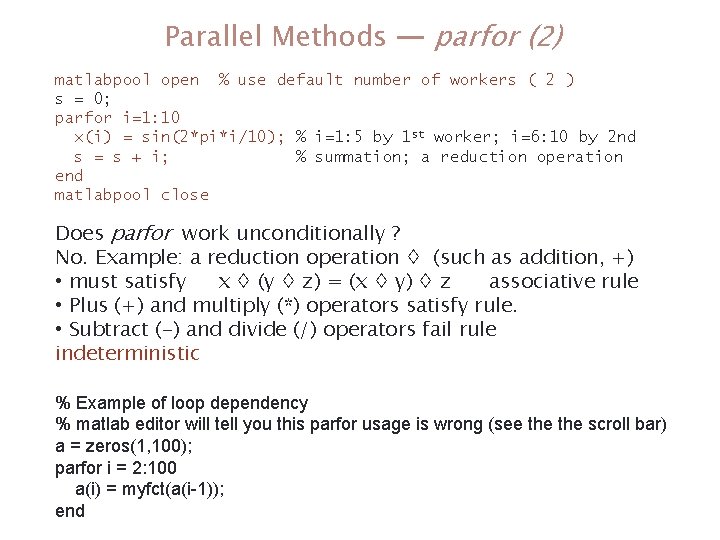

Parallel Methods: parfor (2)
    matlabpool open     % use default number of workers (2)
    s = 0;
    parfor i=1:10
      x(i) = sin(2*pi*i/10);   % i=1:5 by 1st worker; i=6:10 by 2nd
      s = s + i;               % summation; a reduction operation
    end
    matlabpool close
Does parfor work unconditionally? No. Example: a reduction operation ◊ (such as addition, +)
• must satisfy the associative rule x ◊ (y ◊ z) = (x ◊ y) ◊ z
• Plus (+) and multiply (*) operators satisfy the rule.
• Subtract (-) and divide (/) operators fail the rule, so the result is nondeterministic.

    % Example of loop dependency
    % The MATLAB editor will tell you this parfor usage is wrong (see the scroll bar)
    a = zeros(1, 100);
    parfor i = 2:100
      a(i) = myfct(a(i-1));
    end

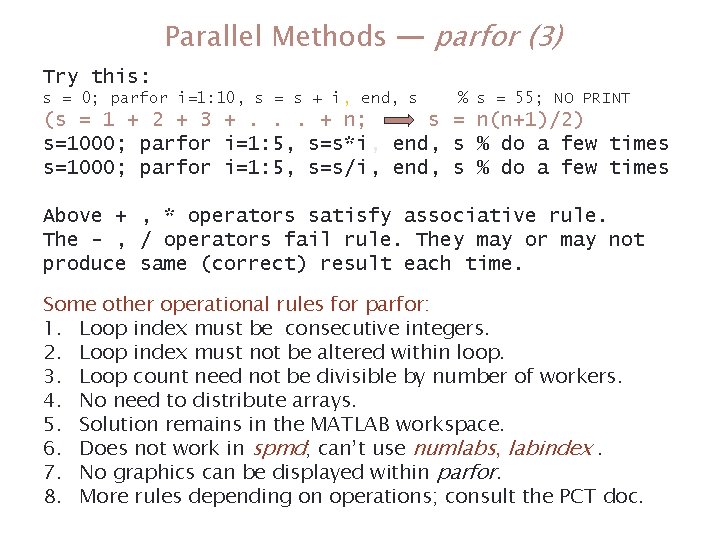

Parallel Methods: parfor (3)
Try this:
    s = 0; parfor i=1:10, s = s + i, end, s   % s = 55; NO PRINT (s = 1 + 2 + 3 + ... + n; s = n(n+1)/2)
    s=1000; parfor i=1:5, s=s*i, end, s       % do a few times
    s=1000; parfor i=1:5, s=s/i, end, s       % do a few times
Above, the + and * operators satisfy the associative rule. The - and / operators fail the rule; they may or may not produce the same (correct) result each time.
Some other operational rules for parfor:
1. Loop index must be consecutive integers.
2. Loop index must not be altered within the loop.
3. Loop count need not be divisible by the number of workers.
4. No need to distribute arrays.
5. Solution remains in the MATLAB workspace.
6. Does not work in spmd; can't use numlabs, labindex.
7. No graphics can be displayed within parfor.
8. More rules apply depending on the operations; consult the PCT doc.
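The associative rule can be checked by hand with three numbers, independent of any parallel machinery. The sketch below regroups the same operands two ways; the values of x, y and z are arbitrary choices for illustration.

```matlab
% Regroup the same operands two ways to test the associative rule.
x = 1000; y = 4; z = 5;        % arbitrary sample values

addLeft = (x + y) + z;   addRight = x + (y + z);
mulLeft = (x * y) * z;   mulRight = x * (y * z);
subLeft = (x - y) - z;   subRight = x - (y - z);
divLeft = (x / y) / z;   divRight = x / (y / z);

fprintf('+ : %g vs %g\n', addLeft, addRight);
fprintf('* : %g vs %g\n', mulLeft, mulRight);
fprintf('- : %g vs %g\n', subLeft, subRight);
fprintf('/ : %g vs %g\n', divLeft, divRight);
```

When workers combine partial results, the grouping is decided at run time, so only operators whose two groupings agree give a result that does not depend on how the iterations were split.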

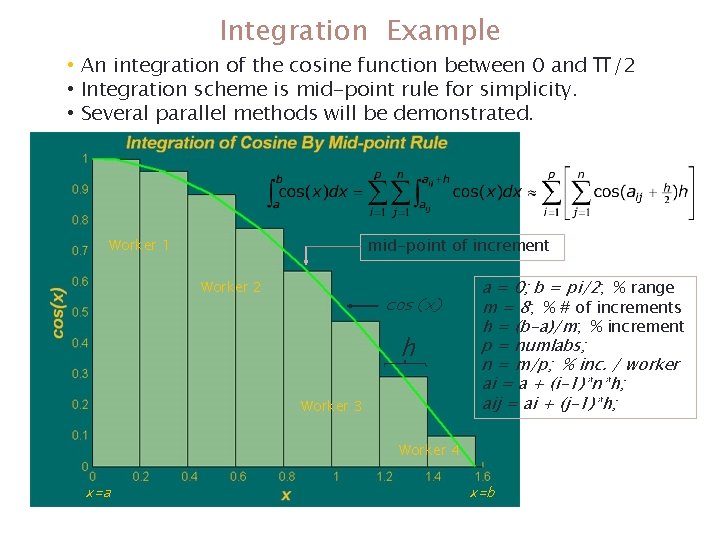

Integration Example
• An integration of the cosine function between 0 and π/2
• The integration scheme is the mid-point rule, for simplicity.
• Several parallel methods will be demonstrated.

[Figure: cos(x) from x = a to x = b = π/2, divided among Worker 1 to Worker 4; each increment has width h and is sampled at its mid-point.]

    a = 0; b = pi/2;    % range
    m = 8;              % # of increments
    h = (b-a)/m;        % increment
    p = numlabs;
    n = m/p;            % inc. / worker
    ai = a + (i-1)*n*h;
    aij = ai + (j-1)*h;
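The decomposition formulas above can be exercised serially to see which sub-range each worker would own. This is a sketch under stated assumptions: p = 4 replaces numlabs, the outer loop index i plays the role of labindex, and the array partial is a hypothetical holder for each worker's contribution.

```matlab
% Serial emulation of the decomposition above; p = 4 stands in for numlabs.
a = 0; b = pi/2;        % range
m = 8;                  % number of increments
h = (b - a)/m;          % increment
p = 4;                  % assumed number of workers
n = m/p;                % increments per worker

partial = zeros(1, p);
for i = 1:p                          % i plays the role of labindex
    ai = a + (i-1)*n*h;              % start of worker i's sub-range
    for j = 1:n
        aij = ai + (j-1)*h;          % start of increment j on worker i
        partial(i) = partial(i) + cos(aij + h/2)*h;   % mid-point rule
    end
end
total = sum(partial);   % what a global sum over the workers would return
fprintf('integral estimate = %.6f\n', total);
```

With only 8 increments the estimate is close to, but not exactly, the analytic value of 1; the later examples use m = 10000.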

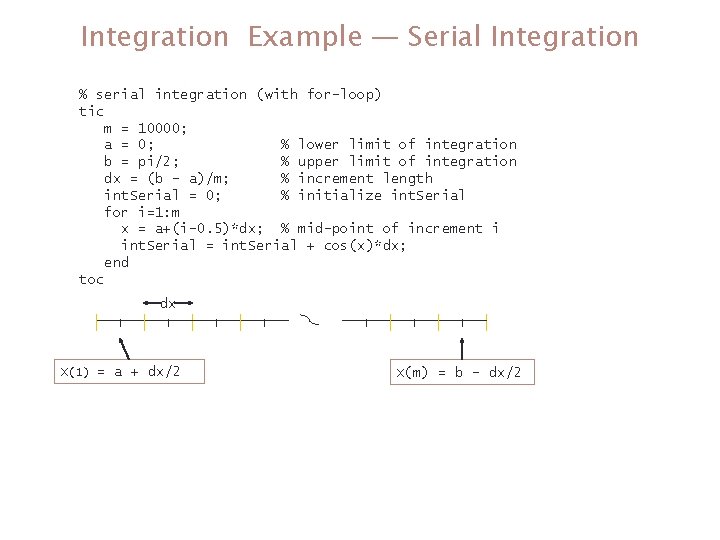

Integration Example: Serial Integration
    % serial integration (with for-loop)
    tic
    m = 10000;
    a = 0;            % lower limit of integration
    b = pi/2;         % upper limit of integration
    dx = (b - a)/m;   % increment length
    intSerial = 0;    % initialize intSerial
    for i=1:m
      x = a+(i-0.5)*dx;   % mid-point of increment i
      intSerial = intSerial + cos(x)*dx;
    end
    toc
Figure labels: increment width dx; X(1) = a + dx/2; X(m) = b - dx/2
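As a sanity check on the serial loop, the same mid-point sum can be written in vectorized form and compared with the analytic value, sin(π/2) - sin(0) = 1. The names intVector and absError are introduced here for illustration only.

```matlab
% Vectorized mid-point rule, checked against the exact value of 1.
m  = 10000;
a  = 0;  b = pi/2;
dx = (b - a)/m;
x  = a + ((1:m) - 0.5)*dx;      % all m mid-points at once
intVector = sum(cos(x))*dx;
absError  = abs(intVector - 1);
fprintf('integral = %.10f, abs. error = %.2e\n', intVector, absError);
```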

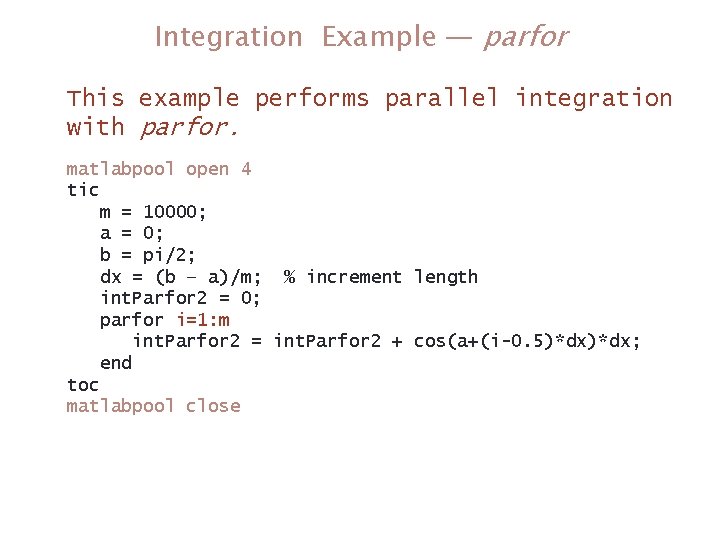

Integration Example: parfor
This example performs parallel integration with parfor.
    matlabpool open 4
    tic
    m = 10000;
    a = 0; b = pi/2;
    dx = (b - a)/m;   % increment length
    intParfor2 = 0;
    parfor i=1:m
      intParfor2 = intParfor2 + cos(a+(i-0.5)*dx)*dx;
    end
    toc
    matlabpool close
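A parfor reduction may add the terms in a different order than the serial loop, so the two results are best compared with a tolerance rather than for exact equality. The following sketch does that; it omits the pool management shown above, and the names intParfor and relDiff are assumptions for this example.

```matlab
% Compare a parfor reduction with the serial loop (sketch).
m = 10000; a = 0; b = pi/2;
dx = (b - a)/m;

intSerial = 0;
for i = 1:m
    intSerial = intSerial + cos(a + (i-0.5)*dx)*dx;
end

intParfor = 0;
parfor i = 1:m
    intParfor = intParfor + cos(a + (i-0.5)*dx)*dx;   % reduction variable
end

% Summation order may differ across workers, so compare with a tolerance.
relDiff = abs(intParfor - intSerial)/abs(intSerial);
fprintf('relative difference = %.2e\n', relDiff);
```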

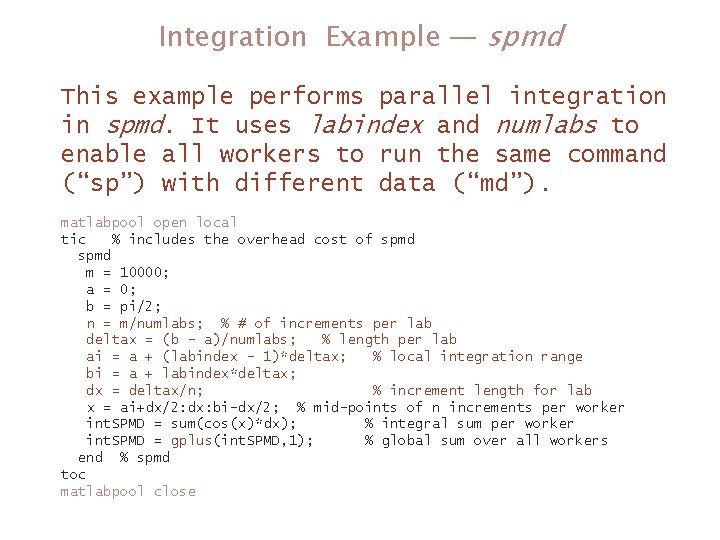

Integration Example: spmd
This example performs parallel integration in spmd. It uses labindex and numlabs to enable all workers to run the same command ("sp") with different data ("md").
    matlabpool open local
    tic   % includes the overhead cost of spmd
    m = 10000;
    a = 0; b = pi/2;
    spmd
      n = m/numlabs;                    % # of increments per lab
      deltax = (b - a)/numlabs;         % length per lab
      ai = a + (labindex - 1)*deltax;   % local integration range
      bi = a + labindex*deltax;
      dx = deltax/n;                    % increment length for lab
      x = ai+dx/2:dx:bi-dx/2;           % mid-points of n increments per worker
      intSPMD = sum(cos(x)*dx);         % integral sum per worker
      intSPMD = gplus(intSPMD, 1);      % global sum over all workers
    end % spmd
    toc
    matlabpool close

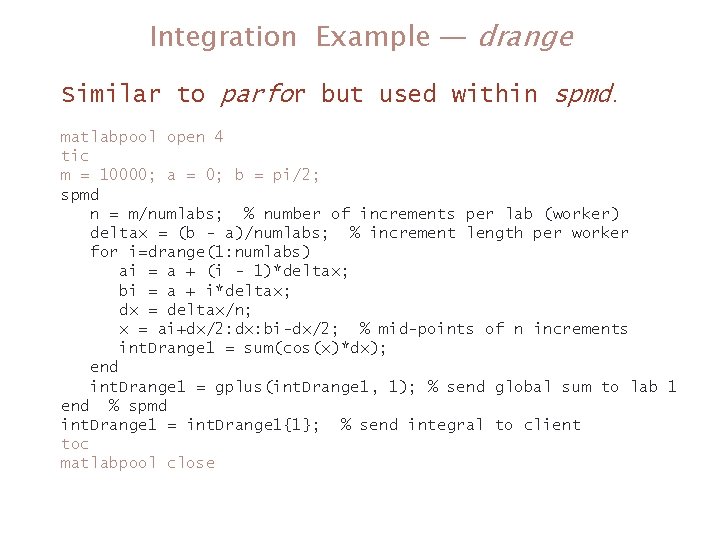

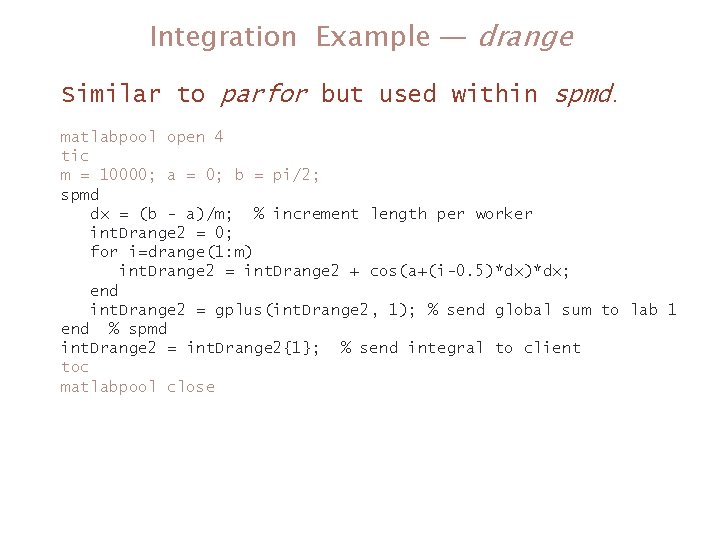

Integration Example: drange
Similar to parfor but used within spmd.
    matlabpool open 4
    tic
    m = 10000;
    a = 0; b = pi/2;
    spmd
      n = m/numlabs;              % number of increments per lab (worker)
      deltax = (b - a)/numlabs;   % increment length per worker
      for i=drange(1:numlabs)
        ai = a + (i - 1)*deltax;
        bi = a + i*deltax;
        dx = deltax/n;
        x = ai+dx/2:dx:bi-dx/2;   % mid-points of n increments
        intDrange1 = sum(cos(x)*dx);
      end
      intDrange1 = gplus(intDrange1, 1);   % send global sum to lab 1
    end % spmd
    intDrange1 = intDrange1{1};   % send integral to client
    toc
    matlabpool close

Integration Example: drange (2)
Similar to parfor but used within spmd.
    matlabpool open 4
    tic
    m = 10000;
    a = 0; b = pi/2;
    spmd
      dx = (b - a)/m;   % increment length
      intDrange2 = 0;
      for i=drange(1:m)
        intDrange2 = intDrange2 + cos(a+(i-0.5)*dx)*dx;
      end
      intDrange2 = gplus(intDrange2, 1);   % send global sum to lab 1
    end % spmd
    intDrange2 = intDrange2{1};   % send integral to client
    toc
    matlabpool close

Array Distributions
The purpose is to distribute data (e.g., an array) among workers to reduce the memory usage and workload on each worker, in return for shorter wall-clock time. For some parallel applications, creating a distributed array is often the only thing you need to do to make your application run in parallel (e.g., thanks to function overloading). Some operations distribute data automatically while others require manual distribution.

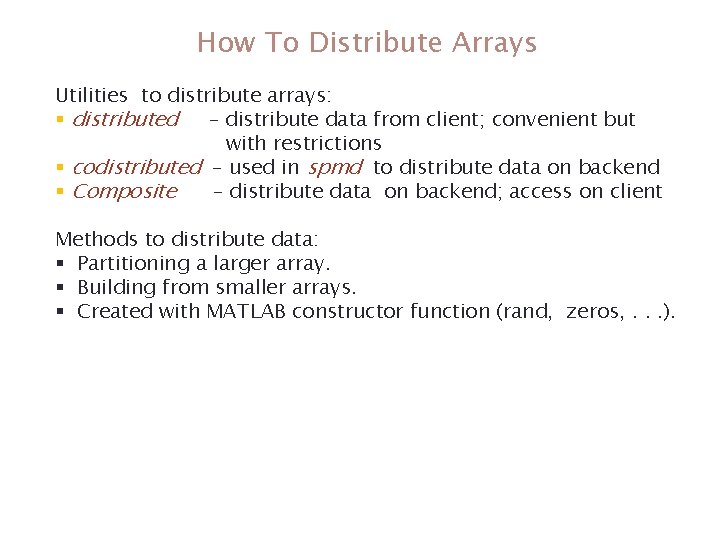

How To Distribute Arrays
Utilities to distribute arrays:
§ distributed: distribute data from the client; convenient but with restrictions
§ codistributed: used in spmd to distribute data on the backend
§ Composite: distribute data on the backend; access on the client
Methods to distribute data:
§ Partitioning a larger array.
§ Building from smaller arrays.
§ Creating with a MATLAB constructor function (rand, zeros, ...).

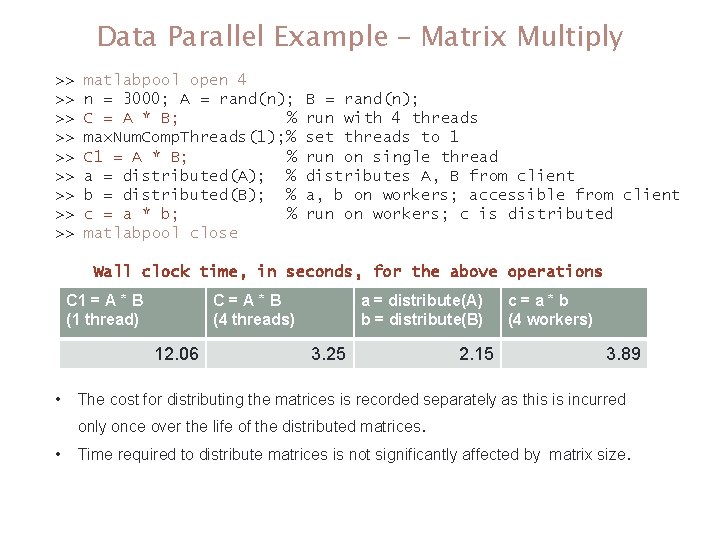

Data Parallel Example: Matrix Multiply
    >> matlabpool open 4
    >> n = 3000; A = rand(n); B = rand(n);
    >> C = A * B;                % run with 4 threads
    >> maxNumCompThreads(1);     % set threads to 1
    >> C1 = A * B;               % run on single thread
    >> a = distributed(A);       % distributes A, B from client
    >> b = distributed(B);       % a, b on workers; accessible from client
    >> c = a * b;                % run on workers; c is distributed
    >> matlabpool close

Wall clock time, in seconds, for the above operations:

| C1 = A * B (1 thread) | C = A * B (4 threads) | a = distributed(A); b = distributed(B) | c = a * b (4 workers) |
|---|---|---|---|
| 12.06 | 3.25 | 2.15 | 3.89 |

• The cost of distributing the matrices is recorded separately, as it is incurred only once over the life of the distributed matrices.
• The time required to distribute matrices is not significantly affected by matrix size.

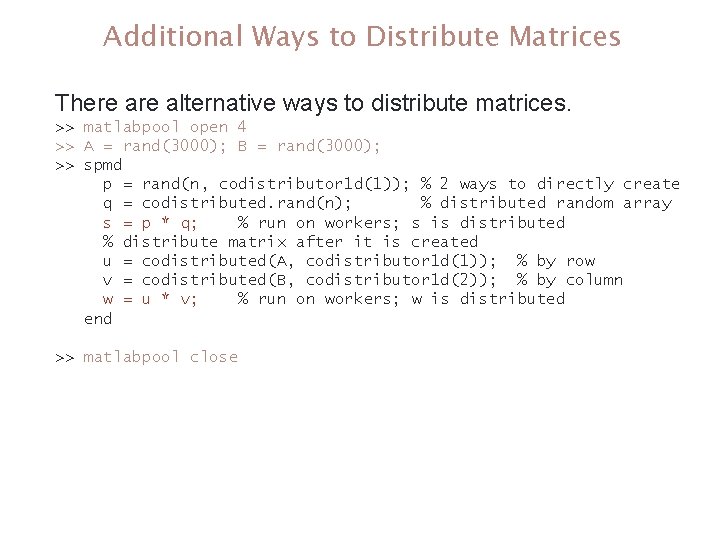

Additional Ways to Distribute Matrices
There are alternative ways to distribute matrices.
    >> matlabpool open 4
    >> n = 3000; A = rand(n); B = rand(n);
    >> spmd
         p = rand(n, codistributor1d(1));   % 2 ways to directly create
         q = codistributed.rand(n);         % distributed random array
         s = p * q;                         % run on workers; s is distributed
         % distribute matrix after it is created
         u = codistributed(A, codistributor1d(1));   % by row
         v = codistributed(B, codistributor1d(2));   % by column
         w = u * v;                         % run on workers; w is distributed
       end
    >> matlabpool close

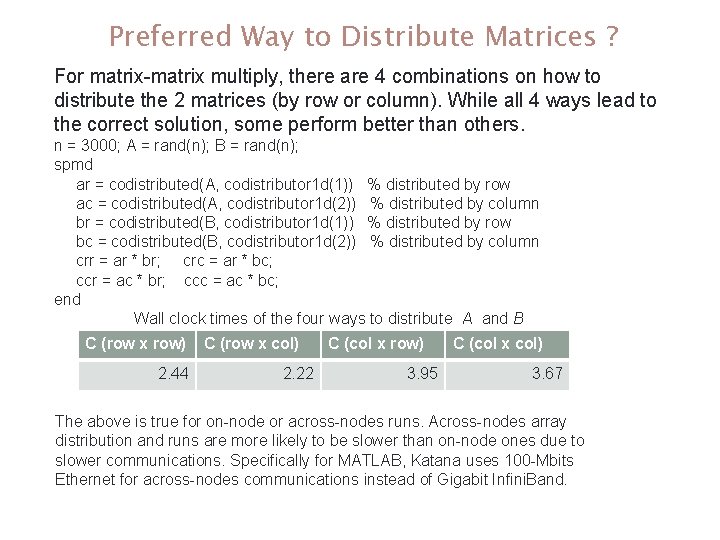

Preferred Way to Distribute Matrices?
For matrix-matrix multiply, there are 4 combinations of how to distribute the 2 matrices (by row or by column). While all 4 ways lead to the correct solution, some perform better than others.
    n = 3000; A = rand(n); B = rand(n);
    spmd
      ar = codistributed(A, codistributor1d(1));   % distributed by row
      ac = codistributed(A, codistributor1d(2));   % distributed by column
      br = codistributed(B, codistributor1d(1));   % distributed by row
      bc = codistributed(B, codistributor1d(2));   % distributed by column
      crr = ar * br; crc = ar * bc;
      ccr = ac * br; ccc = ac * bc;
    end

Wall clock times of the four ways to distribute A and B:

| C (row x row) | C (row x col) | C (col x row) | C (col x col) |
|---|---|---|---|
| 2.44 | 2.22 | 3.95 | 3.67 |

The above is true for on-node and across-nodes runs. Across-nodes array distribution and runs are more likely to be slower than on-node ones due to slower communications. Specifically for MATLAB, Katana uses 100-Mbit Ethernet for across-nodes communications instead of Gigabit InfiniBand.
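To picture what "A distributed by row" means for the product, the sketch below emulates it serially: each hypothetical worker owns a block of rows of A and produces the matching block of rows of C. This is only an illustration of the decomposition, not a description of how the PCT implements the overloaded multiply; the small n, nWorkers and the cell array Cblocks are assumptions.

```matlab
% Serial sketch of a row-distributed matrix product C = A*B.
% nWorkers and the small n are hypothetical; a real run would use
% codistributed arrays instead of the cell array used here.
n = 8; nWorkers = 4;
A = rand(n); B = rand(n);
rowsPer = n/nWorkers;               % rows of A owned by each worker

Cblocks = cell(nWorkers, 1);
for w = 1:nWorkers
    rows = (w-1)*rowsPer + (1:rowsPer);
    % Worker w uses only its own rows of A, but all of B.
    Cblocks{w} = A(rows, :) * B;
end
C = vertcat(Cblocks{:});            % gather the row blocks

maxErr = max(max(abs(C - A*B)));
fprintf('max abs difference from A*B: %.2e\n', maxErr);
```

In this decomposition every worker reads all of B, which hints at why the choice of distribution affects communication cost and therefore run time.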

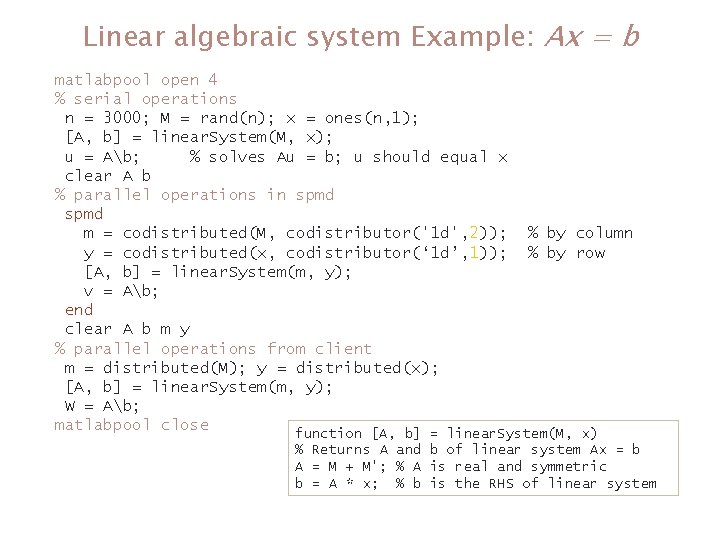

Linear algebraic system Example: Ax = b
    matlabpool open 4
    % serial operations
    n = 3000; M = rand(n); x = ones(n, 1);
    [A, b] = linearSystem(M, x);
    u = A\b;   % solves Au = b; u should equal x
    clear A b
    % parallel operations in spmd
    spmd
      m = codistributed(M, codistributor('1d', 2));   % by column
      y = codistributed(x, codistributor('1d', 1));   % by row
      [A, b] = linearSystem(m, y);
      v = A\b;
    end
    clear A b m y
    % parallel operations from client
    m = distributed(M); y = distributed(x);
    [A, b] = linearSystem(m, y);
    W = A\b;
    matlabpool close

    function [A, b] = linearSystem(M, x)
    % Returns A and b of linear system Ax = b
    A = M + M';   % A is real and symmetric
    b = A * x;    % b is the RHS of linear system

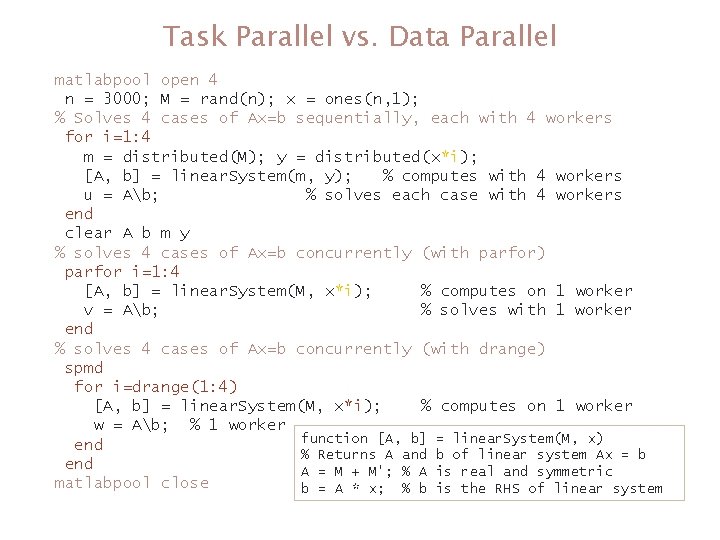

Task Parallel vs. Data Parallel
    matlabpool open 4
    n = 3000; M = rand(n); x = ones(n, 1);
    % Solves 4 cases of Ax=b sequentially, each with 4 workers
    for i=1:4
      m = distributed(M); y = distributed(x*i);
      [A, b] = linearSystem(m, y);   % computes with 4 workers
      u = A\b;                       % solves each case with 4 workers
    end
    clear A b m y
    % solves 4 cases of Ax=b concurrently (with parfor)
    parfor i=1:4
      [A, b] = linearSystem(M, x*i);   % computes on 1 worker
      v = A\b;                         % solves with 1 worker
    end
    % solves 4 cases of Ax=b concurrently (with drange)
    spmd
      for i=drange(1:4)
        [A, b] = linearSystem(M, x*i);   % computes on 1 worker
        w = A\b;                         % 1 worker
      end
    end
    matlabpool close

    function [A, b] = linearSystem(M, x)
    % Returns A and b of linear system Ax = b
    A = M + M';   % A is real and symmetric
    b = A * x;    % b is the RHS of linear system
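The task-parallel variant can be tried at a small scale and checked, since each case is built from a known solution x*i. The sketch below inlines the body of linearSystem and keeps every solution in a column of V; the size n = 200 and the names V and relErr are assumptions made for this example.

```matlab
% Task-parallel sketch: four independent solves, one per parfor iteration.
% n = 200 and the result matrix V are assumptions made for this example.
n = 200;
M = rand(n);
x = ones(n, 1);
A = M + M';                 % same real, symmetric matrix for all cases
V = zeros(n, 4);            % column i holds the solution of case i

parfor i = 1:4
    b = A * (x*i);          % right-hand side of case i
    V(:, i) = A \ b;        % each solve is independent of the others
end

% Each column should recover x*i.
relErr = zeros(1, 4);
for i = 1:4
    relErr(i) = norm(V(:, i) - x*i)/norm(x*i);
end
fprintf('largest relative error = %.2e\n', max(relErr));
```

Storing each result in its own column is what lets the iterations remain independent; overwriting a single variable v, as on the slide, keeps nothing after the loop.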

How Do I Parallelize My Code?
1. Profile the serial code with profile.
2. Identify the section of code, or function within the code, that uses the most CPU time.
3. Look for ways to improve that code section's performance.
4. See if the section is parallelizable and worth parallelizing.
5. If warranted, research and choose a suitable parallel algorithm and parallel paradigm for the code section.
6. Parallelize the code section with the chosen parallel algorithm.
7. To improve performance further, work on the next most CPU-time-intensive section; repeat steps 2 to 7.
8. Analyze the performance efficiency to find the sweet spot, i.e., given the implementation and the platforms on which the code is intended to run, the minimum number of workers for the speediest turnaround (see the Amdahl's Law page).

How Well Does PCT Scale?
• Task-parallel applications generally scale linearly.
• The parallel efficiency of data-parallel applications depends on the individual code and the algorithm used.
• My personal experience is that a reasonably well-tuned MATLAB program, running on a single processor or on multiple processors, is at least an order of magnitude slower than an equivalent C/FORTRAN code.
• Additionally, the PCT's communication is based on Ethernet. MPI on Katana uses InfiniBand, which is faster than Ethernet. This further disadvantages the PCT if your code is communication-bound.

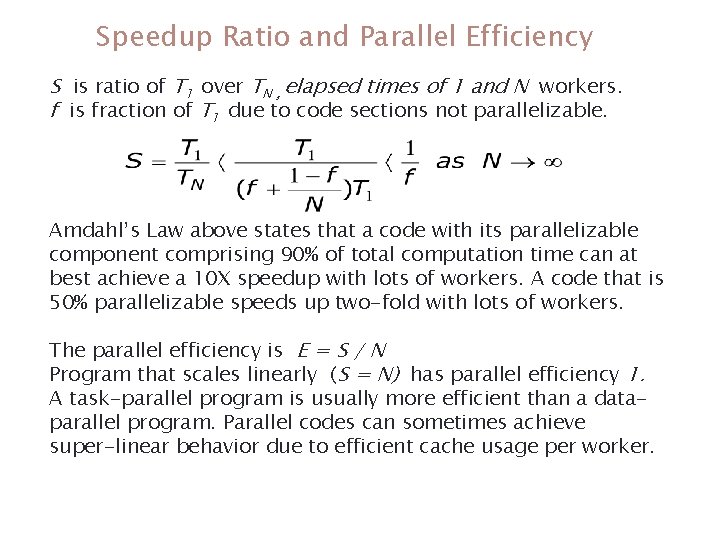

Speedup Ratio and Parallel Efficiency
S is the ratio of T1 over TN, the elapsed times with 1 and N workers. f is the fraction of T1 due to code sections that are not parallelizable.
Amdahl's Law states that a code whose parallelizable component comprises 90% of total computation time can at best achieve a 10X speedup with lots of workers. A code that is 50% parallelizable speeds up two-fold with lots of workers.
The parallel efficiency is E = S / N. A program that scales linearly (S = N) has parallel efficiency 1. A task-parallel program is usually more efficient than a data-parallel program. Parallel codes can sometimes achieve super-linear behavior due to efficient cache usage per worker.
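For reference, the usual textbook form of Amdahl's Law, written with the symbols defined above, is sketched here; it is assumed to match the formula intended on the slide.

```latex
S(N) = \frac{T_1}{T_N} = \frac{1}{f + \dfrac{1-f}{N}},
\qquad
\lim_{N \to \infty} S(N) = \frac{1}{f},
\qquad
E = \frac{S}{N}
```

With f = 0.1 (90% parallelizable) the limit is 1/0.1 = 10, and with f = 0.5 it is 1/0.5 = 2, which are the two cases quoted in the text.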

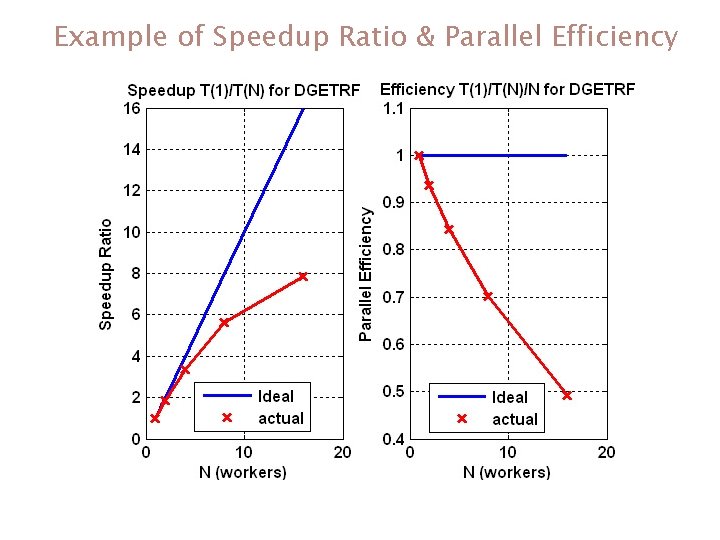

Example of Speedup Ratio & Parallel Efficiency

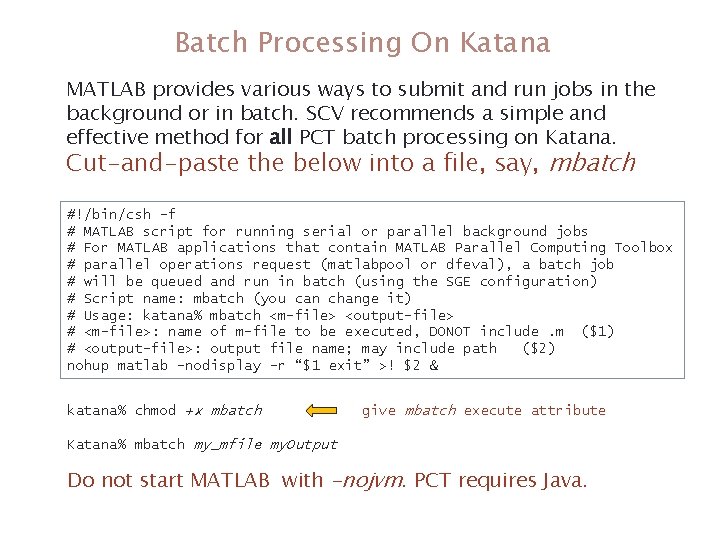
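As a small worked case, the speedup and efficiency definitions can be applied to two timings quoted in the matrix multiply example (12.06 s for C1 = A * B on one thread, 3.89 s for c = a * b on 4 workers). Treating those two numbers as T1 and TN is an assumption made for illustration, as are the variable names; the second part tabulates Amdahl's Law for f = 0.1.

```matlab
% Speedup and efficiency from two timings quoted earlier in this tutorial.
% Treating them as T1 and TN is an assumption made for illustration.
T1 = 12.06;  TN = 3.89;  N = 4;
S = T1/TN;                         % speedup ratio
E = S/N;                           % parallel efficiency
fSerial = (1/S - 1/N)/(1 - 1/N);   % serial fraction implied by Amdahl's Law

% Amdahl's Law prediction for a code that is 90% parallelizable (f = 0.1).
f = 0.1;
workers = [1 2 4 8 16 32];
Samdahl = 1 ./ (f + (1 - f)./workers);

fprintf('S = %.2f, E = %.2f, implied f = %.3f\n', S, E, fSerial);
disp([workers; Samdahl]);          % row 1: workers, row 2: predicted speedup
```

The predicted speedups approach, but never reach, the 1/f = 10 ceiling as the worker count grows.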

Batch Processing On Katana
MATLAB provides various ways to submit and run jobs in the background or in batch. SCV recommends a simple and effective method for all PCT batch processing on Katana. Cut and paste the following into a file, say, mbatch:
    #!/bin/csh -f
    # MATLAB script for running serial or parallel background jobs
    # For MATLAB applications that contain MATLAB Parallel Computing Toolbox
    # parallel operations request (matlabpool or dfeval), a batch job
    # will be queued and run in batch (using the SGE configuration)
    # Script name: mbatch (you can change it)
    # Usage: katana% mbatch <m-file> <output-file>
    # <m-file>: name of m-file to be executed, DO NOT include .m ($1)
    # <output-file>: output file name; may include path ($2)
    nohup matlab -nodisplay -r "$1, exit" >! $2 &

Give mbatch the execute attribute, then run it:
    katana% chmod +x mbatch
    katana% mbatch my_mfile myOutput
Do not start MATLAB with -nojvm. PCT requires Java.

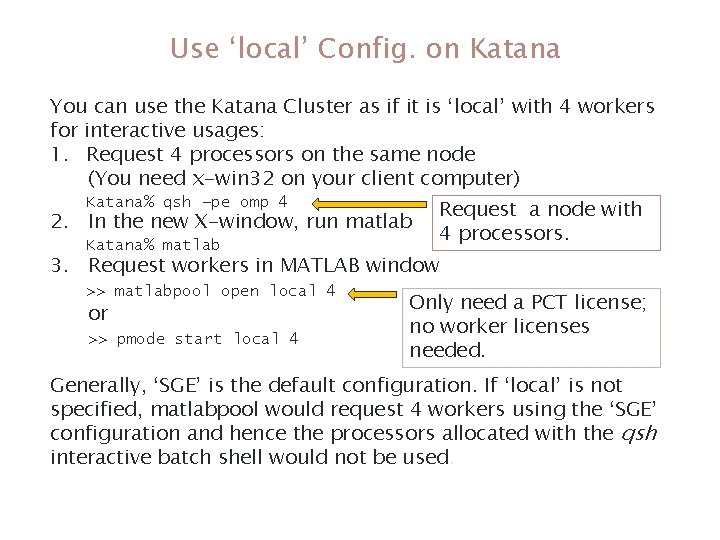

Use 'local' Config. on Katana
You can use the Katana Cluster as if it were 'local', with 4 workers, for interactive use:
1. Request 4 processors on the same node (you need X-Win32 on your client computer)
    katana% qsh -pe omp 4
2. In the new X-window, run matlab
    katana% matlab
3. Request workers in the MATLAB window
    >> matlabpool open local 4
   or
    >> pmode start local 4
Only a PCT license is needed; no worker licenses are needed. Generally, 'SGE' is the default configuration. If 'local' is not specified, matlabpool would request 4 workers using the 'SGE' configuration, and hence the processors allocated with the qsh interactive batch shell would not be used.

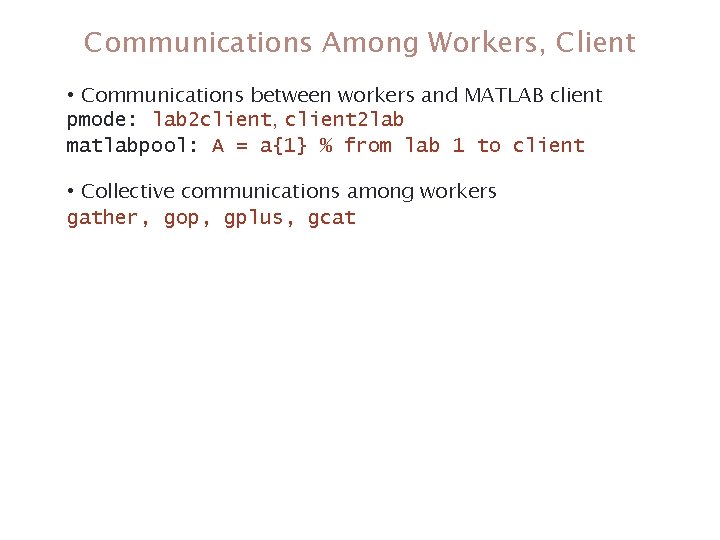

Communications Among Workers, Client
• Communications between workers and the MATLAB client
  pmode: lab2client, client2lab
  matlabpool: A = a{1}   % from lab 1 to client
• Collective communications among workers
  gather, gop, gplus, gcat

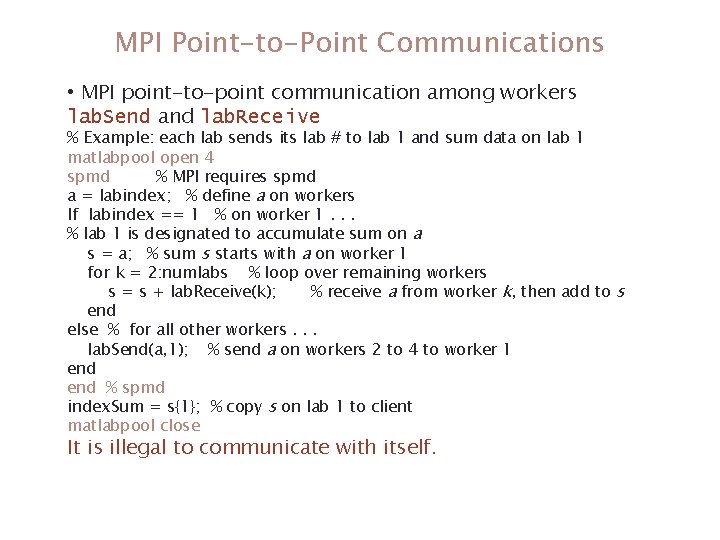

MPI Point-to-Point Communications
• MPI point-to-point communication among workers: labSend and labReceive
    % Example: each lab sends its lab # to lab 1 and sum data on lab 1
    matlabpool open 4
    spmd   % MPI requires spmd
      a = labindex;        % define a on workers
      if labindex == 1     % on worker 1 ...
        % lab 1 is designated to accumulate sum on a
        s = a;             % sum s starts with a on worker 1
        for k = 2:numlabs  % loop over remaining workers
          s = s + labReceive(k);   % receive a from worker k, then add to s
        end
      else                 % for all other workers ...
        labSend(a, 1);     % send a on workers 2 to 4 to worker 1
      end
    end % spmd
    indexSum = s{1};       % copy s on lab 1 to client
    matlabpool close
It is illegal for a worker to communicate with itself.

Useful SCV Info
Please help us do better in the future by participating in a quick survey: http://scv.bu.edu/survey/tutorial_evaluation.html
§ SCV home page (www.bu.edu/tech/research)
§ Resource Applications: www.bu.edu/tech/accounts/special/research/accounts
§ Help
  • System: help@katana.bu.edu, bu.service-now.com
  • Web-based tutorials (www.bu.edu/tech/research/training/tutorials) (MPI, OpenMP, MATLAB, IDL, Graphics tools)
  • HPC consultations by appointment: Kadin Tseng (kadin@bu.edu)
