
Introduction to Information Retrieval

Acknowledgements to Pandu Nayak and Prabhakar Raghavan of Stanford, Hinrich Schütze and Christina Lioma of Stuttgart, and Lee Giles (and his sources) of Penn State

So far
• You have learned to crawl the web
  – Being a good citizen and not hurting the servers
  – Extracting the kind of information that you want
  – Storing the retrieved material locally
• Now, what are you going to do with those materials?
  – Develop a way to retrieve what you want from that collection, as you need it.

Finding what you need in a collection of documents
• Information Retrieval:
  – Given a collection of “documents”
  – Retrieve one or more documents that contain the specific information you want, or
  – Obtain the answer to an information need by querying the document collection

Tonight’s class
• Introduce the concepts of Information Retrieval
  – Assume you have a collection
  – How to look into the documents for your information need
  – How to do it efficiently

Later
• Tools to build indices
  – Apache Lucene
  – Apache Solr

A brief introduction to Information Retrieval
• Recall our primary resource:
  – Christopher D. Manning, Prabhakar Raghavan and Hinrich Schütze, Introduction to Information Retrieval, Cambridge University Press, 2008.
• The entire book is available online, free, at http://nlp.stanford.edu/IR-book/information-retrieval-book.html
• I will use some of the slides that they provide to go with the book.
• I will also use slides from other sources and make new ones. The primary other source is Dr. Lee Giles, Penn State (Information Retrieval, IST 441).

Authors’ definition
• Information Retrieval (IR) is finding material (usually documents) of an unstructured nature (usually text) that satisfies an information need from within large collections (usually stored on computers).
• Note the use of the word “usually.” We will see examples where the material is neither documents nor text.

Examples and Scaling
• IR is about finding a needle in a haystack: finding some particular thing in a very large collection of similar things.
• Our examples are necessarily small, so that we can comprehend them. Remember, though, that everything we say must scale to very large quantities.

Searching Shakespeare
• Which plays of Shakespeare contain the words Brutus AND Caesar but NOT Calpurnia?
  – See http://www.rhymezone.com/shakespeare/
• One could grep all of Shakespeare’s plays for Brutus and Caesar, then strip out lines containing Calpurnia?
• Why is that not the answer?
  – Slow (for large corpora)
  – NOT Calpurnia is non-trivial (Calpurnia must be absent from the whole play, not just from the matching lines)
  – Other operations (e.g., find the word Romans near countrymen) are not feasible
  – No ranked retrieval (returning the best documents first)
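A minimal sketch of the brute-force alternative, assuming each play is available as a plain string; the three texts below are hypothetical snippets, not the real plays. It works at the document level rather than grep’s line level, and it rereads every document on every query, which is exactly the cost an index avoids.

```python
import re

# Hypothetical stand-in snippets, NOT the real play texts.
PLAYS = {
    "Antony and Cleopatra": "Antony wept when he found Brutus slain and Caesar dead",
    "Julius Caesar": "Brutus and Calpurnia speak of Caesar",
    "Hamlet": "I did enact Julius Caesar; Brutus killed me",
}

def words(text: str) -> set[str]:
    """Lower-case the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def linear_scan(plays: dict[str, str]) -> list[str]:
    """Answer 'Brutus AND Caesar AND NOT Calpurnia' by rereading every play.

    Every document is scanned in full for every query, so the cost grows
    with the size of the whole collection.
    """
    hits = []
    for title, text in plays.items():
        w = words(text)
        # NOT is checked against the whole play, not line by line.
        if "brutus" in w and "caesar" in w and "calpurnia" not in w:
            hits.append(title)
    return hits

print(linear_scan(PLAYS))
```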

Document match
• Go to http://www.rhymezone.com/shakespeare/
• Enter, one at a time:
  – Caesar
  – Brutus
  – Calpurnia
• For each, note the collection of plays that contain the term
• We need to associate one or more plays with each term
  – Term incidence
• For our example, we will use a subset of the plays

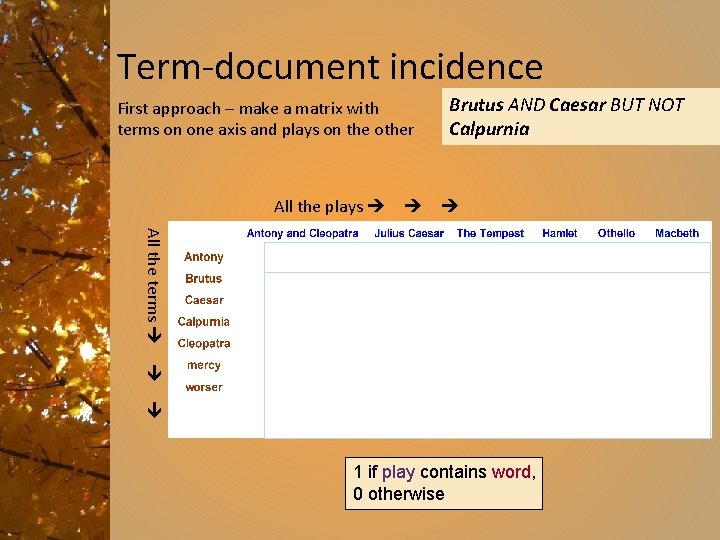

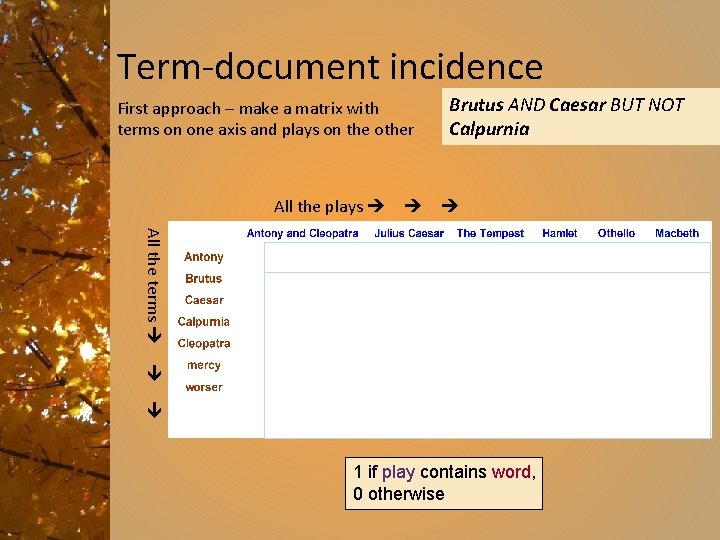

Term-document incidence
• First approach: make a matrix with terms on one axis and plays on the other.
[Figure: the term-document incidence matrix. Rows: all the terms; columns: all the plays; an entry is 1 if the play contains the word, 0 otherwise. Query: Brutus AND Caesar BUT NOT Calpurnia.]

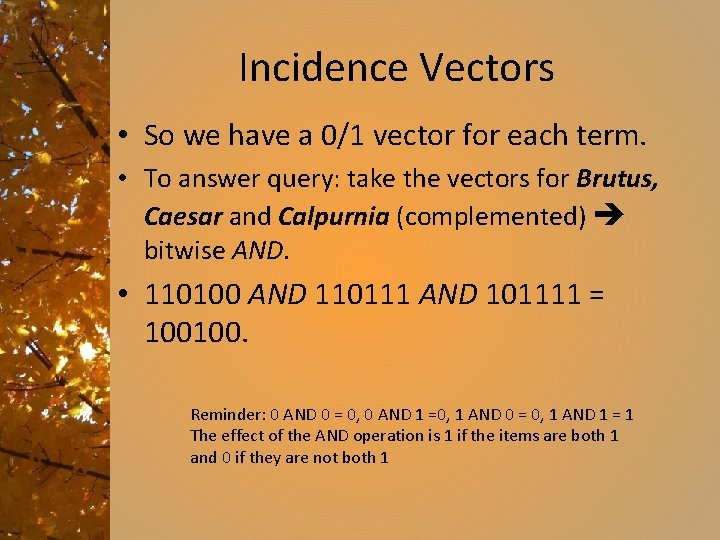
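As a sketch of how such a matrix could be built, the snippet below uses six hypothetical bag-of-words stand-ins for the plays, chosen so that the rows match the textbook’s example matrix; `incidence_matrix` is an illustrative helper, not a library function.

```python
# Hypothetical bag-of-words stand-ins for six plays, chosen so the matrix
# reproduces the textbook's example; they are NOT the real play texts.
PLAYS = [
    ("Antony and Cleopatra", "antony brutus caesar cleopatra mercy worser"),
    ("Julius Caesar", "antony brutus caesar calpurnia"),
    ("The Tempest", "mercy worser"),
    ("Hamlet", "brutus caesar mercy worser"),
    ("Othello", "caesar mercy worser"),
    ("Macbeth", "antony caesar mercy"),
]

def incidence_matrix(plays: list[tuple[str, str]]) -> dict[str, list[int]]:
    """Return {term: row}, where row[j] is 1 if play j contains the term."""
    vocab = sorted({t for _, text in plays for t in text.split()})
    doc_terms = [set(text.split()) for _, text in plays]
    return {t: [1 if t in terms else 0 for terms in doc_terms] for t in vocab}

matrix = incidence_matrix(PLAYS)
for term, row in matrix.items():
    print(f"{term:10}", *row)
```

Note that the matrix has one cell per (term, play) pair whether or not the term occurs, which is the property the size slides below take issue with.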

Incidence Vectors
• So we have a 0/1 vector for each term.
• To answer the query: take the vectors for Brutus, Caesar and Calpurnia (complemented), and combine them with bitwise AND.
  – 110100 AND 110111 AND 101111 = 100100
• Reminder: 0 AND 0 = 0, 0 AND 1 = 0, 1 AND 0 = 0, 1 AND 1 = 1. The result of AND is 1 if both bits are 1, and 0 otherwise.
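The same computation with Python integers as bit vectors, as a minimal sketch. The column order of the plays is an assumption (the textbook’s order); the NOT is computed by complementing within a six-bit mask, because Python’s `~` on its own yields a negative number with unbounded 1 bits.

```python
# Assumed column order (the textbook's); leftmost bit = first play.
PLAYS = ["Antony and Cleopatra", "Julius Caesar", "The Tempest",
         "Hamlet", "Othello", "Macbeth"]
N = len(PLAYS)
MASK = (1 << N) - 1  # six 1-bits: keeps NOT inside the six columns

brutus = 0b110100
caesar = 0b110111
calpurnia = 0b010000  # its complement is the 101111 used on the slide

def complement(v: int) -> int:
    """Bitwise NOT restricted to the N document columns."""
    return ~v & MASK

def matching_plays(v: int) -> list[str]:
    """Decode a 0/1 vector into play titles."""
    return [PLAYS[i] for i in range(N) if (v >> (N - 1 - i)) & 1]

result = brutus & caesar & complement(calpurnia)
print(format(result, f"0{N}b"), matching_plays(result))
```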

Answer to query
• Antony and Cleopatra, Act III, Scene ii
  Agrippa [Aside to DOMITIUS ENOBARBUS]: Why, Enobarbus, When Antony found Julius Caesar dead, He cried almost to roaring; and he wept When at Philippi he found Brutus slain.
• Hamlet, Act III, Scene ii
  Lord Polonius: I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.

Spot check
• Try another one
• What is the vector for the query
  – Antony AND mercy?
• What would we do to find Antony OR mercy?
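One way to check your answer, as a sketch: the vectors for Antony and mercy below are assumed to be the textbook’s matrix rows (110001 and 101111), with the same assumed play order as before. AND keeps the plays that contain both terms; OR, Python’s bitwise `|`, keeps the plays that contain either.

```python
# Assumed column order (the textbook's); leftmost bit = first play.
PLAYS = ["Antony and Cleopatra", "Julius Caesar", "The Tempest",
         "Hamlet", "Othello", "Macbeth"]
N = len(PLAYS)

antony = 0b110001  # assumed: textbook matrix row for Antony
mercy = 0b101111   # assumed: textbook matrix row for mercy

def matching_plays(v: int) -> list[str]:
    """Decode a 0/1 vector into play titles."""
    return [PLAYS[i] for i in range(N) if (v >> (N - 1 - i)) & 1]

both = antony & mercy    # Antony AND mercy
either = antony | mercy  # Antony OR mercy
print(format(both, "06b"), matching_plays(both))
print(format(either, "06b"), matching_plays(either))
```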

Basic assumptions about information retrieval
• Collection: a fixed set of documents
• Goal: retrieve documents with information that is relevant to the user’s information need and helps the user complete a task

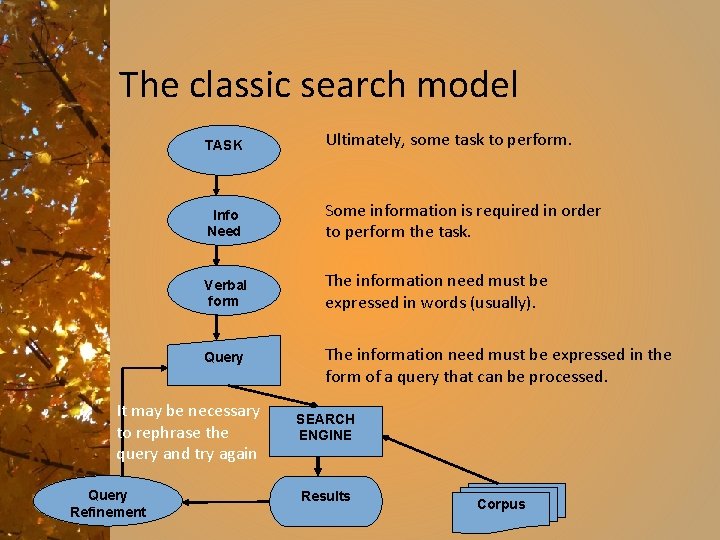

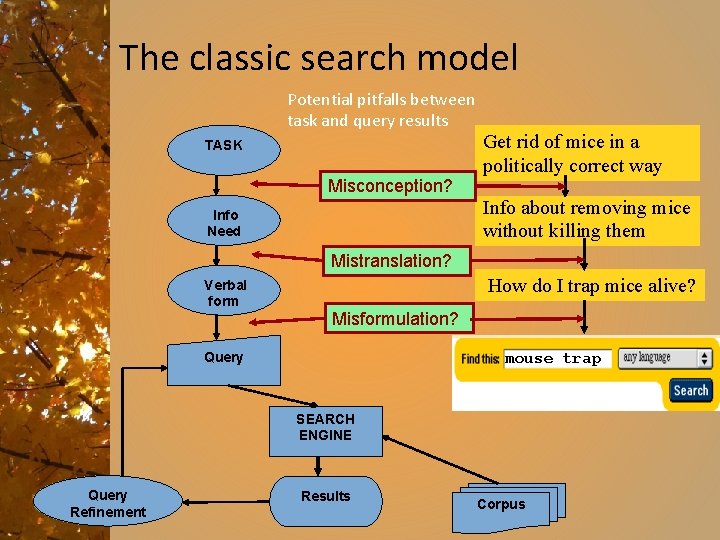

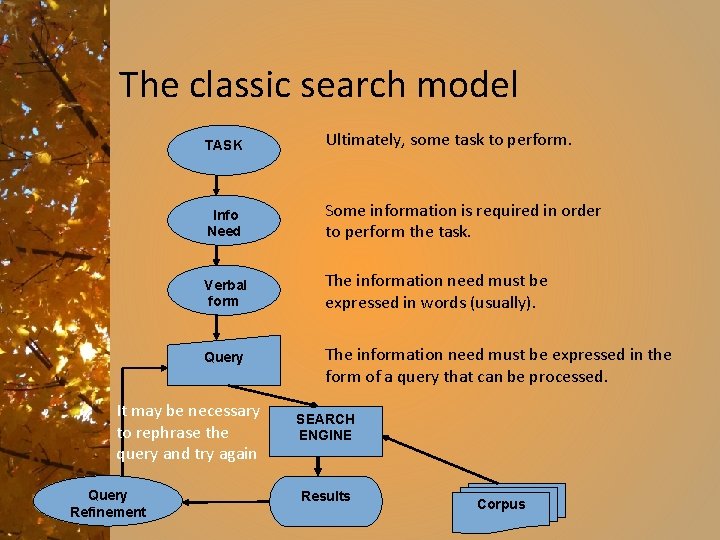

The classic search model
• Task: ultimately, some task to perform.
• Info need: some information is required in order to perform the task.
• Verbal form: the information need must be expressed in words (usually).
• Query: the information need must be expressed in the form of a query that can be processed.
• Search engine: runs the query against the corpus and returns results.
• Query refinement: it may be necessary to rephrase the query and try again.

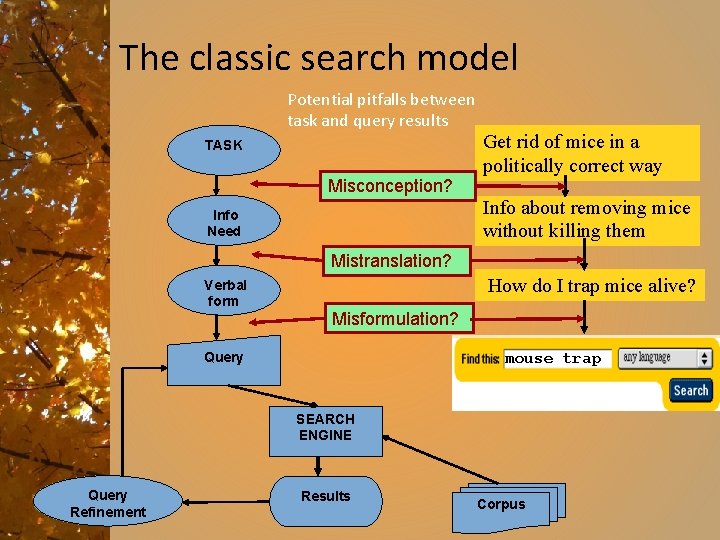

The classic search model: potential pitfalls between task and query results
• Task: Get rid of mice in a politically correct way
  – Misconception?
• Info need: Info about removing mice without killing them
  – Mistranslation?
• Verbal form: How do I trap mice alive?
  – Misformulation?
• Query: mouse trap
• The search engine runs the query against the corpus and returns results; query refinement loops back to the query.

How good are the results?
• Precision: What fraction of the retrieved results are relevant to the information need?
• Recall: What fraction of the available relevant results were retrieved?
• These are the basic concepts of information retrieval evaluation.
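Both measures are ratios over sets of document IDs. A minimal sketch, where the IDs are hypothetical and returning 0.0 for an empty set is a convention chosen here:

```python
def precision_recall(retrieved: set[int], relevant: set[int]) -> tuple[float, float]:
    """Precision = |retrieved & relevant| / |retrieved|;
    recall = |retrieved & relevant| / |relevant|.
    An empty denominator yields 0.0 (a convention, not a standard)."""
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall

# Hypothetical run: 4 documents returned, 3 of them relevant,
# out of 6 relevant documents in the collection.
p, r = precision_recall({1, 2, 3, 4}, {2, 3, 4, 7, 8, 9})
print(p, r)  # 0.75 0.5
```

The example shows the usual tension: returning more documents can only raise recall, but it tends to lower precision.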

Size considerations
• Consider N = 1 million documents, each with about 1000 words.
• At an average of 6 bytes/word including spaces and punctuation, that is about 6 GB of data in the documents (10^6 × 1000 × 6 bytes).
• Say there are M = 500K distinct terms among these.

The matrix does not work
• A 500K × 1M matrix has half a trillion 0’s and 1’s.
• But it has no more than one billion 1’s (10^6 documents × about 1000 word occurrences each).
  – The matrix is extremely sparse.
• What’s a better representation?
  – We only record the 1 positions.
  – i.e., we don’t need to know which documents do not have a term, only those that do. Why?
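The arithmetic behind these two slides, as a quick check; all inputs are the slides’ own assumptions.

```python
N_DOCS = 1_000_000      # N documents
WORDS_PER_DOC = 1_000   # about 1000 words each
BYTES_PER_WORD = 6      # average, including spaces and punctuation
M_TERMS = 500_000       # M distinct terms

text_bytes = N_DOCS * WORDS_PER_DOC * BYTES_PER_WORD  # raw text size
cells = M_TERMS * N_DOCS                               # incidence-matrix entries
max_ones = N_DOCS * WORDS_PER_DOC                      # a 1 needs at least one occurrence
density = max_ones / cells                             # upper bound on the fraction of 1s

print(f"text: {text_bytes / 1e9:.0f} GB")
print(f"matrix cells: {cells:.1e}, at most {max_ones:.1e} ones")
print(f"at most {density:.2%} of the matrix is 1s")
```

At most 0.2% of the cells are 1s, so storing only the 1 positions saves well over 99% of the matrix.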

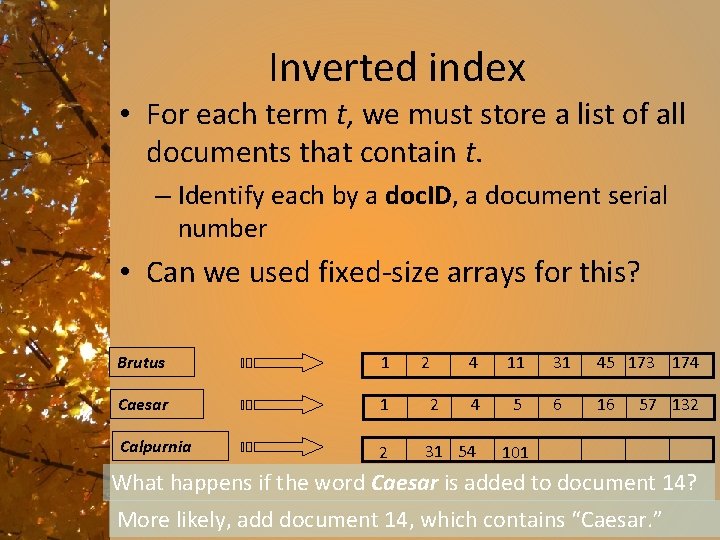

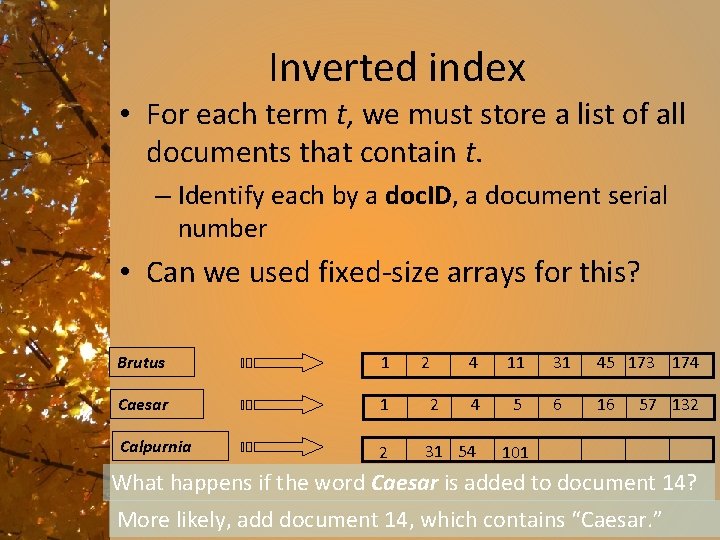

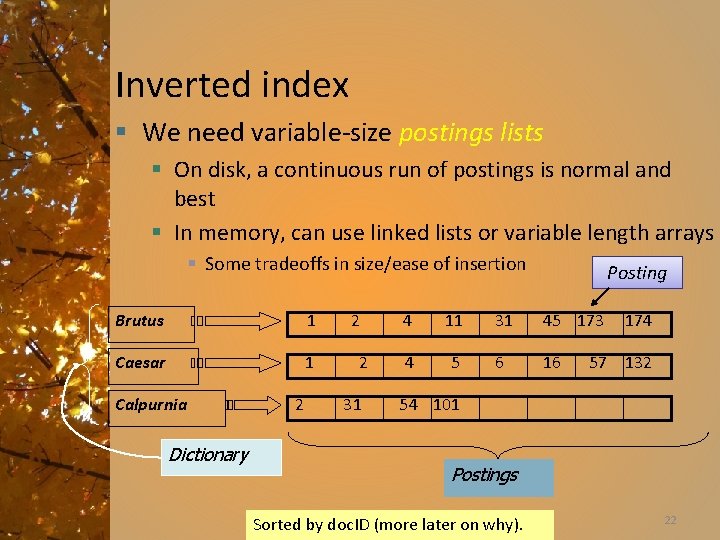

Inverted index
• For each term t, we must store a list of all documents that contain t.
  – Identify each by a docID, a document serial number
• Can we use fixed-size arrays for this?
  Brutus: 1 2 4 11 31 45 173 174
  Caesar: 1 2 4 5 6 16 57 132
  Calpurnia: 2 31 54 101
• What happens if the word Caesar is added to document 14? More likely, we add a new document 14, which contains “Caesar.”

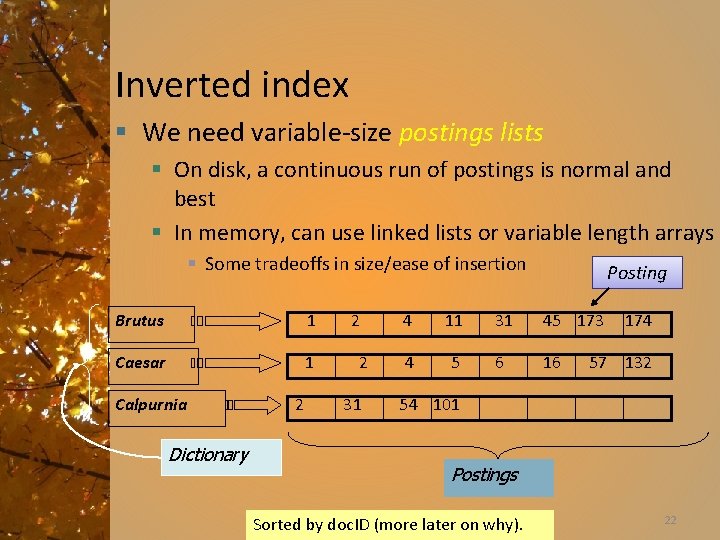

Inverted index
§ We need variable-size postings lists
  § On disk, a contiguous run of postings is normal and best
  § In memory, can use linked lists or variable-length arrays
    § Some tradeoffs in size/ease of insertion
Dictionary and postings (each docID in a list is a posting):
  Brutus: 1 2 4 11 31 45 173 174
  Caesar: 1 2 4 5 6 16 57 132
  Calpurnia: 2 31 54 101
Postings are sorted by docID (more later on why).
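A minimal in-memory sketch of a dictionary with variable-size postings, using a Python dict of lists (one of the in-memory options above, not a disk layout). The postings are illustrative and `add_document` is a hypothetical helper: it handles the “add document 14” case by binary-searching for the sorted position, and `list.insert` shifting the later entries is the ease-of-insertion cost.

```python
from bisect import bisect_left
from collections import defaultdict

# Dictionary: term -> variable-size postings list, kept sorted by docID.
index: dict[str, list[int]] = defaultdict(list)
index.update({  # illustrative postings
    "brutus": [1, 2, 4, 11, 31, 45, 173, 174],
    "caesar": [1, 2, 4, 5, 6, 16, 57, 132],
    "calpurnia": [2, 31, 54, 101],
})

def add_document(doc_id: int, terms: set[str]) -> None:
    """Hypothetical helper: add doc_id to the postings of each of its terms."""
    for term in terms:
        postings = index[term]               # a new term gets an empty list
        pos = bisect_left(postings, doc_id)  # binary search for the slot
        if pos == len(postings) or postings[pos] != doc_id:
            postings.insert(pos, doc_id)     # shifts later entries: O(length)

add_document(14, {"caesar"})
print(index["caesar"])
```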

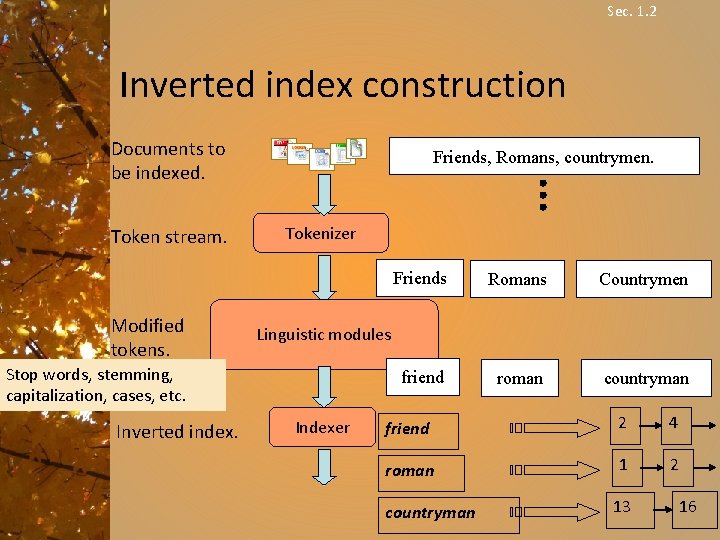

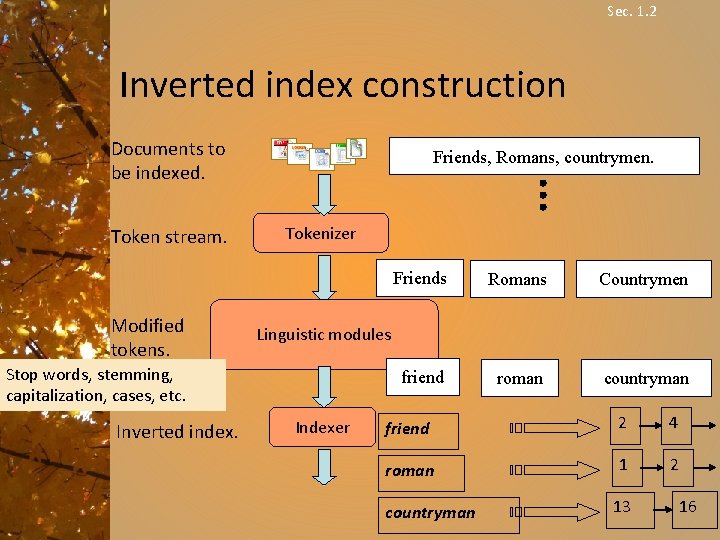

Sec. 1.2 Inverted index construction
• Documents to be indexed: Friends, Romans, countrymen.
• Tokenizer produces the token stream: Friends, Romans, Countrymen
• Linguistic modules (stop words, stemming, capitalization, cases, etc.) produce the modified tokens: friend, roman, countryman
• Indexer produces the inverted index:
  friend: 2 4
  roman: 1 2
  countryman: 13 16
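A toy version of the tokenizer and linguistic-module steps, as a sketch only: the stop list is hypothetical and the suffix rules are invented for this example (they are not the Porter stemmer or any real linguistic module), but they reproduce the slide’s friend, roman, countryman.

```python
import re
from typing import Optional

STOP_WORDS = {"a", "and", "of", "the"}  # hypothetical, tiny stop list

def tokenize(text: str) -> list[str]:
    """Tokenizer: split the text into runs of letters, dropping punctuation."""
    return re.findall(r"[A-Za-z]+", text)

def normalize(token: str) -> Optional[str]:
    """Toy linguistic module: case folding, stop-word removal, suffix rules.

    The suffix rules are made up for this example; they are not a real stemmer.
    """
    t = token.lower()                      # capitalization / case folding
    if t in STOP_WORDS:
        return None                        # stop word: produce no term
    if t.endswith("men"):
        return t[:-3] + "man"              # countrymen -> countryman
    if t.endswith("s") and not t.endswith("ss"):
        return t[:-1]                      # friends -> friend, romans -> roman
    return t

tokens = tokenize("Friends, Romans, countrymen.")
modified = [m for m in map(normalize, tokens) if m is not None]
print(tokens)
print(modified)
```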

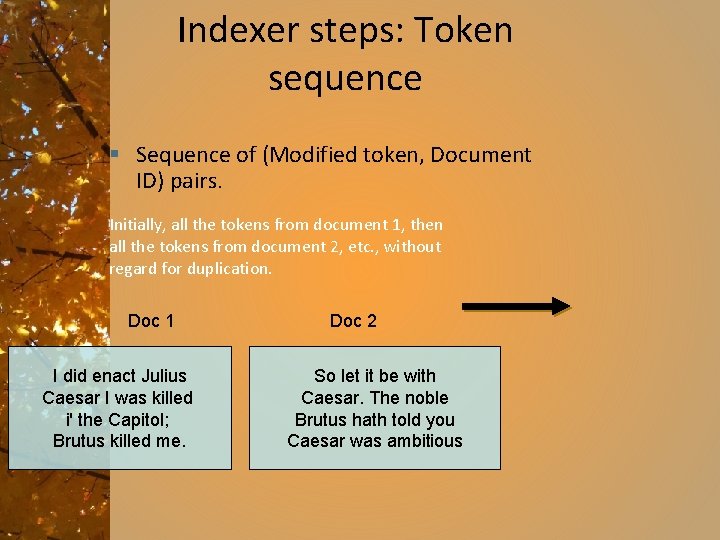

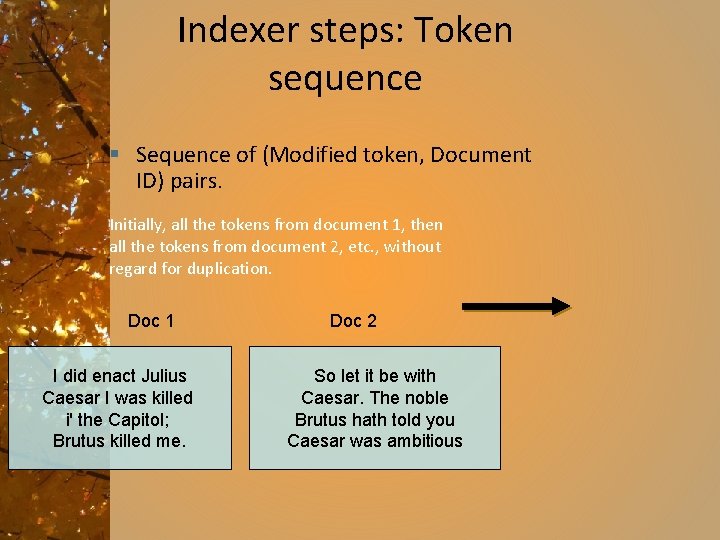

Indexer steps: Token sequence
§ Sequence of (modified token, document ID) pairs.
§ Initially, all the tokens from document 1, then all the tokens from document 2, etc., without regard for duplication.
Doc 1: I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.
Doc 2: So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious
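Generating the token sequence for the two example documents, as a sketch: lower-casing is the only modification applied here (an assumption), and the regular expression keeps apostrophes so that “i'” stays a token.

```python
import re

DOCS = {
    1: "I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.",
    2: "So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious",
}

def token_sequence(docs: dict[int, str]) -> list[tuple[str, int]]:
    """(term, docID) pairs in document order; duplicates are kept."""
    pairs = []
    for doc_id in sorted(docs):
        # Lower-casing stands in for the "modified token" step (an assumption).
        for token in re.findall(r"[a-z']+", docs[doc_id].lower()):
            pairs.append((token, doc_id))
    return pairs

pairs = token_sequence(DOCS)
print(len(pairs), pairs[:5])
```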

Indexer steps: Sort
§ Sort by terms
  § and then by docID within terms
§ This is the core indexing step

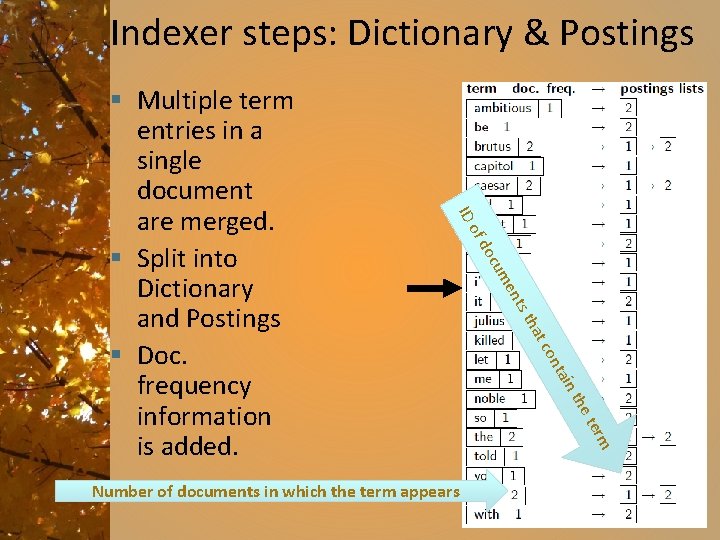

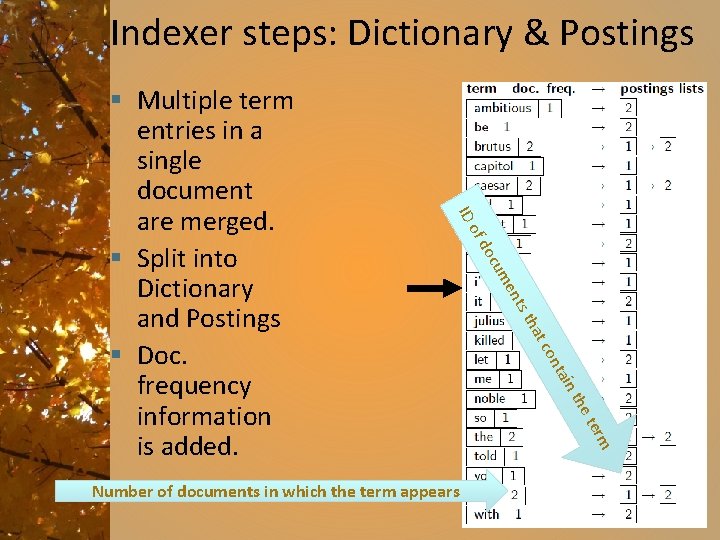

Indexer steps: Dictionary & Postings
§ Multiple term entries in a single document are merged.
§ Split into Dictionary and Postings.
§ Document frequency information is added.
[Figure: each dictionary entry holds a term and its document frequency (the number of documents in which the term appears); its postings list holds the IDs of the documents that contain the term.]
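The sort and merge steps together, as a minimal sketch over the same two documents with the same assumed lower-casing tokenizer. Sorting the (term, docID) pairs puts each term’s docIDs in order, skipping repeats merges multiple entries within one document, and the length of each postings list is the document frequency.

```python
import re
from collections import defaultdict

DOCS = {
    1: "I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.",
    2: "So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious",
}

def build_index(docs: dict[int, str]) -> dict[str, tuple[int, list[int]]]:
    """Return {term: (document frequency, sorted postings list)}."""
    # Token sequence: (term, docID) pairs, lower-cased (assumed normalization).
    pairs = [
        (token, doc_id)
        for doc_id in sorted(docs)
        for token in re.findall(r"[a-z']+", docs[doc_id].lower())
    ]
    # Core indexing step: sort by term, then by docID within each term.
    pairs.sort()
    # Merge repeated (term, docID) entries and collect postings per term.
    postings: dict[str, list[int]] = defaultdict(list)
    for term, doc_id in pairs:
        plist = postings[term]
        if not plist or plist[-1] != doc_id:
            plist.append(doc_id)
    # Dictionary entry: term -> (document frequency, postings list).
    return {term: (len(plist), plist) for term, plist in postings.items()}

index = build_index(DOCS)
for term in ("brutus", "caesar", "capitol"):
    print(term, index[term])
```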

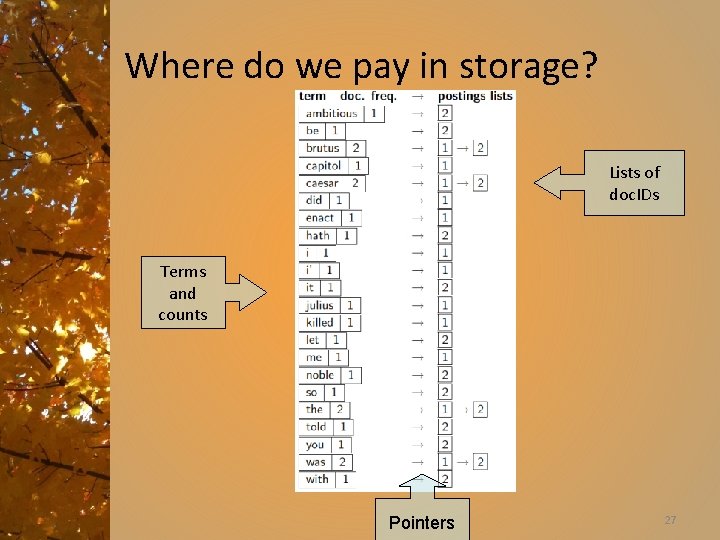

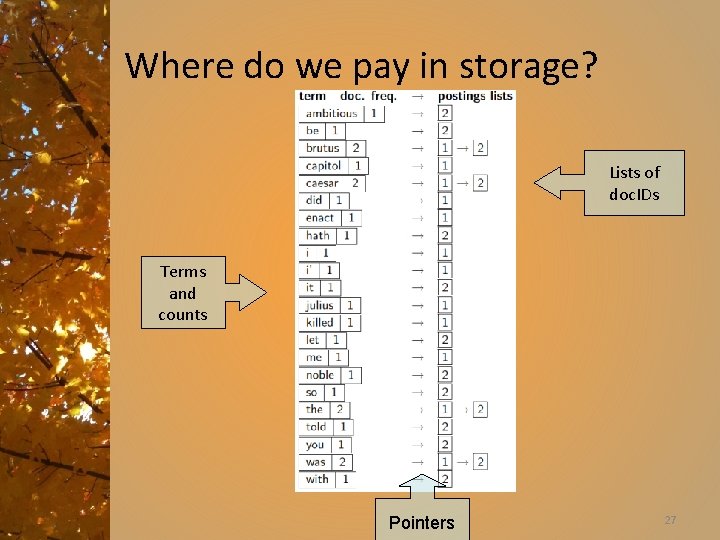

Where do we pay in storage?
• Terms and counts (the dictionary)
• Pointers (from each dictionary entry to its postings list)
• Lists of docIDs (the postings)

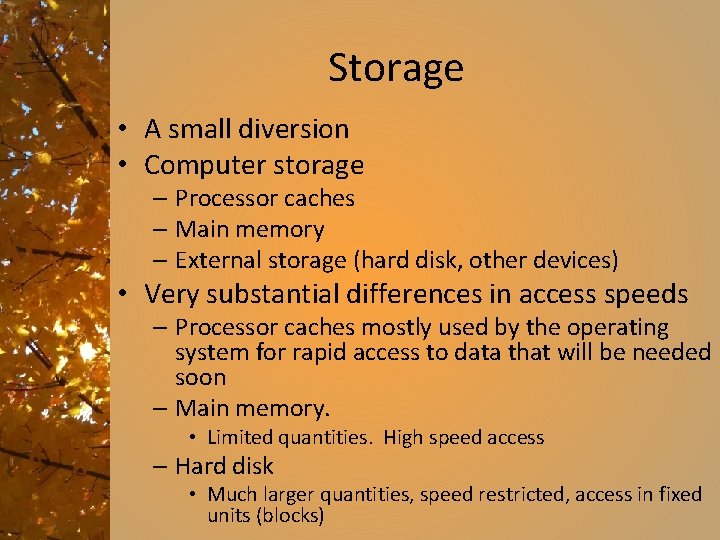

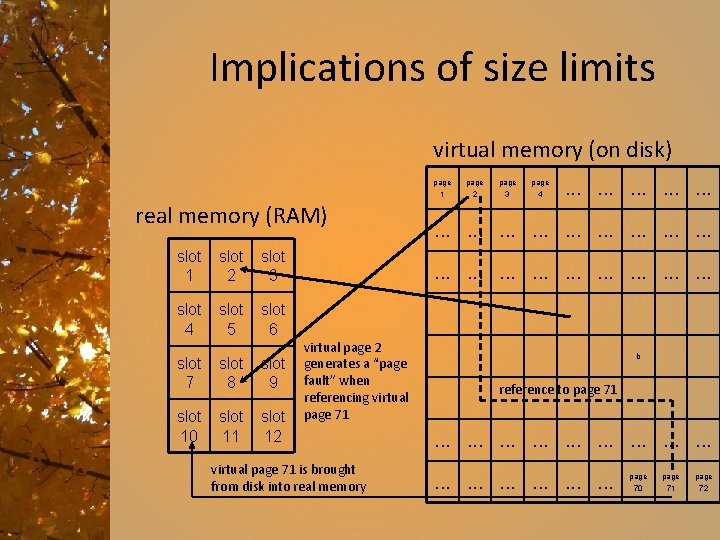

Storage
• A small diversion
• Computer storage
  – Processor caches
  – Main memory
  – External storage (hard disk, other devices)
• Very substantial differences in access speeds
  – Processor caches: managed by the hardware to give rapid access to data that will be needed soon
  – Main memory: limited quantities, high-speed access
  – Hard disk: much larger quantities, restricted speed, access in fixed units (blocks)

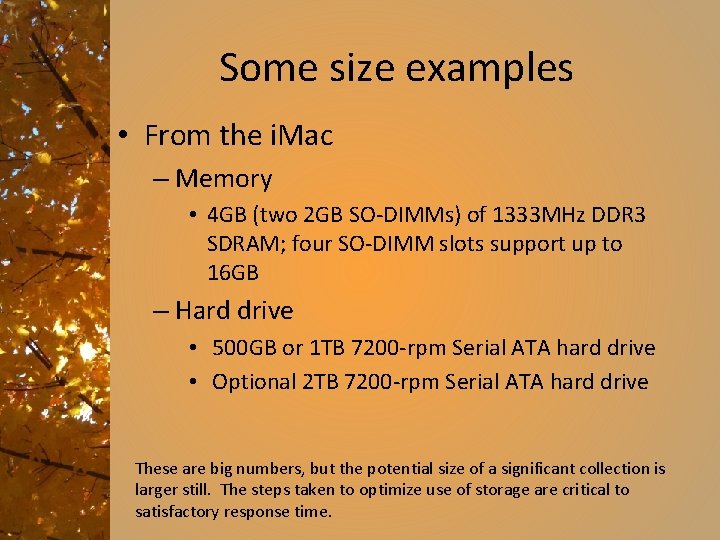

Some size examples
• From the iMac
  – Memory: 4 GB (two 2 GB SO-DIMMs) of 1333 MHz DDR3 SDRAM; four SO-DIMM slots support up to 16 GB
  – Hard drive: 500 GB or 1 TB 7200-rpm Serial ATA hard drive; optional 2 TB 7200-rpm Serial ATA hard drive
• These are big numbers, but the potential size of a significant collection is larger still. The steps taken to optimize use of storage are critical to satisfactory response time.

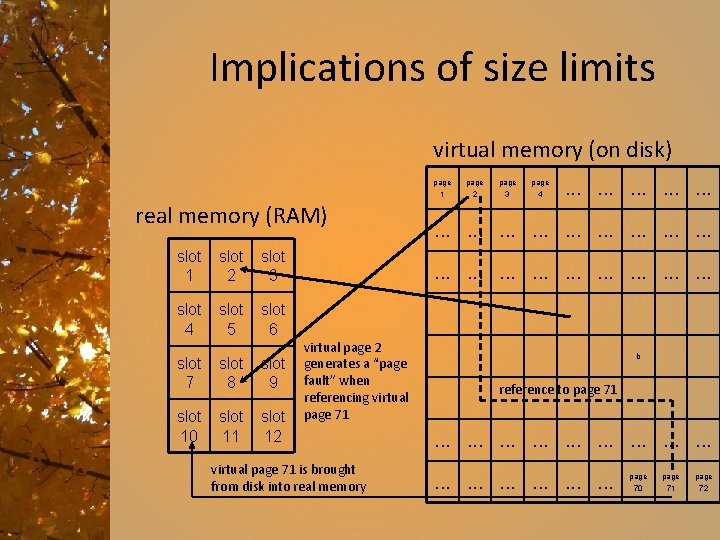

Implications of size limits
[Figure: virtual memory (on disk) holds pages 1, 2, 3, 4, ..., 70, 71, 72, ...; real memory (RAM) has 12 slots. A reference to virtual page 71, which is not in real memory, generates a “page fault”, and page 71 is brought from disk into real memory.]

How do we process a query? • Using the index we just built, examine the terms in some order, looking for the terms in the query.

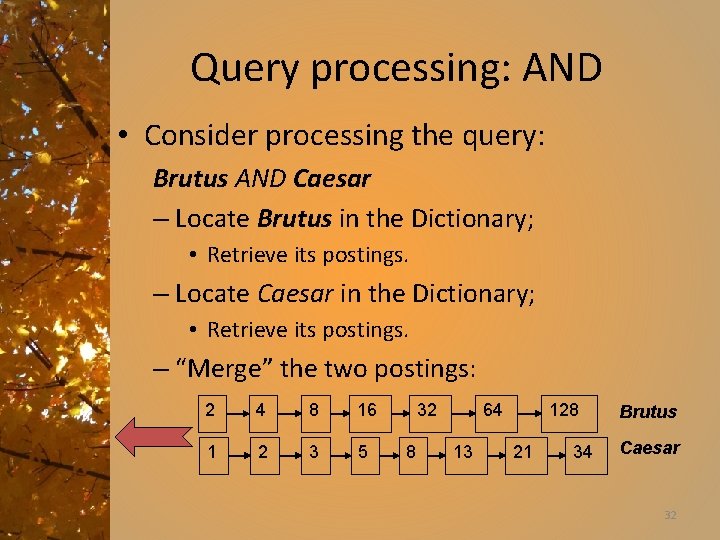

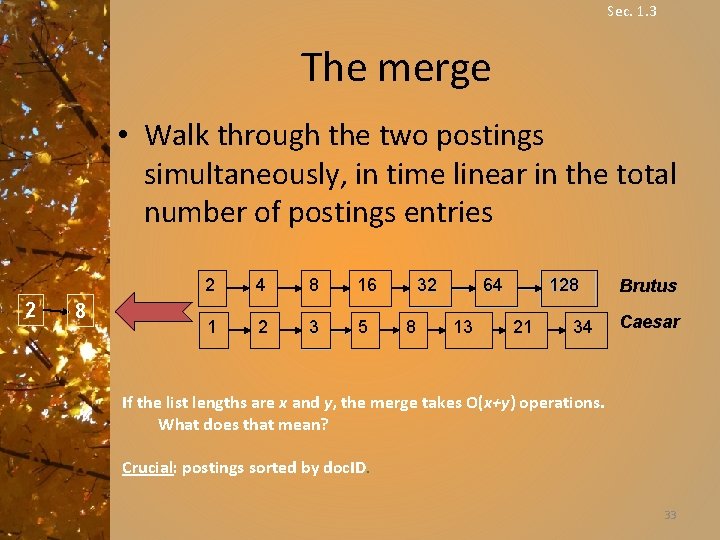

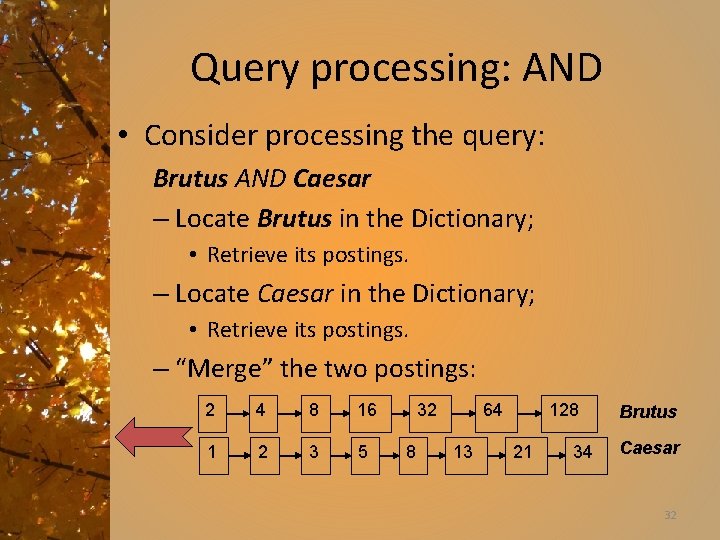

Query processing: AND
• Consider processing the query: Brutus AND Caesar
  – Locate Brutus in the Dictionary;
    • Retrieve its postings.
  – Locate Caesar in the Dictionary;
    • Retrieve its postings.
  – “Merge” the two postings:
    Brutus: 2 4 8 16 32 64 128
    Caesar: 1 2 3 5 8 13 21 34

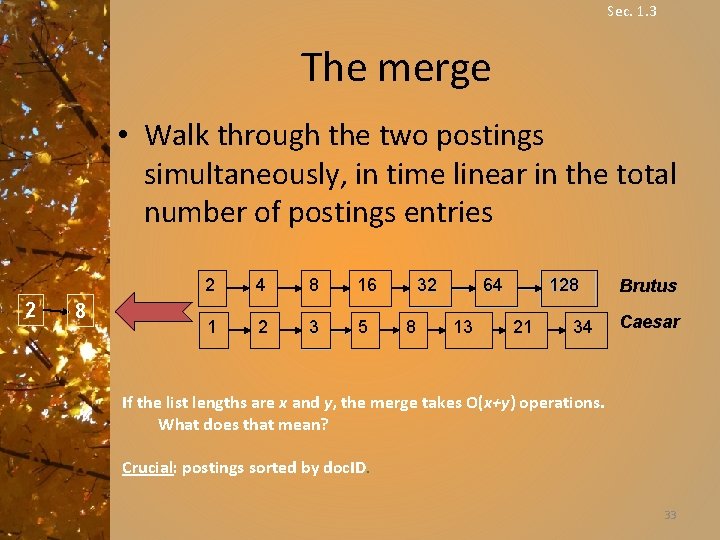

Sec. 1.3 The merge
• Walk through the two postings simultaneously, in time linear in the total number of postings entries.
  Brutus: 2 4 8 16 32 64 128
  Caesar: 1 2 3 5 8 13 21 34
  Result: 2 8
• If the list lengths are x and y, the merge takes O(x+y) operations. What does that mean?
• Crucial: postings sorted by docID.

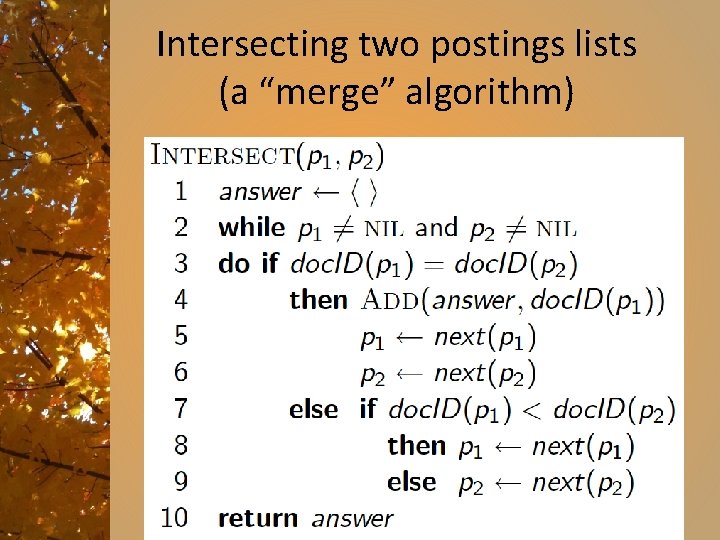

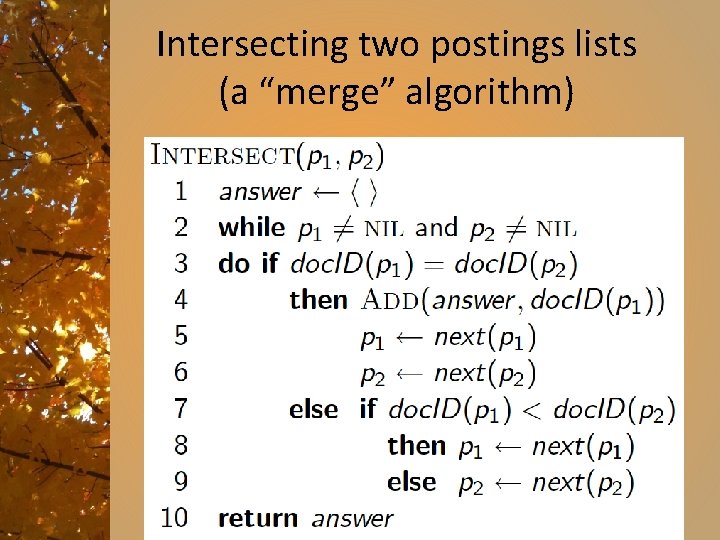

Intersecting two postings lists (a “merge” algorithm)

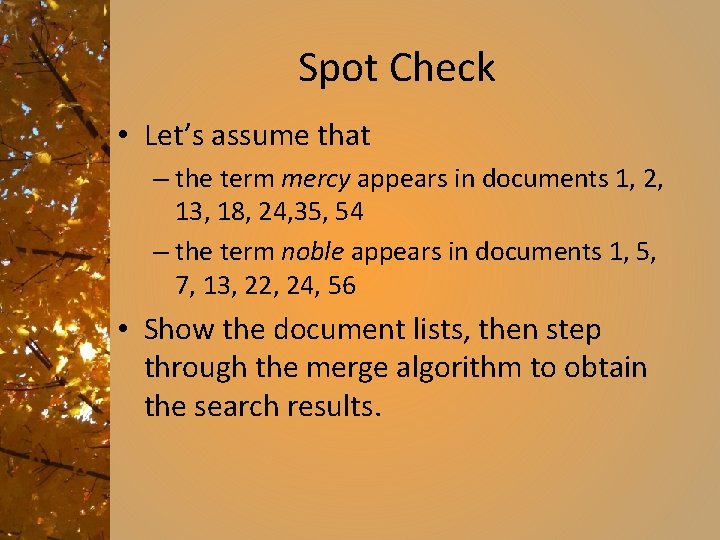
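The merge fits in a few lines. A sketch in Python of the two-pointer walk described on the previous slides, run on the Brutus and Caesar postings from the example:

```python
def intersect(p1: list[int], p2: list[int]) -> list[int]:
    """AND of two postings lists sorted by docID, in O(len(p1) + len(p2))."""
    answer = []
    i = j = 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:    # docID in both lists: keep it, advance both
            answer.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:   # advance the pointer at the smaller docID
            i += 1
        else:
            j += 1
    return answer

brutus = [2, 4, 8, 16, 32, 64, 128]
caesar = [1, 2, 3, 5, 8, 13, 21, 34]
print(intersect(brutus, caesar))  # [2, 8]
```

Each comparison advances at least one pointer, so the loop runs at most x + y times; that bound relies on both lists being sorted.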

Spot Check
• Let’s assume that
  – the term mercy appears in documents 1, 2, 13, 18, 24, 35, 54
  – the term noble appears in documents 1, 5, 7, 13, 22, 24, 56
• Show the document lists, then step through the merge algorithm to obtain the search results.
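To check a hand walk-through, a traced variant of the same two-pointer merge records each comparison; `intersect_traced` is a hypothetical helper written for this exercise.

```python
def intersect_traced(p1: list[int], p2: list[int]) -> tuple[list[int], list[str]]:
    """Two-pointer merge that also logs every comparison it makes."""
    answer, steps = [], []
    i = j = 0
    while i < len(p1) and j < len(p2):
        a, b = p1[i], p2[j]
        if a == b:
            steps.append(f"{a} == {b}: match, advance both")
            answer.append(a)
            i += 1
            j += 1
        elif a < b:
            steps.append(f"{a} < {b}: advance first list")
            i += 1
        else:
            steps.append(f"{a} > {b}: advance second list")
            j += 1
    return answer, steps

mercy = [1, 2, 13, 18, 24, 35, 54]
noble = [1, 5, 7, 13, 22, 24, 56]
result, steps = intersect_traced(mercy, noble)
print("\n".join(steps))
print(result)
```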

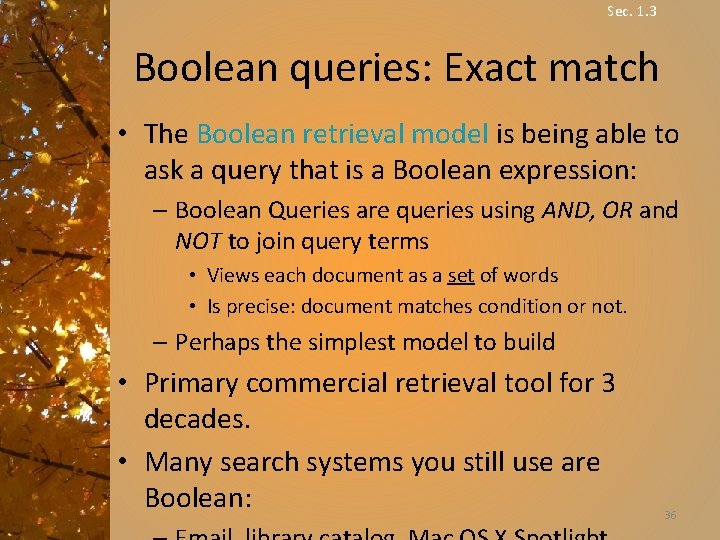

Sec. 1.3 Boolean queries: Exact match
• The Boolean retrieval model lets us ask a query that is a Boolean expression:
  – Boolean queries use AND, OR and NOT to join query terms
    • Views each document as a set of words
    • Is precise: a document either matches the condition or it does not.
  – Perhaps the simplest model to build
• Primary commercial retrieval tool for 3 decades.
• Many search systems you still use are Boolean:

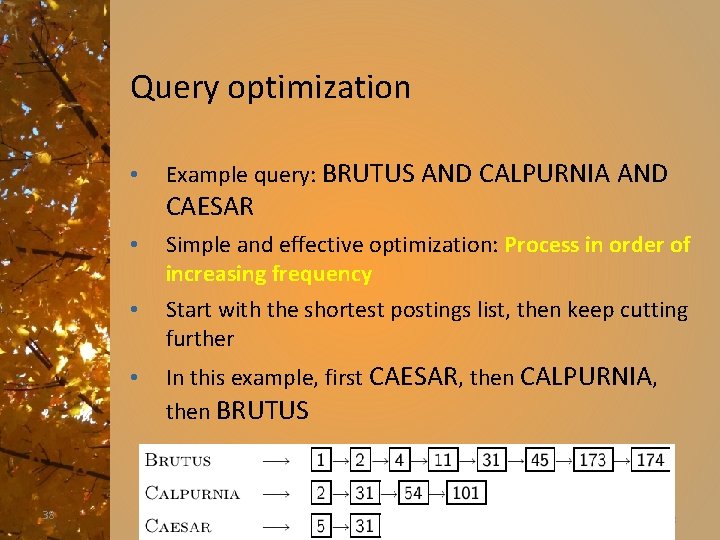

Query optimization
• Consider a query that is an AND of n terms, n > 2
• For each of the terms, get its postings list, then AND them together
• Example query: BRUTUS AND CALPURNIA AND CAESAR
• What is the best order for processing this query?

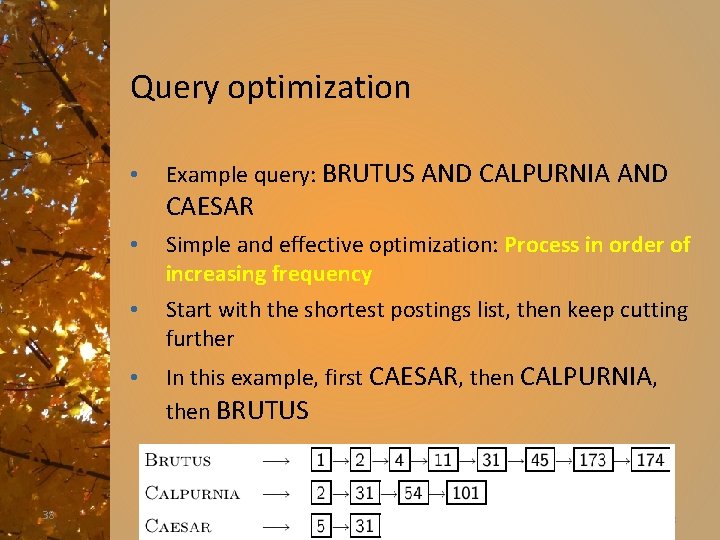

Query optimization
• Example query: BRUTUS AND CALPURNIA AND CAESAR
• Simple and effective optimization: process in order of increasing frequency
• Start with the shortest postings list, then keep cutting further
• In this example, first CAESAR, then CALPURNIA, then BRUTUS

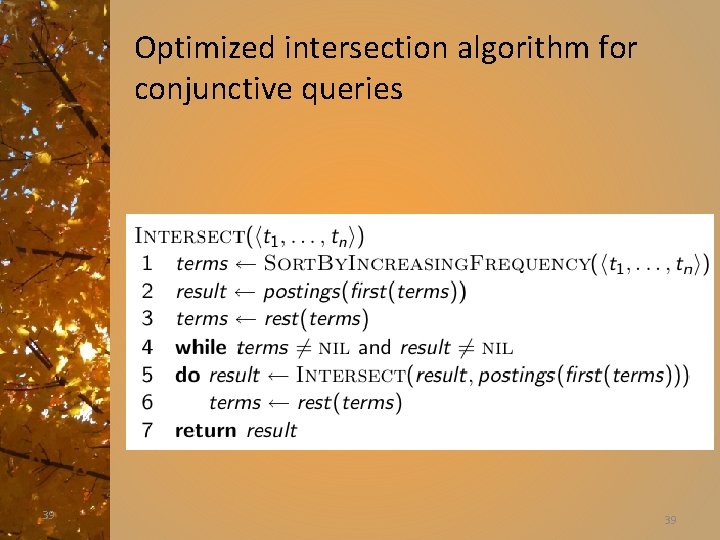

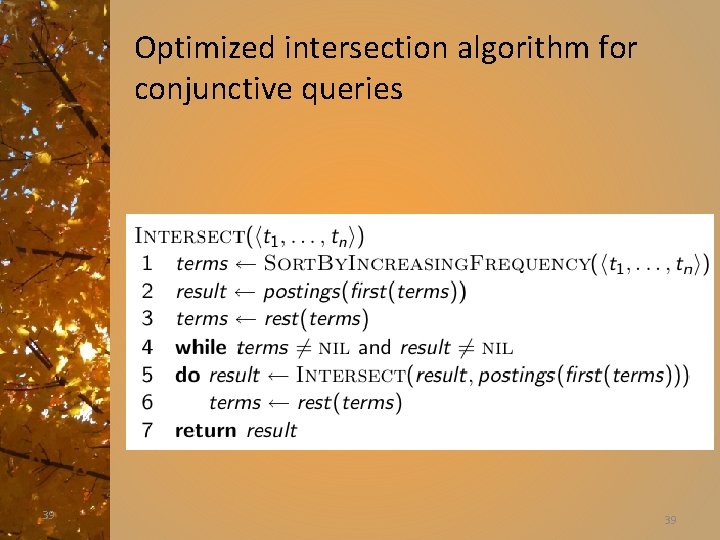

Optimized intersection algorithm for conjunctive queries

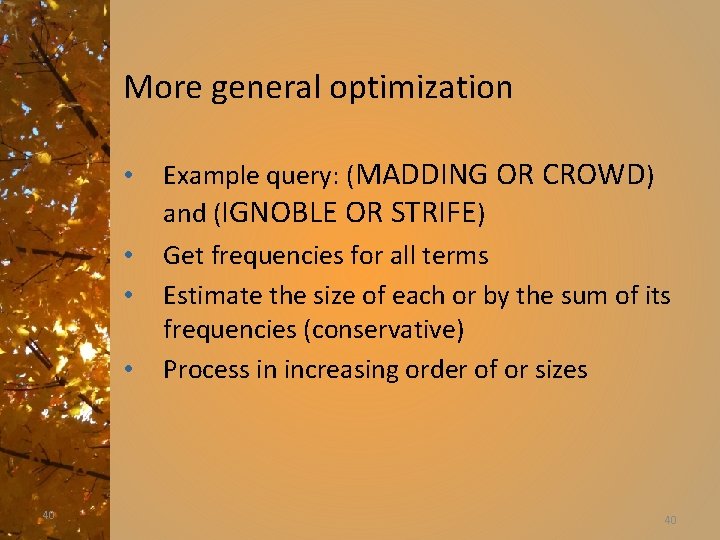
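A sketch of the increasing-frequency heuristic, assuming an in-memory dict from term to sorted postings, with document frequency taken as the postings length. The postings are illustrative: with them CALPURNIA’s list is the shortest, whereas the slide’s example evidently uses lists in which CAESAR is shortest. `conjunctive_order` and `intersect_terms` are hypothetical helper names.

```python
def intersect(p1: list[int], p2: list[int]) -> list[int]:
    """Linear two-pointer merge of two sorted postings lists (AND)."""
    answer, i, j = [], 0, 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            answer.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1
        else:
            j += 1
    return answer

def conjunctive_order(index: dict[str, list[int]], terms: list[str]) -> list[str]:
    """Terms sorted by increasing document frequency (ties keep query order)."""
    return sorted(terms, key=lambda t: len(index.get(t, [])))

def intersect_terms(index: dict[str, list[int]], terms: list[str]) -> list[int]:
    """AND the terms' postings, starting from the shortest list."""
    ordered = conjunctive_order(index, terms)
    if not ordered:
        return []
    result = list(index.get(ordered[0], []))
    for term in ordered[1:]:
        if not result:  # the intermediate result can only shrink: stop early
            break
        result = intersect(result, index.get(term, []))
    return result

INDEX = {  # illustrative postings
    "brutus": [1, 2, 4, 11, 31, 45, 173, 174],
    "caesar": [1, 2, 4, 5, 6, 16, 57, 132],
    "calpurnia": [2, 31, 54, 101],
}
query = ["brutus", "calpurnia", "caesar"]
print(conjunctive_order(INDEX, query), intersect_terms(INDEX, query))
```

Starting with the shortest list bounds every intermediate result by that list’s length, which is why the heuristic tends to keep each later merge cheap.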

More general optimization
• Example query: (MADDING OR CROWD) AND (IGNOBLE OR STRIFE)
• Get frequencies for all terms
• Estimate the size of each OR by the sum of its frequencies (conservative)
• Process in increasing order of OR sizes
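A sketch of the OR-group heuristic, with made-up postings for the four terms. `estimated_size` (a hypothetical helper) sums a group’s frequencies, which can only overestimate the size of the OR because documents containing both terms are counted twice; the groups are then ANDed smallest estimate first. The union is set-based for brevity; a linear merge like the AND one would also work.

```python
def intersect(p1: list[int], p2: list[int]) -> list[int]:
    """Linear two-pointer merge of two sorted postings lists (AND)."""
    answer, i, j = [], 0, 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            answer.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1
        else:
            j += 1
    return answer

def union(p1: list[int], p2: list[int]) -> list[int]:
    """OR of two postings lists (set-based for brevity; result sorted)."""
    return sorted(set(p1) | set(p2))

def estimated_size(index: dict[str, list[int]], group: list[str]) -> int:
    """Conservative estimate of |t1 OR t2 OR ...|: sum of the frequencies."""
    return sum(len(index.get(t, [])) for t in group)

def and_of_ors(index: dict[str, list[int]], groups: list[list[str]]) -> list[int]:
    """Evaluate (a OR b) AND (c OR d) AND ..., smallest estimate first."""
    result = None
    for group in sorted(groups, key=lambda g: estimated_size(index, g)):
        postings: list[int] = []
        for term in group:
            postings = union(postings, index.get(term, []))
        result = postings if result is None else intersect(result, postings)
        if not result:
            break  # an empty intermediate result can never grow again
    return result or []

INDEX = {  # hypothetical postings, made up for illustration only
    "madding": [2, 7],
    "crowd": [1, 2, 5, 7, 9, 12, 15],
    "ignoble": [4, 7],
    "strife": [2, 3, 7, 11],
}
query = [["madding", "crowd"], ["ignoble", "strife"]]
print(and_of_ors(INDEX, query))
```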

Scaling
• These basic techniques are pretty simple
• There are challenges
  – Scaling
    • As everything becomes digitized, how well do the processes scale?
  – Intelligent information extraction
    • I want information, not just a link to a place that might have that information.

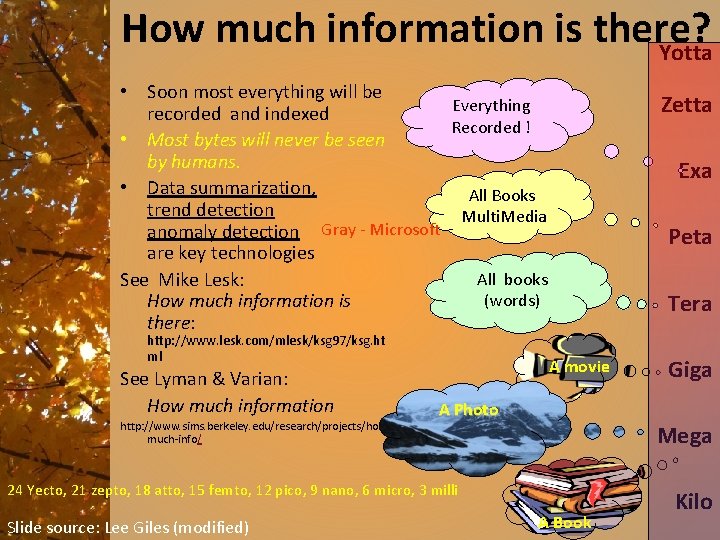

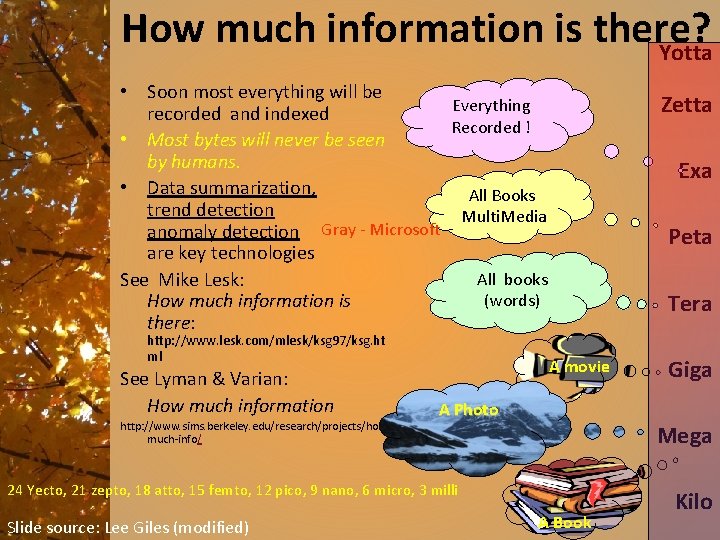

How much information is there?
• Soon most everything will be recorded and indexed.
• Most bytes will never be seen by humans.
• Data summarization, trend detection and anomaly detection are key technologies.
• See Mike Lesk: How much information is there: http://www.lesk.com/mlesk/ksg97/ksg.html
• See Lyman & Varian: How much information: http://www.sims.berkeley.edu/research/projects/how-much-info/
[Figure (after Gray, Microsoft): a scale of storage sizes, Kilo, Mega, Giga, Tera, Peta, Exa, Zetta, Yotta, marked with examples from a book, a photo and a movie up to all books (words), all books (multimedia) and everything recorded. Small-unit prefixes: 10^-3 milli, 10^-6 micro, 10^-9 nano, 10^-12 pico, 10^-15 femto, 10^-18 atto, 10^-21 zepto, 10^-24 yocto.]
Slide source: Lee Giles (modified)

Getting the required information
• Dependent on
  – Acquiring information
  – Storing information
  – Indexing
  – Interaction with the information source
  – Evaluation

Getting the required information
• We have learned about
  – Acquiring information
  – Storing information
  – Indexing (the basics)
  – Interaction with the information source
  – Evaluation

Getting the required information
• Still to come
  – Acquiring information
  – Storing information
  – Indexing (more)
  – Interaction with the information source
  – Evaluation

The web and its challenges
§ Unusual and diverse documents
§ Unusual and diverse users, queries, information needs
§ Beyond terms, exploit ideas from social networks
  § link analysis, clickstreams …
§ How do search engines work? And how can we make them better?

References
• Christopher D. Manning, Prabhakar Raghavan and Hinrich Schütze, Introduction to Information Retrieval, Cambridge University Press, 2008.
  – Book available online at http://nlp.stanford.edu/IR-book/information-retrieval-book.html
  – Many of these slides are taken directly from the authors’ slides for the first chapter of the book.
• C. Lee Giles, Penn State. http://clgiles.ist.psu.edu/
• Paging figure from Vittore Casarosa, University of Parma, Italy