Introduction to HMM (cont.)

- Slides: 19

CHEN TZAN HWEI. Reference: the slides of Prof. Berlin Chen.

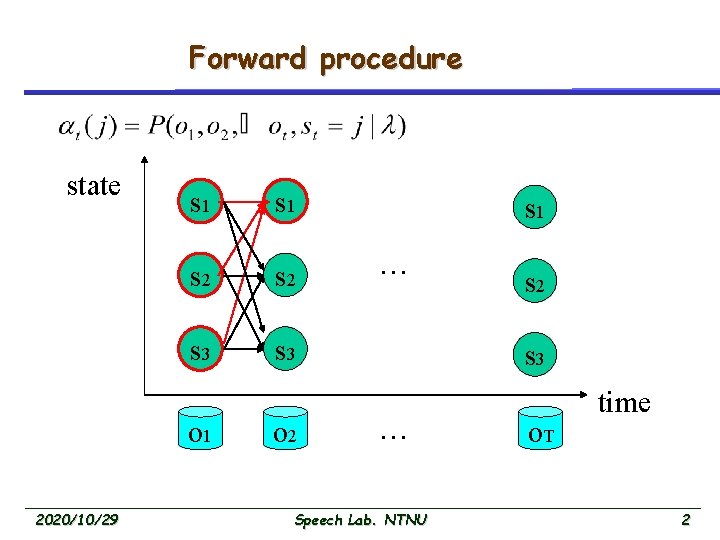

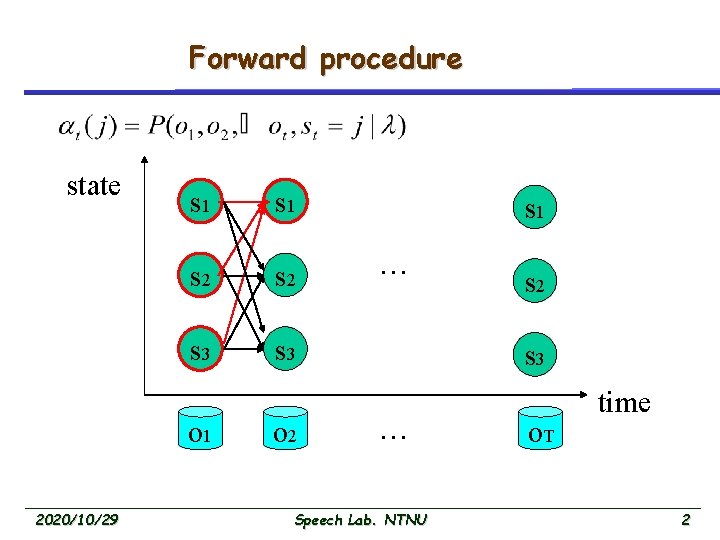

Forward procedure (trellis figure: states s_1, s_2, s_3 on the vertical axis; observations o_1, o_2, …, o_T along the time axis). Speech Lab. NTNU, 2020/10/29
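The forward recursion over this trellis can be sketched in a few lines of Python. This is a minimal sketch, not the slide's own code: the names `pi`, `A`, `B` and the toy parameter values are assumptions chosen for illustration, with `B[i][k]` the probability that state `i` emits symbol `k`.

```python
def forward(pi, A, B, obs):
    """Return alpha, where alpha[t][i] is the joint probability of the first
    t+1 observations and of being in state i at (0-based) step t."""
    n = len(pi)
    # Initialization: start in state i and emit the first observation.
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    # Induction: sum over all predecessor states, then emit the next observation.
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(n)) * B[j][o]
                      for j in range(n)])
    return alpha


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]

alpha = forward(pi, A, B, [0, 1])
likelihood = sum(alpha[-1])  # termination: probability of the observations
```

Each trellis column costs on the order of N² multiplications, so the whole pass is linear in the number of observations.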

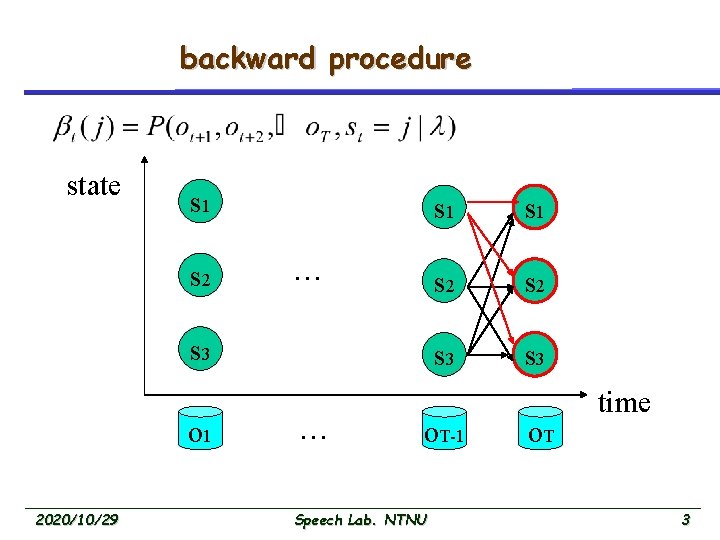

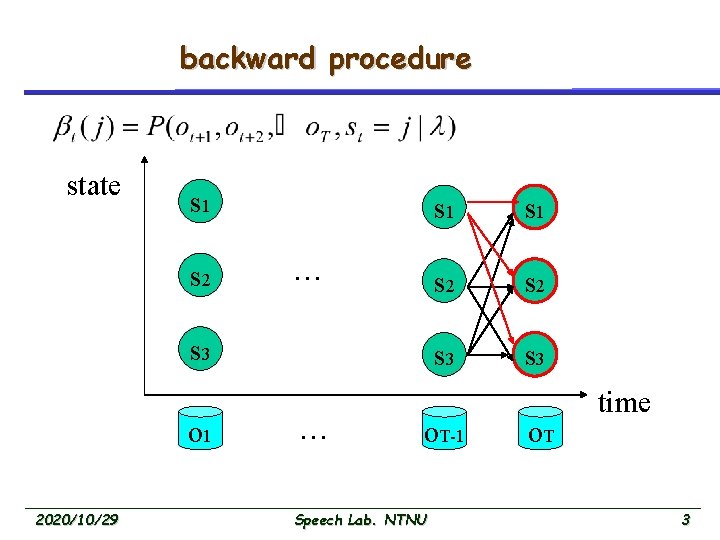

Backward procedure (trellis figure: states s_1, s_2, s_3 on the vertical axis; observations o_1, …, o_{T-1}, o_T along the time axis).
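The backward pass walks the same trellis from right to left. The sketch below assumes the same illustrative conventions as a standard HMM implementation (`A[i][j]` for transitions, `B[j][k]` for emissions); the function name and the toy values are not from the slides.

```python
def backward(A, B, obs):
    """Return beta, where beta[t][i] is the probability of the observations
    after (0-based) step t, given that the model is in state i at step t."""
    n = len(A)
    # Initialization: nothing is left to observe after the last step.
    beta = [[1.0] * n]
    # Induction: move right to left, folding in one later observation per step.
    for o in reversed(obs[1:]):
        nxt = beta[0]
        beta.insert(0, [sum(A[i][j] * B[j][o] * nxt[j] for j in range(n))
                        for i in range(n)])
    return beta


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
obs = [0, 1]

beta = backward(A, B, obs)
# The same likelihood the forward pass yields, computed from the left edge.
likelihood = sum(pi[i] * B[i][obs[0]] * beta[0][i] for i in range(len(pi)))
```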

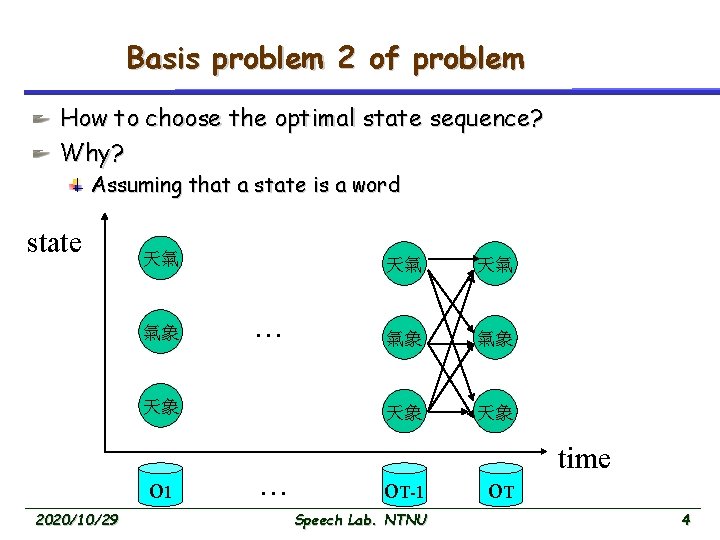

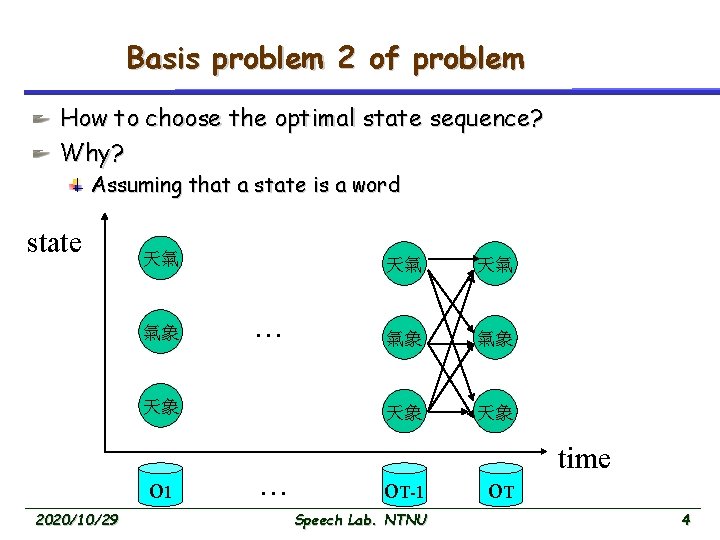

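Basic problem 2 of HMM: how do we choose the optimal state sequence, and why? Suppose each state is a word, e.g. 天氣 (weather), 氣象 (meteorology), …, 天象 (celestial phenomena); the best state sequence then gives the decoded word sequence. (Trellis figure: the candidate word states at each time step, over observations o_1, …, o_{T-1}, o_T.)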

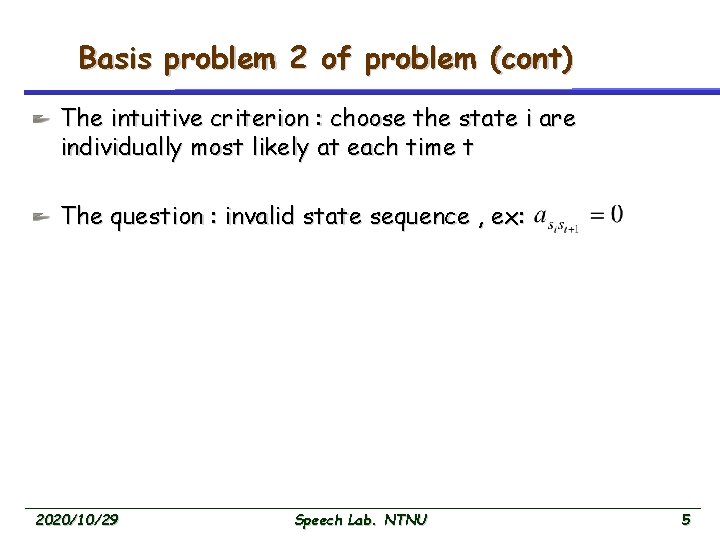

Basic problem 2 of HMM (cont.). The intuitive criterion: at each time t, choose the state i that is individually most likely. The problem: the resulting state sequence may be invalid (example in the slide image).

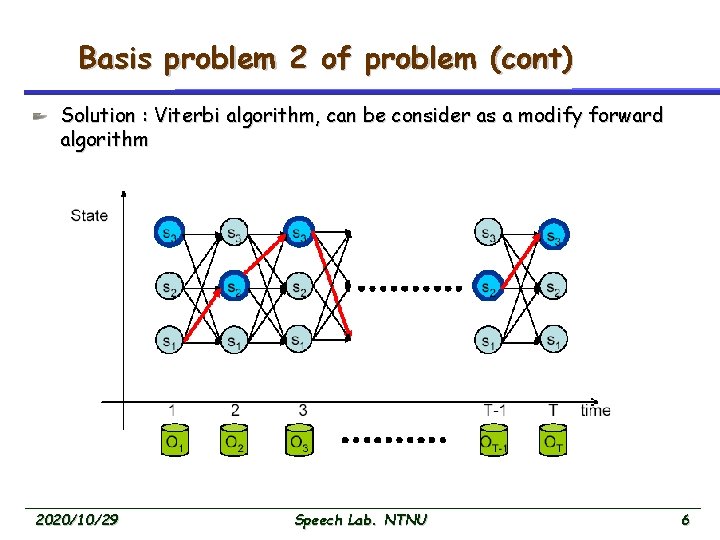

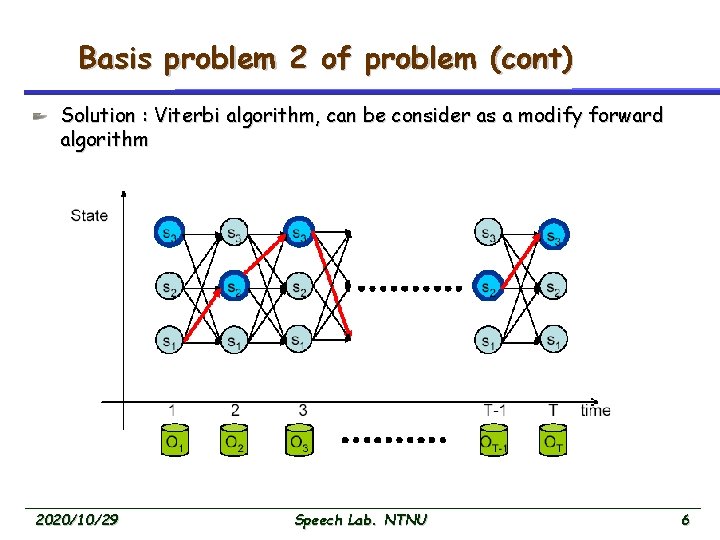

Basic problem 2 of HMM (cont.). Solution: the Viterbi algorithm, which can be considered a modified forward algorithm in which the sum over previous states is replaced by a maximization.

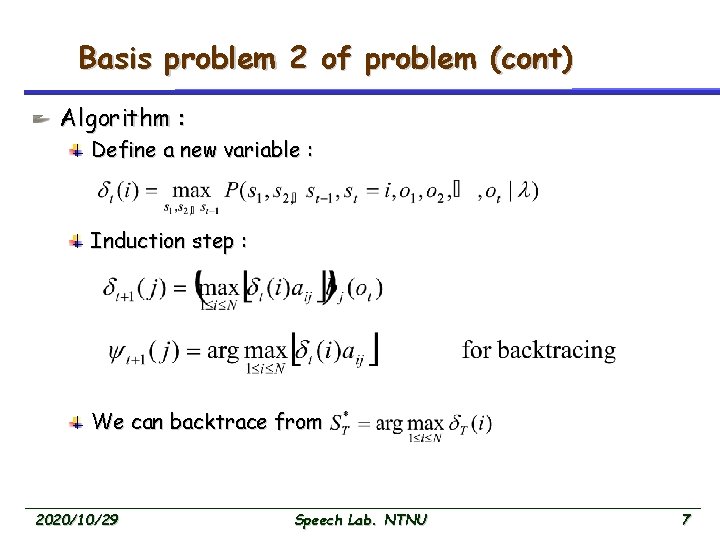

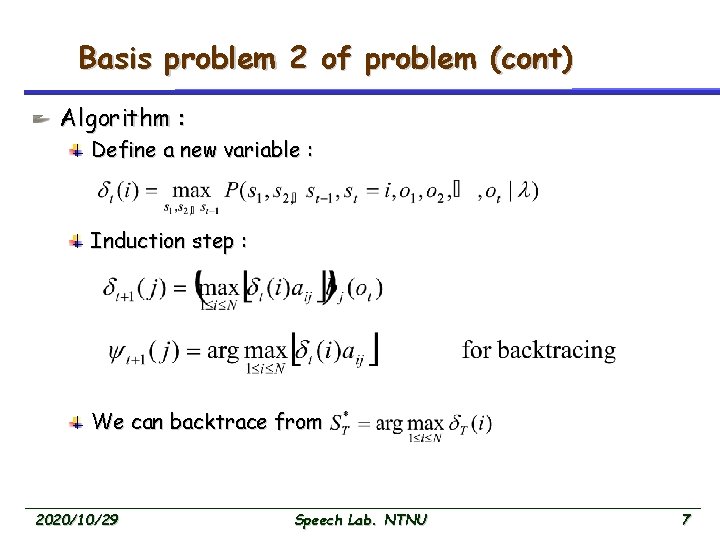

Basic problem 2 of HMM (cont.). Algorithm: define a new variable for the score of the best partial path ending in each state, update it with an induction step, and recover the best path by backtracing from the final time step.
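A minimal Python sketch of these three steps follows. The names `delta` and `psi` follow common textbook usage and are assumptions here, as are the toy parameter values; the slide's own definitions are in the images.

```python
def viterbi(pi, A, B, obs):
    """Return (best_path, best_path_probability) for an observation sequence."""
    n = len(pi)
    # delta[i]: probability of the best partial path that ends in state i.
    delta = [pi[i] * B[i][obs[0]] for i in range(n)]
    backptr = []
    for o in obs[1:]:
        # psi[j]: best predecessor of state j (max replaces the forward sum).
        psi = [max(range(n), key=lambda i: delta[i] * A[i][j]) for j in range(n)]
        delta = [delta[psi[j]] * A[psi[j]][j] * B[j][o] for j in range(n)]
        backptr.append(psi)
    # Termination: pick the best final state, then follow the back-pointers.
    last = max(range(n), key=lambda i: delta[i])
    path = [last]
    for psi in reversed(backptr):
        path.append(psi[path[-1]])
    path.reverse()
    return path, delta[last]


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]

path, prob = viterbi(pi, A, B, [0, 1, 1])
```

Because every step extends an existing path along a transition, the returned sequence is always a connected path through the trellis, unlike a sequence assembled from per-time best states.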

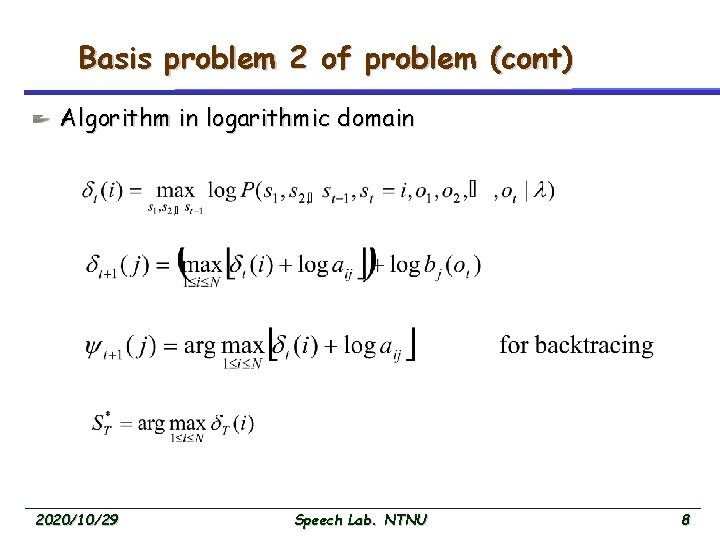

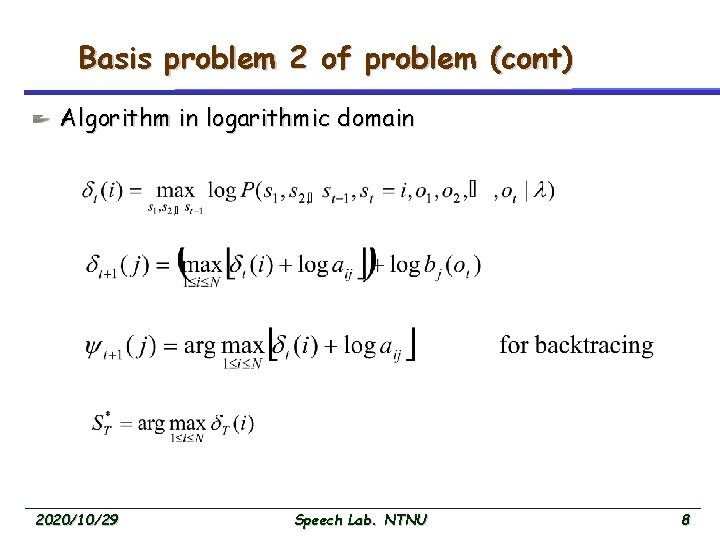

Basic problem 2 of HMM (cont.). The algorithm in the logarithmic domain.
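In the logarithmic domain the products become sums, which avoids numerical underflow on long sequences. The sketch below is one possible formulation under assumed names (`safe_log`, `viterbi_log`) and toy values; mapping log(0) to negative infinity is a design choice of this sketch, not something stated on the slide.

```python
import math

NEG_INF = float("-inf")


def safe_log(p):
    """log(p), with log(0) mapped to -inf instead of raising an error."""
    return math.log(p) if p > 0.0 else NEG_INF


def viterbi_log(pi, A, B, obs):
    """Return (best_path, log_probability_of_best_path)."""
    n = len(pi)
    log_A = [[safe_log(a) for a in row] for row in A]
    log_B = [[safe_log(b) for b in row] for row in B]
    score = [safe_log(pi[i]) + log_B[i][obs[0]] for i in range(n)]
    backptr = []
    for o in obs[1:]:
        # Same recursion as in the probability domain, with + in place of *.
        psi = [max(range(n), key=lambda i: score[i] + log_A[i][j])
               for j in range(n)]
        score = [score[psi[j]] + log_A[psi[j]][j] + log_B[j][o]
                 for j in range(n)]
        backptr.append(psi)
    last = max(range(n), key=lambda i: score[i])
    path = [last]
    for psi in reversed(backptr):
        path.append(psi[path[-1]])
    path.reverse()
    return path, score[last]


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]

path, log_prob = viterbi_log(pi, A, B, [0, 1, 1])
```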

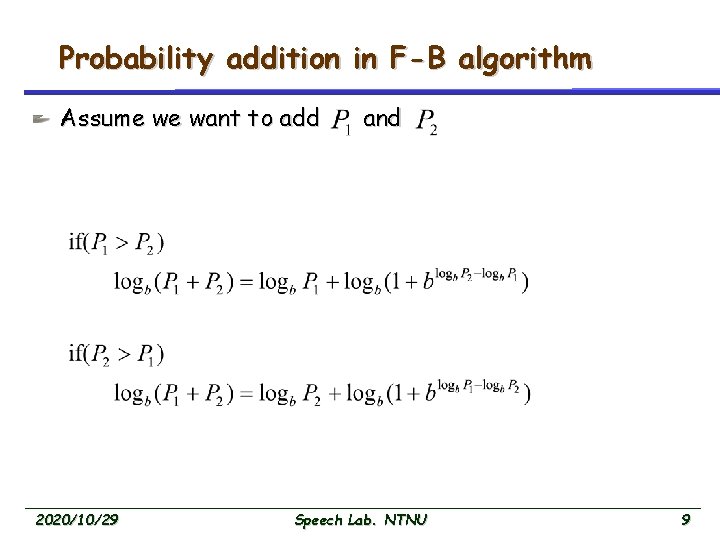

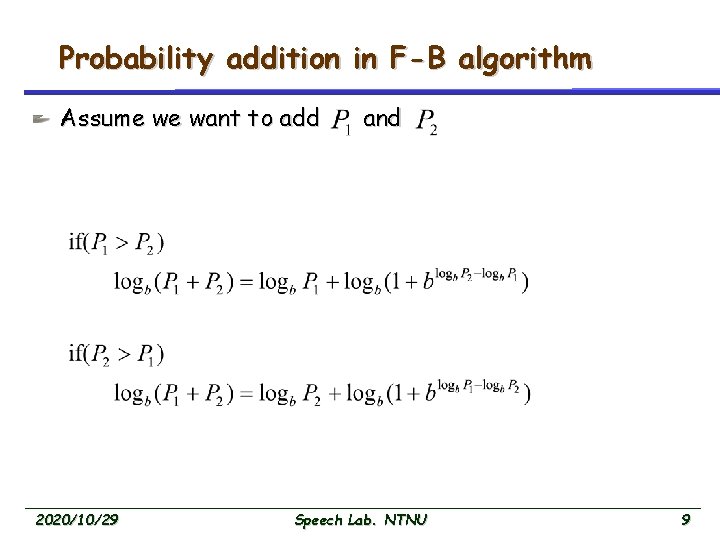

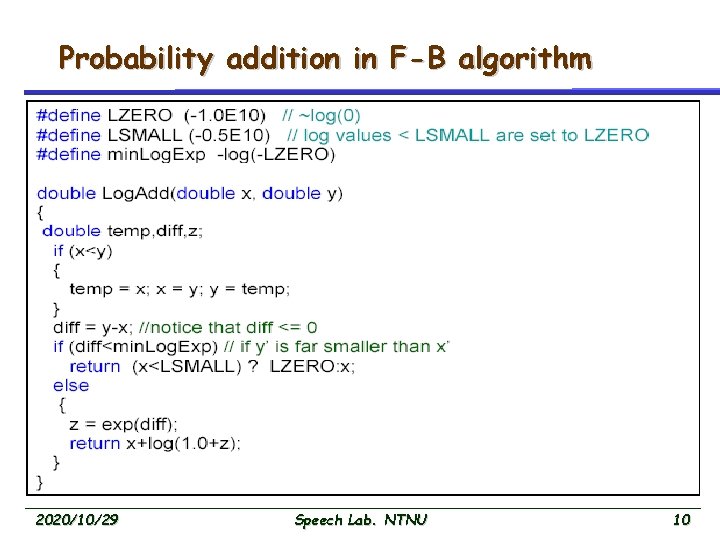

Probability addition in the F-B (forward-backward) algorithm. Assume we want to add two probabilities (the two terms are given in the slide image).

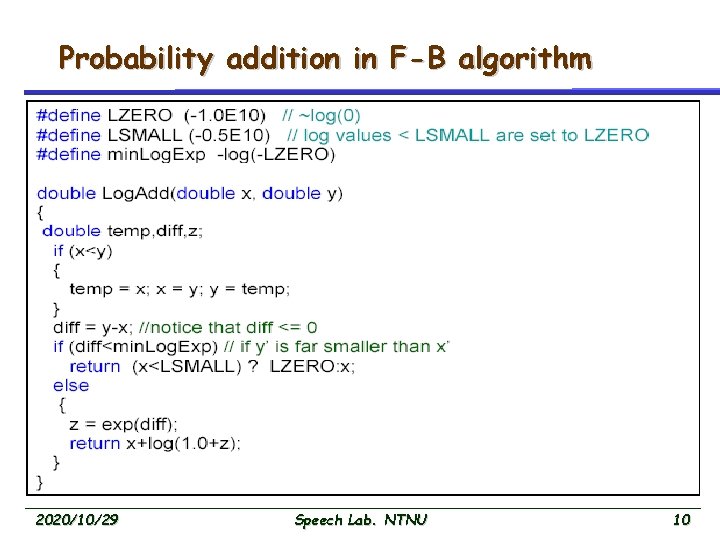

Probability addition in the F-B algorithm
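When the forward and backward variables are stored as logarithms, their sums have to be formed without leaving the log domain. One common way to do this is sketched below; the function name `log_add` is hypothetical, and the exact formula on the slide (which is in the image) may be arranged differently.

```python
import math


def log_add(log_p1, log_p2):
    """Return log(p1 + p2) given log(p1) and log(p2)."""
    neg_inf = float("-inf")
    # Adding a zero probability leaves the other term unchanged.
    if log_p1 == neg_inf:
        return log_p2
    if log_p2 == neg_inf:
        return log_p1
    hi, lo = max(log_p1, log_p2), min(log_p1, log_p2)
    # Factor out the larger term so the exponential never overflows:
    # log(p1 + p2) = hi + log(1 + exp(lo - hi)).
    return hi + math.log1p(math.exp(lo - hi))


log_sum = log_add(math.log(0.2), math.log(0.3))  # approximately log(0.5)
```

Since `lo - hi` is never positive, the exponential stays in the range (0, 1], which is what makes the computation stable even for very small probabilities.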

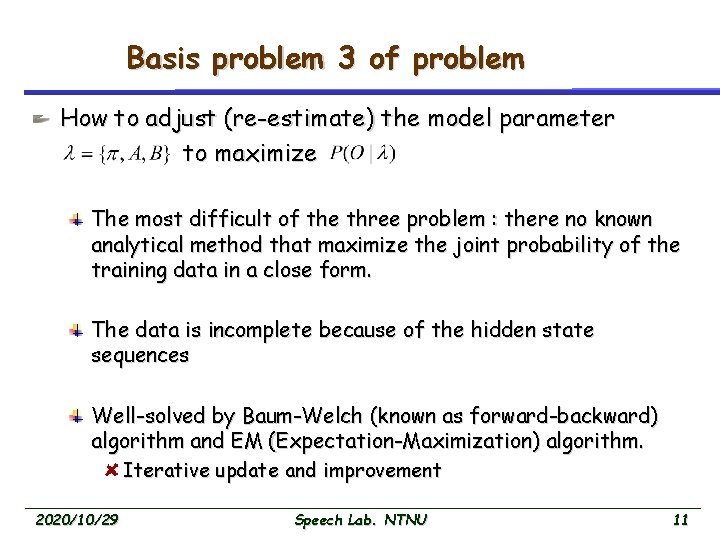

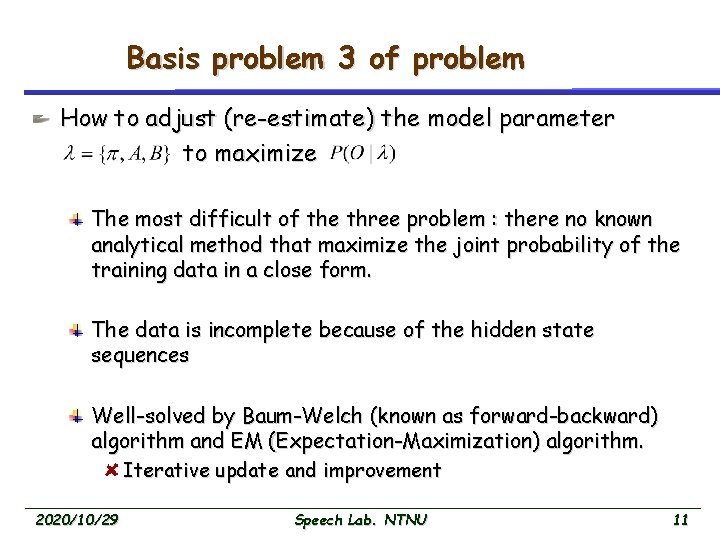

Basic problem 3 of HMM: how do we adjust (re-estimate) the model parameters to maximize the probability of the training data, P(O | λ)?

- This is the most difficult of the three problems: there is no known analytical method that maximizes the joint probability of the training data in closed form.
- The data are incomplete because the state sequences are hidden.
- It is well solved by the Baum-Welch algorithm (also known as the forward-backward algorithm), which is an instance of the EM (Expectation-Maximization) algorithm.
- The parameters are updated and improved iteratively.

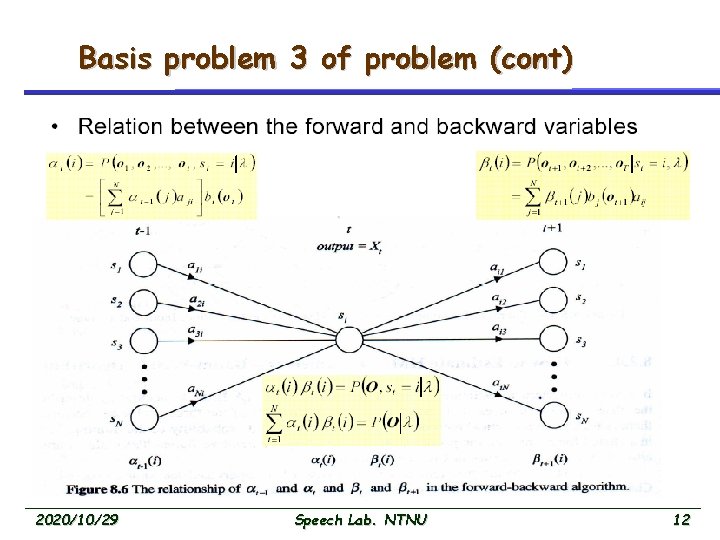

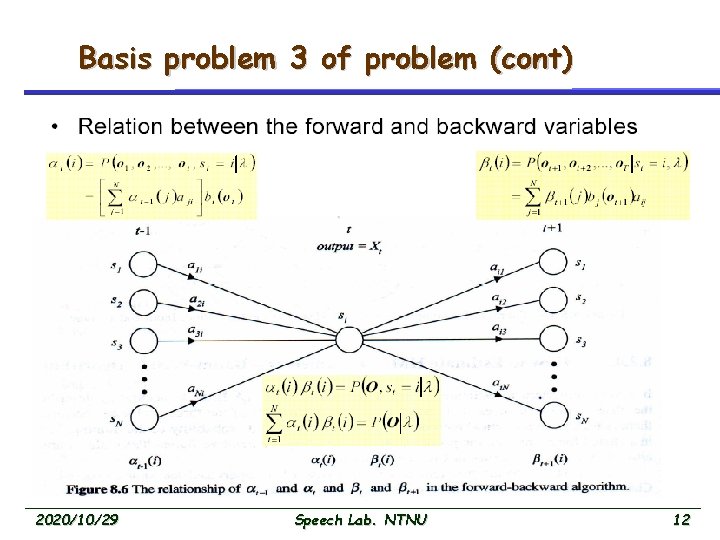

Basic problem 3 of HMM (cont.)

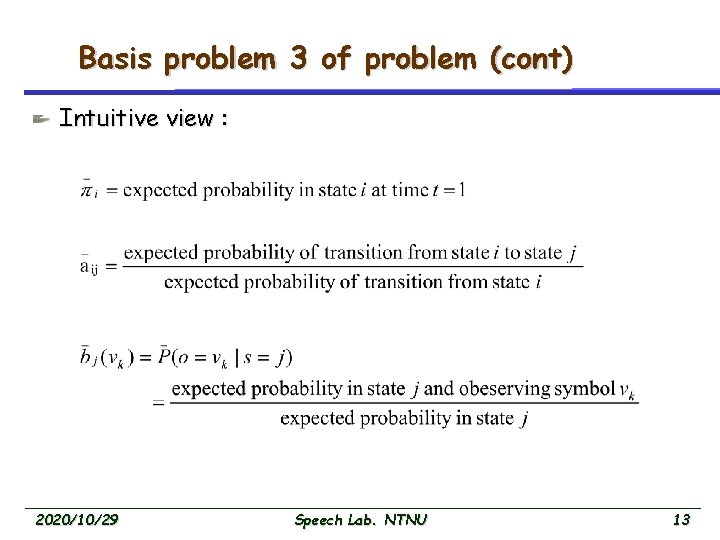

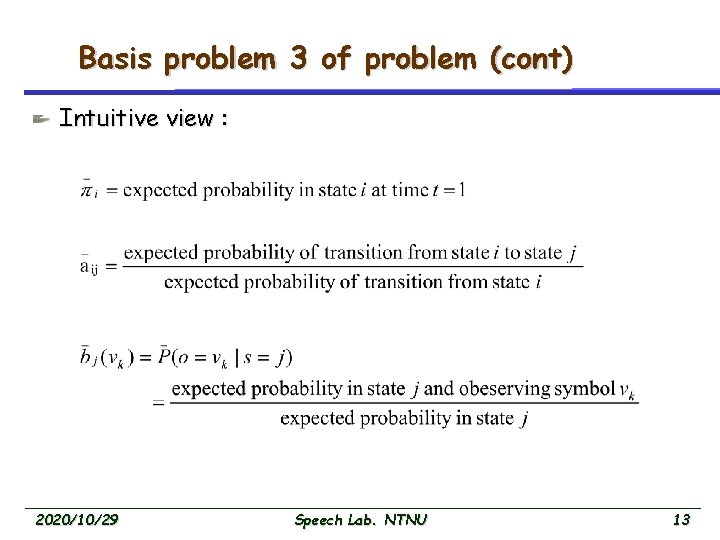

Basic problem 3 of HMM (cont.). Intuitive view:

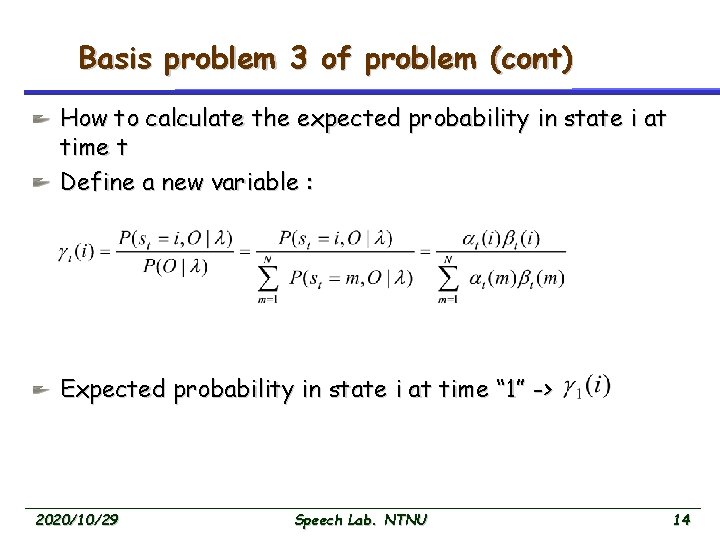

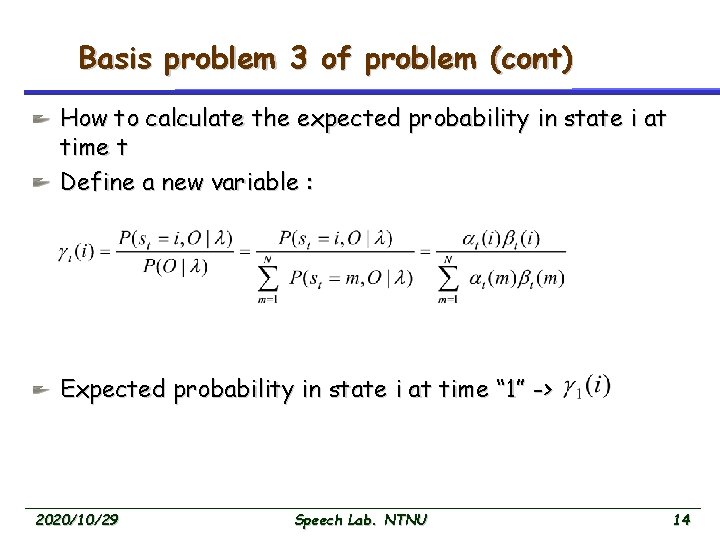

Basic problem 3 of HMM (cont.). How do we calculate the expected probability of being in state i at time t? Define a new variable for it (given in the slide image). The expected probability of being in state i at time 1 follows by setting t = 1.
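The state-occupancy probability can be sketched from the forward and backward variables. The code assumes the standard definition (forward value times backward value, divided by the likelihood) and the conventional name `gamma`; both are assumptions, since the slide's definition is in the image, and the toy values are illustrative only.

```python
def forward(pi, A, B, obs):
    n = len(pi)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(n)) * B[j][o]
                      for j in range(n)])
    return alpha


def backward(A, B, obs):
    n = len(A)
    beta = [[1.0] * n]
    for o in reversed(obs[1:]):
        nxt = beta[0]
        beta.insert(0, [sum(A[i][j] * B[j][o] * nxt[j] for j in range(n))
                        for i in range(n)])
    return beta


def state_posteriors(pi, A, B, obs):
    """gamma[t][i]: probability of being in state i at step t, given all of obs."""
    alpha = forward(pi, A, B, obs)
    beta = backward(A, B, obs)
    likelihood = sum(alpha[-1])
    return [[a * b / likelihood for a, b in zip(alpha_t, beta_t)]
            for alpha_t, beta_t in zip(alpha, beta)]


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]

gamma = state_posteriors(pi, A, B, [0, 1, 1])
new_pi = gamma[0]  # occupancy at the first time step
```

The first row of `gamma` is the quantity used to re-estimate the initial state probabilities.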

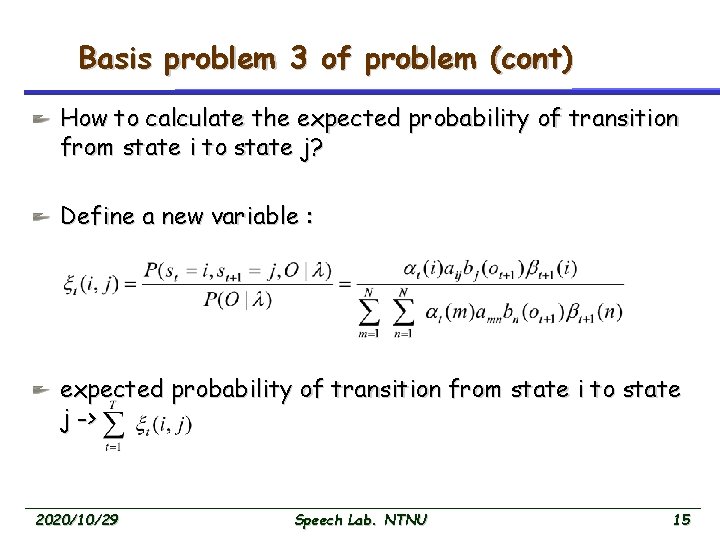

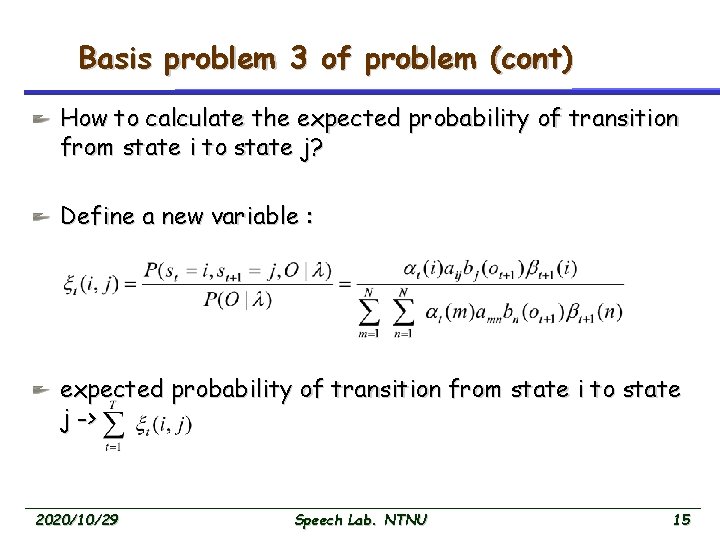

Basic problem 3 of HMM (cont.). How do we calculate the expected probability of a transition from state i to state j? Define a new variable (given in the slide image); from it we obtain the expected probability of a transition from state i to state j.
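A hedged sketch of the transition variable follows, using the conventional name `xi` and the standard definition in terms of the forward and backward variables. These are assumptions (the slide's formula is in the image), and the toy values are illustrative only.

```python
def forward(pi, A, B, obs):
    n = len(pi)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(n)) * B[j][o]
                      for j in range(n)])
    return alpha


def backward(A, B, obs):
    n = len(A)
    beta = [[1.0] * n]
    for o in reversed(obs[1:]):
        nxt = beta[0]
        beta.insert(0, [sum(A[i][j] * B[j][o] * nxt[j] for j in range(n))
                        for i in range(n)])
    return beta


def transition_posteriors(pi, A, B, obs):
    """xi[t][i][j]: probability of being in state i at step t and in state j
    at step t+1, given the whole observation sequence."""
    n = len(pi)
    alpha = forward(pi, A, B, obs)
    beta = backward(A, B, obs)
    likelihood = sum(alpha[-1])
    return [[[alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] / likelihood
              for j in range(n)] for i in range(n)]
            for t in range(len(obs) - 1)]


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]

xi = transition_posteriors(pi, A, B, [0, 1, 1])
# Expected number of i -> j transitions: sum xi over time.
expected_transitions = [[sum(xi_t[i][j] for xi_t in xi) for j in range(2)]
                        for i in range(2)]
```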

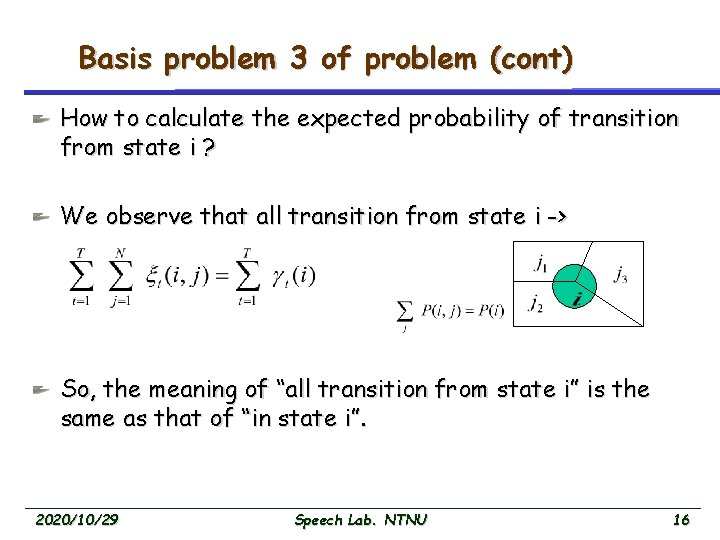

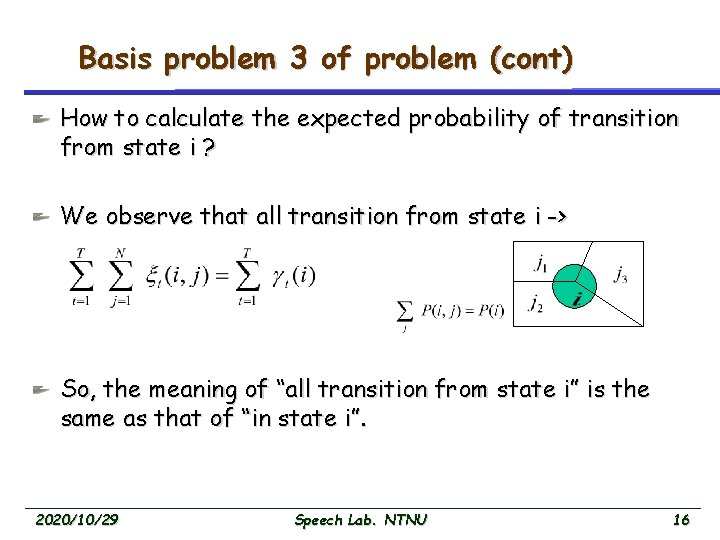

Basic problem 3 of HMM (cont.). How do we calculate the expected probability of a transition out of state i? Observe that summing over all transitions out of state i gives the probability of being in state i. So "all transitions from state i" means the same as "in state i".
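The equivalence can be checked in one line of algebra. The derivation below assumes the standard notation (forward variable α, backward variable β, state occupancy γ, transition variable ξ, model λ with N states); the slide's own symbols are in the images and may differ.

```latex
\begin{aligned}
\sum_{j=1}^{N} \xi_t(i,j)
  &= \sum_{j=1}^{N} \frac{\alpha_t(i)\, a_{ij}\, b_j(o_{t+1})\, \beta_{t+1}(j)}{P(O \mid \lambda)} \\
  &= \frac{\alpha_t(i)}{P(O \mid \lambda)} \sum_{j=1}^{N} a_{ij}\, b_j(o_{t+1})\, \beta_{t+1}(j) \\
  &= \frac{\alpha_t(i)\, \beta_t(i)}{P(O \mid \lambda)} = \gamma_t(i),
  \qquad 1 \le t \le T-1 .
\end{aligned}
```

The middle step uses the backward recursion, in which the inner sum is exactly the definition of β at time t for state i.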

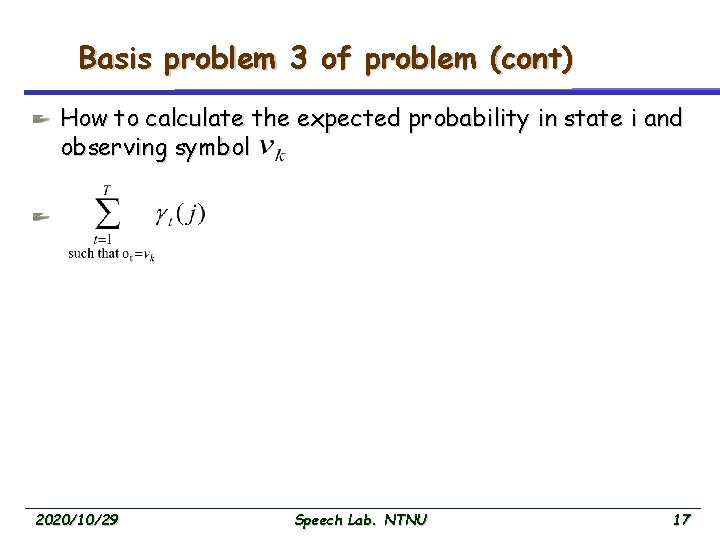

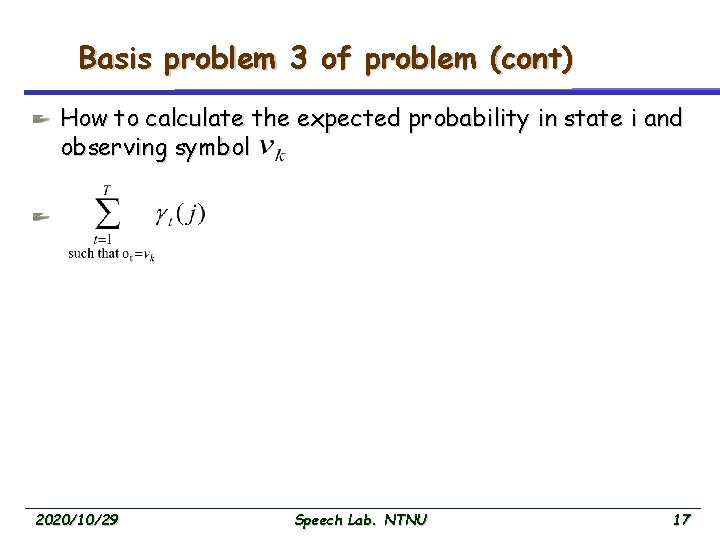

Basic problem 3 of HMM (cont.). How do we calculate the expected probability of being in state i and observing a given symbol?
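One way to read this quantity: add up the state-occupancy probabilities over only those time steps at which the symbol was observed, then normalize by the total occupancy of the state. The sketch below assumes the occupancy table `gamma` is already available; the table values and the function name are made up for illustration.

```python
def reestimate_emissions(gamma, obs, num_symbols):
    """Return B_new, where B_new[j][k] is the expected fraction of the time
    spent in state j during which symbol k was observed."""
    n = len(gamma[0])
    B_new = []
    for j in range(n):
        # Expected total time spent in state j.
        occupancy = sum(gamma_t[j] for gamma_t in gamma)
        row = []
        for k in range(num_symbols):
            # Expected time in state j restricted to steps that emitted k.
            joint = sum(gamma_t[j] for gamma_t, o in zip(gamma, obs) if o == k)
            row.append(joint / occupancy)
        B_new.append(row)
    return B_new


# Hypothetical occupancy table for 3 time steps and 2 states (rows sum to 1).
gamma = [[0.9, 0.1], [0.2, 0.8], [0.4, 0.6]]
obs = [0, 1, 1]

B_new = reestimate_emissions(gamma, obs, num_symbols=2)
```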

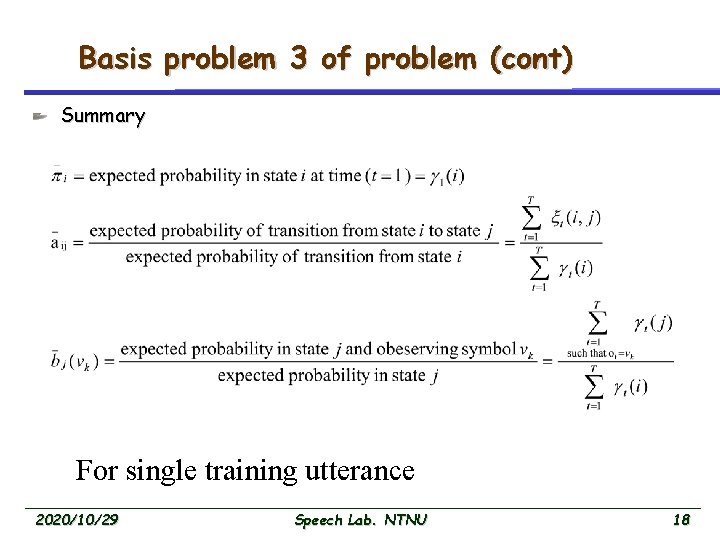

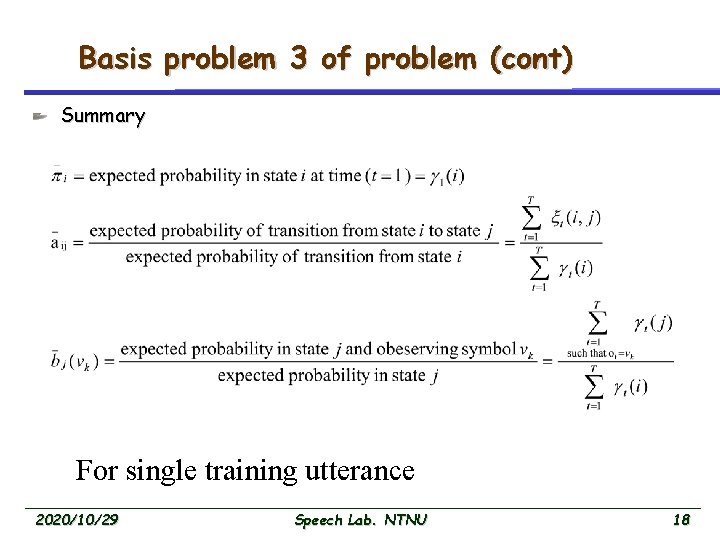

Basic problem 3 of HMM (cont.). Summary: for a single training utterance.
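Putting the pieces together, one re-estimation pass for a single utterance might look like the sketch below. It assumes the standard Baum-Welch update formulas and discrete emissions; the function names and toy values are illustrative, and the slide's own summary formulas are in the images.

```python
def forward(pi, A, B, obs):
    n = len(pi)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(n)) * B[j][o]
                      for j in range(n)])
    return alpha


def backward(A, B, obs):
    n = len(A)
    beta = [[1.0] * n]
    for o in reversed(obs[1:]):
        nxt = beta[0]
        beta.insert(0, [sum(A[i][j] * B[j][o] * nxt[j] for j in range(n))
                        for i in range(n)])
    return beta


def baum_welch_step(pi, A, B, obs):
    """One re-estimation pass; returns (new_pi, new_A, new_B)."""
    n, m, T = len(pi), len(B[0]), len(obs)
    alpha, beta = forward(pi, A, B, obs), backward(A, B, obs)
    p = sum(alpha[-1])
    # E-step: state occupancies and transition posteriors.
    gamma = [[alpha[t][i] * beta[t][i] / p for i in range(n)] for t in range(T)]
    xi = [[[alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] / p
            for j in range(n)] for i in range(n)] for t in range(T - 1)]
    # M-step: ratios of expected counts.
    new_pi = gamma[0][:]
    new_A = [[sum(xi[t][i][j] for t in range(T - 1)) /
              sum(gamma[t][i] for t in range(T - 1))
              for j in range(n)] for i in range(n)]
    new_B = [[sum(gamma[t][j] for t in range(T) if obs[t] == k) /
              sum(gamma[t][j] for t in range(T))
              for k in range(m)] for j in range(n)]
    return new_pi, new_A, new_B


# Toy model (illustrative values only): 2 states, 2 observation symbols.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
obs = [0, 1, 1, 0, 1]

new_pi, new_A, new_B = baum_welch_step(pi, A, B, obs)
old_likelihood = sum(forward(pi, A, B, obs)[-1])
new_likelihood = sum(forward(new_pi, new_A, new_B, obs)[-1])
```

Repeating the step until the likelihood stops improving gives the iterative procedure; each pass should not decrease the likelihood of the training utterance.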

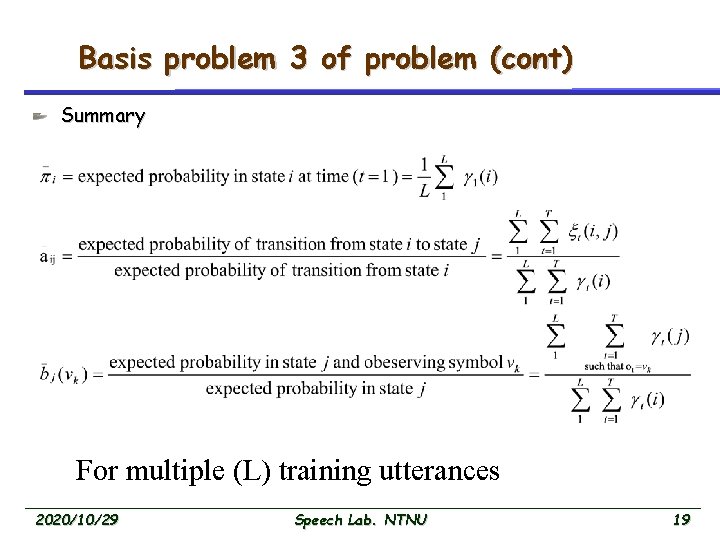

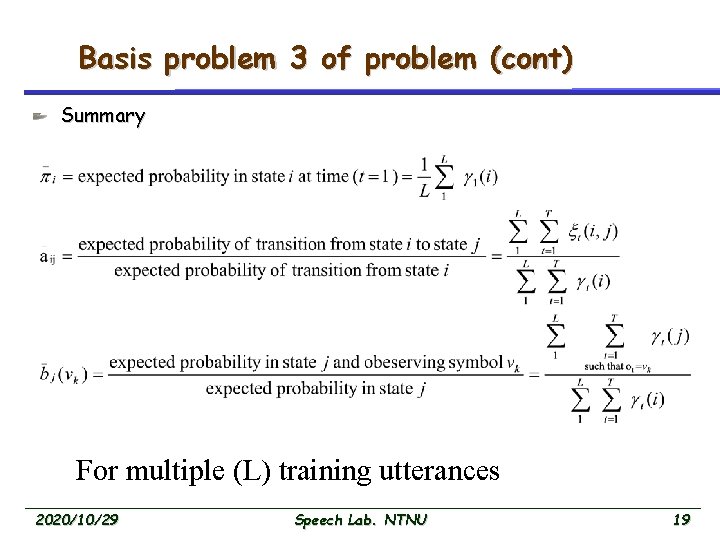

Basic problem 3 of HMM (cont.). Summary: for multiple (L) training utterances.
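With several utterances, the expected counts are pooled across utterances before the ratio is taken. The sketch below shows this for the transition matrix only and assumes the per-utterance transition posteriors have already been computed and normalized by each utterance's own likelihood. The function name and the numeric tables are hypothetical.

```python
def pooled_transition_estimate(xi_per_utterance):
    """xi_per_utterance[l][t][i][j] is the posterior probability of an
    i -> j transition at step t of utterance l. Returns the re-estimated
    transition matrix."""
    n = len(xi_per_utterance[0][0])
    counts = [[0.0] * n for _ in range(n)]
    # Numerator: expected i -> j transition counts, summed over utterances
    # and over time steps within each utterance.
    for xi in xi_per_utterance:
        for xi_t in xi:
            for i in range(n):
                for j in range(n):
                    counts[i][j] += xi_t[i][j]
    # Denominator: expected number of transitions out of state i, which is
    # the row sum of the pooled counts (assumed nonzero for every state).
    return [[counts[i][j] / sum(counts[i]) for j in range(n)]
            for i in range(n)]


# Hypothetical posteriors: utterance 1 has one transition step, utterance 2
# has two. Each step's table sums to 1.
xi_utt1 = [[[0.5, 0.1], [0.1, 0.3]]]
xi_utt2 = [[[0.2, 0.2], [0.3, 0.3]],
           [[0.1, 0.4], [0.2, 0.3]]]

A_new = pooled_transition_estimate([xi_utt1, xi_utt2])
```

Pooling counts before dividing is what distinguishes this from averaging per-utterance estimates: longer utterances contribute more evidence.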