Introduction to Content Analytics

Ömer Sever, IBM SWG Enterprise Content Management

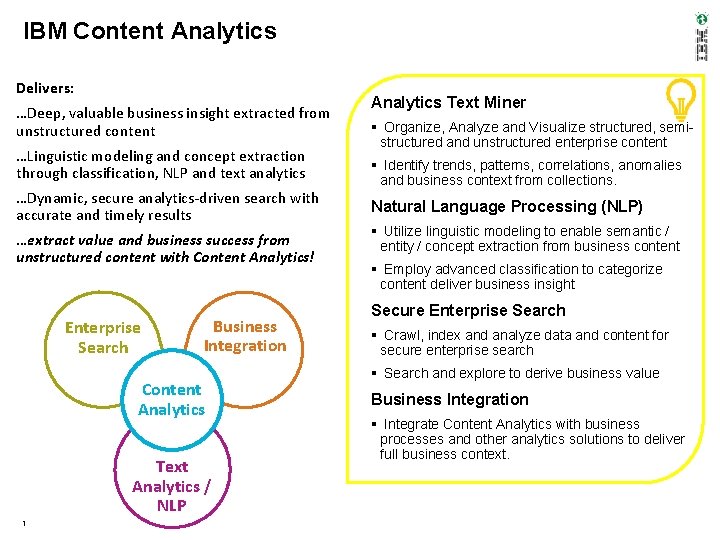

IBM Content Analytics Delivers:
• Deep, valuable business insight extracted from unstructured content
• Linguistic modeling and concept extraction through classification, NLP and text analytics
• Dynamic, secure, analytics-driven search with accurate and timely results
…extract value and business success from unstructured content with Content Analytics!

Content Analytics / Text Miner
• Organize, analyze and visualize structured, semi-structured and unstructured enterprise content
• Identify trends, patterns, correlations, anomalies and business context from collections
Text Analytics / Natural Language Processing (NLP)
• Utilize linguistic modeling to enable semantic, entity and concept extraction from business content
• Employ advanced classification to categorize content and deliver business insight
Secure Enterprise Search
• Crawl, index and analyze data and content for secure enterprise search
• Search and explore to derive business value
Business Integration
• Integrate Content Analytics with business processes and other analytics solutions to deliver full business context

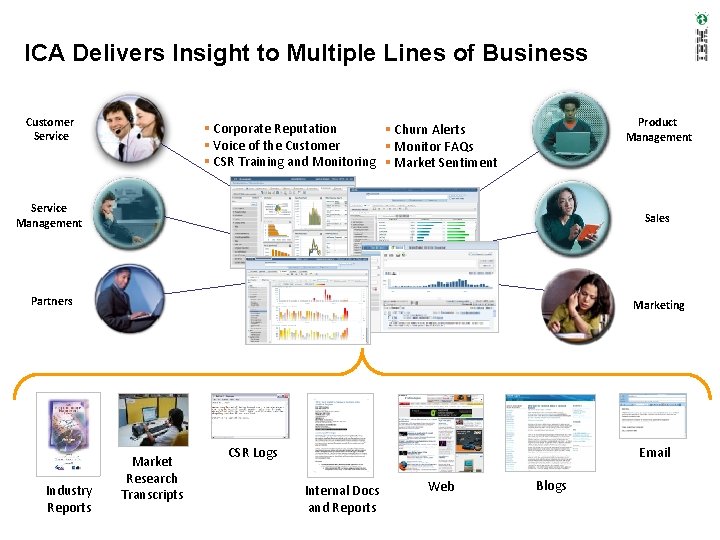

ICA Delivers Insight to Multiple Lines of Business
Lines of business and use cases: Customer Service, Product Management, Corporate Reputation, Churn Alerts, Voice of the Customer, Monitor FAQs, CSR Training and Monitoring, Market Sentiment, Service Management, Sales, Partners, Marketing
Content sources: Industry Reports, Market Research, Transcripts, CSR Logs, Email, Internal Docs and Reports, Web, Blogs

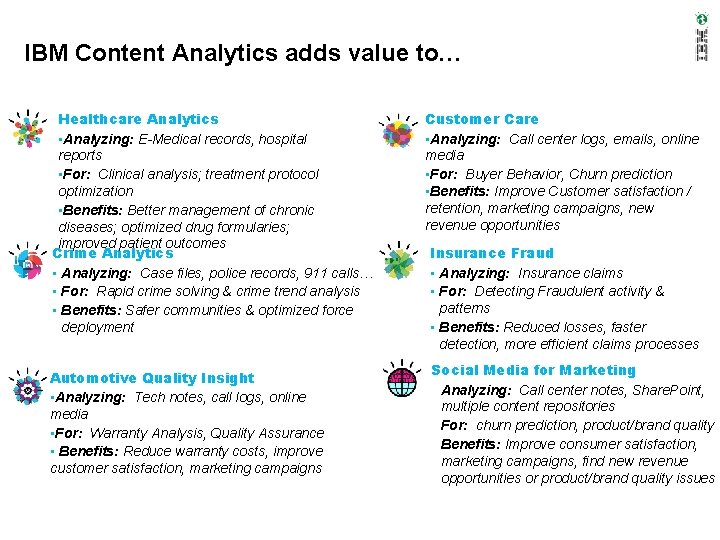

IBM Content Analytics adds value to…
Healthcare Analytics
• Analyzing: E-Medical records, hospital reports
• For: Clinical analysis; treatment protocol optimization
• Benefits: Better management of chronic diseases; optimized drug formularies; improved patient outcomes
Crime Analytics
• Analyzing: Case files, police records, 911 calls…
• For: Rapid crime solving & crime trend analysis
• Benefits: Safer communities & optimized force deployment
Automotive Quality Insight
• Analyzing: Tech notes, call logs, online media
• For: Warranty analysis, quality assurance
• Benefits: Reduce warranty costs, improve customer satisfaction, marketing campaigns
Customer Care
• Analyzing: Call center logs, emails, online media
• For: Buyer behavior, churn prediction
• Benefits: Improve customer satisfaction / retention, marketing campaigns, new revenue opportunities
Insurance Fraud
• Analyzing: Insurance claims
• For: Detecting fraudulent activity & patterns
• Benefits: Reduced losses, faster detection, more efficient claims processes
Social Media for Marketing
• Analyzing: Call center notes, SharePoint, multiple content repositories
• For: Churn prediction, product/brand quality
• Benefits: Improve consumer satisfaction, marketing campaigns, find new revenue opportunities or product/brand quality issues

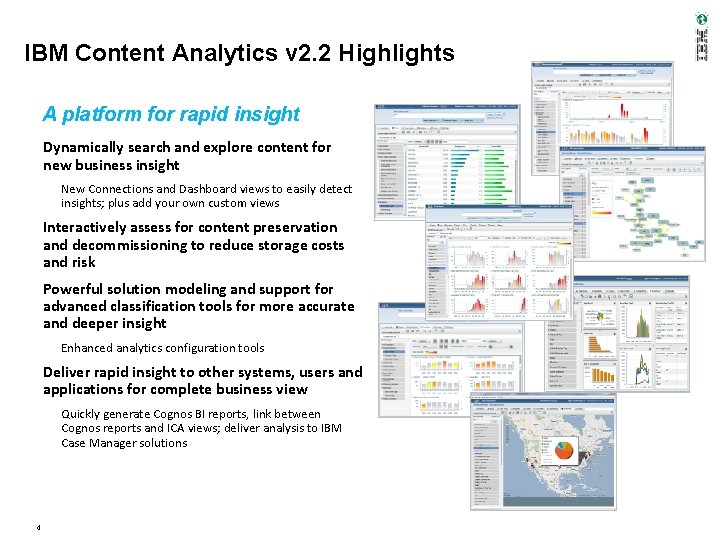

IBM Content Analytics v2.2 Highlights: A platform for rapid insight
Dynamically search and explore content for new business insight
• New Connections and Dashboard views to easily detect insights, plus the ability to add your own custom views
• Interactively assess content for preservation and decommissioning to reduce storage costs and risk
• Powerful solution modeling and support for advanced classification tools for more accurate and deeper insight
• Enhanced analytics configuration tools
Deliver rapid insight to other systems, users and applications for a complete business view
• Quickly generate Cognos BI reports and link between Cognos reports and ICA views
• Deliver analysis to IBM Case Manager solutions

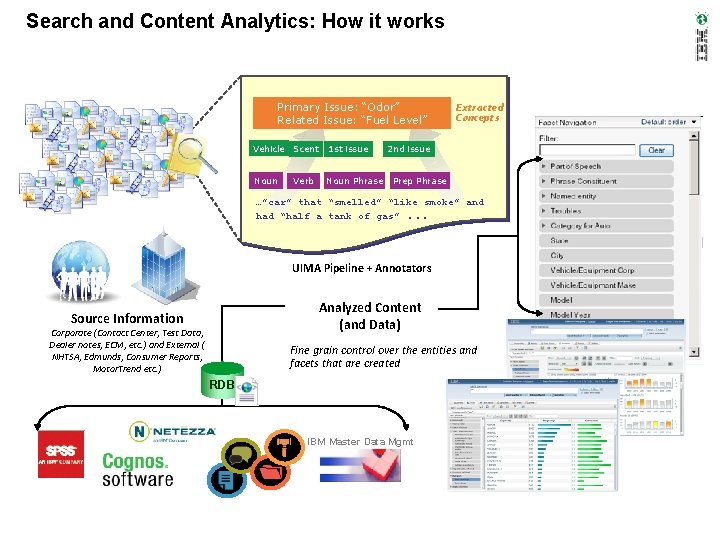

Search and Content Analytics: How it works
• Source information: corporate (contact center, test data, dealer notes, ECM, etc.) and external (NHTSA, Edmunds, Consumer Reports, Motor Trend, etc.)
• UIMA pipeline + annotators: fine-grained control over the entities and facets that are created
• Analyzed content (and data), together with RDB and IBM Master Data Management
Example: …“car” that “smelled” “like smoke” and had “half a tank of gas”… The annotators tag noun phrases, verbs and prepositional phrases and extract concepts such as Vehicle and Scent. The 1st issue becomes the Primary Issue, “Odor”, and the 2nd issue becomes the Related Issue, “Fuel Level”.
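To make the flow on this slide concrete, here is a minimal Python sketch of the same idea: annotators run in sequence over one shared document, and their annotations are collapsed into facets. The regex rules, type names and facet values are hypothetical stand-ins for real LanguageWare/UIMA annotators; this is not the ICA or UIMA API.

```python
import re
from dataclasses import dataclass, field

@dataclass
class Annotation:
    type: str    # e.g. "Vehicle", "PrimaryIssue"
    begin: int   # character offset where the span starts
    end: int     # character offset just past the span
    value: str   # facet value assigned to the span

@dataclass
class AnalyzedDocument:
    text: str
    annotations: list = field(default_factory=list)

# Hypothetical rules standing in for real annotators: pattern -> (type, facet value)
RULES = [
    (r"\bcar\b", "Vehicle", "car"),
    (r"\bsmelled like smoke\b", "PrimaryIssue", "Odor"),
    (r"\bhalf a tank of gas\b", "RelatedIssue", "Fuel Level"),
]

def rule_annotator(doc):
    """Add one annotation for every rule match in the document text."""
    for pattern, ann_type, value in RULES:
        for m in re.finditer(pattern, doc.text, flags=re.IGNORECASE):
            doc.annotations.append(Annotation(ann_type, m.start(), m.end(), value))

def run_pipeline(text, annotators):
    """Run each annotator in order over one shared document."""
    doc = AnalyzedDocument(text)
    for annotator in annotators:
        annotator(doc)
    return doc

def facets(doc):
    """Collapse annotations into facet -> sorted values, as a search index might."""
    result = {}
    for a in doc.annotations:
        result.setdefault(a.type, set()).add(a.value)
    return {k: sorted(v) for k, v in result.items()}

doc = run_pipeline("The car that smelled like smoke and had half a tank of gas.", [rule_annotator])
print(facets(doc))  # {'Vehicle': ['car'], 'PrimaryIssue': ['Odor'], 'RelatedIssue': ['Fuel Level']}
```

Keeping the spans (begin/end) alongside the facet value lets a search UI highlight the evidence for each facet in the original text.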

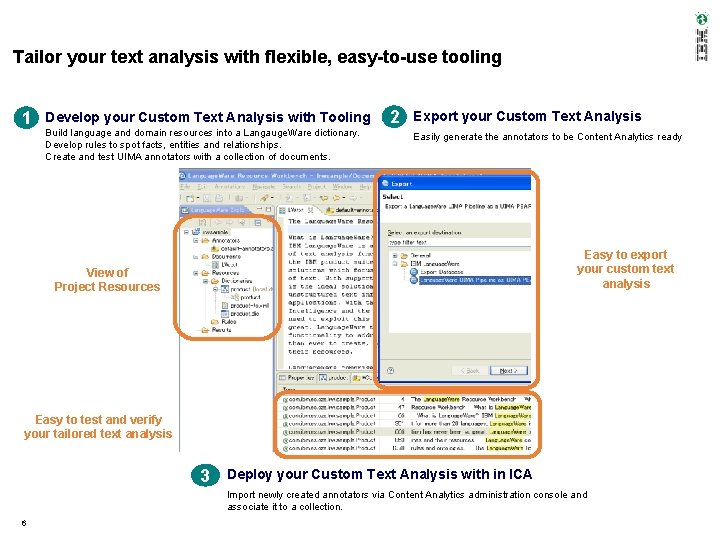

Tailor your text analysis with flexible, easy-to-use tooling
1. Develop your custom text analysis with tooling
• Build language and domain resources into a LanguageWare dictionary.
• Develop rules to spot facts, entities and relationships.
• Create and test UIMA annotators with a collection of documents.
2. Export your custom text analysis
• Easily generate annotators that are Content Analytics ready.
• Easily export your custom text analysis; the view of project resources makes it easy to test and verify your tailored text analysis.
3. Deploy your custom text analysis within ICA
• Import the newly created annotators via the Content Analytics administration console and associate them with a collection.
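Step 1 mentions rules that spot facts, entities and relationships. A minimal Python sketch of what such a rule does, assuming a hypothetical two-person, one-organization dictionary and a single hand-written "works for" rule; real LRW parsing rules are authored in the workbench, not in Python.

```python
import re

# Hypothetical domain dictionary: surface form -> entity type
DICTIONARY = {
    "Ana Lopez": "Person",
    "Raj Patel": "Person",
    "Acme Motors": "Organization",
}

def annotate_entities(text):
    """Dictionary lookup: return (begin, end, type, surface) spans sorted by position."""
    spans = []
    for surface, ent_type in DICTIONARY.items():
        for m in re.finditer(re.escape(surface), text):
            spans.append((m.start(), m.end(), ent_type, surface))
    return sorted(spans)

def works_for_rule(text, spans):
    """Rule: Person, then the words 'works for', then Organization => relationship."""
    relations = []
    for (b1, e1, t1, s1), (b2, e2, t2, s2) in zip(spans, spans[1:]):
        between = text[e1:b2].strip()
        if t1 == "Person" and t2 == "Organization" and between == "works for":
            relations.append(("WorksFor", s1, s2))
    return relations

text = "Ana Lopez works for Acme Motors. Raj Patel met Ana Lopez."
print(works_for_rule(text, annotate_entities(text)))  # [('WorksFor', 'Ana Lopez', 'Acme Motors')]
```

The rule works on entity annotations rather than raw characters, which is why a dictionary is usually built before relationship rules.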

Text Analytics / Natural Language Processing (NLP)
• The simplest text analytics scenario is to scan a set of documents written in a natural language, then:
– model the document set for predictive classification purposes, or
– populate a database or search index with the information extracted.
• The term text analytics also describes the application of text analytics to business problems, whether on its own or in conjunction with query and analysis of fielded, numerical data.
• A commonly cited estimate is that 80 percent of business-relevant information originates in unstructured form, primarily text.
• These techniques and processes discover and present knowledge (facts, business rules, and relationships) that is otherwise locked in textual form, impenetrable to automated processing.
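As a sketch of the second scenario, populating a database with extracted information, the Python below uses the standard-library sqlite3 module. The product-code pattern, table layout and sample notes are hypothetical; the point is only that once mentions are rows, ordinary SQL can query them alongside fielded data.

```python
import re
import sqlite3

# Hypothetical extractor: a regex for product codes like "XR-200"
PRODUCT_CODE = re.compile(r"\b[A-Z]{2}-\d{3}\b")

documents = {
    1: "Customer reports the XR-200 overheats after an hour.",
    2: "XR-200 and ZT-310 both ship with the old charger.",
    3: "No product mentioned in this note.",
}

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE mentions (doc_id INTEGER, product TEXT, start_pos INTEGER, end_pos INTEGER)")

# Turn unstructured text into rows: one row per extracted mention
for doc_id, text in documents.items():
    for m in PRODUCT_CODE.finditer(text):
        conn.execute("INSERT INTO mentions VALUES (?, ?, ?, ?)", (doc_id, m.group(), m.start(), m.end()))

# Once in a table, the extracted information can be queried like any fielded data
rows = conn.execute(
    "SELECT product, COUNT(DISTINCT doc_id) FROM mentions GROUP BY product ORDER BY product"
).fetchall()
print(rows)  # [('XR-200', 2), ('ZT-310', 1)]
```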

Text Analytics
• The term text analytics describes a set of techniques:
– linguistic
– statistical
– machine learning
• These techniques model and structure the information content of textual sources for:
– business intelligence
– exploratory data analysis
– research
– investigation
• The term is roughly synonymous with text mining. "Text analytics" is now used more frequently in business settings, while "text mining" is used in:
– life-sciences research
– government intelligence

Text Analytics
• Text analytics involves:
– information retrieval
– lexical analysis to study word frequency distributions
– pattern recognition
– tagging/annotation
– information extraction
– data mining techniques, including link and association analysis
– visualization
– predictive analytics
• The overarching goal is, essentially, to turn text into data for analysis via the application of:
– natural language processing (NLP)
– analytical methods
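Lexical analysis of word frequency distributions can start as simply as counting tokens. A minimal Python sketch, assuming naive lowercase word splitting with no stemming or stop-word removal; the two sample notes are made up for illustration.

```python
import re
from collections import Counter

def word_frequencies(texts):
    """Lowercase, split into word tokens, and count occurrences across all texts."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return counts

notes = [
    "The car smelled like smoke.",
    "Smoke from the engine; the dealer replaced the hose.",
]
freq = word_frequencies(notes)
print(freq.most_common(2))  # [('the', 4), ('smoke', 2)]
```

The top entry being "the" shows why real pipelines usually add stop-word filtering and normalization before reading much into raw frequencies.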

What is Text Mining?
Text mining technology has been developed to acquire useful knowledge from large amounts of textual data.

Natural Language Processing (NLP)
• Natural language processing (NLP) is a field of computer science and linguistics concerned with the interactions between computers and human (natural) languages.
– Natural language generation systems convert information from computer databases into readable human language.
– Natural language understanding systems convert samples of human language into more formal representations, such as parse trees or first-order logic structures, that are easier for computer programs to manipulate.
• Many problems within NLP apply to both generation and understanding. For example, a computer must be able to model morphology (the structure of words) in order to understand an English sentence, and a model of morphology is also needed to produce a grammatically correct English sentence.
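To illustrate why one morphology model serves both understanding and generation, here is a toy Python sketch with three hypothetical English plural rules, stored once and applied in both directions. It is deliberately incomplete (for example, it would wrongly analyze "houses" as "hous").

```python
# Toy, hypothetical morphology model: English plural suffix rules stored once
# as (singular ending, plural ending), most specific first.
PLURAL_RULES = [
    ("ch", "ches"),  # church -> churches
    ("s", "ses"),    # bus -> buses
    ("", "s"),       # car -> cars (default rule, must stay last)
]

def generate_plural(lemma):
    """Generation: apply the first rule whose singular ending matches the lemma."""
    for sing, plur in PLURAL_RULES:
        if lemma.endswith(sing):
            return lemma[: len(lemma) - len(sing)] + plur
    return lemma

def analyze(word):
    """Understanding: map a surface form back to (lemma, number) using the same rules."""
    for sing, plur in PLURAL_RULES:
        if word.endswith(plur) and len(word) > len(plur):
            return word[: len(word) - len(plur)] + sing, "plural"
    return word, "singular"

for w in ["car", "bus", "church"]:
    plural = generate_plural(w)
    print(w, "->", plural, "->", analyze(plural))
```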

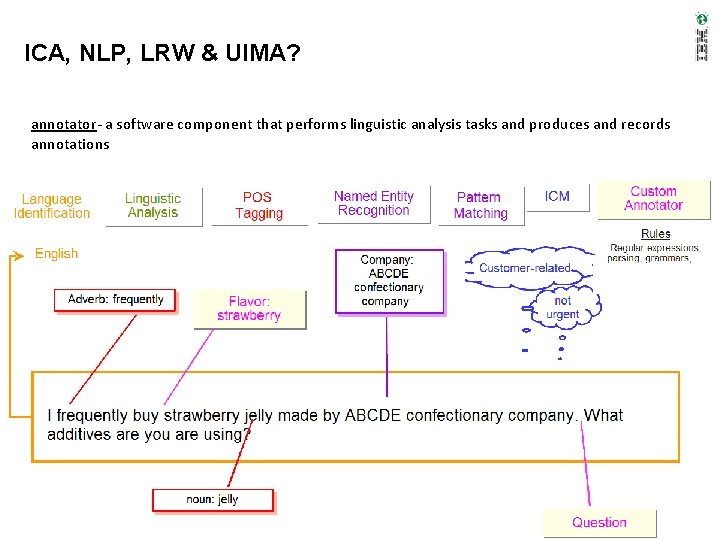

Terminology and Definitions
• annotation: information about a span of text. For example, an annotation could indicate that a span of text represents a company name.
• annotator: a software component that performs linguistic analysis tasks and produces and records annotations.
• character rules: LRW rules that recognize sequences of characters (the LRW way to do regular expressions).
• concept extraction / entity extraction: a text analysis function that identifies significant vocabulary items (such as people, places, or products) in text documents and produces a list of those items.
• dictionary: a list of words that document processing uses to create annotations.
• lexical analysis: the overall process by which LanguageWare segments and normalizes text.
• metadata: data about a document, such as its size and modified date.
• normalization: determining a single string representation for a word or term found in text. This single string representation may also be called the lemma, citation form, or canonical form. Because LRW also performs semantic normalization (for example, IBM = International Business Machines = Big Blue), LRW uses the term normal form.
• parsing rules: LRW rules that recognize patterns of words; they run in the LRW rules engine.
• regular expressions: a flexible means of identifying sequences of characters (such as URLs), written in a formal language that a regular expression processor can interpret.
• tokenization: the simple mechanical process of breaking up whitespace-delimited text into words.
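Several of these terms fit together in a few lines of code. A minimal Python sketch, assuming a hypothetical three-entry dictionary: tokenization splits whitespace-delimited text, a dictionary annotator produces annotations over token spans, and each annotation carries a normal form, so "IBM" and "Big Blue" normalize to the same value. This is an illustration of the concepts, not how LanguageWare implements them.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class Annotation:
    begin: int         # start offset of the annotated span
    end: int           # end offset (exclusive)
    type: str          # annotation type, e.g. "Organization"
    normal_form: str   # single representation shared by all variants

# Hypothetical dictionary: each variant maps to one normal form (semantic normalization)
ORG_DICTIONARY = {
    "ibm": "IBM",
    "international business machines": "IBM",
    "big blue": "IBM",
}

def tokenize(text):
    """Break whitespace-delimited text into (begin, end, token) triples."""
    return [(m.start(), m.end(), m.group()) for m in re.finditer(r"\S+", text)]

def dictionary_annotator(text, max_words=3):
    """Match 1..max_words consecutive tokens against the dictionary, longest match first."""
    tokens = tokenize(text)
    annotations = []
    for i in range(len(tokens)):
        for n in range(max_words, 0, -1):
            window = tokens[i:i + n]
            if len(window) < n:
                continue
            begin, end = window[0][0], window[-1][1]
            key = text[begin:end].lower()
            if key in ORG_DICTIONARY:
                annotations.append(Annotation(begin, end, "Organization", ORG_DICTIONARY[key]))
                break
    return annotations

anns = dictionary_annotator("IBM is also known as Big Blue")
print([(a.begin, a.end, a.normal_form) for a in anns])  # [(0, 3, 'IBM'), (21, 29, 'IBM')]
```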

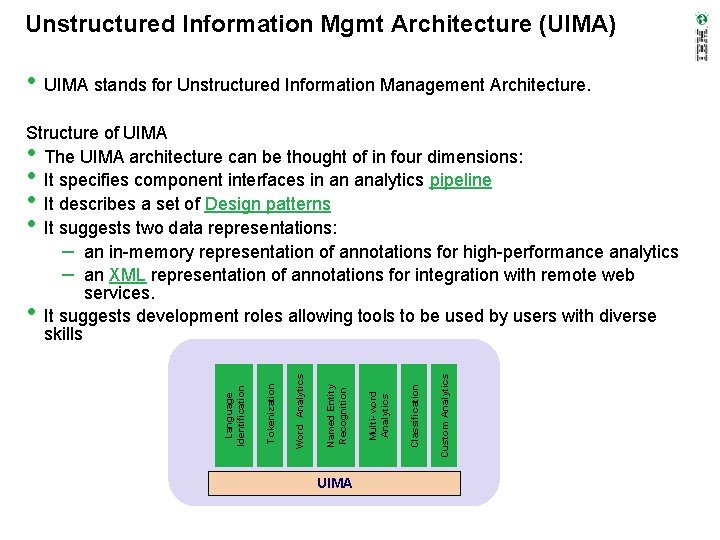

Unstructured Information Mgmt Architecture (UIMA)
• UIMA stands for Unstructured Information Management Architecture.
Structure of UIMA
The UIMA architecture can be thought of in four dimensions:
• It specifies component interfaces in an analytics pipeline.
• It describes a set of design patterns.
• It suggests two data representations:
– an in-memory representation of annotations for high-performance analytics
– an XML representation of annotations for integration with remote web services.
• It suggests development roles, allowing tools to be used by users with diverse skills.
(Diagram: UIMA analytics layers: Custom Analytics, Classification, Multi-word Analytics, Named Entity Recognition, Word Analytics, Tokenization, Language Identification)
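The two data representations can be sketched in Python: annotators share one in-memory structure, and the same annotations are then serialized to XML. The dictionary layout, element names and the stub language annotator are hypothetical; real UIMA uses the CAS in memory and typically XMI for XML serialization, neither of which is reproduced here.

```python
import xml.etree.ElementTree as ET

def language_annotator(cas):
    """Stub language identification: hypothetically tags the whole text as English."""
    cas["annotations"].append({"type": "Language", "begin": 0, "end": len(cas["text"]), "value": "en"})

def token_annotator(cas):
    """Whitespace tokenizer that records one Token annotation per word."""
    pos = 0
    for word in cas["text"].split():
        begin = cas["text"].index(word, pos)
        pos = begin + len(word)
        cas["annotations"].append({"type": "Token", "begin": begin, "end": pos, "value": word})

def run(text, pipeline):
    """In-memory representation: one shared structure that every annotator reads and extends."""
    cas = {"text": text, "annotations": []}
    for annotator in pipeline:
        annotator(cas)
    return cas

def to_xml(cas):
    """XML representation of the same annotations, e.g. for exchange with a remote service."""
    root = ET.Element("document")
    ET.SubElement(root, "text").text = cas["text"]
    for a in cas["annotations"]:
        ET.SubElement(root, "annotation", type=a["type"], begin=str(a["begin"]), end=str(a["end"])).text = a["value"]
    return ET.tostring(root, encoding="unicode")

cas = run("smoke smell", [language_annotator, token_annotator])
print(to_xml(cas))
```

The in-memory form is what annotators work on during the pipeline; the XML form is only produced at the boundary where another system needs the results.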

ICA, NLP, LRW & UIMA?
annotator: a software component that performs linguistic analysis tasks and produces and records annotations

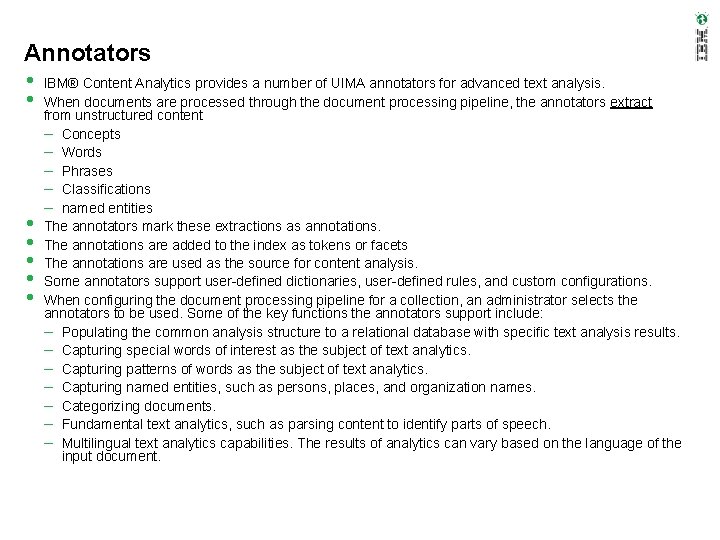

Annotators
• IBM® Content Analytics provides a number of UIMA annotators for advanced text analysis.
• When documents are processed through the document processing pipeline, the annotators extract the following from unstructured content:
– concepts
– words
– phrases
– classifications
– named entities
• The annotators mark these extractions as annotations. The annotations are added to the index as tokens or facets and are used as the source for content analysis.
• Some annotators support user-defined dictionaries, user-defined rules, and custom configurations.
• When configuring the document processing pipeline for a collection, an administrator selects the annotators to be used.
Some of the key functions the annotators support include:
– Populating a relational database with specific text analysis results from the common analysis structure (CAS).
– Capturing special words of interest as the subject of text analytics.
– Capturing patterns of words as the subject of text analytics.
– Capturing named entities, such as persons, places, and organization names.
– Categorizing documents.
– Fundamental text analytics, such as parsing content to identify parts of speech.
– Multilingual text analytics capabilities. The results of analytics can vary based on the language of the input document.
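Adding annotations to the index as facets is what makes collection-level analysis possible. A minimal Python sketch, assuming a hypothetical user-defined dictionary that maps terms to facet values: it builds a facet index over a small collection and counts documents per facet value. It is not how ICA stores its index.

```python
from collections import defaultdict

# Hypothetical user-defined dictionary: term -> facet value
USER_DICTIONARY = {"brake": "Brakes", "brakes": "Brakes", "battery": "Electrical", "fuse": "Electrical"}

def annotate(text):
    """Return the set of facet values whose dictionary terms occur as words in the text."""
    words = text.lower().replace(".", " ").replace(",", " ").split()
    return {USER_DICTIONARY[w] for w in words if w in USER_DICTIONARY}

def build_facet_index(collection):
    """Facet index: facet value -> ids of documents annotated with it."""
    index = defaultdict(set)
    for doc_id, text in collection.items():
        for value in annotate(text):
            index[value].add(doc_id)
    return index

collection = {
    "d1": "Brakes squeal when cold.",
    "d2": "Replaced the battery and a fuse.",
    "d3": "Brake pedal feels soft, battery fine.",
}
index = build_facet_index(collection)
counts = {value: len(ids) for value, ids in sorted(index.items())}
print(counts)  # {'Brakes': 2, 'Electrical': 2}
```

Because the dictionary maps several surface terms to one facet value, "brake" and "brakes" are counted together, which is the practical payoff of user-defined dictionaries.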

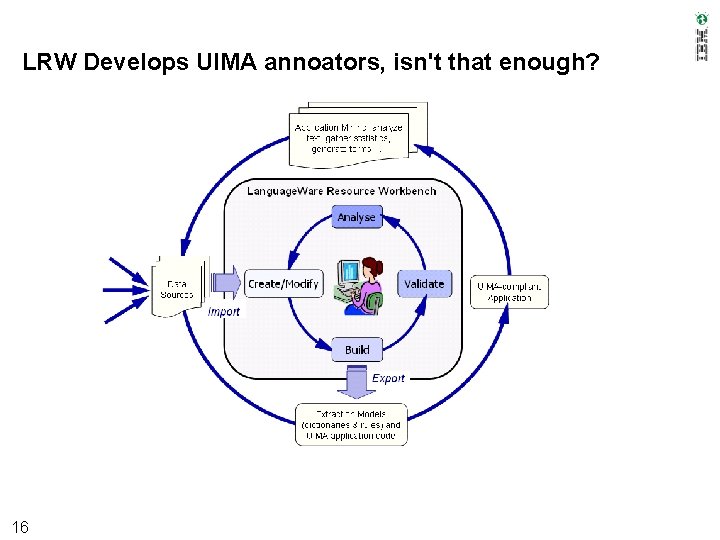

LRW develops UIMA annotators; isn't that enough?

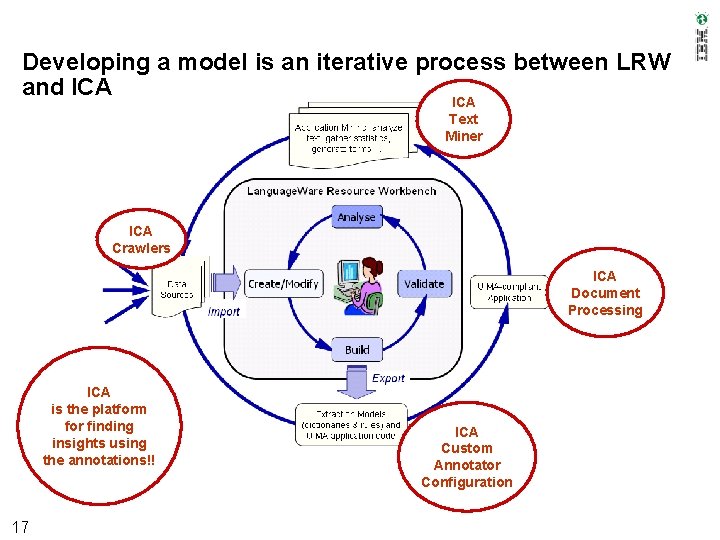

Developing a model is an iterative process between LRW and ICA
(Diagram: ICA Crawlers, ICA Document Processing, ICA Custom Annotator Configuration, ICA Text Miner)
ICA is the platform for finding insights using the annotations!

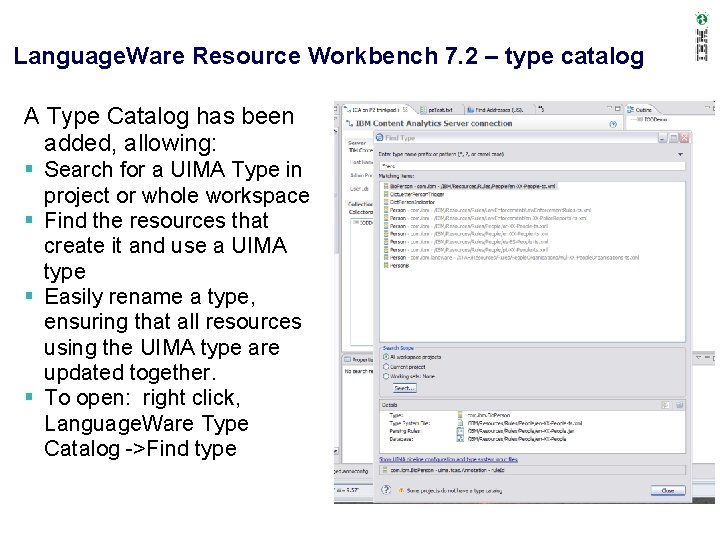

LanguageWare Resource Workbench 7.2 – type catalog
A Type Catalog has been added, allowing you to:
• Search for a UIMA type in a project or in the whole workspace
• Find the resources that create and use a UIMA type
• Easily rename a type, ensuring that all resources using it are updated together
To open: right-click, LanguageWare Type Catalog -> Find type
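The Type Catalog's find and rename operations can be modeled with a small Python sketch. The Resource data model and the resource names are hypothetical and do not reflect how LRW stores projects; the sketch only shows why renaming through a catalog keeps producers and consumers of a type consistent.

```python
from dataclasses import dataclass, field

@dataclass
class Resource:
    name: str
    creates: set = field(default_factory=set)  # UIMA types this resource produces
    uses: set = field(default_factory=set)     # UIMA types this resource consumes

def find_type(resources, type_name):
    """Return (names of resources that create the type, names of resources that use it)."""
    creators = sorted(r.name for r in resources if type_name in r.creates)
    users = sorted(r.name for r in resources if type_name in r.uses)
    return creators, users

def rename_type(resources, old, new):
    """Rename a type everywhere at once so no resource is left pointing at the old name."""
    for r in resources:
        for types in (r.creates, r.uses):
            if old in types:
                types.discard(old)
                types.add(new)

project = [
    Resource("vehicle.dic", creates={"Vehicle"}),
    Resource("issue_rules", creates={"Issue"}, uses={"Vehicle"}),
    Resource("export_cas", uses={"Issue", "Vehicle"}),
]
print(find_type(project, "Vehicle"))  # (['vehicle.dic'], ['export_cas', 'issue_rules'])
rename_type(project, "Vehicle", "Car")
print(find_type(project, "Vehicle"))  # ([], [])
```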

Communities
• Online communities, user groups, technical forums, blogs, social networks, and more
– Find the community that interests you…
• Information Management: ibm.com/software/data/community
• Business Analytics: ibm.com/software/analytics/community
• Enterprise Content Management: ibm.com/software/data/contentmanagement/usernet.html
• IBM Champions: recognizing individuals who have made the most outstanding contributions to Information Management, Business Analytics, and Enterprise Content Management communities
– ibm.com/champion
