
Introduction of Perceptron

In 1957, Rosenblatt and several other researchers developed the perceptron, which used a network similar to the one proposed by McCulloch, together with a learning rule for training the network to solve pattern recognition problems. This model was later criticized by Minsky, who proved that it cannot solve the XOR problem.

朝陽科技大學 (Chaoyang University of Technology), 李麗華 教授 (Professor 李麗華)

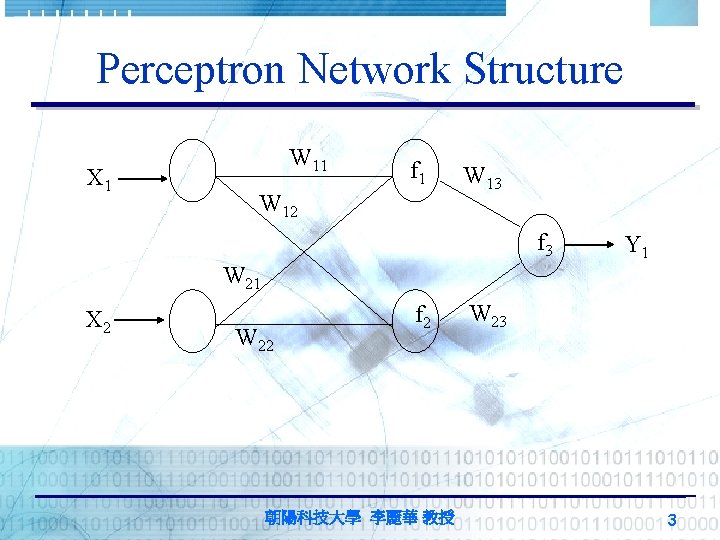

Perceptron Network Structure

[Figure: perceptron network with inputs X1 and X2, nodes f1, f2 and f3, output Y1, and connection weights W11, W12, W13, W21, W22, W23.]

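Perceptron Training Process

1. Set up the network: input nodes, layer(s), and connections.
2. Randomly assign initial values to the weights $W_{ij}$ and the bias $\theta_j$.
3. Input the training sets $X_i$ (with the target outputs $T_j$ prepared for verification).
4. Training computation:

$$net_j = \sum_i W_{ij} X_i - \theta_j$$

$$Y_j = \begin{cases} 1 & \text{if } net_j > 0 \\ 0 & \text{if } net_j \le 0 \end{cases}$$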
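The training computation in step 4 can be sketched in a few lines of Python. This is a minimal illustration, assuming the net function $net = \sum_i W_i X_i - \theta$ that the exercises in these slides use; the function names `net_input`, `step` and `perceptron_output` are made up for this sketch.

```python
def net_input(x, w, theta):
    """Weighted sum of the inputs minus the bias (threshold) theta."""
    return sum(wi * xi for wi, xi in zip(w, x)) - theta


def step(net):
    """Threshold output: 1 if net > 0, otherwise 0."""
    return 1 if net > 0 else 0


def perceptron_output(x, w, theta):
    """Output Y of a single perceptron node for the input vector x."""
    return step(net_input(x, w, theta))


# Illustrative values only: two inputs, weights (1.0, 0.5), theta = 0.5.
y_a = perceptron_output((1, 0), (1.0, 0.5), 0.5)  # net = 0.5
y_b = perceptron_output((0, 1), (1.0, 0.5), 0.5)  # net = 0.0, not > 0
```

Note that a net value of exactly 0 falls on the "0" side of the threshold, which matters in the exercises.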

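The training process

4. Training computation (continued): if $T_j - Y_j \neq 0$, then update the weights and bias:

$$\Delta W_{ij} = \eta\,(T_j - Y_j)\,X_i, \qquad W_{ij} \leftarrow W_{ij} + \Delta W_{ij}$$

$$\Delta\theta_j = -\eta\,(T_j - Y_j), \qquad \theta_j \leftarrow \theta_j + \Delta\theta_j$$

5. Repeat steps 3 and 4 until every input pattern is satisfied.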
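The whole procedure can be sketched as a loop. This is a hedged sketch rather than the slides' own code: it assumes the update rule $\Delta W_i = \eta (T - Y) X_i$, $\Delta\theta = -\eta (T - Y)$ used in the exercises, a single output node, and a `max_epochs` cap that is an addition of this sketch (the slides simply repeat until all patterns are satisfied).

```python
def train_perceptron(patterns, w, theta, eta=0.1, max_epochs=100):
    """Train one perceptron node; returns (weights, theta, epochs_used).

    patterns is a list of (input_vector, target) pairs.
    max_epochs is a safety cap assumed for this sketch.
    """
    w = list(w)
    for epoch in range(1, max_epochs + 1):
        errors = 0
        for x, t in patterns:
            net = sum(wi * xi for wi, xi in zip(w, x)) - theta
            y = 1 if net > 0 else 0
            delta = t - y
            if delta != 0:
                # Update weights and bias only for misclassified patterns.
                errors += 1
                w = [wi + eta * delta * xi for wi, xi in zip(w, x)]
                theta = theta - eta * delta
        if errors == 0:
            # A full pass with no updates: every pattern is satisfied.
            return w, theta, epoch
    raise RuntimeError("no convergence within max_epochs")


# OR patterns with the initial values W11 = 1, W21 = 0.5, theta = 0.5.
OR_PATTERNS = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
w, theta, epochs = train_perceptron(OR_PATTERNS, [1.0, 0.5], 0.5)
```

The loop stops after the first full pass in which no pattern triggers an update.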

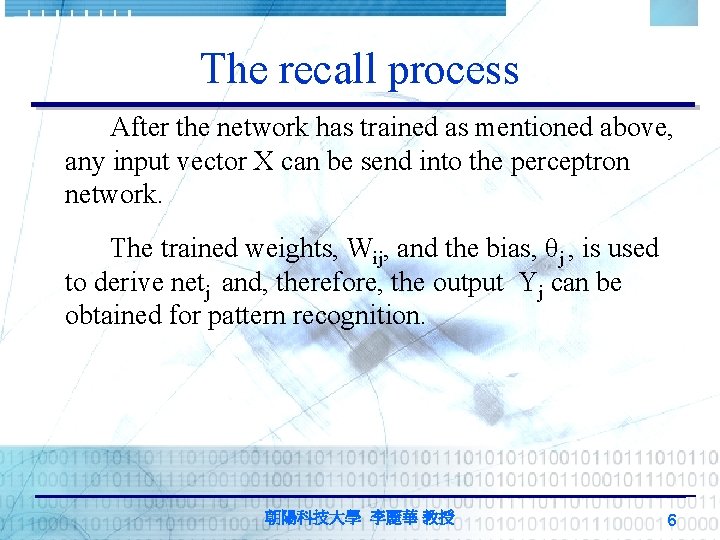

The recall process

After the network has been trained as described above, any input vector X can be sent into the perceptron network. The trained weights $W_{ij}$ and the bias $\theta_j$ are used to derive $net_j$, from which the output $Y_j$ is obtained for pattern recognition.
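Recall is just the forward computation with fixed weights. The sketch below assumes a weight matrix indexed as `W[i][j]` (input i to output node j) and one bias per output node; the function name `recall` and the numeric values are illustrative assumptions, not part of the slides.

```python
def recall(x, W, theta):
    """Return the list of outputs Y_j for input vector x.

    W[i][j] is the weight from input i to output node j,
    theta[j] is the bias of output node j.
    """
    outputs = []
    for j in range(len(theta)):
        net_j = sum(W[i][j] * x[i] for i in range(len(x))) - theta[j]
        outputs.append(1 if net_j > 0 else 0)
    return outputs


# Hypothetical trained network with two inputs and two output nodes.
W = [[1.0, 0.6],
     [0.6, 0.6]]
theta = [0.4, 0.9]

y_10 = recall((1, 0), W, theta)
y_11 = recall((1, 1), W, theta)
```

No weights change during recall; the same input always produces the same output.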

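Exercise 1: Solving the OR problem

• Let the training patterns be as follows.

| X1 | X2 | T |
|----|----|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 1 |

[Figure: the four patterns plotted in the X1-X2 plane with a separating line f, and a single-node network with inputs X1 and X2, weights W11 and W21, and output Y.]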

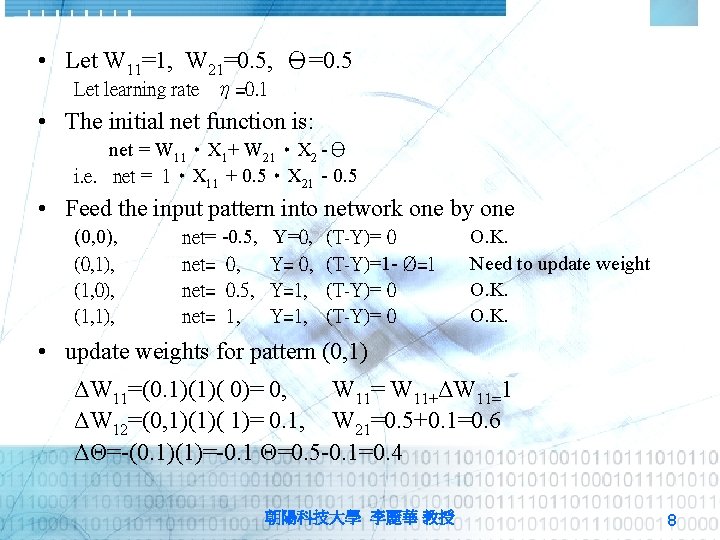

• Let $W_{11}=1$, $W_{21}=0.5$, $\Theta=0.5$, and let the learning rate be $\eta=0.1$.

• The initial net function is $net = W_{11} X_1 + W_{21} X_2 - \Theta$, i.e. $net = 1 \cdot X_1 + 0.5 \cdot X_2 - 0.5$.

• Feed the input patterns (0, 0), (0, 1), (1, 0), (1, 1) into the network one by one:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (0, 0) | -0.5 | 0 | 0 | O.K. |
| (0, 1) | 0 | 0 | 1 - 0 = 1 | Need to update weights |

• Update the weights for pattern (0, 1):

$$\Delta W_{11} = (0.1)(1)(0) = 0, \qquad W_{11} = W_{11} + \Delta W_{11} = 1$$

$$\Delta W_{21} = (0.1)(1)(1) = 0.1, \qquad W_{21} = 0.5 + 0.1 = 0.6$$

$$\Delta\Theta = -(0.1)(1) = -0.1, \qquad \Theta = 0.5 - 0.1 = 0.4$$
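The single update for pattern (0, 1) can be replayed numerically. This is a small sketch of the arithmetic on this slide, using the initial values given above; the variable names are chosen for the sketch.

```python
eta = 0.1
w11, w21, theta = 1.0, 0.5, 0.5   # initial values from the exercise
x1, x2, t = 0, 1, 1               # pattern (0, 1) with target T = 1

net = w11 * x1 + w21 * x2 - theta  # 0.5 - 0.5 = 0
y = 1 if net > 0 else 0            # net is not > 0, so Y = 0
delta = t - y                      # T - Y = 1, so an update is needed

w11 = w11 + eta * delta * x1       # unchanged, because X1 = 0
w21 = w21 + eta * delta * x2       # 0.5 + 0.1
theta = theta - eta * delta        # 0.5 - 0.1
```

Only the weight attached to the active input (X2 = 1) changes; the bias moves down so that the same pattern produces a larger net value next time.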

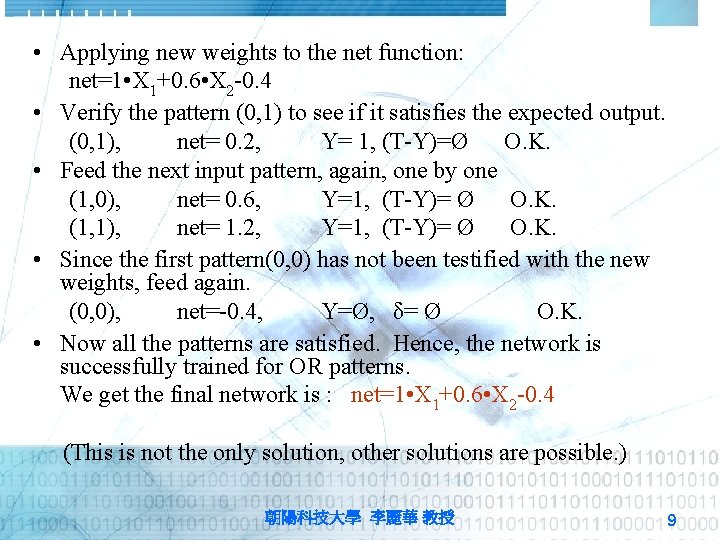

• Applying the new weights to the net function gives $net = 1 \cdot X_1 + 0.6 \cdot X_2 - 0.4$.

• Verify that pattern (0, 1) now satisfies the expected output:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (0, 1) | 0.2 | 1 | 0 | O.K. |

• Feed the next input patterns, again one by one:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (1, 0) | 0.6 | 1 | 0 | O.K. |
| (1, 1) | 1.2 | 1 | 0 | O.K. |

• Since the first pattern (0, 0) has not been tested with the new weights, feed it again:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (0, 0) | -0.4 | 0 | 0 | O.K. |

• Now all the patterns are satisfied, so the network is successfully trained for the OR patterns. The final network is $net = 1 \cdot X_1 + 0.6 \cdot X_2 - 0.4$. (This is not the only solution; other solutions are possible.)
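The verification pass can be checked mechanically. The sketch below assumes the final values W11 = 1, W21 = 0.6, Θ = 0.4 from this exercise and the standard OR truth table; the helper name `output` is made up for the sketch.

```python
OR_PATTERNS = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def output(x, w, theta):
    """Threshold output of one node: 1 if net > 0, otherwise 0."""
    net = sum(wi * xi for wi, xi in zip(w, x)) - theta
    return 1 if net > 0 else 0


w, theta = (1.0, 0.6), 0.4
# One (pattern, target, output) triple per training pattern.
results = [(x, t, output(x, w, theta)) for x, t in OR_PATTERNS]
all_ok = all(t == y for _, t, y in results)

for x, t, y in results:
    print(x, "T =", t, "Y =", y, "O.K." if t == y else "update needed")
```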

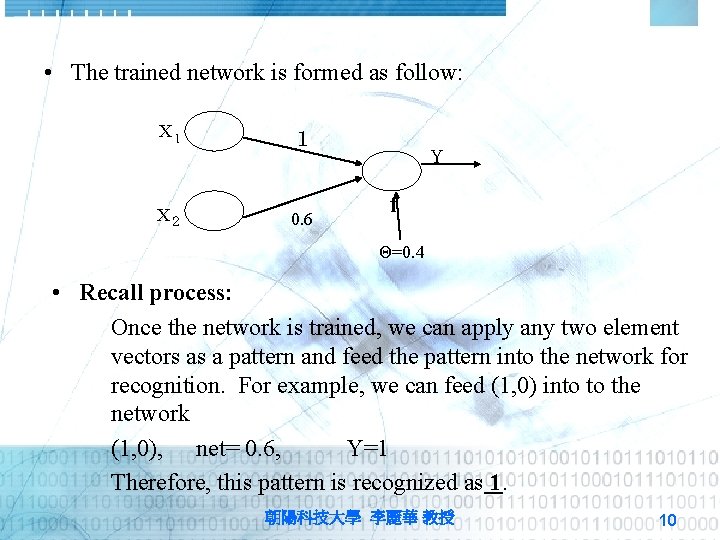

• The trained network is formed as follows:

[Figure: single-node network with inputs X1 (weight 1) and X2 (weight 0.6), node f with Θ = 0.4, and output Y.]

• Recall process: once the network is trained, we can feed any two-element vector into the network as a pattern for recognition. For example, feeding (1, 0) into the network gives net = 0.6 and Y = 1. Therefore, this pattern is recognized as 1.

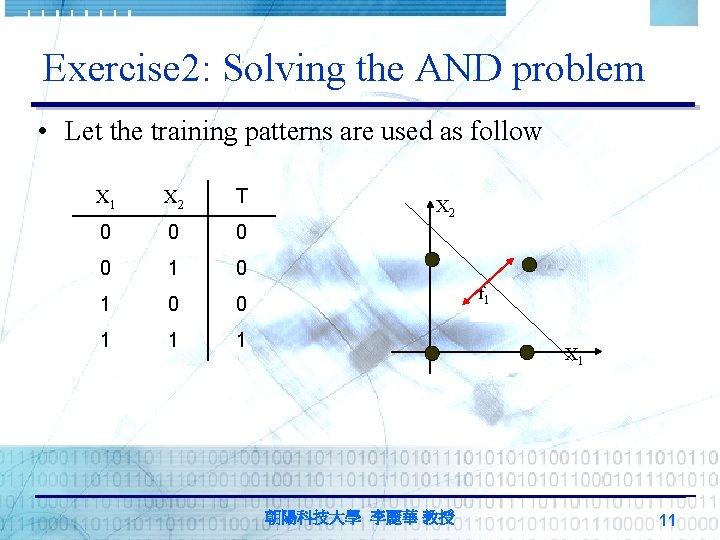

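Exercise 2: Solving the AND problem

• Let the training patterns be as follows.

| X1 | X2 | T |
|----|----|---|
| 0 | 0 | 0 |
| 0 | 1 | 0 |
| 1 | 0 | 0 |
| 1 | 1 | 1 |

[Figure: the four patterns plotted in the X1-X2 plane with a separating line f1.]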

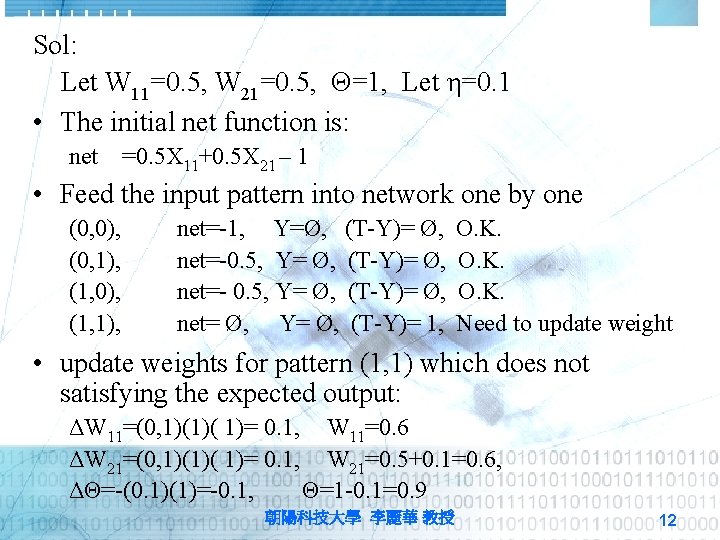

Sol: Let $W_{11}=0.5$, $W_{21}=0.5$, $\Theta=1$, and let $\eta=0.1$.

• The initial net function is $net = 0.5 X_1 + 0.5 X_2 - 1$.

• Feed the input patterns into the network one by one:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (0, 0) | -1 | 0 | 0 | O.K. |
| (0, 1) | -0.5 | 0 | 0 | O.K. |
| (1, 0) | -0.5 | 0 | 0 | O.K. |
| (1, 1) | 0 | 0 | 1 | Need to update weights |

• Update the weights for pattern (1, 1), which does not satisfy the expected output:

$$\Delta W_{11} = (0.1)(1)(1) = 0.1, \qquad W_{11} = 0.5 + 0.1 = 0.6$$

$$\Delta W_{21} = (0.1)(1)(1) = 0.1, \qquad W_{21} = 0.5 + 0.1 = 0.6$$

$$\Delta\Theta = -(0.1)(1) = -0.1, \qquad \Theta = 1 - 0.1 = 0.9$$

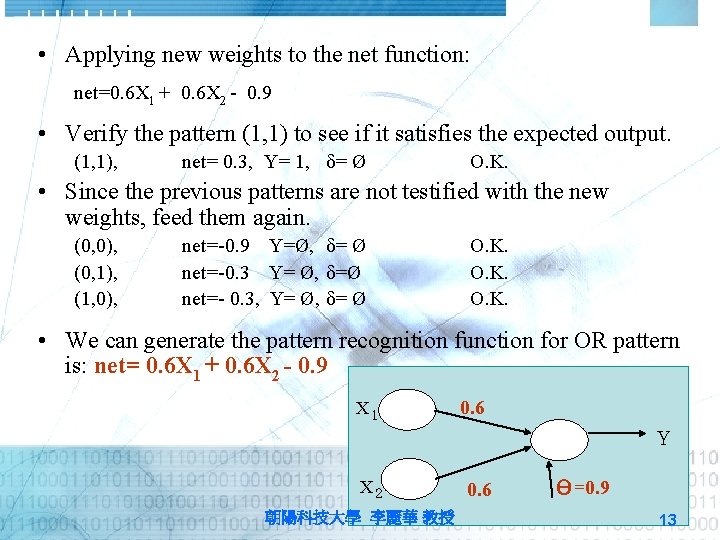

• Applying the new weights to the net function gives $net = 0.6 X_1 + 0.6 X_2 - 0.9$.

• Verify that pattern (1, 1) now satisfies the expected output:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (1, 1) | 0.3 | 1 | 0 | O.K. |

• Since the previous patterns have not been tested with the new weights, feed them again:

| Pattern | net | Y | T - Y | Result |
|---------|-----|---|-------|--------|
| (0, 0) | -0.9 | 0 | 0 | O.K. |
| (0, 1) | -0.3 | 0 | 0 | O.K. |
| (1, 0) | -0.3 | 0 | 0 | O.K. |

• The pattern recognition function for the AND pattern is therefore $net = 0.6 X_1 + 0.6 X_2 - 0.9$.

[Figure: single-node network with inputs X1 (weight 0.6) and X2 (weight 0.6), Θ = 0.9, and output Y.]
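As a short aside, the trained net function can be read as a straight line in the X1-X2 plane. Setting net = 0 gives the decision boundary; dividing by 0.6 is plain algebra on the values above and adds no new assumption.

```latex
0.6\,X_1 + 0.6\,X_2 - 0.9 = 0
\quad\Longleftrightarrow\quad
X_1 + X_2 = 1.5
```

Of the four binary patterns, only (1, 1) has $X_1 + X_2 = 2 > 1.5$, so it is the only one on the $Y = 1$ side of the line, which is exactly the AND function.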

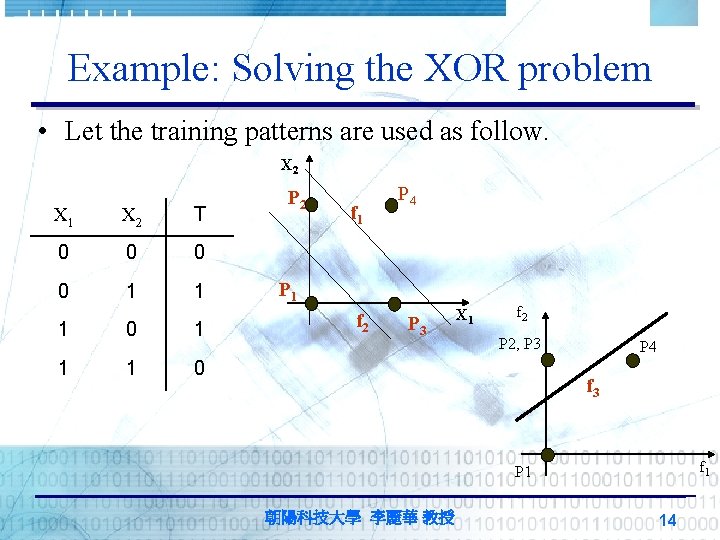

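Example: Solving the XOR problem

• Let the training patterns be as follows.

| Pattern | X1 | X2 | T |
|---------|----|----|---|
| P1 | 0 | 0 | 0 |
| P2 | 0 | 1 | 1 |
| P3 | 1 | 0 | 1 |
| P4 | 1 | 1 | 0 |

[Figure: patterns P1 to P4 plotted in the X1-X2 plane with the lines f1 and f2, and a second plot of P1, P2/P3 and P4 with the line f3.]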

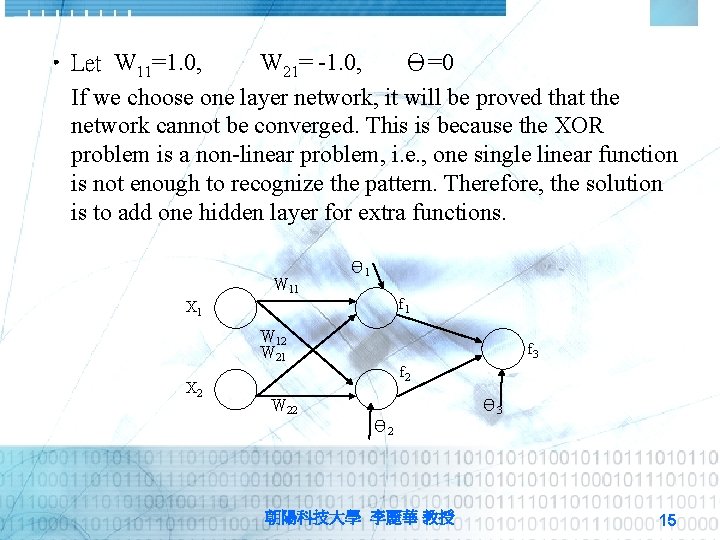

• Let $W_{11}=1.0$, $W_{21}=-1.0$, $\Theta=0$. If we choose a one-layer network, it can be shown that training never converges. This is because the XOR problem is not linearly separable, i.e. one single linear function is not enough to recognize the pattern. Therefore, the solution is to add one hidden layer that provides extra functions.

[Figure: two-layer network with inputs X1 and X2, hidden nodes f1 (Θ1) and f2 (Θ2), output node f3 (Θ3), and weights W11, W12, W21, W22.]
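The failure of the one-layer network on XOR can be observed by running the update rule with an epoch cap. This is a hedged sketch: the cap `max_epochs` and the learning rate 0.1 are assumptions of the sketch, and hitting the cap is an illustration rather than a proof. The guarantee comes from the fact that no single line separates the XOR patterns, so every pass contains at least one error.

```python
XOR_PATTERNS = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def run_epochs(patterns, w, theta, eta=0.1, max_epochs=1000):
    """Return the epoch at which training converged, or None if it never did."""
    w = list(w)
    for epoch in range(1, max_epochs + 1):
        errors = 0
        for x, t in patterns:
            net = sum(wi * xi for wi, xi in zip(w, x)) - theta
            y = 1 if net > 0 else 0
            delta = t - y
            if delta != 0:
                errors += 1
                w = [wi + eta * delta * xi for wi, xi in zip(w, x)]
                theta = theta - eta * delta
        if errors == 0:
            return epoch
    return None  # cap reached without an error-free pass


# Initial values from the slide: W11 = 1.0, W21 = -1.0, theta = 0.
converged_at = run_epochs(XOR_PATTERNS, [1.0, -1.0], 0.0)
print("converged at epoch:", converged_at)
```

Raising `max_epochs` does not help here; the weights keep cycling.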

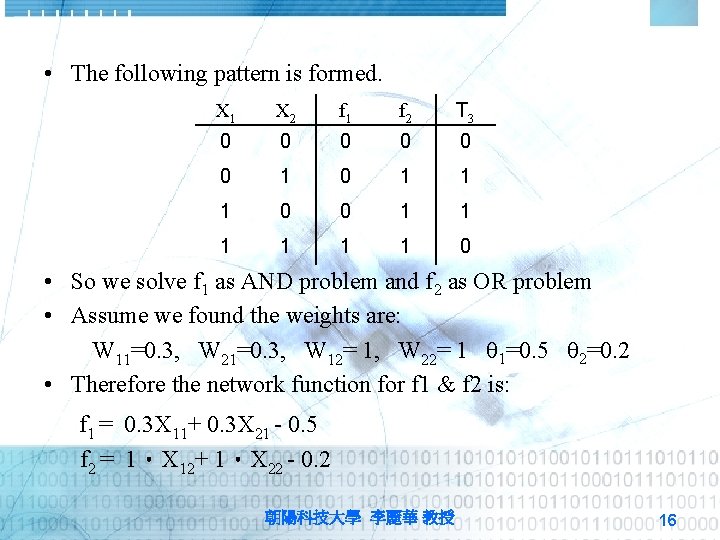

• The following pattern is formed.

| X1 | X2 | f1 | f2 | T3 |
|----|----|----|----|----|
| 0 | 0 | 0 | 0 | 0 |
| 0 | 1 | 0 | 1 | 1 |
| 1 | 0 | 0 | 1 | 1 |
| 1 | 1 | 1 | 1 | 0 |

• So we solve f1 as an AND problem and f2 as an OR problem.

• Assume the weights we found are $W_{11}=0.3$, $W_{21}=0.3$, $W_{12}=1$, $W_{22}=1$, $\theta_1=0.5$, $\theta_2=0.2$.

• Therefore the network functions for f1 and f2 are:

$$f_1 = 0.3 X_{11} + 0.3 X_{21} - 0.5$$

$$f_2 = 1 \cdot X_{12} + 1 \cdot X_{22} - 0.2$$
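The hidden-layer columns of the table can be reproduced from the stated weights. The sketch below assumes the threshold rule Y = 1 if net > 0 from the training slides and applies it to the f1 and f2 functions given above; the helper names are made up for the sketch.

```python
def step(net):
    """Threshold output: 1 if net > 0, otherwise 0."""
    return 1 if net > 0 else 0


def f1(x1, x2):
    """Hidden node 1 (behaves as AND): weights 0.3, 0.3, theta1 = 0.5."""
    return step(0.3 * x1 + 0.3 * x2 - 0.5)


def f2(x1, x2):
    """Hidden node 2 (behaves as OR): weights 1, 1, theta2 = 0.2."""
    return step(1 * x1 + 1 * x2 - 0.2)


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
hidden = [(f1(a, b), f2(a, b)) for a, b in inputs]

for (a, b), (h1, h2) in zip(inputs, hidden):
    print(a, b, "->", "f1 =", h1, "f2 =", h2)
```

In the (f1, f2) space the patterns (0, 1) and (1, 0) collapse onto the same point, which is what makes the problem linearly separable for f3.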

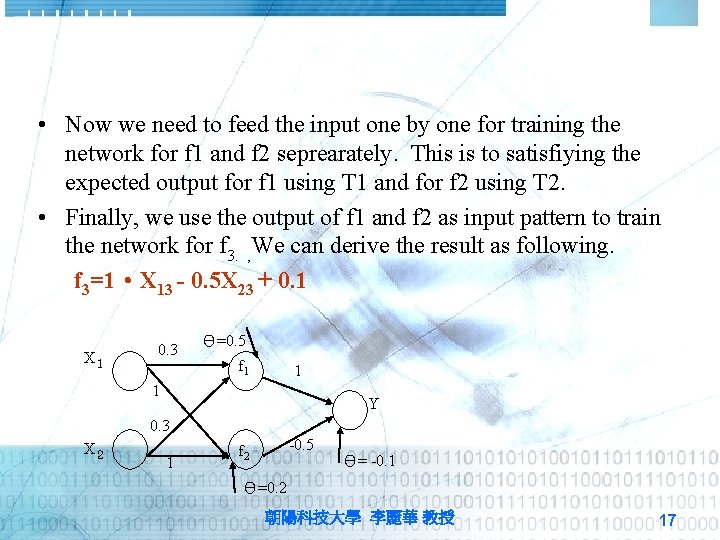

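• Now we need to feed the input patterns one by one to train the network for f1 and f2 separately, so that f1 satisfies its expected output T1 and f2 satisfies its expected output T2.

• Finally, we use the outputs of f1 and f2 as the input pattern to train the network for f3. We can derive the result as follows:

$$f_3 = 1 \cdot X_{13} - 0.5 \cdot X_{23} + 0.1$$

Note that with the threshold rule $Y = 1$ only when $net > 0$, this function gives $net = 0.1$ and hence $Y = 1$ when both inputs are 0, whereas $T_3 = 0$ for that pattern, so the output-layer values should be rechecked.

[Figure: two-layer network with weights 0.3 and 0.3 into f1 (Θ = 0.5), weights 1 and 1 into f2 (Θ = 0.2), and weights 1 and -0.5 into the output node Y (Θ = -0.1).]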
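The complete two-layer network can be sketched end to end. The hidden-layer weights are the ones stated for f1 and f2 in these slides; the output-layer values used here (weight -1 on f1, weight 1 on f2, θ3 = 0.5) are hypothetical, chosen for this sketch so that the strict rule net > 0 reproduces the XOR targets, and are not taken from the slides.

```python
def step(net):
    """Threshold output: 1 if net > 0, otherwise 0."""
    return 1 if net > 0 else 0


def xor_network(x1, x2):
    """Two-layer perceptron: hidden nodes f1 (AND) and f2 (OR), output f3."""
    h1 = step(0.3 * x1 + 0.3 * x2 - 0.5)  # f1, weights from the slides
    h2 = step(1 * x1 + 1 * x2 - 0.2)      # f2, weights from the slides
    # Hypothetical output node (assumption of this sketch):
    # fires when f2 is on and f1 is off.
    return step(-1 * h1 + 1 * h2 - 0.5)


XOR_PATTERNS = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
outputs = [xor_network(a, b) for (a, b), _ in XOR_PATTERNS]
all_ok = all(y == t for y, (_, t) in zip(outputs, XOR_PATTERNS))
```

Other output-layer values work as well; any line in the (f1, f2) plane that separates (0, 1) from both (0, 0) and (1, 1) gives a valid f3.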

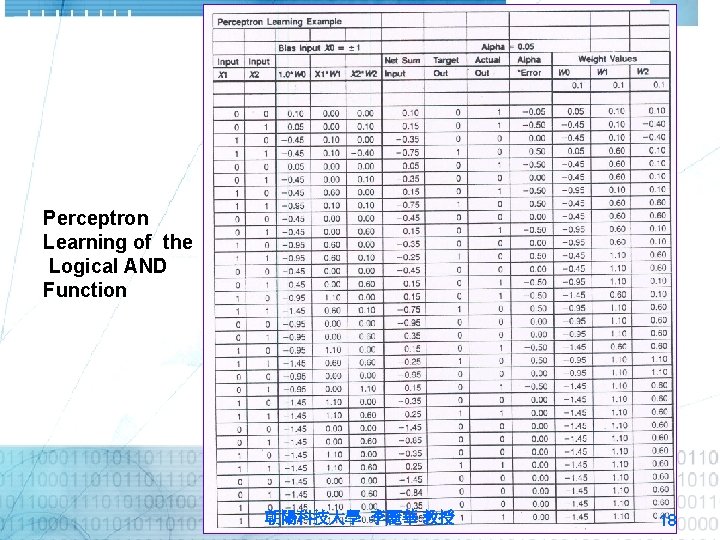

Perceptron Learning of the Logical AND Function

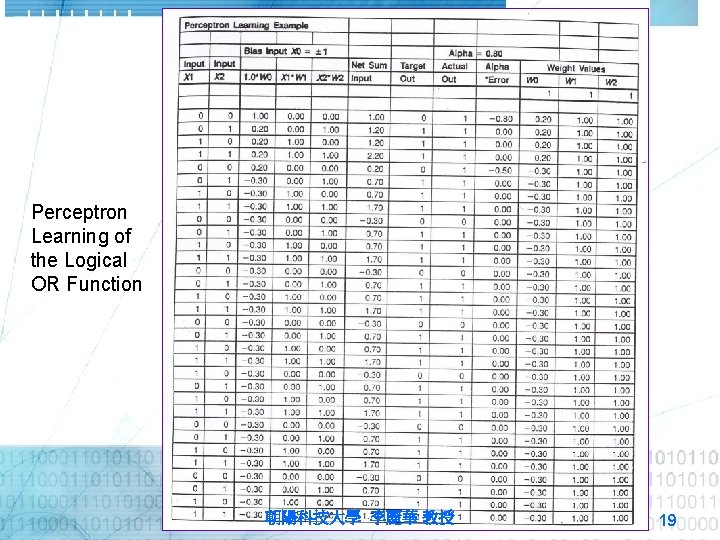

Perceptron Learning of the Logical OR Function

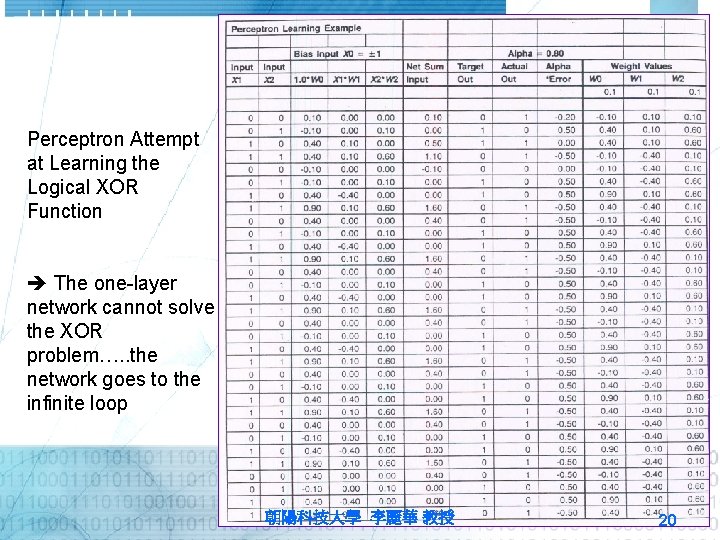

Perceptron Attempt at Learning the Logical XOR Function

The one-layer network cannot solve the XOR problem: training never converges, and the network goes into an infinite loop.
