
Introduction: HPC goes mainstream. Chokchai Box Leangsuksun, Associate Professor, Computer Science, Louisiana Tech University, box@latech.edu

Outline • Why is HPC a critical technology? • Conclusion

Why HPC? • High Performance Computing – Parallel computing, supercomputing – Enabled by multiple high-speed CPUs, networking, software, etc. – Fastest possible solution – Technologies that help solve non-trivial tasks in science, engineering, medicine, business, entertainment, etc. • Time to insight, time to discovery, time to market • BTW, HPC is not Grid!

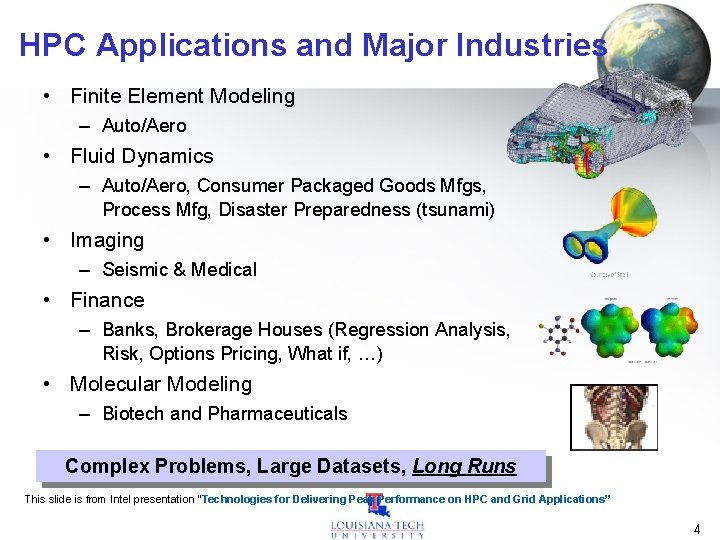

HPC Applications and Major Industries • Finite Element Modeling – Auto/Aero • Fluid Dynamics – Auto/Aero, Consumer Packaged Goods Mfgs, Process Mfg, Disaster Preparedness (tsunami) • Imaging – Seismic & Medical • Finance – Banks, Brokerage Houses (Regression Analysis, Risk, Options Pricing, What-if, …) • Molecular Modeling – Biotech and Pharmaceuticals • Complex Problems, Large Datasets, Long Runs • This slide is from the Intel presentation “Technologies for Delivering Peak Performance on HPC and Grid Applications”

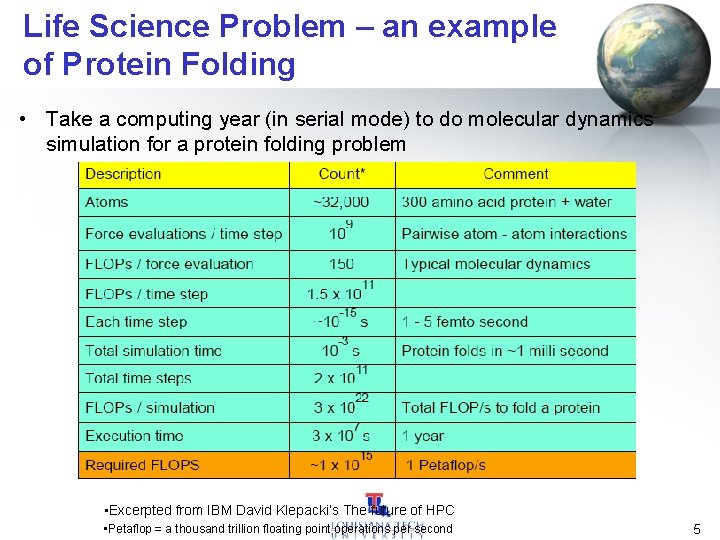

Life Science Problem – an example of Protein Folding • Takes a computing year (in serial mode) to run a molecular dynamics simulation for a protein folding problem • Excerpted from IBM’s David Klepacki, “The future of HPC” • Petaflop = a thousand trillion (10^15) floating point operations per second

Disaster Preparedness - example • Project LEAD – Severe weather prediction (tornado) – OU leads • HPC & dynamic adaptation to weather forecasts • Professor Seidel’s LSU CCT – Hurricane route prediction – Emergency preparedness – Show movie – HPC-enabled simulation

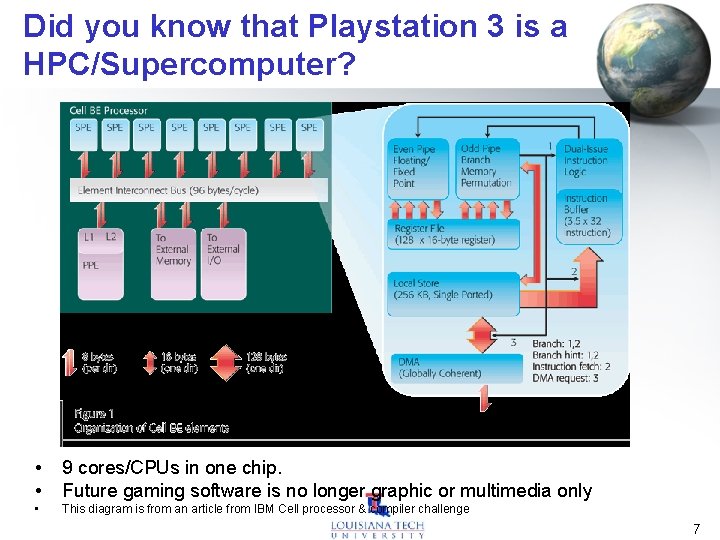

Did you know that PlayStation 3 is an HPC/Supercomputer? • 9 cores/CPUs in one chip • Future gaming software is no longer graphics- or multimedia-only • This diagram is from an IBM article on the Cell processor & compiler challenge

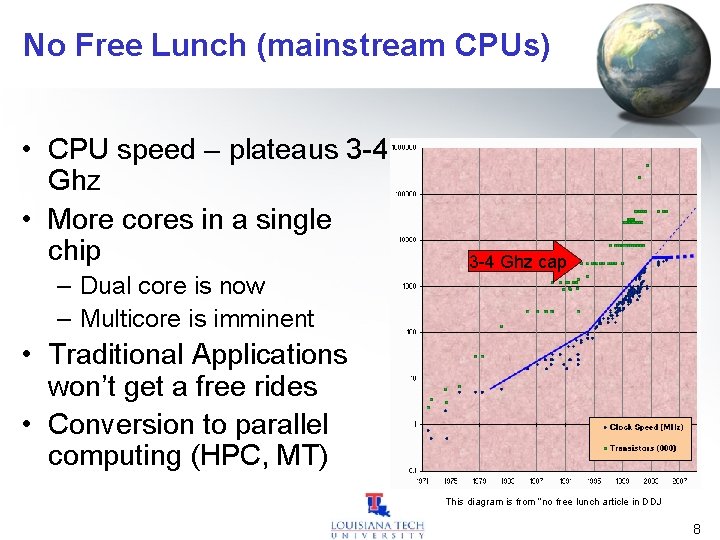

No Free Lunch (mainstream CPUs) • CPU speed – plateaus at 3-4 GHz • More cores in a single chip – Dual core is here now – Multicore is imminent • Traditional applications won’t get a free ride • Conversion to parallel computing (HPC, MT) • This diagram (showing the 3-4 GHz cap) is from the “no free lunch” article in DDJ

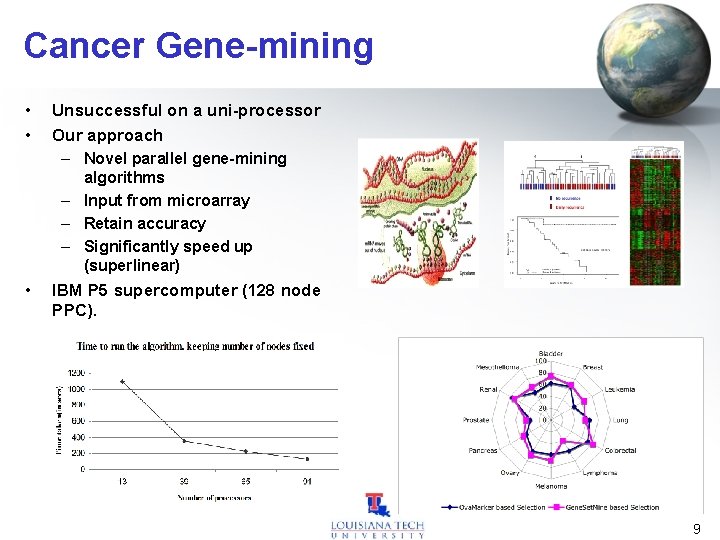

Cancer Gene-mining • Unsuccessful on a uniprocessor • Our approach – Novel parallel gene-mining algorithms – Input from microarray – Retains accuracy – Significant speedup (superlinear) • IBM P5 supercomputer (128-node PPC)
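"Superlinear" on this slide means the speedup exceeds the number of processors used. The following is a minimal sketch of how speedup and parallel efficiency are usually computed. The function names and the timings in the demo are hypothetical illustrations, not measurements from the gene-mining work.

```python
def speedup(t_serial: float, t_parallel: float) -> float:
    """Speedup = serial run time divided by parallel run time."""
    return t_serial / t_parallel


def efficiency(t_serial: float, t_parallel: float, num_procs: int) -> float:
    """Parallel efficiency = speedup per processor (1.0 means linear speedup)."""
    return speedup(t_serial, t_parallel) / num_procs


def is_superlinear(t_serial: float, t_parallel: float, num_procs: int) -> bool:
    """True when the speedup is larger than the processor count."""
    return speedup(t_serial, t_parallel) > num_procs


if __name__ == "__main__":
    # Hypothetical timings (seconds), chosen only to illustrate the definitions.
    t1, tp, p = 1200.0, 8.0, 128
    print("speedup:", speedup(t1, tp))
    print("efficiency:", efficiency(t1, tp, p))
    print("superlinear:", is_superlinear(t1, tp, p))
```

Superlinear speedup is typically attributed to effects such as the per-processor working set fitting into cache or memory once the data is divided; the sketch only classifies a result, it does not explain it.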

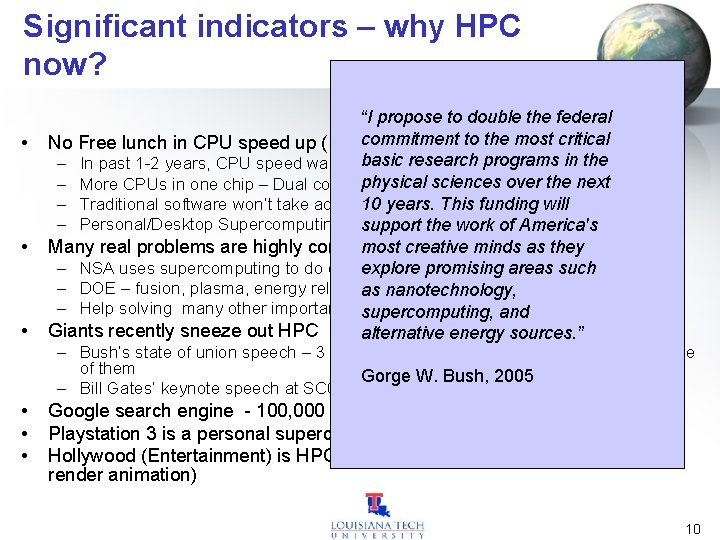

Significant indicators – why HPC now? • No free lunch in CPU speed-up (Intel or AMD) – In the past 1-2 years, CPU speed has flattened at 3+ GHz – More CPUs in one chip: dual-core, multi-core chips – Traditional software won’t take advantage of these new processors – Personal/desktop supercomputing • Many real problems are highly computationally intensive – NSA uses supercomputing to do data mining – DOE: fusion, plasma, energy-related research (including weaponry) – Helps solve problems in many other important areas (nanotech, life science, etc.) • Giants have recently put HPC in the spotlight – Bush’s State of the Union speech: 3 main S&T focus areas, of which supercomputing is one – Bill Gates’ keynote speech at SC05: MS goes after HPC • Google search engine: 100,000 nodes • PlayStation 3 is a personal supercomputing platform • Hollywood (entertainment) is HPC-bound (Pixar: more than 3000 CPUs to render animation) • “I propose to double the federal commitment to the most critical basic research programs in the physical sciences over the next 10 years. This funding will support the work of America's most creative minds as they explore promising areas such as nanotechnology, supercomputing, and alternative energy sources.” – George W. Bush, 2005

HPC preparedness • Build a workforce that understands the HPC paradigm & its applications – HPC/Grid curriculum in IT/CS/CE/ICT – Offer HPC-enabling tracks to other disciplines (engineering, life science, physics, computational chemistry, business, etc.) – Train the business community (e.g., HPC for enterprise; Fluent certification, HA SLA certification) – Bring awareness to the public

Introduction to Parallel Computing • Need more computing power – Improve the operating speed of processors & other components • constrained by the speed of light, thermodynamic laws, & the high financial costs of processor fabrication – Connect multiple processors together & coordinate their computational efforts • parallel computers • allow the sharing of a computational task among multiple processors

How to Run Applications Faster? • There are 3 ways to improve performance: – Work harder – Work smarter – Get help • Computer analogy – Use faster hardware – Use optimized algorithms and techniques to solve computational tasks – Use multiple computers to solve a particular task

Era of Computing • Rapid technical advances – recent advances in VLSI technology – software technology: OS, PL, development methodologies, & tools – grand challenge applications have become the main driving force • Parallel computing – one of the best ways to overcome the speed bottleneck of a single processor – good price/performance ratio of a small cluster-based parallel computer

HPC Level-setting: Definitions • High performance computing is: – Computing that demands more than a single high-market-volume workstation or server can deliver • HPC is based on concurrency: – Concurrency: computing in which multiple tasks are active at the same time • Parallel computing occurs when you use concurrency to: – Solve bigger problems – Solve a fixed-size problem in less time

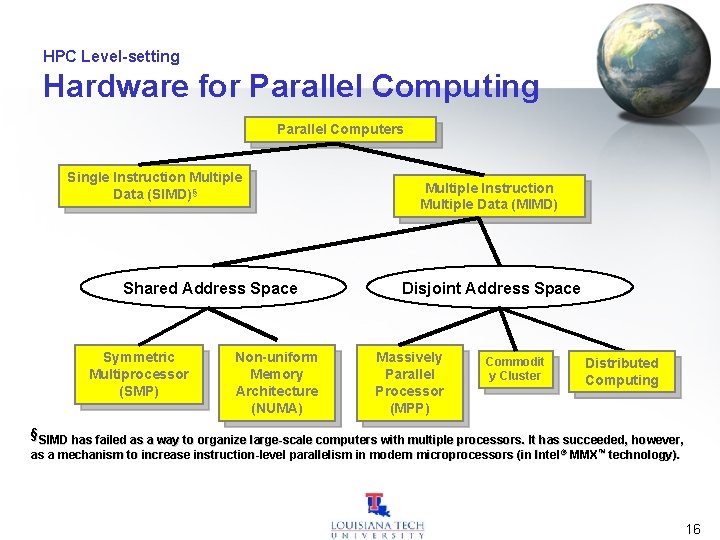

HPC Level-setting: Hardware for Parallel Computing • Parallel computers – Single Instruction Multiple Data (SIMD)§ – Multiple Instruction Multiple Data (MIMD) • Shared address space: Symmetric Multiprocessor (SMP), Non-uniform Memory Architecture (NUMA) • Disjoint address space: Massively Parallel Processor (MPP), commodity cluster, distributed computing §SIMD has failed as a way to organize large-scale computers with multiple processors. It has succeeded, however, as a mechanism to increase instruction-level parallelism in modern microprocessors (as in Intel® MMX™ technology).

Scalable Parallel Computer Architectures • MPP – A large parallel processing system with a shared-nothing architecture – Consists of several hundred nodes with a high-speed interconnection network/switch – Each node consists of a main memory & one or more processors • Runs a separate copy of the OS • SMP – 2-64 processors today – Shared-everything architecture – All processors share all the global resources available – A single copy of the OS runs on these systems

Scalable Parallel Computer Architectures • CC-NUMA – a scalable multiprocessor system having a cache-coherent non-uniform memory access architecture – every processor has a global view of all of the memory • Distributed systems – considered conventional networks of independent computers – have multiple system images, as each node runs its own OS – the individual machines could be combinations of MPPs, SMPs, clusters, & individual computers • Clusters – a collection of workstations or PCs that are interconnected by a high-speed network – work as an integrated collection of resources – have a single system image spanning all its nodes

Cluster Computer and its Architecture • A cluster is a type of parallel or distributed processing system, which consists of a collection of interconnected stand-alone computers cooperatively working together as a single, integrated computing resource • A node – a single or multiprocessor system with memory, I/O facilities, & OS • A cluster – generally 2 or more computers (nodes) connected together – in a single cabinet, or physically separated & connected via a LAN – appears as a single system to users and applications – provides a cost-effective way to gain features and benefits

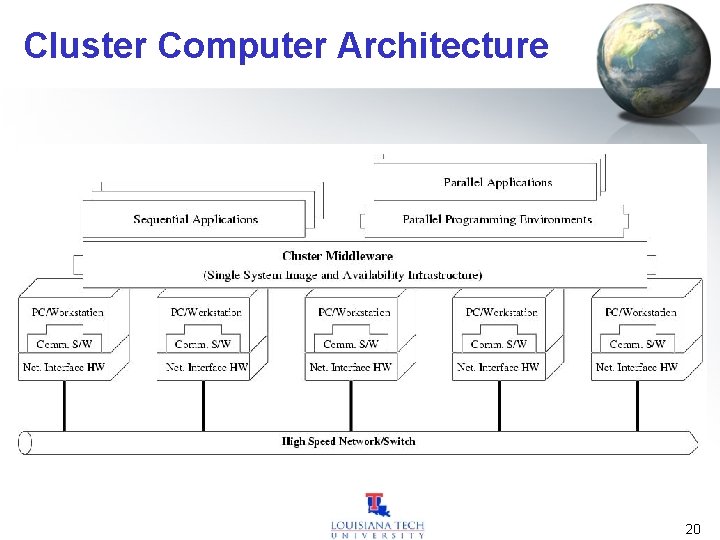

Cluster Computer Architecture

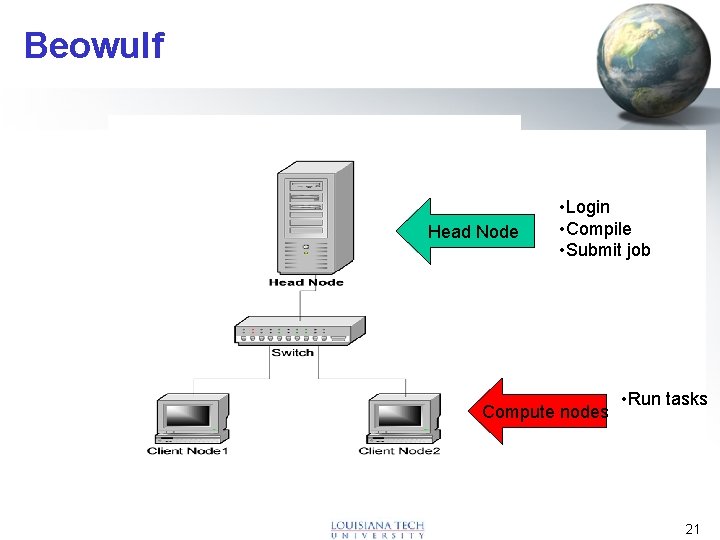

Beowulf • Head node – Login – Compile – Submit job • Compute nodes – Run tasks

Prominent Components of Cluster Computers (I) • Multiple High Performance Computers – PCs – Workstations – SMPs (CLUMPS) – Distributed HPC systems leading to metacomputing

Prominent Components of Cluster Computers (II) • State-of-the-art Operating Systems – Linux (Beowulf) – Microsoft NT (Illinois HPVM) – SUN Solaris (Berkeley NOW) – IBM AIX (IBM SP2) – HP UX (Illinois - PANDA) – Mach (microkernel-based OS) (CMU) – Cluster operating systems (Solaris MC, SCO Unixware, MOSIX (academic project)) – OS gluing layers (Berkeley GLUnix)

Prominent Components of Cluster Computers (III) • High Performance Networks/Switches – Ethernet (10 Mbps), Fast Ethernet (100 Mbps) – InfiniBand (1-8 Gbps) – Gigabit Ethernet (1 Gbps) – SCI (Dolphin - MPI - 12 microsecond latency) – ATM – Myrinet (1.2 Gbps) – Digital Memory Channel – FDDI
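To get a feel for what these bandwidth figures mean for a parallel job, the sketch below estimates how long one message takes on some of the listed networks. It uses the nominal bandwidths quoted on the slide and a simple latency-plus-size/bandwidth model; the 1 MB message size, the function name, and the default of zero latency are assumptions made for illustration, and real throughput is lower than the nominal rate.

```python
# Nominal link bandwidths quoted on the slide, in bits per second.
LINK_BANDWIDTH_BPS = {
    "Ethernet": 10e6,
    "Fast Ethernet": 100e6,
    "Gigabit Ethernet": 1e9,
    "Myrinet": 1.2e9,
}


def transfer_seconds(message_bytes: int, bandwidth_bps: float,
                     latency_s: float = 0.0) -> float:
    """Simple model: fixed latency plus message size divided by bandwidth."""
    return latency_s + (message_bytes * 8) / bandwidth_bps


if __name__ == "__main__":
    message_bytes = 1_000_000  # hypothetical 1 MB message
    for name, bps in LINK_BANDWIDTH_BPS.items():
        ms = transfer_seconds(message_bytes, bps) * 1e3
        print(f"{name:>16}: {ms:8.2f} ms")
```

For small messages the latency term dominates, which is why the slide quotes a latency figure for SCI rather than a bandwidth.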

Prominent Components of Cluster Computers (IV) • Network Interface Card – Myrinet has its own NIC – InfiniBand (HCA) – User-level access support

Prominent Components of Cluster Computers (VI) • Cluster Middleware – Single System Image (SSI) – System Availability (SA) Infrastructure • Hardware – DEC Memory Channel, DSM (Alewife, DASH), SMP Techniques • Operating System Kernel/Gluing Layers – Solaris MC, Unixware, GLUnix • Applications and Subsystems – Applications (system management and electronic forms) – Runtime systems (software DSM, PFS, etc.) – Resource management and scheduling software (RMS): CODINE, LSF, PBS, NQS, etc.

Prominent Components of Cluster Computers (VII) • Parallel Programming Environments and Tools – Threads (PCs, SMPs, NOW, ...) • POSIX Threads • Java Threads – MPI • Available on Linux, NT, and many supercomputers – PVM – Software DSMs (Shmem) – Compilers • C/C++/Java • Parallel programming with C++ (MIT Press book) – RAD (rapid application development) tools • GUI-based tools for PP modeling – Debuggers – Performance analysis tools – Visualization tools
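As an illustration of the thread-based style listed above, here is a minimal sketch that splits a summation across several threads, each writing its partial result into its own slot. It uses Python's standard `threading` module rather than POSIX or Java threads, and the function name `parallel_sum` is a hypothetical choice. Note that CPython's global interpreter lock means this shows the decomposition pattern only; it should not be expected to run CPU-bound work faster.

```python
import threading


def parallel_sum(values, num_threads=4):
    """Sum a list by giving each thread one contiguous chunk."""
    # Ceiling division so every element lands in exactly one chunk.
    chunk = (len(values) + num_threads - 1) // num_threads
    partial = [0] * num_threads  # one slot per thread, so no lock is needed

    def worker(i):
        partial[i] = sum(values[i * chunk:(i + 1) * chunk])

    threads = [threading.Thread(target=worker, args=(i,))
               for i in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # wait for all partial sums before combining them
    return sum(partial)


if __name__ == "__main__":
    print(parallel_sum(list(range(1000)), num_threads=4))
```

The same split, compute, and combine structure carries over to message-passing tools such as MPI, where the partial results are exchanged over the network instead of through shared memory.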

Prominent Components of Cluster Computers (VIII) • Applications – Sequential – Parallel/Distributed (cluster-aware applications) • Grand Challenge applications – Weather forecasting – Quantum chemistry – Molecular biology modeling – Engineering analysis (CAD/CAM) – … • PDBs, web servers, data mining

Key Operational Benefits of Clustering • High Performance • Expandability and Scalability • High Throughput • High Availability

Divide and Conquer • Say 1 CPU – 1,000,000 elements – Numerical processing for 1 element = 0.1 secs – One computer will take 100,000 secs ≈ 27.8 hrs • Say 100 CPUs – ≈ 0.28 hr ≈ 16.7 mins (assuming the work divides evenly and communication is free)
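The arithmetic on this slide can be checked with a few lines of Python. This is a sketch under stated assumptions: it takes 1,000,000 elements (the count for which 0.1 s per element gives the slide's 100,000 s total), assumes the work divides perfectly evenly, and ignores communication. The function names are illustrative only.

```python
def serial_seconds(num_elements: int, seconds_per_element: float) -> float:
    """Time for one CPU to process every element in turn."""
    return num_elements * seconds_per_element


def ideal_parallel_seconds(num_elements: int, seconds_per_element: float,
                           num_cpus: int) -> float:
    """Best case: the work splits evenly and nothing is spent communicating."""
    return serial_seconds(num_elements, seconds_per_element) / num_cpus


if __name__ == "__main__":
    t1 = serial_seconds(1_000_000, 0.1)
    t100 = ideal_parallel_seconds(1_000_000, 0.1, 100)
    print(f"1 CPU:    {t1:,.0f} s = {t1 / 3600:.2f} h")
    print(f"100 CPUs: {t100:,.0f} s = {t100 / 60:.1f} min")
```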

Parallel Computing • A big application is divided into multiple tasks • Total computation time consists of – Computing time – Communication time
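The split between computing time and communication time explains why adding CPUs eventually stops helping. The sketch below uses a deliberately simple, hypothetical cost model that is not from the slides: compute time shrinks as 1/N, while communication time grows linearly with the number of extra CPUs. The function names and the 10 s per-CPU communication cost are assumptions for illustration.

```python
def total_seconds(serial_compute_s: float, num_cpus: int,
                  comm_s_per_extra_cpu: float) -> float:
    """Total time = computing time + communication time (toy model)."""
    computing = serial_compute_s / num_cpus
    # Assumed model: each additional CPU adds a fixed coordination cost.
    communication = comm_s_per_extra_cpu * (num_cpus - 1)
    return computing + communication


def speedup(serial_compute_s: float, num_cpus: int,
            comm_s_per_extra_cpu: float) -> float:
    """Speedup relative to running everything on one CPU."""
    return serial_compute_s / total_seconds(
        serial_compute_s, num_cpus, comm_s_per_extra_cpu)


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        t = total_seconds(100_000, n, 10.0)
        s = speedup(100_000, n, 10.0)
        print(f"{n:>5} CPUs: {t:>10,.0f} s  (speedup {s:.1f}x)")
```

Under this model the total time falls at first and then rises again once communication outweighs the remaining compute work, so the best CPU count depends on the ratio between the two.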

Summary • HPC helps accelerate time to insight, time to discovery, and time to market for challenging problems • Divide and conquer – Computing vs. communication time • Cluster computing is a predominant HPC system

- Slides: 32