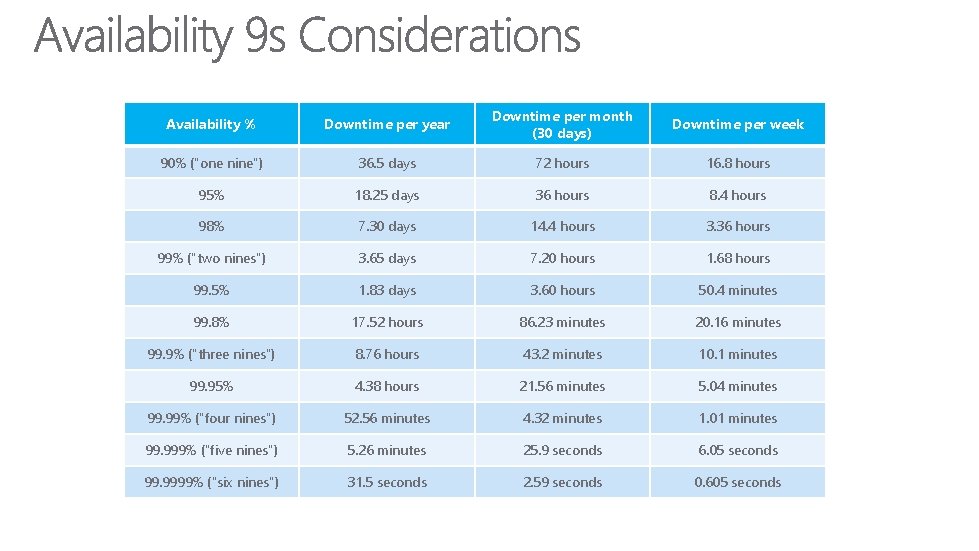


Introduction

| Availability % | Downtime per year | Downtime per month (30 days) | Downtime per week |
| --- | --- | --- | --- |
| 90% ("one nine") | 36.5 days | 72 hours | 16.8 hours |
| 95% | 18.25 days | 36 hours | 8.4 hours |
| 98% | 7.30 days | 14.4 hours | 3.36 hours |
| 99% ("two nines") | 3.65 days | 7.20 hours | 1.68 hours |
| 99.5% | 1.83 days | 3.60 hours | 50.4 minutes |
| 99.8% | 17.52 hours | 86.23 minutes | 20.16 minutes |
| 99.9% ("three nines") | 8.76 hours | 43.2 minutes | 10.1 minutes |
| 99.95% | 4.38 hours | 21.56 minutes | 5.04 minutes |
| 99.99% ("four nines") | 52.56 minutes | 4.32 minutes | 1.01 minutes |
| 99.999% ("five nines") | 5.26 minutes | 25.9 seconds | 6.05 seconds |
| 99.9999% ("six nines") | 31.5 seconds | 2.59 seconds | 0.605 seconds |
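The downtime figures in the table follow from simple arithmetic: the unavailable fraction multiplied by the length of the period. The sketch below reproduces that calculation. The function name and the period lengths (365-day year, 30-day month, 7-day week) are assumptions made for illustration, so results can differ slightly from table entries that were rounded or computed with a different month length.

```python
# Sketch: downtime budget implied by an availability percentage.
# Assumed period lengths: 365-day year, 30-day month, 7-day week.
SECONDS_PER_DAY = 24 * 60 * 60
PERIOD_DAYS = {"year": 365, "month": 30, "week": 7}


def downtime_seconds(availability_percent: float, period: str) -> float:
    """Seconds of downtime allowed in one period at the given availability."""
    if not 0.0 <= availability_percent <= 100.0:
        raise ValueError("availability_percent must be between 0 and 100")
    if period not in PERIOD_DAYS:
        raise ValueError(f"unknown period: {period!r}")
    unavailable_fraction = (100.0 - availability_percent) / 100.0
    return unavailable_fraction * PERIOD_DAYS[period] * SECONDS_PER_DAY


if __name__ == "__main__":
    for nines in (99.0, 99.9, 99.99, 99.999):
        hours_per_year = downtime_seconds(nines, "year") / 3600
        minutes_per_month = downtime_seconds(nines, "month") / 60
        print(f"{nines}%: {hours_per_year:.2f} h/year, "
              f"{minutes_per_month:.2f} min/month")
```

Dividing the result by 60 or 3600 gives minutes or hours, which is how the table switches units as the budget shrinks.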

Overview

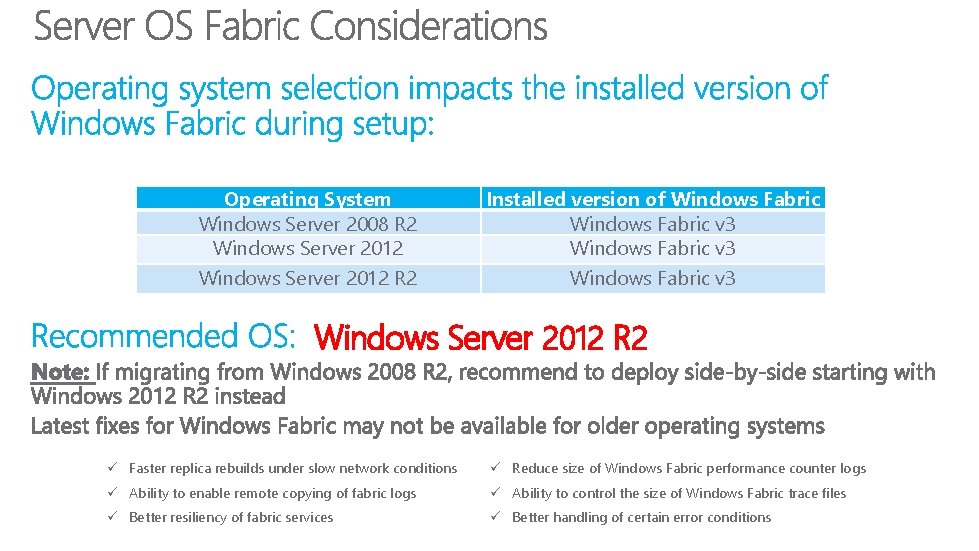

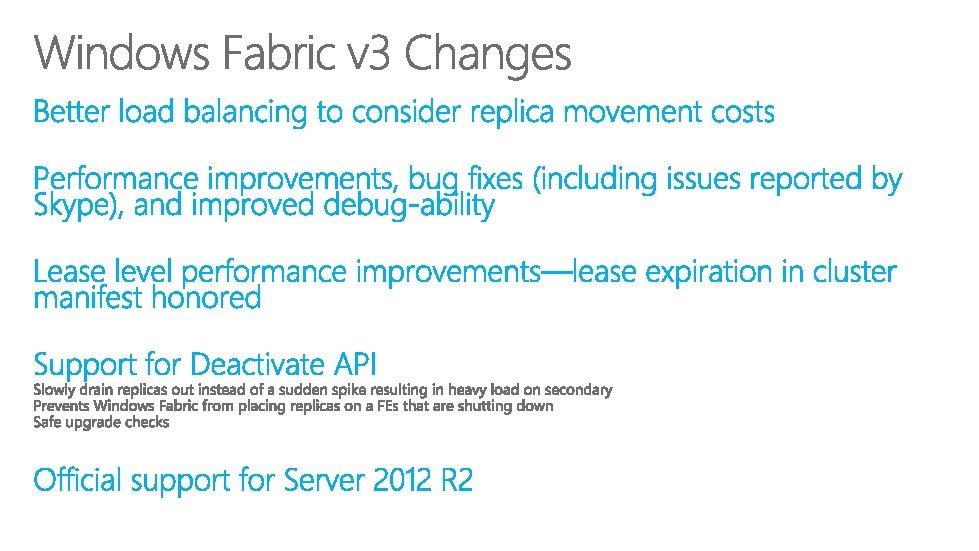

Operating System: Windows Server 2008 R2, Windows Server 2012 R2

Installed version of Windows Fabric: v3 on Windows Server 2012 R2

- Faster replica rebuilds under slow network conditions
- Reduce size of Windows Fabric performance counter logs
- Ability to enable remote copying of fabric logs
- Ability to control the size of Windows Fabric trace files
- Better resiliency of fabric services
- Better handling of certain error conditions

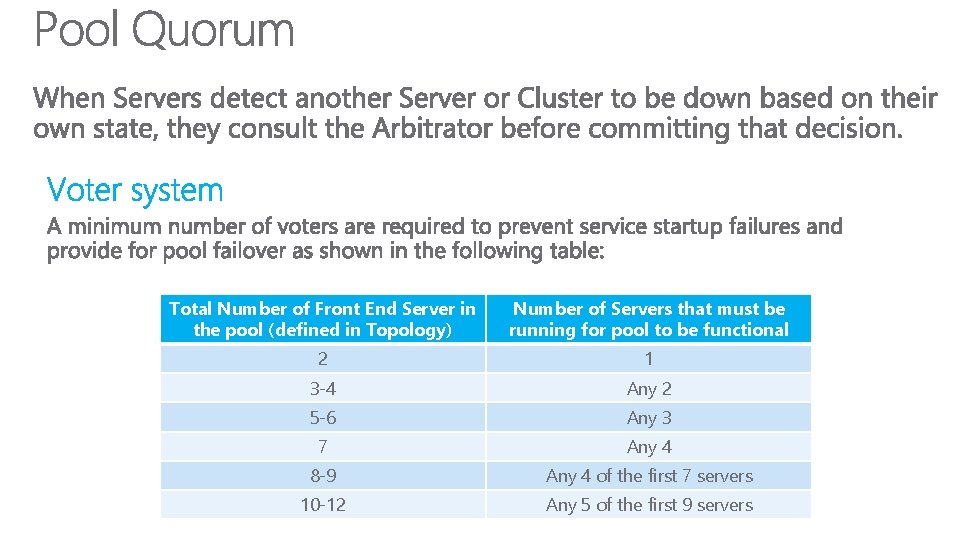

| Total number of Front End Servers in the pool (defined in Topology) | Number of servers that must be running for the pool to be functional |
| --- | --- |
| 2 | 1 |
| 3-4 | Any 2 |
| 5-6 | Any 3 |
| 7 | Any 4 |
| 8-9 | Any 4 of the first 7 servers |
| 10-12 | Any 5 of the first 9 servers |
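The quorum table can be read as a lookup: given the pool size, how many servers must be running, and whether only the first N servers count. The sketch below encodes the table rows as data. The function names are hypothetical, and numbering servers 1..N in topology order is an assumption made for illustration.

```python
from typing import Iterable, Optional, Tuple

# (smallest pool size, largest pool size, servers required,
#  "first N servers" restriction or None), copied from the table rows.
QUORUM_RULES = [
    (2, 2, 1, None),
    (3, 4, 2, None),
    (5, 6, 3, None),
    (7, 7, 4, None),
    (8, 9, 4, 7),
    (10, 12, 5, 9),
]


def min_running_servers(pool_size: int) -> Tuple[int, Optional[int]]:
    """Return (servers required, first-N restriction) for a pool size."""
    for low, high, required, first_n in QUORUM_RULES:
        if low <= pool_size <= high:
            return required, first_n
    raise ValueError(f"pool size {pool_size} is not covered by the table")


def pool_is_functional(pool_size: int, running: Iterable[int]) -> bool:
    """Check the rule for a set of running servers.

    Assumption: servers are numbered 1..pool_size in topology order.
    """
    required, first_n = min_running_servers(pool_size)
    limit = first_n if first_n is not None else pool_size
    counted = {index for index in running if 1 <= index <= limit}
    return len(counted) >= required
```

For a 9-server pool, for example, `pool_is_functional(9, {1, 2, 8, 9})` is false under this reading because only two of the first seven servers are running.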


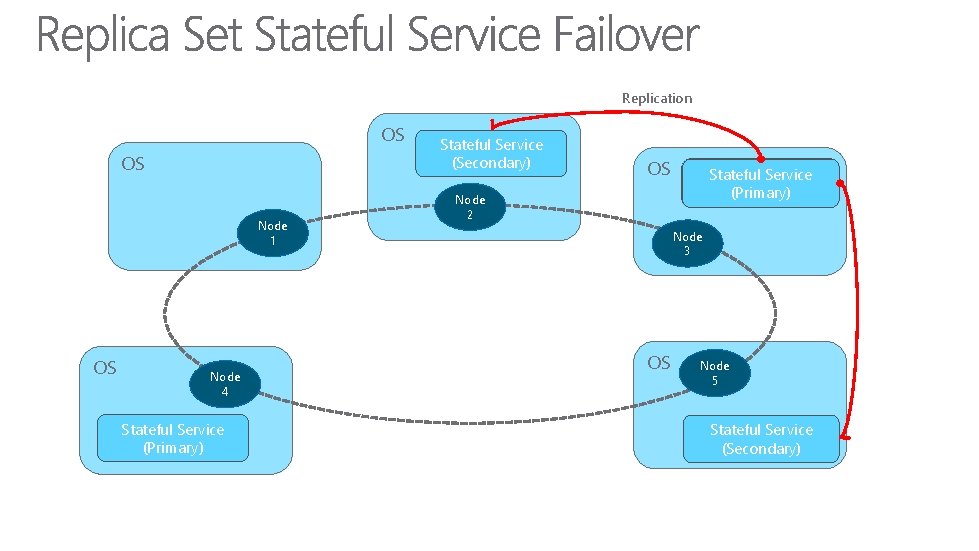

Diagram: Replication. Five nodes (Node 1 to Node 5), each running an OS and hosting Stateful Service replicas marked Primary or Secondary.

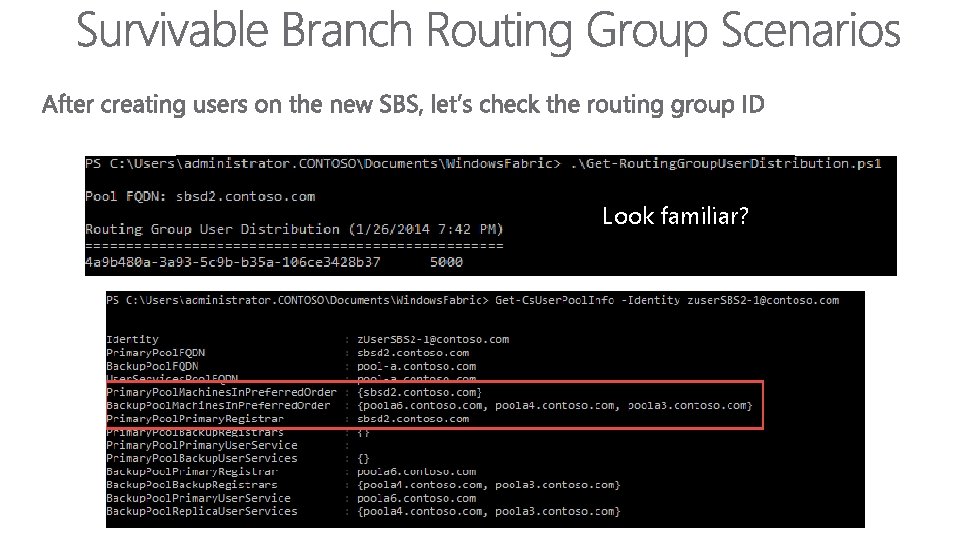

Look familiar?

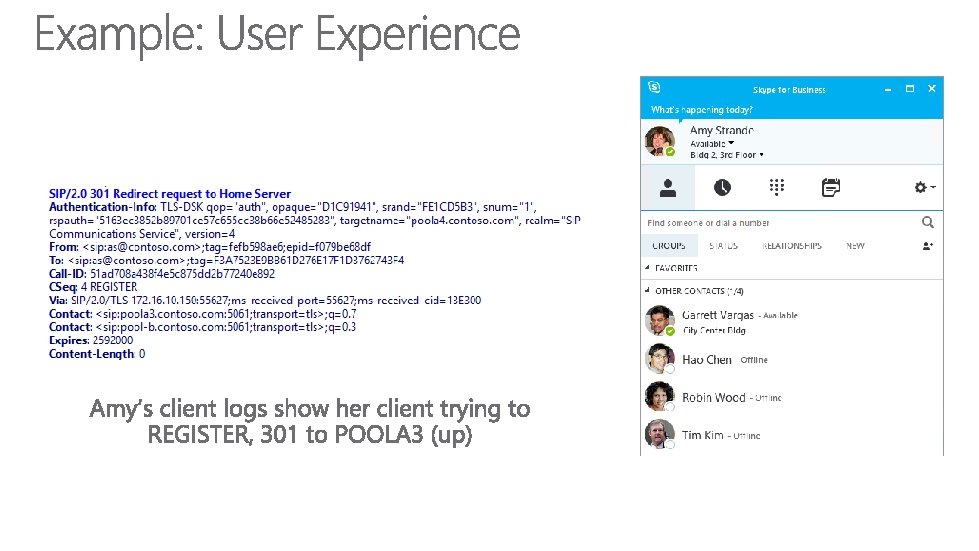

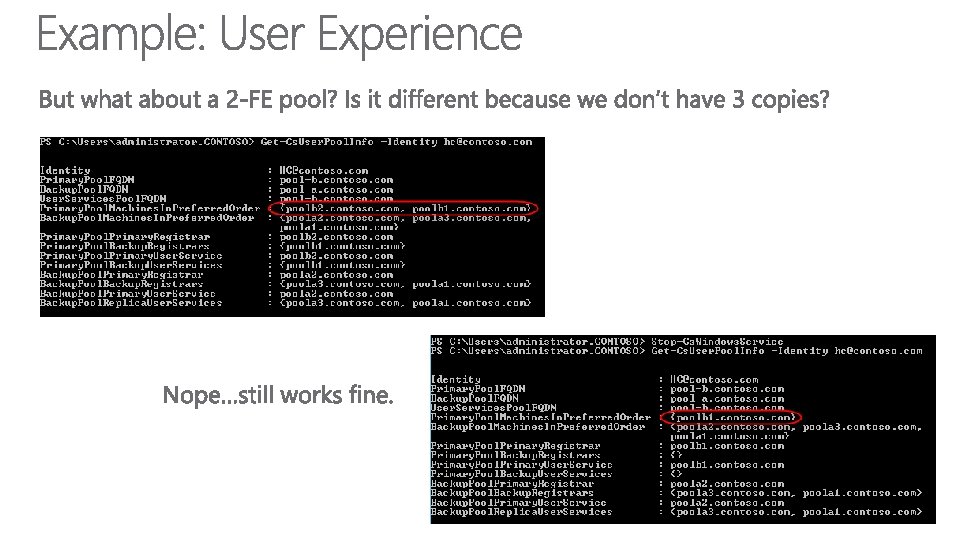

Primary Copy Offline

All Copies Offline

Patching

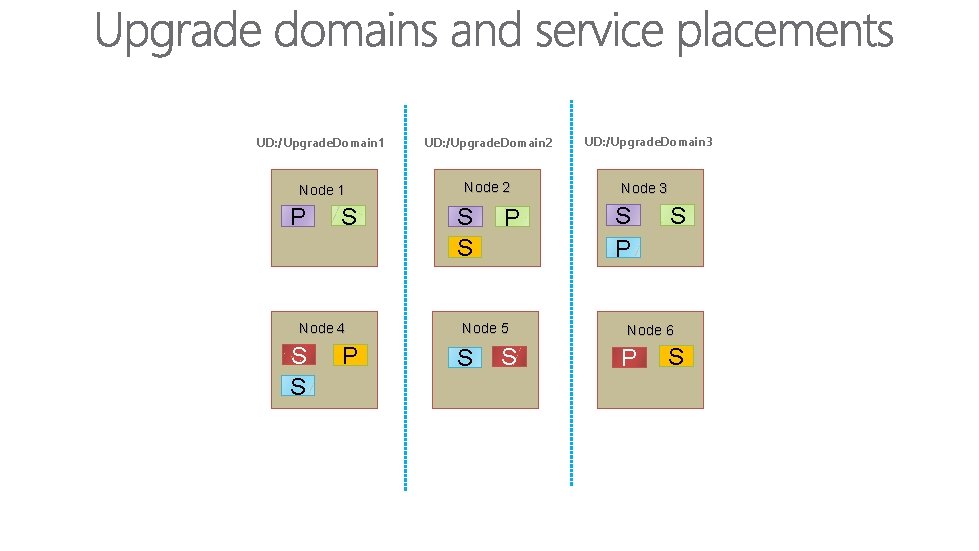

Diagram: Nodes 1 to 6 spread across three upgrade domains (UD:/UpgradeDomain1, UD:/UpgradeDomain2, UD:/UpgradeDomain3), each node hosting primary (P) and secondary (S) replicas.

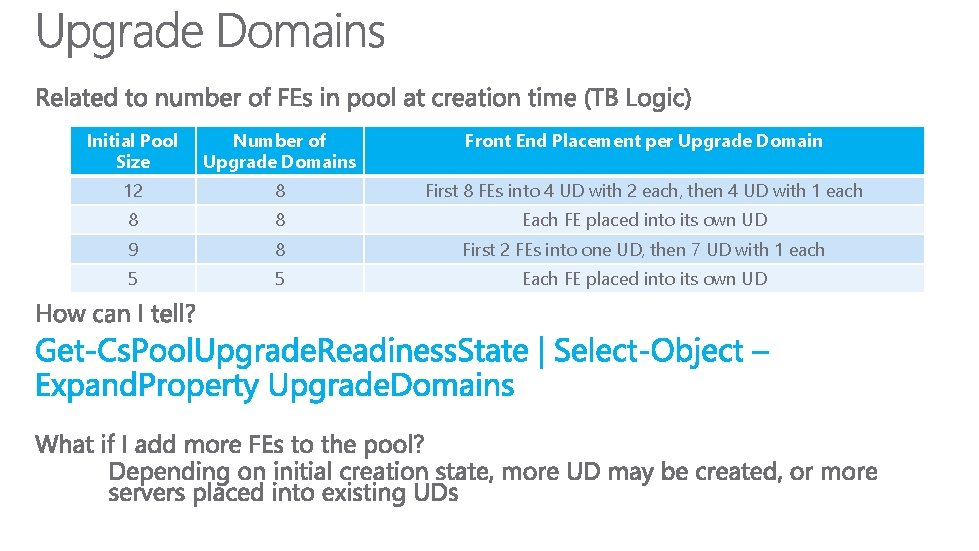

| Initial Pool Size | Number of Upgrade Domains | Front End Placement per Upgrade Domain |
| --- | --- | --- |
| 12 | 8 | First 8 FEs into 4 UDs with 2 each, then 4 UDs with 1 each |
| 8 | 8 | Each FE placed into its own UD |
| 9 | 8 | First 2 FEs into one UD, then 7 UDs with 1 each |
| 5 | 5 | Each FE placed into its own UD |
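The four rows in the placement table are consistent with a simple rule: use at most 8 upgrade domains, and when the pool has more Front Ends than domains, the first domains take two servers each. The sketch below implements that inferred rule. The cap of 8, the upper pool size of 12, and the function name are assumptions drawn from the table, not a documented algorithm.

```python
from typing import List

MAX_UPGRADE_DOMAINS = 8   # assumption: largest UD count shown in the table
MAX_POOL_SIZE = 12        # assumption: largest pool size shown in the table


def upgrade_domain_sizes(pool_size: int) -> List[int]:
    """Return the number of Front Ends placed in each upgrade domain."""
    if not 1 <= pool_size <= MAX_POOL_SIZE:
        raise ValueError(f"pool size must be 1..{MAX_POOL_SIZE}")
    domains = min(pool_size, MAX_UPGRADE_DOMAINS)
    doubled = pool_size - domains  # domains that must hold two Front Ends
    return [2] * doubled + [1] * (domains - doubled)


if __name__ == "__main__":
    for size in (12, 8, 9, 5):
        print(size, upgrade_domain_sizes(size))
```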

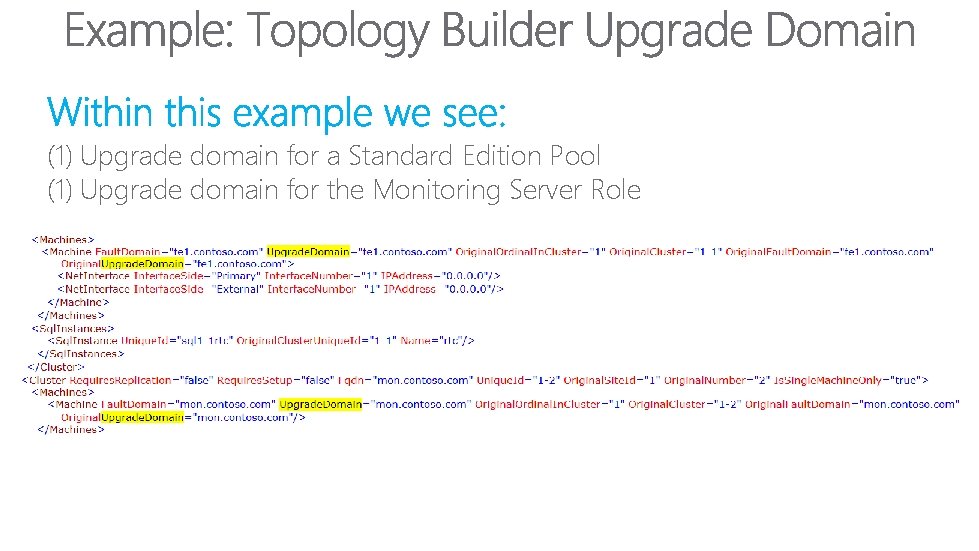

- (1) Upgrade domain for a Standard Edition Pool
- (1) Upgrade domain for the Monitoring Server Role

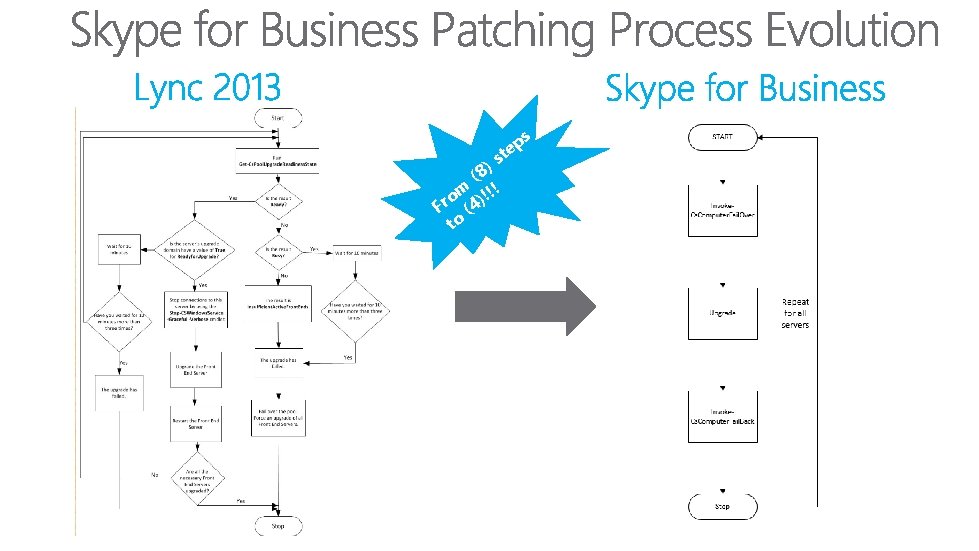

From (8) steps to (4)!!!

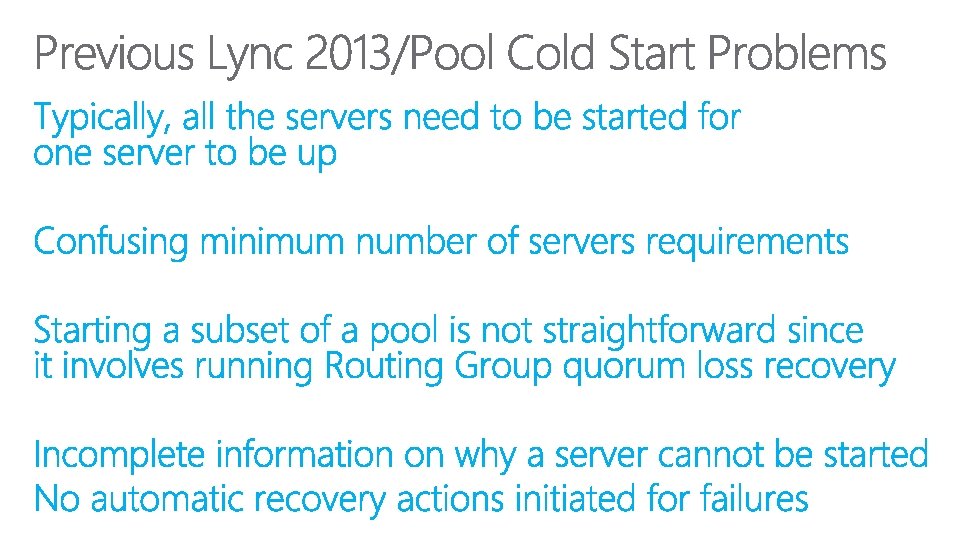

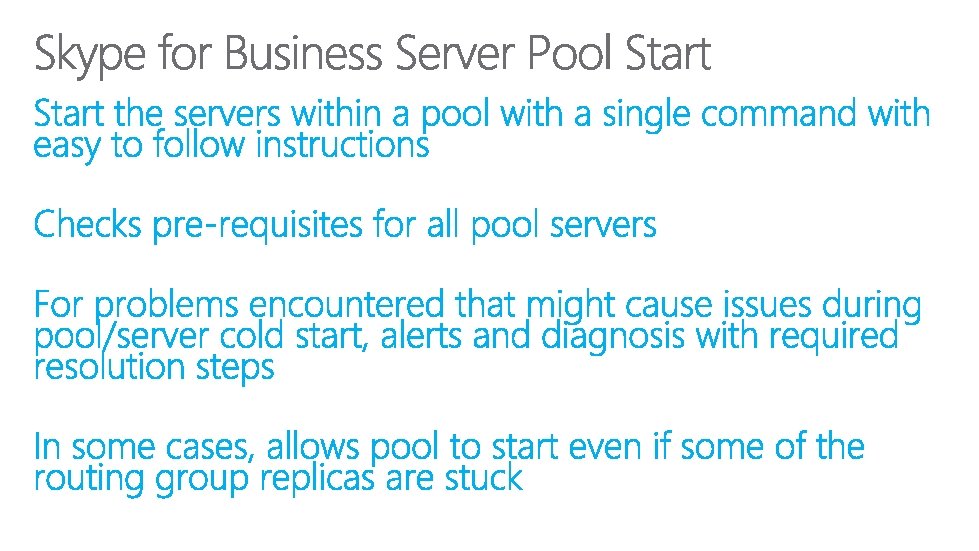

Pool Cold Start

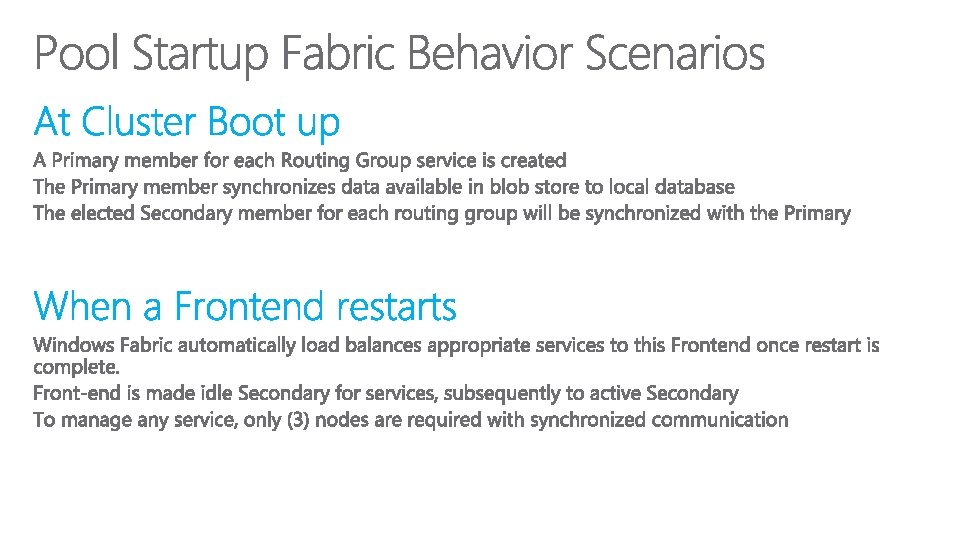

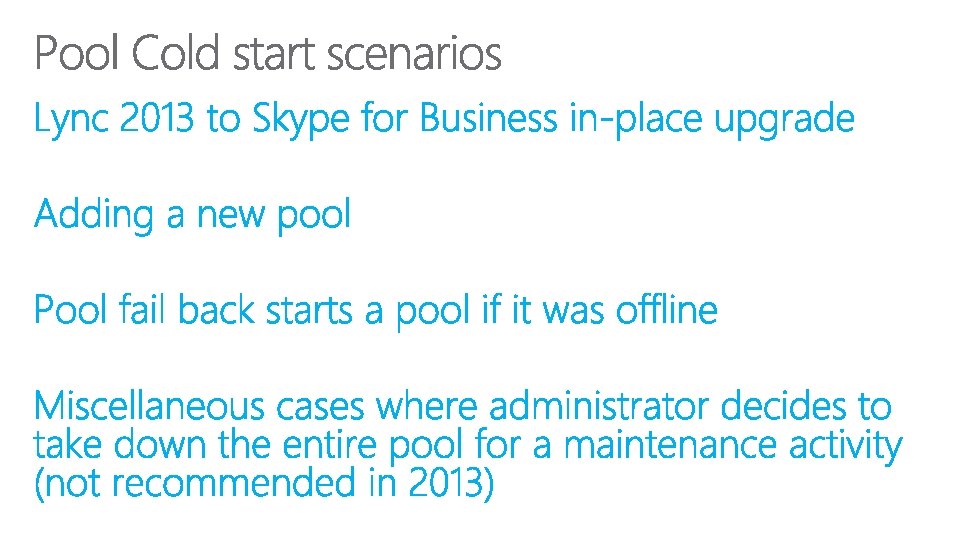

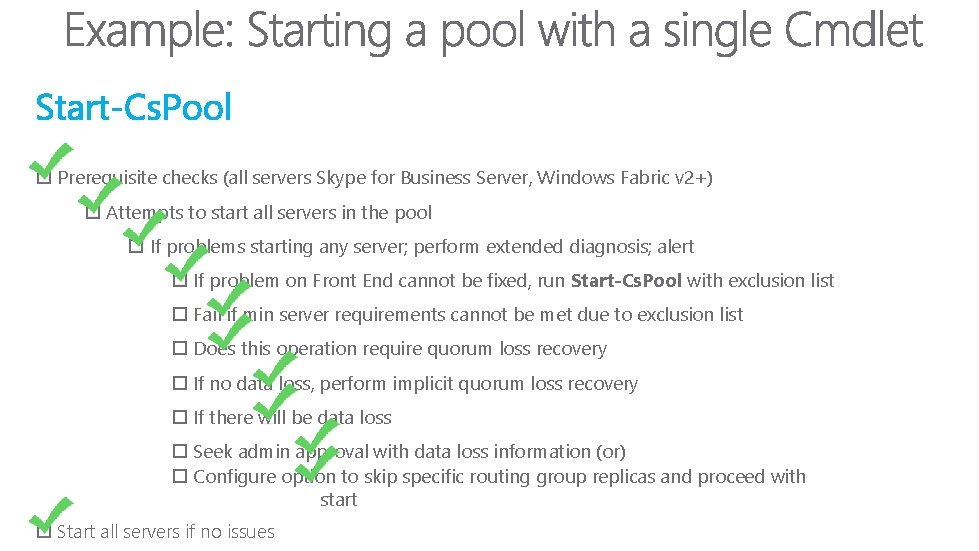

- Prerequisite checks (all servers running Skype for Business Server, Windows Fabric v2+)
- Attempts to start all servers in the pool
- If there are problems starting any server: perform extended diagnosis and alert
- If a problem on a Front End cannot be fixed, run Start-CsPool with an exclusion list
- Fail if minimum server requirements cannot be met due to the exclusion list
- Does this operation require quorum loss recovery?
  - If no data loss, perform implicit quorum loss recovery
  - If there will be data loss:
    - Seek admin approval with data loss information, or
    - Configure option to skip specific routing group replicas and proceed with start
- Start all servers if no issues
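The cold-start sequence above is essentially a decision flow. The sketch below models only that flow, as a way to see which branch ends where; it is not how Start-CsPool is implemented. Every name in it (the dataclass, its fields, the step strings) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ColdStartInput:
    """Hypothetical description of a pool before a cold start."""
    servers: List[str]
    min_servers_required: int
    unfixable: List[str] = field(default_factory=list)  # goes on the exclusion list
    needs_quorum_loss_recovery: bool = False
    data_loss_expected: bool = False
    admin_approved: bool = False
    skip_replicas_configured: bool = False


def plan_cold_start(state: ColdStartInput) -> List[str]:
    """Return the ordered steps the flow would take for this input."""
    steps = ["prerequisite checks", "attempt to start all servers"]
    exclusion = [name for name in state.servers if name in state.unfixable]
    if exclusion:
        steps.append("extended diagnosis and alert")
        steps.append("retry with exclusion list: " + ", ".join(exclusion))
    if len(state.servers) - len(exclusion) < state.min_servers_required:
        steps.append("fail: minimum server requirement not met")
        return steps
    if state.needs_quorum_loss_recovery:
        if not state.data_loss_expected:
            steps.append("implicit quorum loss recovery")
        elif state.admin_approved:
            steps.append("quorum loss recovery with admin approval")
        elif state.skip_replicas_configured:
            steps.append("skip specified routing group replicas")
        else:
            steps.append("stop: seek admin approval with data loss information")
            return steps
    steps.append("start servers")
    return steps
```

Calling `plan_cold_start` with a healthy three-server pool returns the three-step happy path, while an input with expected data loss and no approval ends at the approval step.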

Overview


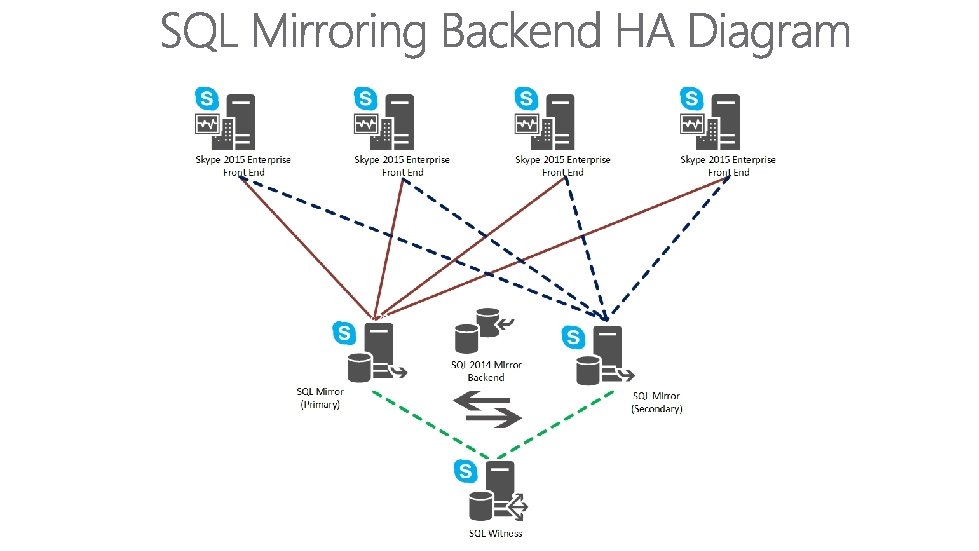

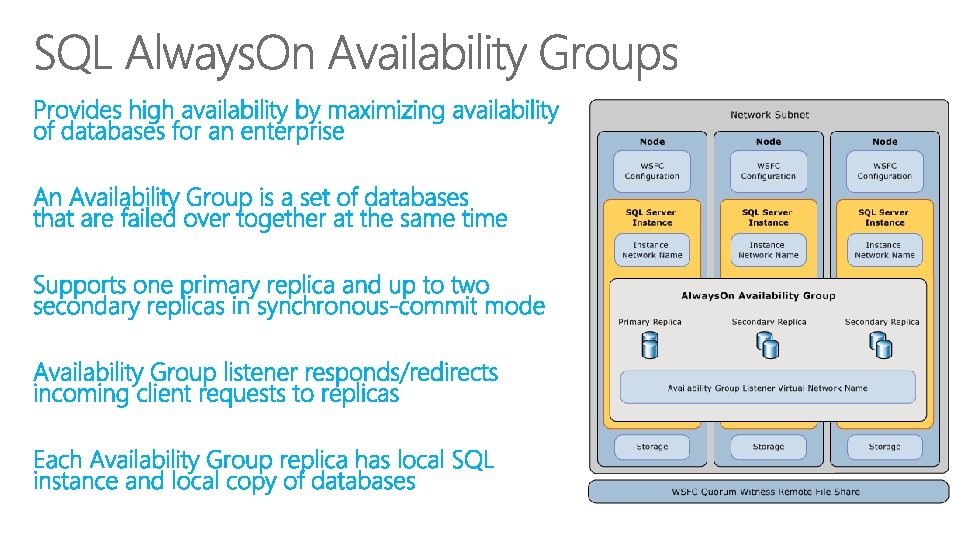

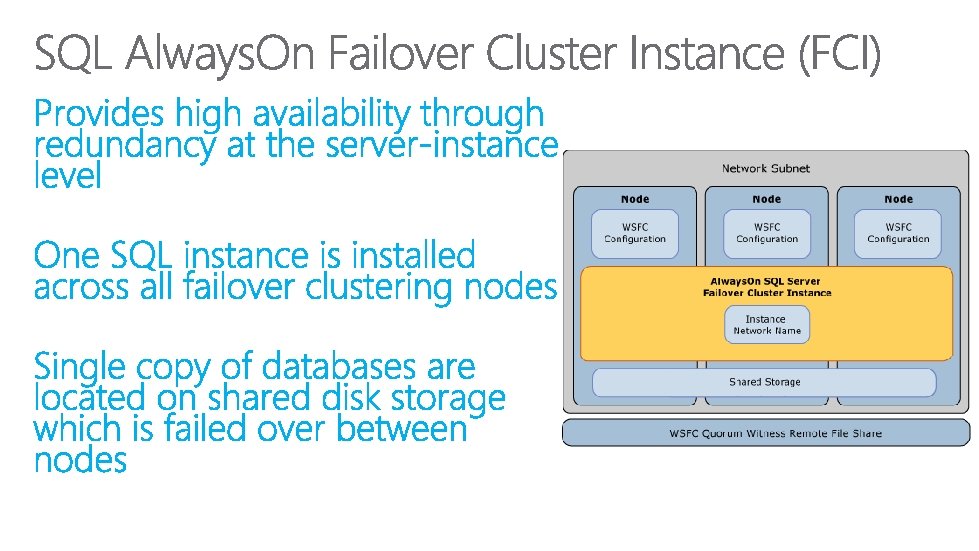

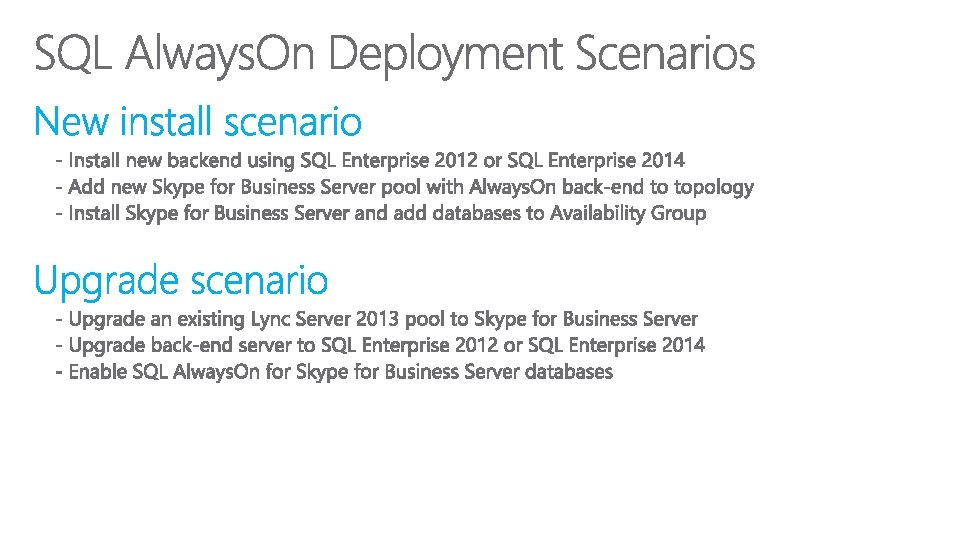

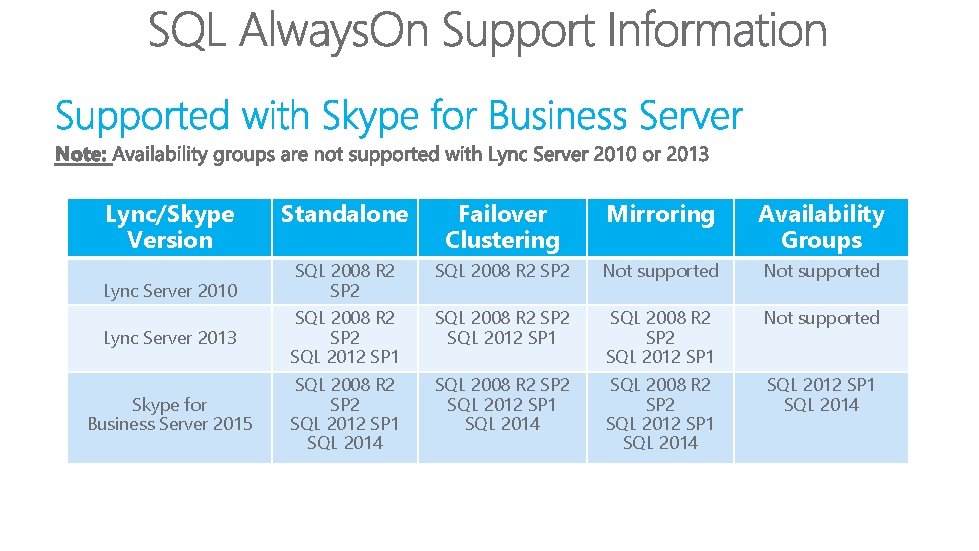

Columns: Lync/Skype Version, Standalone, Failover Clustering, Mirroring, Availability Groups

- Lync Server 2010: SQL 2008 R2 SP2; Not supported
- Lync Server 2013: SQL 2008 R2 SP2, SQL 2012 SP1; Not supported
- Skype for Business Server 2015: SQL 2008 R2 SP2, SQL 2012 SP1, SQL 2014

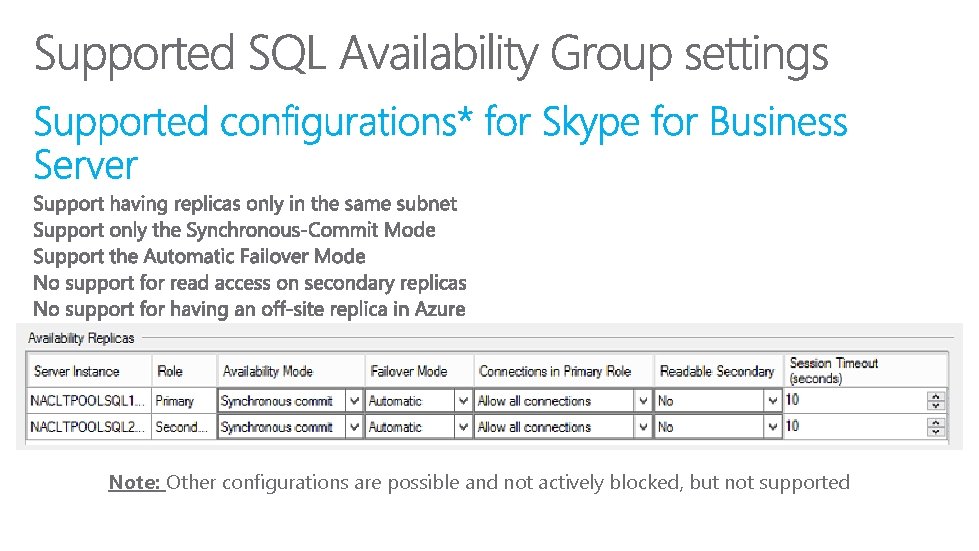

Note: Other configurations are possible and not actively blocked, but not supported

Deployment

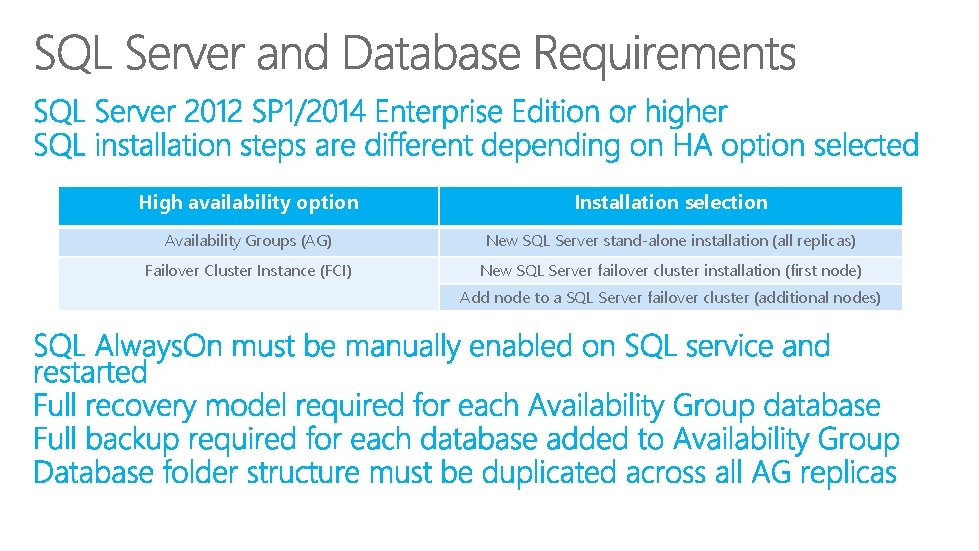

| High availability option | Installation selection |
| --- | --- |
| Availability Groups (AG) | New SQL Server stand-alone installation (all replicas) |
| Failover Cluster Instance (FCI) | New SQL Server failover cluster installation (first node); Add node to a SQL Server failover cluster (additional nodes) |

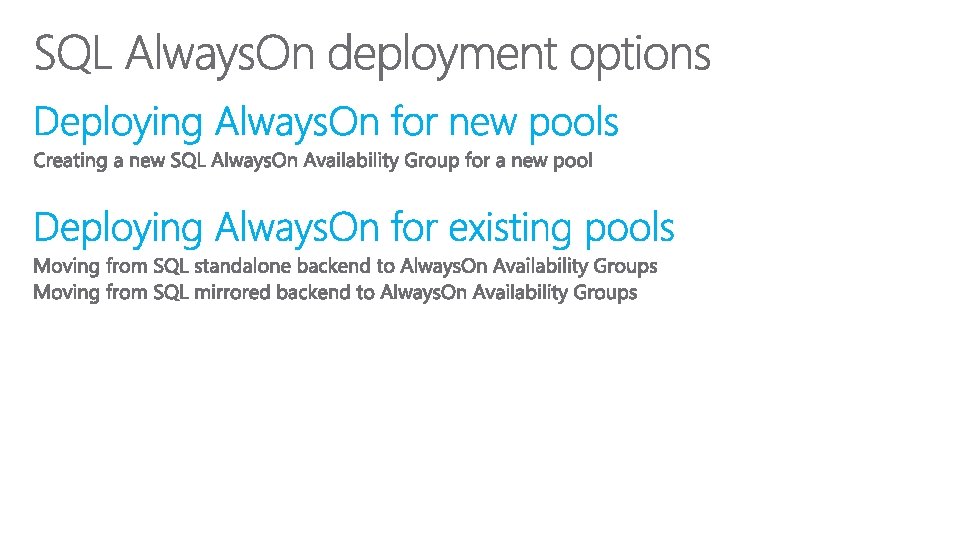

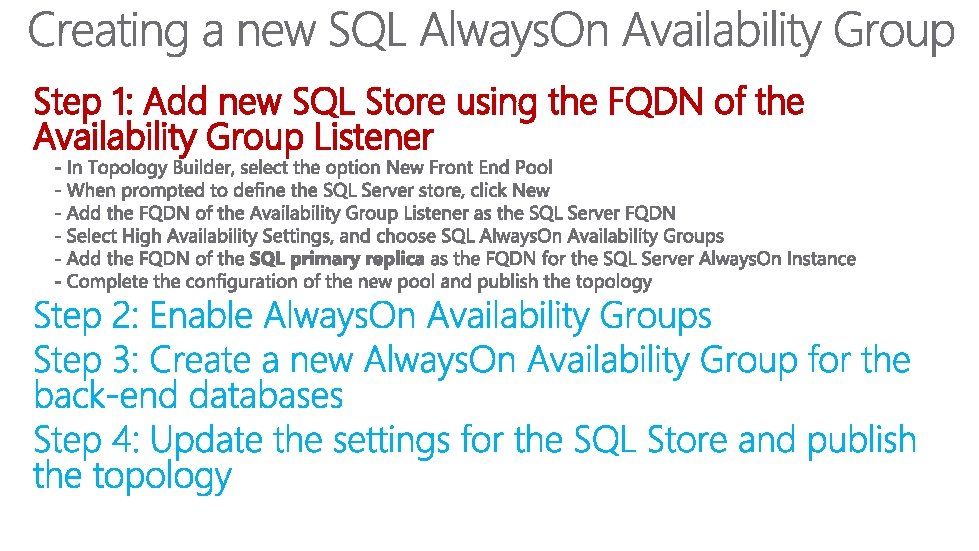

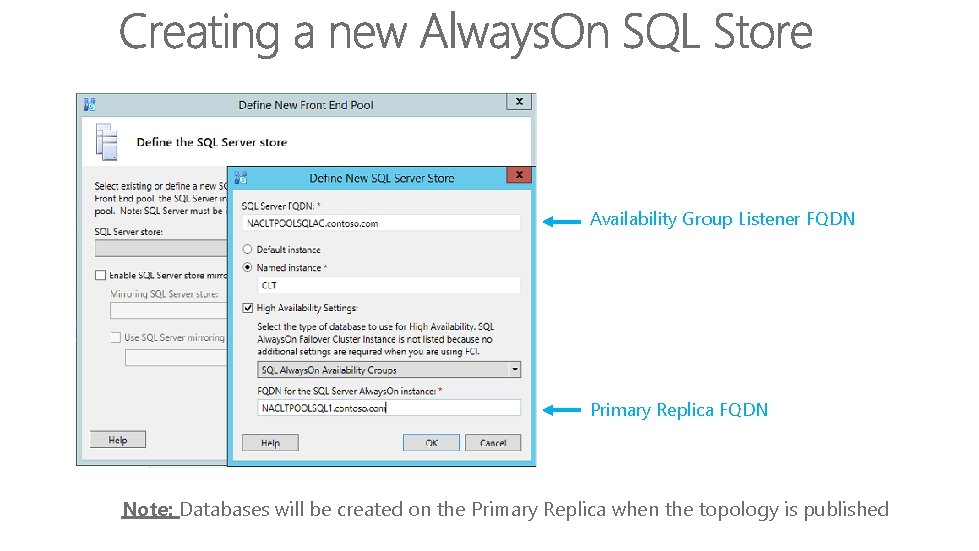

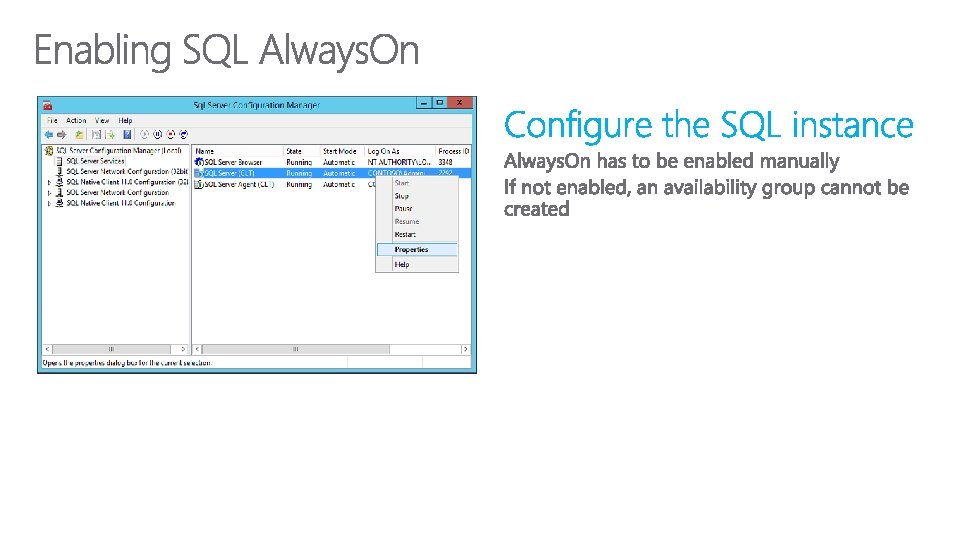

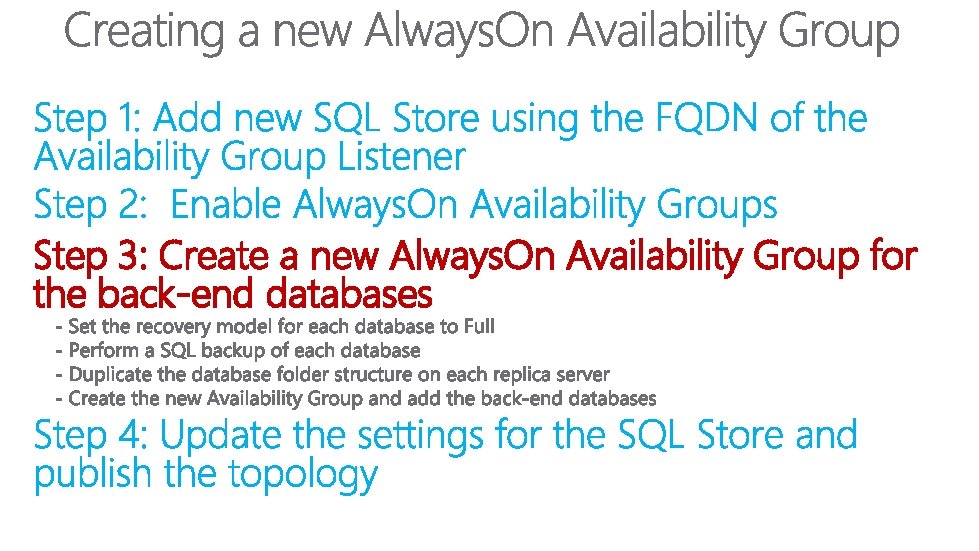

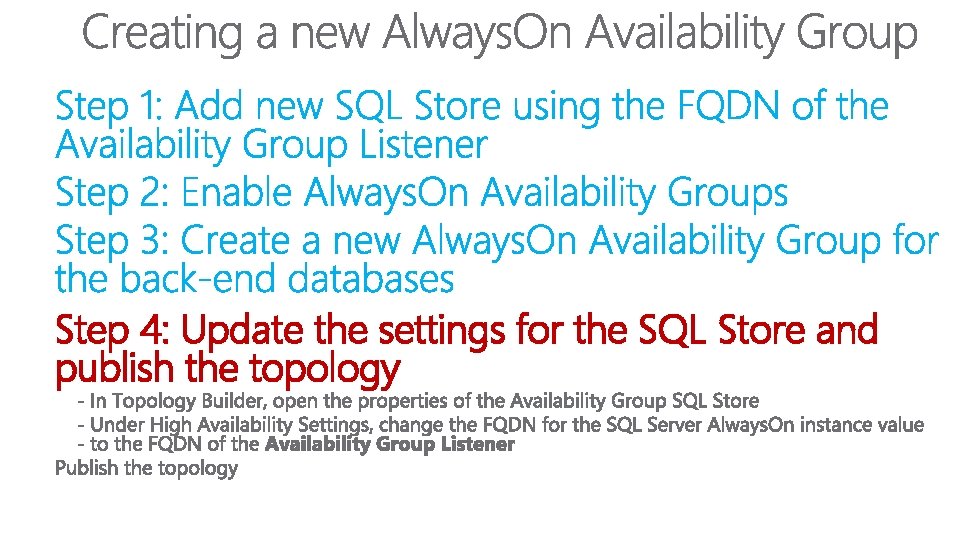

Step 1: Add new SQL Store using the FQDN of the Availability Group Listener

- Availability Group Listener FQDN
- Primary Replica FQDN

Note: Databases will be created on the Primary Replica when the topology is published.

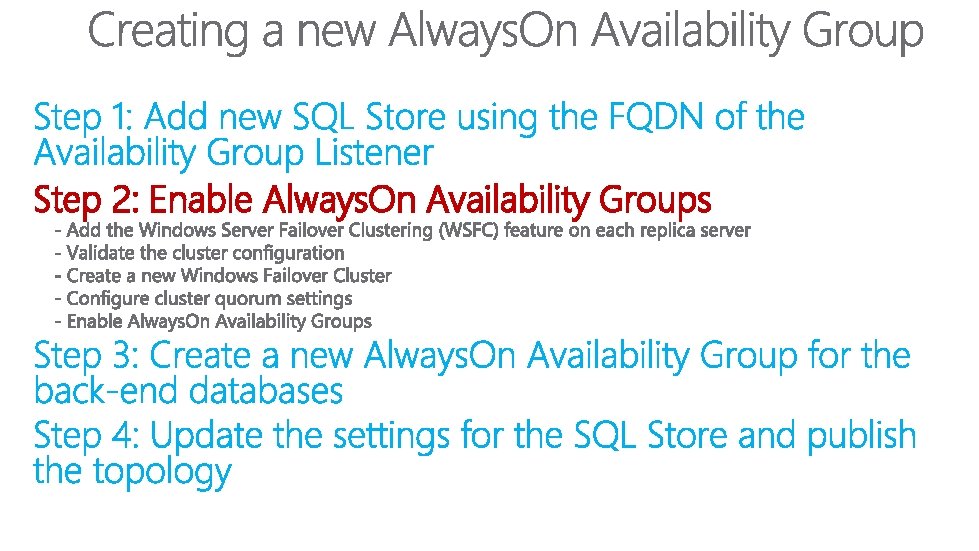

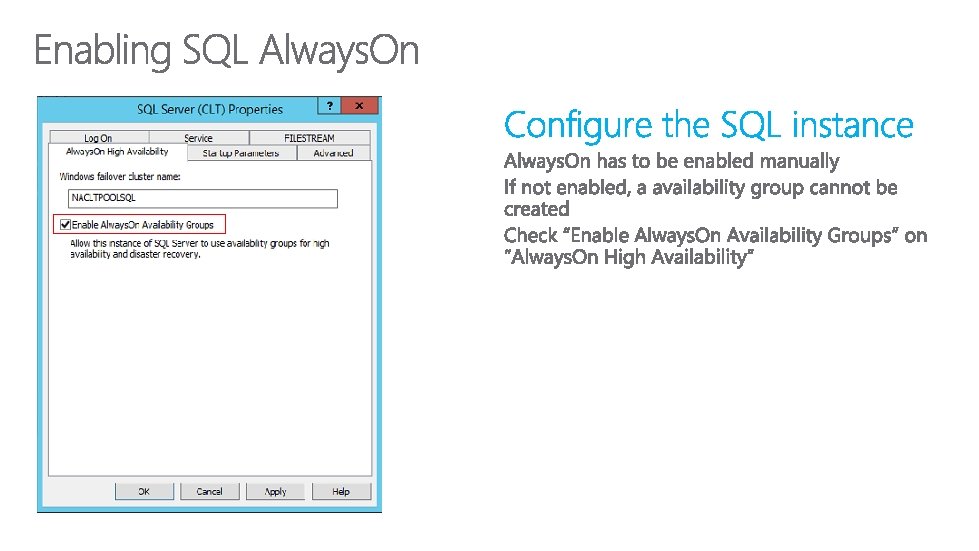

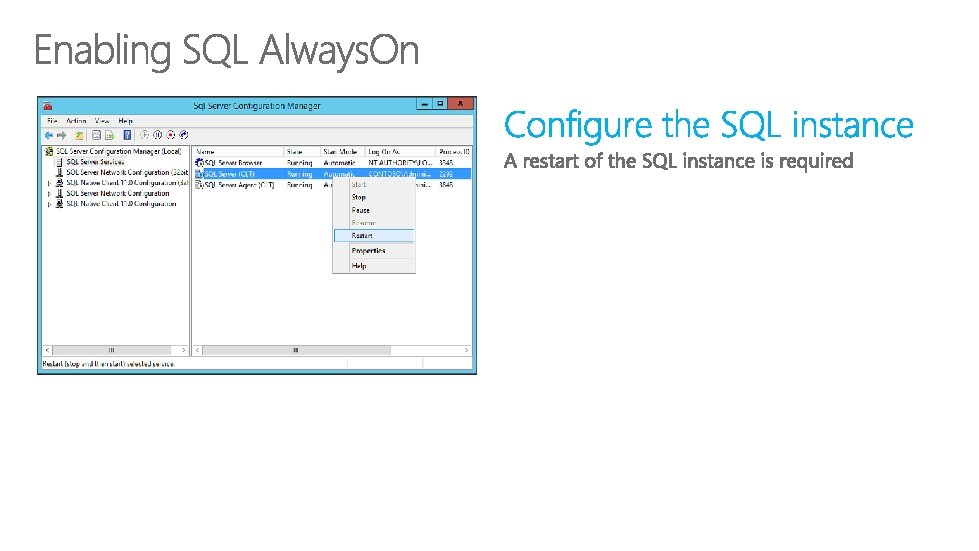

Step 2: Enable AlwaysOn Availability Groups

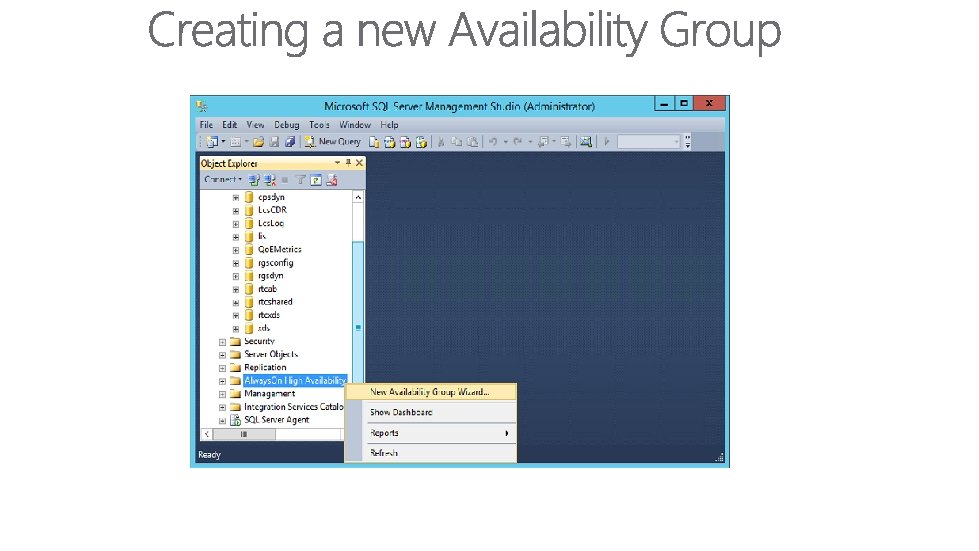

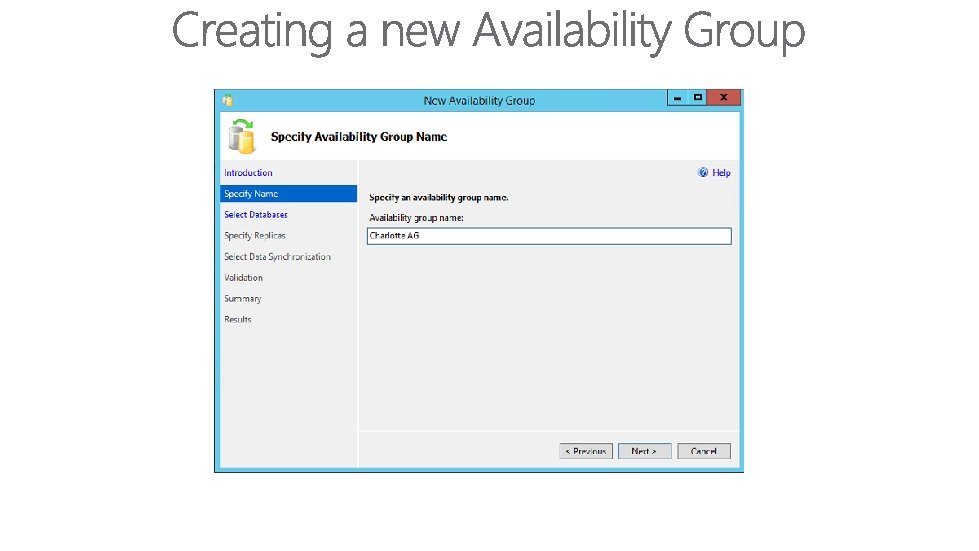

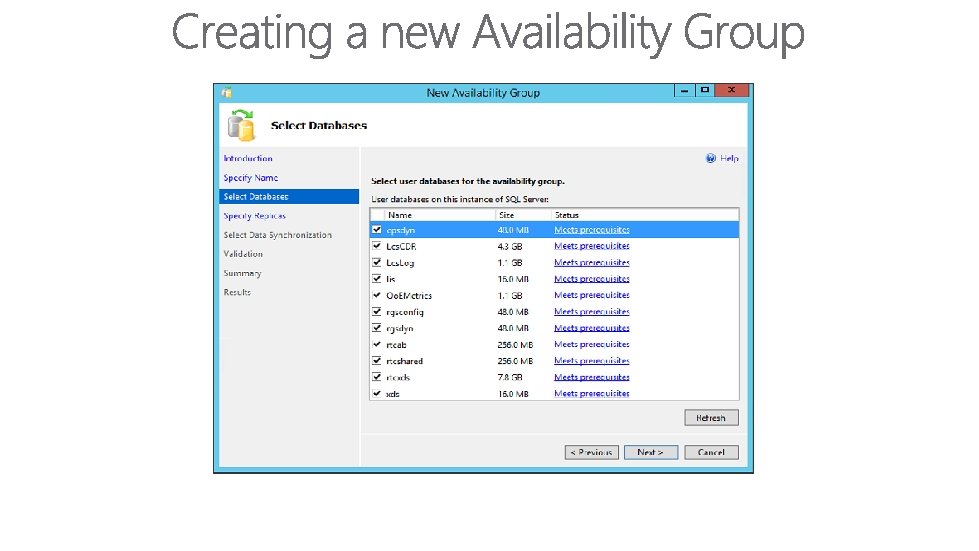

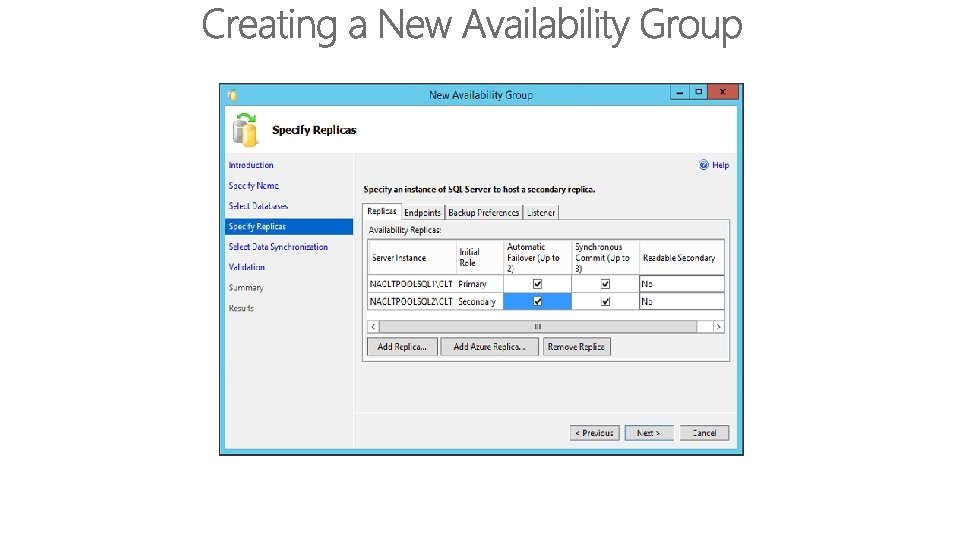

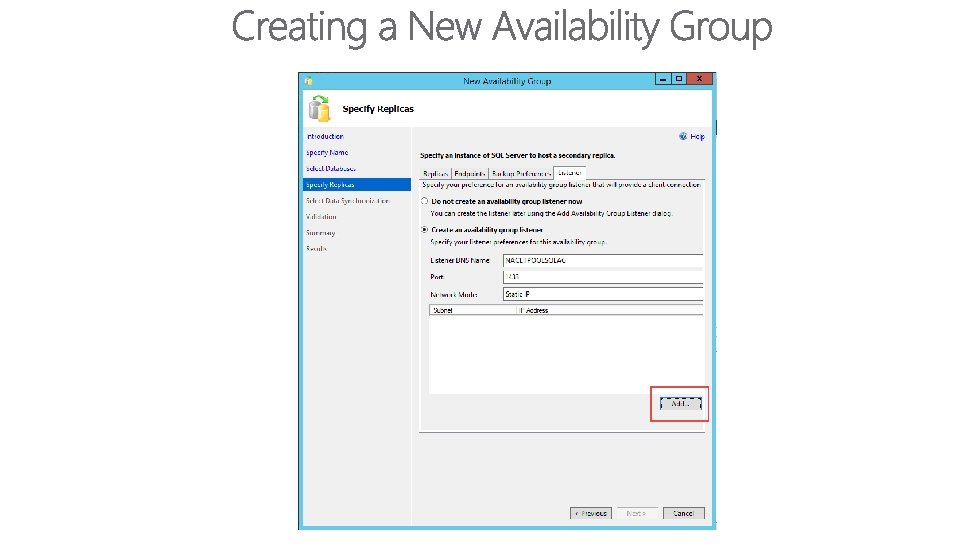

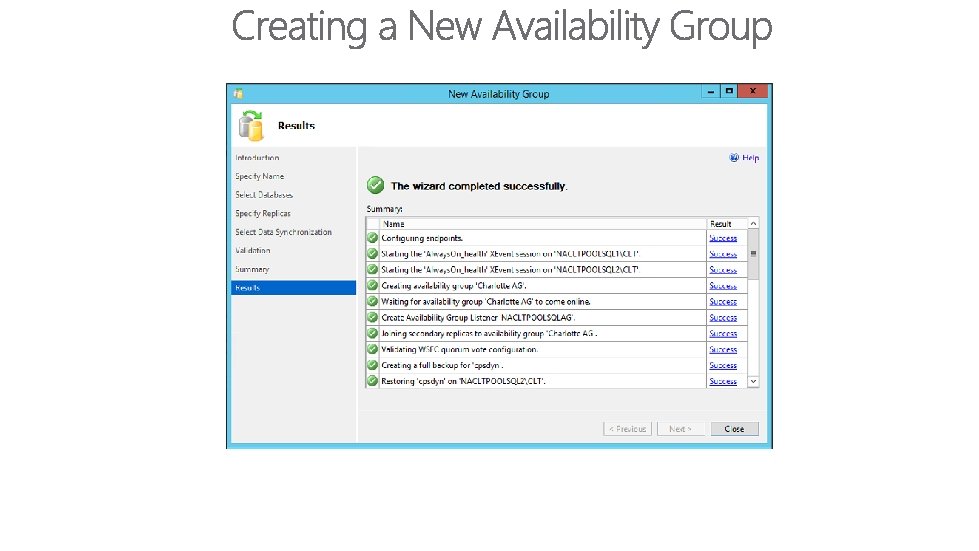

Step 3: Create a new AlwaysOn Availability Group for the back-end databases

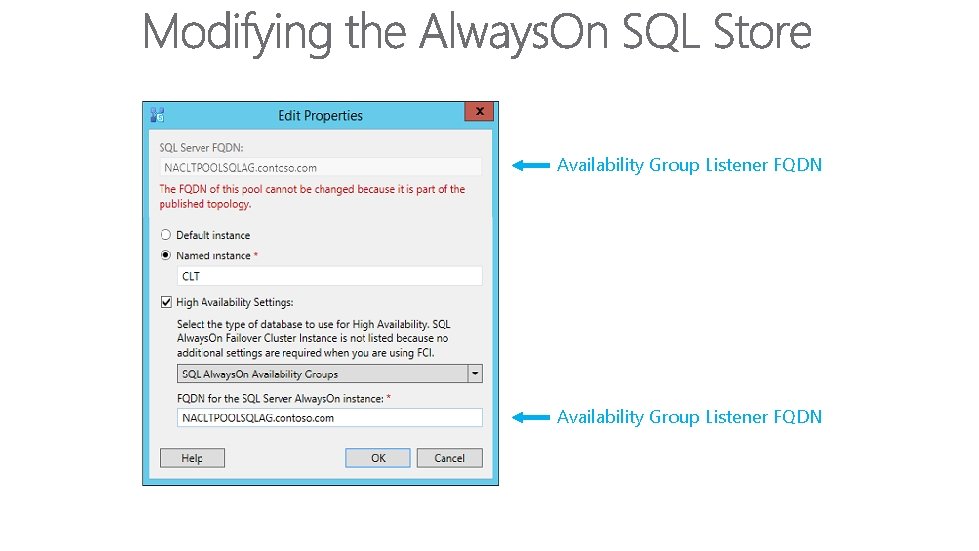

Step 4: Update the settings for the SQL Store and publish the topology

Availability Group Listener FQDN


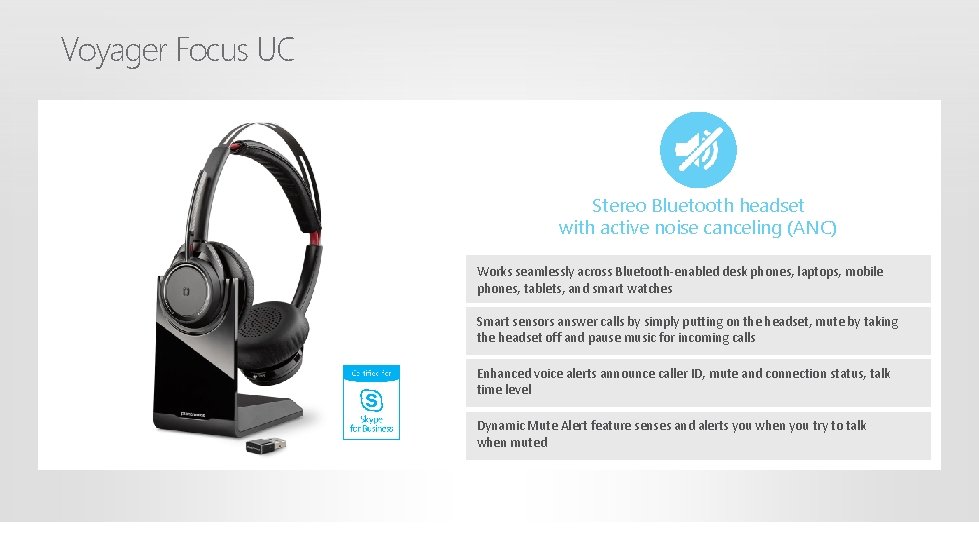


Overview

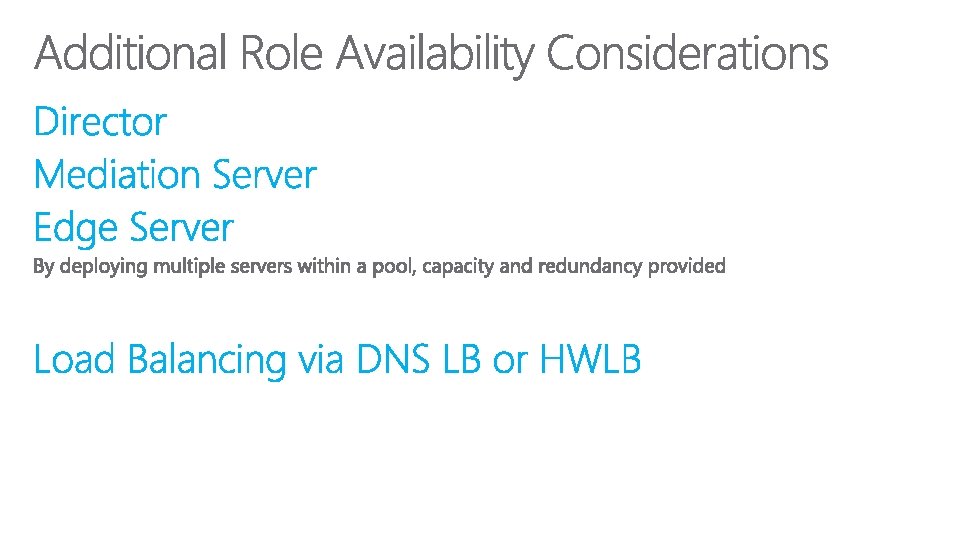


Overview


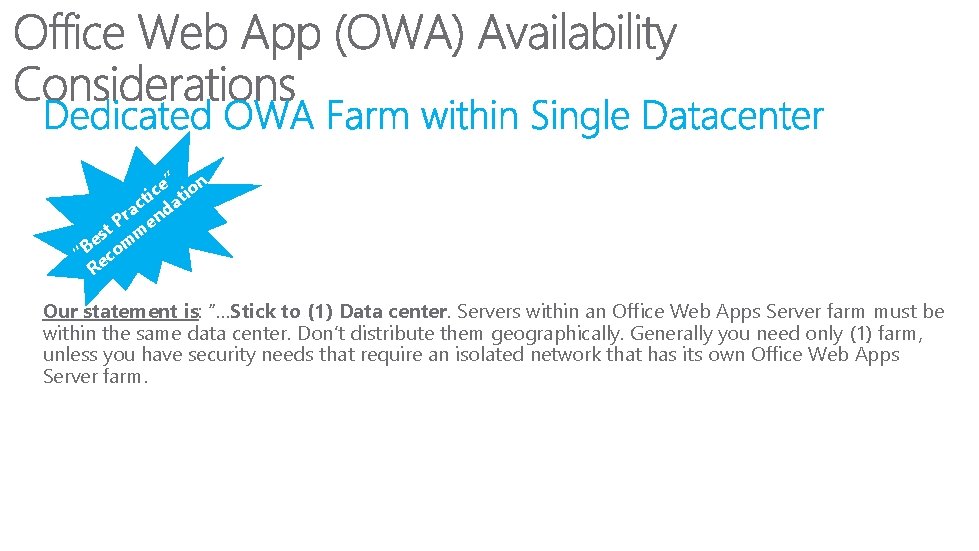

- We have not tested stretch farm scenarios
- There is a great deal of communication between Office Web Apps servers within a farm
- We will not attempt to optimize this communication for stretch farms
- There is no reason that a stretch farm can’t work
- There is a consistent intra-farm latency of <1 ms one way, 99.9% of the time over a period of ten minutes. (Intra-farm latency is commonly defined as the latency between the front-end web servers and the database servers.)
- The bandwidth speed must be at least 1 gigabit per second.

Our statement is: “…We will support customers who set up stretch farms; however, if an issue turns out to be the result of how the stretch farm is configured, we are not in a position to deliver a change to Office Web Apps Server to support that configuration. In cases where a stretch farm is on a very low latency network (say, fiber within the same city) and the network is configured such that all the machines in the farm appear to each other as machines in the same subnet, there is nothing about our architecture that will fail…”
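The latency condition (under 1 ms one way, 99.9% of the time over ten minutes) can be checked against a set of measurements. The sketch below assumes you already have one-way latency samples in milliseconds collected over a ten-minute window; how they are collected is out of scope, and the function name and defaults are illustrative only.

```python
from typing import Sequence


def meets_latency_requirement(
    samples_ms: Sequence[float],
    threshold_ms: float = 1.0,
    required_fraction: float = 0.999,
) -> bool:
    """True if enough samples fall strictly below the latency threshold.

    Assumption: samples_ms holds one-way latency measurements taken
    over the ten-minute observation window.
    """
    if not samples_ms:
        raise ValueError("at least one latency sample is required")
    within = sum(1 for sample in samples_ms if sample < threshold_ms)
    return within / len(samples_ms) >= required_fraction


if __name__ == "__main__":
    window = [0.4] * 9999 + [3.0]  # one slow sample out of 10,000
    print(meets_latency_requirement(window))
```

With one sample per 60 ms, a ten-minute window holds 10,000 samples, so the 99.9% target tolerates at most ten slow ones.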

“Best Practice Recommendation”

Our statement is: “…Stick to (1) data center. Servers within an Office Web Apps Server farm must be within the same data center. Don’t distribute them geographically. Generally you need only (1) farm, unless you have security needs that require an isolated network that has its own Office Web Apps Server farm.”

Overview

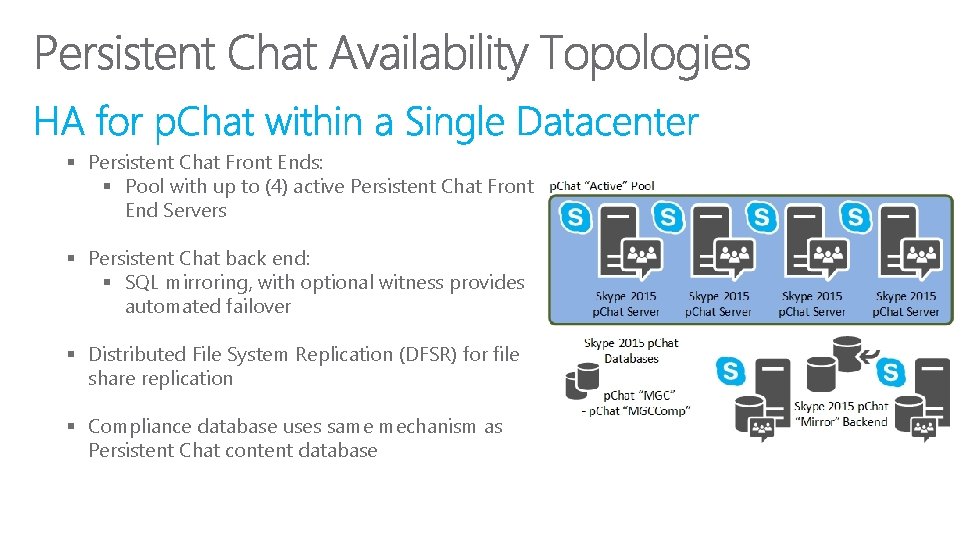

- Persistent Chat Front Ends:
  - Pool with up to (4) active Persistent Chat Front End Servers
- Persistent Chat back end:
  - SQL mirroring with optional witness provides automated failover
  - Distributed File System Replication (DFSR) for file share replication
  - Compliance database uses the same mechanism as the Persistent Chat content database

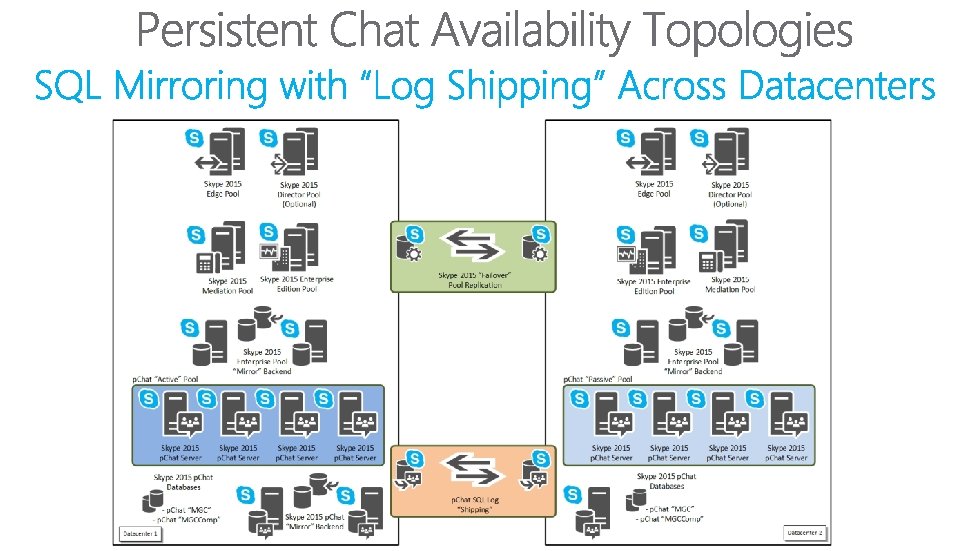

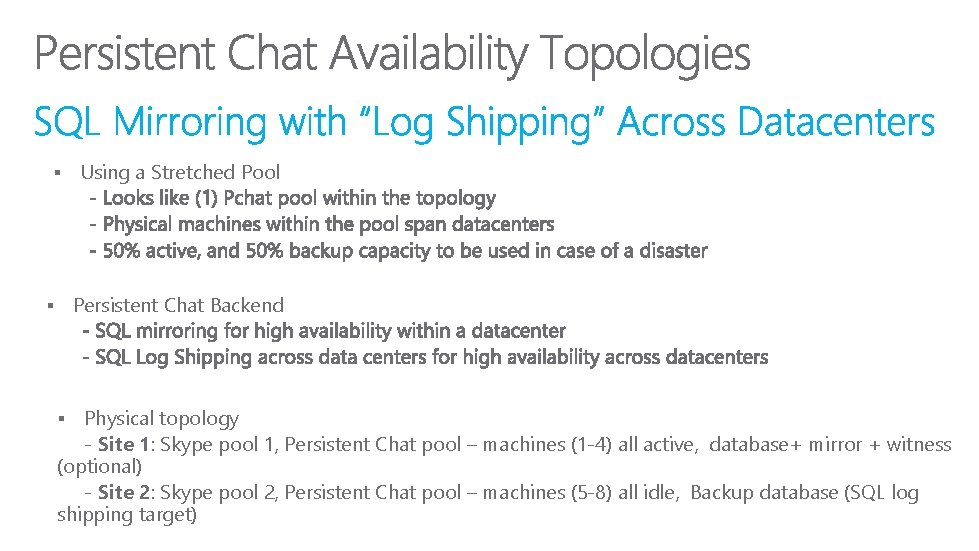

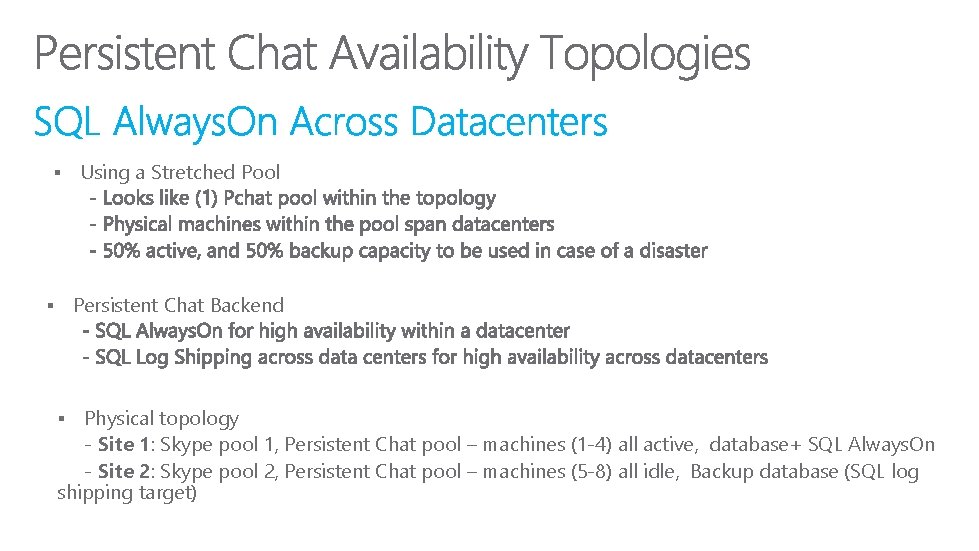

Using a Stretched Pool

- Persistent Chat Backend
- Physical topology
  - Site 1: Skype pool 1, Persistent Chat pool with machines (1-4) all active, database + mirror + witness (optional)
  - Site 2: Skype pool 2, Persistent Chat pool with machines (5-8) all idle, backup database (SQL log shipping target)

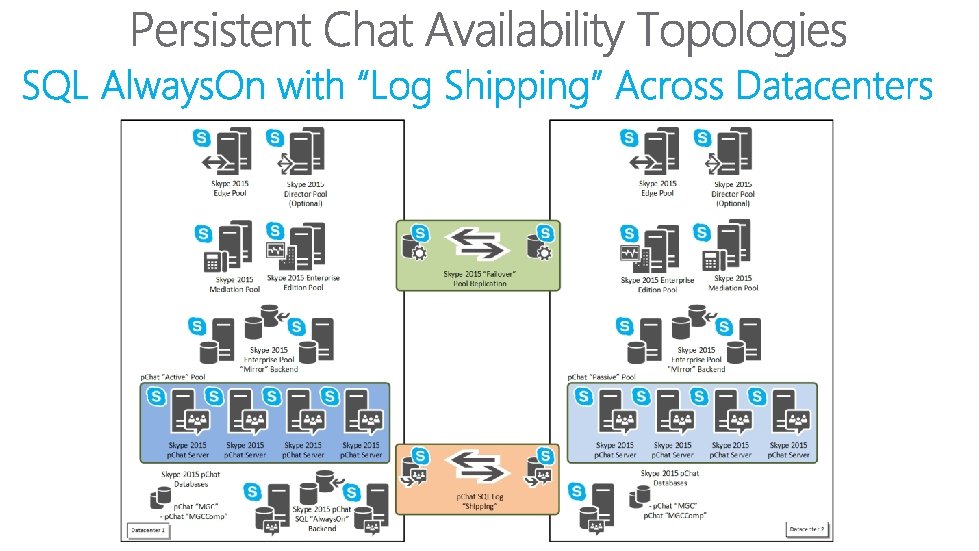

Using a Stretched Pool

- Persistent Chat Backend
- Physical topology
  - Site 1: Skype pool 1, Persistent Chat pool with machines (1-4) all active, database + SQL AlwaysOn
  - Site 2: Skype pool 2, Persistent Chat pool with machines (5-8) all idle, backup database (SQL log shipping target)

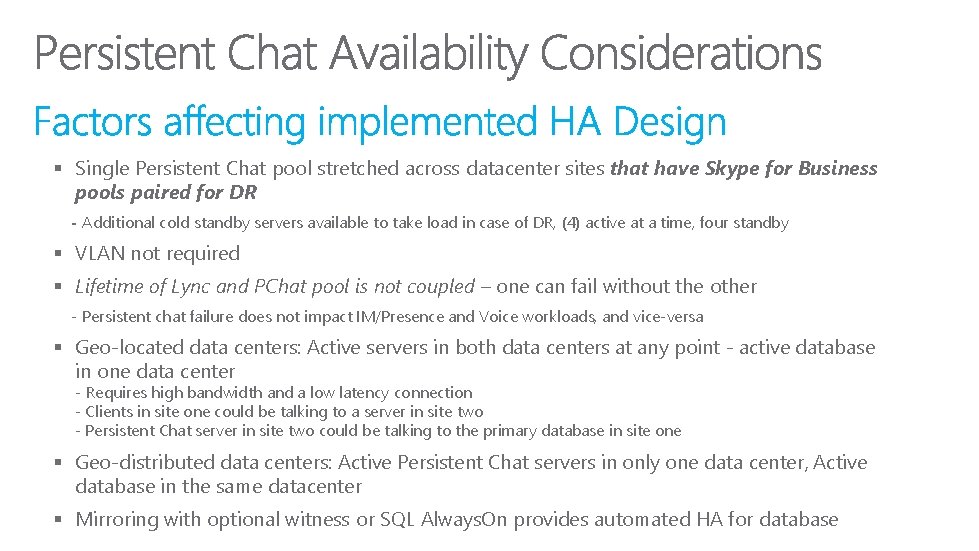

- Single Persistent Chat pool stretched across datacenter sites that have Skype for Business pools paired for DR
  - Additional cold standby servers available to take load in case of DR: (4) active at a time, (4) standby
- VLAN not required
- Lifetime of Lync and PChat pool is not coupled: one can fail without the other
  - Persistent Chat failure does not impact IM/Presence and Voice workloads, and vice versa
- Geo-located data centers: active servers in both data centers at any point, active database in one data center
  - Requires a high-bandwidth, low-latency connection
  - Clients in site one could be talking to a server in site two
  - Persistent Chat server in site two could be talking to the primary database in site one
- Geo-distributed data centers: active Persistent Chat servers in only one data center, active database in the same data center
- Mirroring with optional witness or SQL AlwaysOn provides automated HA for the database

Overview

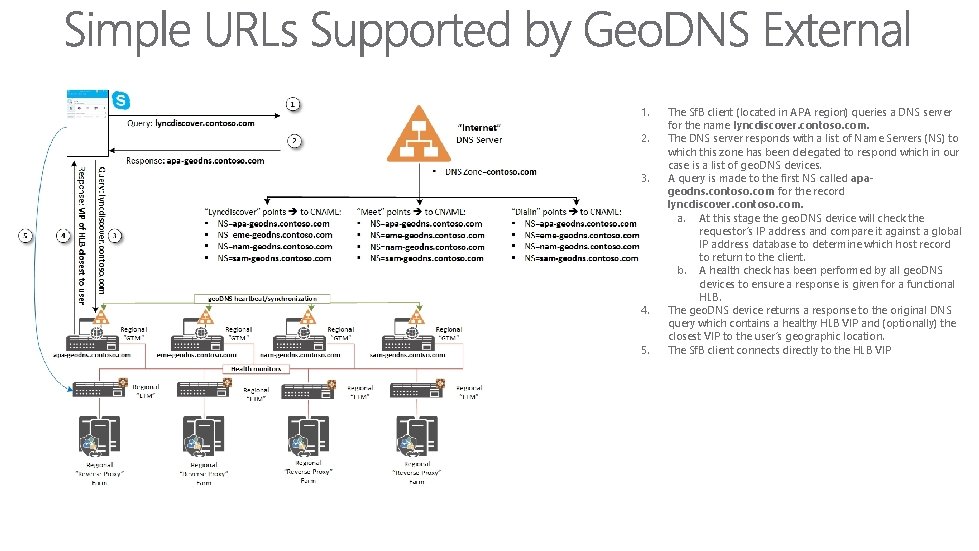

1. The SfB client (located in the APA region) queries a DNS server for the name lyncdiscover.contoso.com.
2. The DNS server responds with a list of Name Servers (NS) to which this zone has been delegated, which in our case is a list of geoDNS devices.
3. A query is made to the first NS, called apageodns.contoso.com, for the record lyncdiscover.contoso.com.
   a. At this stage the geoDNS device checks the requestor’s IP address and compares it against a global IP address database to determine which host record to return to the client.
   b. A health check has been performed by all geoDNS devices to ensure a response is given for a functional HLB.
4. The geoDNS device returns a response to the original DNS query which contains a healthy HLB VIP and (optionally) the closest VIP to the user’s geographic location.
5. The SfB client connects directly to the HLB VIP.

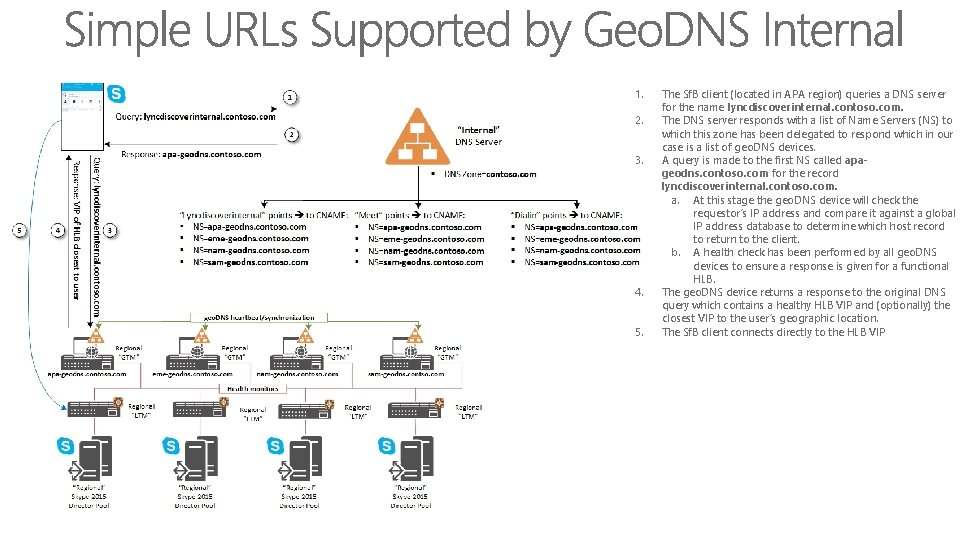

1. The SfB client (located in the APA region) queries a DNS server for the name lyncdiscoverinternal.contoso.com.
2. The DNS server responds with a list of Name Servers (NS) to which this zone has been delegated, which in our case is a list of geoDNS devices.
3. A query is made to the first NS, called apageodns.contoso.com, for the record lyncdiscoverinternal.contoso.com.
   a. At this stage the geoDNS device checks the requestor’s IP address and compares it against a global IP address database to determine which host record to return to the client.
   b. A health check has been performed by all geoDNS devices to ensure a response is given for a functional HLB.
4. The geoDNS device returns a response to the original DNS query which contains a healthy HLB VIP and (optionally) the closest VIP to the user’s geographic location.
5. The SfB client connects directly to the HLB VIP.
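The selection made by the geoDNS device in steps 3 and 4 can be sketched as: drop unhealthy HLB VIPs, then prefer one in the requestor's region. This is a simplified model, not any vendor's algorithm. The region labels, the `Vip` type, and the example addresses (taken from documentation ranges) are all hypothetical, and the IP-to-region lookup is assumed to have happened already.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Vip:
    """Hypothetical record for one HLB virtual IP."""
    address: str
    region: str
    healthy: bool  # result of the geoDNS health check


def select_vip(client_region: str, vips: Sequence[Vip]) -> Optional[Vip]:
    """Pick a healthy VIP, preferring one in the client's region."""
    healthy = [vip for vip in vips if vip.healthy]
    if not healthy:
        return None  # nothing functional to return
    for vip in healthy:
        if vip.region == client_region:
            return vip
    return healthy[0]  # fall back to any healthy VIP


if __name__ == "__main__":
    candidates = [
        Vip("203.0.113.10", "APA", healthy=False),
        Vip("198.51.100.10", "EMEA", healthy=True),
    ]
    print(select_vip("APA", candidates))
```

In the example, the APA client is sent to the EMEA VIP because the local one failed its health check, which matches the behavior described in step 4.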

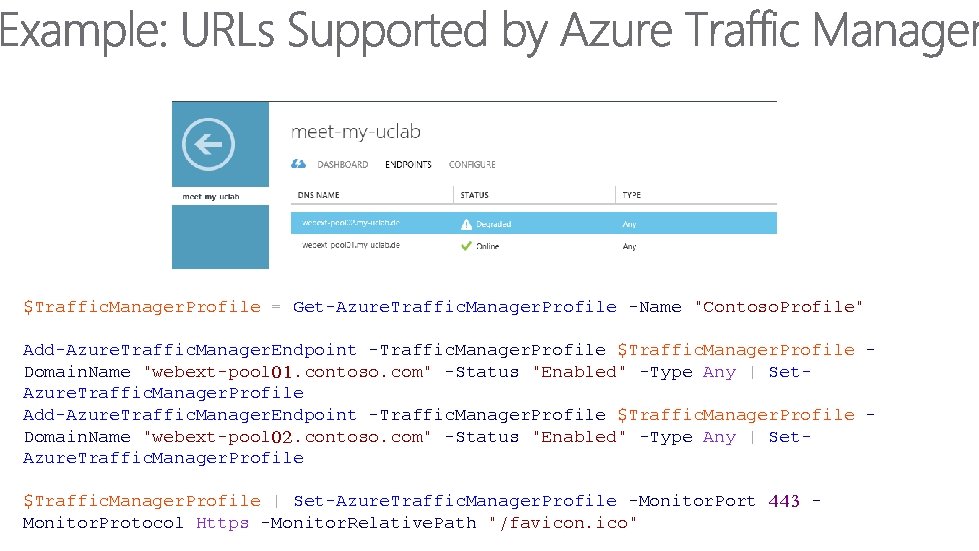

    # Look up the existing Traffic Manager profile
    $TrafficManagerProfile = Get-AzureTrafficManagerProfile -Name "ContosoProfile"

    # Add each external web services pool as an endpoint and save the profile
    Add-AzureTrafficManagerEndpoint -TrafficManagerProfile $TrafficManagerProfile -DomainName "webext-pool01.contoso.com" -Status "Enabled" -Type Any | Set-AzureTrafficManagerProfile
    Add-AzureTrafficManagerEndpoint -TrafficManagerProfile $TrafficManagerProfile -DomainName "webext-pool02.contoso.com" -Status "Enabled" -Type Any | Set-AzureTrafficManagerProfile

    # Monitor the endpoints over HTTPS on port 443
    $TrafficManagerProfile | Set-AzureTrafficManagerProfile -MonitorPort 443 -MonitorProtocol Https -MonitorRelativePath "/favicon.ico"

- Slides: 132