Intro to AI Resolution Prolog Ruth Bergman Fall

Intro to AI Resolution & Prolog Ruth Bergman Fall 2002

Inference Rules for FOL • Inference Rules – Modus Ponens, And-Elimination, And-Introduction, Or-Introduction, Resolution • Rules for substituting variables – Universal-Elimination, Existential-Introduction

Theorem Proving Strategies • Strategy 1: Deduce everything possible from the KB, and hope to derive what you want to prove. – A forward chaining approach • Strategy 2: Find out what facts you need in order to prove theorem. Try to prove those facts. Repeat until there are no facts to prove. – A backward chaining approach

Forward Chaining Forward-chain(KB, ) 1. If is in KB return yes 2. Apply inferences rules on KB 3. Add new sentences to KB 4. Go to 2 • A “data-driven” strategy • This inference procedure will find a proof for any true sentence • For false sentences this procedure may not halt. This procedure is semi-decidable. • Generates many irrelevant conclusions.

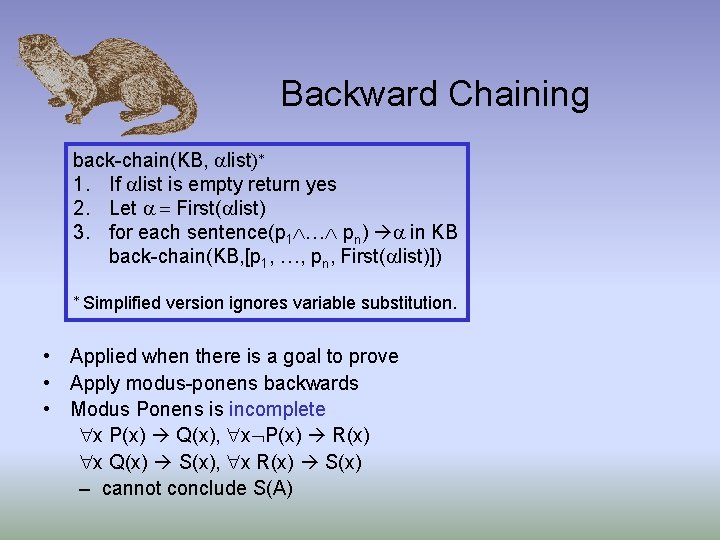

Backward Chaining back-chain(KB, list)* 1. If list is empty return yes 2. Let = First( list) 3. for each sentence(p 1 … pn) in KB back-chain(KB, [p 1, …, pn, First( list)]) * Simplified version ignores variable substitution. • Applied when there is a goal to prove • Apply modus-ponens backwards • Modus Ponens is incomplete x P(x) Q(x), x P(x) R(x) x Q(x) S(x), x R(x) S(x) – cannot conclude S(A)

Resolution : A Complete Inference Procedure • Simple resolution (for ground terms): , • Modus ponens derives only atomic conclusions, but resolution derives new implications

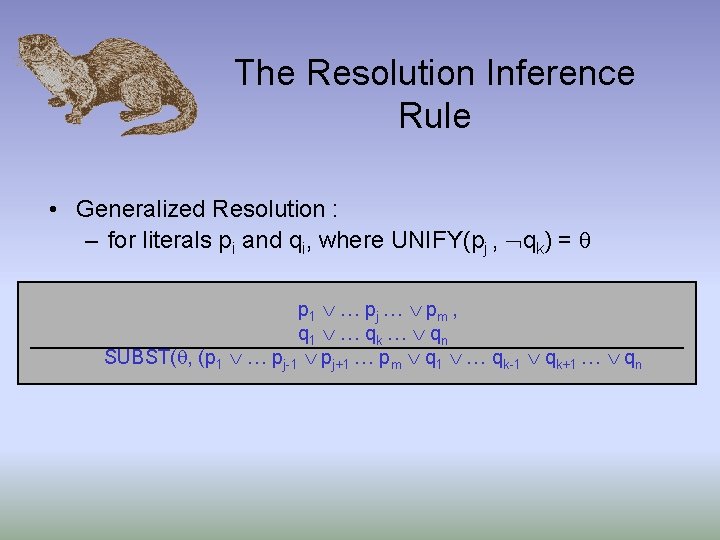

The Resolution Inference Rule • Generalized Resolution : – for literals pi and qi, where UNIFY(pj , qk) = p 1 … pj … pm , q 1 … qk … qn SUBST( , (p 1 … pj-1 pj+1 … pm q 1 … qk-1 qk+1 … qn

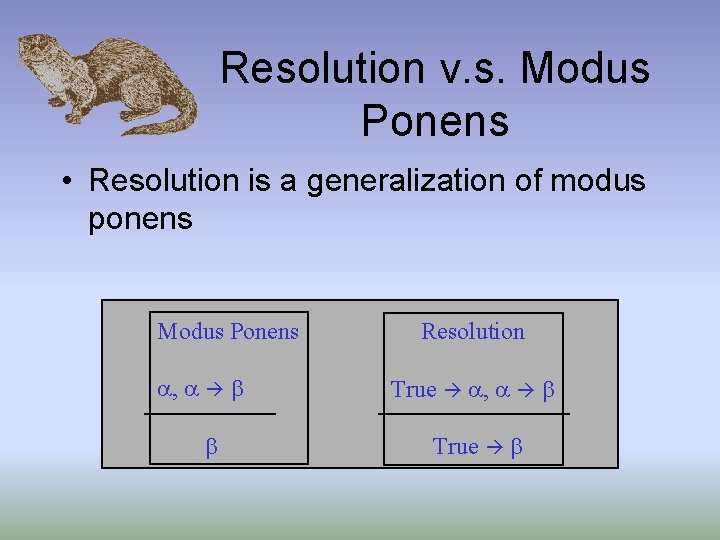

Resolution v. s. Modus Ponens • Resolution is a generalization of modus ponens Modus Ponens , Resolution True , True

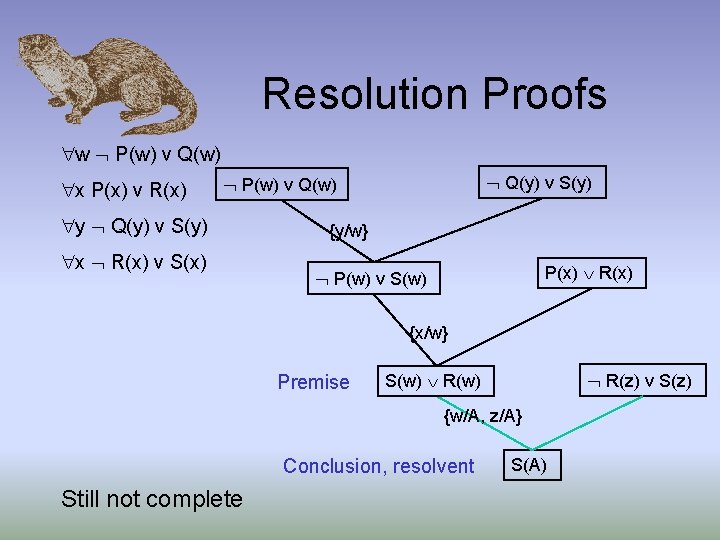

Resolution Proofs w P(w) v Q(w) x P(x) v R(x) Q(y) v S(y) P(w) v Q(w) y Q(y) v S(y) x R(x) v S(x) {y/w} P(x) R(x) P(w) v S(w) {x/w} Premise R(z) v S(z) S(w) R(w) {w/A, z/A} Conclusion, resolvent Still not complete S(A)

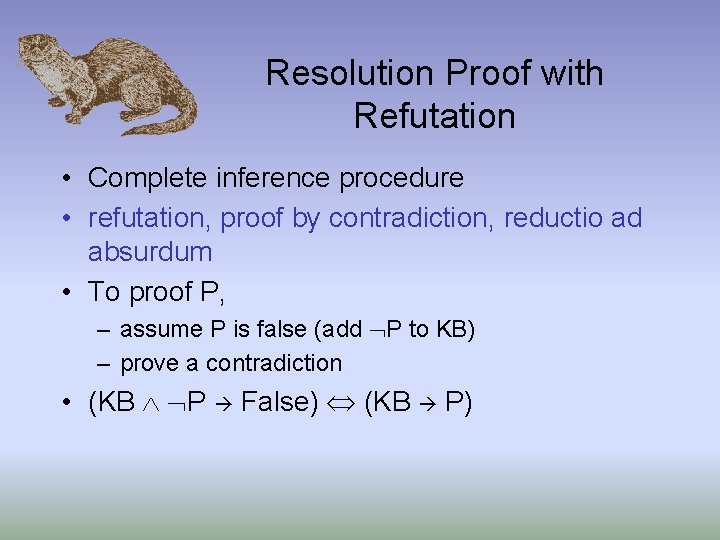

Resolution Proof with Refutation • Complete inference procedure • refutation, proof by contradiction, reductio ad absurdum • To proof P, – assume P is false (add P to KB) – prove a contradiction • (KB P False) (KB P)

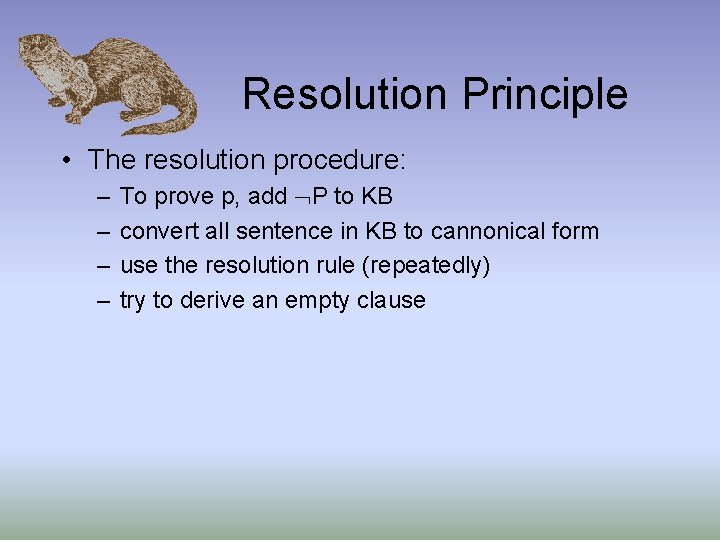

Resolution Principle • The resolution procedure: – – To prove p, add P to KB convert all sentence in KB to cannonical form use the resolution rule (repeatedly) try to derive an empty clause

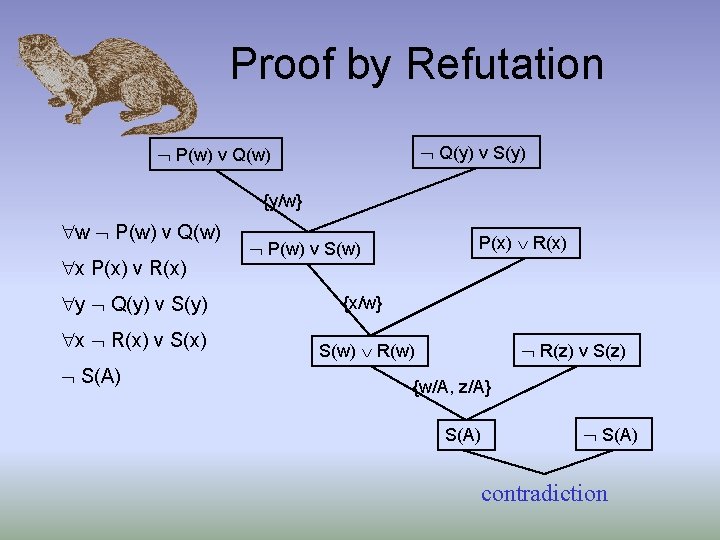

Proof by Refutation Q(y) v S(y) P(w) v Q(w) {y/w} w P(w) v Q(w) x P(x) v R(x) y Q(y) v S(y) x R(x) v S(x) S(A) P(x) R(x) P(w) v S(w) {x/w} R(z) v S(z) S(w) R(w) {w/A, z/A} S(A) contradiction

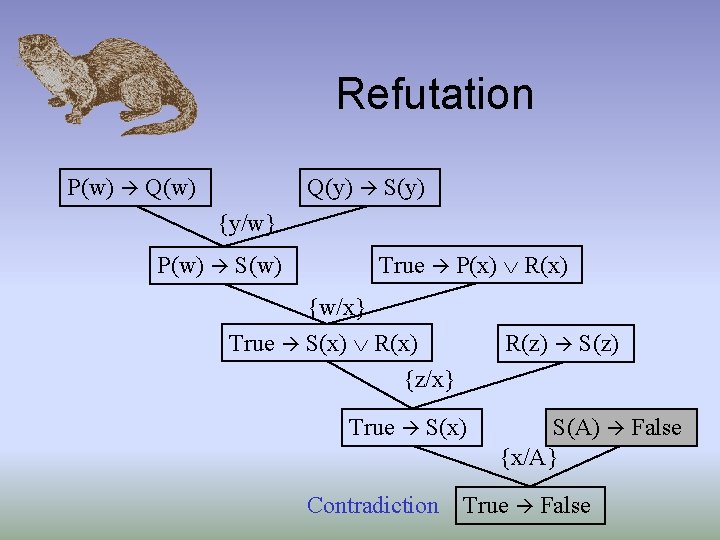

Refutation P(w) Q(w) Q(y) S(y) {y/w} P(w) S(w) True P(x) R(x) {w/x} True S(x) R(x) {z/x} R(z) S(z) True S(x) Contradiction S(A) False {x/A} True False

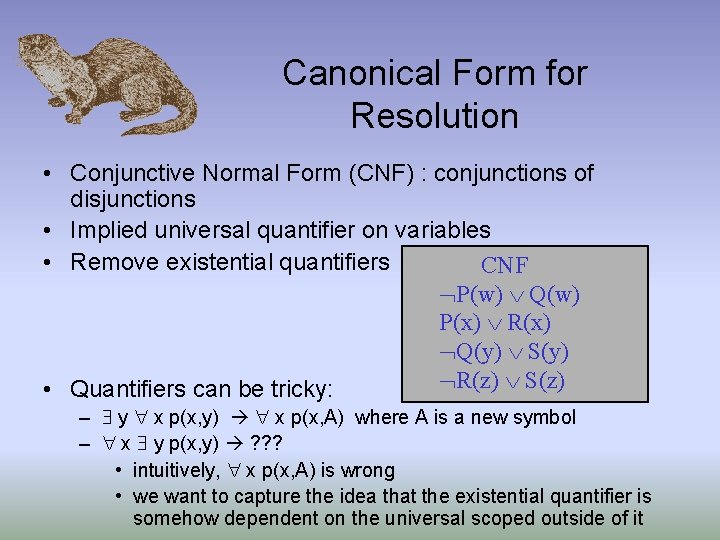

Canonical Form for Resolution • Conjunctive Normal Form (CNF) : conjunctions of disjunctions • Implied universal quantifier on variables • Remove existential quantifiers CNF P(w) Q(w) P(x) R(x) Q(y) S(y) R(z) S(z) • Quantifiers can be tricky: – y x p(x, y) x p(x, A) where A is a new symbol – x y p(x, y) ? ? ? • intuitively, x p(x, A) is wrong • we want to capture the idea that the existential quantifier is somehow dependent on the universal scoped outside of it

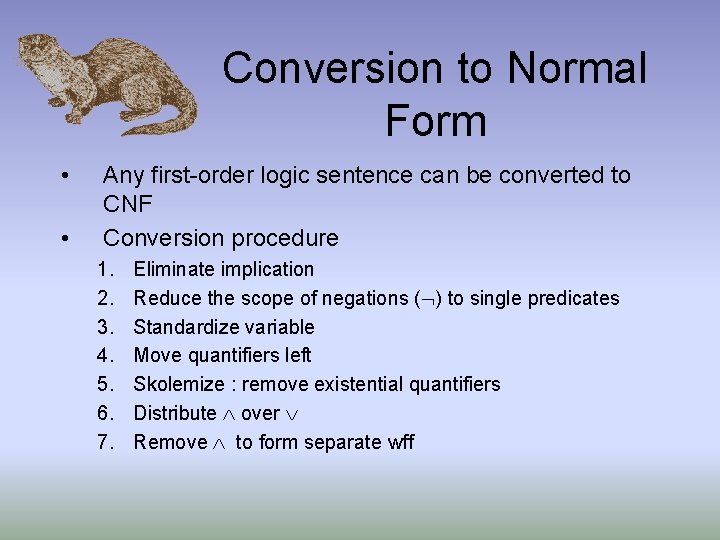

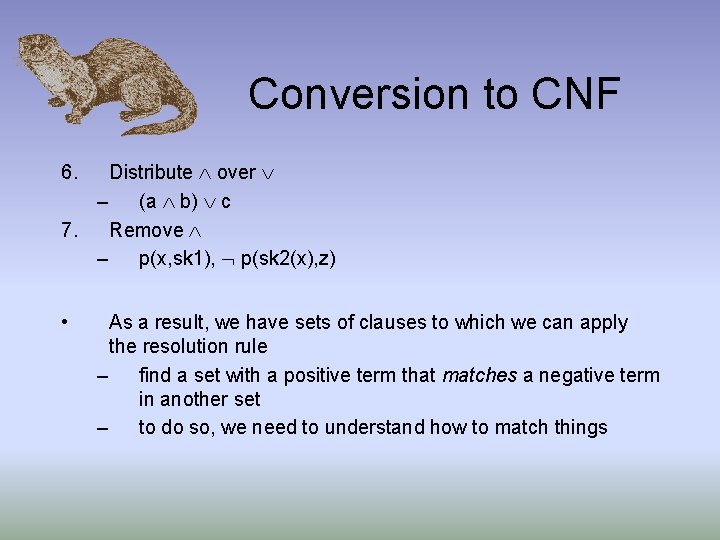

Conversion to Normal Form • • Any first-order logic sentence can be converted to CNF Conversion procedure 1. 2. 3. 4. 5. 6. 7. Eliminate implication Reduce the scope of negations ( ) to single predicates Standardize variable Move quantifiers left Skolemize : remove existential quantifiers Distribute over Remove to form separate wff

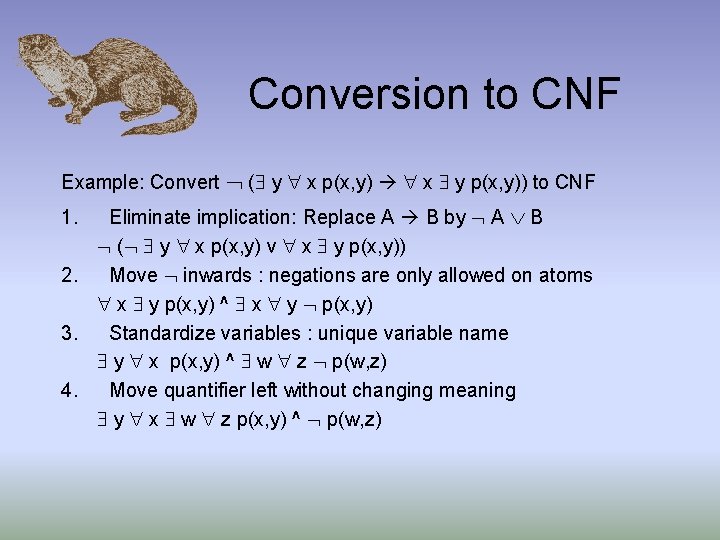

Conversion to CNF Example: Convert ( y x p(x, y) x y p(x, y)) to CNF Eliminate implication: Replace A B by A B ( y x p(x, y) v x y p(x, y)) 2. Move inwards : negations are only allowed on atoms x y p(x, y) ^ x y p(x, y) 3. Standardize variables : unique variable name y x p(x, y) ^ w z p(w, z) 4. Move quantifier left without changing meaning y x w z p(x, y) ^ p(w, z) 1.

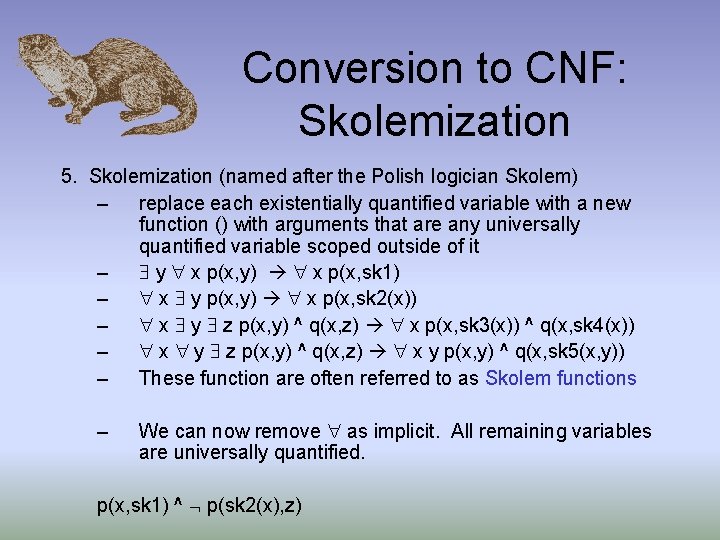

Conversion to CNF: Skolemization 5. Skolemization (named after the Polish logician Skolem) – replace each existentially quantified variable with a new function () with arguments that are any universally quantified variable scoped outside of it – y x p(x, y) x p(x, sk 1) – x y p(x, y) x p(x, sk 2(x)) – x y z p(x, y) ^ q(x, z) x p(x, sk 3(x)) ^ q(x, sk 4(x)) – x y z p(x, y) ^ q(x, z) x y p(x, y) ^ q(x, sk 5(x, y)) – These function are often referred to as Skolem functions – We can now remove as implicit. All remaining variables are universally quantified. p(x, sk 1) ^ p(sk 2(x), z)

Conversion to CNF Distribute over – (a b) c 7. Remove – p(x, sk 1), p(sk 2(x), z) 6. • As a result, we have sets of clauses to which we can apply the resolution rule – find a set with a positive term that matches a negative term in another set – to do so, we need to understand how to match things

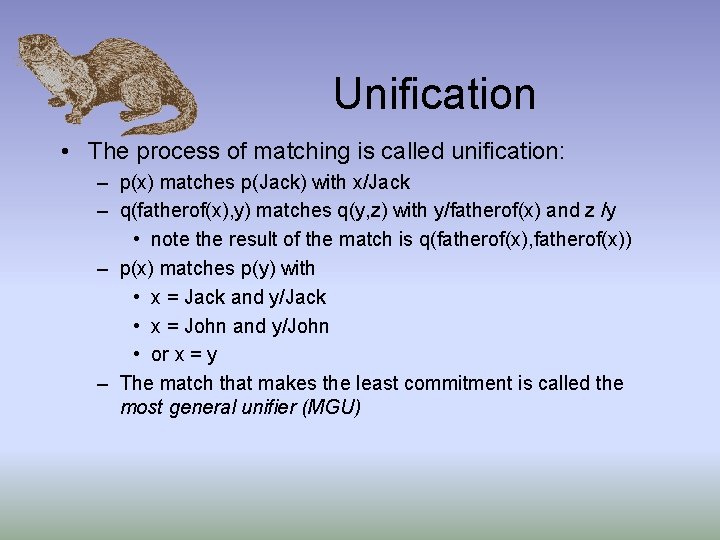

Unification • The process of matching is called unification: – p(x) matches p(Jack) with x/Jack – q(fatherof(x), y) matches q(y, z) with y/fatherof(x) and z /y • note the result of the match is q(fatherof(x), fatherof(x)) – p(x) matches p(y) with • x = Jack and y/Jack • x = John and y/John • or x = y – The match that makes the least commitment is called the most general unifier (MGU)

Substitution • We use the notation subst(t, s) to denote the application of the substitution s = {v 1/t 1, v 2/t 2. . . vn/tn} to t. • Composition of substitutions – subst(COMPOSE( 1, 2), p) = subst( 2, SUBST( 1, p))

Unification Algorithm • Assume input formulas q and q have standardize variables parentof(fatherof(John), y) parentof(y, z) parentof(fatherof(John), v 1) parentof(v 2, v 3) • Unification takes two atomic sentences, p and q, and returns a substitution that would make p and q look the same.

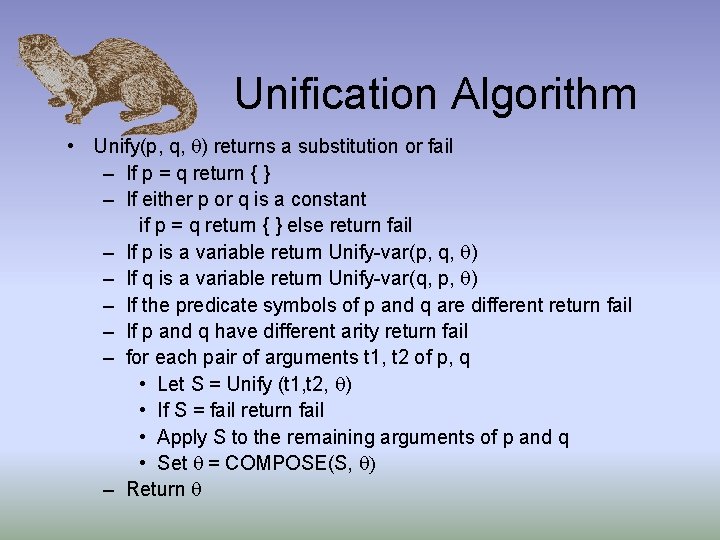

Unification Algorithm • Unify(p, q, ) returns a substitution or fail – If p = q return { } – If either p or q is a constant if p = q return { } else return fail – If p is a variable return Unify-var(p, q, ) – If q is a variable return Unify-var(q, p, ) – If the predicate symbols of p and q are different return fail – If p and q have different arity return fail – for each pair of arguments t 1, t 2 of p, q • Let S = Unify (t 1, t 2, ) • If S = fail return fail • Apply S to the remaining arguments of p and q • Set = COMPOSE(S, ) – Return

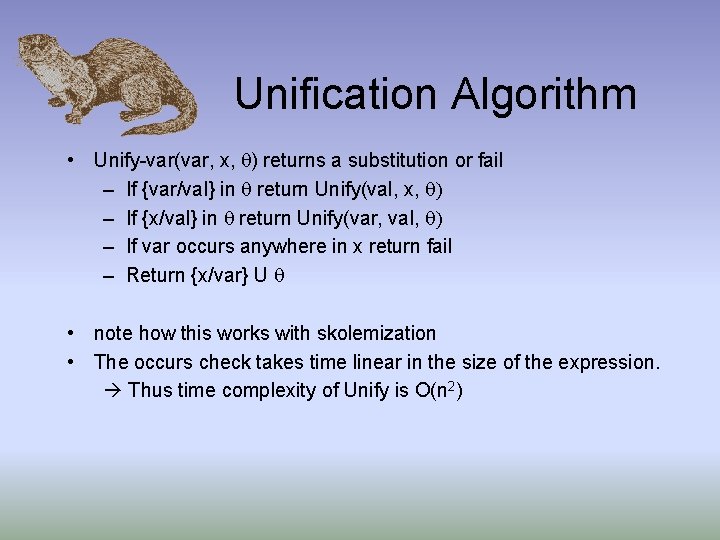

Unification Algorithm • Unify-var(var, x, ) returns a substitution or fail – If {var/val} in return Unify(val, x, ) – If {x/val} in return Unify(var, val, ) – If var occurs anywhere in x return fail – Return {x/var} U • note how this works with skolemization • The occurs check takes time linear in the size of the expression. Thus time complexity of Unify is O(n 2)

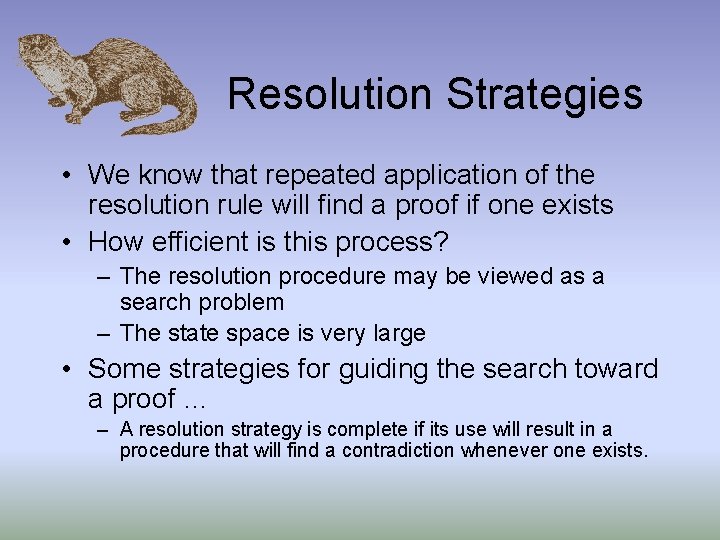

Resolution Strategies • We know that repeated application of the resolution rule will find a proof if one exists • How efficient is this process? – The resolution procedure may be viewed as a search problem – The state space is very large • Some strategies for guiding the search toward a proof … – A resolution strategy is complete if its use will result in a procedure that will find a contradiction whenever one exists.

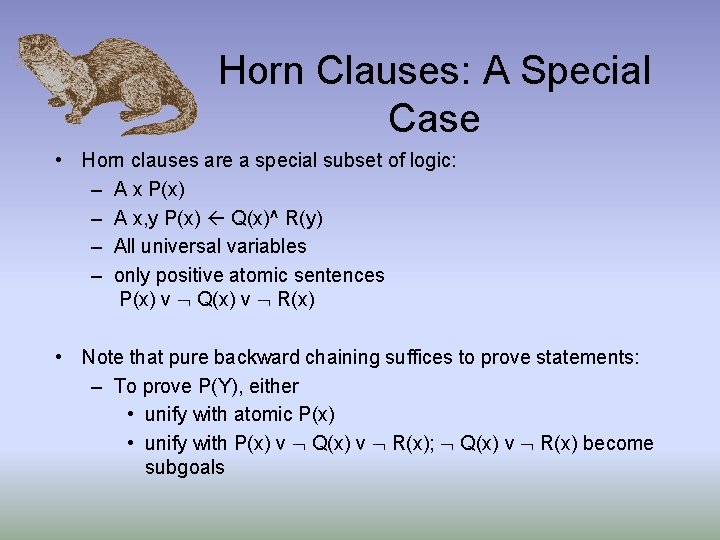

Horn Clauses: A Special Case • Horn clauses are a special subset of logic: – A x P(x) – A x, y P(x) Q(x)^ R(y) – All universal variables – only positive atomic sentences P(x) v Q(x) v R(x) • Note that pure backward chaining suffices to prove statements: – To prove P(Y), either • unify with atomic P(x) • unify with P(x) v Q(x) v R(x); Q(x) v R(x) become subgoals

Unit Resolution • Aka Unit preference – prefers to do resolution where one of the sentence is a single literal (unit clause) – we are trying to prove a sentence of length 0, so it might be a good idea to prefer inferences that produce shorter sentences – it is a useful heuristic that can be combined with other strategies – Complete for Horn clauses.

Input Resolution – every resolution combines one of the input sentences (either query or sentence in KB) – shape of a diagonal “spine” – input resolution is equivalent to unit resolution – complete for Horn form but incomplete in general case • Example: Q(u) v P(A) Q(w) v P(w) Q(x) v P(x) Q(y) v P(y)

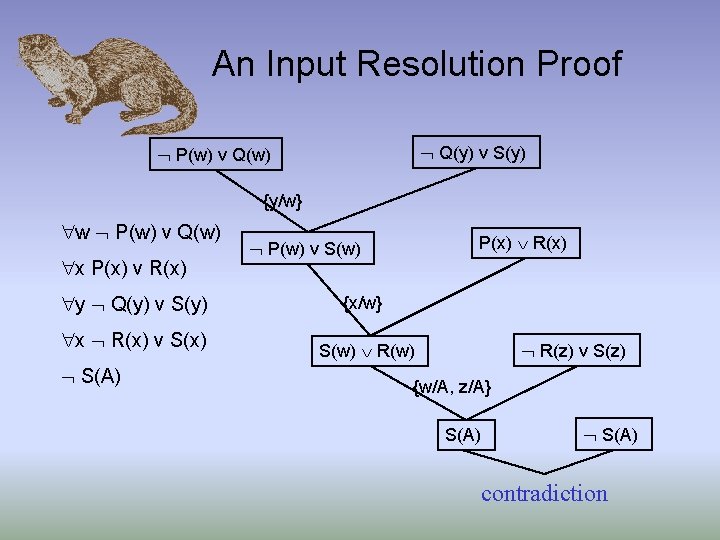

An Input Resolution Proof Q(y) v S(y) P(w) v Q(w) {y/w} w P(w) v Q(w) x P(x) v R(x) y Q(y) v S(y) x R(x) v S(x) S(A) P(x) R(x) P(w) v S(w) {x/w} R(z) v S(z) S(w) R(w) {w/A, z/A} S(A) contradiction

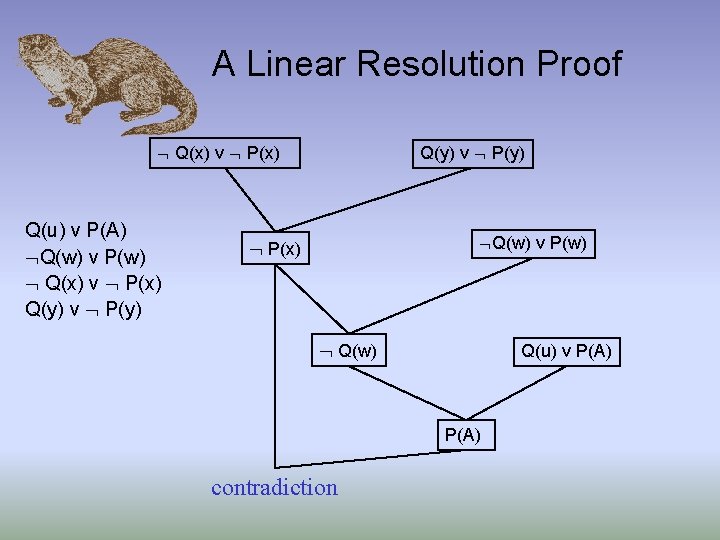

Linear Resolution • a generalization of input resolution – allow P and Q to be resolved together if P is an input sentence or P is an ancestor of Q in the proof tree – linear resolution is complete

A Linear Resolution Proof Q(x) v P(x) Q(u) v P(A) Q(w) v P(w) Q(x) v P(x) Q(y) v P(y) Q(w) v P(w) P(x) Q(w) Q(u) v P(A) contradiction

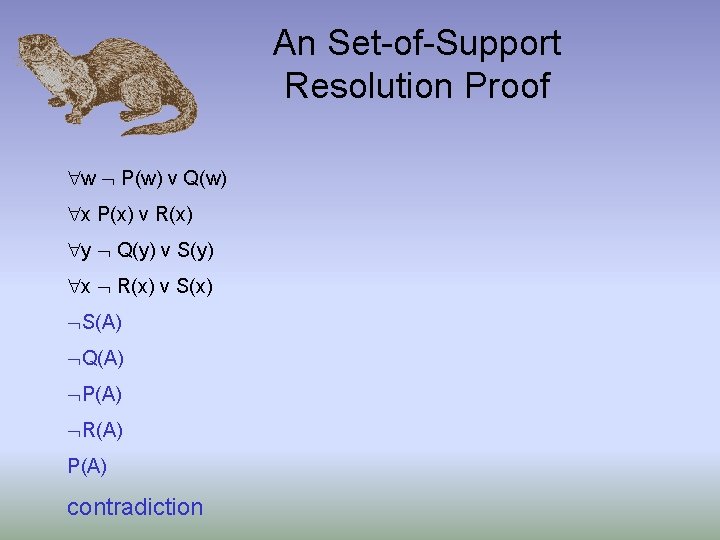

Set of support • This strategy tries to eliminate many resolutions altogether by identifying a subset of sentences called the set of support – Every resolution combines a sentence from set of support with another sentence – add resolvent into set of support – if set of support is small relative to KB, reduce search space significantly – A bad choice for the set of support will make the algorithm incomplete • If the remainder of the sentences are jointly satisfiable – Use the negated query as the set of support • goal-directed : easy to understand • Assumes the KB is consistent

An Set-of-Support Resolution Proof w P(w) v Q(w) x P(x) v R(x) y Q(y) v S(y) x R(x) v S(x) S(A) Q(A) P(A) R(A) P(A) contradiction

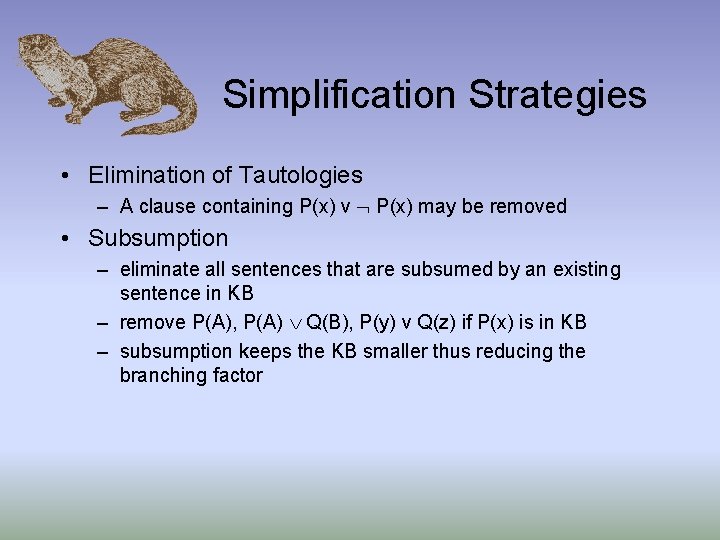

Simplification Strategies • Elimination of Tautologies – A clause containing P(x) v P(x) may be removed • Subsumption – eliminate all sentences that are subsumed by an existing sentence in KB – remove P(A), P(A) Q(B), P(y) v Q(z) if P(x) is in KB – subsumption keeps the KB smaller thus reducing the branching factor

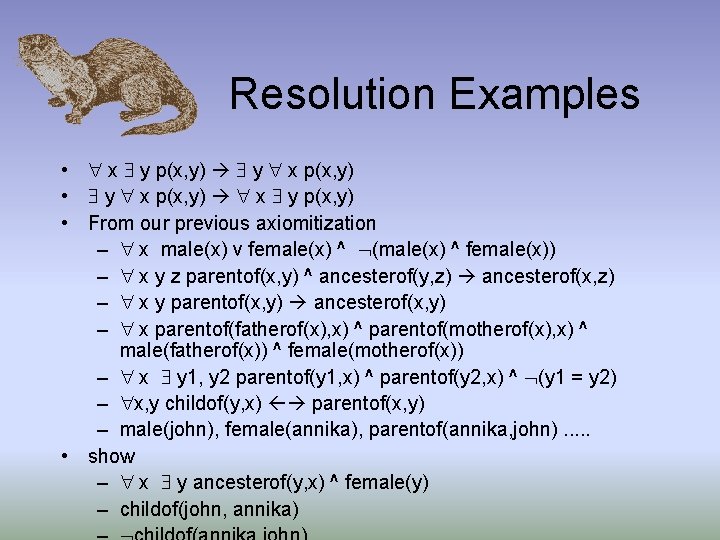

Resolution Examples • x y p(x, y) y x p(x, y) • y x p(x, y) x y p(x, y) • From our previous axiomitization – x male(x) v female(x) ^ (male(x) ^ female(x)) – x y z parentof(x, y) ^ ancesterof(y, z) ancesterof(x, z) – x y parentof(x, y) ancesterof(x, y) – x parentof(fatherof(x), x) ^ parentof(motherof(x), x) ^ male(fatherof(x)) ^ female(motherof(x)) – x y 1, y 2 parentof(y 1, x) ^ parentof(y 2, x) ^ (y 1 = y 2) – x, y childof(y, x) parentof(x, y) – male(john), female(annika), parentof(annika, john). . . • show – x y ancesterof(y, x) ^ female(y) – childof(john, annika)

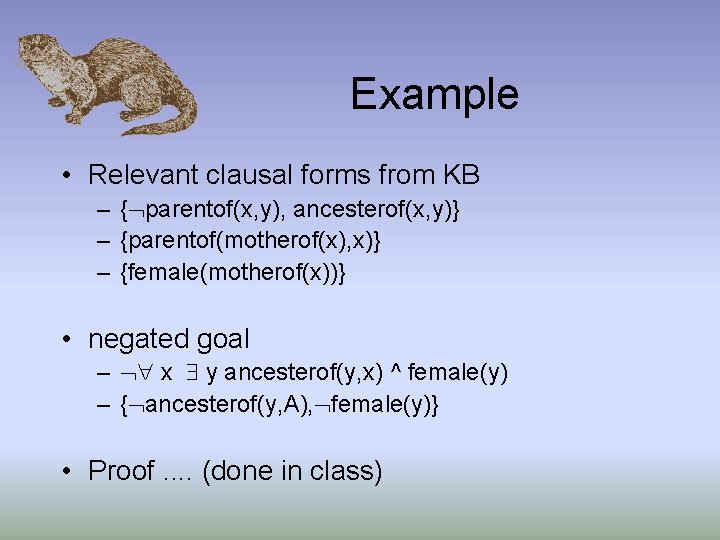

Example • Relevant clausal forms from KB – { parentof(x, y), ancesterof(x, y)} – {parentof(motherof(x), x)} – {female(motherof(x))} • negated goal – x y ancesterof(y, x) ^ female(y) – { ancesterof(y, A), female(y)} • Proof. . (done in class)

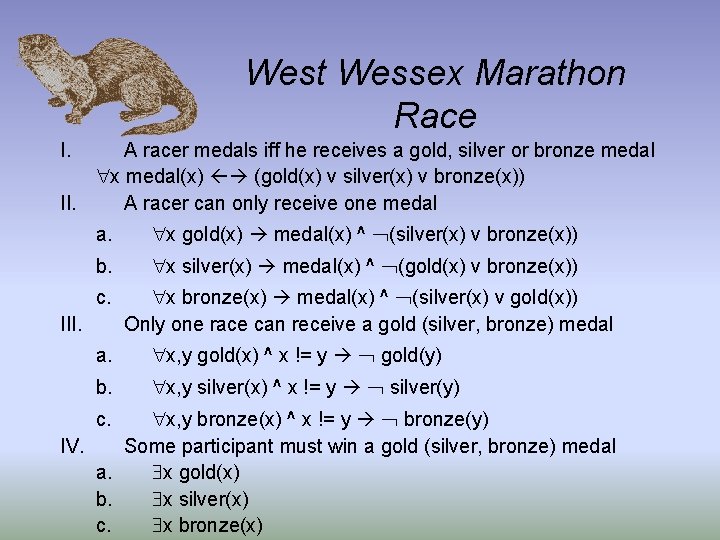

West Wessex Marathon Race I. A racer medals iff he receives a gold, silver or bronze medal x medal(x) (gold(x) v silver(x) v bronze(x)) II. A racer can only receive one medal a. x gold(x) medal(x) ^ (silver(x) v bronze(x)) b. x silver(x) medal(x) ^ (gold(x) v bronze(x)) c. x bronze(x) medal(x) ^ (silver(x) v gold(x)) Only one race can receive a gold (silver, bronze) medal III. a. x, y gold(x) ^ x != y gold(y) b. x, y silver(x) ^ x != y silver(y) x, y bronze(x) ^ x != y bronze(y) IV. Some participant must win a gold (silver, bronze) medal a. x gold(x) b. x silver(x) c. x bronze(x) c.

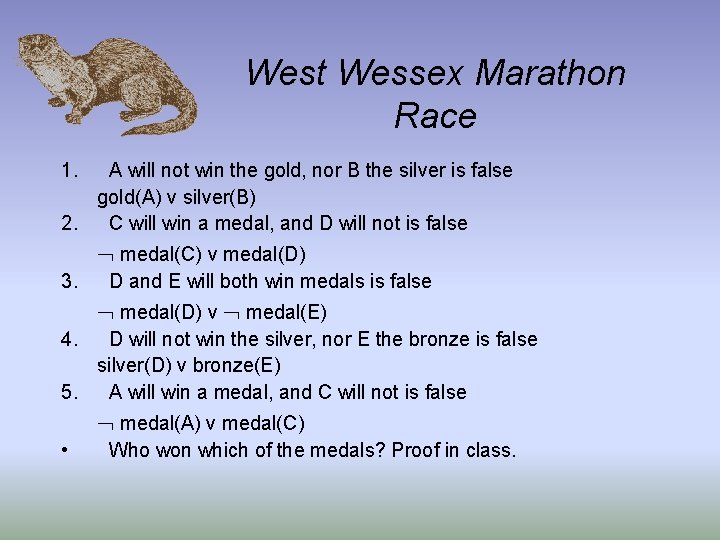

West Wessex Marathon Race 1. A will not win the gold, nor B the silver is false gold(A) v silver(B) 2. C will win a medal, and D will not is false medal(C) v medal(D) 3. D and E will both win medals is false medal(D) v medal(E) 4. D will not win the silver, nor E the bronze is false silver(D) v bronze(E) 5. A will win a medal, and C will not is false medal(A) v medal(C) • Who won which of the medals? Proof in class.

Prolog: a Logic Programming Language • A program is a sequence of sentences • Sentences are represented as Horn clauses, no negated antecedents • The Prolog representation has the consequent, or head, on the left hand side, and the antecedents, or body, on the right. • A query is a conjunction of terms; it is called the goal

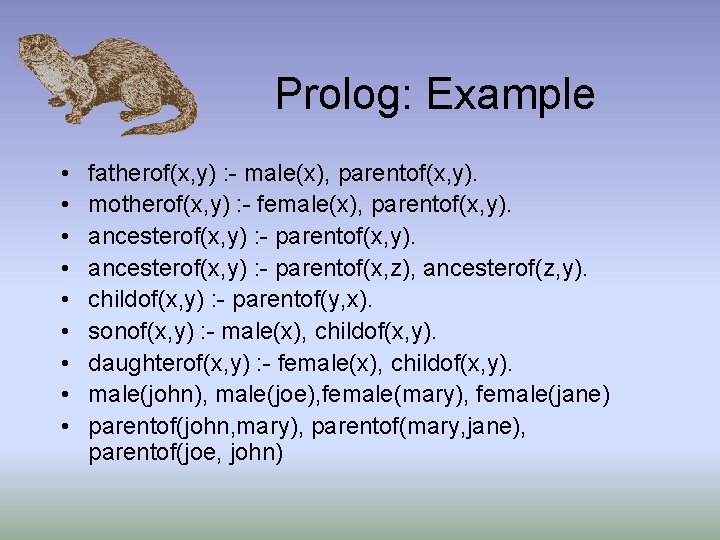

Prolog: Example • • • fatherof(x, y) : - male(x), parentof(x, y). motherof(x, y) : - female(x), parentof(x, y). ancesterof(x, y) : - parentof(x, z), ancesterof(z, y). childof(x, y) : - parentof(y, x). sonof(x, y) : - male(x), childof(x, y). daughterof(x, y) : - female(x), childof(x, y). male(john), male(joe), female(mary), female(jane) parentof(john, mary), parentof(mary, jane), parentof(joe, john)

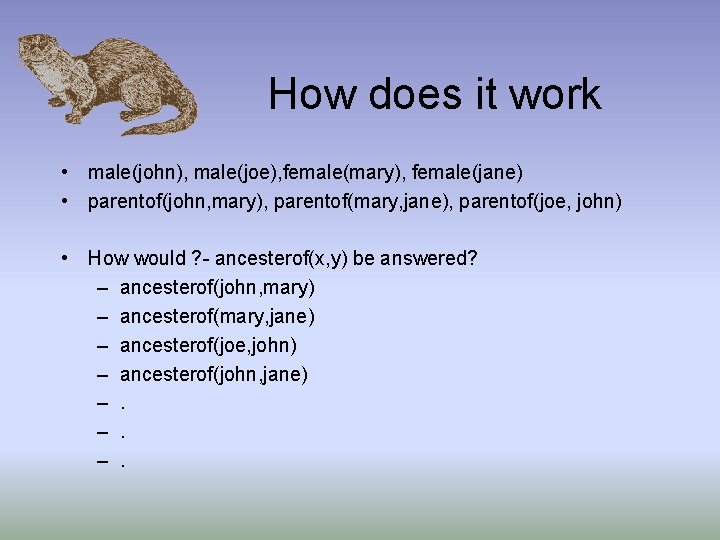

How does it work • male(john), male(joe), female(mary), female(jane) • parentof(john, mary), parentof(mary, jane), parentof(joe, john) • How would ? - ancesterof(x, y) be answered? – ancesterof(john, mary) – ancesterof(mary, jane) – ancesterof(joe, john) – ancesterof(john, jane) –. –. –.

How Does it Work? • Queries are answered by the following algorithm: – The first clause with a head that matches is chosen • if it has subgoals, they become new queries, answered in left to right order • when all goals are satisfied, an answer is returned • if another answer is requested, the search continues where it left off – When no more matches are possible with chosen clause, next clause is chosen – This is effectively a depth-first search of the solution tree • note that we need to avoid left recursion!!

Some Real Prolog examples • . . . running code in prolog. lisp. .

The Difference Between Logic and Prolog • Handling of negation: – Prolog handles negation as failure to prove, e. g. • not(fatherof(bill, mike)) will return true in Prolog, but not provable in formal logic • To save time, Prolog often runs without the occurs check for speed • Prolog programs can side-effect as they run, and possibly do computations (e. g. arithmetic) • Clause ordering affects what can be shown; ordering doesn’t matter if logic

Rules in Production Systems • A rule based system – A rule has the format if condition then action “if the condition is met in the world then take the action” - the condition states when the rule applies - the action describes the result of applying the rule

Production System A production system consists of • A knowledge base – Working memory : short-term memory • contains set of positive literals with no variable – Rule memory : long-term memory • contains set of inference rules • A control strategy • A rule interpreter

Forward-Chaining Production Systems The forward-chaining production system loop: Repeat • Match phase – computes subset of rules whose left-hand side is satisfied by current content of working memory • Conflict resolution phase – decide which rule(s) should be executed • Act phase – modifies the knowledge base, usually working memory

Match phase • A unification exercise – c cycles, w elements in WM, r rules with n elements in LHS c*w*r*n unifications • The RETE algorithm – used in the OPS-5 production system – Construct a RETE network using the working memory and the rule base – eliminate duplication btn. Rules – eliminate duplication over time

Conflict Resolution Phase • More than one rules pass the match phase • Do every actions or select one (some) • Control strategy – No duplication – Recency – Specificity • Mammal(x) add Legs(x, 4) • Mammal(x) Human(x) add Legs(x, 2) – Operation priority : Action(Dust(p)) v. s. Action(Evacuate) More on production systems when we discuss uncertain reasoning

Frame Systems & Semantic Networks Whether the language uses strings or nodes and links, and whether it is called a semantic network of a logic, has no effect on its meaning or an its implementation Semantic networks has – Graphical notation easy for people to visualize – very simple execution model easy to predict the behavior of the inference engine simple query language

Some Uses of Logic • Otter theorem prover – current system 4 th generation, dating back to the 60’s – first order logic plus equality – used as a proving assistant • To see what’s been done, go to the otter home page!

- Slides: 50