Intro. ANN & Fuzzy Systems Lecture 25 Radial Basis Network (II) (C) 2001 by Yu Hen Hu

Outline
• Regularization Network Formulation
• Radial Basis Network Type 2
• Generalized RBF network
  – Training algorithm
  – Implementation details

Properties of Regularization network
• An RBF network is a universal approximator: given a sufficient number of hidden neurons, it can approximate arbitrarily well any multivariate continuous function on a compact support in $\mathbb{R}^n$, where n is the dimension of the feature vectors.
• It is optimal in that it minimizes the cost functional E(F).
• It also has the best approximation property: given an unknown nonlinear function f, there always exists a choice of RBF coefficients that approximates f better than any other choice of coefficients.

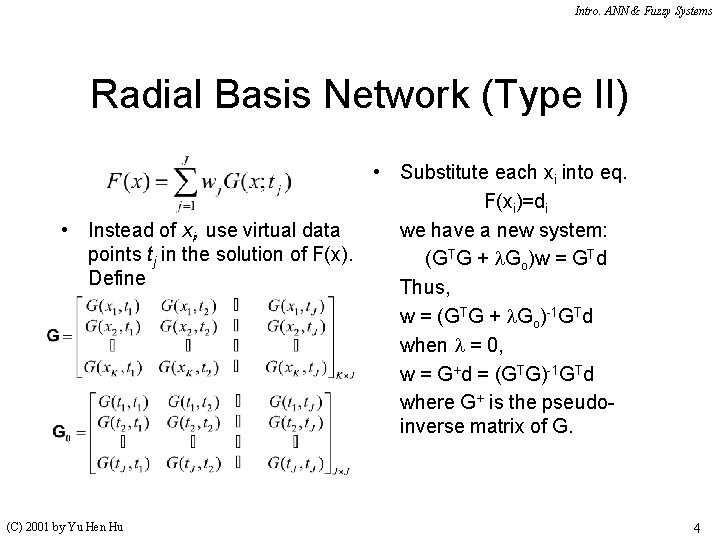

Radial Basis Network (Type II)
• Instead of the $x_i$, use virtual data points $t_j$ in the solution of F(x). Define F(x) as a weighted sum, with weight vector w, of basis functions centered at the $t_j$.
• Substituting each $x_i$ into the equation $F(x_i) = d_i$ gives a new system:
  $(G^T G + \lambda G_0)\, w = G^T d$
  Thus, $w = (G^T G + \lambda G_0)^{-1} G^T d$.
  When $\lambda = 0$, $w = G^+ d = (G^T G)^{-1} G^T d$, where $G^+$ is the pseudoinverse of G (the second form requires G to have full column rank).
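The weight solution above can be sketched in NumPy as follows. This is a minimal illustration, not the lecture's implementation: the function name `solve_weights` is hypothetical, the matrix `G` is filled with random stand-in values, and the identity matrix passed as `G0` is only a placeholder for whatever $G_0$ the regularizer defines.

```python
import numpy as np

def solve_weights(G, d, G0=None, lam=0.0):
    """Solve (G^T G + lam * G0) w = G^T d; with lam = 0 this is w = G^+ d."""
    G = np.asarray(G, dtype=float)
    d = np.asarray(d, dtype=float)
    if lam == 0.0:
        # Unregularized case: pseudoinverse (least-squares) solution.
        return np.linalg.pinv(G) @ d
    if G0 is None:
        raise ValueError("G0 is required when lam != 0")
    A = G.T @ G + lam * np.asarray(G0, dtype=float)
    # Solve the linear system directly instead of forming the inverse.
    return np.linalg.solve(A, G.T @ d)

rng = np.random.default_rng(0)
G = rng.uniform(0.0, 1.0, size=(6, 3))   # stand-in for a K-by-J basis matrix
d = rng.uniform(-1.0, 1.0, size=6)       # stand-in desired outputs
G0 = np.eye(3)                           # placeholder J-by-J matrix (assumption)

w_pinv = solve_weights(G, d)             # lam = 0
w_reg = solve_weights(G, d, G0, lam=0.1) # regularized
```

Using `np.linalg.solve` rather than an explicit inverse is a common numerical choice; the result is the same w in exact arithmetic.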

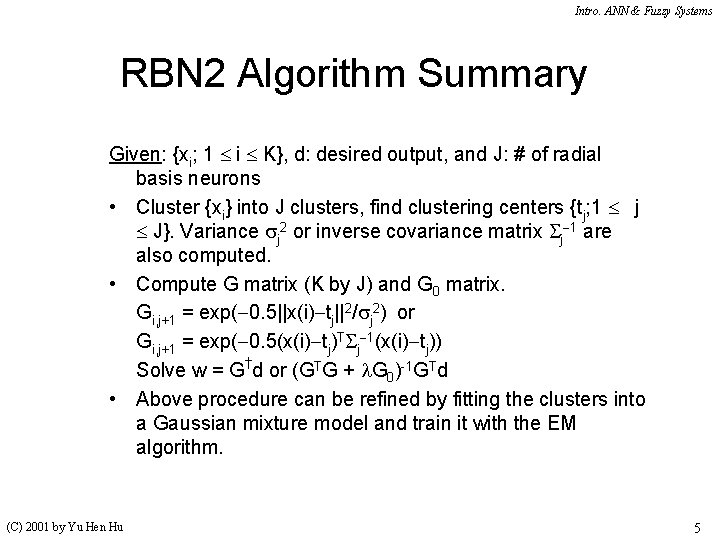

RBN 2 Algorithm Summary
Given: $\{x_i;\ 1 \le i \le K\}$, d: desired output, and J: number of radial basis neurons
• Cluster $\{x_i\}$ into J clusters and find the cluster centers $\{t_j;\ 1 \le j \le J\}$. The variance $\sigma_j^2$ or inverse covariance matrix $\Sigma_j^{-1}$ of each cluster is also computed.
• Compute the G matrix (K by J) and the $G_0$ matrix:
  $G_{i,j+1} = \exp(-0.5\,\|x(i) - t_j\|^2 / \sigma_j^2)$
  or
  $G_{i,j+1} = \exp(-0.5\,(x(i) - t_j)^T\, \Sigma_j^{-1}\, (x(i) - t_j))$
• Solve $w = G^{\dagger} d$ or $w = (G^T G + \lambda G_0)^{-1} G^T d$.
• The above procedure can be refined by fitting the clusters to a Gaussian mixture model and training it with the EM algorithm.
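The steps of this summary can be sketched end to end as below, under several assumptions that are not in the slide: a plain Lloyd-style K-means written inline, $\sigma_j^2$ taken as the mean squared distance of a cluster's members to its center (with a small floor), a K-by-J matrix G with columns indexed from 0, and the unregularized solution $w = G^{\dagger} d$. All function names and the toy sine data are hypothetical.

```python
import numpy as np

def kmeans(X, J, n_iter=50, seed=0):
    """Plain Lloyd iterations; returns centers (J, n) and labels (K,)."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=J, replace=False)].copy()
    for _ in range(n_iter):
        d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for j in range(J):
            members = X[labels == j]
            if len(members) > 0:          # keep the old center if a cluster empties
                centers[j] = members.mean(axis=0)
    d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return centers, d2.argmin(axis=1)

def cluster_variances(X, centers, labels, floor=1e-3):
    """sigma_j^2 as mean squared distance to the center (an assumption)."""
    var = np.full(len(centers), floor)
    for j in range(len(centers)):
        members = X[labels == j]
        if len(members) > 1:
            var[j] = max(floor, ((members - centers[j]) ** 2).sum(axis=1).mean())
    return var

def design_matrix(X, centers, var):
    """G[i, j] = exp(-0.5 * ||x_i - t_j||^2 / sigma_j^2)."""
    d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-0.5 * d2 / var[None, :])

rng = np.random.default_rng(1)
X = rng.uniform(-1.0, 1.0, size=(200, 1))   # toy inputs
d = np.sin(np.pi * X[:, 0])                 # toy desired outputs

centers, labels = kmeans(X, J=8)
var = cluster_variances(X, centers, labels)
G = design_matrix(X, centers, var)
w = np.linalg.pinv(G) @ d                   # w = G^+ d
train_mse = float(np.mean((G @ w - d) ** 2))
```

Replacing the last solve with a regularized one, or the K-means step with an EM-fitted Gaussian mixture, would follow the refinements the slide mentions.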

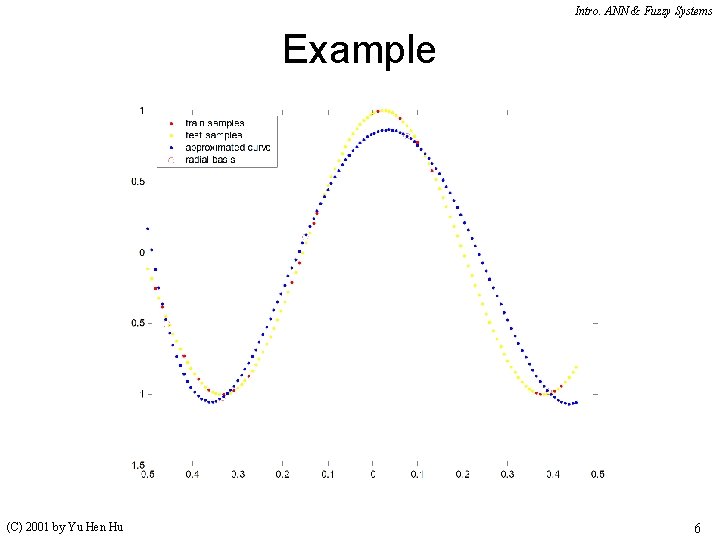

Example

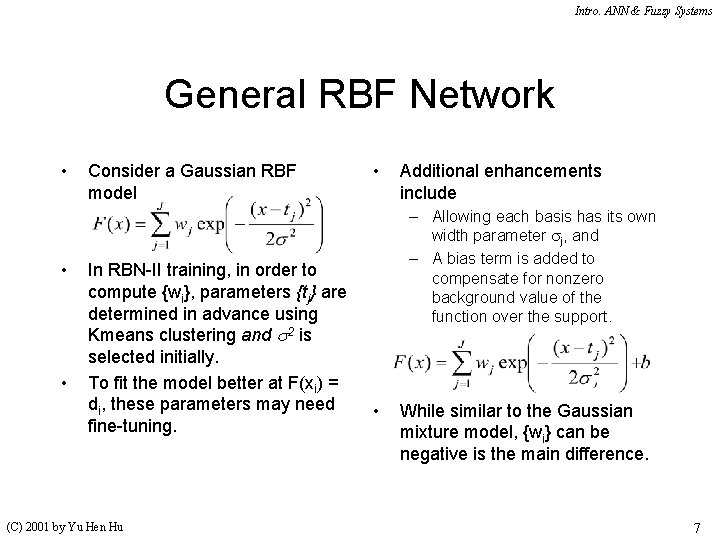

General RBF Network
• Consider a Gaussian RBF model.
• In RBN-II training, in order to compute $\{w_i\}$, the parameters $\{t_j\}$ are determined in advance using K-means clustering, and $\sigma^2$ is selected initially.
• To fit the model better at $F(x_i) = d_i$, these parameters may need fine-tuning.
• Additional enhancements include:
  – allowing each basis function to have its own width parameter $\sigma_j$, and
  – adding a bias term to compensate for a nonzero background value of the function over the support.
• The model is similar to a Gaussian mixture model; the main difference is that the weights $\{w_i\}$ can be negative.

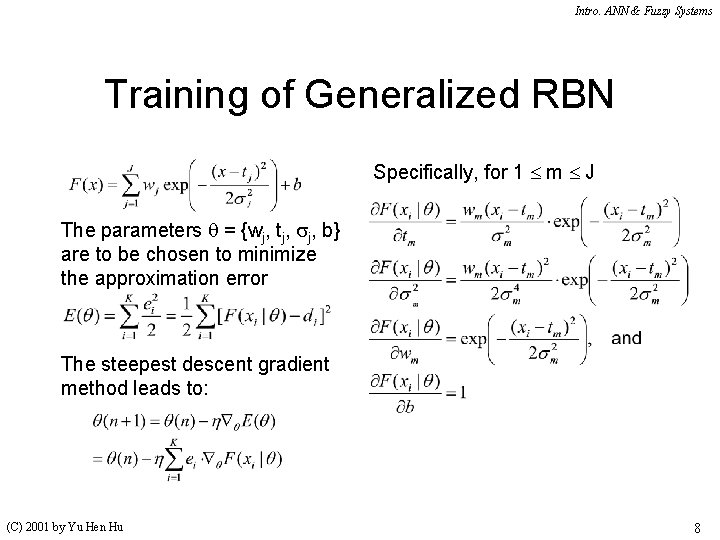

Training of Generalized RBN
The parameters $\theta = \{w_j, t_j, \sigma_j, b\}$ are to be chosen to minimize the approximation error. The steepest descent gradient method leads to an update for each parameter, specifically for $1 \le m \le J$.
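As a worked sketch of what steepest descent gives for these parameters, assume a Gaussian model with a bias term and a sum-of-squares error. The exact forms of $F$, $\phi_j$, $E$ and the step size $\eta$ below are assumptions chosen to match the Gaussian basis and the parameter set used in this lecture; they are not copied from the slide.

```latex
% Assumed model and cost (not taken verbatim from the slide):
\[
F(x\mid\theta) = b + \sum_{j=1}^{J} w_j\,\phi_j(x), \qquad
\phi_j(x) = \exp\!\left(-\frac{\|x - t_j\|^2}{2\sigma_j^2}\right), \qquad
E = \frac{1}{2}\sum_{i=1}^{K} e_i^2, \quad e_i = d_i - F(x_i\mid\theta).
\]
% Steepest descent with step size \eta > 0:
\[
\theta \leftarrow \theta - \eta\,\frac{\partial E}{\partial \theta}.
\]
% Gradients for 1 \le m \le J:
\begin{align*}
\frac{\partial E}{\partial w_m} &= -\sum_{i=1}^{K} e_i\,\phi_m(x_i), &
\frac{\partial E}{\partial b} &= -\sum_{i=1}^{K} e_i, \\
\frac{\partial E}{\partial t_m} &= -\sum_{i=1}^{K} e_i\,w_m\,\phi_m(x_i)\,\frac{x_i - t_m}{\sigma_m^2}, &
\frac{\partial E}{\partial \sigma_m} &= -\sum_{i=1}^{K} e_i\,w_m\,\phi_m(x_i)\,\frac{\|x_i - t_m\|^2}{\sigma_m^3}.
\end{align*}
```

Each gradient follows from the chain rule applied to $e_i$, using $\partial\phi_m/\partial t_m = \phi_m\,(x - t_m)/\sigma_m^2$ and $\partial\phi_m/\partial\sigma_m = \phi_m\,\|x - t_m\|^2/\sigma_m^3$.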

Training …
Thus, the individual parameters’ online learning formulas are obtained from the gradient of the approximation error with respect to each parameter.
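A per-sample (online) version of such updates might look like the sketch below. It assumes the model $F(x) = b + \sum_j w_j \exp(-0.5\,\|x - t_j\|^2/\sigma_j^2)$ and the per-sample loss $0.5\,(d - F(x))^2$; the function names, the step size `eta`, the lower bound `eps` on the widths, and the toy sine data are all hypothetical choices, not values from the lecture.

```python
import numpy as np

def rbf_forward(x, w, t, sigma, b):
    """Return F(x), basis outputs phi (J,), and squared distances r2 (J,)."""
    r2 = ((x - t) ** 2).sum(axis=1)
    phi = np.exp(-0.5 * r2 / sigma**2)
    return b + w @ phi, phi, r2

def online_step(x, d, w, t, sigma, b, eta=0.02, eps=0.05):
    """One gradient step on 0.5 * (d - F(x))**2 for a single sample."""
    y, phi, r2 = rbf_forward(x, w, t, sigma, b)
    e = d - y                                   # output error
    g = e * w * phi                             # factor shared by t and sigma updates
    w_new = w + eta * e * phi
    b_new = b + eta * e
    t_new = t + eta * (g / sigma**2)[:, None] * (x - t)
    # Keep the widths inside a feasible range to avoid division by ~0.
    sigma_new = np.maximum(sigma + eta * g * r2 / sigma**3, eps)
    return w_new, t_new, sigma_new, b_new, e

def mse(X, D, w, t, sigma, b):
    return float(np.mean([(dk - rbf_forward(xk, w, t, sigma, b)[0]) ** 2
                          for xk, dk in zip(X, D)]))

rng = np.random.default_rng(0)
X = np.linspace(-1.0, 1.0, 50).reshape(-1, 1)   # toy inputs
D = np.sin(np.pi * X[:, 0])                     # toy desired outputs
J = 6
w, b = np.zeros(J), 0.0
t = np.linspace(-1.0, 1.0, J).reshape(-1, 1)    # initial centers (assumption)
sigma = np.full(J, 0.4)                         # initial widths (assumption)

mse_before = mse(X, D, w, t, sigma, b)
for epoch in range(50):
    for k in rng.permutation(len(X)):           # visit samples in random order
        w, t, sigma, b, _ = online_step(X[k], D[k], w, t, sigma, b)
mse_after = mse(X, D, w, t, sigma, b)
```

In practice the centers and widths would more likely be initialized from a clustering step than from an even grid; the grid is used here only to keep the sketch short.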

Implementation Details
• The cost function may be augmented with additional smoothing terms for the purpose of regularization. For example, the derivative of $F(x|\theta)$ may be bounded by a user-specified constant. However, this will make the training formulas more complicated.
• Initialization of the RBF centers and variances can be accomplished using the K-means clustering algorithm.
• Selection of the number of radial basis functions is part of the regularization process and often needs to be done by trial and error or heuristics. Cross-validation may also be used to give a more objective criterion.
• A feasible range may be imposed on each parameter to prevent numerical problems, e.g., $\sigma^2 \ge \varepsilon > 0$.
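Two of these points, choosing the number of basis functions by cross-validation and keeping the width in a feasible range, can be illustrated with the sketch below. It is deliberately simplified and makes assumptions not in the slides: centers are a random subset of the training points (a stand-in for K-means), a single shared width is used, weights come from least squares, and the candidate values of J, the fold count and the toy data are arbitrary. All function names are hypothetical.

```python
import numpy as np

def clamp_width(sigma2, eps=1e-6):
    """Enforce the feasible range sigma^2 >= eps > 0."""
    return np.maximum(sigma2, eps)

def basis(X, centers, sigma2):
    d2 = ((X[:, None, :] - centers[None]) ** 2).sum(axis=2)
    return np.exp(-0.5 * d2 / sigma2)

def fit_rbf(X, d, J, sigma2, rng):
    # Random training points as centers: a stand-in for K-means (assumption).
    centers = X[rng.choice(len(X), size=J, replace=False)]
    w, *_ = np.linalg.lstsq(basis(X, centers, sigma2), d, rcond=None)
    return centers, w

def kfold_mse(X, d, J, sigma2, k=5, seed=0):
    """Average held-out squared error over k folds for a given J."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(X))
    errs = []
    for fold in np.array_split(idx, k):
        train = np.setdiff1d(idx, fold)
        centers, w = fit_rbf(X[train], d[train], J, sigma2, rng)
        pred = basis(X[fold], centers, sigma2) @ w
        errs.append(np.mean((pred - d[fold]) ** 2))
    return float(np.mean(errs))

rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(120, 1))                       # toy inputs
d = np.sin(np.pi * X[:, 0]) + 0.1 * rng.standard_normal(120)    # noisy toy targets

sigma2 = float(clamp_width(0.3 ** 2))
scores = {J: kfold_mse(X, d, J, sigma2) for J in (2, 4, 8, 16)}
best_J = min(scores, key=scores.get)                            # lowest held-out error
```

The held-out error gives a more objective basis for picking J than the training error, which can only decrease as basis functions are added.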
