Inter-rater reliability in the KPG exams: The Writing Production and Mediation Module

Inter-rater reliability in KPG
AIM: To check the effectiveness of the instruments employed throughout the rating process
• Rating Grid – Assessment Criteria
• Training Material & Training Seminars
• On-the-spot consultancy to raters

Script Raters Profile
• Experienced teachers
• Underwent initial training in rating KPG scripts
• Undergo specialized training for every test administration

Script rater training
• Specialized training on rating scripts based on expectations for every activity
  § Analysis of expected output
  § Presentation of rated scripts
  § Actual rating of selected samples
• Rating scripts under supervision

The rating procedure
• Each script is rated by two script raters randomly selected from a pool of trained raters
• Second ratings are independent of the first (no identifying information, no marks or symbols)
• Constant monitoring/consultancy during the process

Computing Inter-rater reliability METHODOLOGY OF STUDY

Sampling
• Random sample of at least 40% of the total number of scripts
• Periods: May 2005 to November 2007
• Levels: B1, B2 & C1

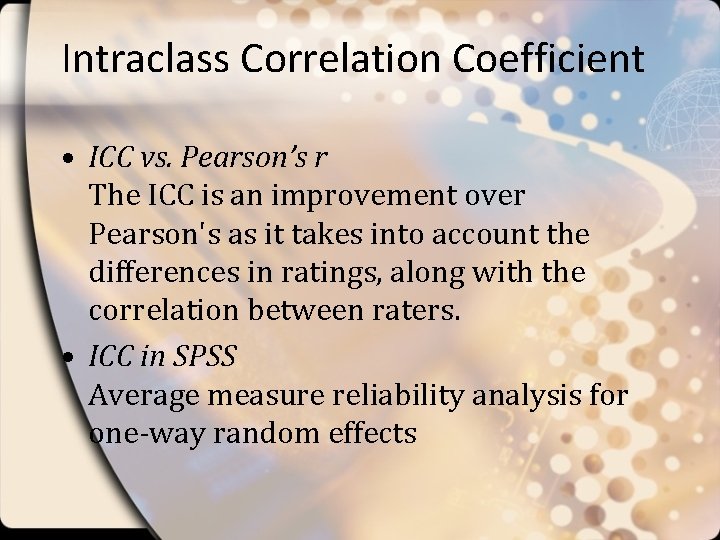

Intraclass Correlation Coefficient
• ICC vs. Pearson's r: The ICC is an improvement over Pearson's r because it takes into account the differences between the raters' marks as well as the correlation between them.
• ICC in SPSS: average-measure reliability analysis with a one-way random effects model
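To make the contrast with Pearson's r concrete, here is a minimal sketch of the one-way random effects, average-measures ICC, computed from the mean squares of a one-way ANOVA. It is not the SPSS routine used in the study; the function names and the marks are hypothetical and chosen only for illustration.

```python
from statistics import mean


def icc_oneway_average(ratings):
    """One-way random effects, average-measures ICC.

    ratings: one row per script, each row holding the k marks it received.
    """
    n = len(ratings)        # number of scripts
    k = len(ratings[0])     # ratings per script (two in the KPG procedure)
    grand_mean = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]

    # Between-scripts and within-script mean squares from a one-way ANOVA.
    ms_between = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, row_means) for x in row
    ) / (n * (k - 1))
    return (ms_between - ms_within) / ms_between


def pearson_r(xs, ys):
    """Plain Pearson correlation between two lists of marks."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical marks: the second rater is always 3 marks more lenient.
first = [10, 14, 8, 16]
second = [x + 3 for x in first]
scripts = [list(pair) for pair in zip(first, second)]

print(round(pearson_r(first, second), 3))     # correlation ignores the shift
print(round(icc_oneway_average(scripts), 3))  # the ICC is lowered by it
```

With these invented marks Pearson's r is 1.0 while the ICC is about 0.83: the two raters rank the scripts identically, but the constant 3-mark gap counts as disagreement in the ICC.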

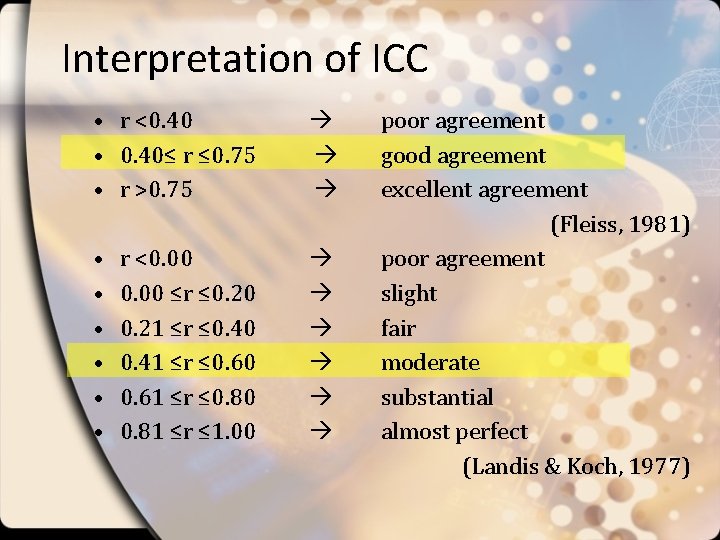

Interpretation of ICC

(Fleiss, 1981)
• r < 0.40: poor agreement
• 0.40 ≤ r ≤ 0.75: good agreement
• r > 0.75: excellent agreement

(Landis & Koch, 1977)
• r < 0.00: poor agreement
• 0.00 ≤ r ≤ 0.20: slight
• 0.21 ≤ r ≤ 0.40: fair
• 0.41 ≤ r ≤ 0.60: moderate
• 0.61 ≤ r ≤ 0.80: substantial
• 0.81 ≤ r ≤ 1.00: almost perfect
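The two interpretation scales can be written as simple lookups. This is only a sketch: the function names are hypothetical, and the Landis & Koch bands assume coefficients reported to two decimals, so a value such as 0.205 is assigned to the next band up.

```python
def fleiss_band(r):
    """Agreement label on the three-band scale (Fleiss, 1981)."""
    if r < 0.40:
        return "poor agreement"
    if r <= 0.75:
        return "good agreement"
    return "excellent agreement"


def landis_koch_band(r):
    """Agreement label on the six-band scale (Landis & Koch, 1977)."""
    if r < 0.00:
        return "poor agreement"
    # Upper bound of each band, in ascending order.
    bands = [(0.20, "slight"), (0.40, "fair"),
             (0.60, "moderate"), (0.80, "substantial")]
    for upper, label in bands:
        if r <= upper:
            return label
    return "almost perfect"


# Lowest and highest coefficients reported in the findings slides.
for value in (0.52, 0.88):
    print(value, fleiss_band(value), landis_koch_band(value))
```

On these scales 0.52 reads as "good agreement" (Fleiss) and "moderate" (Landis & Koch), and 0.88 as "excellent agreement" and "almost perfect".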

KPG module 2
• Free writing production
• Mediation

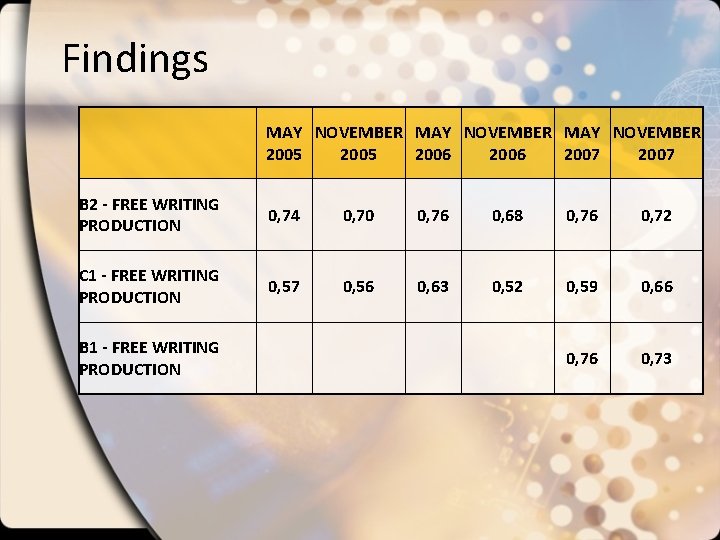

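Findings

| | MAY 2005 | NOVEMBER 2005 | MAY 2006 | NOVEMBER 2006 | MAY 2007 | NOVEMBER 2007 |
|---|---|---|---|---|---|---|
| B2 - FREE WRITING PRODUCTION | 0.74 | 0.70 | 0.76 | 0.68 | 0.76 | 0.72 |
| C1 - FREE WRITING PRODUCTION | 0.57 | 0.56 | 0.63 | 0.52 | 0.59 | 0.66 |

B1 - FREE WRITING PRODUCTION: 0.73 (exam period not recoverable from the slide)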

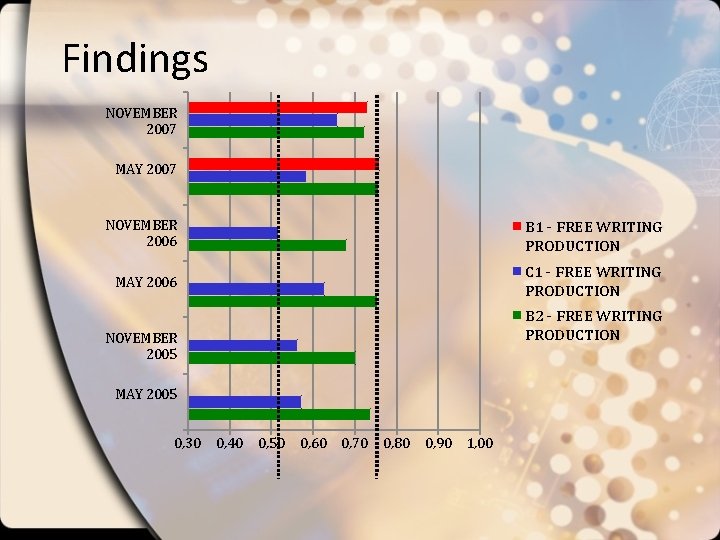

Findings: chart of ICC by exam period (May 2005 to November 2007) for B1, B2 and C1 free writing production; ICC axis from 0.30 to 1.00.

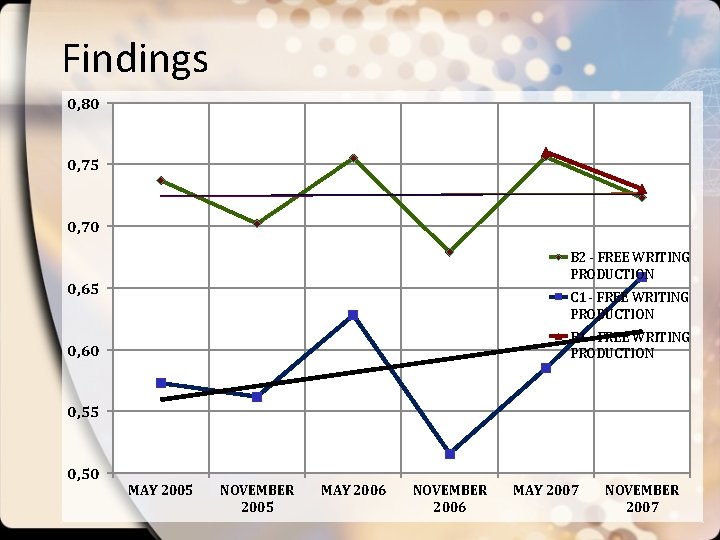

Findings: chart of ICC over time (May 2005 to November 2007) for B1, B2 and C1 free writing production; ICC axis from 0.50 to 0.80.

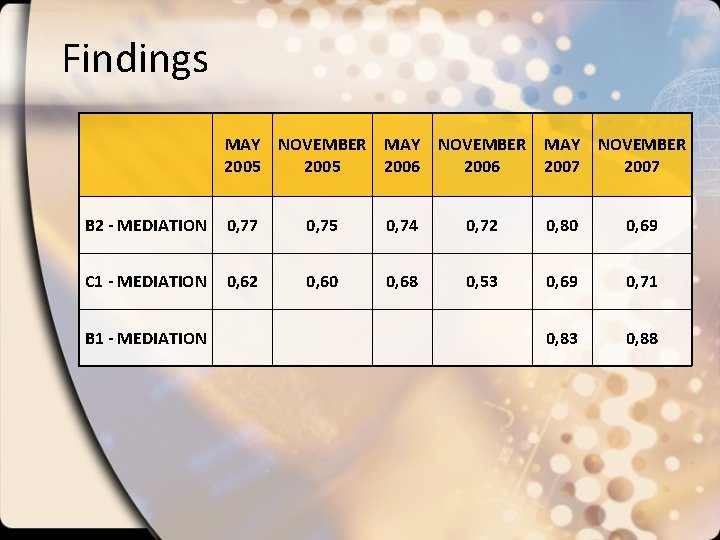

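Findings

| | MAY 2005 | NOVEMBER 2005 | MAY 2006 | NOVEMBER 2006 | MAY 2007 | NOVEMBER 2007 |
|---|---|---|---|---|---|---|
| B2 - MEDIATION | 0.77 | 0.75 | 0.74 | 0.72 | 0.80 | 0.69 |
| C1 - MEDIATION | 0.62 | 0.60 | 0.68 | 0.53 | 0.69 | 0.71 |
| B1 - MEDIATION | | | | | 0.83 | 0.88 |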

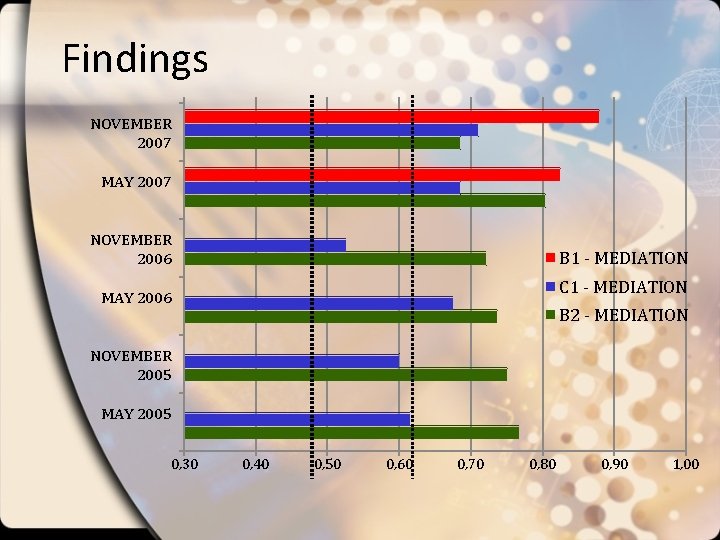

Findings: chart of ICC by exam period (May 2005 to November 2007) for B1, B2 and C1 mediation; ICC axis from 0.30 to 1.00.

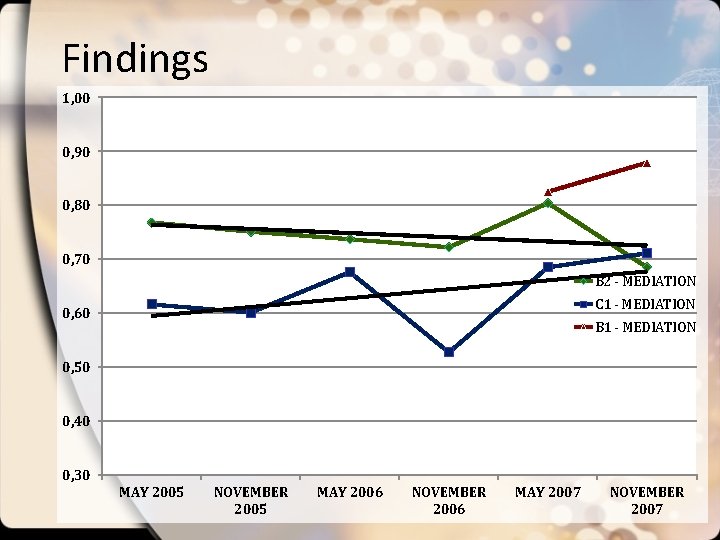

Findings: chart of ICC over time (May 2005 to November 2007) for B1, B2 and C1 mediation; ICC axis from 0.30 to 1.00.

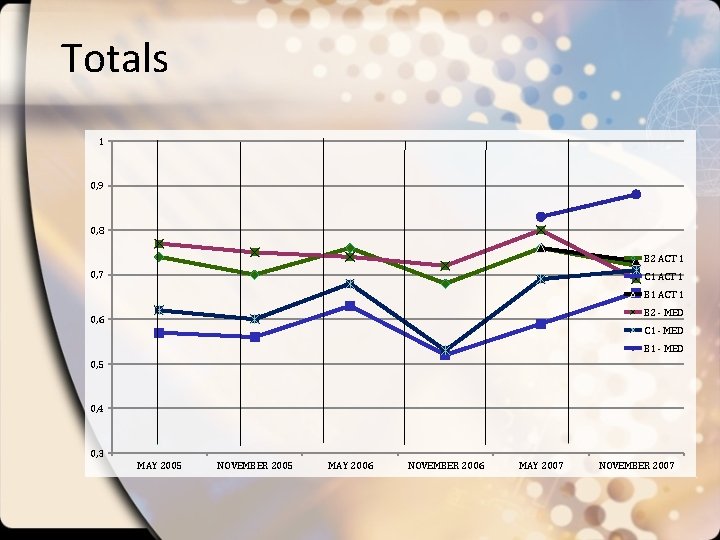

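Totals: Descriptive Statistics

| | N | Min. | Max. | Mean |
|---|---|---|---|---|
| MAY 05 | 4 | 0.57 | 0.77 | 0.67 |
| NOV 05 | 4 | 0.56 | 0.75 | 0.65 |
| MAY 06 | 4 | 0.63 | 0.76 | 0.70 |
| NOV 06 | 4 | 0.52 | 0.72 | 0.61 |
| MAY 07 | 6 | 0.59 | 0.83 | 0.73 |
| NOV 07 | 6 | 0.66 | 0.88 | 0.73 |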
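As a cross-check, the descriptive statistics for 2005 and 2006 can be recomputed from the B2 and C1 coefficients on the free writing and mediation findings slides. The sketch below stops at November 2006 because only one B1 free writing value survives in the slide text, so the 2007 rows cannot be fully reproduced. The variable names are illustrative.

```python
from statistics import mean

# ICCs per exam period, in the order:
# B2 free writing, C1 free writing, B2 mediation, C1 mediation.
icc_by_period = {
    "MAY 05": [0.74, 0.57, 0.77, 0.62],
    "NOV 05": [0.70, 0.56, 0.75, 0.60],
    "MAY 06": [0.76, 0.63, 0.74, 0.68],
    "NOV 06": [0.68, 0.52, 0.72, 0.53],
}

# N, minimum, maximum and mean for each period.
summary = {
    period: (len(values), min(values), max(values), mean(values))
    for period, values in icc_by_period.items()
}

for period, (n, lowest, highest, average) in summary.items():
    print(f"{period}  N={n}  min={lowest:.2f}  max={highest:.2f}  mean={average:.3f}")
```

The minima and maxima match the Totals slide exactly. The means agree to within rounding, which is expected because the slide averages were presumably computed from unrounded coefficients.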

Totals: chart of ICC over time (May 2005 to November 2007) for the series B2 ACT 1, C1 ACT 1, B2 - MED, C1 - MED and B1 - MED; ICC axis from 0.3 to 1.

Conclusion
• Correlations are high
  – Positive impact of instruments
• Trendlines are sloping upwards
  – Experience in rating and training are directly related to rater agreement indices
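One way to illustrate the upward trend is a least-squares line through the six period means from the Totals slide. This is a sketch only: the charts appear to fit trendlines per series rather than to the means, and treating the exam periods as equally spaced points 0 to 5 is an assumption.

```python
from statistics import mean

# Mean ICC per exam period (May 2005 to November 2007), from the Totals slide.
period_means = [0.67, 0.65, 0.70, 0.61, 0.73, 0.73]
periods = list(range(len(period_means)))  # assumed equally spaced: 0..5

x_bar = mean(periods)
y_bar = mean(period_means)

# Ordinary least-squares slope and intercept.
slope = sum(
    (x - x_bar) * (y - y_bar) for x, y in zip(periods, period_means)
) / sum((x - x_bar) ** 2 for x in periods)
intercept = y_bar - slope * x_bar

print(f"slope per exam period: {slope:.4f}")
print(f"intercept: {intercept:.4f}")
```

The fitted slope is positive, roughly 0.013 per exam period, which is consistent with the upward trendlines described above. With only six points this is descriptive rather than a significance test.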

Further research
• Task Analysis to investigate correlation between item difficulty and ICC
• In process: Detailed task analysis project carried out by linguists and psychologists
  § AIM: To determine the variables affecting the difficulty of a task
