Interoperability - is it feasible - Peter Wittenburg The Language Archive – Max Planck Institute for Psycholinguistics Nijmegen, The Netherlands

Why care about interoperability?
• e-Science & e-Humanities
• “data is the currency of modern research”
• thus we need integrated access to many data sets
• data sets are
  • scattered across many repositories => (virtual) integration
  • created by different research teams using different conventions (formats, semantics)
  • often in a bad state and of poor quality => curation
• thus interoperability was the most used word at the ICRI conference
• Big Questions:
  • What is meant by interoperability?
  • How to remove interoperability barriers to analyze large, heterogeneous and probably distributed data sets?
  • Is interoperability something we need/want to achieve?

What is interoperability?
1. Wikipedia: Interoperability is a property of a system, whose interfaces are completely understood, to work with other systems, present or future, without any restricted access or implementation.
2. IEEE: Interoperability is the ability of two or more systems or components to exchange information and to use the information that has been exchanged.
3. O’Brian/Marakas: Being able to accomplish end-user applications using different types of computer system, operating systems, and application software, interconnected by different types of local and wide-area networks.
4. OSLC: To be interoperable one should actively be engaged in the ongoing process of ensuring that the systems, procedures and culture of an organization are managed in such a way as to maximise opportunities for exchange and re-use of information.

What is interoperability?
• Technical Interoperability (techn. encoding, format, structure, API, protocol)
• Semantic Interoperability
  • is it also about bridging understanding between two or more humans?
  • humans – humans: we better speak about understanding
  • humans – machine: same, or?
  • machine – machine: well, here interoperability makes sense
• example tags: <hund>, <köter>, <dog>

What is interoperability?
• it seems that everyone speaks about technical systems when talking about interoperability
• do we include feeding machines with some mapping rules specified by human users and then carrying out some automatic functions?
• when linguists hear about mapping tag sets, some immediately say that it is impossible and does not make sense
  • why: tags are part of a whole theory behind them
• well, if you look at other disciplines (life sciences, earth observation sciences, etc.), that’s exactly what they do
• why?
  • people want to work across collections and ignore theories
  • some see tag sets just as a first help but want to work on raw data
  • some see the demand of politicians and society to come up with answers and not with statements about problems
  • AND there is much money (is it useless?)

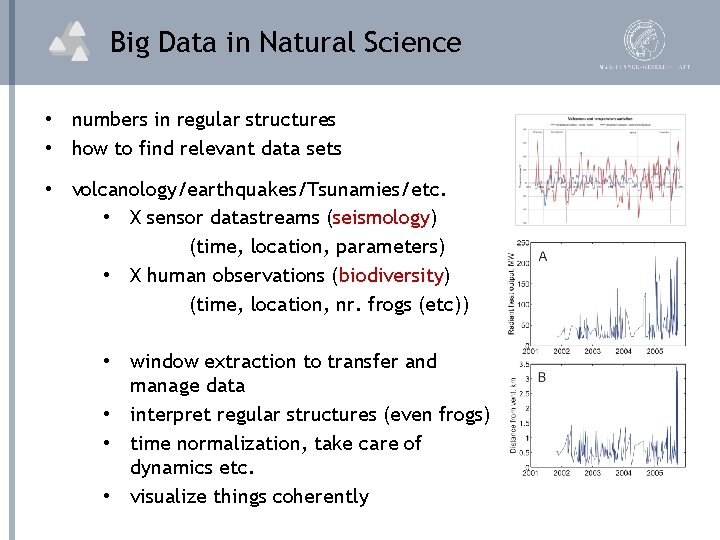

Big Data in Natural Science
• numbers in regular structures
• how to find relevant data sets
  • volcanology/earthquakes/tsunamis/etc.
  • X sensor datastreams (seismology) (time, location, parameters)
  • X human observations (biodiversity) (time, location, nr. frogs (etc))
• window extraction to transfer and manage data
• interpret regular structures (even frogs)
• time normalization, take care of dynamics etc.
• visualize things coherently

Big Data in Natural Science (continued)
• interoperability looks simple enough: you need to know the format, then just find patterns in sequences of the numbers
• (well – not quite as simple, but...)

Big Data in Environmental Sciences
• many different types of observations
  • climate, weather, etc.
  • species and populations according to a multitude of classification systems and schools
• grand challenge
  • how can all these observations be used to stabilize our environment
  • how can it all be used to maintain diversity
  • etc.

Big Data in Environmental Sciences (continued)
• sounds similar to our field
• interoperability challenge is tough
• but there are expected gains and there is more money
• intensive work also in social science

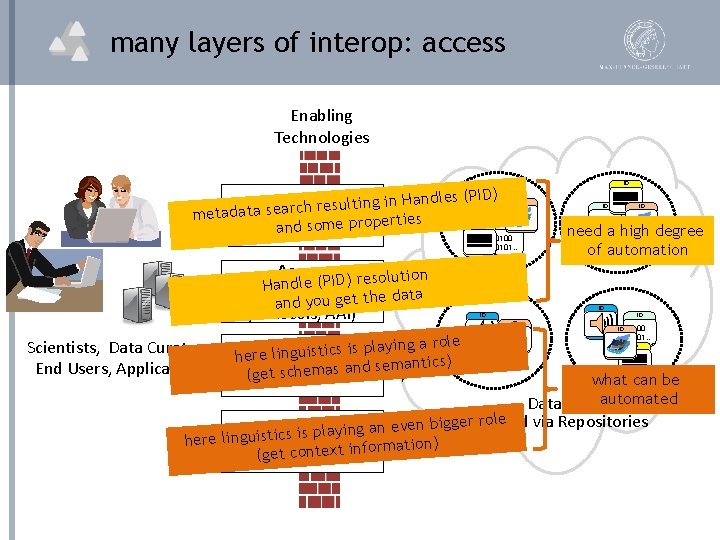

many layers of interop: access
• actors: Scientists, Data Curators, End Users, Applications
• Discovery: search metadata, resulting in Handles (PIDs) and some properties
• Access (ref. resolution, protocols, AAI): Handle (PID) resolution, and you get the data
• Interpretation (get schemas and semantics): here linguistics is playing a role
• Reuse (get context information): here linguistics is playing an even bigger role
• need a high degree of automation: what can be automated?
• Enabling Technologies; Datasets accessed via Repositories

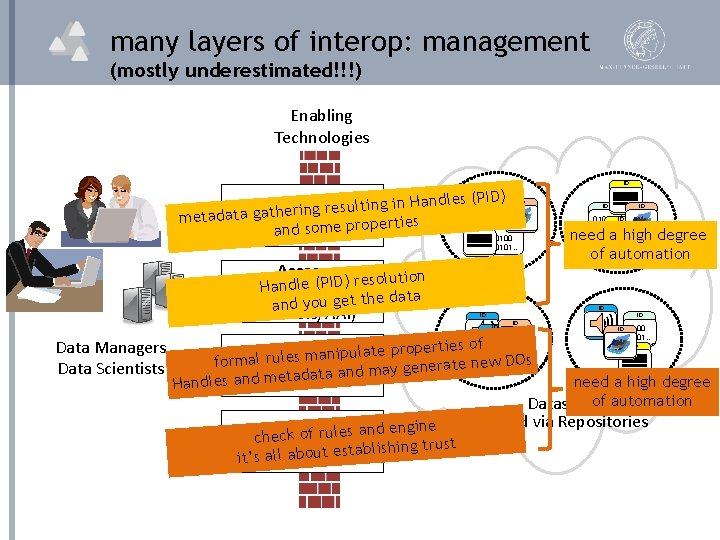

many layers of interop: management (mostly underestimated!!!)
• actors: Data Managers, Data Scientists
• Collections + Properties: metadata gathering, resulting in Handles (PIDs) and some properties
• Access (ref. resolution, protocols, AAI): Handle (PID) resolution, and you get the data
• formalized policies, formal rules, workflow engine: manipulate properties of Handles and metadata and may generate new DOs
• Assessment: check of rules and engine; it’s all about establishing trust
• need a high degree of automation
• Enabling Technologies; Datasets accessed via Repositories

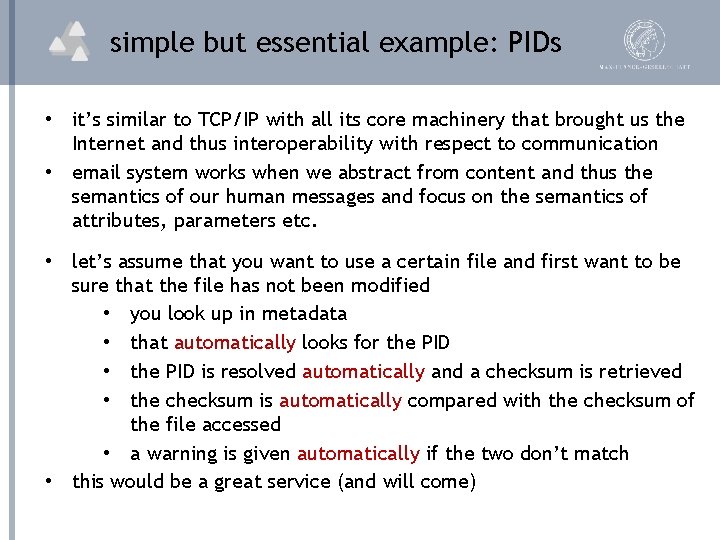

simple but essential example: PIDs
• it’s similar to TCP/IP with all its core machinery that brought us the Internet and thus interoperability with respect to communication
• the email system works when we abstract from content, and thus from the semantics of our human messages, and focus on the semantics of attributes, parameters etc.
• let’s assume that you want to use a certain file and first want to be sure that the file has not been modified
  • you look it up in the metadata
  • that automatically looks for the PID
  • the PID is resolved automatically and a checksum is retrieved
  • the checksum is automatically compared with the checksum of the file accessed
  • a warning is given automatically if the two don’t match
• this would be a great service (and will come)
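The file check described on this slide can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the PID string, the `PID_RECORDS` table and the `resolve_pid` helper are hypothetical stand-ins for a real resolution service, and SHA-256 is an arbitrary choice of checksum.

```python
import hashlib

# Hypothetical stand-in for a PID resolution service: PID -> PID record.
# A real system would resolve the PID over the network instead.
PID_RECORDS = {
    "hdl:0000/example-1": {
        "checksum": hashlib.sha256(b"original file content").hexdigest(),
    },
}


def resolve_pid(pid):
    """Return the record stored for a PID (local lookup, assumption)."""
    return PID_RECORDS[pid]


def is_unmodified(pid, content):
    """Compare the checksum in the PID record with that of the bytes accessed."""
    expected = resolve_pid(pid)["checksum"]
    actual = hashlib.sha256(content).hexdigest()
    return expected == actual


if __name__ == "__main__":
    if not is_unmodified("hdl:0000/example-1", b"tampered content"):
        print("warning: file does not match the checksum in its PID record")
```

The point of the sketch is that every step after the metadata lookup needs no human interpretation of the content, only agreed attribute semantics.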

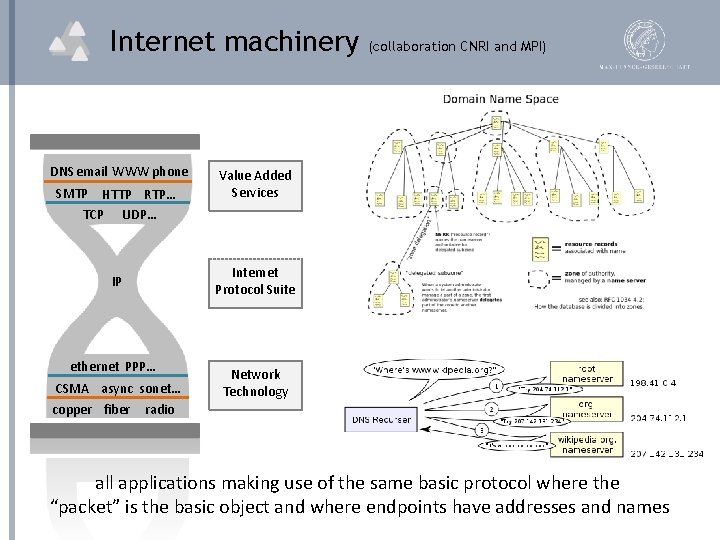

Internet machinery (collaboration CNRI and MPI)
• Value Added Services: DNS, email, WWW, phone; SMTP, HTTP, RTP…; TCP, UDP…
• Internet Protocol Suite: IP
• Network Technology: ethernet, PPP…; CSMA, async, sonet…; copper, fiber, radio
• all applications make use of the same basic protocol, where the “packet” is the basic object and where endpoints have addresses and names

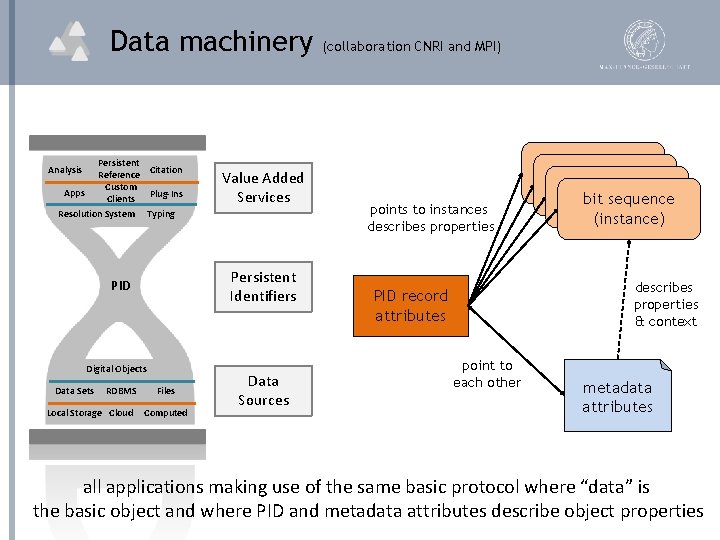

Data machinery (collaboration CNRI and MPI)
• Value Added Services: Citation, Reference, Custom Clients, Plug-Ins, Analysis Apps; Resolution System, Typing
• Persistent Identifiers (PID), Digital Objects
• Data Sources: Data Sets, RDBMS, Files, Local Storage, Cloud, Computed
• PID record attributes: point to instances, describe properties
• bit sequence (instance)
• metadata attributes: describe properties & context
• PID record and metadata point to each other
• all applications make use of the same basic protocol, where “data” is the basic object and where PID and metadata attributes describe object properties

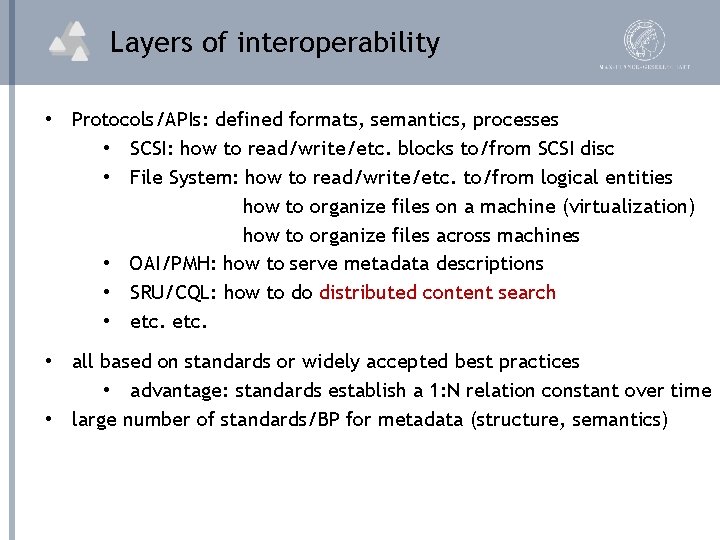

Layers of interoperability
• Protocols/APIs: defined formats, semantics, processes
  • SCSI: how to read/write/etc. blocks to/from a SCSI disc
  • File System: how to read/write/etc. to/from logical entities; how to organize files on a machine (virtualization); how to organize files across machines
  • OAI-PMH: how to serve metadata descriptions
  • SRU/CQL: how to do distributed content search
  • etc.
• all based on standards or widely accepted best practices
• advantage: standards establish a 1:N relation that is constant over time
• large number of standards/BP for metadata (structure, semantics)
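To make the 1:N advantage concrete, here is a minimal sketch of how one client could address any number of repositories with the same two request shapes. The base URLs are hypothetical; the parameter names (`verb`, `metadataPrefix` for OAI-PMH; `operation`, `version`, `query` for SRU) come from the respective protocols, and the sketch only builds request URLs without sending them.

```python
from urllib.parse import urlencode


def oai_list_records_url(base_url, metadata_prefix="oai_dc"):
    """Build an OAI-PMH ListRecords request for harvesting metadata."""
    query = urlencode({"verb": "ListRecords", "metadataPrefix": metadata_prefix})
    return f"{base_url}?{query}"


def sru_search_url(base_url, cql_query, version="1.2"):
    """Build an SRU searchRetrieve request carrying a CQL query."""
    query = urlencode(
        {"operation": "searchRetrieve", "version": version, "query": cql_query}
    )
    return f"{base_url}?{query}"


if __name__ == "__main__":
    # Hypothetical endpoints: the same code serves every compliant repository.
    for repo in ("https://repo-a.example.org", "https://repo-b.example.org"):
        print(oai_list_records_url(repo + "/oai"))
        print(sru_search_url(repo + "/sru", "hond"))
```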

back to linguistics
• where are we in the linguistics domain?
• what happened in some well-known projects?
• do we miss the big challenges which other disciplines have and that would force us to ignore schools, vanity, etc.?
• 4 examples
  • metadata
  • DOBES
  • TDS
  • CLARIN

metadata is kind of easy
• DC/OLAC – CMDI mapping examples:
  • DC: language -> CMDI: languageIn, dominantLanguage, sourceLanguage, targetLanguage
  • DC: date -> CMDI: creationDate, publicationDate, startYear, derivationDate
  • DC: format -> CMDI: mediaType, mimeType, annotationFormat, characterEncoding
• doable due to limited element sets and well described semantics (except for recursive machines such as TEI)
• if mapping is used for discovery – no problem
• if mapping is used for statistics – well...
• semantic mapping is now crucial for machine processing
• everyone accepts now: metadata is for pragmatic purposes and does not replace the one and only true categorization
• mapping errors may influence recall and precision – but who really cares
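The mapping on this slide is many-to-one, which is easy to express as a lookup table. The sketch below assumes the CMDI element names as read from the slide and a flat dictionary as record format; both are simplifications for illustration, not the actual CMDI or OLAC tooling.

```python
# One Dublin Core element collects several more specific CMDI elements
# (element names as read from the slide; treat them as illustrative).
DC_TO_CMDI = {
    "language": ["languageIn", "dominantLanguage", "sourceLanguage", "targetLanguage"],
    "date": ["creationDate", "publicationDate", "startYear", "derivationDate"],
    "format": ["mediaType", "mimeType", "annotationFormat", "characterEncoding"],
}


def cmdi_to_dc(cmdi_record):
    """Collapse a flat CMDI-like record into Dublin Core elements."""
    dc_record = {}
    for dc_element, cmdi_elements in DC_TO_CMDI.items():
        values = [cmdi_record[name] for name in cmdi_elements if name in cmdi_record]
        if values:
            dc_record[dc_element] = values
    return dc_record


if __name__ == "__main__":
    record = {"creationDate": "2001", "publicationDate": "2004", "mimeType": "audio/x-wav"}
    print(cmdi_to_dc(record))
```

After the collapse, a search on DC `date` can no longer tell a creation date from a publication date. That is harmless for discovery but matters as soon as the mapped values feed statistics.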

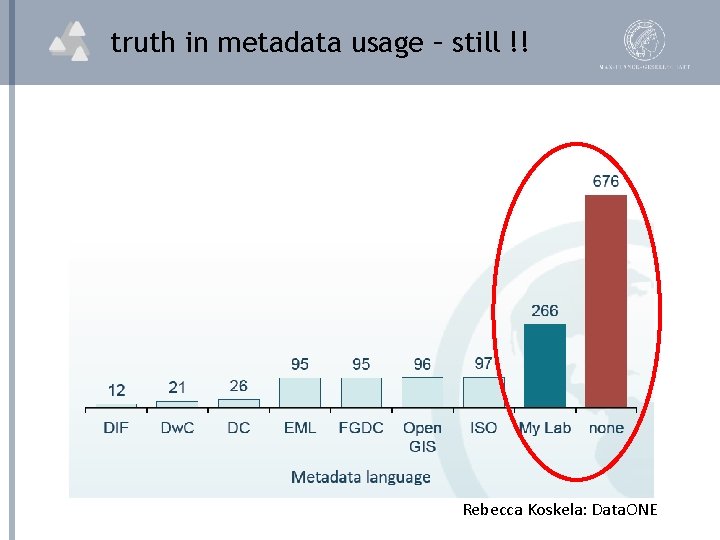

truth in metadata usage – still!! (Rebecca Koskela: DataONE)

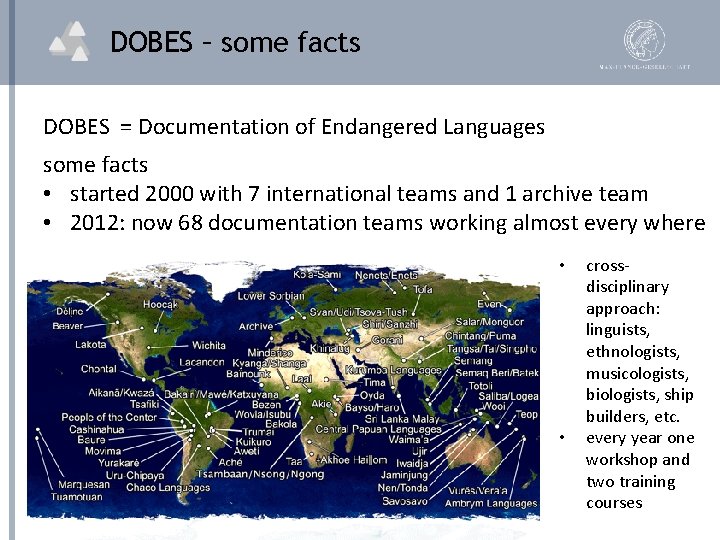

DOBES – some facts
DOBES = Documentation of Endangered Languages
• started 2000 with 7 international teams and 1 archive team
• 2012: now 68 documentation teams working almost everywhere
• cross-disciplinary approach: linguists, ethnologists, musicologists, biologists, ship builders, etc.
• every year one workshop and two training courses

DOBES Agreements
• in the first 2–3 years quite some joint agreements
  • formats to be stored in the archive – interoperability
  • principles of archiving such as PIDs
  • workflows determining the archive-team interaction
  • organizational principles to manage and manipulate data
  • metadata to be used to manage and find data (pragmatics vs. theory)
  • joint agreement on Code of Conduct
• short discussions on more linguistic aspects failed
  • agreement on joint tag set - NO
  • agreement on joint lexical structures - NO
  • etc.
• good reason: the languages are so different
• “bad” reason: agreements require effort

recent DOBES Questions
• now after >10 years we have so much good data in the archive
• what can we do with it??
• traditional: every researcher looks at his/her data and publishes, of course taking into account what has been published by others
• new: can researcher teams come to new results while working on the raw and annotated data?
• what does this require in case of “automatic” or “blind” procedures? (remember that the researchers do not understand the language)
  • you need to know the tier labels to understand the type of annotation
  • you need to know the tags used to understand the results of the linguistic analysis work (morphological, syntactical, etc.)

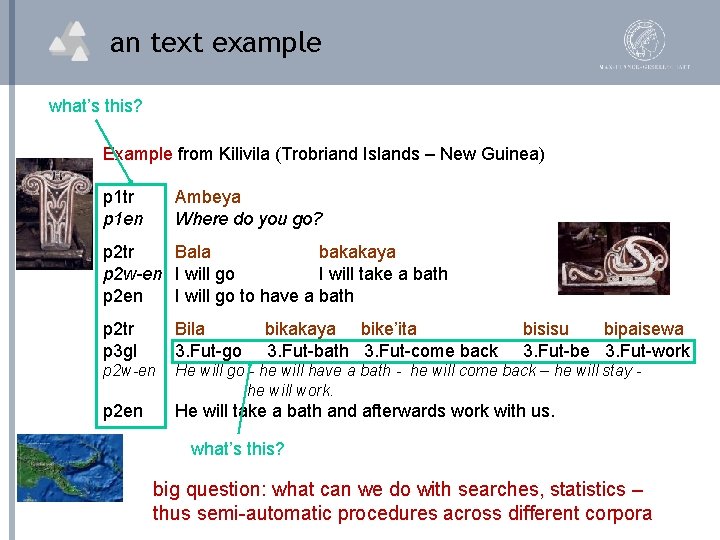

a text example
Example from Kilivila (Trobriand Islands – New Guinea)
• p1 tr: Ambeya
• p1 en: Where do you go?
• p2 tr: Bala bakakaya
• p2 w-en: I will go / I will take a bath
• p2 en: I will go to have a bath
• p2 tr: Bila bikakaya bike’ita bisisu bipaisewa
• p3 gl: 3.Fut-go 3.Fut-bath 3.Fut-come back 3.Fut-be 3.Fut-work
• p2 w-en: He will go - he will have a bath - he will come back – he will stay - he will work.
• p2 en: He will take a bath and afterwards work with us.
• what’s this? (asked of the tier labels)
• big question: what can we do with searches, statistics – thus semi-automatic procedures across different corpora

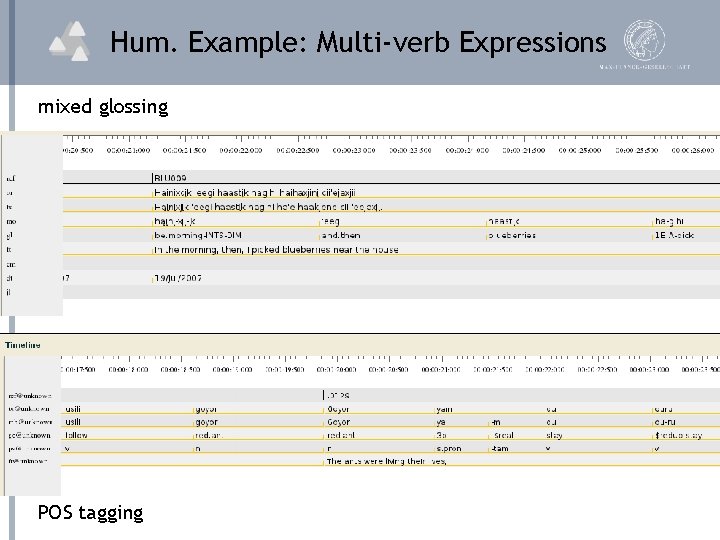

Hum. Example: Multi-verb Expressions mixed glossing POS tagging

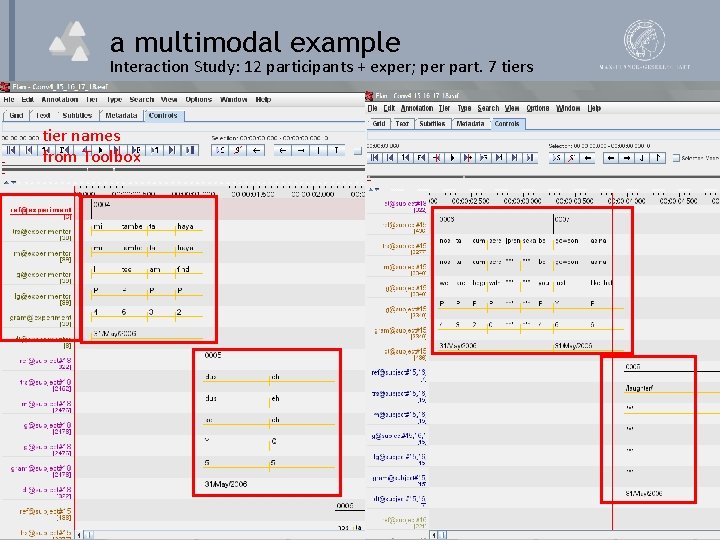

a multimodal example
Interaction Study: 12 participants + experimenter; 7 tiers per participant; tier names from Toolbox

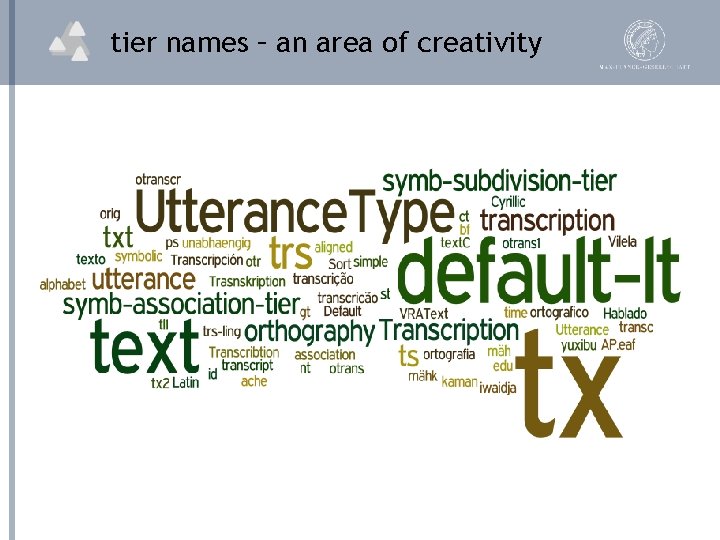

tier names – an area of creativity

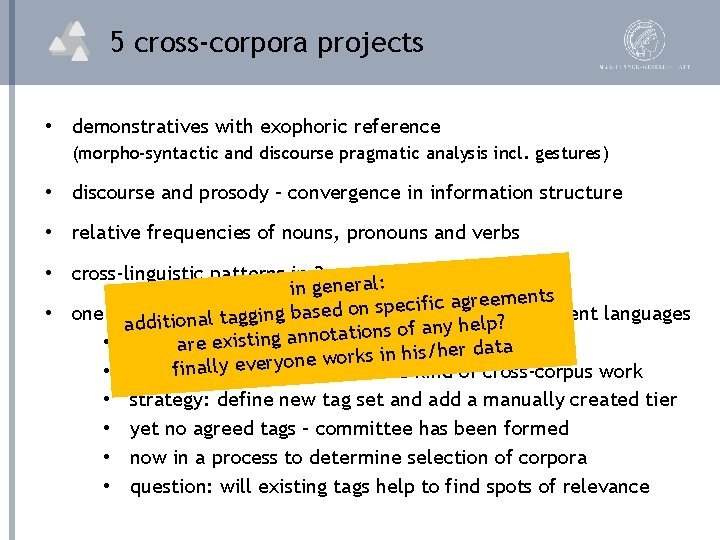

5 cross-corpora projects
• demonstratives with exophoric reference (morpho-syntactic and discourse pragmatic analysis incl. gestures)
• discourse and prosody – convergence in information structure
• relative frequencies of nouns, pronouns and verbs
• cross-linguistic patterns in 3-participant events
• in general: based on specific agreements with additional tagging
  • are existing annotations of any help?
  • finally everyone works in his/her data
• one rather large program with 13 teams covering different languages
  • primary topic is “referentiality”
  • bigger question: how to do this kind of cross-corpus work
  • strategy: define new tag set and add a manually created tier
  • yet no agreed tags – committee has been formed
  • now in a process to determine selection of corpora
  • question: will existing tags help to find spots of relevance

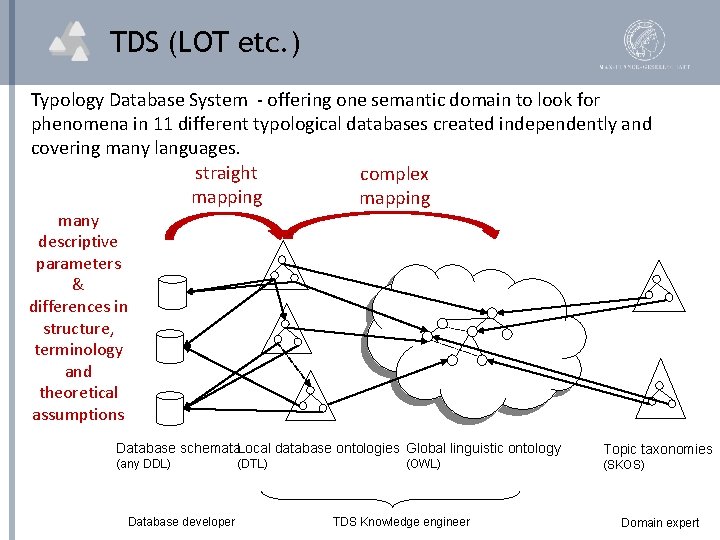

TDS (LOT etc.)
Typology Database System - offering one semantic domain to look for phenomena in 11 different typological databases created independently and covering many languages.
• straight / complex mapping
• many descriptive parameters & differences in structure, terminology and theoretical assumptions
• layers: Database schemata (any DDL), Local database ontologies (DTL), Global linguistic ontology (OWL), Topic taxonomies (SKOS)
• roles: Database developer, TDS Knowledge engineer, Domain expert

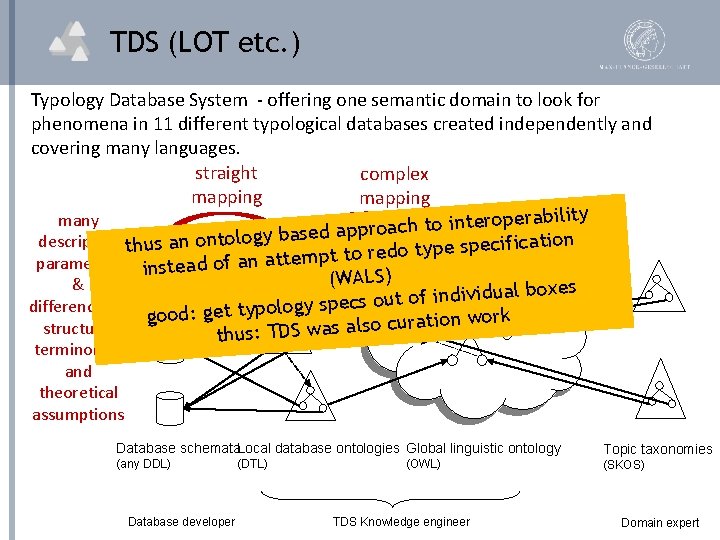

TDS (LOT etc.) (continued)
• thus an ontology based approach to interoperability instead of an attempt to redo type specification (WALS)
• good: get typology specs out of individual boxes
• thus: TDS was also curation work

subject-verb agreement
• Q1: which languages have subject-verb agreement?
  • db A: exactly this question with Boolean answer
    • no distinction, thus simple
  • db B: bundle of information
    • sole argument of an intransitive verb
    • agent/patient/recipient-like arguments of transitive verb
    • in general “yes” for s and a cases (but not always clear)
• Q2: which languages are of type a for transitive verbs?
  • db A: ambiguous – so give all languages or none
  • db B: simple answer
• a pre-query stage allows the user to decide about options
• what when several parameters are used to describe a phenomenon?
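The two queries and the pre-query choice can be mimicked with toy data. Everything in this sketch is an assumption made for illustration: the language names are placeholders, db A is reduced to a Boolean per language, db B to Boolean flags for the S and A arguments, and "give all languages" is read as "all languages db A marks with yes".

```python
# Toy stand-ins for two typological databases (placeholder language names).
DB_A = {"lang1": True, "lang2": False, "lang3": True}  # Boolean answer only
DB_B = {
    "lang4": {"S": True, "A": True},   # agreement with S and A arguments
    "lang5": {"S": True, "A": False},  # agreement with S only
}


def q1_subject_verb_agreement():
    """Q1: db A answers directly; db B says yes when both S and A agree."""
    from_a = {lang for lang, yes in DB_A.items() if yes}
    from_b = {lang for lang, roles in DB_B.items() if roles["S"] and roles["A"]}
    return from_a | from_b


def q2_agent_agreement(ambiguous="all"):
    """Q2: db B answers directly; db A is ambiguous, so the user decides
    in a pre-query stage whether its 'yes' languages are included."""
    if ambiguous not in ("all", "none"):
        raise ValueError("ambiguous must be 'all' or 'none'")
    from_b = {lang for lang, roles in DB_B.items() if roles["A"]}
    from_a = {lang for lang, yes in DB_A.items() if yes} if ambiguous == "all" else set()
    return from_a | from_b


if __name__ == "__main__":
    print(sorted(q1_subject_verb_agreement()))
    print(sorted(q2_agent_agreement("all")), sorted(q2_agent_agreement("none")))
```

Even in this toy form, the result set of Q2 depends on a user decision rather than on the data alone, which is the difficulty the slide points at.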

Did TDS work?
• let’s assume that
  • the local ontologies represent the conceptualization correctly
  • the global ontology forms a useful unifying conceptualization (is there such an accepted unifying ontology?)
  • the 2-stage query interface offers proper help
• THEN TDS sounds like an excellent, scalable approach
• why has TDS not yet been taken up?
  • TRs rely on papers and are not interested in databases?
  • TRs don’t understand the formal semantics blurb?
  • TRs would need to invest time – do they take it? (occasional usage, small community of experts)
• what is WALS then – just a glossary for non-experts?

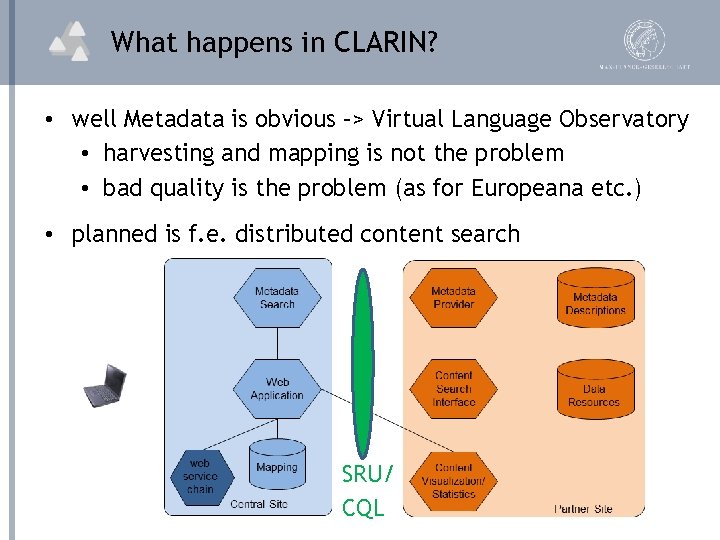

What happens in CLARIN?
• well, metadata is obvious -> Virtual Language Observatory
  • harvesting and mapping is not the problem
  • bad quality is the problem (as for Europeana etc.)
• planned: e.g. distributed content search via SRU/CQL

what is comparatively easy?
• what if we only look at Dutch or German texts?
  • searching just for textual patterns (collocations)
  • could make use of Wordnets, SUMO, etc. to extend the query
  • this is what is currently being worked on – not so inspiring
  • but can/should we compete with Google?
• what if we search across languages?
  • well – need some translation mechanism
  • could be trivial translation patterns
  • seems that researchers are not really interested in this at the moment
  • does it make sense – will people use it?
  • AND: it is mainstream – so Google will do it

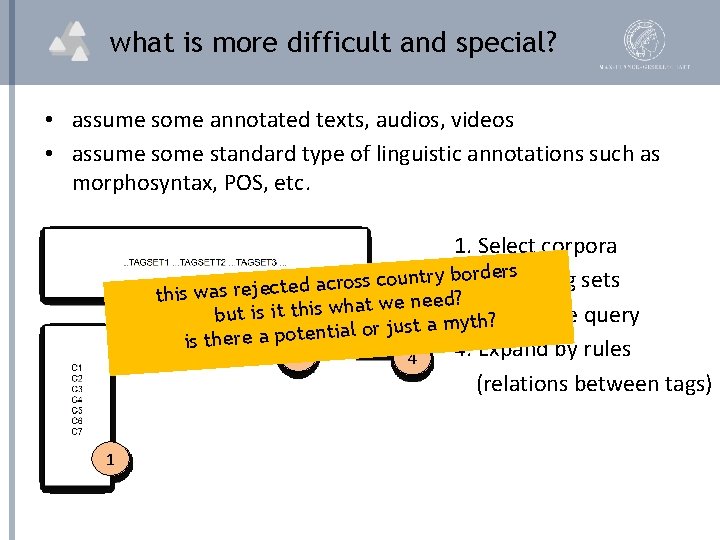

what is more difficult and special?
• assume some annotated texts, audios, videos
• assume some standard type of linguistic annotations such as morphosyntax, POS, etc.
1. Select corpora
2. Select tag sets
3. Formulate query
4. Expand by rules (relations between tags)
• this was rejected across country borders
• but is this what we need?
• is there a potential or just a myth?

semantic bridges: how?
• assume that we have two corpora: one encoded by STTS and the other one by CGN, and assume that they have some linguistic annotation (morphosyntax, POS, etc.) to be used in a distributed search or statistics (take care: searching != statistics)
• what to do now to exploit both collections?
1. do separate searches – well...
2. create rich umbrella ontology and complex refs (comparable to TDS)
  • well - could become a never ending story...
  • people disagree on relations etc.
  • relations partly depend on pragmatic considerations
  • expensive, static, require experts, not understandable, etc.

Are flat category registries ok?
3. flat registries of linguistic categories such as ISOcat (12620) sound like a solution for some tasks
  • easy mapping between two (or more) categories
  • users can easily create their own mappings or re-use some
  • maintenance is easier and thus allows dynamics
  • etc.
• so it seems that we could overcome the TDS barrier
• but we are reducing accuracy and losing much information
  • too simple for statistics??
  • sufficient for searches??
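A flat registry amounts to each tag set pointing at a shared category, with no relations between categories. The sketch below uses a handful of STTS and CGN part-of-speech tags and plain category names as placeholders; the selection and the mapping are illustrative assumptions, not entries taken from ISOcat.

```python
# Each tag points at one shared category; there is no hierarchy or relation
# between categories (illustrative tags and category names, not ISOcat data).
REGISTRY = {
    "STTS": {"NN": "noun", "NE": "noun", "ADJA": "adjective", "VVFIN": "verb"},
    "CGN": {"N": "noun", "ADJ": "adjective", "WW": "verb"},
}


def translate(tag, source, target):
    """Return all tags of the target set that share the source tag's category."""
    category = REGISTRY[source][tag]
    return {t for t, c in REGISTRY[target].items() if c == category}


if __name__ == "__main__":
    print(translate("ADJA", "STTS", "CGN"))
    # The common/proper noun distinction of STTS is lost in this direction.
    print(translate("N", "CGN", "STTS"))
```

Going from the coarse tag back to the finer set returns several candidates. That may be sufficient for searching, but it distorts counts, which is the worry about statistics raised above.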

What about Jan’s examples?
• e0: annotations are structured: “nps/np”
• e1: “JJR” -> “POS=adjective & degree=comparative”
• e2: “Transitive” -> “thetavp=vp120 & synvps=[synNP] & caseAssigner=True”
• e3: “VVIMP” -> “POS=verb & main verb & mood=imperative”
• where to put annotation complexity if “ontology” is simple?
  • complexity needs to be put into schemas
  • who can do it – is it feasible?
• mapping must be between combinations of cats or graphs
  • who can do it – is it feasible?
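Examples e1 and e3 suggest treating a tag as a bundle of feature-value pairs, so that a query matches combinations of categories instead of single tags. The sketch below is one possible reading: the feature name `verbType` for "main verb" is an assumption, and only the two tags whose decomposition is given on the slide are included.

```python
# A tag expands into a combination of categories (feature-value pairs).
# "verbType" is a hypothetical feature name for the slide's "main verb".
TAG_FEATURES = {
    "JJR": {"POS": "adjective", "degree": "comparative"},
    "VVIMP": {"POS": "verb", "verbType": "main", "mood": "imperative"},
}


def matches(tag, query):
    """True if every feature-value pair of the query holds for the tag."""
    features = TAG_FEATURES[tag]
    return all(features.get(name) == value for name, value in query.items())


def find_tags(query):
    """Return, in sorted order, all known tags that satisfy the query."""
    return sorted(tag for tag in TAG_FEATURES if matches(tag, query))


if __name__ == "__main__":
    print(find_tags({"POS": "verb", "mood": "imperative"}))
    print(find_tags({"POS": "adjective"}))
```

The registry itself stays flat here. The complexity has moved into the table that decomposes each tag, and someone still has to write and maintain that table.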

are there conclusions?
1. do we want/need cross-corpora operations?
  • for many other communities this is a MUST
  • don’t we have “society relevant” challenges?
  • do they just get more money?
  • given all regularity finding machines – is linguistic annotation relevant at all?
2. is it more difficult for us to do?
  • well - that’s what all claim – don’t believe that anymore

are there conclusions?
3. are we interested in trying it out?
  • well – so far there are not many people committed
    • is it not of relevance?
    • is it lack of money?
  • some are strictly opposed
    • is it a sense of reality?
    • is it lack of vision?
    • is it vanity?
4. if interested, how do we want to tackle things?
  • pragmatic – stepwise – simple first
  • will people use it then?
  • do we have evangelists?

Thank you for listening.

useless Cloud debate
• some just call for the Cloud – what does it solve?
• it just collects all content into one big pot
• all the issues about interoperability remain the same
• searching will be more efficient – no transport etc.

What about metadata?
• TEI example 1
  • resp: annotation supervisor and developer
  • date: from="1997" to="2004"
  • name: Claudia Kunze
  • which date is it? need to interpret context
  • which role is it? need to interpret context
• TEI example 2
  • name: Dan Tufiş, resp: Overal editorship
  • name: Ştefan Bruda, resp: Error correction and CES 1 conformance
  • which role is it? need to interpret context
• very simple examples show
  • meant to be read by humans
  • (too) many degrees of freedom
  • no controlled vocabulary (CV) for the responsibility role

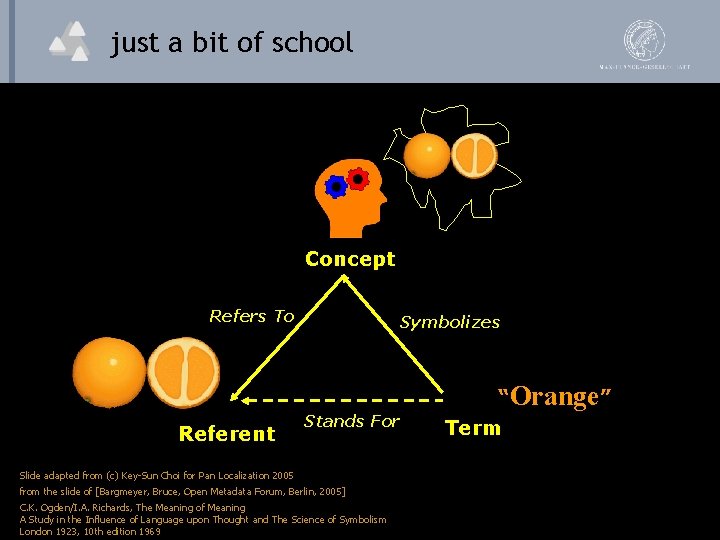

just a bit of school
• triangle of Concept, Term (“Orange”) and Referent: the Term symbolizes the Concept, the Concept refers to the Referent, the Term stands for the Referent
• C. K. Ogden/I. A. Richards, The Meaning of Meaning: A Study in the Influence of Language upon Thought and The Science of Symbolism, London 1923, 10th edition 1969
• Slide adapted from (c) Key-Sun Choi for Pan Localization 2005, from the slide of [Bargmeyer, Bruce, Open Metadata Forum, Berlin, 2005]
