International Symposium on Microarchitecture (MICRO) 2006: Utility-Based Partitioning

International Symposium on Microarchitecture (MICRO) 2006

Utility-Based Partitioning: A Low-Overhead, High-Performance, Runtime Mechanism to Partition Shared Caches

Written by Moinuddin K. Qureshi and Yale N. Patt
Presented by Rubao Lee, 10/16/2008

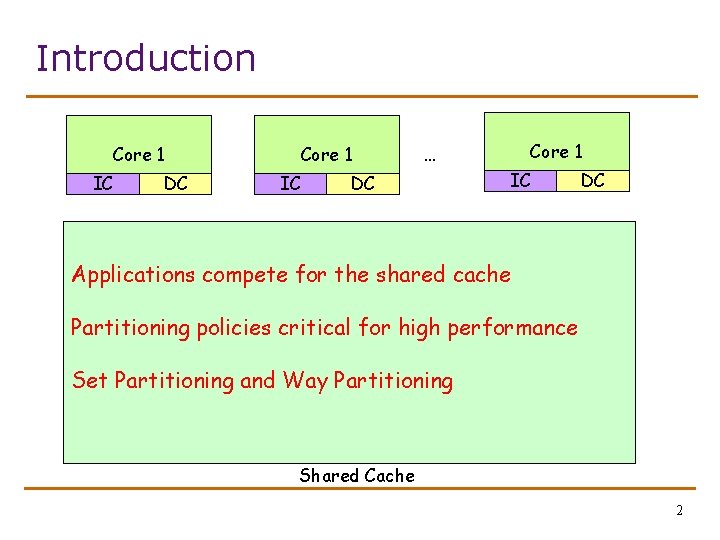

Introduction
[Figure: several cores, each with a private instruction cache (IC) and data cache (DC), sharing one cache]
• Applications compete for the shared cache.
• Partitioning policies are critical for high performance.
• Two approaches: set partitioning and way partitioning.

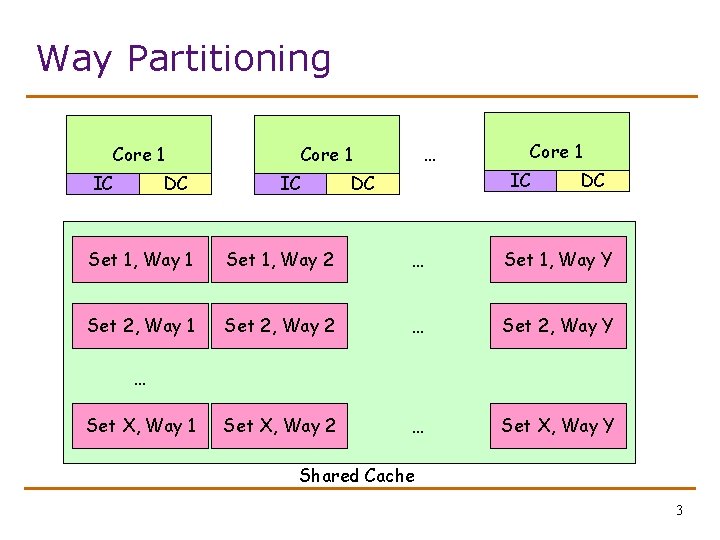

Way Partitioning
[Figure: cores with private IC/DC sharing a cache of X sets × Y ways (Set 1, Way 1 … Set X, Way Y)]

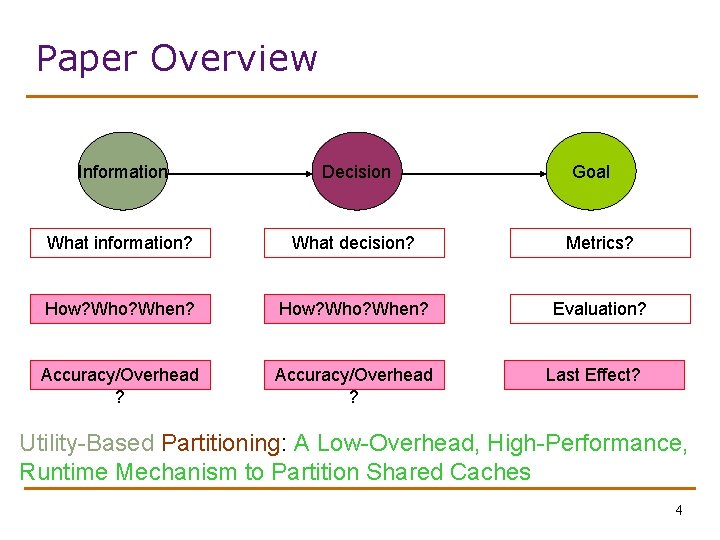

Paper Overview
[Figure: Information, Decision, Goal]
• What information? What decision? Metrics?
• How? Who? When?
• Evaluation? Accuracy/Overhead? Last effect?

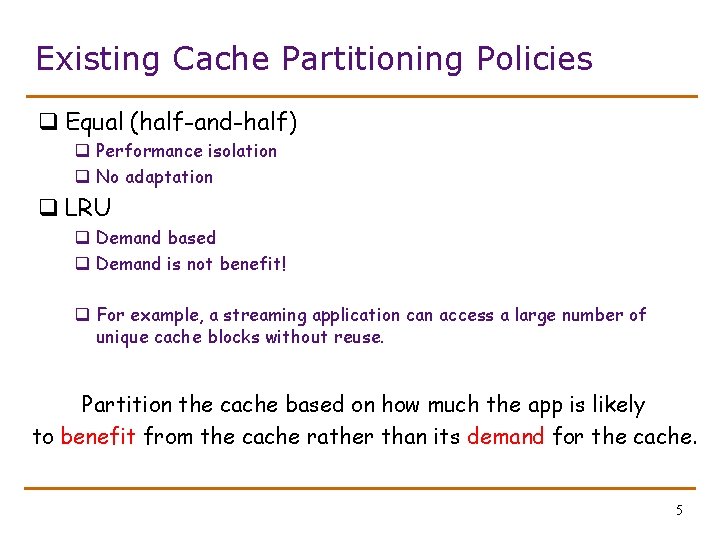

Existing Cache Partitioning Policies
• Equal (half-and-half)
  • Performance isolation
  • No adaptation
• LRU
  • Demand-based
  • Demand is not benefit! For example, a streaming application can access a large number of unique cache blocks without reuse.
Partition the cache based on how much each application is likely to benefit from the cache, rather than on its demand for the cache.

Utility Reflects Benefit
• Applications have different memory access patterns:
  (1) some benefit significantly from more cache;
  (2) some do not;
  (3) some need only a fixed amount of cache.
• An important concept: an application's utility of cache resources.

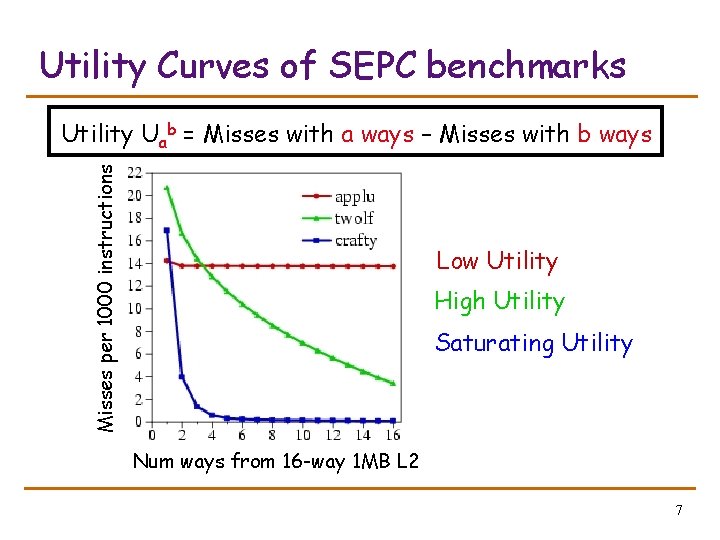

Utility Curves of SPEC Benchmarks
Utility: $U_a^b = \text{misses with } a \text{ ways} - \text{misses with } b \text{ ways}$
[Figure: misses per 1000 instructions (MPKI) vs. number of ways of a 16-way 1 MB L2, with examples of low-utility, high-utility, and saturating-utility benchmarks]
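As a small illustration of the utility definition, the sketch below computes $U_a^b$ from a miss curve stored as a list indexed by way count. The function name `utility` and both miss curves are hypothetical illustrations, not SPEC measurements.

```python
def utility(misses, a, b):
    """U_a^b: misses saved by growing an application's allocation from a to b ways.

    misses[k] is the (hypothetical) miss count, e.g. MPKI, with k ways;
    index 0 means no ways at all.
    """
    if not 0 <= a <= b < len(misses):
        raise ValueError("need 0 <= a <= b < len(misses)")
    return misses[a] - misses[b]


# Hypothetical miss curves (MPKI for 0..4 ways), not measured data.
streaming = [20.0, 20.0, 20.0, 20.0, 20.0]   # low utility: extra ways never help
saturating = [30.0, 10.0, 2.0, 2.0, 2.0]     # saturating: gains stop after 2 ways

print(utility(streaming, 1, 4))   # 0.0
print(utility(saturating, 1, 2))  # 8.0
print(utility(saturating, 2, 4))  # 0.0
```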

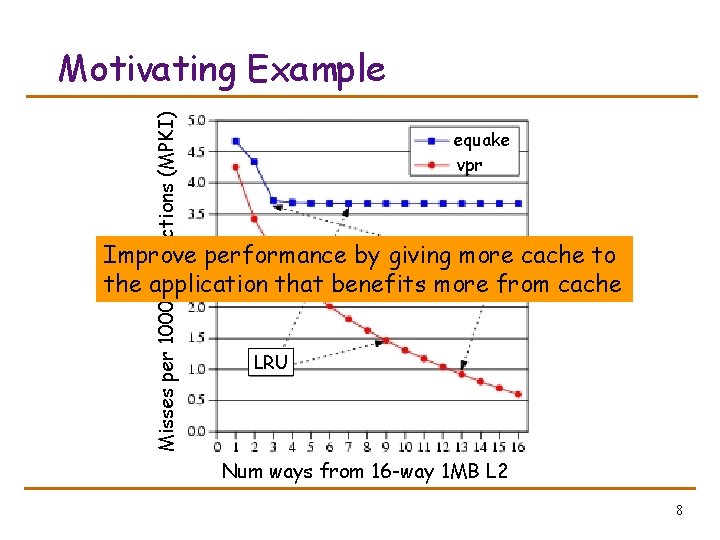

Motivating Example: equake and vpr
[Figure: misses per 1000 instructions (MPKI) vs. number of ways of a 16-way 1 MB L2 for equake and vpr, with the LRU and UTIL partition points marked]
Improve performance by giving more cache to the application that benefits more from cache.

Outline
• Introduction and Motivation
• Utility-Based Cache Partitioning
• Evaluation
• Scalable Partitioning Algorithm
• Related Work and Summary

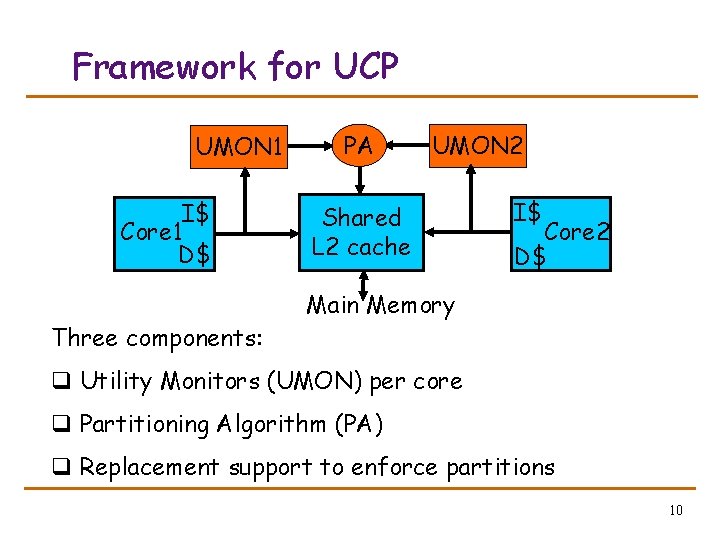

Framework for UCP
[Figure: Core 1 and Core 2 (each with I$ and D$) share an L2 cache backed by main memory; UMON 1 and UMON 2 feed the partitioning algorithm (PA)]
Three components:
• Utility Monitors (UMON), one per core
• Partitioning Algorithm (PA)
• Replacement support to enforce partitions

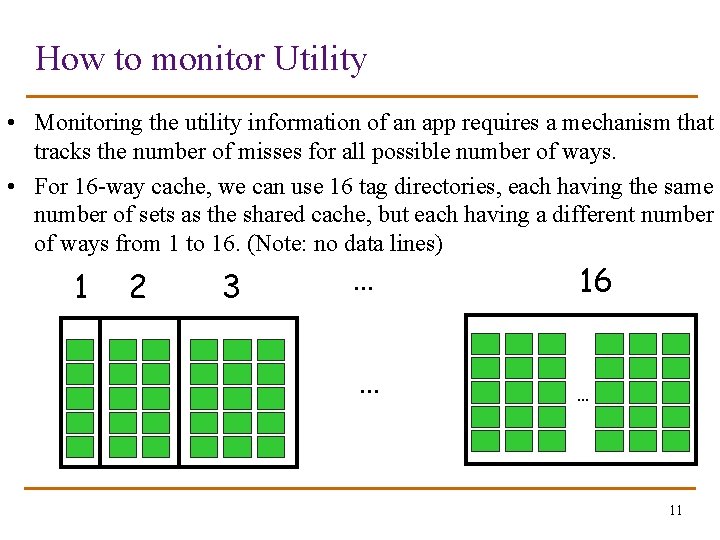

How to Monitor Utility
• Monitoring an application's utility requires a mechanism that tracks its number of misses for every possible number of ways.
• For a 16-way cache, we could use 16 tag directories, each with the same number of sets as the shared cache but a different number of ways, from 1 to 16 (tags only; no data lines).
[Figure: tag directories with 1, 2, 3, …, 16 ways]

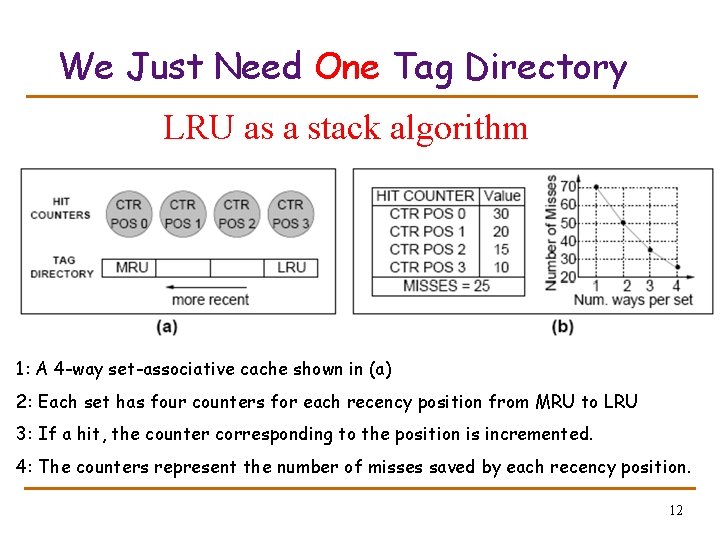

We Just Need One Tag Directory: LRU as a Stack Algorithm
1. Consider the 4-way set-associative cache shown in (a).
2. Each set has four counters, one for each recency position from MRU to LRU.
3. On a hit, the counter corresponding to the hit's recency position is incremented.
4. The counters therefore record the number of misses saved by each recency position.
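The stack property can be checked with a short simulation. This sketch assumes a single LRU set and string tags (`recency_hit_counters` and `lru_hits` are illustrative names): it counts hits per recency position in a 4-way set and confirms that the first k counters sum to the hits of a k-way LRU set replaying the same trace.

```python
import random
from collections import OrderedDict


def recency_hit_counters(accesses, ways):
    """Simulate one LRU-managed set (tags only) and count hits per recency position.

    hits[0] counts hits at the MRU position, hits[ways - 1] at the LRU position.
    """
    stack = []            # stack[0] is MRU, stack[-1] is LRU
    hits = [0] * ways
    for tag in accesses:
        if tag in stack:
            pos = stack.index(tag)
            hits[pos] += 1          # hit at recency position `pos`
            stack.pop(pos)
        elif len(stack) == ways:
            stack.pop()             # miss in a full set: evict the LRU tag
        stack.insert(0, tag)        # accessed tag becomes MRU
    return hits


def lru_hits(accesses, ways):
    """Reference model: hit count of a single LRU set with `ways` entries."""
    cache = OrderedDict()   # first item is LRU, last item is MRU
    hits = 0
    for tag in accesses:
        if tag in cache:
            hits += 1
            cache.move_to_end(tag)
        else:
            if len(cache) == ways:
                cache.popitem(last=False)
            cache[tag] = True
    return hits


random.seed(0)
trace = [random.choice("ABCDEFG") for _ in range(1000)]
H = recency_hit_counters(trace, ways=4)
for k in range(1, 5):
    # Stack property: a k-way LRU set hits exactly on recency positions 0..k-1.
    assert sum(H[:k]) == lru_hits(trace, k)
```

One tag directory with per-position counters thus stands in for all of the 1-way to 16-way directories.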

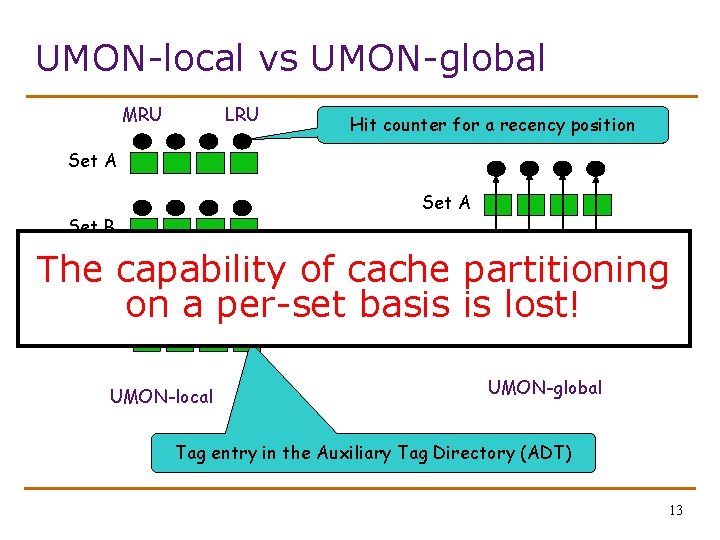

UMON-local vs. UMON-global
[Figure: tag entries of the Auxiliary Tag Directory (ATD) for Sets A–D, ordered from MRU to LRU, with a hit counter for each recency position]
• UMON-local: each set has its own hit counters.
• UMON-global: one set of hit counters is shared by all sets; the capability to partition the cache on a per-set basis is lost!

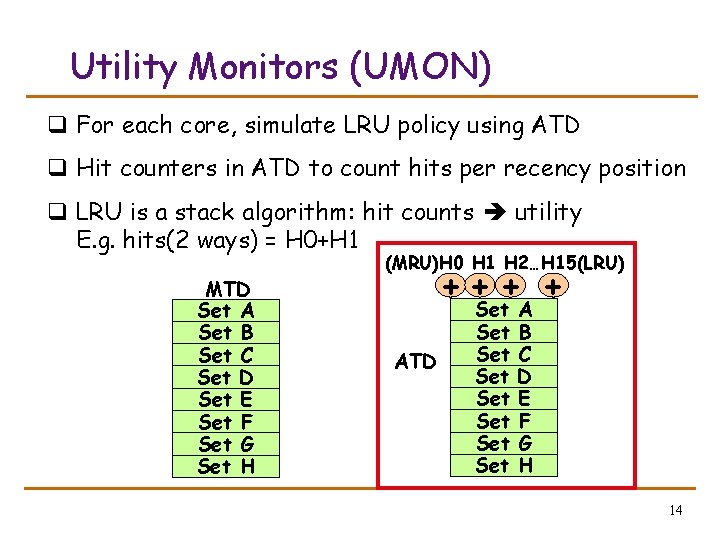

Utility Monitors (UMON)
• For each core, simulate the LRU policy using an Auxiliary Tag Directory (ATD).
• Hit counters in the ATD count hits per recency position.
• LRU is a stack algorithm, so the hit counts give the utility, e.g., $\text{hits}(2 \text{ ways}) = H_0 + H_1$.
[Figure: the main tag directory (MTD) and the ATD both hold Sets A–H; the ATD has hit counters $H_0$ (MRU), $H_1$, $H_2$, …, $H_{15}$ (LRU)]
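A minimal sketch of a UMON-global follows, assuming one LRU tag stack per ATD set and a single shared vector of counters $H_0 \dots H_{W-1}$. The class `UMONGlobal` and its methods are hypothetical names, and real hardware would keep recency bits per tag rather than Python lists.

```python
class UMONGlobal:
    """Hypothetical UMON-global: per-set LRU tag stacks (the ATD) that all
    update one shared vector of hit counters H_0..H_{ways-1}."""

    def __init__(self, num_sets, ways):
        self.ways = ways
        self.atd = [[] for _ in range(num_sets)]  # per set: tags, MRU first
        self.H = [0] * ways                       # shared per-position counters

    def access(self, set_index, tag):
        stack = self.atd[set_index]
        if tag in stack:
            pos = stack.index(tag)
            self.H[pos] += 1      # credit the hit to its recency position
            stack.pop(pos)
        elif len(stack) == self.ways:
            stack.pop()           # evict the LRU tag of this set
        stack.insert(0, tag)      # accessed tag becomes MRU

    def hits(self, k):
        """Hits this core would get with k ways: H_0 + ... + H_{k-1}."""
        return sum(self.H[:k])


umon = UMONGlobal(num_sets=2, ways=4)
for s, t in [(0, "A"), (1, "X"), (0, "B"), (0, "A"), (1, "X")]:
    umon.access(s, t)
print(umon.H)        # [1, 1, 0, 0]
print(umon.hits(2))  # 2
```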

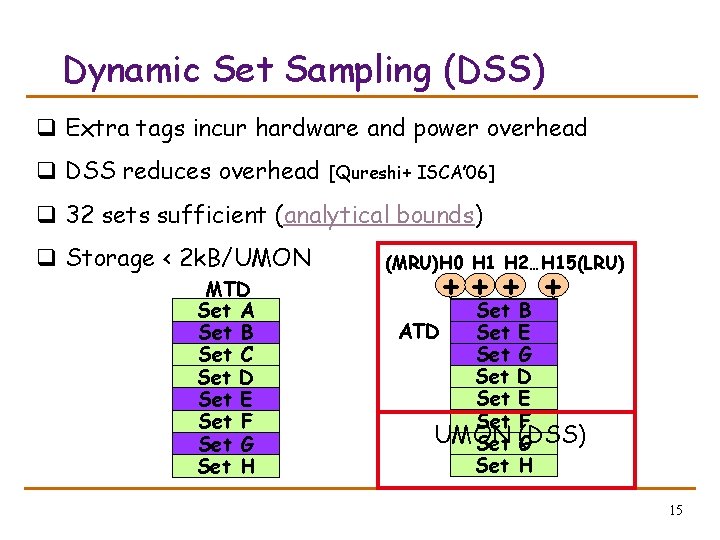

Dynamic Set Sampling (DSS)
• Extra tags incur hardware and power overhead.
• DSS reduces the overhead [Qureshi+ ISCA'06].
• 32 sampled sets are sufficient (analytical bounds).
• Storage < 2 kB per UMON.
[Figure: instead of shadowing all MTD sets A–H, the UMON (DSS) keeps ATD entries only for sampled sets, e.g., B, E, and G, with hit counters $H_0$ (MRU) … $H_{15}$ (LRU)]
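The sketch below shows one way to decide which L2 sets feed the ATD under DSS. It assumes a simple uniform stride (every 32nd set of a 1024-set cache when 32 sets are sampled); that selection rule and the helper names `sampled_sets` and `atd_index` are illustrative assumptions, not necessarily the scheme of [Qureshi+ ISCA'06].

```python
def sampled_sets(num_sets, num_samples):
    """Indices of the L2 sets monitored by a DSS-based UMON.

    Assumption: a uniform stride, i.e. every (num_sets // num_samples)-th set.
    """
    if num_samples <= 0 or num_sets % num_samples:
        raise ValueError("num_samples must evenly divide num_sets")
    stride = num_sets // num_samples
    return list(range(0, num_sets, stride))


def atd_index(set_index, num_sets, num_samples):
    """Row of the small ATD that shadows `set_index`, or None if it is not sampled."""
    stride = num_sets // num_samples
    return set_index // stride if set_index % stride == 0 else None


# 1 MB / (16 ways * 64 B lines) = 1024 sets; sample 32 of them.
print(sampled_sets(1024, 32)[:4])  # [0, 32, 64, 96]
print(atd_index(64, 1024, 32))     # 2
print(atd_index(65, 1024, 32))     # None
```

Only accesses whose `atd_index` is not None would update the ATD and hit counters, which is what shrinks the storage.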

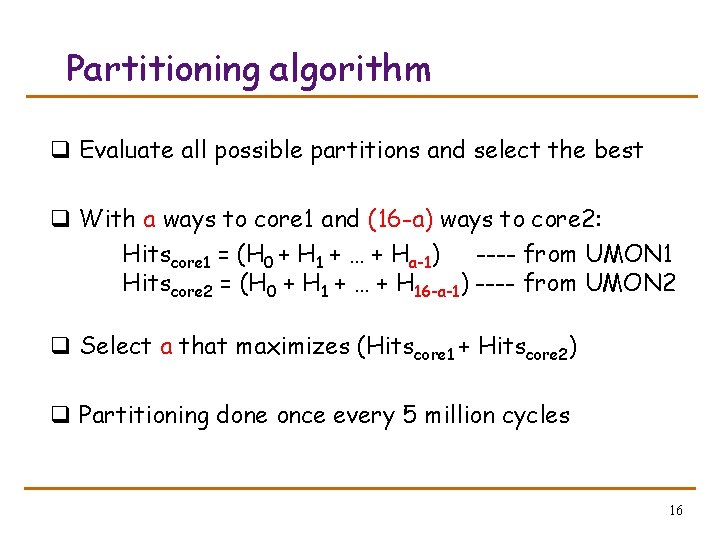

Partitioning Algorithm
• Evaluate all possible partitions and select the best.
• With $a$ ways given to core 1 and $16 - a$ ways to core 2:
  $\text{Hits}_{\text{core 1}} = H_0 + H_1 + \dots + H_{a-1}$ (from UMON 1)
  $\text{Hits}_{\text{core 2}} = H_0 + H_1 + \dots + H_{16-a-1}$ (from UMON 2)
• Select the $a$ that maximizes $\text{Hits}_{\text{core 1}} + \text{Hits}_{\text{core 2}}$.
• Partitioning is done once every 5 million cycles.
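For two cores the exhaustive search is a single loop over $a$. This sketch assumes each core keeps at least one way (so $a$ runs from 1 to 15 for 16 ways); `best_two_core_split` is a hypothetical name, and the counter values in the usage line are made up (a 4-way cache keeps them short).

```python
def best_two_core_split(H1, H2, total_ways=16):
    """Return (a, hits) maximizing Hits_core1 + Hits_core2 when core 1 gets
    a ways and core 2 gets total_ways - a ways.

    H1, H2: per-recency-position hit counters from UMON 1 and UMON 2.
    Assumption: each core receives at least one way.
    """
    best_a, best_hits = None, -1
    for a in range(1, total_ways):
        hits = sum(H1[:a]) + sum(H2[:total_ways - a])
        if hits > best_hits:
            best_a, best_hits = a, hits
    return best_a, best_hits


# Hypothetical counters for a 4-way cache.
print(best_two_core_split([10, 8, 6, 4], [20, 1, 1, 1], total_ways=4))  # (3, 44)
```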

![Way partitioning](http://slidetodoc.com/presentation_image_h2/dcf5e32e074249e9b9eec5349c191530/image-17.jpg)

Way Partitioning
Way partitioning support [Suh+ HPCA'02, Iyer ICS'04]:
1. Each line has core-id bits.
2. On a miss, count ways_occupied: the number of ways in the set occupied by the miss-causing app.
3. If ways_occupied < ways_given, the victim is the LRU line from another app; otherwise, the victim is the LRU line from the miss-causing app.
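The replacement rule can be sketched as a victim-selection function. Assumptions: each set is a full list of (core_id, tag) pairs ordered from MRU to LRU, every core is given at least one way, and no core is given more ways than the set has; `choose_victim` is an illustrative name.

```python
def choose_victim(set_lines, miss_core, ways_given):
    """Return the index (in set_lines) of the line to evict under way partitioning.

    set_lines:  full set as (core_id, tag) pairs, index 0 = MRU, last = LRU.
    miss_core:  core id of the application that missed.
    ways_given: dict core_id -> ways allocated by the partitioning algorithm.
    """
    ways_occupied = sum(1 for core, _ in set_lines if core == miss_core)
    if ways_occupied < ways_given[miss_core]:
        # Under quota: take a line belonging to some other application.
        candidates = [i for i, (core, _) in enumerate(set_lines) if core != miss_core]
    else:
        # At or over quota: replace one of the miss-causing application's own lines.
        candidates = [i for i, (core, _) in enumerate(set_lines) if core == miss_core]
    return max(candidates)  # the largest index is the least recently used


lines = [(2, "a"), (1, "b"), (2, "c"), (1, "d")]   # 4-way set, MRU -> LRU
print(choose_victim(lines, 1, {1: 2, 2: 2}))  # 3 (core 1 at quota: its own LRU line)
print(choose_victim(lines, 1, {1: 3, 2: 1}))  # 2 (core 1 under quota: core 2's LRU line)
```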


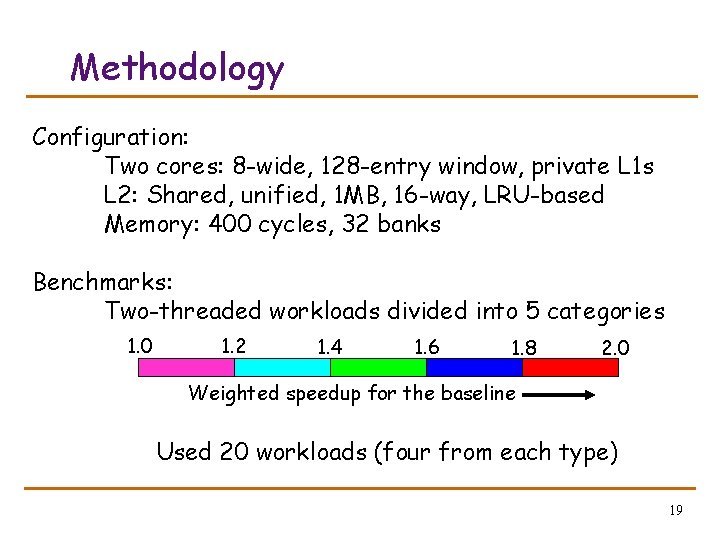

Methodology
Configuration:
• Two cores: 8-wide, 128-entry window, private L1s
• L2: shared, unified, 1 MB, 16-way, LRU-based
• Memory: 400 cycles, 32 banks
Benchmarks:
• Two-threaded workloads divided into 5 categories [Figure: categories plotted by weighted speedup for the baseline, axis from 1.0 to 2.0]
• Used 20 workloads (four from each type)

Metrics
Three metrics for performance:
1. Weighted speedup (default metric): $\text{perf} = \frac{\text{IPC}_1}{\text{SingleIPC}_1} + \frac{\text{IPC}_2}{\text{SingleIPC}_2}$; correlates with reduction in execution time.
2. Throughput: $\text{perf} = \text{IPC}_1 + \text{IPC}_2$; can be unfair to a low-IPC application.
3. Hmean-fairness: $\text{perf} = \text{hmean}\left(\frac{\text{IPC}_1}{\text{SingleIPC}_1}, \frac{\text{IPC}_2}{\text{SingleIPC}_2}\right)$; balances fairness and performance.
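The three metrics translate directly into code; `statistics.harmonic_mean` from the Python standard library provides hmean. The function names and the IPC values below are invented for illustration.

```python
from statistics import harmonic_mean


def weighted_speedup(ipc, single_ipc):
    """Sum of each app's IPC relative to its IPC when running alone."""
    return sum(i / s for i, s in zip(ipc, single_ipc))


def throughput(ipc):
    """Raw sum of IPCs (can favor high-IPC applications)."""
    return sum(ipc)


def hmean_fairness(ipc, single_ipc):
    """Harmonic mean of the per-app relative IPCs."""
    return harmonic_mean([i / s for i, s in zip(ipc, single_ipc)])


ipc, single = [1.0, 0.5], [2.0, 0.5]          # hypothetical IPC values
print(weighted_speedup(ipc, single))          # 1.5
print(throughput(ipc))                        # 1.5
print(round(hmean_fairness(ipc, single), 3))  # 0.667
```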

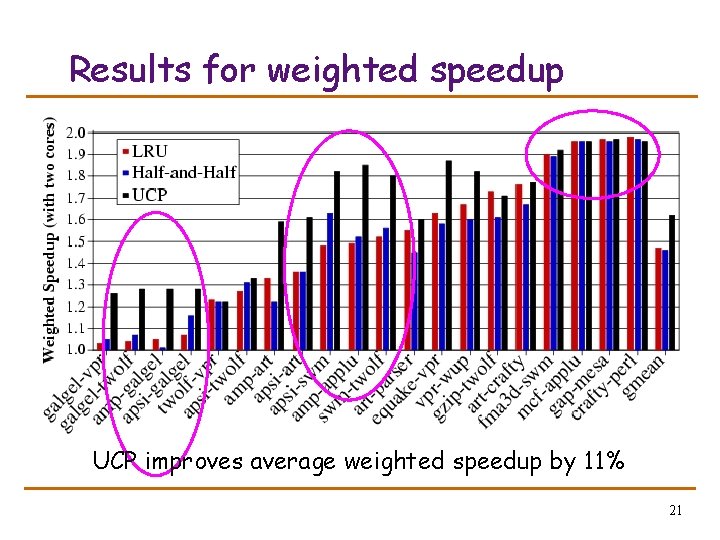

Results for Weighted Speedup
UCP improves average weighted speedup by 11%.

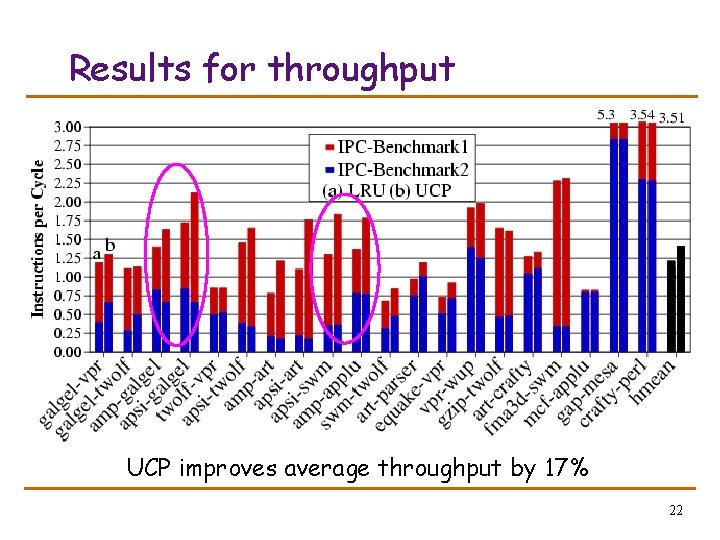

Results for Throughput
UCP improves average throughput by 17%.

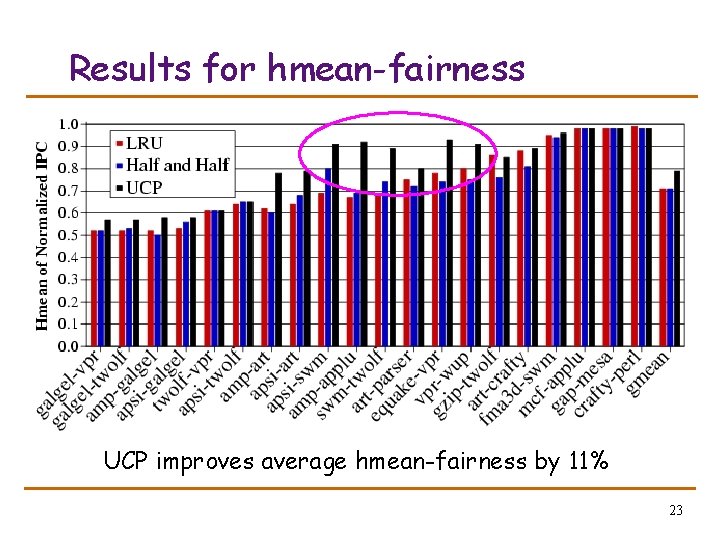

Results for Hmean-Fairness
UCP improves average hmean-fairness by 11%.

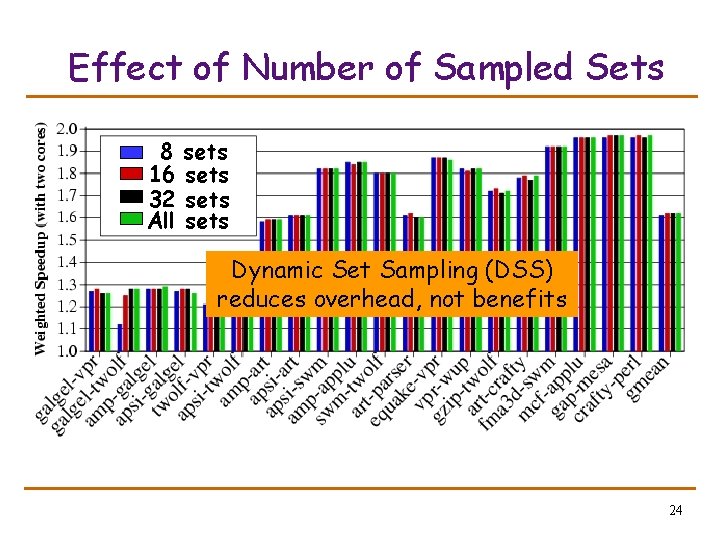

Effect of Number of Sampled Sets
[Figure: results with 8, 16, and 32 sampled sets vs. all sets]
Dynamic Set Sampling (DSS) reduces overhead, not benefits.

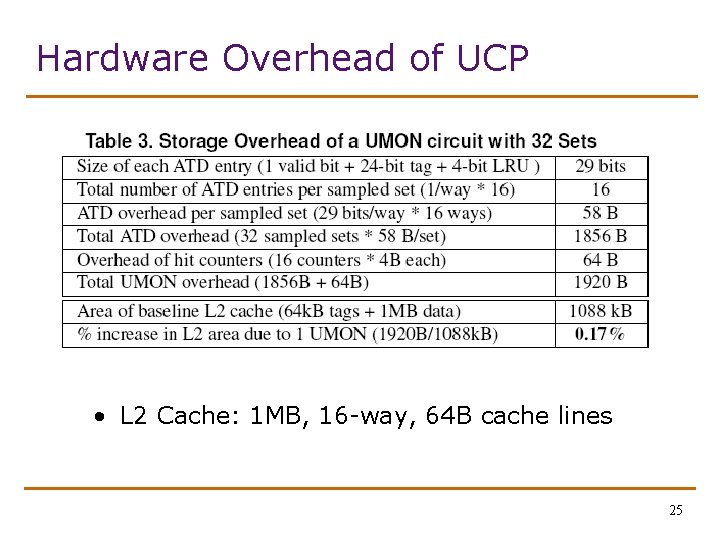

Hardware Overhead of UCP
• L2 cache: 1 MB, 16-way, 64 B cache lines
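The cache geometry on this slide fixes how small the sampled ATD is. The arithmetic below assumes 32 sampled sets per UMON (the DSS setting described earlier); tag widths are not given in the text, so it stops at entry counts and the average bit budget per entry implied by the < 2 kB/UMON figure.

```python
CACHE_BYTES = 1 << 20    # 1 MB shared L2
WAYS = 16
LINE_BYTES = 64
SAMPLED_SETS = 32        # assumption: DSS sample size per UMON
UMON_BUDGET_BYTES = 2 * 1024

num_lines = CACHE_BYTES // LINE_BYTES         # cache lines in the L2
num_sets = num_lines // WAYS                  # sets in the L2
sample_stride = num_sets // SAMPLED_SETS      # one ATD set per this many L2 sets
atd_entries = SAMPLED_SETS * WAYS             # tag entries per UMON
# Average bits available per ATD entry if the whole budget went to tags.
budget_bits_per_entry = UMON_BUDGET_BYTES * 8 // atd_entries

print(num_lines, num_sets, sample_stride)     # 16384 1024 32
print(atd_entries, budget_bits_per_entry)     # 512 32
```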


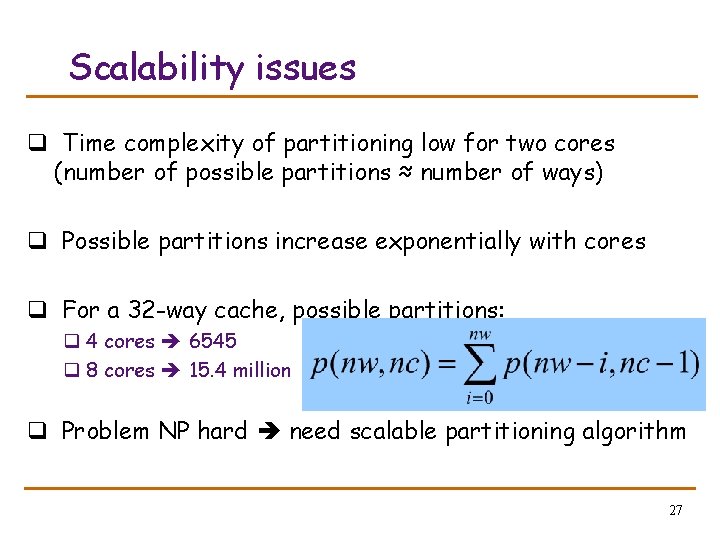

Scalability Issues
• The time complexity of partitioning is low for two cores (number of possible partitions ≈ number of ways).
• The number of possible partitions increases exponentially with the number of cores.
• For a 32-way cache, possible partitions:
  • 4 cores: 6545
  • 8 cores: 15.4 million
• The problem is NP-hard, so a scalable partitioning algorithm is needed.
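The partition counts above follow from a stars-and-bars count in which a core may receive zero ways. Under that assumption, $\binom{W + N - 1}{N - 1}$ for $W$ ways and $N$ cores reproduces both numbers, as this sketch checks with `math.comb`; `num_partitions` is a hypothetical name.

```python
from math import comb


def num_partitions(ways, cores):
    """Ways to split `ways` cache ways among `cores` apps, zero ways allowed:
    C(ways + cores - 1, cores - 1) (stars and bars)."""
    return comb(ways + cores - 1, cores - 1)


print(num_partitions(16, 2))  # 17 (about the number of ways)
print(num_partitions(32, 4))  # 6545
print(num_partitions(32, 8))  # 15380937 (about 15.4 million)
```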

![Greedy algorithm](http://slidetodoc.com/presentation_image_h2/dcf5e32e074249e9b9eec5349c191530/image-28.jpg)

Greedy Algorithm [Stone+ ToC'92]
• GA allocates 1 block to the app that has the max utility for one block; repeat until all blocks are allocated.
• Optimal partitioning when utility curves are convex.
• Pathological behavior for non-convex curves.
[Figure: misses per 100 instructions vs. number of ways of a 32-way 2 MB L2]
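A minimal sketch of the greedy allocation, assuming miss curves given as lists indexed by block count and ties broken toward the lower-numbered app. The curves in the usage lines are hypothetical, chosen to match the A/B example in this deck (A saves 10 misses per block; B saves 80 misses only once it has 3 blocks).

```python
def greedy_partition(miss_curves, total_blocks):
    """Repeatedly give one block to the app whose next block saves the most misses.

    miss_curves[p][k] = misses of app p with k blocks (k = 0..total_blocks).
    Ties go to the lower-numbered app (max() returns the first maximum).
    """
    alloc = [0] * len(miss_curves)
    for _ in range(total_blocks):
        gains = [curve[a] - curve[a + 1] for curve, a in zip(miss_curves, alloc)]
        winner = max(range(len(gains)), key=lambda p: gains[p])
        alloc[winner] += 1
    return alloc


A = [100 - 10 * k for k in range(9)]   # hypothetical: 10 misses saved per block
B = [80, 80, 80] + [0] * 6             # hypothetical: 80 misses saved at 3 blocks
alloc = greedy_partition([A, B], 8)
print(alloc)                           # [8, 0]
print(A[alloc[0]] + B[alloc[1]])       # 100 total misses
```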

![Greedy algorithm example](http://slidetodoc.com/presentation_image_h2/dcf5e32e074249e9b9eec5349c191530/image-29.jpg)

Greedy Algorithm [Stone+ ToC'92]

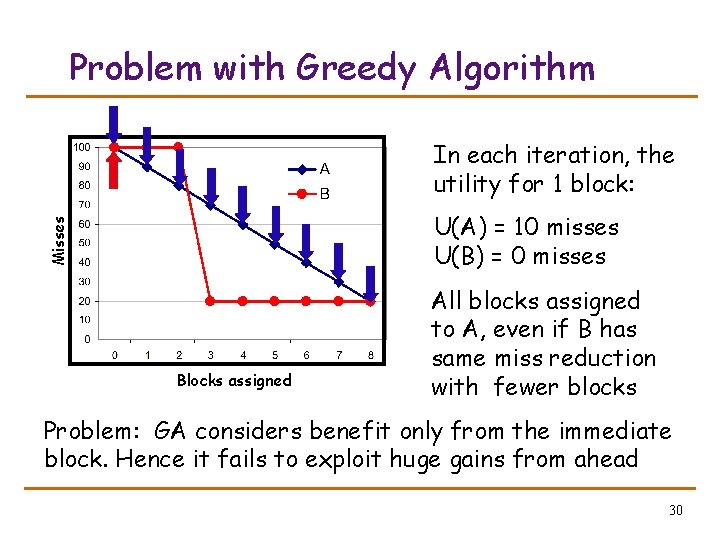

Problem with Greedy Algorithm
[Figure: misses vs. blocks assigned for apps A and B]
• In each iteration, the utility for 1 block: U(A) = 10 misses, U(B) = 0 misses.
• All blocks are assigned to A, even though B achieves the same miss reduction with fewer blocks.
• Problem: GA considers the benefit only from the immediate block, so it fails to exploit large gains further ahead.

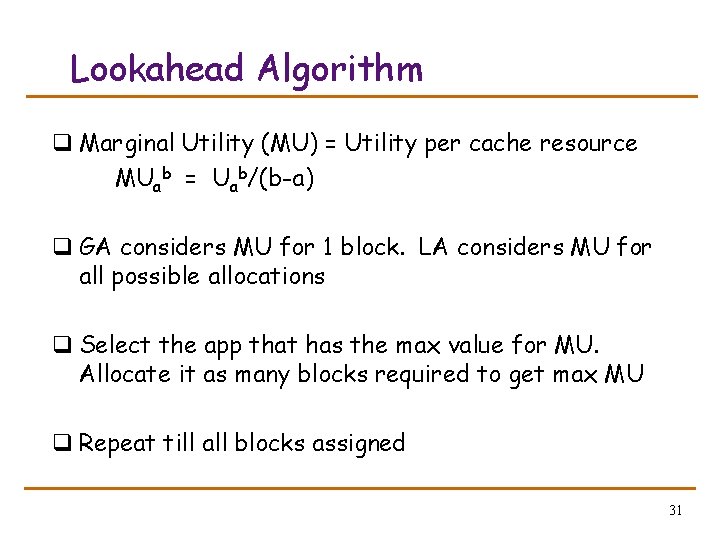

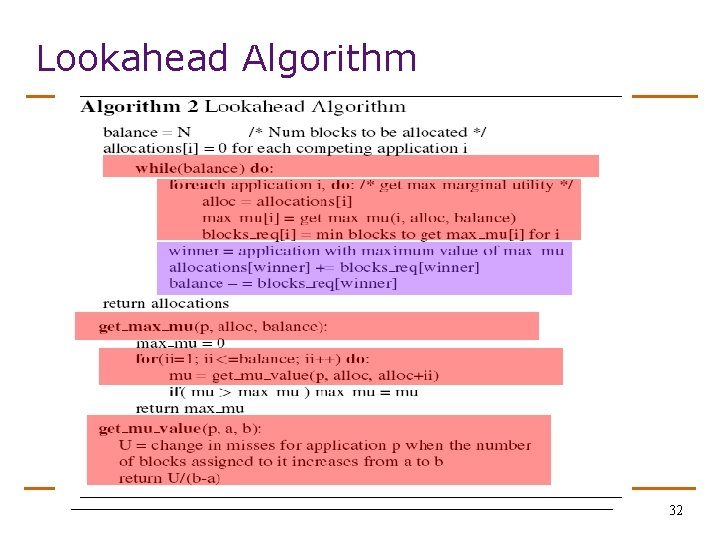

Lookahead Algorithm
• Marginal Utility (MU) = utility per unit of cache resource: $MU_a^b = U_a^b / (b - a)$
• GA considers MU for 1 block; LA considers MU for all possible allocations.
• Select the app with the max MU, and allocate it as many blocks as required to get that max MU.
• Repeat until all blocks are assigned.
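The Lookahead loop can be sketched as follows. It assumes miss curves indexed by block count, allocations starting at zero blocks per app, and ties broken toward the smaller block count and the lower app index; `lookahead_partition` is a hypothetical name. The usage lines replay the deck's A/B example with hypothetical curves (A saves 10 misses per block; B saves 80 misses at 3 blocks).

```python
def lookahead_partition(miss_curves, total_blocks):
    """Each round: for every app, find the block count k with the highest
    marginal utility MU = (misses[a] - misses[a + k]) / k, give the overall
    winner k blocks, and repeat until no blocks remain.

    miss_curves[p][k] = misses of app p with k blocks (k = 0..total_blocks).
    """
    alloc = [0] * len(miss_curves)
    balance = total_blocks
    while balance > 0:
        best = None  # (mu, app, blocks)
        for p, curve in enumerate(miss_curves):
            a = alloc[p]
            for k in range(1, balance + 1):
                mu = (curve[a] - curve[a + k]) / k
                if best is None or mu > best[0]:  # strict: keep earliest tie
                    best = (mu, p, k)
        _, winner, blocks = best
        alloc[winner] += blocks
        balance -= blocks
    return alloc


A = [100 - 10 * k for k in range(9)]   # hypothetical: 10 misses saved per block
B = [80, 80, 80] + [0] * 6             # hypothetical: 80 misses saved at 3 blocks
alloc = lookahead_partition([A, B], 8)
print(alloc)                           # [5, 3]
print(A[alloc[0]] + B[alloc[1]])       # 50 total misses
```

Each round scans every app and every feasible block count, so the work grows roughly with the square of the number of ways, in line with the complexity estimate in the example.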

Lookahead Algorithm

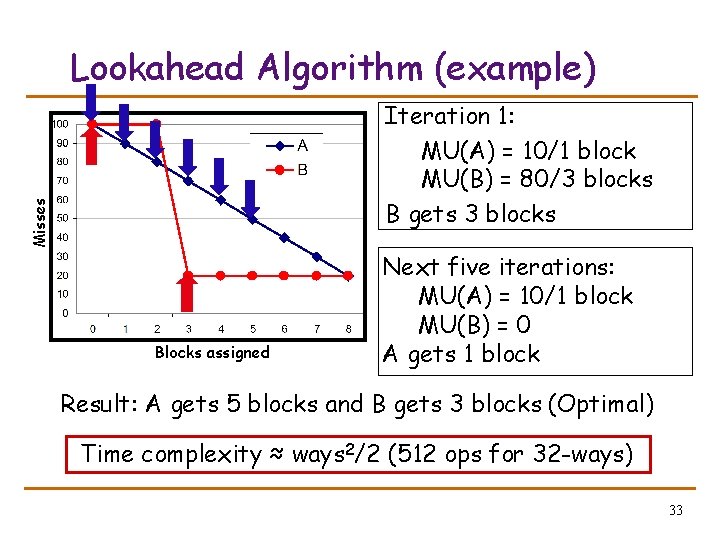

Lookahead Algorithm (example)
[Figure: misses vs. blocks assigned for apps A and B]
• Iteration 1: MU(A) = 10/1 block, MU(B) = 80/3 blocks, so B gets 3 blocks.
• Next five iterations: MU(A) = 10/1 block, MU(B) = 0, so A gets 1 block each time.
• Result: A gets 5 blocks and B gets 3 blocks (optimal).
• Time complexity ≈ ways²/2 (512 ops for 32 ways).

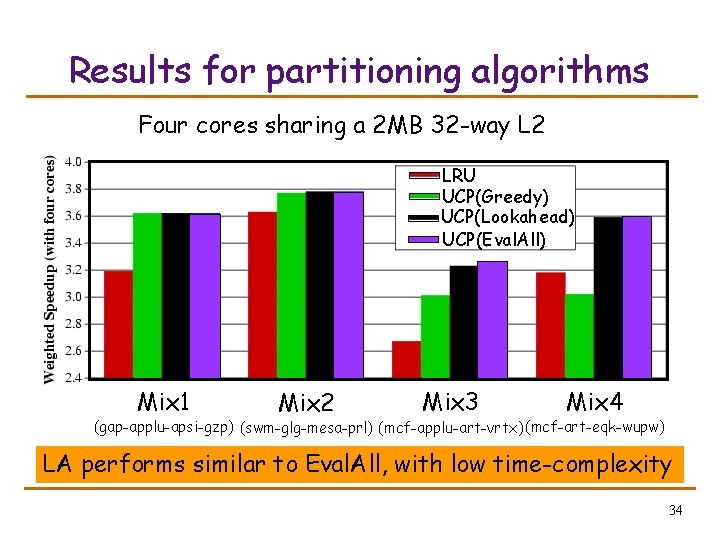

Results for Partitioning Algorithms
Four cores sharing a 2 MB 32-way L2; policies compared: LRU, UCP(Greedy), UCP(Lookahead), UCP(EvalAll)
[Figure: results for Mix 1 (gap-applu-apsi-gzp), Mix 2 (swm-glg-mesa-prl), Mix 3 (mcf-applu-art-vrtx), and Mix 4 (mcf-art-eqk-wupw)]
LA performs similarly to EvalAll, with low time complexity.


![Related work](http://slidetodoc.com/presentation_image_h2/dcf5e32e074249e9b9eec5349c191530/image-36.jpg)

Related Work
[Figure: performance (low/high) vs. overhead (low/high)]
• Suh+ [HPCA'02]: low performance, low overhead (Perf += 4%, Storage += 32 B/core)
• Zhou+ [ASPLOS'04]: high performance, high overhead (Perf += 11%, Storage += 64 kB/core)
• UCP: high performance, low overhead (Perf += 11%, Storage += 2 kB/core)
UCP is both high-performance and low-overhead.

Summary
• CMPs and shared caches are common.
• Partition shared caches based on utility, not demand.
• UMON estimates utility at runtime with low overhead.
• UCP improves performance:
  • weighted speedup by 11%
  • throughput by 17%
  • hmean-fairness by 11%
• The Lookahead algorithm scales to many cores sharing a highly associative cache.

Questions

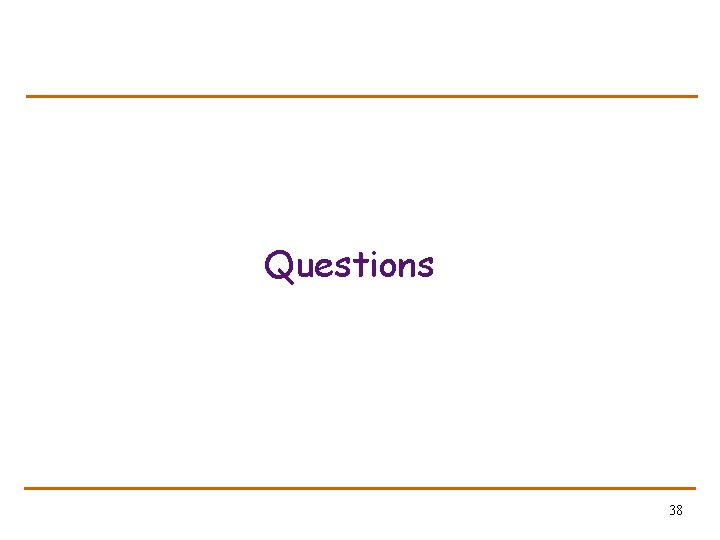

DSS Bounds with Analytical Model
• $U_s$ = sampled mean (number of ways allocated by DSS)
• $U_g$ = global mean (number of ways allocated by global monitoring)
• $P$ = probability that $U_s$ is within 1 way of $U_g$
• By Chebyshev's inequality: $P \ge 1 - \sigma^2 / n$, where $\sigma^2$ is the variance and $n$ is the number of sampled sets.
• In general, the variance is ≤ 3.
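Plugging numbers into the bound shows why 32 sampled sets suffice. This sketch assumes the slide's variance bound of 3 and clamps the bound at 0 for very small $n$ (where Chebyshev gives no information); `dss_bound` is a hypothetical name.

```python
def dss_bound(variance, n):
    """Chebyshev lower bound on P(|U_s - U_g| < 1 way) with n sampled sets:
    P >= 1 - variance / n, clamped at 0."""
    return max(0.0, 1 - variance / n)


for n in (8, 16, 32):
    print(n, dss_bound(3, n))
# 8 0.625
# 16 0.8125
# 32 0.90625
```

With 32 sampled sets the sampled allocation is within one way of the global one with probability of at least about 0.91.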

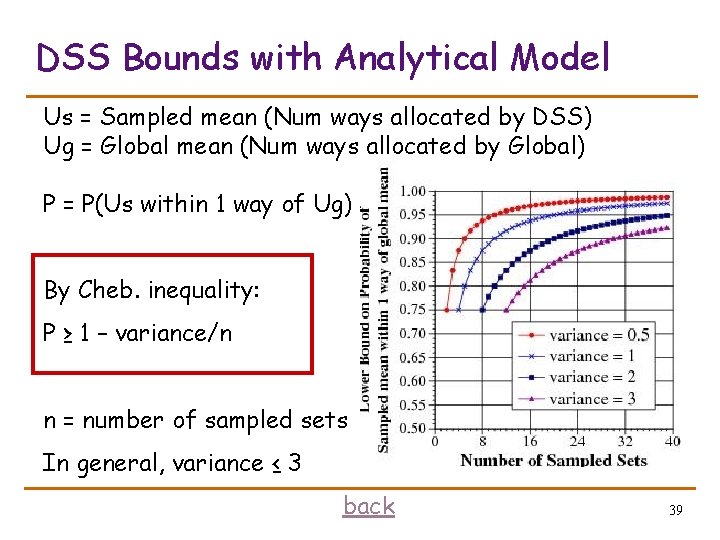

Galgel: Concave Utility
[Figure: utility curves of galgel, twolf, and parser]
