Inter-comparison and Validation Task Team Breakout discussion


Discussion topics
• Overview of work-plan
• Inter-comparison
  – routine inter-comparison of forecast metrics
  – routine inter-comparison of climate indices
  – multi-system ensemble approach
• Validation techniques
1 ½ hours overall, ~20 minutes each.

Overview of IV-TT work plan
1. Provide a demonstration of inter-comparison and validation in an operational framework:
  • Write proposal for production of routine class 4 metrics.
  • Implement at participating OOFS.
  • Routinely upload metrics from OOFS to the US GODAE server.
  • After a few months, meet up to discuss progress.
  • Once mature, report to ET-OOFS for operational implementation.
  • Continue to test new metrics etc. in GOV and regularly update ET-OOFS.
2. Improve inter-comparison and validation methodology for operational oceanography:
  • Obtain feedback from GOVST members participating in dedicated meetings organized by specific communities.
  • New metrics outlined during the workshop organized by the task team.
  • Limited inter-comparison projects between some OOFS, in order to test new metrics.

Class 4 inter-comparison
Agreed at GOVST II meeting in 2010:
• Reference data to use for comparisons:
  – SST: surface drifters (USGODAE) and L3 AATSR (MyOcean)
  – SL: tide gauges (Coriolis) and along-track SLA data (CLS/Aviso)
  – T/S profile: Argo GDAC (USGODAE/Coriolis)
• Participants:
  – Bluelink, HYCOM, Mercator, FOAM already agreed.
  – RTOFS, C-NOOFS/CONCEPTS also expressed interest.
  – Any others?
• To set up and run the generation of the inter-comparison plots:
  – USGODAE will host data.
  – UK Met Office can produce basic regional RMSE/bias/correlation plots (other participating groups invited to investigate/develop new metrics using the database).
• Homogenising format of files:
  – Written in proposal document.
  – Code for generating Class 4 files written at UKMO and can be made available.
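
As an illustration of the basic regional RMSE/bias/correlation statistics mentioned above, the following is a minimal sketch only. It assumes the class 4 matchups have already been read into simple tuples of (lat, lon, observation, model value); the record layout, the function names and the region box are hypothetical and are not taken from the proposal document.

```python
import math

def in_region(lat, lon, box):
    """box is (lat_min, lat_max, lon_min, lon_max); hypothetical layout."""
    lat_min, lat_max, lon_min, lon_max = box
    return lat_min <= lat <= lat_max and lon_min <= lon <= lon_max

def regional_stats(matchups, box):
    """Bias (model minus obs), RMSE and Pearson correlation inside one box.

    matchups: iterable of (lat, lon, obs, model) tuples (assumed layout).
    Returns None when fewer than two matchups fall inside the box.
    """
    pairs = [(o, m) for lat, lon, o, m in matchups if in_region(lat, lon, box)]
    n = len(pairs)
    if n < 2:
        return None
    diffs = [m - o for o, m in pairs]
    bias = sum(diffs) / n
    rmse = math.sqrt(sum(d * d for d in diffs) / n)
    mean_o = sum(o for o, _ in pairs) / n
    mean_m = sum(m for _, m in pairs) / n
    cov = sum((o - mean_o) * (m - mean_m) for o, m in pairs)
    var_o = sum((o - mean_o) ** 2 for o, _ in pairs)
    var_m = sum((m - mean_m) ** 2 for _, m in pairs)
    if var_o > 0 and var_m > 0:
        corr = cov / math.sqrt(var_o * var_m)
    else:
        corr = float("nan")  # correlation undefined for a constant series
    return {"n": n, "bias": bias, "rmse": rmse, "corr": corr}

# Made-up SST matchups; the last one lies outside the box and is ignored.
sample = [(0, 0, 1.0, 2.0), (1, 1, 2.0, 3.0), (2, 2, 3.0, 4.0), (50, 50, 0.0, 10.0)]
tropics = (-5, 5, -5, 5)
stats = regional_stats(sample, tropics)
```

Because every group would compute these from the same matchup files against the same reference data, the resulting numbers are directly comparable between systems.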

Class 4 inter-comparison
• Could produce basic monthly plots of RMSE and ACC vs forecast time in different regions.
• Discuss how to make use of the class 4 files.
• Type of averaging for statistics: vertical, horizontal and time.
• Which regions?
• Routine plots, more detailed assessments, which diagnostics should be produced?
• How to present results: Taylor diagrams, RMS/ACC vs lead time, …
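
To make the "RMSE and ACC vs forecast time" idea concrete, here is a hedged sketch. It assumes each record carries a forecast lead time, an observation, the matching forecast value and a climatological value at the same point; that record layout and the function name are assumptions for illustration. The anomaly correlation used here is the uncentred form (anomalies relative to climatology, no further mean removal), which is one of several conventions in use.

```python
import math
from collections import defaultdict

def scores_by_lead(records):
    """Group matchups by lead time and compute RMSE and uncentred ACC.

    records: iterable of (lead_day, obs, forecast, climatology) tuples
    (hypothetical layout).
    """
    groups = defaultdict(list)
    for lead, obs, fcst, clim in records:
        groups[lead].append((obs, fcst, clim))

    scores = {}
    for lead in sorted(groups):
        rows = groups[lead]
        n = len(rows)
        rmse = math.sqrt(sum((f - o) ** 2 for o, f, c in rows) / n)
        # Anomalies are taken relative to climatology.
        num = sum((f - c) * (o - c) for o, f, c in rows)
        den = math.sqrt(sum((f - c) ** 2 for o, f, c in rows)
                        * sum((o - c) ** 2 for o, f, c in rows))
        acc = num / den if den > 0 else float("nan")
        scores[lead] = {"n": n, "rmse": rmse, "acc": acc}
    return scores

# Made-up values: a perfect 1-day forecast and an anti-correlated 3-day one.
records = [
    (1, 11.0, 11.0, 10.0), (1, 9.0, 9.0, 10.0),
    (3, 11.0, 9.0, 10.0), (3, 9.0, 11.0, 10.0),
]
scores = scores_by_lead(records)
```

The same grouping key could be extended to (region, lead time) or (depth bin, lead time), which is where the choice of vertical, horizontal and time averaging raised above comes in.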

Climate indices from GOV systems
• Appropriate to produce these from GOV systems?
• Which climate indices should be produced from GOV systems? E.g.
  – MOC -> T. Lee presentation
  – SST -> Y. Xue presentation
  – Temperature anomalies at depth -> Y. Xue presentation
  – 20 deg isotherm depth -> Y. Xue presentation
  – Heat transport -> T. Lee presentation
  – Heat content estimates -> Y. Xue presentation
  – Sea-ice extent -> TOPAZ/CONCEPTS validation framework
• Need for reanalysis to put real-time results in context?
• How to report and who to?
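
As one example of how such an index might be derived from model output, the sketch below estimates the depth of the 20 deg isotherm in a single temperature profile by linear interpolation between levels. The function name, the profile layout (depths in metres increasing downward) and the choice of linear interpolation are assumptions for illustration, not a method taken from the presentations cited above.

```python
def isotherm_depth(depths, temps, target=20.0):
    """Depth at which the profile first crosses `target`, or None.

    depths: level depths in metres, increasing downward (assumed).
    temps:  temperatures in deg C at those levels.
    Linear interpolation is used between the two bracketing levels.
    """
    for k in range(len(depths) - 1):
        t0, t1 = temps[k], temps[k + 1]
        # A crossing exists when the target lies between t0 and t1.
        if (t0 - target) * (t1 - target) <= 0 and t0 != t1:
            frac = (t0 - target) / (t0 - t1)
            return depths[k] + frac * (depths[k + 1] - depths[k])
    return None  # profile never reaches the target temperature

# Made-up tropical profile: 20 deg C falls halfway between 50 m and 100 m.
d20 = isotherm_depth([0.0, 50.0, 100.0, 200.0], [28.0, 24.0, 16.0, 12.0])
```

Applied at every grid point and averaged over a fixed box, a diagnostic like this gives a time series that can be compared between systems and against a reanalysis.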

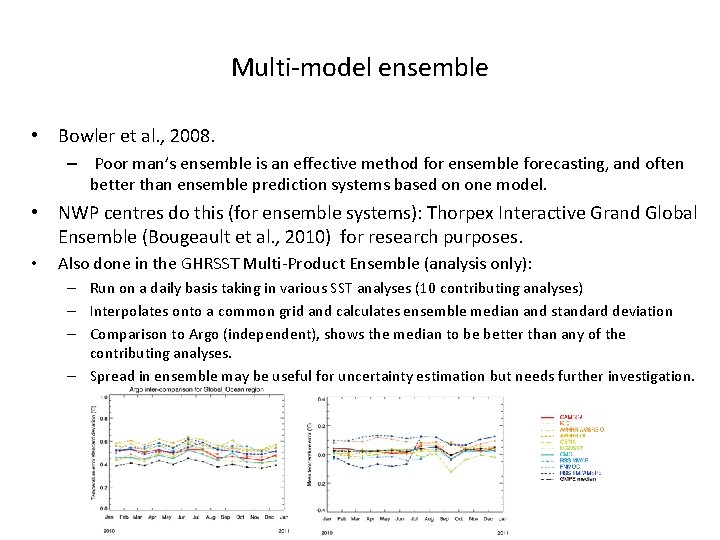

Multi-model ensemble
• Bowler et al., 2008:
  – Poor man’s ensemble is an effective method for ensemble forecasting, and often better than ensemble prediction systems based on one model.
• NWP centres do this (for ensemble systems): THORPEX Interactive Grand Global Ensemble (Bougeault et al., 2010) for research purposes.
• Also done in the GHRSST Multi-Product Ensemble (analysis only):
  – Run on a daily basis taking in various SST analyses (10 contributing analyses).
  – Interpolates onto a common grid and calculates ensemble median and standard deviation.
  – Comparison to Argo (independent) shows the median to be better than any of the contributing analyses.
  – Spread in ensemble may be useful for uncertainty estimation but needs further investigation.
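
The median-and-spread step described for the multi-product ensemble can be sketched as follows. This is an illustration under stated assumptions: the contributing fields are taken to be already interpolated onto the common grid and stored as nested lists with None marking missing points, and the spread is computed as a population standard deviation. None of these choices is confirmed as what the GHRSST system actually does.

```python
import statistics

def ensemble_median_and_spread(fields):
    """Point-wise ensemble median and standard deviation.

    fields: list of 2-D grids (lists of rows) on a common grid, with None
    for missing values (assumed layout). Points with no valid member stay
    None in both outputs.
    """
    ny, nx = len(fields[0]), len(fields[0][0])
    median = [[None] * nx for _ in range(ny)]
    spread = [[None] * nx for _ in range(ny)]
    for j in range(ny):
        for i in range(nx):
            vals = [f[j][i] for f in fields if f[j][i] is not None]
            if vals:
                median[j][i] = statistics.median(vals)
                # Population standard deviation; 0.0 for a single member.
                spread[j][i] = statistics.pstdev(vals)
    return median, spread

# Three made-up 1x3 "analyses"; only one covers the second point,
# and none covers the third.
members = [
    [[1.0, None, None]],
    [[2.0, None, None]],
    [[6.0, 5.0, None]],
]
med, spr = ensemble_median_and_spread(members)
```

The median is less sensitive than the mean to a single outlying member, which is one plausible reason for choosing it when the contributing systems differ in quality.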

Multi-model ensemble
• Do a similar thing using GOV systems?
• Which variables?
  – Could start with SST, SSS, SSH, surface u and v, sea-ice concentration.
• Which processes do we want to represent and at which length scale? Common grid?
• Forecast length/frequency?
• Who would run such a system?

Validation techniques
• New techniques?
• Which variables?
  – T, S, SSH, sea-ice variables, u, v, (heat) transports, MOC.
  – Biogeochemistry?
• New observation data-sets?
  – The role of objective analyses of data: EN3, CORA, ARMOR3D.
• High resolution aspects.
  – Lagrangian metrics.
  – Upper ocean metrics.
• What should be adopted more widely?

Updates to work-plan
• Include new validation methodology:
• Routine class 4 inter-comparison project updates:
• Inter-comparison of climate indices:
• Include plan for work on GOV Multi-Model Ensemble?
