Integrated Episodic and Semantic Memory in Robotics
Steve Furtwangler, sfurtwangler@soartech.com, with Robert Marinier, Jacob Crossman

Introduction
• Robotics domain has some unique challenges
• General patterns or issues we encountered working in robotics
• Specifically, I will talk about
  • Measuring similarity in semantic memory
  • Using episodic and semantic memory together
  • Required or prohibited query conditions
  • Recreation of state

Using Episodic Memory for Partial Matches
• The agent creates a statistical model of its world
• The statistics are stored in semantic memory
  • Long-term identifiers created for each thing we are modeling
  • Statistics kept on these identifiers
• Sometimes need to find similar things
  • Semantic memory doesn’t support partial matches
  • We decided to leverage episodic memory to do this instead
• Example:
  • If the agent has no/little statistical data for this exact situation
  • Can ask if it was ever in a situation like this one
  • If so, look up statistical data for that situation in semantic memory
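The fallback described in the example can be sketched in Python. This is only an illustration and not the authors' Soar implementation: the `Stats` class, the `min_samples` threshold, the overlap-counting similarity, and the sample identifiers and counts are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass
class Stats:
    """Hypothetical statistics record kept on a long-term identifier."""
    success: int = 0
    failure: int = 0

    @property
    def samples(self) -> int:
        return self.success + self.failure


def most_similar_episode(cue, episodes):
    """Partial match: return the episode sharing the most attribute-value
    pairs with the cue. Episodes are ordered oldest first, so ties go to
    the most recent one. Returns None if nothing overlaps at all."""
    best, best_score = None, 0
    for episode in episodes:
        score = sum(1 for k, v in cue.items() if episode.get(k) == v)
        if score > 0 and score >= best_score:
            best, best_score = episode, score
    return best


def lookup_stats(current_id, features, semantic_memory, episodes, min_samples=5):
    """Use exact statistics when there are enough of them; otherwise borrow
    the statistics of the most similar situation found in episodic memory."""
    stats = semantic_memory.get(current_id)  # exact match only
    if stats is not None and stats.samples >= min_samples:
        return stats
    episode = most_similar_episode(features, episodes)
    if episode is None:
        return stats
    return semantic_memory.get(episode["id"], stats)


# Illustrative data: one known situation with statistics, one without.
semantic_memory = {"@Q5": Stats(success=2, failure=12)}
episodes = [
    {"id": "@Q5", "road": "High", "trees": "Low"},
    {"id": "@Q7", "road": "Low", "trees": "High"},
]
borrowed = lookup_stats("@Q9", {"road": "High", "trees": "Low"},
                        semantic_memory, episodes)
```

Here `@Q9` has no statistics of its own, so the lookup falls back to the episode that looks most like the current situation and reads that situation's statistics from semantic memory.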

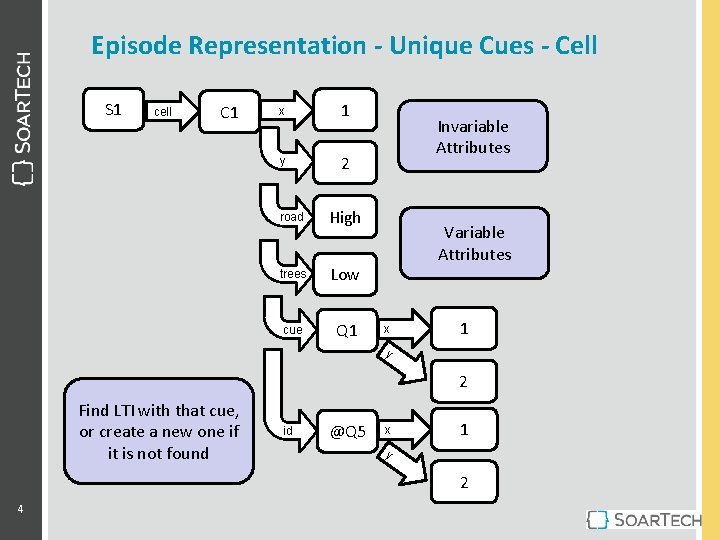

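Episode Representation - Unique Cues - Cell
[Diagram: state S1 holds cell C1 with x 1, y 2, road High, trees Low. Cue Q1 is built from the invariable attributes only (x 1, y 2); the variable attributes (road, trees) are left out. The matching LTI @Q5 (x 1, y 2) is attached to the cell as its id.]
• Find LTI with that cue, or create a new one if it is not found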
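A minimal sketch of the find-or-create step, assuming a plain in-memory store rather than Soar's semantic memory. The `SemanticStore` class, the `@Q<n>` naming scheme, and the choice of `x` and `y` as the invariable attributes are assumptions made for illustration.

```python
import itertools


class SemanticStore:
    """Hypothetical stand-in for semantic memory: maps a cue to one LTI name."""

    def __init__(self):
        self._by_cue = {}
        self._counter = itertools.count(1)

    def find_or_create(self, cue: dict) -> str:
        # The cue is flat (one level deep), so its items form a hashable key.
        key = frozenset(cue.items())
        if key not in self._by_cue:
            self._by_cue[key] = f"@Q{next(self._counter)}"
        return self._by_cue[key]


INVARIABLE = ("x", "y")  # assumed: position never changes for a cell


def cell_id(store: SemanticStore, cell: dict) -> str:
    """Build the cue from invariable attributes only, then resolve it."""
    cue = {name: cell[name] for name in INVARIABLE}
    return store.find_or_create(cue)


store = SemanticStore()
first = cell_id(store, {"x": 1, "y": 2, "road": "High", "trees": "Low"})
again = cell_id(store, {"x": 1, "y": 2, "road": "Low", "trees": "Low"})
other = cell_id(store, {"x": 2, "y": 2, "road": "High", "trees": "Low"})
```

Because the variable attributes are excluded from the cue, the same cell resolves to the same identifier even after its `road` or `trees` values change.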

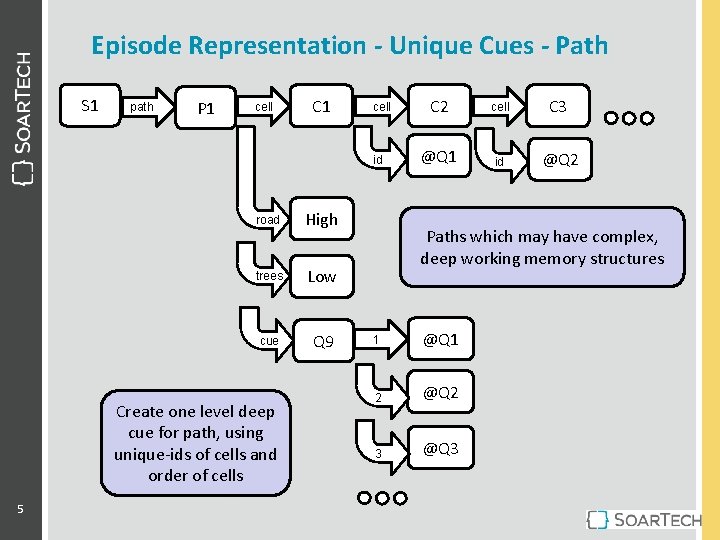

Episode Representation - Unique Cues - Path
[Diagram: state S1 holds path P1 made of cells C1, C2, C3, each with its own id and attributes such as road High and trees Low. Cue Q9 maps each position in the path to a cell id: 1 @Q1, 2 @Q2, 3 @Q3.]
• Paths may have complex, deep working memory structures
• Create a one-level-deep cue for the path, using the unique ids of the cells and the order of the cells
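The flattening of a deep path structure into a one-level cue might look like the following sketch. The dictionary-based store and the helper names `find_or_create`, `cell_id`, and `path_id` are hypothetical; only the idea of keying the path by position and cell id comes from the slide.

```python
_ltis = {}  # hypothetical store: flat cue -> LTI name


def find_or_create(cue: dict) -> str:
    """Return the LTI for this flat cue, creating one if it is not found."""
    key = frozenset(cue.items())
    if key not in _ltis:
        _ltis[key] = f"@Q{len(_ltis) + 1}"
    return _ltis[key]


def cell_id(cell: dict) -> str:
    # Assumed invariable attributes of a cell: its coordinates.
    return find_or_create({"x": cell["x"], "y": cell["y"]})


def path_id(cells: list) -> str:
    """One-level-deep cue: position in the path -> unique id of the cell.
    The nested cell structures never appear in the cue itself."""
    cue = {str(pos): cell_id(cell) for pos, cell in enumerate(cells, start=1)}
    return find_or_create(cue)


a = {"x": 1, "y": 2, "road": "High", "trees": "Low"}
b = {"x": 1, "y": 3, "road": "Low", "trees": "Low"}

forward = path_id([a, b])
same_cells_new_stats = path_id([dict(a, road="Med"), b])
backward = path_id([b, a])
```

The same cells in the same order always yield the same path identifier, while reversing the order yields a different one.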

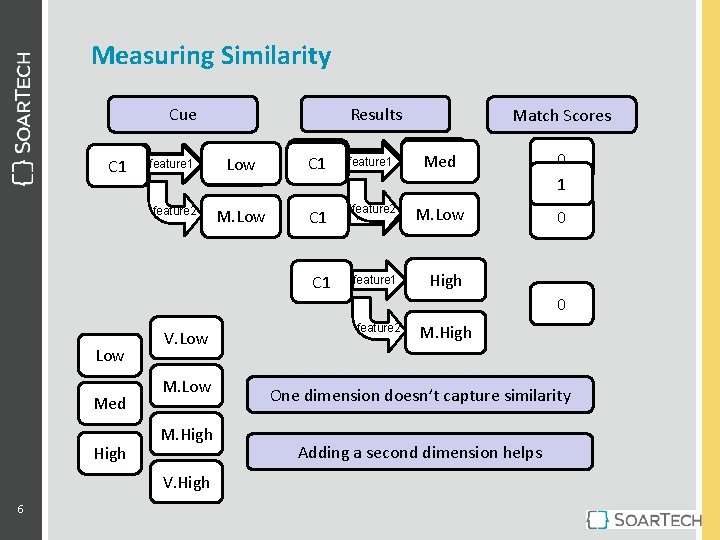

Measuring Similarity
[Figure: cue C1 compared against candidate results, with a match score for each. Feature 1 takes the values Low, Med, High; feature 2 takes the values V. Low, M. Low, M. High, V. High. The first panel matches on feature 1 only; the second adds feature 2.]
• One dimension doesn’t capture similarity
• Adding a second dimension helps
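The diagram did not survive extraction, so the following is one possible reading of it, not a reconstruction of the authors' encoding. It assumes a single underlying value in [0, 1] is described twice, once on a three-level scale and once on a four-level scale, and that the match score is the number of attributes that agree exactly. The bin boundaries and function names are assumptions.

```python
COARSE = ["Low", "Med", "High"]
FINE = ["V. Low", "M. Low", "M. High", "V. High"]


def bin_label(value: float, labels: list) -> str:
    """Map a value in [0, 1] to one of len(labels) equal-width bins."""
    index = min(int(value * len(labels)), len(labels) - 1)
    return labels[index]


def encode(value: float, use_second_dimension: bool) -> dict:
    """Describe the value with one or two discrete features."""
    encoded = {"feature1": bin_label(value, COARSE)}
    if use_second_dimension:
        encoded["feature2"] = bin_label(value, FINE)
    return encoded


def match_score(cue: dict, candidate: dict) -> int:
    """Exact-match scoring: count attributes with identical values."""
    return sum(1 for k, v in cue.items() if candidate.get(k) == v)


cue_value, near_value, far_value = 0.30, 0.40, 0.70

# One dimension: the near and the far candidate both score 0.
one_dim = [match_score(encode(cue_value, False), encode(v, False))
           for v in (near_value, far_value)]

# Two dimensions: the near candidate now shares a bin with the cue.
two_dim = [match_score(encode(cue_value, True), encode(v, True))
           for v in (near_value, far_value)]
```

With only the coarse feature, a neighbouring value is indistinguishable from a distant one. Adding the second, differently aligned scale lets the nearer candidate pick up a partial match.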

Episodic and Semantic Memory Conflicts
• The objects in memory are identified in semantic memory
• Some of the attributes on these objects (statistics) change over time
• These long-term identifiers are referenced on the top state
  • So they show up in episodic memory
• However, when episodic memory recreates the episode
  • It recreates the attributes and values that the LTI had at the time

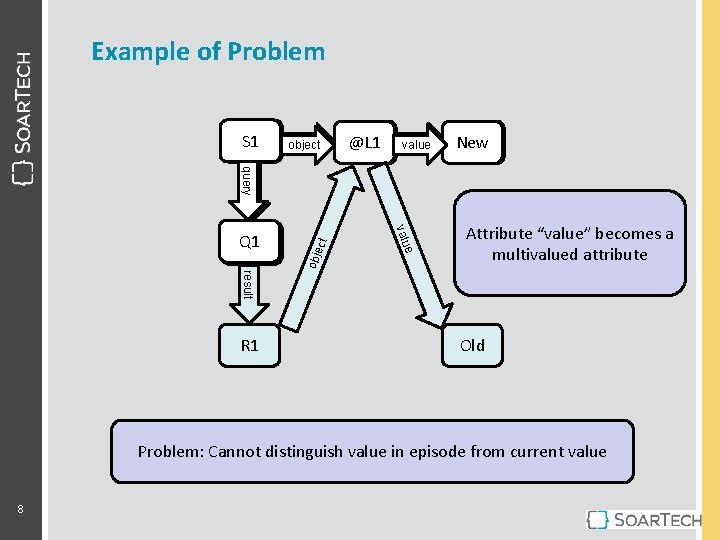

Example of Problem
[Diagram: state S1 references object @L1, whose current value is New. Query Q1 returns result R1, an episode in which the same object @L1 had value Old.]
• Attribute “value” becomes a multivalued attribute
• Problem: Cannot distinguish value in episode from current value

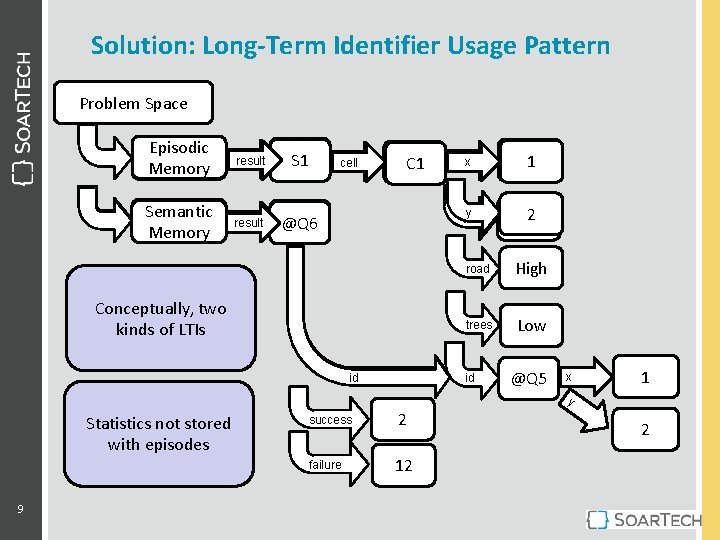

Solution: Long-Term Identifier Usage Pattern
[Diagram labels: Problem Space; Episodic Memory with cue and result Q1; Semantic Memory with cue and result Q2; LTIs @Q5 (x 1, y 2) and @Q6; cell C1 with id, road, and trees attributes; statistics (success and failure counts).]
• Conceptually, two kinds of LTIs
• Statistics not stored with episodes
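One way to picture the separation is the sketch below, which assumes that episodes snapshot only the identifier and the variable attributes of a cell, while statistics live in a separate table keyed by that identifier. The table layout, the function names, and the counts are illustrative assumptions, not the authors' representation.

```python
import copy

# Kind 1 (assumed): identity LTIs, referenced from the state, invariable only.
identity = {"@Q5": {"x": 1, "y": 2}}

# Kind 2 (assumed): statistics, reachable only through a semantic memory query.
statistics = {"@Q5": {"success": 2, "failure": 12}}

episodes = []


def record_episode(state: dict) -> None:
    """Snapshot the state. Statistics are not on the state, so they are
    never copied into an episode."""
    episodes.append(copy.deepcopy(state))


def stats_for(cell: dict) -> dict:
    """Separate semantic lookup, always returning the current statistics."""
    return statistics[cell["id"]]


state = {"cell": {"id": "@Q5", "road": "High", "trees": "Low"}}
record_episode(state)

# Later the model is updated; the old episode is unaffected.
statistics["@Q5"]["success"] += 1

retrieved = episodes[0]
current = stats_for(retrieved["cell"])
```

A retrieved episode carries no stale statistics that could collide with current values, and the agent still reaches the up-to-date statistics through the identifier.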

Required (or Prohibited) Query Conditions
• Queries to episodic memory often have two different kinds of conditions
  • Things that have to exist in the episode (or must not exist)
  • These tend to decide whether the episode is relevant at all
  • Things which are optional, but should be as similar as possible
• Example:
  • Query for a similar situation where the agent decided to go right
  • In order to reason about what might happen if I turn right now
  • Result is a situation like the current situation, only the agent went left
  • Such results have to be prohibited until the query returns a memory of going right
• Leads to a common pattern…

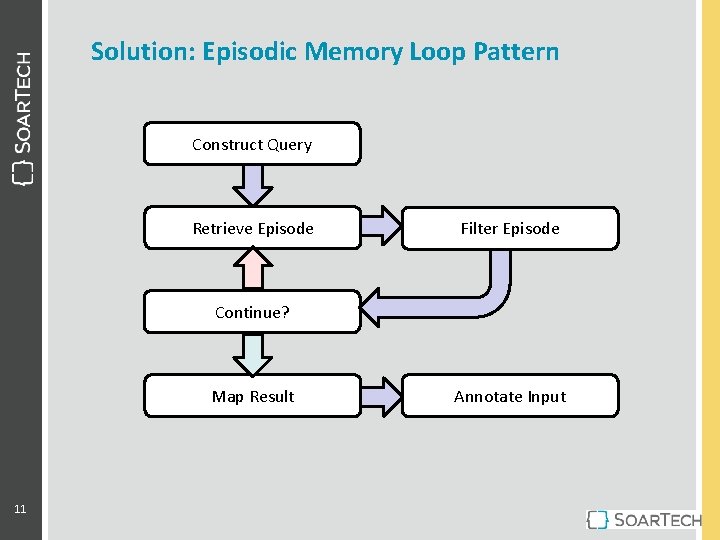

Solution: Episodic Memory Loop Pattern
[Flowchart boxes: Annotate Input, Construct Query, Retrieve Episode, Filter Episode, Continue?, Map Result]
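The retrieve, filter, and retry cycle can be sketched as follows. This is a Python illustration under stated assumptions, not Soar code: the overlap-based `retrieve` function, the `required` dictionary, and the `max_tries` limit are all hypothetical.

```python
def retrieve(episodes, cue, prohibited):
    """Best partial match among episodes that are not prohibited.
    Returns an index, or None when every episode has been prohibited."""
    best, best_score = None, -1
    for index, episode in enumerate(episodes):  # oldest first
        if index in prohibited:
            continue
        score = sum(1 for k, v in cue.items() if episode.get(k) == v)
        if score >= best_score:  # ties go to the most recent episode
            best, best_score = index, score
    return best


def query_with_requirements(episodes, cue, required, max_tries=10):
    """Loop pattern: retrieve, filter on required conditions, and prohibit
    rejected episodes before trying again."""
    prohibited = set()
    for _ in range(max_tries):
        index = retrieve(episodes, cue, prohibited)
        if index is None:
            return None  # nothing left to try
        episode = episodes[index]
        if all(episode.get(k) == v for k, v in required.items()):
            return episode  # passes the filter
        prohibited.add(index)  # reject and continue
    return None


episodes = [
    {"road": "High", "trees": "Low", "action": "right"},
    {"road": "High", "trees": "Low", "action": "left"},
]
cue = {"road": "High", "trees": "Low"}

found = query_with_requirements(episodes, cue, required={"action": "right"})
missing = query_with_requirements(episodes, cue, required={"action": "back"})
```

The first retrieval returns the more recent episode in which the agent went left. It fails the filter, is prohibited, and the second retrieval returns the episode in which the agent went right. Each rejected retrieval still pays the full cost of recreating the episode.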

Time Spent Recreating State
• Often create episodic memory queries to answer a specific question
  • “When I was last in this location, what time of day was it?”
• Retrieving the episode creates a lot of WMEs to recreate the whole state
  • “Last time you were at this location, it was a Tuesday, it was raining, your fuel was at 90%... Yada yada… oh, and it was 5:35 pm.”
• The time to recreate a state is, in part, based on the size of that state
• We often look at one small piece of that result and throw it all away
  • Causing all of those WMEs to immediately be removed
• The filtering loop may cause this to happen many times

Nuggets
• Reduced instances of repeated failure
  • Agent doesn’t do the same dumb thing twice
• Constructed model for environment/plans
  • Accuracy of estimations improves with experience
• Incorporated models of similar environments/plans
  • Agent came to useful conclusions for new (untested) plans

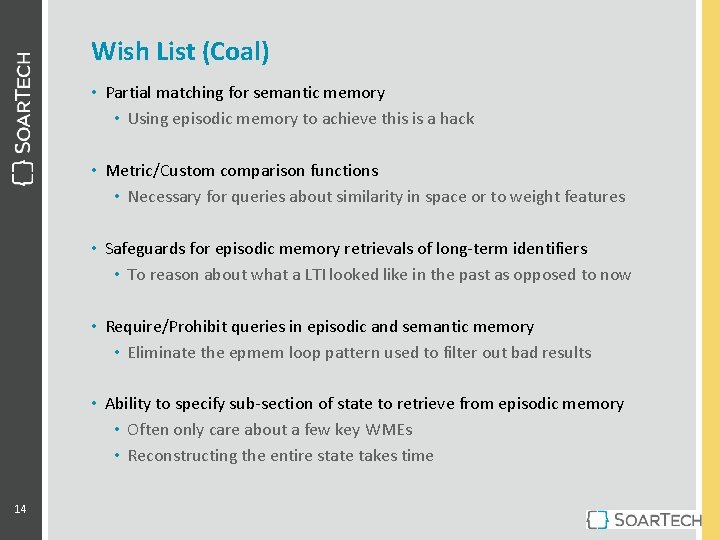

Wish List (Coal)
• Partial matching for semantic memory
  • Using episodic memory to achieve this is a hack
• Metric/custom comparison functions
  • Necessary for queries about similarity in space or to weight features
• Safeguards for episodic memory retrievals of long-term identifiers
  • To reason about what an LTI looked like in the past as opposed to now
• Require/prohibit queries in episodic and semantic memory
  • Eliminate the epmem loop pattern used to filter out bad results
• Ability to specify a sub-section of state to retrieve from episodic memory
  • Often only care about a few key WMEs
  • Reconstructing the entire state takes time

- Slides: 14